High-Capacity Spatial Structured Light for Robust and Accurate Reconstruction

Abstract

1. Introduction

2. Key Technology and Algorithm

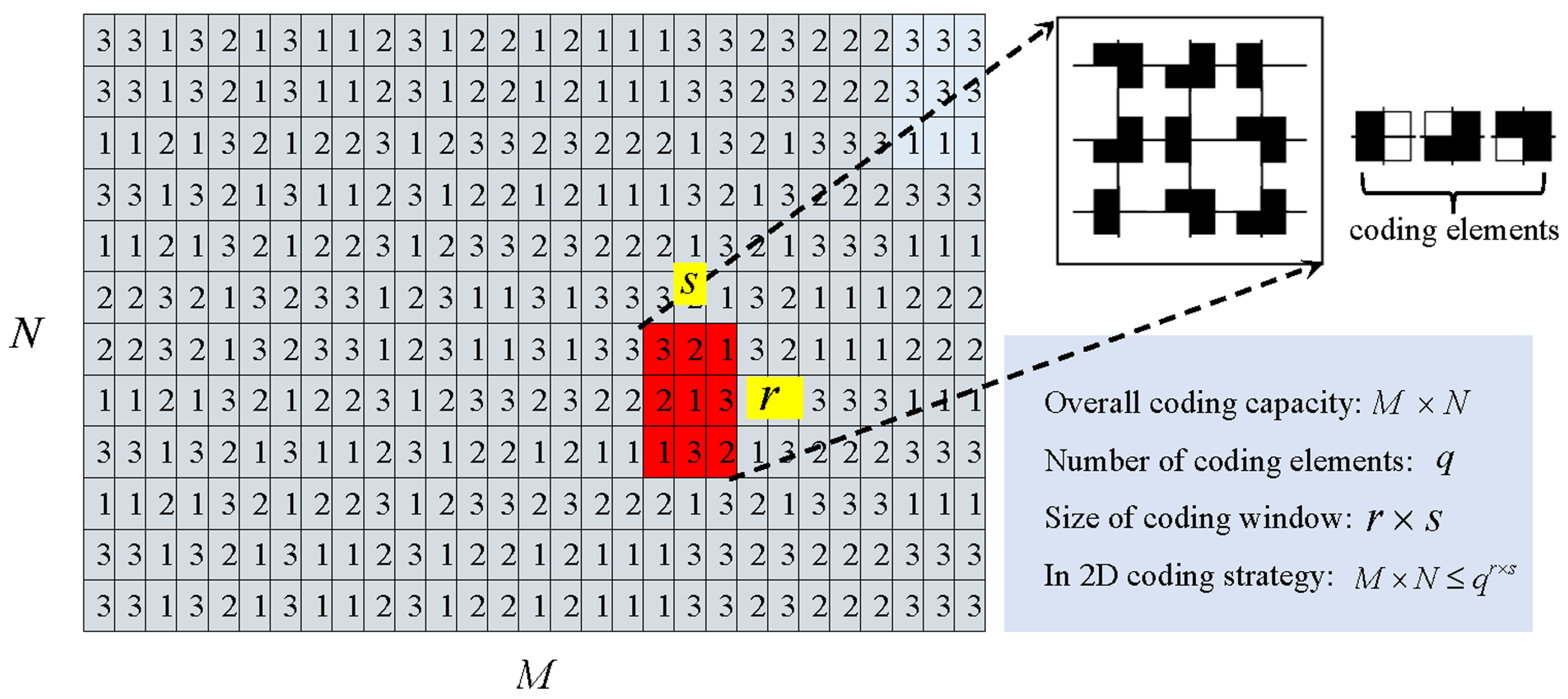

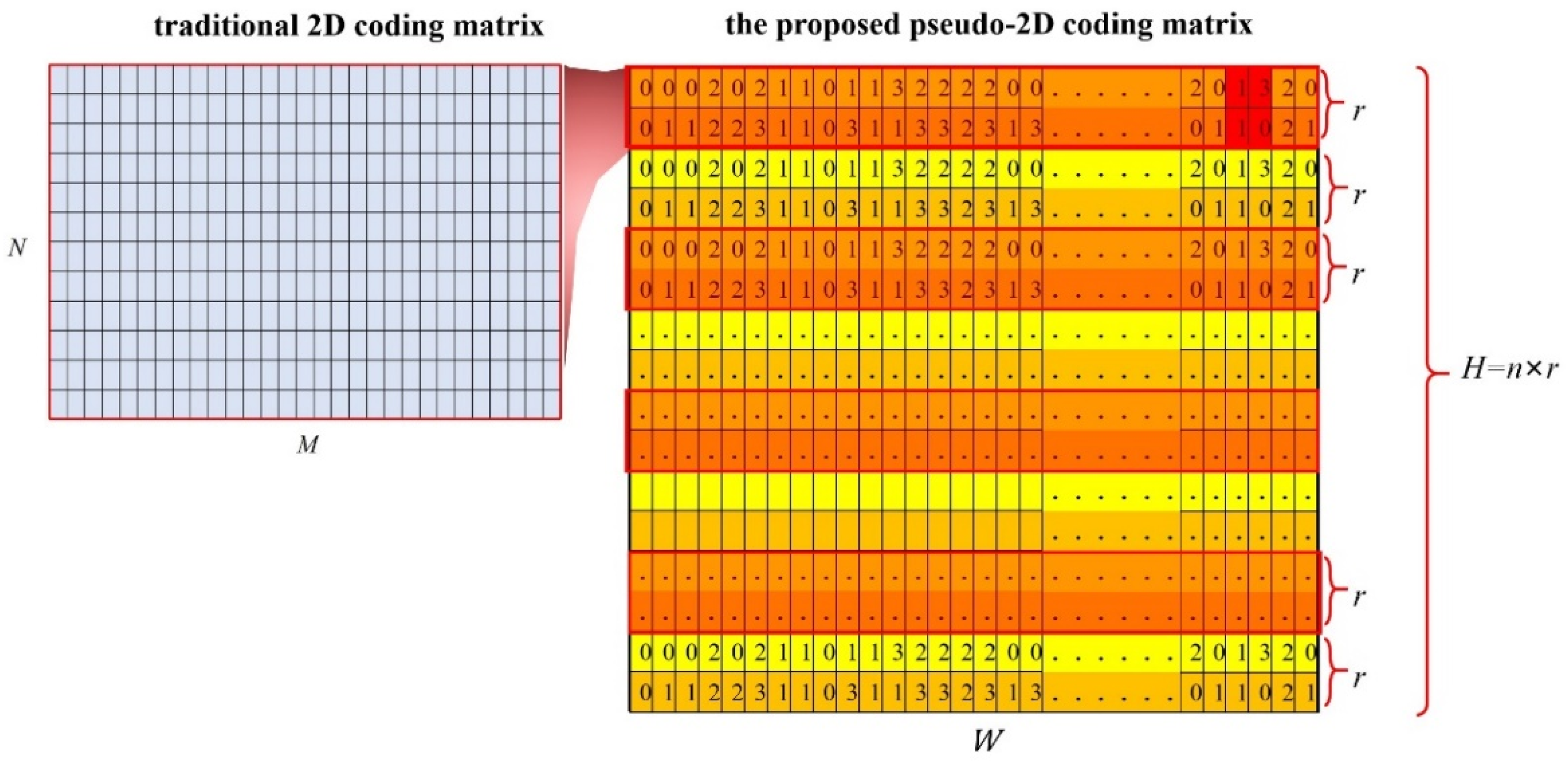

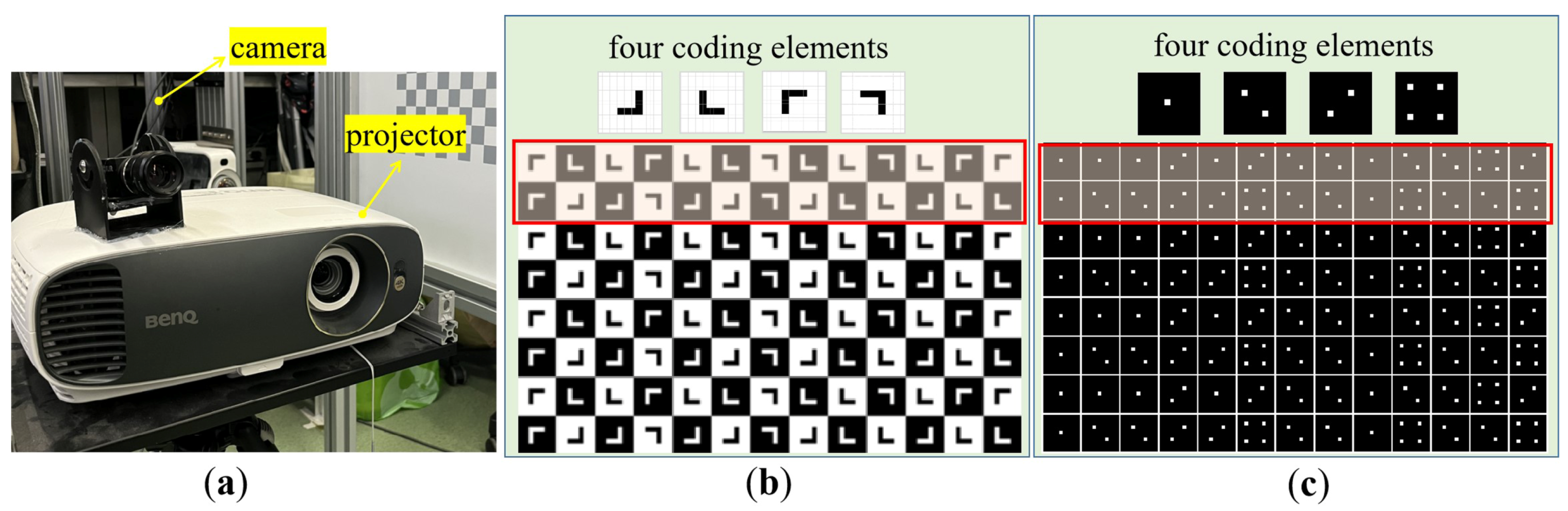

2.1. Pseudo-2D Coding Method

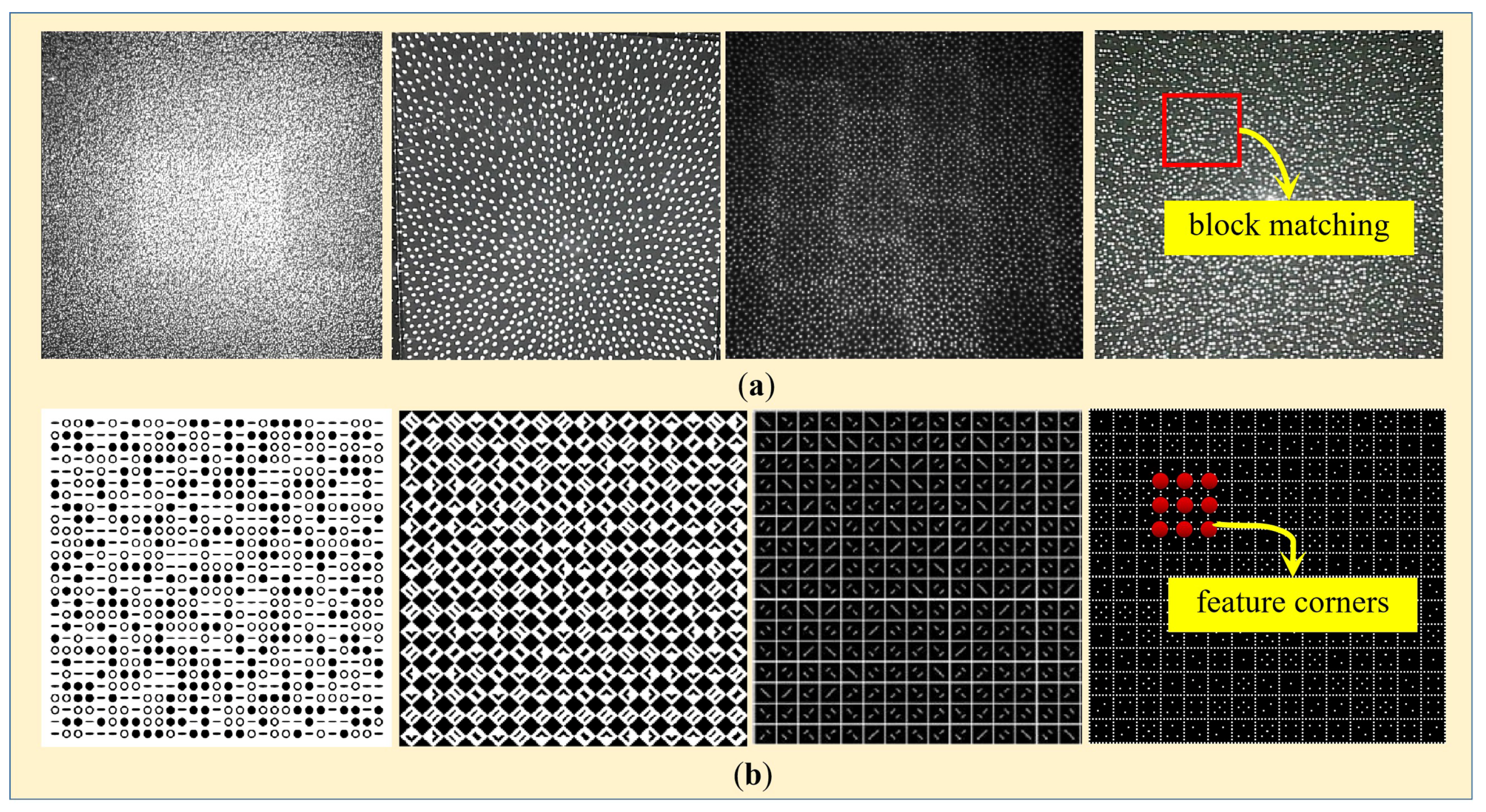

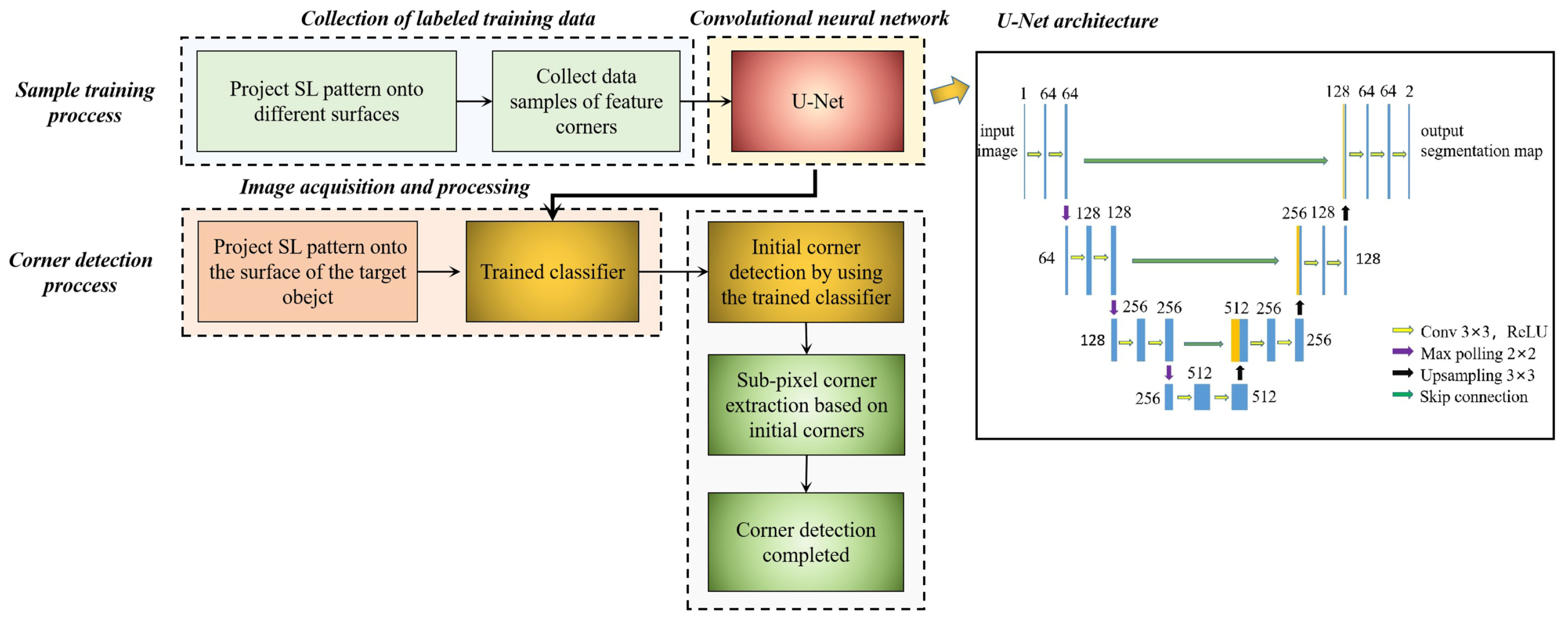

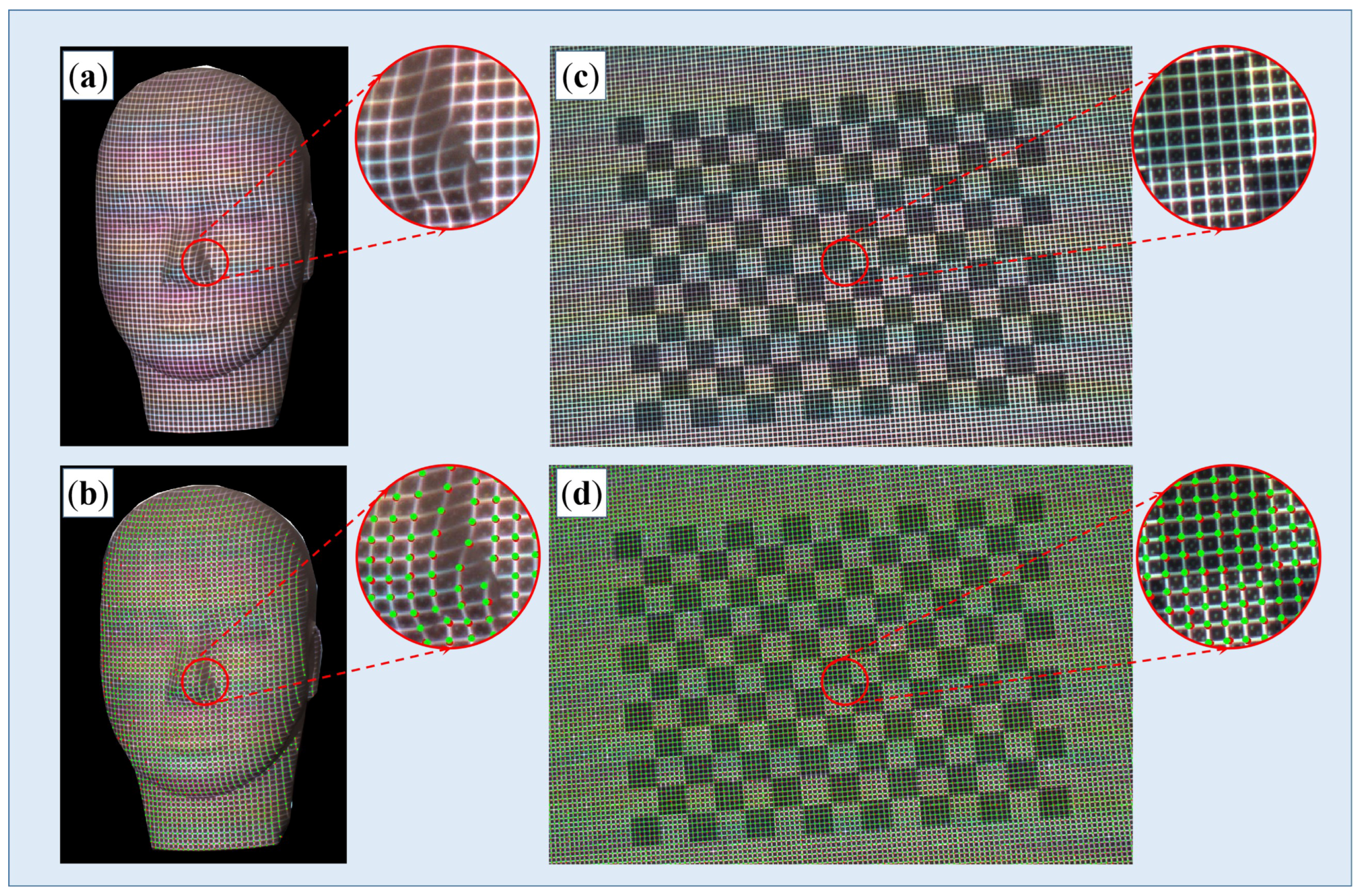

2.2. Detection of the Coded Feature Points

- (1) Collection of training samples.

- (2) End-to-end corner detection with U-Net.

2.3. Decoding of the Pseudo-2D Coding Pattern

3. Experiments and Results

3.1. Performance Evaluation of the Developed End-to-End Corner Detection Algorithm

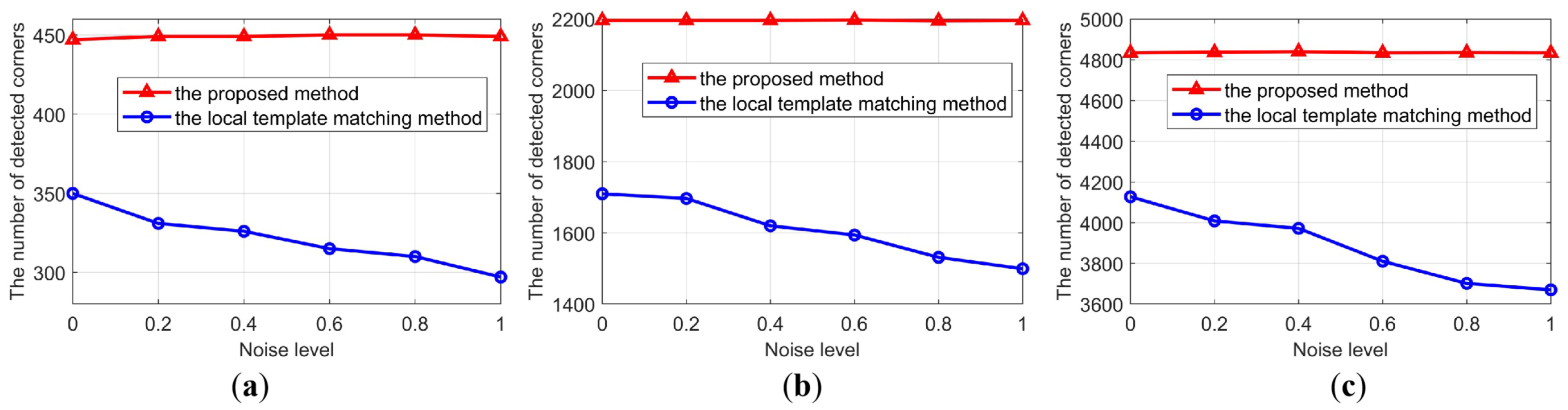

3.1.1. Performance w.r.t. (with Respect to) the Noise Level

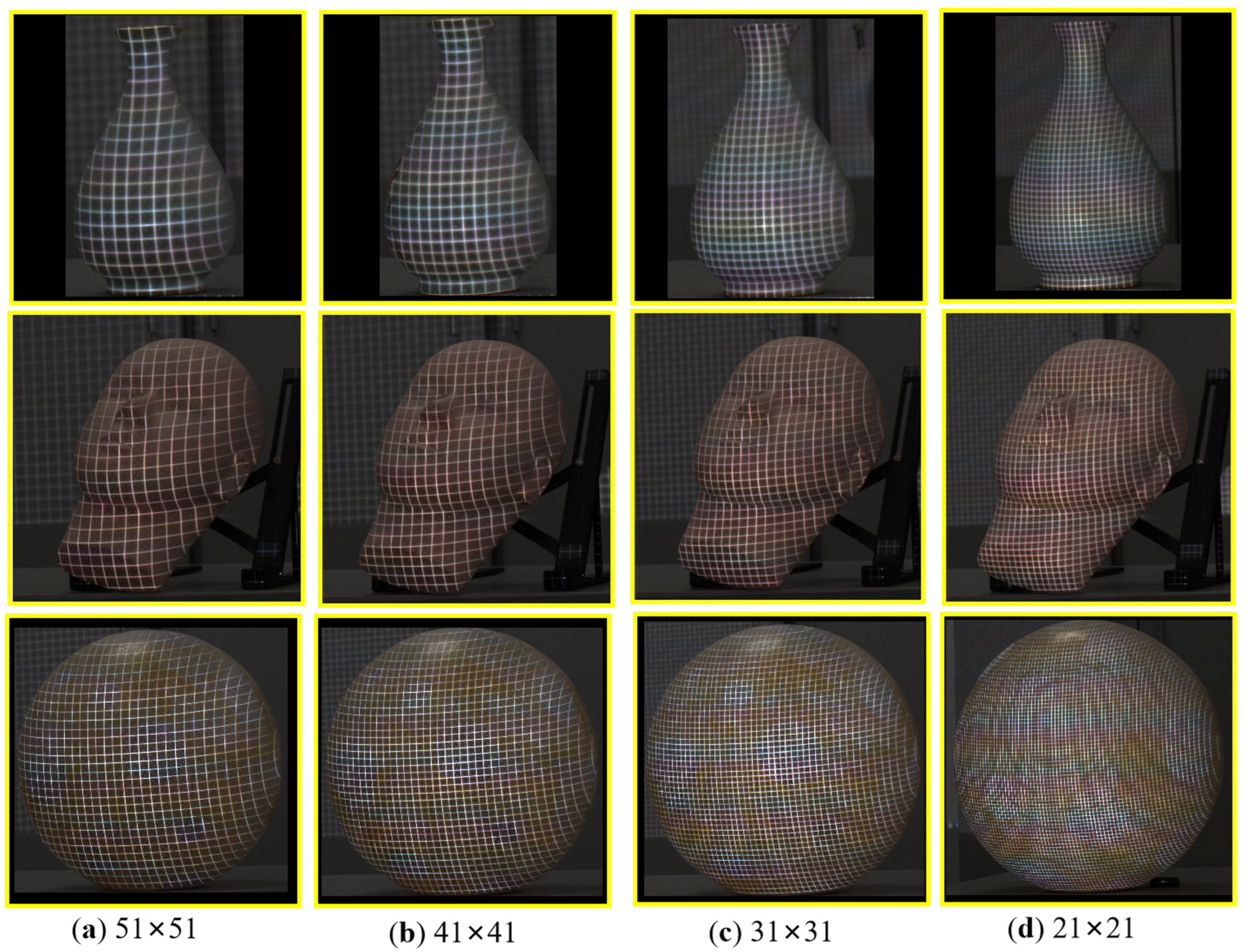

3.1.2. Performance w.r.t. the Density of the Feature Points

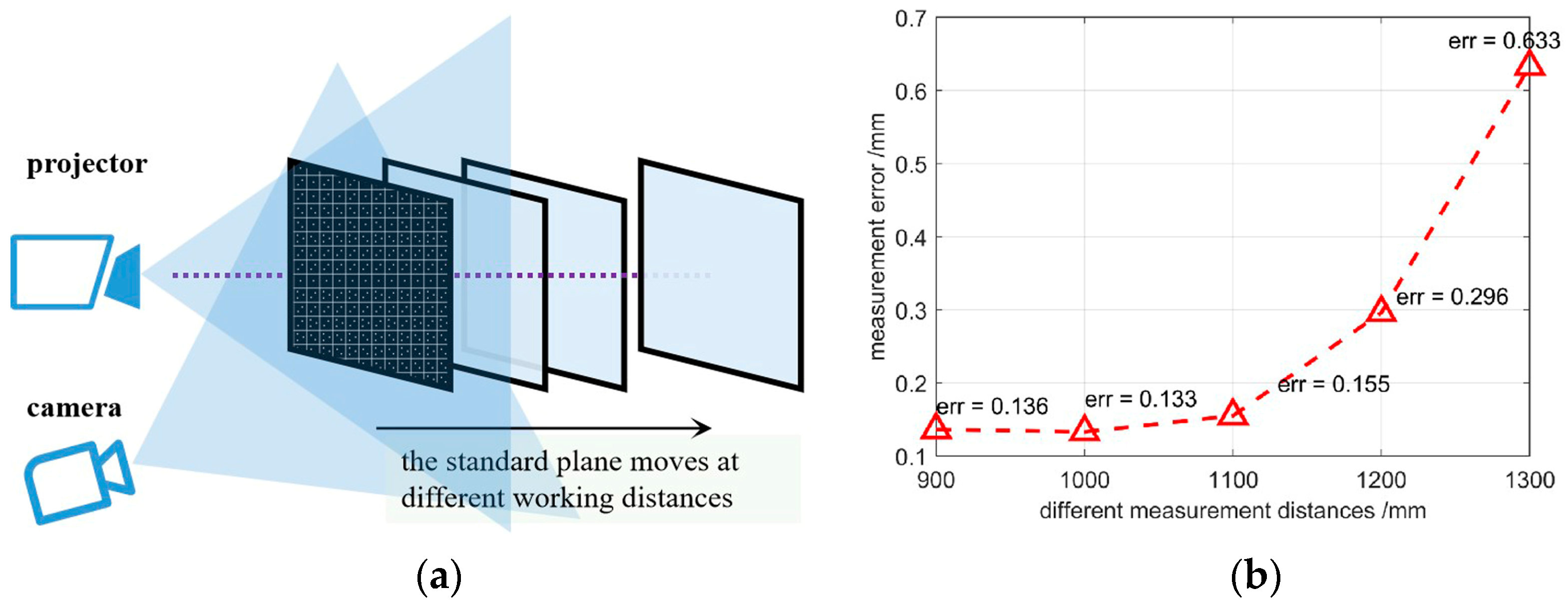

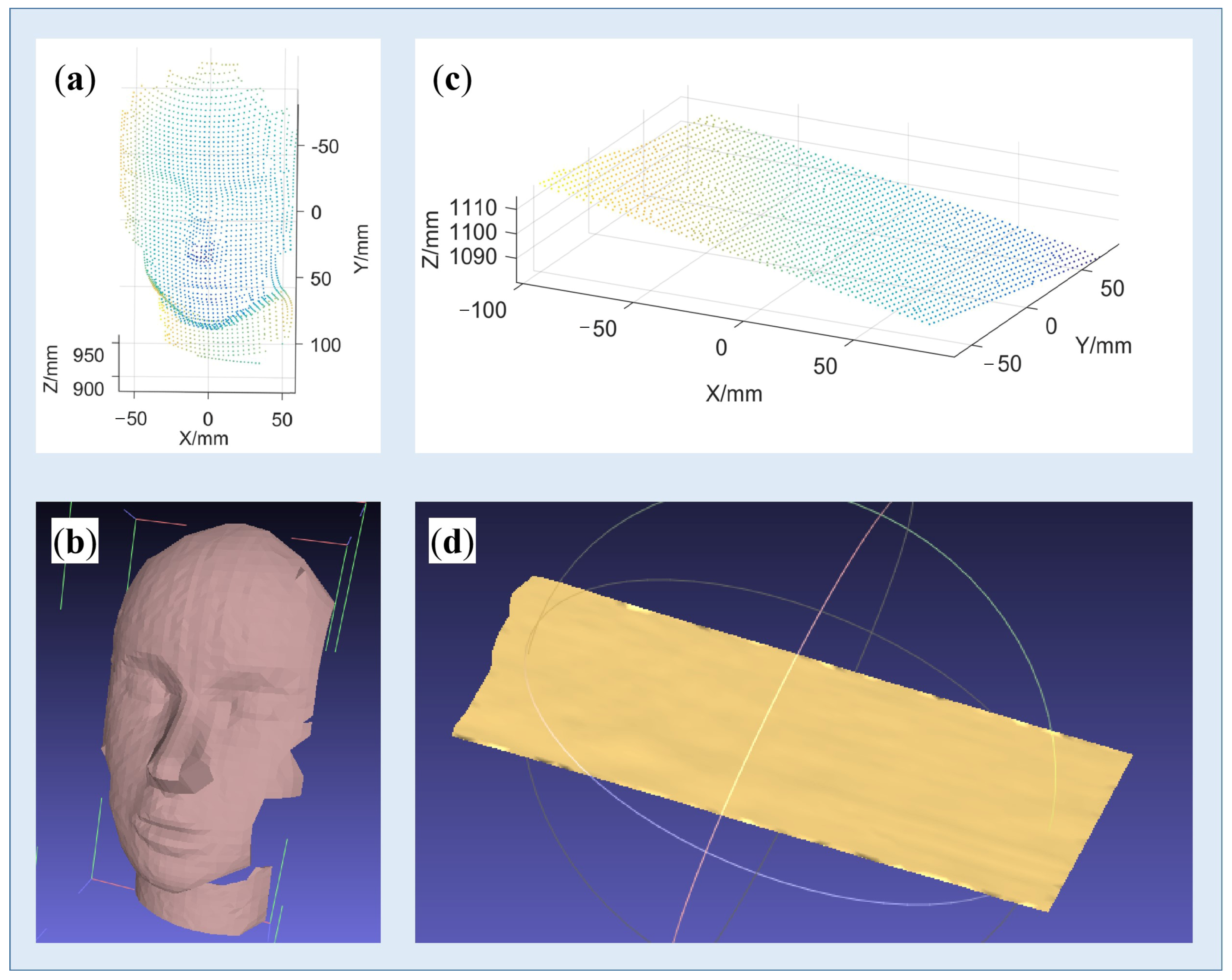

3.2. Accuracy Evaluation of the Developed System

3.3. Reconstruction of Surfaces with Rich Textures

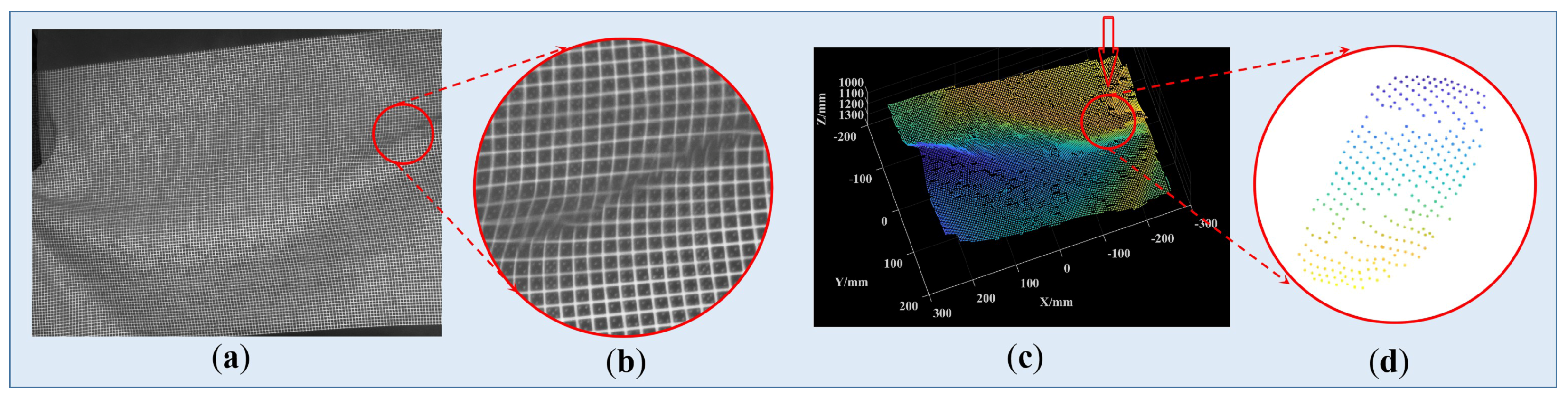

3.4. Reconstruction of Surfaces with Large Mutations

4. Discussions

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

| m (m = r × s) | q = 3 | q = 4 | q = 5 | q = 6 |
|---|---|---|---|---|
| 2 | $x^2 + x + 2$ | $x^2 + x + A$ | $x^2 + Ax + A$ | $x^2 + x + A$ |
| 3 | $x^3 + 2x + 1$ | $x^3 + x^2 + x + A$ | $x^3 + x + A$ | $x^3 + x + A$ |
| 4 | $x^4 + x + 2$ | $x^4 + x^2 + Ax + A^2$ | $x^4 + x + A^3$ | $x^4 + x + A^5$ |
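As a minimal sketch of how one entry of this table could be used, assume the polynomials are primitive polynomials over GF(q) that drive a linear recurrence producing a pseudo-random sequence of period q^m − 1. The function name `lfsr_sequence` and the seed are hypothetical, and only prime q is handled (the q = 3 column); the columns containing the element A would need extension-field arithmetic that is not shown here.

```python
def lfsr_sequence(coeffs, q, seed):
    """Generate one period of the sequence defined by a monic polynomial
    x^m + c_{m-1} x^{m-1} + ... + c_0 over GF(q), with q prime.

    coeffs -- [c_0, ..., c_{m-1}] (low-order coefficient first)
    seed   -- m initial symbols, not all zero
    Hypothetical helper for illustration; not taken from the paper.
    """
    m = len(coeffs)
    state = list(seed)
    out = []
    for _ in range(q ** m - 1):
        out.append(state[0])
        # Recurrence: a[n+m] = -(c_0 a[n] + ... + c_{m-1} a[n+m-1]) mod q
        nxt = (-sum(c * s for c, s in zip(coeffs, state))) % q
        state = state[1:] + [nxt]
    return out


# Table entry for m = 2, q = 3: x^2 + x + 2  ->  c_0 = 2, c_1 = 1
seq = lfsr_sequence([2, 1], q=3, seed=[0, 1])

# Cyclic windows of length m; for a primitive polynomial each nonzero
# window should occur exactly once per period.
windows = {(seq[i], seq[(i + 1) % len(seq)]) for i in range(len(seq))}
print(seq, len(windows))
```

Under these assumptions, the window-uniqueness property is what would let a decoder recover a position from a local neighbourhood of m symbols.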

| Device | Focal Length (pixels) | Principal Point (pixels) | Lens Distortion Coefficients |
|---|---|---|---|
| Camera | (8980.67, 8975.48) | (1991.58, 1511.24) | (−0.077, 0, 0, 0, 0) |
| Projector | (6805.51, 6797.03) | (1942.82, 2946.19) | (−0.009, 0, 0, 0, 0) |
| Translation vector T (mm): (156.79, −108.33, 41.52) | | | |
| Rotation vector om: (0.18, −0.17, 0.08) | | | |

| Object | Number of detected feature corners by pattern block size (pixels) | 51 × 51 | 41 × 41 | 31 × 31 | 21 × 21 |
|---|---|---|---|---|---|
| Vase | Ground truth | 135 | 251 | 449 | 845 |
| Vase | Traditional method | 110 | 197 | 350 | 661 |
| Vase | The proposed method | 134 | 250 | 447 | 835 |
| Vase | Ratio | ↑21.8% | ↑26.9% | ↑27.7% | ↑26.3% |
| Face model | Ground truth | 285 | 525 | 903 | 2218 |
| Face model | Traditional method | 224 | 410 | 701 | 1710 |
| Face model | The proposed method | 285 | 523 | 902 | 2196 |
| Face model | Ratio | ↑27.2% | ↑27.6% | ↑28.7% | ↑27.8% |
| Ball | Ground truth | 643 | 1103 | 1932 | 4898 |
| Ball | Traditional method | 551 | 935 | 1620 | 4128 |
| Ball | The proposed method | 642 | 1101 | 1926 | 4834 |
| Ball | Ratio | ↑16.5% | ↑17.8% | ↑18.9% | ↑17.1% |
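The "Ratio" rows appear to report the relative increase in detected corners of the proposed method over the traditional method. The sketch below reproduces the vase row under that assumption; the function name `improvement_ratio` is hypothetical, and the formula is inferred from the tabulated numbers rather than stated in the text.

```python
def improvement_ratio(traditional, proposed):
    """Relative increase (in percent) of detected corners over the
    traditional method. Assumed definition, inferred from the table."""
    return 100.0 * (proposed - traditional) / traditional


# Vase counts for block sizes 51x51, 41x41, 31x31, 21x21 (from the table)
vase_traditional = [110, 197, 350, 661]
vase_proposed = [134, 250, 447, 835]

vase_ratios = [
    round(improvement_ratio(t, p), 1)
    for t, p in zip(vase_traditional, vase_proposed)
]
print(vase_ratios)  # [21.8, 26.9, 27.7, 26.3]
```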

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Gu, F.; Du, H.; Wang, S.; Su, B.; Song, Z. High-Capacity Spatial Structured Light for Robust and Accurate Reconstruction. Sensors 2023, 23, 4685. https://doi.org/10.3390/s23104685
