Abstract

Although virtual reality, augmented reality and mixed reality have been emerging methodologies for several years, only recently have technological and scientific advances made them suitable to revolutionize clinical care and medical contexts through the provision of enhanced functionalities and improved health services. This systematic review presents the state-of-the-art applications of the Microsoft® HoloLens 2 in a medical and healthcare context. Focusing on the potential of this technology to provide digitally supported clinical care, including but not limited to the context of the COVID-19 pandemic, studies that proved the applicability and feasibility of HoloLens 2 in a medical and healthcare scenario were considered. The review presents a thorough examination of the studies conducted since 2019, focusing on HoloLens 2 medical sub-field applications, device functionalities provided to users, software/platform/framework used, and study validation. The results highlight the potential and limitations of HoloLens 2-based innovative solutions and bring focus to emerging research topics, such as telemedicine, remote control and motor rehabilitation.

1. Introduction

Virtual reality (VR), augmented reality (AR) and mixed reality (MR) have been emerging methodologies for several years, but only recently have technological and scientific advances made them suitable to let users experience a spectacular imaginary world through realistic images, sounds and other sensations [1].

Although VR, AR and MR may appear to be similar terms, their definitions need a closer look in order to differentiate how they work.

VR, the most widely known technology, is completely immersive and tricks the senses into perceiving a different environment, or a world parallel to the real one. In a virtual reality environment, using a head-mounted display (HMD) or headset, a user feels completely immersed in an alternate reality and can manipulate objects while experiencing computer-generated visual effects and sounds.

Augmented reality [2], by contrast, is characterized by the ability to overlay digital information on real elements. Augmented reality keeps the real world at the center but enhances it with digital details, bringing new layers of perception and complementing the real environment.

Mixed reality [3] blends elements of the real and digital worlds. In mixed reality, the user can interact with and move elements and environments, both physical and virtual, using the latest generation of sensory and imaging technologies. It offers the possibility of having one foot (or one hand) in the real world and the other in an imaginary place, breaking down the basic concepts of reality and imagination.

Worldwide, the importance of VR, AR and MR technologies has been recognized in several fields (including healthcare, architecture and civil engineering, manufacturing, defense, tourism, automation and education) [1]. The wave of digital transformation has mainly involved the medical sector. Indeed, the combination of advanced digital platforms for handling big data with high-performance head-mounted viewing devices has proved useful for diagnostic and treatment decisions [4,5].

Scientific and technological advances in this area have enabled the design and development of several devices such as Google Glass, Vuzix Blade and Epson Moverio [6], making them suitable to revolutionize clinical care and medical contexts through the provision of enhanced functionalities and improved health services.

Microsoft® HoloLens [7], developed and manufactured by Microsoft (MS), is a pair of mixed reality smart glasses that renders an environment in which real and virtual elements appear to coexist. More specifically, the Microsoft® HoloLens is a novel MR-based HMD that places the user at the center of an immersive experience and allows them to interact with the surrounding environment through holograms while engaging their senses throughout. It is used in a variety of applications such as medical and surgical aids and systems, medical education and simulation, architecture and several engineering fields (civil, industrial, etc.) [1].

The first generation of HoloLens [8], released in 2016, attracted the attention of the scientific and technological community because of its advanced playing methods and concepts.

In November 2019, Microsoft Corporation released the HoloLens 2 [9], an upgrade over its predecessor in terms of both hardware and software. To address the limitations of the first-generation HoloLens, including its restricted field of view, limited battery life and relatively heavy headset, the HoloLens 2 offers an enhanced field of view (52°), reduced weight (566 g) and improved battery life (3 h) [10].

The rapid technological advancements of the last decade have significantly impacted medicine and the health sciences. Driven by the growing need to make health care safer, the use of eXtended Reality (XR) (virtual, augmented, mixed) throughout the continuum of medical education and training is demonstrating appreciable benefit.

The epidemiological context of the coronavirus disease 2019 (COVID-19) pandemic is an unprecedented opportunity to speed up the development and implementation of innovative devices and biomedical solutions, as well as the adoption of eXtended Reality modalities, for which demand has increased tremendously. Indeed, these modalities played an important role in the fight against the pandemic through their deployment in crucial areas such as telemedicine, online education and training, marketing and healthcare monitoring [11].

Remarkable advantages of the HoloLens in medicine have already been identified [1], from training in anatomy and diagnostics to acute and critical patient care, such as visualizing organs prior to surgery [12], teaching dental students [13] and pathology education [14].

This paper aims to present the state-of-the-art applications of the Microsoft® HoloLens 2 in a medical and healthcare context. Focusing on the potential of this technology to revolutionize care, including but not limited to the context of the COVID-19 pandemic, studies that proved the applicability and feasibility of HoloLens 2 in a medical and healthcare scenario were considered.

The review presents a thorough examination of the different studies conducted since 2019, focusing on HoloLens 2 sub-field applications, device functionality as well as natively integrated or other integrative software used.

The rest of this paper is organized as follows. Section 2 details the methodology used for this review. Section 3 gives a concise overview of the most popular commercially available optical see-through head-mounted displays, examines the HoloLens 2 technical specifications in depth and compares them with the previous version (HoloLens 1), justifying the choice to focus our review only on the latest version. Section 4 summarizes the existing solutions that apply HoloLens 2 in a medical and healthcare context. Section 5 discusses the current status and trends in HoloLens 2 research by year, sub-field, type of visualization technology, device functionalities provided to users, software/platform/framework used and study validation. Section 6 concludes the study, focusing on the potential of this innovative technology in a biomedical scenario.

2. Research Methodology

2.1. Search Strategy

This systematic review was conducted following the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines [15]. A comprehensive literature search was conducted on 19 April 2022. The most common engineering and medical databases (IEEE Xplore, PubMed, Science Direct and Scopus) were searched, as reported in Table 1. The review was limited to texts published in English between 2019 and 2022 for which abstracts were available. Keywords were defined according to the scope of the review, and the following structured search string was used: (“HoloLens 2” OR “MS HoloLens 2”) AND (“Healthcare” OR “Medicine”). In addition, articles identified through the reference lists of previously retrieved articles were included in order to increase the likelihood that all relevant studies were identified.

Table 1.

Databases used for this review.
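As an illustration of the search string in Section 2.1, the sketch below assembles the Boolean query from its two keyword groups. The function name `build_query` is hypothetical, and the explicit parentheses reflect an assumed grouping (OR within a group, AND between groups); each database has its own query syntax, so the string may need adapting before use.

```python
def build_query(*term_groups):
    """Join the terms of each group with OR, then join the groups with AND."""
    def group(terms):
        # Quote each term so multi-word keywords are searched as phrases.
        return "(" + " OR ".join(f'"{term}"' for term in terms) + ")"
    return " AND ".join(group(terms) for terms in term_groups)


# Keyword groups taken from the search strategy described above.
DEVICE_TERMS = ["HoloLens 2", "MS HoloLens 2"]
DOMAIN_TERMS = ["Healthcare", "Medicine"]

query = build_query(DEVICE_TERMS, DOMAIN_TERMS)
print(query)
# ("HoloLens 2" OR "MS HoloLens 2") AND ("Healthcare" OR "Medicine")
```

Keeping the keyword groups as separate lists makes it straightforward to rerun the same search with an extra synonym or an additional group.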

2.2. Inclusion and Exclusion Criteria

Articles were considered for inclusion only if: (1) they used the Microsoft® HoloLens version 2, either alone or in comparison with the first-generation HoloLens, but not version 1 exclusively; (2) they described a partial or total demonstration of the feasibility, effectiveness and applicability of HoloLens 2 in a medical and healthcare context; (3) they described complete research; (4) they were written in English.

The articles were also screened against the following exclusion criteria: (1) contributions in which the HoloLens version was not specifically reported; (2) studies that applied HoloLens 2 to animals rather than humans; (3) studies in which the HoloLens 2 application field was outside the medical or healthcare context; (4) articles without full text available.

Books or book chapters, letters, review articles, editorials and short communications were also excluded.

2.3. Study Selection

This review presents the state-of-the-art applications of MS HoloLens 2 in a medical and healthcare context.

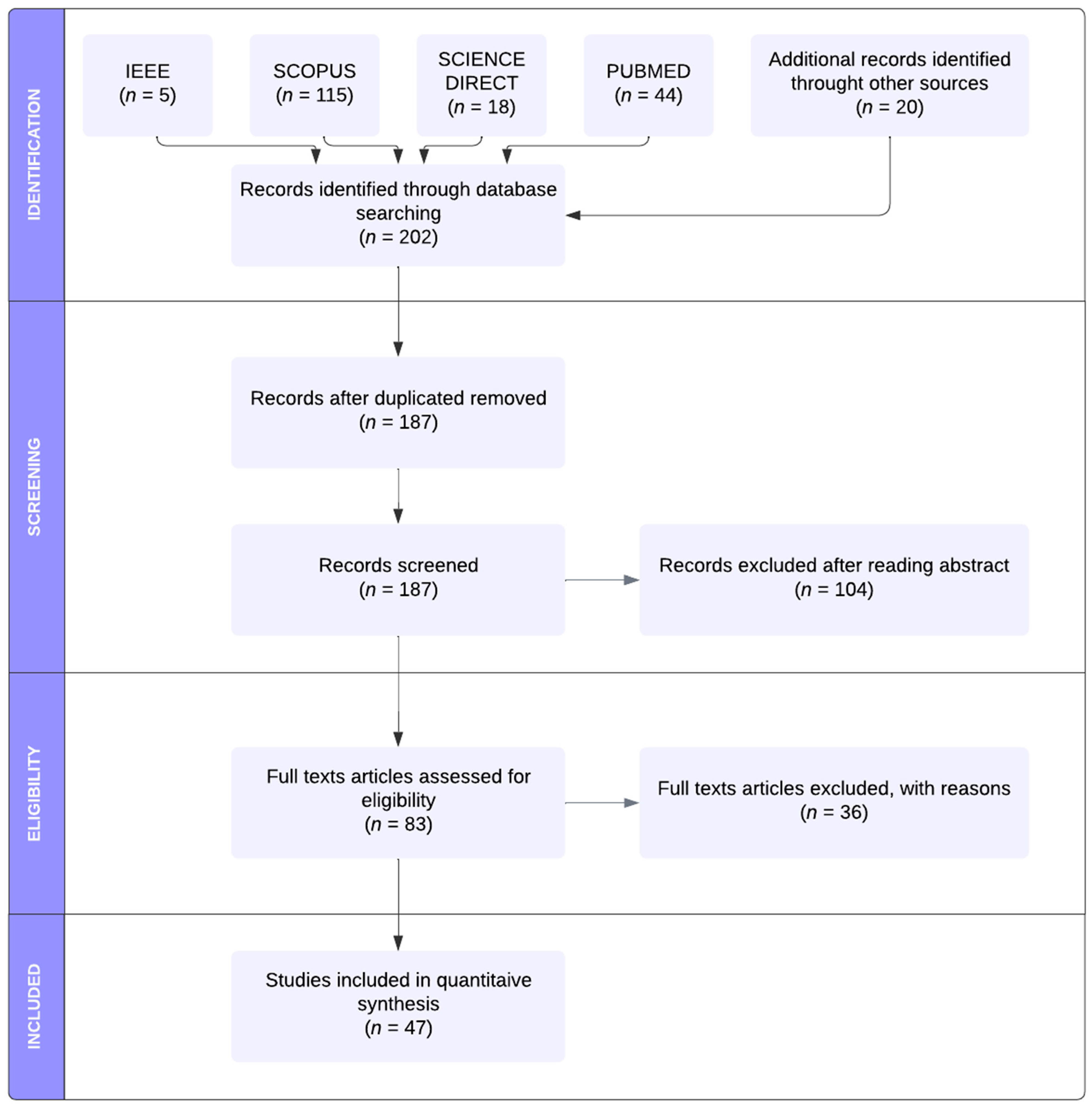

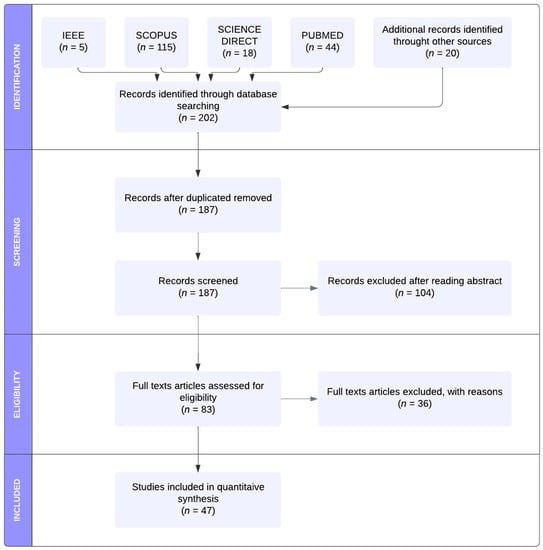

A total of 202 search results were identified through database searching and additional sources. After removing all duplicates, 187 studies underwent title and abstract screening against the inclusion criteria. The full texts of the 83 papers assessed for eligibility were carefully analyzed. Thirteen articles [16,17,18,19,20,21,22,23,24,25,26,27,28] were excluded under exclusion criterion (1), one contribution [29] under criterion (2), 17 [30,31,32,33,34,35,36,37,38,39,40,41,42,43,44,45,46] under criterion (3) and five [47,48,49,50,51] under criterion (4). Finally, 47 studies were included in the quantitative synthesis. Figure 1 illustrates the methodological approach used. In order to facilitate analysis and comparisons, all relevant HoloLens 2-based solutions and related system parameters are summarized and discussed in Section 4.

Figure 1.

PRISMA workflow of the identification, screening, eligibility and inclusion of the studies in the systematic review.
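The counts reported in the study selection can be cross-checked with a few lines of arithmetic. The sketch below is a minimal consistency check, not part of the review protocol; the variable names are illustrative, and the duplicate and screening-stage exclusion counts are derived here by subtraction from the reported figures.

```python
# Counts reported in Section 2.3.
records_identified = 202
records_screened = 187          # after duplicate removal
full_texts_assessed = 83
excluded_full_text = {          # exclusion criterion -> number of papers
    "criterion 1": 13,
    "criterion 2": 1,
    "criterion 3": 17,
    "criterion 4": 5,
}

# Quantities derived by subtraction (not stated explicitly in the text).
duplicates_removed = records_identified - records_screened
excluded_at_screening = records_screened - full_texts_assessed
studies_included = full_texts_assessed - sum(excluded_full_text.values())

print(duplicates_removed, excluded_at_screening, studies_included)
# 15 104 47
```

The final value matches the 47 studies included in the synthesis, so the reported flow is internally consistent.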

3. HoloLens 2 versus Other Commercially Available Optical See-Through Head-Mounted Displays

The coronavirus disease 2019 pandemic has had wide-reaching impacts on all segments and sectors of society, imposing severe restrictions on individuals’ participation in daily living activities, on mobility and transport, and on access to education, services and healthcare. This scenario represented a unique chance to speed up the significant investments by technology companies, including Google, Apple, Microsoft and Meta (Facebook), in eXtended Reality HMD technology [52]. Table 2 presents a basic overview of the relevant commercially available optical see-through head-mounted displays (OST-HMDs): Google Glass 2 Enterprise Edition (Google, Mountain View, CA, USA) [53], HoloLens 1 [8] and 2 [9] (Microsoft, Redmond, WA, USA), and Magic Leap 1 and 2 (Magic Leap, Plantation, FL, USA) [54,55]. The associated technical specifications are included (see [52,56] for more information).

Table 2.

Basic technical specifications for commercially available optical see-through head-mounted displays.

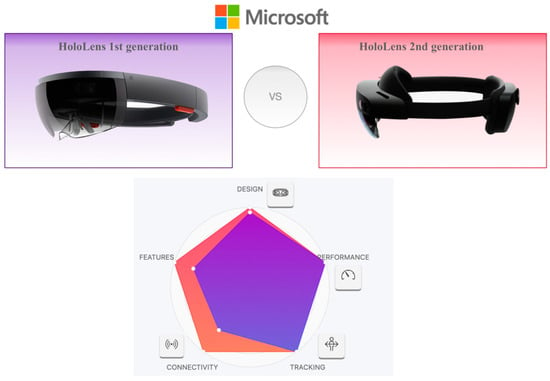

HoloLens First and Second Generation Comparison: A Detailed Study of Features, Functionalities and Performances

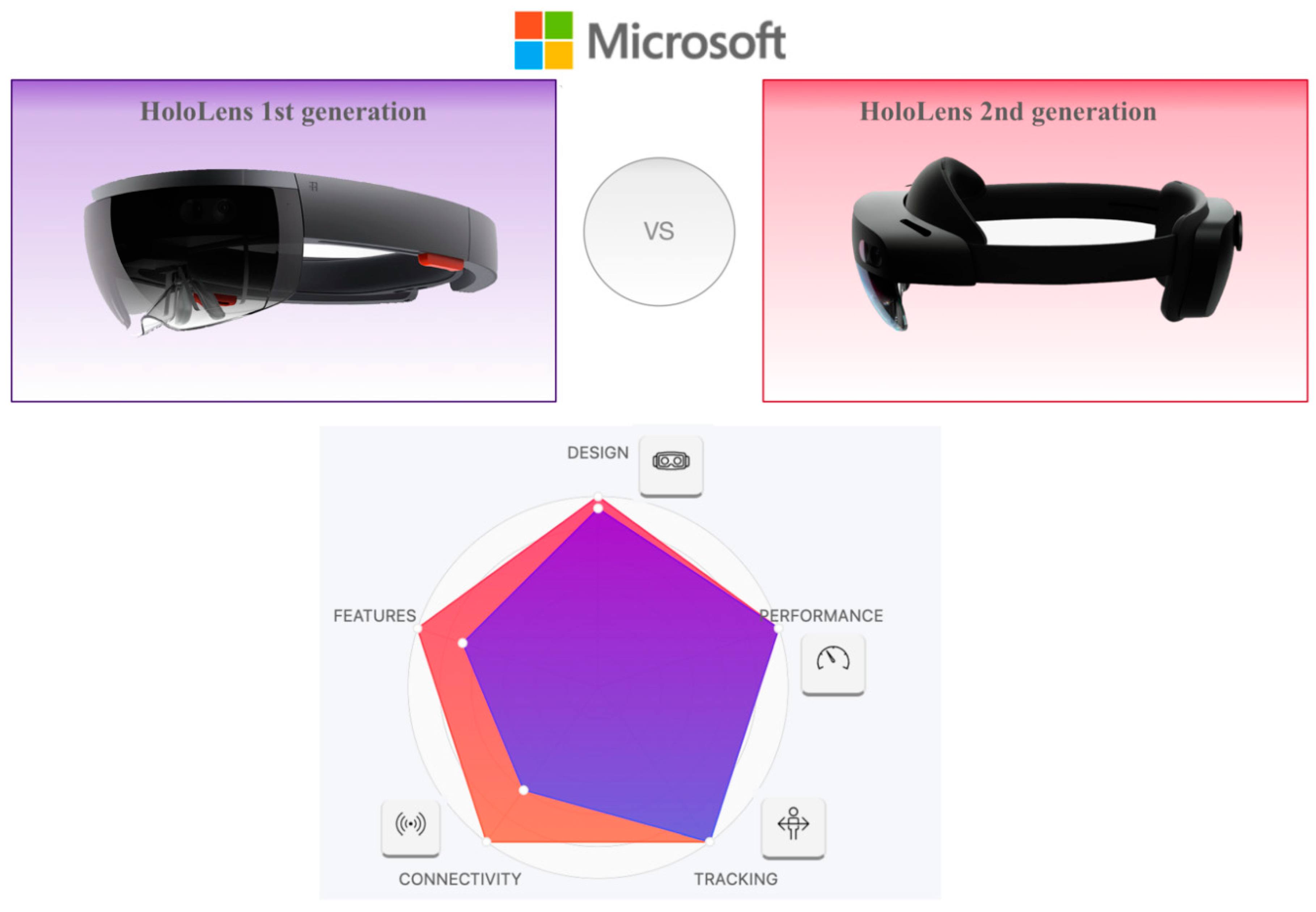

This section gives a more detailed overview of the HoloLens 2 technical specifications and compares them with the previous version (HoloLens 1) [8] (Figure 2, Table 3), justifying the choice to focus our review only on the latest version.

Figure 2.

HoloLens’ first- and second-generation comparison.

Table 3.

HoloLens 2 specifications compared to the first-generation HoloLens.

The first generation of HoloLens, released in 2016, attracted the attention of the scientific and technological community because of its advanced playing methods and concepts.

However, the hardware and software limitations of the first-generation HoloLens, including its restricted field of view, limited battery life and relatively heavy headset, prompted the company to introduce the HoloLens 2.

Indeed, in November 2019, Microsoft Corporation released the HoloLens 2, an upgrade over its predecessor in terms of both hardware (enhanced field of view (52°), reduced weight (566 g) and improved battery life (3 h)) and software.

Considering the technical characteristics shown in Table 2 and Table 3, we regard the Microsoft HoloLens 2 as the best head-mounted display on the market. It is an elegant device made with high-quality materials, and in our assessment it offers the best position tracking. Hand tracking works very well, and 3D viewing is much more realistic (objects hardly wobble when moving and remain stable). Moreover, to our knowledge, no other similar commercially available system has undergone the rigorous validation process of the HoloLens 2 [57].

4. MS HoloLens 2 Applications in Medical and Healthcare Context: Literature Results

In this section, existing applications of MS HoloLens 2 in a medical and healthcare context are analyzed and illustrated. Their main characteristics in terms of clinical sub-field application, device functionalities provided to users, software/platform/framework used and study validation are summarized in Table 4.

The contributions were divided into the following sub-field applications: surgical navigation, AR-BCI (Brain-Computer Interface) systems integration and human-computer interaction (HCI), gait analysis and rehabilitation, medical education and training/virtual teaching/tele-mentoring/tele-consulting, and other applications.

4.1. Surgical Navigation

Most of the studies [58,59,60,61,62,63,64,65,66,67,68,69,70,71,72,73,74,75,76,77,78,79,80,81,82,83,84,85] in this review focus on the application of HoloLens 2 to surgical navigation in the operating room and in the emergency department. In this context, the AR/MR-based system provided the user with computer-generated information superimposed on the real-world environment and improved the accuracy, safety and efficacy of surgical procedures [86]. The AR-based HoloLens 2 is mainly used as a surgical aid for the visualization of medical data, blood vessel search and targeting support for the precise positioning of mechanical elements [86].

4.2. Human Computer Interaction and AR-BCI Systems Integration

In recent years, Brain-Computer Interface applications have been growing rapidly, establishing BCI as an emerging technology [87] tested in several scenarios such as rehabilitation [88], robotics [89], precision surgery and speech recognition. However, the usability of much professional brain-sensing equipment remains limited. Indeed, these systems remain expensive, bulky, tethered and uncomfortable to wear because of the gel applied to the electrodes, as well as prone to classification errors. Thus, the scientific community is currently moving toward the use of BCI systems in association with other input modalities such as gaze trackers [90], or with HMDs such as Virtual Reality (VR) [91] and Augmented Reality (AR) headsets [92,93].

Two research contributions [94,95] integrated the BCI and AR HMD systems within the same physical prototype. More specifically, in a first pilot study [94], the authors proposed a prototype that combines the Microsoft HoloLens 2 with an EEG BCI system based on covert visuospatial attention (CVSA), a process of focusing attention on different regions of the visual field without overt eye movements. Fourteen participants were enrolled to test the system over the course of two days using a CVSA paradigm. In another study [95], building on the clip-on solution introduced for the AR-BCI integration, the authors designed a simple 3D game that changed in real time according to the user’s state of attention measured via EEG, and coupled the prototype with a real-time attention classifier. The results of these studies, though promising, need to be considered preliminary because of the small number of participants (n = 14).

In addition to the contributions described above [94,95], the work of Wolf et al. [96] fits into the human–computer interaction (HCI) field. More specifically, the authors analyzed hand-eye coordination in real time to predict hand actions during target selection, making it possible to prevent users’ potential errors before they occur. In a first user study, the authors enrolled 10 participants and recorded them playing a memory card game, which involves frequent hand-eye coordination with little task-relevant information. In a second user study with 12 participants, the authors evaluated the real-time effectiveness of their method in stopping participants’ motions in time (i.e., before they reach and start manipulating a target). Although the study is limited by the small number of participants, the results demonstrated that the implemented method was effective, with a mean accuracy of 85.9%.

Another hot topic in virtual reality research is the use of embodied avatars (i.e., 3D models of human beings controlled by the user), or so-called full-body illusions, a promising tool for enhancing the user’s mental health. To complement this research, augmented reality can incorporate real elements, such as the therapist or the user’s real body, into therapeutic scenarios. Wolf et al. [97] presented a holographic AR mirror system based on an OST device and markerless body tracking and collected qualitative feedback on its user experience. Additionally, the authors compared quantitative results in terms of presence, embodiment and body weight perception with similar systems using video see-through (VST) AR and VR. The comparative evaluation between OST AR, VST AR and VR revealed significant differences in relevant measures (lower feelings of presence and higher estimated body weight of the generic avatar when using the OST AR system).

4.3. Gait Analysis and Rehabilitation

Augmented reality may be a technological solution for the assessment of gait and functional mobility metrics in clinical settings. Indeed, it provides interactive digital stimuli in the context of ecologically valid daily activities while allowing the movements of the user to be quantified objectively through the headset’s inertial measurement units (IMUs). The project of Koop et al. [57] aimed to determine the equivalency of kinematic outcomes characterizing lower-extremity function derived from the HoloLens 2 and from three-dimensional (3D) motion capture systems (MoCap). Kinematic data of sixty-six healthy adults were collected using the HoloLens 2 and MoCap while they completed two lower-extremity tasks: (1) continuous walking and (2) timed up-and-go (TUG). The authors demonstrated that the TUG metrics, including turn duration and velocity, were statistically equivalent between the two systems.

In the rehabilitation context, technologies such as virtual and augmented reality can also enable gait and balance training outside the clinic. The study of Held et al. [98] investigated how virtual augmentation during overground walking alters the gait pattern of persons who have had a stroke, compared to walking without AR performance feedback. Subsequently, the authors evaluated the usability of the AR feedback prototype in a chronic stroke subject with minor gait and balance impairments. The results provided the first evidence of gait adaptation during overground walking based on real-time feedback through visual and auditory augmentation.
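To make the notion of statistical equivalence between two measurement systems concrete, the sketch below shows one common procedure, the two one-sided tests (TOST) on paired differences. This review does not state which procedure Koop et al. [57] applied, so the method, the normal approximation, the equivalence margin and the sample values are all assumptions chosen purely for illustration.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev


def tost_paired(a, b, margin, alpha=0.05):
    """Two one-sided tests on paired differences (normal approximation).

    Equivalence is claimed only if the mean difference is shown to be
    both greater than -margin and smaller than +margin.
    Assumes the paired differences are not all identical (non-zero spread).
    """
    diffs = [x - y for x, y in zip(a, b)]
    standard_error = stdev(diffs) / sqrt(len(diffs))
    mean_diff = mean(diffs)
    # H0: mean_diff <= -margin, rejected when the statistic is large.
    p_lower = 1.0 - NormalDist().cdf((mean_diff + margin) / standard_error)
    # H0: mean_diff >= +margin, rejected when the statistic is very negative.
    p_upper = NormalDist().cdf((mean_diff - margin) / standard_error)
    p_value = max(p_lower, p_upper)
    return p_value < alpha, p_value


# Hypothetical turn durations in seconds (not data from the study).
mocap = [2.0 + 0.01 * i for i in range(30)]
headset = [x + (0.02 if i % 2 else -0.02) for i, x in enumerate(mocap)]

equivalent, p_value = tost_paired(headset, mocap, margin=0.1)
print(equivalent)
```

Unlike a conventional difference test, where a non-significant result is inconclusive, this procedure places the burden of proof on showing that any difference lies within a margin fixed in advance.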

4.4. Medical Education and Training/Virtual Teaching/Tele-Mentoring/Tele-Consulting

During the COVID-19 pandemic, undergraduate medical training was significantly restricted by the suspension of medical student clerkships. To continue delivering training for medical students, augmented reality has started to emerge as a medical education and training tool, opening new and promising possibilities for visualization and interaction with digital content.

Nine contributions [99,100,101,102,103,104,105,106,107] described the use of AR technology and the feasibility of using the HoloLens 2 headset to deliver remote bedside teaching or to enable 3D display for the facilitation of the learning process or in tele-mentoring and tele-consulting contexts.

Wolf et al. [99], for example, investigated the potential benefits of AR-based, step-by-step contextual instructions for ECMO cannulation training and compared them with the conventional training instructions regularly used at a university hospital. A comparative study between conventional and AR-based instructions for ECMO cannulation training was conducted with 21 medical students. The results demonstrated the high potential of AR instructions to improve ECMO cannulation training outcomes as a result of better information acquisition by participants during task execution.

Two studies [105,107] confirmed that the use of AR technology also enhanced the performance of tele-mentoring and tele-consulting systems in healthcare environments. Tele-mentoring can be considered an approach in which a mentor interactively guides a mentee at a different geographic location using a technological communication device.

Bui et al. [105] demonstrated the usability of AR technology in tele-mentoring clinical healthcare professionals in managing clinical scenarios. In a quasi-experimental study, four experienced health professionals and a minimum of 12 novice health practitioners were recruited for the roles of mentors and mentees, respectively. Each mentee wore the AR headset and performed a maximum of four different clinical scenarios (Acute Coronary Syndrome, Acute Myocardial Infarction, Pneumonia Severe Reaction to Antibiotics, and Hypoglycaemic Emergency) in a simulated learning environment. The mentor, who stayed in a separate room, used a laptop to provide the mentee with remote instruction and guidance following the standard protocols related to each scenario. The mentors’ and mentees’ perception of the AR’s usability, the mentorship effectiveness, and the mentees’ self-confidence and skill performance were considered as outcome measures.

Bala et al. [106] presented a proof-of-concept study at a London teaching hospital using mixed reality (MR) technology (HoloLens 2™) to deliver a remote-access teaching ward round. The authors evaluated the feasibility, acceptability and effectiveness of this technology for educational purposes from the perspectives of students, faculty members and patients.

4.5. Other Applications

Four contributions [108,109,110,111] were included in the “other applications” subgroup because their characteristics do not fit any of the subgroups above. More specifically, Onishi et al. [108] implemented a prototype system, named Gaze-Breath, in which gaze and breathing are integrated, using an MR headset and a thermal camera, respectively, as hands-free, intuitive inputs to control the cursor three-dimensionally and to facilitate switching between pointing and selection. Johnson et al. [109] developed and preliminarily tested a radiotherapy system for patient posture correction and alignment using a mixed reality visualization. Kurazume et al. [110] presented a comparative study of two AR training systems for Humanitude dementia care, a multimodal comprehensive care methodology for patients with dementia. In this work, the authors presented a new prototype called HEARTS 2, consisting of Microsoft HoloLens 2 and realistic, animated computer graphics (CG) models of older women. Finally, Matyash et al. [111] investigated the accuracy of the HoloLens 2 inertial measurement units (IMUs) in medical environments. The authors analyzed the accuracy and repeatability of HoloLens 2 position finding to provide a quantitative measure of pose repeatability and of deviation from a path while in motion.
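The two tracking measures mentioned for [111], pose repeatability and deviation from a path, can be given a simple geometric reading. The sketch below is one possible formulation under stated assumptions, not the metric definitions used by Matyash et al.: repeatability is taken as the root-mean-square distance of repeated position readings from their centroid, and path deviation as the perpendicular distance of a reading from a straight reference line. Function names and coordinates are hypothetical.

```python
from math import dist, sqrt


def repeatability_rms(positions):
    """RMS distance of repeated 3D position readings from their centroid."""
    n = len(positions)
    centroid = tuple(sum(p[axis] for p in positions) / n for axis in range(3))
    return sqrt(sum(dist(p, centroid) ** 2 for p in positions) / n)


def deviation_from_line(point, start, end):
    """Perpendicular distance from a point to the line through start and end."""
    direction = [e - s for s, e in zip(start, end)]
    offset = [p - s for s, p in zip(start, point)]
    # Parameter of the orthogonal projection of the point onto the line.
    t = sum(d * o for d, o in zip(direction, offset)) / sum(d * d for d in direction)
    foot = [s + t * d for s, d in zip(start, direction)]
    return dist(point, foot)


# Hypothetical readings in metres.
print(repeatability_rms([(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)]))                      # 1.0
print(deviation_from_line((1.0, 1.0, 0.0), (0.0, 0.0, 0.0), (2.0, 0.0, 0.0)))     # 1.0
```

A curved reference path would require the distance to the nearest path segment instead of a single line, which this sketch does not cover.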

Table 4.

Summary of Microsoft HoloLens 2 applications in medical and healthcare context.

In light of the results summarized in Table 4, this review allows us to present a thorough examination of the different studies conducted since 2019, focusing on HoloLens 2 applications and to analyze the current status of publications by year, typology of publications (articles or conference proceedings), sub-field applications, types of visualization technologies and device functionality.

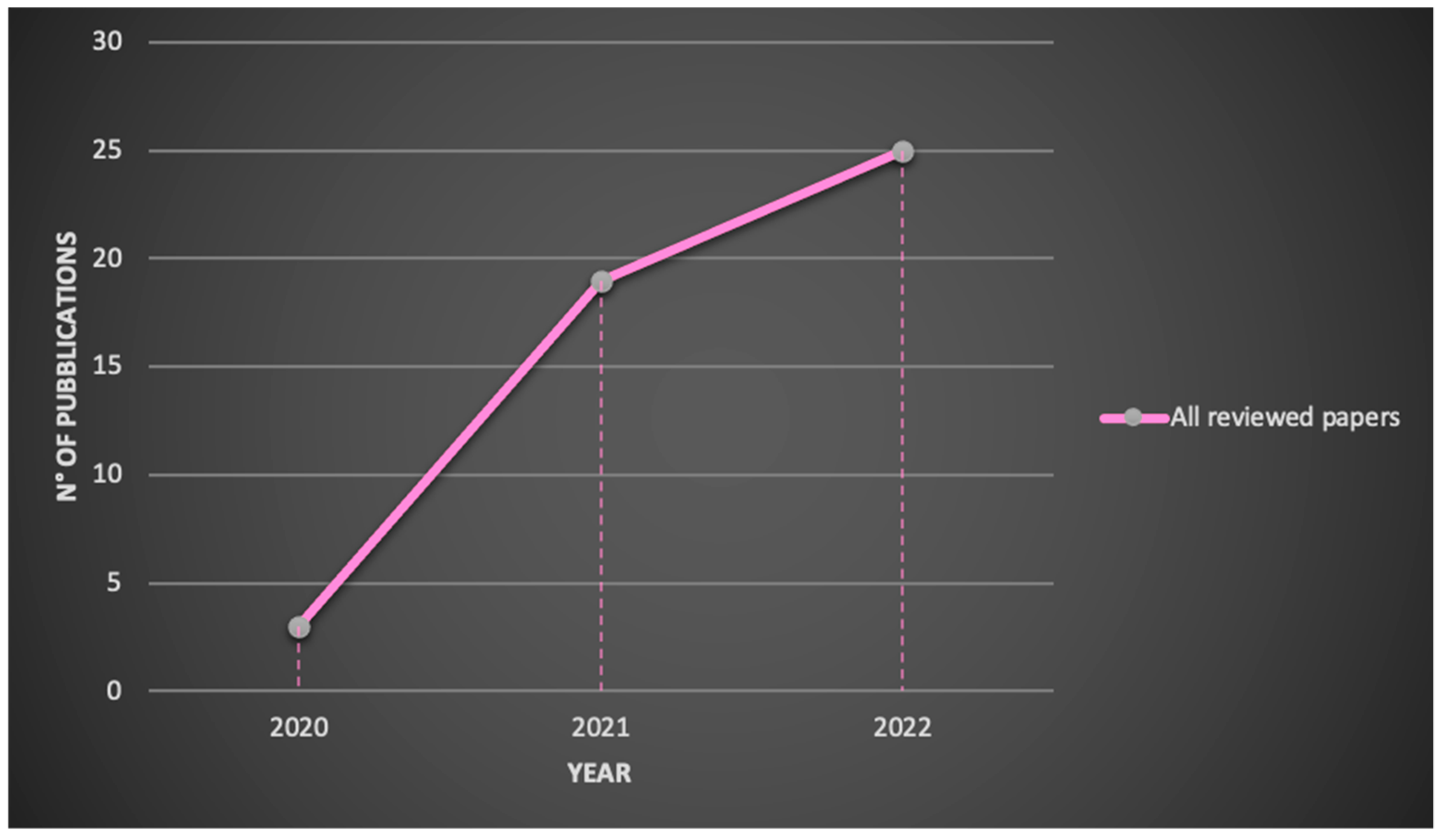

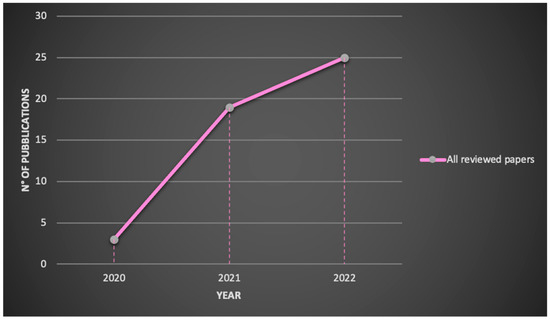

The number of publications on the Microsoft® HoloLens 2 application in a medical and healthcare context is shown in Figure 3.

Figure 3.

Reviewed publications related to HoloLens 2 research by year.

Starting from the year following the release of the second-generation product, interest in HoloLens 2 in the medical sector has grown rapidly, and research is expected to expand further in the future. Indeed, the number of publications was 3 in 2020, increasing to 19 in 2021 and to 25 in 2022.

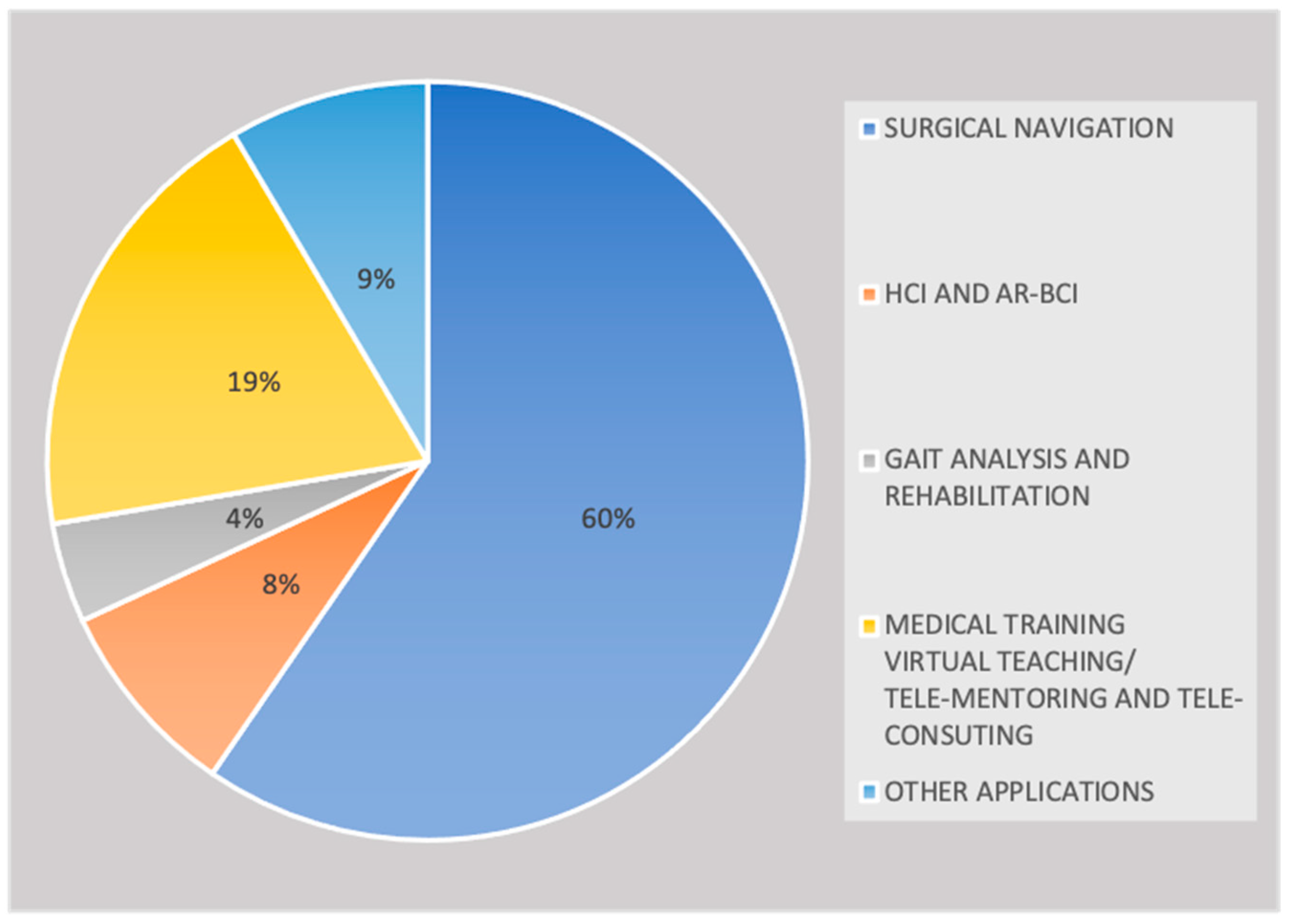

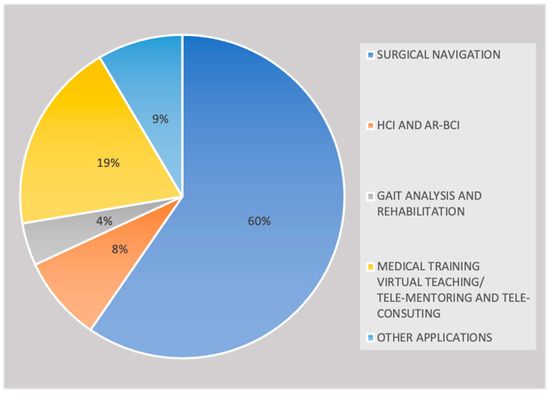

In addition, the use of HoloLens 2 in a medical and healthcare context was analyzed by dividing contributions into the following sub-field applications: surgical navigation, AR-BCI systems integration and human-computer interaction, gait analysis and rehabilitation, medical education and training/virtual teaching/tele-mentoring/tele-consulting, and other applications. Figure 4 shows that surgical navigation is the most common application of HoloLens 2 (60%, n = 28) and that its use in medical training/virtual teaching/tele-mentoring and tele-consulting contexts is also increasing considerably (19%, n = 9).

Figure 4.

Reviewed publications related to HoloLens 2 research by sub-field applications.

Despite the enormous potential of augmented reality in gait analysis and rehabilitation and in brain-computer interfaces, few studies have been published so far (4%, n = 2 and 8%, n = 4, respectively).

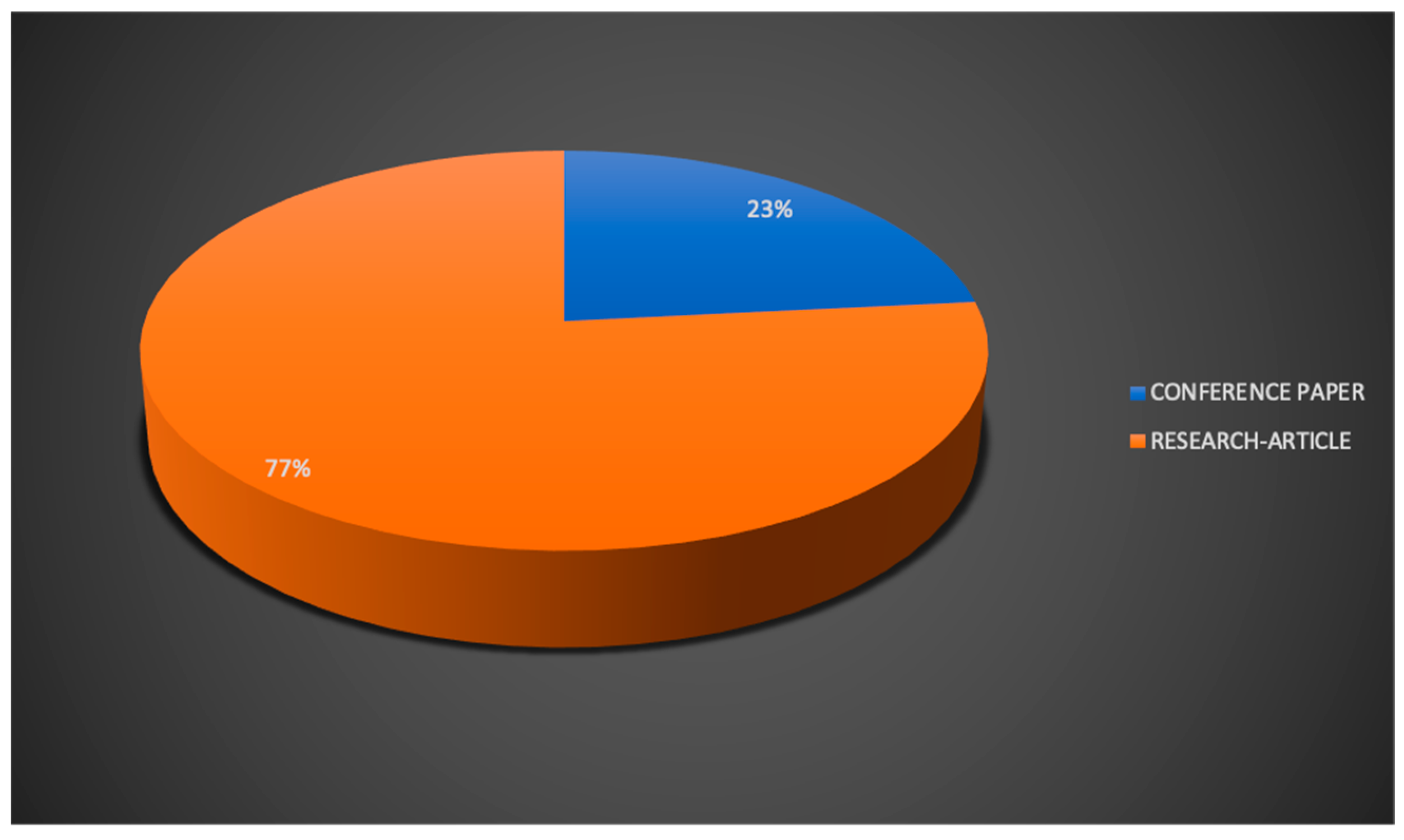

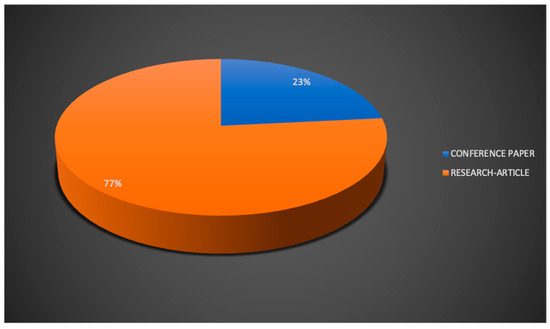

Concerning the type of publication, most of the reviewed papers were research articles (77%, n = 36), while a smaller percentage (23%, n = 11) was composed of conference proceedings (Figure 5).

Figure 5.

Reviewed publications related to HoloLens 2 research by type of publication.

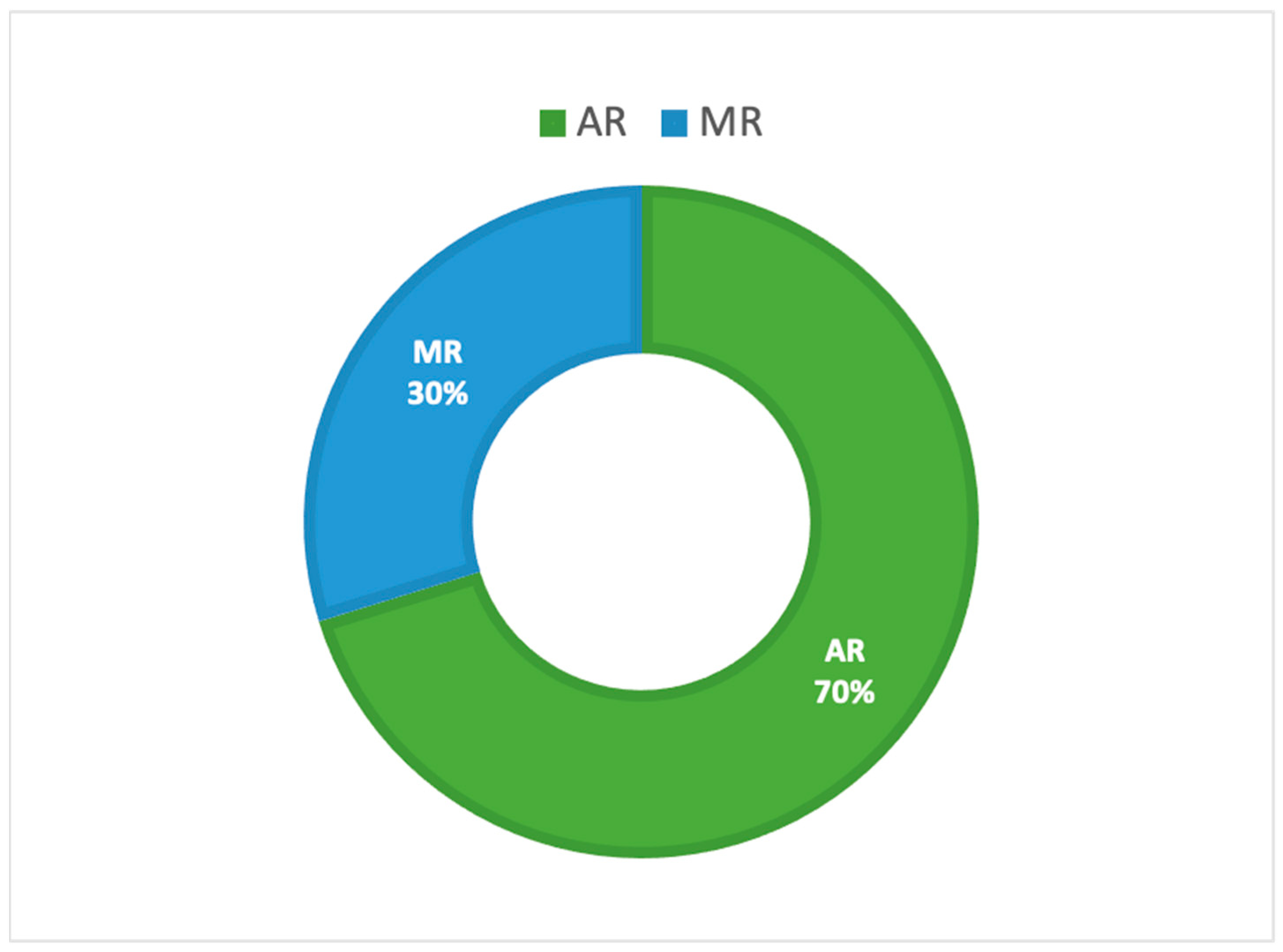

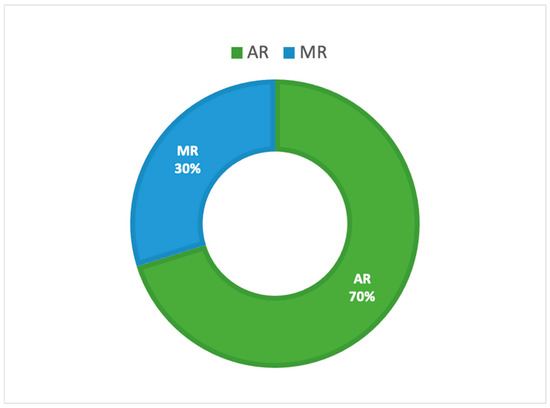

In terms of visualization technology (Figure 6), two approaches, AR and MR, were used for applications in a medical and healthcare context. More specifically, AR was the most common, used in 33 research papers (70%), while MR was used in only 14 contributions (30%).

Figure 6.

Reviewed publications related to HoloLens 2 research by types of visualization technologies.
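The percentages quoted for the publication breakdowns follow directly from the counts over the 47 included studies. The sketch below recomputes them for the breakdowns by year, publication type and visualization technology; rounding to the nearest integer is an assumption about how the reported shares were obtained, and the helper name `share` is illustrative.

```python
TOTAL_STUDIES = 47

# Counts reported in the text for each breakdown.
by_year = {2020: 3, 2021: 19, 2022: 25}
by_publication_type = {"research article": 36, "conference proceedings": 11}
by_technology = {"AR": 33, "MR": 14}


def share(count, total=TOTAL_STUDIES):
    """Percentage of the included studies, rounded to the nearest integer."""
    return round(100 * count / total)


for breakdown in (by_year, by_publication_type, by_technology):
    # Every breakdown should account for all included studies.
    assert sum(breakdown.values()) == TOTAL_STUDIES
    print({label: f"{share(count)}%" for label, count in breakdown.items()})
```

For the publication type and visualization technology breakdowns, this reproduces the reported 77%/23% and 70%/30% splits.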

The results of our review show that in most of the works, information on study validation is missing or poorly described. However, we believe it is appropriate to report, where available, some details on how the HoloLens 2 performance assessment was carried out.

5. Discussion

Our paper aims to present the state-of-the-art applications of the Microsoft® HoloLens 2 in a medical and healthcare context. This study reviewed academic papers that proved the applicability and feasibility of HoloLens 2 in a medical and healthcare context since 2019.

Although important benefits have already been identified from using the HoloLens 2 for medical use and vast improvements in eXtended Reality technologies have been achieved, some issues still need to be considered and resolved.

Indeed, although augmented reality has demonstrated great potential in clinical and healthcare contexts, its execution has been somewhat disappointing.

Some of the main technical limitations of today’s AR headsets are the limited field of view (FoV) in which overlays can be displayed and the limited battery life. In addition to standard AR display-related performance, other characteristics such as ergonomics, mechanical design and the total weight of the headset play a crucial role in the acceptance of an AR HMD. Indeed, the HMD design itself can cause a bad user experience because of the limited FoV and the extra weight on the user’s head. This is becoming less of an issue thanks to rapid improvements in HMD design [112].

A recently published work [113] describes the ergonomic requirements that impact the mechanical design of the AR HMDs, suggesting the possible innovative solutions and how these solutions have been used to implement the AR headset in a clinical context.

HoloLens 2 is characterized by improved comfort compared with the alternatives. Indeed, it is lighter to wear, easier to put on and take off, and has a more balanced center of gravity and improved heat management, so that it fits a greater number of head shapes and sizes more comfortably [114]. In more detail, the device is well balanced because the battery sits at the rear and the display at the front. The carefully studied weight distribution means that the device does not touch the user’s nose but rests on the forehead.

Despite the ergonomic and design improvements, many people still report experiencing cybersickness symptoms from AR HMD use [115,116,117].

Cybersickness is a term that identifies the cluster of symptoms that a user experiences during or after exposure to an immersive environment [118]. A physiological response to an unusual sensory stimulus, similar to motion sickness, characterizes this phenomenon, whose incidence and degree of intensity vary based on the exposure duration and nature of the virtual content and display technology [116]. The integration of the real and virtual environments in AR devices should reduce the adverse health effects that the user experienced in VR applications, such as blurred vision, disorientation and cybersickness [119].

In HoloLens devices, the precise 3D models presented, as well as the hands-free nature and ability to manipulate holographic images in real space, make this technology suitable for use in health science and medical education. In addition, based on scientific results [117], eyestrain seems to be the most common and prominent symptom caused by using the HoloLens, but it appeared less frequent and milder than in comparable virtual reality simulators. Innovative research on how to alleviate these symptoms would certainly be beneficial for allowing the prolonged use of these devices.

Another element of particular interest is to investigate how older adults interacted with this increasingly prevalent form of consumer immersive eXtended Reality technology to support Enhanced Activities of Daily Living (EADLs) and whether older adults’ psychological perception of technology is different compared to younger adults. Despite it being scientifically proven that older adults are more sensitive to simulator or cybersickness, the relationship between age effect and cybersickness may be complex [120,121].

Gender can be considered an additional relevant factor in the evaluation of eXtended Reality experiences. Although older women have been reported to be especially susceptible to simulator sickness or cybersickness, gender effects in the literature are inconsistent (for a review, see [114]).

Another key point regarding the use of HoloLens in combination with XR modalities is how to improve the holographic experience by providing the user with haptic feedback. Haptic feedback means that whenever the user virtually touches a projected hologram, he/she has the sensation of a physical touch, which depends on the inclination of the object and of the user’s fingers. The HoloLens 2 headset makes it possible to create a tactile virtual world for users. Indeed, within holograms, audio effects give users the sense of pressing a button or flipping a switch.

In addition, the HoloLens 2 has higher hand-tracking precision than other Windows-based devices, and a development suite created for XR (Interhaptics [122]) provides solid hand interaction performance and can optimize the immersive experience for the end user.

The lack of guidelines, protocols and standardization in using HMD devices as well as poor information on study validation represent the most critical aspects in describing the feasibility and applicability of the HoloLens 2.

One potential direction for this research field is machine learning (ML). Indeed, considering the increasing adoption of machine learning in all medical sectors, and in particular in medical image processing, such methods are likely to be applied to OST-HMD solutions [56].

The wealth of information that all OST-HMD systems record (video images, gesture-based interaction data, eye tracking and generated surface meshes) could provide rich training data for ML algorithms. Neural networks (NNs) in particular, a leading ML method for image recognition, translation, speech detection, spelling correction and many other applications, would offer great opportunities on AR/MR devices.

More specifically, the HoloLens 2 provides built-in gaze tracking, thus offering innovative HCI applications that still need to be explored, especially in a surgical setup. The creation of accessible databases with relevant user data, which can then serve as inputs for ML algorithms, could represent a fundamental step in accelerating ML research in the surgical field.

In conclusion, the results provided in this review could highlight the potential and limitations of the HoloLens 2-based innovative solutions and bring focus to emerging research topics, such as telemedicine, remote control and motor rehabilitation.

Despite the aforementioned limitations, the integration of this technology into clinical workflows, when properly developed and validated, could bring significant benefits such as improved outcomes and reduced costs [123], as well as a decrease in the time physicians spend recording health parameters.

6. Conclusions

This systematic review provides state-of-the-art applications of the MS HoloLens 2 in the medical and healthcare scenario. It presents a thorough examination of the different studies conducted since 2019, focusing on HoloLens 2 clinical sub-field applications, device functionalities provided to users, software/platform/framework used, as well as the study validation. Considering the huge potential application of this technology, also demonstrated in the pandemic context of COVID-19, this systematic literature review aims to prove the feasibility and applicability of HoloLens 2 in a medical and healthcare context as well as to highlight the limitations in the use of this innovative approach and bring focus to emerging research topics, such as telemedicine, remote control and motor rehabilitation.

Funding

This work has been funded by the SIMpLE (Smart solutIons for health Monitoring and independent mobiLity for Elderly and disable people) project (Cod. SIN_00031—CUP B69G14000180008), a Smart Cities and Communities and Social Innovation project, funded by the Italian Ministry of Research and Education (MIUR).

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

Not applicable.

Conflicts of Interest

The author declares no conflict of interest.

References

- Demystifying the Virtual Reality Landscape. Available online: https://www.intel.com/content/www/us/en/tech-tips-and-tricks/virtual-reality-vs-augmented-reality.html (accessed on 22 September 2021).

- Makhataeva, Z.; Varol, H.A. Augmented reality for robotics: A review. Robotics 2020, 9, 21. [Google Scholar] [CrossRef]

- Hu, H.Z.; Feng, X.B.; Shao, Z.W.; Xie, M.; Xu, S.; Wu, X.H.; Ye, Z.W. Application and Prospect of Mixed Reality Technology in Medical Field. Curr. Med. Sci. 2019, 39, 1–6. [Google Scholar] [CrossRef]

- Morimoto, T.; Kobayashi, T.; Hirata, H.; Otani, K.; Sugimoto, M.; Tsukamoto, M.; Yoshihara, T.; Ueno, M.; Mawatari, M. XR (Extended Reality: Virtual Reality, Augmented Reality, Mixed Reality) Technology in Spine Medicine: Status Quo and Quo Vadis. J. Clin. Med. 2022, 11, 470. [Google Scholar] [CrossRef] [PubMed]

- Morimoto, T.; Hirata, H.; Ueno, M.; Fukumori, N.; Sakai, T.; Sugimoto, M.; Kobayashi, T.; Tsukamoto, M.; Yoshihara, T.; Toda, Y.; et al. Digital Transformation Will Change Medical Education and Rehabilitation in Spine Surgery. Medicina 2022, 58, 508. [Google Scholar] [CrossRef] [PubMed]

- Liu, Y.; Dong, H.; Zhang, L.; El Saddik, A. Technical evaluation of HoloLens for multimedia: A first look. IEEE Multimed. 2018, 25, 8–18. [Google Scholar] [CrossRef]

- Microsoft HoloLens Docs. Available online: https://docs.microsoft.com/en-us/hololens/ (accessed on 22 September 2022).

- Microsoft HoloLens (1st Gen) Docs. Available online: https://docs.microsoft.com/en-us/hololens/hololens1-basic-usage (accessed on 22 September 2022).

- Microsoft HoloLens2 Docs. Available online: https://www.microsoft.com/it-it/hololens (accessed on 22 September 2022).

- Microsoft HoloLens vs Microsoft HoloLens 2. Available online: https://versus.com/en/microsoft-hololens-vs-microsoft-hololens-2#group_features (accessed on 22 September 2022).

- Gasmi, A.; Benlamri, R. Augmented reality, virtual reality and new age technologies demand escalates amid COVID-19. In Novel AI and Data Science Advancements for Sustainability in the Era of COVID-19; Academic Press: Cambridge, MA, USA, 2022; pp. 89–111. [Google Scholar]

- Cartucho, J.; Shapira, D.; Ashrafian, H.; Giannarou, S. Multimodal mixed reality visualisation for intraoperative surgical guidance. Int. J. Comput. Assist. Radiol. Surg. 2020, 15, 819–826. [Google Scholar] [CrossRef]

- Zafar, S.; Zachar, J.J. Evaluation of HoloHuman augmented reality application as a novel educational tool in dentistry. Eur. J. Dent. Educ. 2020, 24, 259–265. [Google Scholar] [CrossRef]

- Hanna, M.G.; Ahmed, I.; Nine, J.; Prajapati, S.; Pantanowitz, L. Augmented reality technology using Microsoft HoloLens in anatomic pathology. Arch. Pathol. Lab. Med. 2018, 142, 638–644. [Google Scholar] [CrossRef]

- Moher, D.; Liberati, A.; Tetzlaff, J.; Altman, D.G. Preferred reporting items for systematic reviews and meta-analyses: The PRISMA statement. BMJ 2009, 339, b2535. [Google Scholar] [CrossRef]

- Zhu, T.; Jiang, S.; Yang, Z.; Zhou, Z.; Li, Y.; Ma, S.; Zhuo, J. A neuroendoscopic navigation system based on dual-mode augmented reality for minimally invasive surgical treatment of hypertensive intracerebral hemorrhage. Comput. Biol. Med. 2022, 140, 105091. [Google Scholar] [CrossRef] [PubMed]

- Wolf, E.; Dollinger, N.; Mal, D.; Wienrich, C.; Botsch, M.; Latoschik, M.E. Body Weight Perception of Females using Photorealistic Avatars in Virtual and Augmented Reality. In Proceedings of the 2020 IEEE International Symposium on Mixed and Augmented Reality, ISMAR 2020, Recife/Porto de Galinhas, Brazil, 9–13 November 2020; pp. 462–473. [Google Scholar]

- van Lopik, K.; Sinclair, M.; Sharpe, R.; Conway, P.; West, A. Developing augmented reality capabilities for industry 4.0 small enterprises: Lessons learnt from a content authoring case study. Comput. Ind. 2020, 117, 103208. [Google Scholar] [CrossRef]

- Velazco-Garcia, J.D.; Navkar, N.V.; Balakrishnan, S.; Younes, G.; Abi-Nahed, J.; Al-Rumaihi, K.; Darweesh, A.; Elakkad, M.S.M.; Al-Ansari, A.; Christoforou, E.G.; et al. Evaluation of how users interface with holographic augmented reality surgical scenes: Interactive planning MR-Guided prostate biopsies. Int. J. Med. Robot. Comput. Assist. Surg. 2021, 17, e2290. [Google Scholar] [CrossRef] [PubMed]

- Pezzera, M.; Chitti, E.; Borghese, N.A. MIRARTS: A mixed reality application to support postural rehabilitation. In Proceedings of the 2020 IEEE 8th International Conference on Serious Games and Applications for Health, SeGAH 2020, Vancouver, BC, Canada, 12–14 August 2020. [Google Scholar]

- Kumar, K.; Groom, K.; Martin, L.; Russell, G.K.; Elkin, S.L. Educational opportunities for postgraduate medical trainees during the COVID-19 pandemic: Deriving value from old, new and emerging ways of learning. Postgrad. Med. J. 2022, 98, 328–330. [Google Scholar] [CrossRef] [PubMed]

- Yamazaki, A.; Ito, T.; Sugimoto, M.; Yoshida, S.; Honda, K.; Kawashima, Y.; Fujikawa, T.; Fujii, Y.; Tsutsumi, T. Patient-specific virtual and mixed reality for immersive, experiential anatomy education and for surgical planning in temporal bone surgery. Auris Nasus Larynx 2021, 48, 1081–1091. [Google Scholar] [CrossRef]

- Koyachi, M.; Sugahara, K.; Odaka, K.; Matsunaga, S.; Abe, S.; Sugimoto, M.; Katakura, A. Accuracy of Le Fort I osteotomy with combined computer-aided design/computer-aided manufacturing technology and mixed reality. Int. J. Oral Maxillofac. Surg. 2021, 50, 782–790. [Google Scholar] [CrossRef] [PubMed]

- Sugahara, K.; Koyachi, M.; Koyama, Y.; Sugimoto, M.; Matsunaga, S.; Odaka, K.; Abe, S.; Katakura, A. Mixed reality and three dimensional printed models for resection of maxillary tumor: A case report. Quant. Imaging Med. Surg. 2021, 11, 2187–2194. [Google Scholar] [CrossRef] [PubMed]

- Aoki, T.; Koizumi, T.; Sugimoto, M.; Murakami, M. Holography-guided percutaneous puncture technique for selective near-infrared fluorescence-guided laparoscopic liver resection using mixed-reality wearable spatial computer. Surg. Oncol. 2020, 35, 476–477. [Google Scholar] [CrossRef] [PubMed]

- Kostov, G.; Wolfartsberger, J. Designing a Framework for Collaborative Mixed Reality Training. Procedia Comput. Sci. 2022, 200, 896–903. [Google Scholar] [CrossRef]

- Sato, Y.; Sugimoto, M.; Tanaka, Y.; Suetsugu, T.; Imai, T.; Hatanaka, Y.; Matsuhashi, N.; Takahashi, T.; Yamaguchi, K.; Yoshida, K. Holographic image-guided thoracoscopic surgery: Possibility of usefulness for esophageal cancer patients with abnormal artery. Esophagus 2020, 17, 508–511. [Google Scholar] [CrossRef] [PubMed]

- Yoshida, S.; Sugimoto, M.; Fukuda, S.; Taniguchi, N.; Saito, K.; Fujii, Y. Mixed reality computed tomography-based surgical planning for partial nephrectomy using a head-mounted holographic computer. Int. J. Urol. 2019, 26, 681–682. [Google Scholar] [CrossRef]

- Shimada, M.; Kurihara, K.; Tsujii, T. Prototype of an Augmented Reality System to Support Animal Surgery using HoloLens 2. In Proceedings of the LifeTech 2022—IEEE 4th Global Conference on Life Sciences and Technologies, Osaka, Japan, 7–9 March 2022; pp. 335–337. [Google Scholar]

- Matsuhashi, K.; Kanamoto, T.; Kurokawa, A. Thermal model and countermeasures for future smart glasses. Sensors 2020, 20, 1446. [Google Scholar] [CrossRef] [PubMed]

- Vaz De Carvalho, C. Virtual Experiential Learning in Engineering Education. In Proceedings of the Frontiers in Education Conference, FIE, Covington, KY, USA, 16–19 October 2019. [Google Scholar]

- Hammady, R.; Ma, M.; Strathearn, C. User experience design for mixed reality: A case study of HoloLens in museum. Int. J. Technol. Mark. 2019, 13, 354–375. [Google Scholar] [CrossRef]

- Walko, C.; Maibach, M.J. Flying a helicopter with the HoloLens as head-mounted display. Opt. Eng. 2021, 60, 103103. [Google Scholar] [CrossRef]

- Dan, Y.; Shen, Z.; Xiao, J.; Zhu, Y.; Huang, L.; Zhou, J. HoloDesigner: A mixed reality tool for on-site design. Autom. Constr. 2021, 129, 103808. [Google Scholar] [CrossRef]

- Hertel, J.; Steinicke, F. Augmented reality for maritime navigation assistance—Egocentric depth perception in large distance outdoor environments. In Proceedings of the 2021 IEEE Conference on Virtual Reality and 3D User Interfaces, VR 2021, Lisboa, Portugal, 27 March–1 April 2021; pp. 122–130. [Google Scholar]

- Harborth, D.; Kümpers, K. Intelligence augmentation: Rethinking the future of work by leveraging human performance and abilities. Virtual Real. 2021, 26, 849–870. [Google Scholar] [CrossRef]

- de Boeck, M.; Vaes, K. Structuring human augmentation within product design. Proc. Des. Soc. 2021, 1, 2731–2740. [Google Scholar] [CrossRef]

- Shao, Q.; Sniffen, A.; Blanchet, J.; Hillis, M.E.; Shi, X.; Haris, T.K.; Liu, J.; Lamberton, J.; Malzkuhn, M.; Quandt, L.C.; et al. Teaching American Sign Language in Mixed Reality. Proc. ACM Interact. Mob. Wearable Ubiquitous Technol. 2020, 4, 152. [Google Scholar] [CrossRef]

- Jin, Y.; Ma, M.; Liu, Y. Interactive Narrative in Augmented Reality: An Extended Reality of the Holocaust. In Virtual, Augmented and Mixed Reality. Industrial and Everyday Life Applications; Lecture Notes in Computer Science (including subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics); Springer: Cham, Switzerland, 2020; pp. 249–269. [Google Scholar]

- Nguyen, V.; Rupavatharam, S.; Liu, L.; Howard, R.; Gruteser, M. HandSense: Capacitive coupling-based dynamic, micro finger gesture recognition. In Proceedings of the SenSys 2019, 17th Conference on Embedded Networked Sensor Systems, New York, NY, USA, 10–13 November 2019; pp. 285–297. [Google Scholar]

- Lopez, M.A.; Terron, S.; Lombardo, J.M.; Gonzalez-Crespo, R. Towards a solution to create, test and publish mixed reality experiences for occupational safety and health learning: Training-MR. Int. J. Interact. Multimed. Artif. Intell. 2021, 7, 212–223. [Google Scholar] [CrossRef]

- Moghaddam, M.; Wilson, N.C.; Modestino, A.S.; Jona, K.; Marsella, S.C. Exploring augmented reality for worker assistance versus training. Adv. Eng. Inform. 2021, 50, 101410. [Google Scholar] [CrossRef]

- Maier, W.; Rothmund, J.; Möhring, H.-C.; Dang, P.-D.; Hoffarth, E.; Zinn, B.; Wyrwal, M. Experiencing the structure and features of a machine tool with mixed reality. Procedia CIRP 2022, 106, 244–249. [Google Scholar] [CrossRef]

- De Paolis, L.T.; De Luca, V. The effects of touchless interaction on usability and sense of presence in a virtual environment. Virtual Real. 2022. [Google Scholar] [CrossRef]

- Liao, H.; Dong, W.; Zhan, Z. Identifying map users with eye movement data from map-based spatial tasks: User privacy concerns. Cartogr. Geogr. Inf. Sci. 2022, 49, 50–69. [Google Scholar] [CrossRef]

- Nowak, A.; Zhang, Y.; Romanowski, A.; Fjeld, M. Augmented Reality with Industrial Process Tomography: To Support Complex Data Analysis in 3D Space. In Proceedings of the UbiComp/ISWC 2021—Adjunct Proceedings of the 2021 ACM International Joint Conference on Pervasive and Ubiquitous Computing and Proceedings of the 2021 ACM International Symposium on Wearable Computers, Virtual, 21–26 September 2021; pp. 56–58. [Google Scholar]

- Woodward, J.; Alemu, F.; López Adames, N.E.; Anthony, L.; Yip, J.C.; Ruiz, J. “It Would Be Cool to Get Stampeded by Dinosaurs”: Analyzing Children’s Conceptual Model of AR Headsets through Co-Design. In Proceedings of the Conference on Human Factors in Computing Systems, New Orleans, LA, USA, 29 April–5 May 2022. [Google Scholar]

- Cetinsaya, B.; Neumann, C.; Reiners, D. Using Direct Volume Rendering for Augmented Reality in Resource-constrained Platforms. In Proceedings of the 2022 IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops, VRW 2022, Christchurch, New Zealand, 12–16 March 2022; pp. 768–769. [Google Scholar]

- Nishi, K.; Fujibuchi, T.; Yoshinaga, T. Development and evaluation of the effectiveness of educational material for radiological protection that uses augmented reality and virtual reality to visualise the behaviour of scattered radiation. J. Radiol. Prot. 2022, 42, 011506. [Google Scholar] [CrossRef]

- Ito, K.; Sugimoto, M.; Tsunoyama, T.; Nagao, T.; Kondo, H.; Nakazawa, K.; Tomonaga, A.; Miyake, Y.; Sakamoto, T. A trauma patient care simulation using extended reality technology in the hybrid emergency room system. J. Trauma Acute Care Surg. 2021, 90, e108–e112. [Google Scholar] [CrossRef]

- Iizuka, K.; Sato, Y.; Imaizumi, Y.; Mizutani, T. Potential Efficacy of Multimodal Mixed Reality in Epilepsy Surgery. Oper. Neurosurg. 2021, 20, 276–281. [Google Scholar] [CrossRef]

- Doughty, M.; Ghugre, N.R.; Wright, G.A. Augmenting Performance: A Systematic Review of Optical See-Through Head-Mounted Displays in Surgery. J. Imaging 2022, 8, 203. [Google Scholar] [CrossRef]

- Glass Enterprise Edition 2. Available online: https://www.google.com/glass/tech-specs/ (accessed on 22 September 2022).

- Magic Leap 1. Available online: https://www.magicleap.com/device (accessed on 22 September 2022).

- Magic Leap 2. Available online: https://ml1-developer.magicleap.com/en-us/home (accessed on 22 September 2022).

- Birlo, M.; Edwards, P.J.E.; Clarkson, M.; Stoyanov, D. Utility of optical see-through head mounted displays in augmented reality-assisted surgery: A systematic review. Med. Image Anal. 2022, 77, 102361. [Google Scholar] [CrossRef]

- Koop, M.M.; Rosenfeldt, A.B.; Owen, K.; Penko, A.L.; Streicher, M.C.; Albright, A.; Alberts, J.L. The Microsoft HoloLens 2 Provides Accurate Measures of Gait, Turning, and Functional Mobility in Healthy Adults. Sensors 2022, 22, 2009. [Google Scholar] [CrossRef]

- Wang, L.; Zhao, Z.; Wang, G.; Zhou, J.; Zhu, H.; Guo, H.; Huang, H.; Yu, M.; Zhu, G.; Li, N.; et al. Application of a three-dimensional visualization model in intraoperative guidance of percutaneous nephrolithotomy. Int. J. Urol. 2022, 29, 838–844. [Google Scholar] [CrossRef]

- Liu, X.; Sun, J.; Zheng, M.; Cui, X. Application of Mixed Reality Using Optical See-Through Head-Mounted Displays in Transforaminal Percutaneous Endoscopic Lumbar Discectomy. BioMed Res. Int. 2021, 2021, 9717184. [Google Scholar] [CrossRef]

- Eom, S.; Kim, S.; Rahimpour, S.; Gorlatova, M. AR-Assisted Surgical Guidance System for Ventriculostomy. In Proceedings of the 2022 IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops, VRW 2022, Christchurch, New Zealand, 12–16 March 2022; pp. 402–405. [Google Scholar]

- Kitagawa, M.; Sugimoto, M.; Haruta, H.; Umezawa, A.; Kurokawa, Y. Intraoperative holography navigation using a mixed-reality wearable computer during laparoscopic cholecystectomy. Surgery 2022, 171, 1006–1013. [Google Scholar] [CrossRef] [PubMed]

- Doughty, M.; Ghugre, N.R. Head-Mounted Display-Based Augmented Reality for Image-Guided Media Delivery to the Heart: A Preliminary Investigation of Perceptual Accuracy. J. Imaging 2022, 8, 33. [Google Scholar] [CrossRef]

- Torabinia, M.; Caprio, A.; Fenster, T.B.; Mosadegh, B. Single Evaluation of Use of a Mixed Reality Headset for Intra-Procedural Image-Guidance during a Mock Laparoscopic Myomectomy on an Ex-Vivo Fibroid Model. Appl. Sci. 2022, 12, 563. [Google Scholar] [CrossRef]

- Gsaxner, C.; Pepe, A.; Schmalstieg, D.; Li, J.; Egger, J. Inside-out instrument tracking for surgical navigation in augmented reality. In Proceedings of the ACM Symposium on Virtual Reality Software and Technology, VRST, Osaka, Japan, 8–10 December 2021. [Google Scholar]

- García-Sevilla, M.; Moreta-Martinez, R.; García-Mato, D.; Pose-Diez-de-la-Lastra, A.; Pérez-Mañanes, R.; Calvo-Haro, J.A.; Pascau, J. Augmented reality as a tool to guide PSI placement in pelvic tumor resections. Sensors 2021, 21, 7824. [Google Scholar] [CrossRef]

- Amiras, D.; Hurkxkens, T.J.; Figueroa, D.; Pratt, P.J.; Pitrola, B.; Watura, C.; Rostampour, S.; Shimshon, G.J.; Hamady, M. Augmented reality simulator for CT-guided interventions. Eur. Radiol. 2021, 31, 8897–8902. [Google Scholar] [CrossRef] [PubMed]

- Park, B.J.; Hunt, S.J.; Nadolski, G.J.; Gade, T.P. Augmented reality improves procedural efficiency and reduces radiation dose for CT-guided lesion targeting: A phantom study using HoloLens 2. Sci. Rep. 2020, 10, 18620. [Google Scholar] [CrossRef] [PubMed]

- Benmahdjoub, M.; Niessen, W.J.; Wolvius, E.B.; Van Walsum, T. Virtual extensions improve perception-based instrument alignment using optical see-through devices. IEEE Trans. Vis. Comput. Graph. 2021, 27, 4332–4341. [Google Scholar] [CrossRef]

- Benmahdjoub, M.; Niessen, W.J.; Wolvius, E.B.; van Walsum, T. Multimodal markers for technology-independent integration of augmented reality devices and surgical navigation systems. Virtual Real. 2022. [Google Scholar] [CrossRef]

- Farshad, M.; Spirig, J.M.; Suter, D.; Hoch, A.; Burkhard, M.D.; Liebmann, F.; Farshad-Amacker, N.A.; Fürnstahl, P. Operator independent reliability of direct augmented reality navigated pedicle screw placement and rod bending. N. Am. Spine Soc. J. 2021, 8, 100084. [Google Scholar] [CrossRef]

- Doughty, M.; Singh, K.; Ghugre, N.R. SurgeonAssist-Net: Towards Context-Aware Head-Mounted Display-Based Augmented Reality for Surgical Guidance. In Medical Image Computing and Computer Assisted Intervention; Lecture Notes in Computer Science (including subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics); Springer: Cham, Switzerland, 2021; pp. 667–677. [Google Scholar]

- Nagayo, Y.; Saito, T.; Oyama, H. Augmented reality self-training system for suturing in open surgery: A randomized controlled trial. Int. J. Surg. 2022, 102, 106650. [Google Scholar] [CrossRef] [PubMed]

- Nagayo, Y.; Saito, T.; Oyama, H. A Novel Suture Training System for Open Surgery Replicating Procedures Performed by Experts Using Augmented Reality. J. Med. Syst. 2021, 45, 60. [Google Scholar] [CrossRef]

- Haxthausen, F.V.; Chen, Y.; Ernst, F. Superimposing holograms on real world objects using HoloLens 2 and its depth camera. Curr. Dir. Biomed. Eng. 2021, 7, 20211126. [Google Scholar] [CrossRef]

- Wierzbicki, R.; Pawłowicz, M.; Job, J.; Balawender, R.; Kostarczyk, W.; Stanuch, M.; Janc, K.; Skalski, A. 3D mixed-reality visualization of medical imaging data as a supporting tool for innovative, minimally invasive surgery for gastrointestinal tumors and systemic treatment as a new path in personalized treatment of advanced cancer diseases. J. Cancer Res. Clin. Oncol. 2022, 148, 237–243. [Google Scholar] [CrossRef] [PubMed]

- Brunzini, A.; Mandolini, M.; Caragiuli, M.; Germani, M.; Mazzoli, A.; Pagnoni, M. HoloLens 2 for Maxillofacial Surgery: A Preliminary Study. In Design Tools and Methods in Industrial Engineering II; Lecture Notes in Mechanical Engineering; Springer: Cham, Switzerland, 2022; pp. 133–140. [Google Scholar]

- Thabit, A.; Benmahdjoub, M.; van Veelen, M.L.C.; Niessen, W.J.; Wolvius, E.B.; van Walsum, T. Augmented reality navigation for minimally invasive craniosynostosis surgery: A phantom study. Int. J. Comput. Assist. Radiol. Surg. 2022, 17, 1453–1460. [Google Scholar] [CrossRef]

- Cercenelli, L.; Babini, F.; Badiali, G.; Battaglia, S.; Tarsitano, A.; Marchetti, C.; Marcelli, E. Augmented Reality to Assist Skin Paddle Harvesting in Osteomyocutaneous Fibular Flap Reconstructive Surgery: A Pilot Evaluation on a 3D-Printed Leg Phantom. Front. Oncol. 2021, 11, 804748. [Google Scholar] [CrossRef] [PubMed]

- Felix, B.; Kalatar, S.B.; Moatz, B.; Hofstetter, C.; Karsy, M.; Parr, R.; Gibby, W. Augmented Reality Spine Surgery Navigation: Increasing Pedicle Screw Insertion Accuracy for Both Open and Minimally Invasive Spine Surgeries. Spine 2022, 47, 865–872. [Google Scholar] [CrossRef]

- Tu, P.; Gao, Y.; Lungu, A.J.; Li, D.; Wang, H.; Chen, X. Augmented reality based navigation for distal interlocking of intramedullary nails utilizing Microsoft HoloLens 2. Comput. Biol. Med. 2021, 133, 104402. [Google Scholar] [CrossRef]

- Zhou, Z.; Yang, Z.; Jiang, S.; Zhuo, J.; Zhu, T.; Ma, S. Augmented reality surgical navigation system based on the spatial drift compensation method for glioma resection surgery. Med. Phys. 2022, 49, 3963–3979. [Google Scholar] [CrossRef] [PubMed]

- Ivanov, V.M.; Krivtsov, A.M.; Strelkov, S.V.; Kalakutskiy, N.V.; Yaremenko, A.I.; Petropavlovskaya, M.Y.; Portnova, M.N.; Lukina, O.V.; Litvinov, A.P. Intraoperative use of mixed reality technology in median neck and branchial cyst excision. Future Internet 2021, 13, 214. [Google Scholar] [CrossRef]

- Heinrich, F.; Schwenderling, L.; Joeres, F.; Hansen, C. 2D versus 3D: A Comparison of Needle Navigation Concepts between Augmented Reality Display Devices. In Proceedings of the 2022 IEEE Conference on Virtual Reality and 3D User Interfaces, VR 2022, Christchurch, New Zealand, 12–16 March 2022; pp. 260–269. [Google Scholar]

- Morita, S.; Suzuki, K.; Yamamoto, T.; Kunihara, M.; Hashimoto, H.; Ito, K.; Fujii, S.; Ohya, J.; Masamune, K.; Sakai, S. Mixed Reality Needle Guidance Application on Smartglasses Without Pre-procedural CT Image Import with Manually Matching Coordinate Systems. CardioVascular Interv. Radiol. 2022, 45, 349–356. [Google Scholar] [CrossRef] [PubMed]

- Mitani, S.; Sato, E.; Kawaguchi, N.; Sawada, S.; Sakamoto, K.; Kitani, T.; Sanada, T.; Yamada, H.; Hato, N. Case-specific three-dimensional hologram with a mixed reality technique for tumor resection in otolaryngology. Laryngoscope Investig. Otolaryngol. 2021, 6, 432–437. [Google Scholar] [CrossRef]

- Vávra, P.; Roman, J.; Zonča, P.; Ihnát, P.; Němec, M.; Kumar, J.; Habib, N.; El-Gendi, A. Recent Development of Augmented Reality in Surgery: A Review. J. Healthc. Eng. 2017, 2017, 4574172. [Google Scholar] [CrossRef]

- Zabcikova, M.; Koudelkova, Z.; Jasek, R.; Lorenzo Navarro, J.J. Recent advances and current trends in brain-computer interface research and their applications. Int. J. Dev. Neurosci. 2022, 82, 107–123. [Google Scholar] [CrossRef]

- van Dokkum, L.E.H.; Ward, T.; Laffont, I. Brain computer interfaces for neurorehabilitation-its current status as a rehabilitation strategy post-stroke. Ann. Phys. Rehabil. Med. 2015, 58, 3–8. [Google Scholar] [CrossRef] [PubMed]

- Palumbo, A.; Gramigna, V.; Calabrese, B.; Ielpo, N. Motor-imagery EEG-based BCIs in wheelchair movement and control: A systematic literature review. Sensors 2021, 21, 6285. [Google Scholar] [CrossRef] [PubMed]

- Kos’Myna, N.; Tarpin-Bernard, F. Evaluation and comparison of a multimodal combination of BCI paradigms and eye tracking with affordable consumer-grade hardware in a gaming context. IEEE Trans. Comput. Intell. AI Games 2013, 5, 150–154. [Google Scholar] [CrossRef]

- Amores, J.; Richer, R.; Zhao, N.; Maes, P.; Eskofier, B.M. Promoting relaxation using virtual reality, olfactory interfaces and wearable EEG. In Proceedings of the 2018 IEEE 15th International Conference on Wearable and Implantable Body Sensor Networks, BSN 2018, Las Vegas, NV, USA, 4–7 March 2018; pp. 98–101. [Google Scholar]

- Semertzidis, N.; Scary, M.; Andres, J.; Dwivedi, B.; Kulwe, Y.C.; Zambetta, F.; Mueller, F.F. Neo-Noumena: Augmenting Emotion Communication. In Proceedings of the Conference on Human Factors in Computing Systems, Honolulu, HI, USA, 25–30 April 2020. [Google Scholar]

- Kohli, V.; Tripathi, U.; Chamola, V.; Rout, B.K.; Kanhere, S.S. A review on Virtual Reality and Augmented Reality use-cases of Brain Computer Interface based applications for smart cities. Microprocess. Microsyst. 2022, 88, 104392. [Google Scholar] [CrossRef]

- Kosmyna, N.; Hu, C.Y.; Wang, Y.; Wu, Q.; Scheirer, C.; Maes, P. A Pilot Study using Covert Visuospatial Attention as an EEG-based Brain Computer Interface to Enhance AR Interaction. In Proceedings of the International Symposium on Wearable Computers, ISWC, Cancun, Mexico, 12–16 September 2020; pp. 43–47. [Google Scholar]

- Kosmyna, N.; Wu, Q.; Hu, C.Y.; Wang, Y.; Scheirer, C.; Maes, P. Assessing Internal and External Attention in AR using Brain Computer Interfaces: A Pilot Study. In Proceedings of the 2021 IEEE 17th International Conference on Wearable and Implantable Body Sensor Networks, BSN 2021, Athens, Greece, 27–30 July 2021. [Google Scholar]

- Wolf, J.; Lohmeyer, Q.; Holz, C.; Meboldt, M. Gaze comes in Handy: Predicting and preventing erroneous hand actions in ar-supported manual tasks. In Proceedings of the 2021 IEEE International Symposium on Mixed and Augmented Reality, ISMAR 2021, Bari, Italy, 4–8 October 2021; pp. 166–175. [Google Scholar]

- Wolf, E.; Fiedler, M.L.; Dollinger, N.; Wienrich, C.; Latoschik, M.E. Exploring Presence, Avatar Embodiment, and Body Perception with a Holographic Augmented Reality Mirror. In Proceedings of the 2022 IEEE Conference on Virtual Reality and 3D User Interfaces, VR 2022, Christchurch, New Zealand, 12–16 March 2022; pp. 350–359. [Google Scholar]

- Held, J.P.O.; Yu, K.; Pyles, C.; Bork, F.; Heining, S.M.; Navab, N.; Luft, A.R. Augmented reality-based rehabilitation of gait impairments: Case report. JMIR mHealth uHealth 2020, 8, e17804. [Google Scholar] [CrossRef] [PubMed]

- Wolf, J.; Wolfer, V.; Halbe, M.; Maisano, F.; Lohmeyer, Q.; Meboldt, M. Comparing the effectiveness of augmented reality-based and conventional instructions during single ECMO cannulation training. Int. J. Comput. Assist. Radiol. Surg. 2021, 16, 1171–1180. [Google Scholar] [CrossRef] [PubMed]

- Mill, T.; Parikh, S.; Allen, A.; Dart, G.; Lee, D.; Richardson, C.; Howell, K.; Lewington, A. Live streaming ward rounds using wearable technology to teach medical students: A pilot study. BMJ Simul. Technol. Enhanc. Learn. 2021, 7, 494–500. [Google Scholar] [CrossRef]

- Levy, J.B.; Kong, E.; Johnson, N.; Khetarpal, A.; Tomlinson, J.; Martin, G.F.; Tanna, A. The mixed reality medical ward round with the MS HoloLens 2: Innovation in reducing COVID-19 transmission and PPE usage. Future Healthc. J. 2021, 8, e127–e130. [Google Scholar] [CrossRef]

- Sivananthan, A.; Gueroult, A.; Zijlstra, G.; Martin, G.; Baheerathan, A.; Pratt, P.; Darzi, A.; Patel, N.; Kinross, J. Using Mixed Reality Headsets to Deliver Remote Bedside Teaching during the COVID-19 Pandemic: Feasibility Trial of HoloLens 2. JMIR Form. Res. 2022, 6, e35674. [Google Scholar] [CrossRef]

- Rafi, D.; Stackhouse, A.A.; Walls, R.; Dani, M.; Cowell, A.; Hughes, E.; Sam, A.H. A new reality: Bedside geriatric teaching in an age of remote learning. Future Healthc. J. 2021, 8, e714–e716. [Google Scholar] [CrossRef]

- Dolega-Dolegowski, D.; Proniewska, K.; Dolega-Dolegowska, M.; Pregowska, A.; Hajto-Bryk, J.; Trojak, M.; Chmiel, J.; Walecki, P.; Fudalej, P.S. Application of holography and augmented reality based technology to visualize the internal structure of the dental root—A proof of concept. Head Face Med. 2022, 18, 12. [Google Scholar] [CrossRef] [PubMed]

- Bui, D.T.; Barnett, T.; Hoang, H.; Chinthammit, W. Usability of augmented reality technology in tele-mentorship for managing clinical scenarios-A study protocol. PLoS ONE 2022, 17, e0266255. [Google Scholar] [CrossRef] [PubMed]

- Bala, L.; Kinross, J.; Martin, G.; Koizia, L.J.; Kooner, A.S.; Shimshon, G.J.; Hurkxkens, T.J.; Pratt, P.J.; Sam, A.H. A remote access mixed reality teaching ward round. Clin. Teach. 2021, 18, 386–390. [Google Scholar] [CrossRef] [PubMed]

- Mentis, H.M.; Avellino, I.; Seo, J. AR HMD for Remote Instruction in Healthcare. In Proceedings of the 2022 IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops, VRW 2022, Christchurch, New Zealand, 12–16 March 2022; pp. 437–440. [Google Scholar]

- Onishi, R.; Morisaki, T.; Suzuki, S.; Mizutani, S.; Kamigaki, T.; Fujiwara, M.; Makino, Y.; Shinoda, H. GazeBreath: Input Method Using Gaze Pointing and Breath Selection. In Proceedings of the Augmented Humans 2022, Kashiwa, Chiba, Japan, 13–15 March 2022; pp. 1–9. [Google Scholar]

- Johnson, P.B.; Jackson, A.; Saki, M.; Feldman, E.; Bradley, J. Patient posture correction and alignment using mixed reality visualization and the HoloLens 2. Med. Phys. 2022, 49, 15–22.

- Kurazume, R.; Hiramatsu, T.; Kamei, M.; Inoue, D.; Kawamura, A.; Miyauchi, S.; An, Q. Development of AR training systems for Humanitude dementia care. Adv. Robot. 2022, 36, 344–358.

- Matyash, I.; Kutzner, R.; Neumuth, T.; Rockstroh, M. Accuracy measurement of HoloLens2 IMUs in medical environments. Curr. Dir. Biomed. Eng. 2021, 7, 633–636.

- Xu, X.; Mangina, E.; Campbell, A.G. HMD-Based Virtual and Augmented Reality in Medical Education: A Systematic Review. Front. Virtual Real. 2021, 2, 692103.

- D’Amato, R.; Cutolo, F.; Badiali, G.; Carbone, M.; Lu, H.; Hogenbirk, H.; Ferrari, V. Key Ergonomics Requirements and Possible Mechanical Solutions for Augmented Reality Head-Mounted Displays in Surgery. Multimodal Technol. Interact. 2022, 6, 15.

- Weech, S.; Kenny, S.; Barnett-Cowan, M. Presence and Cybersickness in Virtual Reality Are Negatively Related: A Review. Front. Psychol. 2019, 10, 158.

- Rebenitsch, L.; Owen, C. Review on cybersickness in applications and visual displays. Virtual Real. 2016, 20, 101–125.

- Hughes, C.L.; Fidopiastis, C.; Stanney, K.M.; Bailey, P.S.; Ruiz, E. The Psychometrics of Cybersickness in Augmented Reality. Front. Virtual Real. 2020, 1, 602954.

- Vovk, A.; Wild, F.; Guest, W.; Kuula, T. Simulator Sickness in Augmented Reality Training Using the Microsoft HoloLens. In Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems, Montreal, QC, Canada, 21–26 April 2018; pp. 1–9.

- McCauley, M.E.; Sharkey, T.J. Cybersickness: Perception of self-motion in virtual environments. Presence: Teleoper. Virtual Environ. 1992, 1, 311–318.

- Moro, C.; Štromberga, Z.; Raikos, A.; Stirling, A. The effectiveness of virtual and augmented reality in health sciences and medical anatomy. Anat. Sci. Educ. 2017, 10, 549–559.

- Saredakis, D.; Szpak, A.; Birckhead, B.; Keage, H.A.D.; Rizzo, A.; Loetscher, T. Factors Associated With Virtual Reality Sickness in Head-Mounted Displays: A Systematic Review and Meta-Analysis. Front. Hum. Neurosci. 2020, 14, 96.

- Dilanchian, A.T.; Andringa, R.; Boot, W.R. A Pilot Study Exploring Age Differences in Presence, Workload, and Cybersickness in the Experience of Immersive Virtual Reality Environments. Front. Virtual Real. 2021, 2, 736793.

- Haptics for Virtual Reality (VR) and Mixed Reality (MR). Available online: https://www.interhaptics.com/products/haptics-for-vr-and-mr (accessed on 22 September 2021).

- Alberts, J.L.; Modic, M.T.; Udeh, B.L.; Zimmerman, N.; Cherian, K.; Lu, X.; Gray, R.; Figler, R.; Russman, A.; Linder, S.M. A Technology-Enabled Concussion Care Pathway Reduces Costs and Enhances Care. Phys. Ther. 2020, 100, 136–148.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).