Non-Local and Multi-Scale Mechanisms for Image Inpainting

Abstract

1. Introduction

- We address image inpainting for randomly placed missing regions that cover a large proportion of the image, and employ an ID-MRF loss to suppress the grid-shaped artifacts and color discrepancies caused by style loss and correlation loss.

- We combine the CAM with the DFB module so that the network generates precise, fine-grained content by borrowing features from distant spatial locations and extracting multi-scale features.

- Experiments on multiple benchmark datasets show that our method achieves competitive results.
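
As a rough illustration of what "borrowing features from distant spatial locations" can mean, the following NumPy sketch fills each missing feature vector with a softmax-weighted average of all known feature vectors, weighted by cosine similarity. It is a simplified stand-in under stated assumptions, not the CAM used in this work: the function name `borrow_features`, the 1×1 patch size, and the `temperature` parameter are hypothetical choices made for this example.

```python
import numpy as np


def borrow_features(features, mask, temperature=10.0):
    """Fill missing feature vectors from known ones via attention.

    features: float array of shape (H, W, C).
    mask: bool array of shape (H, W); True marks a missing location.
    temperature: hypothetical softmax scale (an assumption of this sketch).
    """
    h, w, c = features.shape
    flat = features.reshape(-1, c)
    missing = mask.reshape(-1)
    known = ~missing
    # Nothing to fill, or nothing to borrow from: return an unchanged copy.
    if not missing.any() or not known.any():
        return features.copy()

    # Cosine similarity between every missing and every known location.
    norms = np.linalg.norm(flat, axis=1, keepdims=True)
    unit = flat / np.maximum(norms, 1e-8)
    similarity = unit[missing] @ unit[known].T  # (n_missing, n_known)

    # Numerically stable softmax over the known locations.
    logits = temperature * similarity
    logits = logits - logits.max(axis=1, keepdims=True)
    weights = np.exp(logits)
    weights = weights / weights.sum(axis=1, keepdims=True)

    # Each missing vector becomes a convex combination of known vectors.
    out = flat.copy()
    out[missing] = weights @ flat[known]
    return out.reshape(h, w, c)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    feats = rng.normal(size=(8, 8, 16))
    hole = np.zeros((8, 8), dtype=bool)
    hole[2:6, 2:6] = True
    filled = borrow_features(feats, hole)
    print(filled.shape)
```

Because the weights span every known location, a missing position can draw on features from anywhere in the map, regardless of distance. Attention modules used for inpainting typically compare larger patches inside a learned feature space.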

2. Related Work

2.1. Non-Local Mechanisms

2.2. Multi-Scale Mechanisms

3. Proposed Methods

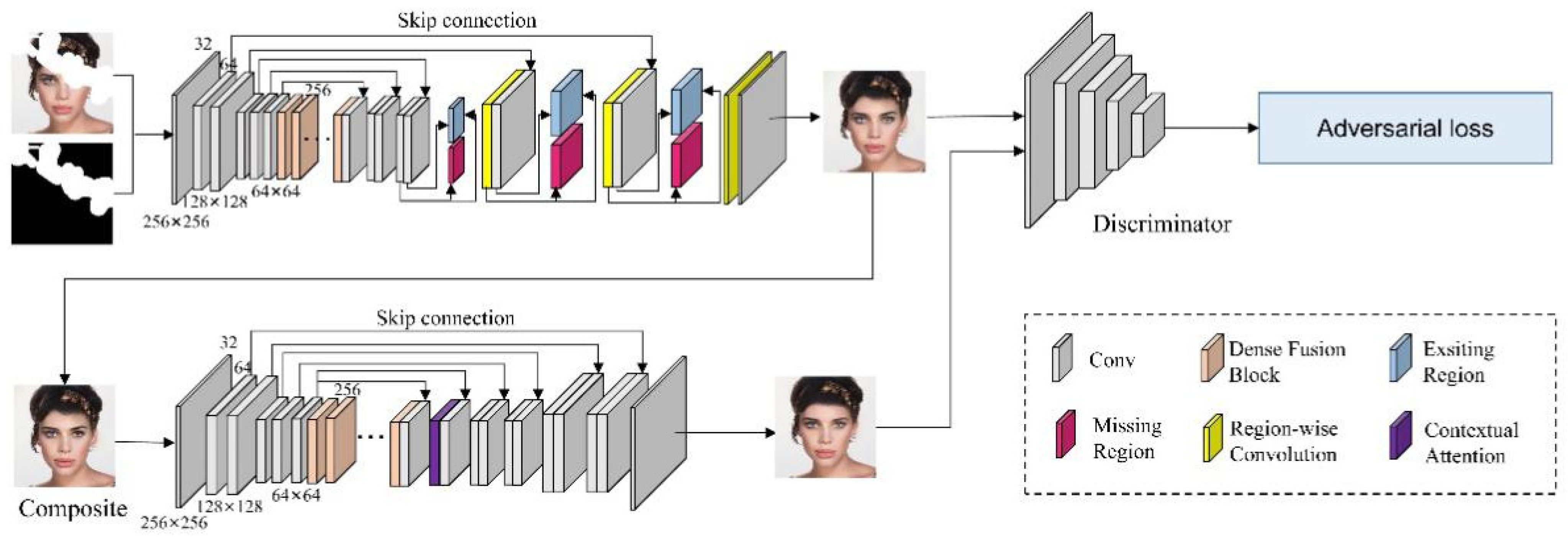

3.1. The Architecture of Our Framework

3.2. Contextual Attention Mechanism

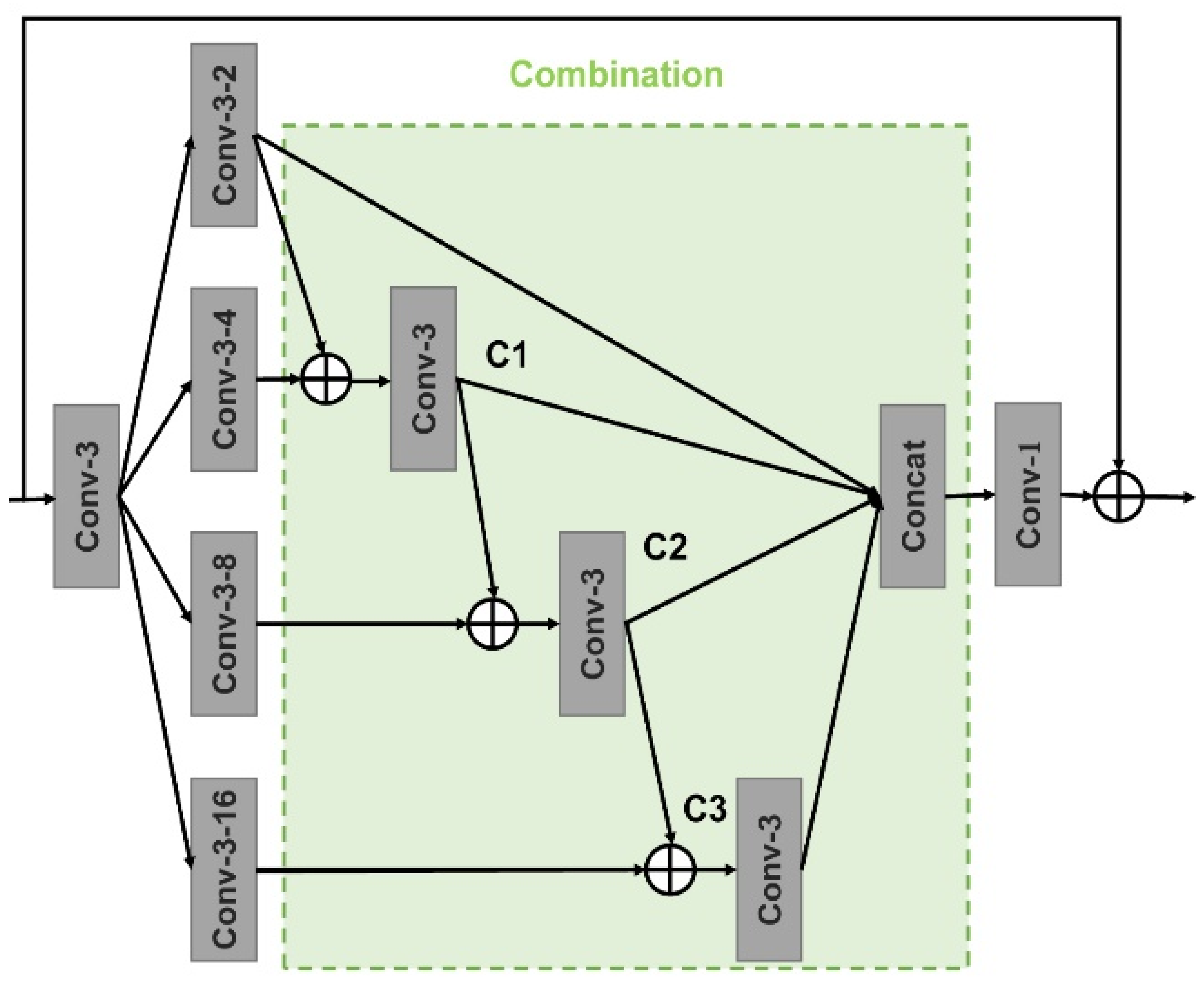

3.3. Dense Connection of Dilated Convolution

3.4. Loss Functions

3.4.1. Reconstruction Loss

3.4.2. ID-MRF Loss

3.4.3. Adversarial Loss

3.4.4. Overall Loss

4. Experiments

4.1. Datasets and Masks

4.2. Implementation Details

4.3. Comparative Experiments

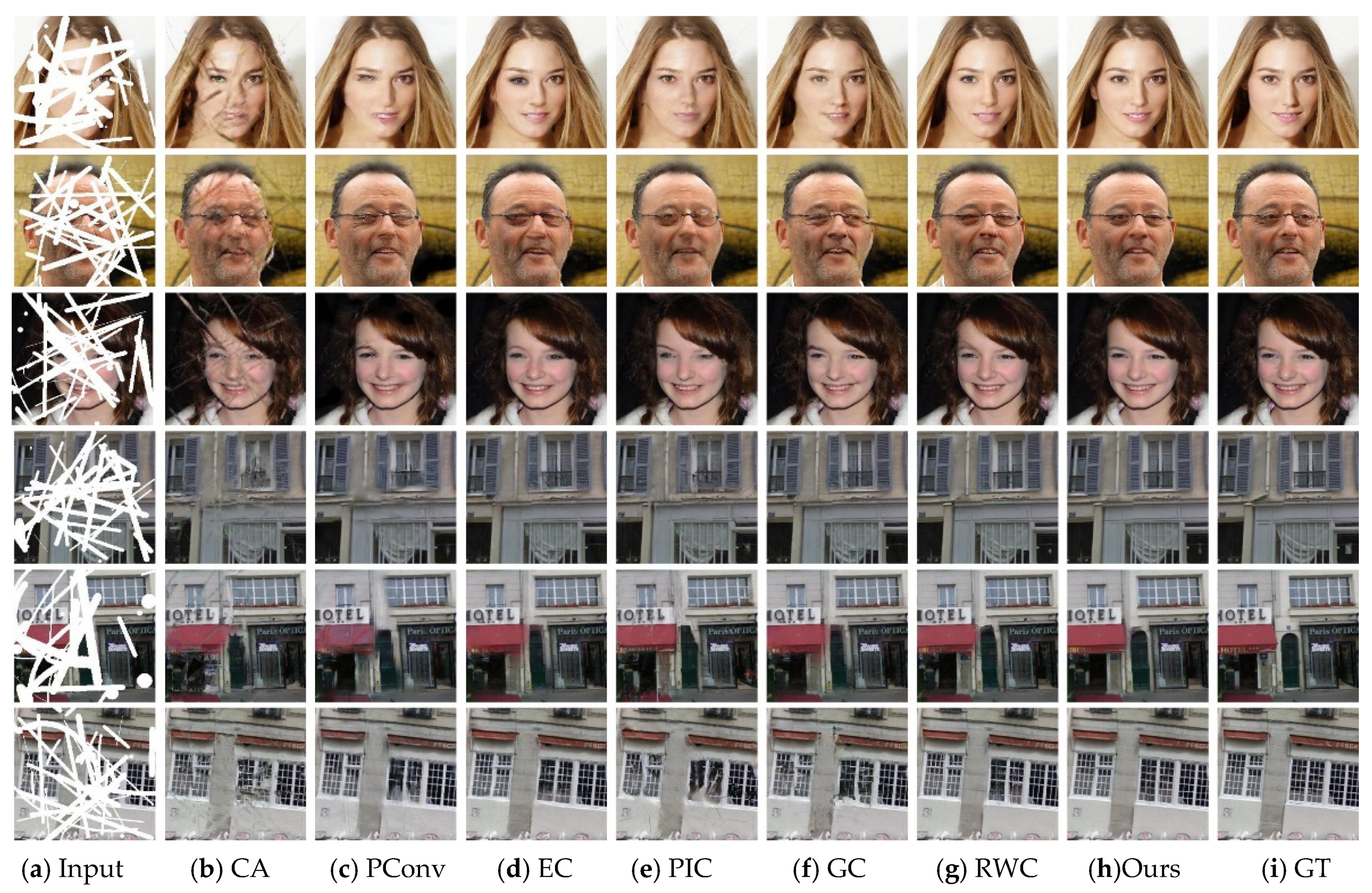

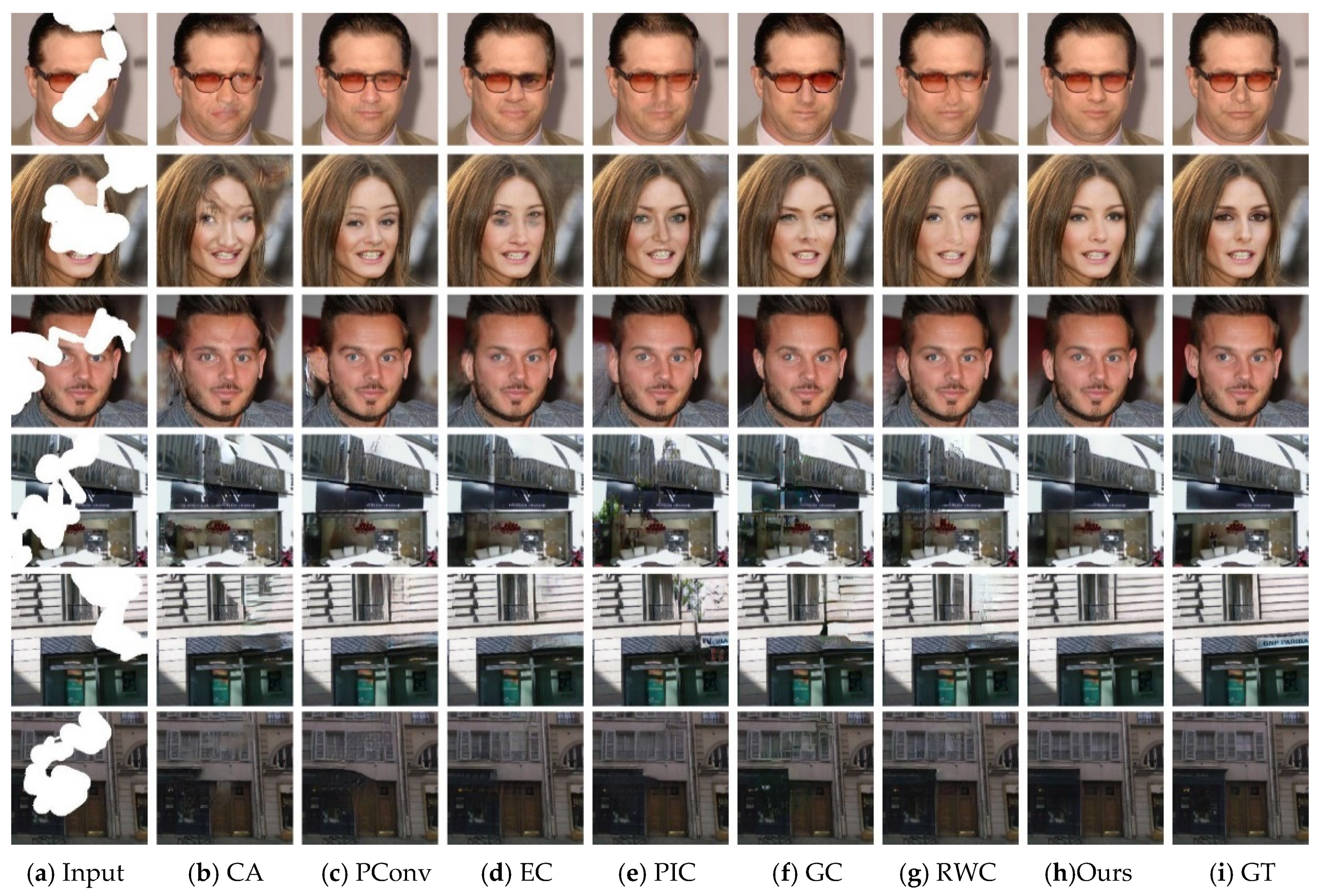

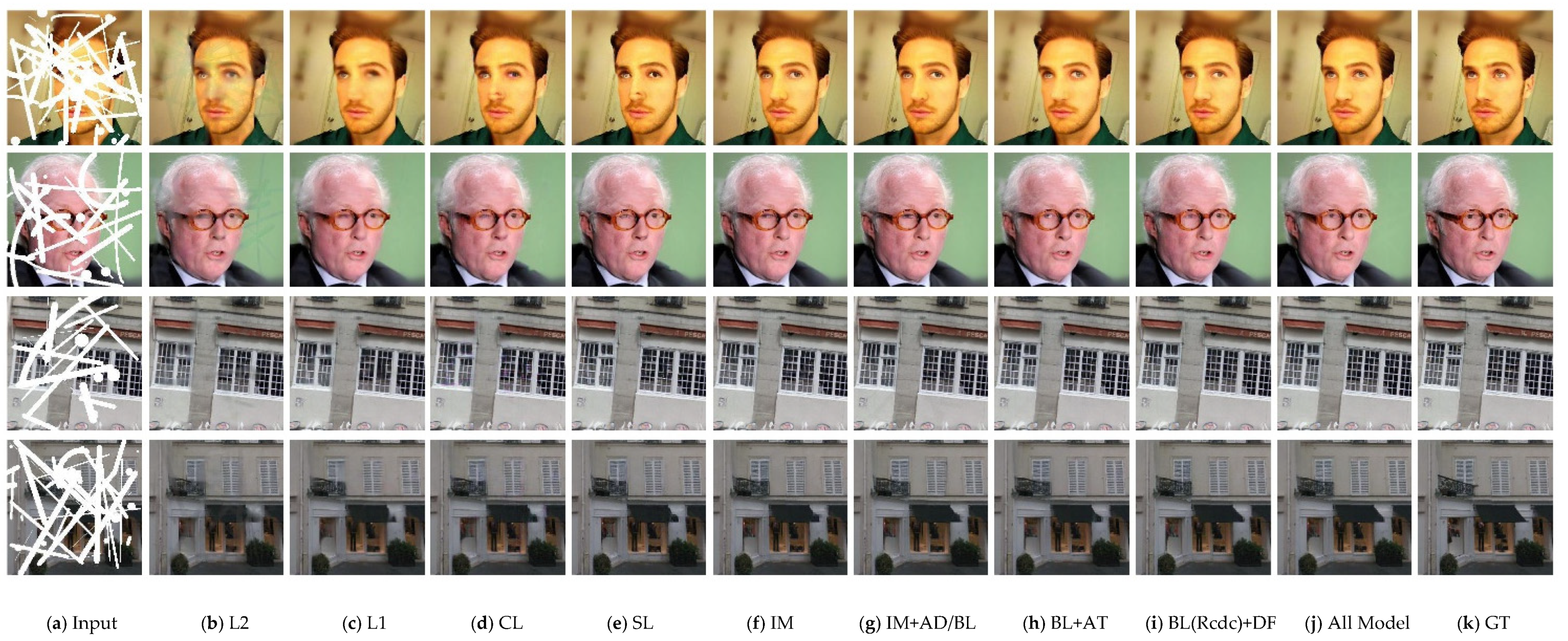

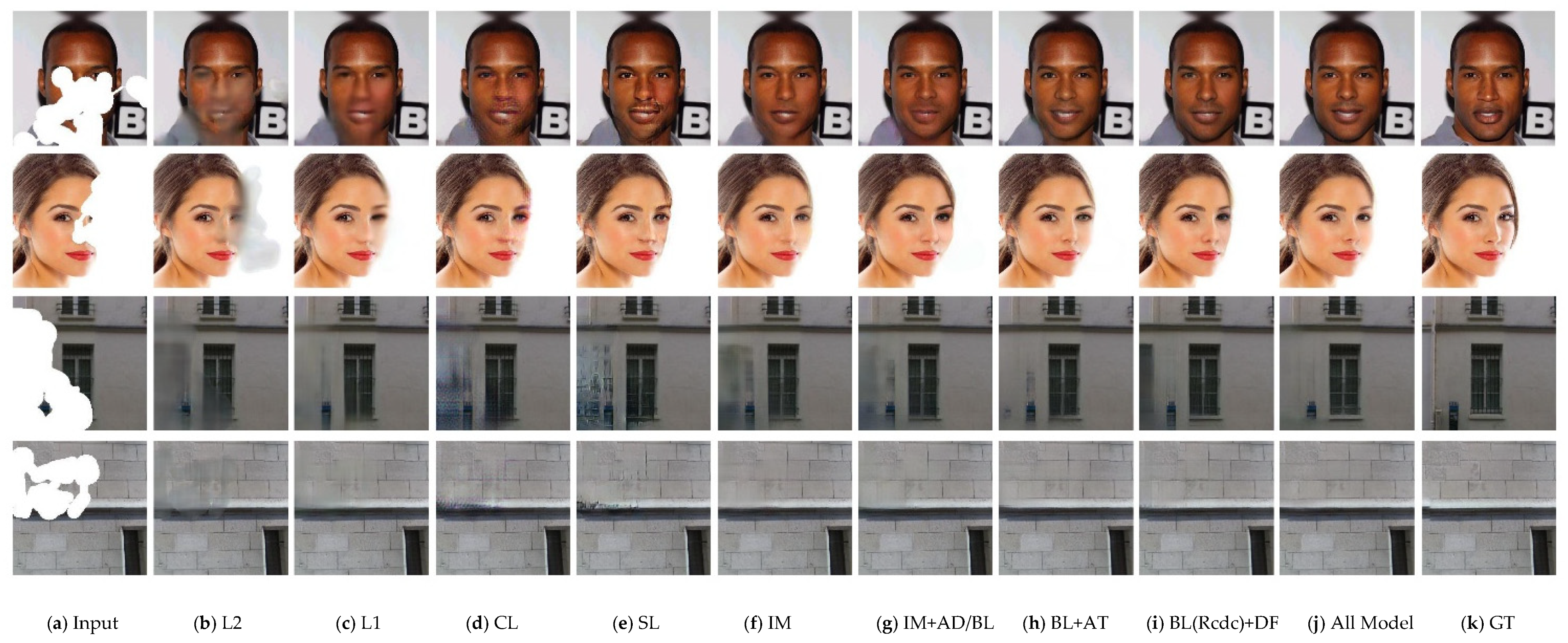

4.3.1. Qualitative Comparison

4.3.2. Quantitative Comparison

4.4. Ablation Studies

5. Conclusions and Future Work

Author Contributions

Funding

Conflicts of Interest


| Metric | Mask | CelebA-HQ | | | | | | | Paris StreetView | | | | | | |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| | | CA | PConv | EC | PIC | GC | RWC | Ours | CA | PConv | EC | PIC | GC | RWC | Ours |
| PSNR + | 0–10% | 34.89 | 34.24 | 34.58 | 34.69 | 39.28 | 41.76 | 42.25 | 35.30 | 34.20 | 34.56 | 34.02 | 37.85 | 41.52 | 42.19 |
| | 10–20% | 27.54 | 31.01 | 31.22 | 31.31 | 32.65 | 34.32 | 34.95 | 28.83 | 30.52 | 30.91 | 30.26 | 30.97 | 34.26 | 35.11 |
| | 20–30% | 24.14 | 28.21 | 28.58 | 28.61 | 29.07 | 30.46 | 31.18 | 25.56 | 27.67 | 28.13 | 27.30 | 27.01 | 30.15 | 31.12 |
| | 30–40% | 22.83 | 26.95 | 27.35 | 27.56 | 27.92 | 29.45 | 30.18 | 23.85 | 26.31 | 26.70 | 25.83 | 25.96 | 29.08 | 30.04 |
| SSIM + | 0–10% | 0.972 | 0.938 | 0.945 | 0.944 | 0.984 | 0.990 | 0.991 | 0.972 | 0.948 | 0.950 | 0.947 | 0.983 | 0.989 | 0.990 |
| | 10–20% | 0.904 | 0.908 | 0.917 | 0.912 | 0.950 | 0.964 | 0.966 | 0.902 | 0.909 | 0.915 | 0.904 | 0.937 | 0.961 | 0.965 |
| | 20–30% | 0.838 | 0.872 | 0.879 | 0.876 | 0.908 | 0.930 | 0.935 | 0.835 | 0.869 | 0.871 | 0.850 | 0.873 | 0.918 | 0.927 |
| | 30–40% | 0.763 | 0.839 | 0.847 | 0.843 | 0.873 | 0.905 | 0.913 | 0.754 | 0.810 | 0.829 | 0.799 | 0.828 | 0.892 | 0.904 |
| L1− (10⁻³) | 0–10% | 5.40 | 13.40 | 12.94 | 13.00 | 4.10 | 1.63 | 1.56 | 5.06 | 13.34 | 13.19 | 13.48 | 4.26 | 2.50 | 1.61 |
| | 10–20% | 18.09 | 16.22 | 16.00 | 6.03 | 8.68 | 5.67 | 5.37 | 12.49 | 17.13 | 16.79 | 17.56 | 9.92 | 6.42 | 5.24 |
| | 20–30% | 24.43 | 21.03 | 20.33 | 20.18 | 16.82 | 10.30 | 9.63 | 21.46 | 22.17 | 21.78 | 23.64 | 18.20 | 12.11 | 10.34 |
| | 30–40% | 32.98 | 24.12 | 23.29 | 23.08 | 18.40 | 13.59 | 12.67 | 29.93 | 27.09 | 25.99 | 28.37 | 23.22 | 15.47 | 13.43 |
| L2− (10⁻³) | 0–10% | 0.61 | 0.54 | 0.50 | 0.48 | 0.19 | 0.13 | 0.11 | 0.42 | 0.55 | 0.52 | 0.58 | 0.29 | 0.16 | 0.13 |
| | 10–20% | 2.25 | 1.12 | 0.98 | 0.95 | 0.69 | 0.49 | 0.42 | 1.51 | 1.19 | 1.13 | 1.27 | 1.09 | 0.59 | 0.49 |
| | 20–30% | 4.64 | 1.94 | 1.75 | 1.70 | 1.58 | 1.13 | 0.96 | 3.28 | 2.33 | 2.10 | 2.54 | 2.73 | 1.45 | 1.18 |
| | 30–40% | 6.19 | 2.55 | 2.23 | 2.11 | 1.95 | 1.39 | 1.17 | 4.68 | 3.15 | 2.80 | 3.38 | 3.37 | 1.83 | 1.49 |
| FID− | 0–10% | 1.97 | 1.58 | 1.47 | 1.37 | 0.37 | 0.23 | 0.18 | 8.32 | 5.52 | 4.47 | 6.12 | 3.06 | 1.55 | 1.13 |
| | 10–20% | 8.95 | 2.77 | 2.61 | 2.29 | 1.37 | 0.84 | 0.70 | 25.37 | 13.12 | 10.33 | 14.06 | 12.66 | 6.24 | 4.58 |
| | 20–30% | 13.98 | 4.26 | 3.74 | 3.20 | 2.40 | 1.62 | 1.38 | 36.14 | 17.53 | 15.67 | 21.94 | 24.58 | 13.68 | 9.90 |
| | 30–40% | 27.06 | 6.18 | 5.40 | 4.36 | 3.55 | 2.14 | 1.82 | 57.93 | 30.06 | 26.26 | 34.20 | 34.18 | 17.00 | 12.98 |

| Metric | Mask | CelebA-HQ | | | | | | | Paris StreetView | | | | | | |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| | | CA | PConv | EC | PIC | GC | RWC | Ours | CA | PConv | EC | PIC | GC | RWC | Ours |
| PSNR + | 0–10% | 32.11 | 32.02 | 32.70 | 32.91 | 36.43 | 37.70 | 38.30 | 32.83 | 31.48 | 33.09 | 32.30 | 33.98 | 36.18 | 37.69 |
| | 10–20% | 25.33 | 27.33 | 28.05 | 28.00 | 29.00 | 29.54 | 30.02 | 26.39 | 27.14 | 28.74 | 27.18 | 27.28 | 29.32 | 30.58 |
| | 20–30% | 22.86 | 24.59 | 25.11 | 24.81 | 25.63 | 26.10 | 26.61 | 23.40 | 24.47 | 26.02 | 24.26 | 24.33 | 26.13 | 27.50 |
| | 30–40% | 20.21 | 21.32 | 21.99 | 21.58 | 22.31 | 22.80 | 23.39 | 20.69 | 21.73 | 23.24 | 21.60 | 21.79 | 23.24 | 24.31 |
| SSIM + | 0–10% | 0.971 | 0.936 | 0.945 | 0.942 | 0.979 | 0.982 | 0.982 | 0.962 | 0.901 | 0.933 | 0.926 | 0.960 | 0.977 | 0.981 |
| | 10–20% | 0.905 | 0.898 | 0.906 | 0.901 | 0.944 | 0.944 | 0.947 | 0.900 | 0.880 | 0.895 | 0.881 | 0.903 | 0.928 | 0.940 |
| | 20–30% | 0.863 | 0.845 | 0.866 | 0.853 | 0.896 | 0.897 | 0.903 | 0.837 | 0.833 | 0.844 | 0.828 | 0.847 | 0.872 | 0.891 |
| | 30–40% | 0.790 | 0.790 | 0.801 | 0.792 | 0.835 | 0.837 | 0.849 | 0.756 | 0.757 | 0.782 | 0.748 | 0.770 | 0.808 | 0.836 |
| L1− (10⁻³) | 0–10% | 7.15 | 15.38 | 14.26 | 14.21 | 4.95 | 2.84 | 2.65 | 6.28 | 15.10 | 14.14 | 14.83 | 5.78 | 4.15 | 2.83 |
| | 10–20% | 17.26 | 20.25 | 19.77 | 19.91 | 11.05 | 8.85 | 8.26 | 15.60 | 22.67 | 19.55 | 22.42 | 14.49 | 10.84 | 8.75 |
| | 20–30% | 27.49 | 28.12 | 26.93 | 27.69 | 18.98 | 16.76 | 17.19 | 27.03 | 28.85 | 26.23 | 31.54 | 24.46 | 19.22 | 15.85 |
| | 30–40% | 45.36 | 41.68 | 39.75 | 41.24 | 31.91 | 29.80 | 27.72 | 42.51 | 40.24 | 36.98 | 45.10 | 37.66 | 31.28 | 26.97 |
| L2− (10⁻³) | 0–10% | 1.23 | 0.96 | 0.84 | 0.64 | 0.54 | 0.46 | 0.41 | 0.82 | 1.14 | 0.73 | 0.97 | 0.72 | 0.52 | 0.39 |
| | 10–20% | 3.93 | 2.55 | 2.15 | 1.04 | 1.49 | 1.58 | 1.46 | 3.08 | 3.03 | 1.92 | 3.12 | 2.81 | 1.78 | 1.46 |
| | 20–30% | 6.92 | 4.87 | 4.00 | 1.61 | 3.07 | 3.21 | 2.95 | 6.00 | 5.56 | 3.42 | 5.79 | 5.23 | 3.56 | 2.75 |
| | 30–40% | 12.99 | 9.21 | 8.01 | 2.08 | 6.38 | 6.66 | 5.98 | 10.60 | 9.23 | 6.35 | 9.77 | 8.91 | 6.53 | 5.44 |
| FID− | 0–10% | 1.09 | 1.63 | 1.56 | 1.48 | 0.53 | 0.49 | 0.43 | 7.52 | 9.14 | 6.72 | 8.39 | 6.21 | 4.78 | 3.83 |
| | 10–20% | 3.28 | 3.06 | 2.84 | 2.38 | 1.47 | 1.49 | 1.36 | 15.44 | 14.03 | 12.09 | 13.32 | 15.79 | 12.45 | 11.79 |
| | 20–30% | 8.02 | 4.15 | 4.02 | 3.31 | 2.46 | 3.48 | 2.45 | 27.66 | 23.45 | 20.11 | 24.53 | 27.41 | 21.56 | 19.81 |
| | 30–40% | 12.44 | 6.23 | 5.86 | 4.63 | 4.13 | 6.20 | 4.01 | 38.53 | 36.23 | 28.43 | 37.37 | 38.85 | 30.45 | 30.53 |

| Metric | Mask | CelebA-HQ | | | | | | | | | Paris StreetView | | | | | | | | |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| | | L2 | L1 | CL | SL | IM | IM+AD (BL) | BL+AT | BL(Rcdc) +DFB | All Model | L2 | L1 | CL | SL | IM | IM+AD (BL) | BL+AT | BL(Rcdc) +DFB | All Model |
| PSNR + | 0–10% | 39.40 | 41.49 | 41.47 | 41.45 | 41.46 | 41.60 | 41.55 | 41.61 | 42.25 | 40.49 | 41.63 | 41.31 | 41.18 | 41.69 | 41.74 | 42.14 | 42.01 | 42.19 |
| | 10–20% | 32.87 | 34.76 | 34.13 | 34.05 | 34.75 | 34.88 | 34.85 | 34.93 | 34.95 | 33.66 | 34.57 | 34.23 | 34.01 | 34.63 | 34.70 | 35.00 | 34.90 | 35.11 |
| | 20–30% | 29.35 | 30.95 | 30.26 | 30.19 | 30.95 | 31.09 | 31.04 | 31.18 | 31.18 | 29.92 | 30.57 | 30.20 | 30.03 | 30.54 | 30.69 | 31.03 | 30.99 | 31.12 |
| | 30–40% | 28.35 | 30.02 | 29.26 | 29.18 | 30.02 | 30.14 | 30.12 | 30.22 | 30.18 | 28.82 | 29.51 | 29.16 | 28.96 | 29.63 | 30.03 | 29.92 | 29.87 | 30.04 |
| SSIM + | 0–10% | 0.983 | 0.990 | 0.990 | 0.990 | 0.989 | 0.989 | 0.989 | 0.989 | 0.991 | 0.987 | 0.989 | 0.989 | 0.989 | 0.989 | 0.989 | 0.990 | 0.990 | 0.990 |
| | 10–20% | 0.947 | 0.966 | 0.962 | 0.962 | 0.965 | 0.965 | 0.965 | 0.965 | 0.966 | 0.954 | 0.962 | 0.960 | 0.960 | 0.961 | 0.962 | 0.964 | 0.963 | 0.965 |
| | 20–30% | 0.907 | 0.935 | 0.925 | 0.925 | 0.933 | 0.933 | 0.933 | 0.934 | 0.935 | 0.909 | 0.922 | 0.916 | 0.916 | 0.920 | 0.921 | 0.925 | 0.923 | 0.927 |
| | 30–40% | 0.872 | 0.913 | 0.898 | 0.899 | 0.911 | 0.912 | 0.912 | 0.912 | 0.913 | 0.878 | 0.896 | 0.889 | 0.889 | 0.896 | 0.897 | 0.902 | 0.899 | 0.904 |
| L1− (10⁻³) | 0–10% | 4.54 | 3.80 | 1.73 | 1.70 | 3.77 | 3.76 | 3.78 | 3.76 | 1.56 | 2.11 | 1.73 | 2.59 | 2.54 | 1.74 | 1.73 | 1.64 | 1.64 | 1.61 |
| | 10–20% | 8.15 | 7.00 | 6.03 | 5.92 | 6.91 | 6.85 | 6.92 | 6.86 | 5.37 | 7.03 | 5.68 | 6.59 | 6.51 | 5.78 | 5.67 | 5.36 | 5.35 | 5.24 |
| | 20–30% | 14.70 | 11.16 | 11.12 | 10.80 | 10.96 | 10.80 | 10.92 | 10.76 | 9.63 | 13.75 | 11.69 | 12.39 | 12.22 | 11.56 | 11.18 | 10.60 | 10.59 | 10.34 |
| | 30–40% | 19.32 | 13.75 | 14.61 | 14.25 | 13.50 | 13.32 | 12.73 | 13.29 | 13.67 | 18.06 | 14.67 | 15.87 | 15.68 | 14.54 | 14.54 | 13.78 | 13.76 | 13.34 |
| L2− (10⁻³) | 0–10% | 0.19 | 0.14 | 0.13 | 0.14 | 0.14 | 0.14 | 0.14 | 0.13 | 0.11 | 0.16 | 0.14 | 0.16 | 0.16 | 0.14 | 0.13 | 0.13 | 0.13 | 0.13 |
| | 10–20% | 0.64 | 0.45 | 0.51 | 0.52 | 0.45 | 0.44 | 0.44 | 0.43 | 0.42 | 0.60 | 0.53 | 0.58 | 0.60 | 0.52 | 0.52 | 0.49 | 0.50 | 0.49 |
| | 20–30% | 1.39 | 1.02 | 1.19 | 1.20 | 1.01 | 0.99 | 1.00 | 0.97 | 0.96 | 1.42 | 1.31 | 1.44 | 1.49 | 1.29 | 1.28 | 1.23 | 1.23 | 1.18 |
| | 30–40% | 1.72 | 1.23 | 1.46 | 1.48 | 1.22 | 1.20 | 1.20 | 1.18 | 1.17 | 1.81 | 1.63 | 1.79 | 1.87 | 1.65 | 1.61 | 1.54 | 1.51 | 1.49 |
| FID− | 0–10% | 0.51 | 0.30 | 0.27 | 0.24 | 0.24 | 0.22 | 0.22 | 0.21 | 0.18 | 2.39 | 2.01 | 1.96 | 1.80 | 1.39 | 1.37 | 1.26 | 1.32 | 1.13 |
| | 10–20% | 1.84 | 1.11 | 1.07 | 0.93 | 0.84 | 0.77 | 0.78 | 0.74 | 0.70 | 8.68 | 7.47 | 7.31 | 6.67 | 5.47 | 4.91 | 4.68 | 4.85 | 4.58 |
| | 20–30% | 3.53 | 2.28 | 2.19 | 1.79 | 1.67 | 1.51 | 1.53 | 1.45 | 1.38 | 20.77 | 18.59 | 16.22 | 14.37 | 12.17 | 10.23 | 9.98 | 9.76 | 9.90 |
| | 30–40% | 4.81 | 3.02 | 3.01 | 2.39 | 2.21 | 1.93 | 1.95 | 1.87 | 1.82 | 28.51 | 25.04 | 27.71 | 19.62 | 15.82 | 13.40 | 13.97 | 13.20 | 12.98 |

| Metric | Mask | CelebA-HQ | | | | | | | | | Paris StreetView | | | | | | | | |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| | | L2 | L1 | CL | SL | IM | IM+AD (BL) | BL+AT | BL(Rcdc) +DFB | All Model | L2 | L1 | CL | SL | IM | IM+AD (BL) | BL+AT | BL(Rcdc) +DFB | All Model |
| PSNR + | 0–10% | 34.70 | 37.65 | 37.00 | 37.03 | 37.17 | 37.29 | 37.44 | 38.19 | 38.30 | 34.39 | 36.92 | 35.49 | 35.67 | 37.16 | 37.26 | 37.47 | 37.58 | 37.69 |
| | 10–20% | 27.95 | 29.81 | 29.08 | 28.98 | 29.72 | 29.84 | 29.93 | 29.95 | 30.03 | 28.22 | 29.94 | 28.90 | 28.95 | 30.13 | 30.22 | 30.47 | 30.32 | 30.58 |
| | 20–30% | 24.77 | 26.39 | 25.71 | 25.58 | 26.31 | 26.44 | 26.44 | 26.53 | 26.61 | 25.31 | 27.01 | 25.79 | 25.83 | 27.04 | 27.11 | 27.28 | 27.38 | 27.50 |
| | 30–40% | 21.65 | 23.14 | 22.54 | 22.42 | 23.02 | 23.15 | 23.30 | 23.34 | 23.39 | 22.81 | 23.93 | 22.95 | 22.83 | 24.01 | 24.19 | 24.23 | 24.33 | 24.31 |
| SSIM + | 0–10% | 0.975 | 0.982 | 0.980 | 0.980 | 0.980 | 0.981 | 0.981 | 0.983 | 0.982 | 0.974 | 0.979 | 0.976 | 0.976 | 0.979 | 0.980 | 0.980 | 0.980 | 0.981 |
| | 10–20% | 0.931 | 0.947 | 0.938 | 0.939 | 0.944 | 0.945 | 0.946 | 0.947 | 0.947 | 0.924 | 0.935 | 0.926 | 0.925 | 0.935 | 0.936 | 0.937 | 0.937 | 0.940 |
| | 20–30% | 0.881 | 0.904 | 0.887 | 0.889 | 0.900 | 0.901 | 0.901 | 0.903 | 0.903 | 0.868 | 0.886 | 0.869 | 0.868 | 0.886 | 0.887 | 0.889 | 0.889 | 0.891 |
| | 30–40% | 0.821 | 0.851 | 0.824 | 0.824 | 0.846 | 0.846 | 0.850 | 0.850 | 0.849 | 0.811 | 0.830 | 0.803 | 0.800 | 0.830 | 0.832 | 0.833 | 0.834 | 0.836 |
| L1− (10⁻³) | 0–10% | 6.62 | 2.86 | 3.13 | 3.04 | 5.15 | 5.09 | 5.03 | 2.69 | 2.65 | 4.43 | 3.96 | 4.33 | 4.20 | 3.71 | 2.97 | 2.95 | 2.92 | 2.83 |
| | 10–20% | 14.89 | 8.85 | 9.69 | 9.42 | 10.80 | 10.61 | 10.38 | 8.39 | 8.26 | 13.37 | 10.44 | 11.53 | 11.22 | 9.91 | 9.13 | 8.92 | 9.04 | 8.75 |
| | 20–30% | 23.84 | 16.61 | 18.18 | 17.76 | 18.22 | 17.88 | 17.60 | 17.50 | 17.19 | 23.63 | 17.88 | 20.30 | 19.97 | 17.37 | 16.68 | 16.82 | 16.93 | 15.85 |
| | 30–40% | 42.68 | 29.01 | 31.61 | 30.93 | 30.57 | 30.24 | 28.71 | 27.72 | 27.72 | 37.10 | 29.85 | 33.05 | 32.72 | 28.58 | 27.81 | 27.57 | 27.36 | 26.97 |
| L2− (10⁻³) | 0–10% | 0.66 | 0.43 | 0.52 | 0.52 | 0.46 | 0.45 | 0.45 | 0.43 | 0.41 | 0.60 | 0.45 | 0.53 | 0.55 | 0.43 | 0.42 | 0.42 | 0.40 | 0.39 |
| | 10–20% | 2.12 | 1.51 | 1.77 | 1.77 | 1.55 | 1.52 | 1.52 | 1.47 | 1.46 | 2.08 | 1.58 | 1.86 | 1.94 | 1.53 | 1.52 | 1.45 | 1.51 | 1.46 |
| | 20–30% | 4.14 | 3.08 | 3.58 | 3.62 | 3.15 | 3.06 | 3.01 | 2.96 | 2.95 | 3.78 | 2.85 | 3.60 | 3.76 | 2.86 | 2.91 | 2.81 | 2.81 | 2.75 |
| | 30–40% | 8.32 | 6.34 | 7.24 | 7.30 | 6.50 | 6.35 | 6.08 | 6.00 | 5.98 | 6.61 | 5.67 | 6.92 | 7.18 | 5.53 | 5.42 | 5.51 | 5.38 | 5.44 |
| FID− | 0–10% | 0.91 | 0.61 | 0.58 | 0.52 | 0.53 | 0.49 | 0.47 | 0.45 | 0.43 | 7.58 | 5.93 | 5.32 | 4.95 | 4.72 | 3.88 | 3.59 | 3.79 | 3.83 |
| | 10–20% | 2.99 | 1.96 | 1.89 | 1.61 | 1.68 | 1.51 | 1.46 | 1.45 | 1.36 | 19.02 | 15.08 | 16.61 | 13.73 | 14.01 | 12.67 | 12.31 | 12.31 | 11.79 |
| | 20–30% | 6.18 | 3.69 | 3.51 | 2.84 | 3.02 | 2.67 | 2.63 | 2.63 | 2.45 | 30.95 | 28.48 | 28.86 | 23.89 | 25.29 | 21.49 | 20.55 | 20.21 | 19.81 |
| | 30–40% | 11.15 | 6.53 | 5.98 | 4.60 | 4.98 | 4.48 | 4.10 | 4.33 | 4.01 | 44.30 | 40.31 | 40.80 | 32.89 | 37.71 | 34.80 | 32.60 | 32.61 | 30.53 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

He, X.; Yin, Y. Non-Local and Multi-Scale Mechanisms for Image Inpainting. Sensors 2021, 21, 3281. https://doi.org/10.3390/s21093281
