Underwater Target Tracking Using Forward-Looking Sonar for Autonomous Underwater Vehicles

Abstract

1. Introduction

2. FLS Overview

- (1) The number of transducers that can be packed into an array is physically limited by the size of each transducer. As a result, FLS images have relatively low resolution and the gray levels of the target area are generally low, which makes it difficult to discern target details.

- (2) Different parts of the target surface scatter acoustic energy differently, depending on the shape and material of the target and its position relative to the sonar. Because the incident angle of the acoustic wave also changes as the target moves, the same target may produce several separate regions in the acoustic image, and these often appear as unconnected regions.

- (3) Multipath propagation is a distinctive feature of acoustic images: waves that arrive via reflected paths may carry more energy than the echoes returned directly from obstacles, which can cause false detections or missed targets and makes acoustic-image processing more difficult.

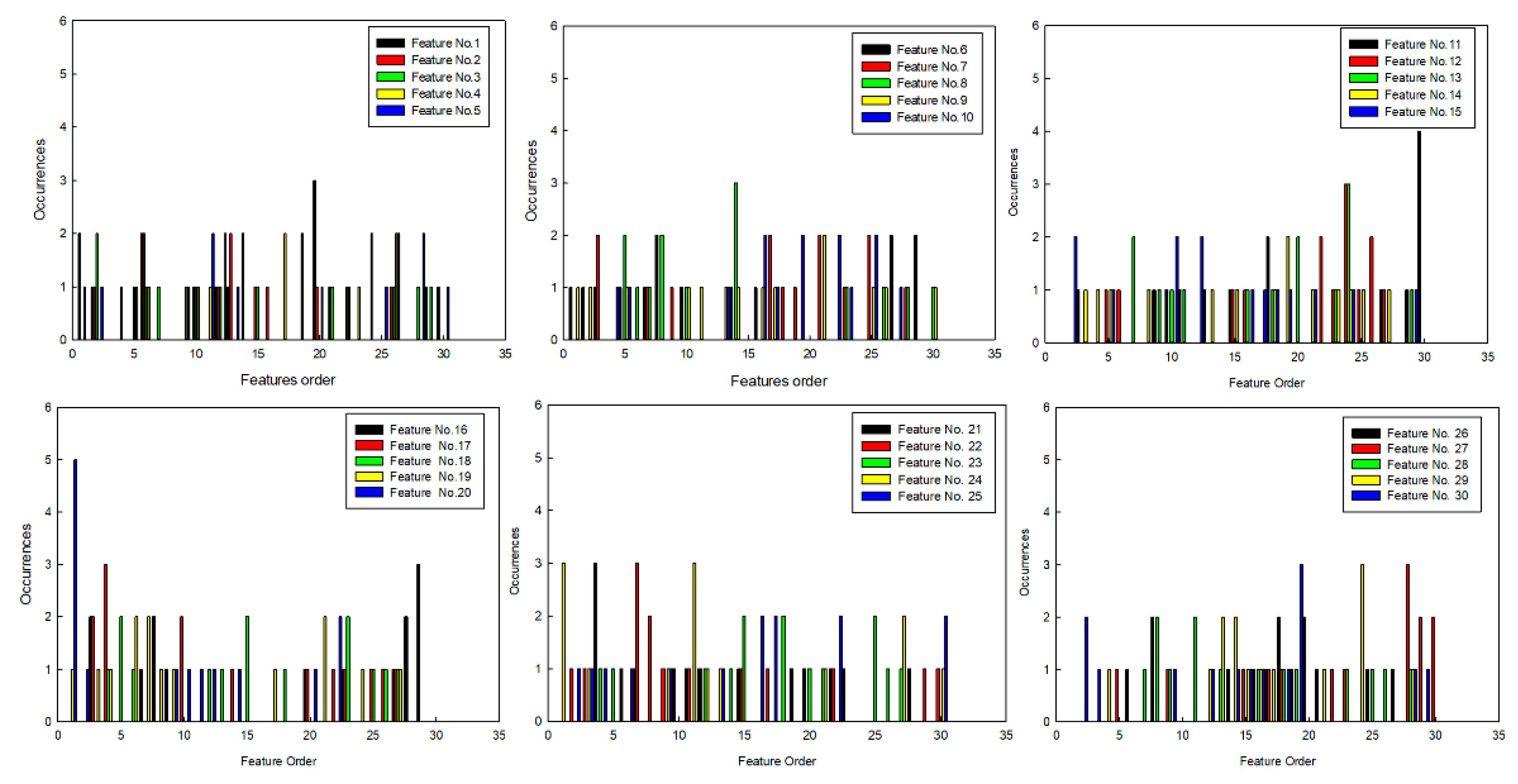

3. Feature Selection Based on GRNN

3.1. Feature Description

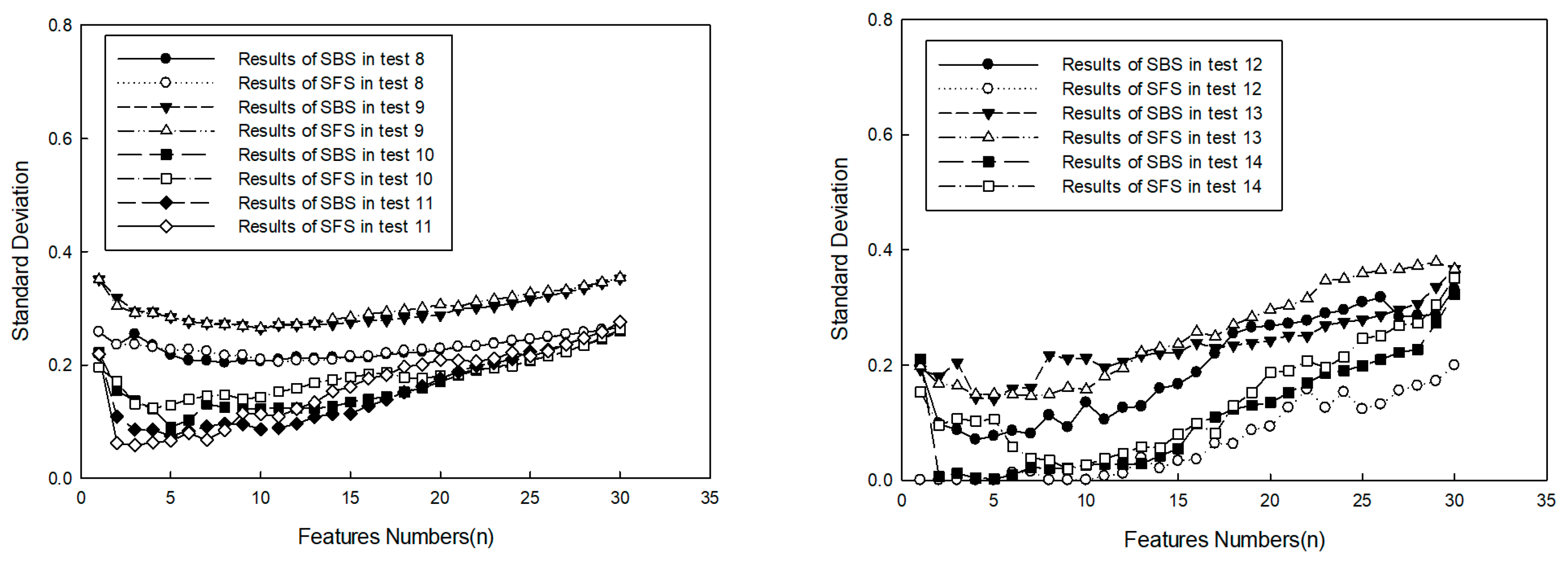

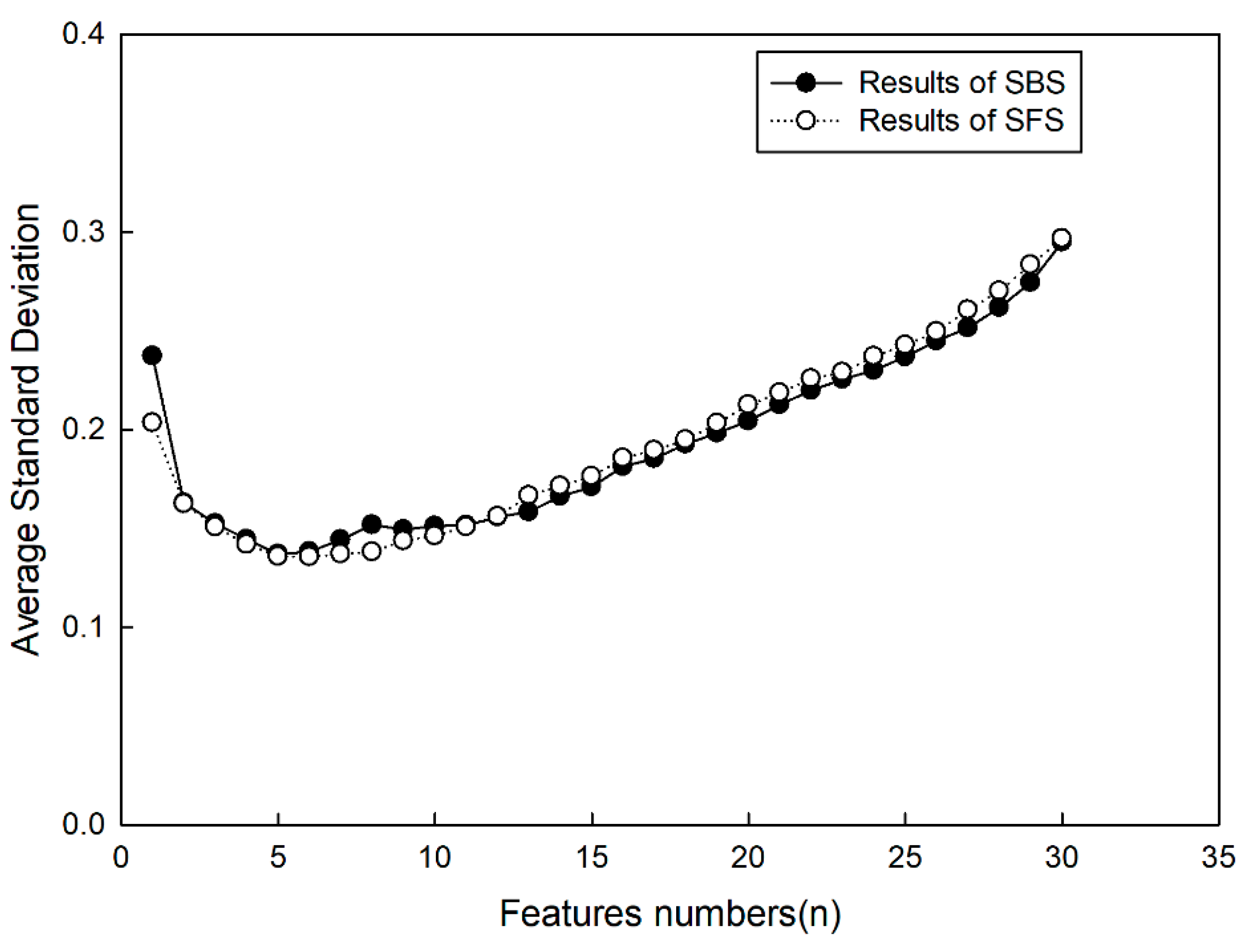

3.2. Search Procedure

3.3. GRNN for Classification

3.4. Experiments and Analysis

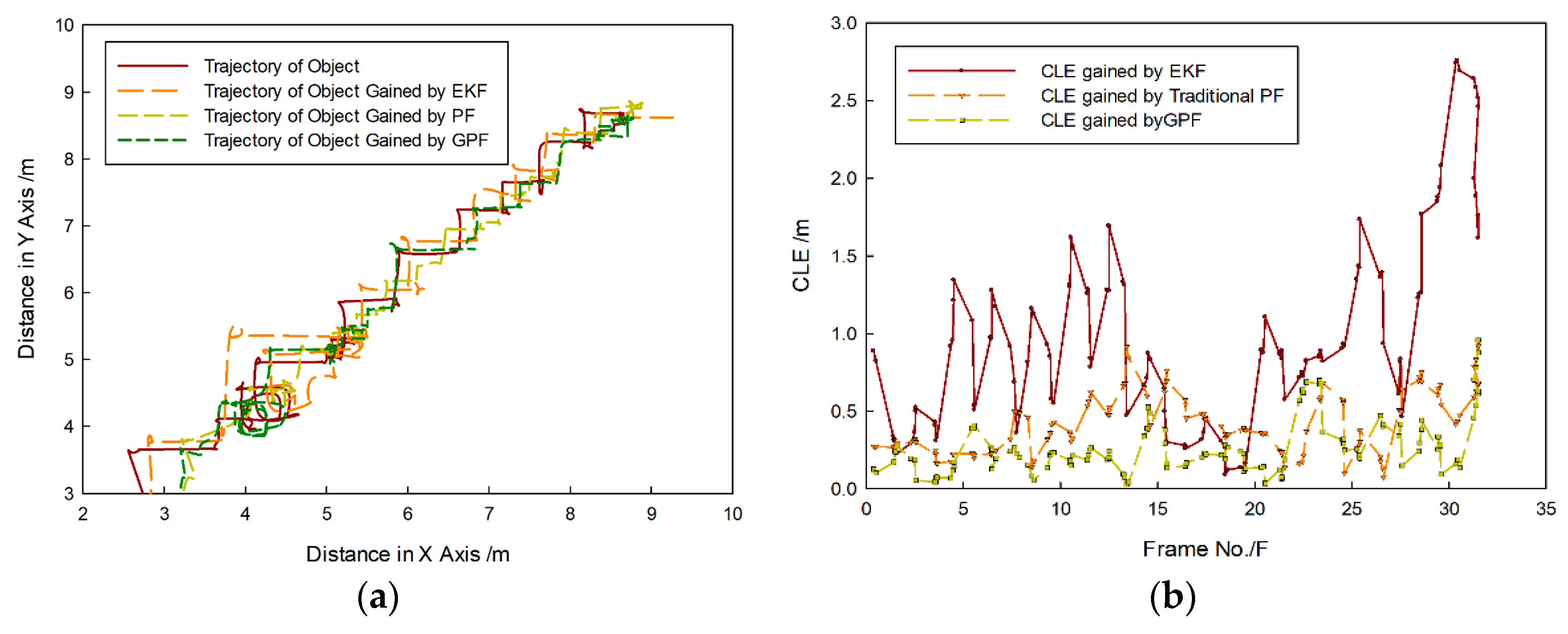

4. Gaussian Particle Filtering

4.1. Basic Principle

4.2. Gaussian Particle-Filter Improvement

4.2.1. Likelihood-Function Representation

4.2.2. Feature-Set Fusion Strategy

4.2.3. Target-Tracking Steps

- Step 1 (initialization): Select the targets of interest in the first image frame. After the image is processed, the target features in Table 7 are calculated and the number of particles $N$ is determined. The initial importance function is assumed to be a normal distribution whose mean $\mu_0$ is the center coordinate of the target and whose covariance $\Sigma_0$ is set according to the tracking environment; that is, the particles drawn from the initial importance function in the x- and y-axes can be written as $x_0^{(i)} \sim \mathcal{N}(\mu_0, \Sigma_0)$, $i = 1, \ldots, N$, and each particle is then propagated according to the kinematics model.

- Step 2: Capture the next image frame and calculate the features of each particle $x_k^{(i)}$. According to Equation (17), the feature cues are checked for degeneration, and the fused weight $\tilde{w}_k^{(i)}$ of each particle is calculated. The weights are normalized as $w_k^{(i)} = \tilde{w}_k^{(i)} / \sum_{j=1}^{N} \tilde{w}_k^{(j)}$; then the mean $\mu_k = \sum_{i=1}^{N} w_k^{(i)} x_k^{(i)}$ and covariance $\Sigma_k = \sum_{i=1}^{N} w_k^{(i)} (x_k^{(i)} - \mu_k)(x_k^{(i)} - \mu_k)^{\mathsf{T}}$ are calculated.

- Step 3: Sample $N$ particles $x_k^{(i)}$ from the approximate posterior distribution $\mathcal{N}(\mu_k, \Sigma_k)$, and compute $x_{k+1}^{(i)}$ with the kinematics model. The predicted mean and covariance are then calculated according to Equation (18). If the target is lost, the covariance is expanded; otherwise, the procedure returns to Step 2.
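The tracking steps above can be illustrated with a minimal Gaussian particle filter for a 2-D target position. This is a sketch under stated assumptions, not the authors' implementation: the kinematics model is assumed to be a random walk with standard deviation `proc_std`, the fused feature likelihood of Equation (17) is replaced by a hypothetical Gaussian likelihood on the distance to a measured target centroid (`meas_std`), "target lost" is assumed to mean that every particle weight underflows to zero, and the names `gpf_step`, `sample_gaussian`, and `weighted_moments` are illustrative.

```python
import math
import random


def sample_gaussian(mean, cov, n, rng):
    """Draw n samples from the 2-D normal N(mean, cov) using a 2x2 Cholesky factor."""
    (a, b), (_, c) = cov
    l11 = math.sqrt(max(a, 1e-12))  # small floor guards against a degenerate covariance
    l21 = b / l11
    l22 = math.sqrt(max(c - l21 * l21, 1e-12))
    samples = []
    for _ in range(n):
        z1, z2 = rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)
        samples.append((mean[0] + l11 * z1, mean[1] + l21 * z1 + l22 * z2))
    return samples


def weighted_moments(particles, weights):
    """Weighted mean and covariance of 2-D particles whose weights sum to 1."""
    mx = sum(w * x for (x, _), w in zip(particles, weights))
    my = sum(w * y for (_, y), w in zip(particles, weights))
    sxx = sum(w * (x - mx) ** 2 for (x, _), w in zip(particles, weights))
    syy = sum(w * (y - my) ** 2 for (_, y), w in zip(particles, weights))
    sxy = sum(w * (x - mx) * (y - my) for (x, y), w in zip(particles, weights))
    return (mx, my), ((sxx, sxy), (sxy, syy))


def gpf_step(pred_mean, pred_cov, measurement, n, rng, meas_std=2.0, proc_std=1.0):
    """One frame: measurement update (Step 2) followed by time update (Step 3)."""
    # Step 2: particles come from the predictive Gaussian, which also serves as the
    # importance function, so each unnormalized weight is just the likelihood.
    particles = sample_gaussian(pred_mean, pred_cov, n, rng)
    raw = [math.exp(-((x - measurement[0]) ** 2 + (y - measurement[1]) ** 2)
                    / (2.0 * meas_std ** 2)) for x, y in particles]
    total = sum(raw)
    if total == 0.0:
        # Target treated as lost: keep the prediction and expand the covariance.
        (a, b), (_, c) = pred_cov
        return pred_mean, pred_mean, ((4 * a, 4 * b), (4 * b, 4 * c))
    weights = [r / total for r in raw]
    mean, cov = weighted_moments(particles, weights)
    # Step 3: sample from N(mean, cov), propagate with the random-walk model, and
    # use the equally weighted sample moments as the prediction for the next frame.
    moved = [(x + rng.gauss(0.0, proc_std), y + rng.gauss(0.0, proc_std))
             for x, y in sample_gaussian(mean, cov, n, rng)]
    next_mean, next_cov = weighted_moments(moved, [1.0 / n] * n)
    return mean, next_mean, next_cov


# Step 1: initial importance function centred on the target with a broad covariance.
rng = random.Random(0)
pred_mean, pred_cov = (0.0, 0.0), ((25.0, 0.0), (0.0, 25.0))
for k in range(20):
    target = (float(k), 0.5 * k)  # hypothetical noise-free centroid measurement
    estimate, pred_mean, pred_cov = gpf_step(pred_mean, pred_cov, target, 500, rng)
print(estimate)
```

Because the random-walk model assumes no velocity, the estimate of a steadily moving target lags slightly behind the measurement; a constant-velocity state would remove that lag at the cost of a 4-D covariance.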

5. Example Test and Discussion

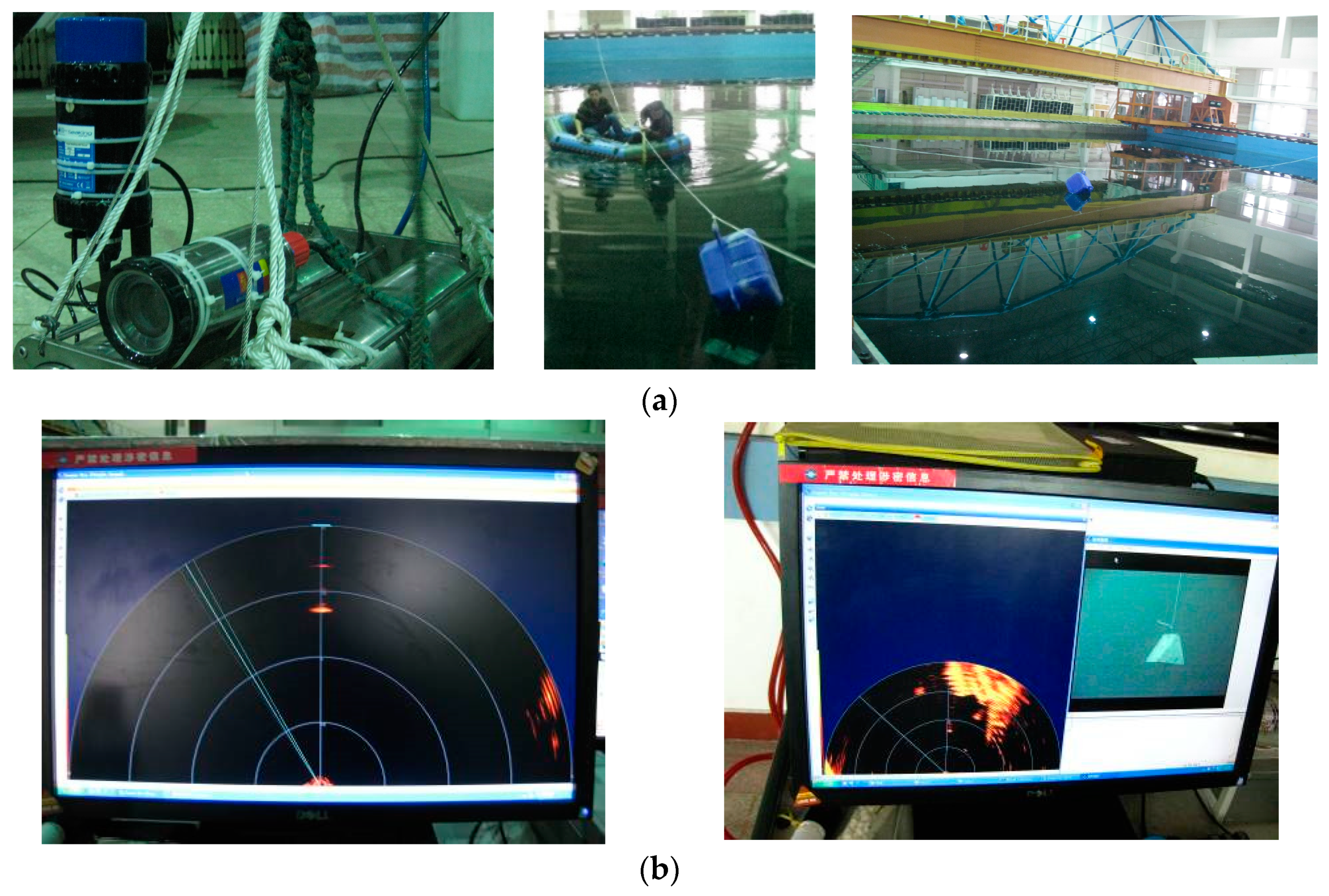

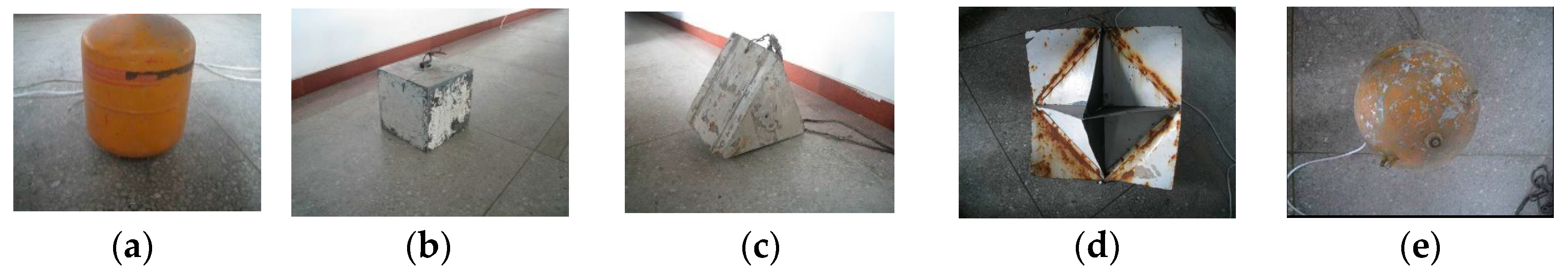

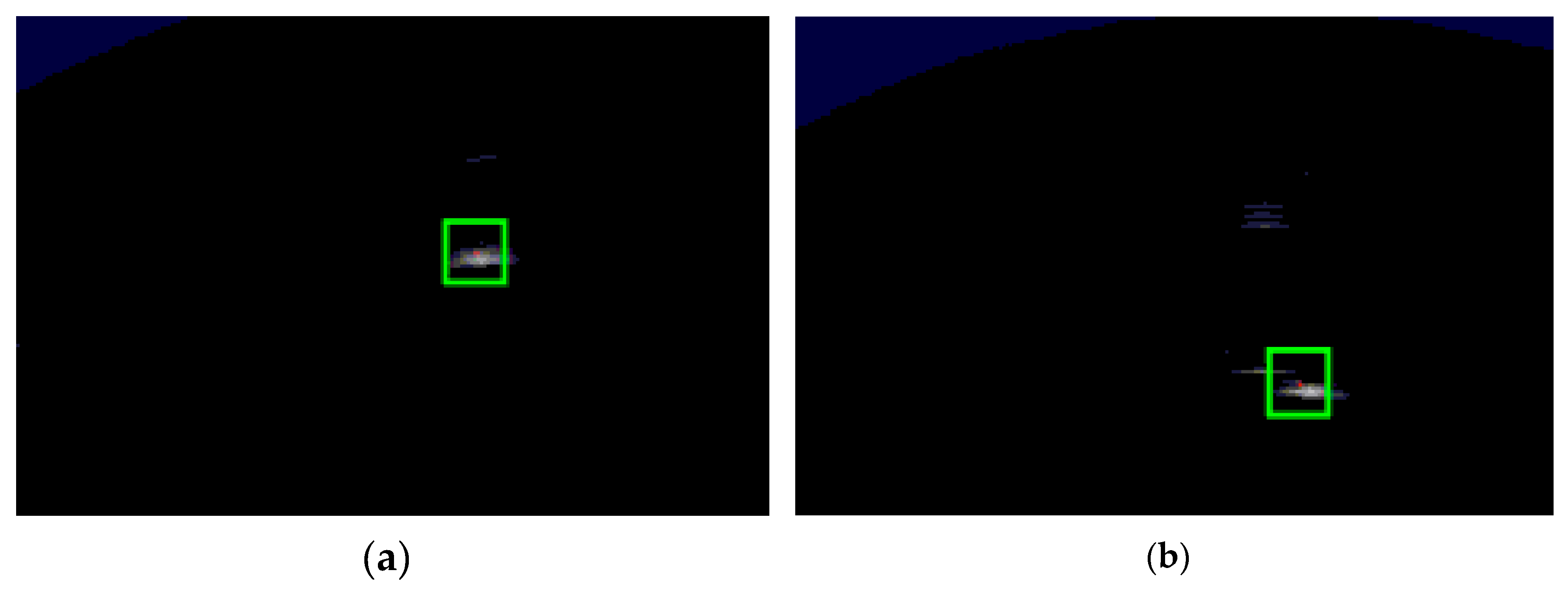

5.1. Tank Experiment

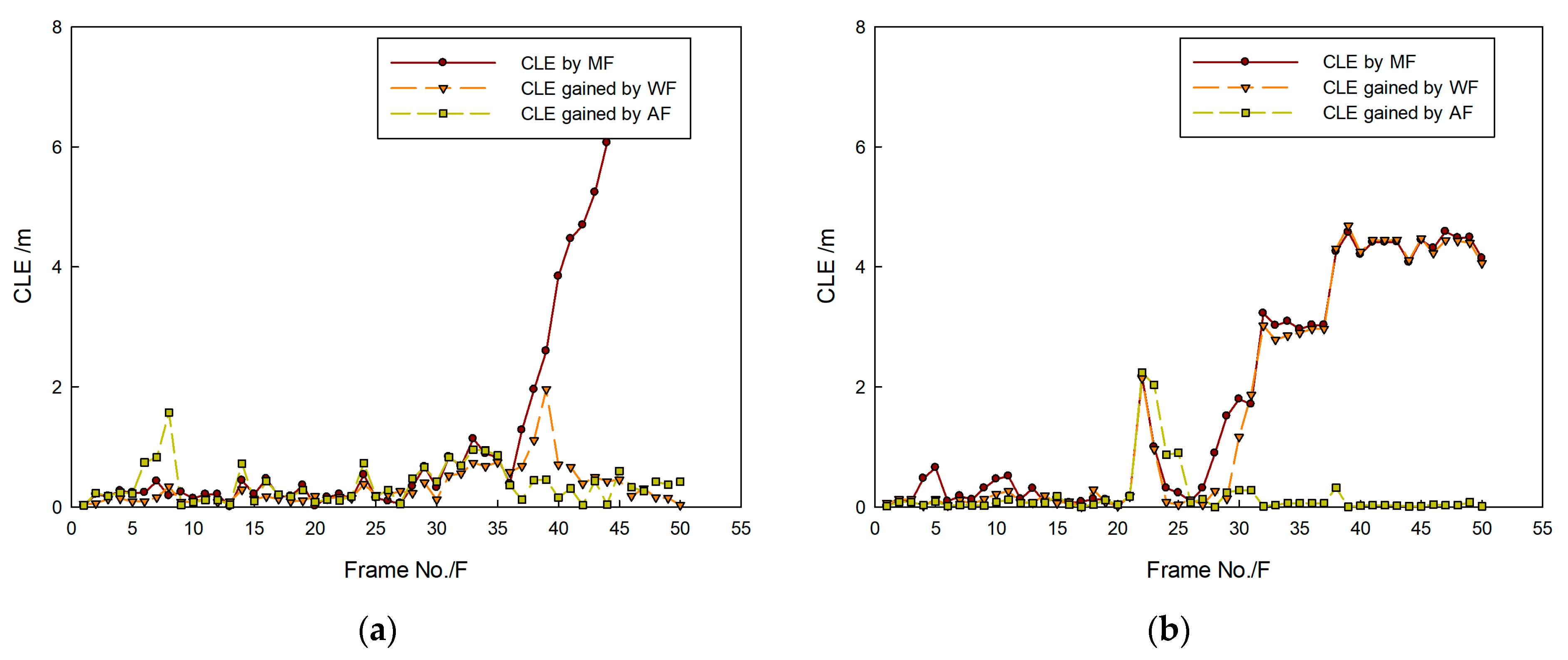

5.1.1. Comparative Experiments of Tracking Methods

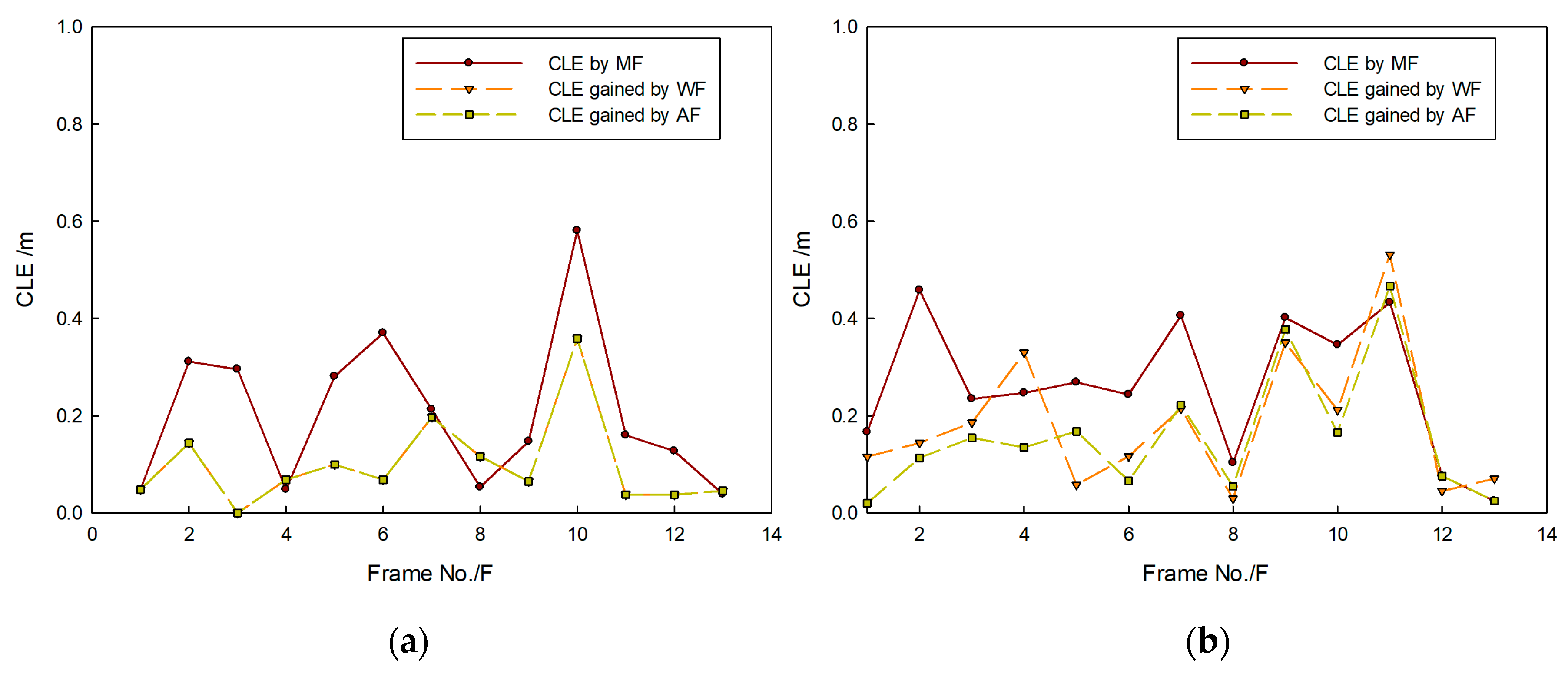

5.1.2. Fusion-Strategy Experiments

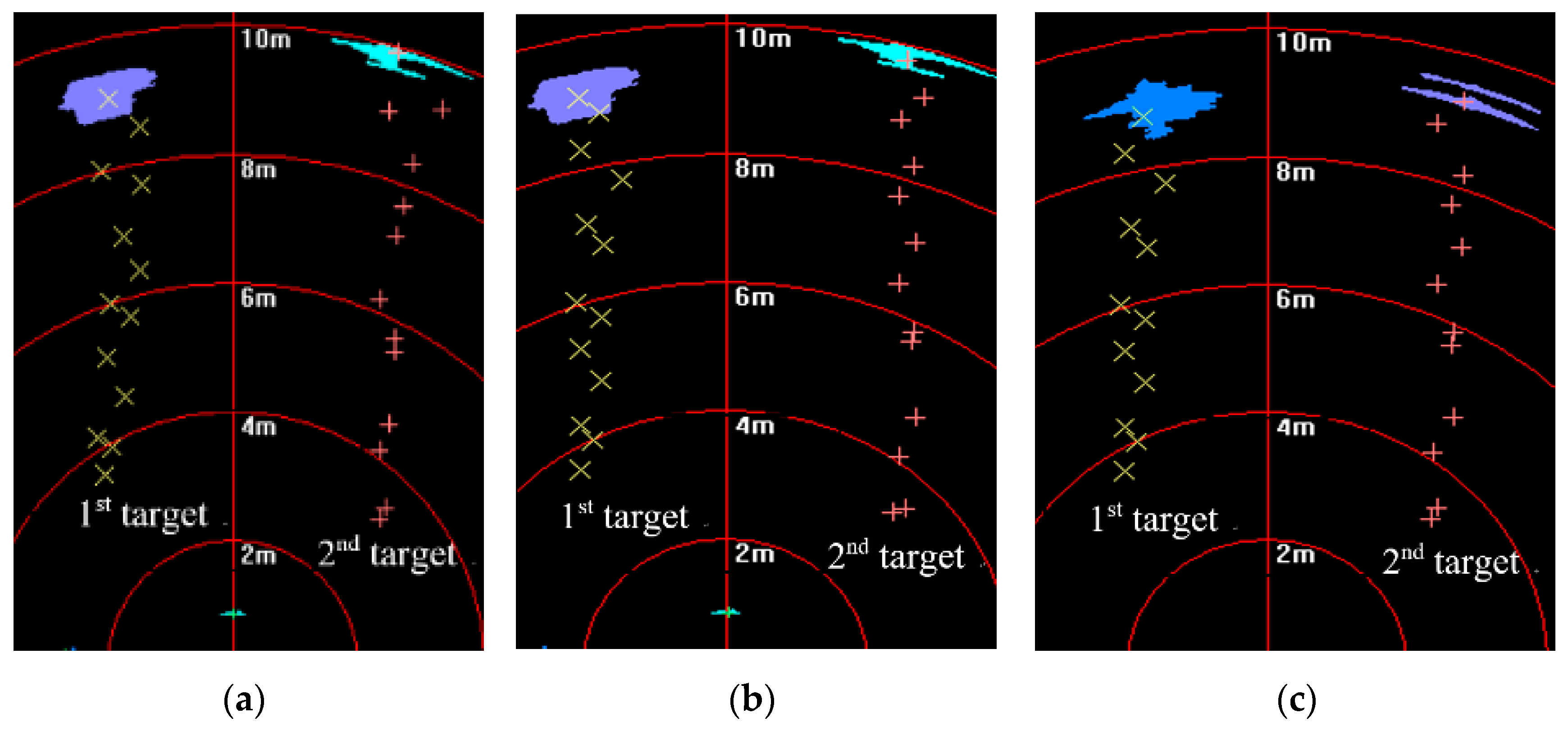

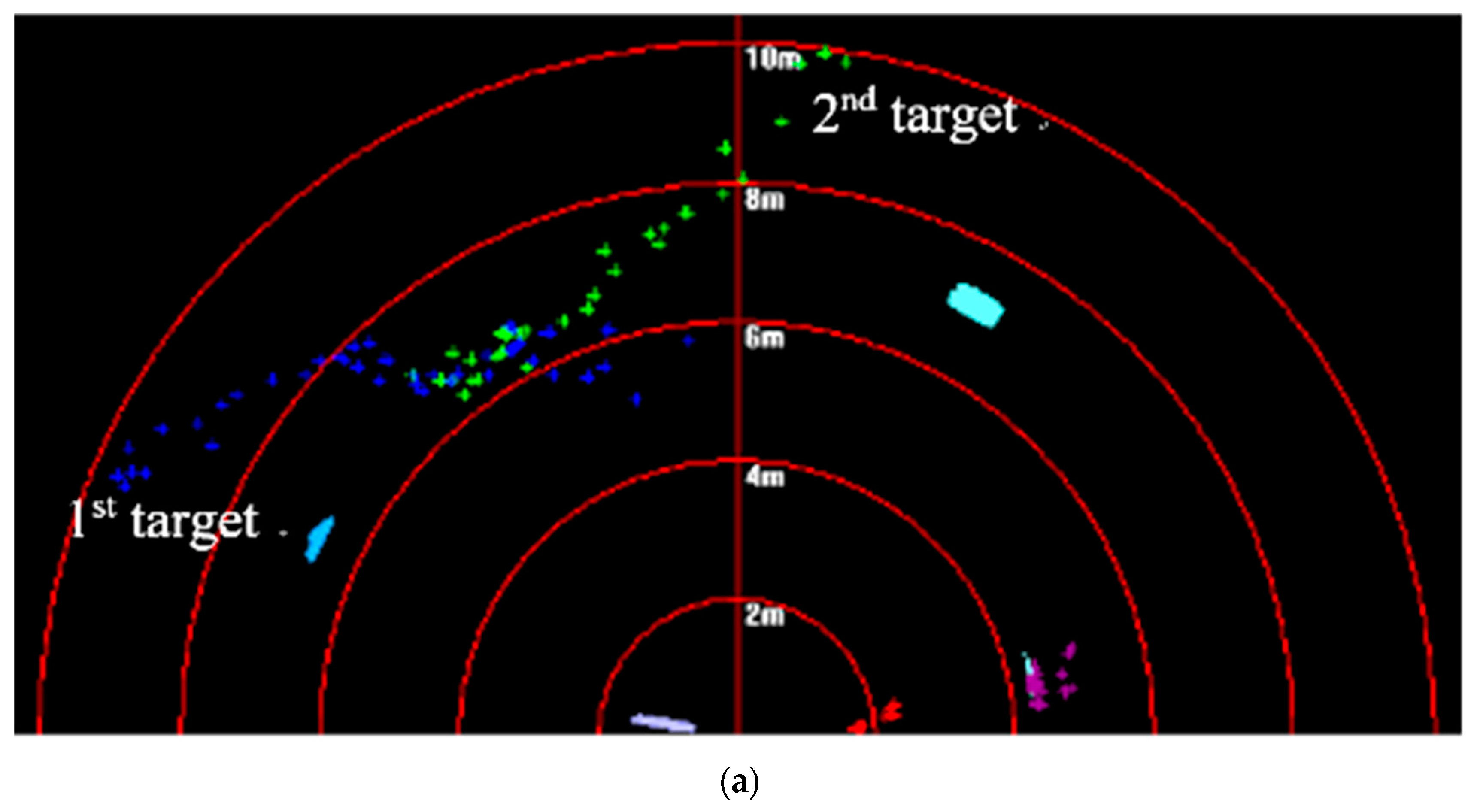

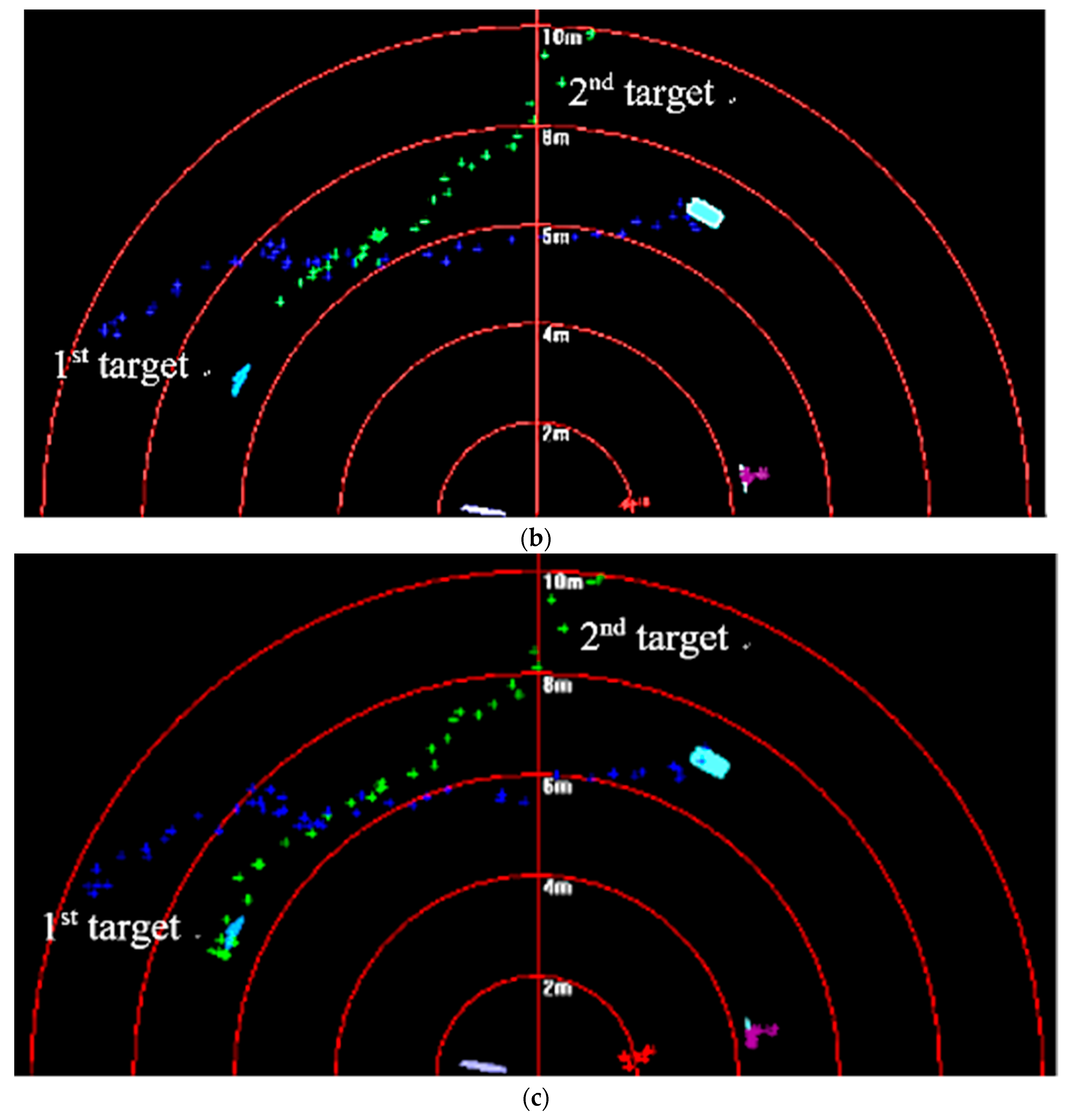

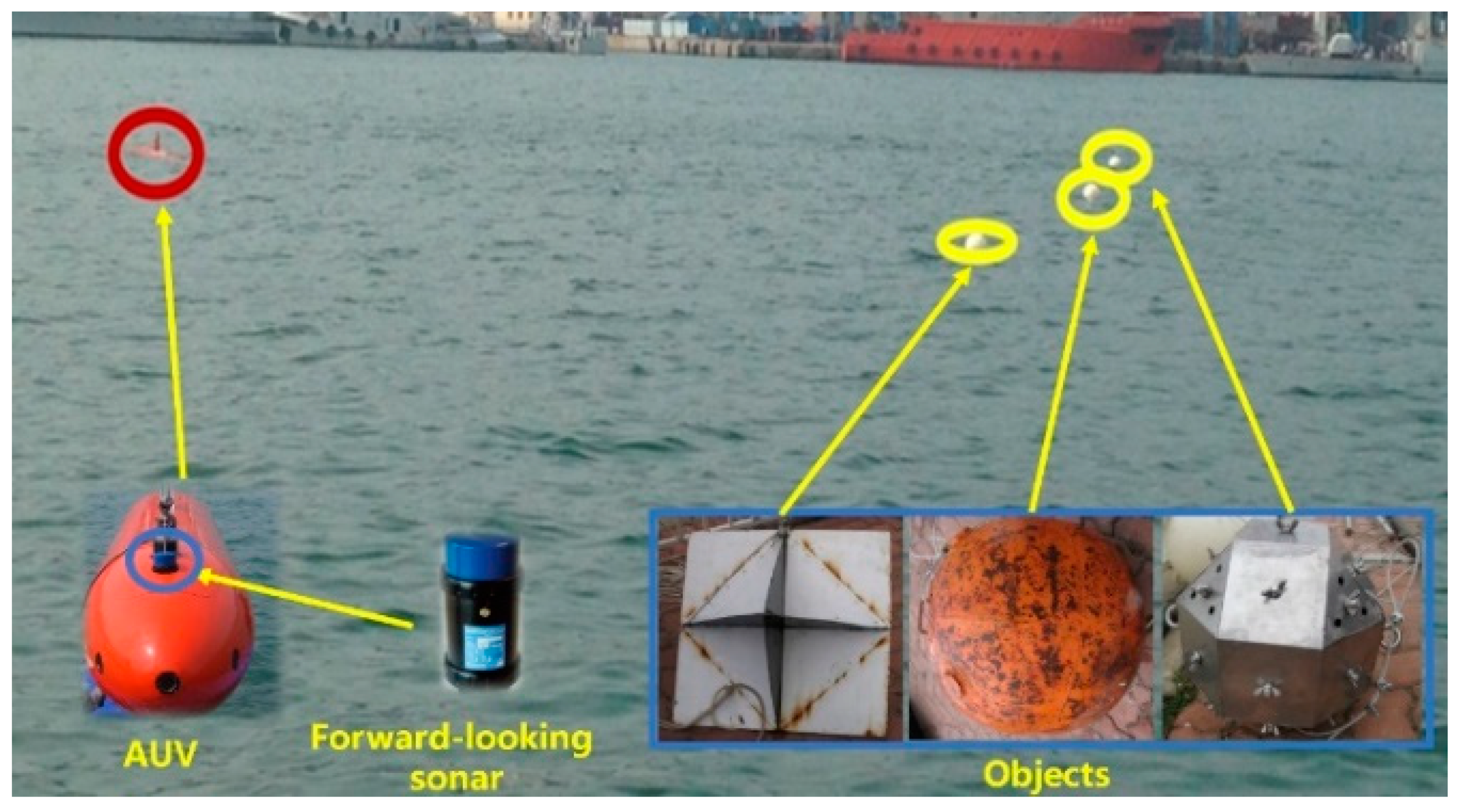

5.2. Sea Trial

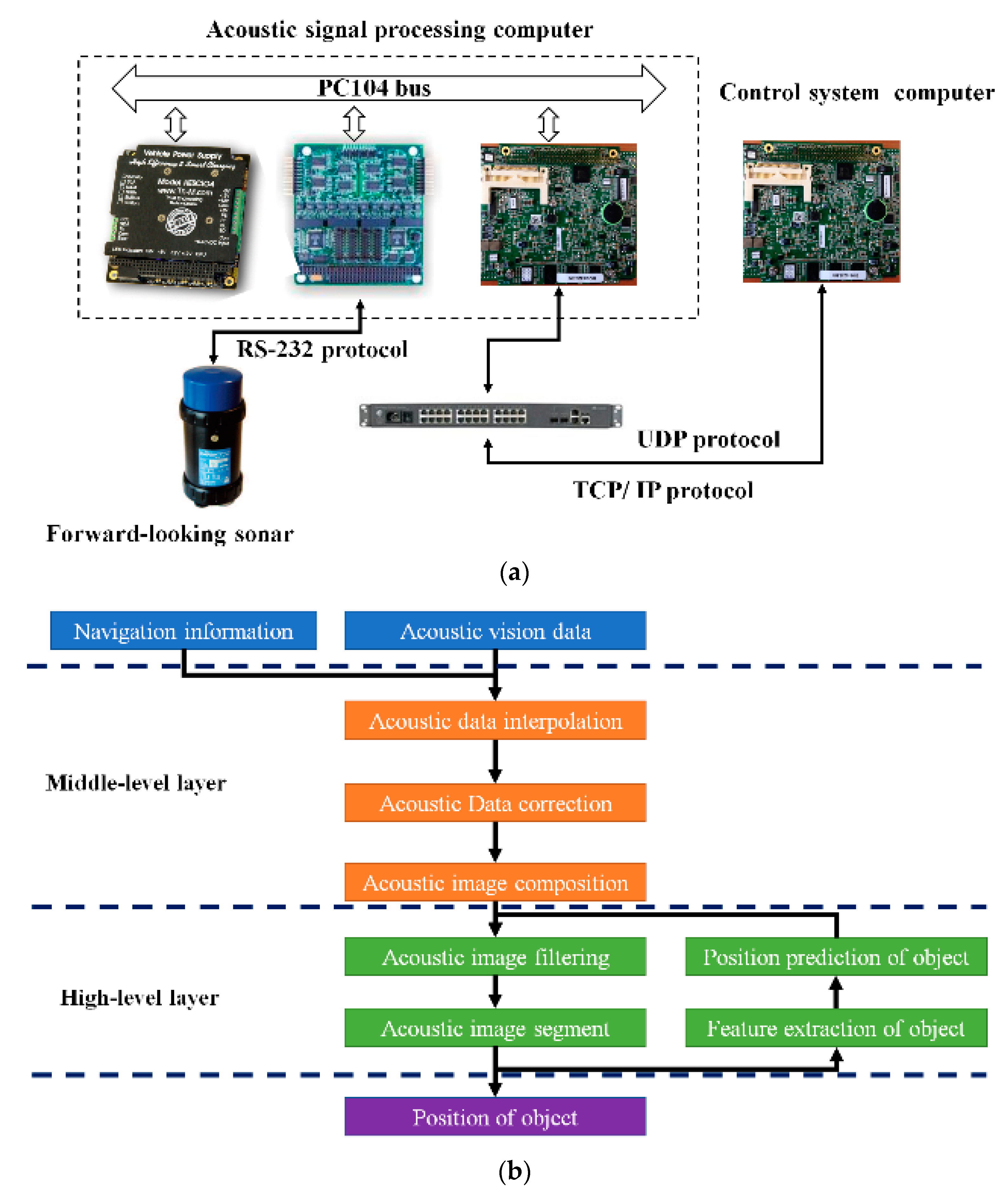

5.2.1. Acoustic-Vision-Based Processing Framework

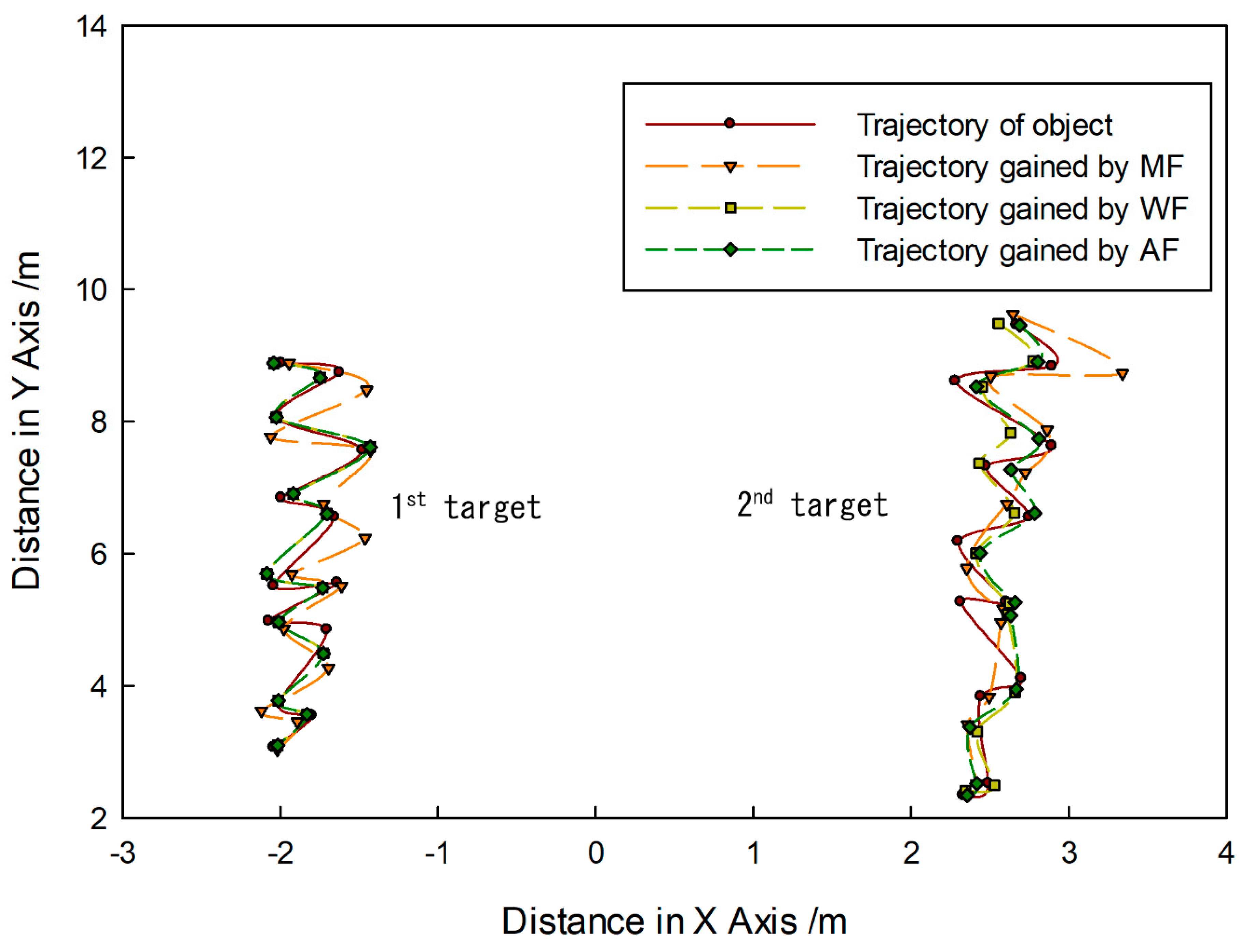

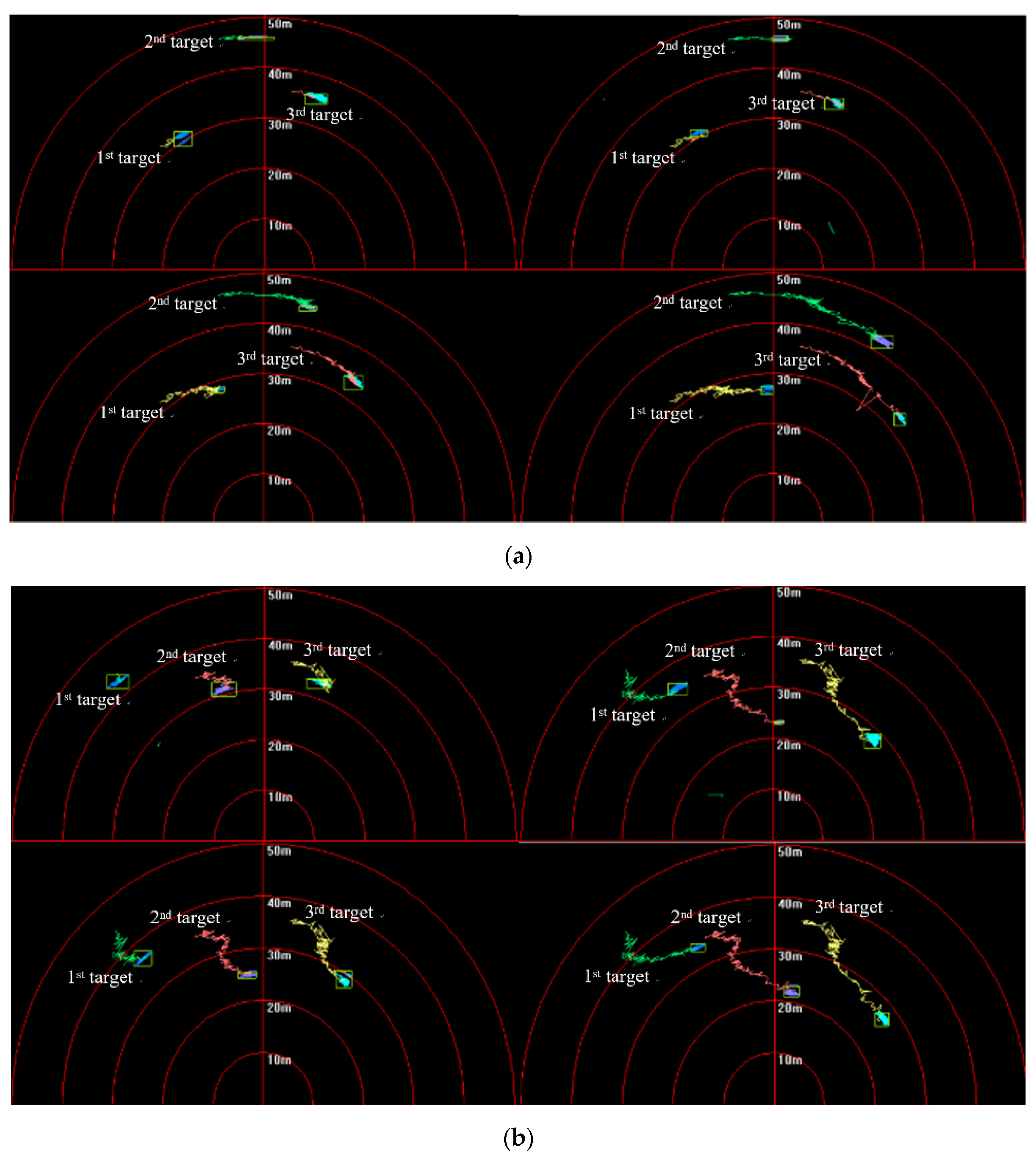

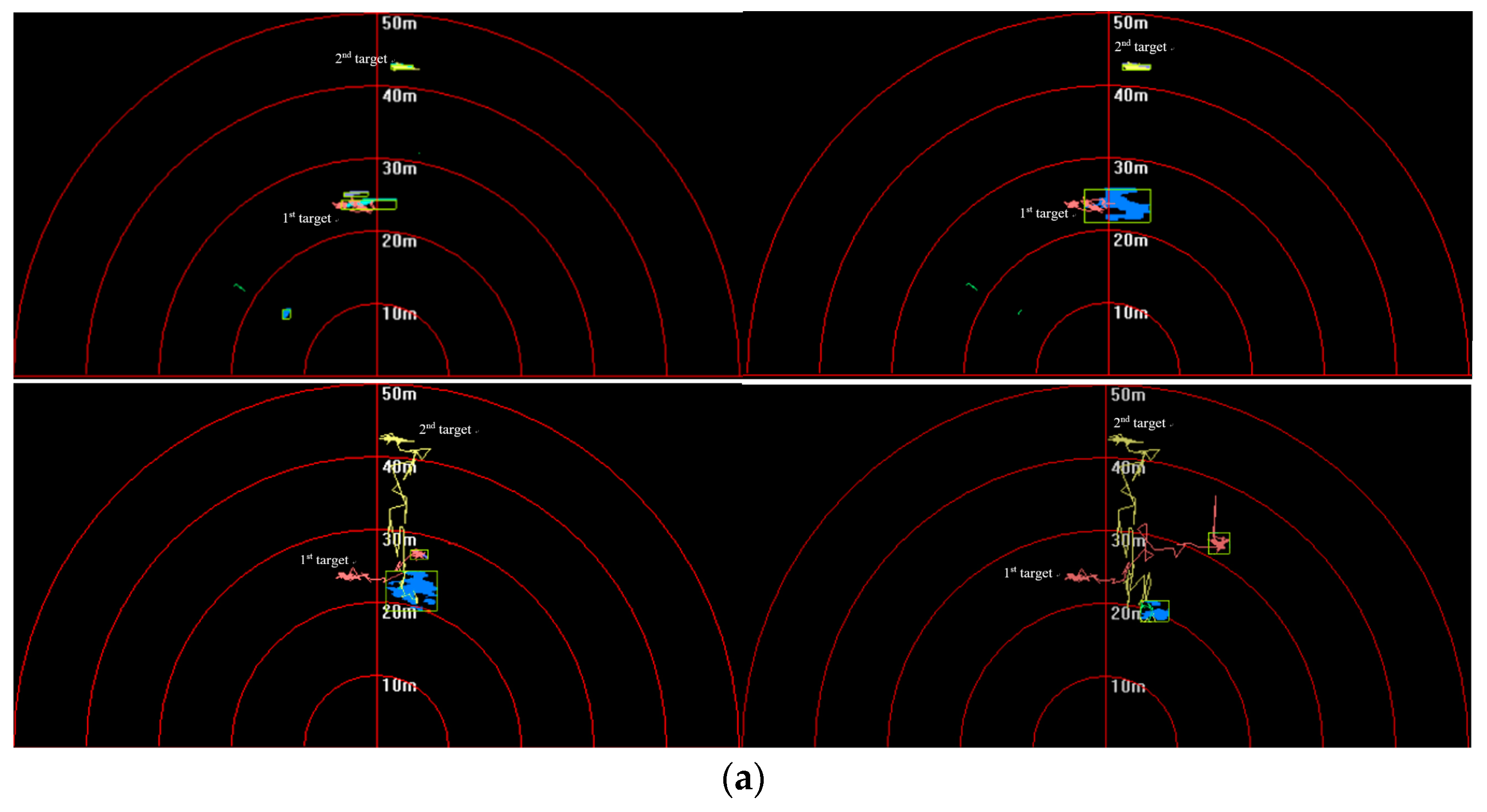

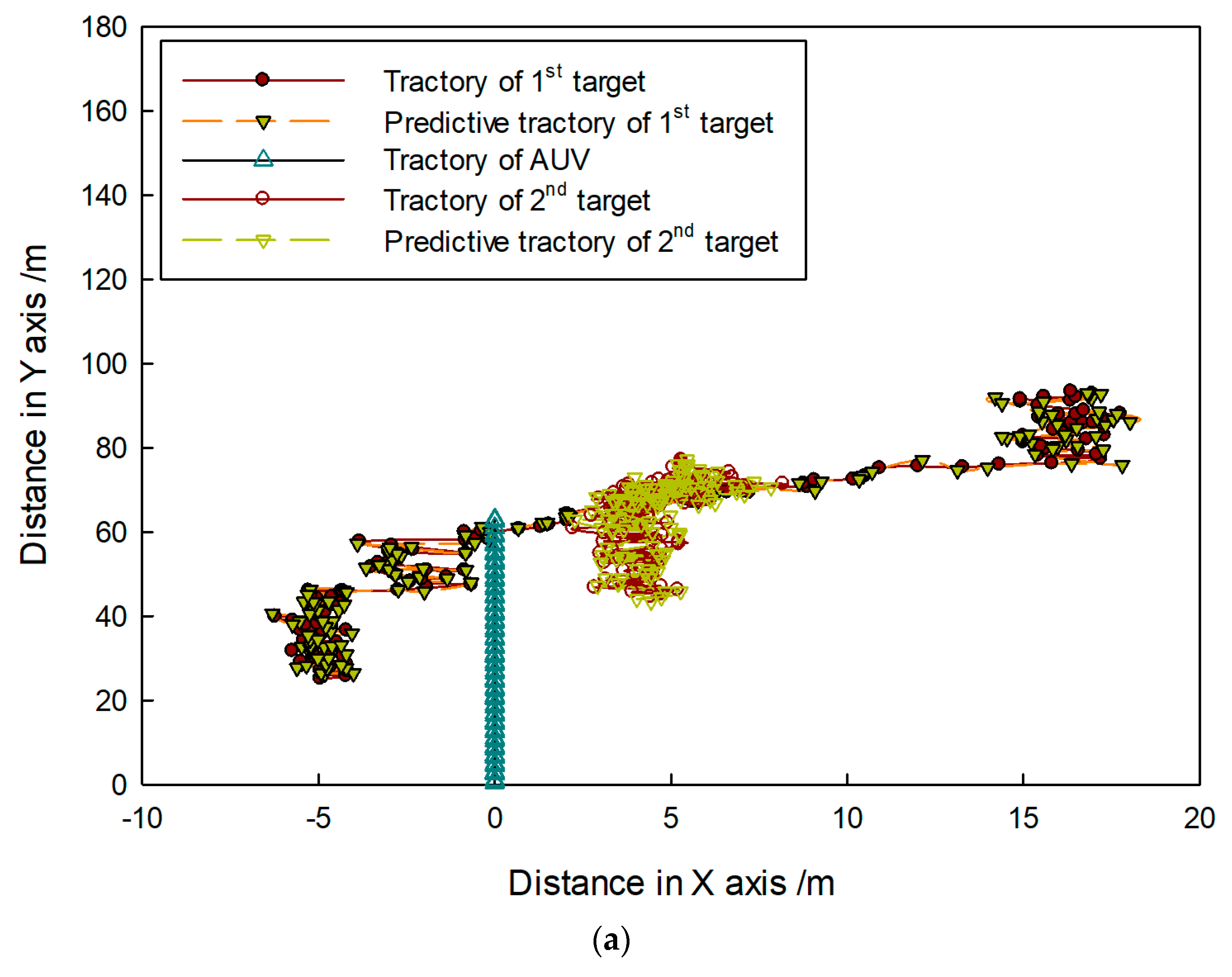

5.2.2. Target-Tracking Test under Noncrossing-Movement Condition

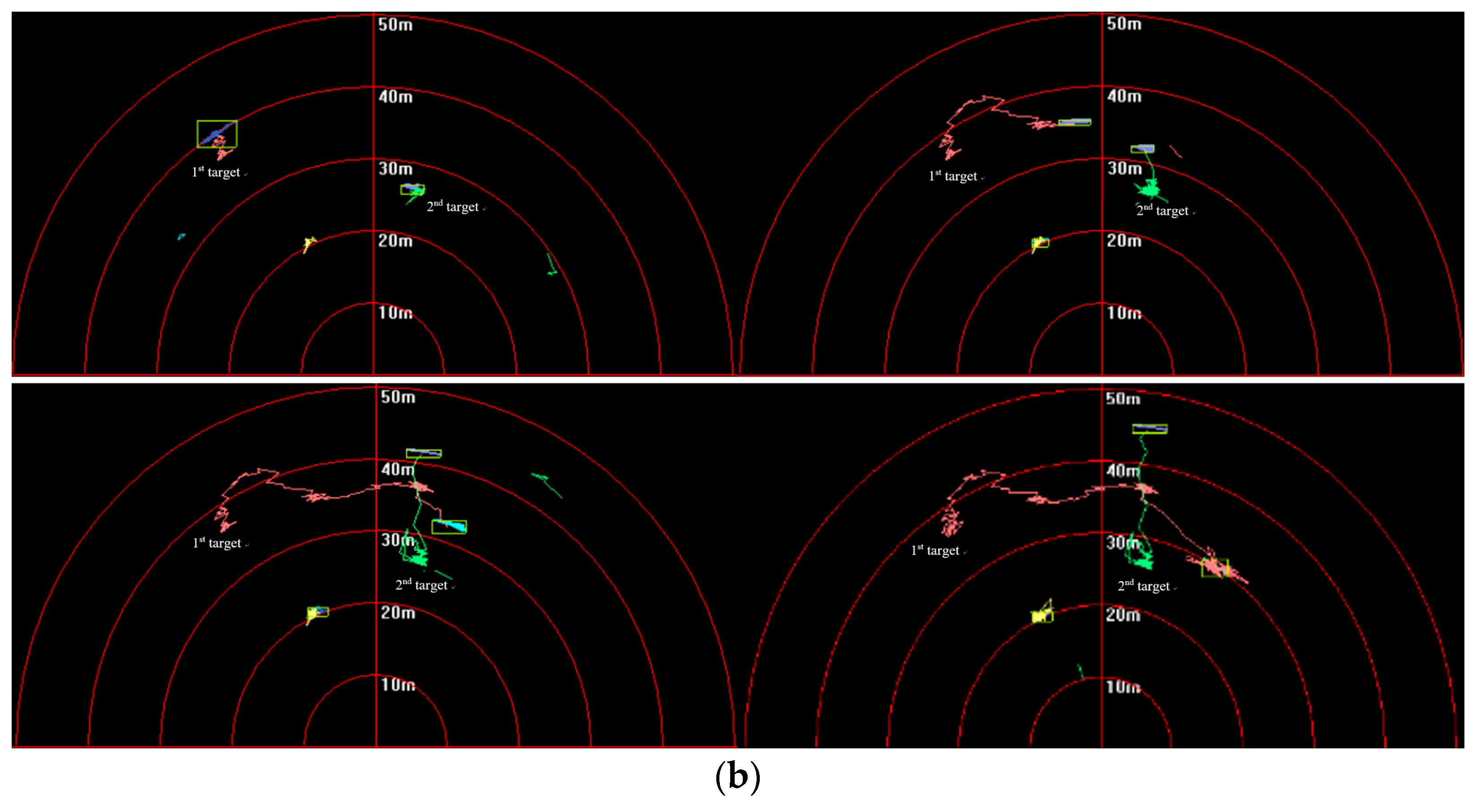

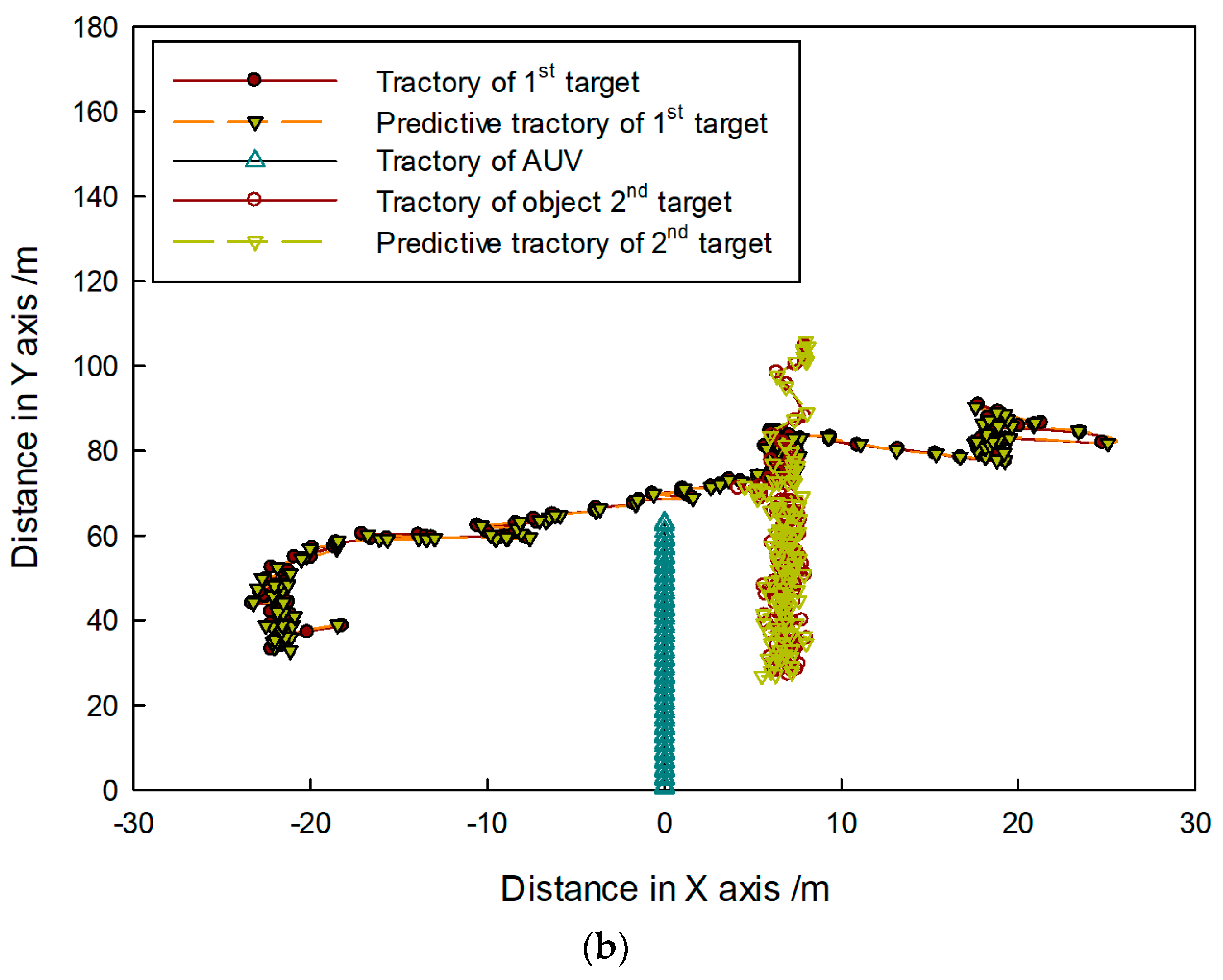

5.2.3. Target-Tracking Test under Crossing-Movement Condition

6. Conclusions

Author Contributions

Funding

Conflicts of Interest


| Parameter | Operating Frequency | Horizontal Beam Width | Vertical Beam Width | Maximum Range | Range Resolution | Scan Size | Weight |
|---|---|---|---|---|---|---|---|
| Low-frequency model | 325 kHz | 3.0° | 20° | 300 m | about 15 m | 0°–360° | 3 kg in air, 1.4 kg in water |
| High-frequency model | 675 kHz | 1.5° | 40° | 100 m | about 15 m | 0°–360° | 3 kg in air, 1.4 kg in water |
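Characteristic (1) in Section 2 can be made concrete with the horizontal beam widths in the table above. The sketch below is a rough illustration rather than part of the original analysis: it assumes the across-beam footprint of a single beam is the small-angle arc length $R\theta$ (range times beam width in radians), and the function name `cross_range_footprint` is hypothetical.

```python
import math


def cross_range_footprint(range_m: float, beam_width_deg: float) -> float:
    """Approximate across-beam footprint (m) of one beam at the given range.

    Uses the small-angle arc length R * theta; two targets closer together than
    this across the beam are roughly unresolvable in a single beam.
    """
    return range_m * math.radians(beam_width_deg)


# Horizontal beam widths and maximum ranges from the sonar parameter table.
low = cross_range_footprint(300.0, 3.0)   # about 15.7 m
high = cross_range_footprint(100.0, 1.5)  # about 2.6 m
print(f"low-frequency: {low:.1f} m, high-frequency: {high:.1f} m")
```

Even at the shorter range of the high-frequency model, one beam spans metres across track, which is why small targets occupy only a few low-contrast pixels.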

| No. | Function |
|---|---|
| (a) | |
| 1 | Area |
| 2 | Perimeter length |
| 3 | Mean intensity |
| 4 | Intensity standard deviation |
| 5 | Compactness |
| 6 | Background mean |
| (b) | |
| 7 | |
| 8 | |
| 9 | |
| (c) | |
| 10 | |
| 11 | |
| 12 | |
| 13 | |
| (d) | |
| 14 | |
| 15 | |
| 16 | |
| 17 | |
| 18 | |
| 19 | |
| 20 | |
| (e) | |
| 21 | Inertia |
| 22 | Entropy |
| 23 | Angular second moment |
| 24 | Inverse difference moment |
| 25 | Correlation |
| 26 | Variance |
| 27 | Sum average |
| 28 | Sum entropy |
| 29 | Sum variance |
| 30 | Difference entropy |
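Features 21–30 in group (e) are gray-level co-occurrence (Haralick-style) texture measures. The sketch below computes four of them (inertia, entropy, angular second moment, and inverse difference moment) from a normalized co-occurrence matrix. It is an illustration under assumptions: the pixel offset (one pixel to the right), the number of gray levels, and the use of a non-symmetric matrix are choices made here rather than settings taken from the paper, and `glcm` and `texture_features` are hypothetical names.

```python
import math


def glcm(image, levels, dx=1, dy=0):
    """Normalized gray-level co-occurrence matrix for one pixel offset (dx, dy)."""
    counts = [[0] * levels for _ in range(levels)]
    rows, cols = len(image), len(image[0])
    total = 0
    for r in range(rows):
        for c in range(cols):
            r2, c2 = r + dy, c + dx
            if 0 <= r2 < rows and 0 <= c2 < cols:
                counts[image[r][c]][image[r2][c2]] += 1
                total += 1
    return [[v / total for v in row] for row in counts]


def texture_features(p):
    """Four of the co-occurrence features listed in group (e)."""
    n = len(p)
    cells = [(i, j, p[i][j]) for i in range(n) for j in range(n)]
    return {
        "inertia": sum((i - j) ** 2 * v for i, j, v in cells),
        "entropy": -sum(v * math.log(v) for _, _, v in cells if v > 0),
        "angular_second_moment": sum(v * v for _, _, v in cells),
        "inverse_difference_moment": sum(v / (1 + (i - j) ** 2) for i, j, v in cells),
    }


# A tiny 4-level patch standing in for a quantized sonar target region.
patch = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 2, 2, 2],
    [2, 2, 3, 3],
]
features = texture_features(glcm(patch, levels=4))
print(features)
```

Inertia grows when neighbouring pixels differ strongly, while the angular second moment and inverse difference moment grow for smooth, homogeneous regions, so together they separate speckled targets from flat background.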

| Algorithm of SBS | |
|---|---|
| 1 | Start with the full set $Y_0 = X$ |
| 2 | Remove the worst feature $x^{-} = \arg\max_{x \in Y_k} J(Y_k - x)$ |
| 3 | Update $Y_{k+1} = Y_k - x^{-}$; $k = k + 1$ |
| 4 | Go to 2 |

| Algorithm of SFS | |
|---|---|
| 1 | Start with the empty set $Y_0 = \emptyset$ |
| 2 | Select the next best feature $x^{+} = \arg\max_{x \notin Y_k} J(Y_k + x)$ |
| 3 | Update $Y_{k+1} = Y_k + x^{+}$; $k = k + 1$ |
| 4 | Go to 2 |
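SFS is the mirror image of SBS and can be sketched the same way. As before, the stopping size and the criterion are assumptions, and `toy_criterion` with its relevance scores is a hypothetical stand-in for a real evaluation of each candidate subset.

```python
# Hypothetical per-feature relevance scores; features 1 and 2 are treated as redundant.
RELEVANCE = {1: 0.9, 2: 0.8, 3: 0.3, 4: 0.7, 5: 0.1, 6: 0.5}


def toy_criterion(subset):
    """Hypothetical criterion J: summed relevance minus a redundancy penalty."""
    score = sum(RELEVANCE[f] for f in subset)
    if 1 in subset and 2 in subset:
        score -= 0.6
    return score


def sfs(features, criterion, target_size):
    """Sequential forward selection up to target_size features, in order of addition."""
    selected = []  # Step 1: start with the empty set
    remaining = list(features)
    while len(selected) < target_size and remaining:
        # Step 2: the next best feature is the one that most improves the subset.
        best = max(remaining, key=lambda f: criterion(selected + [f]))
        selected.append(best)  # Step 3: update the subset, then repeat (Step 4)
        remaining.remove(best)
    return selected


order = sfs([1, 2, 3, 4, 5, 6], toy_criterion, target_size=3)
print(order)  # [1, 4, 6]
```

Because SFS returns features in the order they were added, its output is a ranking, which is the form in which the SFS feature order is reported in the tables that follow.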

| No. | Description |
|---|---|
| 1 | Only the first target moves. |
| 2 | Only the second target moves. |
| 3 | Only the third target moves. |
| 4 | Only the fourth target moves. |
| 5 | First and fourth targets move together in the same direction. |
| 6 | Second and fourth targets move together in the same direction. |
| 7 | Fourth and fifth targets move together in the same direction. |
| 8 | Third and fourth targets move in opposite directions, and their trajectories cross. |
| 9 | Third and fifth targets move in opposite directions, and their trajectories cross. |
| 10 | First and second targets move in opposite directions, and their trajectories cross. |
| 11 | Second and third targets move in opposite directions, and their trajectories cross. |
| 12 | Second, third, and fourth targets move together in the same direction. |
| 13 | Second, third, and fourth targets move in opposite directions. |
| 14 | The second target does not move. |

| Interval No. | B | C | D | E | F | G |
|---|---|---|---|---|---|---|
| Feature No. | 1–5 | 6–10 | 11–15 | 16–20 | 21–25 | 26–30 |

| Feature Order Gained by SFS | 1 | 2 | 3 | 4 | 5 |
|---|---|---|---|---|---|
| Feature No. | 20 | 17 | 3 | 6 | 24 |

| Step | Operation |
|---|---|
| 1 | Calculate value , which is written as: |
| 2 | Design fuzzy controller to translate and to fuzzy domain; fuzzy-rule table is shown in Table 9. |
| 3 | Input and into the fuzzy controller, and obtain fuzzy output of ith feature. |
| 4 | Calculate weighting coefficients of each feature , which is written as: |

|  |  |  |  |  |
|---|---|---|---|---|
| 3 | 4 | 4 | 5 | 5 |
| 2 | 3 | 4 | 4 | 5 |
| 2 | 2 | 3 | 4 | 4 |
| 1 | 2 | 2 | 3 | 4 |
| 1 | 1 | 2 | 2 | 3 |
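The fusion steps and the fuzzy-rule table above can be sketched as a table lookup followed by normalization. Several pieces are assumptions because the extracted text lost them: the two inputs per feature are taken to be already scaled to [0, 1] and quantized uniformly into five levels, the rows and columns of the rule table are assumed to index the first and second input respectively, and the weighting coefficient of Step 4 is assumed to be each feature's fuzzy output divided by the sum over all features. `quantize` and `feature_weights` are hypothetical names.

```python
# Fuzzy-rule table as printed above. Its row/column labels were lost, so this sketch
# assumes row = quantized level of the first input, column = level of the second (0-4).
RULES = [
    [3, 4, 4, 5, 5],
    [2, 3, 4, 4, 5],
    [2, 2, 3, 4, 4],
    [1, 2, 2, 3, 4],
    [1, 1, 2, 2, 3],
]


def quantize(value, lo, hi, levels=5):
    """Map a crisp value in [lo, hi] to an integer level 0..levels-1 (clamped)."""
    t = (value - lo) / (hi - lo)
    return min(levels - 1, max(0, int(t * levels)))


def feature_weights(inputs):
    """inputs: one (first, second) pair per feature, each scaled to [0, 1]."""
    outputs = [RULES[quantize(a, 0.0, 1.0)][quantize(b, 0.0, 1.0)] for a, b in inputs]
    total = sum(outputs)
    return [o / total for o in outputs]  # assumed normalization for Step 4


weights = feature_weights([(0.1, 0.9), (0.5, 0.5), (0.95, 0.05)])
print(weights)
```

Normalizing the fuzzy outputs keeps the fused weights summing to one, so a feature whose cue degenerates in a given frame automatically hands its share of the weight to the remaining features.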

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Zhang, T.; Liu, S.; He, X.; Huang, H.; Hao, K. Underwater Target Tracking Using Forward-Looking Sonar for Autonomous Underwater Vehicles. Sensors 2020, 20, 102. https://doi.org/10.3390/s20010102
