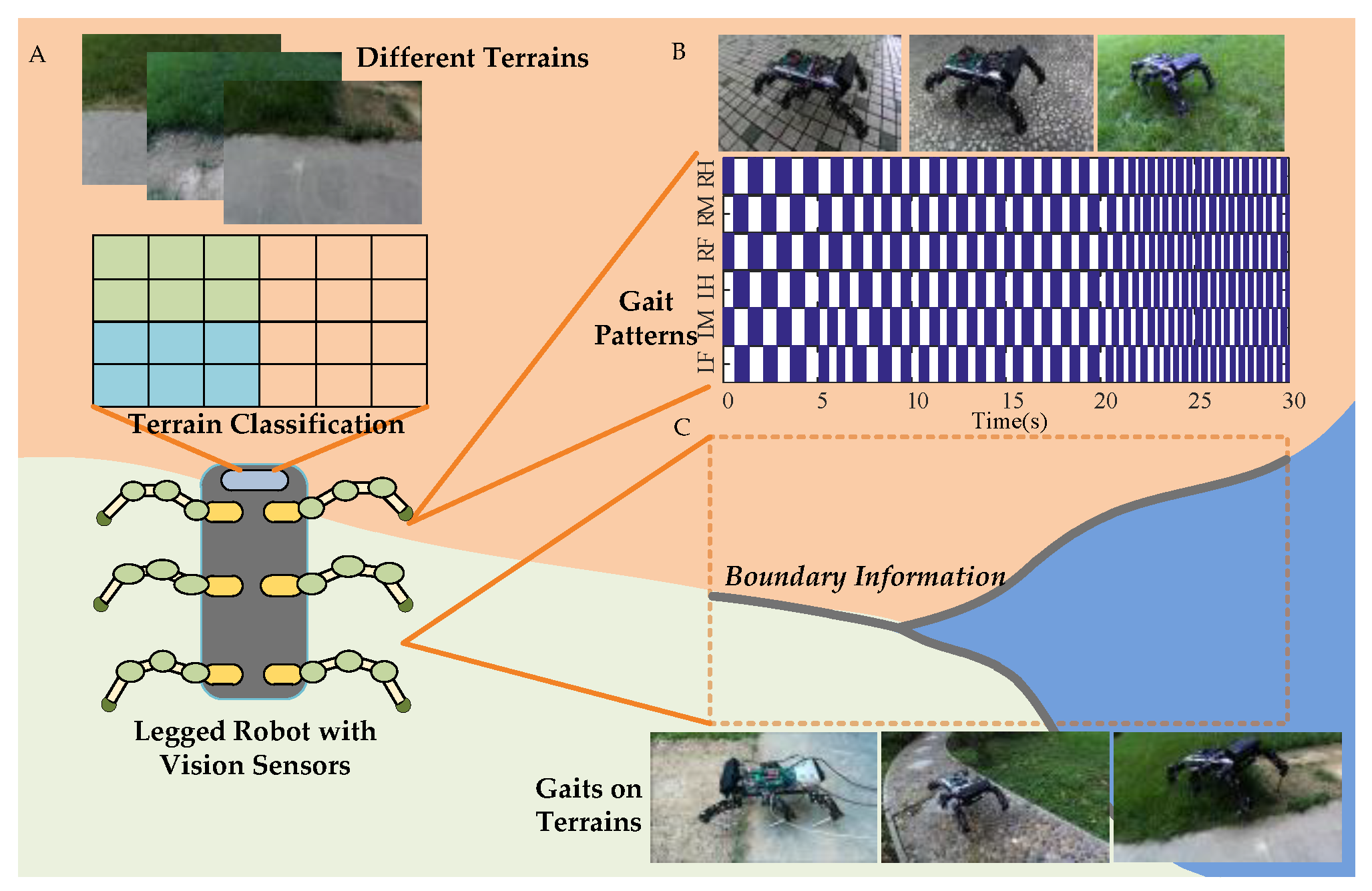

Superpixel Segmentation Based Synthetic Classifications with Clear Boundary Information for a Legged Robot

Abstract

1. Introduction

2. Motivations

3. Methods

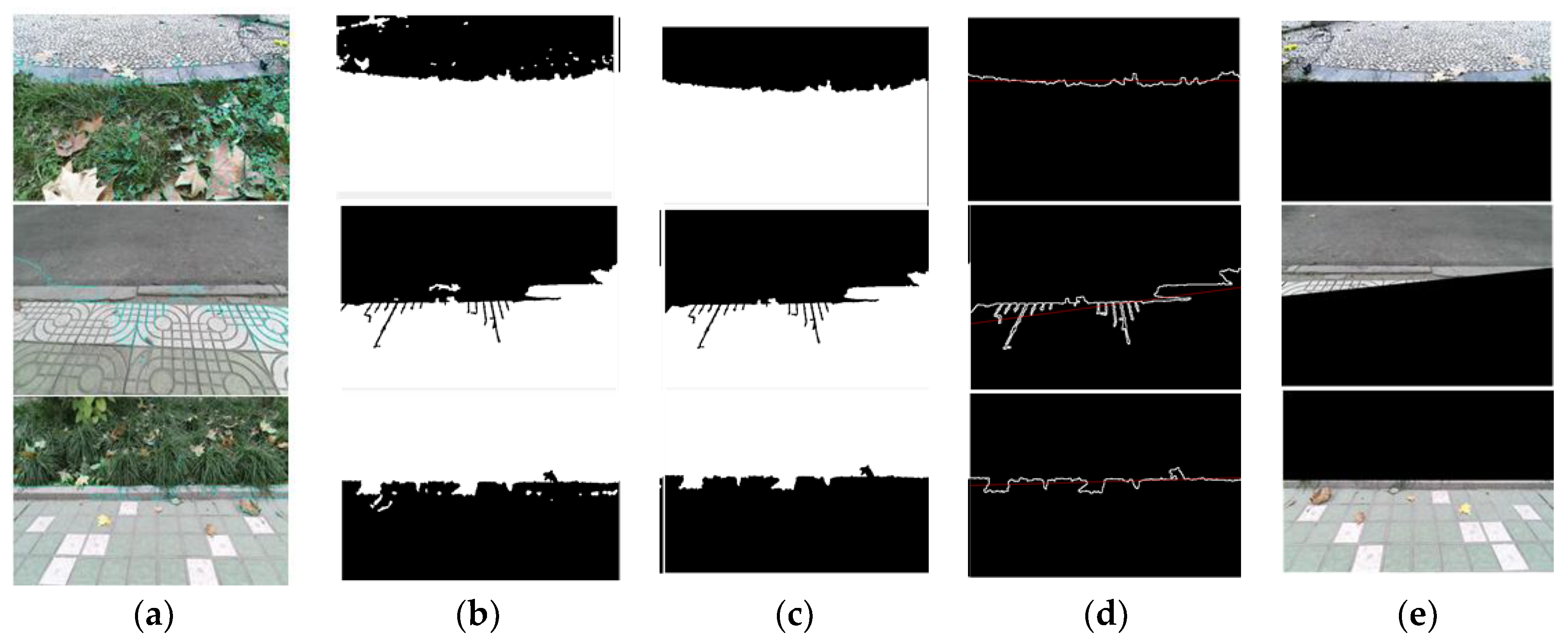

3.1. Superpixel Segmentation for Clear Boundary Information

- Initialize the cluster center. According to the set number of superpixels K, evenly distribute the seed points in the image. The superpixel size is N/K, where N is the number of pixels.

- Calculate the gradient values of all pixels in each seed point's neighborhood, and move the cluster center to the position of the lowest gradient within that neighborhood. This reduces the chance of placing a center on a noisy pixel.

- Assign a class label to each pixel in the neighborhood of each reselected cluster center. The desired superpixel size is S × S, and the search range around each center is 2S × 2S.

- Distance metrics. SLIC clusters pixels by color similarity and spatial proximity. Color similarity is measured in the (l, a, b) color space, and spatial proximity in the two-dimensional image coordinates (x, y), so the combined metric works in the five-dimensional space [l, a, b, x, y]. For each pixel i, the distance to a seed point j is calculated by:

  $$d_c = \sqrt{(l_j - l_i)^2 + (a_j - a_i)^2 + (b_j - b_i)^2}$$

  $$d_s = \sqrt{(x_j - x_i)^2 + (y_j - y_i)^2}$$

  $$D' = \sqrt{\left(\frac{d_c}{N_c}\right)^2 + \left(\frac{d_s}{N_s}\right)^2}$$

  where $d_c$ represents the color distance, $d_s$ represents the spatial distance, and $N_s$ is the maximum spatial distance in the cluster, with $N_s = S = \sqrt{N/K}$. The maximum color distance $N_c$ varies from picture to picture and from cluster to cluster, so a fixed constant is used here (value range [1, 40], generally 10). Since every pixel is searched by multiple seed points, every pixel has a distance to each of the surrounding seed points, and the seed point with the minimum distance is taken as the cluster center of that pixel.

- Iterative optimization is performed by: where I(x, y) denotes the experimental vector corresponding to the pixel position (x, y) and ‖·‖ denotes a norm. After every pixel in the image has been associated with a cluster center, each new center is computed as the average experimental vector of its pixels. Pixels are then re-associated with the nearest cluster center and the centers are recalculated, and this is repeated until convergence.

- Enhanced Connectivity. Distribute discontinuous superpixels and oversized superpixels to the neighboring superpixels. The traversed pixels are assigned to the corresponding labels until all points are traversed.
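The distance, assignment, and center-update steps listed above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names (`grid_interval`, `slic_distance`, `assign`, `update_center`) and the toy pixel values are hypothetical, pixels are represented as (l, a, b, x, y) tuples, and Nc = 10 is the typical constant mentioned in the text.

```python
import math

def grid_interval(num_pixels, num_superpixels):
    """S = sqrt(N / K): spacing between the initial seed points."""
    return math.sqrt(num_pixels / num_superpixels)

def slic_distance(pixel, seed, S, Nc=10.0):
    """Combined distance between two (l, a, b, x, y) vectors."""
    dc = math.dist(pixel[:3], seed[:3])   # color distance in (l, a, b)
    ds = math.dist(pixel[3:], seed[3:])   # spatial distance in (x, y)
    return math.sqrt((dc / Nc) ** 2 + (ds / S) ** 2)

def assign(pixel, seeds, S, Nc=10.0):
    """Index of the closest seed whose 2S x 2S window contains the pixel."""
    best, best_d = None, math.inf
    for idx, seed in enumerate(seeds):
        # Skip seeds whose 2S x 2S search window does not cover the pixel.
        if abs(pixel[3] - seed[3]) > S or abs(pixel[4] - seed[4]) > S:
            continue
        d = slic_distance(pixel, seed, S, Nc)
        if d < best_d:
            best, best_d = idx, d
    return best

def update_center(members):
    """New cluster center: the mean of the assigned 5-D vectors."""
    return tuple(sum(c) / len(members) for c in zip(*members))

# Toy example (hypothetical values): a 10 x 10 image with K = 4 superpixels.
S = grid_interval(100, 4)
seeds = [(50, 0, 0, 2, 2), (80, 10, 10, 7, 2)]
pixel = (78, 9, 9, 4, 2)
nearest = assign(pixel, seeds, S)
```

In this toy case the pixel is spatially closer to the first seed, but its color is much closer to the second, so the combined distance assigns it to seed index 1. Lowering Nc makes color differences weigh more, while raising it produces more compact, grid-like superpixels.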

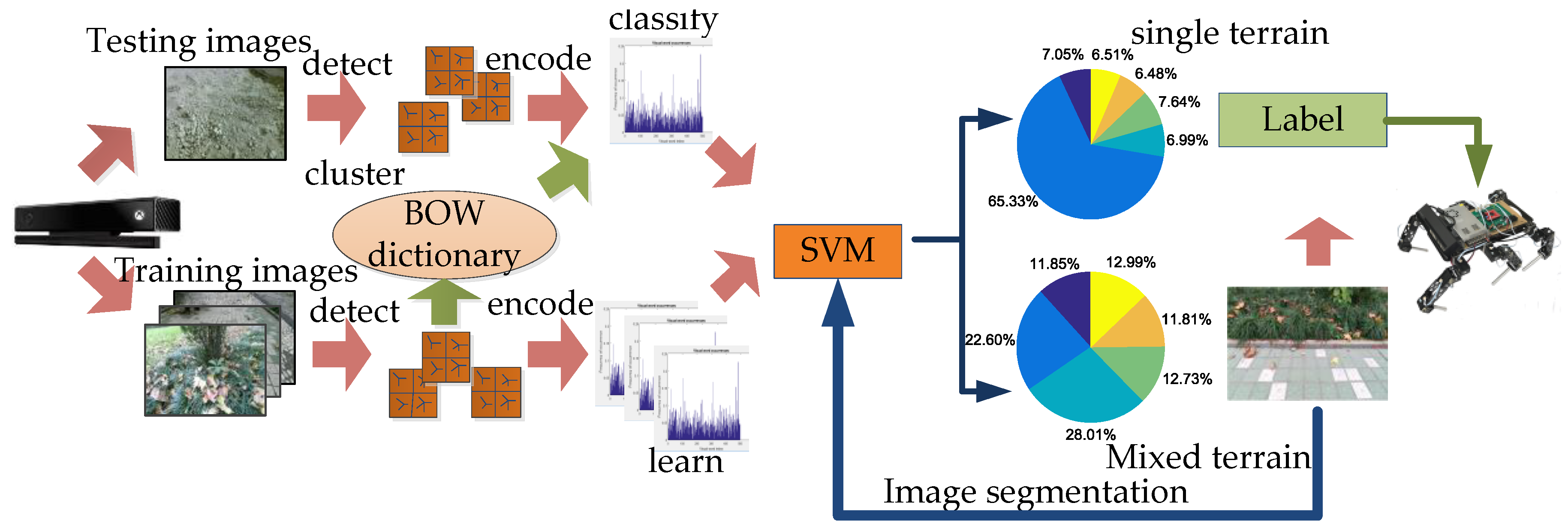

3.2. SLIC-SVM Terrain Classification

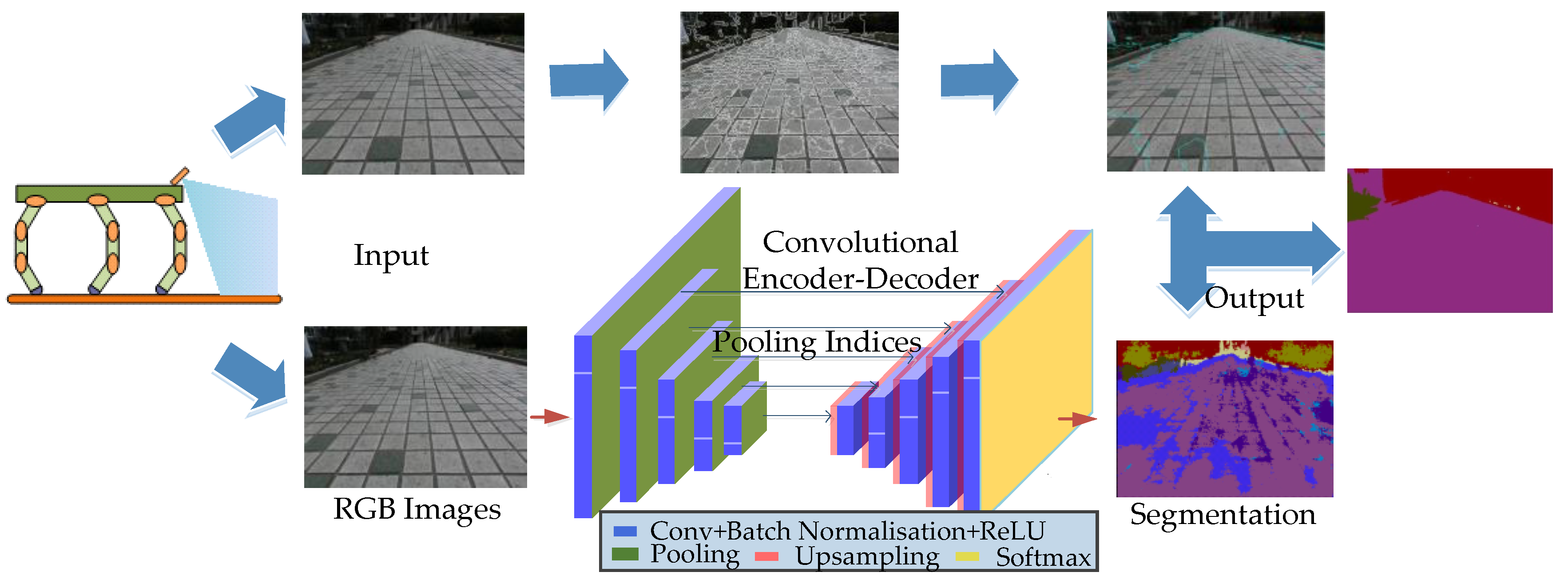

3.3. SLIC-SegNet Terrain Classification

Algorithm 1. Pseudo code of SLIC-SegNet algorithm.

Preparatory work for SegNet module [30]:

- a: Convolution operation, get the feature value x;
- b: Batch Normalizing Transform;
- c: Training a Batch-Normalized Network;
- Result: the trained SegNet module.

Initialize: k = {1 … K}, i = {1 … 480}, j = {1 … 360}, n = {1 … N}, m = {1 … M}. Bi (i = 1, …, n) represents the set of pixels in each sorted area, and the RGB components of each pixel are denoted as IBm(x, y). IAn(x, y) stands for the pixels in An. Mode represents the value of the most frequently occurring RGB components of all pixels in An, recorded as IMode.

- Repeat
  - (1) Collect kth image: Ik(xi, yj)
  - For k = 1 … K do
    - (2) Run the SLIC module:
      - Assign the best matching pixels;
      - Compute new cluster centers and residual error E;
      - until E ≤ threshold;
      - Enforce connectivity;
      - Output each pixel block ∈ {A1, A2 … AN}, where each pixel IAn(x, y) ∈ An.
    - (3) Run the trained SegNet module:
      - Activate feature value;
      - Deconvolution, get the feature value Xn;
      - Find the maximum probability of each pixel in all categories;
      - Output the label set ∈ {B1, B2 … BM}, IBm(x, y) ∈ Bm.
    - (4) Match the pixel blocks A with the label set B and define the set C:
      - For i = 1 … n
        - find each (xAn, yAn) and compute ICn = Mode(IB(xAn, yAn));
        - For each (xAn, yAn) ∈ An
          - Assign the Mode of the RGB component to ICn(xAn, yAn): ICn(xAn, yAn) = IMode
        - End for
      - End for
      - Output ∈ {C1, C2 … CN}, ICn(x, y) ∈ Cn.
    - (5) Gait selection and run the Robot;
  - End for
- Until the Robot is switched off.
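The matching step of Algorithm 1, in which every pixel of a superpixel block takes the most frequent value found inside that block, can be illustrated with a short sketch. It is a simplified stand-in rather than the authors' code: the function name `fuse_labels` is hypothetical, images are plain nested lists, and the vote is taken over class names instead of the RGB components of a color-coded SegNet output.

```python
from collections import Counter

def fuse_labels(superpixels, pixel_labels):
    """Give every pixel the most frequent label found in its superpixel.

    superpixels  -- 2-D list of superpixel ids (the SLIC output, blocks A_n)
    pixel_labels -- 2-D list of per-pixel class labels (the SegNet output, B)
    """
    votes = {}
    for sp_row, label_row in zip(superpixels, pixel_labels):
        for sp, label in zip(sp_row, label_row):
            votes.setdefault(sp, Counter())[label] += 1
    # Mode of the labels inside each superpixel.
    winner = {sp: counts.most_common(1)[0][0] for sp, counts in votes.items()}
    return [[winner[sp] for sp in row] for row in superpixels]

# Toy 2 x 4 image (hypothetical data): two superpixels, noisy per-pixel labels.
superpixels = [[0, 0, 1, 1],
               [0, 0, 1, 1]]
pixel_labels = [["grass", "grass", "tile", "grass"],
                ["grass", "tile", "tile", "tile"]]
fused = fuse_labels(superpixels, pixel_labels)
```

After fusion, the left block is uniformly "grass" and the right block uniformly "tile", so the class boundary follows the superpixel boundary instead of the noisy per-pixel prediction.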

4. Experiment

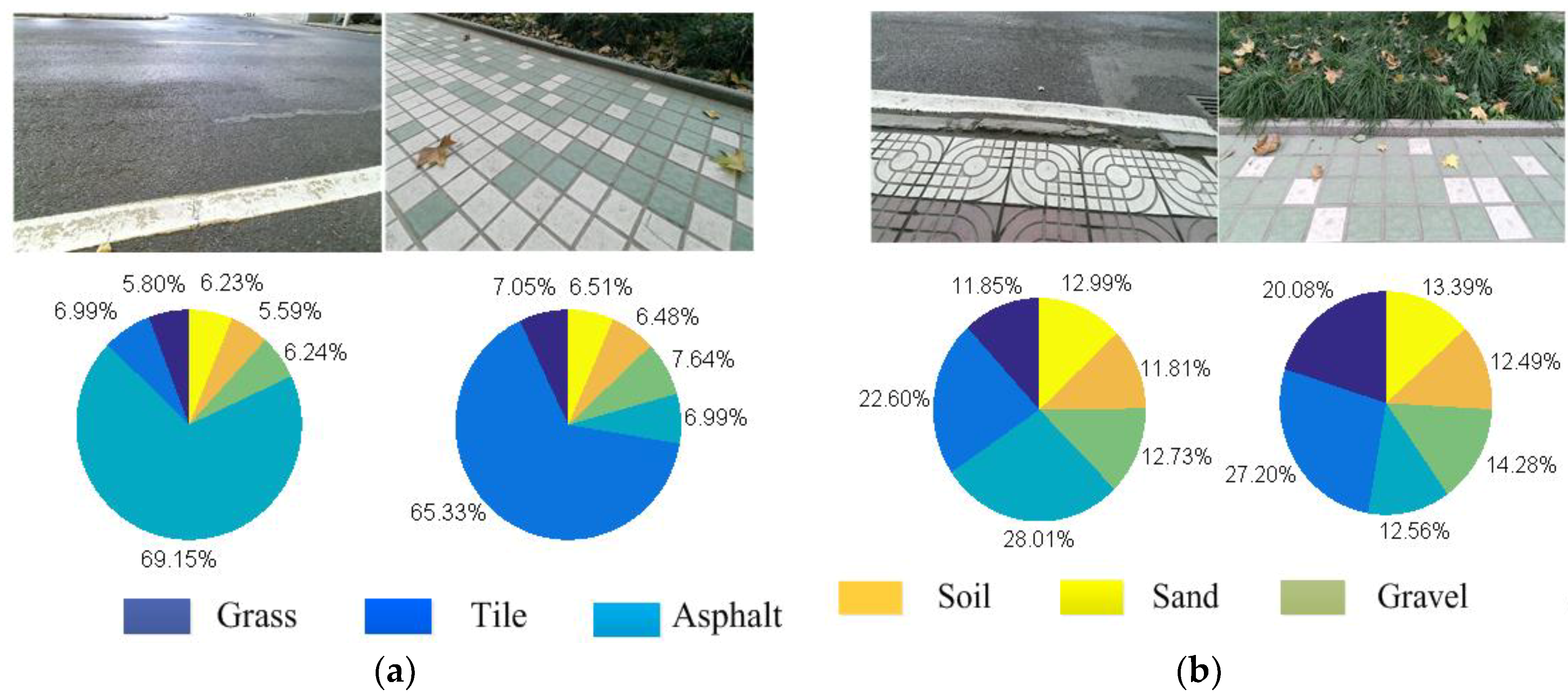

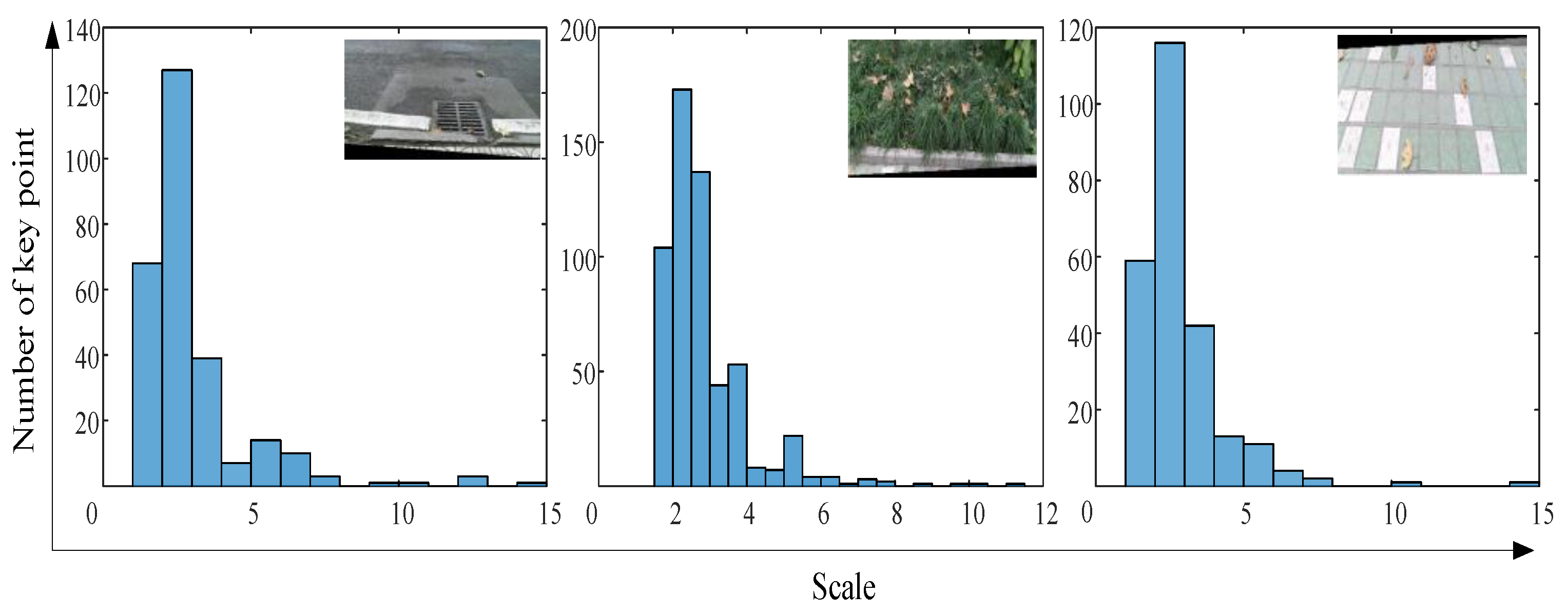

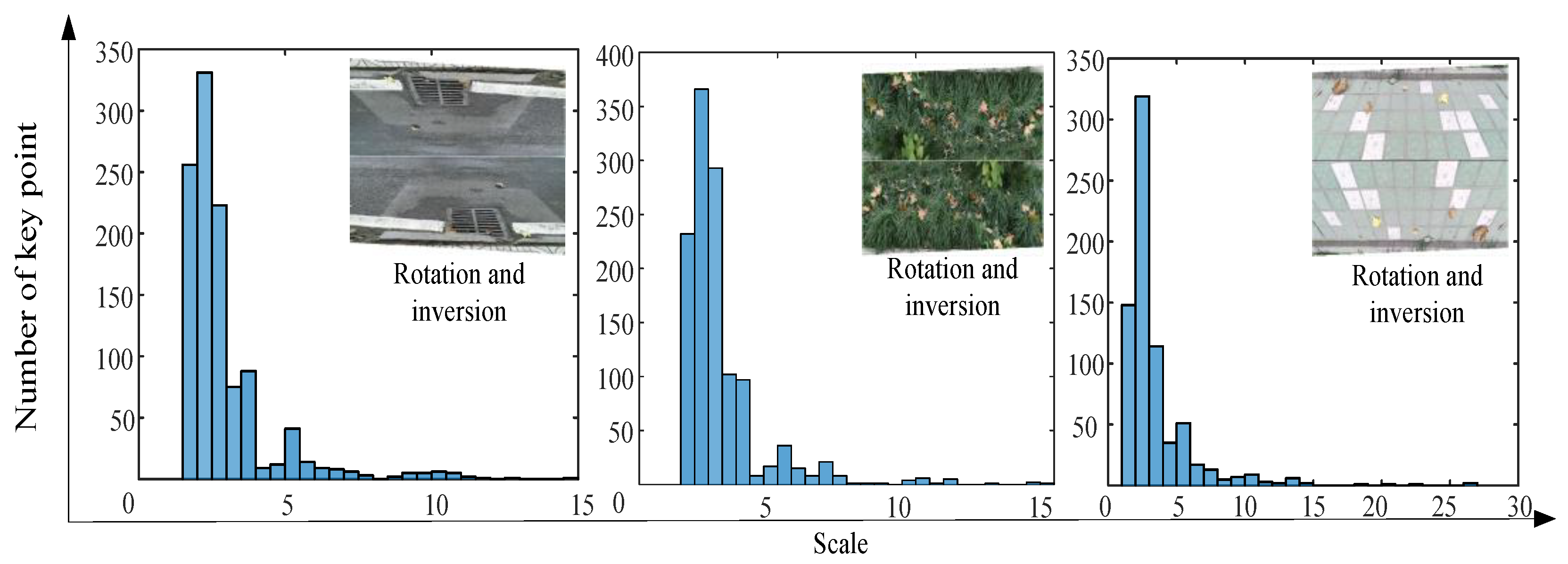

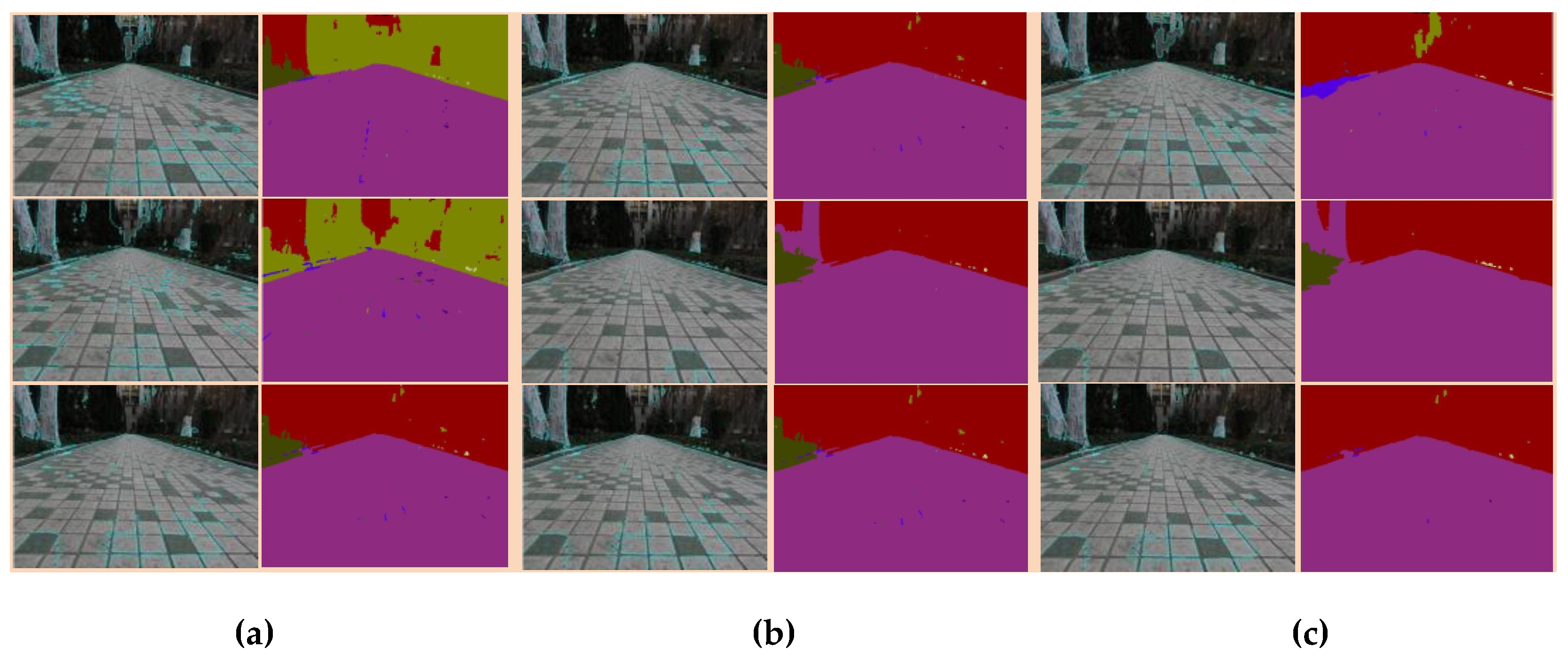

4.1. SLIC-SVM Experiments

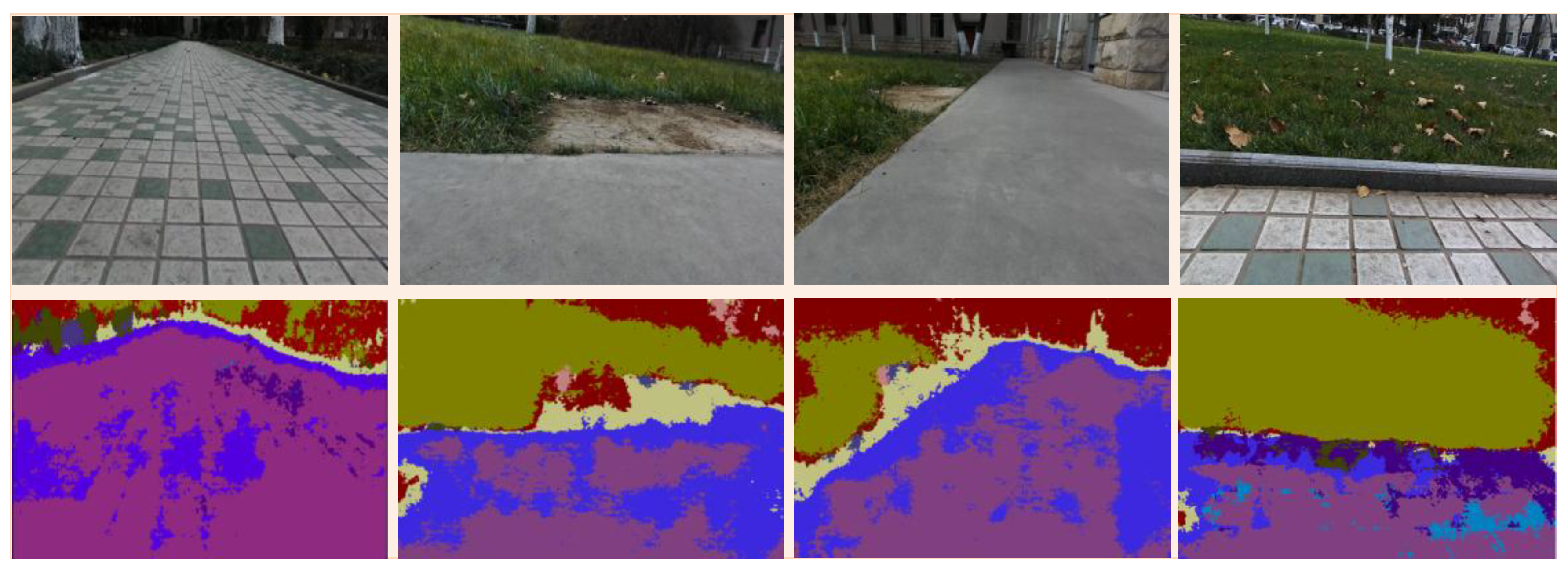

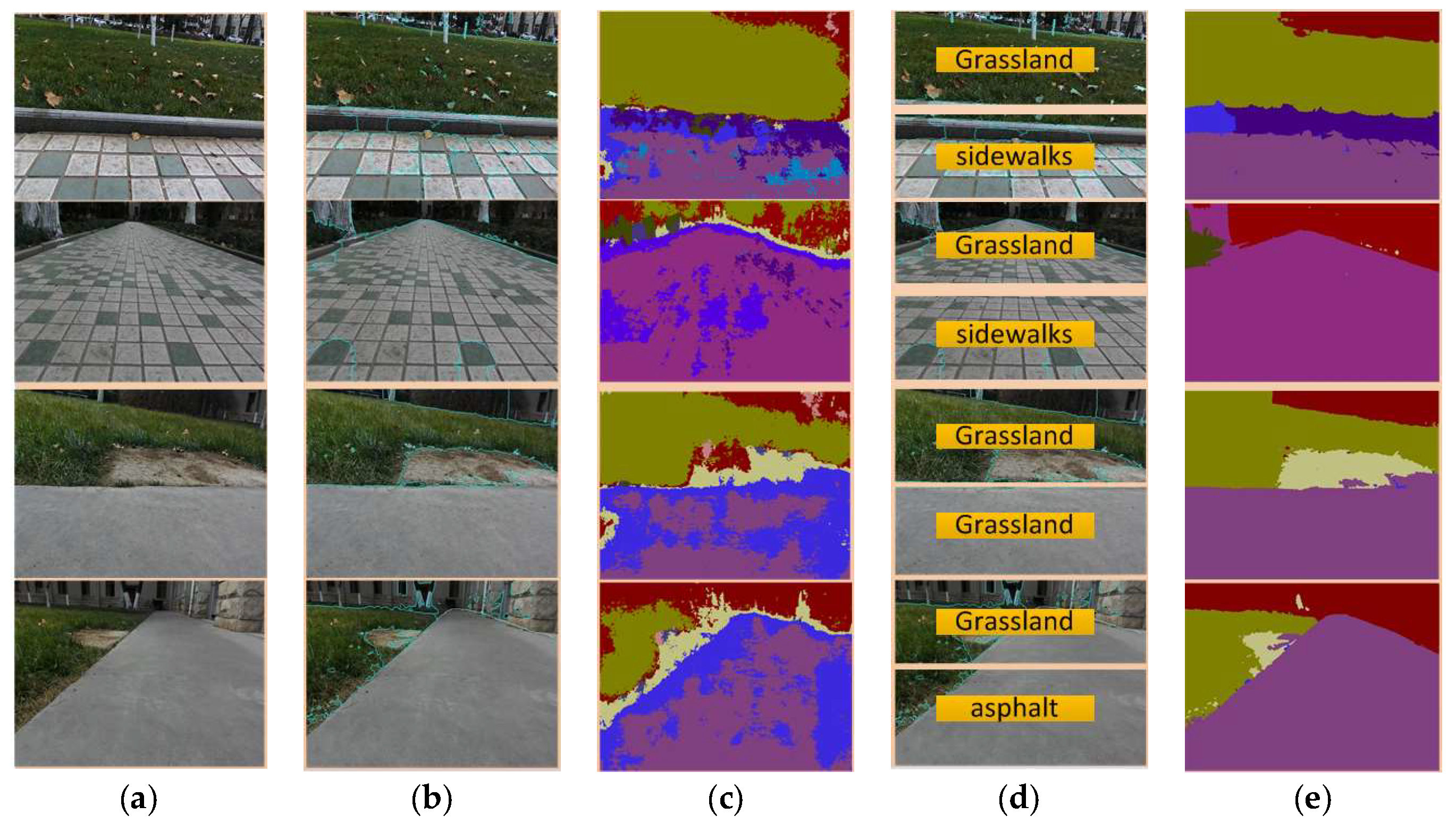

4.2. SLIC-SegNet Experiments

4.3. Comparison of SLIC-SVM and SLIC-SegNet

- (1) In contrast to the terrain classification method based on the SLIC-SVM, the convolutional neural network terrain classification method based on superpixel segmentation is a single-input multi-output model. From a single image of a mixed terrain, it can recognize and mark multiple terrains simultaneously. The SLIC-SVM, as a single-input single-output model, must first divide the different terrains and then identify them separately.

- (2) In mixed terrain classification with the SLIC-SVM method, the different terrains must be segmented first, and feature points are then extracted to recognize each terrain. However, the reduced number of feature points after image segmentation inevitably lowers the terrain recognition rate. The SLIC-SegNet processes the input image without prior segmentation, which preserves the pixels and feature points needed for classification, and it can identify a variety of mixed terrains accurately and quickly.

- (3) The SLIC-SVM can divide only mixed terrain with regular terrain features. Mixed terrain with irregular terrain features cannot be segmented, so the terrain cannot be identified accurately. The SLIC-SegNet terrain classification method can accurately identify each terrain type, even in irregular mixed terrains.

5. Discussion

6. Conclusions

- The SLIC-SVM is proposed to solve the problem that the SVM can output only a single terrain label and fails to identify mixed terrain. The presented method not only recognizes a variety of mixed terrains but also provides a clear terrain boundary for the gait transformation and stability of a multi-legged robot.

- The SLIC-SegNet single-input multi-output terrain classification model is derived to improve the applicability of the terrain classifier. High-quality terrain classification results are hard to obtain for legged robots, and the SLIC-SegNet provides satisfactory information without much effort.

- Both superpixel-segmentation-based synthetic classification methods supply reliable mixed terrain classification results with clear boundary information, and will put terrain-dependent gait selection and path planning of multi-legged robots into practice.

Author Contributions

Funding

Conflicts of Interest

References

1. Zhu, Y.G.; Jin, B. Trajectory Correction and Locomotion Analysis of a Hexapod Walking Robot with Semi-Round Rigid Feet. Sensors 2016, 9, 1392.

2. Ji, A.H.; Dai, Z.D. Research Development of Bio-inspired Robotics. Robot 2005, 27, 284–288.

3. Abbaspour, R. Design and implementation of multi-sensor based autonomous minesweeping robot. In Proceedings of the Ultra Modern Telecommunications & Control Systems & Workshops International Congre, Moscow, Russia, 18–20 October 2010; pp. 443–449.

4. Caltabiano, D.; Muscato, G.; Russo, F. Localization and self-calibration of a robot for volcano exploration. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), New Orleans, LA, USA, 26 April–1 May 2004.

5. Zhao, S.D.; Yuh, J.K. Experimental Study on Advanced Underwater Robot Control. IEEE Trans. Robot. 2005, 4, 695–703.

6. Cui, Y.; Gejima, Y.; Kobayashi, T. Study on Cartesian-Type Strawberry-Harvesting Robot. Sens. Lett. 2013, 11, 1223–1228.

7. Semler, L.; Furst, J. Wavelet-Based Texture Classification of Tissues in Computed Tomography. In Proceedings of the 18th IEEE Symposium on Computer-Based Medical Systems (CBMS'05), Ireland, UK, 23–24 June 2005.

8. Paschos, G. Perceptually uniform color spaces for color texture analysis: An empirical evaluation. IEEE Trans. Image Process. 2001, 10, 932–937.

9. Khan, Y.; Komma, P.; Bohlmann, K. Grid-based visual terrain classification for outdoor robot using local features. In Proceedings of the IEEE Conference on IEEE Symposium on Computational Intelligence in Vehicles and Transportation Systems, Paris, France, 11–15 April 2011; pp. 16–22.

10. Zenker, S.; Aksoy, E.E.; Goldschmidt, D. Visual Terrain Classification for Selecting Energy Efficient Gaits of a Hexapod Robot. In Proceedings of the 2013 IEEE/ASME International Conference on Advanced Intelligent Mechatronics, Wollongong, NSW, Australia, 9–12 July 2013; pp. 577–584.

11. Filitchkin, P.; Byl, K. Feature-based terrain classification for LittleDog. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robot & Systems, Vilamoura, Portugal, 7–12 October 2012.

12. Bao, S.; Chung, A.C.S. Multi-scale structured CNN with label consistency for brain MR image segmentation. Comput. Methods Biomech. Biomed. Eng. Imaging Vis. 2018, 6, 113–117.

13. Rothrock, B.; Kennedy, R.; Cunningham, C.; Papon, J.; Heverly, M.; Ono, M. Spoc: Deep learning-based terrain classification for Mars rover missions. In Proceedings of the AIAA SPACE 2016, Long Beach, CA, USA, 13–16 September 2016.

14. Levinshtein, A.; Stere, A.; Kutulakos, K.N.; Fleet, D.J.; Dickinson, S.J.; Siddiqi, K. Fast superpixels using geometric flows. IEEE Trans. Pattern Anal. Mach. Intell. 2009, 31, 2290–2297.

15. Stutz, D.; Hermans, A.; Leibe, B. Superpixels: An evaluation of the state-of-the-art. Comput. Vis. Image Underst. 2018, 166, 1–27.

16. Ren, X.; Malik, J. Learning a Classification Model for Segmentation. In Proceedings of the IEEE International Conference on Computer Vision, Nice, France, 13–16 October 2003; pp. 11–17.

17. Song, X.; Zhou, L.; Li, Z. Review on superpixel methods in image segmentation. J. Image Graph. 2015, 20, 599–608.

18. Nguyen, B.P.; Heemskerk, H.; So, P.T.C.; Tucker-Kellogg, L. Superpixel-based segmentation of muscle fibers in multi-channel microscopy. BMC Syst. Biol. 2016, 10 (Suppl. 5), 39–50.

19. Chen, X.; Nguyen, B.P.; Chui, C.K.; Ong, S.H. Automated brain tumor segmentation using kernel dictionary learning and superpixel-level features. In Proceedings of the 2016 IEEE International Conference on Systems, Man, and Cybernetics (SMC), Budapest, Hungary, 9–12 October 2016.

20. Marcin, C. Automated coronal hole segmentation from Solar EUV Images using the watershed transform. J. Vis. Commun. Image Represent. 2015, 33, 203–218.

21. Cousty, J.; Bertrand, G.; Najman, L.; Couprie, M. Watershed cuts: Thinnings, shortest path forests, and topological watershed. IEEE Trans. Pattern Anal. Mach. Intell. 2010, 32, 925–939.

22. Zhang, K.; Zhang, L.; Lam, K.M.; Zhang, D. A level set approach to image segmentation with intensity inhomogeneity. IEEE Trans. Cybern. 2016, 46, 546–557.

23. Min, H.; Jia, W.; Wang, X.F.; Zhao, Y.; Hu, R.X.; Luo, Y.T. An intensity-texture model based level set method for image segmentation. Pattern Recognit. 2015, 48, 1547–1562.

24. Holder, C.J.; Breckon, T.P. From On-Road to Off: Transfer Learning within a Deep Convolutional Neural Network for Segmentation and Classification of Off-Road Scenes. In European Conference on Computer Vision, Proceedings of the Computer Vision – ECCV 2016 Workshops, Amsterdam, The Netherlands, 8–10 and 15–16 October 2016; Springer: Berlin/Heidelberg, Germany, 2016.

25. Ordonez, C. Terrain identification for RHex-type robots. Unmanned Syst. Technol. XV 2013, 3, 292–298.

26. Lee, S.Y.; Kwak, D.M. A terrain classification method for UGV autonomous navigation based on SRUF. In Proceedings of the International Conference on Ubiquitous Robots & Ambient Intelligence, Incheon, Korea, 23–26 November 2011; pp. 303–306.

27. Dallaire, P. Learning Terrain Types with the Pitman-Yor Process Mixtures of Gaussians for a Legged Robot. In Proceedings of the Intelligent Robots and Systems (IROS), Hamburg, Germany, 28 September–2 October 2015.

28. Manduchi, R.; Castano, A.; Talukder, A. Obstacle Detection and Terrain Classification for Autonomous Off-Road Navigation. Auton. Robot. 2005, 1, 81–102.

29. Greiffenhagen, M.; Ramesh, V.; Comaniciu, D.; Niemann, H. Statistical modeling and performance characterization of a real-time dual camera surveillance system. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Hilton Head Island, SC, USA, 15 June 2000.

30. Badrinarayanan, V.; Handa, A.; Cipolla, R. Segnet: A deep convolutional encoder-decoder architecture for robust semantic pixel-wise labelling. arXiv 2015, arXiv:1505.07293.

31. Hoepflinger, M.A.; Remy, C.D.; Hutter, M.; Haag, S.; Siegwart, R. Haptic Terrain Classification on Natural Terrains for Legged Robots. In Proceedings of the International Conference on Climbing & Walking Robot, Anchorage, AK, USA, 3–8 May 2010.

32. Hoffmann, M.; Štěpánová, K.; Reinstein, M. The effect of motor action and different sensory modalities on terrain classification in a quadruped robot running with multiple gaits. Robot. Autonom. Syst. 2014, 62, 1790–1798.

33. Zhu, Y.; Wu, Y.S.; Liu, Q. A backward control based on σ-Hopf oscillator with decoupled parameters for smooth locomotion of bio-inspired legged robot. Robot. Autonom. Syst. 2018, 106, 165–178.

34. Bay, H.; Ess, A.; Tuytelaars, T.; Van Gool, L. Speeded-Up Robust Features (SURF). Comput. Vis. Image Underst. 2008, 110, 346–359.

35. Comaniciu, D.; Meer, P. Mean shift: A robust approach toward feature space analysis. IEEE Trans. Pattern Anal. Mach. Intell. 2002, 5, 603–619.

36. Rekeczky, C. CNN architectures for constrained diffusion based locally adaptive image processing. Int. J. Circuit Theory Appl. 2002, 30, 313–348.

37. Ioffe, S.; Szegedy, C. Batch Normalization: Accelerating Deep Network Training by Reducing Internal Covariate Shift. arXiv 2015, arXiv:1502.03167.

38. Achanta, R.; Shaji, A.; Smith, K.; Lucchi, A.; Fua, P.; Süsstrunk, S. Slic superpixels compared to state-of-the-art superpixel methods. IEEE Trans. Pattern Anal. Mach. Intell. 2012, 34, 2274–2282.

39. Gonzalez, R.; Iagnemma, K. DeepTerramechanics: Terrain Classification and Slip Estimation for Ground Robots via Deep Learning. arXiv 2018, arXiv:1806.07379.

40. Valada, A.; Spinello, L.; Burgard, W. Deep Feature Learning for Acoustics-Based Terrain Classification; Springer: Cham, Switzerland, 2017.

41. Maghsoudi, O.H. Superpixels based marker tracking vs. hue thresholding in rodent biomechanics application. In Proceedings of the 2017 51st Asilomar Conference on Signals, Systems, and Computers, Pacific Grove, CA, USA, 29 October–1 November 2017.

42. Maghsoudi, O.H.; Vahedipour, A.; Robertson, B.; Spence, A. Application of Superpixels to Segment Several Landmarks in Running Rodents. arXiv 2018, arXiv:1804.02574.

| Images |  |  |  |  |  |
|---|---|---|---|---|---|
| Actual terrain | Tile | Grass | Tile | Grass | Grass |
| Output label | Asphalt | Tile | Asphalt | Asphalt | Soil |

| Actual Terrain | Tile |  | Grass |  | Tile |  | Grass |  | Grass |  |
|---|---|---|---|---|---|---|---|---|---|---|
| Output Label | Asphalt |  | Tile |  | Asphalt |  | Asphalt |  | Soil |  |
| R-I | Tile |  | Grass |  | Tile |  | Grass |  | Grass |  |
| Scores | Before | After | Before | After | Before | After | Before | After | Before | After |
| Sand | 12.21 | 12.78 | 14.55 | 11.94 | 12.53 | 12.18 | 11.19 | 10.41 | 12.95 | 7.59 |
| Grass | 10.40 | 13.33 | 14.56 | 29.30 | 12.88 | 14.92 | 24.59 | 39.52 | 16.71 | 59.12 |
| Asphalt | 33.39 | 14.29 | 19.72 | 13.47 | 25.05 | 18.32 | 26.70 | 11.16 | 18.17 | 7.96 |
| Gravel | 12.73 | 13.41 | 17.71 | 20.01 | 12.27 | 12.79 | 12.66 | 15.61 | 15.85 | 8.17 |
| Tile | 20.38 | 35.05 | 19.95 | 12.96 | 24.86 | 29.37 | 12.27 | 11.41 | 16.12 | 8.16 |
| Soil | 10.89 | 11.15 | 13.51 | 12.33 | 12.42 | 12.42 | 12.59 | 11.89 | 20.20 | 9.30 |
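The relationship between the scores and the labels in the table above can be checked with a short script. It assumes that the reported label is simply the terrain with the highest score in a column. This rule is not stated explicitly in the text, but it is consistent with every column of the table. The names `SCORES`, `ACTUAL`, and `top_label` are illustrative only.

```python
# Scores transcribed from the table: one (before, after) pair per test image.
TERRAINS = ["Sand", "Grass", "Asphalt", "Gravel", "Tile", "Soil"]
SCORES = {
    "Sand":    [(12.21, 12.78), (14.55, 11.94), (12.53, 12.18), (11.19, 10.41), (12.95, 7.59)],
    "Grass":   [(10.40, 13.33), (14.56, 29.30), (12.88, 14.92), (24.59, 39.52), (16.71, 59.12)],
    "Asphalt": [(33.39, 14.29), (19.72, 13.47), (25.05, 18.32), (26.70, 11.16), (18.17, 7.96)],
    "Gravel":  [(12.73, 13.41), (17.71, 20.01), (12.27, 12.79), (12.66, 15.61), (15.85, 8.17)],
    "Tile":    [(20.38, 35.05), (19.95, 12.96), (24.86, 29.37), (12.27, 11.41), (16.12, 8.16)],
    "Soil":    [(10.89, 11.15), (13.51, 12.33), (12.42, 12.42), (12.59, 11.89), (20.20, 9.30)],
}
ACTUAL = ["Tile", "Grass", "Tile", "Grass", "Grass"]

def top_label(image, stage):
    """Terrain with the highest score for one image (stage 0 = before, 1 = after)."""
    return max(TERRAINS, key=lambda terrain: SCORES[terrain][image][stage])

before = [top_label(i, 0) for i in range(len(ACTUAL))]
after = [top_label(i, 1) for i in range(len(ACTUAL))]
```

Under this assumption, `before` reproduces the "Output Label" row (Asphalt, Tile, Asphalt, Asphalt, Soil), and `after` reproduces the "R-I" row, which matches the actual terrain for all five images.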

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Zhu, Y.; Luo, K.; Ma, C.; Liu, Q.; Jin, B. Superpixel Segmentation Based Synthetic Classifications with Clear Boundary Information for a Legged Robot. Sensors 2018, 18, 2808. https://doi.org/10.3390/s18092808
