1. Introduction

Noise interferences often occur in many systems such as wireless communications [

1] and social networks [

2,

3]. Hence, images are inevitably corrupted by both blur and noise during the acquisition and transmission. Hence, the restoration of clean images from blurred and noisy observations is a fundamental task in the image processing community. A wide range of approaches has been proposed to remove additive Gaussian noise [

4,

5,

6]. However, many other noises, such as impulse noise [

7,

8,

9,

10,

11,

12], multiplicative noise [

13,

14], Poisson noise [

15,

16,

17], Cauchy noise [

18,

19], and Rician noise [

20], commonly appear in the real world and thus are studied by many researchers. Another impulsive noise is often caused by alpha-stable noise, which normally appears in many applications, such as wireless communication systems, synthetic aperture radar (SAR) images, biomedical images, and medical ultrasound images [

21,

22].

Mathematically, the image restoration problem can be expressed as

where

is obtained from a two-dimensional pixel-array with dimension

and defined on a connected bounded domain

with compact Lipschitz boundary,

denotes a known linear and continuous blurring operator,

is the noise obeys certain distribution (for example alpha-stable noise is the noise which obeying alpha-stable distribution), and

is the blurred image with the additive noise. In particular, when

f is corrupted only by noise, it is then given by

.

It is well known that restoring

u from

f is normally an ill-conditioned problem. Variational methods are proposed to handle this ill-posed inverse imaging problems. These methods are usually summarized as convex and non-convex methods, respectively. The total variation (TV) regularization method [

23] plays a significant role in convex variational-based image processing, since it can preserve sharp edges in images due to the piecewise smooth property of the TV norm.

The ROF (Rudin Osher and Fatemi) denoising model is one of the most famous total variational models for restoring images with additive Guassian noise, which was proposed by Rudin et al. [

6], as given by

where

is the TV regularization term,

is the space of the functions of bounded variation,

is the data fidelity term, and

is the regularization parameter, which represents the trade-off between the data fidelity term and the TV regularization term. It is possible to modify the ROF denoising model to incorporate a linear blurring operator

K [

6]. The ROF deblurring and denoising model is then given as follows:

Although the ROF deblurring and denoising model is a very useful deblurring and denoising approach with additive Gaussian noise, it does not achieve good performance in the scenario of non-Guassian environments. As a result, many kinds of variational models based on TV have been proposed for restoring clean images from blurred and non-Guassian noise distribution, such as that of impulse noise [

7,

8,

9,

10,

11,

12], multiplicative noise [

13,

14], Poisson noise [

15], Cauchy noise [

18,

19], and Rician noise [

20]. Based on different noise distributions, and data fidelity terms, one can obtain appropriate variational models for image denoising and deblurring in the presence of different noises. For example,

is the data fidelity term of TVL1 deblurring and denoising model with impulse noise [

11], and

is the data fidelity term of Cauchy deblurring and denoising model with Cauchy noise [

18].

Recently, some methods have been considered to mitigate alpha-stable noise. For example, Zozor et al. [

24] employed a parametric approach for suboptimal signal detection. They dealt with the detection of a known signal embedded in alpha-stable noise and discussed the robustness of the detector against the signal amplitude and the stability index. Sadreazami et al. [

25] modeled the contourlet coefficients of noise-free images with the alpha-stable distribution. They have also presented a new approach for despeckling SAR images and a multiplicative watermark detection in the contourlet domain using the alpha-stable distribution [

26,

27]. Yang et al. [

28] proposed a total variational method to restore images that are degraded by alpha-stable noise based on the property of meridian distributed.

Until now, to the best of our knowledge, there is no paper reporting on a variational method for blurred image restoration in the presence of alpha-stable noise. In order to restore images from blur and alpha-stable noise while also preserving their edges, this paper proposes a novel variational method based on the statistical property of meridian distribution and the TV, and our numerical experiments demonstrate that it performs better than many standard deblurring and denoising method in impulsive noisy environments (with small values, i.e., ), while providing comparable or better performance in less demanding, light-tailed environments (with high values, i.e., ).

The main contributions of this paper are summarized as follows. (i) Based on the statistical properties of meridian distribution and the TV, we propose a new variational method for restoring blurred images with alpha-stable noise and then analyze the existence of the solution for the variational model. (ii) By adding a penalty term, we propose a strictly convex variational method and prove the existence and uniqueness of the solution for the convex variational model. (iii) The primal-dual algorithm is employed to solve the novel convex variational problem, with its convergence being analyzed. (iv) We compare our proposed method to state-of-the-art methods such as the TVL1 model [

11], the Cauchy model [

18], and the meridian filter [

29] and show the effectiveness of our proposed method.

The rest of this paper is organized as follows. In

Section 2, we describe the alpha-stable and the meridian distributions. In

Section 3, we propose a variational method for simultaneous deblurring and denoising, and study the existence of the solution for the proposed model. We also propose a convex variational method to restore blurred images with alpha-stable noise, and analyze the existence and uniqueness of the solution for the convex variational model. The primal-dual algorithm for solving the proposed convex restoration problems is given in

Section 4.

Section 5 presents extensive numerical results to evaluate the performance of the proposed method in comparison with well-known methods. Finally, concluding remarks are provided in

Section 6.

2. A Brief Review of the Alpha-Stable and Meridian Distributions

The alpha-stable noise which obeys alpha-stable distribution is often found in radar- and sonar-related applications. The heaviness of the alpha-stable distribution tails is controlled by the parameter

, namely, the tails grow thicker as

values becomes smaller. Hence, alpha-stable noise can be seen as a type of impulsive noise with small

values (

) [

21].

The alpha-stable distributions are closed under additions, i.e., the sum of two alpha-stable random variables is still an alpha-stable random variable. Moreover, the alpha-stable random variables obey the generalized central limit theorem [

21]. However, this class of alpha-stable distribution random variables has no closed-form expressions for densities and distribution functions (except for Gaussian distribution, Cauchy distribution, and Levy distribution). The distribution with

corresponds to the well-known Gaussian distribution, and the one with

corresponds to the Cauchy distribution.

Figure 1 shows the probability density functions (PDFs) of alpha-stable distributions

with different values of

. We can see that the distributions of this class are all bell-shaped, with increasing density on the left and decreasing on the right. In addition, the tail of the bells becomes heavier as the value of

decreases.

The meridian distribution is a member of the generalized Cauchy distributions (GCD) family [

30], and it combines the advantages of the GCD and alpha-stable distributions. Moreover, an estimator derived from the meridian distribution is robust to the impulsive noise [

30]. The probability density function (PDF) of the meridian distribution is given by

where

is the scale parameter, and

is the localization parameter. Without loss of generality, we consider

in our paper. A careful inspection of the meridian distribution shows that its PDF tail decays slower than the Cauchy case, resulting in a heavier-tailed PDF, that is, the meridian PDF exhibits tails heavier than that of the Cauchy PDF [

29]. Moreover, by examining the well-established statistical relation between the Laplacian and meridian distributions, we can find that the ratio of two independent Laplacian distributed random variables is a meridian distribution [

29].

The influence function of the meridian distribution is given by

where

is the sign function. The influence function determines the effect of contamination. The rejection point of the meridian is smaller than that of the Cauchy distribution as it has a higher influence function decay rate. This indicates that a signed detection algorithm in the presence of the impulsive noise with the meridian distribution is more robust than that in the Cauchy distributed noise [

29].

3. The Proposed Variational Model

In this section, we propose a new variational model for restoring blurred images under the alpha-stable noise environments.

Motivated by existing work [

6,

13,

18,

29], we propose a variational model by applying the Bayes rule and the maximum a posteriori (MAP) estimator to restore the blurred images with alpha-stable noise based on the property of the meridian distribution and the TV.

First, we focus only on the denoising scenario. Given a known image

f, as in [

6,

13], by using the Bayes rule as well as the MAP estimation, we have

In obtaining Equation (

6), we have omitted

since it is a constant respect to

u.

As the image is corrupted by alpha-stable noise, for each pixel

, we have

where

stands for the scale parameter. Therefore,

Inspired by the idea of Aubert et al. [

13],

u is assumed to follow a Gibbs prior distribution. Therefore, we can obtain the TV regularization of

u as follows:

where

is a parameter, and

R is the normalization factor. Hence, solving Equation (

6) is equivalent to find the minimization of the following logarithmic probability. That is,

Here, please note that the is omitted since the three terms are all constants with respect to u.

Therefore, our pure denoising with alpha-stable noise is given by

where

is a regularization parameter. As one can see, we keep the same regularization term as in the ROF denoising model (Equation (

2)) since the TV regularization term is useful for preserving edges, but we adapt the data fidelity term to the alpha-stable noise, introducing one that is suitable for such noise. We emphasize that the proposed model can be extended to other modern regularization terms such as framelets, sharelets, rank surrogates, dictionary learning, or the tight-frame approach. These regularization terms are effective for the restoration of blurred and noisy images.

Thus, we start to prove the existence of the solution for Equation (

11).

Theorem 1. Let with , then Equation (11) has a solution satisfying: Proof. Set , , and let . Noting that , we have . This leads to being lower-bounded, and we can find a minimal sequence .

In addition, for any fixed

, let

. Therefore, if

, we have

, else if

, we get

. From the above two inequalities, we know that the function

is decreasing if

and increasing if

. This implies that

if

. Hence,

if

. Furthermore, it is known that

(see Lemma 1 in [

31]). Therefore, we can conclude that

. Similarly,

with

. Hence, we can assume that

, which implies that

is bounded in

.

According to the definition of

,

is bounded. In addition, it is proved that

is bounded in

since

is bounded [

31]. Hence, there is a subsequence that converges strongly in

and weakly in

to some

. Furthermore, given

, the lower semicontinuity of the TV, and the Fatou’s Lemma, the solution to Equation (

11) is obtained as

. ☐

We then extend Equation (

11) to the simultaneous deblurring and denoising scenarios. The restoration is conducted by solving the following optimization model:

It is worth mentioning that Equation (

12) is also a non-convex problem, as in the scenario of the pure denoising Equation (

11). Since Equations (

11) and (

12) are both nonconvex, they cannot guarantee a global optimal solution. To overcome this drawback, we incorporate an additional penalty term into Equations (

11) and (

12) to obtain novel convex variational models in the following section. This penalty term is based on the median-filtered result of the noise image.

In the following section, we propose a convex variational model for deblurring and denoising images, which is corrupted by both blur and alpha-stable noise.

We first also focus on a convex variational model for denoising only. By introducing a penalty term into Equation (

11), we obtain a convex variational model as follows:

where

(

g is the median filter function of

f ) [

18],

and

are the regularization parameters, respectively.

As a result, three theorems are provided to confirm that the above model is strictly convex under certain conditions, and there is a unique solution to Equation (

13).

Lemma 1. If , the objective function in Equation (13) is strictly convex. Proof. For each fixed

, let the real function

h on

be defined as

We can easily compute the first and second order derivatives of

h, as given by

Since

, we have

; thus,

, or

, that is

, i.e.,

h is convex. Furthermore, the function

h has only one minimizer, so

h is strictly convex when

. Since the total variation regularization is convex, we can also conclude that the objective function in Equation (

13) is strictly convex for

. ☐

Based on Lemma 1, we can now prove the existence and uniqueness of the solution to Equation (

13).

Lastly, we also extend our convex variational model for the following simultaneous deblurring and denoising case:

Since the blurring operator

K is linear and nonnegative, we can conclude that the model in Equation (

14) is convex when

. In the following theorem, we state the existence and uniqueness of its solution.

Theorem 2. Let with , , and a nonnegative linear operator . Assume that K does not annihilate constant functions, i.e., . Therefore, Equation (14) has a solution. Further, if and K is injective, the solution is unique. Proof. Let

be a minimizing sequence for Equation (

14). Since the objective function in (

14) is bounded, we know that

is bounded [

13,

18]. As in the proof of Theorem 2 of [

18], we can verify that

and

are bounded for each

n (where

,

denotes the measure of

). Due to the continuity of the operator

, we know that the sequence

is bounded in

and in

.

Moreover, for each

n, the objective function in Equation (

14) is bounded, hence

is bounded in

. Thus,

is bounded as well, and hence

is bounded. One can easily find that

is bounded from Equation (

15).

Since , is uniformly bounded. Moreover, is bounded, so is bounded in and in . Since is closed and convex, is also bounded in .

As a consequence, there is a possible subsequence

, which converges in

to some

, and

converges slightly as a measure to

. Since the linear operator

K is continuous,

converges to

in

. Thus,

is a solution of Equation (

14) according to the lower semicontinuity of TV and Fatou’s lemma.

Based on Lemma 1, when

, Equation (

14) is strictly convex. Furthermore,

K is injective, so its solution is unique. ☐

4. Primal-Dual Algorithm

In this section, we employ the primal-dual algorithm [

32,

33] to solve the minimization problem in (

14) since it is easy to implement and its convergence is guaranteed [

32]. Due to the convexity of Equation (

14), there are many algorithms that can be employed to solve the proposed image deblurring and denoising model such as the alternating direction method of multipliers (ADMM) [

5,

34,

35] and the split-Bregman algorithm [

36].

We address the general deblurring and denoising case, since the pure denoising case can be considered special when

K is an invariant parameter. At first, the discrete version of our proposed image deblurring and denoising Equation (

14) is derived, and the corresponding numerical solution is then given.

Suppose that the noisy image

is obtained from a two-dimensional pixel-array with dimension

, and

is the discretization of the continuous blurring operator. Now we introduce the discrete version of Equation (

14):

where

is defined as

The first term of Equation (

16) denotes the discrete total variation of the image

u, and it is defined as

where the discrete gradient

is given by

.

The first term on the right side of Equation (

17) is a robust distance metric, which can be defined as the meridian norm. The meridian norm tends to behave like the

norm for points within the unitary

ball and gives the same penalization to large sparse deviations as to small clustered deviations [

30].

As in [

32], we introduce new variables

and

, and Equation (

16) is then clearly equivalent to the following constrained optimization problem:

To employ the primal-dual algorithm, we study the following optimization problem:

where

and

are the dual variables,

X is a real vector space

, and

, where

is defined as

.

Now we apply the primal-dual algorithm to the optimization problem of Equation (

20). The primal-dual algorithm is defined through the following iterations:

In the following, we provide details on how to solve them. Since the objective functions of Equations (

21)–(

23) are quadratic, the update of

p,

q, and

u can be computed efficiently by

where the divergence operator

. The update in Equation (

24) can be obtained by applying the soft thresholding operator as

where

. The optimality condition for (

25) is given by

that is

where

.

We remark that, if K is the identity operator, i.e. the degraded image f is not blurred but is only corrupted by noise, there is no need to introduce the primal variable w and the dual variable q, and the algorithm can be simplified accordingly.

The primal-dual algorithm above to solve the optimization problem of Equation (

20) can be summarized in the following table.

The termination condition in Algorithm 1 will be discussed in

Section 5.

In the rest of this section, we study the existence of the solution to Equation (

20) and the convergence of Algorithm 1.

Define

,

,

, such that Equation (

20) is equivalent to

where

.

Proposition 1. The saddle-point set of Equation (35) is nonempty. Proof. The proof of the above proposition is the same as that for Proposition 2 of [

37]. We remark that we can easily verify that the required conditions in [

38] are satisfied for the proposed primal-dual formulation:

(H1): X and Y are nonempty closed convex sets;

(H2): The objective function (denote

) of (

35) is convex-concave on

in the following sense: for each

, the function

is convex, for each

, the function

is concave;

(H3): X is bounded, or such that when ;

(H4):

Y is bounded, or

such that

when

; Thus, there exists a nonempty convex compact set of saddle-points on

of Equation (

35). ☐

The following proposition shows the convergence of Algorithm 1.

| Algorithm 1: Primal-dual algorithm for solving model (20) |

Initialization: Given , , starting points , , , and , and iteration index Calculate: , , , , , , and from

The iteration is terminated if the termination condition is satisfied; otherwise, set and return to Step (2).

|

Proposition 2. Let be the operator 2-norm of A , and the iteration of be defined by Algorithm 1. If , then converges to a saddle point of primal-dual problem in Equation (35). Proof. The proposition can be seen as a special case of Theorem 1 in [

32]. The conclusion (a) of Theorem 1 in [

32] establishes that

is a bounded sequence, so that some subsequence

converges to some limit

. Observe that the conclusion (b) of Theorem 1 in [

32] implies that

, and

and

in particular converge, respectively, to

and

. It follows that the limit

is a fixed point of the iterations of Algorithm 1, hence a saddle-point of our problem. ☐

Since

(see [

4]),

(see [

37]), and

(see [

18,

39]),

. Therefore, in order to ensure the convergence of our algorithm we just need to choose

and

such that

.

5. Experimental Results and Analysis

In this section, numerical results are obtained by applying our proposed models to blurred images corrupted by alpha-stable noise. We also compare our models with other existing and well-known models.

We take six images—Cameraman (

), Peppers (

), Lena (

), Phantom (

), Boat (

), and Fruits (

)—for experiment and comparison. For further comparison, four objective image quality metrics—the peak signal noise ratio (PSNR) in dB, the measure of structural similarity index (SSIM) [

40], the multiscale SSIM (MS-SSIM) [

41], and the feature similarity index (FSIM) [

42]—are used to measure the performance of the proposed models for the test images. Each of the same experiments is repeated 10 times, so the PSNR, SSIM, MS-SSIM and FSIM values are the averaged results of 10 experiments. The PSNR and SSIM are respectively defined as follows:

where

is the restored image,

u is the original image,

and

are their respective mean,

and

are their respective variances,

is the covariance of them, and

are constants. PSNR, SSIM, MS-SSIM, and FSIM are all measures of the performance of an image. A higher PSNR indicates that the better restored image will be picked up, and the SSIM, MS-SSIM, and FSIM values are closer to 1. The characteristic of the restored image is more similar to the original image.

In our numerical simulations, we terminate the algorithm when the relative change of the objective function between two consecutive iterations becomes small enough, i.e.,

where

denotes the objective function of the proposed Equation (

14), and

is a tolerance. For Algorithm 1, we have found that smaller tolerance values (e.g.,

) do not consistently improve the relative error as the runtimes increase, so we set

in our numerical experiments.

Since

depends on the noise level, we take the same value of the parameter found in [

30], that is,

(where

denotes the

cth quantile of

f). We chose

and

. In addition, the regularization parameter

balances the trade-off between the TV regularization term and the data fidelity term. We manually tune it in order to obtain the highest PSNR values of the restored image.

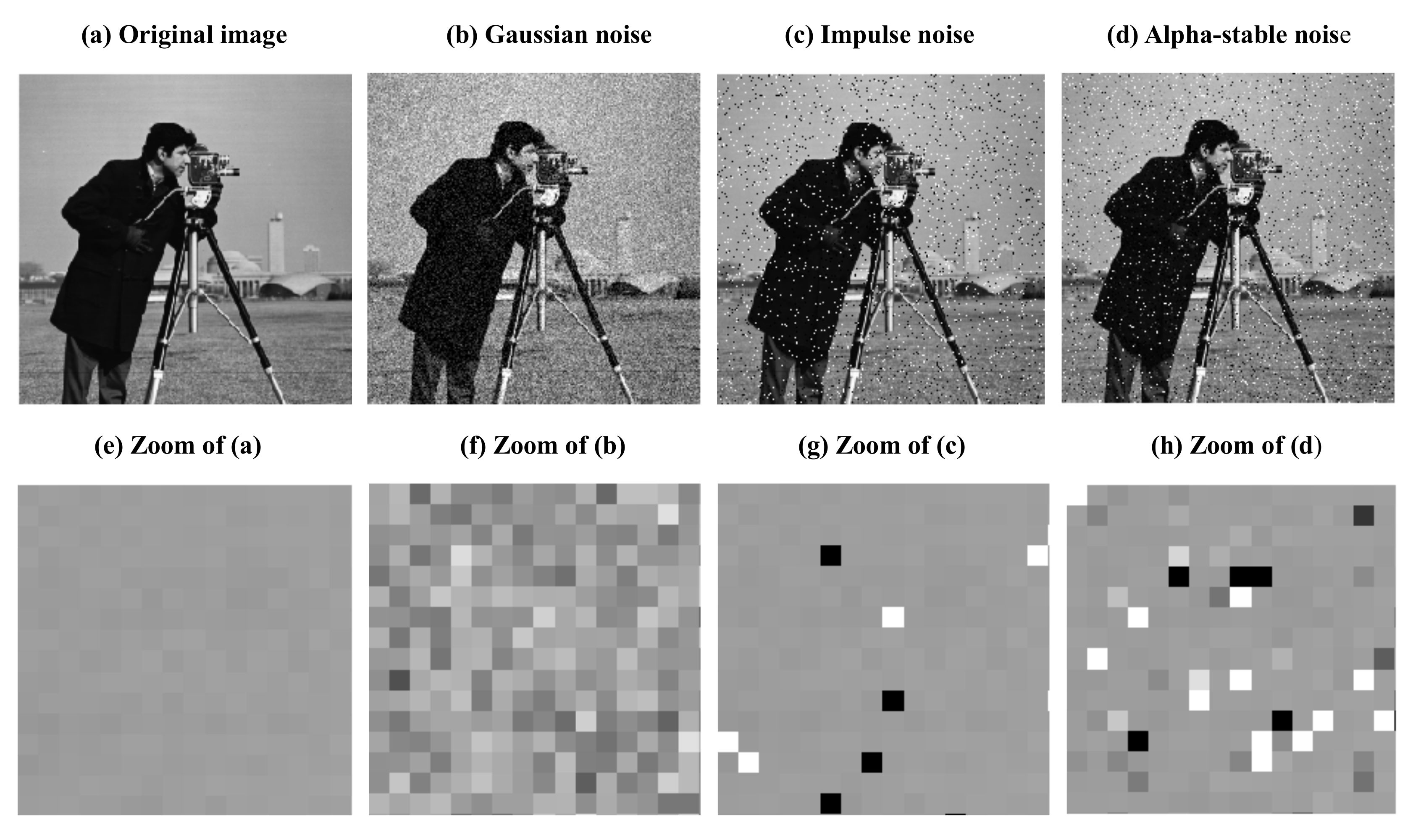

We would first like to illustrate the different effects of Gaussian noise, impulse noise, and alpha-stable noise.

Figure 2a shows the original Cameraman image, and

Figure 2b–d represent, respectively, the images degraded by Gaussion noise, impulse noise, and alpha-stable noise (with

).

Figure 2e–h show the zoomed top left corner of

Figure 2a–d.

It is clear from

Figure 2 that the image corrupted by Gaussian noise looks different from the images corrupted by impulse noise and alpha-stable noise (with

), while to some extent the alpha-stable noise and impulse noise are close to each other. For example, some pixels are degraded to white or black with the impulse noise and the alpha-stable noise (with

), while the image corrupted by Gaussian noise is uniformly modified and all the pixels are corrupted by noise (see

Figure 2f). Although the alpha-stable noise is similar to the impulse noise, there are also some very important differences, for instance, in the impulse noise, some pixels are noise-free (see

Figure 2g), while in the alpha-stable noise, the noise free pixels are very rare (see

Figure 2h). Thus, due to the impulsive character of the alpha-stable noise, we employ the meridian norm in our proposed model.

5.1. Image Denoising

In this subsection, we first focus only on the pure denoising case. The noisy image

f is generated as

where

follows the alpha-stable distribution, and

gives the noise level. We compare the proposed image denoising model with the Cauchy model [

18], the TVL1 model [

11], and the meridian filter [

29]. These models are all efficient for recovering images in impulsive noise.

The proposed image denoising model is applied to the Cameraman image in the presence of alpha-stable noise at different tail parameters

(with

and

following the alpha-stable distribution

). In order to evaluate quantitatively the performances of the proposed image denoising model, two objective criteria, PSNR and SSIM, are computed and provided in

Figure 3. The Cauchy and TVL1 models for image denoising perform similarly, so we only provide the results of the Cauchy model in

Figure 3.

Figure 3 gives the PSNR and the SSIM of the noisy Cameraman image and the recovered images resulting from the proposed image denoising model, the Cauchy model, and the meridian filter at different tail parameters

. As the tail parameter

increases, the PSNR values and the SSIM values become higher in all of these methods; And as the tail parameter

decreases, the superiority of the proposed method becomes obvious. Moreover, our proposed image denoising model outperforms the Cauchy model and the meridian filter in terms of the PSNR and SSIM at the same tail parameter. In all, the proposed model significantly outperforms the commonly employed image denoising models in impulsive noisy environments (with small

values) while providing comparable performances in less demanding, light-tailed environments (with high

values). In particular, the PSNR values of our proposed model are all above 30 dB at the tail parameter of

, and such values are considered to be perfect recovery results, so we employ the value of

, which, in this part, follows the alpha-stable distribution

.

For comparison of the performance quantitatively, the PSNR in dB and the SSIM are used to measure the performance of different models for the three noisy test images: Cameraman, Peppers, and Lena. The PSNR values in dB and the SSIM values for noisy images (

and

obeying

) and recovered images given by different methods are listed in

Table 1.

Table 1 gives the PSNR values and the SSIM values for three different test images and the recovered results of these noisy images resulting from our proposed image denoising model, the Cauchy model, the TVL1 model, and the meridian filter, respectively. Obviously, our proposed image denoising model outperforms the TVL1 model, the Cauchy model, and the meridian filter in terms of the PSNR and SSIM at the same noise levels (

and

following

). Take the Cameraman noisy image as an example, with our method, we can increase the PSNR values of the recovered images by 2.836 dB at the same noise levels and obtain the largest SSIM values.

5.2. Image Deblurring and Denoising

In the following subsection, we focus on the deblurring and denoising case. Here, we consider the recovery of the blurred images corrupted by both the Gaussian blur (a window size

and standard deviation of 1) and alpha-stable noise (

). As in the previous subsection, we compare our proposed deblurring and denoising model with other well-known image deblurring and denoising methods for impulsive noise, such as the TVL1 model [

11] and the Cauchy model [

18].

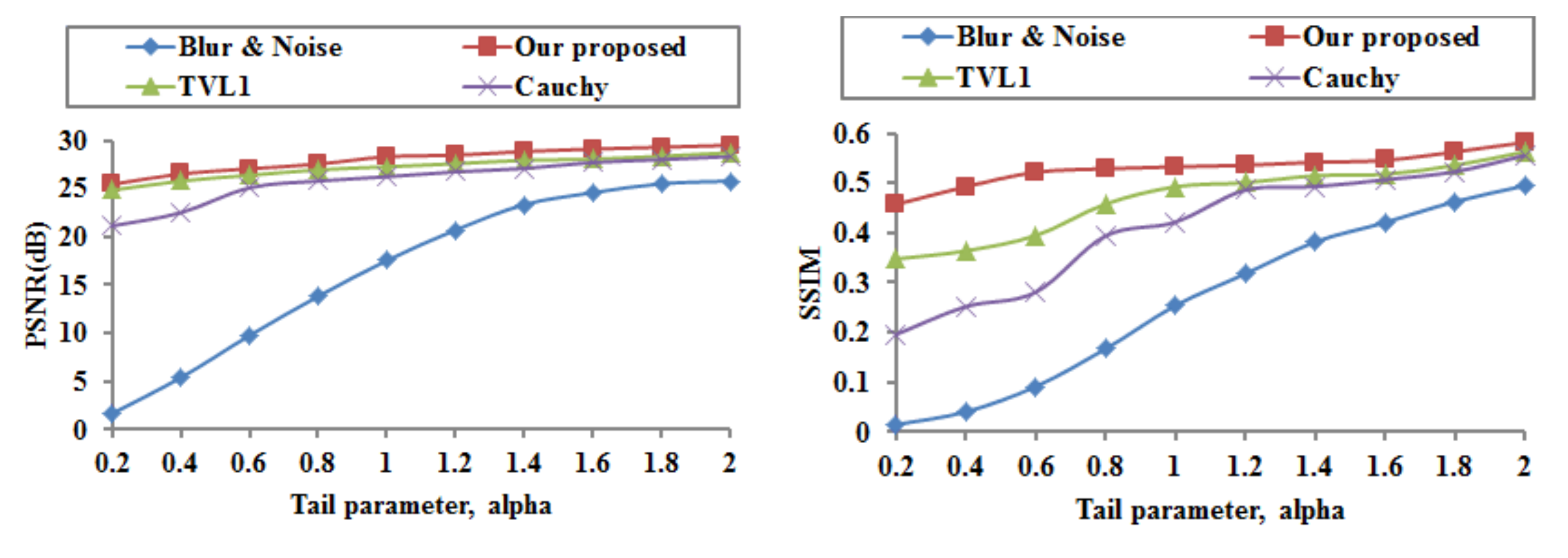

The proposed image deblurring and denoising model is applied to the blurred and noisy Cameraman image at different tail parameters

. The PSNR and SSIM are computed and provided in

Figure 4.

Figure 4 provides the quantitative results of our proposed image deblurring and denoising model, the TVL1 model, and the Cauchy model. It is clear that these methods perform well. As the alpha values increase, the PSNR and SSIM values become higher for all these methods. And, as the alpha values decrease, the superiority of our proposed model becomes obvious. Hence, our proposed model has better performance at the same tail parameter

than that of the TVL1 model and the Cauchy model.

Since the PSNR and SSIM performances depend on the tail parameter, it is necessary to choose an appropriate tail parameter for image deblurring and denoising. In the following test, the tail parameter is set to

. In practice, we can see from

Figure 4 that the recovered results with

are of good quality for all models.

In order to evaluate quantitatively the performance of the proposed image blurring and denoising model, we apply it now to recover three different images (Phantom, Boat, and Fruits) with the Gaussian blur (a window size

and standard deviation of 1) at the same noise level (

and

following

). Experimental results on these test images are shown in

Figure 5,

Figure 6 and

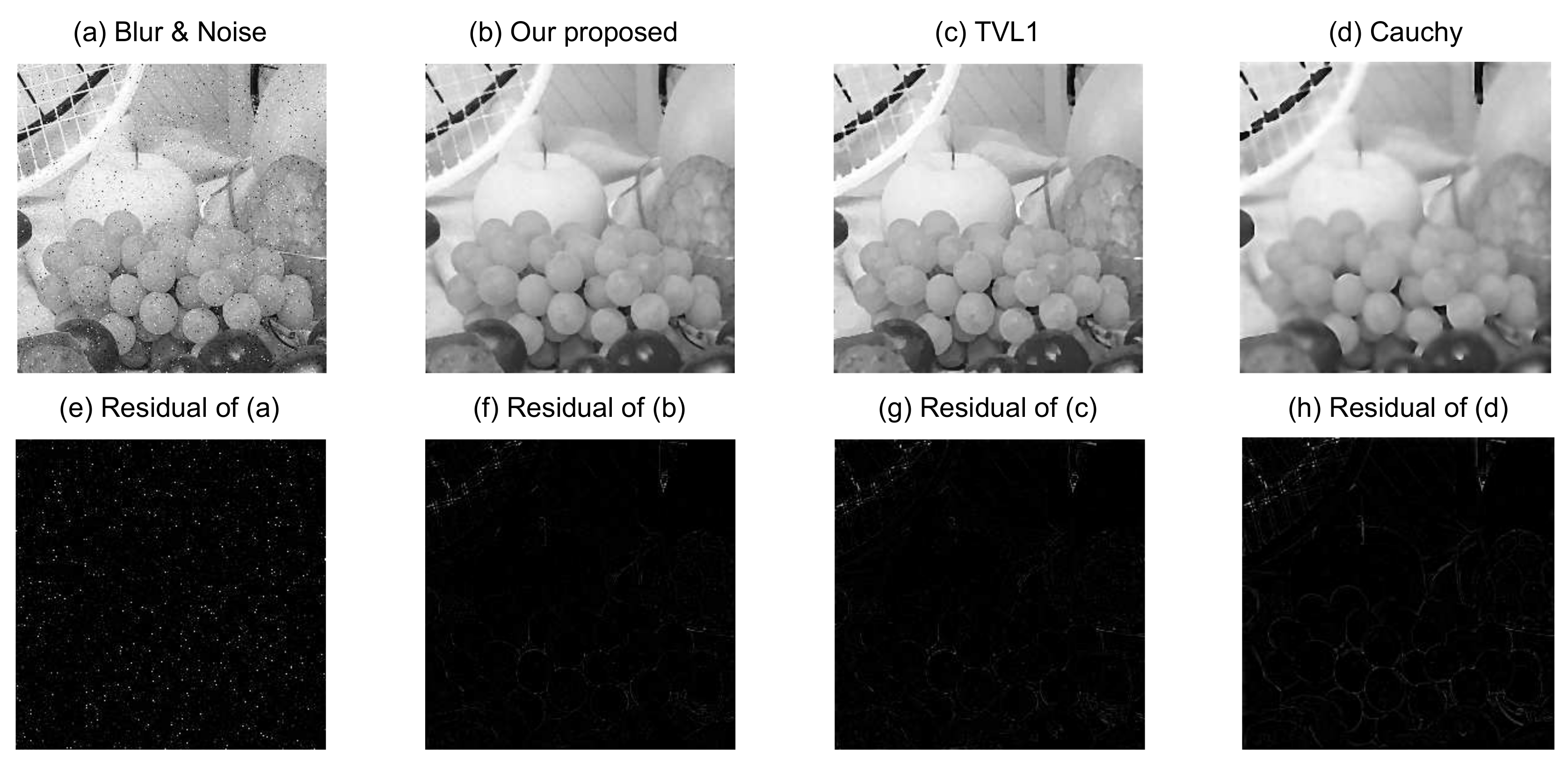

Figure 7, respectively.

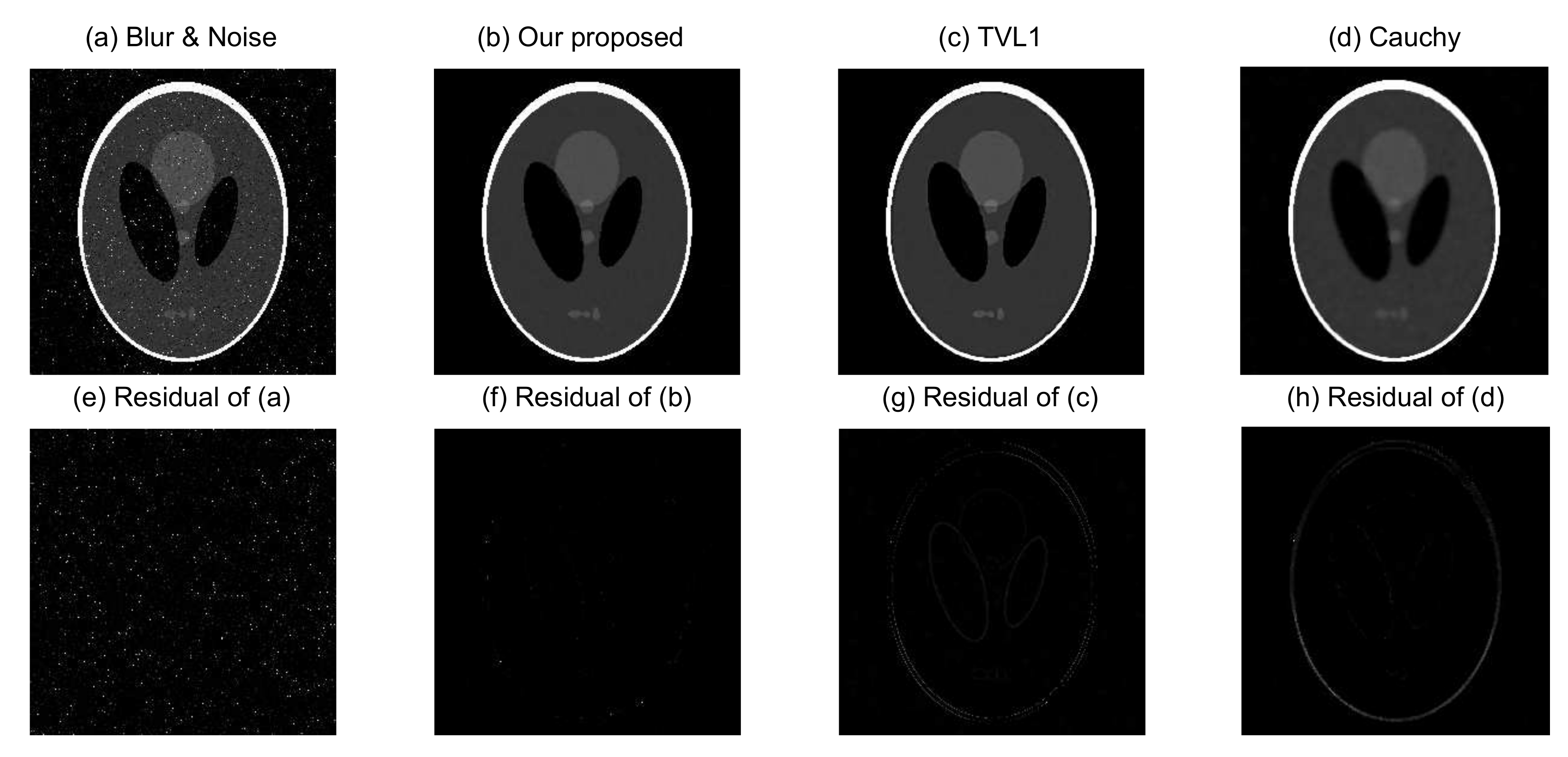

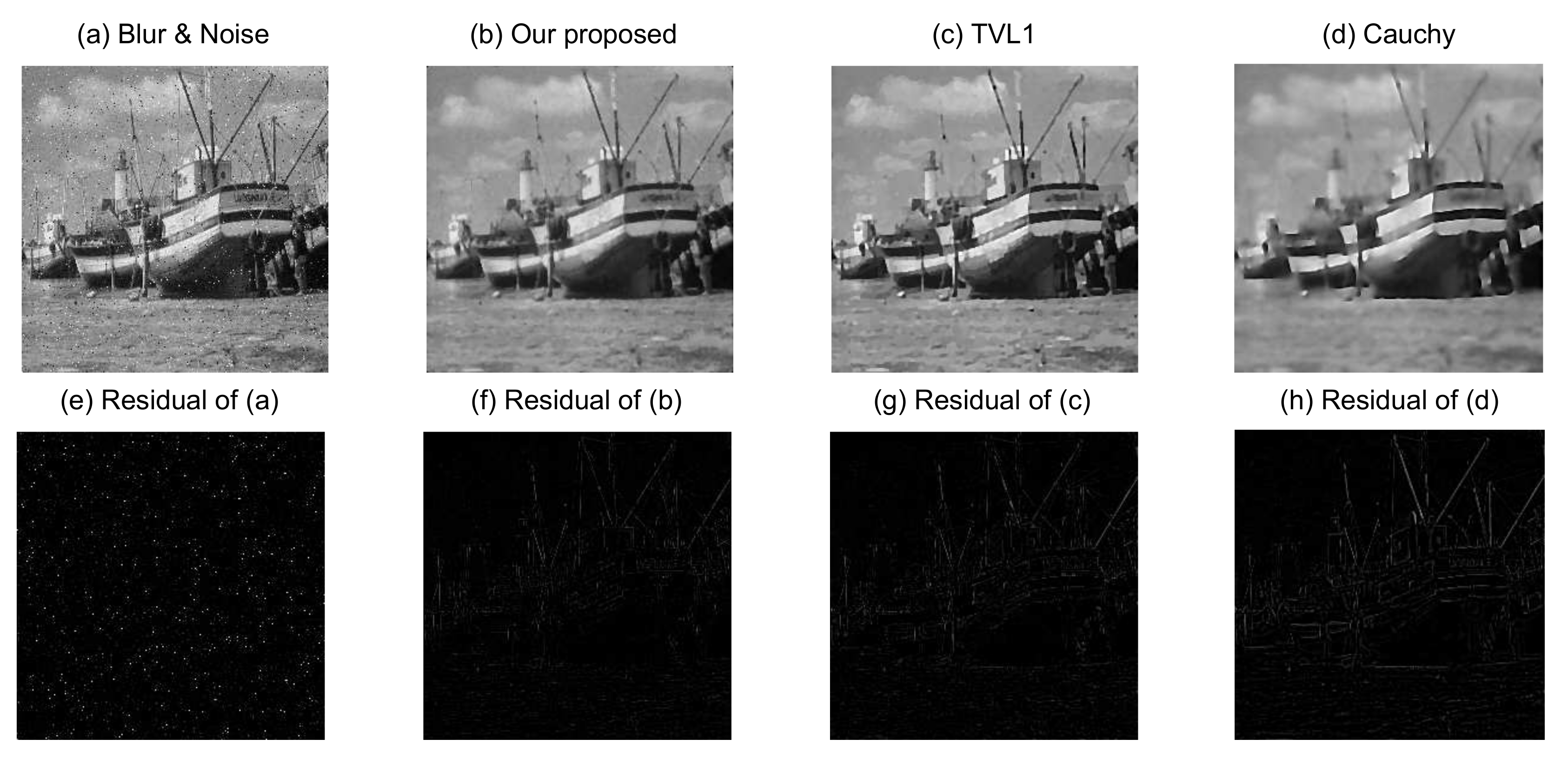

Figure 5a is the Phantom blurred and noisy image, and

Figure 5b–d are the recovered images from our proposed image blurring and denoising model, the TVL1 model, and the Cauchy model, respectively. The source images in

Figure 6 and

Figure 7 have similar situations for the Boat and Fruits images, respectively. It is clear from

Figure 5,

Figure 6 and

Figure 7 that the recovered images of our proposed image blurring and denoising model have more detailed information and are much closer to the original test images as compared with the recovered images from the TVL1 model and the Cauchy model.

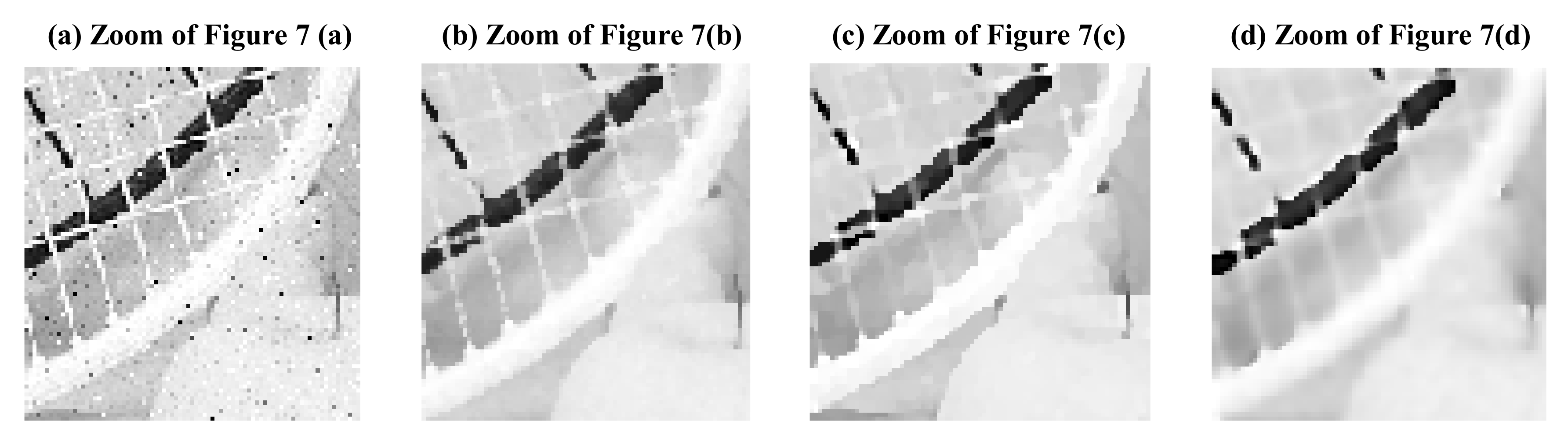

Figure 8a–d are the magnified top left regions of

Figure 7a–d, respectively. It is clear from

Figure 8 that the reconstruction result obtained with our proposed method produces characterizations that are superior to those of the TVL1 and Cauchy methods. We also can see that the restored result of the proposed method can maintain salient features of the line in the original image and has clearer outlines and reduced noise and blur effects.

For further quantitative comparison of the performance of the proposed image deblurring and denoising model, the PSNR in dB and SSIM were computed using the different models for the three different groups of blurred and noisy test images.

The PSNR and SSIM values for blurred and noisy three different test images: Cameraman, Peppers, and Lena (the Gaussian blur with a window size

and standard deviation of 1,

and

following

). The recovered images given by different methods are listed in

Table 2.

For easy observation, we took the Fruits image as an example and magnified the top left regions of the restored results with different algorithms. The magnified local regions of the restored results with different algorithms are shown in

Figure 8.

In general, larger PSNR values indicate that the recovered image can pick up more information. It is obvious from

Table 2 that a notable performance improvement has been achieved by the proposed image deblurring and denoising model as compared with the TVL1 model and the Cauchy model. For example, the PSNRs of the Cameraman image, resulting from the TVL1 model and the Cauchy model are 27.283 dB and 26.244 dB, respectively, while our proposed model gives 28.327 dB, implying that our proposed model provides an improvement of 2.083 dB, as compared with the Cauchy model. This is consistent with the visual effects of

Figure 5,

Figure 6,

Figure 7 and

Figure 8.

To further verify the performance of the algorithm, the PSNR, SSIM, MS-SSIM, and FSIM for blurred and noisy Phantom images and recovered images given by different methods are listed in

Table 3. It is obvious from

Table 3 that a notable performance improvement has been achieved by the proposed image deblurring and denoising model as compared with the TVL1 model and the Cauchy model in terms of these four image quality metrics. This is also consistent with the visual effects of

Figure 5. In addition, we have employed other classical test images to evaluate the deblurring and denoising performance and found that a similar performance gain in terms of the PSNR, SSIM, MS-SSIM, and FSIM has been achieved by the proposed method.