Analysis of Deep Convolutional Neural Networks Using Tensor Kernels and Matrix-Based Entropy

Abstract

1. Introduction

- We propose a tensor kernel-based approach to the matrix-based entropy functional, designed for measuring mutual information (MI) in large-scale convolutional neural networks (CNNs).

- We provide new insights into the matrix-based entropy functional by showing its connection to well-known quantities in the kernel literature, such as the kernel mean embedding and the maximum mean discrepancy. Furthermore, we show that the matrix-based entropy functional is closely linked to the von Neumann entropy from quantum information theory.

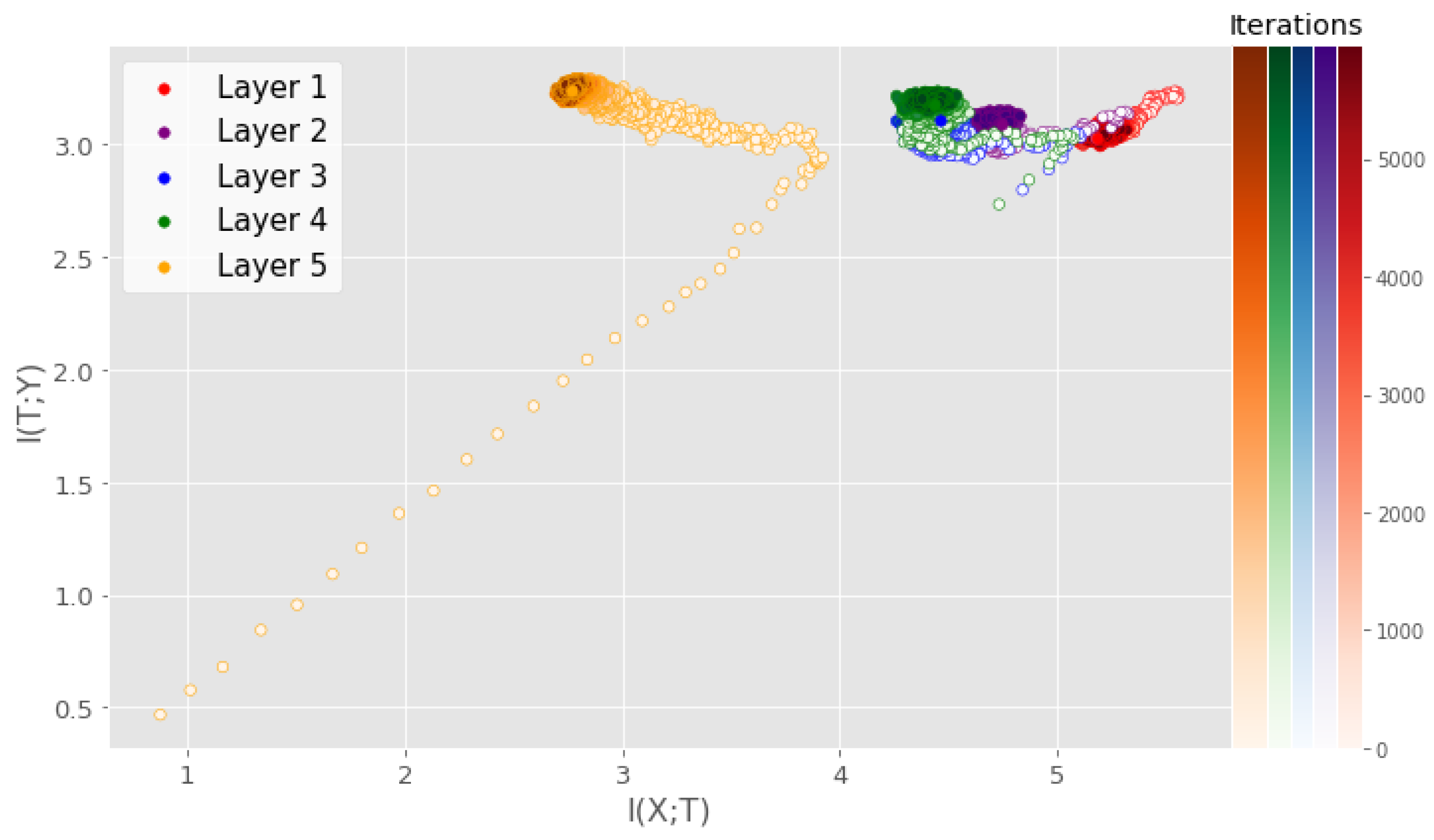

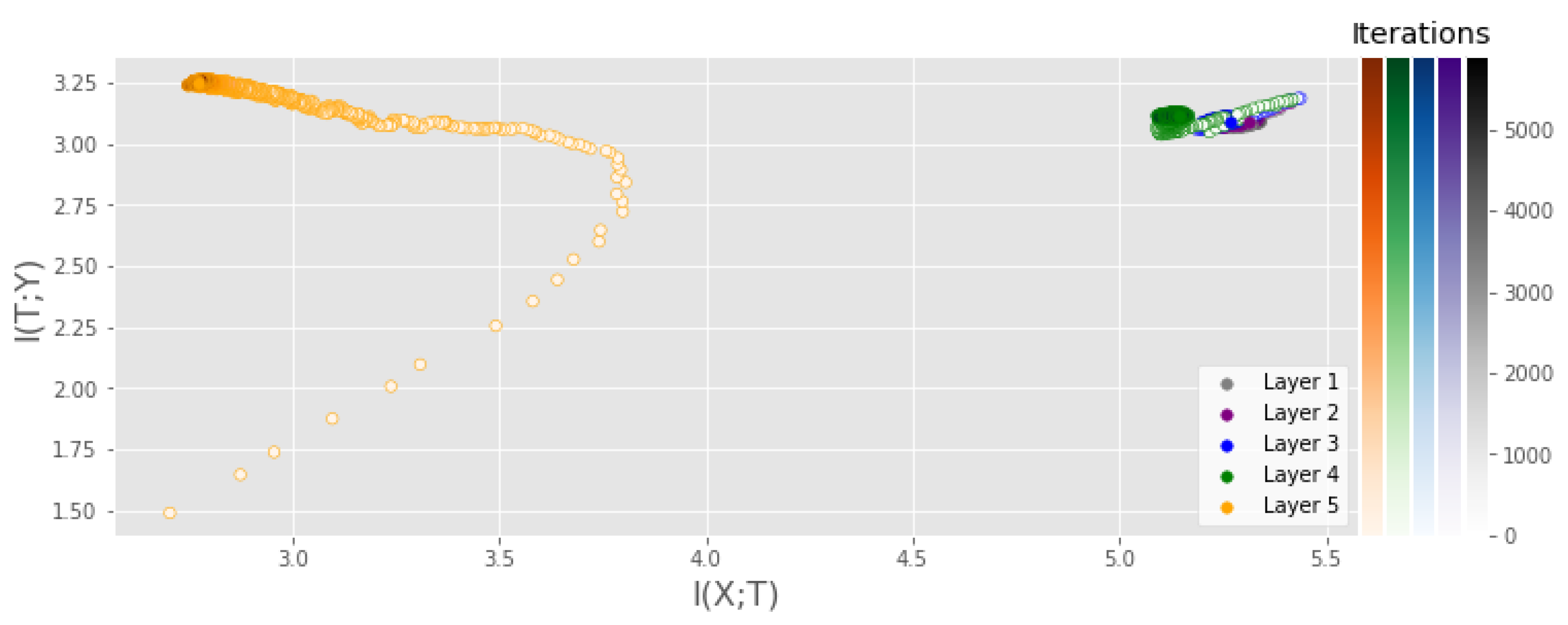

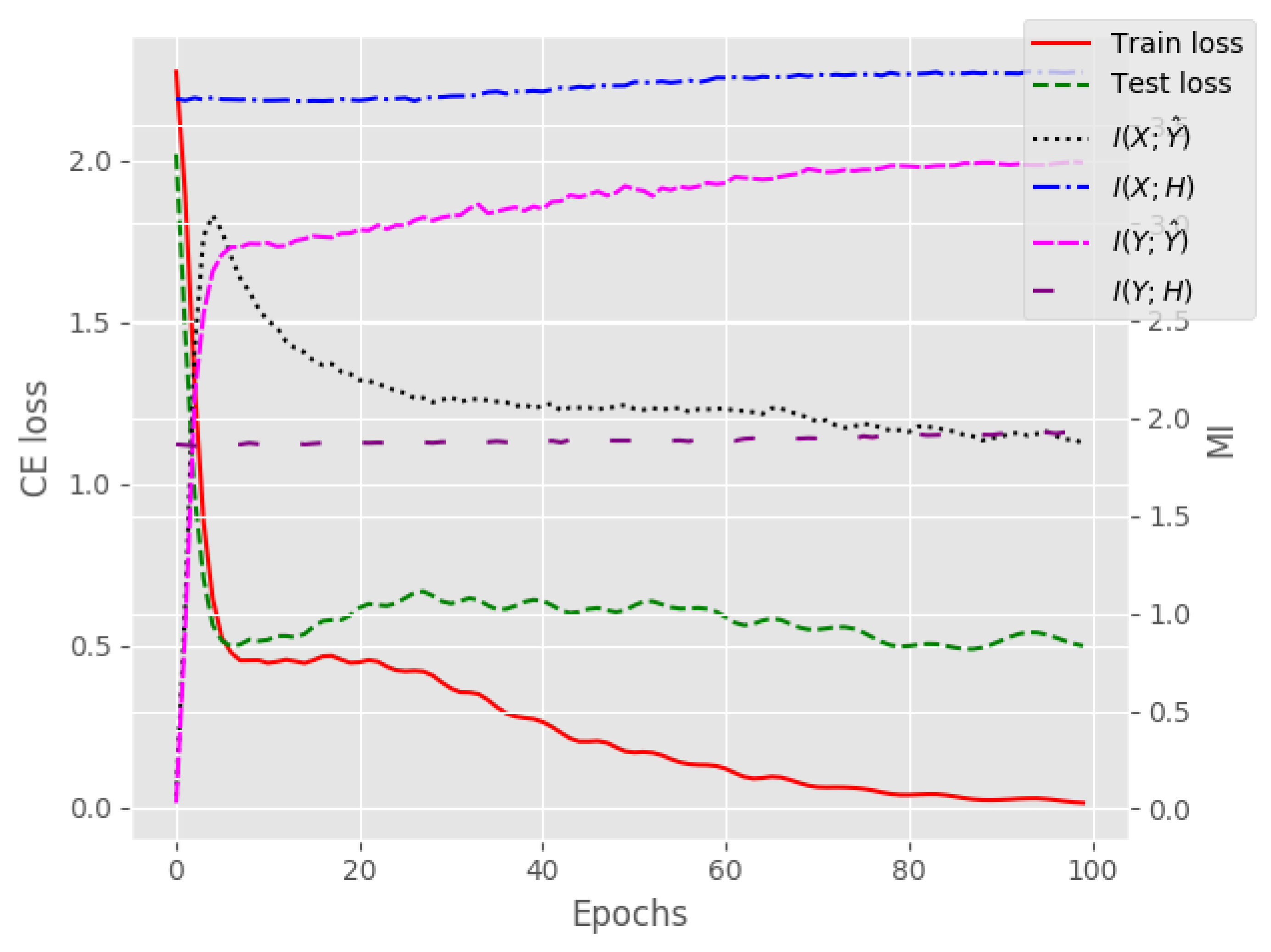

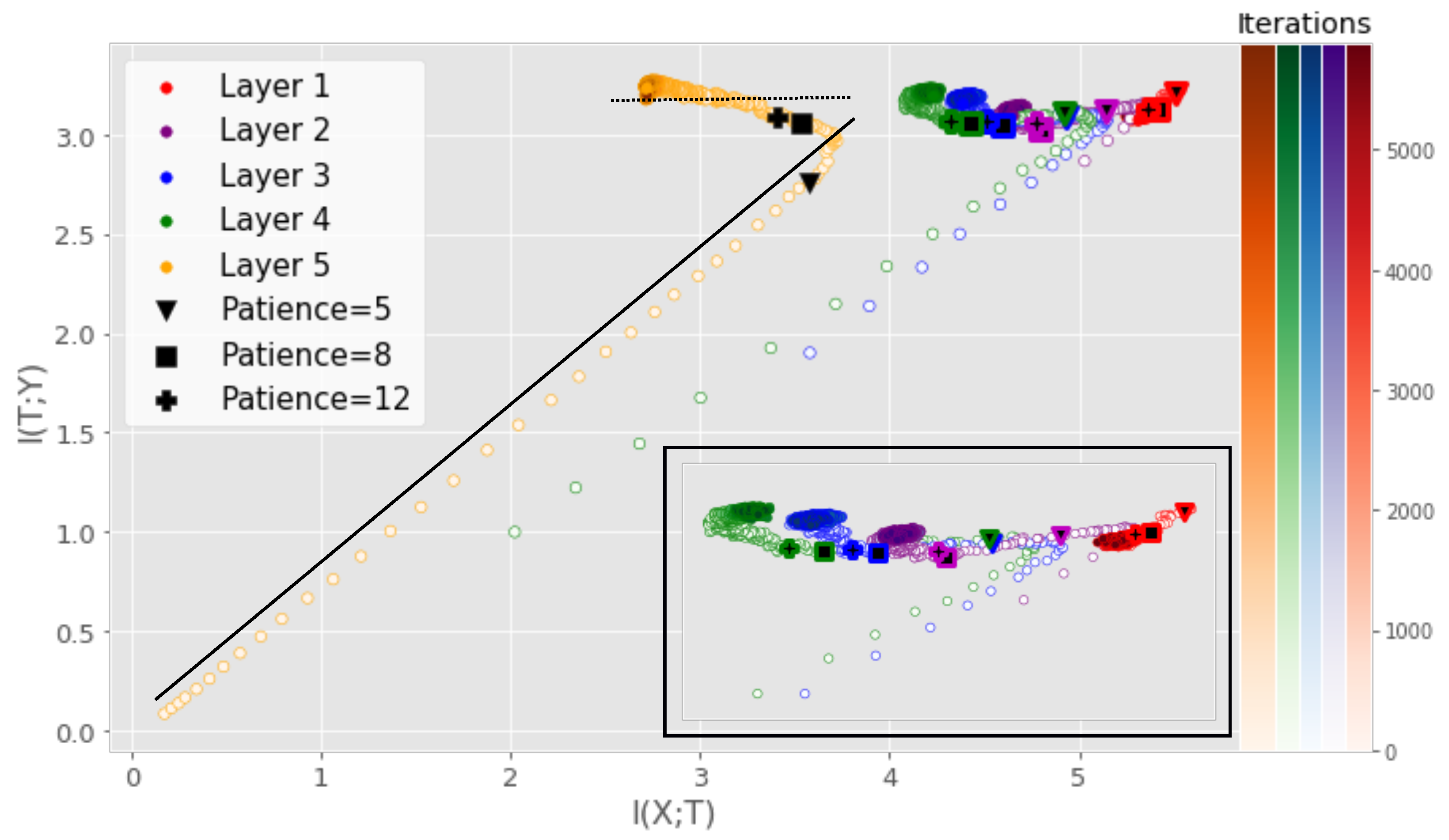

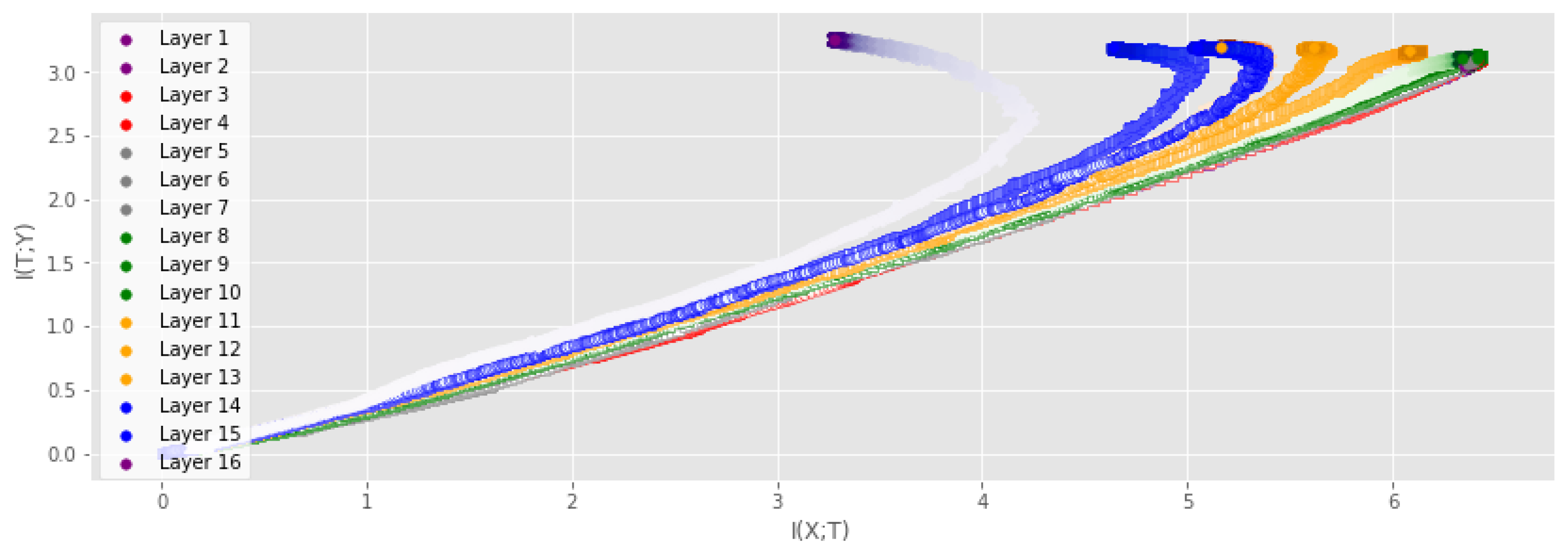

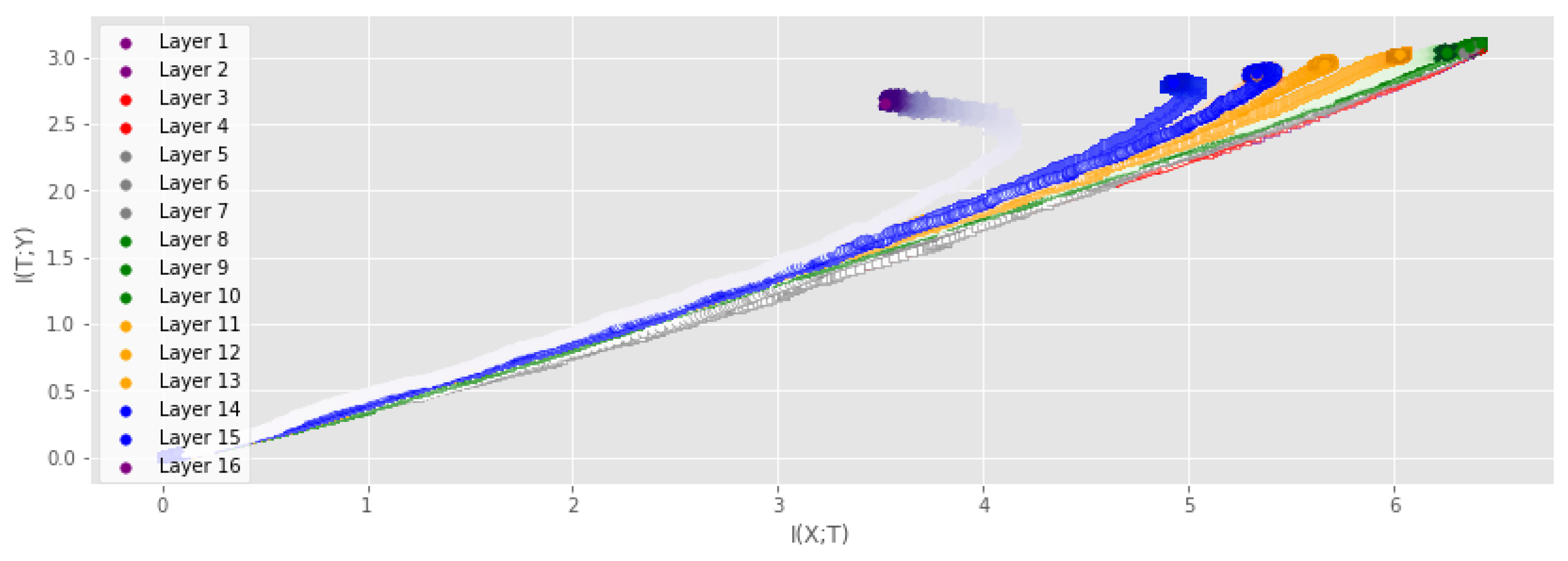

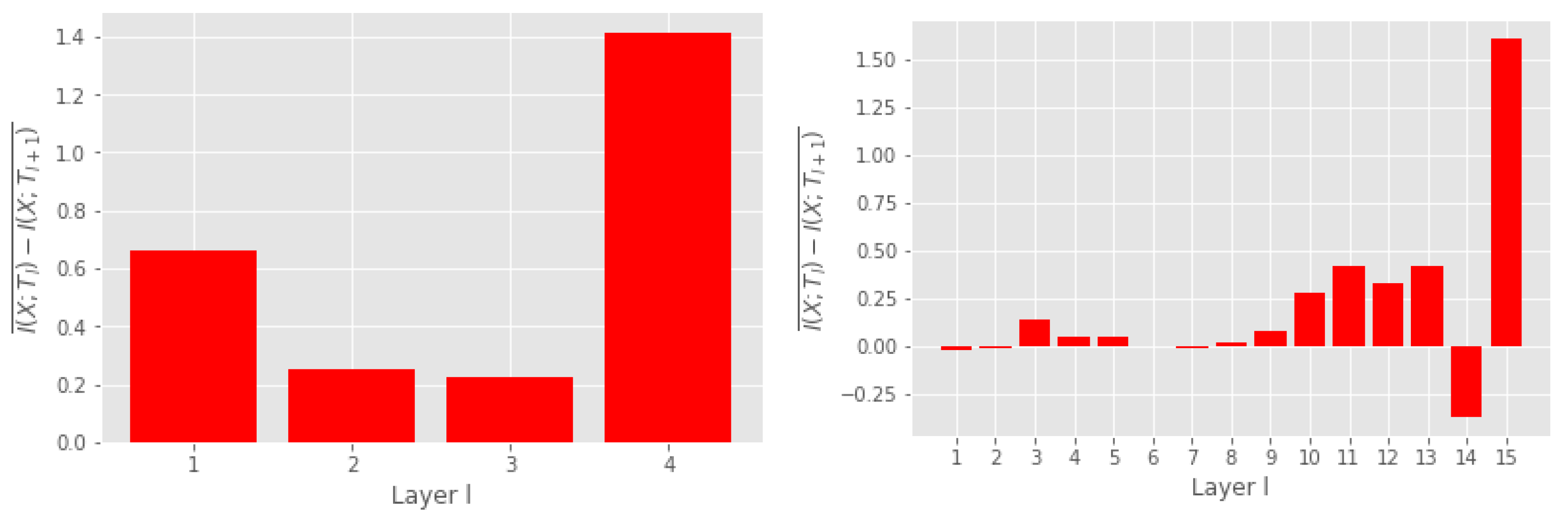

- Our results indicate that the compression phase is apparent mostly for the training data and less so for the test data, particularly for more challenging datasets. When using a technique such as early stopping to avoid overfitting, training tends to stop before the compression phase occurs (see Figure 1).

2. Related Work

3. Materials and Methods

3.1. Preliminaries on Matrix-Based Information Measures

3.1.1. Matrix-Based Entropy and Mutual Information

3.1.2. Bound on Matrix-Based Entropy Measure

3.2. Analysis of Matrix-Based Information Measures

3.2.1. A New Special-Case Interpretation of the Matrix-Based Renyi Entropy Definition

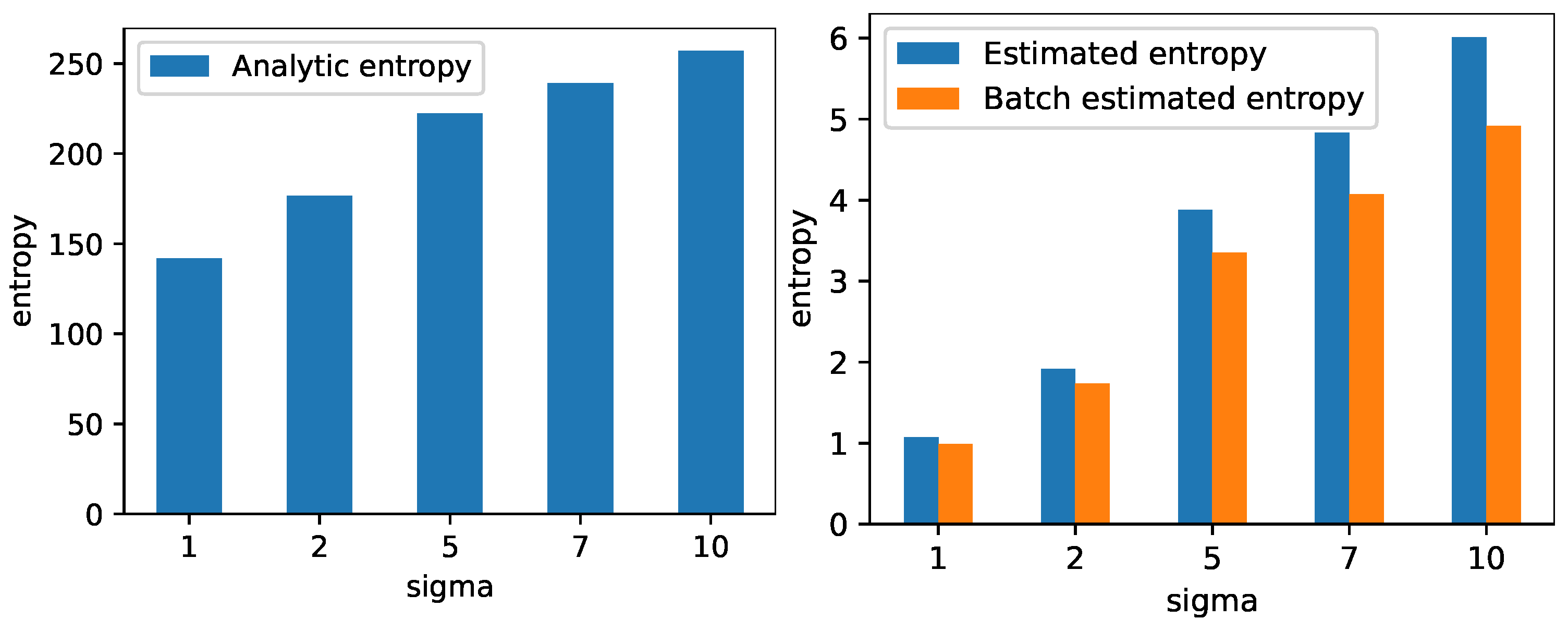

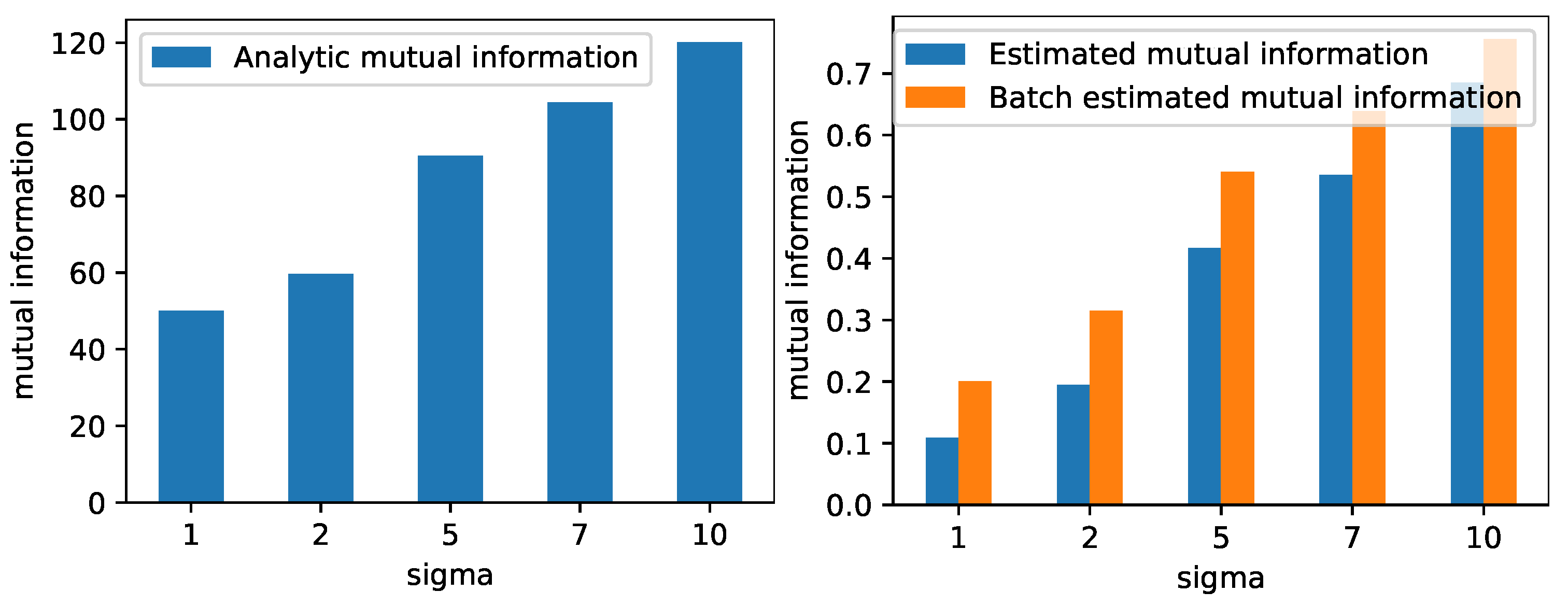

3.2.2. Link to Measures in Kernel Literature and Validation on High-Dimensional Synthetic Data

3.3. Novel Tensor-Based Matrix-Based Renyi Information Measures

3.4. Tensor Kernels for Measuring Mutual Information

3.4.1. Choosing the Kernel Width

4. Results

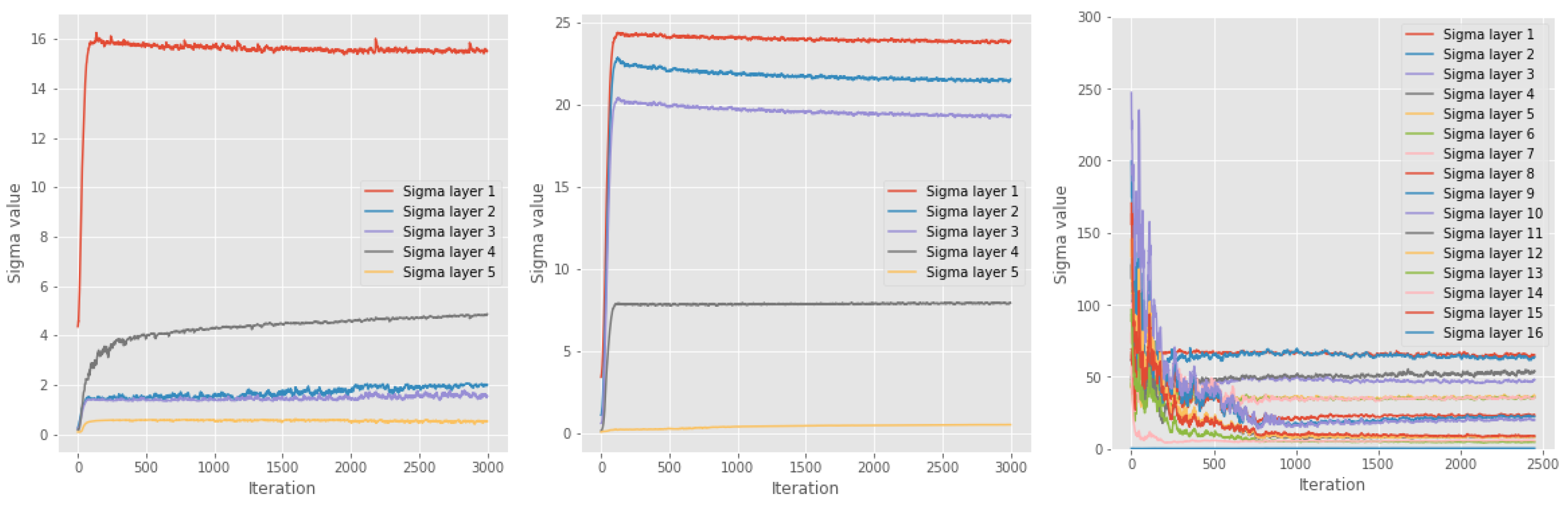

5. Kernel Width Sigma

6. Discussion and Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Appendix A. Preliminaries on Multivariate Matrix-Based Renyi’s Alpha-Entropy Functionals

Appendix B. Tensor-Based Approach Contains Multivariate Approach as Special Case

Appendix C. Structure Preserving Tensor Kernels and Numerical Instability of Multivariate Approach

Appendix D. Detailed Description of Networks from Section 4

Appendix D.1. Multilayer Perceptron Used in Section 4

- Fully connected layer with 784 inputs and 1024 outputs.

- Activation function.

- Batch normalization layer.

- Fully connected layer with 1024 inputs and 20 outputs.

- Activation function.

- Batch normalization layer.

- Fully connected layer with 20 inputs and 20 outputs.

- Activation function.

- Batch normalization layer.

- Fully connected layer with 20 inputs and 20 outputs.

- Activation function.

- Batch normalization layer.

- Fully connected layer with 20 inputs and 10 outputs.

- Softmax activation function.
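The layer list above can be checked for consistency with a short sketch. It is a minimal illustration rather than the authors' code: it assumes the final layer consumes the 20 outputs of the preceding layer and that every fully connected layer has a bias term; the names `MLP_LAYERS`, `check_chain` and `count_dense_parameters` are hypothetical.

```python
# Fully connected layers as (inputs, outputs) pairs, in the order listed above.
# Assumption: the last layer takes the 20 outputs of the layer before it.
MLP_LAYERS = [(784, 1024), (1024, 20), (20, 20), (20, 20), (20, 10)]


def check_chain(layers):
    """Return True if each layer's input width equals the previous output width."""
    return all(prev[1] == nxt[0] for prev, nxt in zip(layers, layers[1:]))


def count_dense_parameters(layers):
    """Count weights plus biases of the dense layers (batch normalization excluded).

    Assumption: every dense layer has one bias per output unit.
    """
    return sum(n_in * n_out + n_out for n_in, n_out in layers)


if __name__ == "__main__":
    print("widths chain correctly:", check_chain(MLP_LAYERS))
    print("dense parameters:", count_dense_parameters(MLP_LAYERS))
```

Nearly all parameters sit in the first layer (784 to 1024), while the narrow 20-unit layers contribute only a few hundred each.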

Appendix D.2. Convolutional Neural Network Used in Section 4

- Convolutional layer with 1 input channel and 4 filters, filter size 3 × 3, stride of 1 and no padding.

- Activation function.

- Batch normalization layer.

- Convolutional layer with 4 input channels and 8 filters, filter size 3 × 3, stride of 1 and no padding.

- Activation function.

- Batch normalization layer.

- Max pooling layer with filter size 2 × 2, stride of 2 and no padding.

- Convolutional layer with 8 input channels and 16 filters, filter size 3 × 3, stride of 1 and no padding.

- Activation function.

- Batch normalization layer.

- Max pooling layer with filter size 2 × 2, stride of 2 and no padding.

- Fully connected layer with 400 inputs and 256 outputs.

- Activation function.

- Batch normalization layer.

- Fully connected layer with 256 inputs and 10 outputs.

- Softmax activation function.
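The 400 inputs of the first fully connected layer can be reproduced by tracing the spatial size of the feature maps through the layers listed above. The sketch below is a minimal illustration under stated assumptions, not the authors' implementation: it assumes 28 × 28 single-channel inputs (matching the 784 inputs of the MLP in Appendix D.1), 3 × 3 convolution filters and 2 × 2 pooling windows. The function names `conv_out` and `trace_cnn` are hypothetical.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output side length of a convolution or pooling layer on a square input."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_cnn(input_size=28, conv_kernel=3, pool_kernel=2, last_channels=16):
    """Return the number of features entering the first fully connected layer.

    Assumptions (not confirmed by the layer list): 28 x 28 inputs,
    3 x 3 convolution filters and 2 x 2 pooling windows.
    """
    size = input_size
    size = conv_out(size, conv_kernel)            # conv, 1 -> 4 channels
    size = conv_out(size, conv_kernel)            # conv, 4 -> 8 channels
    size = conv_out(size, pool_kernel, stride=2)  # max pooling, stride 2
    size = conv_out(size, conv_kernel)            # conv, 8 -> 16 channels
    size = conv_out(size, pool_kernel, stride=2)  # max pooling, stride 2
    return last_channels * size * size            # flattened feature count


if __name__ == "__main__":
    print("flattened features:", trace_cnn())
```

Under these assumptions the side length goes 28, 26, 24, 12, 10, 5, so the flattened output has 16 × 5 × 5 = 400 features, which agrees with the fully connected layer that follows.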

Appendix E. IP of MLP with Tanh Activation Function from Section 4

Appendix F. IP of CNN with Tanh Activation Function from Section 4

Appendix G. Data Processing Inequality with EDGE MI Estimator

Appendix H. Connection with Epoch-Wise Double Descent



© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Wickstrøm, K.K.; Løkse, S.; Kampffmeyer, M.C.; Yu, S.; Príncipe, J.C.; Jenssen, R. Analysis of Deep Convolutional Neural Networks Using Tensor Kernels and Matrix-Based Entropy. Entropy 2023, 25, 899. https://doi.org/10.3390/e25060899
