Abstract

Metaheuristic optimization algorithms play an essential role in solving optimization problems. In this article, a new metaheuristic approach called the drawer algorithm (DA) is developed to provide quasi-optimal solutions to optimization problems. The main inspiration for the DA is to simulate the selection of objects from different drawers to create an optimal combination. The optimization process involves a dresser with a given number of drawers, where similar items are placed in each drawer. The optimization is based on selecting suitable items, discarding unsuitable ones from different drawers, and assembling them into an appropriate combination. The DA is described, and its mathematical modeling is presented. The performance of the DA in optimization is tested by solving fifty-two objective functions of various unimodal and multimodal types and the CEC 2017 test suite. The results of the DA are compared to the performance of twelve well-known algorithms. The simulation results demonstrate that the DA, with a proper balance between exploration and exploitation, produces suitable solutions. Furthermore, comparing the performance of optimization algorithms shows that the DA is an effective approach for solving optimization problems and is much more competitive than the twelve algorithms against which it was compared. Additionally, the implementation of the DA on twenty-two constrained problems from the CEC 2011 test suite demonstrates its high efficiency in handling optimization problems in real-world applications.

1. Introduction

Optimization is the process by which the best possible solution to a problem is identified. Each optimization problem can be modeled using three main components: variables, constraints, and objective functions [1]. With the advancement of science and technology, optimization problems have become more complex and require effective methods. Stochastic algorithms are effective methods for solving optimization problems by randomly scanning the search space and using random operators. These algorithms first generate a population of candidate solutions to a given problem and then improve those solutions in an iterative process to eventually converge on a suitable solution [2].

The best solution to an optimization problem is called the global optimum. However, there is no guarantee that an algorithm will find exactly this optimum. For this reason, the solution that an optimization algorithm obtains for a problem is called quasi-optimal; it may or may not be equal to the global optimum [3].

Scientists have introduced numerous algorithms to provide better quasi-optimal solutions to optimization problems than the existing algorithms. These optimization algorithms are employed in various fields in the literature, such as training an artificial neural network [4,5], cyber-physical systems [6], energy and energy carriers [7,8,9], and electrical engineering [10,11,12,13,14,15], to solve the problem and achieve a better quasi-optimal solution.

With the advancement of computer processing capabilities in recent years, it has become possible to study engineering problems more precisely and thoroughly, considering numerous uncertainties and constraints. Handling diverse constraints and a growing number of variables in engineering challenges requires more powerful problem-solving approaches. Metaheuristic algorithms are effective tools for modern researchers and engineers, as seen in numerous implementations [16]. They can be employed whenever an engineering problem can be algorithmically described, precisely defined, and parameterized. Solving real-world problems very often requires connecting several disciplines within the optimization procedure. Advances in metaheuristic algorithm studies continuously push the boundary of application feasibility, making optimization processes more efficient and accurate. Metaheuristic algorithms are higher-level tools that can have a positive impact on any engineering problem [17].

Based on their fundamental design ideas, optimization algorithms can be divided into five groups: swarm-based, evolutionary-based, physics-based, game-based, and human-based approaches.

Swarm-based optimization algorithms are based on modeling observations in nature, behavior, and the life of animals, insects, and other living organisms. Particle swarm optimization (PSO) is a widely used algorithm based on simulating the behavior of a group of birds and fish [18]. The ant colony optimization (ACO) algorithm has been developed considering the behavior of ants in finding the shortest path to food sources [19]. The artificial bee colony (ABC) algorithm [20] is a nature-inspired optimization approach inspired by the collective behavior and intelligent foraging of honey bee colonies.

The krill herd (KH) algorithm simulates the herding behavior of krill [21]. Gray wolf optimization (GWO) is developed by simulating the natural behavior of gray wolves in hierarchical prey hunting [22]. The egret swarm optimization algorithm (ESOA) was proposed based on the simulation of two egret species’ hunting behavior (great egret and snowy egret) [23]. The beetle antennae search (BAS) was inspired by the food-foraging behavior of beetles [24]. Some other swarm-based approaches are the serval optimization algorithm (SOA) [25], subtraction-average-based optimizer (SABO) [26], marine predators algorithm (MPA) [27], coati optimization algorithm (COA) [28], tunicate swarm algorithm (TSA) [29], orchard algorithm (OA) [30], social spider optimizer (SSO) [31], emperor penguin optimizer (EPO) [32], lion optimization algorithm (LOA) [33], cuckoo search (CS) [34], green anaconda optimizer (GOA) [35], two-stage optimizer (TSO) [36], manta ray foraging optimizer (MRFO) [37], mountain gazelle optimizer (MGO) [38], and two-stage improved gray wolf optimizer (IGWO) [39].

Evolutionary-based optimization algorithms are built on random and evolutionary operators, such as selection, that simulate concepts from genetics and biology. The genetic algorithm (GA), one of the most famous optimization approaches to date, belongs to this group. In the design of the GA, modeling of the reproductive process with selection, crossover, and mutation operators is used [40]. Some other evolutionary algorithms are evolution strategy (ES) [41], biogeography-based optimizer (BBO) [42], genetic programming (GP) [43], and differential evolution (DE) [44].

Physics-based optimization algorithms are inspired by various physical laws and phenomena. The simulated annealing (SA) algorithm is one of the oldest physics-based methods; it is modeled on the annealing process in metallurgy, in which a metal is heated and then slowly cooled. The gravitational search algorithm (GSA) is based on gravitational force modeling and Newtonian laws of motion [45]. The turbulent flow of water-based optimization (TFWO) [46] is designed on irregular oscillations in turbulent water flow. The thermal exchange optimizer (TEO) [47] is a technique that draws inspiration from Newton’s law of cooling. Some other physics-based approaches are the galaxy-based search algorithm (GbSA) [48], black hole (BH) [49], ray optimizer (RO) [50], big bang-big crunch (BB-BC) [51], small world optimization algorithm (SWOA) [52], magnetic optimization algorithm (MOA) [53], and artificial chemical reaction optimization algorithm (ACROA) [54].

Game-based optimization algorithms are developed according to the relationships and behavior of players in games, game rules, coaches’ movements, and referees. For example, the league championship algorithm (LCA) [55] and football game-based optimizer (FGBO) [56] are based on simulating players’ behavior and the interactions of football clubs during a tournament season. The volleyball premier league (VPL) algorithm was developed based on the interaction of volleyball teams during one season [57]. Some other game-based algorithms are an improved football game optimizer (IFGO) [58], the running city game optimizer (RCGO) [59], Nash game-efficient global optimizer (Nash-EGO) [60], and a generalized soccer league optimizer (SLO) [61].

Human-based optimization algorithms are introduced and inspired by humans’ behaviors, choices, thoughts, decisions, and other strategies in their personal and social life. The teaching-learning-based optimizer (TLBO) [62] can be mentioned as one of this group’s most famous and widely used algorithms. TLBO is designed based on modeling communication and educational interactions between teachers and students, as well as students with each other in the classroom environment, to improve the level of classroom knowledge. Therapeutic interactions between doctors and patients to examine and treat patients are employed in the design of the doctor and patient optimizer (DPO) [63]. The process of voting in elections has been the main idea in the design of the election based optimization algorithm (EBOA) [64]. Some other human-based approaches are the teamwork optimization algorithm (TOA) [65], driving training-based optimizer (DTBO) [66], archery algorithm (AA) [67], group optimizer (GO) [68], and following optimization algorithm (FOA) [69].

The main research question is whether, given the many metaheuristic algorithms already designed, there is still a need to introduce newer algorithms to deal with optimization problems. In response to this question, the no free lunch (NFL) theorem [70] explains that the high success of a particular algorithm in solving a set of optimization problems will not guarantee the same performance of that algorithm in other optimization problems. It cannot be assumed that implementing an algorithm on an optimization problem will necessarily lead to successful results. According to the NFL theorem, no metaheuristic algorithm is the best optimizer for all optimization problems. The NFL theorem motivates researchers to search for better solutions for optimization problems by designing newer metaheuristic algorithms. The NFL theorem has also inspired the authors of this article to provide more effective solutions in dealing with optimization problems by creating a new metaheuristic algorithm.

The novelty and innovation of the present study lie in designing a new human-based approach called the drawer algorithm (DA) for optimization applications. The main contributions of this article are stated as follows:

- A new metaheuristic algorithm is presented, motivated by people maintaining order in commode drawers.

- The DA is modeled by simulating the process of selecting the appropriate objects from different drawers to create an optimal combination.

- The DA’s performance is tested on fifty-two benchmark functions of unimodal, high-dimensional multimodal, and fixed-dimensional multimodal types and the CEC 2017 test suite.

- The DA’s results are compared with the performance of twelve well-known metaheuristic algorithms.

- The efficiency of the DA in handling real-world applications is tested on twenty-two constrained optimization problems from the CEC 2011 test suite.

The rest of the article is organized as follows. In Section 2, the DA is introduced and modeled. Then, the simulation studies are presented in Section 3. Next, the performance of the DA in solving real-world applications is evaluated in Section 4. Finally, conclusions and suggestions for further studies in line with this work are provided in Section 5.

2. Drawer Algorithm

This section introduces the DA and its mathematical model. As mentioned, the main inspiration for designing the DA is to simulate the process of selecting objects from the drawers of a cupboard to form an optimal combination. In the DA, it is assumed that several drawers are available, each containing a certain number of objects. One object must be picked from each drawer to create a proper combination of objects inside the drawers. Picking up the appropriate objects from the drawers and putting them together is an optimization process that can inspire the design of an algorithm.

2.1. Originality of the DA

Human-based algorithms were presented in the introduction as one class of optimizers when grouping by the source of inspiration in design. Many human behaviors are intelligent processes that can be the basis for designing new optimization algorithms. One such behavior is the attempt to pick the objects one wants from the drawers of a closet, for example, to choose a suitable style of clothing. In this case, it is assumed that in each drawer of the cabinet, a person faces different choices for each type of clothing: a drawer for watches, a drawer for jewelry, a drawer for shoes, a drawer for ties, a drawer for hats, etc. Therefore, choosing a suitable clothing style from cabinet drawers is a smart strategy with considerable potential for designing an optimization algorithm. To the best of our knowledge from the literature review, no optimization algorithm has been designed based on modeling the strategy of humans in choosing objects from closet drawers, which confirms the originality of the proposed approach. Thus, to address this research gap, a human-based optimizer called the DA has been developed in this paper based on the mathematical modeling of human strategy in selecting the desired objects from wardrobe drawers. The unique characteristics of the DA are as follows:

- In the design of the DA, the strong dependence of the population update process on specific members of the population, such as the best member, is avoided. This feature helps prevent the algorithm from getting stuck in local optima by strengthening its ability to explore the search space and by directing it toward the main optimal region.

- Stagnation in optimization algorithms occurs when all population members are gathered at the same position. In this case, all members of the population become similar. If the algorithm cannot remove the population from this condition, the update process will not be successful. In the DA design, using a random combination of population members in the updating process by making extensive changes in the position of the members can bring the algorithm out of the static state.

- In the design of the DA, it is assumed that the number of objects inside the drawers decreases during successive iterations of the algorithm. These conditions lead to a balance between exploration and exploitation in the search space. At the beginning of the implementation of the algorithm, the number of objects is at its maximum, which can lead to large changes in the position of the population members with the possibility of making more combinations of drawers. Hence, in the initial iterations, priority is given to global search and exploration as an all-around search in the problem-solving space to identify the main optimal region. Then, by increasing the iterations of the algorithm, the number of objects inside the drawers decreases, resulting in fewer combinations of drawers. These conditions lead to limited movements in the position of population members. Therefore, by increasing the iterations of the algorithm, priority is given to local search and exploitation so that the algorithm converges toward better solutions in promising areas.

2.2. Mathematical Modeling

The DA is a metaheuristic approach that solves optimization problems iteratively. During each iteration, the population members of the DA scan the search space of the problem to converge toward the optimal solution.

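The population of the algorithm can be modeled using a matrix that is specified as

$$X = \begin{bmatrix} X_1 \\ \vdots \\ X_i \\ \vdots \\ X_N \end{bmatrix}_{N \times m} = \begin{bmatrix} x_{1,1} & \cdots & x_{1,j} & \cdots & x_{1,m} \\ \vdots & \ddots & \vdots & & \vdots \\ x_{i,1} & \cdots & x_{i,j} & \cdots & x_{i,m} \\ \vdots & & \vdots & \ddots & \vdots \\ x_{N,1} & \cdots & x_{N,j} & \cdots & x_{N,m} \end{bmatrix}_{N \times m},$$

where $X$ is the population matrix of the DA, $N$ is the number of population members, $m$ is the number of variables, with $X_i$ being the $i$th proposed solution, and $x_{i,j}$ being its $j$th component (the $j$th variable of the problem). In the beginning, all population members must be randomly initialized by means of

$$x_{i,j} = lb_j + \mathrm{rand}(0,1) \cdot (ub_j - lb_j), \quad i = 1, \ldots, N, \quad j = 1, \ldots, m,$$

where $x_{i,j}$ is the value of the $j$th variable determined by the $i$th DA’s member $X_i$, the function $\mathrm{rand}(0,1)$ generates uniformly a random number in the interval $[0,1]$, whereas $lb_j$ and $ub_j$ are the lower and upper bounds of the $j$th problem variable, respectively.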

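Based on the population of the algorithm, each member of which is a proposed solution to the given problem, the objective function (with $m$ variables) can be evaluated. The values obtained for the objective function are shown using an expression given by

$$F = \begin{bmatrix} F_1 \\ \vdots \\ F_i \\ \vdots \\ F_N \end{bmatrix}_{N \times 1} = \begin{bmatrix} F(X_1) \\ \vdots \\ F(X_i) \\ \vdots \\ F(X_N) \end{bmatrix}_{N \times 1},$$

where $F$ is the vector of the obtained objective function values, and $F_i$ is the value of the objective function for the $i$th proposed solution. Thus, $F_i = F(X_i)$ with $i = 1, \ldots, N$.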
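The initialization and evaluation steps described above can be sketched in a few lines of Python. This is only an illustrative sketch: the function names, the `sphere` test objective, and the bounds are hypothetical choices that do not come from the article.

```python
import random

def initialize_population(n_members, lower, upper, rng):
    """Draw every variable uniformly between its lower and upper bound."""
    return [
        [lb + rng.random() * (ub - lb) for lb, ub in zip(lower, upper)]
        for _ in range(n_members)
    ]

def evaluate(population, objective):
    """Return one objective value per population member."""
    return [objective(member) for member in population]

def sphere(x):
    # Hypothetical test objective: sum of squares, minimum 0 at the origin.
    return sum(v * v for v in x)

rng = random.Random(0)                      # fixed seed for repeatability
lower, upper = [-5.0, -5.0, -5.0], [5.0, 5.0, 5.0]
X = initialize_population(4, lower, upper, rng)   # 4 members, 3 variables
F = evaluate(X, sphere)                           # one value per member
```

Each row of `X` plays the role of one proposed solution, and `F` holds the corresponding objective values in the same order.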

The main concept behind updating the population matrix in the DA is to utilize a carefully selected combination of drawers containing variable information. Specifically, the DA assumes the presence of a commode with the same number of drawers as variables exist in the optimization problem. Each drawer in the commode contains different suggested values for the corresponding variable. The commode and drawers can be mathematically modeled using the equations formulated as

where is the drawer matrix, is the vector of values in the th drawer, for represents the usual mathematical ceiling function, is the total number of iterations, is the number of drawers in the th iteration, and is the corresponding element of the th column of the th row, where is the random function, which generates uniformly a random number from the set .

Metaheuristic algorithms based on random search in the corresponding space are able to find suitable solutions for optimization problems. Additionally, to provide effective search, metaheuristic algorithms must be able to scan the search space well at two levels: (i) globally with the concept of exploration and (ii) locally with the concept of exploitation.

In the DA design, choosing a random combination and using it in the update process leads to large population displacements in the search space and thus increases the exploration power. In addition, in the DA design, the number of proposed values for each variable in each drawer decreases according to Equation (4) during the iterations of the algorithm. This leads to smaller displacements increasing the exploitation power of the algorithm.

Equation (4) is selected so that, in the initial iterations, the number of suggested values for each variable is at the maximum value to increase exploration. Then, it decreases during the iterations of the algorithm to prioritize the exploitation ability. Therefore, in the DA design, the balance has been established between exploration and exploitation during the iterations of the algorithm.
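To make the shrinking behavior concrete, the sketch below uses a simple linear schedule with a ceiling. This schedule is an assumption chosen for illustration and is not necessarily the expression used in Equation (4); the names `drawer_size`, `total_iters`, and `max_size` are hypothetical.

```python
def drawer_size(t, total_iters, max_size):
    """Assumed schedule: max_size values per drawer at t = 1,
    shrinking linearly to at least one value at t = total_iters."""
    remaining = total_iters - t + 1
    # Integer ceiling of max_size * remaining / total_iters.
    return (max_size * remaining + total_iters - 1) // total_iters

# Values per drawer over 10 iterations when starting from 20.
sizes = [drawer_size(t, 10, 20) for t in range(1, 11)]
```

With many values per drawer in early iterations, the random combinations are diverse (exploration); with few values in late iterations, the combinations vary less (exploitation).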

In the DA, a random combination created by values from drawers is used to update each member of the population. This random combination directs the population members into the search space. The process of forming a random combination of drawers is such that exactly one value is selected from each drawer, which is considered the value of a problem variable. Then, these selected values from the drawers together produce a random combination to guide the population member. The process of forming this random combination is presented as

where is the random combination to guide the th population member, is its th dimension, and is the corresponding element of the th row of the th column of the matrix , with being a function that generates a random number from the set .
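A minimal sketch of forming a random combination follows. Selecting exactly one value per drawer matches the description above; filling each drawer with values copied from randomly chosen population members is an assumption made for this illustration, and all function names are hypothetical.

```python
import random

def build_drawers(population, size, rng):
    """One drawer per variable. Assumption: drawer j holds `size` values
    of variable j copied from randomly chosen population members."""
    n_vars = len(population[0])
    return [
        [rng.choice(population)[j] for _ in range(size)]
        for j in range(n_vars)
    ]

def random_combination(drawers, rng):
    """Pick exactly one value from each drawer."""
    return [rng.choice(drawer) for drawer in drawers]

rng = random.Random(1)
population = [[0.0, 10.0], [1.0, 11.0], [2.0, 12.0]]   # 3 members, 2 variables
drawers = build_drawers(population, size=2, rng=rng)
combination = random_combination(drawers, rng)
```

The resulting `combination` has one entry per problem variable, so it can be evaluated by the objective function like any population member.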

After determining the random composition, each population member is updated in the search space using the expressions presented as

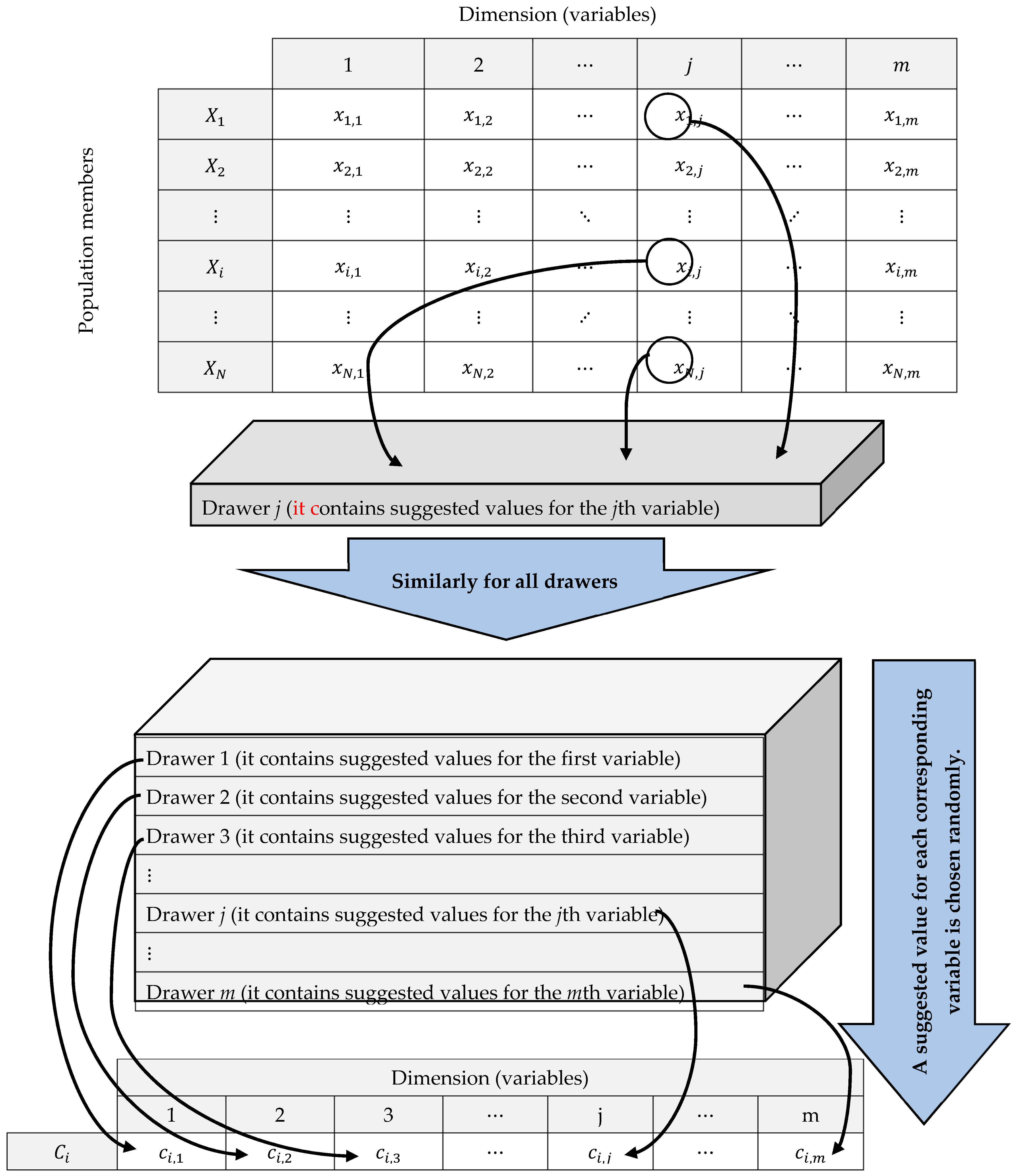

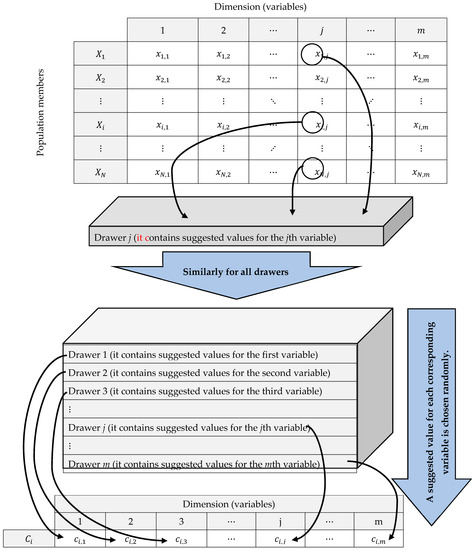

where is the new status of the th proposed solution, is its th dimension, is its objective function value, and is the objective function of random combination to guide the th population member. The process of forming the drawers, as well as the way of creating a random combination to guide each population member, is shown as a schematic representation in Figure 1.

Figure 1.

DA schematic: Forming drawers and random combination construction for population update.

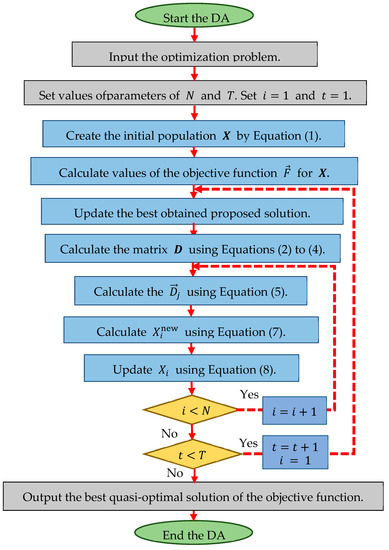

After updating the population, an iteration of the algorithm is completed. The process of updating the algorithm population continues until the end of its iterations, according to Equations (4)–(8). Algorithm 1 presents the pseudo-code of the DA, and Figure 2 shows the corresponding flowchart.

| Algorithm 1. | Pseudocode of the DA. |
|---|---|
| | Start DA. |
| 1. | Input: the optimization problem. |
| 2. | Set the number of iterations ($T$) and the number of members of the population ($N$). |
| 3. | Generate the initial population at random by Equation (1). |
| 4. | Evaluate the initial population (compute $F$ by Equation (2)). |
| 5. | For $t = 1$ to $T$ |
| 6. | Update the best proposed solution. |
| 7. | Calculate the drawer matrix based on Equations (3)–(5). |
| 8. | For $i = 1$ to $N$ |
| 9. | Generate a random combination based on Equation (6). |
| 10. | Calculate a new status of the $i$th population member based on Equation (7). |
| 11. | Update the $i$th population member using Equation (8). |
| 12. | end |
| 13. | Save the best proposed solution so far. |
| 14. | end |
| 15. | Output: the best obtained proposed solution. |
| | End DA. |

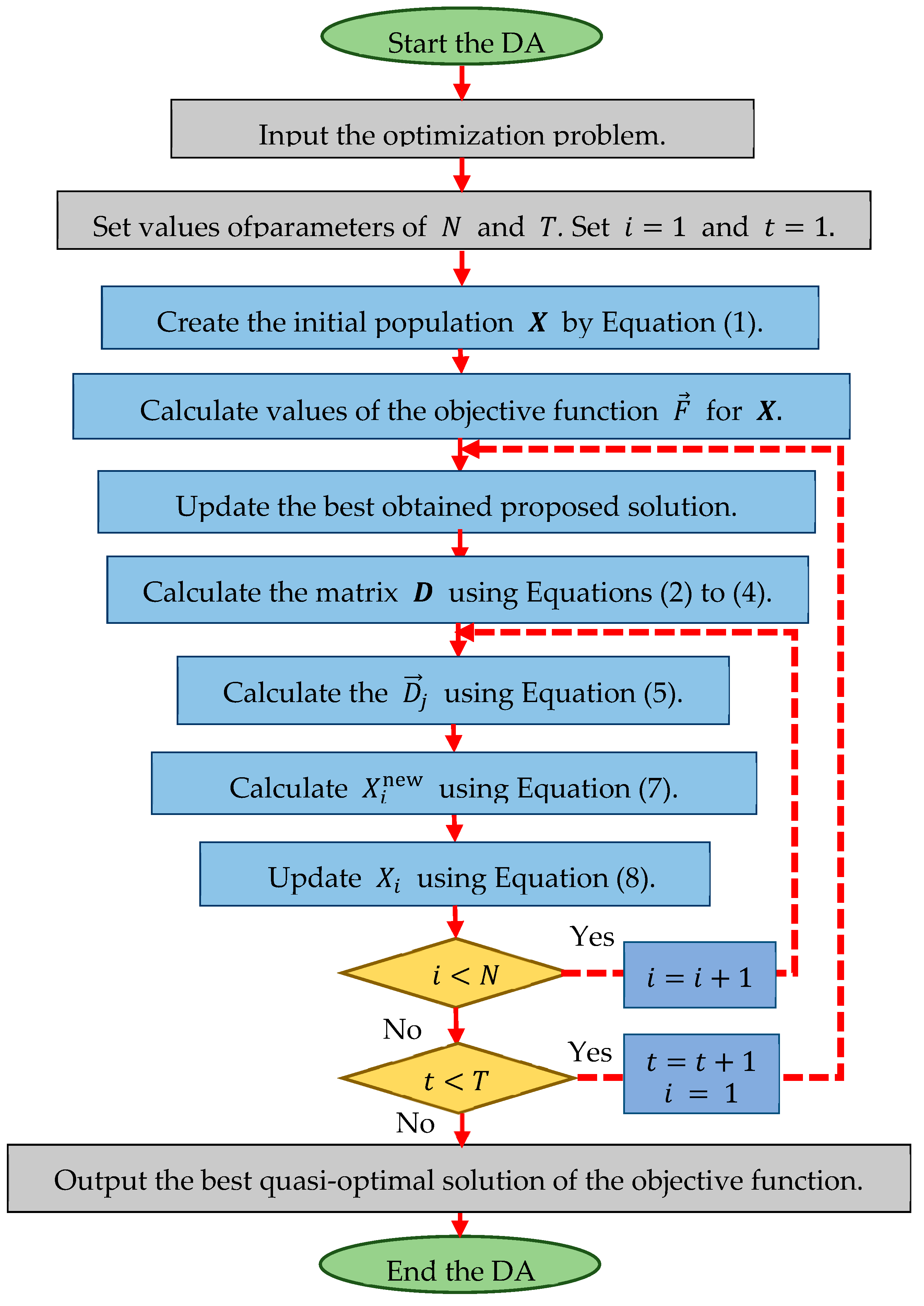

Figure 2.

Flowchart of the DA.
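The overall loop of Algorithm 1 can be sketched in Python as follows. This is a hedged illustration, not the authors' implementation: the drawer-size schedule, the way drawers are filled, the move rule, and the greedy acceptance step are all assumptions labeled in the comments, and the `sphere` objective and parameter values are hypothetical.

```python
import random

def sphere(x):
    # Hypothetical test objective with minimum 0 at the origin.
    return sum(v * v for v in x)

def drawer_algorithm(objective, lower, upper, n_members=20, n_iters=100, seed=0):
    rng = random.Random(seed)
    m = len(lower)
    # Steps 3-4: random initial population and its objective values.
    X = [[lb + rng.random() * (ub - lb) for lb, ub in zip(lower, upper)]
         for _ in range(n_members)]
    F = [objective(x) for x in X]
    history = []
    for t in range(1, n_iters + 1):
        # Step 7 (assumed schedule): values per drawer shrink linearly with t.
        size = (n_members * (n_iters - t + 1) + n_iters - 1) // n_iters
        # Assumed filling rule: drawer j holds variable j of random members.
        drawers = [[rng.choice(X)[j] for _ in range(size)] for j in range(m)]
        for i in range(n_members):
            # Step 9: one value from each drawer forms the guiding combination.
            dc = [rng.choice(drawer) for drawer in drawers]
            toward = objective(dc) < F[i]
            candidate = []
            for j in range(m):
                r = rng.random()
                # Step 10 (assumed move): approach a better combination,
                # step away from a worse one, then clip to the bounds.
                delta = dc[j] - X[i][j] if toward else X[i][j] - dc[j]
                value = X[i][j] + r * delta
                candidate.append(min(max(value, lower[j]), upper[j]))
            # Step 11 (assumed greedy acceptance): keep only improvements.
            f_candidate = objective(candidate)
            if f_candidate < F[i]:
                X[i], F[i] = candidate, f_candidate
        # Step 13: record the best value found so far.
        history.append(min(F))
    best = min(range(n_members), key=F.__getitem__)
    return X[best], F[best], history

best_x, best_f, history = drawer_algorithm(sphere, [-10.0] * 5, [10.0] * 5)
```

Because a member is replaced only when its candidate is better, the best value recorded in `history` can never get worse from one iteration to the next under these assumptions.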

2.3. Computational Complexity

Based on the DA phases and implementation steps, the computational complexity of the proposed approach is as follows: DA initialization for solving an optimization problem based on $m$ decision variables has a complexity of $O(Nm)$, where $N$ is the number of search agents. Furthermore, updating search agents has a complexity equal to $O(NmT)$, with $T$ being the total number of iterations of the algorithm. Therefore, the total computational complexity of the DA is equal to $O(Nm(1+T))$.

3. Simulation Studies and Results

In this section, the ability of the DA to solve optimization problems is studied. Various objective functions of unimodal, high-dimensional multimodal, and fixed-dimensional multimodal types and the CEC 2017 test suite [71] have been used to evaluate the proposed approach. The DA is compared with twelve algorithms: GA, PSO, GSA, TLBO, GWO, MVO, WOA, MPA, TSA, RSA, WSO, and AVOA. The values of the control parameters of the competitor algorithms are specified in Table 1.

Table 1.

Control parameter values.

The DA and each of the competing algorithms were implemented in twenty independent runs on the benchmark functions, where each independent run consisted of 1000 iterations. Optimization results were reported using six indicators: mean, best, worst, standard deviation (std), median, and rank. The ranking criterion for metaheuristic algorithms was based on providing a better value for the mean index.
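The six indicators can be computed from the final objective values of the independent runs, as in the sketch below. The algorithm names and run values are hypothetical placeholders, minimization is assumed, and the use of the sample standard deviation is an assumption, since the text does not state which variant was used.

```python
import statistics

def summarize(runs):
    """Summary indicators for one algorithm on one function (minimization)."""
    return {
        "mean": statistics.mean(runs),
        "best": min(runs),
        "worst": max(runs),
        "std": statistics.stdev(runs),    # sample standard deviation (assumed)
        "median": statistics.median(runs),
    }

def rank_by_mean(results):
    """results maps an algorithm name to its list of final objective values.
    Rank 1 goes to the smallest mean; ties keep their sorted order."""
    summaries = {name: summarize(runs) for name, runs in results.items()}
    ordered = sorted(summaries, key=lambda name: summaries[name]["mean"])
    for position, name in enumerate(ordered, start=1):
        summaries[name]["rank"] = position
    return summaries

# Hypothetical results of three runs for two placeholder algorithms.
results = {"A": [1.0, 2.0, 3.0], "B": [0.5, 0.5, 2.0]}
summary = rank_by_mean(results)
```

In this toy input, algorithm "B" has the smaller mean and therefore receives rank 1.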

3.1. Evaluation of Unimodal Functions

The results of optimizing the unimodal objective functions using the DA and twelve other algorithms are presented in Table 2. Based on the optimization results, the DA provided the best solution to the problem, that is, global optima, when solving F1, F2, F3, F4, F5, F6, and F7. The simulation results show that the DA significantly outperforms the other twelve algorithms.

Table 2.

Optimization results for unimodal functions (F1–F7).

3.2. Evaluation of High-Dimensional Multimodal Functions

The DA and twelve other algorithms were implemented on the functions F8 to F13, and the results are presented in Table 3. The analysis of this table shows that the DA provides the optimal solution for F9 and F11. The DA is also the best optimizer for solving F8, F10, F12, and F13. The optimization results indicate the superiority of the DA compared to the twelve other algorithms.

Table 3.

Optimization results for high-dimensional multimodal functions (F8–F13).

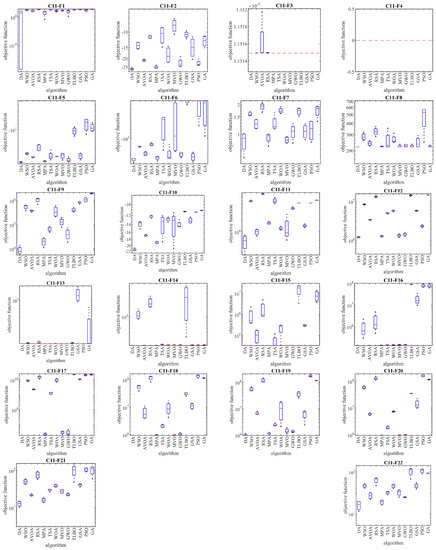

3.3. Evaluation of Fixed-Dimensional Multimodal Functions

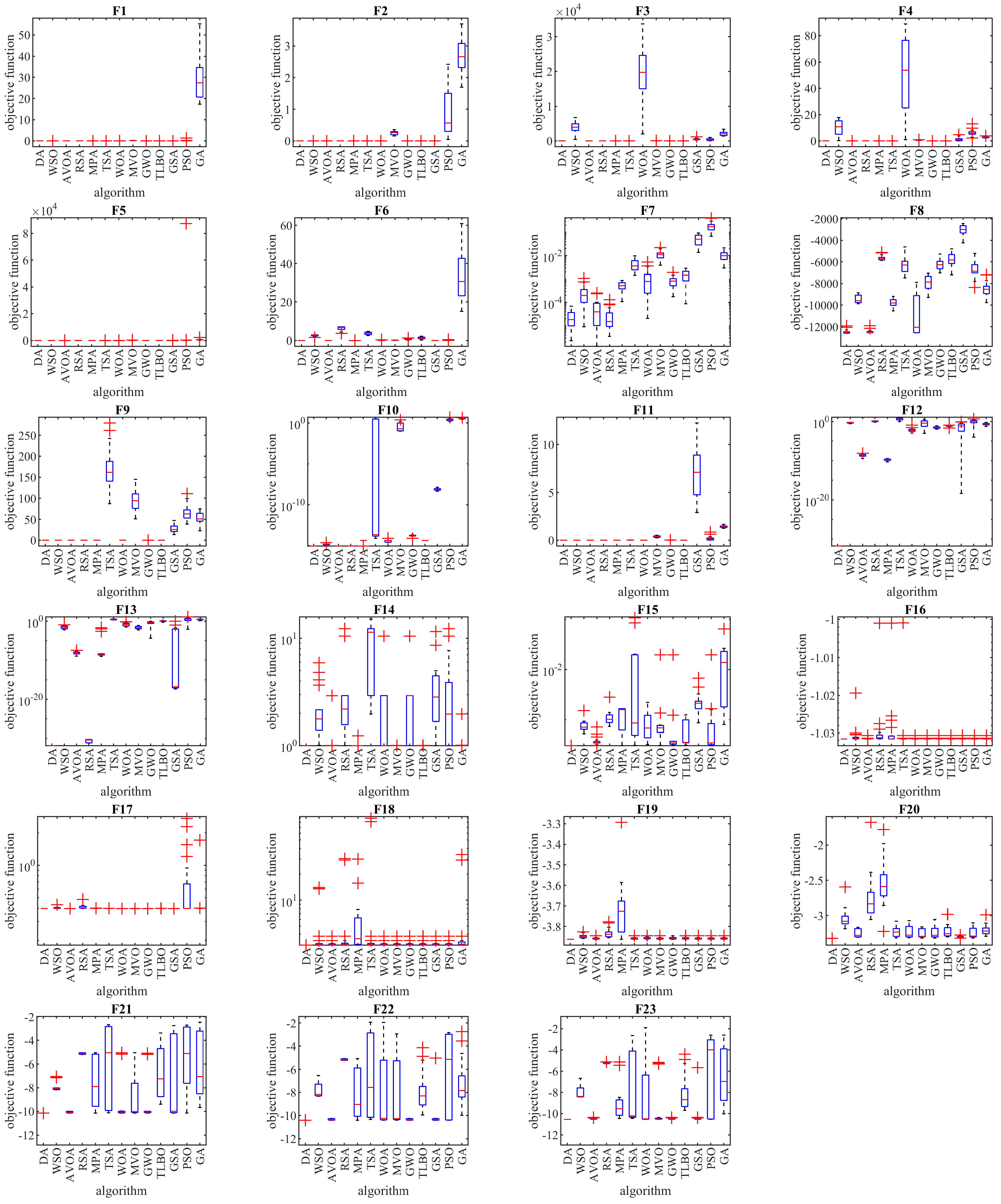

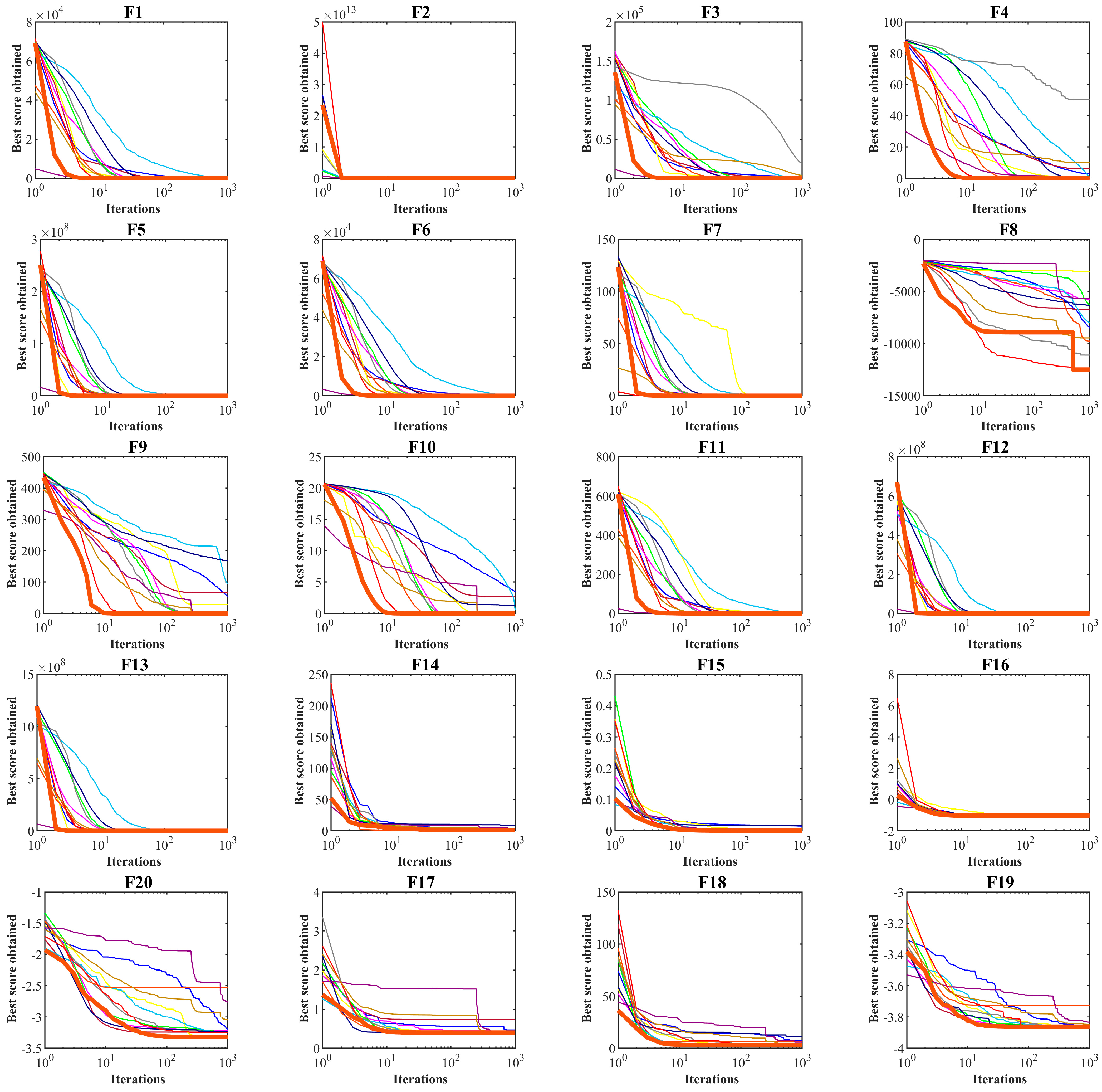

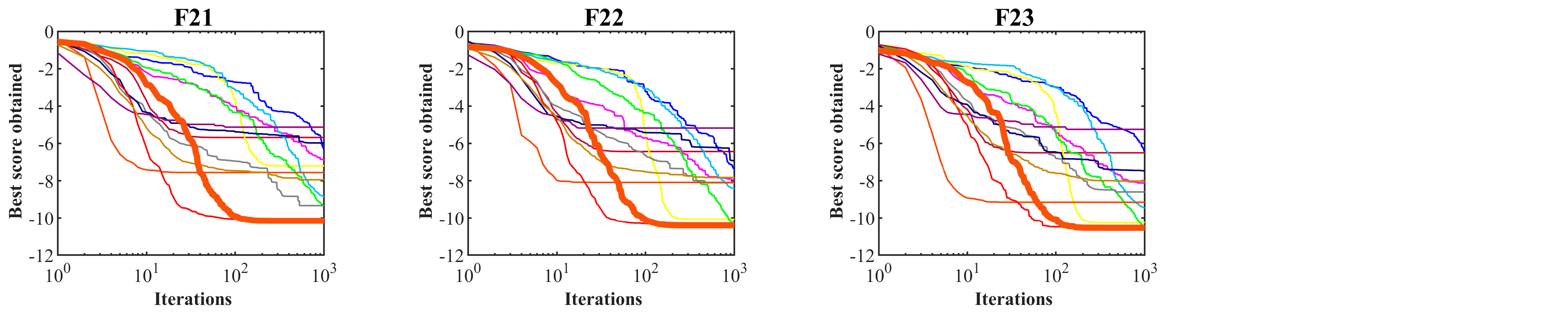

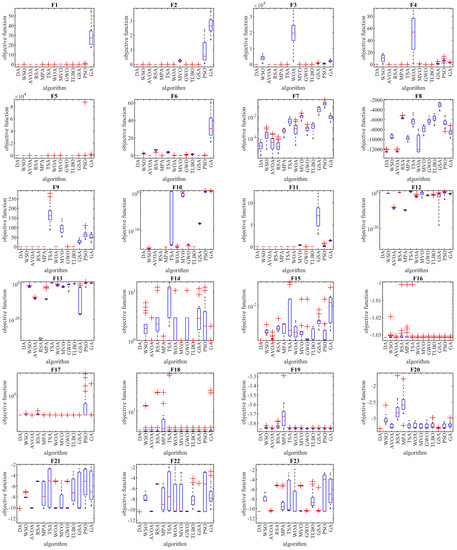

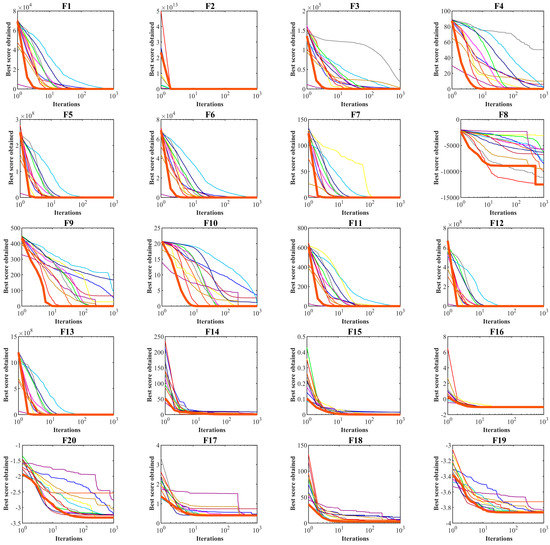

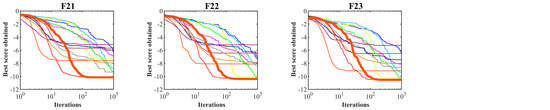

The results of optimizing functions F14 to F23 using the DA and the twelve compared algorithms are presented in Table 4. This table shows that the DA reaches the global optimum for F17. The DA is the best optimizer in solving F14, F15, F16, F18, F19, F20, F21, F22, and F23. Comparing the performance of the competitor algorithms against the DA indicates the high ability of the DA to solve multimodal problems. The performance of the DA and competitor algorithms in solving functions F1 to F23 is presented in the boxplots of Figure 3. The convergence curves of the DA and competing algorithms over the iterations are drawn in Figure 4.

Table 4.

Optimization results for fixed-dimensional multimodal functions (F14–F23).

Figure 3.

Boxplots of the performance of the DA and competitor algorithms on F1 to F23 test functions.

Figure 4.

Convergence curves of the DA and competitor algorithms on F1 to F23 test functions.

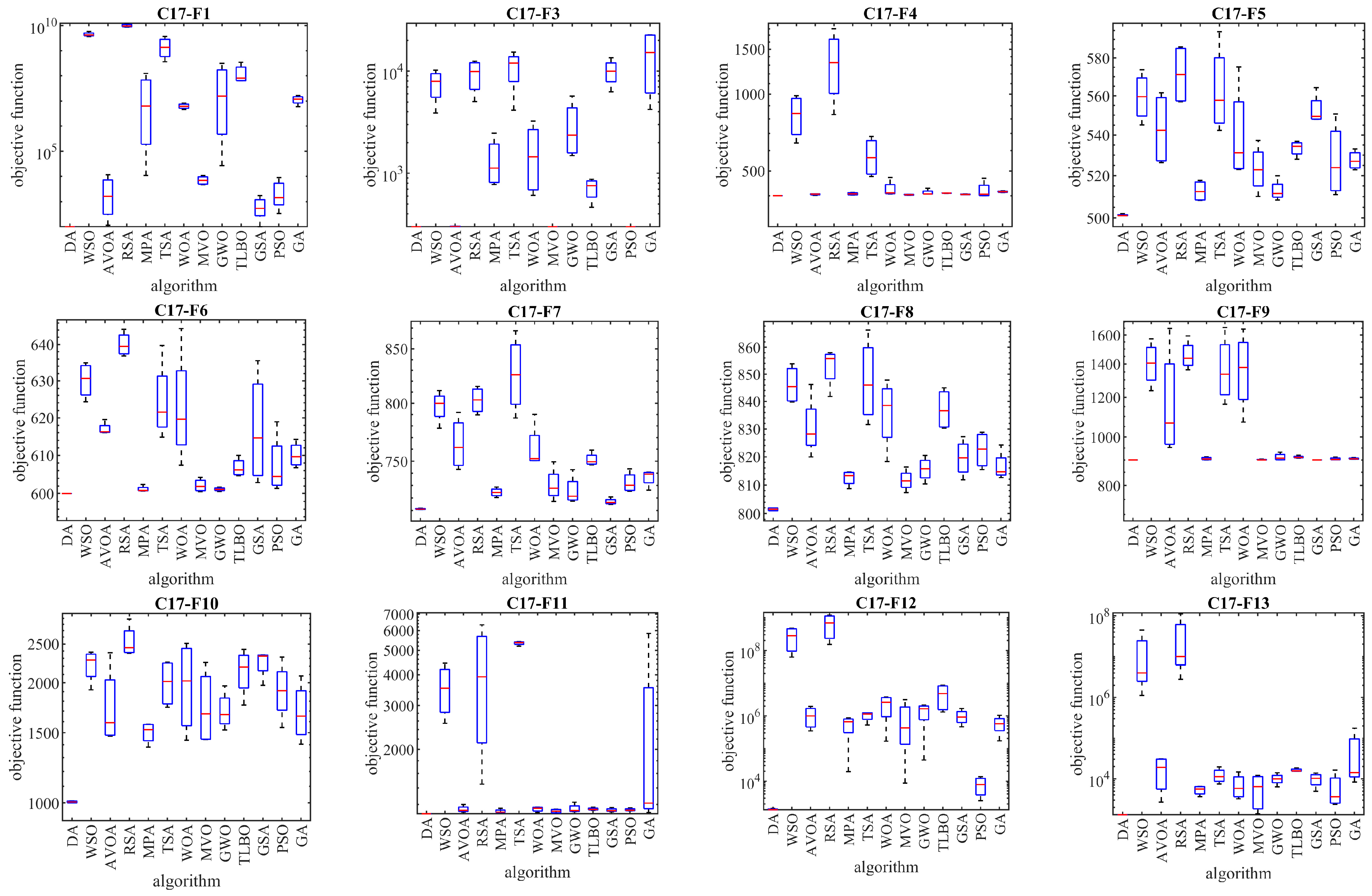

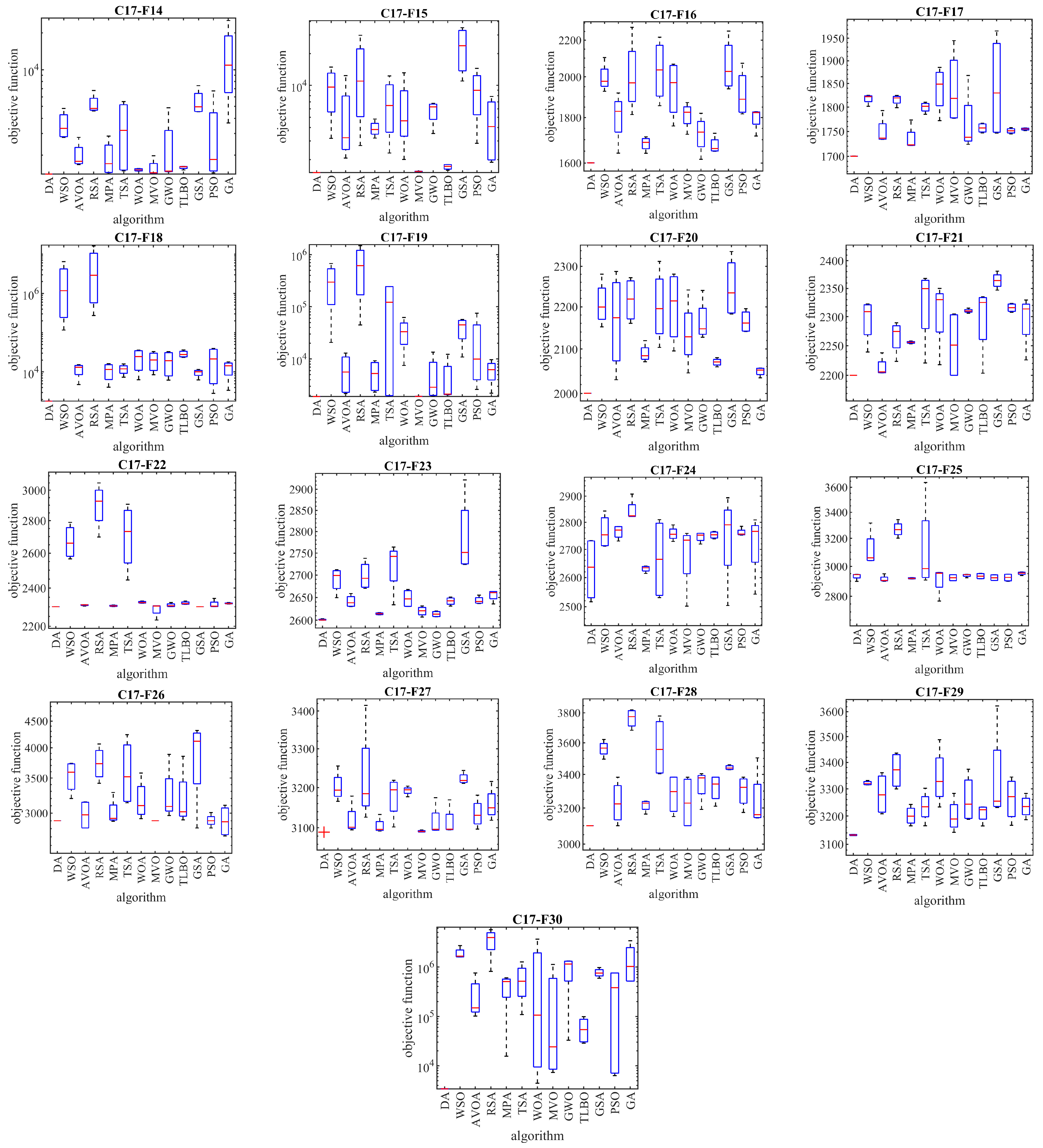

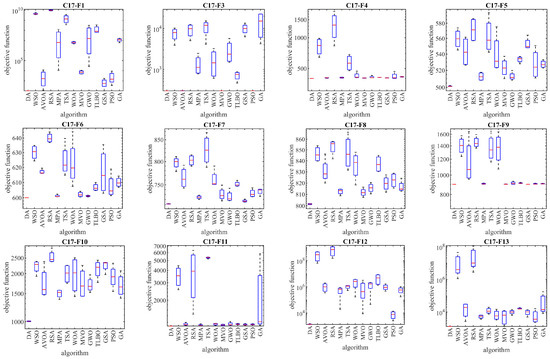

3.4. Evaluation of the CEC 2017 Test Suite

Next, the DA is evaluated on the CEC 2017 test suite. This test suite has thirty standard benchmark functions, consisting of three unimodal functions C17-F1 to C17-F3, seven multimodal functions C17-F4 to C17-F10, ten hybrid functions C17-F11 to C17-F20, and ten composition functions C17-F21 to C17-F30. Of these functions, the C17-F2 function was excluded from the simulation studies due to its unstable behavior. Complete information on the CEC 2017 test suite is provided in [71]. The optimization results for the CEC 2017 test suite using the DA and competitor algorithms are reported in Table 5. The boxplots obtained from the performance of the optimization algorithms in handling the CEC 2017 test suite are drawn in Figure 5. Based on the simulation results, the DA is the best optimizer for C17-F1, C17-F3 to C17-F21, C17-F23, C17-F24, and C17-F27 to C17-F30. Analysis of the simulation results shows that the DA, by providing better results on most benchmark functions of the CEC 2017 test suite, delivers superior performance compared to the competitor algorithms.

Table 5.

Optimization results for the CEC 2017 test suite.

Figure 5.

Boxplots of the performance of the DA and competitor algorithms on the CEC 2017 test suite.

3.5. Statistical Analysis

The simulation results based on the mean and standard deviation have already demonstrated the superior performance of the DA compared to the twelve competitor algorithms.

Now, we conduct a statistical analysis to determine whether the superiority of the DA over the other twelve algorithms is statistically significant. To this end, the non-parametric Wilcoxon signed-rank test [72] is employed. The statistical analysis results are presented in Table 6, where a $p$-value indicates whether the difference in performance between the DA and a competitor algorithm is statistically significant. If a $p$-value is less than 0.05, then the advantage of the DA over the corresponding algorithm is statistically significant.
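For readers who want to see how such a comparison can be computed, the sketch below implements a two-sided Wilcoxon signed-rank test on paired results using a normal approximation. It is a simplified illustration under stated assumptions (zero differences are dropped, tied absolute differences share average ranks, and no tie or continuity correction is applied); the exact procedure and software used for Table 6 are not specified here, and the sample data are hypothetical.

```python
import math

def wilcoxon_signed_rank(a, b):
    """Two-sided Wilcoxon signed-rank test (normal approximation).
    Returns the statistic W = min(W+, W-) and the approximate p-value."""
    diffs = [x - y for x, y in zip(a, b) if x != y]   # drop zero differences
    n = len(diffs)
    if n == 0:
        return 0.0, 1.0
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    start = 0
    while start < n:
        end = start
        while (end + 1 < n and
               abs(diffs[order[end + 1]]) == abs(diffs[order[start]])):
            end += 1
        average = (start + end) / 2 + 1   # mean of 1-based ranks start+1..end+1
        for k in range(start, end + 1):
            ranks[order[k]] = average
        start = end + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    w = min(w_plus, w_minus)
    mean = n * (n + 1) / 4
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (w - mean) / sd
    p_value = math.erfc(abs(z) / math.sqrt(2))        # two-sided
    return w, min(1.0, p_value)

# Hypothetical paired results: the first method is better on every pair.
da = [float(k) for k in range(1, 11)]
other = [v + k for k, v in enumerate(da, start=1)]
w, p = wilcoxon_signed_rank(da, other)
significant = p < 0.05
```

For small samples, an exact null distribution is usually preferred over the normal approximation used in this sketch.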

Table 6.

Wilcoxon signed-rank test results.

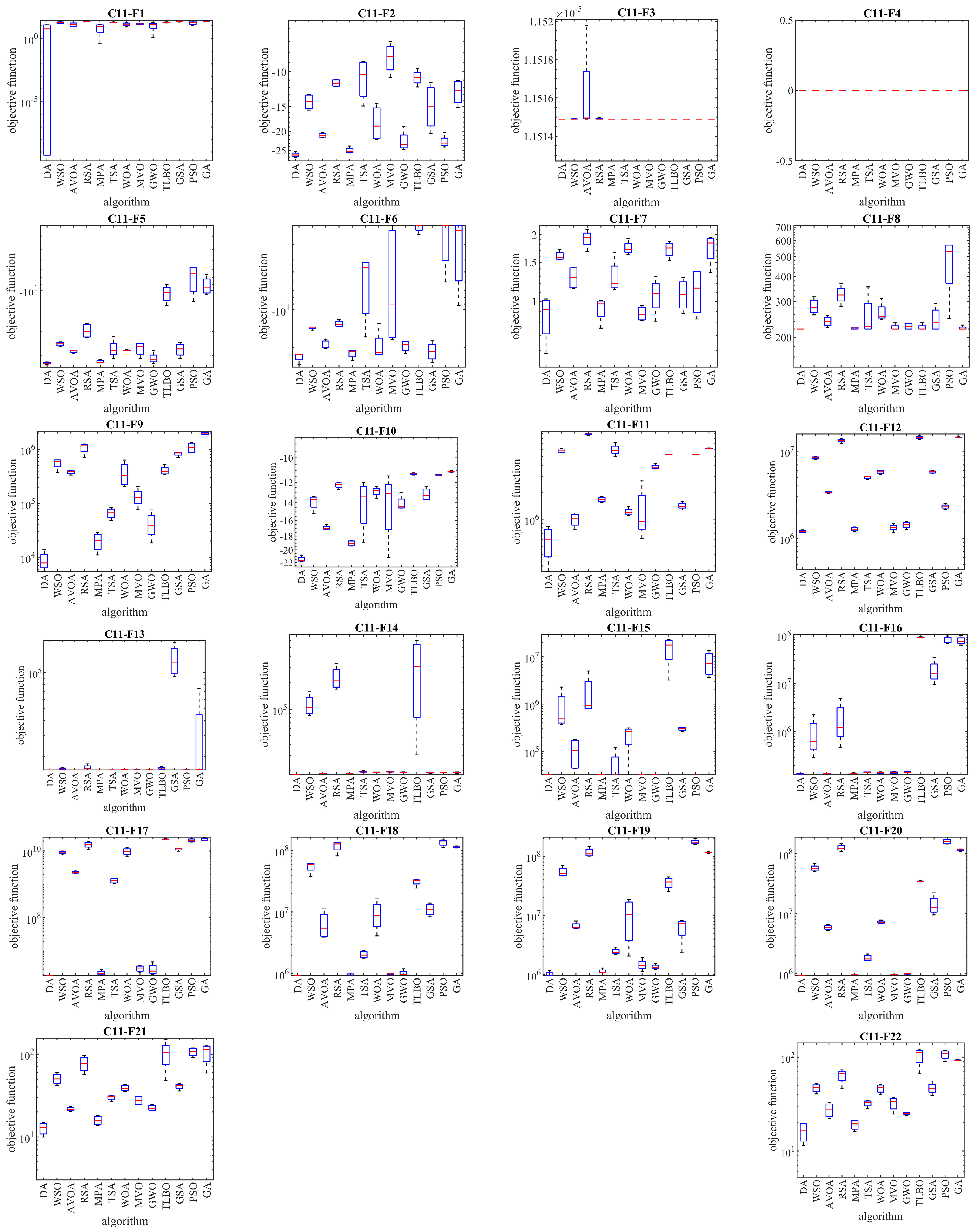

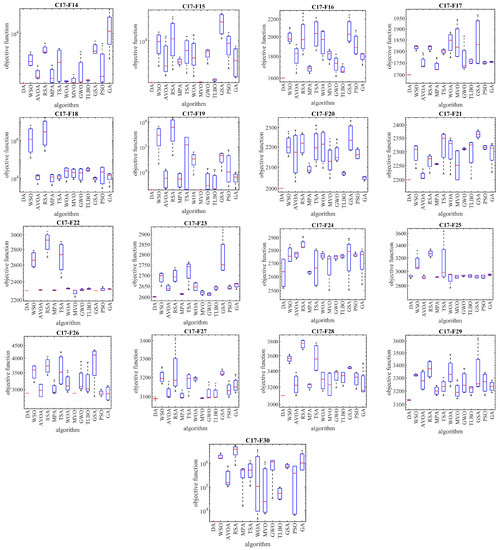

4. DA for Real-World Applications

In this section, we examine the effectiveness of the DA in tackling optimization problems in real-world applications. To this end, we apply the DA and the competing algorithms to twenty-two optimization problems from the CEC 2011 test suite. These optimization problems include parameter estimation for frequency-modulated sound waves, the Lennard-Jones potential problem, the bifunctional catalyst blend optimal control problem, optimal control of a nonlinear stirred tank reactor, the Tersoff potential for the model Si (B), the Tersoff potential for the model Si (C), spread spectrum radar polyphase code design, the transmission network expansion planning problem, the large-scale transmission pricing problem, the circular antenna array design problem, the economic load dispatch (ELD) problems (which consist of DED instance 1, DED instance 2, ELD instance 1, ELD instance 2, ELD instance 3, ELD instance 4, ELD instance 5, hydrothermal scheduling instance 1, hydrothermal scheduling instance 2, and hydrothermal scheduling instance 3), the Messenger spacecraft trajectory optimization problem, and the Cassini 2 spacecraft trajectory optimization problem. A full description of the CEC 2011 test suite is provided in [73]. The implementation results for the DA and competing algorithms on the CEC 2011 test suite are presented in Table 7. The boxplots obtained from the performance of the metaheuristic algorithms in solving this test suite are shown in Figure 6. Based on the simulation results, we find that the DA outperforms all other optimizers for C11-F1 to C11-F22. Analysis of the optimization results reveals that the DA, which provides better results for most optimization problems, demonstrates superior performance in handling the CEC 2011 test suite compared to the competing algorithms. Statistical analysis indicates that the superiority of the DA over the competing algorithms is statistically significant. The simulation results demonstrate the high ability of the DA to handle optimization problems in real-world applications.

Table 7.

Optimization results for the CEC 2011 test suite.

Figure 6.

Boxplots of the performance of the DA and competitor algorithms on the CEC 2011 test suite.

5. Conclusions and Future Works

This article proposed a new metaheuristic approach called the drawer algorithm to solve optimization problems effectively. The main inspiration for this algorithm comes from simulating the process of taking objects out of different commode drawers and creating a suitable combination of those objects. First, the drawer algorithm was introduced, and then its mathematical modeling was studied. The ability of the drawer algorithm in optimization was tested by solving fifty-two objective functions, including unimodal functions, high-dimensional multimodal functions, fixed-dimensional multimodal functions, and the CEC 2017 test suite. The results of optimizing the unimodal functions indicated the high exploitation power of the proposed algorithm in solving problems. The results of optimizing the multimodal functions showed that the drawer algorithm provides suitable quasi-optimal solutions by striking a suitable balance between exploration and exploitation. In addition, the results of the drawer algorithm were compared with those of twelve well-known algorithms. The simulation results showed that the drawer algorithm has a higher ability to optimize than similar algorithms and is much more competitive. Furthermore, implementing the drawer algorithm on twenty-two constrained optimization problems from the CEC 2011 test suite demonstrated the proposed approach’s strong capability in dealing with real-world applications.

We offer suggestions for future work related to the design of binary and multi-objective versions of the drawer algorithm. Additionally, applications of the drawer algorithm to solve optimization problems in various cyber-physical systems and real-world problems to achieve optimal solutions are other suggestions for future research.

Author Contributions

Conceptualization, E.T. and V.L.; data curation, E.T.; formal analysis, E.T., M.D. and V.L.; investigation, E.T.; methodology, M.D. and V.L.; project administration, E.T.; supervision, M.D.; software, M.D. and E.T.; validation, M.D. and V.L.; visualization, E.T. and V.L.; writing—original draft preparation, M.D. and E.T.; writing—review and editing, E.T. and V.L. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

Not applicable.

Data Availability Statement

Not applicable.

Acknowledgments

E.T. and M.D. thank the Project of Excellence of the Faculty of Science, University of Hradec Králové, No. 2209/2023-2024 for the support.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Clerc, M. Particle Swarm Optimization; Wiley-ISTE: London, UK, 2006.

- Yang, X.-S. Nature-Inspired Algorithms and Applied Optimization; Springer International Publishing AG: New York, NY, USA, 2017.

- Iba, K. Reactive power optimization by genetic algorithm. IEEE Trans. Power Syst. 1994, 9, 685–692.

- Mirjalili, S.; Sadiq, A.S. Magnetic Optimization Algorithm for training Multi Layer Perceptron. In Proceedings of the 2011 IEEE 3rd International Conference on Communication Software and Networks, Xi’an, China, 27–29 May 2011; pp. 42–46.

- Mirjalili, S.; Hashim, S.Z.M.; Sardroudi, H.M. Training feedforward neural networks using hybrid particle swarm optimization and gravitational search algorithm. Appl. Math. Comput. 2012, 218, 11125–11137.

- Yi, N.; Xu, J.; Yan, L.; Huang, L. Task optimization and scheduling of distributed cyber–physical system based on improved ant colony algorithm. Future Gener. Comput. Syst. 2020, 109, 134–148.

- Rezk, H.; Fathy, A.; Aly, M.; Ibrahim, M.N.F. Energy management control strategy for renewable energy system based on spotted hyena optimizer. Comput. Mater. Contin. 2021, 67, 2271–2281.

- Akbari, E.; Ghasemi, M.; Gil, M.; Rahimnejad, A.; Gadsden, S.A. Optimal Power Flow via Teaching-Learning-Studying-Based Optimization Algorithm. Electr. Power Compon. Syst. 2021, 49, 584–601.

- Adhvaryyu, P.K.; Chattopadhyay, P.K.; Bhattacharjya, A. Application of bio-inspired krill herd algorithm to combined heat and power economic dispatch. In Proceedings of the 2014 IEEE Innovative Smart Grid Technologies—Asia (ISGT ASIA), Kuala Lumpur, Malaysia, 20–23 May 2014; pp. 338–343.

- Panda, M.; Nayak, Y.K. Impact analysis of renewable energy Distributed Generation in deregulated electricity markets: A context of Transmission Congestion Problem. Energy 2022, 254, 124403.

- Kottath, R.; Singh, P. Influencer buddy optimization: Algorithm and its application to electricity load and price forecasting problem. Energy 2023, 263, 125641.

- Xing, Z.; Zhu, J.; Zhang, Z.; Qin, Y.; Jia, L. Energy consumption optimization of tramway operation based on improved PSO algorithm. Energy 2022, 258, 124848.

- Montazeri, Z.; Niknam, T. Optimal utilization of electrical energy from power plants based on final energy consumption using gravitational search algorithm. Electr. Eng. Electromechanics 2018, 4, 70–73.

- Song, Y.H.; Xuan, Q.Y. Combined heat and power economic dispatch using genetic algorithm based penalty function method. Electr. Mach. Power Syst. 1998, 26, 363–372.

- Premkumar, M.; Sowmya, R.; Jangir, P.; Nisar, K.S.; Aldhaifallah, M. A new metaheuristic optimization algorithms for brushless direct current wheel motor design problem. CMC Comput. Mater. Contin. 2021, 67, 2227–2242.

- Carbas, S.; Toktas, A.; Ustun, D. Nature-Inspired Metaheuristic Algorithms for Engineering Optimization Applications; Springer: New York, NY, USA, 2021.

- Yang, X.-S. Optimization and metaheuristic algorithms in engineering. In Metaheuristics in Water, Geotechnical and Transport Engineering; Elsevier: Amsterdam, The Netherlands, 2013; pp. 1–23.

- Kennedy, J.; Eberhart, R. Particle swarm optimization. In Proceedings of the ICNN’95—International Conference on Neural Networks, Perth, WA, Australia, 27 November–1 December 1995; Volume 4, pp. 1942–1948.

- Dorigo, M.; Maniezzo, V.; Colorni, A. Ant system: Optimization by a colony of cooperating agents. IEEE Trans. Syst. Man Cybern. Part B 1996, 26, 29–41.

- Karaboga, D.; Basturk, B. A powerful and efficient algorithm for numerical function optimization: Artificial bee colony (ABC) algorithm. J. Glob. Optim. 2007, 39, 459–471.

- Gandomi, A.H.; Alavi, A.H. Krill herd: A new bio-inspired optimization algorithm. Commun. Nonlinear Sci. Numer. Simul. 2012, 17, 4831–4845.

- Mirjalili, S.; Mirjalili, S.M.; Lewis, A. Grey wolf optimizer. Adv. Eng. Softw. 2014, 69, 46–61.

- Chen, Z.; Francis, A.; Li, S.; Liao, B.; Xiao, D.; Ha, T.T.; Li, J.; Ding, L.; Cao, X. Egret Swarm Optimization Algorithm: An Evolutionary Computation Approach for Model Free Optimization. Biomimetics 2022, 7, 144.

- Khan, A.H.; Cao, X.; Xu, B.; Li, S. Beetle antennae search: Using biomimetic foraging behaviour of beetles to fool a well-trained neuro-intelligent system. Biomimetics 2022, 7, 84.

- Dehghani, M.; Trojovský, P. Serval Optimization Algorithm: A New Bio-Inspired Approach for Solving Optimization Problems. Biomimetics 2022, 7, 204.

- Trojovský, P.; Dehghani, M. Subtraction-Average-Based Optimizer: A New Swarm-Inspired Metaheuristic Algorithm for Solving Optimization Problems. Biomimetics 2023, 8, 149.

- Faramarzi, A.; Heidarinejad, M.; Mirjalili, S.; Gandomi, A.H. Marine predators algorithm: A nature-inspired metaheuristic. Expert Syst. Appl. 2020, 152, 113377.

- Dehghani, M.; Montazeri, Z.; Trojovská, E.; Trojovský, P. Coati Optimization Algorithm: A new bio-inspired metaheuristic algorithm for solving optimization problems. Knowl. Based Syst. 2023, 259, 110011.

- Kaur, S.; Awasthi, L.K.; Sangal, A.L.; Dhiman, G. Tunicate swarm algorithm: A new bio-inspired based metaheuristic paradigm for global optimization. Eng. Appl. Artif. Intell. 2020, 90, 103541.

- Kaveh, M.; Mesgari, M.S.; Saeidian, B. Orchard Algorithm (OA): A new meta-heuristic algorithm for solving discrete and continuous optimization problems. Math. Comput. Simul. 2023, 208, 95–135.

- Cuevas, E.; Cienfuegos, M.; Zaldívar, D.; Pérez-Cisneros, M. A swarm optimization algorithm inspired in the behavior of the social-spider. Expert Syst. Appl. 2013, 40, 6374–6384.

- Dhiman, G.; Kumar, V. Emperor penguin optimizer: A bio-inspired algorithm for engineering problems. Knowl. Based Syst. 2018, 159, 20–50.

- Yazdani, M.; Jolai, F. Lion Optimization Algorithm (LOA): A nature-inspired metaheuristic algorithm. J. Comput. Des. Eng. 2016, 3, 24–36.

- Yang, X.S.; Deb, S. Engineering optimisation by cuckoo search. Int. J. Math. Model. Numer. Optim. 2010, 1, 330–343.

- Dehghani, M.; Trojovský, P.; Malik, O.P. Green Anaconda Optimization: A New Bio-Inspired Metaheuristic Algorithm for Solving Optimization Problems. Biomimetics 2023, 8, 121.

- Doumari, S.A.; Givi, H.; Dehghani, M.; Montazeri, Z.; Leiva, V.; Guerrero, J.M. A new two-stage algorithm for solving optimization problems. Entropy 2021, 23, 491.

- Zhao, W.; Zhang, Z.; Wang, L. Manta ray foraging optimization: An effective bio-inspired optimizer for engineering applications. Eng. Appl. Artif. Intell. 2020, 87, 103300.

- Abdollahzadeh, B.; Gharehchopogh, F.S.; Khodadadi, N.; Mirjalili, S. Mountain Gazelle Optimizer: A new Nature-inspired Metaheuristic Algorithm for Global Optimization Problems. Adv. Eng. Softw. 2022, 174, 103282.

- Shen, C.; Zhang, K. Two-stage improved Grey Wolf optimization algorithm for feature selection on high-dimensional classification. Complex Intell. Syst. 2022, 8, 2769–2789.

- Goldberg, D.E.; Holland, J.H. Genetic algorithms and machine learning. Mach. Learn. 1988, 3, 95–99.

- Beyer, H.G.; Schwefel, H.P. Evolution strategies—A comprehensive introduction. Nat. Comput. 2002, 1, 3–52.

- Simon, D. Biogeography-based optimization. IEEE Trans. Evol. Comput. 2008, 12, 702–713.

- Banzhaf, W.; Nordin, P.; Keller, R.E.; Francone, F.D. Genetic Programming: An Introduction; Morgan Kaufmann Publishers: San Francisco, CA, USA, 1998; Volume 1.

- Storn, R.; Price, K. Differential evolution—A simple and efficient heuristic for global optimization over continuous spaces. J. Glob. Optim. 1997, 11, 341–359.

- Rashedi, E.; Nezamabadi-Pour, H.; Saryazdi, S. GSA: A gravitational search algorithm. Inf. Sci. 2009, 179, 2232–2248.

- Ghasemi, M.; Davoudkhani, I.F.; Akbari, E.; Rahimnejad, A.; Ghavidel, S.; Li, L. A novel and effective optimization algorithm for global optimization and its engineering applications: Turbulent Flow of Water-based Optimization (TFWO). Eng. Appl. Artif. Intell. 2020, 92, 103666.

- Kaveh, A.; Dadras, A. A novel meta-heuristic optimization algorithm: Thermal exchange optimization. Adv. Eng. Softw. 2017, 110, 69–84.

- Shah-Hosseini, H. Principal components analysis by the galaxy-based search algorithm: A novel metaheuristic for continuous optimisation. Int. J. Comput. Sci. Eng. 2011, 6, 132–140.

- Hatamlou, A. Black hole: A new heuristic optimization approach for data clustering. Inf. Sci. 2013, 222, 175–184.

- Kaveh, A.; Khayatazad, M. A new meta-heuristic method: Ray optimization. Comput. Struct. 2012, 112–113, 283–294.

- Erol, O.K.; Eksin, I. A new optimization method: Big Bang–Big Crunch. Adv. Eng. Softw. 2006, 37, 106–111.

- Du, H.; Wu, X.; Zhuang, J. Small-world optimization algorithm for function optimization. In Advances in Natural Computation; Jiao, L., Wang, L., Gao, X., Liu, J., Wu, F., Eds.; Springer: Berlin/Heidelberg, Germany, 2006; pp. 264–273.

- Tayarani-N, M.H.; Akbarzadeh-T, M.R. Magnetic optimization algorithms a new synthesis. In Proceedings of the Congress on Evolutionary Computation (IEEE World Congress on Computational Intelligence), Hong Kong, China, 1–6 June 2008; pp. 2659–2664.

- Alatas, B. ACROA: Artificial chemical reaction optimization algorithm for global optimization. Expert Syst. Appl. 2011, 38, 13170–13180.

- Kashan, A.H. League Championship Algorithm (LCA): An algorithm for global optimization inspired by sport championships. Appl. Soft Comput. 2014, 16, 171–200.

- Dehghani, M.; Mardaneh, M.; Guerrero, J.M.; Malik, O.; Kumar, V. Football game based optimization: An application to solve energy commitment problem. Int. J. Intell. Eng. Syst. 2020, 13, 514–523.

- Moghdani, R.; Salimifard, K. Volleyball Premier League Algorithm. Appl. Soft Comput. 2018, 64, 161–185.

- Subramaniyan, S.; Ramiah, J. Improved football game optimization for state estimation and power quality enhancement. Comput. Electr. Eng. 2020, 81, 106547.

- Ma, B.; Hu, Y.; Lu, P.; Liu, Y. Running city game optimizer: A game-based metaheuristic optimization algorithm for global optimization. J. Comput. Des. Eng. 2023, 10, 65–107.

- Xu, S.; Chen, H. Nash game based efficient global optimization for large-scale design problems. J. Glob. Optim. 2018, 71, 361–381.

- Srilakshmi, K.; Babu, P.R.; Venkatesan, Y.; Palanivelu, A. Soccer league optimization for load flow analysis of power systems. Int. J. Numer. Model. Electron. Netw. Devices Fields 2021, 35, e2965.

- Rao, R.V.; Savsani, V.J.; Vakharia, D. Teaching–learning-based optimization: A novel method for constrained mechanical design optimization problems. Comput. Aided Des. 2011, 43, 303–315.

- Dehghani, M.; Mardaneh, M.; Guerrero, J.M.; Malik, O.P.; Ramirez-Mendoza, R.A.; Matas, J.; Vasquez, J.C.; Parra-Arroyo, L. A new “Doctor and Patient” optimization algorithm: An application to energy commitment problem. Appl. Sci. 2020, 10, 5791.

- Trojovský, P.; Dehghani, M. A new optimization algorithm based on mimicking the voting process for leader selection. PeerJ Comput. Sci. 2022, 8, e976.

- Dehghani, M.; Trojovský, P. Teamwork Optimization Algorithm: A New Optimization Approach for Function Minimization/Maximization. Sensors 2021, 21, 4567.

- Dehghani, M.; Trojovská, E.; Trojovský, P. A new human-based metaheuristic algorithm for solving optimization problems on the base of simulation of driving training process. Sci. Rep. 2022, 12, 9924.

- Zeidabadi, F.-A.; Dehghani, M.; Trojovský, P.; Hubálovský, Š.; Leiva, V.; Dhiman, G. Archery Algorithm: A Novel Stochastic Optimization Algorithm for Solving Optimization Problems. Comput. Mater. Contin. 2022, 72, 399–416.

- Dehghani, M.; Montazeri, Z.; Dehghani, A.; Malik, O.P. GO: Group optimization. Gazi Univ. J. Sci. 2020, 33, 381–392.

- Dehghani, M.; Mardaneh, M.; Malik, O. FOA: ‘Following’ Optimization Algorithm for solving Power engineering optimization problems. J. Oper. Autom. Power Eng. 2020, 8, 57–64.

- Wolpert, D.H.; Macready, W.G. No free lunch theorems for optimization. IEEE Trans. Evol. Comput. 1997, 1, 67–82.

- Awad, N.; Ali, M.; Liang, J.; Qu, B.; Suganthan, P.N. Problem Definitions and Evaluation Criteria for the CEC 2017 Special Session and Competition on Single Objective Real-Parameter Numerical Optimization; Technical Report; Nanyang Technological University: Singapore, 2016.

- Wilcoxon, F. Individual comparisons by ranking methods. In Breakthroughs in Statistics; Springer: New York, NY, USA, 1992; pp. 196–202.

- Das, S.; Suganthan, P.N. Problem Definitions and Evaluation Criteria for CEC 2011 Competition on Testing Evolutionary Algorithms on Real World Optimization Problems. Technical Reports. 2010. Available online: al-roomi.org/multimedia/CEC_Database/CEC2011/CEC2011_TechnicalReport.pdf (accessed on 4 June 2023).

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).