Abstract

The paper deals with a finite-source queueing system that serves one class of customers and consists of heterogeneous servers with unequal service intensities and one common queue. The main model assumes non-preemptive service, i.e., a customer cannot change servers during its service time. The optimal allocation problem is formulated as a Markov decision problem. We show numerically that the optimal policy minimizing the long-run average number of customers in the system has a threshold structure. We derive matrix expressions for the performance measures of the system and compare the main model with alternative simplified queueing systems, which are analysed for an arbitrary number of servers. By calculating the mean number of customers in the system, we observe that the preemptive heterogeneous model operating under a threshold policy is a good approximation of the main model. Moreover, the preemptive and non-preemptive queueing models operating under the fastest server first policy yield lower and upper bounds, respectively, for this mean value.

1. Introduction

Unlike ordinary Markovian queues with an infinite source of customers, finite-source (or finite-population) queueing systems do not have a Poisson arrival stream; instead, they have a finite source of N potential customers. In such systems a customer is either inside the system, which in our case consists of one common queue with capacity N and K heterogeneous servers, or outside the system in the so-called arriving state. Each customer outside the system is assumed to arrive after an exponentially distributed time. After receiving service, a customer returns to the arriving area. Much of the attention in the study of finite-source queueing systems has been paid to the machine repairman problem, see e.g., [1,2]. The customers outside the queueing system can be interpreted as unreliable machines with independent, exponentially distributed lifetimes. The queueing system then represents the repair facility where the failed machines are repaired. Such systems are also used in various dispatching problems and are appropriate queueing models for telephone registration systems, call centers, Ethernet systems, local-area networks, mobile communications, magnetic disk memory systems and so on.

The primary objective of this paper is to analyze the optimal control and to evaluate performance metrics under a fixed control policy for a non-preemptive finite-source queueing system with one class of customers and heterogeneous servers. In such a system, a customer receiving service at a slower server cannot switch to a faster server that becomes available during its service. An analytical performance analysis of this system is limited, first, by the need for a known allocation mechanism between the servers, i.e., a control policy, and, second, by the dimensionality of the corresponding Markov process, which grows with the number of servers. To calculate the optimal allocation policy that minimizes the long-run average number of customers in the system, we formulate a Markov decision process (MDP) and apply the policy-iteration algorithm. This algorithm yields not only the optimal allocation policy but also the mean number of customers in the system operating under that policy. Numerical experiments confirm our expectation that the optimal policy is of threshold type, as in models with an infinite source [3]. According to this policy, the fastest server must be activated whenever there is a customer in the system, while the slower servers are used only if the number of customers in the queue reaches some prespecified threshold level. The model of the non-preemptive queue operating under the optimal threshold policy (OTP) will be referred to in the paper as the OTP-model.

The calculation of other performance characteristics for a given control policy, however, remains an open task. Furthermore, despite its advantages, the policy-iteration algorithm is limited by the dimensionality of the random process when the number of servers is arbitrary. Under a threshold control policy and a suitable ordering of the states, the corresponding Markov chain is a quasi-birth-and-death (QBD) process with a block tridiagonal infinitesimal matrix whose blocks depend on the threshold values, as shown in [4] for the infinite-population system. In this case matrix-analytic solution methods can be applied, but only for a limited number of servers. This led us to discuss, in addition, some simplified variants of the main model: the non-preemptive queueing system operating under the Fastest Server First (FSF) policy, which assigns a customer to the fastest idle server in each state, and the preemptive queueing system (PS), where service at a slower server is interrupted if a faster server becomes idle during the service time. The PS-system operates according to a threshold policy: the slower servers are activated or deactivated when the number of customers in the queue respectively exceeds or falls below a certain threshold level. Although these systems are simpler than the main model and have a low-dimensional state space, there are very few publications on such specific systems, especially with analytical results.

A description of standard finite-source models with classical results, motivating examples and a literature overview can be found in [5]. In [6] the author obtained the product-form solution for the stationary state distribution of the finite-population queueing model with queue-dependent servers. A non-preemptive finite-population queueing system with heterogeneous classes of customers and a single server was studied in [7]. The problem of throughput maximization in a finite-source system with parallel queues was analyzed in [8], where some structural properties of the optimal control policy were proved. Heterogeneous multi-server finite-source queues with the FSF policy and retrials were studied in [9], where numerical results were obtained with the help of the MOSEL tool. The model with machines having non-identical exponential service times was analysed in [10], where repair policies minimizing the mean processing cost were considered. As for finite-source systems operating under a threshold policy, we know only of papers dedicated to single-server systems with a unit threshold N-policy, see e.g., [11]. Here the threshold policy states that the server in the repair facility must be switched on only when the number of failed machines reaches a predefined threshold level N. In [12], the authors generalized the model to the case of two heterogeneous unreliable servers operating under policies and . Although there is a considerable body of results on finite-source queues, controlled systems with heterogeneous servers operating without preemption have not been investigated before. Therefore, the models presented in this paper and the corresponding results of the system analysis differ from those previously discussed.

The main contributions of this paper are as follows. The structural (threshold) properties of the optimal control policy are established numerically. For the main model we derive matrix expressions that are used to calculate various performance measures, such as the mean number of waiting customers, the mean number of busy servers and the mean length of a busy period. The matrix-analytic solution for the stationary state distribution and the performance measures is obtained for the FSF-model; here we use a recursive definition of some blocks of the infinitesimal matrix. For the PS-models we obtain analytical product-form results for an arbitrary number of servers. Moreover, we show that in certain operation modes the performance characteristics of these systems are the same as, or very close to, those of the main system functioning under the optimal policy. Thus, under certain conditions, these simplified models can be used to calculate upper and lower bounds for some performance characteristics and also as approximating models. We also develop a first-step analysis to study the mean number of customers served in the system or by the kth server in a busy period and the distribution of the maximum queue length observed during this period.

The rest of the paper is organized as follows. In Section 2, we describe the Markov decision process of the main model and show that the system has a threshold-based optimal allocation policy. This section also develops the computational analysis for the mean values of the performance measures, including those characterizing the behaviour of the system in a busy period. The FSF-model is presented and analysed in Section 3. Section 4 is devoted to the PS-model. A comparison of the proposed models and illustrative examples are given in Section 5.

The following notation is used throughout this paper: , and stand, respectively, for the unit vector of dimension n, for the basis vector of dimension n in with or depending on the context, and for the identity matrix of dimension n. If it is not necessary to specify a vector dimension, we omit the corresponding argument. For example, denotes a column unit vector of an appropriate dimension. The notation is used for the transpose. The notation ⊗ stands for the Kronecker product of two matrices, and for the indicator function, where if the condition A holds, and 0 otherwise. The notation is used for the cardinality of a finite set A.

2. OTP-Model

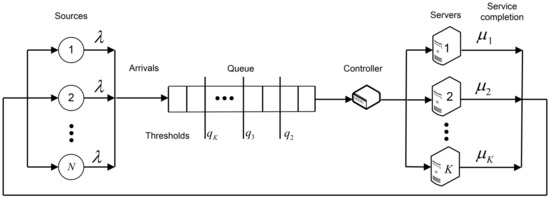

Here we discuss the main model, a non-preemptive finite-source controlled queueing system of the type illustrated in Figure 1. The system has K heterogeneous servers with different rates and N customers in the source. It operates under the optimal allocation policy, which minimizes the mean number of customers in the system. It will be shown that this policy is defined through a sequence of threshold levels for the queue length, which prescribe the activation of the slower servers. The analysed system can be treated as a model of the machine-repairman problem, where N unreliable machines in a working area, with exponentially distributed lifetimes of equal rates, must be repaired by K heterogeneous repair stations. The machines fail independently of each other, and the stream of failed machines can be treated as the arrival stream of customers to the queueing system. Hereafter, a customer is a failed machine that enters the repair facility and receives repair service there. After repair, the machine is as good as new and returns to the working area. The aim is to dynamically allocate the customers to the servers so as to minimize the long-run average number of customers in the system, and to calculate the corresponding mean performance measures.

Figure 1.

Scheme of the finite-source queueing system.

2.1. MDP Formulation

We formulate the optimal allocation problem in this machine-repairman system as a Markov decision process (MDP) as follows. The behaviour of the system is described by a multi-dimensional continuous-time Markov chain

where stands for the number of customers waiting in the queue at time t and specifies the state of the jth server at time t, where

State space: The set consists of dimensional row vectors,

where denotes the number of customers in the queue and – the status of the jth server in state x. The total number of states in the set is equal to .

Decision epochs: The arrival and service completion epochs in the system with waiting customers.

Action space: . To identify the groups of idle and busy servers, the following sets are defined,

With this notation, the set of admissible control actions in state can be defined as . The action means that in state x a customer must be allocated to an idle server, while means that the customer must be routed to the queue. At an arrival epoch, which occurs only if the number of customers in the system is less than N, the arriving customer joins the queue and, simultaneously, the customer at the head of the queue is routed either to an idle server or back to the queue. The same happens at a service completion epoch: the customer at the head of the queue is routed either to one of the idle servers or back to the queue. At a service completion in a system without waiting customers, no action is required.

Immediate cost: The function specifies the number of customers in a state , i.e.,

which is in fact independent of a control action a.

Transition rates: The policy-dependent infinitesimal matrix of the Markov chain (1) contains the rates of transitions from state x to state y under the control action a, defined as

with , where and stand for the shift operators applied to the vector state x in the following way,

Due to the finiteness of the state space and boundedness of the immediate cost function , a stationary average-cost optimal policy exists with a finite constant mean value for the number of customers in the system

which is independent of the initial state x. In this case the policy-iteration algorithm introduced in Algorithm 1 converges.

This algorithm consists of two main parts: policy evaluation and policy improvement. In the first part, a system of linear equations with immediate costs

is solved for the unknown real-valued dynamic-programming value function and the mean value , given the control policy f. The second part of the algorithm improves the previous policy by determining, for each system state, a control action a that minimizes the value function . The improved control action in state x is then defined as for . The algorithm thus constructs a sequence of improved control policies until it finds one that minimizes the gain .
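To make the two steps concrete, here is a minimal Python sketch of policy iteration for a small instance of the non-preemptive model. It is not the authors' Algorithm 1, whose listing is not reproduced here, and it rests on several assumptions: states are tuples (q, d_1, ..., d_K) with the service rates `mu` sorted from fastest to slowest, each outside customer arrives at rate `lam` (so the aggregate rate is (N - n) * lam with n customers present), a routing decision is treated as instantaneous and the policy is applied again in the resulting state, and a customer is never kept waiting while all servers are idle. All function names are hypothetical. Policy evaluation fixes the value of the empty state at zero; policy improvement compares, for every decision state, the one-step test quantity of keeping the customer in the queue with the values of the states reached by routing it.

```python
from itertools import product

def gauss_solve(A, b):
    """Solve A z = b by Gaussian elimination with partial pivoting (small dense systems)."""
    n = len(A)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    z = [0.0] * n
    for r in range(n - 1, -1, -1):
        z[r] = (M[r][n] - sum(M[r][c] * z[c] for c in range(r + 1, n))) / M[r][r]
    return z

def actions(x):
    """0 = keep the head-of-queue customer waiting, j = send it to idle server j.
    Keeping is not allowed when all servers are idle (assumption)."""
    idle = [j for j in range(1, len(x)) if x[j] == 0]
    if x[0] == 0 or not idle:
        return [0]
    return idle if len(idle) == len(x) - 1 else [0] + idle

def route(x, a):
    y = list(x)
    y[0], y[a] = y[0] - 1, 1
    return tuple(y)

def events(x, N, lam, mu):
    """(rate, next state) pairs: one arrival stream and one completion per busy server."""
    out = [((N - sum(x)) * lam, (x[0] + 1,) + x[1:])] if sum(x) < N else []
    for j in range(1, len(x)):
        if x[j]:
            out.append((mu[j - 1], x[:j] + (0,) + x[j + 1:]))
    return out

def evaluate(policy, states, idx, N, lam, mu):
    """Solve for (g, v) with v(empty) = 0; states with a routing action are left instantly."""
    S = len(states)
    A, b = [[0.0] * S for _ in range(S)], [0.0] * S
    for x in states:
        i, a = idx[x], policy.get(x, 0)
        if a:  # vanishing state: v(x) = v(route(x, a))
            A[i][i] += 1.0
            A[i][idx[route(x, a)]] -= 1.0
            continue
        A[i][0], b[i] = -1.0, -float(sum(x))  # column 0 holds g since v(empty) = 0
        for rate, y in events(x, N, lam, mu):
            if idx[y]:
                A[i][idx[y]] += rate
            if i:
                A[i][i] -= rate
    z = gauss_solve(A, b)
    return z[0], [0.0] + z[1:]

def lookahead(x, a, g, v, idx, N, lam, mu):
    """One-step test quantity of action a in decision state x."""
    if a:
        return v[idx[route(x, a)]]
    ev = events(x, N, lam, mu)  # non-empty: some server is busy whenever keeping is allowed
    total = sum(r for r, _ in ev)
    return (sum(x) - g + sum(r * v[idx[y]] for r, y in ev)) / total

def policy_iteration(N, K, lam, mu, max_iter=50):
    """Returns (gains of all evaluated policies, final policy)."""
    states = [(q,) + d for q in range(N + 1)
              for d in product((0, 1), repeat=K) if q + sum(d) <= N]
    idx = {x: i for i, x in enumerate(states)}  # states[0] is the empty state
    # start from Fastest Server First: the lowest-index (fastest) idle server
    policy = {x: min(a for a in actions(x) if a) for x in states if actions(x) != [0]}
    gains = []
    for _ in range(max_iter):
        g, v = evaluate(policy, states, idx, N, lam, mu)
        gains.append(g)
        new = {}
        for x, old in policy.items():
            score = {a: lookahead(x, a, g, v, idx, N, lam, mu) for a in actions(x)}
            best = min(score, key=score.get)
            # switch only on a strict improvement so that ties cannot cause cycling
            new[x] = best if score[best] < score[old] - 1e-9 else old
        if new == policy:
            return gains, policy
        policy = new
    raise RuntimeError("policy iteration did not converge")
```

For example, `gains, policy = policy_iteration(6, 2, 0.5, [5.0, 1.0])` returns the gain of every evaluated policy, starting with FSF, together with the final routing table; the entries `policy[(q, 1, 0)]` for increasing q show whether, and from which queue length on, the slower server is used.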

In Algorithm 1 the -dimensional state space of the Markov chain (1) is converted to an equivalent one-dimensional state space using the function , where

In the one-dimensional state space, the transitions due to arrivals and service completions are then defined as
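Since the mapping function (4) is not reproduced above, the following Python sketch uses an assumed ordering (queue length first, then the server-status vector) to flatten the states into indices and to tabulate the one-dimensional arrival and completion transitions. It also checks a closed-form state count, Σ_m C(K, m)(N - m + 1), under the assumption that every vector with q + |d| ≤ N is admissible. The names `enumerate_states`, `state_count` and `one_dimensional_transitions` are hypothetical, and the index order may differ from the paper's.

```python
from itertools import product
from math import comb

def enumerate_states(N, K):
    """Vectors (q, d_1, ..., d_K), d_j in {0, 1}, q + sum(d) <= N,
    ordered by queue length q and then by the server-status vector d."""
    return [(q,) + d for q in range(N + 1)
            for d in product((0, 1), repeat=K) if q + sum(d) <= N]

def state_count(N, K):
    """Closed-form count: choose the m busy servers, then q ranges over 0..N-m."""
    return sum(comb(K, m) * max(0, N - m + 1) for m in range(K + 1))

def one_dimensional_transitions(N, K):
    """arrival[i] and completion[(i, j)] give the target index of an arrival
    in state i and of a service completion at server j in state i."""
    states = enumerate_states(N, K)
    index = {x: i for i, x in enumerate(states)}
    arrival, completion = {}, {}
    for x, i in index.items():
        if sum(x) < N:  # sum(x) = q + number of busy servers
            arrival[i] = index[(x[0] + 1,) + x[1:]]
        for j in range(1, K + 1):
            if x[j] == 1:
                y = list(x)
                y[j] = 0
                completion[(i, j)] = index[tuple(y)]
    return states, arrival, completion
```

With N = 5 and K = 3, for instance, both the enumeration and the closed form give 36 states.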

For more details about derivation of the optimality equation for heterogeneous queueing systems the interested reader is referred to relevant publications, e.g., [3].

Algorithm 1. Policy-iteration algorithm.

Numerical analysis confirms our expectation that the optimal control policy in heterogeneous systems with a finite number of customers also belongs to the class of threshold policies, as in the infinite-population case. A theoretical justification of this statement remains difficult. For this purpose, one needs to prove that the dynamic-programming operator B, defined for our queueing model as

where and are the event operators for a new arrival and for a service completion at server ,

preserves the monotonicity properties of the increments of the value function v:

Proving inequality (7) is difficult, primarily because of the form of the operator B in (5): the term describing arriving customers has a coefficient that depends on the system state x. When the terms in inequality (7) are brought to a common denominator by introducing fictitious transitions, some of the resulting terms cannot be proved to be negative. We hope to overcome these difficulties in a future paper; to date, our statement about the threshold structure of the optimal control policy f is based exclusively on the performed numerical experiments. The following example illustrates this.

Example 1.

Consider the system with , and . The service rates take the following values: . Table 1 lists the optimal control actions for selected system states x:

Table 1.

The optimal control actions.

Threshold levels , , are evaluated by comparing the optimal actions and for , , . In this example the optimal policy f is defined through the sequence of threshold levels and .
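Reading threshold levels off an action table can be automated. The Python sketch below assumes, hypothetically, that a policy is stored as a dictionary from states (q, d_1, ..., d_K) to actions (0 = keep in the queue, j = route to server j), and takes the threshold of server j to be the smallest queue length at which the head-of-queue customer is routed to server j while the faster servers 1, ..., j - 1 are busy and servers j, ..., K are idle. This probing rule is an illustration of the comparison described above, not the authors' exact procedure; `extract_thresholds` is a hypothetical name.

```python
def extract_thresholds(policy, N, K):
    """Return [q_1, ..., q_K]; q_j is None if server j is never activated in the probed states."""
    thresholds = [1]  # assumption: the fastest server is used whenever a customer is present
    for j in range(2, K + 1):
        faster_busy = (1,) * (j - 1) + (0,) * (K - j + 1)
        # admissible queue lengths satisfy q + (j - 1) <= N
        q_star = next((q for q in range(1, N - j + 2)
                       if policy.get((q,) + faster_busy) == j), None)
        thresholds.append(q_star)
    return thresholds
```

A synthetic policy that activates server 2 from queue length 3 and server 3 from queue length 5 yields `[1, 3, 5]`.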

2.2. Evaluation of System Performance Measures

We now turn to the calculation of the system performance measures for a given policy f. The state probabilities and performance characteristics defined here refer to a particular fixed control policy f, which is indicated by the corresponding superscript. The states x of the set with are ordered according to the number of busy servers, while the states for are ordered with respect to the queue length, so that the infinitesimal matrix has a block tridiagonal structure for the fixed policy f. First we define the performance characteristics:

- The probability that the kth server is busy, ;

- The mean number of busy servers, ;

- The mean number of customers in the queue, .

- The mean number of customers in the system, .

The following vectors of dimension comprise the policy-dependent values and policy-independent values ,

where the first elements of the vectors are respectively and . Denote by one of the performance characteristics , , and .

Proposition 1.

The performance measure satisfies the relation

where the vector is a solution of the system

The matrix is obtained from by removing the first column and the first row, and

Proof.

We multiply both sides of equality (9) by the row vector of the stationary state probabilities ,

where for the corresponding function is obviously equal to the performance measure . The following sequence of relations

validates the statement. □
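The proof shows that each of these measures is the long-run average of a state cost, which can be computed by fixing one entry of the relative value vector and solving a Poisson-type linear system. The Python sketch below does this for an arbitrary finite generator `Q` and cost vector `c`, under the assumption that (9) has the form c + Qv = m·e with v fixed to zero in the first state; it illustrates the argument and is not a transcription of Proposition 1. The helpers `long_run_average` and `repairman_generator` are hypothetical, and the test chain is the single-server machine-repairman queue, chosen only because its stationary distribution, π_n proportional to N!/(N - n)! (λ/μ)^n, is known in closed form.

```python
def gauss_solve(A, b):
    """Solve A z = b by Gaussian elimination with partial pivoting (small dense systems)."""
    n = len(A)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    z = [0.0] * n
    for r in range(n - 1, -1, -1):
        z[r] = (M[r][n] - sum(M[r][c] * z[c] for c in range(r + 1, n))) / M[r][r]
    return z

def long_run_average(Q, c):
    """Solve c + Q v = m * 1 with v[0] = 0; multiplying by pi gives m = sum_x pi(x) c(x)."""
    S = len(Q)
    # unknowns: m in slot 0 (free, because v[0] = 0 is fixed) and v[1], ..., v[S-1]
    A = [[-1.0] + [float(Q[x][y]) for y in range(1, S)] for x in range(S)]
    z = gauss_solve(A, [-float(c[x]) for x in range(S)])
    return z[0], [0.0] + z[1:]

def repairman_generator(N, lam, mu):
    """Generator of the single-server finite-source queue; state n = customers in system."""
    Q = [[0.0] * (N + 1) for _ in range(N + 1)]
    for n in range(N + 1):
        if n < N:
            Q[n][n + 1] = (N - n) * lam  # each of the N - n outside customers arrives at rate lam
        if n > 0:
            Q[n][n - 1] = mu
        Q[n][n] = -sum(Q[n])
    return Q
```

With N = 3, λ = 1 and μ = 2, the cost vector [0, 1, 2, 3] gives the mean number of customers in the system, 6.75/4.75 ≈ 1.421, and [0, 1, 1, 1] gives the probability that the server is busy, 3.75/4.75. For the multi-server model, `Q` would be the policy-dependent generator and `c` one of the policy-independent cost vectors defined above.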

The following measures characterize the behaviour of the system in a busy period, defined as the interval that starts when an arriving customer enters the empty system in state and ends when the system visits again after a service completion.

- The mean length of a busy period, ;

- The mean number of customers served in a busy period by the kth server, ;

- The total mean number of customers served in a busy period, .

In the following proposition we describe a general way to calculate these characteristics for the fixed control policy f. Denote by one of the performance characteristics , and .

Proposition 2.

The performance measure satisfies the relation

where the vector is a solution of the system

The matrix is obtained from by removing the first column and the first row, and

Proof.

Denote by , , the Laplace-Stieltjes transform (LST) of the probability density function (PDF) of the first passage time to state , given that the initial state is and the control policy is f, and by the corresponding first moment. By first-step analysis, the LSTs satisfy the system

Taking into account that , we obtain from (13) the system for the conditional moments

By expressing relations (15) in matrix form and taking into account that we obtain the expressions (11) for .

Denote now by , , the probability generating function (PGF) of the distribution of the number of service completions at server k up to the end of the busy period, given that the initial state is . By the law of total probability, we get the following relations for the function ,

The first term on the right-hand side of (16) represents the transition to state u accompanied by the counted event, i.e., a service completion at server k. The second term stands for the other possible transitions. The system (16) can be rewritten in terms of the PGF in the following form,

Using the property , the expressions (17) can be transformed into a system for the corresponding first moments,

For the model under study the system (18) is of the form

Finally, one more performance measure is of interest in this section, namely, the distribution of the maximal queue length in a busy period under the given control policy f. Denote by the maximum number of customers waiting in the queue during a busy period. For each fixed value , the event is equivalent to the event that the process starting in state , where the first server is busy, hits the empty state before visiting the subset of states

The probability will be calculated by means of absorption probabilities for states in a set of absorbing states given that the initial state is . Denote by

the column vectors of dimension , where n is fixed. Denote further by one of the performance characteristics , .

Proposition 3.

The performance measure satisfies the relation

where the vector is a solution of the system

The matrix is obtained from by removing all columns and rows starting with the , and

Proof.

Denote by the probability of absorption into the empty state starting in , where , and where, as before, is the state after an arrival to the empty state . Conditioning on the next visited state, i.e., using the first-step principle again, we obtain the following system:

As we can see, calculating the performance characteristics requires solving very similar systems of equations. Thus, the same algorithm can be used for all of them by substituting the appropriate values into the vectors and b. This versatility greatly simplifies the algorithmic analysis of complex controlled queueing systems. We expect that, for a fixed threshold control policy, the structure of the infinitesimal matrix can even be fully specified for an arbitrary number of servers, as is done in the next section for the special case of the control policy in which all thresholds are equal to 1. Thus we believe that explicit matrix expressions can be derived from the presented matrix systems for the performance characteristics. We leave this problem for future research.
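The remark that all these measures reduce to the same kind of linear system can be illustrated with one small dense solver. In the Python sketch below (hypothetical helpers), `first_step_mean` restricts the generator to the states other than the target and uses a per-state reward rate as right-hand side: 1 for elapsed time (mean busy period) or μ_k times the busy indicator of server k (mean number of completions at server k). `hit_before` computes absorption probabilities, such as the probability that the maximum queue length stays at most n, by removing the states in which the queue exceeds n. The example chain is the single-server machine-repairman queue, chosen only because the answers can be checked by hand; the rows of the multi-server generator would be used in the same way.

```python
def gauss_solve(A, b):
    """Solve A z = b by Gaussian elimination with partial pivoting (small dense systems)."""
    n = len(A)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    z = [0.0] * n
    for r in range(n - 1, -1, -1):
        z[r] = (M[r][n] - sum(M[r][c] * z[c] for c in range(r + 1, n))) / M[r][r]
    return z

def repairman_generator(N, lam, mu):
    """Generator of the single-server finite-source queue; state n = customers in system."""
    Q = [[0.0] * (N + 1) for _ in range(N + 1)]
    for n in range(N + 1):
        if n < N:
            Q[n][n + 1] = (N - n) * lam
        if n > 0:
            Q[n][n - 1] = mu
        Q[n][n] = -sum(Q[n])
    return Q

def first_step_mean(Q, target, reward, start):
    """Expected reward accumulated until `target` is hit: sum_y Q[x][y] m[y] = -reward[x]."""
    T = [x for x in range(len(Q)) if x != target]
    A = [[float(Q[x][y]) for y in T] for x in T]
    m = gauss_solve(A, [-float(reward[x]) for x in T])
    return m[T.index(start)]

def hit_before(Q, target, forbidden, start):
    """P(reach `target` before any state in `forbidden` | start)."""
    T = [x for x in range(len(Q)) if x != target and x not in forbidden]
    A = [[float(Q[x][y]) for y in T] for x in T]
    u = gauss_solve(A, [-float(Q[x][target]) for x in T])
    return u[T.index(start)]
```

For N = 3, λ = 1 and μ = 2, starting in state 1, the mean busy period is 1.25 and on average 2.5 customers are served per busy period, in agreement with the renewal-reward values (1 - π_0)/(Nλπ_0) and μ(1 - π_0)/(Nλπ_0); the probabilities that the maximum queue length stays at 0 or at most 1 are 0.5 and 0.75.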

3. FSF-Model

Here we discuss the FSF-model, which is a special case of the OTP-model with . The Markov chain operating under the FSF policy has the state space

The states in are divided into levels y in the following way,

Denote by for ; then for and for . Within each level y, , the states are ordered lexicographically, and the rank of x in level y with and can be evaluated by

where is the number of busy servers that are slower than the ith idle one. Note that this ordering of states differs from the one defined in (4) and used in the policy-iteration algorithm. With the lexicographic ordering within each level, explicit matrix expressions for the state probabilities can be obtained for an arbitrary number of servers K. Denote further by , , the matrices whose rows consist of the ordered elements of level y.
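Because the rank formula above is not reproduced, the following Python sketch ranks server-status vectors within a level under an assumed convention: vectors with the same number of busy servers are sorted in ascending lexicographic order of (d_1, ..., d_K), and the rank is computed with binomial coefficients instead of by enumeration. It also assumes, as the FSF rule suggests, that a level y ≤ K consists of all status vectors with y busy servers and an empty queue. The convention and the names `lex_rank` and `level_vectors` are illustrative and may differ from the paper's ordering.

```python
from itertools import product
from math import comb

def level_vectors(K, y):
    """All server-status vectors with y busy servers, in ascending lexicographic order."""
    return sorted(d for d in product((0, 1), repeat=K) if sum(d) == y)

def lex_rank(d):
    """0-based rank of the 0/1 vector d among vectors of the same length and number of ones."""
    K, ones_left, rank = len(d), sum(d), 0
    for i, bit in enumerate(d):
        if bit:
            # vectors sharing the prefix d[:i] but with a 0 at position i come first;
            # they place all remaining ones in the K - i - 1 later positions
            rank += comb(K - i - 1, ones_left)
            ones_left -= 1
    return rank
```

For K = 5 and y = 2, `lex_rank` reproduces the positions 0, ..., 9 of the ten vectors returned by `level_vectors(5, 2)`.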

Proposition 4.

The system under the FSF policy is described by a QBD process with a block tridiagonal infinitesimal matrix of the form

The square blocks of dimension for and 1 for , which consist of the rates of transitions within the yth level, are defined as

The blocks of dimension for and of dimension 1 for consist of the rates to move upwards from the level to y due to arrivals and are defined as

The blocks of dimension for and of dimension 1 for consist of the rates to move downwards from the level to y due to service completions and are defined recursively as

Proof.

Analysing the transitions of the Markov chain, we obtain a system of balance equations of the form

where , . Expressing Equation (30) for the sub-vectors , , and the scalars and , , by means of the defined blocks and taking into account the ordering of states (25), we get the system

Denote by the macro-vector of the stationary state probabilities, i.e.

Proposition 5.

The elements of the stationary probability macro-vector π satisfy the relations

where the matrices satisfy the recursive relations

Proof.

The probability and sub-vectors , , can be expressed from the balance equations (31) using a block forward elimination-backward substitution as

We similarly obtain an expression for ,
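Block elimination of this kind is easy to prototype. The Python sketch below uses one standard variant, linear level reduction for a level-dependent QBD: starting from the top level it computes matrices R_y with π_y = π_{y-1} R_y and then normalizes. It is not a transcription of (32)–(35). The block convention (A_y within level y, B_y from level y to y + 1, C_y from level y to y - 1, a single state in level 0) and the helper `fsf_two_server_blocks`, which builds FSF blocks for K = 2 with levels indexed by the number of customers in the system, are assumptions for illustration.

```python
def matmul(X, Y):
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]

def inv_neg(X):
    """Inverse of -X by Gauss-Jordan elimination with partial pivoting."""
    n = len(X)
    M = [[-float(v) for v in X[i]] + [float(i == j) for j in range(n)] for i in range(n)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        p = M[col][col]
        M[col] = [v / p for v in M[col]]
        for r in range(n):
            if r != col:
                f = M[r][col]
                M[r] = [a - f * b for a, b in zip(M[r], M[col])]
    return [row[n:] for row in M]

def qbd_stationary(A, B, C):
    """Stationary vector of a block-tridiagonal generator, returned level by level."""
    L = len(A) - 1
    R = [None] * (L + 1)
    R[L] = matmul(B[L - 1], inv_neg(A[L]))
    for y in range(L - 1, 0, -1):
        RC = matmul(R[y + 1], C[y + 1])  # effect of the eliminated higher levels
        S = [[A[y][i][j] + RC[i][j] for j in range(len(RC))] for i in range(len(RC))]
        R[y] = matmul(B[y - 1], inv_neg(S))
    pi = [[1.0]]  # unnormalized probability of the single state in level 0
    for y in range(1, L + 1):
        pi.append(matmul([pi[-1]], R[y])[0])
    total = sum(sum(level) for level in pi)
    return [[p / total for p in level] for level in pi]

def fsf_two_server_blocks(N, lam, mu1, mu2):
    """FSF, K = 2, N >= 2: level 1 = [(0,1,0), (0,0,1)], level y >= 2 = [(y-2,1,1)]."""
    a = lambda y: (N - y) * lam  # aggregate arrival rate with y customers present
    A = [[[-a(0)]], [[-(a(1) + mu1), 0.0], [0.0, -(a(1) + mu2)]]]
    A += [[[-(a(y) + mu1 + mu2)]] for y in range(2, N + 1)]
    B = [[[a(0), 0.0]], [[a(1)], [a(1)]]] + [[[a(y)]] for y in range(2, N)]
    # from (0,1,1): completion at server 2 -> (0,1,0), completion at server 1 -> (0,0,1)
    C = [None, [[mu1], [mu2]], [[mu2, mu1]]] + [[[mu1 + mu2]] for y in range(3, N + 1)]
    return A, B, C
```

As a sanity check, equal rates μ_1 = μ_2 collapse the model to a birth-and-death chain, and the level probabilities then match its product form.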

To calculate the performance characteristics, the expressions from the previous section can be applied to the control policy . As an alternative to the policy-iteration algorithm, the proposed matrix-analytic solution (32)–(35) can be used to obtain the matrix expressions for the performance characteristics in explicit form.

Corollary 1.

The probability that the kth server is busy and the mean number of busy servers,

The mean number of customers in the queue,

The mean number of customers in the system,

Mean length of a busy period,

Mean number of customers served in a busy period,

The mean number of customers served by the kth server in a busy period and the distribution of the maximal queue length can be evaluated using the matrix systems (11) and (20) taking into account the structure (26) of the infinitesimal matrix .

Proposition 6.

The mean number of customers served in a busy period by the kth server satisfies the relation

where

The column-vector for and the scalar for .

Proof.

The system (11) can be rewritten for the appropriate blocks in the form

The elements of are equal to if for some state x of the level . This implies the relations for . Using forward elimination-backward substitution, we get the recursive relations

where and are defined by (38). The statement follows by recursive substitution, taking into account that , since level 1 consists of K states. □

The following statement for the matrix equation (20) can be proved in a similar way taking into account the structure (26) of the infinitesimal matrix .

Proposition 7.

The probability of the maximum queue length in a busy period satisfies the relation

where

4. PS-Model

In this section we discuss a queueing system with preemption operating under a general threshold policy f defined as a sequence of threshold levels . The first server in this system is permanently available for service, while the jth slower server must be used as soon as the number of customers in the system reaches the value . This server must be removed from the system when the number of customers falls below again. Denote by the continuous-time Markov chain with state space . All rates are the same as in the model without preemption. The infinitesimal matrix is then of the form:

where and . The state transition diagram of the process is illustrated in Figure 2.

Figure 2.

The state transition diagram for the queueing system .

Proposition 8.

The steady-state probabilities of the PS-Model satisfy the relations

where , and .

Proof.

The proposition follows by solving the following equations

recursively for , where is calculated by means of the normalizing condition . □

Corollary 2.

The kth server is busy with probability,

The mean number of busy servers,

The mean number of customers in the queue,

The mean number of customers in the system .

The mean length of a busy period,

The mean number of customers served in a busy period,
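Since the PS-model is a birth-and-death process in the number of customers, Proposition 8 and Corollary 2 can be prototyped in a few lines. The Python sketch below assumes that server j (rates sorted from fastest to slowest) is active exactly when the number n of customers in the system satisfies n ≥ h_j, with h_1 = 1 and strictly increasing thresholds h_j ≥ j, and that each of the N - n customers outside arrives at rate λ. The busy-period quantities use the standard renewal-reward identities, mean busy period (1 - π_0)/(Nλπ_0) and customers served per busy period equal to throughput times cycle length; we assume, without having the missing displays to compare against, that these agree with the expressions in Corollary 2. `ps_measures` is a hypothetical name.

```python
def ps_measures(N, lam, mu, h):
    """Stationary measures of the preemptive threshold model; h[j] is the threshold of server j + 1."""
    K = len(mu)
    if not (h[0] == 1 and all(h[j] >= j + 1 for j in range(K))
            and all(h[j] < h[j + 1] for j in range(K - 1))):
        raise ValueError("thresholds must satisfy 1 = h_1 < h_2 < ... with h_j >= j")
    active = lambda n: sum(1 for j in range(K) if n >= h[j])
    rate = lambda n: sum(mu[j] for j in range(K) if n >= h[j])
    # product form: pi_n = pi_0 * prod_{i=1}^{n} (N - i + 1) * lam / rate(i)
    w = [1.0]
    for n in range(1, N + 1):
        w.append(w[-1] * (N - n + 1) * lam / rate(n))
    total = sum(w)
    pi = [x / total for x in w]
    busy = [sum(pi[n] for n in range(N + 1) if n >= h[k]) for k in range(K)]
    return {
        "pi": pi,
        "busy_prob": busy,
        "mean_busy": sum(busy),
        "mean_queue": sum((n - active(n)) * pi[n] for n in range(N + 1)),
        "mean_system": sum(n * pi[n] for n in range(N + 1)),
        "mean_busy_period": (1 - pi[0]) / (N * lam * pi[0]),
        # throughput times mean cycle length 1 / (N * lam * pi_0)
        "mean_served": sum(rate(n) * pi[n] for n in range(N + 1)) / (N * lam * pi[0]),
    }
```

With a single server (N = 3, λ = 1, μ = 2) the sketch returns a mean number in the system of 6.75/4.75, a mean busy period of 1.25 and 2.5 customers served per busy period. Passing h = [1, 2, ..., K] gives the PS1-model of Section 5.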

Next, we use the methodology employed in the previous section to derive expressions for and , taking into account that all levels now consist of a single state; hence some details are omitted in the sequel.

Proposition 9.

The mean number of customers served by the kth server in a busy period satisfies the relation

where

Proof.

Proposition 10.

The distribution function of the maximum queue length observed in a busy period satisfies the relation

where

The last result can be rewritten in explicit form as well.

Proposition 11.

The distribution function of the maximum queue length observed in a busy period is given by

where the function has the following product form,

Proof.

The function , for the given policy f satisfies the following system,

These difference equations can be rewritten as a recurrence relation for ,
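For a birth-and-death chain, such a recurrence has the familiar gambler's-ruin solution. Under the same assumptions as for the product form (server j active if and only if n ≥ h_j, strictly increasing thresholds, per-customer arrival rate λ), the queue length n - active(n) never decreases as n grows, so the maximum queue length stays at most n exactly when the chain returns to the empty state before reaching the first system size M at which the queue would exceed n. With ρ_0 = 1 and ρ_m = ∏_{i=1}^{m} μ(i)/((N - i)λ), where μ(i) is the total rate of the active servers, this probability is Σ_{m=1}^{M-1} ρ_m / Σ_{m=0}^{M-1} ρ_m. The Python sketch below implements this formula; `max_queue_cdf` is a hypothetical name, and we assume rather than verify that it coincides term by term with the product form above, although both describe the same probability.

```python
def max_queue_cdf(N, lam, mu, h, n):
    """P(maximum queue length in a busy period <= n) for the preemptive threshold model."""
    K = len(mu)
    active = lambda m: sum(1 for j in range(K) if m >= h[j])
    rate = lambda m: sum(mu[j] for j in range(K) if m >= h[j])
    # smallest system size at which the queue length m - active(m) would exceed n
    M = next((m for m in range(1, N + 1) if m - active(m) > n), None)
    if M is None:
        return 1.0  # the queue can never exceed n
    rho = [1.0]
    for m in range(1, M):
        rho.append(rho[-1] * rate(m) / ((N - m) * lam))
    # gambler's ruin: start with one customer, reach 0 before reaching M
    return sum(rho[1:]) / sum(rho)
```

For one server with N = 3, λ = 1 and μ = 2, the probabilities for n = 0, 1, 2 are 0.5, 0.75 and 1.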

5. Comparison Analysis

In this section we discuss the performance metrics computed for the following finite-source heterogeneous queueing models: the non-preemptive queueing system operating under the optimal threshold policy (OTP-model); the non-preemptive queueing system with the fastest server first policy (FSF-model); the preemptive queueing system (PS1-model), where the kth server is used when at least k customers are present in the system; and the preemptive queueing system (PS2-model) operating according to a given threshold policy. The thresholds of this policy are calculated with a heuristic formula similar to the one obtained in [13], which can be rewritten in the form

where and is the average arrival rate in the PS1-model, which is available in explicit form.

In our experiments we fix the number of servers , the source capacity and the service intensities . The rate is varied in the interval . This interval is chosen deliberately: at higher values of , the analysed functions become indistinguishable, since the corresponding queueing systems exhibit similar stochastic behaviour in the so-called heavy-traffic regime.

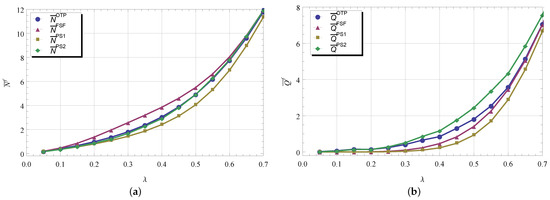

In Figure 3, we display the functions (panel (a)) and (panel (b)) for different models as varies. We observe that the functions for the and models are the natural upper and lower bounds for . This is expected, since the FSF-model is a particular case of the OTP-model, and the queue with preemption always performs better than the non-preemptive one.

Figure 3.

(a) and (b) vs. .

Differences between the functions and are barely visible. This effect is also observed for other values of the system parameters. It allows the preemptive system under a threshold policy to be used as an approximation of the original OTP-model. In contrast, the PS2-model exhibits the longest queues and the PS1-model the shortest. On average, the OTP-model also has more waiting customers than the FSF-model, which is not surprising, since in our case the optimal policy minimizes the mean number of customers in the system, not in the queue. It should also be noted that the higher the degree of heterogeneity of the servers, the greater the differences between the performance functions of the different models.

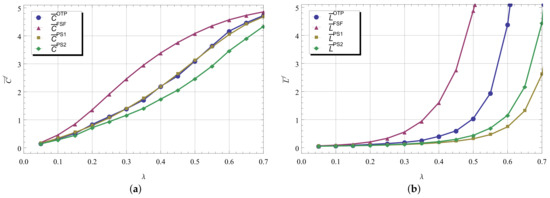

Figure 4 illustrates the influence of and of the model type on the functions (panel (a)) and (panel (b)). The mean numbers of busy servers for the OTP- and PS1-models are very close to each other. Thus, by subtracting the mean number of busy servers in the PS1-model from the mean number of customers in the PS2-model, an approximation can be obtained for the mean queue length of the OTP-model. The functions and represent the upper and lower bounds for . The longest busy period appears in the FSF-model. In this case the slower servers are occupied with higher probability and then remain busy for a long time. As expected, the preemptive PS1-model exhibits the shortest busy period.

Figure 4.

(a) and (b) vs. .

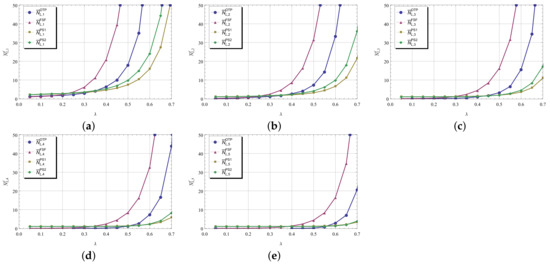

Figure 5 shows the effect of the service rate of the kth server, , on the mean number of customers served in a busy period (panels (a) to (f)). We observe that the slow servers begin to contribute to the number of customers served as increases. The functions are proportional to the rate ; they are simply shifted to the right as increases, without changing their shape. It can also be observed that the FSF policy maximizes the number of customers served in a busy period at every server. This observation agrees with the statement in [14] that the fastest available server policy stochastically maximizes the number of service completions.

Figure 5.

The mean number of customers for respectively in (a–e) vs. .

We now focus on the results obtained for the maximum queue length observed during a busy period. To study the influence of the system parameters and of the model type, we summarize the results in Table 2 for the OTP- and FSF-models and in Table 3 for the PS1- and PS2-models. In both tables we vary the rate , keeping the other parameters constant as before. The results presented in the tables correlate with the graphs of the mean length of the busy period: the longer the busy period, the more likely it is that fewer customers wait in the queue. In the FSF-model the waiting line is more likely to stay empty. As increases, the queues grow, and hence for all models the 99th percentile of the maximum queue length increases.

Table 2.

The distribution function of the maximum queue length as varies for OTP and FSF.

Table 3.

The distribution function of the maximum queue length as varies for PS1 and PS2.

We have also conducted various experiments analysing the effect of the number of servers, the source capacity, the level of heterogeneity and other parameters on the performance metrics of non-preemptive heterogeneous systems and on their possible approximations by preemptive equivalents. Due to space limitations, we omit these results. In general, the main observations made in the presented examples remain valid for other values of the system parameters.

6. Conclusions

Finite-source multi-server heterogeneous systems without preemption (service interruption) are described using multivariate Markov chains. For such systems we have found the optimal threshold policy and calculated the corresponding performance measures. Both analytical and numerical studies of such systems face constraints on the dimensionality of the problem, i.e., on the number of servers. In this paper we have also investigated whether there are simplified variants of the main model that are suitable for calculating bounds, or even for approximating the main model, without a constraint on the number of servers. We have analysed non-preemptive and preemptive queues and provided a comparative analysis of their performance characteristics.

Author Contributions

Conceptualization, D.E. and N.S.; formal analysis, investigation, methodology, software and writing, D.E., N.S. and J.S. All authors have read and agreed to the published version of the manuscript.

Funding

This research is supported by the Austro-Hungarian Cooperation (OMAA) Grant No. 106öu4, 2021. (D. Efrosinin and J. Sztrik: Mathematical model development, investigation, methodology, N. Stepanova: Numerical analysis and validation). This paper has been supported by the RUDN University Strategic Academic Leadership Program (D. Efrosinin: Formal analysis).

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

Not applicable.

Acknowledgments

The authors are very grateful to the reviewers of the paper for their comments which improved its quality.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Ke, J.; Wang, K. Cost analysis of the M/M/R machine repair problem with balking, reneging and server breakdown. J. Oper. Res. Soc. 1999, 50, 275–282.

- Stecke, K.E. Machine interference: Assignment of machines to operators. In Handbook of Industrial Engineering, 2nd ed.; John Wiley & Sons Inc.: New York, NY, USA, 1992; pp. 1460–1494.

- Efrosinin, D. Controlled Queueing Systems with Heterogeneous Servers: Dynamic Optimization and Monotonicity Properties of Optimal Control Policies in Multiserver Heterogeneous Servers; VDM Verlag: Saarbrücken, Germany, 2008.

- Efrosinin, D.V.; Rykov, V.V. On performance characteristics for queueing systems with heterogeneous servers. Autom. Remote Control 2008, 69, 61–75.

- Sztrik, J. Finite-source queueing systems and their applications. In Formal Methods in Computing; Ferenczi, M., Pataricza, A., Rónyai, L., Eds.; Akadémiai Kiadó: Budapest, Hungary, 2005; pp. 311–356.

- Jain, M. Finite source M/M/r queueing system with queue-dependent servers. Comput. Math. Appl. 2005, 50, 187–199.

- Iravani, S.M.R.; Krishnamurthy, V.; Chao, G.H. Optimal server scheduling in nonpreemptive finite-population queueing systems. Queueing Syst. 2007, 55, 95–105.

- Delasay, M.; Kolfal, B.; Ingolfsson, A. Maximizing throughput in finite-source parallel queue systems. Eur. J. Oper. Res. 2012, 217, 554–559.

- Sztrik, J.; Roszik, J. Performance Analysis of Finite-Source Retrial Queueing Systems with Heterogeneous Non-Reliable Servers and Different Service Policies; Research Report; University of Debrecen: Debrecen, Hungary, 2001.

- Chakka, R.; Mitrani, I. Heterogeneous multiprocessor systems with breakdowns. Theor. Comput. Sci. 1994, 125, 91–109.

- Jain, M.; Rakhee, M.S. N-policy for a machine repair system with spares and reneging. Appl. Math. Model. 2004, 28, 513–531.

- Jain, M.; Meena, R.K. Vacation model for Markov machine repair problem with two heterogeneous unreliable servers and threshold recovery. J. Ind. Eng. Int. 2018, 14, 143–152.

- Efrosinin, D.; Stepanova, N.; Sztrik, J.; Plank, A. Approximations in performance analysis of a controllable queueing system with heterogeneous servers. Mathematics 2020, 8, 1803.

- Righter, R. Optimal policies for scheduling repairs and allocating heterogeneous servers. J. Appl. Probab. 1996, 33, 536–547.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).