MTS-PRO2SAT: Hybrid Mutation Tabu Search Algorithm in Optimizing Probabilistic 2 Satisfiability in Discrete Hopfield Neural Network

Abstract

1. Introduction

- (a)

- To propose a modified metaheuristic algorithm, the hybrid mutation tabu search (MTS) algorithm, which integrates a mutation operator and a segment operation as neighborhood operations. With these newly constructed neighborhood operations, tabu search is incorporated into the systematic logic to minimize the cost function.

- (b)

- To optimize the retrieval phase by introducing a mutation operator. The mutation operator randomly flips the literals in unsatisfied clauses, aiming to disrupt the bias in the final state of neurons and enhance the diversity of the global minimum.

- (c)

- To propose the average similarity metric to evaluate the variability and diversity of solutions in the retrieval phase by calculating the similarity between non-repeated solutions and benchmark solutions.

- (d)

- To examine the effectiveness of the mutation tabu search algorithm through the analysis of different performance measures. The performance of the proposed approach is evaluated against that of the leading logical rule in both the learning and retrieval phases.
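The mutation operator in objective (b) can be illustrated with a minimal sketch. It assumes bipolar neuron states (+1 for true, -1 for false) and a hypothetical clause encoding in which each literal is a `(neuron_index, is_positive)` pair; neither encoding is taken from the paper, and the function names are illustrative only.

```python
import random

# Assumed encoding (not from the paper): a clause is a tuple of literals,
# a literal is (neuron_index, is_positive), and states are +1 or -1.

def literal_true(literal, state):
    """Return True if the literal is satisfied by the current neuron states."""
    index, is_positive = literal
    return (state[index] == 1) == is_positive

def unsatisfied_clauses(clauses, state):
    """List the clauses in which every literal is false."""
    return [c for c in clauses if not any(literal_true(lit, state) for lit in c)]

def mutate(clauses, state, rng):
    """Flip one randomly chosen neuron in each clause that is still unsatisfied."""
    new_state = list(state)
    for clause in clauses:
        if not any(literal_true(lit, new_state) for lit in clause):
            index, _ = rng.choice(clause)
            new_state[index] = -new_state[index]
    return new_state

clauses = [((0, True), (1, False))]        # (x0 OR NOT x1)
mutated = mutate(clauses, [-1, 1], random.Random(0))
```

Flipping a false literal always satisfies its own clause, but it may unsatisfy another clause that shares the neuron, so a sketch like this would normally be followed by a fresh fitness assessment.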

2. Motivation

2.1. Inefficient Learning Phase of DHNNs

2.2. Limited Solution Diversity in Retrieval Phase of DHNN

3. Probabilistic 2 Satisfiability (PRO2SAT)

4. PRO2SAT in Discrete Hopfield Neural Network (DHNN)

5. Objective Function of PRO2SAT in Learning Phase

6. Proposed Metaheuristics

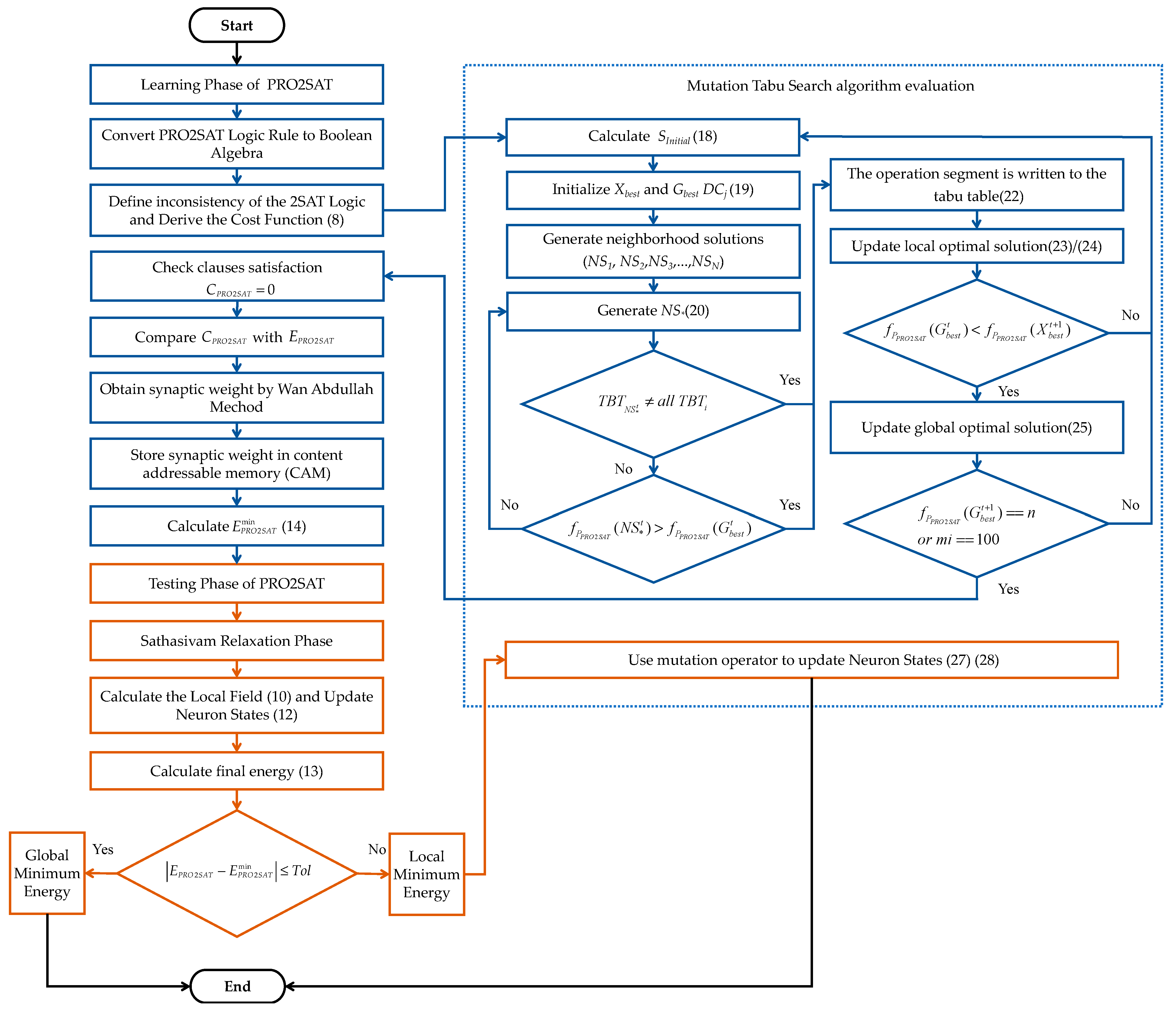

6.1. Proposed Mutation Tabu Search Algorithm (MTS)

6.1.1. Initialization

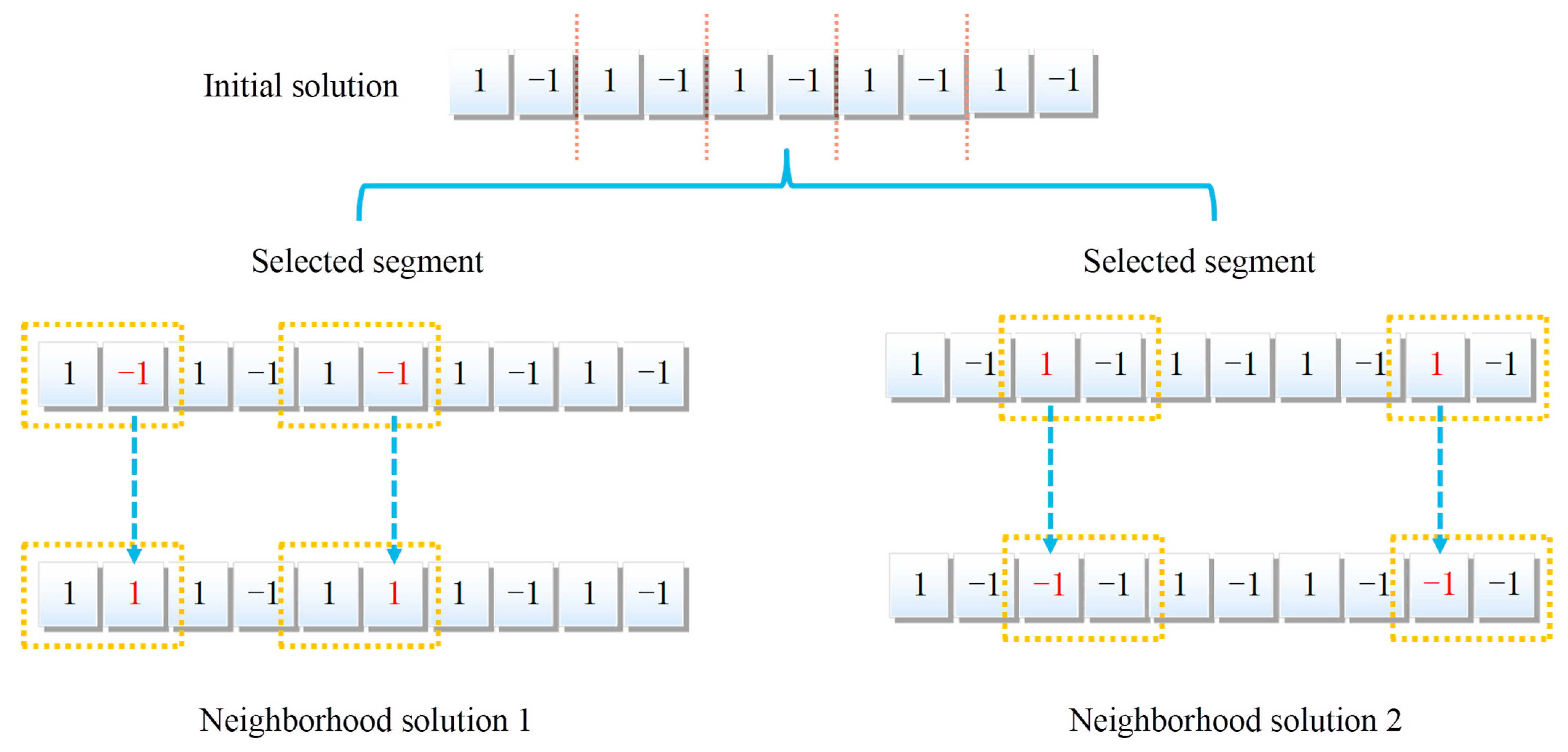

6.1.2. Generation Strategy to Neighborhood Solution

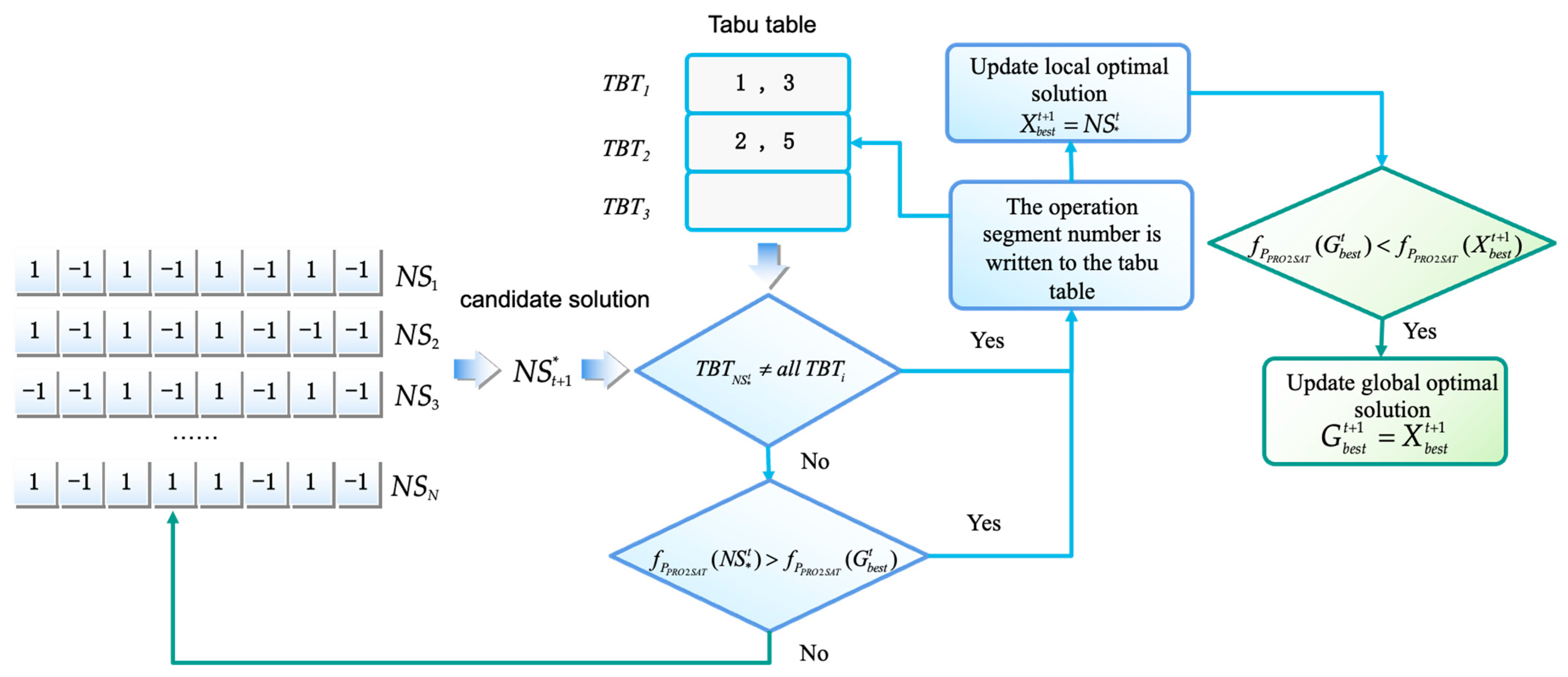

6.1.3. Generation Strategy to Candidate Solution

6.1.4. Fitness Assessment

6.1.5. Mutation

6.2. Baseline Model

- (a)

- GA [11]: The cited study integrated the strengths of the genetic algorithm and the Hopfield neural network to efficiently find solutions to the logic satisfiability problem. The genetic algorithm is a global search algorithm that usually uses binary encoding for optimization problems [26]. During solving, a candidate solution to the problem is encoded as a gene string, i.e., a chromosome, which undergoes selection, crossover, and mutation to form a new chromosome. The new chromosome is retained if it is closer to the maximum fitness than the previous one.

- (b)

- EA [12]: The election algorithm (EA) is inspired by presidential elections. It was proposed by Emami and Derakhshan [27] and combines features of evolutionary algorithms and swarm intelligence algorithms (SIA). In this algorithm [13], positive advertisement, negative advertisement, and coalition are used to implement intelligent search in a synergy mechanism. All solutions are treated as voters, from whom candidates are selected; each candidate gathers voters based on social proximity, thus forming a political party. As party leader, the candidate influences their own supporters through positive advertisement and attracts the supporters of other party leaders through negative advertisement, which expands the search space and increases the candidate's probability of being elected. The most popular candidate ultimately receives the most votes.

- (c)

- ACO [14]: Kho et al. introduced the ACO algorithm in DHNNs [14]. In that work, the ant colony algorithm is used to minimize the cost function of the corresponding logic rule in DHNNs. The pheromone density guides the search toward the optimal path, so that a zero cost function is reached without consuming additional learning iterations. The study demonstrated the potential of the ACO algorithm for optimization problems, including propositional logic.

- (d)

- EDA [28]: The estimation of distribution algorithm (EDA) is a probability-based population evolution algorithm. A new population is generated by random sampling, and the population evolves over successive iterations. The main function of EDA is to estimate and predict the distribution of the data: it infers the distribution through statistical analysis and uses it to predict future trends. The standard EDA has two important operations, namely selection and modeling of the probability distribution. The selection operation is the same as the selection strategy in GA. The probability distribution model can be that of a univariate marginal distribution algorithm (UMDA).

- (e)

- DE [29]: A novel binary differential evolution algorithm based on taper-shaped transfer functions (T-NBDE) was proposed in [29] to address the knapsack problem. Like GA, DE is an evolutionary algorithm; it is an adaptive global search algorithm first proposed by Price and Storn in the 1990s for real-valued optimization problems. DE has a simple structure, is easy to implement, and is robust and fast to converge. It also has a memory function that dynamically tracks the search state. Its main control parameters and operators are the population size and the mutation, crossover, and selection operators. Unlike GA, the mutation operator of DE randomly selects three individuals as parents to form a new individual.

- (f)

- GWO [23]: The GWO algorithm has been successfully applied to RDHNNs in the work by Ba et al. [16]. Therefore, we used GWO in the learning phase of PRO2SAT for comparative analysis. GWO is a heuristic optimization algorithm based on the behavior of grey wolf packs in nature. It simulates the social hierarchy of grey wolves and divides the individuals within the pack into four classes: head wolf (α), subordinate wolf (β), common wolf (δ), and bottom wolf (ω). Each grey wolf represents a potential solution and has a fitness value; the higher the fitness value, the better the solution. Starting from any position in the solution space, the individual with the best fitness is set as the head wolf α, the one with the second-best fitness as the subordinate wolf β, the one with the third-best fitness as the common wolf δ, and the rest are the bottom wolves ω. The head wolf guides the behavior of the pack; the subordinate wolf assists the head wolf in making decisions; the common wolf obeys the head and subordinate wolves and directs the bottom wolves in hunting the target. The bottom wolves follow the guidance of the other wolves to complete the hunt and mainly maintain the balance of intra-pack relationships.

- (g)

- PSO [30]: The PSO algorithm, proposed by Eberhart and Kennedy in 1995, is a type of SIA that is widely used in combinatorial optimization and can be applied to PRO2SAT. It is inspired by the foraging behavior of a flock of birds and draws on ideas from evolutionary computation. Each individual (particle) searches for a better solution by comparing the best solution it has found itself with the best solution currently found by the population, and updates its velocity accordingly. The algorithm searches for the global optimal solution by repeatedly updating the position and velocity of every particle, so that simple individual movements lead to a complex global solution.

- (h)

- SA [31]: The SA algorithm is a probability-based search algorithm proposed by Kirkpatrick, Gelatt, and Vecchi in 1983, modeled on the physical annealing process of a solid. It can be used to solve complex optimization problems. Starting from an initial solution (annealing point), the algorithm searches randomly for the global optimum of the objective function in an isothermal process combined with a probabilistic jump property, i.e., the ability to leave a local optimum with some probability and eventually approach the global optimal solution. The advantage of SA is that this ability lets it explore a relatively large search space rather than stalling at the first local optimum.

- (i)

- ABC [13]: The cited study combines the artificial bee colony algorithm with the Hopfield network to minimize or maximize the cost function of a combinatorial problem. Following that approach, we processed the binary values using the ABC algorithm. Each bee is assigned an initial nectar source, and the employed bee dances through the solution space to compute a new nectar source. This explores the space of consistent solutions during the learning phase of the Hopfield neural network and identifies potential solutions. The study reports superior performance of the artificial bee colony approach in solving the 2 Satisfiability problem.
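The probabilistic jump described for SA in item (h) is commonly realized with the Metropolis acceptance rule. The sketch below assumes a cost that is being minimized and a positive temperature; the function names are hypothetical, and the rule is the textbook one, not necessarily the exact variant used in the baseline experiments.

```python
import math
import random

def acceptance_probability(current_cost, candidate_cost, temperature):
    """Metropolis rule: improvements are always accepted; worse candidates
    are accepted with probability exp(-delta / temperature)."""
    if candidate_cost <= current_cost:
        return 1.0
    return math.exp(-(candidate_cost - current_cost) / temperature)

def accept(current_cost, candidate_cost, temperature, rng):
    """Draw a uniform number in [0, 1) and compare it with the acceptance probability."""
    return rng.random() < acceptance_probability(current_cost, candidate_cost, temperature)
```

At a high temperature almost any candidate is accepted, which lets the search leave local minima; as the temperature decreases, the rule approaches plain descent.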

7. Experimental Setup

7.1. Simulation Design

7.2. Parameters Assignment

7.3. Performance Evaluation Metrics

7.3.1. Learning Phase Metrics

- (a)

- Mean absolute error of clause adaptation ()

- (b)

- Mean absolute error of the adaptation of logic rules ()

- (c)

- Mean similarity of consistency interpretations ()

- l indicates the overall occurrence count of in ;

- m indicates the overall occurrence count of in ;

- n indicates the overall occurrence count of in ;

- o indicates the overall occurrence count of in .

- (d)

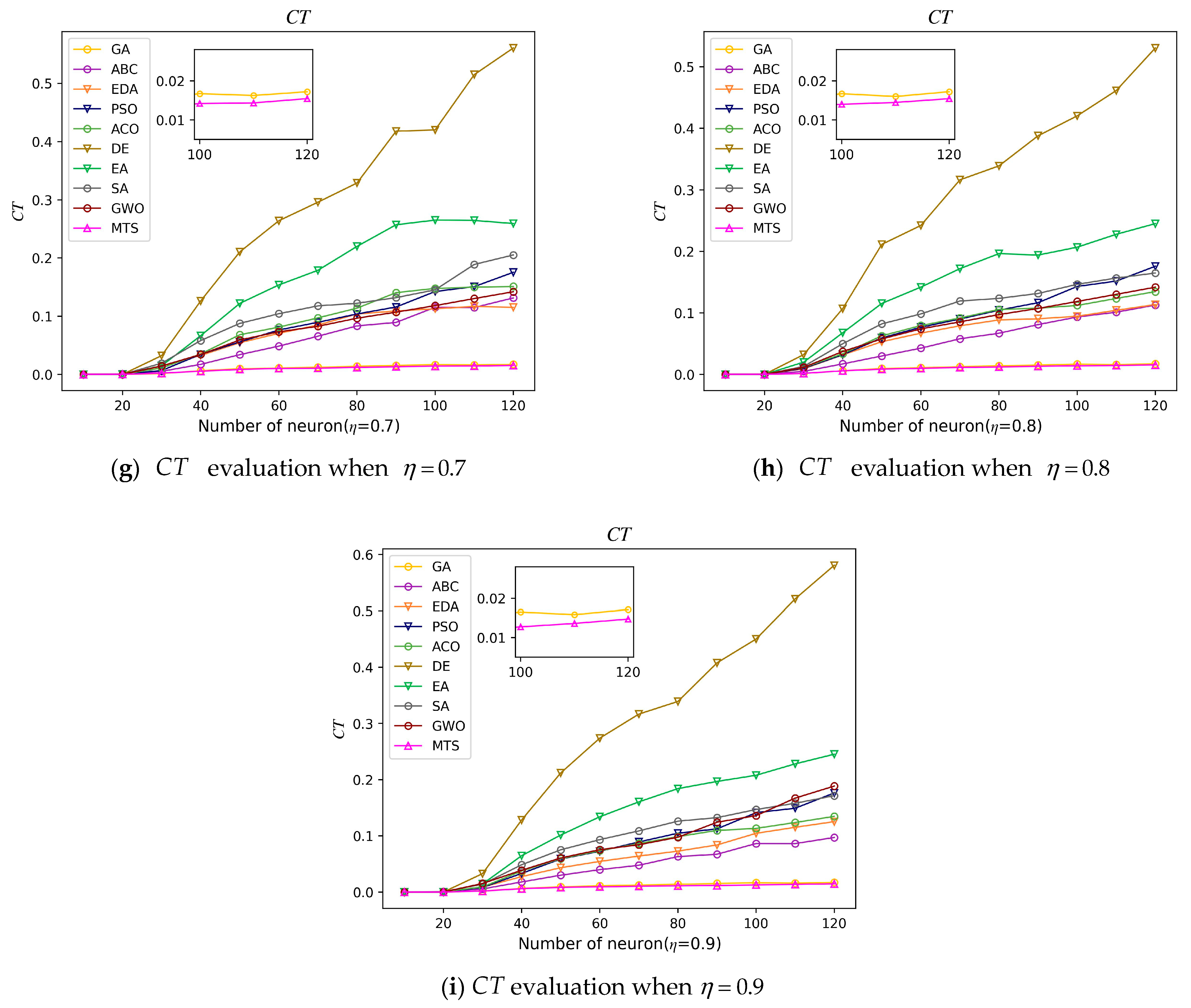

- Computational Time ()

- (e)

- Average iterations ()

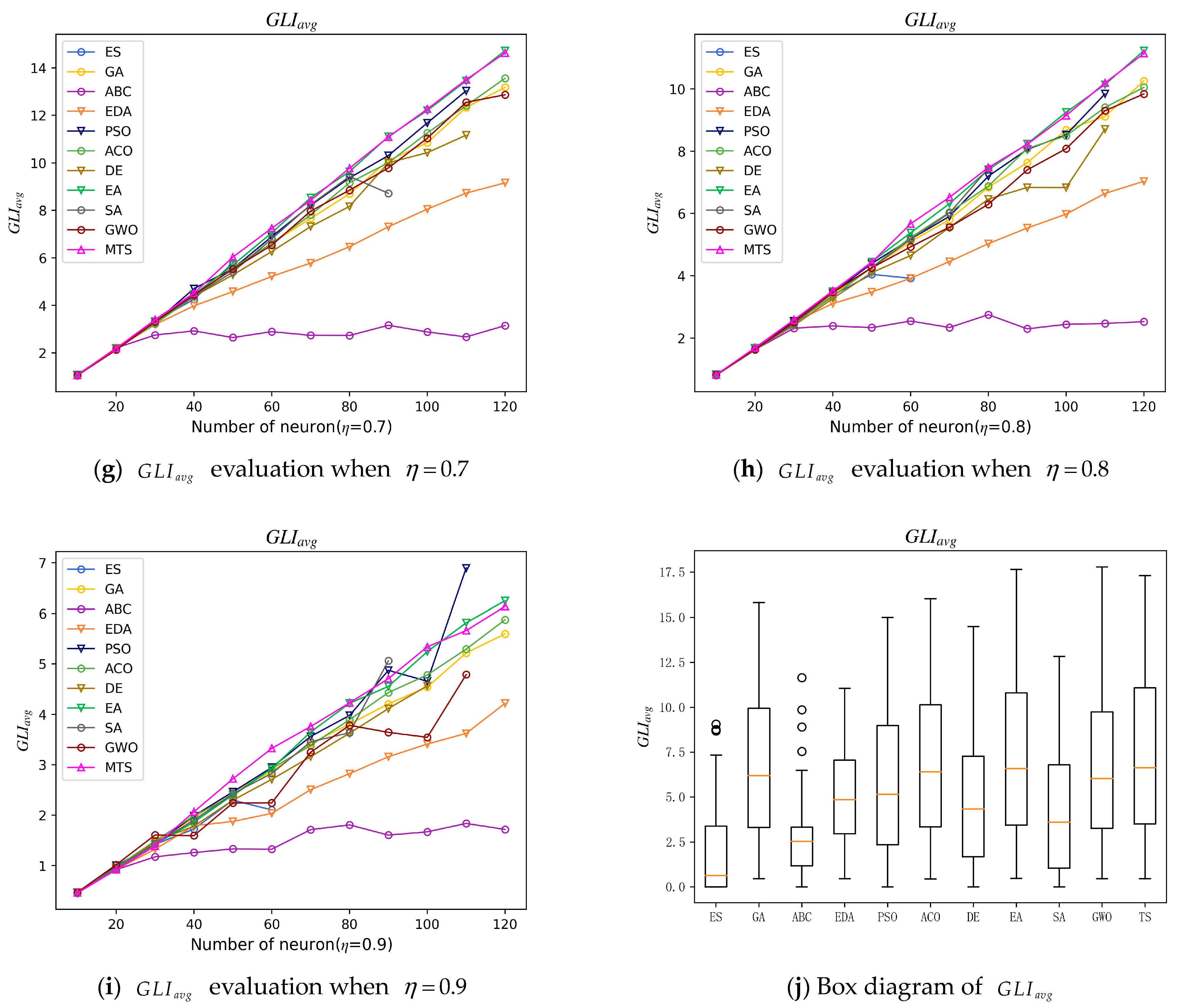
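The occurrence counts l, m, n, and o listed under metric (c) can be sketched as follows. The code assumes bipolar states and assumes that the four counts refer to the pairs (1, 1), (1, -1), (-1, 1), and (-1, -1) between a benchmark state and a compared state. The Jaccard index shown is one common similarity index built from such counts and is used here only as an illustration, not as the paper's exact formula.

```python
def pair_counts(benchmark, compared):
    """Count the four pair types between two bipolar (+1/-1) state vectors."""
    if len(benchmark) != len(compared):
        raise ValueError("state vectors must have the same length")
    l = m = n = o = 0
    for b, c in zip(benchmark, compared):
        if b == 1 and c == 1:
            l += 1
        elif b == 1 and c == -1:
            m += 1
        elif b == -1 and c == 1:
            n += 1
        elif b == -1 and c == -1:
            o += 1
        else:
            raise ValueError("states must be +1 or -1")
    return l, m, n, o

def jaccard_index(benchmark, compared):
    """Illustrative similarity index: l / (l + m + n)."""
    l, m, n, _ = pair_counts(benchmark, compared)
    denominator = l + m + n
    return l / denominator if denominator else 0.0
```

Averaging such an index over all compared states gives a mean similarity value per experimental setting.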

7.3.2. Retrieval Phase Metrics

- (f)

- Global minimum proportion ()

- (g)

- The average similarity of solutions ()

- (h)

- Mean Absolute Error of Logic Rule Energy ()

- (i)

- Friedman Statistical Analysis ()
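For the Friedman analysis in item (i), a minimal sketch of the classical statistic is given below. It assumes a table with one row per experimental setting and one column per algorithm, ranks each row in ascending order with ties sharing the mean rank, and omits the tie correction that statistical packages usually apply. The function names and the ranking direction are assumptions for illustration.

```python
def average_ranks(values):
    """Rank values in ascending order (1 = smallest); ties share the mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        shared = (pos + end) / 2 + 1          # mean of the 1-based positions pos..end
        for i in order[pos:end + 1]:
            ranks[i] = shared
        pos = end + 1
    return ranks

def friedman_statistic(table):
    """Chi-square form: 12 / (n k (k + 1)) * sum(R_j^2) - 3 n (k + 1)."""
    n, k = len(table), len(table[0])
    rank_sums = [0.0] * k
    for row in table:
        for j, rank in enumerate(average_ranks(row)):
            rank_sums[j] += rank
    return 12.0 / (n * k * (k + 1)) * sum(r * r for r in rank_sums) - 3.0 * n * (k + 1)
```

Dividing each rank sum by the number of rows gives an average rank per algorithm, the kind of quantity reported in the "Avg Rank" rows of the result tables.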

7.4. Simulation Dataset

8. Result and Discussions

8.1. Learning Phase

- (a)

- Experimental model for

- (b)

- Experimental model for

8.2. Retrieval Phase

8.3. Friedman Test

8.4. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Beam, A.L.; Kohane, I.S. Big Data and Machine Learning in Health Care. JAMA 2018, 319, 1317–1318.

- Vigilante, K.; Escaravage, S.; McConnell, M. Big Data and the Intelligence Community-Lessons for Health Care. N. Engl. J. Med. 2019, 380, 1888–1890.

- Egmont-Petersen, M.; de Ridder, D.; Handels, H. Image processing with neural networks—A review. Pattern Recognit. 2002, 35, 2279–2301.

- Dang, X.; Tang, X.; Hao Ren, J. Discrete Hopfield neural network based indoor Wi-Fi localization using CSI. EURASIP J. Wirel. Commun. Netw. 2020, 2020, 76.

- Mérida-Casermeiro, E.; Galán-Marín, G.; Muñoz-Pérez, J. An Efficient Multivalued Hopfield Network for the Traveling Salesman Problem. Neural Process. Lett. 2001, 14, 203–216.

- Chu, P.P. Applying Hopfield network to find the minimum cost coverage of a Boolean function. In Proceedings of the First Great Lakes Symposium on VLSI, Kalamazoo, MI, USA, 1–2 March 1991; IEEE Computer Society: Washington, DC, USA, 1991; pp. 182–183.

- Abdullah, W.A.T.W. Logic programming on a neural network. Int. J. Intell. Syst. 1992, 7, 513–519.

- Chen, J.; Kasihmuddin, M.S.M.; Gao, Y.; Mansor, M.A.; Romli, N.A.; Chen, W.; Zheng, C. PRO2SAT: Systematic Probabilistic Satisfiability logic in Discrete Hopfield Neural Network. Adv. Eng. Softw. 2023, 175, 103355.

- Hussain, K.; Mohd Salleh, M.N.; Cheng, S.; Shi, Y. Metaheuristic research: A comprehensive survey. Artif. Intell. Rev. 2019, 52, 2191–2233.

- Abdel-Basset, M.; Abdel-Fatah, L.; Sangaiah, A.K. Metaheuristic algorithms: A comprehensive review. In Computational Intelligence for Multimedia Big Data on the Cloud with Engineering Applications; Elsevier: Amsterdam, The Netherlands; Academic Press: Cambridge, MA, USA, 2018; pp. 185–231.

- Zamri, N.E.; Azhar, S.A.; Mansor, M.A.; Alway, A.; Kasihmuddin, M.S.M. Weighted Random k Satisfiability for k = 1, 2 (r2SAT) in Discrete Hopfield Neural Network. Appl. Soft Comput. 2022, 126, 109312.

- Someetheram, V.; Marsani, M.F.; Mohd Kasihmuddin, M.S.; Zamri, N.E.; Muhammad Sidik, S.S.; Mohd Jamaludin, S.Z.; Mansor, M.A. Random Maximum 2 Satisfiability Logic in Discrete Hopfield Neural Network Incorporating Improved Election Algorithm. Mathematics 2022, 10, 4734.

- Muhammad Sidik, S.S.; Zamri, N.E.; Mohd Kasihmuddin, M.S.; Wahab, H.A.; Guo, Y.; Mansor, M.A. Non-Systematic Weighted Satisfiability in Discrete Hopfield Neural Network Using Binary Artificial Bee Colony Optimization. Mathematics 2022, 10, 1129.

- Kho, L.C.; Kasihmuddin, M.S.M.; Mansor, M.A.; Sathasivam, S. Propositional Satisfiability Logic via Ant Colony Optimization in Hopfield Neural Network. Malays. J. Math. Sci. 2022, 16, 37–53.

- Ba, S.; Xia, D.; Gibbons, E.M. Model identification and strategy application for Solid Oxide Fuel Cell using Rotor Hopfield Neural Network based on a novel optimization method. Int. J. Hydrogen Energy 2020, 45, 27694–27704.

- Glover, F. Tabu search: A tutorial. Interfaces 1990, 20, 74–94.

- Glover, F.; Laguna, M. Tabu search. In Handbook of Combinatorial Optimization; Springer: Berlin/Heidelberg, Germany, 1998; pp. 2093–2229.

- Gopalakrishnan, M.; Mohan, S.; He, Z. A tabu search heuristic for preventive maintenance scheduling. Comput. Ind. Eng. 2001, 40, 149–160.

- Meeran, S.; Morshed, M.S. A hybrid genetic tabu search algorithm for solving job shop scheduling problems: A case study. J. Intell. Manuf. 2012, 23, 1063–1078.

- Žulj, I.; Kramer, S.; Schneider, M. A hybrid of adaptive large neighborhood search and tabu search for the order-batching problem. Eur. J. Oper. Res. 2018, 264, 653–664.

- Lin, G.; Guan, J.; Li, Z.; Feng, H. A hybrid binary particle swarm optimization with tabu search for the set-union knapsack problem. Expert Syst. Appl. 2019, 135, 201–211.

- Mohd Jamaludin, S.Z.; Mohd Kasihmuddin, M.S.; Md Ismail, A.I.; Mansor, M.A.; Md Basir, M.F. Energy based logic mining analysis with Hopfield neural network for recruitment evaluation. Entropy 2021, 23, 40.

- Mirjalili, S.; Mirjalili, S.M.; Lewis, A. Grey wolf optimizer. Adv. Eng. Softw. 2014, 69, 46–61.

- Gendreau, M.; Iori, M.; Laporte, G.; Martello, S. A Tabu search heuristic for the vehicle routing problem with two-dimensional loading constraints. Networks 2008, 51, 4–18.

- Misevicius, A. A tabu search algorithm for the quadratic assignment problem. Comput. Optim. Appl. 2005, 30, 95–111.

- Holland, J.H. Genetic algorithms and the optimal allocation of trials. SIAM J. Comput. 1973, 2, 88–105.

- Emami, H.; Derakhshan, F. Election algorithm: A new socio-politically inspired strategy. AI Commun. 2015, 28, 591–603.

- Pelikan, M.; Sastry, K.; Goldberg, D.E. Multiobjective estimation of distribution algorithms. In Scalable Optimization via Probabilistic Modeling; Springer: Berlin/Heidelberg, Germany, 2006; pp. 223–248.

- He, Y.; Zhang, F.; Mirjalili, S.; Zhang, T. Novel binary differential evolution algorithm based on taper-shaped transfer functions for binary optimization problems. Swarm Evol. Comput. 2022, 69, 101022.

- Poli, R.; Kennedy, J.; Blackwell, T. Particle swarm optimization. Swarm Intell. 2007, 1, 33–57.

- Kirkpatrick, S.; Gelatt, C.D., Jr.; Vecchi, M.P. Optimization by Simulated Annealing. Science 1983, 220, 671–680.

- Eiben, A.E.; Smith, J.E. Introduction to Evolutionary Computing; Springer: Berlin/Heidelberg, Germany, 2015.

- Deng, W.; Shang, S.; Cai, X.; Zhao, H.; Song, Y.; Xu, J. An improved differential evolution algorithm and its application in optimization problem. Soft Comput. 2021, 25, 5277–5298.

- Qin, A.K.; Huang, V.L.; Suganthan, P.N. Differential evolution algorithm with strategy adaptation for global numerical optimization. IEEE Trans. Evol. Comput. 2008, 13, 398–417.

| 8, 0.1 | 1 | |

| 8, 0.3 | 2 | |

| 8, 0.5 | 4 | |

| 8, 0.7 | 6 | |

| 8, 0.9 | 7 |

| Parameter | Parameter Value |
|---|---|
| Number of neurons () | |
| Neuron combination (a) | 100 |
| Number of trials (b) | 100 |
| Number of learnings (c) | 100 |
| Tolerance value (Tol) | 0.001 |
| Activation function | HTAF |
| Synaptic weight method | Wan Abdullah method |
| Initialization of neuron states | Random |
| CPU computing time | 24 h |
| Ratio of positive literal () | |
| Control probability () | {,} |
| Length of tabu table () | 3 |
| Number of segments (w) | 8 |
| Number of neighborhood operation segments | 2 |
| Number of mutated neurons | 2 |
| Neighborhood solution | 14 |
| Selection rate | 1 |
| Mutation rate | 1 |
| Maximum number of iterations | 100 |
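The tabu table length of 3 in the parameter table can be pictured as a fixed-size memory of recent moves. The sketch below uses Python's `collections.deque` with `maxlen` so that the oldest move is forgotten automatically. Representing a move as a pair of segment indices is a hypothetical choice made only for this illustration.

```python
from collections import deque

TABU_LENGTH = 3  # tabu table length taken from the parameter table

def make_tabu_list(length=TABU_LENGTH):
    """Create an empty tabu list that keeps only the most recent moves."""
    return deque(maxlen=length)

def is_tabu(tabu_list, move):
    """A move is forbidden while it is still stored in the tabu list."""
    return move in tabu_list

def record_move(tabu_list, move):
    """Store a move; once the list is full, the oldest entry is dropped."""
    tabu_list.append(move)

tabu = make_tabu_list()
for move in [(0, 1), (2, 3), (4, 5), (6, 7)]:   # hypothetical segment swaps
    record_move(tabu, move)
```

After four recorded moves, only the last three remain forbidden, so the first move becomes available again.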

| η | Measure | ES | GA | ABC | EDA | PSO | ACO | DE | EA | SA | GWO | MTS |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0.1 | Max | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 |
| | Min | 0.000 | 1.000 | 0.000 | 0.690 | 0.000 | 0.850 | 0.000 | 1.000 | 0.000 | 0.220 | 1.000 |
| | Mean | 0.263 | 1.000 | 0.262 | 0.937 | 0.620 | 0.954 | 0.609 | 1.000 | 0.536 | 0.850 | 1.000 |
| | Std | 0.389 | 0.000 | 0.406 | 0.093 | 0.434 | 0.055 | 0.443 | 0.000 | 0.455 | 0.261 | 0.000 |
| | Avg Rank | 9.375 | 3.667 | 9.625 | 5.333 | 6.458 | 4.958 | 6.917 | 3.667 | 7.458 | 4.875 | 3.667 |
| 0.2 | Max | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 |
| | Min | 0.000 | 1.000 | 0.000 | 0.780 | 0.000 | 0.800 | 0.000 | 1.000 | 0.000 | 0.040 | 1.000 |
| | Mean | 0.256 | 1.000 | 0.294 | 0.943 | 0.613 | 0.953 | 0.603 | 1.000 | 0.524 | 0.790 | 1.000 |
| | Std | 0.386 | 0.000 | 0.423 | 0.069 | 0.438 | 0.062 | 0.445 | 0.000 | 0.465 | 0.341 | 0.000 |
| | Avg Rank | 9.583 | 3.625 | 9.500 | 5.042 | 6.667 | 5.000 | 6.708 | 3.625 | 7.417 | 5.208 | 3.625 |
| 0.3 | Max | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 |
| | Min | 0.000 | 1.000 | 0.000 | 0.780 | 0.100 | 0.870 | 0.000 | 1.000 | 0.000 | 0.050 | 1.000 |
| | Mean | 0.253 | 1.000 | 0.360 | 0.953 | 0.615 | 0.971 | 0.608 | 1.000 | 0.523 | 0.774 | 1.000 |
| | Std | 0.383 | 0.000 | 0.448 | 0.069 | 0.432 | 0.044 | 0.443 | 0.000 | 0.457 | 0.349 | 0.000 |
| | Avg Rank | 9.792 | 3.625 | 9.000 | 5.208 | 6.250 | 5.042 | 7.000 | 3.625 | 7.635 | 5.208 | 3.625 |
| 0.4 | Max | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 |
| | Min | 0.000 | 1.000 | 0.000 | 0.740 | 0.000 | 0.810 | 0.000 | 1.000 | 0.000 | 0.040 | 1.000 |
| | Mean | 0.263 | 1.000 | 0.483 | 0.939 | 0.620 | 0.956 | 0.599 | 1.000 | 0.520 | 0.754 | 1.000 |
| | Std | 0.394 | 0.000 | 0.459 | 0.078 | 0.435 | 0.065 | 0.445 | 0.000 | 0.462 | 0.370 | 0.000 |
| | Avg Rank | 9.792 | 3.583 | 8.333 | 5.417 | 6.833 | 5.083 | 7.000 | 3.583 | 7.583 | 5.208 | 3.583 |
| 0.5 | Max | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 |
| | Min | 0.000 | 1.000 | 0.820 | 0.830 | 0.000 | 0.880 | 0.000 | 1.000 | 0.000 | 0.030 | 1.000 |
| | Mean | 0.266 | 1.000 | 0.960 | 0.957 | 0.612 | 0.963 | 0.614 | 1.000 | 0.525 | 0.750 | 1.000 |
| | Std | 0.395 | 0.000 | 0.064 | 0.064 | 0.438 | 0.045 | 0.439 | 0.000 | 0.463 | 0.375 | 0.000 |
| | Avg Rank | 9.917 | 3.833 | 5.167 | 5.167 | 7.500 | 5.583 | 7.042 | 3.833 | 8.208 | 5.917 | 3.833 |

| η | Measure | ES | GA | ABC | EDA | PSO | ACO | DE | EA | SA | GWO | MTS |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0.6 | Max | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 |
| | Min | 0.000 | 1.000 | 1.000 | 0.770 | 0.010 | 0.820 | 0.000 | 1.000 | 0.000 | 0.050 | 1.000 |
| | Mean | 0.300 | 1.000 | 1.000 | 0.935 | 0.265 | 0.920 | 0.225 | 1.000 | 0.150 | 0.420 | 1.000 |
| | Std | 0.391 | 0.000 | 0.000 | 0.084 | 0.436 | 0.057 | 0.445 | 0.000 | 0.458 | 0.367 | 0.000 |
| | Avg Rank | 10.417 | 4.042 | 4.042 | 5.625 | 6.917 | 5.542 | 7.250 | 4.042 | 8.167 | 5.917 | 4.042 |
| 0.7 | Max | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 |
| | Min | 0.000 | 1.000 | 1.000 | 0.740 | 0.000 | 0.830 | 0.000 | 1.000 | 0.000 | 0.070 | 1.000 |
| | Mean | 0.510 | 1.000 | 1.000 | 0.950 | 0.140 | 0.965 | 0.150 | 1.000 | 0.255 | 0.475 | 1.000 |
| | Std | 0.393 | 0.000 | 0.000 | 0.080 | 0.434 | 0.061 | 0.439 | 0.000 | 0.463 | 0.355 | 0.000 |
| | Avg Rank | 10.375 | 4.083 | 4.083 | 5.375 | 7.000 | 5.708 | 7.167 | 4.083 | 8.208 | 5.833 | 4.083 |
| 0.8 | Max | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 |
| | Min | 0.000 | 1.000 | 1.000 | 0.740 | 0.000 | 0.800 | 0.000 | 1.000 | 0.000 | 0.160 | 1.000 |
| | Mean | 0.150 | 1.000 | 1.000 | 0.930 | 0.100 | 0.930 | 0.350 | 1.000 | 0.575 | 0.705 | 1.000 |
| | Std | 0.395 | 0.000 | 0.000 | 0.090 | 0.443 | 0.071 | 0.447 | 0.000 | 0.457 | 0.298 | 0.000 |
| | Avg Rank | 9.917 | 4.000 | 4.000 | 5.625 | 6.833 | 5.625 | 7.625 | 4.000 | 8.667 | 5.708 | 4.000 |
| 0.9 | Max | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 | 1.000 |
| | Min | 0.000 | 1.000 | 1.000 | 0.740 | 0.000 | 0.700 | 0.000 | 1.000 | 0.000 | 0.000 | 1.000 |
| | Mean | 0.170 | 1.000 | 1.000 | 0.895 | 0.300 | 0.945 | 0.200 | 1.000 | 0.225 | 0.400 | 1.000 |
| | Std | 0.388 | 0.000 | 0.000 | 0.086 | 0.430 | 0.082 | 0.445 | 0.000 | 0.462 | 0.341 | 0.000 |
| | Avg Rank | 9.875 | 4.167 | 4.167 | 5.500 | 7.333 | 5.792 | 7.208 | 4.167 | 8.167 | 5.458 | 4.167 |

| η | Algorithm | 10 | 20 | 30 | 40 | 50 | 60 | 70 | 80 | 90 | 100 | 110 | 120 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0.1 | MTS | 0.457 | 0.946 | 1.426 | 1.949 | 2.462 | 3.150 | 3.626 | 4.212 | 4.785 | 5.161 | 5.776 | 6.242 |
| | EA | 0.466 | 0.919 | 1.422 | 1.873 | 2.498 | 3.076 | 3.588 | 4.259 | 4.641 | 5.278 | 5.855 | 6.505 |
| 0.2 | MTS | 0.800 | 1.652 | 2.586 | 3.610 | 4.564 | 5.324 | 6.510 | 7.409 | 8.278 | 9.232 | 9.991 | 11.074 |
| | EA | 0.814 | 1.629 | 2.514 | 3.465 | 4.257 | 5.418 | 6.439 | 7.497 | 8.339 | 9.481 | 10.072 | 11.181 |
| 0.3 | MTS | 1.072 | 2.146 | 3.464 | 4.713 | 5.894 | 7.462 | 8.457 | 9.725 | 11.091 | 12.319 | 13.426 | 14.755 |
| | EA | 1.062 | 2.175 | 3.352 | 4.556 | 5.648 | 7.089 | 8.397 | 9.698 | 10.752 | 12.402 | 13.340 | 14.484 |
| 0.4 | MTS | 1.198 | 2.447 | 3.876 | 5.284 | 6.736 | 8.285 | 9.791 | 11.122 | 12.610 | 13.891 | 15.477 | 16.744 |
| | EA | 1.197 | 2.512 | 3.834 | 5.201 | 6.688 | 8.112 | 9.697 | 10.942 | 12.663 | 13.986 | 15.338 | 16.892 |
| 0.5 | MTS | 1.252 | 2.590 | 4.020 | 5.611 | 7.098 | 8.682 | 10.061 | 11.571 | 13.080 | 14.564 | 15.870 | 17.312 |
| | EA | 1.241 | 2.561 | 3.902 | 5.211 | 6.944 | 8.421 | 9.791 | 11.501 | 12.936 | 14.555 | 16.169 | 17.662 |
| 0.6 | MTS | 1.198 | 2.506 | 3.857 | 5.316 | 6.754 | 8.175 | 9.725 | 11.160 | 12.761 | 13.992 | 15.323 | 16.634 |
| | EA | 1.184 | 2.443 | 3.841 | 5.249 | 6.667 | 8.058 | 9.690 | 11.122 | 12.723 | 13.981 | 15.630 | 16.839 |
| 0.7 | MTS | 1.062 | 2.191 | 3.400 | 4.520 | 6.032 | 7.248 | 8.420 | 9.775 | 11.084 | 12.265 | 13.502 | 14.629 |
| | EA | 1.056 | 2.163 | 3.328 | 4.463 | 5.692 | 7.057 | 8.533 | 9.633 | 11.107 | 12.203 | 13.448 | 14.723 |
| 0.8 | MTS | 0.816 | 1.691 | 2.586 | 3.518 | 4.432 | 5.670 | 6.519 | 7.476 | 8.221 | 9.145 | 10.194 | 11.138 |
| | EA | 0.808 | 1.686 | 2.471 | 3.496 | 4.451 | 5.379 | 6.319 | 7.407 | 8.237 | 9.250 | 10.140 | 11.228 |
| 0.9 | MTS | 0.458 | 0.918 | 1.390 | 2.068 | 2.721 | 3.325 | 3.759 | 4.228 | 4.708 | 5.339 | 5.661 | 6.138 |
| | EA | 0.455 | 0.957 | 1.423 | 1.862 | 2.401 | 2.926 | 3.649 | 4.218 | 4.554 | 5.244 | 5.814 | 6.249 |

| Model | 10 | 20 | 30 | 40 | 50 | 60 | 70 | 80 | 90 | 100 | 110 | 120 | Probability |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| MTS | 7 | 6 | 8 | 9 | 7 | 8 | 8 | 7 | 5 | 4 | 4 | 1 | 69% |
| EA | 2 | 3 | 1 | 0 | 2 | 1 | 1 | 2 | 4 | 5 | 5 | 8 | 31% |
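The Probability column appears to be each model's share of all counts in the table. The short check below assumes that reading; the dictionary layout and the function name are illustrative.

```python
# Counts per column (10 to 120) copied from the table above.
counts = {
    "MTS": [7, 6, 8, 9, 7, 8, 8, 7, 5, 4, 4, 1],
    "EA": [2, 3, 1, 0, 2, 1, 1, 2, 4, 5, 5, 8],
}

def shares(counts_by_model):
    """Return each model's fraction of the total number of counts."""
    total = sum(sum(values) for values in counts_by_model.values())
    return {name: sum(values) / total for name, values in counts_by_model.items()}

for name, share in shares(counts).items():
    print(f"{name}: {share:.1%}")
```

Under this reading, MTS accounts for 74 of the 108 counts and EA for 34, which round to the 69% and 31% reported in the table.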

| Index | GA | Time Complexity | MTS | Time Complexity |
|---|---|---|---|---|
| 1 | Selection operation | O(c log c) | Initializing the initial solutions | O(c log c) |
| 2 | Crossover operation | O(m² + n log n) | Generation strategy to neighborhood solution | O(w × NN/2 + w log w + w) |
| 3 | Mutation operation | O(n × NN) | Generation strategy to candidate solution | O(2) |
| 4 | Fitness evaluation | O(n × NN) | Fitness evaluation | O(NN/2) |
| Total | GATC = O(c log c + w × NN/2 + w log w + m² + w × NN) | | MTSTC = O(c log c + w × NN/2 + w log w + w + 2 + NN/2) | |

| η | Measure | ES | GA | ABC | EDA | PSO | ACO | DE | EA | SA | GWO | MTS |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0.1 | Max | 1.000 /20 | 1.000 | 1.000 /20 | 1.000 /70 | 1.000 /60 | 1.000 /60 | 1.000 /60 | 1.000 | 1.000 /50 | 1.000 /70 | 1.000 |
| | Min | 0.000 /70 | 1.000 | 0.000 /80 | 0.690 /120 | 0.000 /120 | 0.780 /120 | 0.000 /120 | 1.000 | 0.000 /110 | 0.180 /120 | 1.000 |
| | Mean | 0.262 | 1.000 | 0.262 | 0.937 | 0.620 | 0.954 | 0.609 | 1.000 | 0.536 | 0.850 | 1.000 |
| | Std | 0.405 | 0.000 | 0.424 | 0.097 | 0.445 | 0.075 | 0.467 | 0.000 | 0.479 | 0.282 | 0.000 |
| | Avg Rank | 9.540 | 3.670 | 9.620 | 5.330 | 6.370 | 4.830 | 6.540 | 3.670 | 7.580 | 5.170 | 3.670 |
| 0.2 | Max | 1.000 /20 | 1.000 | 1.000 /20 | 1.000 /60 | 1.000 /60 | 1.000 /60 | 1.000 /60 | 1.000 | 1.000 /50 | 1.000 /70 | 1.000 |
| | Min | 0.000 /80 | 1.000 | 0.000 /80 | 0.780 /120 | 0.000 /120 | 0.800 /120 | 0.000 /110 | 1.000 | 0.000 /120 | 0.040 /120 | 1.000 |
| | Mean | 0.256 | 1.000 | 0.294 | 0.943 | 0.613 | 0.953 | 0.603 | 1.000 | 0.524 | 0.790 | 1.000 |
| | Std | 0.403 | 0.000 | 0.442 | 0.087 | 0.458 | 0.065 | 0.465 | 0.000 | 0.485 | 0.356 | 0.000 |
| | Avg Rank | 9.580 | 3.620 | 9.500 | 5.000 | 6.710 | 5.000 | 6.750 | 3.620 | 7.460 | 5.120 | 3.620 |
| 0.3 | Max | 1.000 /20 | 1.000 | 1.000 /30 | 1.000 /60 | 1.000 /60 | 1.000 /50 | 1.000 /50 | 1.000 | 1.000 /50 | 1.000 /70 | 1.000 |
| | Min | 0.000 /90 | 1.000 | 0.000 /100 | 0.780 /120 | 0.010 /120 | 0.870 /120 | 0.000 /110 | 1.000 | 0.000 /110 | 0.050 /120 | 1.000 |
| | Mean | 0.255 | 1.000 | 0.360 | 0.953 | 0.615 | 0.971 | 0.608 | 1.000 | 0.523 | 0.774 | 1.000 |
| | Std | 0.401 | 0.000 | 0.468 | 0.719 | 0.451 | 0.046 | 0.462 | 0.000 | 0.477 | 0.365 | 0.000 |
| | Avg Rank | 9.750 | 3.620 | 9.040 | 5.210 | 6.250 | 5.040 | 7.000 | 3.620 | 7.620 | 5.210 | 3.620 |
| 0.4 | Max | 1.000 /20 | 1.000 | 1.000 /40 | 1.000 /50 | 1.000 /50 | 1.000 /60 | 1.000 /50 | 1.000 | 1.000 /50 | 1.000 /70 | 1.000 |
| | Min | 0.000 /80 | 1.000 | 0.000 /120 | 0.740 /120 | 0.000 /110 | 0.810 /120 | 0.000 /110 | 1.000 | 0.000 /110 | 0.040 /120 | 1.000 |
| | Mean | 0.264 | 1.000 | 0.483 | 0.939 | 0.538 | 0.956 | 0.517 | 1.000 | 0.520 | 0.754 | 1.000 |
| | Std | 0.412 | 0.000 | 0.479 | 0.082 | 0.471 | 0.068 | 0.477 | 0.000 | 0.483 | 0.386 | 0.000 |
| | Avg Rank | 9.710 | 3.580 | 8.170 | 5.330 | 7.080 | 5.080 | 7.250 | 3.580 | 7.420 | 5.210 | 3.580 |
| 0.5 | Max | 1.000 /20 | 1.000 | 1.000 /70 | 1.000 /70 | 1.000 /50 | 1.000 /50 | 1.000 /60 | 1.000 | 1.000 /50 | 1.000 /70 | 1.000 |
| | Min | 0.000 /70 | 1.000 | 0.820 /120 | 0.830 /120 | 0.000 /110 | 0.890 /120 | 0.000 /120 | 1.000 | 0.000 /100 | 0.030 /120 | 1.000 |
| | Mean | 0.267 | 1.000 | 0.960 | 0.957 | 0.612 | 0.963 | 0.614 | 1.000 | 0.525 | 0.750 | 1.000 |
| | Std | 0.413 | 0.000 | 0.665 | 0.669 | 0.458 | 0.047 | 0.459 | 0.000 | 0.483 | 0.392 | 0.000 |
| | Avg Rank | 9.920 | 3.830 | 5.170 | 5.170 | 7.500 | 5.580 | 7.040 | 3.830 | 8.210 | 5.920 | 3.830 |

| η | Measure | ES | GA | ABC | EDA | PSO | ACO | DE | EA | SA | GWO | MTS |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0.6 | Max | 1.000 /10 | 1.000 | 1.000 | 1.000 /60 | 1.000 /60 | 1.000 /60 | 1.000 /60 | 1.000 | 1.000 /50 | 1.000 /50 | 1.000 |
| | Min | 0.000 /70 | 1.000 | 1.000 | 0.770 /120 | 0.010 /120 | 0.820 /120 | 0.000 /100 | 1.000 | 0.000 /110 | 0.050 /120 | 1.000 |
| | Mean | 0.258 | 1.000 | 1.000 | 0.942 | 0.611 | 0.955 | 0.607 | 1.000 | 0.529 | 0.746 | 1.000 |
| | Std | 0.409 | 0.000 | 0.000 | 0.874 | 0.456 | 0.060 | 0.465 | 0.000 | 0.479 | 0.383 | 0.000 |
| | Avg Rank | 10.420 | 4.040 | 4.040 | 5.620 | 6.920 | 5.540 | 7.080 | 4.040 | 8.170 | 5.920 | 4.040 |
| 0.7 | Max | 1.000 /10 | 1.000 | 1.000 | 1.000 /70 | 1.000 /60 | 1.000 /60 | 1.000 /60 | 1.000 | 1.000 /50 | 1.000 /70 | 1.000 |
| | Min | 0.000 /60 | 1.000 | 1.000 | 0.740 /120 | 0.020 /120 | 0.830 /120 | 0.000 /120 | 1.000 | 0.000 /100 | 0.070 /100 | 1.000 |
| | Mean | 0.254 | 1.000 | 1.000 | 0.957 | 0.619 | 0.959 | 0.608 | 1.000 | 0.536 | 0.761 | 1.000 |
| | Std | 0.411 | 0.000 | 0.000 | 0.084 | 0.453 | 0.064 | 0.458 | 0.000 | 0.483 | 0.371 | 0.000 |
| | Avg Rank | 10.370 | 4.080 | 4.080 | 5.370 | 7.000 | 5.710 | 7.170 | 4.080 | 8.210 | 5.830 | 4.080 |
| 0.8 | Max | 1.000 /20 | 1.000 | 1.000 | 1.000 /60 | 1.000 /60 | 1.000 /60 | 1.000 /50 | 1.000 | 1.000 /40 | 1.000 /70 | 1.000 |
| | Min | 0.000 /70 | 1.000 | 1.000 | 0.740 /120 | 0.000 /120 | 0.800 /120 | 0.000 /120 | 1.000 | 0.000 /90 | 0.160 /120 | 1.000 |
| | Mean | 0.259 | 1.000 | 1.000 | 0.937 | 0.618 | 0.948 | 0.603 | 1.000 | 0.520 | 0.818 | 1.000 |
| | Std | 0.413 | 0.000 | 0.000 | 0.094 | 0.463 | 0.074 | 0.467 | 0.000 | 0.478 | 0.311 | 0.000 |
| | Avg Rank | 9.920 | 4.000 | 4.000 | 5.620 | 6.830 | 5.620 | 7.620 | 4.000 | 8.670 | 5.710 | 4.000 |
| 0.9 | Max | 1.000 /20 | 1.000 | 1.000 | 1.000 /70 | 1.000 /50 | 1.000 /60 | 1.000 /50 | 1.000 | 1.000 /50 | 1.000 /90 | 1.000 |
| | Min | 0.000 /70 | 1.000 | 1.000 | 0.740 /120 | 0.000 /120 | 0.700 /120 | 0.000 /110 | 1.000 | 0.000 /100 | 0.000 /120 | 1.000 |
| | Mean | 0.257 | 1.000 | 1.000 | 0.946 | 0.617 | 0.952 | 0.599 | 1.000 | 0.530 | 0.817 | 1.000 |
| | Std | 0.405 | 0.000 | 0.000 | 0.090 | 0.450 | 0.086 | 0.463 | 0.000 | 0.482 | 0.356 | 0.000 |
| | Avg Rank | 9.870 | 4.120 | 4.120 | 5.460 | 7.250 | 5.750 | 7.580 | 4.120 | 8.170 | 5.420 | 4.120 |

| η | Measure | ES | GA | ABC | EDA | PSO | ACO | DE | EA | SA | GWO | MTS |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0.1 | Max | 14.604 /120 | 0.000 | 16.077 /120 | 2.668 /120 | 15.498 /120 | 3.160 /120 | 14.857 /120 | 0.000 | 14.944 /120 | 12.505 /120 | 0.000 |
| | Min | 0.000 /20 | 0.000 | 0.000 /20 | 0.000 /50 | 0.000 /60 | 0.000 /60 | 0.000 /60 | 0.000 | 0.000 /50 | 0.000 /70 | 0.000 |
| | Mean | 7.388 | 0.000 | 7.452 | 0.659 | 4.925 | 0.589 | 4.955 | 0.000 | 5.587 | 2.112 | 0.000 |
| | Std | 5.287 | 0.000 | 5.854 | 1.013 | 6.200 | 1.021 | 6.230 | 0.000 | 6.148 | 4.151 | 0.000 |
| | Avg Rank | 9.333 | 3.625 | 9.500 | 5.417 | 6.750 | 4.917 | 6.667 | 3.625 | 7.417 | 5.125 | 3.625 |
| 0.2 | Max | 15.559 /120 | 0.000 | 17.358 /120 | 3.559 /120 | 14.524 /120 | 2.077 /120 | 15.431 /120 | 0.000 | 14.747 /120 | 13.961 /120 | 0.000 |
| | Min | 0.000 /20 | 0.000 | 0.000 /20 | 0.000 /60 | 0.000 /50 | 0.000 /60 | 0.000 /60 | 0.000 | 0.000 /50 | 0.000 /70 | 0.000 |
| | Mean | 7.409 | 0.000 | 7.228 | 0.762 | 4.760 | 0.489 | 5.106 | 0.000 | 5.541 | 2.775 | 0.000 |
| | Std | 5.422 | 0.000 | 5.740 | 1.252 | 6.049 | 0.756 | 6.286 | 0.000 | 6.050 | 4.963 | 0.000 |
| | Avg Rank | 9.667 | 3.625 | 9.417 | 5.167 | 6.750 | 4.833 | 6.833 | 3.625 | 7.333 | 5.125 | 3.625 |
| 0.3 | Max | 15.045 /120 | 0.000 | 14.590 /120 | 3.271 /120 | 14.255 /120 | 2.920 /120 | 14.752 /120 | 0.000 | 15.040 /120 | 14.117 /120 | 0.000 |
| | Min | 0.000 /20 | 0.000 | 0.000 /30 | 0.000 /60 | 0.000 /60 | 0.000 /50 | 0.000 /50 | 0.000 | 0.000 /50 | 0.000 /70 | 0.000 |
| | Mean | 7.374 | 0.000 | 6.935 | 0.739 | 4.843 | 0.604 | 4.881 | 0.000 | 5.633 | 3.190 | 0.000 |
| | Std | 5.346 | 0.000 | 5.493 | 1.197 | 6.089 | 1.019 | 6.082 | 0.000 | 6.143 | 5.226 | 0.000 |
| | Avg Rank | 9.917 | 3.625 | 8.792 | 5.167 | 6.583 | 4.958 | 6.792 | 3.625 | 7.625 | 5.292 | 3.625 |
| 0.4 | Max | 15.272 /120 | 0.000 | 17.675 /120 | 5.091 /120 | 15.229 /120 | 3.289 /120 | 14.988 /120 | 0.000 | 14.791 /120 | 14.167 /120 | 0.000 |
| | Min | 0.000 /20 | 0.000 | 0.000 /40 | 0.000 /50 | 0.000 /60 | 0.000 /60 | 0.000 /60 | 0.000 | 0.000 /50 | 0.000 /70 | 0.000 |
| | Mean | 7.420 | 0.000 | 5.929 | 0.834 | 4.863 | 0.607 | 5.021 | 0.000 | 5.631 | 3.399 | 0.000 |
| | Std | 5.392 | 0.000 | 6.541 | 1.465 | 6.149 | 1.000 | 6.207 | 0.000 | 6.185 | 5.306 | 0.000 |
| | Avg Rank | 9.833 | 3.667 | 8.167 | 5.458 | 6.542 | 5.250 | 6.792 | 3.667 | 7.625 | 5.333 | 3.667 |
| 0.5 | Max | 15.067 /120 | 0.000 | 5.601 /120 | 3.354 /120 | 14.711 /120 | 2.880 /120 | 14.581 /120 | 0.000 | 14.902 /120 | 14.661 /120 | 0.000 |
| | Min | 0.000 /20 | 0.000 | 0.000 /70 | 0.000 /70 | 0.000 /50 | 0.000 /50 | 0.000 /60 | 0.000 | 0.000 /50 | 0.000 /70 | 0.000 |
| | Mean | 7.396 | 0.000 | 0.990 | 0.666 | 5.094 | 0.498 | 4.993 | 0.000 | 5.641 | 3.392 | 0.000 |
| | Std | 5.332 | 0.000 | 1.715 | 1.120 | 6.140 | 0.869 | 6.092 | 0.000 | 6.142 | 5.371 | 0.000 |
| | Avg Rank | 9.833 | 3.833 | 5.333 | 5.083 | 7.542 | 5.542 | 6.875 | 3.833 | 8.292 | 6.000 | 3.833 |
| 0.6 | Max | 14.723 /120 | 0.000 | 0.000 | 3.678 /120 | 15.109 /120 | 1.692 /120 | 14.908 /120 | 0.000 | 14.852 /120 | 14.030 /120 | 0.000 |
| | Min | 0.000 /10 | 0.000 | 0.000 | 0.000 /60 | 0.000 /60 | 0.000 /60 | 0.000 /60 | 0.000 | 0.000 /50 | 0.000 /70 | 0.000 |
| | Mean | 7.383 | 0.000 | 0.000 | 0.696 | 4.803 | 0.474 | 4.853 | 0.000 | 5.555 | 3.399 | 0.000 |
| | Std | 5.311 | 0.000 | 0.000 | 1.142 | 6.063 | 0.695 | 6.068 | 0.000 | 6.091 | 5.386 | 0.000 |
| | Avg Rank | 10.083 | 4.042 | 4.042 | 5.667 | 7.250 | 5.500 | 7.500 | 4.042 | 7.917 | 5.917 | 4.042 |
| 0.7 | Max | 14.888 /120 | 0.000 | 0.000 | 2.596 /120 | 14.221 /120 | 1.691 /120 | 14.912 /120 | 0.000 | 14.865 /120 | 14.975 /120 | 0.000 |
| | Min | 0.000 /10 | 0.000 | 0.000 | 0.000 /70 | 0.000 /60 | 0.000 /60 | 0.000 /60 | 0.000 | 0.000 /50 | 0.000 /70 | 0.000 |
| | Mean | 7.384 | 0.000 | 0.000 | 0.625 | 4.694 | 0.457 | 4.947 | 0.000 | 5.677 | 3.085 | 0.000 |
| | Std | 5.357 | 0.000 | 0.000 | 0.944 | 6.009 | 0.704 | 6.190 | 0.000 | 6.209 | 5.346 | 0.000 |
| | Avg Rank | 10.083 | 4.083 | 4.083 | 5.458 | 6.833 | 5.500 | 7.583 | 4.083 | 7.917 | 6.292 | 4.083 |
| 0.8 | Max | 14.930 /120 | 0.000 | 0.000 | 2.892 /120 | 15.01 /120 | 2.513 /120 | 14.982 /120 | 0.000 | 14.813 /120 | 12.733 /120 | 0.000 /120 |
| | Min | 0.000 /20 | 0.000 | 0.000 | 0.000 /60 | 0.000 /60 | 0.000 /60 | 0.000 /50 | 0.000 | 0.000 /40 | 0.000 /70 | 0.000 /10 |
| | Mean | 7.551 | 0.000 | 0.000 | 0.633 | 4.949 | 0.539 | 4.909 | 0.000 | 5.620 | 2.597 | 0.000 |
| | Std | 5.421 | 0.000 | 0.000 | 0.962 | 6.114 | 0.932 | 6.063 | 0.000 | 6.093 | 4.531 | 0.000 |
| | Avg Rank | 9.917 | 4.000 | 4.000 | 5.708 | 7.375 | 5.458 | 7.500 | 4.000 | 8.250 | 5.792 | 4.000 |
| 0.9 | Max | 14.821 /120 | 0.000 | 0.000 | 2.955 /120 | 14.389 /120 | 2.222 /120 | 15.276 /120 | 0.000 | 15.069 /120 | 11.345 /120 | 0.000 |
| | Min | 0.000 /20 | 0.000 | 0.000 | 0.000 /70 | 0.000 /50 | 0.000 /60 | 0.000 /50 | 0.000 | 0.000 /50 | 0.000 /90 | 0.000 |
| | Mean | 8.841 | 0.000 | 0.000 | 0.997 | 8.143 | 0.515 | 5.186 | 0.000 | 9.681 | 4.500 | 0.000 |
| | Std | 5.304 | 0.000 | 0.000 | 0.979 | 5.835 | 0.822 | 6.216 | 0.000 | 6.197 | 3.805 | 0.000 |
| | Avg Rank | 9.667 | 4.125 | 4.125 | 5.583 | 7.125 | 5.625 | 7.958 | 4.125 | 8.292 | 5.250 | 4.125 |
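
The Avg Rank rows are consistent with tie-averaged (mid-rank) ranking: in each η block the eleven values sum to roughly 66 = 11·12/2 (for η = 0.1, 9.333 + 3.625 + ... + 3.625 ≈ 66.0), which is what averaging mid-ranks over several network sizes would produce. The following is a minimal sketch of that calculation, assuming the models are ranked per network size with ties sharing the mean of their positions and the ranks are then averaged. The function names and the error values are hypothetical and are not taken from the experiments.

```python
from statistics import mean


def mid_ranks(values):
    """Rank values ascending (1 = smallest); tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with the value at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def average_ranks(blocks):
    """Average the per-block mid-ranks of each model over all blocks."""
    per_block = [mid_ranks(block) for block in blocks]
    return [mean(column) for column in zip(*per_block)]


# Hypothetical errors of four models (columns) at three network sizes (rows).
blocks = [
    [0.0, 0.0, 0.0, 0.0],  # every model reaches zero error: all share rank 2.5
    [2.0, 0.0, 1.0, 0.0],
    [5.0, 0.0, 3.0, 0.0],
]
avg = average_ranks(blocks)
print(avg)
```

With four models the average ranks sum to 4·5/2 = 10, in the same way that the eleven columns of the table sum to about 66. Models that tie at zero error share a rank, which is why GA, EA, and MTS report identical Avg Rank values.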

| Model | η | Chi-Square Value, χ² | p-Value | Accept (A)/Reject (R), H0 | Model | Chi-Square Value, χ² | p-Value | Accept (A)/Reject (R), H0 |
|---|---|---|---|---|---|---|---|---|
| | 0.1 | 67.727 | 1.215 × 10^-10 | R H0 | | 79.697 | 5.7561 × 10^-13 | R H0 |
| | 0.2 | 70.182 | 4.089 × 10^-11 | R H0 | | 80.273 | 4.4381 × 10^-13 | R H0 |
| | 0.3 | 69.546 | 5.426 × 10^-11 | R H0 | | 77.994 | 1.2399 × 10^-12 | R H0 |
| | 0.4 | 64.303 | 5.509 × 10^-10 | R H0 | | 73.484 | 9.3768 × 10^-12 | R H0 |
| | 0.5 | 75.046 | 4.662 × 10^-12 | R H0 | | 71.689 | 2.0903 × 10^-11 | R H0 |
| | 0.6 | 77.455 | 1.581 × 10^-12 | R H0 | | 78.046 | 1.2112 × 10^-12 | R H0 |
| | 0.7 | 72.167 | 1.689 × 10^-12 | R H0 | | 77.552 | 1.5129 × 10^-12 | R H0 |
| | 0.8 | 81.742 | 2.285 × 10^-13 | R H0 | | 76.690 | 2.229 × 10^-12 | R H0 |
| | 0.9 | 71.621 | 2.154 × 10^-11 | R H0 | | 71.525 | 2.249 × 10^-11 | R H0 |
| GLIavg | 0.1 | 41.028 | 1.116 × 10^-5 | R H0 | CT | 98.527 | 1.074 × 10^-16 | R H0 |
| | 0.2 | 40.920 | 1.166 × 10^-5 | R H0 | | 87.320 | 1.818 × 10^-14 | R H0 |
| | 0.3 | 54.616 | 3.725 × 10^-8 | R H0 | | 97.046 | 2.122 × 10^-16 | R H0 |
| | 0.4 | 53.175 | 6.909 × 10^-8 | R H0 | | 96.696 | 2.493 × 10^-16 | R H0 |
| | 0.5 | 54.191 | 4.472 × 10^-8 | R H0 | | 93.146 | 1.271 × 10^-15 | R H0 |
| | 0.6 | 58.906 | 5.835 × 10^-9 | R H0 | | 100.993 | 3.448 × 10^-17 | R H0 |
| | 0.7 | 55.253 | 2.833 × 10^-8 | R H0 | | 90.348 | 4.569 × 10^-15 | R H0 |
| | 0.8 | 63.445 | 8.032 × 10^-10 | R H0 | | 93.743 | 9.667 × 10^-16 | R H0 |
| | 0.9 | 57.113 | 1.270 × 10^-8 | R H0 | | 93.733 | 9.711 × 10^-16 | R H0 |
| AI | 0.1 | 76.419 | 2.517 × 10^-12 | R H0 | ZM | 79.650 | 5.878 × 10^-13 | R H0 |
| | 0.2 | 72.226 | 1.645 × 10^-11 | R H0 | | 80.273 | 4.438 × 10^-13 | R H0 |
| | 0.3 | 76.282 | 2.677 × 10^-12 | R H0 | | 77.843 | 1.327 × 10^-12 | R H0 |
| | 0.4 | 74.333 | 6.416 × 10^-12 | R H0 | | 72.248 | 1.629 × 10^-11 | R H0 |
| | 0.5 | 75.121 | 4.507 × 10^-12 | R H0 | | 71.689 | 2.090 × 10^-11 | R H0 |
| | 0.6 | 81.908 | 2.120 × 10^-13 | R H0 | | 78.046 | 1.211 × 10^-12 | R H0 |
| | 0.7 | 71.021 | 2.816 × 10^-11 | R H0 | | 77.552 | 1.513 × 10^-12 | R H0 |
| | 0.8 | 76.477 | 2.452 × 10^-12 | R H0 | | 76.690 | 2.229 × 10^-12 | R H0 |
| | 0.9 | 74.019 | 7.383 × 10^-12 | R H0 | | 73.164 | 1.082 × 10^-11 | R H0 |
| TVsimilar | 0.1 | 31.566 | 4.729 × 10^-4 | R H0 | MAEtest | 74.835 | 5.124 × 10^-12 | R H0 |
| | 0.2 | 28.559 | 1.467 × 10^-3 | R H0 | | 78.643 | 9.257 × 10^-13 | R H0 |
| | 0.3 | 40.200 | 1.563 × 10^-5 | R H0 | | 76.486 | 2.443 × 10^-11 | R H0 |
| | 0.4 | 40.246 | 1.53 × 10^-5 | R H0 | | 68.753 | 7.714 × 10^-11 | R H0 |
| | 0.5 | 27.707 | 2.011 × 10^-3 | R H0 | | 69.862 | 4.714 × 10^-11 | R H0 |
| | 0.6 | 40.627 | 1.313 × 10^-3 | R H0 | | 73.134 | 1.097 × 10^-11 | R H0 |
| | 0.7 | 43.478 | 4.084 × 10^-6 | R H0 | | 71.994 | 1.825 × 10^-12 | R H0 |
| | 0.8 | 40.727 | 1.261 × 10^-5 | R H0 | | 73.922 | 7.790 × 10^-12 | R H0 |
| | 0.9 | 52.180 | 1.057 × 10^-7 | R H0 | | 72.254 | 1.625 × 10^-11 | R H0 |
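
To make the link between the χ² statistics and the p-values concrete, the sketch below computes the upper-tail probability of a chi-square distribution. It assumes 10 degrees of freedom (eleven compared models minus one, as in a Friedman-type rank test); this is inferred from the number of models rather than stated in the table. The function name `chi2_sf_even_df` is hypothetical, and the closed form it uses holds only for even degrees of freedom.

```python
import math


def chi2_sf_even_df(x, df):
    """Upper-tail probability P(X >= x) of a chi-square variable with even df.

    For df = 2m the survival function has the closed form
    exp(-x/2) * sum_{i=0}^{m-1} (x/2)^i / i!.
    """
    if df <= 0 or df % 2 != 0:
        raise ValueError("this closed form requires a positive even df")
    half = x / 2.0
    term = 1.0   # (x/2)^0 / 0!
    total = 1.0
    for i in range(1, df // 2):
        term *= half / i  # builds (x/2)^i / i! incrementally
        total += term
    return math.exp(-half) * total


# Assumed degrees of freedom: 11 models - 1 = 10.
p_value = chi2_sf_even_df(67.727, 10)
print(f"{p_value:.3e}")
```

Under this assumption, χ² = 67.727 gives a p-value of about 1.215 × 10^-10, in line with the first tabulated entry. Because the p-value decreases monotonically as χ² grows, the same function can be used to cross-check any other row.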

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Chen, J.; Gao, Y.; Kasihmuddin, M.S.M.; Zheng, C.; Romli, N.A.; Mansor, M.A.; Zamri, N.E.; When, C. MTS-PRO2SAT: Hybrid Mutation Tabu Search Algorithm in Optimizing Probabilistic 2 Satisfiability in Discrete Hopfield Neural Network. Mathematics 2024, 12, 721. https://doi.org/10.3390/math12050721
