Accurate Forecasting of Global Horizontal Irradiance in Saudi Arabia: A Comparative Study of Machine Learning Predictive Models and Feature Selection Techniques

Abstract

1. Introduction

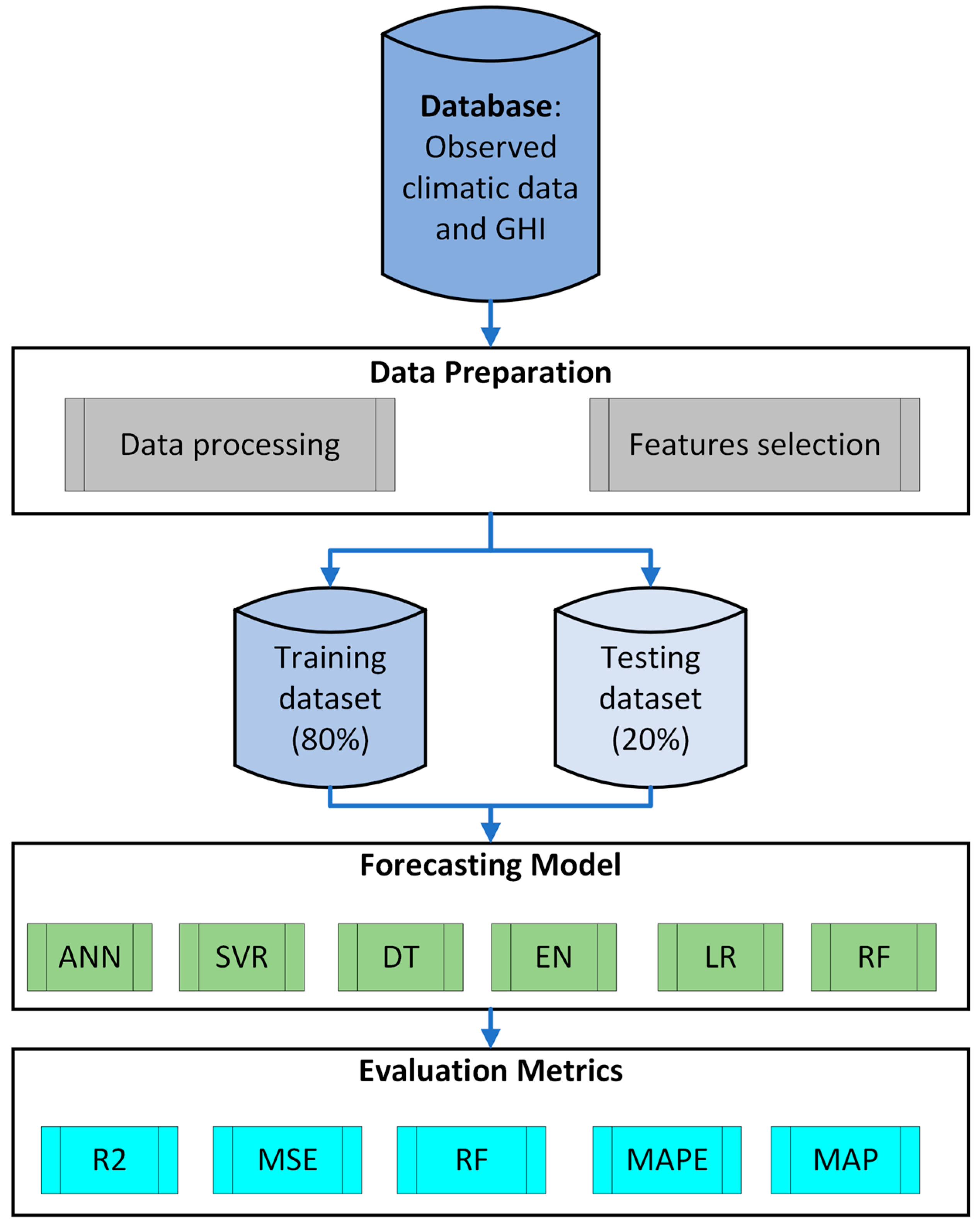

2. Materials and Methods

2.1. Data Acquisition and Processing

2.2. Forecasting Models

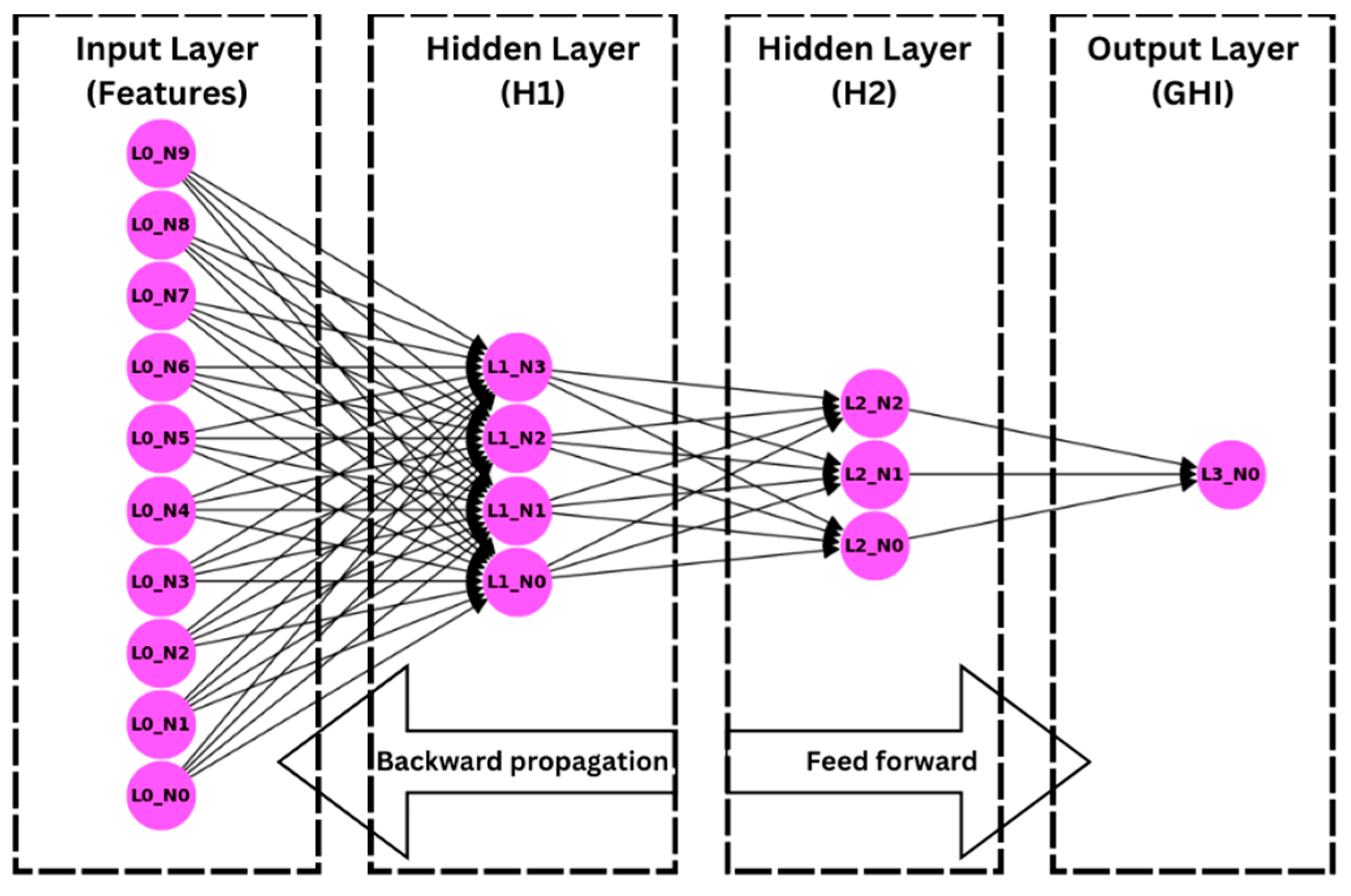

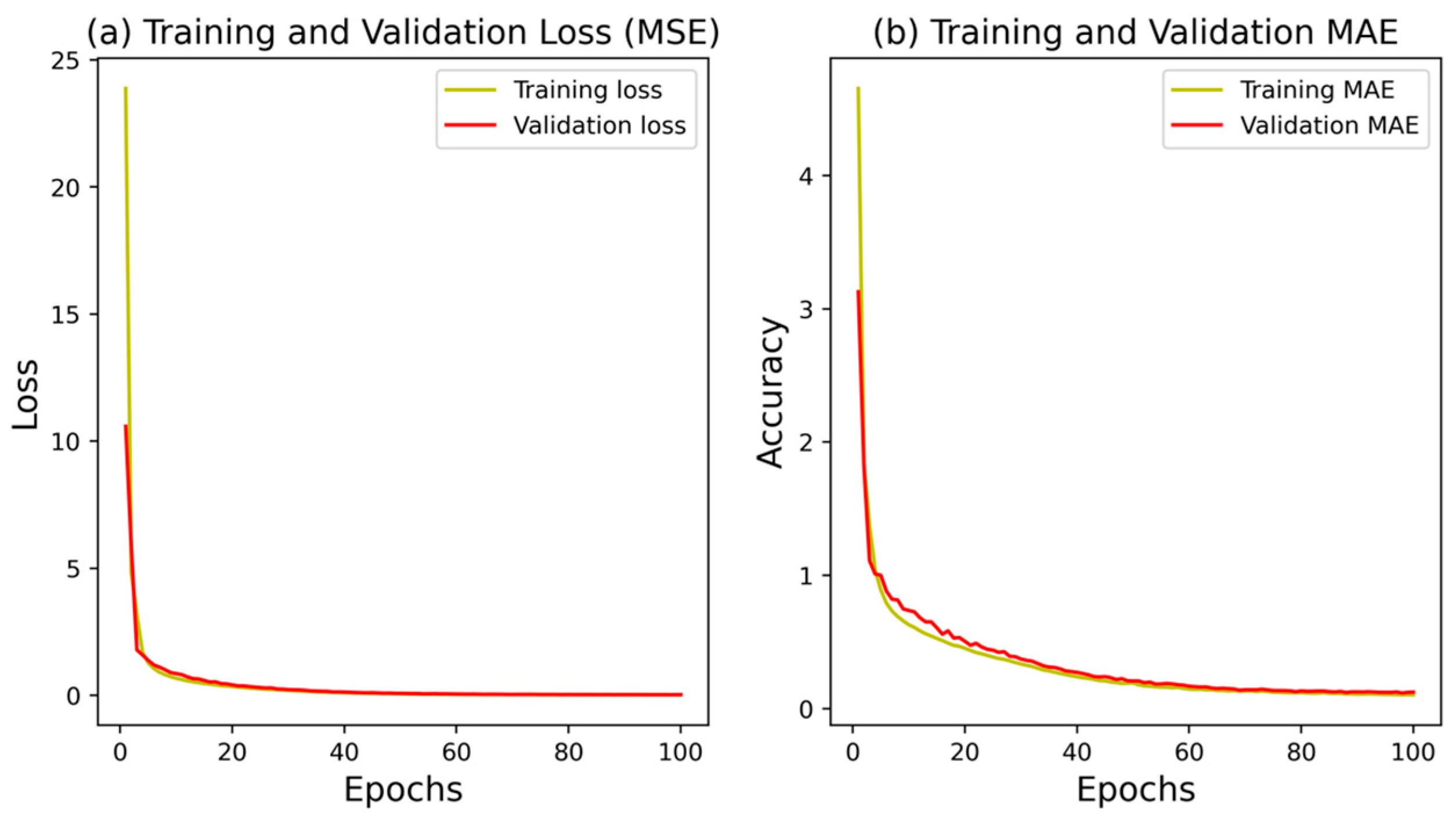

2.2.1. Artificial Neural Network (ANN)

2.2.2. Decision Tree (DT)

2.2.3. Elastic Net (EN)

2.2.4. Linear Regression (LR)

2.2.5. Random Forest (RF)

2.2.6. Support Vector Regression (SVR)

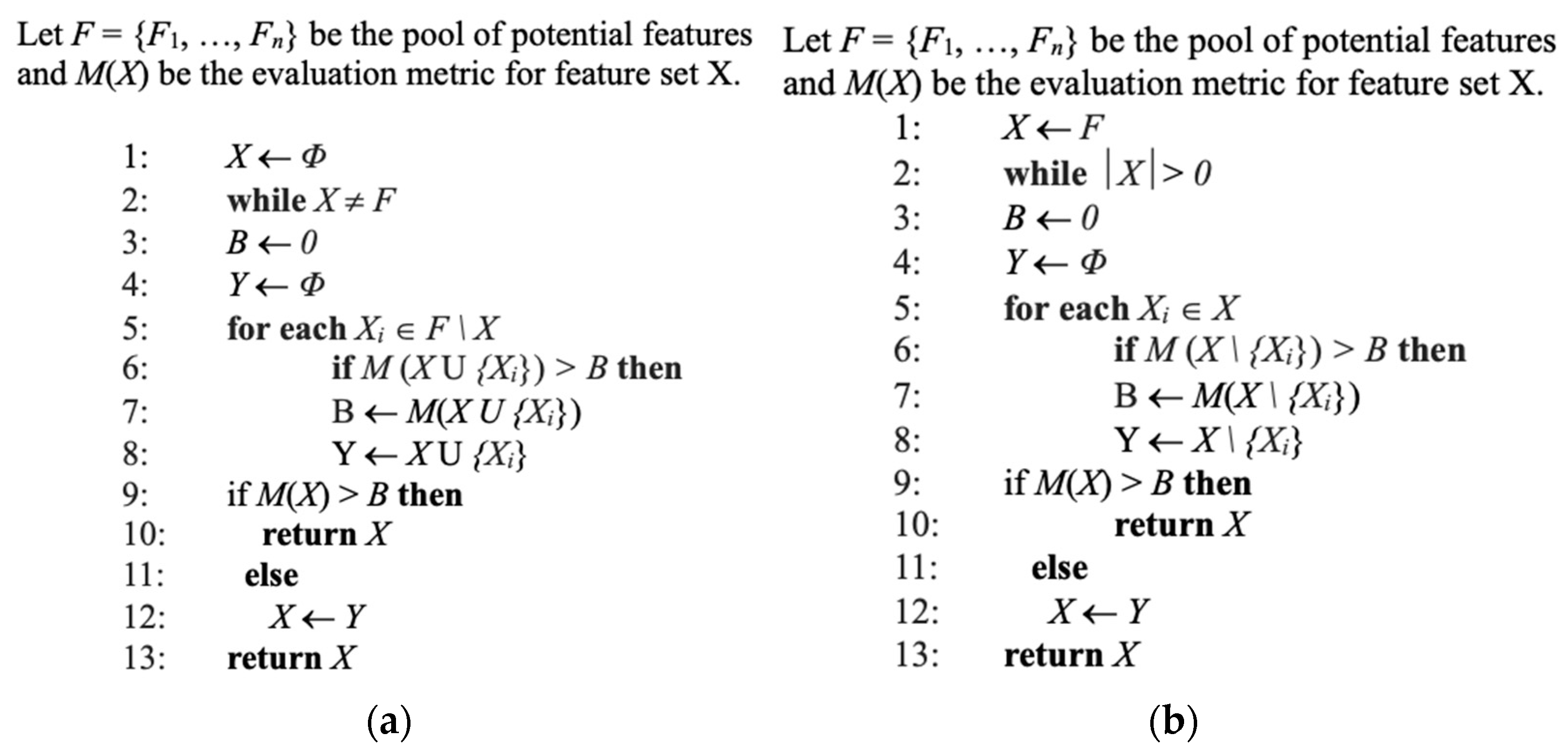

2.3. Feature Selection

Forward selection:

1. Initialize the model with no features;
2. For each feature not in the model:
   - Temporarily add the feature to the model;
   - Evaluate the model using a chosen metric (e.g., cross-validation error).
3. Select the feature that most improves the model;
4. Repeat steps 2–3 until no significant improvement is achieved or a stopping criterion is met.
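The forward-selection steps above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the `score` callable, the `min_gain` threshold, the feature names, and the toy error function are all hypothetical stand-ins. In practice `score` would return a cross-validated error for a model trained on the given subset.

```python
from typing import Callable, FrozenSet, List, Sequence


def forward_selection(
    features: Sequence[str],
    score: Callable[[FrozenSet[str]], float],
    min_gain: float = 1e-4,  # hypothetical stopping threshold
) -> List[str]:
    """Greedy forward selection; `score` returns an error to minimise."""
    selected: List[str] = []          # step 1: start with no features
    remaining = list(features)
    best_error = float("inf")
    while remaining:
        # Step 2: temporarily add each unused feature and evaluate the model.
        trials = [(score(frozenset(selected + [f])), f) for f in remaining]
        # Step 3: pick the feature that gives the lowest error.
        error, winner = min(trials)
        # Step 4: stop once the improvement is no longer significant.
        if best_error - error < min_gain:
            break
        selected.append(winner)
        remaining.remove(winner)
        best_error = error
    return selected


def toy_error(subset: FrozenSet[str]) -> float:
    """Hypothetical error: two useful features, small cost per feature."""
    useful = {"temperature": 0.5, "humidity": 0.3}
    return 1.0 - sum(useful.get(f, 0.0) for f in subset) + 0.01 * len(subset)


chosen = forward_selection(["temperature", "humidity", "pressure"], toy_error)
print(chosen)
```

With the toy error function, the uninformative `pressure` feature is never added because it only increases the error.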

Backward selection:

1. Initialize the model with all features;
2. For each feature in the model:
   - Temporarily remove the feature from the model;
   - Evaluate the model using a chosen metric (e.g., cross-validation error).
3. Select the feature whose removal has the least impact on the model;
4. Repeat steps 2–3 until a stopping criterion is met (e.g., a specified number of features remain or no significant improvement is observed).
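A matching sketch for the backward procedure is given below. As before, it is only an illustration under stated assumptions: `score`, `n_keep`, `tolerance`, the feature names, and the toy error function are hypothetical and do not come from the article.

```python
from typing import Callable, FrozenSet, List, Sequence


def backward_elimination(
    features: Sequence[str],
    score: Callable[[FrozenSet[str]], float],
    n_keep: int = 1,          # hypothetical minimum number of features
    tolerance: float = 1e-4,  # hypothetical allowed increase in error
) -> List[str]:
    """Greedy backward elimination; `score` returns an error to minimise."""
    selected = list(features)                      # step 1: start with all
    current_error = score(frozenset(selected))
    while len(selected) > n_keep:
        # Step 2: temporarily remove each feature and evaluate the model.
        trials = [(score(frozenset(selected) - {f}), f) for f in selected]
        # Step 3: the removal with the lowest error has the least impact.
        error, least_useful = min(trials)
        # Step 4: stop if even that removal degrades the model noticeably.
        if error - current_error > tolerance:
            break
        selected.remove(least_useful)
        current_error = error
    return selected


def toy_error(subset: FrozenSet[str]) -> float:
    """Hypothetical error: two useful features, small cost per feature."""
    useful = {"temperature": 0.5, "humidity": 0.3}
    return 1.0 - sum(useful.get(f, 0.0) for f in subset) + 0.01 * len(subset)


kept = backward_elimination(["temperature", "humidity", "pressure"], toy_error)
print(kept)
```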

Exhaustive search:

1. Generate all possible combinations of features;
2. For each combination:
   - Train the model using the selected combination of features;
   - Evaluate the model using a chosen metric (e.g., cross-validation error).
3. Select the combination that yields the best performance.
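The exhaustive procedure can be sketched with `itertools.combinations`. The `score` callable, feature names, and toy error function are hypothetical placeholders. Note that the loop visits every non-empty subset, so the cost grows as 2^n − 1 model evaluations for n features, which is only practical for small feature sets.

```python
from itertools import combinations
from typing import Callable, FrozenSet, List, Sequence, Tuple


def exhaustive_search(
    features: Sequence[str],
    score: Callable[[FrozenSet[str]], float],
) -> Tuple[List[str], float]:
    """Evaluate every non-empty feature subset and return the best one."""
    best_subset: Tuple[str, ...] = ()
    best_error = float("inf")
    for size in range(1, len(features) + 1):
        for subset in combinations(features, size):
            error = score(frozenset(subset))   # train and evaluate
            if error < best_error:             # keep the best combination
                best_subset, best_error = subset, error
    return list(best_subset), best_error


def toy_error(subset: FrozenSet[str]) -> float:
    """Hypothetical error: two useful features, small cost per feature."""
    useful = {"temperature": 0.5, "humidity": 0.3}
    return 1.0 - sum(useful.get(f, 0.0) for f in subset) + 0.01 * len(subset)


best, best_err = exhaustive_search(["temperature", "humidity", "pressure"], toy_error)
print(best, round(best_err, 4))
```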

2.4. Evaluation Metrics
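The results tables in this article report five scores: R², MSE, RMSE, MAPE, and MAE. As a hedged illustration, the sketch below computes them from their standard textbook definitions; the function name and sample values are hypothetical, and MAPE is returned as a fraction rather than a percentage, on the assumption that this matches the magnitude of the tabulated values.

```python
import math
from typing import Dict, Sequence


def regression_metrics(y_true: Sequence[float], y_pred: Sequence[float]) -> Dict[str, float]:
    """Standard regression scores; assumes no true value is zero (for MAPE)."""
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]
    mse = sum(e * e for e in errors) / n
    mae = sum(abs(e) for e in errors) / n
    mape = sum(abs(e / t) for e, t in zip(errors, y_true)) / n
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)  # total sum of squares
    r2 = 1.0 - (mse * n) / ss_tot                        # 1 - SS_res / SS_tot
    return {"R2": r2, "MSE": mse, "RMSE": math.sqrt(mse), "MAPE": mape, "MAE": mae}


# Hypothetical observations and predictions, for illustration only.
scores = regression_metrics([2.0, 4.0, 6.0, 8.0], [2.5, 3.5, 6.0, 8.0])
print(scores)
```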

2.5. Cross-Validation

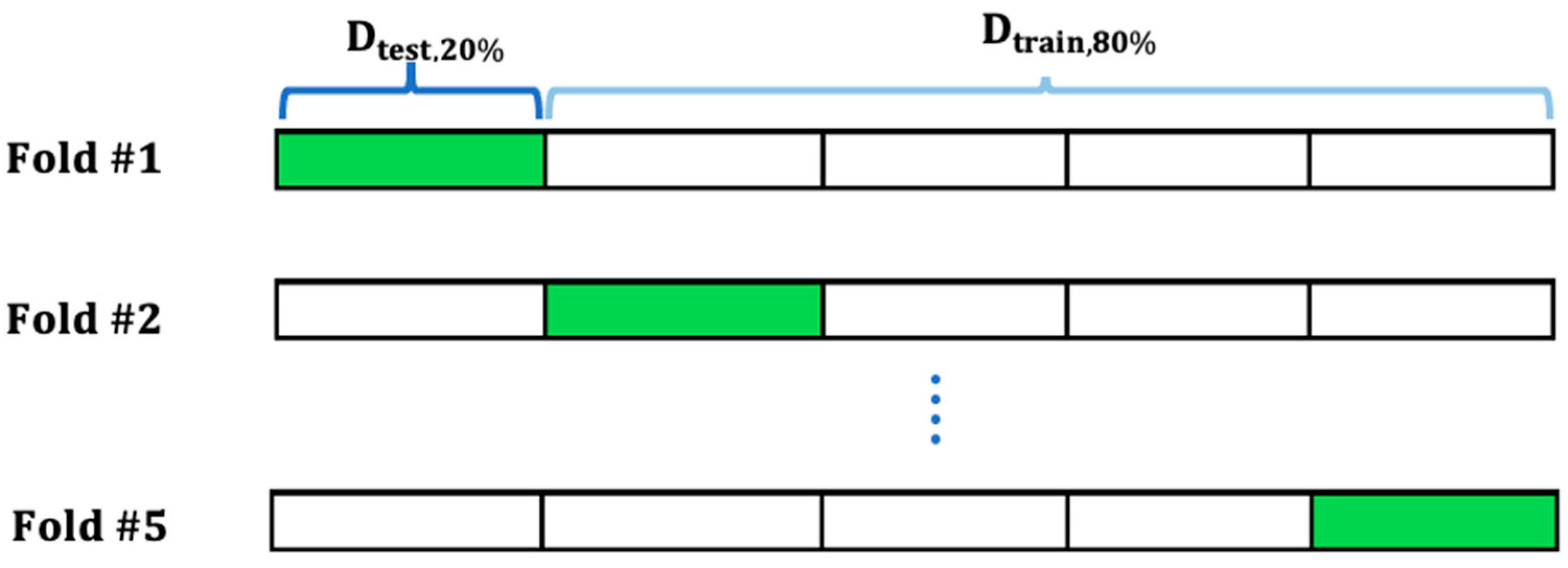

2.5.1. K-Fold CV Method
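As a rough sketch of the idea, K-fold cross-validation partitions the samples into k disjoint folds and uses each fold once as the test set while training on the rest. The generator below is a hypothetical, dependency-free illustration (contiguous folds, no shuffling), not the code used in the study.

```python
from typing import Iterator, List, Tuple


def k_fold_indices(n_samples: int, k: int) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train, test) index lists; every sample is tested exactly once."""
    indices = list(range(n_samples))
    # Spread any remainder over the first folds so sizes differ by at most 1.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, test
        start += size


for fold, (train, test) in enumerate(k_fold_indices(10, 5), start=1):
    print(fold, test)
```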

2.5.2. Shuffle Split CV Method
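Shuffle-split (Monte Carlo) cross-validation instead draws a fresh random train/test partition for every split, so test sets may overlap between splits and some samples may never be tested. The sketch below is a hypothetical illustration; the `test_fraction` and `seed` defaults are assumptions, not values taken from the article.

```python
import random
from typing import Iterator, List, Tuple


def shuffle_split_indices(
    n_samples: int,
    n_splits: int,
    test_fraction: float = 0.2,  # assumed hold-out share
    seed: int = 0,               # assumed seed, for reproducibility
) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train, test) index lists from independent random permutations."""
    rng = random.Random(seed)
    n_test = max(1, round(n_samples * test_fraction))
    for _ in range(n_splits):
        indices = list(range(n_samples))
        rng.shuffle(indices)                 # new permutation for each split
        yield indices[n_test:], indices[:n_test]


splits = list(shuffle_split_indices(10, 10))
print(len(splits), [len(test) for _, test in splits])
```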

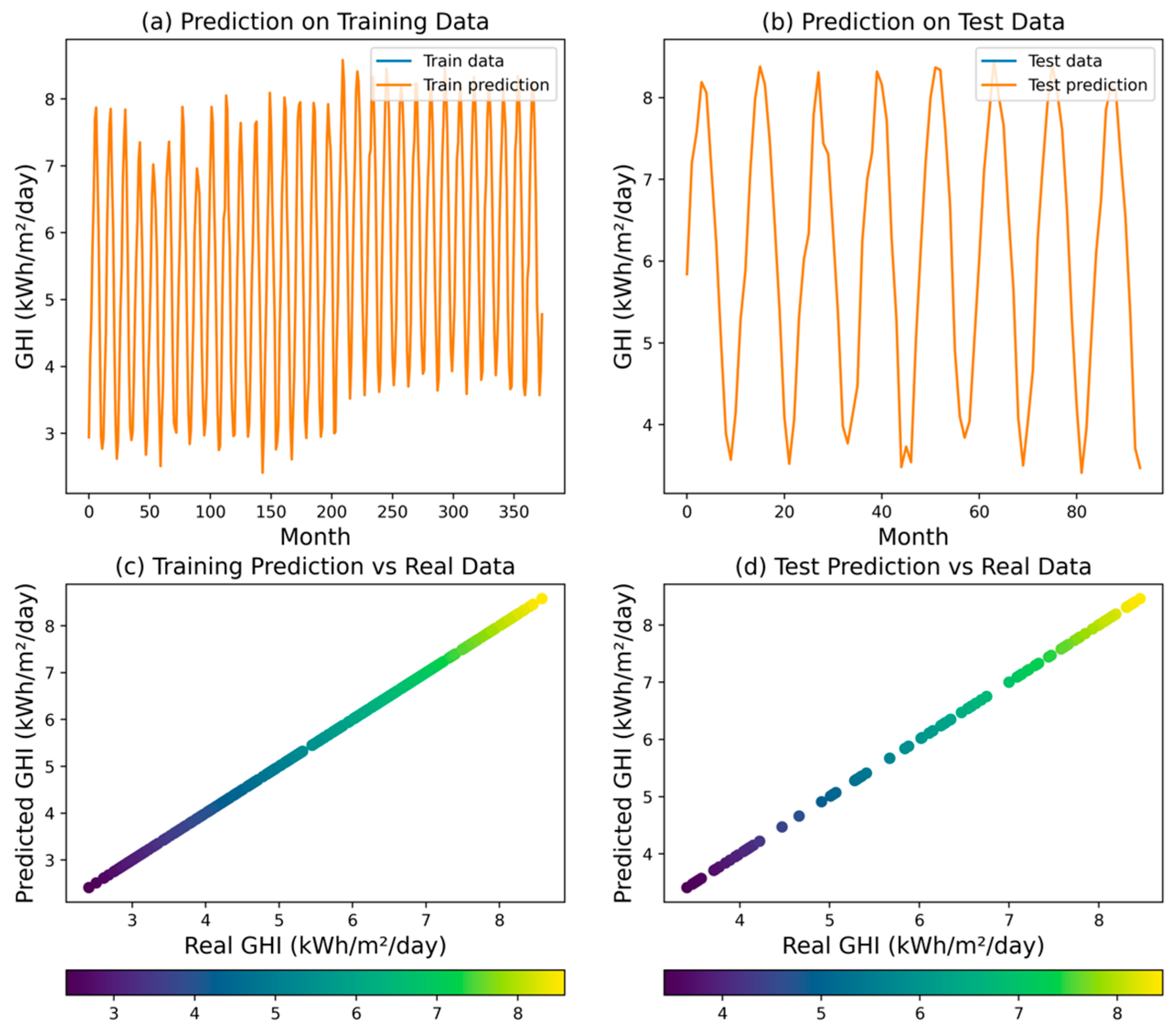

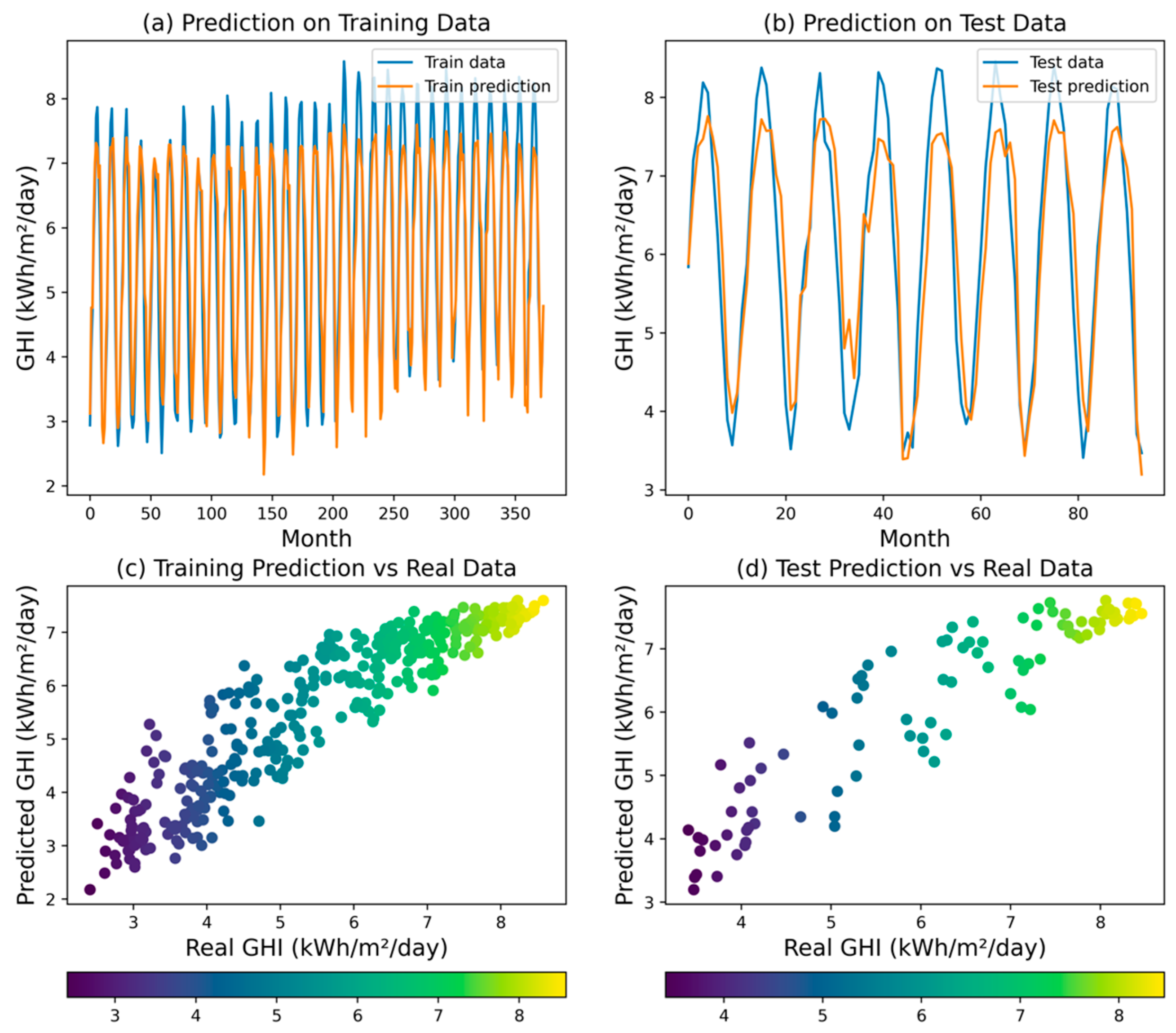

3. Results and Discussion

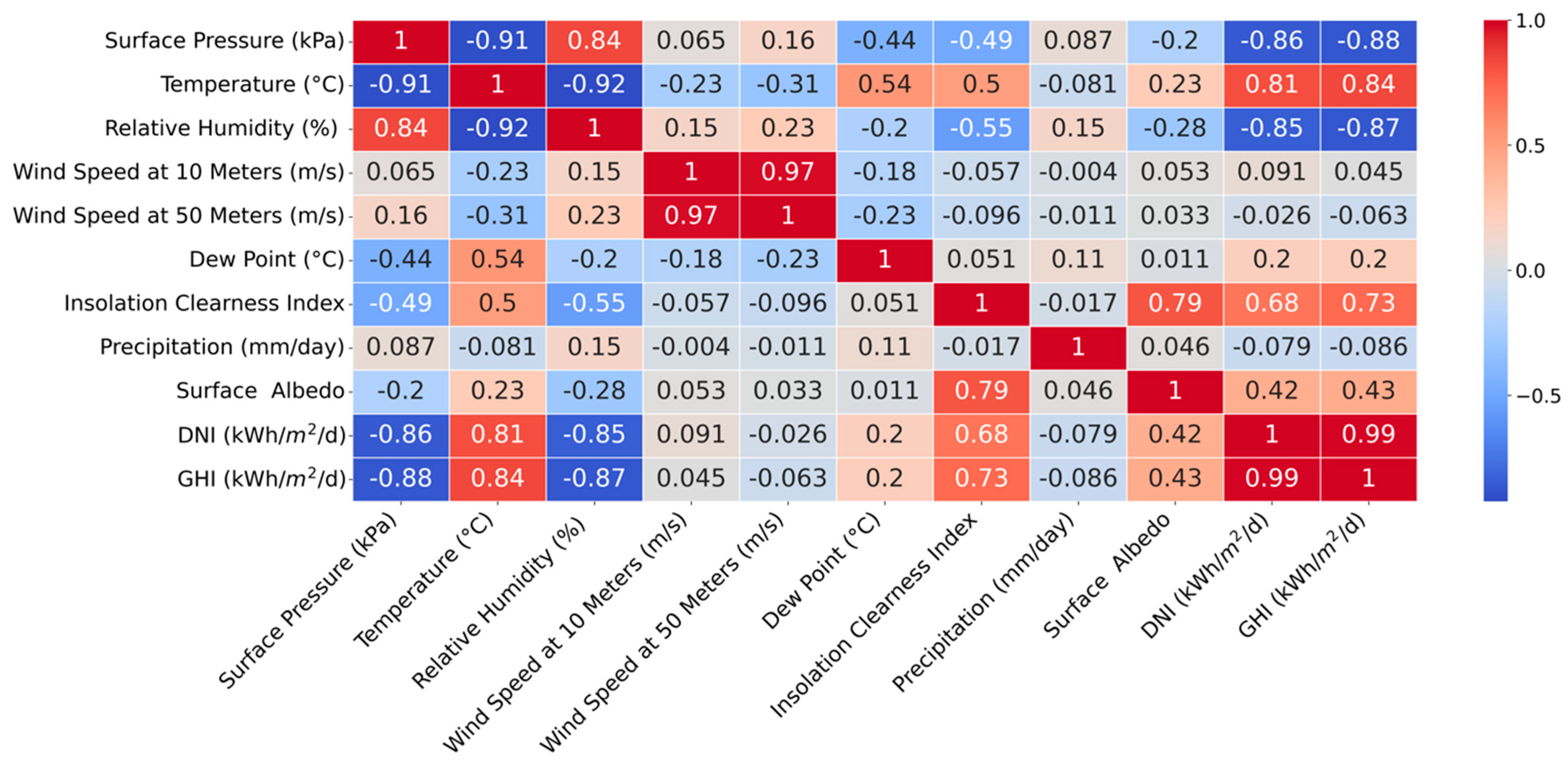

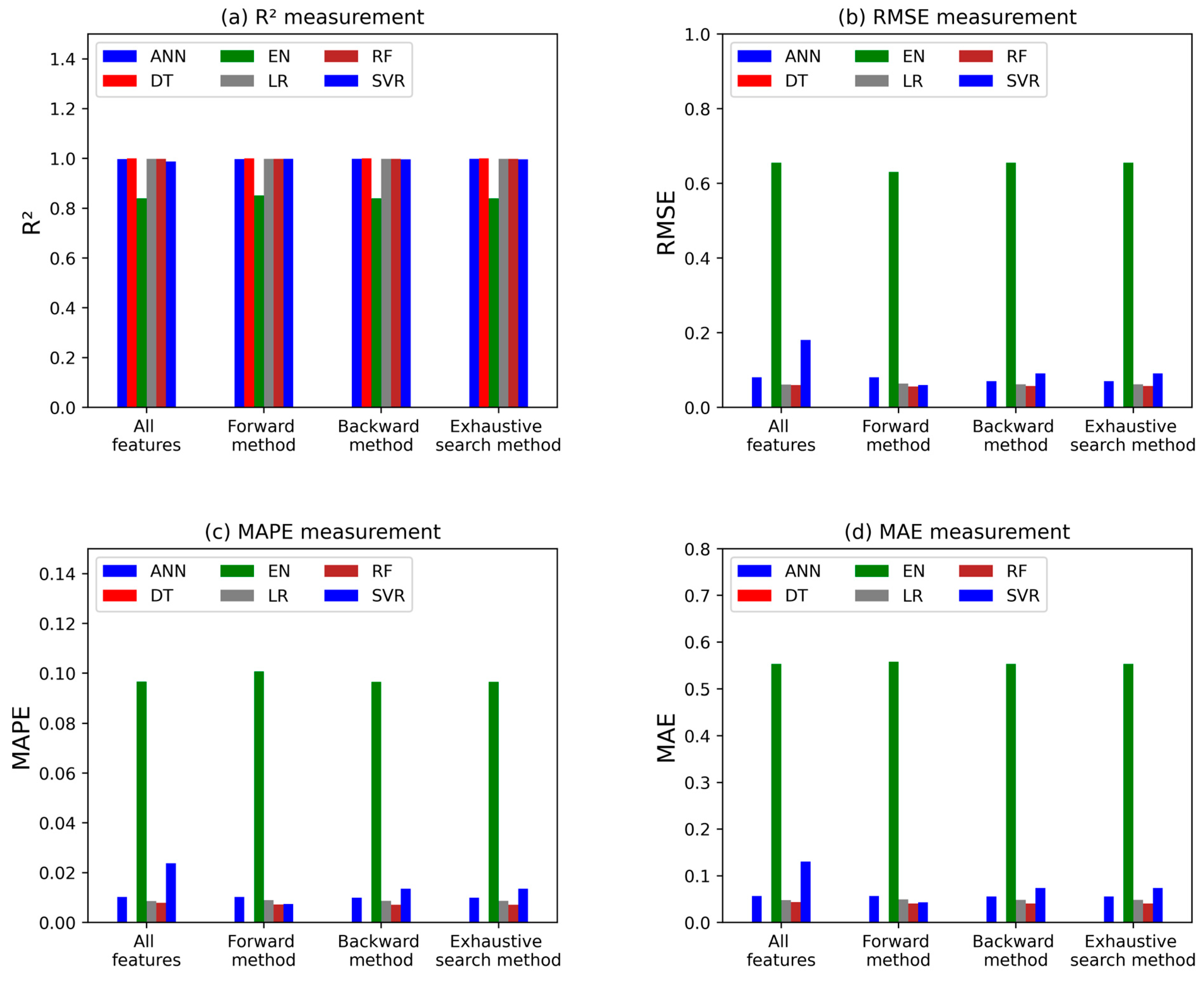

3.1. Feature Selection Analysis

3.2. Analysis of K-Fold and Shuffle Split Cross-Validation

- Artificial Neural Network: Although the ANN showed promising accuracy, its reliance on a large amount of training data can be a limitation, especially in regions with sparse historical data. Additionally, ANNs can be prone to overfitting if not carefully regularized, which may affect their generalization to unseen data.

- Decision Trees: DTs are intuitive and easy to interpret; however, they can be sensitive to small variations in the data. This sensitivity may lead to different models with slight data changes, which can hinder their reliability in dynamic climatic conditions.

- Elastic Net: While the EN effectively handles multicollinearity, its performance can be limited by the choice of hyperparameters. Finding the optimal balance between LASSO and Ridge penalties is crucial, and this tuning process can be computationally intensive.

- Linear Regression: LR assumes a linear relationship between predictors and the response variable, which may not capture the complexities of solar irradiance patterns. This simplification can lead to significant errors, particularly in non-linear scenarios.

- Random Forest: RF models, while robust and generally accurate, can suffer from interpretability issues. The ensemble nature of RF makes it difficult to understand the contribution of individual features, which is critical for stakeholders seeking actionable insights.

- Support Vector Regression: SVR is effective in high-dimensional spaces, but its performance can degrade with the presence of noise in the data. Additionally, selecting the appropriate kernel and tuning hyperparameters can be challenging and requires careful validation.
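For reference, the trade-off between the LASSO and Ridge penalties noted for the Elastic Net is commonly written as a single objective. The form below follows the scikit-learn parameterisation, which is an assumption here since the article's own formulation is not shown; $\alpha$ sets the overall penalty strength and $\rho \in [0, 1]$ (called `l1_ratio` in scikit-learn) sets the mix between the two penalties.

$$
\min_{w}\; \frac{1}{2n}\,\lVert y - Xw \rVert_2^2 \;+\; \alpha\,\rho\,\lVert w \rVert_1 \;+\; \frac{\alpha\,(1-\rho)}{2}\,\lVert w \rVert_2^2
$$

With $\rho = 1$ the penalty is pure LASSO and with $\rho = 0$ it is pure Ridge, so tuning amounts to a search over the pair $(\alpha, \rho)$, which is where the computational cost mentioned for the EN arises.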

4. Conclusions

- DT Model: Achieved perfect accuracy with an R² value of 1.0 and zero errors across all metrics, highlighting its capability to flawlessly capture the relationship between input variables and GHI;

- ANN, RF, and LR Models: Demonstrated high accuracy with R² values exceeding 0.99, indicating their strong potential for precise GHI forecasting;

- EN Model: While effective in capturing broad trends, it showed limitations in predicting individual data points accurately, reflected in a lower R² and higher error metrics compared to other models;

- SVR Model: Performed reasonably well but struggled with capturing finer details and subtle fluctuations, as indicated by a lower R² value and higher error metrics;

- Feature Selection: Backward selection and exhaustive search improved the SVR model’s performance, but for the majority of the models, using all features was more beneficial, indicating minimal gains from feature selection methods.

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Metric | ANN | DT | EN | LR | RF | SVR |
|---|---|---|---|---|---|---|
| R² | 0.9976 | 1 | 0.8396 | 0.9986 | 0.9987 | 0.9878 |
| Mean Squared Error (MSE) | 0.0065 | 0 | 0.4289 | 0.0037 | 0.0036 | 0.0325 |
| Root Mean Squared Error (RMSE) | 0.0803 | 0 | 0.6549 | 0.0610 | 0.0599 | 0.1803 |
| Mean Absolute Percentage Error (MAPE) | 0.0102 | 0 | 0.0966 | 0.0086 | 0.0079 | 0.0238 |
| Mean Absolute Error (MAE) | 0.0567 | 0 | 0.5534 | 0.0480 | 0.0438 | 0.1305 |

| Feature selection | Metric | ANN | DT | EN | LR | RF | SVR |
|---|---|---|---|---|---|---|---|
| All features | R² | 0.9976 | 1 | 0.8396 | 0.9986 | 0.9987 | 0.9878 |
| All features | MSE | 0.0065 | 0 | 0.4289 | 0.0037 | 0.0036 | 0.0325 |
| All features | RMSE | 0.0803 | 0 | 0.6549 | 0.0610 | 0.0599 | 0.1803 |
| All features | MAPE | 0.0102 | 0 | 0.0966 | 0.0086 | 0.0079 | 0.0238 |
| All features | MAE | 0.0567 | 0 | 0.5534 | 0.0480 | 0.0438 | 0.1305 |
| Forward | R² | 0.9976 | 1 | 0.8513 | 0.9985 | 0.9988 | 0.9987 |
| Forward | MSE | 0.0065 | 0 | 0.3977 | 0.0040 | 0.0031 | 0.0035 |
| Forward | RMSE | 0.0803 | 0 | 0.6306 | 0.0634 | 0.0556 | 0.0595 |
| Forward | MAPE | 0.0102 | 0 | 0.1007 | 0.0089 | 0.0072 | 0.0074 |
| Forward | MAE | 0.0567 | 0 | 0.5577 | 0.0491 | 0.0405 | 0.0433 |
| Backward | R² | 0.9982 | 1 | 0.8396 | 0.9986 | 0.9988 | 0.9969 |
| Backward | MSE | 0.0049 | 0 | 0.4289 | 0.0038 | 0.0032 | 0.0083 |
| Backward | RMSE | 0.0699 | 0 | 0.6549 | 0.0618 | 0.0569 | 0.0910 |
| Backward | MAPE | 0.0099 | 0 | 0.0966 | 0.0087 | 0.0071 | 0.0136 |
| Backward | MAE | 0.0554 | 0 | 0.5534 | 0.0482 | 0.0403 | 0.0737 |
| Exhaustive | R² | 0.9982 | 1 | 0.8396 | 0.9986 | 0.9988 | 0.9969 |
| Exhaustive | MSE | 0.0049 | 0 | 0.4289 | 0.0038 | 0.0032 | 0.0083 |
| Exhaustive | RMSE | 0.0699 | 0 | 0.6549 | 0.0618 | 0.0569 | 0.0910 |
| Exhaustive | MAPE | 0.0099 | 0 | 0.0966 | 0.0087 | 0.0071 | 0.0136 |
| Exhaustive | MAE | 0.0554 | 0 | 0.5534 | 0.0482 | 0.0403 | 0.0737 |

| Fold (ANN) | R² | MSE | RMSE | MAPE | MAE |
|---|---|---|---|---|---|
| 1 | 0.9981 | 0.0052 | 0.0721 | 0.0099 | 0.0529 |
| 2 | 0.9893 | 0.0312 | 0.1766 | 0.0255 | 0.1263 |
| 3 | 0.9975 | 0.0066 | 0.0815 | 0.0095 | 0.0501 |
| 4 | 0.9927 | 0.0212 | 0.1456 | 0.0216 | 0.1101 |
| 5 | 0.9928 | 0.0211 | 0.1454 | 0.0210 | 0.1090 |
| 6 | 0.9977 | 0.0060 | 0.0777 | 0.0100 | 0.0560 |
| 7 | 0.9934 | 0.0194 | 0.1391 | 0.0207 | 0.1055 |
| 8 | 0.9978 | 0.0060 | 0.0772 | 0.0096 | 0.0529 |
| 9 | 0.9926 | 0.0216 | 0.1471 | 0.0219 | 0.1110 |
| 10 | 0.9979 | 0.0056 | 0.0748 | 0.0097 | 0.0559 |
| Average | 0.9950 | 0.0144 | 0.1137 | 0.0159 | 0.0830 |

| Fold (DT) | R² | MSE | RMSE | MAPE | MAE |
|---|---|---|---|---|---|
| 1 | 0.9981 | 0.0048 | 0.0690 | 0.0078 | 0.0460 |
| 2 | 0.9970 | 0.0054 | 0.0738 | 0.0097 | 0.0608 |
| 3 | 0.9973 | 0.0072 | 0.0849 | 0.0115 | 0.0616 |
| 4 | 0.9986 | 0.0032 | 0.0567 | 0.0100 | 0.0517 |
| 5 | 0.9975 | 0.0069 | 0.0828 | 0.0135 | 0.0697 |
| 6 | 0.9992 | 0.0029 | 0.0541 | 0.0088 | 0.0510 |
| 7 | 0.9992 | 0.0016 | 0.0399 | 0.0053 | 0.0338 |
| 8 | 0.9975 | 0.0071 | 0.0843 | 0.0133 | 0.0689 |
| 9 | 0.9983 | 0.0041 | 0.0638 | 0.0123 | 0.0596 |
| 10 | 0.9960 | 0.0114 | 0.1069 | 0.0164 | 0.0858 |
| Average | 0.9978 | 0.0055 | 0.0716 | 0.0109 | 0.0589 |

| Fold (EN) | R² | MSE | RMSE | MAPE | MAE |
|---|---|---|---|---|---|
| 1 | 0.8655 | 0.3355 | 0.5793 | 0.0890 | 0.5001 |
| 2 | 0.8071 | 0.3514 | 0.5928 | 0.0734 | 0.4799 |
| 3 | 0.8779 | 0.3234 | 0.5687 | 0.0862 | 0.4384 |
| 4 | 0.6138 | 0.8785 | 0.9373 | 0.1708 | 0.8662 |
| 5 | 0.8177 | 0.4943 | 0.7030 | 0.1146 | 0.6365 |
| 6 | 0.7762 | 0.6543 | 0.8089 | 0.1193 | 0.7343 |
| 7 | 0.7255 | 0.5294 | 0.7276 | 0.1016 | 0.6800 |
| 8 | 0.8777 | 0.3523 | 0.5936 | 0.0774 | 0.4734 |
| 9 | 0.8372 | 0.3845 | 0.6201 | 0.1033 | 0.4986 |
| 10 | 0.8903 | 0.3153 | 0.5615 | 0.0739 | 0.4456 |
| Average | 0.8089 | 0.4619 | 0.6693 | 0.1010 | 0.5753 |

| Fold (LR) | R² | MSE | RMSE | MAPE | MAE |
|---|---|---|---|---|---|
| 1 | 0.9875 | 0.0312 | 0.1767 | 0.0251 | 0.1470 |
| 2 | 0.9918 | 0.0150 | 0.1226 | 0.0177 | 0.1080 |
| 3 | 0.9893 | 0.0284 | 0.1686 | 0.0193 | 0.1230 |
| 4 | 0.9797 | 0.0461 | 0.2148 | 0.0254 | 0.1330 |
| 5 | 0.9652 | 0.0943 | 0.3070 | 0.0505 | 0.2322 |
| 6 | 0.9783 | 0.0634 | 0.2518 | 0.0154 | 0.1678 |
| 7 | 0.9886 | 0.0221 | 0.1485 | 0.0154 | 0.1067 |
| 8 | 0.9725 | 0.0793 | 0.2815 | 0.0451 | 0.2289 |
| 9 | 0.9961 | 0.0092 | 0.0959 | 0.0172 | 0.0889 |
| 10 | 0.9794 | 0.0592 | 0.2432 | 0.0277 | 0.1689 |
| Average | 0.9828 | 0.0448 | 0.2011 | 0.0276 | 0.1504 |

| Fold (RF) | R² | MSE | RMSE | MAPE | MAE |
|---|---|---|---|---|---|
| 1 | 0.9920 | 0.0200 | 0.1414 | 0.0192 | 0.1181 |
| 2 | 0.9893 | 0.0195 | 0.1398 | 0.0190 | 0.1112 |
| 3 | 0.9887 | 0.0298 | 0.1726 | 0.0179 | 0.1079 |
| 4 | 0.9757 | 0.0552 | 0.2349 | 0.0312 | 0.1608 |
| 5 | 0.9829 | 0.0465 | 0.2155 | 0.0371 | 0.1546 |
| 6 | 0.9948 | 0.0153 | 0.1238 | 0.0164 | 0.1018 |
| 7 | 0.9947 | 0.0103 | 0.1013 | 0.0111 | 0.0777 |
| 8 | 0.9881 | 0.0342 | 0.1850 | 0.0297 | 0.1553 |
| 9 | 0.9948 | 0.0123 | 0.1108 | 0.0190 | 0.0901 |
| 10 | 0.9949 | 0.0148 | 0.1216 | 0.0122 | 0.0848 |
| Average | 0.9949 | 0.0258 | 0.1547 | 0.0213 | 0.1162 |

| Fold (SVR) | R² | MSE | RMSE | MAPE | MAE |
|---|---|---|---|---|---|
| 1 | 0.9808 | 0.0480 | 0.2192 | 0.0269 | 0.1662 |
| 2 | 0.9757 | 0.0443 | 0.2106 | 0.0264 | 0.1640 |
| 3 | 0.9932 | 0.0179 | 0.1339 | 0.0194 | 0.1169 |
| 4 | 0.9665 | 0.0762 | 0.2761 | 0.0437 | 0.2115 |
| 5 | 0.9535 | 0.1260 | 0.3550 | 0.0583 | 0.2353 |
| 6 | 0.9803 | 0.0575 | 0.2397 | 0.0272 | 0.1721 |
| 7 | 0.9917 | 0.0160 | 0.1266 | 0.0162 | 0.1053 |
| 8 | 0.9954 | 0.0132 | 0.1148 | 0.0170 | 0.0975 |
| 9 | 0.9150 | 0.2008 | 0.4481 | 0.0612 | 0.2684 |
| 10 | 0.6314 | 1.0598 | 1.0295 | 0.1608 | 0.7485 |
| Average | 0.9384 | 0.1660 | 0.3153 | 0.0457 | 0.2286 |

| Split (ANN) | R² | MSE | RMSE | MAPE | MAE |
|---|---|---|---|---|---|
| 1 | 0.9934 | 0.0192 | 0.1386 | 0.0197 | 0.1025 |
| 2 | 0.9969 | 0.0082 | 0.0903 | 0.0121 | 0.0638 |
| 3 | 0.9929 | 0.0208 | 0.1444 | 0.0214 | 0.1090 |
| 4 | 0.9961 | 0.0103 | 0.1016 | 0.0120 | 0.0639 |
| 5 | 0.9983 | 0.0046 | 0.0679 | 0.0079 | 0.0456 |
| 6 | 0.9866 | 0.0392 | 0.1979 | 0.0281 | 0.1420 |
| 7 | 0.9983 | 0.0045 | 0.0670 | 0.0077 | 0.0431 |
| 8 | 0.9949 | 0.0149 | 0.1219 | 0.0180 | 0.0933 |
| 9 | 0.9977 | 0.0061 | 0.0782 | 0.0096 | 0.0527 |
| 10 | 0.9877 | 0.0359 | 0.1894 | 0.0265 | 0.1381 |
| Average | 0.9943 | 0.0164 | 0.1197 | 0.0163 | 0.0854 |

| Split (DT) | R² | MSE | RMSE | MAPE | MAE |
|---|---|---|---|---|---|
| 1 | 0.9981 | 0.0059 | 0.0768 | 0.0154 | 0.0590 |
| 2 | 0.9981 | 0.0046 | 0.0677 | 0.0100 | 0.0629 |
| 3 | 0.9987 | 0.0070 | 0.0838 | 0.0110 | 0.0652 |
| 4 | 0.9982 | 0.0052 | 0.0719 | 0.0117 | 0.0633 |
| 5 | 0.9983 | 0.0066 | 0.0812 | 0.0088 | 0.0617 |
| 6 | 0.9979 | 0.0059 | 0.0770 | 0.0117 | 0.0484 |
| 7 | 0.9984 | 0.0059 | 0.0770 | 0.0094 | 0.0484 |
| 8 | 0.9983 | 0.0052 | 0.0719 | 0.0129 | 0.0497 |
| 9 | 0.9982 | 0.0070 | 0.0834 | 0.0132 | 0.0701 |
| 10 | 0.9974 | 0.0096 | 0.0981 | 0.0080 | 0.0713 |
| Average | 0.9980 | 0.0062 | 0.0789 | 0.0112 | 0.0598 |

| Split (EN) | R² | MSE | RMSE | MAPE | MAE |
|---|---|---|---|---|---|
| 1 | 0.8680 | 0.3541 | 0.5950 | 0.1240 | 0.5710 |
| 2 | 0.8617 | 0.3773 | 0.6142 | 0.1002 | 0.7535 |
| 3 | 0.7740 | 0.2436 | 0.4936 | 0.0955 | 0.6943 |
| 4 | 0.8391 | 0.3887 | 0.6235 | 0.0960 | 0.6523 |
| 5 | 0.8759 | 0.5224 | 0.7228 | 0.0957 | 0.4565 |
| 6 | 0.8707 | 0.1463 | 0.3825 | 0.0792 | 0.5736 |
| 7 | 0.9305 | 0.3961 | 0.6294 | 0.1559 | 0.6751 |
| 8 | 0.8661 | 0.6477 | 0.8048 | 0.0893 | 0.6860 |
| 9 | 0.8909 | 0.6786 | 0.8238 | 0.1030 | 0.6000 |
| 10 | 0.9026 | 0.6799 | 0.8246 | 0.1225 | 0.5764 |
| Average | 0.8679 | 0.4435 | 0.6514 | 0.1061 | 0.6238 |

| Split (LR) | R² | MSE | RMSE | MAPE | MAE |
|---|---|---|---|---|---|
| 1 | 0.9913 | 0.0436 | 0.2087 | 0.0303 | 0.1632 |
| 2 | 0.9828 | 0.0628 | 0.2505 | 0.0377 | 0.2221 |
| 3 | 0.9595 | 0.0420 | 0.2049 | 0.0225 | 0.1105 |
| 4 | 0.9657 | 0.0730 | 0.2702 | 0.0231 | 0.1405 |
| 5 | 0.9632 | 0.0712 | 0.2669 | 0.0244 | 0.1732 |
| 6 | 0.9656 | 0.0552 | 0.2349 | 0.0302 | 0.1742 |
| 7 | 0.9715 | 0.0362 | 0.1904 | 0.0302 | 0.1942 |
| 8 | 0.9620 | 0.0685 | 0.2618 | 0.0315 | 0.1411 |
| 9 | 0.9775 | 0.0154 | 0.1242 | 0.0250 | 0.1695 |
| 10 | 0.9836 | 0.0444 | 0.2107 | 0.0311 | 0.1605 |
| Average | 0.9723 | 0.0512 | 0.2449 | 0.0292 | 0.1649 |

| Split (RF) | R² | MSE | RMSE | MAPE | MAE |
|---|---|---|---|---|---|
| 1 | 0.9831 | 0.0062 | 0.0785 | 0.0234 | 0.1773 |
| 2 | 0.9848 | 0.0237 | 0.1539 | 0.0197 | 0.1133 |
| 3 | 0.9833 | 0.0289 | 0.1700 | 0.0182 | 0.1179 |
| 4 | 0.9861 | 0.0327 | 0.1808 | 0.0334 | 0.1068 |
| 5 | 0.9861 | 0.0151 | 0.1227 | 0.0265 | 0.1582 |
| 6 | 0.9938 | 0.0322 | 0.1795 | 0.0241 | 0.1138 |
| 7 | 0.9900 | 0.0139 | 0.1181 | 0.0222 | 0.1434 |
| 8 | 0.9891 | 0.0250 | 0.1582 | 0.0359 | 0.1296 |
| 9 | 0.9880 | 0.0256 | 0.1601 | 0.0361 | 0.0716 |
| 10 | 0.9880 | 0.0164 | 0.1282 | 0.0223 | 0.0988 |
| Average | 0.9880 | 0.0220 | 0.1450 | 0.0262 | 0.1231 |

| Split (SVR) | R² | MSE | RMSE | MAPE | MAE |
|---|---|---|---|---|---|
| 1 | 0.9056 | 0.2011 | 0.4484 | 0.0838 | 0.2483 |
| 2 | 0.9499 | 0.0837 | 0.2893 | 0.0370 | 0.2509 |
| 3 | 0.9718 | 0.2508 | 0.5008 | 0.0310 | 0.2738 |
| 4 | 0.9084 | 0.0801 | 0.2831 | 0.0512 | 0.1557 |
| 5 | 0.9504 | 0.2467 | 0.4967 | 0.0433 | 0.4121 |
| 6 | 0.9412 | 0.3042 | 0.5515 | 0.0626 | 0.3009 |
| 7 | 0.9501 | 0.2116 | 0.4600 | 0.0504 | 0.1633 |
| 8 | 0.9228 | 0.2087 | 0.4569 | 0.0643 | 0.2541 |
| 9 | 0.9776 | 0.1299 | 0.3605 | 0.0236 | 0.1592 |
| 10 | 0.9867 | 0.0610 | 0.2471 | 0.0411 | 0.3380 |
| Average | 0.9464 | 0.1778 | 0.4094 | 0.0488 | 0.2556 |


© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Imam, A.A.; Abusorrah, A.; Seedahmed, M.M.A.; Marzband, M. Accurate Forecasting of Global Horizontal Irradiance in Saudi Arabia: A Comparative Study of Machine Learning Predictive Models and Feature Selection Techniques. Mathematics 2024, 12, 2600. https://doi.org/10.3390/math12162600
