A Novel Deep Reinforcement Learning Based Framework for Gait Adjustment

Abstract

1. Introduction

2. Novelty and Contribution of the Study

3. Related Works

3.1. Adaptive Control Techniques

3.2. DRL-Based Control Methods

4. Preliminaries

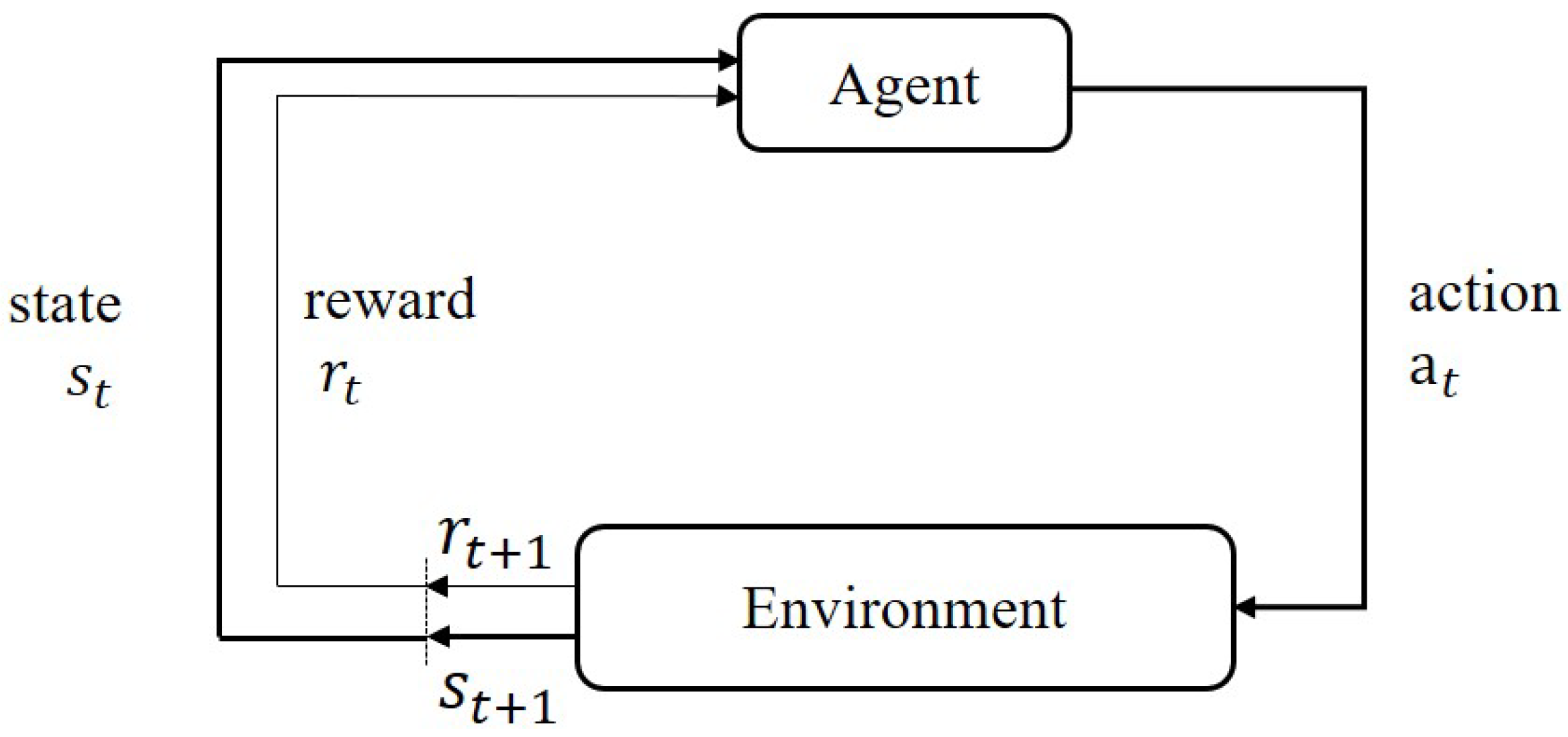

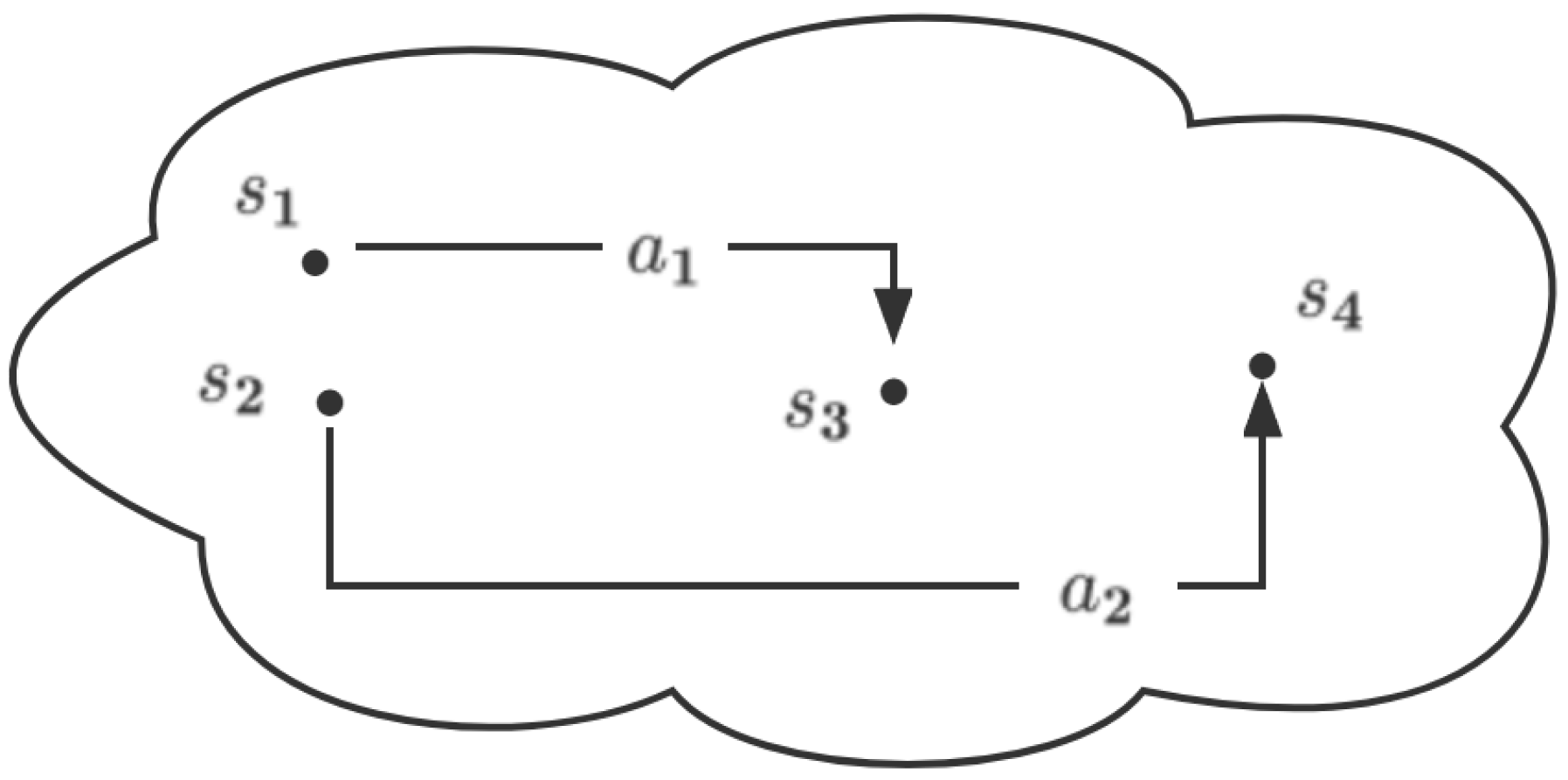

4.1. Reinforcement Learning

4.2. Softmax Deep Double Deterministic Policy Gradients

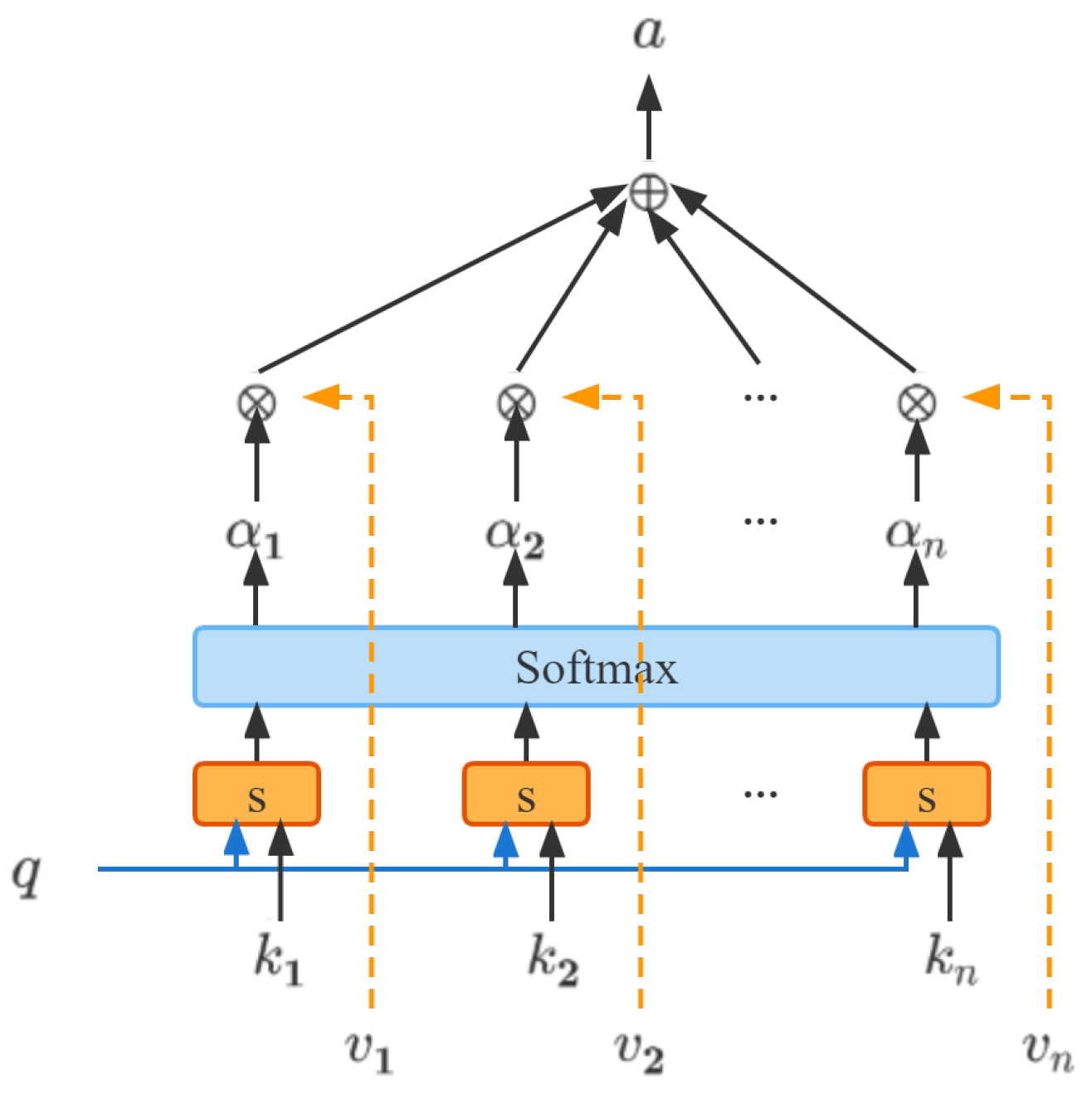

4.3. Key-Value Attention Mechanism

4.4. Parameter Space Noise for Exploration

5. Problem Modeling

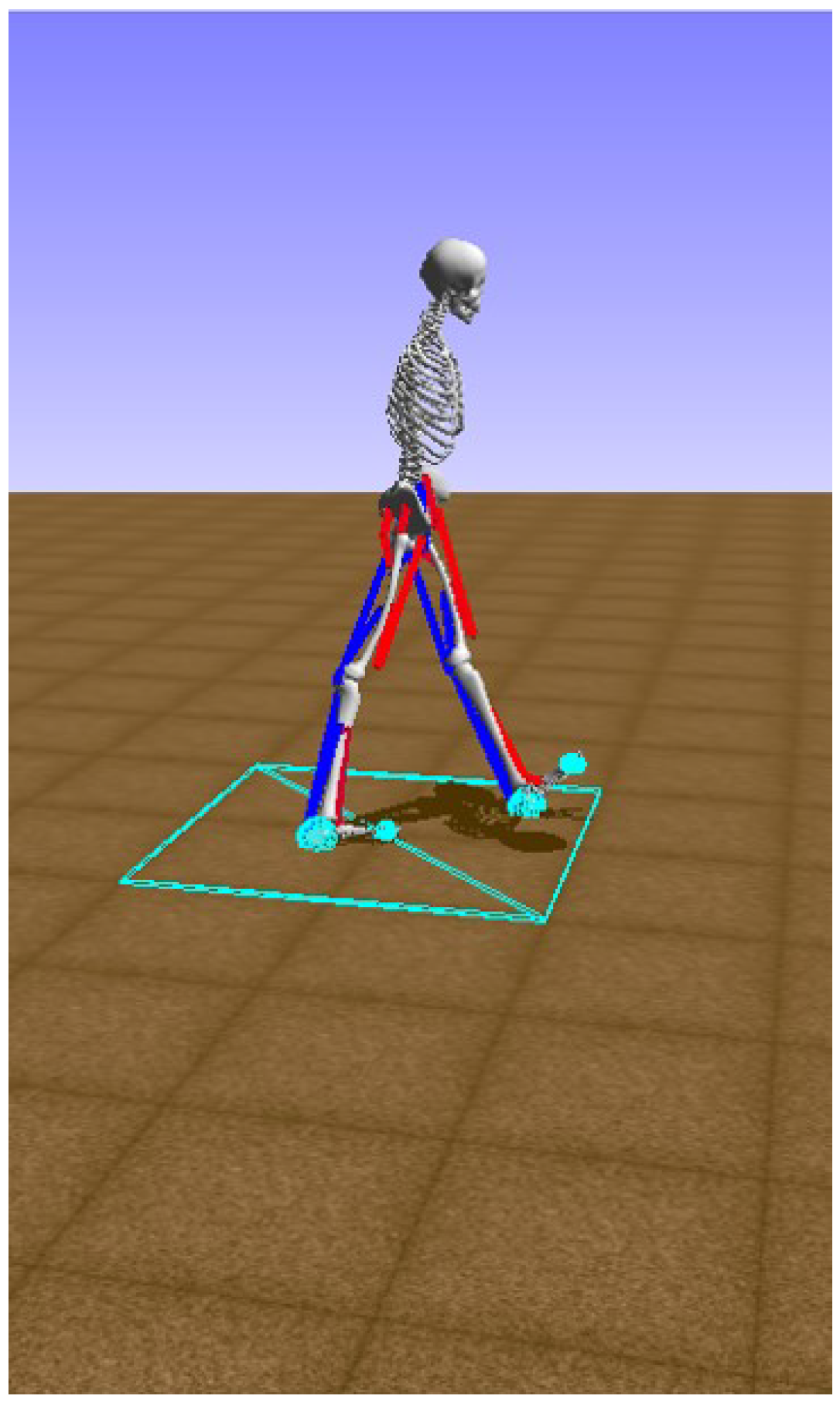

5.1. The Lower Limb Musculoskeletal Model

5.2. MDP Modeling

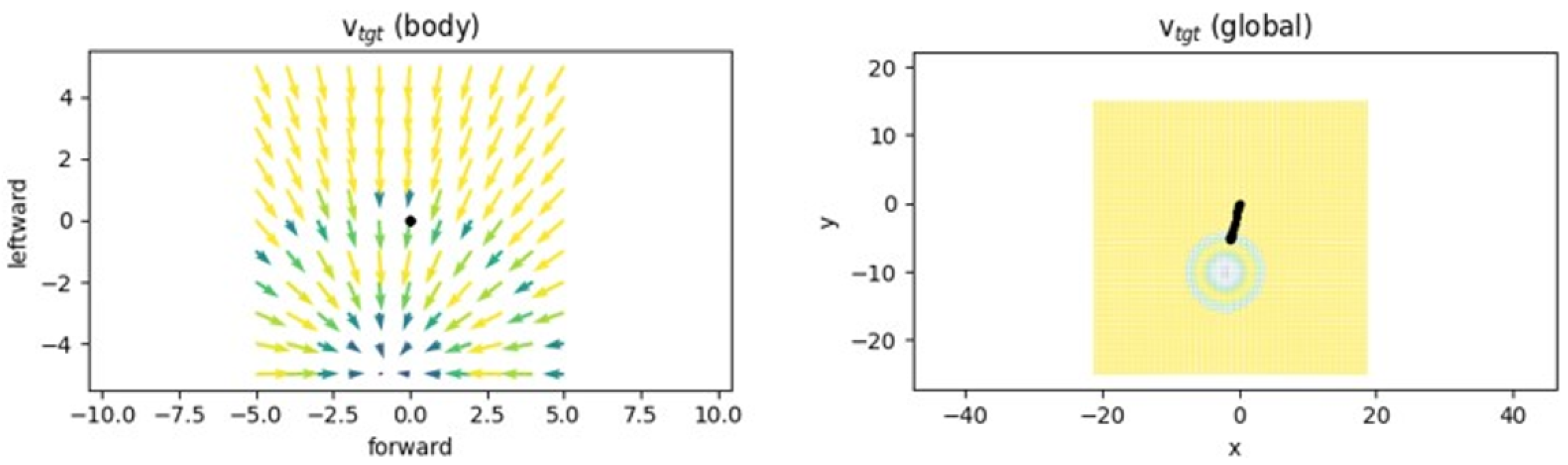

5.2.1. State Space

5.2.2. Action Space

5.2.3. Reward Function

6. Methodology

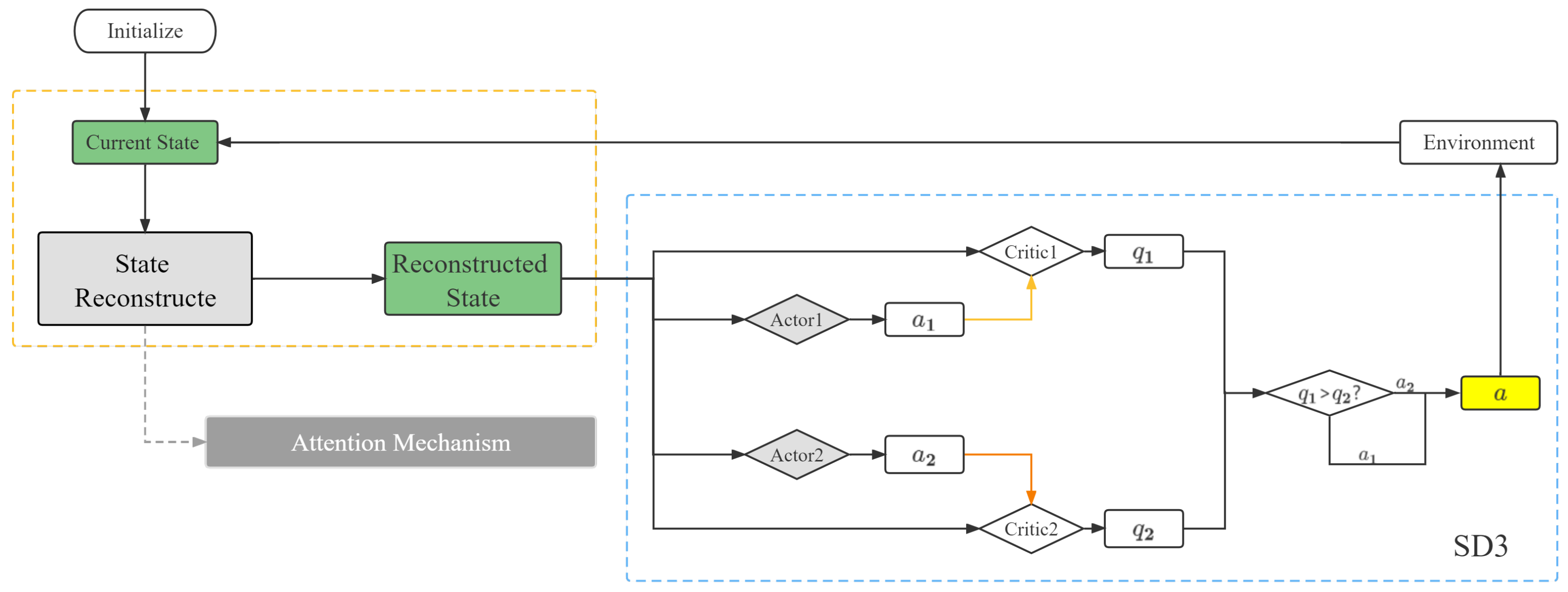

6.1. Overall Framework

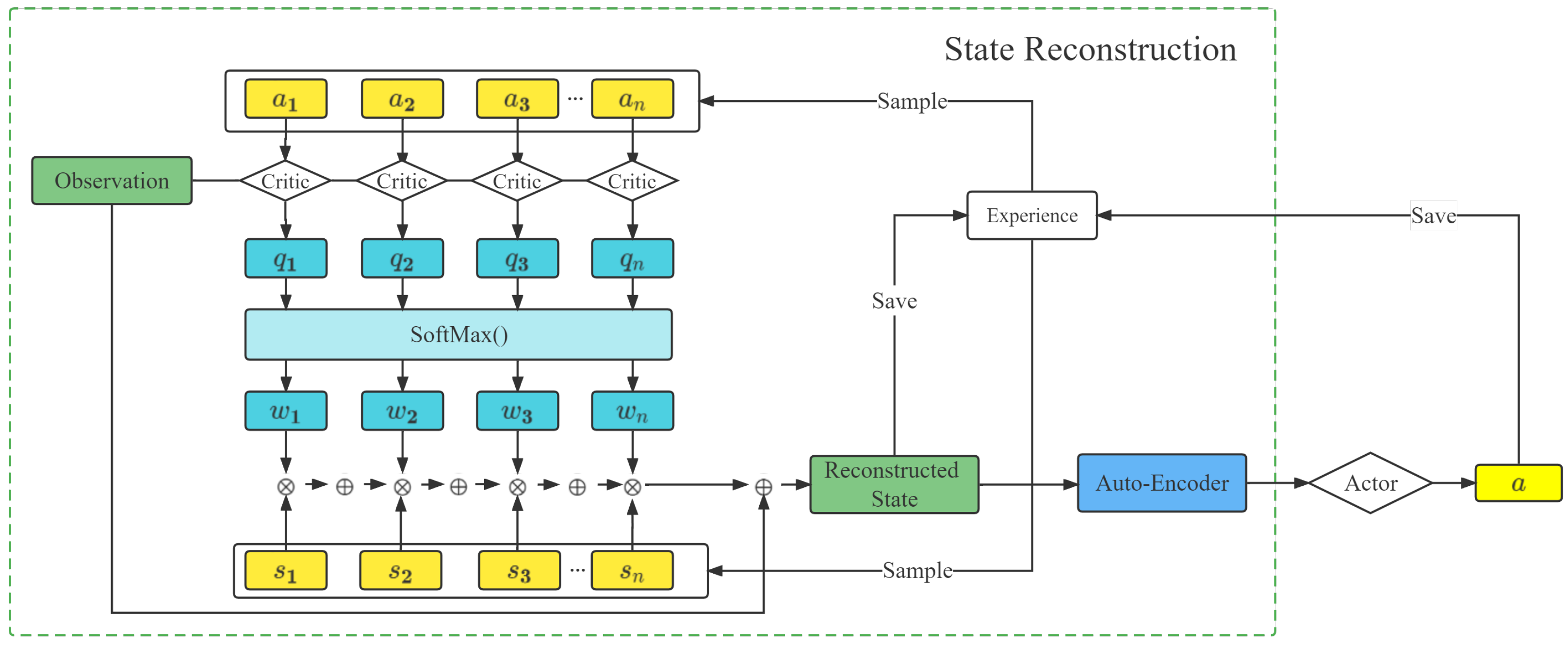

6.2. Key-Value Attention-Based State Reconstruction

6.3. AT_SD3 for Gait Adjustment

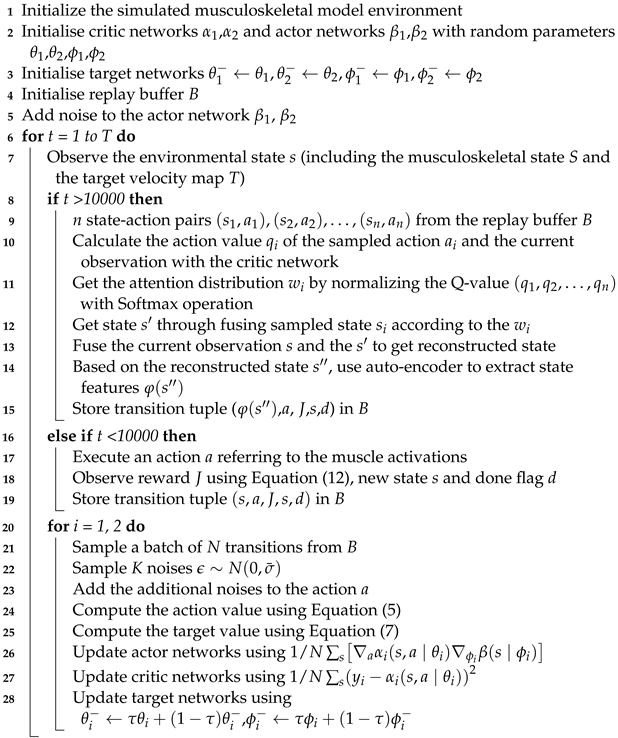

Algorithm 1: AT_SD3 for the gait adjustment.

7. Experiment Analysis

7.1. Experiment Preparation

7.1.1. Dataset

7.1.2. Evaluation Metrics

7.1.3. Parameter Settings

7.2. Results and Analysis

7.2.1. Algorithm Performance

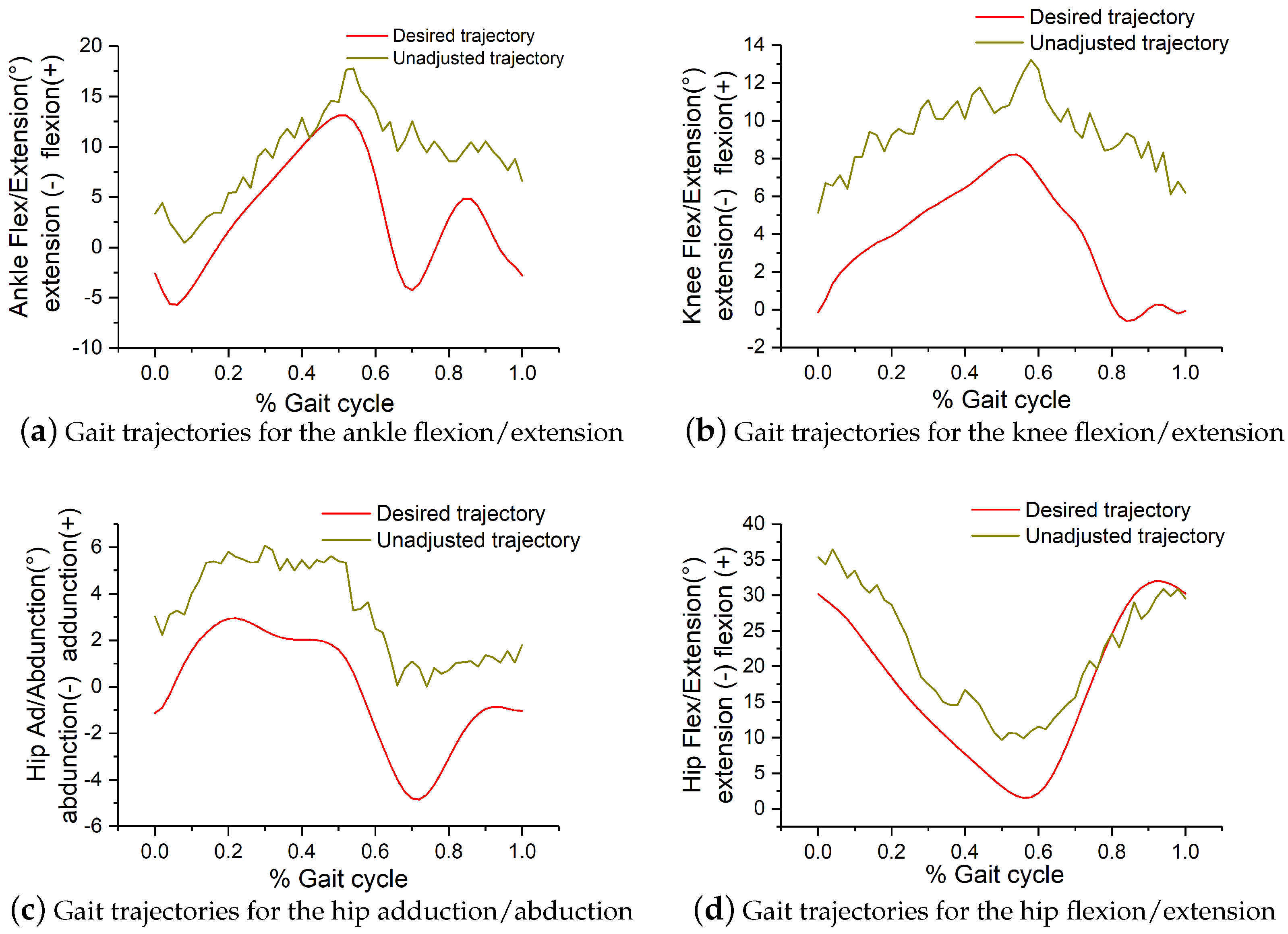

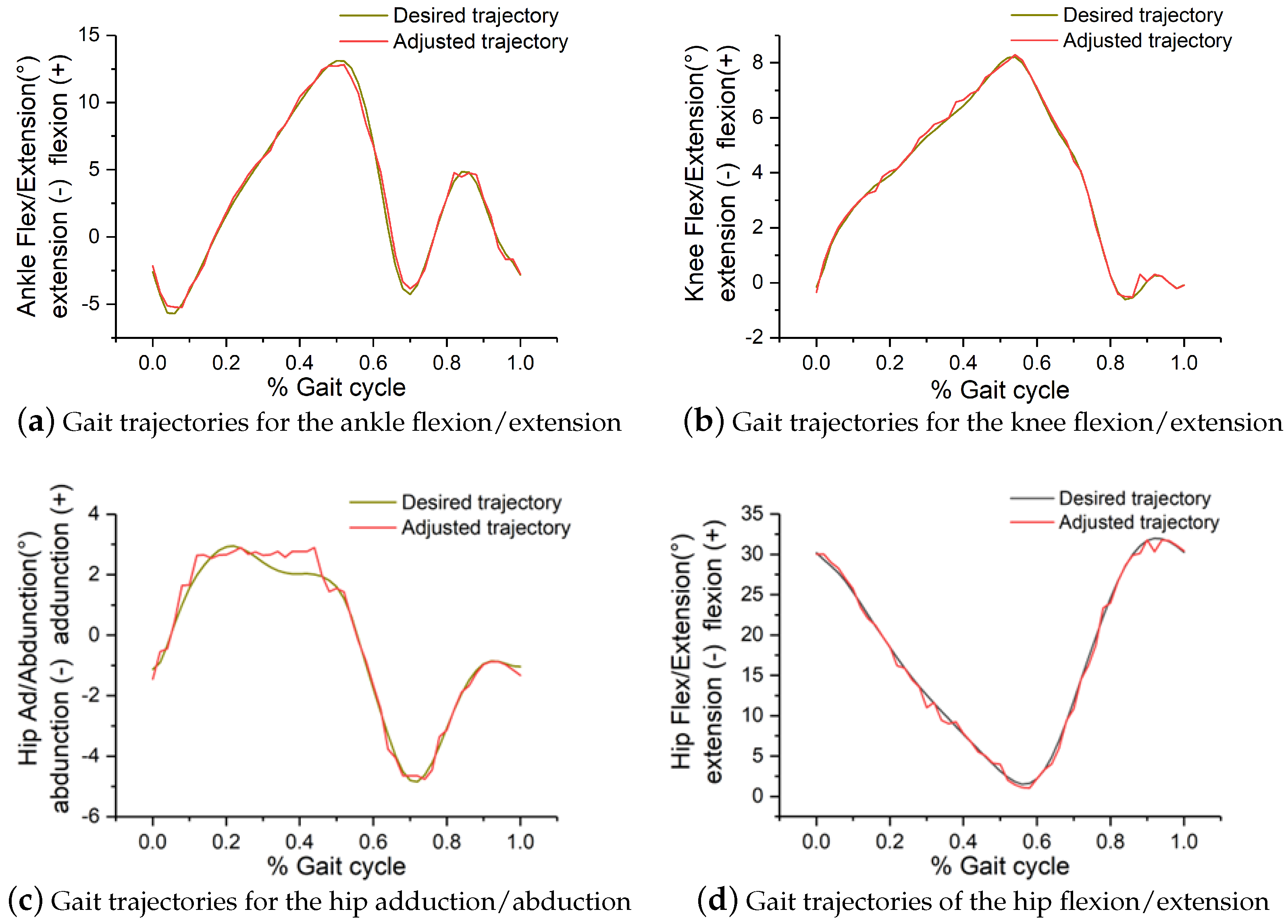

7.2.2. Gait Adjustment

8. Conclusions and Future Work

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| RL | Reinforcement Learning |
| DRL | Deep Reinforcement Learning |
| MDP | Markov Decision Process |
| SD3 | Softmax Deep Double Deterministic Policy Gradients |
| DDPG | Deep Deterministic Policy Gradient |
| TD3 | Twin Delayed Deep Deterministic Policy Gradient |
| PPO | Proximal Policy Optimization |
| SD | Standard Deviation |
| MAE | Mean Absolute Error |
| RMSE | Root Mean Square Error |


| Symbols | Description |
|---|---|
| Body state S | pelvis state |
| | ground reaction forces |
| | joint angles |
| | muscle states |
| Target velocity map T | target velocity (global) |
| | target velocity (body) |

| Method | Parameter | Value |
|---|---|---|
| Shared hyperparameters | Batch size | 256 |
| | Critic network | 256→128→64→1 |
| | Actor network | 256→128→64→22 |
| | Optimizer | Adam |
| | Replay buffer size | 5 |
| | Discount factor | 0.99 |
| | Target update rate | 0.01 |
| SD3 | Learning rate | 0.0001 |
| | TAU | 0.005 |
| | Policy noise | 0.2 |
| | Sample size | 50 |
| | Noise clip | 0.5 |
| | Beta | 0.05 |
| | Importance sampling | 0 |
| PPO | Learning rate | 0.0001 |
| | Iteration | 8 |
| AT_SD3 | Learning rate | 0.0001 |
| | Encoder | 256→128→64→32→16→3 |
| | Decoder | 3→16→32→64→100 |
| | Initial standard deviation (SD) | 1.55 |
| | Desired action SD | 0.001 |
| | Adaptation coefficient | 1.05 |
| DDPG | Learning rate | 0.01 |
| TD3 | Learning rate | 0.0001 |
| | TAU | 0.005 |
| SD3_AE | Encoder | 256→128→64→32→16→3 |
| | Decoder | 3→16→32→64→100 |
| | Learning rate | 0.0001 |
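The AT_SD3 settings above include an initial standard deviation (1.55), a desired action SD (0.001), and an adaptation coefficient (1.05). These match the quantities used by the adaptive scaling rule commonly described for parameter space noise (Section 4.4). The following is a minimal sketch of that rule under stated assumptions, not the authors' implementation: the function names `action_distance` and `adapt_param_noise_std`, the root-mean-square distance measure, and the example action values are all hypothetical.

```python
import math


def action_distance(actions, perturbed_actions):
    """Root-mean-square difference between two equally sized batches of actions.

    The distance measure is an assumption made for this sketch.
    """
    total, count = 0.0, 0
    for a, b in zip(actions, perturbed_actions):
        for x, y in zip(a, b):
            total += (x - y) ** 2
            count += 1
    return math.sqrt(total / count)


def adapt_param_noise_std(current_std, distance,
                          desired_action_std=0.001, coefficient=1.05):
    """Adapt the parameter-noise scale from the observed action distance.

    If the perturbed policy acts too similarly to the unperturbed one,
    the noise scale grows; otherwise it shrinks.
    """
    if distance <= desired_action_std:
        return current_std * coefficient
    return current_std / coefficient


# Hypothetical example: two 2-D actions from the unperturbed and perturbed actors.
clean = [[0.10, 0.20], [0.30, 0.40]]
noisy = [[0.15, 0.20], [0.30, 0.35]]

dist = action_distance(clean, noisy)      # about 0.035, above the 0.001 target
std = adapt_param_noise_std(1.55, dist)   # so the scale shrinks: 1.55 / 1.05
```

With a desired action SD as small as 0.001, almost any visible perturbation exceeds the target, so under this rule the noise scale would shrink by a factor of 1.05 per adaptation step until the perturbed and unperturbed actions nearly coincide.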

| Metrics | Hip Ad/Abduction | Hip Flex/Extension | Knee | Ankle |
|---|---|---|---|---|
| RMSE | 1.66 | 2.64 | 1.58 | 2.13 |
| MAE | 1.22 | 2.19 | 1.32 | 1.74 |

| Metrics | Hip Ad/Abduction | Hip Flex/Extension | Knee | Ankle |
|---|---|---|---|---|
| RMSE | 0.32 | 0.18 | 0.14 | 0.28 |
| MAE | 0.23 | 0.1 | 0.1 | 0.22 |


© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Li, A.; Chen, J.; Fu, Q.; Wu, H.; Wang, Y.; Lu, Y. A Novel Deep Reinforcement Learning Based Framework for Gait Adjustment. Mathematics 2023, 11, 178. https://doi.org/10.3390/math11010178
