An Energy-Efficient Neuromorphic Processor Using Unified Refractory Control-Based NoC for Edge AI

Abstract

1. Introduction

- Hop distance overhead: In a 2D mesh topology, the number of intermediate routers a spike packet must traverse grows with the physical distance between the source and destination neurons [15]. Each additional hop adds transmission latency and energy consumption and raises the likelihood of network congestion. These structural inefficiencies are particularly problematic in SNNs, where many spike events occur within short time windows, and they significantly degrade performance on real-time, energy-constrained edge devices.

- Topology mismatch: Most neuromorphic processors that use STDP are structured as fully connected single-layer networks. In such architectures, each neuron is connected to all others, so non-local communication is frequent. The 2D mesh topology, however, is optimized for communication between spatially adjacent nodes. Consequently, the repeated large-scale communication between distant neurons that a fully connected structure requires increases latency and energy overhead.

- Lack of NoC-level control: Most neuromorphic NoC designs focus primarily on routing efficiency and scalability, and few architectures manage control signals synchronized to neuron state at the network level. In practice, many neurons remain inactive during computation cycles [16], yet they still receive clock signals and maintain their state, which wastes power. This lack of fine-grained computation control causes energy inefficiency that is especially detrimental in low-power edge AI scenarios.
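The hop distance and topology mismatch points above can be illustrated with a small calculation. The following is a minimal sketch, not the authors' model: it assumes dimension-order (XY) routing, so the hop count between two routers equals their Manhattan distance, and it assumes uniform all-to-all traffic as a stand-in for a fully connected layer. The function names `mesh_hops` and `all_to_all_hop_stats` are hypothetical.

```python
from itertools import product


def mesh_hops(src, dst):
    """Hop count between two mesh routers, assuming XY routing.

    Under this assumption the hop count is the Manhattan distance
    between the (row, col) coordinates of the two routers.
    """
    return abs(src[0] - dst[0]) + abs(src[1] - dst[1])


def all_to_all_hop_stats(rows, cols):
    """Return (mean, max) hop count over all ordered pairs of distinct nodes.

    Uniform all-to-all traffic is an assumption used here to mimic a
    fully connected single-layer network mapped onto a rows x cols mesh.
    """
    nodes = list(product(range(rows), range(cols)))
    hops = [mesh_hops(s, d) for s in nodes for d in nodes if s != d]
    return sum(hops) / len(hops), max(hops)


if __name__ == "__main__":
    for rows, cols in [(2, 4), (4, 4), (8, 8)]:
        mean, worst = all_to_all_hop_stats(rows, cols)
        print(f"{rows}x{cols} mesh: mean {mean:.2f} hops, max {worst} hops")
```

Under these assumptions the worst-case distance is (rows − 1) + (cols − 1), so both the mean and the maximum hop count grow as the mesh is scaled up, which is the overhead the first two points describe.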

2. Neuron Model and Learning Rule

2.1. Neuron Model

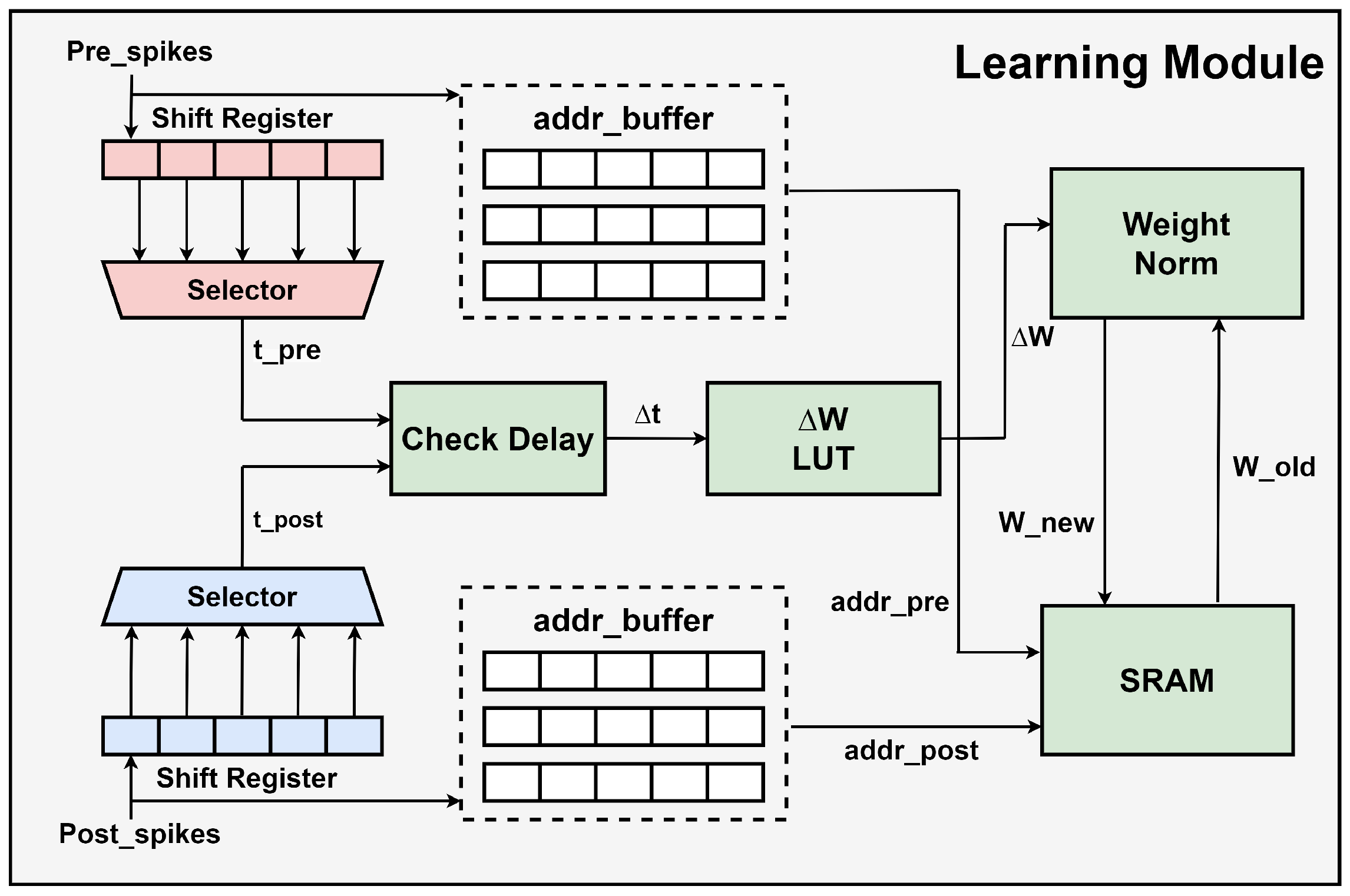

2.2. Learning Rule

3. Hardware Architecture

3.1. System Architecture Overview

3.2. Network Interface

3.3. Neuron Core

4. Experimental Results

4.1. Experimental Setup

4.2. Performance Evaluation

4.3. MPW Fabrication Results

4.4. Comparative Analysis

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Srinivasan, K.; Cowan, G. Subthreshold CMOS Implementation of the Izhikevich Neuron Model. In Proceedings of the 2022 IEEE International Symposium on Circuits and Systems (ISCAS), Austin, TX, USA, 27 May–1 June 2022; pp. 1062–1066.

2. Raymond, C.; Gutierrez, E. A low power and low area mixed-signal neuronal cell for spiking neural networks. In Proceedings of the 2021 IEEE International Midwest Symposium on Circuits and Systems (MWSCAS), Lansing, MI, USA, 9–11 August 2021; pp. 313–316.

3. Yang, H.; Lam, K.Y.; Xiao, L.; Xiong, Z.; Hu, H.; Niyato, D.; Vincent Poor, H. Lead federated neuromorphic learning for wireless edge artificial intelligence. Nat. Commun. 2022, 13, 4269.

4. Frenkel, C.; Bol, D.; Indiveri, G. Bottom-up and top-down approaches for the design of neuromorphic processing systems: Tradeoffs and synergies between natural and artificial intelligence. Proc. IEEE 2023, 111, 623–652.

5. Moradi, S.; Qiao, N.; Stefanini, F.; Indiveri, G. A scalable multicore architecture with heterogeneous memory structures for dynamic neuromorphic asynchronous processors (DYNAPs). IEEE Trans. Biomed. Circuits Syst. 2018, 12, 106–122.

6. Zhu, Q.B.; Li, B.; Yang, D.D.; Liu, C.; Feng, S.; Chen, M.L.; Sun, D.M. A flexible ultrasensitive optoelectronic sensor array for neuromorphic vision systems. Nat. Commun. 2021, 12, 1798.

7. Aitsam, M.; Davies, S.; Nuovo, A.D. Neuromorphic Computing for Interactive Robotics: A Systematic Review. IEEE Access 2022, 10, 122261–122279.

8. Schuman, C.D.; Kulkarni, S.R.; Parsa, M.; Mitchell, J.P.; Date, P.; Kay, B. Opportunities for neuromorphic computing algorithms and applications. Nat. Comput. Sci. 2022, 2, 10–19.

9. Diehl, P.U.; Cook, M. Unsupervised learning of digit recognition using spike-timing-dependent plasticity. Front. Comput. Neurosci. 2015, 9, 99.

10. Zhao, D.; Schrape, O.; Stamenkovic, Z.; Krstic, M. ImSTDP: Implicit Timing On-Chip STDP Learning. IEEE Trans. Circuits Syst. I Regul. Pap. 2025, 72, 868–881.

11. Eshraghian, J.K.; Ward, M.; Neftci, E.O.; Wang, X.; Lenz, G.; Dwivedi, G.; Lu, W.D. Training Spiking Neural Networks Using Lessons From Deep Learning. Proc. IEEE 2023, 111, 1016–1054.

12. Li, Z.; Lemaire, E.; Abderrahmane, N.; Bilavarn, S.; Miramond, B. Efficiency analysis of artificial vs. Spiking Neural Networks on FPGAs. J. Syst. Archit. 2022, 133, 102765.

13. CEPDNACLK. Neuromorphic NoC Architecture for SNNs. 2021. GitHub Repository. Available online: https://github.com/cepdnaclk/e18-4yp-Neuromorphic-NoC-Architecture-for-SNNs (accessed on 15 September 2025).

14. Fang, H.; Shrestha, A.; Ma, D.; Qiu, Q. Scalable NoC-based Neuromorphic Hardware Learning and Inference. In Proceedings of the 2018 International Joint Conference on Neural Networks (IJCNN), Rio de Janeiro, Brazil, 8–13 July 2018; pp. 1–8.

15. Xue, J.; Xie, L.; Chen, F.; Wu, L.; Tian, Q.; Zhou, Y.; Ying, R.; Liu, P. EdgeMap: An Optimized Mapping Toolchain for Spiking Neural Network in Edge Computing. Sensors 2023, 23, 6548.

16. Wang, B.; Wong, M.M.; Li, D.; Chong, Y.S.; Zhou, J.; Wong, W.F.; Do, A.T. 1.7pJ/SOP Neuromorphic Processor with Integrated Partial Sum Routers for In-Network Computing. In Proceedings of the 2023 IEEE International Symposium on Circuits and Systems (ISCAS), Monterey, CA, USA, 21–25 May 2023; pp. 1–5.

17. Cakin, A.; Dilek, S.; Tosun, S. Energy-aware application mapping methods for mesh-based hybrid wireless network-on-chips. J. Supercomput. 2024, 80, 15582–15612.

18. Ma, D.; Shen, J.; Gu, Z.; Zhang, M.; Zhu, X.; Xu, X.; Xu, Q.; Shen, Y.; Pan, G. Darwin: A neuromorphic hardware co-processor based on spiking neural networks. J. Syst. Archit. 2017, 77, 43–51.

19. Nambiar, V.P.; Pu, J.; Lee, Y.K.; Mani, A.; Koh, E.K.; Wong, M.M.; Do, A.T. Energy Efficient 0.5V 4.8pJ/SOP 0.93µW Leakage/Core Neuromorphic Processor Design. IEEE Trans. Circuits Syst. II Express Briefs 2021, 68, 3148–3152.

20. Kim, J.; Park, J.; Joo, S.; Jung, S.O. Efficient Hardware Implementation of STDP for AER Based Large-Scale SNN Neuromorphic System. In Proceedings of the 2020 35th International Technical Conference on Circuits/Systems, Computers and Communications (ITC-CSCC), Nagoya, Japan, 3–6 July 2020; pp. 1–4.

21. Nain, Z.; Ali, R.; Anjum, S.; Afzal, M.K.; Kim, S.W. A Network Adaptive Fault-Tolerant Routing Algorithm for Demanding Latency and Throughput Applications of Network-on-a-Chip Designs. Electronics 2020, 9, 1076.

22. Cassidy, A.; Alvarez-Icaza, R.; Akopyan, F.; Sawada, J.; Arthur, J.; Merolla, P.; Datta, P.; Gonzalez Tallada, M.; Taba, B.; Andreopoulos, A.; et al. Real-Time Scalable Cortical Computing at 46 Giga-Synaptic OPS/Watt with 100× Speedup in Time-to-Solution and 100,000× Reduction in Energy-to-Solution. In Proceedings of the International Conference for High Performance Computing, Networking, Storage and Analysis (SC), New Orleans, LA, USA, 16–21 November 2014.

23. Davies, M.; Srinivasa, N.; Lin, T.H.; Chinya, G.; Cao, Y.; Choday, S.H.; Wang, H. Loihi: A Neuromorphic Manycore Processor with On-Chip Learning. IEEE Micro 2018, 38, 82–99.

24. Akopyan, F.; Sawada, J.; Cassidy, A.; Alvarez-Icaza, R.; Arthur, J.; Merolla, P.; Imam, N.; Nakamura, Y.; Datta, P.; Nam, G.; et al. TrueNorth: Design and tool flow of a 65 mW 1 million neuron programmable neurosynaptic chip. IEEE Trans. Comput.-Aided Des. Integr. Circuits Syst. 2015, 34, 1537–1557.

25. Yerima, W.Y.; Ikechukwu, O.M.; Dang, K.N.; Abdallah, A.B. Fault-Tolerant Spiking Neural Network Mapping Algorithm and Architecture to 3D-NoC-Based Neuromorphic Systems. IEEE Access 2023, 11, 52429–52443.

26. Frenkel, C.; Legat, J.D.; Bol, D. MorphIC: A 65-nm 738k-synapse/mm² quad-core binary-weight digital neuromorphic processor with stochastic spike-driven online learning. IEEE Trans. Biomed. Circuits Syst. 2019, 13, 999–1010.

| Parameter | Value |
|---|---|
| # Input Neurons | 256 |
| # Output Neurons | 4096 |
| Time Step | 350 |
| Initial Weight Range | [0, 0.1] |
| / | 0/1.5 |
| (leak rate) | 0.2 |
| (learning rate) | 0.01 |
| | 0.8/0.3 |
| | 8/5 |
| | 0 |
| | −30 |
| | −20 |
| Refractory Time | 15 |
| (threshold) | 15 |

| Resource/Parameter | Utilization | Available | Utilization (%) | Remarks |
|---|---|---|---|---|
| LUT | 68,551 | 1,143,000 | 6.0 | Logic implementation |
| FF | 55,201 | 2,286,000 | 2.4 | Sequential elements |
| BRAM (18 k) | 4 | 2,160 | <0.1 | On-chip buffering |
| URAM (36 k) | 32 | 960 | 3.3 | Weight storage |
| Dynamic Power | 3.319 W | | | Clocks 6%, Logic 4%, I/O 37% |
| Worst Negative Slack (Setup) | 0.367 ns | | | Timing met |
| Total Negative Slack (Hold) | 0.267 ns | | | Timing met |
| Pulse Width Slack | 0.003 ns | | | All constraints met |

| Reference | Akopyan et al. [24] | Yerima et al. [25] | Frenkel et al. [26] | This Work |

|---|---|---|---|---|

| Cores | 4096 | 64 | 4 | max. 8 |

| Neurons/core | 256 | 256 | 512 | 512 |

| Synaptic Precision | 1-bit | 8-bit | 1-bit | 8-bit |

| NoC Topology | 2D Mesh | 3D Mesh | Hierarchical | Star Topology (UREN Router) |

| Max. Hop Delay | 4 hops | 4 hops | – | 1 hop |

| Learning Rule | – | STDP | S-SDSP | Nearest-STDP |

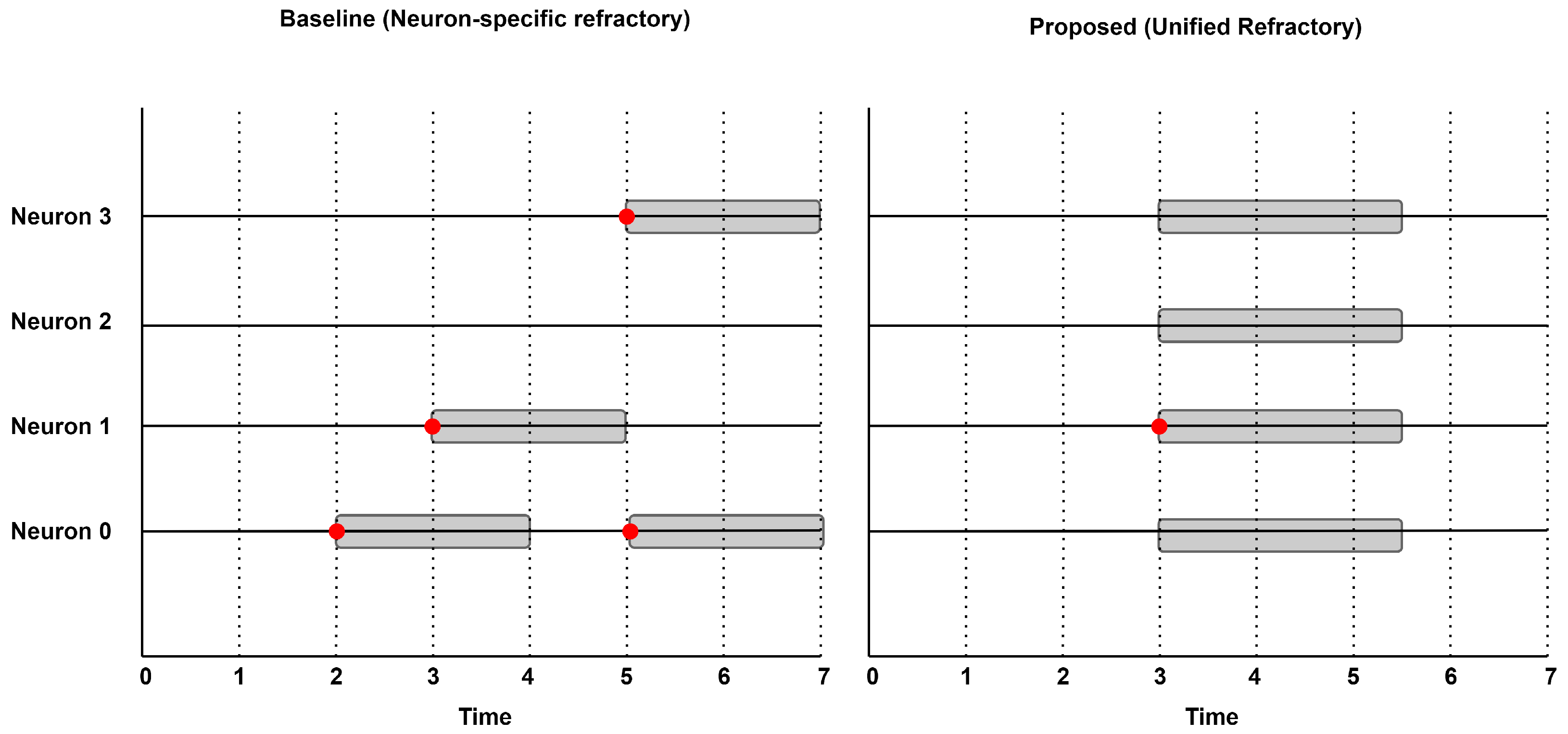

| Refractory Control | No | Local Refractory | No | Unified Refractory |

| MNIST Accuracy (%) | 99.42 (offline) | 79.4/84.5 (online) | 97.8 (offline) | 86.1 (online) |

| Memory/core | – | 64 KB | 64 KB + 8 KB | 128 KB + 3 KB |


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Na, S.-H.; Kim, D.-S. An Energy-Efficient Neuromorphic Processor Using Unified Refractory Control-Based NoC for Edge AI. Electronics 2025, 14, 4959. https://doi.org/10.3390/electronics14244959
