1. Introduction

1.1. Motivation

With the rapid advancement of artificial intelligence (AI) technology, particularly the breakthroughs of generative AI in the field of Natural Language Processing (NLP), AI-based question-and-answer (Q&A) systems have emerged as key applications across various domains. Among them, OpenAI’s ChatGPT, a powerful large-scale language model (widely known in versions such as GPT-3.5 and GPT-4), has garnered significant attention. As technology continues to progress, the demand for AI Q&A systems is growing, prompting many fields to explore how to leverage such technologies to deliver personalized services and enhance both work and learning efficiency.

In academic environments, students often encounter challenges such as delayed feedback and reluctance to seek help. Observational and survey data indicate that a small proportion of students dominate classroom interactions, while many remain silent due to concerns about insufficient knowledge, unpreparedness, or fear of appearing unintelligent [1]. These factors can hinder learning efficiency and motivation. Integrating AI Q&A systems to support TAs can help reduce repetitive workloads and provide students with more immediate assistance, potentially encouraging more frequent questioning and fostering self-directed learning.

Despite the impressive performance of existing cloud-based generative AI systems (e.g., ChatGPT) in Q&A functionalities, they raise concerns regarding user data privacy and intellectual property protection due to their reliance on cloud processing. Additionally, the high costs associated with cloud services pose barriers to widespread adoption in educational settings. In response, this study selected search technologies and system architectures that align with the research objectives and developed a closed-end generative AI teaching assistant system (CE-GAITA). When deployed locally, the system not only safeguards data privacy and security, but also offers efficient, real-time learning support. By ‘local deployment’, we refer to running the AI model entirely on institutional servers, without relying on cloud services, thus ensuring data privacy and control.

1.2. Purpose

The intended audience for this study comprises readers with experience in educational technology and the application of artificial intelligence. This study aims to develop a CE-GAITA that integrates semantic embedding and retrieval-augmented generation (RAG) based on textbook content in order to provide real-time, textbook-aligned intelligent Q&A functionalities. The system is designed to support students’ needs in after-school learning, self-directed inquiry, and review processes.

By allowing students to receive immediate responses to course-related questions without the pressure or time constraints typically associated with asking questions, the system seeks to increase students’ motivation, promote autonomous learning, and improve overall learning outcomes. In addition, it helps reduce the repetitive workload faced by instructors and teaching assistants, serving as an effective supplementary tool for remedial instruction and digital learning support.

2. Related Work

2.1. Trends in the Application of Generative AI and LLMs in the Educational Field

With the increasing adoption of LLMs, a growing body of research has explored their potential applications in education. Kasneci et al. highlight that, although generative AI holds great promise for enhancing learning and fostering pedagogical innovation, several challenges persist in educational contexts. These challenges include a lack of technical knowledge and skills among educators, the high costs associated with system installation and maintenance, and significant concerns about student data privacy and security [2]. To address these issues, this study adopts a closed-end system architecture and leverages open-source LLMs to develop a controllable, privacy-conscious, generative AI teaching assistant system.

2.2. Retrieval-Augmented Generation (RAG) and Its Components

2.2.1. Overview of RAG Technology

Lewis et al. proposed the RAG framework, which integrates semantic retrieval with generative modeling, allowing language models to extract relevant knowledge from external sources to improve the accuracy and relevance of their responses [3]. Kandpal et al. further emphasize that LLMs exhibit limitations in handling long-tail knowledge, particularly when training data is imbalanced. They note that these models tend to perform poorly on low-frequency entities and their factual accuracy is strongly correlated with the amount of relevant data encountered during pretraining [4]. The RAG framework effectively mitigates these limitations by allowing dynamic access to external information, thereby improving the model’s capacity to cover rare or underrepresented knowledge. In light of this, the present study adopts the RAG framework and implements it within a closed-end educational environment to improve the knowledge coverage and response performance of the system in instructional support tasks.

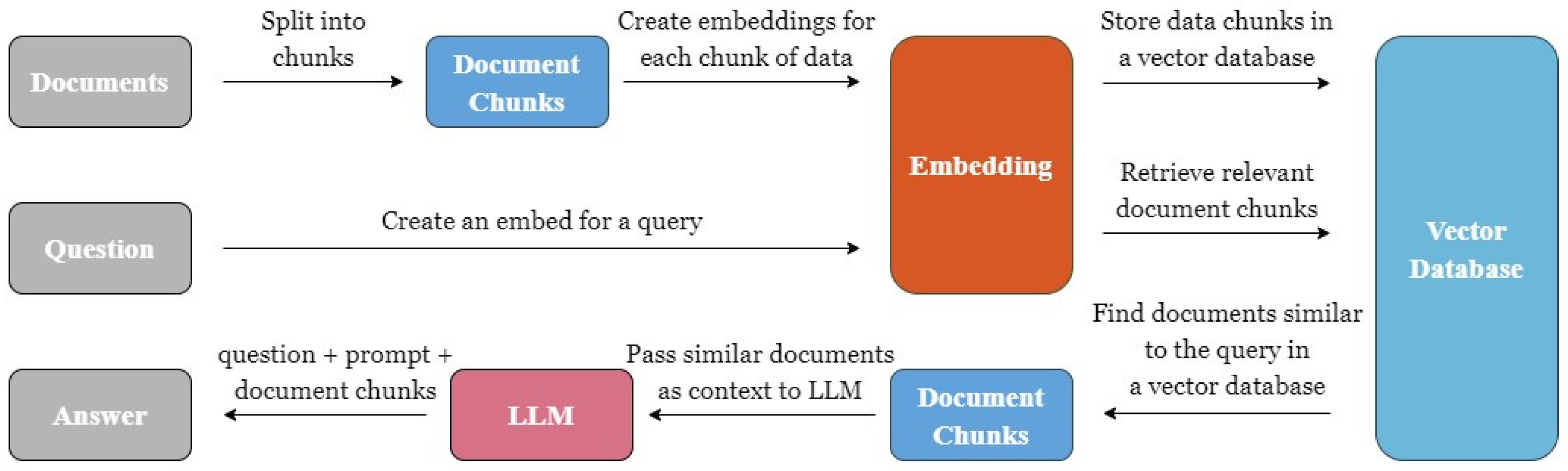

Figure 1 illustrates the architecture of the RAG model as designed in this study.
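The retrieve-then-generate flow described above can be sketched in a few lines. This is a minimal illustration, not the CE-GAITA implementation: the word-overlap scorer stands in for the embedding-based retrieval used in the study, and the function names (`retrieve`, `build_prompt`), the prompt wording, and the sample slide sentences are all hypothetical.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase a string and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def overlap_score(query, passage):
    """Toy relevance score: number of word tokens shared by query and passage."""
    q, p = Counter(tokenize(query)), Counter(tokenize(passage))
    return sum((q & p).values())


def retrieve(query, passages, k=2):
    """Return the k passages with the highest overlap score."""
    ranked = sorted(passages, key=lambda p: overlap_score(query, p), reverse=True)
    return ranked[:k]


def build_prompt(query, passages, k=2):
    """Assemble the retrieved passages and the question into one prompt string."""
    context = "\n".join(f"- {p}" for p in retrieve(query, passages, k))
    return (
        "Answer using only the course material below.\n"
        f"{context}\n"
        f"Question: {query}"
    )


# Hypothetical sentences standing in for text extracted from course slides.
slides = [
    "A primary key uniquely identifies each row in a table.",
    "Normalization reduces redundancy by decomposing relations.",
    "An entity-relationship model describes entities and their relationships.",
]

question = "What does a primary key identify?"
top_passage = retrieve(question, slides, k=1)[0]
prompt = build_prompt(question, slides, k=1)
print(prompt)
```

In a full system the assembled prompt would be passed to the language model; that generation step is omitted here because it depends on the locally hosted model.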

2.2.2. Vector Databases

Jing et al. highlighted that the integration of vector databases (VecDBs) with LLMs allows these systems to function as external domain-specific knowledge bases, while also efficiently storing historical interactions to support semantic memory or serve as semantic caches [5]. Because LLMs may occasionally generate responses that seem accurate but are incorrect, incorporating vector databases can significantly improve the precision of domain-specific responses. Additionally, VecDBs help mitigate hallucinations, improve system editability, and optimize computational resource utilization. In this study, the vector database most compatible with the system requirements will be selected and integrated to enable more accurate, efficient, and adaptable educational support functions.

2.2.3. Embedding Models

Embedding is a technique that transforms non-vector data, such as text, into vector representations, allowing computers to better understand, compare, and process these data types. The Sentence-BERT model, proposed by Reimers and Gurevych, has demonstrated strong performance in semantic representation and question-answering tasks [6]. In this study, Sentence-BERT serves as the foundational model for the embedding layer, enabling efficient semantic retrieval and alignment between user queries and relevant instructional content.
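To illustrate how embeddings support semantic retrieval, the sketch below ranks passages by cosine similarity to a query vector. The three-dimensional vectors and passage labels are hypothetical placeholders; real sentence embeddings have hundreds of dimensions and would come from the embedding model rather than being written by hand.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Hypothetical toy embeddings for three passages (assumed values).
passage_vectors = {
    "primary key": [0.9, 0.1, 0.0],
    "normalization": [0.1, 0.8, 0.2],
    "ER model": [0.0, 0.2, 0.9],
}

# Hypothetical embedding of a student question about primary keys.
query_vector = [0.8, 0.2, 0.1]

# Pick the passage whose vector points in the most similar direction.
best = max(
    passage_vectors,
    key=lambda name: cosine_similarity(query_vector, passage_vectors[name]),
)
print(best)
```

Cosine similarity is one common choice for comparing embeddings; the metric actually configured in the vector database may differ.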

2.3. Existing AI Teaching Assistant Systems

Recent studies have explored the integration of LLMs and RAG frameworks into intelligent tutoring systems to enhance personalized learning. Hicke et al. introduced AI-TA, a privacy-conscious assistant built on LLaMA-2, combining RAG, supervised fine-tuning (SFT), and direct preference optimization (DPO) to improve response quality [7]. Modran et al. proposed a custom LLM-based system that takes advantage of RAG for context-aware support in higher education [8]. Alier et al. developed LAMB, an open-source framework for LMS-integrated AI assistants, focusing on modularity and educator control [9]. Neumann et al. validated the effectiveness of supporting self-regulated learning through RAG and TAM methodologies [10], while Zhu et al. presented UnrealMentor GPT, a multi-agent system for programming education that can improve debugging and student satisfaction [11]. Although these AI-assisted systems demonstrate significant potential, their reliance on cloud-based architectures introduces notable limitations. Cloud platforms provide computational flexibility and scalability; however, they raise concerns regarding data privacy and may hinder the alignment of instructional content with specific curriculum requirements. Similarly, while the adoption of high-accuracy models improves response quality, it often involves substantial computational and maintenance costs. In addition, the predominance of English resources in existing systems restricts their applicability in multilingual settings and domain-specific educational contexts.

2.4. Positioning and Contributions of This Study

In contrast, the CE-GAITA developed in this study addresses these limitations by supporting local deployment, integrating RAG with optional LLMs and embedding models, and allowing the upload of course-specific PDFs tailored to Chinese-language learners. This architecture protects data privacy, ensures consistency with curricular content, and facilitates targeted post-class review as well as self-directed learning, thus demonstrating both pedagogical and technical advantages over existing cloud-based platforms. Building on these features, this study further incorporates optimized open-source components such as DeepSeek-r1:14b and EmbedChain and conducts a rigorous evaluation of RAG frameworks, embedding models, and LLMs, with performance improvements validated by McNemar’s test. In doing so, it fills critical gaps in privacy-preserving deployment, localized language support, and empirical benchmarking, offering a scalable and curriculum-specific solution for AI-assisted learning in higher education.

3. Materials and Methods

3.1. System Architecture and Educational Application Scenario Design

The CE-GAITA developed in this study is built using responsive web technologies and integrates LLMs, vector databases, embedding models, and the RAG framework. This integration forms a question-and-answer platform designed to support students in various educational settings. Users can pose questions based on their individual needs, and the system provides immediate, context-aware responses. The platform is suitable for multiple educational applications, including classroom assistance, self-directed learning, and teacher–student counseling.

To enhance the educational relevance of the system, this study adopts an undergraduate level ‘Database Systems’ course as a representative scenario for system design and evaluation. In this context, the instructor uploads course slides, totaling approximately 180 pages, in PDF format to the system, which serves as the primary source of knowledge. The system is designed so that, after class, students could potentially ask questions related to course concepts they find unclear, and the system would provide real-time responses to reduce the risk of learning interruption. However, the evaluation scope of this study primarily focuses on students’ performance in multiple-choice questions and does not yet encompass the observation and analysis of students’ actual interaction processes with the system. Additionally, test questions from the database course are used for subsequent performance evaluation, allowing analysis of the system’s effectiveness in curriculum-based learning environments.

3.2. System Components Selection and Evaluation Process

3.2.1. Comparison of RAG Framework Performance

To develop an AI teaching assistant with effective knowledge retrieval capabilities, this study evaluates three RAG frameworks, LangChain [12], LlamaIndex [13], and EmbedChain [14], based on four main criteria: ease of use, performance, flexibility of development, and adaptability of the system. Experimental results indicate that EmbedChain performs best in most dimensions, offering superior ease of use, robust embedding support, and rapid chatbot construction capabilities with good scalability. Although LangChain and LlamaIndex offer powerful functionalities, their complexity increases development time and difficulty. Teixeira also pointed out that EmbedChain, as an open-source RAG framework, supports automated data ingestion, vector embedding generation, and seamless integration with vector databases. Furthermore, its support for multilingual models and flexible deployment options makes it particularly suitable for building knowledge-intensive conversational applications efficiently [15]. Based on these characteristics, this study adopts EmbedChain as the core technological framework for the CE-GAITA.

3.2.2. Comparison of Large Language Models (LLMs)

This study compares the performance and applicability of four open-source LLMs: Llama 3.2, LLaVA, DeepSeek-r1:14b, and Gemma3:12b. Experimental evaluations demonstrate that DeepSeek-r1:14b outperforms the others in terms of traditional Chinese comprehension and completeness of generated responses, making it particularly well suited for classroom support and instructional explanations in a Mandarin-speaking academic environment.

3.2.3. Comparison of Vector Databases

In this study, to maintain a focus on the core objectives of ‘local feasibility’ and ‘privacy protection’ in educational applications, ChromaDB and Weaviate were selected for a preliminary comparison. ChromaDB is positioned as a lightweight, fully local solution, whereas Weaviate represents a hybrid alternative that, while partly reliant on cloud APIs, also provides a self-hosted open-source version. Other commonly used vector databases, such as Pinecone, offer advantages in large-scale distributed retrieval and cloud scalability; however, their installation and maintenance requirements are comparatively complex. Consequently, this study adopts ChromaDB and Weaviate as representative baselines for testing. Future research may extend the evaluation to additional vector databases to investigate their performance and applicability in large-scale instructional materials or cross-course scenarios.

Two vector databases, ChromaDB [16] and Weaviate [17], were tested with evaluation criteria that included indexing speed, query efficiency, and resource utilization. ChromaDB was ultimately selected due to its open-source nature and compatibility with local closed systems. Unlike Weaviate, ChromaDB does not require reliance on cloud APIs, ensuring that all data is stored locally. It also avoids the storage limitations and potential payment requirements associated with the free version of Weaviate, making it a more practical and secure option for educational deployment.

3.2.4. Comparison of Embedding Models

In comparing the embedding models provided by GPT4All and Hugging Face, the Hugging Face all-MiniLM-L6-v2 model demonstrates the best overall performance. In tasks involving textbook semantic conversion and retrieval matching, this model achieved the highest accuracy and semantic alignment, effectively improving the system’s ability to generate semantically relevant responses and construct meaningful mappings to educational content.

The all-MiniLM-L6-v2 model is based on the MiniLM architecture and employs the Sentence-BERT (SBERT) training strategy, which uses sentence-level contrastive learning to convert sentences into semantically meaningful vector embeddings. According to Reimers and Gurevych, the SBERT framework performs exceptionally well in tasks involving semantic similarity assessment and question-answer retrieval [6], indicating its strong potential as a semantic representation tool for educational materials. Its demonstrated performance further supports its suitability for enhancing the semantic processing capabilities of AI-assisted educational systems.

3.3. Experimental Process and Data Analysis Methods

3.3.1. Test Data Construction

The test data set used in this study comprises a multiple-choice question bank from a university-level database systems course, consisting of 97 questions, each with four answer options. The questions are categorized into four sections based on instructional themes: DBS I (Comprehensive, 25 questions), DBS II (Introduction, 27 questions), DBS III (Entity-Relationship Model, 34 questions), and DBS IV (Normalization, 11 questions). Each question is accompanied by a predefined correct answer, which serves as a reference for evaluating the accuracy of responses generated by LLMs.

To measure response accuracy, this study adopts the exact match evaluation method. During testing, the model is required to produce a single output corresponding to one of the four options (i.e., 1, 2, 3, or 4). A response is considered correct only if it exactly matches the standard answer; otherwise, it is marked as incorrect.

This evaluation approach is objective and reproducible, eliminating potential issues related to semantic ambiguity, subjective interpretation, or inconsistency in scoring criteria. It also avoids reliance on human judgment or semantic similarity metrics, ensuring a clear and consistent basis for model performance comparison.
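The exact-match rule described above can be expressed as a short scoring routine. This is a sketch under stated assumptions: the function names and the sample outputs are hypothetical, and stripping surrounding whitespace before comparison is an assumption, since the study does not state how raw model output is normalized.

```python
VALID_OPTIONS = {"1", "2", "3", "4"}


def normalize_answer(raw_output):
    """Return the option digit if the output is exactly one of 1-4
    (ignoring surrounding whitespace); otherwise return None."""
    text = raw_output.strip()
    return text if text in VALID_OPTIONS else None


def exact_match_accuracy(outputs, answer_key):
    """Score each output against the key and return (per-item flags, accuracy)."""
    if len(outputs) != len(answer_key):
        raise ValueError("outputs and answer_key must have the same length")
    flags = [normalize_answer(o) == str(k) for o, k in zip(outputs, answer_key)]
    return flags, sum(flags) / len(flags)


# Hypothetical model outputs and answer key for four questions.
outputs = ["2", " 4\n", "The answer is 1", "3"]
answer_key = [2, 4, 1, 1]

flags, accuracy = exact_match_accuracy(outputs, answer_key)
print(flags, accuracy)
```

Under this rule a verbose reply such as "The answer is 1" is scored as incorrect even though it names the right option, which is what makes the metric strict and reproducible.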

3.3.2. Model Testing Design

At the initial stage of experimentation, this study used LLaVA and Llama 3.2 in combination with embedding models such as GPT4All and Hugging Face. However, the results revealed that their accuracy in the database question bank was generally low, making them unsuitable for practical teaching applications where high response accuracy is critical. To address this issue, the study introduced the DeepSeek-r1:14b and Gemma3:12b models for further testing. These models demonstrated significantly improved accuracy; therefore, all subsequent system evaluations were conducted using these two models.

To assess the effectiveness of the developed system and validate the application of RAG, both models were tested under two conditions: ‘native LLM’ and ‘with RAG’. For consistency and fair comparison, both models were integrated with the Hugging Face all-MiniLM-L6-v2 embedding model and ChromaDB as the vector database. This configuration served as the standard RAG framework setup for testing.

3.3.3. Statistical Analysis Methods

Given that the AI model’s responses in this study are binary classification outcomes (“correct” or “incorrect”) and that the set of questions is fixed (97 in total), the following statistical approach was employed to evaluate the impact of RAG application on model performance.

The McNemar test is a non-parametric statistical method used to analyze binary categorical data in paired samples. It is particularly suitable for evaluating whether there is a statistically significant difference in outcomes under two different treatment conditions applied to the same sample.

To examine the effect of RAG application on the accuracy of model prediction, this study constructed a paired 2 × 2 contingency table for the responses of each model under two conditions: native LLM (Model A) and RAG (Model B). Each of the 97 fixed questions was categorized into one of the following four groups based on the correctness of the responses.

Both correct (a)

Only Model A correct (b)

Only Model B correct (c)

Both incorrect (d)

Subsequently, McNemar’s test was applied to evaluate whether the distribution of these paired outcomes differed significantly between the two conditions.
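As an illustration of how the paired table is assembled, the sketch below counts the four cells (a, b, c, d) from two lists of per-question correctness flags. The function name and the five-question sample data are hypothetical and are not the study's results.

```python
def paired_table(native_correct, rag_correct):
    """Count paired outcomes for the same questions under two conditions.

    a: both correct, b: only native LLM correct,
    c: only RAG correct, d: both incorrect.
    """
    if len(native_correct) != len(rag_correct):
        raise ValueError("both conditions must cover the same questions")
    counts = {"a": 0, "b": 0, "c": 0, "d": 0}
    for native, rag in zip(native_correct, rag_correct):
        if native and rag:
            counts["a"] += 1
        elif native:
            counts["b"] += 1
        elif rag:
            counts["c"] += 1
        else:
            counts["d"] += 1
    return counts


# Hypothetical correctness flags for five questions.
native = [True, True, False, False, True]
rag = [True, False, True, True, True]

table = paired_table(native, rag)
print(table)
```

Only the discordant cells b and c enter the McNemar statistic; questions on which both conditions agree carry no information about which condition is better.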

The null hypothesis (H0) posits that there is no difference in the answer accuracy between the native LLM and RAG, implying that RAG has no effect on model performance. The alternative hypothesis (H1) states that there is a significant difference, suggesting that RAG either enhances or diminishes performance. A p-value less than the significance threshold leads to the rejection of the null hypothesis, indicating that RAG exerts a statistically significant effect on model accuracy.

Statistical Tools

McNemar’s test was conducted using the ‘statsmodels’ package (version 0.14.5) in Python (version 3.12.11), with the default significance level set at α = 0.05 for statistical inference.

4. Results

In this study, CE-GAITA was successfully developed. Several rounds of technical evaluations and optimizations were conducted to ensure that the system’s performance, privacy, and feasibility were effectively enhanced. Following a series of performance comparisons and empirical analyses, the system ultimately adopted the following configuration as its core architecture.

RAG framework: EmbedChain

Language models: DeepSeek-r1:14b, Gemma3:12b

Embedding model: Hugging Face all-mpnet-base-v2

Vector database: ChromaDB

To assess the performance of different model configurations within the system, the study initially evaluated combinations of open-source LLMs, including Llama 3.2 and LLaVA, paired with embedding models from GPT4All and Hugging Face. A total of four test configurations were constructed and evaluated based on their accuracy in responding to a question set derived from a database systems course. Preliminary results indicated that the average accuracy of these open-ended combinations ranged from 44.8% to 60.5%, which fell below the threshold for practical classroom application.

Through comparative analysis and screening, DeepSeek-r1:14b and Gemma3:12b were identified as models with more stable and promising performance, achieving average accuracies of 77.3% and 71.1%, respectively, under native LLM conditions. Consequently, subsequent analyses focused on these two models, using a closed-end question format for standardized evaluation. This allowed for a more rigorous assessment of the practical benefits of incorporating RAG technology to improve response accuracy. Detailed analyses and discussions of the results obtained from these two core models are presented in the following sections.

4.1. Descriptive Statistical Analysis

This study employed two models, DeepSeek-r1:14b and Gemma3:12b, each evaluated under two conditions: without RAG (native LLM) and with RAG. The corresponding response accuracy rates are presented in Figure 2. The results reveal that both models exhibited notable performance improvements when integrated with RAG. Specifically, the accuracy of the Gemma3:12b model increased from 71.1% to 82.5%, while that of the DeepSeek-r1:14b model improved from 77.3% to 85.6%. These findings suggest that the incorporation of RAG enhances the ability to retrieve relevant knowledge and generate accurate responses, thus improving overall answer accuracy.

4.2. McNemar’s Test Analysis

To assess whether the same model exhibits statistically significant differences in performance after the application of RAG technology, the McNemar test was employed, with the results summarized in Table 1.

As shown in Table 1, the analysis indicated that the Gemma model experienced a statistically significant improvement in accuracy after the integration of RAG (p = 0.0266). This suggests that RAG effectively compensates for the model’s original limitations in knowledge-intensive tasks and enhances its overall problem-solving capability. For the DeepSeek model, the test result was not statistically significant at the 0.05 level (p = 0.0768), though a positive trend was observed, as more responses shifted from incorrect to correct. This implies that RAG still offered performance gains, albeit to a lesser extent.

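In addition to the p-values, this study also calculated the odds ratio (OR) to provide a clearer indication of the practical impact of RAG on model performance. The OR is computed as follows:

$$\mathrm{OR} = \frac{c}{b}$$

where b is the number of questions answered correctly only by the native LLM (Model A) and c is the number of questions answered correctly only with RAG (Model B).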

For the Gemma model, OR = 16/5 = 3.2, indicating that the proportion of responses that changed from incorrect to correct after applying RAG was substantially higher than those that changed from correct to incorrect. This finding complements the statistically significant p-value and further confirms the improvement in performance. For the DeepSeek model, OR = 12/4 = 3. Although the direction of the effect is similar to that observed for Gemma, it did not reach statistical significance, probably because the model’s baseline accuracy was already relatively high. Overall, RAG appears to have a more pronounced effect on models with lower initial accuracy (e.g., Gemma), while still offering potential for incremental improvement in high-performing models, as reflected by the OR values.
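The reported p-values and odds ratios can be checked from the discordant counts alone. The sketch below uses only the Python standard library and computes a two-sided exact (binomial) McNemar p-value. That the exact variant was the one applied in the study is an assumption, although the values it yields agree with the reported p = 0.0266 and p = 0.0768. The function names are hypothetical; the counts (b, c) are those given in the odds ratio calculation above.

```python
from math import comb


def mcnemar_exact_p(b, c):
    """Two-sided exact McNemar p-value from the discordant counts b and c.

    Under H0 each discordant pair falls in either cell with probability 0.5,
    so the smaller count follows a Binomial(b + c, 0.5) distribution.
    """
    n = b + c
    k = min(b, c)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)


def odds_ratio(b, c):
    """Ratio of incorrect-to-correct changes (c) to correct-to-incorrect changes (b)."""
    return c / b


# (b, c) per model: b = only native LLM correct, c = only RAG correct.
discordant = {"Gemma3:12b": (5, 16), "DeepSeek-r1:14b": (4, 12)}

results = {
    name: (round(mcnemar_exact_p(b, c), 4), round(odds_ratio(b, c), 2))
    for name, (b, c) in discordant.items()
}
for name, (p_value, ratio) in results.items():
    print(f"{name}: p = {p_value}, OR = {ratio}")
# prints:
# Gemma3:12b: p = 0.0266, OR = 3.2
# DeepSeek-r1:14b: p = 0.0768, OR = 3.0
```

The two odds ratios are nearly equal, so the difference in significance reflects the smaller number of discordant pairs for DeepSeek (16 versus 21) as much as any difference in effect size.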

4.3. System Development

To accommodate variability in data sources and hardware environments, a flexible modular architecture was developed, allowing users to select appropriate model configurations via a user-friendly front-end interface to suit different educational scenarios.

To ensure data privacy and system security, the AI teaching assistant system adopts an on-premises closed-end architecture, which operates independently of external networks, thus preventing confidential data leakage. Built on the RAG framework, the CE-GAITA provides the following key features:

AI Q&A System: Users can interact with the system by submitting queries, to which the system responds by retrieving relevant content and generating responses via integrated language models.

PDF Teaching Material Support: Users can upload classroom PDF materials, ensuring that AI-generated responses are aligned with the course content.

Responsive Web Design (RWD): The front-end interface adopts responsive web technologies, ensuring smooth functionality across various devices and enhancing user experience and accessibility.

5. System Demonstration

This study develops a CE-GAITA, which offers multiple combinations of LLMs and embedding models to accommodate various application scenarios. Users can select the most suitable model according to their specific needs. Additionally, the system includes an interactive Q&A interface that allows users to input questions. Through the integration of RAG technology, the system retrieves relevant data and generates accurate responses accordingly. A file upload function is also provided that enables users to upload classroom materials in PDF format. This ensures that the system’s AI-generated responses are aligned with the actual course content.

Figure 3 shows an example of how the system responds to a teaching question, with results for LLM = Gemma3:12b and DeepSeek-r1:14b and embedding model = Hugging Face all-mpnet-base-v2.

6. Discussion

The CE-GAITA developed in this study integrates large-scale language models with RAG technology to realize intelligent question-answering and teaching assistance functions. To safeguard data privacy, the system adopts a closed-end architecture that allows autonomous operation within a controlled environment, thereby enhancing information security and system stability. In this study, the combination of LLM = DeepSeek-r1:14b, embedding model = Hugging Face all-mpnet-base-v2, and vector database = ChromaDB was identified as the optimal configuration. The system achieved an accuracy rate of 85.6% on quiz questions, effectively supporting students’ after-school self-learning and knowledge reinforcement. In addition, it has the potential to increase students’ willingness to ask questions while alleviating the burden of teacher responses, suggesting its possible value in educational settings.

The results also confirm that the integration of RAG technology improves response accuracy and semantic relevance, with an average accuracy increase of 9.85 percentage points compared to the native LLM alone. This indicates that RAG enhances the performance of the generative model by retrieving pertinent information in real time. With the inclusion of more educational materials, RAG technology is expected to further improve the system’s effectiveness and accuracy in diverse domains.
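The average gain quoted above follows directly from the accuracies reported in Section 4.1, taking the per-model differences for Gemma3:12b and DeepSeek-r1:14b and averaging them:

$$\frac{(82.5 - 71.1) + (85.6 - 77.3)}{2} = \frac{11.4 + 8.3}{2} = 9.85$$

The result is a difference between percentages and is therefore expressed in percentage points.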

Furthermore, compared to existing cloud-based generative AI teaching platforms such as Khanmigo [18] and LearnLM [19], which, despite their powerful generation capabilities, remain limited in flexibility of textbook integration and data privacy, the system developed in this study offers full control to educators over the update and scope of teaching content. It also operates reliably in a network-isolated environment, demonstrating superior adaptability to educational settings.

In addition to its technical advantages, the system introduces distinctive pedagogical features. Unlike general-purpose educational assistants designed for broad instructional support, the proposed system enables teachers to upload specific course materials, ensuring close alignment with actual teaching content. This design supports student-targeted post-class review and fosters self-directed learning, thereby helping to reduce students’ anxiety about asking questions and enhancing their engagement with textbook-based resources. Such course-specific customization not only represents a unique educational contribution but also underscores the benefits of local deployment.

This study not only validates the feasibility and technical effectiveness of local deployment, but also provides empirical support for the development of intelligent teaching assistant systems, highlighting their potential applications in after-school tutoring, textbook-based quizzing, and personalized learning support.

7. Conclusions

This study developed and evaluated a locally deployed generative AI teaching assistant system based on Retrieval-Augmented Generation (RAG). Evaluated on a standardized question bank with McNemar’s test, the system improved response accuracy when RAG was applied (up to 85.6%), with the gain reaching statistical significance for Gemma3:12b, demonstrating its feasibility in higher education contexts.

Beyond technical validation, the findings suggest potential educational implications. By aligning responses with course-specific materials, the system is designed to offer reliable formative feedback that may support self-directed learning and reduce students’ anxiety about asking questions. For instructors, it has the potential to alleviate repetitive workloads while maintaining consistency between classroom content and AI-generated support. These pedagogical benefits position the system as more than a technical prototype, highlighting its promise as a practical tool in learning technologies.

However, the present study is limited to an evaluation based on multiple choice items and does not involve direct participation from students or educators. As such, the pedagogical claims remain theoretical and warrant empirical validation. Future work will involve classroom-based studies to examine learning outcomes, usability, and teacher feedback. From a technical perspective, future research should also address the issue of hallucinations. Although integration of the RAG architecture can ground responses in course materials and substantially reduce irrelevant or fabricated output, it cannot entirely eliminate this risk. In educational settings, even occasional inaccuracies can mislead learners if not properly managed. Potential strategies include the implementation of user feedback mechanisms to continuously refine knowledge bases, source citation features to improve transparency, and ensemble or cross-validation approaches to reduce erroneous output. Collectively, these directions will enhance both the pedagogical and technical robustness of the system, supporting its broader adoption in real-world educational settings.

This study has addressed several underexplored areas in AI tutoring systems: (1) local deployment for enhanced privacy and institutional control, (2) empirical selection of RAG frameworks and LLMs, (3) support for traditional Chinese and textbook-based learning, (4) statistical validation of performance improvements, and (5) flexible modular architecture for various educational scenarios. These contributions fill important gaps in the current literature and offer a practical blueprint for deploying AI tutors in privacy-sensitive, multilingual, and curriculum-aligned educational environments.