Improving Accuracy of Contactless Respiratory Rate Estimation by Enhancing Thermal Sequences with Deep Neural Networks

Featured Application

Abstract

1. Introduction

2. Methodology

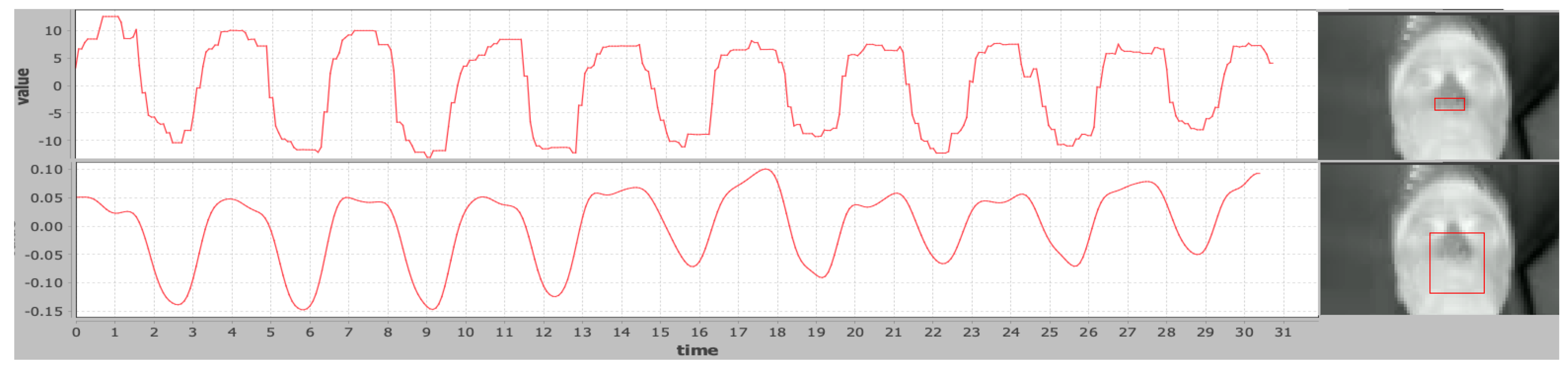

2.1. Respiratory Rate Estimation

2.2. Data Collection and Pre-Processing

2.2.1. Datasets

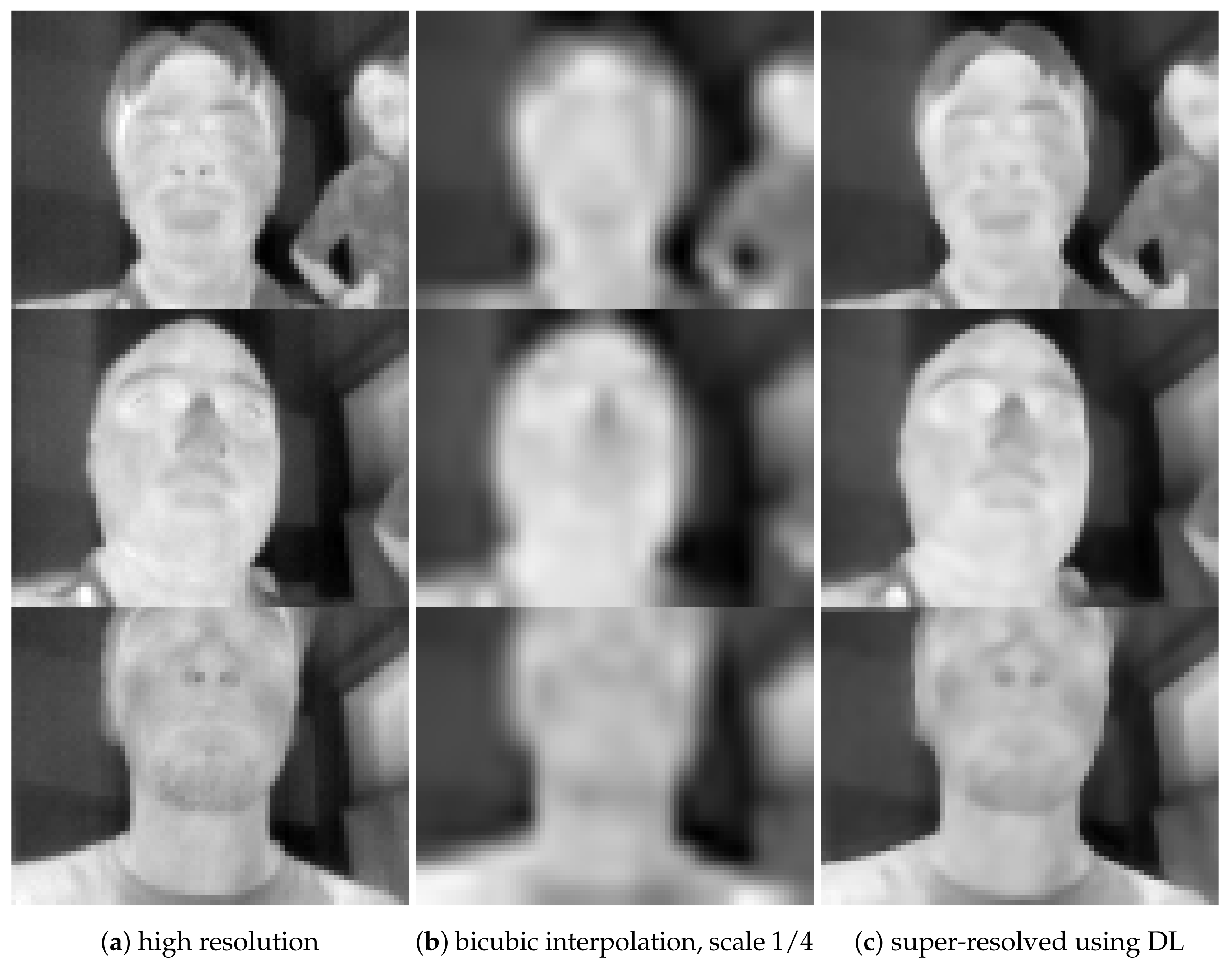

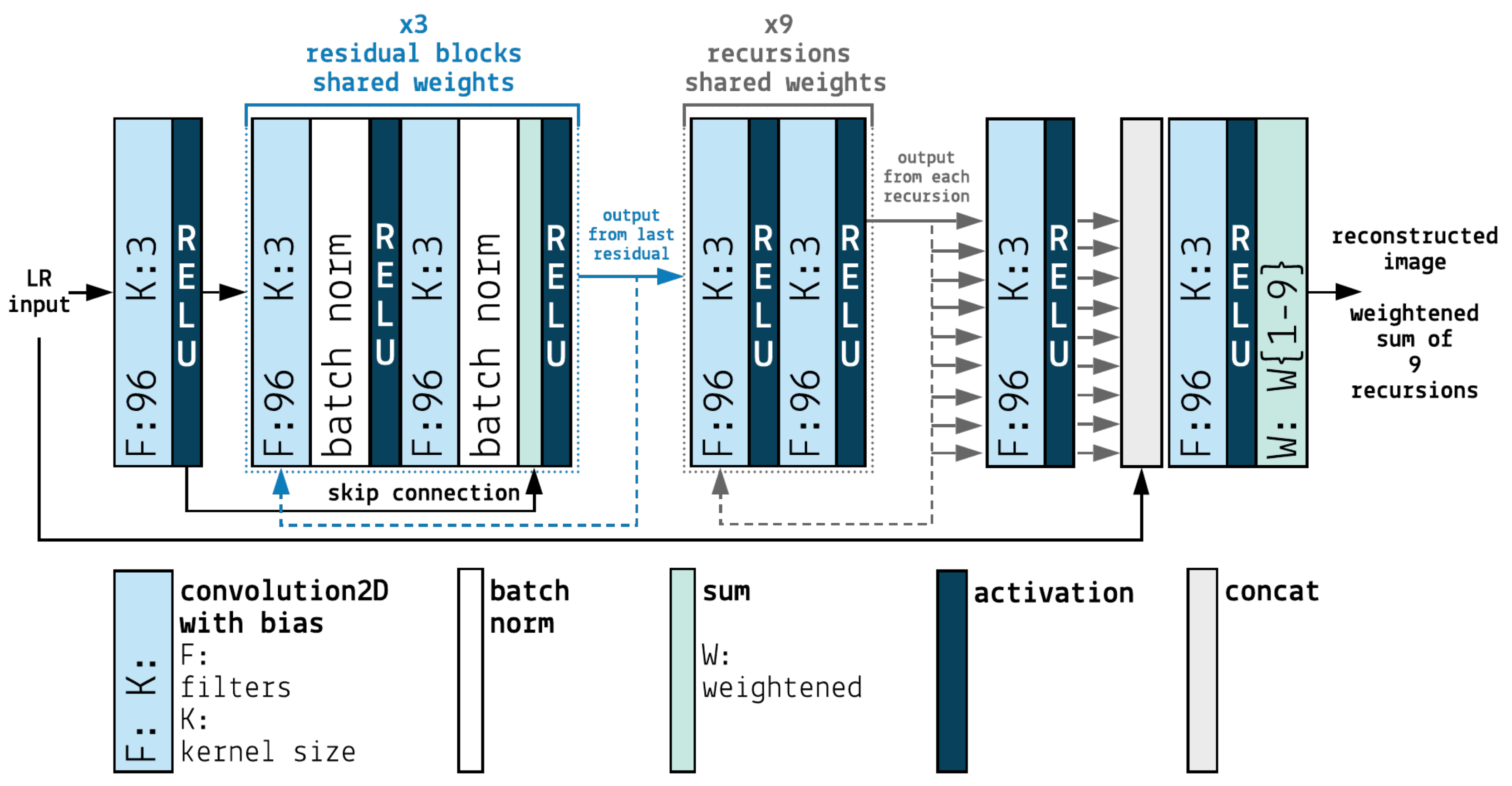

2.2.2. Image Resolution Degradation and Enhancement

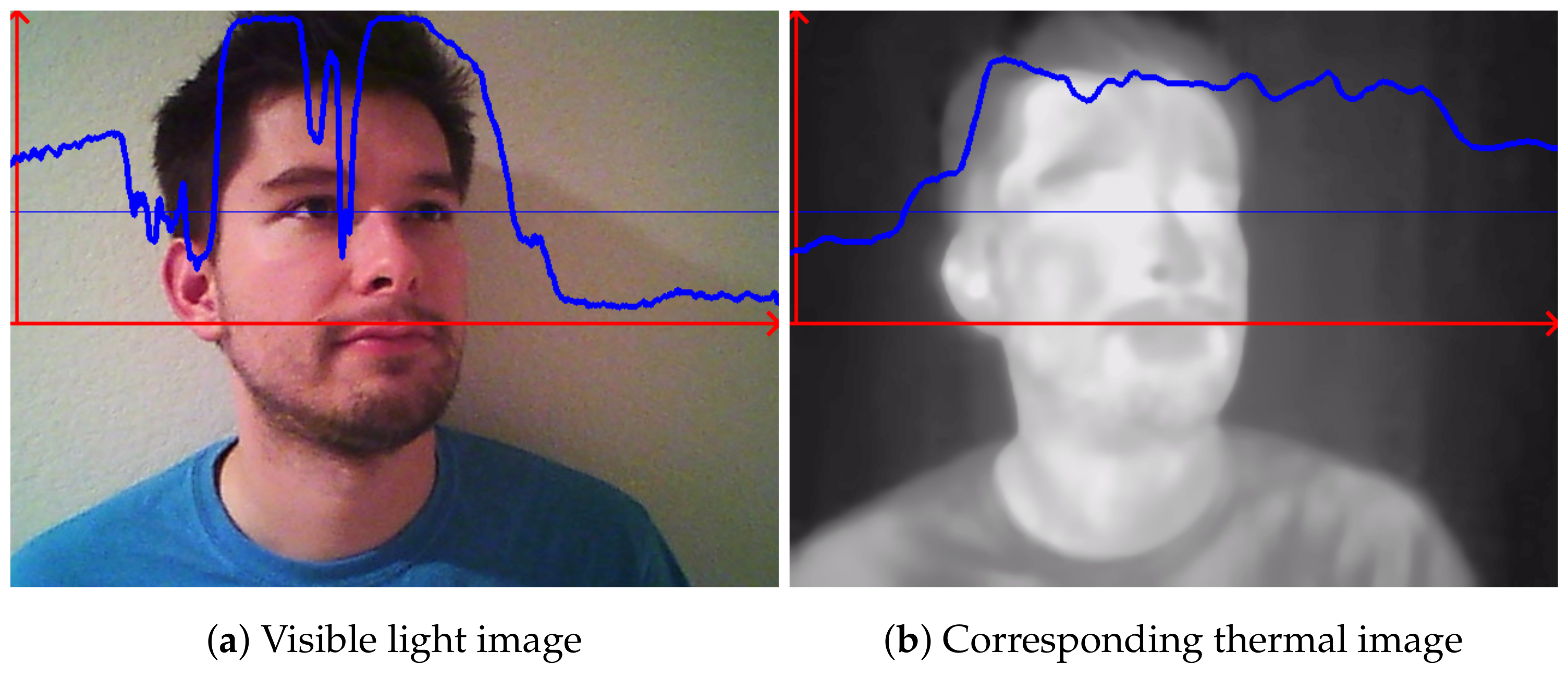

2.2.3. Color Changes Magnification

3. Results

4. Discussion

5. Materials and Methods

6. Conclusions

Author Contributions

Funding

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| CNN | Convolutional Neural Network |
| DL | Deep Learning |
| EVM | Eulerian Video Magnification |
| FPS | Frames Per Second |
| HR | High Resolution |
| LR | Low Resolution |
| NN | Neural Network |
| PSNR | Peak Signal to Noise Ratio |
| RR | Respiratory Rate |
| SISR | Single Image Super Resolution |
| SR | Super Resolution |
| SSIM | Structural Similarity Index |

| Scaling Factor | Dataset | Bit Resolution | PSNR (Test Set) | SSIM (Test Set) |
|---|---|---|---|---|
| 1/2 and 2 | Lepton Bicubic | 8 bits | 41.82 ± 0.55 | 0.96 ± 0.01 |
| 1/2 and 2 | Lepton Bicubic | 16 bits | 63.82 ± 0.73 | 0.99 ± 0.01 |
| 1/2 and 2 | Lepton SR | 8 bits | 43.21 ± 0.33 | 0.97 ± 0.01 |
| 1/2 and 2 | Lepton SR | 16 bits | 72.92 ± 4.47 | 0.99 ± 0.01 |
| 1/4 and 4 | Lepton Bicubic | 8 bits | 39.91 ± 0.46 | 0.81 ± 0.11 |
| 1/4 and 4 | Lepton Bicubic | 16 bits | 61.31 ± 0.69 | 0.99 ± 0.01 |
| 1/4 and 4 | Lepton SR | 8 bits | 42.18 ± 0.24 | 0.95 ± 0.01 |
| 1/4 and 4 | Lepton SR | 16 bits | 67.34 ± 5.83 | 0.99 ± 0.02 |
| 1/2 and 2 | SC3000 Bicubic | 8 bits | 42.69 ± 3.36 | 0.81 ± 0.22 |
| 1/2 and 2 | SC3000 Bicubic | 16 bits | 68.98 ± 1.02 | 0.99 ± 0.01 |
| 1/2 and 2 | SC3000 SR | 8 bits | 43.61 ± 0.18 | 0.96 ± 0.01 |
| 1/2 and 2 | SC3000 SR | 16 bits | 70.05 ± 0.91 | 0.99 ± 0.01 |
| 1/4 and 4 | SC3000 Bicubic | 8 bits | 41.36 ± 2.30 | 0.79 ± 0.21 |
| 1/4 and 4 | SC3000 Bicubic | 16 bits | 65.74 ± 0.95 | 0.99 ± 0.01 |
| 1/4 and 4 | SC3000 SR | 8 bits | 43.97 ± 0.22 | 0.96 ± 0.01 |
| 1/4 and 4 | SC3000 SR | 16 bits | 66.50 ± 0.93 | 0.99 ± 0.01 |
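The 16-bit rows in the table above report considerably higher PSNR than the 8-bit rows. One likely contributor, assuming the conventional PSNR definition with a peak value of 2^b − 1 for b-bit data, is that the peak term grows with bit depth. The sketch below illustrates only that definition; the function name `psnr`, its `bit_depth` parameter, and the pixel values are hypothetical and are not taken from the study, whose exact computation is not given here.

```python
import math


def psnr(reference, test, bit_depth=8):
    """Return PSNR in dB for two equally sized sequences of pixel values.

    Assumes the conventional definition 10 * log10(peak^2 / MSE),
    with peak = 2**bit_depth - 1.
    """
    if len(reference) != len(test):
        raise ValueError("inputs must contain the same number of pixels")
    peak = 2 ** bit_depth - 1  # 255 for 8-bit data, 65535 for 16-bit data
    mse = sum((r - t) ** 2 for r, t in zip(reference, test)) / len(reference)
    if mse == 0:
        return math.inf  # identical inputs have no error
    return 10.0 * math.log10(peak ** 2 / mse)


# Hypothetical flattened pixel values, not data from the study.
ref = [100, 120, 140, 160]
out = [101, 119, 141, 159]  # every pixel differs by exactly one level

psnr_8 = psnr(ref, out, bit_depth=8)
psnr_16 = psnr(ref, out, bit_depth=16)
print(f"8-bit peak: {psnr_8:.2f} dB, 16-bit peak: {psnr_16:.2f} dB")
```

With the same absolute error, changing only the peak value raises the result, so PSNR values at different bit depths are not directly comparable. In real 16-bit data the absolute errors are usually larger as well, so this is an illustration of the formula rather than an explanation of the reported gap.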

| Scaling Factor | Dataset | Bit Resolution | RMSE: eRR_sp, Average | RMSE: eRR_sp, Skewness | RMSE: eRR_as, Average | RMSE: eRR_as, Skewness |
|---|---|---|---|---|---|---|
| 0 | Lepton | 8 bits | 4.97 | 6.28 | 15.61 | 12.80 |
| 0 | Lepton | 16 bits | 5.15 | 6.35 | 5.68 | 7.39 |
| 0 | Lepton * EVM | 8 bits | 5.58 | 7.04 | 9.40 | 11.94 |
| 0 | Lepton * EVM | 16 bits | 5.58 | 6.81 | 7.98 | 11.28 |
| 1/2 and 2 | Lepton Bicubic | 8 bits | 5.66 | 7.21 | 9.14 | 7.96 |
| 1/2 and 2 | Lepton Bicubic | 16 bits | 4.93 | 7.20 | 8.08 | 7.77 |
| 1/2 and 2 | Lepton SR | 8 bits | 4.89 | 5.64 | 4.95 | 6.21 |
| 1/2 and 2 | Lepton SR | 16 bits | 4.93 | 6.72 | 6.29 | 7.41 |
| 1/4 and 4 | Lepton Bicubic | 8 bits | 5.61 | 7.64 | 8.57 | 7.90 |
| 1/4 and 4 | Lepton Bicubic | 16 bits | 6.40 | 7.32 | 8.37 | 7.34 |
| 1/4 and 4 | Lepton SR | 8 bits | 4.96 | 5.93 | 7.41 | 12.25 |
| 1/4 and 4 | Lepton SR | 16 bits | 4.89 | 6.10 | 10.31 | 8.00 |
| 0 | SC3000 | 8 bits | 3.48 | 3.59 | 17.19 | 11.06 |
| 0 | SC3000 | 16 bits | 3.61 | 5.61 | 12.11 | 14.72 |
| 0 | SC3000 EVM | 8 bits | 5.00 | 6.15 | 5.98 | 7.82 |
| 0 | SC3000 EVM | 16 bits | 4.56 | 6.09 | 5.84 | 7.65 |
| 1/2 and 2 | SC3000 Bicubic | 8 bits | 6.35 | 6.04 | 17.05 | 11.26 |
| 1/2 and 2 | SC3000 Bicubic | 16 bits | 5.91 | 8.46 | 34.43 | 14.52 |
| 1/2 and 2 | SC3000 SR | 8 bits | 2.94 | 2.46 | 5.56 | 4.27 |
| 1/2 and 2 | SC3000 SR | 16 bits | 4.09 | 3.59 | 8.39 | 8.90 |
| 1/4 and 4 | SC3000 Bicubic | 8 bits | 5.73 | 8.23 | 17.05 | 11.65 |
| 1/4 and 4 | SC3000 Bicubic | 16 bits | 5.73 | 6.32 | 14.31 | 11.35 |
| 1/4 and 4 | SC3000 SR | 8 bits | 3.48 | 5.11 | 13.36 | 12.12 |
| 1/4 and 4 | SC3000 SR | 16 bits | 3.48 | 5.38 | 14.48 | 14.61 |
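For readers unfamiliar with the error measure in the table above, the following is a minimal sketch of how a root-mean-square error between estimated and reference respiratory rates can be computed. The function name `rmse`, the sample values, and the assumption that rates are expressed in breaths per minute are illustrative only; they are not taken from the study.

```python
import math


def rmse(estimates, references):
    """Return the root-mean-square error between paired values."""
    if len(estimates) != len(references):
        raise ValueError("inputs must contain the same number of values")
    if not estimates:
        raise ValueError("inputs must not be empty")
    squared_errors = [(e - r) ** 2 for e, r in zip(estimates, references)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Hypothetical per-recording rates (assumed breaths per minute), not study data.
estimated = [14.0, 18.0, 12.0, 20.0]
reference = [15.0, 16.0, 12.0, 17.0]

error = rmse(estimated, reference)
print(f"RMSE: {error:.2f}")
```

Because the errors are squared before averaging, a single recording with a large deviation raises the RMSE more than several recordings with small deviations, which is worth keeping in mind when comparing rows with a few very large values.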

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Kwasniewska, A.; Ruminski, J.; Szankin, M. Improving Accuracy of Contactless Respiratory Rate Estimation by Enhancing Thermal Sequences with Deep Neural Networks. Appl. Sci. 2019, 9, 4405. https://doi.org/10.3390/app9204405
