Abstract

There is a need for multimodal strategies to keep research participants informed about study results. Our aim was to characterize the preferences of genomic research participants from two institutions along four dimensions of general research result updates: content, timing, mechanism, and frequency. Methods: We conducted a web-based cross-sectional survey that was administered from 25 June 2018 to 5 December 2018. Results: Of the 397 participants who completed the survey, most (96%) expressed a desire to receive research updates. Highly endorsed preferences included: update content (brief descriptions of major findings, descriptions of purpose and goals, and educational material); update timing (when the research is completed, when findings are reviewed, when findings are published, and when the study status changes); update mechanism (email with updates and email newsletter); and update frequency (every three months). A hierarchical cluster analysis based on the four update dimensions identified four profiles of participants with similar preference patterns. Very few participants in the largest profile were comfortable with budgeting less money for research activities so that researchers have money to set up services to send research result updates to study participants. Conclusion: Future studies may benefit from exploring preferences for research result updates, as we have in our study. In addition, this work provides evidence of a need for funders to incentivize researchers to communicate results to participants.

1. Introduction

Recruiting and retaining participants for biobanks and observational studies are well-known challenges for biomedical research [1,2]. Population biobanks are essential structures for storing and managing biological samples and information that can be used for research [3,4]. Because willingness to participate in biobanks is correlated with opportunities to be updated about the biobanks [5], soliciting participants' preferences for updates will be key to maintaining successful, patient-centered population biobanks. In particular, providing genomic research participants with opportunities to be updated on general research results holds promise for encouraging new and continued participation [6,7] and also offers potential value back to the participant as a form of reciprocity and a signal of respect [8]. Research participants, however, have different preferences for when and how they would like to be updated [9]. Thus, there is a need to understand whether there are distinct groups of individuals who have similar preferences for being updated about research (i.e., preference profiles). Knowing the preference profiles of a target research population can help researchers decide which update options to offer eligible participants at the time of study enrollment so that those options are inclusive. The aim of this project was to characterize the preference profiles of genomic study participants from two institutions.

There is broad recognition of a need for mechanisms that allow researchers to share results with participants [10]. Previous research on study participants’ preferences for research results has focused on three main areas: individual results, aggregate results, and general research results [11]. Individual results provide study participants with access to their own data, which may include lab measurements, genome sequences, responses to survey questions, etc. Aggregate results provide similar data types at an aggregate level. General research results include basic information about a study and its outcomes [12]. Helping participants to understand their individual results is considered a best practice and is supported in the literature [13,14,15]; however, many researchers are concerned about the feasibility of returning those data [16]. As highlighted in the National Academies of Sciences, Engineering, and Medicine guidance for a new research paradigm [17], there is a balance between the value and feasibility of returning results, with the justification for return being strongest when both are high. General research results may be considered the most feasible of the three types of results to return. Their value to study participants is similar to the value recognized with the return of aggregate results: affirming the value of their participation, building trust in the research enterprise, and educating participants about the research process [18]. Thus, even as it becomes more feasible to return individual results, the return of general research results will remain important.

There remain gaps in our knowledge of study participant preferences for the dissemination of general research results [19,20]. Biobanks have the capacity to generate genetic data that may have health implications for participants, which raises the need to address the return of individual results, aggregate results, and general research results.

Our study considers participant preferences for general research result updates along four dimensions: content, timing, mechanism, and frequency. We assessed the level of endorsement of each preference statement and ranked those statements within each of the four dimensions; identified profiles of individuals with similar preferences; and examined associations of preference profiles with opinions about using clinical information in research and with comfort with reallocating money from research activities to set up services that provide research result updates to participants.

2. Materials and Methods

This was a web-based cross-sectional survey study at two institutions (Johns Hopkins University (JHU) and Columbia University (CU)) of adult patients who had previously enrolled in a research study. The survey was administered from 25 July 2018 to 5 December 2018.

2.1. Recruitment Criteria and Survey Distribution

At JHU, we recruited patients who had been seen as inpatients or outpatients at Johns Hopkins Hospital, had participated in one of 35 studies registered with the database of Genotypes and Phenotypes (dbGaP, https://www.ncbi.nlm.nih.gov/gap/, accessed on 7 May 2021), and had a MyChart (patient portal) account that they had logged into within the last 12 months. Patients were excluded if they were known to be deceased, had previously opted out of being contacted for recruitment through MyChart, had an invalid or null email address, or had previously been contacted as part of a related pilot survey study. At CU, we recruited patients who had recently been seen in outpatient clinics at Columbia/New York-Presbyterian Hospital (including the Herbert Irving Comprehensive Cancer Center) and had consented to be re-contacted by email for research. The web-based Qualtrics survey was embedded in an email distributed by MyChart (at JHU) and by the site PI (at CU).

2.2. Measures

Our primary outcome was participants’ preferences for updates on general research results along the four dimensions described above, with social and demographic characteristics examined as potential modifiers of those preferences.

2.2.1. Social and Demographic Characteristics

Demographic measures included gender, age, ethnicity, race, and highest level of education. We also asked respondents to report their primary health care institution, whether English is their first language, and whether they remembered donating samples of any kind for use in research. We also asked respondents whether they wanted to be updated about general research results: they were asked whether they agreed or disagreed with three statements about desired types of updates (research on health topics I choose, research that uses samples and clinical information from my institution, and research that uses my samples and clinical information; Questions 6–8). Response options were on a 3-point Likert scale (agree, neither agree nor disagree, disagree). Taking an opt-in perspective, we labeled an individual as “want to be updated” if they answered “agree” to at least one of Questions 6–8; otherwise, they were labeled as “do not want/no preference to be updated.” See Supplementary Materials for the survey.
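The opt-in labeling rule can be written as a short function. This is an illustrative sketch only: it assumes a hypothetical coding in which each answer to Questions 6–8 is stored under the keys `"Q6"`, `"Q7"`, and `"Q8"` as `"agree"`, `"neither"`, or `"disagree"`, and it treats a skipped question as not agreeing, which the survey description does not specify.

```python
from typing import Mapping

# Hypothetical keys for Questions 6-8 (desired types of updates).
UPDATE_QUESTIONS = ("Q6", "Q7", "Q8")


def label_update_preference(responses: Mapping[str, str]) -> str:
    """Apply the opt-in rule: 'agree' to at least one of Questions 6-8.

    Assumption: a skipped question (missing key) counts as not agreeing.
    """
    if any(responses.get(q) == "agree" for q in UPDATE_QUESTIONS):
        return "want to be updated"
    return "do not want/no preference to be updated"


if __name__ == "__main__":
    example = {"Q6": "neither", "Q7": "agree", "Q8": "disagree"}
    print(label_update_preference(example))  # want to be updated
```

Because the rule is opt-in, a single “agree” is enough; “neither agree nor disagree” on all three items places a respondent in the second group.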

2.2.2. Preferences for Research Updates

Update content: Respondents were asked about their preference for each of seven types of content updates: number of published articles about the research, brief descriptions of the research, brief descriptions of major findings from the research, brief descriptions of any media coverage of the research, educational material about the research, community events about the research, and announcements about online platforms to interact with others with similar interests (Questions 10–16). Response options were on a 3-point Likert scale (high, medium, low).

Update timing: Respondents were asked about their preference for each of seven options for when to receive updates: when the research is completed, when research findings are reviewed (validated) by other researchers and clinicians, when research findings are published, when educational materials about the research are available, when there is a media release about the research, when there is a community event about the research, and when the status of the research changes (Questions 17–23). Response options were on a 3-point Likert scale (high, medium, low).

Update mechanism: Respondents were asked about their preference for each of five mechanisms to receive updates: a call on your phone to deliver a prerecorded message, a text (SMS) message, a mailed newsletter, an email, and an electronic newsletter by email (Questions 26–30). Response options were on a 3-point Likert scale (high, medium, low).

Update frequency: Respondents were asked how often they would like to receive updates about the research (Question 25): never, less than once a year, once a year, quarterly (once every 3 months), once a month, once every 2 weeks, once a week, and more than once a week. We created a three-group measure to represent a preference for update frequency: once a month or more frequent (once a month, once every 2 weeks, once a week, more than once a week); once every 3 months (quarterly); and once a year or less frequent (never, less than once a year, once a year).
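The collapse of the eight Question 25 options into three analysis groups is a fixed lookup. The sketch below assumes hypothetical option strings (for example, `"quarterly"` for “quarterly (once every 3 months)”); the grouping itself follows the text.

```python
from collections import Counter
from typing import Iterable

# Eight Question 25 options mapped to the three analysis groups.
# The option strings are a hypothetical coding of the survey responses.
FREQUENCY_GROUPS = {
    "never": "once a year or less frequent",
    "less than once a year": "once a year or less frequent",
    "once a year": "once a year or less frequent",
    "quarterly": "once every 3 months",
    "once a month": "once a month or more frequent",
    "once every 2 weeks": "once a month or more frequent",
    "once a week": "once a month or more frequent",
    "more than once a week": "once a month or more frequent",
}


def group_frequency(answer: str) -> str:
    """Collapse one Question 25 response into its three-group category."""
    return FREQUENCY_GROUPS[answer]  # raises KeyError for an unrecognized option


def tally_groups(answers: Iterable[str]) -> Counter:
    """Count respondents in each frequency group."""
    return Counter(group_frequency(a) for a in answers)
```

Raising an error on an unrecognized option, rather than silently assigning a group, keeps coding mistakes from leaking into the cluster analysis.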

2.2.3. Opinions about Research Focus and Budgeting

Interest in research focus: Respondents were asked whether it is important to them that their samples and clinical information are used in different types of research: research on a disease in general, a disease that affects a loved one, and diseases seen in their community (Questions 3–5). Response options were on a 3-point Likert scale (agree, neither agree nor disagree, disagree). An individual was labeled as interested in research focus if they answered “agree” to at least one of Questions 3–5; otherwise, they were labeled as not interested in/indifferent to research focus.

Comfort with budgeting less money for research: Respondents were asked if they would support budgeting a bit less money for research activities so that researchers have money to set up services to send research study updates to study participants (Question 31). Response options were yes, no, unsure. An individual was labeled as comfortable with less money for research if they answered yes to Question 31, and labeled as not comfortable/unsure if they answered no or unsure to Question 31.
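The two dichotomizations above can be sketched the same way. The keys `"Q3"` to `"Q5"` and the lowercase response strings are hypothetical codings; the decision rules follow the text, and an unexpected Question 31 value is treated as an error rather than guessed.

```python
from typing import Mapping

FOCUS_QUESTIONS = ("Q3", "Q4", "Q5")  # hypothetical keys for Questions 3-5


def label_research_focus(responses: Mapping[str, str]) -> str:
    """Interested if 'agree' to at least one of Questions 3-5."""
    if any(responses.get(q) == "agree" for q in FOCUS_QUESTIONS):
        return "interested in research focus"
    return "not interested in/indifferent to research focus"


def label_budget_comfort(q31: str) -> str:
    """Dichotomize Question 31 (yes / no / unsure)."""
    if q31 == "yes":
        return "comfortable with less money for research"
    if q31 in ("no", "unsure"):
        return "not comfortable/unsure"
    raise ValueError(f"unexpected Question 31 response: {q31!r}")
```

Note that “unsure” is pooled with “no”, so the comfortable group is a conservative (explicit yes only) count.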

2.3. Analytical Strategy

Descriptive analyses were used for social and demographic characteristics and research update preferences.

We assessed the level of endorsement of each preference statement and ranked the statements within each dimension by the number of individuals indicating that they agreed with each statement, from the largest (rank 1) to the smallest. We hypothesized that preference statements with high endorsement (>50% of the survey respondents) would be content types that are already routinely prepared by research teams (e.g., descriptions of study purpose and goals), time points at which research teams commonly provide updates (e.g., when the research is completed), digitally based mechanisms (e.g., email or SMS text updates), and frequencies of once a year or more (e.g., once every three months, once a month or more frequent).
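A minimal sketch of the ranking and the >50% high-endorsement flag for one dimension follows. The tie-handling rule (ties keep input order and get consecutive ranks) is an assumption, since the text does not describe it, and the counts in the usage example are toy values for 10 hypothetical respondents, not the study's data.

```python
from typing import Dict, List, Tuple

HIGH_ENDORSEMENT_PCT = 50.0  # threshold: >50% of survey respondents


def rank_statements(
    endorse_counts: Dict[str, int], n_respondents: int
) -> List[Tuple[int, str, float, bool]]:
    """Rank statements in one dimension from most (rank 1) to least endorsed.

    Returns (rank, statement, percent endorsing, high endorsement?) tuples.
    Ties keep their input order (sorted() is stable) and receive consecutive
    ranks; this tie rule is an assumption.
    """
    ordered = sorted(endorse_counts.items(), key=lambda kv: kv[1], reverse=True)
    ranked = []
    for rank, (statement, count) in enumerate(ordered, start=1):
        pct = 100.0 * count / n_respondents
        ranked.append((rank, statement, round(pct, 1), pct > HIGH_ENDORSEMENT_PCT))
    return ranked


if __name__ == "__main__":
    # Toy counts for 10 hypothetical respondents, not the study's data.
    toy = {"media coverage": 3, "major findings": 8, "purpose and goals": 6}
    for row in rank_statements(toy, n_respondents=10):
        print(row)
```

The threshold is strict (>50%), so a statement endorsed by exactly half of respondents would not be flagged as highly endorsed.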

We tested our hypothesis that there would be distinct preference profiles among surveyed individuals by conducting a hierarchical cluster analysis based on the four dimensions of general research result updates: content, timing, mechanism, and frequency. A dendrogram was created to show the hierarchical relationships among respondents with similar preference patterns. To further characterize the preference profiles, we compared clusters using χ2 tests. To test our hypothesis that preference profiles would be associated with different opinions about how clinical information is used in research, we conducted bivariate analyses using χ2 tests. We also tested associations with demographic characteristics using χ2 tests. All statistical analyses were conducted using R (version 3.6.2).
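The text does not state the distance measure or linkage used for the cluster analysis. The sketch below shows agglomerative hierarchical clustering under explicitly assumed choices: each respondent is coded as a 0/1 vector (1 = high endorsement of a statement, with the three-group frequency variable one-hot coded), the distance is the proportion of mismatched items, and clusters are joined by average linkage. It merges the closest pair until `k` clusters remain and records each merge height, which is what a dendrogram draws. In R, comparable steps are usually carried out with `dist()`, `hclust()`, and `cutree()`; this is not the authors' code.

```python
from itertools import combinations
from typing import List, Sequence, Tuple

Profile = Sequence[int]  # one respondent's 0/1 coded preferences (assumed coding)


def mismatch(a: Profile, b: Profile) -> float:
    """Proportion of coded items on which two respondents differ."""
    return sum(x != y for x, y in zip(a, b)) / len(a)


def average_linkage(c1: List[int], c2: List[int], data: Sequence[Profile]) -> float:
    """Mean pairwise distance between members of two clusters."""
    total = sum(mismatch(data[i], data[j]) for i in c1 for j in c2)
    return total / (len(c1) * len(c2))


def agglomerate(data: Sequence[Profile], k: int) -> Tuple[List[List[int]], list]:
    """Agglomerative clustering down to k clusters.

    Returns the clusters (sorted lists of row indices) and the merge history
    as (members_a, members_b, height) tuples, i.e. the content of a dendrogram.
    """
    clusters: List[List[int]] = [[i] for i in range(len(data))]
    merges = []
    while len(clusters) > k:
        # Pick the pair of clusters with the smallest average-linkage distance.
        a, b = min(
            combinations(range(len(clusters)), 2),
            key=lambda p: average_linkage(clusters[p[0]], clusters[p[1]], data),
        )
        height = average_linkage(clusters[a], clusters[b], data)
        merges.append((sorted(clusters[a]), sorted(clusters[b]), height))
        clusters[a] = clusters[a] + clusters[b]
        del clusters[b]  # safe because combinations() yields a < b
    return sorted(sorted(c) for c in clusters), merges
```

This naive version recomputes distances on every pass, which is fine for illustration but slow for hundreds of respondents; library implementations update the distance matrix incrementally.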

3. Results

3.1. Social and Demographic Characteristics

A total of 397 participants completed the survey. More than two thirds of the survey participants were female (268, 68%), nearly three quarters were 45 years or older (290, 73%), nearly a third had a bachelor’s degree (121, 31%), and 44% had a graduate or professional degree. The majority were non-Hispanic (362, 91%), with 84.6% non-Hispanic White. Most participants were JHU patients (313, 79%), with the remainder from CU. Most participants (382, 96%) wanted to be updated about the research (Table 1).

Table 1.

Social and demographic characteristics of the survey respondents.

3.2. Ranking of Preferences for Research Updates

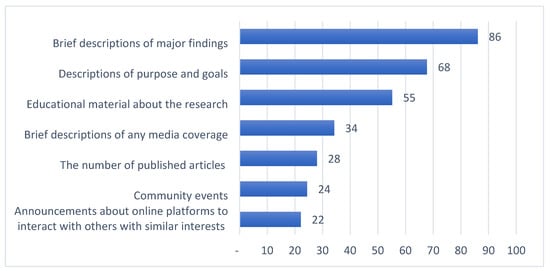

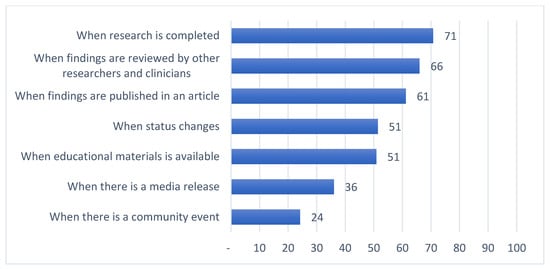

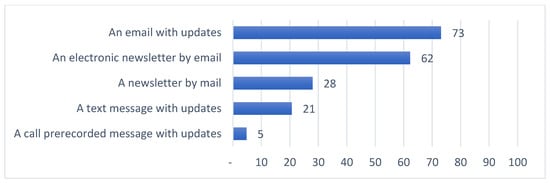

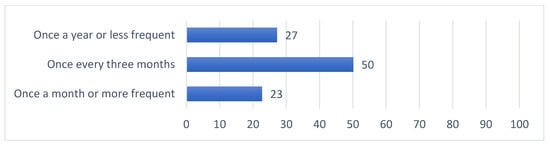

Summaries of preferences for updates on general research results along four dimensions are provided in Figure 1, Figure 2, Figure 3 and Figure 4. Among those preferences receiving a high level of endorsement (>50% of the survey respondents), the highest-ranked content type was brief descriptions of major findings, followed by descriptions of purpose and goals, and educational material about the research (Figure 1); the highest-ranked update timing was when the research is completed, followed by when findings are reviewed by other researchers and clinicians, when findings are published, and when the status of the study changes (Figure 2); the highest-ranked update mechanism was via email, followed by an electronic newsletter by email (Figure 3); and the highest-ranked update frequency was every three months (Figure 4).

Figure 1.

Percent of survey respondents indicating that they agree with each update content statement.

Figure 2.

Percent of survey respondents indicating that they agree with each update timing statement.

Figure 3.

Percent of survey respondents indicating that they agree with each update mechanism statement.

Figure 4.

Percent of survey respondents indicating that they agree with each update frequency statement.

3.3. Cluster Analyses to Identify Preference Profiles

Our cluster analysis identified four preference profiles:

- cluster 1 (n = 75): moderate value-driven and moderate engagement-driven (MVME)

- cluster 2 (n = 170): moderate value-driven and low engagement-driven (MVLE)

- cluster 3 (n = 69): low value-driven and low engagement-driven (LVLE)

- cluster 4 (n = 83): high value-driven and high engagement-driven (HVHE)

The strength of endorsement of the content type, timing, mechanism, and frequency attributes defines each preference profile (Table 2). The rankings within each preference dimension help to differentiate the four preference profiles. Most of the distinguishing preferences were in the content type and timing dimensions. For instance, receiving brief descriptions of any media coverage and receiving updates when there is a media release were top preferences for cluster 4, but were ranked lower than third for all other clusters. In addition, receiving the number of published articles was the lowest-ranked content type (rank 7) for clusters 3 and 4, but was ranked 4 for both clusters 1 and 2.

Table 2.

Percent of survey respondents endorsing a preference statement in each cluster and its ranking within each update dimension (content, timing, mechanism, and frequency).

Other timing preferences that distinguished the clusters were receiving updates when the status of the study changes (ranked differently in every cluster) and receiving updates when there is a community event (ranked high only in cluster 4). The preference to receive updates when the status of the study changes was ranked in the top 3 for clusters 1 and 3 but not for clusters 2 and 4 (ranked 5 and 4, respectively). Receiving updates when there is a community event was ranked lowest for all clusters except cluster 4 (ranked 2). Finally, the highest-ranked preference for update frequency was every three months for all clusters except cluster 1, where it was ranked 2; for cluster 1, the highest-ranked preference for update frequency was once a month or more.

To label the clusters, we considered the distinguishing preference dimension attributes. The media coverage and published articles content types conveyed the value of the research and were used to label clusters as low, moderate, or high value-driven. We also considered update timing, update frequency, and two update content types that conveyed engagement (community events and announcements about online platforms to interact with others) to label clusters as low, moderate, or high engagement-driven.

3.4. Opinions about Research Focus and Budgeting among Preference Profiles

There was a statistically significant difference among preference profiles in comfort with budgeting less money for research activities so that researchers have money to set up services to send research updates to participants (Table 3): in cluster 2, 13.5% (23/170) of respondents agreed with this statement vs. 21.7–31.3% of respondents in the three other clusters. There was no statistically significant difference in preference profile by interest in research focus.
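The comparison above has the form of a χ2 test of independence on a 4 × 2 table (cluster × comfortable vs. not comfortable/unsure), which has (4 − 1)(2 − 1) = 3 degrees of freedom. The sketch below computes the Pearson statistic and an upper-tail p-value in plain Python, using the standard closed-form recurrence for the chi-square survival function with integer degrees of freedom. It is an illustration, not the authors' R code; only the cluster 2 counts (23 of 170) are reported in the text, so no study table is reproduced here. In R, `chisq.test()` performs the same Pearson test and applies Yates' continuity correction only to 2 × 2 tables.

```python
import math
from typing import Sequence, Tuple


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail probability P(X > x) for a chi-square variable with integer df.

    Recurrence: Q(x; k + 2) = Q(x; k) + (x/2)^(k/2) * e^(-x/2) / Gamma(k/2 + 1),
    starting from Q(x; 1) = erfc(sqrt(x/2)) or Q(x; 2) = e^(-x/2).
    """
    if x <= 0:
        return 1.0
    if df % 2 == 0:
        q, k = math.exp(-x / 2), 2
    else:
        q, k = math.erfc(math.sqrt(x / 2)), 1
    while k < df:
        q += (x / 2) ** (k / 2) * math.exp(-x / 2) / math.gamma(k / 2 + 1)
        k += 2
    return q


def chi_square_independence(table: Sequence[Sequence[int]]) -> Tuple[float, int, float]:
    """Pearson chi-square test of independence for an r x c table of counts.

    Returns (statistic, degrees of freedom, p-value); no continuity correction.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            stat += (observed - expected) ** 2 / expected
    df = (len(table) - 1) * (len(table[0]) - 1)
    return stat, df, chi2_sf(stat, df)
```

As a sanity check, `chi2_sf(7.815, 3)` is approximately 0.05, matching the familiar 5% critical value for 3 degrees of freedom. The usual caveat applies: the approximation is unreliable when expected cell counts are small.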

Table 3.

Percent of survey respondents from each cluster indicating comfort with budgeting less money for research, and an importance to them that their samples and clinical information be used in certain types of research.

3.5. Characteristics of Preference Profiles

The demographic characteristics of the four preference profiles are shown in Table 4. There were no statistically significant differences in preference profiles by gender, age group, or education. However, the distribution of preference profiles differed between the two institutions with borderline significance (p = 0.047). Differences by demographic characteristics are summarized in Supplemental Materials Table S1.

Table 4.

Percent of survey respondents assigned to each cluster, according to demographic characteristics, health institution, and opinions about budgeting less money for research activities and interest in research focus.

4. Discussion

In this study, we explored preferences for updates on general research results, including their content, timing, mechanism, and frequency, among individuals who had previously donated samples and clinical information for use in genomic research (Figure 1, Figure 2, Figure 3 and Figure 4, Table 2). This work confirms findings in the literature indicating that most research participants want results from studies in which they participate [19,21,22,23,24,25,26]. A “one-size-fits-all” dissemination approach, however, is unlikely to address participant desires, given that we identified four distinct preference profiles. With respect to the specific preferences receiving high endorsement, our findings were mixed relative to our hypotheses.

First, as hypothesized, we found high endorsement of preferences to receive updates on content types that are already routinely prepared by research teams, including descriptions of study purpose and goals and brief descriptions of major findings. Three of the four clusters (clusters 1, 2, and 4) also showed high endorsement of both of these content types. With some revisions to target a lay audience, those descriptions may be repurposed for participants at low cost to the study team. There was also, however, high endorsement of one content type that research teams less often prepare: educational material about the research. Two clusters (clusters 1 and 4) showed high endorsement of updates on educational material. The desire for educational material about the research has been described in one prior study, in which participants wanted to know how research findings apply to health care and policy and what impact the findings have on future decision-making in health care [19].

Second, in support of our hypothesis that there would be a preference for updates at time points that are already common for research studies, we found high endorsement of preferences to receive updates when the research is completed; three clusters (clusters 1, 2, and 4) also showed high endorsement of this time point. For some forms of research, such as community-based research, it is already considered best practice for researchers to disseminate updates when the research is completed [19,26]. Less common time points that also received high endorsement included when findings are reviewed by other researchers and clinicians, when findings are published, and when the status of the study changes. Three clusters showed high endorsement for updates when findings are reviewed by others (clusters 1, 2, and 4), three for when findings are published (clusters 1, 2, and 4), and two for when the status of a study changes (clusters 1 and 4). The desire to be updated when findings are reviewed by other researchers and clinicians, and when findings are published, is consistent with the work of others indicating that study participants are willing to wait until results have been reviewed by other researchers for accuracy and until after the study has been published [24].

Third, as we hypothesized, preferences for update mechanisms showed high endorsement of digital approaches: email with updates and electronic newsletters by email. Three clusters (clusters 1, 2, and 4) also showed high endorsement of both email and electronic newsletters by email. Text (SMS) updates, however, did not receive high endorsement overall or in any cluster. Given that enabling text message updates may be more expensive than sending emails, this result adds to the literature showing that participants are open to receiving results through low-cost digital channels such as email and websites [23,24].

Fourth, there was high endorsement of preferences to receive updates every three months, and two clusters (clusters 2 and 4) also showed high endorsement of this frequency. This finding complements results from a focus group study in which participants preferred multiple contacts over time (at least every three months) to be kept informed [19]. Although studies registered with ClinicalTrials.gov must report updates when their recruitment status changes (e.g., ongoing, completed, terminated), these updates are not required to trigger communications with study participants. These findings highlight content types and mechanisms that research teams do not typically use, but that could be prioritized when designing research dissemination strategies.

In addition to finding several commonly endorsed preferences among clusters, we also identified several unique characteristics (Table 2 and Table 3). The MVME group (cluster 1) was the only cluster in which a majority of survey respondents preferred updates once a month or more often, suggesting a greater desire to stay informed than in other groups. In the largest preference profile (cluster 2, MVLE), few respondents wanted to take money away from research (14%, 23/170) and few endorsed more frequent updates (1%, 1/170, endorsing a preference for updates once a month or more often). The other three preference profiles included more individuals who felt comfortable with budgeting less money for research (20% to 33%) and who endorsed a preference for updates once a month or more often (17% to 74%). The smallest preference profile (cluster 3, LVLE) showed low endorsement of preferences in all four dimensions (<50% in every dimension). Distinct for cluster 4 (HVHE) was that a large majority endorsed a preference for updates when there is a media release about the research (92%, 76/83), compared with 7–33% in the other clusters.

Finally, we tested associations of preference profiles with participant characteristics and with opinions about research focus and about providing funding to update study participants (Table 3 and Table 4). Unlike other studies showing that preferences vary with study topic and participant characteristics [23,25], we did not find differences in opinions about research focus or in demographic characteristics between the preference profiles. Our finding of statistically significant differences between preference profiles in comfort with budgeting less money for research suggests an opportunity for funders to incentivize researchers to communicate results to participants, for example, by requiring updates to study participants and providing funding for them. Without such a budget, patients who want such feedback may be less likely to participate, and research may continue to recruit only a subset of target patient groups. Others have also encouraged funders to provide incentives for researchers, given that many now call for better dissemination of general research results [24].

4.1. Limitations

This study has some limitations. First, survey participants had already decided to participate in research, and most of them wanted to be updated about the research. Our study population, therefore, may not represent the general public with respect to motivations to participate in research. For example, personal/family benefit is a common motivator to participate in large-scale genomic sequencing studies [27]. Our selected studies offered no opportunity for personal/family benefit; thus, this was unlikely to be a motivator. Second, the demographic characteristics of the current study population differ from those of the general US population: the survey population was older, mostly white, highly educated, and predominantly female. Although the study population differs from the general population, other studies have shown that individuals who agree to participate in health-related studies also differ from the general population [28,29,30]. This may be due, at least in part, to ineffective outreach to groups that are less willing to participate. Others have found that a systematic plan to contact and track participants or potential participants may differentiate effective from ineffective interventions to recruit and retain study participants [31]. Our work helps lay the foundation for addressing this limitation by identifying different types of update content, mechanisms, timings, and frequencies that might be considered when developing a plan for recruiting and retaining participants.

4.2. Implications for Stakeholders

Our cluster analysis identified four different preference profiles among survey participants, adding to existing evidence of variability in the communication preferences of study participants. There is a growing desire to attract diverse populations (with potentially diverse views on which results are valuable) to participate in initiatives such as the All of Us Research Program [20,32]. A multi-pronged approach is required to meet the needs and preferences of individuals from diverse populations. Although the range and granularity of data being collected in research are increasing, preferences regarding the types, timing, and approaches for returning results to participants remain largely uncharted territory [20]. Models for returning general research results that are multidimensional and responsive to participant preferences hold promise for providing the most value to study participants [33].

One study, for example, found that focus group participants were open to a variety of pathways and platforms for receiving study findings [19]. Participants wanted control over how, when, and how often they received study results, and they wanted the opportunity to adjust the frequency and timing during the course of a longitudinal study. Furthermore, recent studies of the return of individual results have captured experiences with participant choice for the return of genomic results, showing that participants make different choices when offered options [34,35]. Such processes for offering options for the return of individual results might be extended to include general research results like those explored in this study.

Our efforts and those of others to characterize the desires of study participants justify multimodal strategies that could be considered when disseminating research findings. To lower the potential burden of providing research result updates, biobank data management systems might provide mechanisms that automate or semi-automate curating preferences and delivering some update types. As an important step in this direction, some groups have explored IT strategies to manage dynamic consent [36,37,38,39] that might be adapted for managing preferences for, and delivery of, research result updates. Future studies on processes to return results may benefit from exploring preference profiles, as we have in the current study, and also using those profiles in research result dissemination strategies.
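As one hypothetical illustration of semi-automated delivery, a data management system could store each participant's opted-in content types, timing triggers, and mechanisms, and select recipients when a trigger occurs. The class name `UpdatePreferences`, the function `recipients_for`, and all string labels are assumptions made for this sketch; frequency preferences (for example, batching updates into quarterly digests) are not modeled.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, List, Set


@dataclass
class UpdatePreferences:
    """Hypothetical per-participant record of update preferences."""
    participant_id: str
    content: Set[str] = field(default_factory=set)     # e.g. "major findings"
    timing: Set[str] = field(default_factory=set)      # e.g. "research completed"
    mechanisms: Set[str] = field(default_factory=set)  # e.g. "email"


def recipients_for(
    event: str, content_type: str, registry: Iterable[UpdatePreferences]
) -> Dict[str, List[str]]:
    """Group participants who opted into this event and content type by mechanism."""
    by_mechanism: Dict[str, List[str]] = {}
    for prefs in registry:
        if event in prefs.timing and content_type in prefs.content:
            for mechanism in sorted(prefs.mechanisms):
                by_mechanism.setdefault(mechanism, []).append(prefs.participant_id)
    return by_mechanism
```

Grouping by mechanism lets one update be rendered once per channel (for example, one email and one newsletter item) rather than once per participant.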

5. Conclusions

This study adds an in-depth exploration of the specific preferences of research participants for different types of content, mechanisms, timings, and frequencies for updates on general research results. We also identified four preference profiles among survey participants who had already decided to participate in research, adding to existing evidence of variability in the communication preferences of study participants. Future studies on processes to return a range of research result types, including individual, aggregate, and general research results, may benefit from exploring preference profiles as we have in our study. Furthermore, this work provides evidence of a need for funders to incentivize researchers to communicate results to participants.

Supplementary Materials

The following are available online at https://www.mdpi.com/article/10.3390/jpm11050399/s1, Table S1: Demographic characteristics of survey respondents, by institution. Materials S1: Survey.

Author Contributions

Conceptualization, C.O.T., N.F.M., D.E.F., H.L. and D.M.; methodology, C.O.T. and N.F.M.; formal analysis, N.F.M.; investigation, C.O.T.; data curation, C.O.T. and N.F.M.; writing—original draft preparation, C.O.T., N.F.M. and D.M.; writing—review and editing, C.O.T., N.F.M., K.D.C., C.W., J.J.C., C.G.C., D.E.F., H.L., A.K.R., I.J.K., P.J.C., I.A.H. and D.M.; visualization, N.F.M.; supervision, C.O.T.; project administration, C.O.T., K.D.C. and D.M. All authors have read and agreed to the published version of the manuscript.

Funding

The third phase of the eMERGE Network was initiated and funded by the NHGRI through the following grants: U01HG8657 (Group Health Cooperative/University of Washington); U01HG8685 (Brigham and Women’s Hospital); U01HG8672 (Vanderbilt University Medical Center); U01HG8666 (Cincinnati Children’s Hospital Medical Center); U01HG6379 (Mayo Clinic); U01HG8679 (Geisinger Clinic); U01HG8680 (Columbia University Health Sciences); U01HG8684 (Children’s Hospital of Philadelphia); U01HG8673 (Northwestern University); U01HG8701 (Vanderbilt University Medical Center serving as the Coordinating Center); U01HG8676 (Partners Healthcare/Broad Institute); and U01HG8664 (Baylor College of Medicine).

Institutional Review Board Statement

The study was conducted according to the guidelines of the Declaration of Helsinki and was approved by the Institutional Review Board of Johns Hopkins University (protocol code IRB00107475, approved 1/5/2017).

Informed Consent Statement

Informed consent was obtained from all subjects involved in the study.

Data Availability Statement

The survey instrument used in this study is included in the Supplementary Materials. Data supporting Figures 1–4, Tables 1–3, and Supplementary Table S1 can be made available upon request.

Conflicts of Interest

The authors declare no conflict of interest.

References

1. Johnsson, L.; Helgesson, G.; Rafnar, T.; Halldorsdottir, I.; Chia, K.-S.; Eriksson, S.; Hansson, M.G. Hypothetical and factual willingness to participate in biobank research. Eur. J. Hum. Genet. 2010, 18, 1261–1264.

2. Boden-Albala, B.; Carman, H.; Southwick, L.; Parikh, N.S.; Roberts, E.; Waddy, S.; Edwards, R. Examining Barriers and Practices to Recruitment and Retention in Stroke Clinical Trials. Stroke 2015, 46, 2232–2237.

3. Caenazzo, L.; Tozzo, P. The Future of Biobanking: What Is Next? BioTech 2020, 9, 23.

4. Malsagova, K.; Kopylov, A.; Stepanov, A.; Butkova, T.; Sinitsyna, A.; Izotov, A.; Kaysheva, A. Biobanks—A Platform for Scientific and Biomedical Research. Diagnostics 2020, 10, 485.

5. Critchley, C.R.; Nicol, D.; Otlowski, M.F.; Stranger, M.J. Predicting intention to biobank: A national survey. Eur. J. Public Health 2010, 22, 139–144.

6. Mester, J.L.; Mercer, M.; Goldenberg, A.; Moore, R.A.; Eng, C.; Sharp, R.R. Communicating with Biobank Participants: Preferences for Receiving and Providing Updates to Researchers. Cancer Epidemiol. Biomark. Prev. 2015, 24, 708–712.

7. O’Daniel, J.; Haga, S. Public Perspectives on Returning Genetics and Genomics Research Results. Public Health Genom. 2011, 14, 346–355.

8. Mathews, D.J.H.; Jamal, L. Revisiting Respect for Persons in Genomic Research. Genes 2014, 5, 1–12.

9. Overby, C.L.; Maloney, K.A.; Alestock, T.D.; Chavez, J.; Berman, D.M.; Sharaf, R.M.; Fitzgerald, T.; Kim, E.-Y.; Palmer, K.; Shuldiner, A.R.; et al. Prioritizing Approaches to Engage Community Members and Build Trust in Biobanks: A Survey of Attitudes and Opinions of Adults within Outpatient Practices at the University of Maryland. J. Pers. Med. 2015, 5, 264–279.

10. Long, C.R.; Stewart, M.K.; McElfish, P.A. Health research participants are not receiving research results: A collaborative solution is needed. Trials 2017, 18, 449.

11. Knoppers, B.M.; Deschênes, M.; Zawati, M.H.; Tassé, A.M. Population studies: Return of research results and incidental findings Policy Statement. Eur. J. Hum. Genet. 2012, 21, 245–247.

12. Shalowitz, D.I.; Miller, F.G. Disclosing Individual Results of Clinical Research: Implications of respect for participants. JAMA 2005, 294, 737–740.

13. Jarvik, G.P.; Amendola, L.M.; Berg, J.S.; Brothers, K.; Clayton, E.W.; Chung, W.; Evans, B.J.; Evans, J.P.; Fullerton, S.M.; Gallego, C.J.; et al. Return of Genomic Results to Research Participants: The Floor, the Ceiling, and the Choices in between. Am. J. Hum. Genet. 2014, 94, 818–826.

14. McEwen, J.E.; Boyer, J.T.; Sun, K.Y. Evolving approaches to the ethical management of genomic data. Trends Genet. 2013, 29, 375–382.

15. Beskow, L.M.; Burke, W. Offering Individual Genetic Research Results: Context Matters. Sci. Transl. Med. 2010, 2, 38cm20.

16. Khodyakov, D.; Mendoza-Graf, A.; Berry, S.; Nebeker, C.; Bromley, E. Return of Value in the New Era of Biomedical Research—One Size Will Not Fit All. AJOB Empir. Bioeth. 2019, 10, 265–275.

17. Downey, A.S.; Busta, E.R.; Mancher, M.; Botkin, J.R. (Eds.) Returning Individual Research Results to Participants: Guidance for a New Research Paradigm; The National Academies Press: Washington, DC, USA, 2018.

18. Beskow, L.M.; Burke, W.; Fullerton, S.M.; Sharp, R.R. Offering aggregate results to participants in genomic research: Opportunities and challenges. Genet. Med. 2012, 14, 490–496.

19. Cook, S.; Mayers, S.; Goggins, K.; Schlundt, D.; Bonnet, K.; Williams, N.; Alcendor, D.; Barkin, S. Assessing research participant preferences for receiving study results. J. Clin. Transl. Sci. 2019, 4, 243–249.

20. Wong, C.A.; Hernandez, A.F.; Califf, R.M. Return of Research Results to Study Participants. JAMA 2018, 320, 435–436.

21. Shalowitz, D.I.; Miller, F.G. Communicating the Results of Clinical Research to Participants: Attitudes, Practices, and Future Directions. PLoS Med. 2008, 5, e91.

22. Augustine, E.F.; Dorsey, E.R.; Hauser, R.A.; Elm, J.J.; Tilley, B.C.; Kieburtz, K.K. Communicating with participants during the conduct of multi-center clinical trials. Clin. Trials 2016, 13, 592–596.

23. Elzinga, K.E.; Khan, O.F.; Tang, A.R.; Fernandez, C.V.; Elzinga, C.L.; Heng, D.Y.; Vickers, M.M.; Truong, T.H.; Tang, P.A. Adult patient perspectives on clinical trial result reporting: A survey of cancer patients. Clin. Trials 2016, 13, 574–581.

24. Long, C.R.; Stewart, M.K.; Cunningham, T.V.; Warmack, T.S.; McElfish, P.A. Health research participants’ preferences for receiving research results. Clin. Trials 2016, 13, 582–591.

25. Purvis, R.S.; Abraham, T.H.; Long, C.R.; Stewart, M.K.; Warmack, T.S.; McElfish, P.A. Qualitative study of participants’ perceptions and preferences regarding research dissemination. AJOB Empir. Bioeth. 2017, 8, 69–74.

26. Partridge, A.H.; Winer, E.P. Informing clinical trial participants about study results. JAMA 2002, 288, 363–365.

27. Scherr, C.L.; Aufox, S.; Ross, A.A.; Ramesh, S.; Wicklund, C.A.; Smith, M. What People Want to Know About Their Genes: A Critical Review of the Literature on Large-Scale Genome Sequencing Studies. Healthcare 2018, 6, 96.

28. Webb, F.J.; Khubchandani, J.; Striley, C.W.; Cottler, L.B. Black–White Differences in Willingness to Participate and Perceptions About Health Research: Results from the Population-Based HealthStreet Study. J. Immigr. Minor. Health 2019, 21, 299–305.

29. Fry, A.; Littlejohns, T.J.; Sudlow, C.; Doherty, N.; Adamska, L.; Sprosen, T.; Collins, R.; Allen, N.E. Comparison of Sociodemographic and Health-Related Characteristics of UK Biobank Participants with Those of the General Population. Am. J. Epidemiol. 2017, 186, 1026–1034.

30. Wendler, D.; Kington, R.; Madans, J.; Van Wye, G.; Christ-Schmidt, H.; Pratt, L.A.; Brawley, O.W.; Gross, C.P.; Emanuel, E. Are Racial and Ethnic Minorities Less Willing to Participate in Health Research? PLoS Med. 2005, 3, e19.

31. Mullarkey, M.C.; Dobias, M.; Maron, A.; Bearman, S.K. A systematic review of randomized trials for engaging socially disadvantaged groups in health research: A distillation approach. PsyArXiv 2019.

32. All of Us Research Program. All of Us Research Program Protocol; National Institutes of Health: Bethesda, MD, USA, 2020. Available online: https://allofus.nih.gov/about/all-us-research-program-protocol (accessed on 18 July 2020).

33. Kohane, I.S.; Taylor, P.L. Multidimensional Results Reporting to Participants in Genomic Studies: Getting It Right. Sci. Transl. Med. 2010, 2, 37cm19.

34. Hoell, C.; Wynn, J.; Rasmussen, L.V.; Marsolo, K.; Aufox, S.A.; Chung, W.K.; Connolly, J.J.; Freimuth, R.R.; Kochan, D.; Hakonarson, H.; et al. Participant choices for return of genomic results in the eMERGE Network. Genet. Med. 2020, 22, 1821–1829.

35. Bishop, C.L.; Strong, K.A.; Dimmock, D.P. Choices of incidental findings of individuals undergoing genome wide sequencing, a single center’s experience. Clin. Genet. 2017, 91, 137–140.

36. Teare, H.J.; Morrison, M.; Whitley, E.A.; Kaye, J. Towards ‘Engagement 2.0’: Insights from a study of dynamic consent with biobank participants. Digit. Health 2015, 1.

37. Thiel, D.B.; Platt, J.; Platt, T.; King, S.B.; Fisher, N.; Shelton, R.; Kardia, S.L.R. Testing an Online, Dynamic Consent Portal for Large Population Biobank Research. Public Health Genom. 2015, 18, 26–39.

38. Teare, H.J.A.; Hogg, J.; Kaye, J.; Luqmani, R.; Rush, E.; Turner, A.; Watts, L.; Williams, M.; Javaid, M.K. The RUDY study: Using digital technologies to enable a research partnership. Eur. J. Hum. Genet. 2017, 25, 816–822.

39. Aguilar-Quesada, R.; Aroca-Siendones, I.; de la Torre, L.; Panadero-Fajardo, S.; Rejón, J.; Sánchez-López, A.; Miranda, B. The Andalusian Registry of Donors for Biomedical Research: Five Years of History. BioTech 2021, 10, 6.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).