1. Introduction

Gangue sorting is one of the crucial processes in coal preparation because raw coal is mixed with gangue impurities during production. Gangue has a low calorific value and releases toxic gases, such as SO₂, CO, CO₂, and NOₓ, when burned, which reduces coal quality, pollutes the environment, and hinders the clean and efficient use of coal [1]. Existing coal gangue sorting methods mainly involve manual and mechanical sorting. As shown in Figure 1, when the raw coal flow enters the raw coal preparation workshop, iron and sundries are removed by the iron remover, and the coal then enters the raw coal classification screen for screening. Material with particle sizes of less than 50 mm directly enters the mechanical separation operation. Material with particle sizes of more than 50 mm is manually sorted to remove part of the sundries and the visible gangue, then crushed to a qualified particle size (less than 50 mm) and further separated using other mechanical separation methods such as a moving screen jig. In manual gangue sorting, illustrated in Figure 2, workers identify the gangue in the coal flow with the naked eye and pick it out by hand. The labor intensity is high and the working environment is poor: workers can very easily inhale fine particles (despite wearing protective masks) and can easily be injured by the high-speed belt or scraper conveyor, which seriously affects their health and poses great potential safety risks [2]. To keep sorting workers away from this harsh working environment, intelligent coal gangue sorting equipment, especially coal gangue sorting robots, has received considerable attention in the industry [3,4,5,6]. Coal gangue recognition is the foundation of intelligent sorting and a crucial technology for coal gangue sorting robots.

Investigations on coal gangue identification can be traced back to the 1960s, and more than 10 identification methods have been developed, such as the γ-ray, X-ray, photoelectric, and infrared methods [7,8,9,10,11]. Despite numerous accomplishments, these methods have bottlenecks, such as limited application scenarios, radiation hazards, and low recognition accuracy. With the development of image processing technology and machine learning, coal gangue identification research has shifted its focus to coal gangue image recognition. Wang, Li, and Yang [12] investigated coal gangue response characteristics under different illuminations and used an SVM classifier to identify coal and gangue. Zhao et al. [13] constructed a PSO-SVM model to recognize coal and gangue. Su et al. [14] and Pu et al. [15] introduced transfer learning for the identification of coal and gangue based on a convolutional neural network (CNN). Li et al. [16] proposed a hierarchical framework for coal gangue detection based on CNNs. McCoy and Auret [17] reviewed machine learning applications in mineral processing. Hou [18] established a coal gangue separation system based on the differences between coal and gangue in surface texture and grayscale features, and proposed a method combining image feature extraction with a feed-forward artificial neural network.

Alfarzaeai et al. [19] created a new CNN-based model called CGR-CNN that uses thermal images as standard images for coal gangue recognition. Lei et al. [3] constructed a deep visual neural network, the fast coal classification net (FCCN), based on CNN, and implemented a visual coal classification detection algorithm for coal gangue sorting robots. Liu, Li, et al. [1] studied coal gangue detection based on an enhanced YOLOv4. Li et al. [20] studied a coal gangue detection and recognition algorithm based on deformable convolution YOLOv3 (DCN-YOLOv3). Yan et al. [21] studied an intelligent classification method for coal gangue based on multispectral imaging technology and target detection by YOLOv5.1. In the coal preparation line, the state of gangue in the raw coal flow is diverse: it may be exposed outside the coal, or partially or fully covered by pulverized coal. Furthermore, extracting the features of a coal gangue image is challenging due to the harsh site conditions of low illumination and high dust. The coal gangue recognition methods presently available in the literature therefore have limited applications, and further study is required on the segmentation, enhancement, feature extraction, and recognition of coal gangue images. A coal gangue recognition algorithm based on deep learning does not require hand-crafted feature extraction. However, training the classifier needs a large number of labeled samples and a large amount of computation; thus, the requirements for lightweight and real-time performance pose a significant challenge to its field application. Li et al. [22] investigated the effects of illuminance and external moisture on the grayscale and texture features of coal and gangue images, which provided an essential guide for the image-based identification of coal and gangue under working conditions. Li and Gong [23] studied a preprocessing model for low-quality images of coal and gangue based on a bilateral filtering joint enhancement algorithm.

In summary, coal gangue recognition has been extensively investigated in the literature, but a satisfactory practical solution has not yet been provided. Wang, Li, and Yang [12] and Li et al. [4] studied coal gangue recognition models based on a support vector machine (SVM). However, these models suffered from low accuracy and insufficient robustness because SVM is sensitive to noise and its parameters are difficult to tune. There are few studies on the use of ensemble algorithms to improve the accuracy of SVM coal gangue classification.

Therefore, the novel contributions of this paper are as follows:

Aiming at the shortcomings of the SVM classifier for coal gangue recognition, this paper used a genetic optimization algorithm to improve its noise insensitivity and difficult parameter adjustment, used an adaptive boosting (AdaBoost) algorithm to enhance its recognition accuracy, and constructed a coal gangue recognition and classification model;

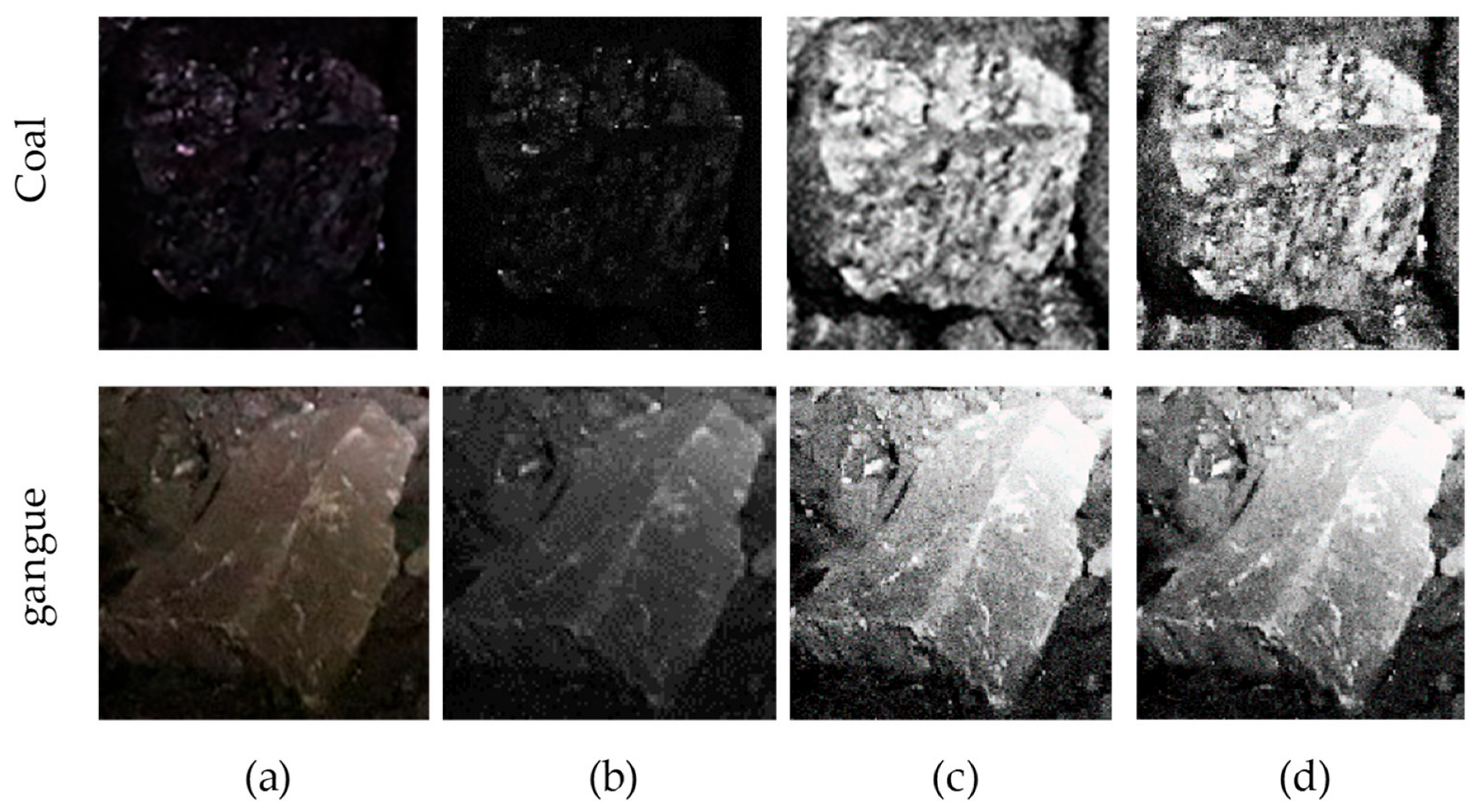

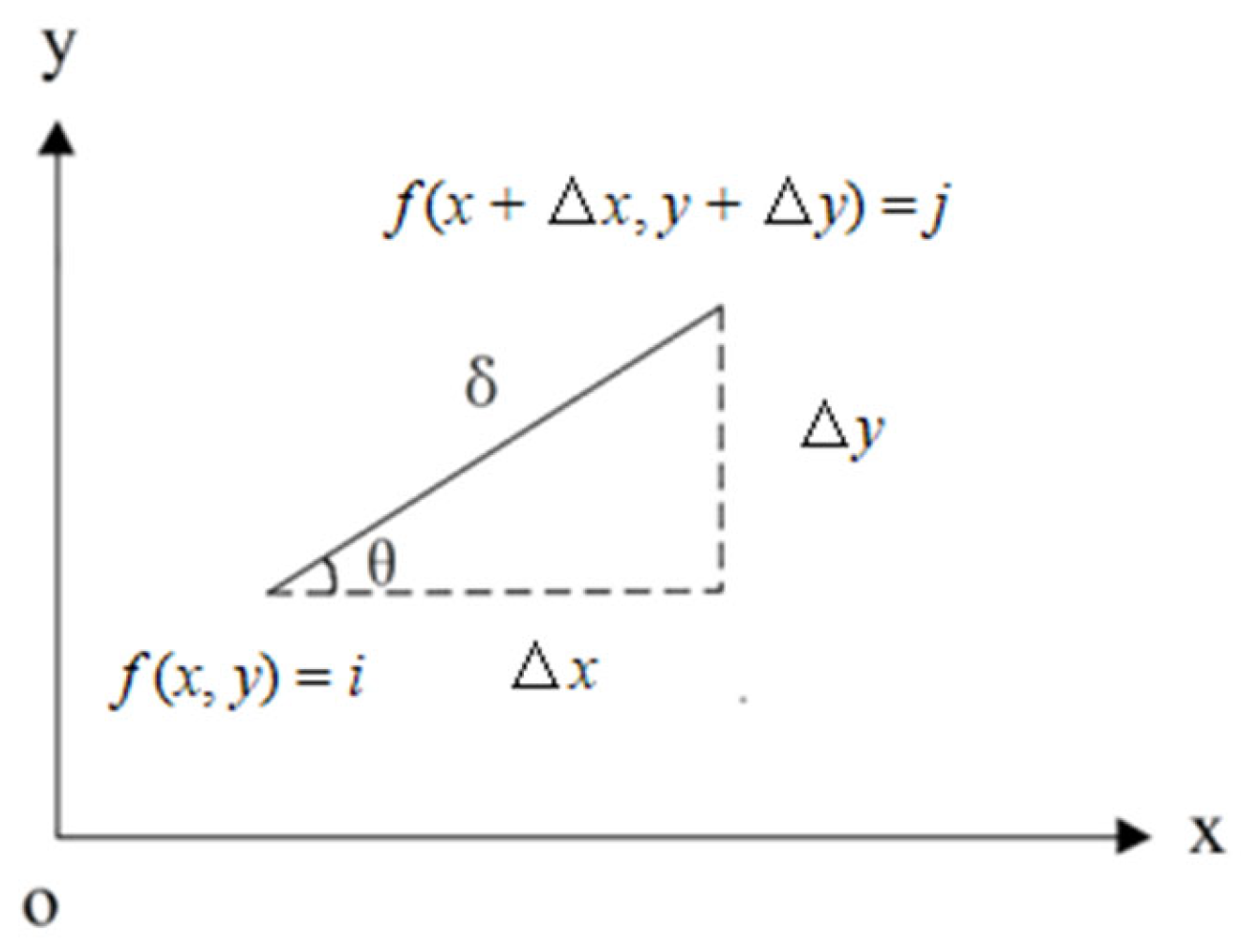

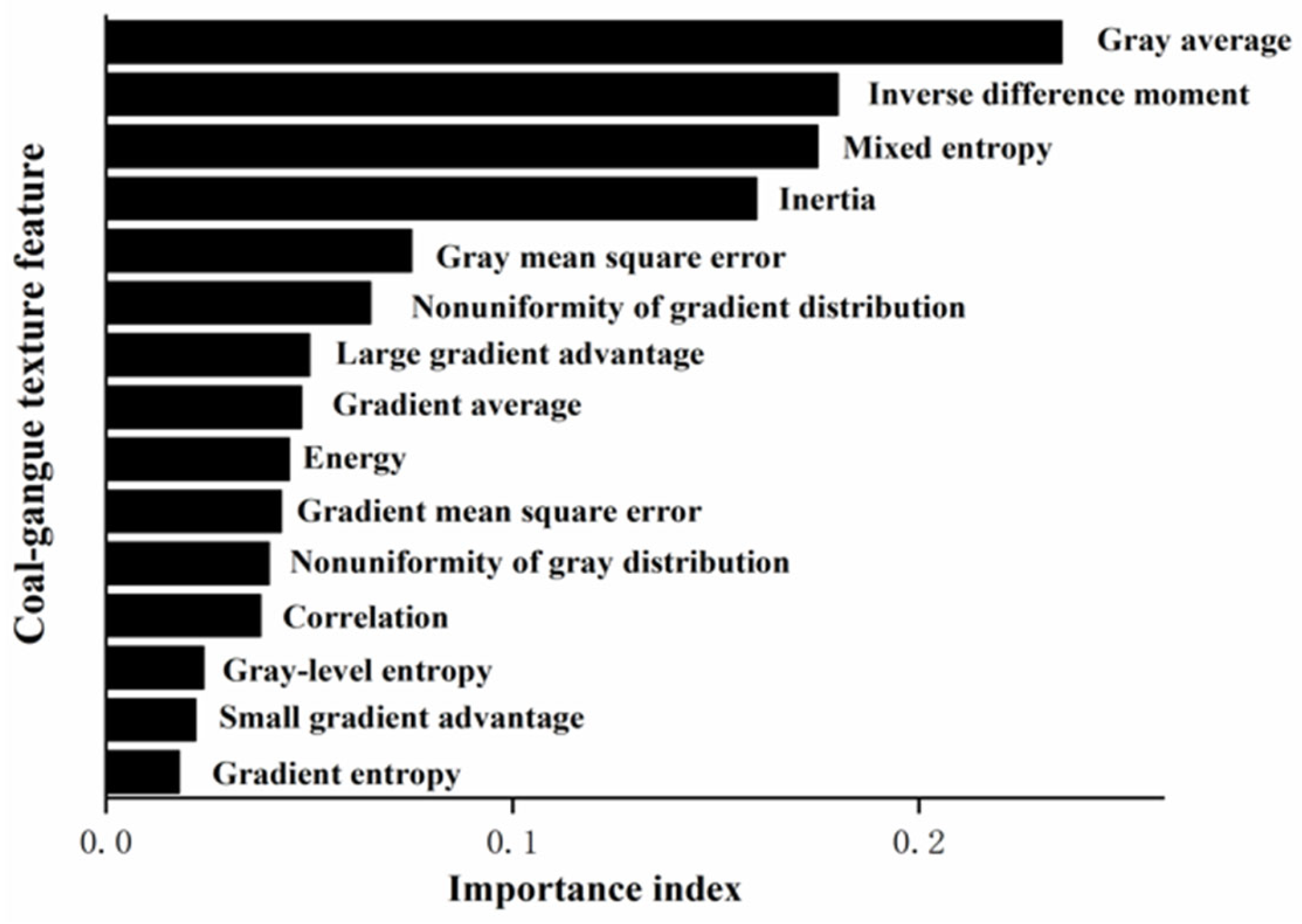

The indices of GGCM were introduced to characterize the features of the coal and gangue and a coal-gangue sample dataset was constructed with the coal and gangue images obtained by experiment and on-site to verify the performance of the proposed algorithm.

2. Principle and Theory

This section presents the proposed method and its algorithm. This study used an SVM classifier as the base classifier of the AdaBoost ensemble, and a genetic algorithm (GA) was employed to optimize the SVM parameters.

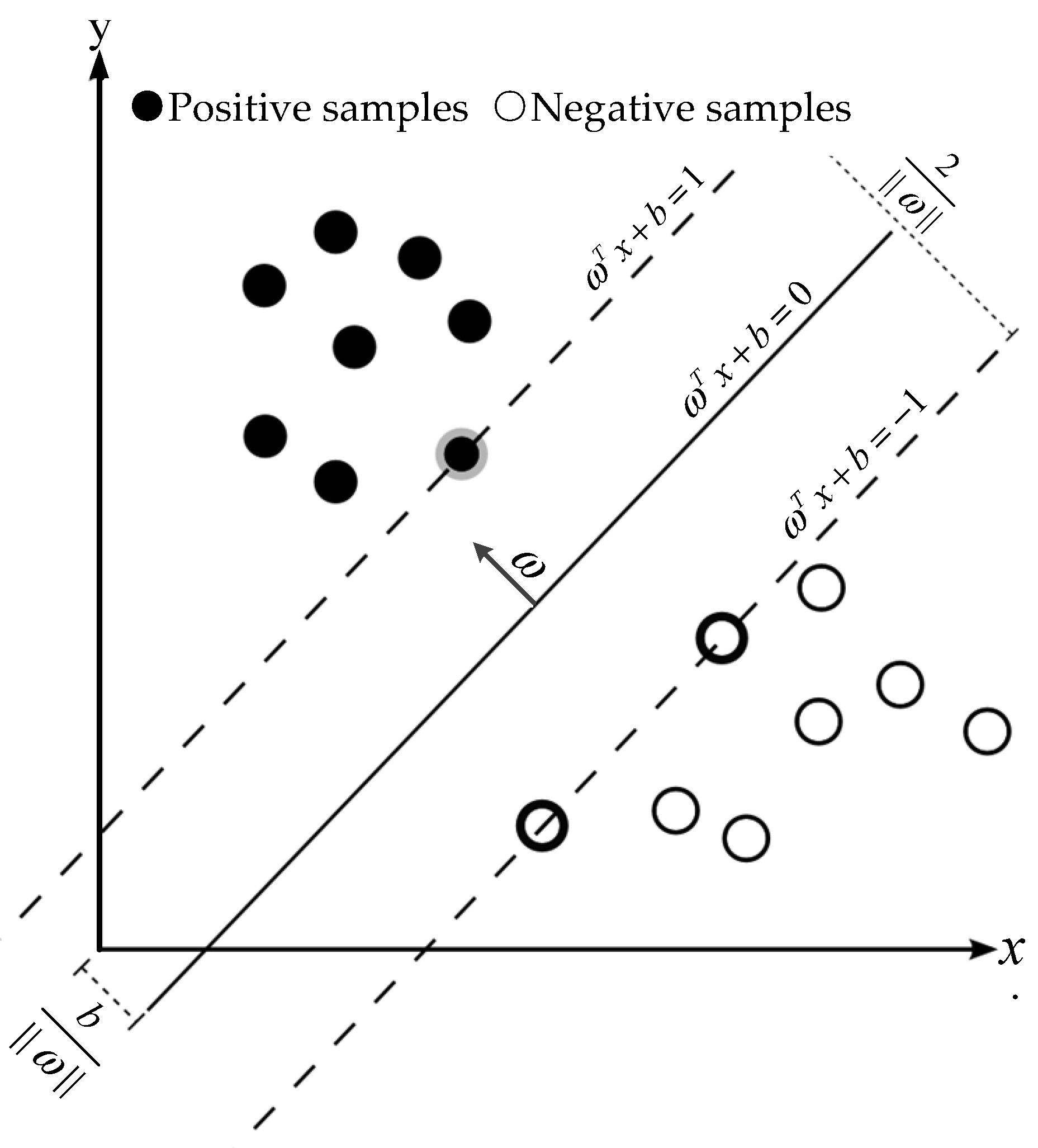

2.1. SVM Algorithm and SVM Classifier

SVM is a machine learning method based on the statistical learning theory proposed by Vapnik and Chervonenkis [24]. It is widely used in text classification, handwritten character recognition, and image classification owing to its excellent generalization performance and ability to process high-dimensional data. The fundamental principle is to find an optimal hyperplane that meets the classification requirements and has the largest margin, as presented in Figure 3.

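Let $D = \{(x_i, y_i),\ i = 1, 2, \ldots, N\}$, $x_i \in \mathbb{R}^{n}$, $y_i \in \{-1, +1\}$, where $x_i$ is the sample data to be classified and $y_i$ is the label of $x_i$. The classification plane $(\omega, b)$ can be described using the following linear equation:

$$\omega^{T} x + b = 0 \tag{1}$$

where $\omega$ denotes the normal vector to the classification plane, and $b$ indicates the displacement term. For any sample in the linearly separable sample set, there are

$$\begin{cases} \omega^{T} x_i + b \geq +1, & y_i = +1 \\ \omega^{T} x_i + b \leq -1, & y_i = -1 \end{cases} \tag{2}$$

Equation (2) can be abbreviated as

$$y_i\left(\omega^{T} x_i + b\right) \geq 1, \quad i = 1, 2, \ldots, N \tag{3}$$

Among all the samples $x_i$, those closest to the classification plane satisfy the equality in Equation (2) and are called support vectors. The sum of the distances $\gamma$ from two support vectors of opposite classes to the hyperplane is

$$\gamma = \frac{2}{\|\omega\|} \tag{4}$$

The hyperplane with the largest distance $\gamma$ is the optimal hyperplane. Then, the classification problem is transformed into the problem of finding the optimal hyperplane, namely, finding the optimal parameters $\omega$ and $b$ in Equation (1) under constraints

$$\max_{\omega, b}\ \frac{2}{\|\omega\|} \quad \text{s.t.} \quad y_i\left(\omega^{T} x_i + b\right) \geq 1, \quad i = 1, 2, \ldots, N \tag{5}$$

or:

$$\min_{\omega, b}\ \sqrt{\omega^{T} \omega} \quad \text{s.t.} \quad y_i\left(\omega^{T} x_i + b\right) \geq 1, \quad i = 1, 2, \ldots, N \tag{6}$$

For calculation convenience, remove the root sign in Equation (6), then

$$\min_{\omega, b}\ \frac{1}{2}\|\omega\|^{2} \quad \text{s.t.} \quad y_i\left(\omega^{T} x_i + b\right) \geq 1, \quad i = 1, 2, \ldots, N \tag{7}$$

If the Lagrange function is introduced into the above formula, there is

$$L(\omega, b, \alpha) = \frac{1}{2}\|\omega\|^{2} - \sum_{i=1}^{N} \alpha_i \left[ y_i\left(\omega^{T} x_i + b\right) - 1 \right] \tag{8}$$

where the Lagrange multiplier $\alpha_i \geq 0$. Then, the problem of finding the optimal classification plane comes down to solving

$$\max_{\alpha}\ \sum_{i=1}^{N} \alpha_i - \frac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_i \alpha_j y_i y_j x_i^{T} x_j \quad \text{s.t.} \quad \sum_{i=1}^{N} \alpha_i y_i = 0, \quad \alpha_i \geq 0 \tag{9}$$

For the nonlinear classification problem, the kernel function $K(x_i, x_j)$ is introduced, and the classification problem is reduced to

$$\max_{\alpha}\ \sum_{i=1}^{N} \alpha_i - \frac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_i \alpha_j y_i y_j K(x_i, x_j) \quad \text{s.t.} \quad \sum_{i=1}^{N} \alpha_i y_i = 0, \quad 0 \leq \alpha_i \leq C \tag{10}$$

where $C$ denotes the penalty factor. The final classification discriminant function is defined as

$$f(x) = \operatorname{sgn}\left( \sum_{i=1}^{N} \alpha_i y_i K(x_i, x) + b \right) \tag{11}$$

where $\operatorname{sgn}(x)$ is the sign discrimination function. When $x > 0$, it returns 1; otherwise, it returns −1.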
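As an illustration of how the final discriminant function is evaluated, the following minimal sketch computes the sign of the kernel-weighted sum over the support vectors. The Gaussian (RBF) kernel, its parameter `g`, the function names, and the two hand-picked support vectors are assumptions made for this example only; labels are taken to be −1 and +1, so `sgn` returns −1 for non-positive scores.

```python
import math

def rbf_kernel(u, v, g=0.5):
    """Gaussian (RBF) kernel K(u, v) = exp(-g * ||u - v||^2) (assumed kernel)."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(u, v))
    return math.exp(-g * sq_dist)

def sgn(value):
    """Sign function for labels in {-1, +1}."""
    return 1 if value > 0 else -1

def svm_decide(x, support_vectors, labels, alphas, b, kernel=rbf_kernel):
    """Evaluate sgn(sum_i alpha_i * y_i * K(x_i, x) + b)."""
    score = sum(a * y * kernel(sv, x)
                for sv, y, a in zip(support_vectors, labels, alphas))
    return sgn(score + b)

# Hypothetical trained model: one support vector per class.
support_vectors = [(0.0, 0.0), (2.0, 2.0)]
labels = [-1, 1]
alphas = [1.0, 1.0]   # satisfies sum_i alpha_i * y_i = 0
b = 0.0

print(svm_decide((1.8, 2.1), support_vectors, labels, alphas, b))   # 1
print(svm_decide((0.1, -0.2), support_vectors, labels, alphas, b))  # -1
```

Only the support vectors enter the sum, so prediction cost scales with their number rather than with the size of the training set.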

The loss function of SVM is an unbounded convex function that applies the same penalty rule to every misclassified sample, so noisy or mislabeled samples can dominate training. SVM is therefore exceptionally susceptible to noise, even though this loss ensures the confidence of the classification results. In addition, SVM is sensitive to its parameters, is difficult to tune, and yields unstable classification accuracy. A coal gangue recognition model based on the basic SVM algorithm consequently lacks accuracy and robustness, which limits its effectiveness for coal gangue recognition.
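The effect of an unbounded loss can be seen in a small numerical sketch. It assumes that the loss in question is the standard hinge loss, which the text does not name explicitly; the function name and sample values are hypothetical.

```python
def hinge_loss(y, score):
    """Hinge loss max(0, 1 - y * score) for a label y in {-1, +1}."""
    return max(0.0, 1.0 - y * score)

# A correctly classified sample outside the margin costs nothing.
print(hinge_loss(+1, 2.0))    # 0.0
# A mislabeled (noisy) sample is penalized in proportion to its distance
# on the wrong side, with no upper bound.
print(hinge_loss(-1, 2.0))    # 3.0
print(hinge_loss(-1, 20.0))   # 21.0
```

Because the penalty keeps growing with the distance from the boundary, a few noisy samples can pull the separating hyperplane toward them.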

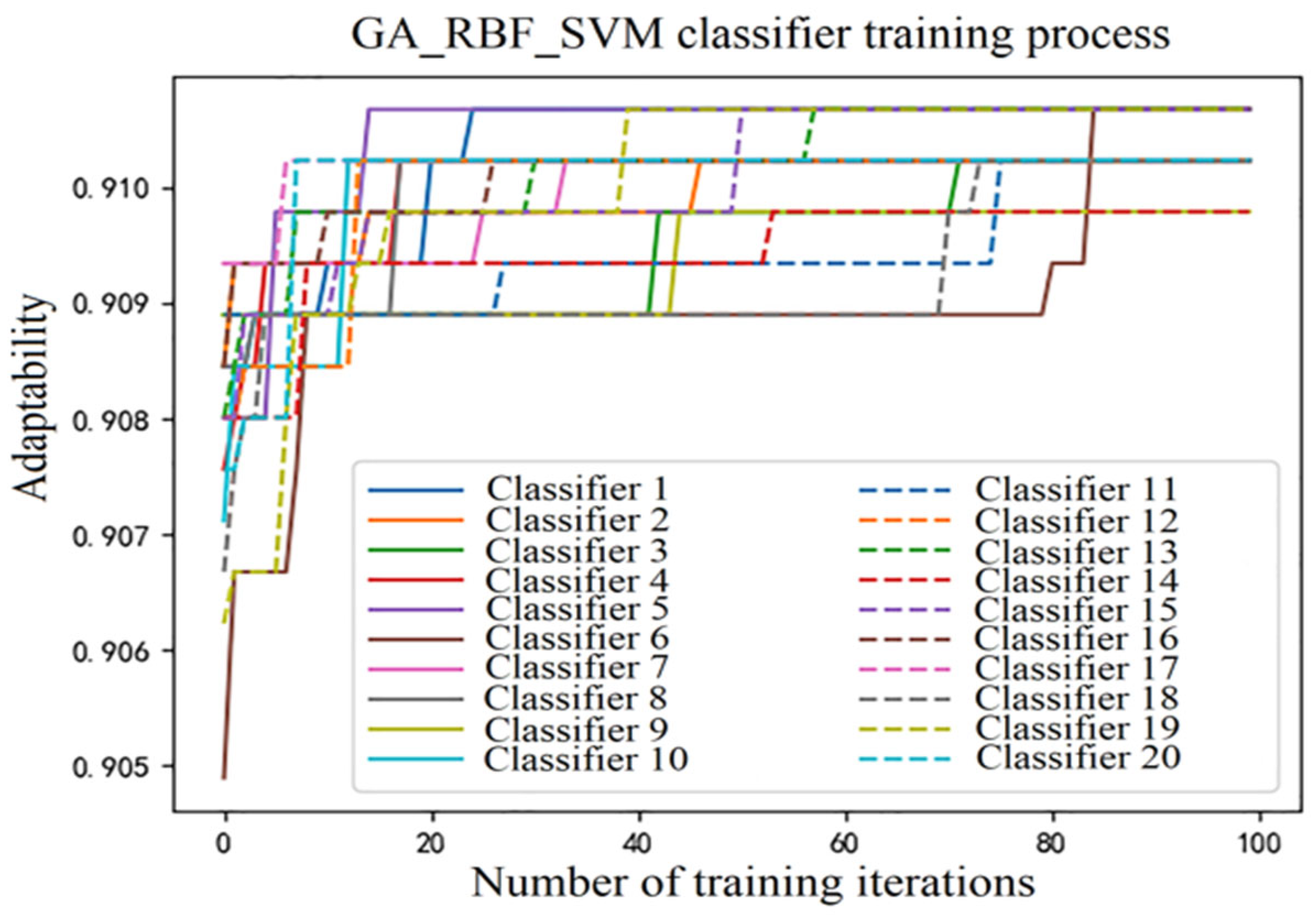

2.2. GA Optimization and SVM Base Classifier Construction

Parameter optimization algorithms, such as the GA [25], the particle swarm optimization (PSO) algorithm [26], and the ant colony algorithm [27], can adaptively search for the global optimum and effectively reduce the difficulty of tuning the parameters of the SVM model. This paper introduced GA into the SVM algorithm to optimize the penalty factor and kernel function parameters, thereby creating the GA-SVM model.

GA is a method for searching for the optimal solution by simulating the natural evolutionary process. This algorithm converts problem-solving into a process that is similar to the crossover and mutation of chromosomal genes in biological evolution. Owing to its strong robustness, it is widely used in combinatorial optimization, machine learning, signal processing, adaptive control, and artificial life. The optimization process of the GA is as follows:

- (1) Set the evolutionary iteration counter t = 0 and the maximum number of evolutionary iterations T, and randomly generate M individuals as the initial population P(0);

- (2) Calculate the fitness of each individual in the population P(t);

- (3) Obtain the next generation’s population P(t + 1) through selection, crossover, and mutation of population P(t);

- (4) Judge whether the termination condition is reached. If t < T, set t = t + 1 and return to Step 2; otherwise, if t = T, terminate the evolution;

- (5) Take the individual with the greatest fitness obtained in the evolution process as the optimal solution, and construct the SVM base classifier using the optimal parameters.
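The five steps above can be sketched as a short real-coded GA. This is a minimal illustration under stated assumptions, not the configuration used in this paper: tournament selection, arithmetic crossover, uniform-resampling mutation, all rates and population sizes, and the names `run_ga` and `toy_fitness` are hypothetical. In particular, `toy_fitness` is a stand-in for the cross-validated accuracy of an SVM trained with penalty factor `c` and kernel parameter `g`.

```python
import random

def run_ga(fitness, bounds, pop_size=20, max_iter=40,
           crossover_rate=0.8, mutation_rate=0.1, seed=0):
    """Maximize `fitness` over real-valued individuals inside `bounds`."""
    rng = random.Random(seed)

    def random_individual():
        return [rng.uniform(lo, hi) for lo, hi in bounds]

    def tournament(scored):
        # Selection: the fitter of two randomly drawn individuals survives.
        a, b = rng.sample(scored, 2)
        return list(a[1] if a[0] >= b[0] else b[1])

    # Step 1: t = 0, random initial population P(0).
    population = [random_individual() for _ in range(pop_size)]
    best_score, best = float("-inf"), None

    for t in range(max_iter + 1):
        # Step 2: fitness of each individual in P(t).
        scored = [(fitness(ind), ind) for ind in population]
        top_score, top = max(scored, key=lambda pair: pair[0])
        if top_score > best_score:
            best_score, best = top_score, list(top)
        # Step 4: stop once t reaches T.
        if t == max_iter:
            break
        # Step 3: selection, crossover, and mutation produce P(t + 1).
        next_population = []
        while len(next_population) < pop_size:
            child, mate = tournament(scored), tournament(scored)
            if rng.random() < crossover_rate:
                w = rng.random()  # arithmetic crossover: blend two parents
                child = [w * c + (1 - w) * m for c, m in zip(child, mate)]
            for k, (lo, hi) in enumerate(bounds):
                if rng.random() < mutation_rate:
                    child[k] = rng.uniform(lo, hi)  # mutation: resample a gene
            next_population.append(child)
        population = next_population

    # Step 5: return the fittest individual seen during the evolution.
    return best, best_score

def toy_fitness(params):
    """Stand-in for cross-validated SVM accuracy; peaks at c = 10, g = 0.5."""
    c, g = params
    return -((c - 10.0) ** 2) / 100.0 - (g - 0.5) ** 2

best_params, best_fit = run_ga(toy_fitness, bounds=[(0.1, 100.0), (0.01, 2.0)])
print(best_params, best_fit)
```

In a GA-SVM setting, the fitness call would train and validate an SVM for each candidate parameter pair, so the population size and iteration limit directly control the training cost.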

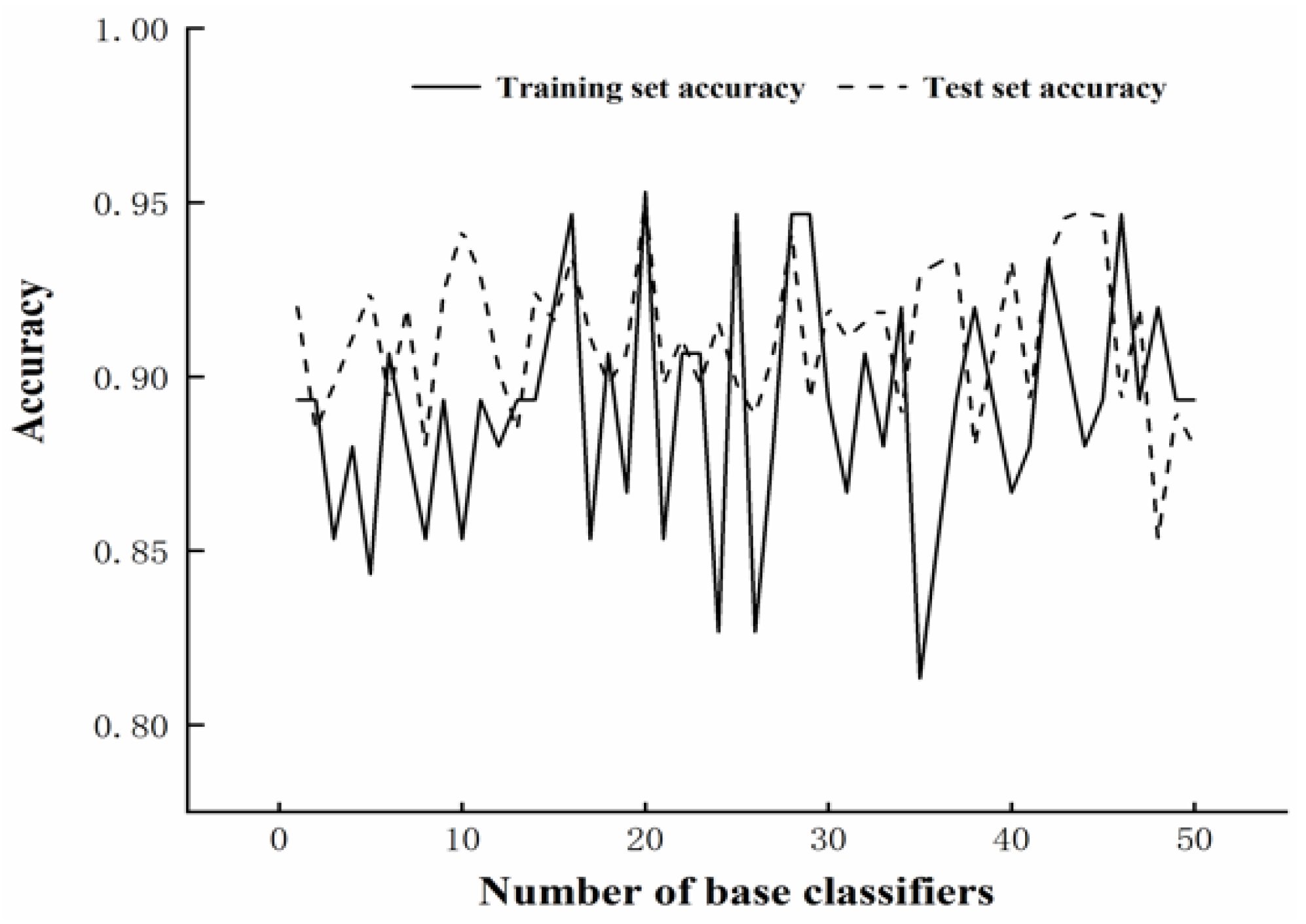

2.3. AdaB-GA-SVM Classifier Construction

The AdaBoost algorithm was employed to improve the anti-noise performance of the SVM algorithm by building a strong classifier model, referred to as the AdaB-GA-SVM model. AdaBoost is the most representative algorithm of the Boosting family and was proposed by Freund and Schapire [28]. The AdaBoost algorithm is widely used for classification in various fields as it can keep the training between classifiers unaffected to ensure structural stability and maximize model generality. Dou, Chen, and Yue [29] proposed a multi-classification algorithm based on AdaBoost, which exhibited excellent remote sensing image classification performance. Zhang et al. [30] employed the AdaBoost algorithm to integrate SVM base classifiers into strong classifiers and achieved higher classification accuracy for different dataset sizes. The AdaBoost algorithm flow is as follows:

- (1) Initialize the weight of the sample set D.

- (2) Let the iteration number be M; for t = 1, 2, …, M:

- (3) Build the final strong classifier as
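In its standard discrete form, the three steps of this flow are commonly written as follows. This is a textbook formulation given for reference; the symbols $w_{t,i}$ (sample weights), $G_t$ (base classifier of round $t$), $e_t$ (weighted error), $\alpha_t$ (classifier weight), and $Z_t$ (normalization factor) are notation assumed here for illustration.

```latex
% (1) Uniform initial weights over the N samples of D
w_{1,i} = \frac{1}{N}, \qquad i = 1, 2, \ldots, N

% (2) For t = 1, 2, \ldots, M: train G_t on D with weights w_{t,i}, then
e_t = \sum_{i=1}^{N} w_{t,i}\, I\bigl(G_t(x_i) \neq y_i\bigr), \qquad
\alpha_t = \frac{1}{2} \ln \frac{1 - e_t}{e_t}

w_{t+1,i} = \frac{w_{t,i} \exp\bigl(-\alpha_t\, y_i\, G_t(x_i)\bigr)}{Z_t}, \qquad
Z_t = \sum_{i=1}^{N} w_{t,i} \exp\bigl(-\alpha_t\, y_i\, G_t(x_i)\bigr)

% (3) Weighted vote of the M base classifiers
f(x) = \sum_{t=1}^{M} \alpha_t\, G_t(x)
```

Misclassified samples have $y_i G_t(x_i) = -1$, so their weights grow by a factor $e^{\alpha_t}$ before normalization, which forces the next base classifier to concentrate on them.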

Generally, the step size (learning rate) and the maximum number of iterations are used together to determine the fitting effect of the AdaBoost algorithm, and the constructed strong classifier is given by the following equation:

where ν denotes the learning rate, 0 < ν ≤ 1. The classification discriminant function g(x) is defined as

where sgn(x) is the same as in Equation (11).
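The AdaBoost flow can be sketched in a few lines of Python. To keep the example self-contained, 1-D threshold stumps are used as stand-in base classifiers instead of the GA-optimized SVM, and the learning rate `nu` is applied by scaling each classifier weight, which is one common convention and an assumption here. All function names and the toy dataset are hypothetical.

```python
import math

def train_stump(xs, ys, weights):
    """Weighted 1-D threshold classifier: returns (error, threshold, polarity)."""
    best = None
    for thr in sorted(set(xs)):
        for polarity in (1, -1):
            preds = [polarity if x >= thr else -polarity for x in xs]
            err = sum(w for w, p, y in zip(weights, preds, ys) if p != y)
            if best is None or err < best[0]:
                best = (err, thr, polarity)
    return best

def stump_predict(x, thr, polarity):
    return polarity if x >= thr else -polarity

def adaboost(xs, ys, rounds=3, nu=1.0):
    n = len(xs)
    weights = [1.0 / n] * n                      # (1) uniform initial weights
    ensemble = []
    for _ in range(rounds):                      # (2) boosting rounds
        err, thr, pol = train_stump(xs, ys, weights)
        err = min(max(err, 1e-10), 1 - 1e-10)    # guard against log(0)
        alpha = nu * 0.5 * math.log((1 - err) / err)
        ensemble.append((alpha, thr, pol))
        # Raise the weights of misclassified samples, then renormalize.
        weights = [w * math.exp(-alpha * y * stump_predict(x, thr, pol))
                   for w, x, y in zip(weights, xs, ys)]
        total = sum(weights)
        weights = [w / total for w in weights]
    return ensemble                              # (3) weighted base classifiers

def strong_classify(x, ensemble):
    """Sign of the weighted vote of the base classifiers."""
    f = sum(alpha * stump_predict(x, thr, pol) for alpha, thr, pol in ensemble)
    return 1 if f > 0 else -1

# Toy data that no single threshold can separate.
xs = [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
ys = [1, 1, 1, -1, -1, -1, 1, 1, 1, -1]
model = adaboost(xs, ys, rounds=3)
print([strong_classify(x, model) for x in xs] == ys)  # True
```

The best single stump misclassifies three of the ten samples, whereas the three-round ensemble classifies all of them correctly, which illustrates how weak base classifiers are combined into a strong one.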