Algorithms for Hyperparameter Tuning of LSTMs for Time Series Forecasting

Abstract

1. Introduction

- Trade-off between exploration and exploitation of the hyperparameter search space. This determines whether the algorithm is biased towards a local search near the current best search agents or towards a random search that yields a new set of search agents. Such trade-offs are generally constrained by the available resources.

- Trade-off between inference and search of hyperparameters. The algorithm generates a new set of hyperparameters from the currently available set, either by searching for better hyperparameters or by inferring them from the existing set. This refers to the exploitation characteristic of the algorithm.

- Decomposing Time Series Data using Fast Fourier Transform to extract logical and meaningful information from the raw data.

- Two algorithms and an optimized workflow for tuning hyperparameters of LSTM networks using HBO and GA have been designed and developed for potential operational-ready applications.

- The effect of two different optimizers on LSTM Networks and the effect of data decomposition on the network’s forecast performance and efficiency have been analyzed and inter-compared.

- The optimized LSTM Network has been evaluated against the default network on a 90 min ahead forecast. The comparisons highlight the impact and the necessity of hyperparameter tuning.

2. Methodology

2.1. Data Processing

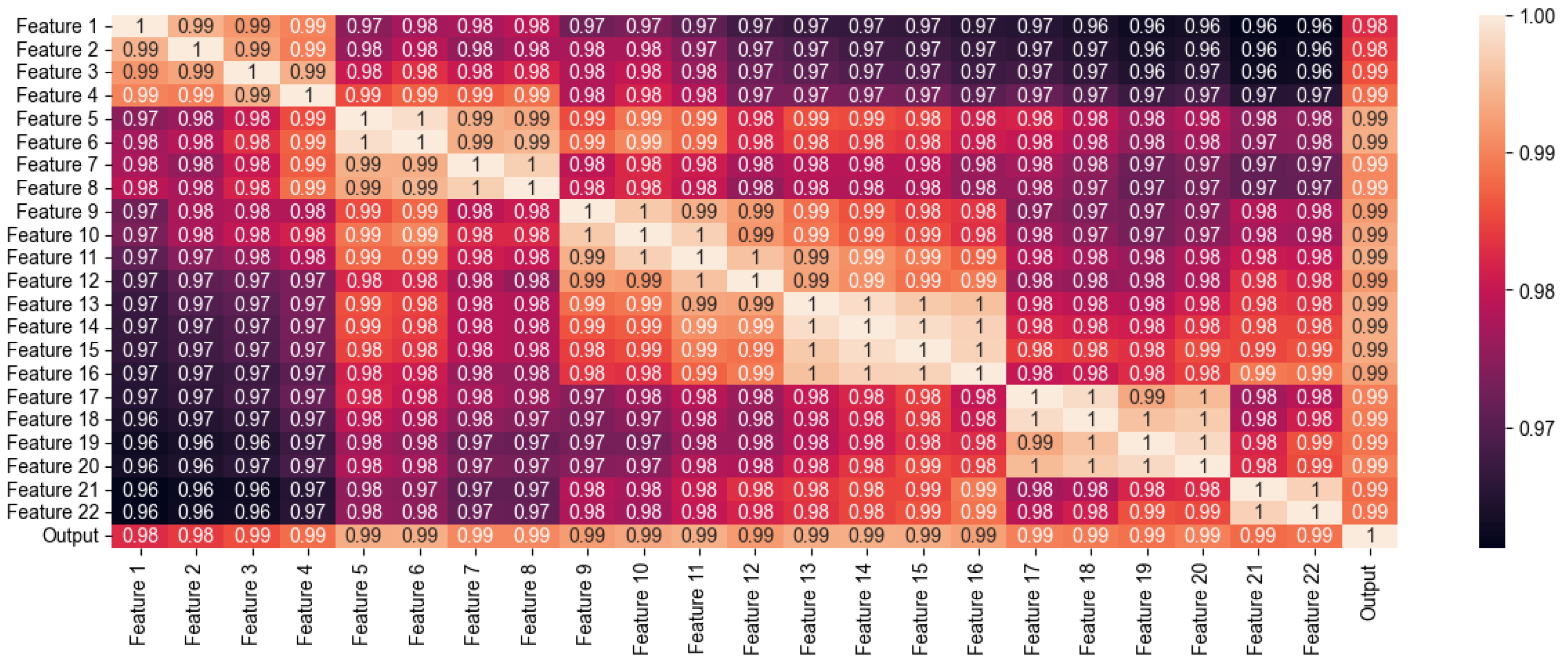

2.1.1. Feature Selection and Data Processing

2.1.2. Conversion to Supervised Time Series

1. Column Axis Shifting to generate Time Series: All the input features were shifted by 18 to 1 records on the column axis to create a time series of length 18 points. The past value of the output variable is also considered as an input feature, forming an input pattern similar to the one observed in Jursa and Rohrig, 2008 [25]. The output variable is shifted forward by 18 points, i.e., it is the actual time series output which is to be forecasted.

2. Reshaping the Data for LSTM Network: After creating the time series, the data consisted of 18 × 23 = 414 input features and 1 output column. This input vector is two-dimensional and is not compatible with LSTM networks. Hence the input vector is reshaped into a three-dimensional vector of the form (samples, time steps, features).
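The two steps above can be sketched with NumPy. This is a minimal illustration, not the authors' code: the function names `make_supervised` and `to_lstm_input` are hypothetical, and the reading that the target lies 18 records after the last record of each input window is an assumption based on the description above.

```python
import numpy as np

def make_supervised(data, target_col, window=18, horizon=18):
    """Build lagged input windows and a shifted target from a 2-D array.

    data has shape (n_records, n_features). The target column is one of the
    features, so its past values are part of every input window.
    """
    n_records, _ = data.shape
    n_samples = n_records - window - horizon + 1
    if n_samples <= 0:
        raise ValueError("not enough records for the requested window and horizon")
    # Flattened 2-D layout: one row per sample, window * n_features columns.
    X2d = np.stack([data[i:i + window].ravel() for i in range(n_samples)])
    # Assumed target: the value `horizon` records after the window's last record.
    first_target = window - 1 + horizon
    y = data[first_target:first_target + n_samples, target_col]
    return X2d, y

def to_lstm_input(X2d, window, n_features):
    """Reshape (samples, window * n_features) into (samples, window, n_features)."""
    return X2d.reshape(-1, window, n_features)

# Toy data: 60 records with 23 features (23 matches the count in the text).
data = np.arange(60 * 23, dtype=float).reshape(60, 23)
X2d, y = make_supervised(data, target_col=0)
X3d = to_lstm_input(X2d, window=18, n_features=23)
print(X2d.shape, X3d.shape, y.shape)  # (25, 414) (25, 18, 23) (25,)
```

Because each window is flattened in row-major order, the reshape recovers the original (time step, feature) layout without reordering any values.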

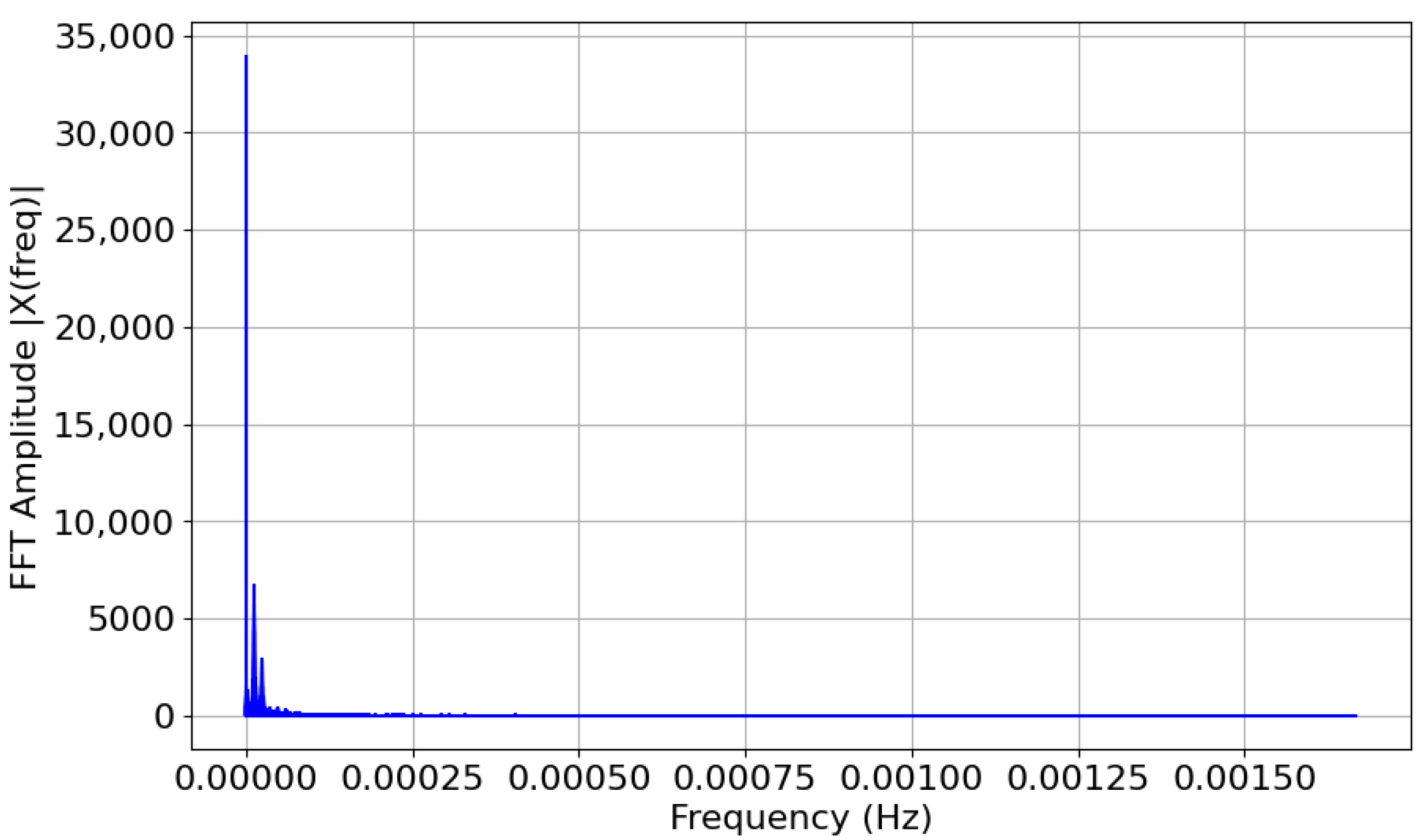

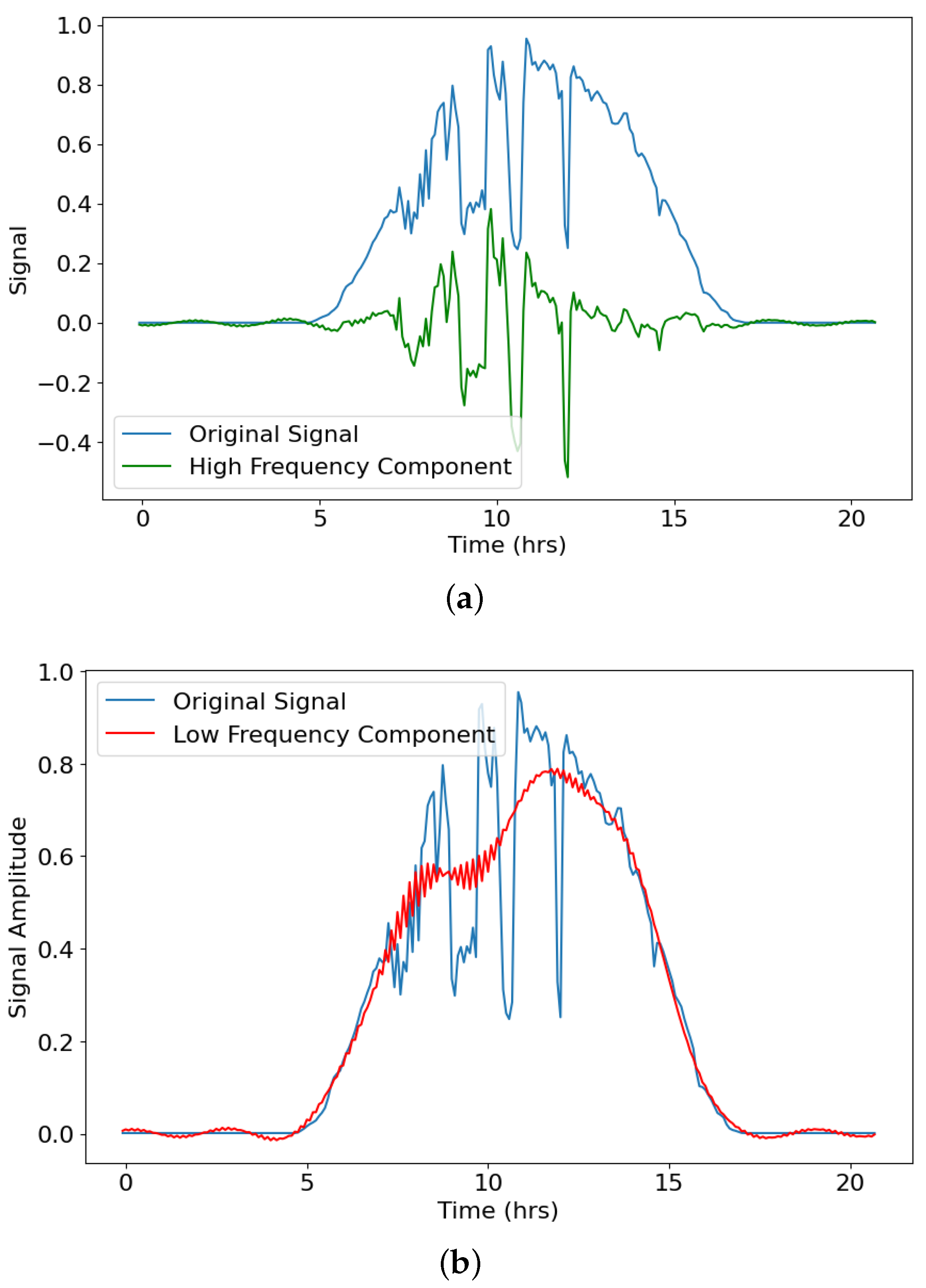

2.1.3. Data Decomposition
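As a rough illustration of FFT-based decomposition into low-frequency (LF) and high-frequency (HF) components at a cutoff frequency, consider the sketch below. The function name `fft_decompose`, the hard spectral mask, and the toy signal are assumptions made for this example; the cutoff values and filtering details used in the study are not reproduced here.

```python
import numpy as np

def fft_decompose(signal, cutoff_hz, sample_rate_hz):
    """Split a 1-D signal into low- and high-frequency parts at cutoff_hz."""
    signal = np.asarray(signal, dtype=float)
    spectrum = np.fft.rfft(signal)
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / sample_rate_hz)
    low_mask = freqs <= cutoff_hz
    # Zero the bins outside each band, then transform back to the time domain.
    low = np.fft.irfft(np.where(low_mask, spectrum, 0), n=signal.size)
    high = np.fft.irfft(np.where(low_mask, 0, spectrum), n=signal.size)
    return low, high

# Toy signal: a slow 1 Hz wave plus a weaker 40 Hz ripple, sampled at 288 Hz.
t = np.arange(288) / 288.0
slow = np.sin(2 * np.pi * 1 * t)
fast = 0.3 * np.sin(2 * np.pi * 40 * t)
low, high = fft_decompose(slow + fast, cutoff_hz=5.0, sample_rate_hz=288.0)
print(np.allclose(low + high, slow + fast))
```

Since the two masks are complementary, the LF and HF parts always sum back to the original signal, which makes it easy to compare models trained on each component against the undecomposed data.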

2.2. Optimiser Design

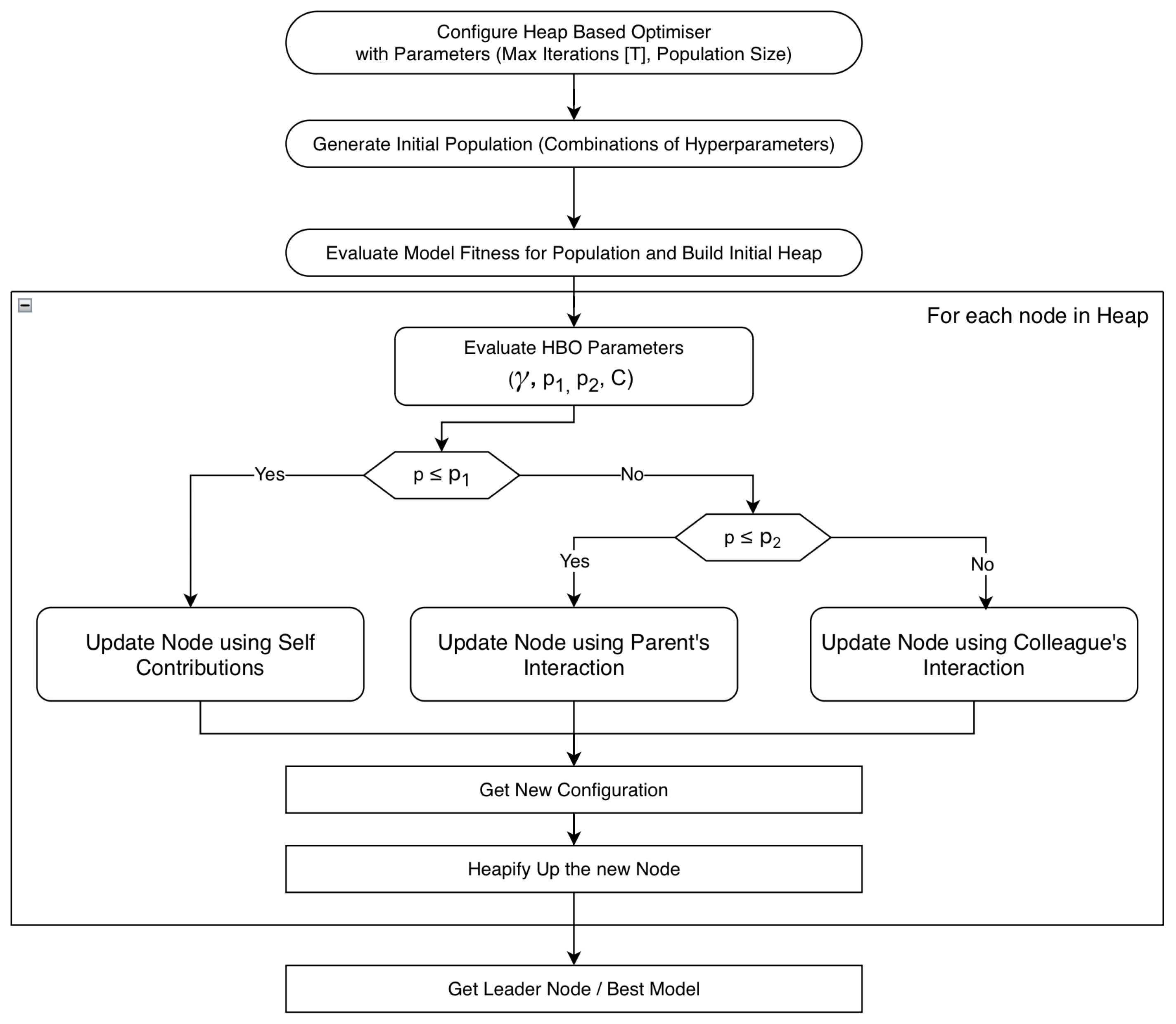

2.2.1. Heap Based Optimiser

- Impact of the immediate parent or the superior node: It implies that the next optimal point may be located in the neighbourhood of the parent node and hence the search area is modified to match the neighbourhood of the parent heap node.

- Impact of cousin nodes or colleagues: Nodes at the same level as the current node balance the exploration and exploitation of the search space for the optimal solution.

- Impact of the heap node on itself (Self-contribution): The node has some effect on itself for the next iteration and remains unchanged for the particular iteration.

1. Initialise the algorithm with the optimiser parameters, such as Initial Population Size (Heap Size) and Maximum Iterations (T).

2. Randomly generate the initial population and build the heap structure of Configuration Vectors (Section 2.3).

3. Update each component of the configuration vector using HBO’s update policy. Once all the components have been updated, the result is a new configuration vector that represents a new candidate LSTM network.

4. Heapify-Up [23] the newly obtained configuration vector.

5. Repeat steps 3–4 until T iterations are done.
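The Heapify-Up operation in step 4 can be sketched as follows. This is a simplified illustration under stated assumptions: a binary max-heap keyed on fitness (higher is better), with entries stored as `(fitness, config)` tuples. The function names `heapify_up` and `build_heap` are hypothetical, and the heap arity and ordering used in HBO [23] may differ; HBO's update policy itself is not reproduced here.

```python
def heapify_up(heap, index):
    """Move heap[index] towards the root until the max-heap property holds.

    heap is a list of (fitness, config) tuples in array order, where the
    parent of position i is (i - 1) // 2. Only the fitness is compared.
    """
    while index > 0:
        parent = (index - 1) // 2
        if heap[index][0] <= heap[parent][0]:
            break
        heap[index], heap[parent] = heap[parent], heap[index]
        index = parent
    return index

def build_heap(scored_configs):
    """Insert scored configuration vectors one by one, restoring the heap each time."""
    heap = []
    for item in scored_configs:
        heap.append(item)
        heapify_up(heap, len(heap) - 1)
    return heap

# Hypothetical configuration vectors with made-up fitness values.
scored = [(71.2, {"units": 32}), (88.5, {"units": 64}),
          (64.0, {"units": 16}), (93.1, {"units": 128})]
heap = build_heap(scored)
print(heap[0])  # the fittest configuration sits at the root
```

Keeping the fittest configuration at the root means every node's parent is at least as fit as the node itself, which is the hierarchy the parent and colleague influences rely on.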

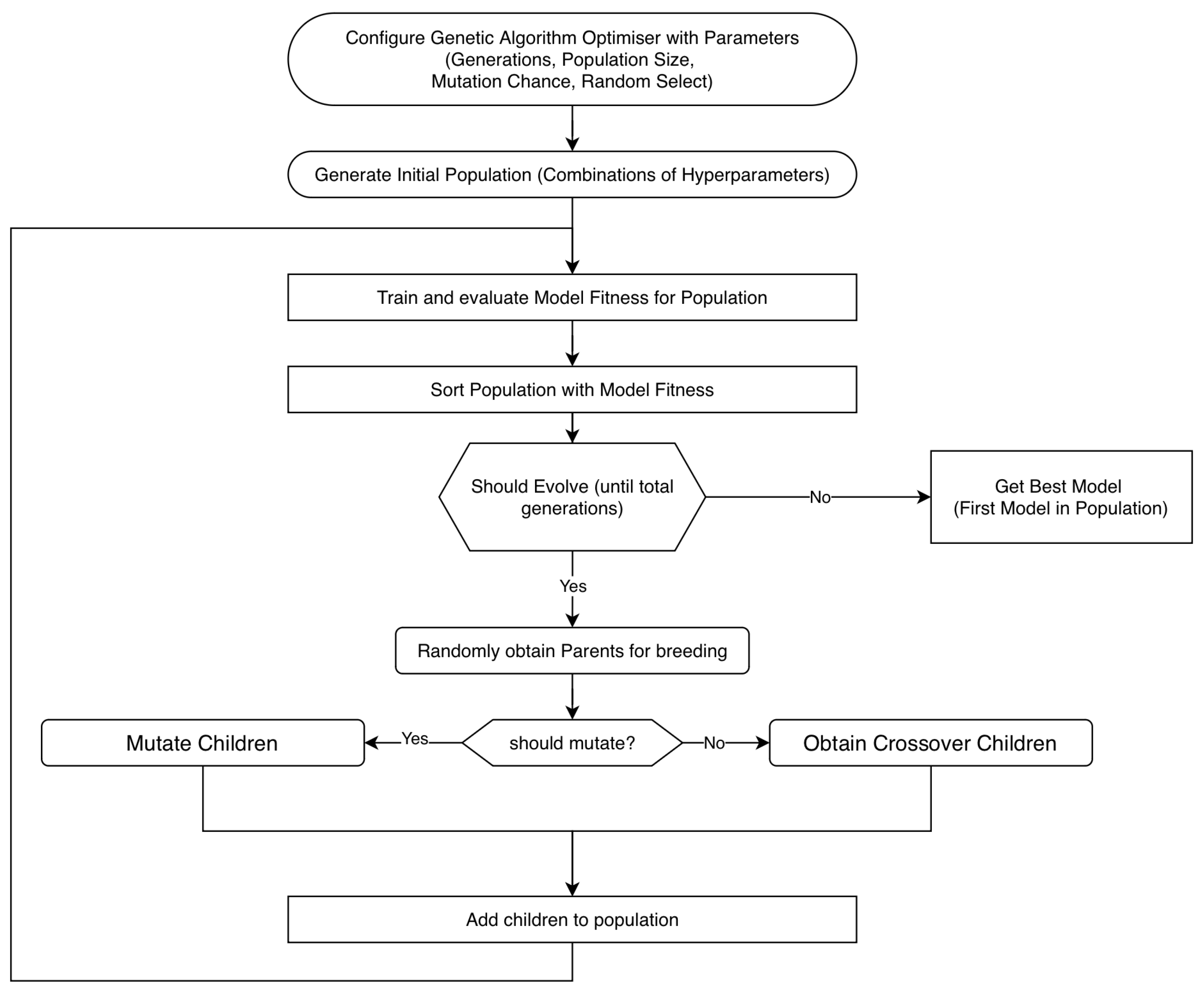

2.2.2. Genetic Algorithm Based Optimiser

1. Configure the optimiser with its parameters, such as the number of generations, population size, mutation chance and random selection chance.

2. Generate the initial population and evaluate the fitness of each of the configuration vectors in the population.

3. Breed children from two randomly selected parents and either evolve or mutate them to obtain new configuration vectors based on the GA parameters Mutation Chance and Random Select Chance. The parents are selected using a uniform random distribution.

4. Add the children obtained to the population and sort the population based on fitness.

5. Repeat steps 3–4 until the required number of generations is reached.
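A compact sketch of one generation of such a genetic algorithm is given below. It is illustrative only: the function name `evolve`, the `retain` fraction, the uniform crossover, the search space in `choices`, and the toy fitness function are all assumptions for this example, and in the actual workflow the fitness would come from training and scoring an LSTM network.

```python
import random

def evolve(population, fitness, choices, rng, retain=0.5,
           random_select_chance=0.1, mutation_chance=0.2):
    """Produce the next generation while keeping the population size fixed."""
    ranked = sorted(population, key=fitness, reverse=True)
    keep = max(2, int(len(ranked) * retain))
    parents = ranked[:keep]
    # Occasionally keep a weaker vector to preserve diversity.
    parents += [c for c in ranked[keep:] if rng.random() < random_select_chance]
    children = []
    while len(parents) + len(children) < len(population):
        mother, father = rng.sample(parents, 2)  # uniform random parent choice
        child = {k: rng.choice([mother[k], father[k]]) for k in choices}
        if rng.random() < mutation_chance:
            key = rng.choice(list(choices))
            child[key] = rng.choice(choices[key])
        children.append(child)
    return sorted(parents + children, key=fitness, reverse=True)

# Hypothetical search space and a toy fitness that peaks at units=64, layers=2.
choices = {"units": [16, 32, 64, 128], "layers": [1, 2, 3]}

def toy_fitness(config):
    return -(abs(config["units"] - 64) / 16 + abs(config["layers"] - 2))

rng = random.Random(0)
population = [{k: rng.choice(v) for k, v in choices.items()} for _ in range(8)]
for _ in range(10):
    population = evolve(population, toy_fitness, choices, rng)
print(population[0], toy_fitness(population[0]))
```

Because the top-ranked vectors always survive into the next generation, the best fitness in the population can never decrease from one generation to the next.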

2.3. Hyperparameter Configuration Space Setup

2.4. LSTM Network Properties

- Section 2.4.1: Network Generation using the Configuration Vector.

- Section 2.4.2: LSTM Network’s Performance Parameters.

- Section 2.4.3: Optimised LSTM Network Training Setup.

2.4.1. Network Generation

2.4.2. Network Performance Parameters

- Mean Squared Error (MSE)

- Root Mean Squared Error (RMSE)

- Mean Squared Logarithmic Error (MSLE)

- Coefficient of Determination (R² Score)

- Model Fitness: Model Fitness is a custom metric designed to give a balanced score in the range of [−100, 100]. Model Fitness was used as the objective/fitness function in both the HBO-algorithm and the GAO-algorithm for updating the population. Model Fitness is calculated as,

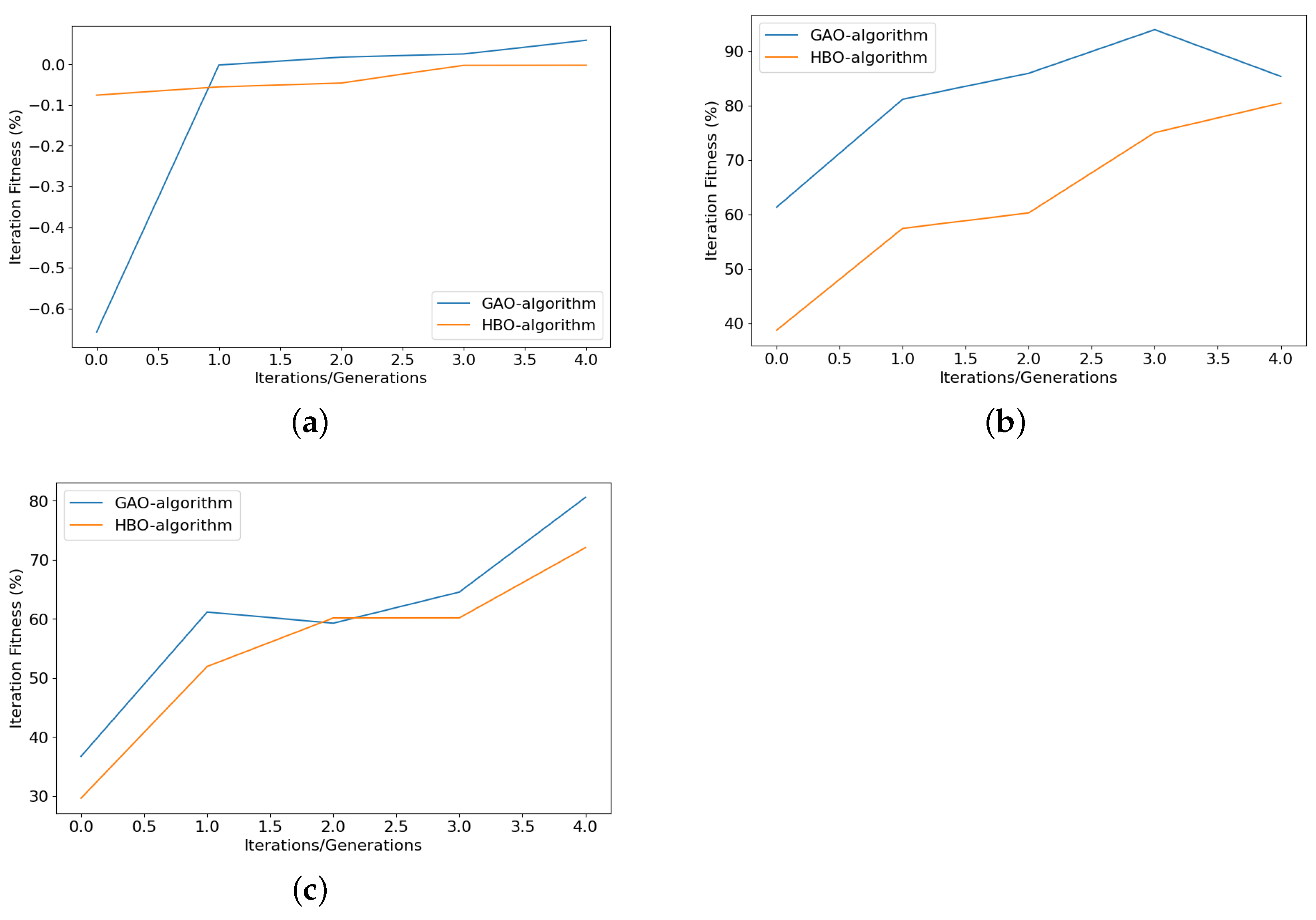

- Iteration Fitness: The hyperparameter tuning algorithms (Section 2.2.1 and Section 2.2.2) carry out their operations in multiple steps which are repeated a certain number of times. Each such cycle is known as an iteration. Hence, Iteration Fitness is defined as the average Model Fitness of the entire population for any particular iteration. This metric helps to understand the performance of the algorithm in improving the Model Fitness of the population collectively:

  $$\text{Iteration Fitness} = \frac{1}{n(\text{population})} \sum_{i=1}^{n(\text{population})} \text{Model Fitness}_i$$

  where $\text{Model Fitness}_i$ refers to the fitness of the $i$th configuration vector in the population and $n(\text{population})$ is the total number of elements in the population.
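The standard error metrics listed above, together with Iteration Fitness as a population average, can be computed as in the following sketch. The function names are hypothetical, and the Model Fitness formula is deliberately left out because it is not given in this text; `iteration_fitness` simply averages whatever Model Fitness values are supplied.

```python
import math

def mse(y_true, y_pred):
    """Mean Squared Error."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def rmse(y_true, y_pred):
    """Root Mean Squared Error."""
    return math.sqrt(mse(y_true, y_pred))

def msle(y_true, y_pred):
    """Mean Squared Logarithmic Error; all values must be greater than -1."""
    return sum((math.log1p(t) - math.log1p(p)) ** 2
               for t, p in zip(y_true, y_pred)) / len(y_true)

def r2_score(y_true, y_pred):
    """Coefficient of determination; undefined for a constant y_true."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

def iteration_fitness(model_fitness_values):
    """Average Model Fitness over the whole population for one iteration."""
    return sum(model_fitness_values) / len(model_fitness_values)

y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.0, 2.0, 3.0, 5.0]
print(mse(y_true, y_pred), rmse(y_true, y_pred), r2_score(y_true, y_pred))
# 0.25 0.5 0.8
print(iteration_fitness([90.0, 80.0, 100.0]))  # 90.0
```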

2.4.3. Optimised LSTM Network Training Setup

3. Results and Discussions

- Section 3.1: Comparison of Frequencies for Data Decomposition

- Section 3.2: Comparison of Optimisers

- Section 3.3: Effect of Data Decomposition versus Tuning Algorithms

- Section 3.4: Further Discussions

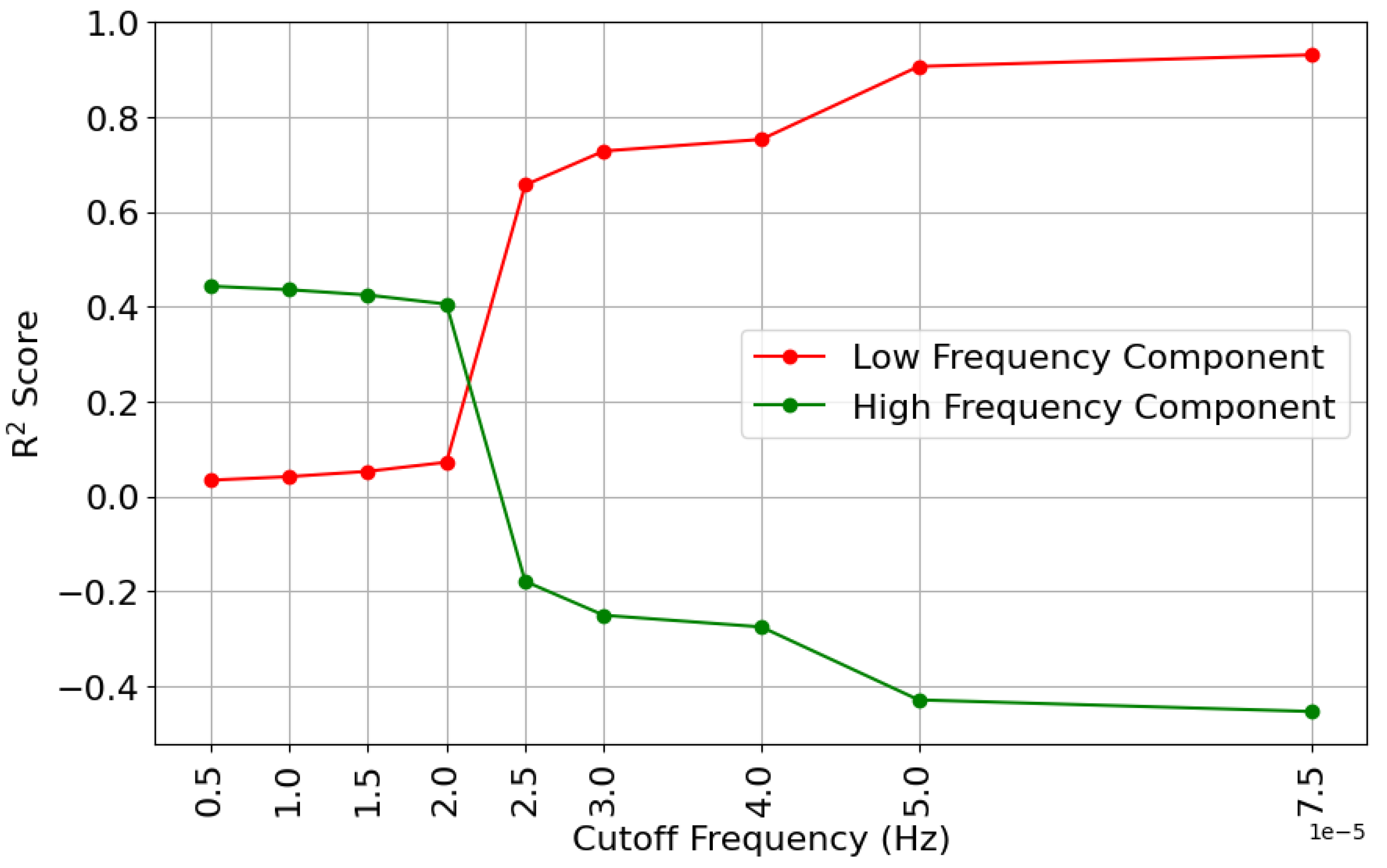

3.1. Comparison of Frequencies for Data Decomposition

3.2. Comparison of Optimisers

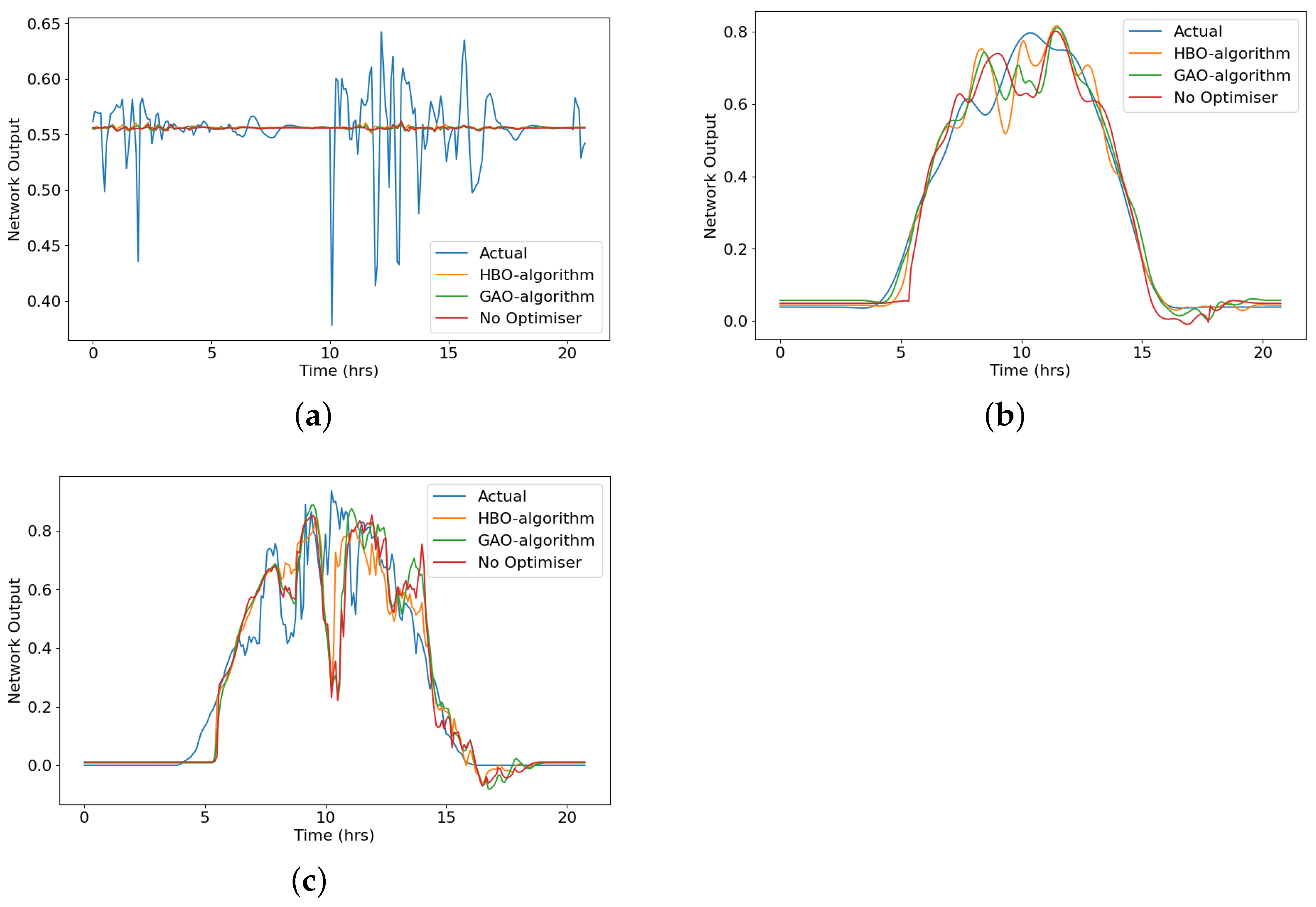

3.3. Effect of Data Decomposition versus Tuning Algorithms

1. High-Frequency Data produces the lowest errors (Table 2), but this can be misleading. The Model Forecast Plot in Figure 8 and the Model Fitness in Table 3 show that the High-Frequency Data causes the system to forecast the mean value of the data, which explains the low error alongside a flat-line forecast and very low model fitness.

2.

3. Original Data produces the highest error (amongst the 3 data sets). This is explained by the fact that the original data contains characteristics from both high-frequency and low-frequency data. Its Model Fitness is a little lower than that of the Low-Frequency Data but much higher than that of the High-Frequency Data.
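The first observation, that a flat forecast at the mean can yield a low error yet a near-zero fitness, can be reproduced on toy data. The sketch below uses the R² score as a stand-in for Model Fitness, which is an assumption, since the Model Fitness formula is not reproduced in this text; the series values are made up.

```python
def mse(y_true, y_pred):
    """Mean Squared Error."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

def r2_score(y_true, y_pred):
    """Coefficient of determination; undefined for a constant y_true."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

def mean_forecast(series):
    """Flat-line forecast that predicts the series mean at every step."""
    mean = sum(series) / len(series)
    return [mean] * len(series)

# A small-amplitude oscillation, loosely mimicking a high-frequency residual.
small = [0.01 * v for v in [0.0, 1.0, 0.0, -1.0] * 6]
# The same shape at 100 times the amplitude.
large = [100.0 * v for v in small]

for name, series in (("small", small), ("large", large)):
    flat = mean_forecast(series)
    print(name, mse(series, flat), r2_score(series, flat))
```

The flat forecast scores an R² of zero on both series, while its MSE shrinks with the square of the amplitude. A low-amplitude component can therefore report small errors even when the model has learned nothing beyond the mean, which is why error metrics alone are not sufficient for comparing the data sets.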

3.4. Further Discussions

4. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| LSTM | Long Short-Term Memory |
| HBO | Heap Based Optimiser |
| GAO | Genetic Algorithm Optimiser |
| ML | Machine Learning |
| NNs | Neural Networks |
| AI | Artificial Intelligence |
| ARIMA | Autoregressive Integrated Moving Average |
| RNNs | Recurrent Neural Networks |
| GA | Genetic Algorithm |
| CRH | Corporate Rank Hierarchy |
| HF | High Frequency |
| LF | Low Frequency |
| FFT | Fast Fourier Transform |
| MSE | Mean Squared Error |
| RMSE | Root Mean Squared Error |
| MSLE | Mean Squared Logarithmic Error |

References

1. Sharma, V.; Yang, D.; Walsh, W.; Reindl, T. Short term solar irradiance forecasting using a mixed wavelet neural network. Renew. Energy 2016, 90, 481–492.

2. Wang, Q.; Li, S.; Li, R. Forecasting energy demand in China and India: Using single-linear, hybrid-linear, and non-linear time series forecast techniques. Energy 2018, 161, 821–831.

3. Zou, H.; Yang, Y. Combining time series models for forecasting. Int. J. Forecast. 2004, 20, 69–84.

4. Clements, M.P.; Franses, P.H.; Swanson, N.R. Forecasting economic and financial time-series with non-linear models. Int. J. Forecast. 2004, 20, 169–183.

5. Koudouris, G.; Dimitriadis, P.; Iliopoulou, T.; Mamassis, N.; Koutsoyiannis, D. A stochastic model for the hourly solar radiation process for application in renewable resources management. Adv. Geosci. 2018, 45, 139–145.

6. Colak, I.; Yesilbudak, M.; Genc, N.; Bayindir, R. Multi-period prediction of solar radiation using ARMA and ARIMA models. In Proceedings of the 2015 IEEE 14th International Conference on Machine Learning and Applications (ICMLA), Miami, FL, USA, 9–11 December 2015; IEEE: Piscataway, NJ, USA, 2015; pp. 1045–1049.

7. Shadab, A.; Ahmad, S.; Said, S. Spatial forecasting of solar radiation using ARIMA model. Remote Sens. Appl. Soc. Environ. 2020, 20, 100427.

8. Meenal, R.; Binu, D.; Ramya, K.; Michael, P.A.; Vinoth Kumar, K.; Rajasekaran, E.; Sangeetha, B. Weather forecasting for renewable energy system: A review. Arch. Comput. Methods Eng. 2022, 29, 2875–2891.

9. Siami-Namini, S.; Tavakoli, N.; Siami Namin, A. A Comparison of ARIMA and LSTM in Forecasting Time Series. In Proceedings of the 2018 17th IEEE International Conference on Machine Learning and Applications (ICMLA), Orlando, FL, USA, 17–20 December 2018; pp. 1394–1401.

10. Rahimzad, M.; Moghaddam Nia, A.; Zolfonoon, H.; Soltani, J.; Danandeh Mehr, A.; Kwon, H.H. Performance comparison of an LSTM-based deep learning model versus conventional machine learning algorithms for streamflow forecasting. Water Resour. Manag. 2021, 35, 4167–4187.

11. Yu, Y.; Si, X.; Hu, C.; Zhang, J. A Review of Recurrent Neural Networks: LSTM Cells and Network Architectures. Neural Comput. 2019, 31, 1235–1270.

12. De, V.; Teo, T.T.; Woo, W.L.; Logenthiran, T. Photovoltaic power forecasting using LSTM on limited dataset. In Proceedings of the 2018 IEEE Innovative Smart Grid Technologies-Asia (ISGT Asia), Singapore, 22–25 May 2018; IEEE: Piscataway, NJ, USA, 2018; pp. 710–715.

13. Ewees, A.A.; Al-qaness, M.A.; Abualigah, L.; Abd Elaziz, M. HBO-LSTM: Optimized long short term memory with heap-based optimizer for wind power forecasting. Energy Convers. Manag. 2022, 268, 116022.

14. Bischl, B.; Binder, M.; Lang, M.; Pielok, T.; Richter, J.; Coors, S.; Thomas, J.; Ullmann, T.; Becker, M.; Boulesteix, A.L.; et al. Hyperparameter optimization: Foundations, algorithms, best practices, and open challenges. Wiley Interdiscip. Rev. Data Min. Knowl. Discov. 2021, 13, e1484.

15. Falkner, S.; Klein, A.; Hutter, F. BOHB: Robust and efficient hyperparameter optimization at scale. In Proceedings of the International Conference on Machine Learning, PMLR, Stockholm, Sweden, 10–15 July 2018; pp. 1437–1446.

16. Bergstra, J.; Bardenet, R.; Bengio, Y.; Kégl, B. Algorithms for hyper-parameter optimization. In Advances in Neural Information Processing Systems; Curran Associates Inc.: Sydney, NSW, Australia, 2011; Volume 24.

17. Gorgolis, N.; Hatzilygeroudis, I.; Istenes, Z.; Gyenne, L.G. Hyperparameter optimization of LSTM network models through genetic algorithm. In Proceedings of the 2019 10th International Conference on Information, Intelligence, Systems and Applications (IISA), Patras, Greece, 15–17 July 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 1–4.

18. Chung, H.; Shin, K.S. Genetic algorithm-optimized long short-term memory network for stock market prediction. Sustainability 2018, 10, 3765.

19. Ali, M.A.; P.P., F.R.; Abd Elminaam, D.S. An Efficient Heap Based Optimizer Algorithm for Feature Selection. Mathematics 2022, 10, 2396.

20. AbdElminaam, D.S.; Houssein, E.H.; Said, M.; Oliva, D.; Nabil, A. An efficient heap-based optimizer for parameters identification of modified photovoltaic models. Ain Shams Eng. J. 2022, 13, 101728.

21. Abdel-Basset, M.; Mohamed, R.; Elhoseny, M.; Chakrabortty, R.K.; Ryan, M.J. An efficient heap-based optimization algorithm for parameters identification of proton exchange membrane fuel cells model: Analysis and case studies. Int. J. Hydrogen Energy 2021, 46, 11908–11925.

22. Ginidi, A.R.; Elsayed, A.M.; Shaheen, A.M.; Elattar, E.E.; El-Sehiemy, R.A. A novel heap-based optimizer for scheduling of large-scale combined heat and power economic dispatch. IEEE Access 2021, 9, 83695–83708.

23. Askari, Q.; Saeed, M.; Younas, I. Heap-based optimizer inspired by corporate rank hierarchy for global optimization. Expert Syst. Appl. 2020, 161, 113702.

24. Kumar, A.; Kashyap, Y.; Kosmopoulos, P. Enhancing Solar Energy Forecast Using Multi-Column Convolutional Neural Network and Multipoint Time Series Approach. Remote Sens. 2022, 15, 107.

25. Jursa, R.; Rohrig, K. Short-term wind power forecasting using evolutionary algorithms for the automated specification of artificial intelligence models. Int. J. Forecast. 2008, 24, 694–709.

26. Heckbert, P. Fourier transforms and the fast Fourier transform (FFT) algorithm. Comput. Graph. 1995, 2, 15–463.

27. Sevgi, L. Numerical Fourier transforms: DFT and FFT. IEEE Antennas Propag. Mag. 2007, 49, 238–243.

28. Zhang, X.; Wen, S. Heap-based optimizer based on three new updating strategies. Expert Syst. Appl. 2022, 209, 118222.

29. Katoch, S.; Chauhan, S.S.; Kumar, V. A review on genetic algorithm: Past, present, and future. Multimed. Tools Appl. 2021, 80, 8091–8126.

30. Lambora, A.; Gupta, K.; Chopra, K. Genetic algorithm-A literature review. In Proceedings of the 2019 International Conference on Machine Learning, Big Data, Cloud and Parallel Computing (COMITCon), Faridabad, India, 14–16 February 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 380–384.

31. Fentis, A.; Bahatti, L.; Mestari, M.; Chouri, B. Short-term solar power forecasting using Support Vector Regression and feed-forward NN. In Proceedings of the 2017 15th IEEE International New Circuits and Systems Conference (NEWCAS), Strasbourg, France, 25–28 June 2017; IEEE: Piscataway, NJ, USA, 2017; pp. 405–408.

32. Elsaraiti, M.; Merabet, A. Solar power forecasting using deep learning techniques. IEEE Access 2022, 10, 31692–31698.

33. Serttas, F.; Hocaoglu, F.O.; Akarslan, E. Short term solar power generation forecasting: A novel approach. In Proceedings of the 2018 International Conference on Photovoltaic Science and Technologies (PVCon), Ankara, Turkey, 4–6 July 2018; IEEE: Piscataway, NJ, USA, 2018; pp. 1–4.

34. Haider, S.A.; Sajid, M.; Sajid, H.; Uddin, E.; Ayaz, Y. Deep learning and statistical methods for short-and long-term solar irradiance forecasting for Islamabad. Renew. Energy 2022, 198, 51–60.

35. Lai, J.P.; Chang, Y.M.; Chen, C.H.; Pai, P.F. A survey of machine learning models in renewable energy predictions. Appl. Sci. 2020, 10, 5975.

36. Liu, T.; Jin, H.; Li, A.; Fang, H.; Wei, D.; Xie, X.; Nan, X. Estimation of Vegetation Leaf-Area-Index Dynamics from Multiple Satellite Products through Deep-Learning Method. Remote Sens. 2022, 14, 4733.

37. Wang, J.; Wang, Z.; Li, X.; Zhou, H. Artificial bee colony-based combination approach to forecasting agricultural commodity prices. Int. J. Forecast. 2022, 38, 21–34.

38. Shahid, F.; Zameer, A.; Muneeb, M. A novel genetic LSTM model for wind power forecast. Energy 2021, 223, 120069.

| Cutoff Frequency | Low Frequency Signal (%) | High Frequency Signal (%) |
|---|---|---|
| 0.5 × Hz | 3.40 | 44.32 |
| Hz | 4.15 | 43.57 |
| Hz | 5.27 | 42.45 |
| Hz | 7.19 | 40.53 |
| Hz | 65.64 | −17.92 |
| Hz | 72.80 | −25.08 |
| Hz | 75.25 | −27.54 |
| Hz | 90.64 | −42.92 |
| Hz | 93.09 | −45.37 |
| Hz | 94.19 | −46.48 |
| Hz | 95.73 | −48.02 |
| Hz | 95.94 | −48.22 |

| Optimiser | MSE | RMSE | MSLE | Data Set |
|---|---|---|---|---|
| Normalised Solar Irradiance × | | | | |
| HBO-algorithm | 2.1312 | 46.1630 | 0.9030 | High Frequency |
| HBO-algorithm | 3.7411 | 61.1602 | 1.7730 | Low Frequency |
| HBO-algorithm | 14.3627 | 119.8435 | 7.2800 | Original |
| GAO-algorithm | 2.1309 | 46.1539 | 0.9021 | High Frequency |
| GAO-algorithm | 4.4532 | 66.7310 | 2.1203 | Low Frequency |
| GAO-algorithm | 17.0361 | 130.5221 | 8.3667 | Original |
| No Optimiser * | 2.1319 | 46.1617 | 0.9034 | High Frequency |
| No Optimiser * | 6.8290 | 82.6388 | 3.3598 | Low Frequency |
| No Optimiser * | 17.2401 | 131.3036 | 8.4711 | Original |

| Data Set | HBO-Algorithm | GAO-Algorithm | No Optimiser * |
|---|---|---|---|
| High Frequency | 0.0888% | 0.09468% | −0.00329% |
| Low Frequency | 95.278% | 94.38% | 91.38% |
| Original | 84.336% | 81.42% | 81.20% |


© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Dhake, H.; Kashyap, Y.; Kosmopoulos, P. Algorithms for Hyperparameter Tuning of LSTMs for Time Series Forecasting. Remote Sens. 2023, 15, 2076. https://doi.org/10.3390/rs15082076
