1. Introduction

Facial expressions are the most direct non-verbal channel for communicating human emotion, a capability that has been a determining factor in the survival and evolution of humans throughout history [1]. These expressions are grounded in physiological responses to environmental stimuli [2] and involve the coordinated movement of approximately 43 facial muscles [3]. The accurate classification of these movements is critical for applications ranging from human–computer interaction to clinical monitoring of neurological disorders [4,5]. For instance, recent studies have demonstrated the utility of analyzing regional facial information for the automatic diagnosis of facial palsy [6].

The foundational framework for analyzing facial movement is the Facial Action Coding System (FACS) proposed by Ekman and Friesen [7], which decomposes expressions into specific Action Units (AUs) corresponding to muscle contractions. While this categorical approach is standard, researchers have also explored dimensional models, such as the circular model of affect proposed by Barrett and Russell [8], which positions emotions on axes of pleasantness and activation. However, modeling the multifaceted nature of emotion computationally remains a significant challenge [9].

In automated recognition, recent advances in Convolutional Neural Networks (CNNs) have achieved high accuracy [10], but these models often lack explainability. Conversely, geometric approaches that track the deformation of specific facial regions preserve the anatomical context of the analysis [11]. Standard geometric methods rely on the detection of 68 facial landmarks, a process extensively reviewed by Ouanan et al. [12].

To bridge the gap between these raw points and muscular mechanics, recent research has attempted to infer muscle activity directly from visual data. Benli and Eskil [13] used optical flow to track landmark displacement as a proxy for muscle features. Similarly, hybrid approaches have combined computer vision with wearable electromyography (EMG) to map muscle synergies to observable kinematics [14,15]. Building on the concept of non-intrusive muscle estimation, Arrazola et al. [5] proposed measuring the deformation of triangles anchored by the centroid to estimate muscle activation, providing a robust set of features for emotion recognition. Subsequently, Aguilera et al. [11] demonstrated that the centroid is not always the most discriminative point. They improved performance by selecting, for each triangle, the best notable point (e.g., incenter, Fermat point, Nagel point) from a finite set of candidates [11]. To overcome the restriction to a finite candidate set, the present work proposes a new method that searches for the optimal inner point of each triangle in a continuous search space. Metaheuristic optimization algorithms, namely Differential Evolution (DE), Particle Swarm Optimization (PSO), and Convex Partition (CP), are used to determine the linear combination of vertices that maximizes the discriminative power of the geometric features. This approach generalizes previous geometric works by treating the inner point location as a tunable parameter, thereby enhancing the performance of standard machine learning classifiers.
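To make the idea of an inner point defined as a linear combination of vertices concrete, the following minimal sketch computes such a point from three non-negative weights (barycentric coordinates). The function names, the example triangle, and the use of vertex distances as a descriptor are illustrative assumptions and are not taken from the method itself.

```python
from math import dist


def inner_point(triangle, weights):
    """Return the convex combination of the three triangle vertices.

    `weights` are non-negative and are normalised to sum to 1, so the
    result always lies inside (or on the border of) the triangle.
    """
    total = sum(weights)
    if total <= 0 or any(w < 0 for w in weights):
        raise ValueError("weights must be non-negative and not all zero")
    lam = [w / total for w in weights]
    x = sum(l * v[0] for l, v in zip(lam, triangle))
    y = sum(l * v[1] for l, v in zip(lam, triangle))
    return (x, y)


def vertex_distances(triangle, weights):
    """Hypothetical descriptor: distances from the inner point to each vertex."""
    p = inner_point(triangle, weights)
    return [dist(p, v) for v in triangle]


# Assumed example triangle (three landmark coordinates in pixels).
triangle = [(0.0, 0.0), (4.0, 0.0), (0.0, 3.0)]

centroid = inner_point(triangle, (1, 1, 1))  # equal weights give the centroid
shifted = inner_point(triangle, (2, 1, 1))   # more weight on the first vertex
features = vertex_distances(triangle, (2, 1, 1))
```

With equal weights the point coincides with the centroid used in [5]; any other weight vector moves it continuously inside the triangle, which is the search space explored by the metaheuristics.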

The emphasis on explainability in this work may justify the difference in accuracy between the proposal (0.91 on KDEF and 0.81 on JAFFE) and the state-of-the-art deep learning methods tailored to each database (0.96 on KDEF and 0.98 on JAFFE) [10]. In critical application scenarios, such as clinical diagnosis aids for neurological disorders or forensic psychological assessment, the ability to validate a decision with explanations at the muscle level is vital, with a minimum acceptable performance threshold typically set close to 0.80 [6]. Our method meets this requirement by mapping feature deformation directly to specific muscle groups, allowing results to be interpreted through the lens of anatomical dynamics rather than uninterpretable pixel-level correlations.

Section 2 briefly introduces the databases, the methodology for constructing geometric descriptors, and the optimization metaheuristics, along with the evaluated machine learning classifiers. Section 3 details how metaheuristics are applied for tuning 22 FACS-consistent triangles from 68 landmarks. Section 4 reports the performance of the tested strategies and uses the non-parametric Friedman test for statistical analysis, together with visual resources, to discuss the results. Finally, Section 5 summarizes the successful deployment of the evolutionary metaheuristics, confirming that the proposed methodology yields state-of-the-art results among geometric FER approaches.

4. Results and Discussion

The computational experiments were executed on a system running Windows 11 Pro with an Intel Core i5-6500 processor at 3.2 GHz and 16 GB of RAM. The core machine learning analysis, including the implementation of the classifiers and optimization procedures, was performed in Python 3.14 using the scikit-learn library, version 1.4.1. The overall experimental workflow, which included the coordination of the Python scripts and the generation of final summary reports and statistical analyses, was driven by scripts written in the statistical computing language R, version 4.3. The resulting optimal accuracy for each classifier–metaheuristic combination is reported and discussed in this section.

Table 4 and Table 5 report the mean accuracy and its corresponding standard deviation, estimated by 5-fold cross-validation, for each classifier–metaheuristic pair on both databases. Table 6 lists the running time in minutes for each tested combination; it also reports the total time by column (machine learning method) and by row (metaheuristic). To contrast the time efficiency of each method, Table 7 shows the average time in milliseconds taken to classify an image.

To statistically compare the combinations, the non-parametric Friedman test was selected because the distribution of the performance metrics did not satisfy the assumptions required for parametric testing, specifically normality and homoscedasticity (homogeneity of variance) across samples (see Table 5). The Friedman test operates on the ranks of the performance metrics rather than on the raw values, making it robust against outliers and non-normal distributions and thus providing a reliable statistical basis for determining whether significant differences exist between the methods.
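The rank-based computation behind the Friedman test can be sketched as follows. This is a plain-Python illustration under stated assumptions: the accuracy values are made up for the example (they are not the values in Table 4 or Table 5), the helper names are hypothetical, and the statistic omits the correction for ties that library routines such as `scipy.stats.friedmanchisquare` apply.

```python
from math import erfc, exp, gamma, sqrt


def average_ranks(row):
    """Rank the values of one block (1 = smallest), averaging tied ranks."""
    order = sorted(range(len(row)), key=lambda j: row[j])
    ranks = [0.0] * len(row)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and row[order[j + 1]] == row[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for t in range(i, j + 1):
            ranks[order[t]] = avg
        i = j + 1
    return ranks


def chi2_sf(x, df):
    """Upper-tail probability of a chi-square variable with integer df."""
    if x <= 0:
        return 1.0
    h = x / 2
    if df % 2 == 0:
        a, q = 1.0, exp(-h)          # Q(1, h)
    else:
        a, q = 0.5, erfc(sqrt(h))    # Q(1/2, h)
    while a < df / 2:                # Q(a + 1, h) = Q(a, h) + h^a e^-h / Gamma(a + 1)
        q += h ** a * exp(-h) / gamma(a + 1)
        a += 1
    return q


def friedman(blocks):
    """Return (rank sums, chi-square statistic, p-value), without tie correction."""
    n, k = len(blocks), len(blocks[0])
    rank_sums = [0.0] * k
    for row in blocks:
        for j, r in enumerate(average_ranks(row)):
            rank_sums[j] += r
    stat = 12.0 / (n * k * (k + 1)) * sum(r * r for r in rank_sums) - 3.0 * n * (k + 1)
    return rank_sums, stat, chi2_sf(stat, k - 1)


# Illustrative accuracies: one row per block, one column per classifier.
blocks = [
    [0.90, 0.88, 0.70],
    [0.85, 0.86, 0.65],
    [0.91, 0.89, 0.72],
    [0.80, 0.78, 0.60],
]
rank_sums, stat, p_value = friedman(blocks)
```

Because rank 1 is assigned to the smallest accuracy, a larger rank sum corresponds to a better method, which matches the reading of the bar charts of rank sums.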

Figure 3 illustrates the results of the Friedman test through bar charts representing the sum of ranks. Figure 3a displays the comparison among machine learning methods. The test yielded a p-value well below the significance threshold. This allows us to reject the null hypothesis that all classifiers are equivalent, confirming that the choice of classifier significantly impacts performance. As observed in the chart, MLP and SVM obtained the highest rank sums, statistically outperforming LDA, DT, and XGB. Conversely, Figure 3b compares the optimization metaheuristics. Here, the test returned a p-value above the significance threshold, so we fail to reject the null hypothesis: there is no statistically significant difference between the performance of DE, PSO, and CP. The statistical analysis therefore confirms that the selection of the machine learning classifier is critical (with MLP and SVM being superior choices), while the choice of optimization metaheuristic (among DE, PSO, and CP) has a negligible impact on the final model performance. Similarly, Figure 4 illustrates Friedman tests contrasting the optimizing–training time between (a) machine learning methods and (b) metaheuristics. In both cases, the hypothesis of equality is rejected: (a) MLP and SVM are significantly slower, and (b) CP is significantly slower than DE and PSO. Although the metaheuristics perform approximately the same number of function evaluations, CP is a surrogate-based technique, which requires additional time to estimate a latent Bayesian model at each iteration [29].

Table 4 indicates that the Multi-layer Perceptron (MLP) combined with Differential Evolution (DE) achieved the highest performance on the KDEF database, with an accuracy of about 0.91. Similarly, the combination of Support Vector Machine (SVM) and Convex Partition (CP) demonstrated robust stability on the JAFFE dataset, with an accuracy of about 0.81. In contrast, the Decision Tree (DT) classifier produced the lowest results on the JAFFE dataset. The Friedman test supports that the best experimental results were obtained with MLP+DE and SVM+CP for KDEF and JAFFE, respectively. Hence, further analysis is focused on these best models.

Figure 5 shows how the mean accuracy converges for MLP and SVM during the execution of the three tested metaheuristics. These convergence charts visually exhibit the superiority of the combinations MLP+DE and SVM+CP. In all cases, the metaheuristic search stops when the accuracy trend stagnates, that is, when no further improvement is observed.
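The convergence behaviour and stagnation-based stopping described above can be illustrated with a small, self-contained Differential Evolution loop. This is only a sketch under stated assumptions: `toy_score` is a hypothetical stand-in for the cross-validated accuracy, the hyperparameters (`pop_size`, `f`, `cr`, `patience`, `tol`) are illustrative, and the loop is a generic DE/rand/1/bin variant, not necessarily the configuration used in the experiments.

```python
import random


def normalise(w):
    """Clip to positive values and rescale so the weights sum to 1."""
    w = [max(x, 1e-9) for x in w]
    s = sum(w)
    return [x / s for x in w]


def toy_score(w, target=(0.6, 0.3, 0.1)):
    """Hypothetical stand-in for cross-validated accuracy (higher is better)."""
    return 1.0 - sum((a - b) ** 2 for a, b in zip(w, target))


def differential_evolution(score, dim=3, pop_size=12, f=0.6, cr=0.9,
                           patience=15, tol=1e-6, max_gen=500, seed=0):
    """Maximise `score` over barycentric weights; stop when the best value stalls."""
    rng = random.Random(seed)
    pop = [normalise([rng.random() for _ in range(dim)]) for _ in range(pop_size)]
    fit = [score(p) for p in pop]
    history = [max(fit)]          # best score per generation (convergence curve)
    stalled = 0
    for _ in range(max_gen):
        for i in range(pop_size):
            # Mutation and binomial crossover with three distinct other members.
            a, b, c = rng.sample([j for j in range(pop_size) if j != i], 3)
            forced = rng.randrange(dim)
            trial = [pop[a][d] + f * (pop[b][d] - pop[c][d])
                     if (rng.random() < cr or d == forced) else pop[i][d]
                     for d in range(dim)]
            trial = normalise(trial)
            s = score(trial)
            if s >= fit[i]:       # greedy selection keeps the better candidate
                pop[i], fit[i] = trial, s
        best = max(fit)
        stalled = stalled + 1 if best - history[-1] <= tol else 0
        history.append(best)
        if stalled >= patience:   # the trend is stuck: stop the search
            break
    k = fit.index(max(fit))
    return pop[k], fit[k], history


best_w, best_score, history = differential_evolution(toy_score)
```

Plotting `history` against the generation index would give a convergence chart of the same kind as the one discussed here; in the actual pipeline the score would be the mean cross-validated accuracy of the classifier trained on the resulting features.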

The results presented support the hypothesis that optimizing the inner points within facial triangles significantly improves emotion recognition performance. By relaxing the constraints imposed by previous methods, which relied on fixed points such as centroids [5] or on a discrete selection of triangle centers [11], the continuous optimization approach allows the model to adapt to the specific deformation dynamics of each muscle group. The superiority of the Multi-Layer Perceptron (MLP) combined with Differential Evolution (DE), which achieved an accuracy of about 0.91 on the KDEF dataset, suggests that this classifier is the best suited to interpret the complex geometric relationships discovered by the metaheuristic search. Compared with the state of the art, the proposed method outperforms the fixed-center approaches reported by Aguilera-Hernández et al. [11]. This indicates that the most informative point on a facial triangle is often an arbitrary location best found through stochastic search rather than geometric intuition.

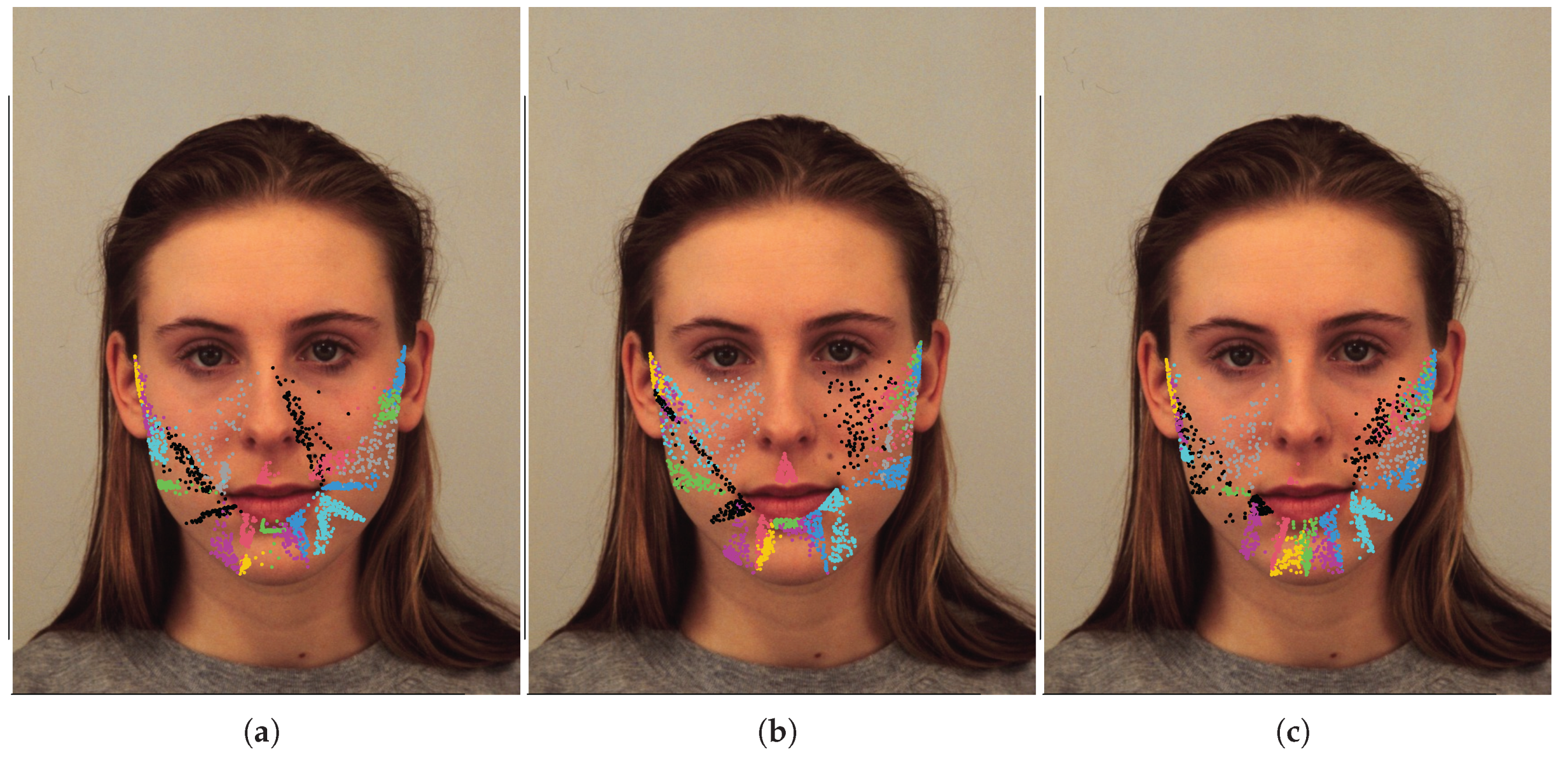

Figure 6 illustrates the best 100 sets of inner points (coded by V) found for (a) MLP+DE, (b) SVM+CP, and (c) LDA+CP. Visually, the points are not concentrated at the centers of the triangles. The distribution of points allows us to identify areas that are intuitively relevant for emotion recognition:

Mouth and Chin Region: There is a heavy concentration of points around the lips, the corners of the mouth, and the chin/jawline. This region is critical for expressing emotions and is associated with AUs 20, 25, and 26.

Cheeks and Nasolabial Folds: Points are distributed across the cheeks and along the nasolabial folds (the lines running from the sides of the nose to the corners of the mouth). These areas are associated with AUs 6, 9, and 12.

Figure 7 shows two confusion matrices for (a) MLP+DE on the KDEF database and (b) SVM+CP on the JAFFE database. Matrix (a) demonstrates superior results, marked by high true positive counts across all emotions and minimal confusion, with errors primarily isolated to Angry/Disgust and Neutral/Sad misclassifications. In contrast, matrix (b) shows a moderate-to-high overall score; it particularly struggles to discriminate among the Fear, Sad, and Surprise categories. This suggests that the geometric features derived from the optimization process are more robust and distinctive on the KDEF dataset.

Figure 8 shows six cases of misclassification produced by the best model, MLP+DE. The misclassifications shown, such as Fear being confused with Surprise or Anger with Disgust, support the argument that these specific emotions are inherently ambiguous and difficult to classify, even for a high-performing model. This difficulty arises because the facial expressions share significant overlaps in their underlying muscle movements (Action Units): for example, both Fear and Surprise often involve wide eyes and an open mouth. These errors are not mere methodological failures, but rather a reflection of subtle individual variations and the blurred boundaries between emotion categories. These specific expressions would likely confuse even human observers due to their high structural similarity. The inner points added to the plots visually indicate where the model is focused, so these points may constitute a form of explainable AI (XAI). They reveal where the MLP+DE model is attending on the face, typically highlighting critical facial regions such as the cheeks, mouth, and chin, i.e., AUs [2] 6, 9, 12, 20, 25, and 26, among others. Observing the scatter of these points directly may help in understanding which specific facial features are driving the misclassification. This insight can then guide the design of future models by allowing developers to reinforce weights on truly discriminative features or, conversely, to ignore confounding noise in ambiguous regions.

To contrast the proposed method with state-of-the-art results, Table 8 lists the accuracy scores of ten different methodologies applied to FER, divided into Deep Learning, Hybrid, and Geometric approaches. To visually compare these methodologies, Figure 9 presents bar charts of the accuracy scores listed in Table 8. In the charts for KDEF and JAFFE, the proposed method, along with one or two Deep Learning models, appears in the third quartile (indicated by a vertical line). Notably, the proposed method is consistently the only geometric approach positioned in the third quartile. These results indicate that metaheuristic optimization allows geometric features to achieve competitive accuracy, despite registering lower accuracy than Deep Learning approaches.

While Figure 9 suggests that methodologies based on Deep Learning capture more discriminating information, the proposed method maintains the crucial advantage of modeling muscle action directly through continuously optimized geometric landmarks. This provides a white-box solution whose decisions can be read in terms of the Facial Action Coding System (FACS). The complexity of attention-based deep learning models comes at the cost of explainability, whereas the proposed method remains transparent; it achieves competitive performance using inner points, making it a robust, computationally efficient, and highly explainable tool for emotion recognition.

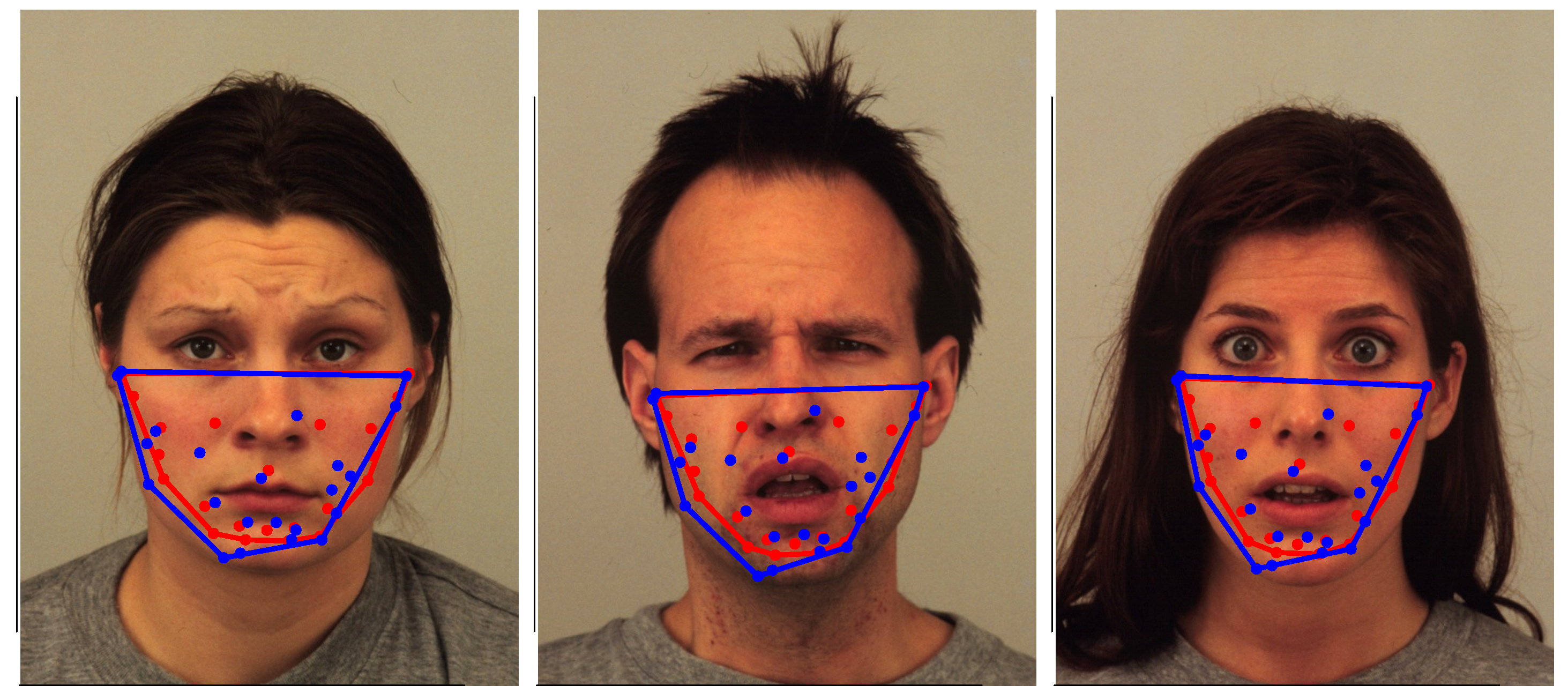

In this context, it is relevant to compare the best proposed model (MLP+DE) against another explainable geometric approach based on inner points, for instance, that of Arrazola et al. [5], which uses centroids as inner points. To visually contrast these two methods, Figure 10 and Figure 11 show collections of images that were correctly classified and misclassified, respectively. In both figures, blue points locate the optimized inner points (the best proposed model, MLP+DE), and red points mark the centroids. For both classes of points, the convex hull is traced in the corresponding color. The blue convex hull associated with the best proposed model covers more area than the red convex hull associated with the centroids proposed in [5]. In addition, the blue points seem to be more uniformly distributed over their corresponding convex hull. Considering the convex hulls traced in Figure 10 and Figure 11, and the triangles shown in Figure 6 and Figure 8, the proposed approach constitutes a framework for generating more powerful interpretation tools than those offered by geometric approaches based merely on central points, such as [5,11].
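The comparison of convex hull coverage described above can be quantified with a short computation. The sketch below builds the convex hull with Andrew's monotone chain algorithm and measures its area with the shoelace formula; the point coordinates are invented for illustration and do not correspond to the points plotted in the figures.

```python
def cross(o, a, b):
    """Z-component of the cross product of vectors OA and OB."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def convex_hull(points):
    """Andrew's monotone chain; returns hull vertices in counter-clockwise order."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts
    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    # The last point of each chain is the first point of the other chain.
    return lower[:-1] + upper[:-1]


def polygon_area(poly):
    """Shoelace formula for the area of a simple polygon."""
    n = len(poly)
    twice = sum(poly[i][0] * poly[(i + 1) % n][1] - poly[(i + 1) % n][0] * poly[i][1]
                for i in range(n))
    return abs(twice) / 2.0


# Illustrative coordinates only: "red" mimics centroids, "blue" optimized points.
red = [(1, 1), (3, 1), (3, 3), (1, 3), (2, 2)]
blue = [(0, 0), (4, 0), (4, 4), (0, 4), (2, 1), (1, 3)]

red_area = polygon_area(convex_hull(red))
blue_area = polygon_area(convex_hull(blue))
coverage_ratio = blue_area / red_area
```

A `coverage_ratio` greater than 1 would express numerically the visual observation that the hull of the optimized points covers more of the face than the hull of the centroids.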

The proposed method based on metaheuristics is evaluated against the other strategies based on inner points under identical conditions (the same databases, 5-fold cross-validation, and classifiers) to ensure a fair comparison. Table 9 presents the mean accuracy for these three approaches across various machine learning models. The baseline strategies involve (1) using the centroid, as proposed in [5], and (2) selecting the best of several notable points (centroid, incenter, Nagel, or Torricelli), as proposed in [11]. As shown, the proposed method consistently outperforms the others, demonstrating that metaheuristic optimization of inner points significantly enhances classification accuracy.

To provide a visual and explainable relation between the optimized inner points and the Action Units (AUs) listed in Table 1, Figure 12 illustrates the facial landmarks, where the radius of each point is proportional to the sum of the weights w assigned to it during the optimization process. This weighting scheme reflects the discriminative importance of each landmark, analogous to the loading vectors in Principal Component Analysis (PCA), which reveal the contribution of each dimension to the total variance [38]. In this context, the size of a landmark illustrates its relevance to the classification task. The points are numbered for reference. For instance, points 20 and 25 around the mouth are related to the Lip Corner Puller (AU12) and the Lip Stretcher (AU20, driven by the risorius). Similarly, points 5, 8, and 10 are associated with Jaw Drop (AU26), while points 1, 2, 17, and 18 capture the activation of the Inner Brow Raiser (AU1) and the Outer Brow Raiser (AU2).
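The landmark sizing described above amounts to accumulating, for each landmark, the weights it receives in every triangle it belongs to. The following sketch shows one way to do this; the triangle definitions, weight values, and the `max_radius` scaling are hypothetical and serve only to illustrate the bookkeeping.

```python
from collections import defaultdict


def landmark_importance(triangles, weights):
    """Sum, per landmark index, the weights assigned to it over all triangles."""
    totals = defaultdict(float)
    for vertices, w in zip(triangles, weights):
        for landmark, weight in zip(vertices, w):
            totals[landmark] += weight
    return dict(totals)


def marker_radii(importance, max_radius=10.0):
    """Scale the summed weights so the most important landmark gets max_radius."""
    top = max(importance.values())
    return {k: max_radius * v / top for k, v in importance.items()}


# Hypothetical triangles (landmark indices) and their optimized weights w.
triangles = [(20, 25, 8), (20, 5, 10), (25, 8, 10)]
weights = [(0.5, 0.25, 0.25), (0.25, 0.25, 0.5), (0.5, 0.25, 0.25)]

importance = landmark_importance(triangles, weights)
radii = marker_radii(importance)
```

A landmark shared by several triangles and weighted heavily in each of them accumulates a large total and is drawn with a large radius, which is the sense in which marker size reflects relevance to the classification task.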