Defect Detection and Segmentation Framework for Remote Field Eddy Current Sensor Data

Abstract

1. Introduction

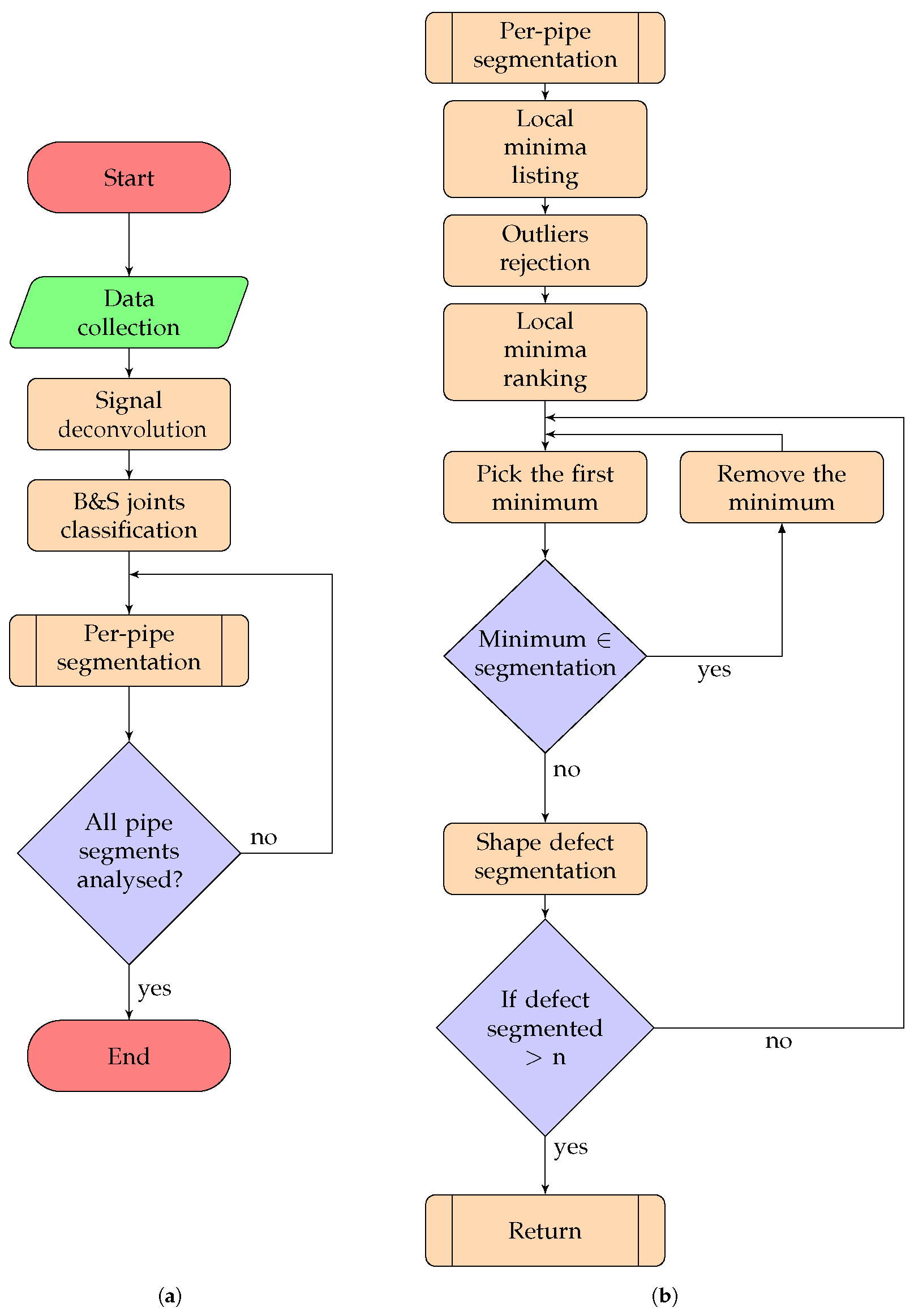

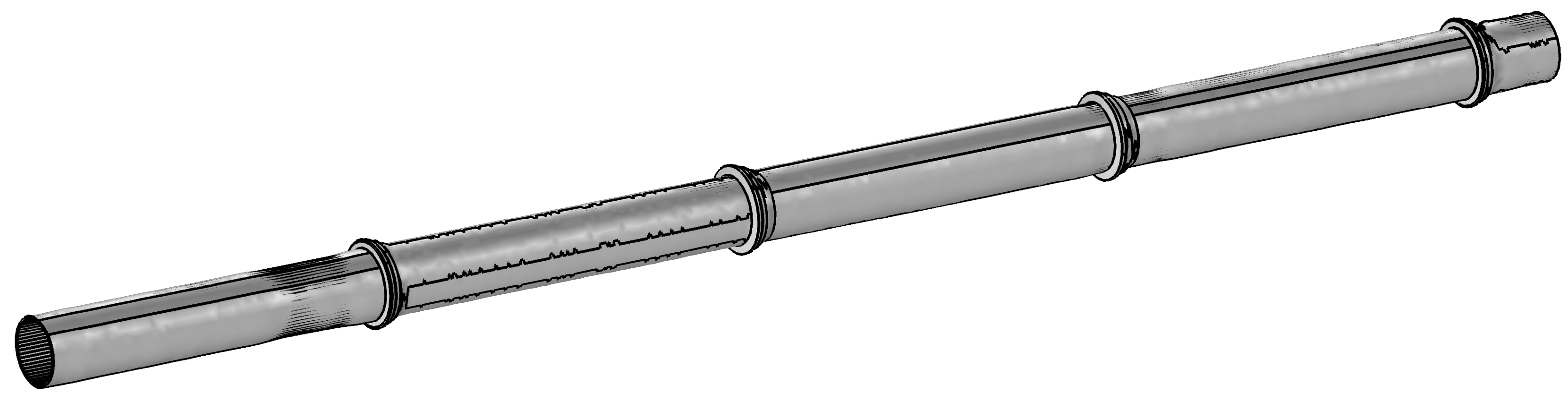

2. Proposed Approach

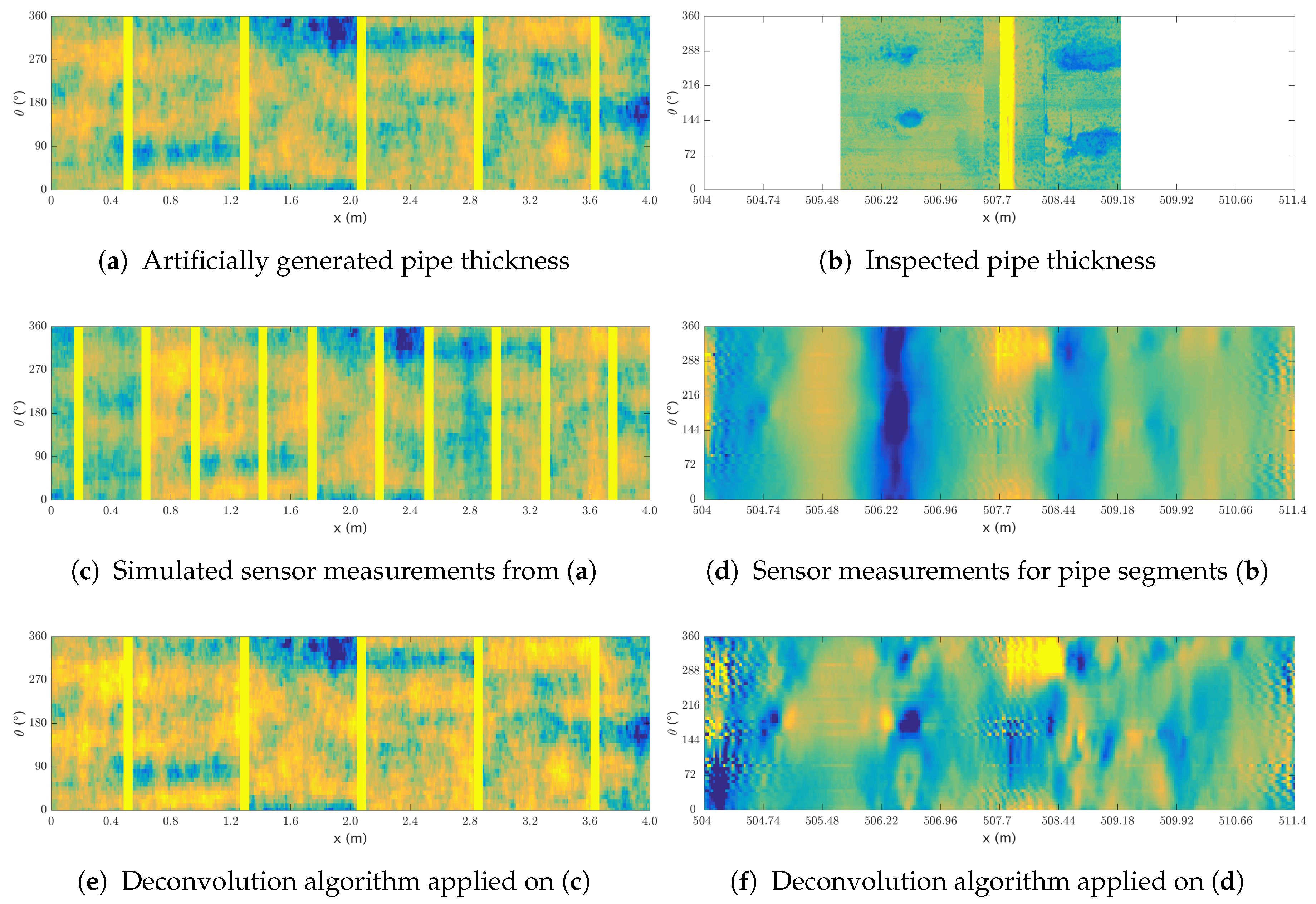

2.1. Signal Deconvolution

Algorithm 1: Signal deconvolution

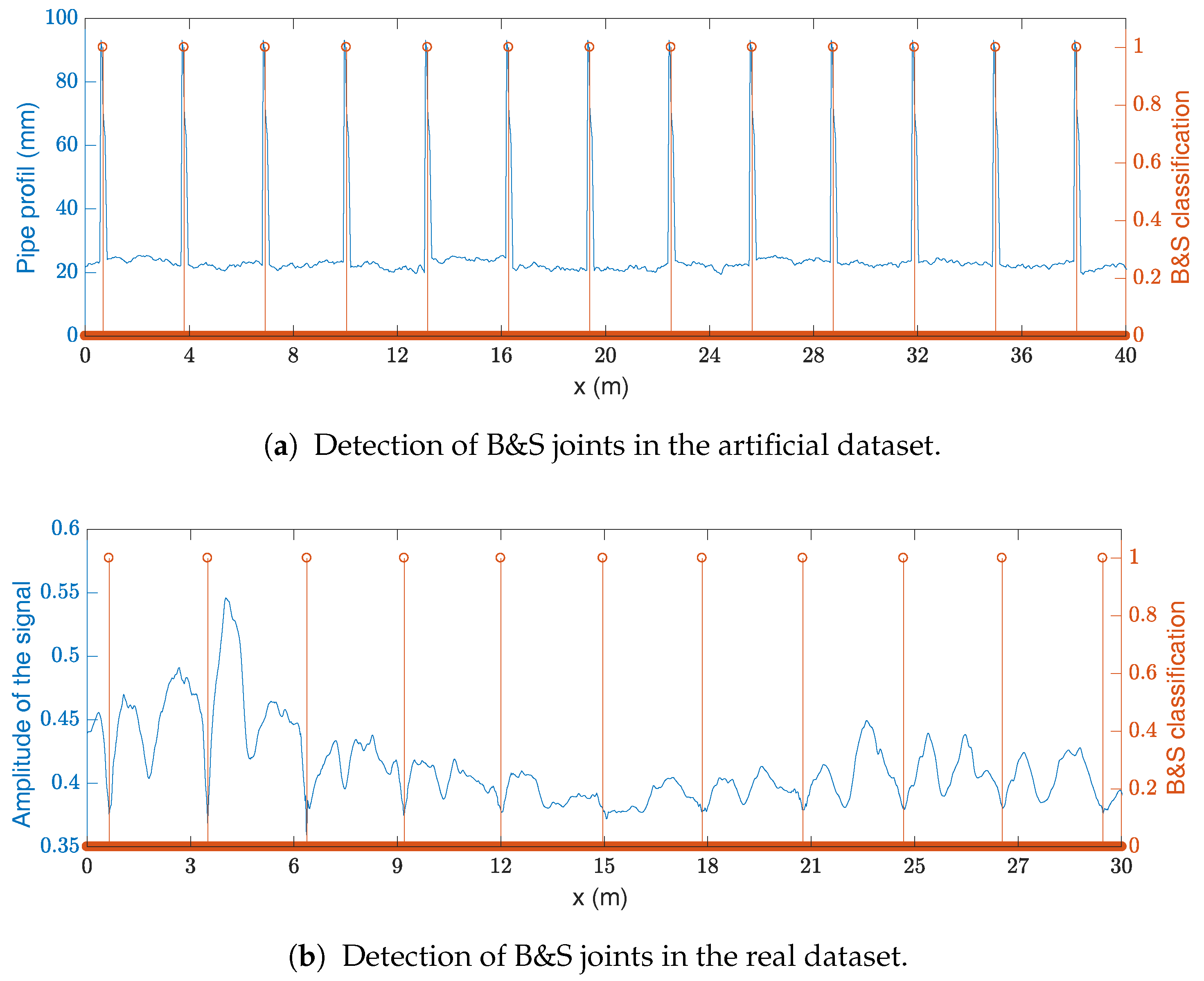

2.2. Localization of Bell and Spigot Joints

2.2.1. Feature Construction

2.2.2. Classifiers Description

- Naive Bayes classifiers assume that the features are independent of one another given the class. First, a likelihood table is generated for each event by frequency analysis on the training set. The probability of a class $c$ given the features $x$ is then obtained with Bayes' theorem as $P(c \mid x) = \frac{P(x \mid c)\,P(c)}{P(x)}$. The class with the maximum probability is then chosen. Naive Bayes classifiers are popular for text classification (e.g., spam filters) and have also been applied to medical diagnosis [15]. The classifier can be trained with a closed-form expression [16], which allows training with linear computational complexity.

- Random Forests are obtained by training a set of independent decision trees, each on a randomly sampled subset of the data. Once the trees are trained, a new sample is assigned the class predicted most often across all the trees [21]. Combining multiple classifiers trained on resampled data in this way is referred to as bagging and is used to avoid overfitting [22].

- Support Vector Machines find the linear decision boundary that maximizes the distance between the closest points of each class (i.e., a fat margin) while minimizing the distance between the misclassified samples and the decision boundary [23]. Additionally, for a more flexible classification, the kernel trick [24] can be used to map the features into a higher-dimensional space (in our case, the Support Vector Machine (SVM) uses a Radial Basis Function (RBF) kernel).
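The frequency-table view of Naive Bayes described in the list above can be sketched in a few lines of plain Python. This is a minimal illustration and not the implementation used in the paper: the two binary features, the `joint`/`pipe` labels, the toy training data, and the Laplace smoothing term `alpha` are all assumptions made for the example.

```python
from collections import Counter, defaultdict


def train_naive_bayes(samples, labels):
    """Build class counts and per-feature likelihood tables by frequency analysis."""
    class_counts = Counter(labels)
    # feature_counts[c][i][v] = number of class-c samples whose i-th feature equals v
    feature_counts = defaultdict(lambda: defaultdict(Counter))
    for x, c in zip(samples, labels):
        for i, v in enumerate(x):
            feature_counts[c][i][v] += 1
    return class_counts, feature_counts


def predict_proba(x, class_counts, feature_counts, alpha=1.0, n_values=2):
    """Posterior P(c | x) from Bayes' theorem under the independence assumption.

    alpha is a Laplace smoothing term (an assumption of this sketch) and
    n_values is the number of values each feature can take (2 for binary).
    """
    total = sum(class_counts.values())
    joint = {}
    for c, n_c in class_counts.items():
        p = n_c / total  # prior P(c)
        for i, v in enumerate(x):
            # smoothed likelihood P(x_i = v | c) read from the frequency table
            p *= (feature_counts[c][i][v] + alpha) / (n_c + alpha * n_values)
        joint[c] = p  # P(x | c) * P(c)
    evidence = sum(joint.values())  # P(x), the normalizing constant
    return {c: p / evidence for c, p in joint.items()}


def predict(x, class_counts, feature_counts):
    """Choose the class with the maximum posterior probability."""
    posterior = predict_proba(x, class_counts, feature_counts)
    return max(posterior, key=posterior.get)


# Hypothetical toy data: two binary features per sample.
samples = [(1, 1), (1, 1), (1, 0), (0, 0), (0, 0), (0, 1), (0, 0), (0, 0)]
labels = ["joint", "joint", "joint", "pipe", "pipe", "pipe", "pipe", "pipe"]

class_counts, feature_counts = train_naive_bayes(samples, labels)
posterior = predict_proba((1, 1), class_counts, feature_counts)
print(predict((1, 1), class_counts, feature_counts), posterior)
```

Training is a single pass over the data that only increments counters, which is where the linear training cost mentioned above comes from.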

2.3. Defect Detection

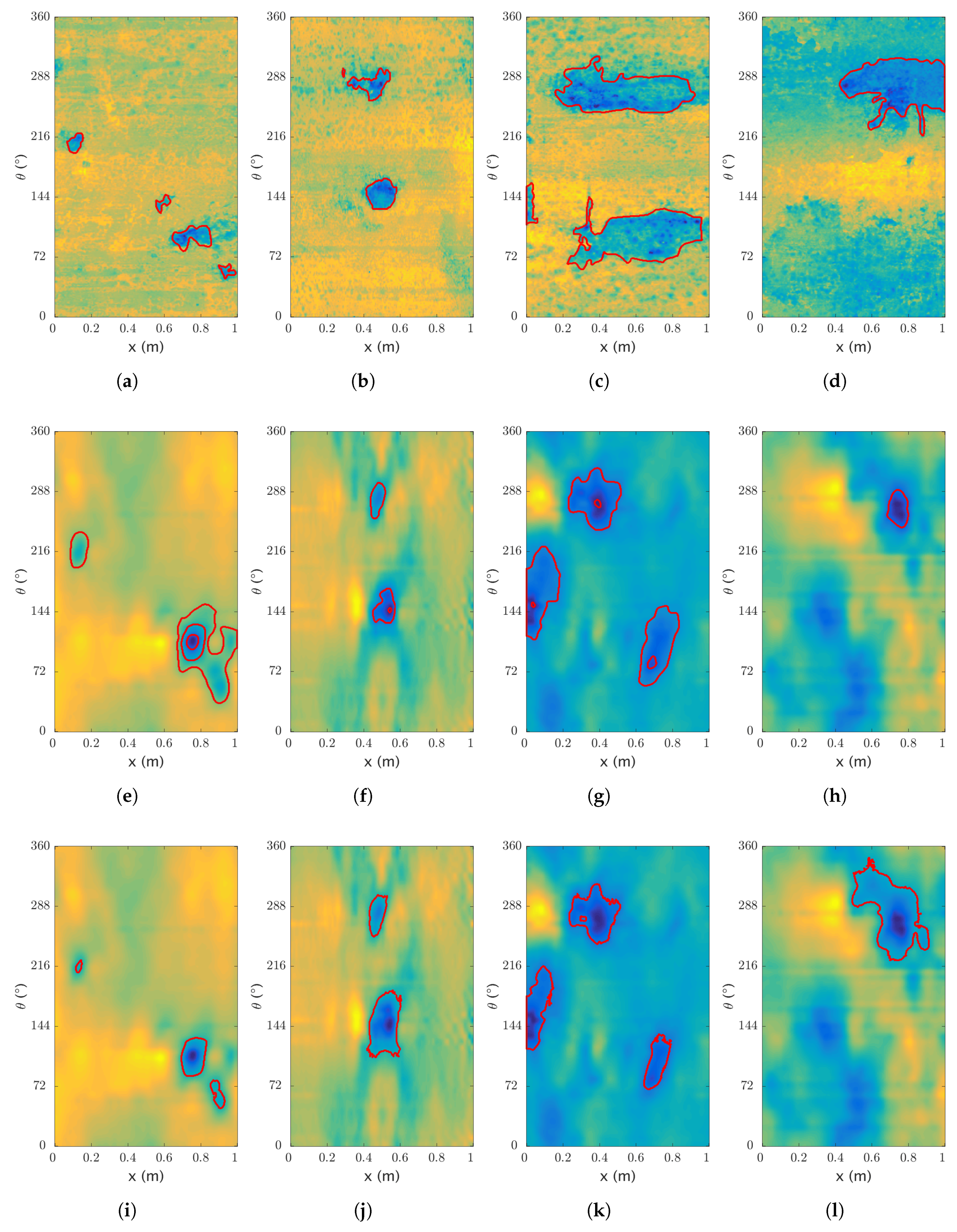

2.4. Defect Segmentation

2.4.1. Region Growing

Algorithm 2: Region Growing

2.4.2. Active Contour Segmentation

Algorithm 3: Active Contour Without Edges

3. Results

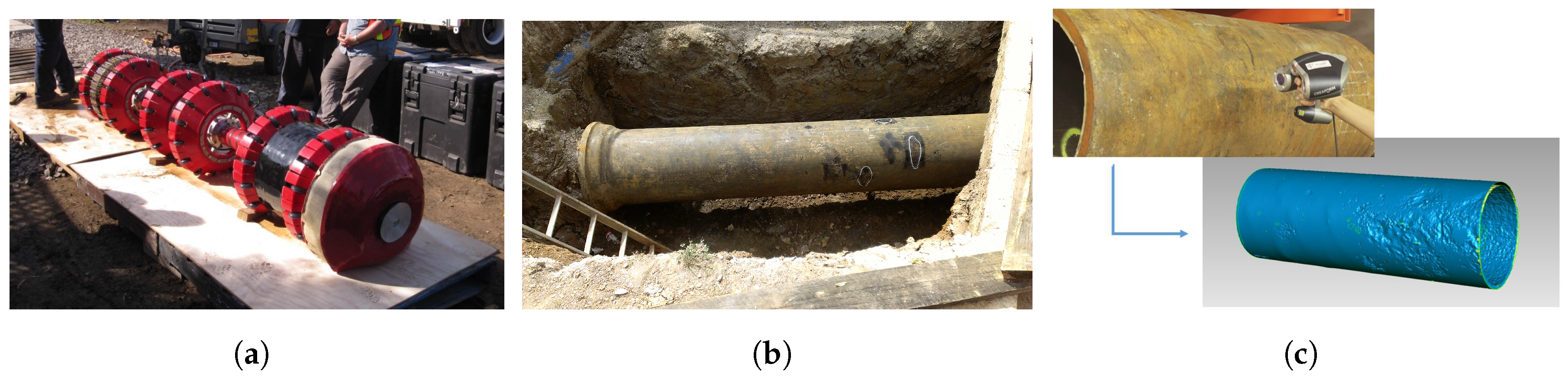

3.1. Artificial Dataset

3.2. Real Dataset

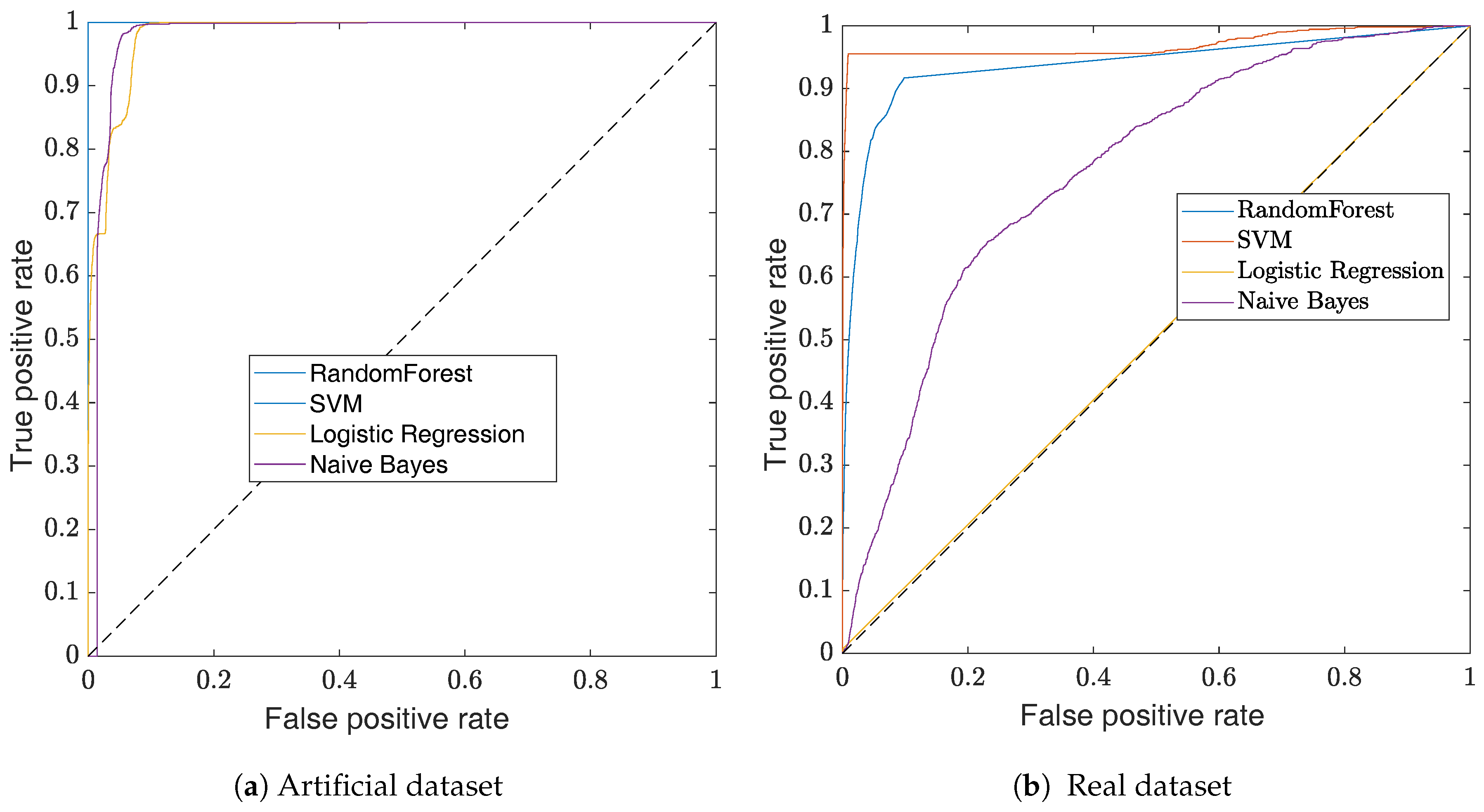

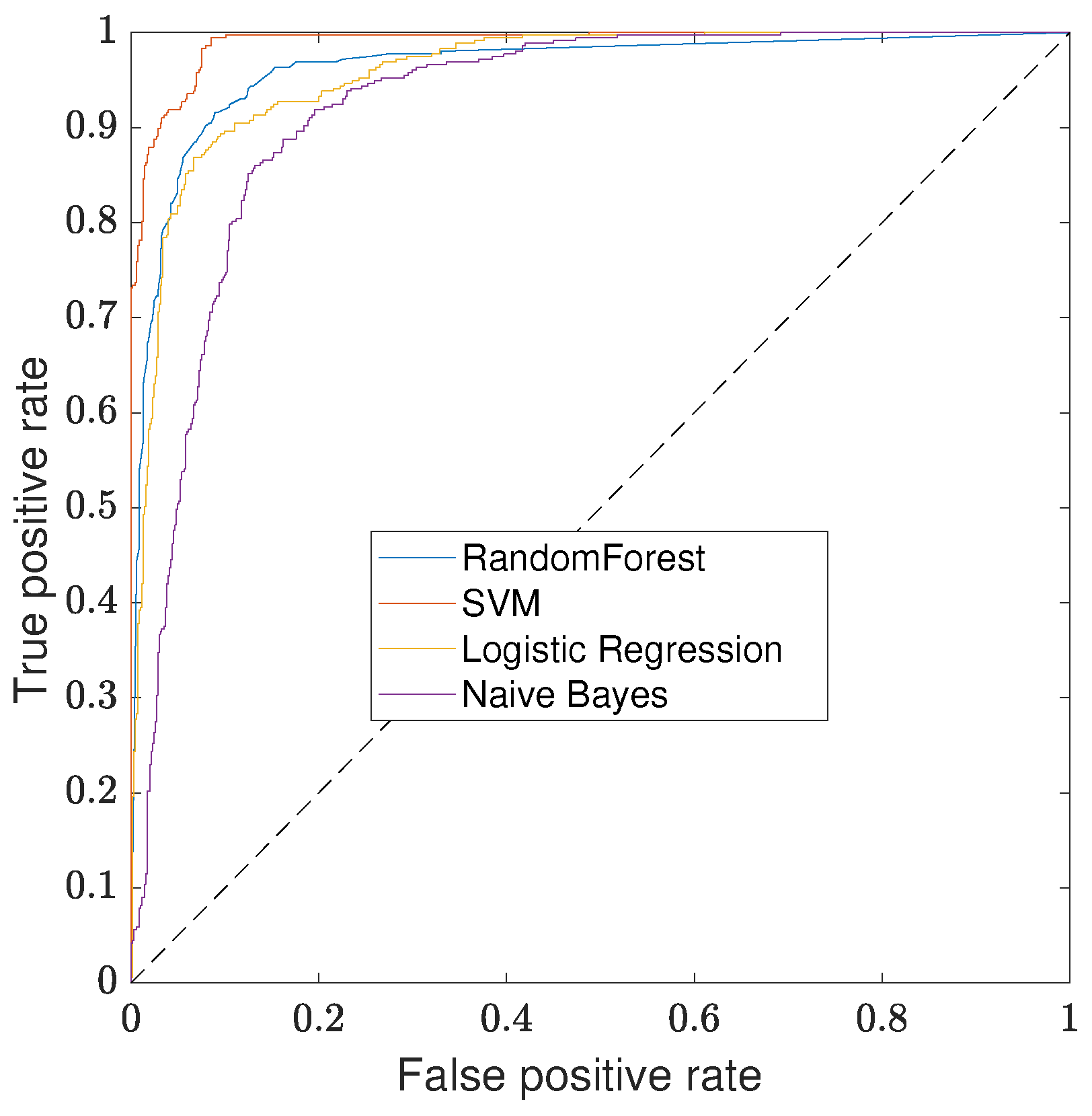

3.3. Classifiers Evaluation

3.3.1. K-Fold Cross-Validation

3.3.2. Confusion Matrix and Standard Metrics

3.3.3. ROC Curves

3.4. Signal Deconvolution

3.5. Bell and Spigot Joint Detection

3.6. Defect Detection

3.7. Defect Segmentation

4. Discussion and Final Remarks

Acknowledgments

Author Contributions

Conflicts of Interest

References

- MacLean, W.R. Apparatus for Magnetically Measuring Thickness of Ferrous Pipe. U.S. Patent 2573799, 6 November 1951.

- Lord, W.; Sun, Y.S.; Udpa, S.; Nath, S. A finite element study of the remote field eddy current phenomenon. IEEE Trans. Magn. 1988, 24, 435–438.

- Palanisamy, R. Electromagnetic field calculations for the low frequency eddy current testing of tubular products. IEEE Trans. Magn. 1987, 23, 2663–2665.

- Teitsma, A.; Takach, S.; Maupin, J.; Fox, J.; Shuttleworth, P.; Seger, P. Small diameter remote field eddy current inspection for unpiggable pipelines. J. Press. Vessel Technol. 2005, 127, 269–273.

- Davoust, M.È.; Brusquet, L.; Fleury, G. Robust estimation of flaw dimensions using remote field eddy current inspection. Meas. Sci. Technol. 2006, 17, 3006–3014.

- Davoust, M.È.; Fleury, G.; Oksman, J. A parametric estimation approach for groove dimensioning using remote field eddy current inspection. Res. Nondestr. Eval. 1999, 11, 39–57.

- Saranya, R.; Jackson, D.; Abudhahir, A.; Chermakani, N. Comparison of segmentation techniques for detection of defects in non-destructive testing images. In Proceedings of the 2014 International Conference on Electronics and Communication Systems (ICECS), Coimbatore, India, 13–14 February 2014.

- Falque, R.; Vidal-Calleja, T.; Dissanayake, G.; Miro, J.V. From the skin-depth equation to the inverse RFEC sensor model. In Proceedings of the 2016 14th International Conference on Control, Automation, Robotics and Vision (ICARCV), Phuket, Thailand, 13–15 November 2016.

- Luo, Q.W.; Shi, Y.B.; Wang, Z.G.; Zhang, W.; Zhang, Y. Approach for removing ghost-images in remote field eddy current testing of ferromagnetic pipes. Rev. Sci. Instrum. 2016, 87.

- Falque, R.; Vidal-Calleja, T.; Valls Miro, J. Towards inverse modeling of RFEC via an optimization based signal deconvolution. arXiv 2017, preprint.

- Zhang, Y. Electric and Magnetic Contributions and Defect Interactions in Remote Field Eddy Current Techniques. Ph.D. Thesis, Queen’s University, Kingston, ON, Canada, 1997.

- Falque, R.; Vidal-Calleja, T.; Valls Miro, J.; Lingnau, D.C.; Russell, D.E. Background segmentation to enhance remote field eddy current signals. In Proceedings of the Australasian Conference on Robotics and Automation (ACRA), Melbourne, Australia, 2–4 December 2014.

- Vidal-Calleja, T.; Miro, J.V.; Martin, F.; Lingnau, D.C.; Russell, D.E. Automatic detection and verification of pipeline construction features with multi-modal data. In Proceedings of the 2014 IEEE/RSJ International Conference on Intelligent Robots and Systems, Chicago, IL, USA, 14–18 September 2014.

- Hjorth, B. EEG analysis based on time domain properties. Electroencephalogr. Clin. Neurophysiol. 1970, 29, 306–310.

- Rish, I. An Empirical Study of the Naive Bayes Classifier; Technical Report; IBM Research, Thomas J. Watson Research Center: Albany, NY, USA, 2001; pp. 41–46.

- Russell, S.J.; Norvig, P. Artificial Intelligence: A Modern Approach; Prentice Hall: Bergen, NJ, USA, 2009; p. 1152.

- Cox, D.R. The regression analysis of binary sequences. J. R. Stat. Soc. Ser. B Methodol. 1958, 20, 215–242.

- Boyd, C.R.; Tolson, M.A.; Copes, W.S. Evaluating Trauma Care. J. Trauma Inj. Infect. Crit. Care 1987, 27, 370–378.

- Truett, J.; Cornfield, J.; Kannel, W. A multivariate analysis of the risk of coronary heart disease in Framingham. J. Chronic Dis. 1967, 20, 511–524.

- Harrell, F.E. Regression Modeling Strategies; Springer Series in Statistics; Springer: New York, NY, USA, 2001; p. 289.

- Ho, T.K. Random decision forests. In Proceedings of the 3rd International Conference on Document Analysis and Recognition, Montreal, QC, Canada, 14–16 August 1995.

- Hastie, T.; Tibshirani, R.; Friedman, J. The Elements of Statistical Learning; Number 2 in Springer Series in Statistics; Springer: New York, NY, USA, 2009; pp. 587–588.

- Cortes, C.; Vapnik, V. Support-vector networks. Mach. Learn. 1995, 20, 273–297.

- Hofmann, T.; Schölkopf, B.; Smola, A.J. Kernel methods in machine learning. Ann. Stat. 2008, 36, 1171–1220.

- Fernández-Delgado, M.; Cernadas, E.; Barro, S.; Amorim, D. Do we need hundreds of classifiers to solve real world classification problems? J. Mach. Learn. Res. 2014, 15, 3133–3181.

- Cormen, T.H.; Leiserson, C.E.; Rivest, R.; Stein, C. Introduction to Algorithms; MIT Press: Cambridge, MA, USA, 2009; p. 1312.

- Chan, T.; Vese, L. Active contours without edges. IEEE Trans. Image Process. 2001, 10, 266–277.

- Goldberg, D.E. Genetic Algorithms in Search, Optimization and Machine Learning; Addison-Wesley Longman Publishing Co., Inc.: Boston, MA, USA, 1989; p. 372.

- Shi, L.; Sun, L.; Vidal Calleja, T.; Valls Miro, J. Kernel-specific Gaussian process for predicting pipe wall thickness maps. In Proceedings of the Australasian Conference on Robotics and Automation, Canberra, Australia, 2–4 December 2015.

- Skinner, B.; Vidal-Calleja, T.; Valls Miro, J.; Bruijn, F.D.; Falque, R. 3D point cloud upsampling for accurate reconstruction of dense 2.5D thickness maps. In Proceedings of the Australasian Conference on Robotics and Automation (ACRA), Melbourne, Australia, 2–4 December 2014.

- Falque, R.; Vidal-Calleja, T.; Miro, J.V. Kidnapped laser-scanner for evaluation of RFEC tool. In Proceedings of the IEEE International Conference on Intelligent Robots and Systems (IROS), Hamburg, Germany, 28 September–2 October 2015.

- Cohen, J. A coefficient of agreement for nominal scales. Educ. Psychol. Meas. 1960, 20, 37–46.

- Fawcett, T. An introduction to ROC analysis. Pattern Recognit. Lett. 2006, 27, 861–874.

- Platt, J.C. Sequential Minimal Optimization: A Fast Algorithm for Training Support Vector Machines; Technical Report MSR-TR-98-14; Microsoft Research: Redmond, WA, USA, 1998; pp. 185–208.

- Breiman, L. Random Forests. Mach. Learn. 2001, 45, 5–32.

- Alpert, S.; Galun, M.; Brandt, A.; Basri, R. Image segmentation by probabilistic bottom-up aggregation and cue integration. IEEE Trans. Pattern Anal. Mach. Intell. 2012, 34, 315–327.

- Arbeláez, P.; Maire, M.; Fowlkes, C.; Malik, J. Contour detection and hierarchical image segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2011, 33, 1–20.

- Ulapane, N.; Alempijevic, A.; Vidal-Calleja, T.; Miro, J.V.; Rudd, J.; Roubal, M. Gaussian process for interpreting pulsed eddy current signals for ferromagnetic pipe profiling. In Proceedings of the 2014 IEEE 9th Conference on Industrial Electronics and Applications (ICIEA), Hangzhou, China, 9–11 June 2014.

- Felzenszwalb, P.F.; Huttenlocher, D.P. Efficient graph-based image segmentation. Int. J. Comput. Vis. 2004, 59, 1–26.

- Long, J.; Shelhamer, E.; Darrell, T. Fully convolutional networks for semantic segmentation. In Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015.

- Sadiq, R.; Rajani, B.; Kleiner, Y. Probabilistic risk analysis of corrosion associated failures in cast iron water mains. Reliab. Eng. Syst. Saf. 2004, 86, 1–10.

| | | Predicted condition | |
|---|---|---|---|
| | | true | false |
| True condition | true | True positive (TP) | False negative (FN) |
| | false | False positive (FP) | True negative (TN) |
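For reference, the metrics named in the result tables can be written in terms of the four cells of a binary confusion matrix: true positives (TP), false negatives (FN), false positives (FP), and true negatives (TN). The block below assumes the usual textbook definitions, with Cohen's kappa following the Cohen (1960) entry in the reference list; the symbols $N$, $p_o$, and $p_e$ are introduced here for illustration and do not come from the original text.

```latex
\begin{align}
\text{Precision} &= \frac{TP}{TP + FP}, &
\text{Recall} &= \frac{TP}{TP + FN}, \\
\text{Accuracy} &= \frac{TP + TN}{N}, &
\text{F-score} &= \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}, \\
\kappa &= \frac{p_o - p_e}{1 - p_e}, &
N &= TP + FP + FN + TN,
\end{align}
% p_o is the observed agreement (equal to the accuracy) and
% p_e is the agreement expected by chance:
\begin{equation}
p_o = \frac{TP + TN}{N}, \qquad
p_e = \frac{(TP + FN)(TP + FP) + (FP + TN)(FN + TN)}{N^2}.
\end{equation}
```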

| | Precision | Recall | Accuracy | κ coef |
|---|---|---|---|---|
| SVM | 1.00 | 1.00 | 1.00 | 1.00 |
| Random Forest | 1.00 | 1.00 | 1.00 | 1.00 |
| Naive Bayes | 0.69 | 0.78 | 0.96 | 0.71 |
| Logistic Regression | 0.72 | 0.67 | 0.95 | 0.67 |

| | Precision | Recall | Accuracy | κ coef |
|---|---|---|---|---|
| SVM | 1.00 | 0.21 | 0.97 | 0.34 |
| Random Forest | 0.70 | 0.42 | 0.97 | 0.51 |
| Naive Bayes | 0.06 | 0.86 | 0.49 | 0.04 |
| Logistic Regression | 0.21 | 0.01 | 0.93 | 0.01 |

| | Precision | Recall | Accuracy | κ coef |
|---|---|---|---|---|
| SVM | 0.96 | 0.83 | 0.90 | 0.84 |
| Random Forest | 0.87 | 0.87 | 0.92 | 0.80 |
| Logistic Regression | 0.84 | 0.89 | 0.90 | 0.79 |
| Naive Bayes | 0.74 | 0.87 | 0.84 | 0.69 |

| | | Predicted condition | |
|---|---|---|---|
| | | defects | outliers |
| True condition | defects | 298 | 59 |
| | outliers | 12 | 693 |
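As a worked example, the standard metrics can be computed directly from the four counts in the confusion matrix above. The sketch below assumes that rows are the true condition, columns are the predicted condition, and "defects" is the positive class, which gives TP = 298, FN = 59, FP = 12, and TN = 693. The function name `binary_metrics` is hypothetical, and the formulas are the usual textbook definitions rather than code from the paper.

```python
def binary_metrics(tp, fn, fp, tn):
    """Precision, recall, accuracy and Cohen's kappa for a 2x2 confusion matrix."""
    total = tp + fn + fp + tn
    precision = tp / (tp + fp)      # fraction of predicted positives that are correct
    recall = tp / (tp + fn)         # fraction of true positives that are found
    accuracy = (tp + tn) / total    # observed agreement p_o
    # Agreement expected by chance, from the row and column marginals.
    p_pos = ((tp + fn) / total) * ((tp + fp) / total)
    p_neg = ((fp + tn) / total) * ((fn + tn) / total)
    p_e = p_pos + p_neg
    kappa = (accuracy - p_e) / (1 - p_e)
    return {
        "precision": precision,
        "recall": recall,
        "accuracy": accuracy,
        "kappa": kappa,
    }


# Counts read from the confusion matrix above (defects = positive class).
metrics = binary_metrics(tp=298, fn=59, fp=12, tn=693)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Because outliers outnumber defects roughly two to one in this matrix, kappa is a useful complement to accuracy: it discounts the agreement that a classifier would reach by chance given the class proportions.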

| coef | Precision | Recall | Accuracy | F-Score |
|---|---|---|---|---|
| Region Growing | 0.60 | 0.27 | 0.88 | 0.19 |
| Active Contour | 0.77 | 0.69 | 0.99 | 0.66 |

| coef | Precision | Recall | Accuracy | F-Score |
|---|---|---|---|---|
| Region Growing | 0.63 | 0.14 | 0.92 | 0.22 |
| Active Contour | 0.53 | 0.48 | 0.93 | 0.48 |

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Falque, R.; Vidal-Calleja, T.; Miro, J.V. Defect Detection and Segmentation Framework for Remote Field Eddy Current Sensor Data. Sensors 2017, 17, 2276. https://doi.org/10.3390/s17102276
