Abstract

The free energy principle (FEP) from neuroscience has recently gained traction as one of the most prominent brain theories that can emulate the brain’s perception and action in a bio-inspired manner. This gives the theory the potential to hold the key for general artificial intelligence. Leveraging this potential, this paper aims to bridge the gap between neuroscience and robotics by reformulating an FEP-based inference scheme, Dynamic Expectation Maximization (DEM), into an algorithm that can perform simultaneous state, input, parameter, and noise hyperparameter estimation of any stable linear state space system subjected to colored noise. The resulting estimator was proved to be of the form of an augmented coupled linear estimator. Using this mathematical formulation, we proved that the estimation steps have theoretical guarantees of convergence. The algorithm was rigorously tested in simulation on a wide variety of linear systems with colored noise. The paper concludes by demonstrating the superior performance of DEM for parameter estimation under colored noise in simulation, when compared to state-of-the-art estimators such as the subspace method, Prediction Error Minimization (PEM), and the Expectation Maximization (EM) algorithm. These results contribute to the applicability of DEM as a robust learning algorithm for safe robotic applications.

1. Introduction

The free energy principle (FEP) has emerged from neuroscience as a unifying theory of the brain [1] and has begun to guide the search for a brain-inspired learning algorithm for robots. Many attempts have been made in this direction, including the state and input observer [2,3], the adaptive controller for robot manipulators [4,5,6], the body perception and action scheme for humanoid robots [7], and the navigation scheme for ground robots [8]. However, the design of a parameter estimation algorithm for linear systems with colored noise remains unexplored. Since the design of an accurate parameter estimator for dynamic systems sits at the core of control systems and robotics, the reformulation of FEP into a brain-inspired estimation algorithm can have a substantial impact on industry and applied robotics.

A wide range of estimators have been proposed in the literature for linear time-invariant (LTI) systems [9], including the blind system identification [10,11,12]. However, most of them assume the noises to be temporally uncorrelated (white noise), which is often violated in practice. This results in biased estimation for the least-square (LS)-based methods [13], and an inaccurate convergence for the iterative methods [14]. Although many attempts have been made to solve this problem, mainly through bias compensation methods [15,16], none of them perform state, input, parameter, and noise estimation for systems with colored noises [17]. The only method that does it is the Dynamic Expectation Maximization (DEM) [18] algorithm, which uses FEP to invert a highly nonlinear and hierarchical brain model from sensory data. However, the disconnect between neuroscience and control system literature hinders the wide use of this method for practical robotics applications. Although FEP-based tools have already been applied to practical robotics applications [2,3,4,5,6,7,8,19,20], there is a gap in the literature on the applications of DEM owing to the mathematical formidability of the theory and its lack of formalism in the control systems domain. Therefore, it is important to reformulate DEM for the control systems audience. While DEM from computational neuroscience focuses on emulating the brain’s perception through the hierarchical abstraction of a number of interacting non-linear dynamic systems, our work focuses on reformulating it into a blind system-identification algorithm for an LTI system with colored noise, which is a well-known challenge in robotics. In this attempt, we keep all the brain-related approximations intact, thereby aiming for a biologically plausible parameter estimation algorithm.

According to the FEP proposed by Karl Friston, the brain’s inference mechanism is a gradient ascent over its free energy, where free energy is the information-theoretic measure that bounds the brain’s sensory surprisal [21]. FEP emerges as a unified brain theory [22] by providing a mathematical description of brain functions [23]; unifying action and perception [24]; connecting physiological constructs like memory, attention, value, reinforcement, and salience [23]; and remaining consistent with Freudian ideas [25]. Similarities of FEP with reinforcement learning [26], neural networks [21,27], the PID controller [28], the Kalman Filter [2] and active learning [24] open up possibilities for biologically plausible parameter estimation algorithms. Although FEP emerged as a brain theory, recent works have pushed its boundaries towards systems that survive over time, such as social and cultural dynamics. Notable works include the variational approach to culture [29], collective intelligence [30], and cumulative cultural evolution [31].

Numerous methods have been proposed based on the FEP framework. Predictive coding [32] models perception through a hierarchy of dynamical systems [27] with the brain’s priors at the top, minimizing the prediction error at each level of the hierarchy. Bayesian message passing algorithms [33,34] use similar ideas for belief propagation. Active inference [24,35] uses FEP to model the brain’s action and perception under one framework. On the perception side, there are two main types of methods to deal with dynamic systems: variational filtering and generalized filtering. Variational filtering [18,36] uses the mean-field approximation (conditional independence between densities), whereas generalized filtering [37] does not. DEM [18] is a type of variational filtering that uses a Laplace approximation [38] (a fixed-form assumption for the conditional density of variables), whereas [36] uses ensemble dynamics to model the free form of the conditional densities. We focus on DEM for this work.

DEM is an FEP-based variational inference algorithm that models the brain’s inference process as a maximization of its free energy for state, input, parameter, and noise estimation from data. Although the method shows high similarity to variational inference [39], the key difference is in the use of generalized coordinates, which enables DEM to track the evolution of the trajectory of states instead of just the point estimates. This gives DEM the capability of gracefully handling colored noise, a feature that conventional point estimators such as the Kalman Filter (KF) lack. The modeling of noise color as analytic using generalized coordinates results in an improved state estimation under colored noise for LTI systems [2,3] and for nonlinear filtering [40], which directly improves the parameter estimation accuracy, making DEM a topic of interest [20]. This work directly impacts various sub-domains of the robotics community: input estimation for the industrial automation community working on fault detection systems, state estimation for the control systems community, parameter estimation for the system identification community, and hyperparameter estimation for the signal processing community.

With this paper, we aim to present DEM to the robotics audience as a robot learning algorithm for the blind system identification of LTI systems with colored noise. We elaborate on various components of DEM that are most relevant to the robotics community: (1) the derivation of the free energy objectives from Bayesian principles, (2) the modeling of colored noise using generalized coordinates, and (3) the simplification of update rules for LTI systems with colored noise. We reformulate DEM for LTI systems into a form that is widely used in the robotics domain and use this mathematical formulation to prove that the estimation steps of DEM have theoretical guarantees of convergence [41]. In our prior work, we have discussed the stability conditions of our DEM-based linear state and input observer design [2]. These convergence guarantees and stability criteria are essential for robot safety during operation and are of high relevance to the robotics community. Through extensive simulations on a range of random systems, we show that DEM is a competitive estimator when compared to other benchmarks in the control systems domain. The core contributions of the paper are:

- Reformulating DEM into an estimation algorithm for LTI systems with colored noise (Section 12).

- Proving that the estimator has theoretical guarantees of convergence for the estimation steps (Section 14).

- Demonstrating through extensive simulations that DEM outperforms the state-of-the-art system identification methods for parameter estimation under colored noise (Section 16).

2. Problem Statement

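Consider the linear plant dynamics given in Equation (1), where $\mathbf{A}$, $\mathbf{B}$ and $\mathbf{C}$ are constant system matrices, $\mathbf{x}$ is the hidden state, $\mathbf{v}$ is the input and $\mathbf{y}$ is the output:

$$\dot{\mathbf{x}} = \mathbf{A}\mathbf{x} + \mathbf{B}\mathbf{v} + \mathbf{w}, \qquad \mathbf{y} = \mathbf{C}\mathbf{x} + \mathbf{z}. \tag{1}$$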

Here, $\mathbf{w}$ and $\mathbf{z}$ represent the process and measurement noise, respectively. The quantities of the plant are denoted in boldface, whereas their estimates are denoted in non-boldface letters. The noises in this paper are generated through the convolution of white noise with a Gaussian kernel. The use of colored noise is motivated by the fact that in robotics, the unmodelled dynamics and the non-linearity errors can enter the plant dynamics through the noise terms, thereby violating the white noise assumption in practice [20].
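As a minimal sketch of this noise model, the snippet below smooths white Gaussian noise with a Gaussian kernel. The function name `colored_noise`, the truncation of the kernel at four kernel widths, and the unit-energy normalization are illustrative assumptions and are not taken from the paper.

```python
import numpy as np

def colored_noise(n_samples, dt, s, sigma=1.0, seed=0):
    """Return n_samples of white noise smoothed by a Gaussian kernel of width s > 0 (seconds)."""
    rng = np.random.default_rng(seed)
    half = int(np.ceil(4 * s / dt))            # assumed truncation: 4 kernel widths per side
    t = np.arange(-half, half + 1) * dt
    kernel = np.exp(-t**2 / (2 * s**2))
    kernel /= np.sqrt(np.sum(kernel**2))       # unit-energy kernel keeps the variance at sigma^2
    white = rng.normal(0.0, sigma, n_samples + 2 * half)
    # 'valid' mode discards the edges where the kernel does not fully overlap the data
    return np.convolve(white, kernel, mode="valid")

w = colored_noise(n_samples=5000, dt=0.1, s=0.5)
lag1 = np.corrcoef(w[:-1], w[1:])[0, 1]        # close to 1 for smooth (colored) noise
```

A lag-one correlation near zero would indicate white noise; the smoothing makes neighbouring samples strongly correlated, which is the property that violates the white noise assumption of conventional estimators.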

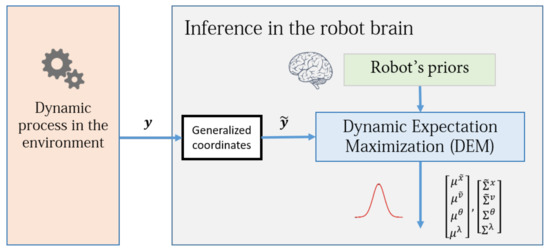

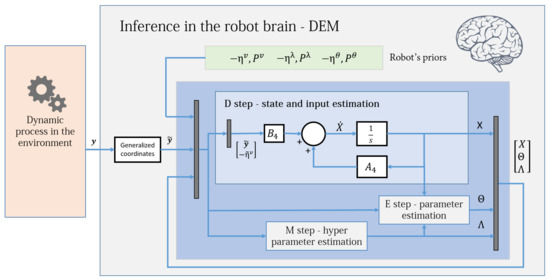

The goal of this paper is to reformulate DEM for an LTI system such that given the output of the system $\mathbf{y}$, the estimator computes the associated states x, inputs v, parameters $\theta$ containing the matrices A, B and C, hyperparameters $\lambda$ that model the noise precision $\Pi$, and the uncertainties of all its estimates $\Sigma$, with the help of the prior (learned) knowledge encoded in the robot brain, such that the estimate best predicts the data. The schematic of the proposed robot brain’s inference process is given in Figure 1.

Figure 1.

A simple block diagram of the robot brain’s inference process using DEM. It uses the measurement data generated from the environment (also called generative process). DEM enables the direct fusion of the prior information into the inference process. The concept of generalized coordinates will be detailed in Section 3.1.

3. Preliminaries

To reformulate DEM into an estimation algorithm for LTI systems, this section introduces the key concepts and terminologies that are familiar to the FEP audience.

3.1. Generative Model

The generative model (plant model) is the robot brain’s estimate of the generative process in the environment that generated the data. The robot brain infers this model via model evidencing from the measurement data. The key idea behind DEM to deal with colored noise is to model the trajectory of the time-varying components (states, for example) using generalized coordinates. The use of generalized coordinates is new to the control systems literature and is different from the familiar definition in classical mechanics. In mechanics, it is the minimum number of independent coordinates that define the configuration of a system, whereas in DEM, it is the vector defining the motion of a point using its higher derivatives. In DEM, the emphasis is on tracking the trajectories of states, inputs and outputs instead of their point estimates. The states are expressed in generalized coordinates using their higher-order derivatives, i.e., $\tilde{x} = [x \;\; x' \;\; x'' \;\; \ldots]^T$. The generalized state vector with an order of generalized motion of p will have $p+1$ terms, with the copy of the state vector as the first term, followed by its p derivatives. The variables in generalized coordinates are denoted by a tilde, and their components (higher derivatives) are denoted by primes. The evolution of states is written as:

$$\begin{aligned} x' &= Ax + Bv + w, & y &= Cx + z,\\ x'' &= Ax' + Bv' + w', & y' &= Cx' + z',\\ &\;\;\vdots & &\;\;\vdots \end{aligned} \tag{2}$$

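The colored noises are modeled such that the covariances of the noise derivatives $\tilde{w} = [w \;\; w' \;\; w'' \;\; \ldots]^T$ and $\tilde{z} = [z \;\; z' \;\; z'' \;\; \ldots]^T$ are well defined (to be explained in Section 3.3). The generative model representing the system is compactly written as:

$$\dot{\tilde{x}} = D^x\tilde{x} = \tilde{A}\tilde{x} + \tilde{B}\tilde{v} + \tilde{w}, \qquad \tilde{y} = \tilde{C}\tilde{x} + \tilde{z}, \tag{3}$$

where $D^x = \begin{bmatrix} 0 & 1 & & \\ & 0 & \ddots & \\ & & \ddots & 1 \\ & & & 0 \end{bmatrix}_{(p+1)\times(p+1)} \otimes I_{n\times n}$ performs the block derivative operation, equivalent to shifting up all components in generalized coordinates by one block. A similar definition holds for $D^v$ (appears later) with size $r(d+1) \times r(d+1)$, where $n$ and $r$ are the numbers of states and inputs, and p and d are the orders of generalized motion of states and inputs, respectively. Here, $\tilde{A} = I_{p+1} \otimes A$, $\tilde{B} = I_{p+1} \otimes B$, and $\tilde{C} = I_{p+1} \otimes C$, where ⊗ is the Kronecker tensor product.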
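The Kronecker construction described above can be sketched as follows. This is an illustration under stated assumptions: the helper name `generalized_model` is hypothetical, the block-diagonal matrices are assumed to be identity-Kronecker products of the system matrices, and the inputs are embedded with the same order p as the states (the text allows a separate order d).

```python
import numpy as np

def generalized_model(A, B, C, p):
    """Build the block shift operator D and block-diagonal A, B, C for embedding order p."""
    n = A.shape[0]
    I_p = np.eye(p + 1)
    shift = np.eye(p + 1, k=1)             # ones on the first superdiagonal
    D = np.kron(shift, np.eye(n))          # shifts every derivative block up by one
    A_t = np.kron(I_p, A)                  # same A acts on every order of motion
    B_t = np.kron(I_p, B)
    C_t = np.kron(I_p, C)
    return D, A_t, B_t, C_t

A = np.array([[0.0, 1.0], [-2.0, -3.0]])
B = np.array([[0.0], [1.0]])
C = np.array([[1.0, 0.0]])
D, A_t, B_t, C_t = generalized_model(A, B, C, p=2)

x_tilde = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])   # stacked [x, x', x'']
shifted = D @ x_tilde                                  # stacked [x', x'', 0]
```

Applying `D` to the stacked vector moves each derivative block one position up and fills the last block with zeros, which is the block derivative operation in matrix form.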

3.2. Parameters and Hyperparameters

To simplify the parameter estimation steps of DEM for LTI systems (Section 13.2) and to facilitate the convergence proof (Section 14), we introduce an alternative generative model which is the direct reformulation of Equation (3) given by:

where

Throughout the paper, $\theta$ represents the set of parameters, whereas $\lambda$ represents the hyperparameters that define the precision matrices (inverse covariance matrices) of the observation noise and the process noise. For noise modelling, we parametrize the noise precisions using an exponential relation with the hyperparameters, given by [18]:

$$\Pi^w(\lambda^w) = \exp(\lambda^w)\,\Omega^w, \qquad \Pi^z(\lambda^z) = \exp(\lambda^z)\,\Omega^z, \tag{6}$$

where $\Omega^w$ and $\Omega^z$ represent constant matrices encoding how different noises are correlated. Here $\Pi^w$ and $\Pi^z$ are the inverse covariances or precisions of the noises. This parameterization ensures that the noise precision matrices remain positive definite throughout the hyperparameter updates. We assume zero cross-correlation between w and z. We also assume that $\Pi^w$ and $\Pi^z$ are time-invariant.
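A short numerical sketch of the exponential parameterization follows. It assumes the scalar form in which the precision equals exp(λ) times a fixed positive definite matrix; the names `noise_precision`, `lam` and `Omega` are illustrative.

```python
import numpy as np

def noise_precision(lam, Omega):
    """Precision matrix under the assumed form exp(lam) * Omega."""
    return np.exp(lam) * Omega

Omega = np.array([[2.0, 0.5], [0.5, 1.0]])   # fixed correlation structure (positive definite)

# Any real-valued hyperparameter gives a positive definite precision,
# so an unconstrained gradient step on lam can never produce an invalid matrix.
min_eigs = [np.linalg.eigvalsh(noise_precision(lam, Omega)).min() for lam in (-5.0, 0.0, 3.0)]

# Under this form the derivative of the precision w.r.t. lam is the precision itself.
lam, h = 0.7, 1e-6
fd = (noise_precision(lam + h, Omega) - noise_precision(lam - h, Omega)) / (2 * h)
```

The design choice is that the optimization runs over an unconstrained scalar while the positivity constraint is enforced by construction.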

3.3. Colored Noise

The next step towards handling the colored noise is to embed the noise correlation between different components in the generalized noises $\tilde{w}$ and $\tilde{z}$ given in Equation (3). DEM uses generalized coordinates, which model the correlation between noise derivatives through the temporal precision matrix S (inverse of the covariance matrix). The generalized noise correlation is assumed to be due to a Gaussian filter, where S can be calculated as [18]:

$$S = \begin{bmatrix} 1 & 0 & -\frac{1}{2s^2} & \cdots \\ 0 & \frac{1}{2s^2} & 0 & \\ -\frac{1}{2s^2} & 0 & \frac{3}{4s^4} & \\ \vdots & & & \ddots \end{bmatrix}^{-1}_{(p+1)\times(p+1)}, \tag{7}$$

where $s^2$ (from the Gaussian kernel) denotes the noise smoothness level. While $s^2 = 0$ denotes white noise, nonzero $s^2$ denotes colored noise. The covariance between noise derivatives increases exponentially with the order of noise derivatives. Simulations show that derivatives above 6 can be neglected [18]. The generalized noise precision matrices are given by $\tilde{\Pi}^w = S \otimes \Pi^w$ and $\tilde{\Pi}^z = S \otimes \Pi^z$, where $\Pi^w$ and $\Pi^z$ are the inverse noise covariances.
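The temporal precision matrix can be sketched numerically as below. The sketch assumes that the noise autocorrelation is $\rho(t) = \exp(-t^2/(4s^2))$, which is what smoothing white noise with a Gaussian kernel of width $s$ produces, and that the covariance between the $i$-th and $j$-th noise derivatives is $(-1)^i \rho^{(i+j)}(0)$. The function name `temporal_precision` is illustrative.

```python
import numpy as np
from math import factorial

def temporal_precision(p, s):
    """Return (S, cov): temporal precision and covariance of noise derivatives up to order p."""
    def rho_deriv(m):
        # m-th derivative of exp(-t^2 / (4 s^2)) at t = 0; odd orders vanish
        if m % 2:
            return 0.0
        k = m // 2
        return (-1) ** k * factorial(2 * k) / (factorial(k) * (4 * s**2) ** k)

    n = p + 1
    cov = np.array([[(-1) ** i * rho_deriv(i + j) for j in range(n)] for i in range(n)])
    return np.linalg.inv(cov), cov

S, cov = temporal_precision(p=2, s=0.5)
# With s = 0.5 the covariance entries are 1, 1/(2 s^2) = 2 and 3/(4 s^4) = 12.

Pi_w = np.array([[4.0]])                 # illustrative scalar process-noise precision
Pi_w_tilde = np.kron(S, Pi_w)            # generalized precision via the Kronecker product
```

Because the entries grow with powers of $1/s^2$, the matrix becomes badly conditioned for high embedding orders or small $s$, which is one practical reason to keep the embedding order low.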

3.4. Generalized Motion of the Outputs and Noises

The generalized motion of output is practically expensive and inaccessible to robotics systems. Moreover, most sensors such as encoders operate with discrete measurements. Therefore, as a pre-processing step for estimation (refer to Figure 1), should be computed from discrete measurements. The goal is to first express the discrete measurements as a function of output derivatives and then invert the function to compute the derivatives from the discrete measurements. Given the p temporal derivatives at time t, the p output sequence surrounding can be approximated using Taylor series as follows [18]:

where , is the smallest integer function and has the size . Therefore, generalized motion of output at time t is:

Using embeds more temporal information about the plant into the data in the form of conditional trajectories, with the disadvantage of a time latency of in estimation. For robotic systems with high sampling rates, this latency in estimation is negligible [3,20]. The next section employs this generalized output along with the generative model for observer designs.
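The inversion of the Taylor expansion can be sketched as follows. The centering of the sample window on the middle sample, and the names `embedding_matrix` and `generalized_output`, are assumptions made for illustration; the paper's exact indexing convention may differ.

```python
import numpy as np
from math import factorial

def embedding_matrix(p, dt):
    """Rows: Taylor coefficients mapping derivatives at time t to p + 1 surrounding samples."""
    n = p + 1
    offsets = (np.arange(n) - p // 2) * dt          # sample times relative to t (assumed centering)
    return np.array([[tau**j / factorial(j) for j in range(n)] for tau in offsets])

def generalized_output(window, p, dt):
    """window: (p + 1, m) array of consecutive samples; returns derivative orders 0..p at t."""
    E = embedding_matrix(p, dt)
    return np.linalg.solve(E, window)               # invert the Taylor map

dt, p = 0.1, 2
t = (np.arange(p + 1) - p // 2) * dt
window = (t**2)[:, None]                            # samples of y(t) = t^2 around t = 0
y_tilde = generalized_output(window, p, dt)         # recovers y = 0, y' = 0, y'' = 2
```

For noisy measurements this inversion amplifies noise in the higher derivatives, so the embedding order is best chosen together with the sampling rate and the noise smoothness.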

3.5. Notations and Conventions

Throughout the paper, the superscript notation will be used to represent the quantity being referred to, and the subscript notation will be used to represent the derivative operation. For example, $\tilde{\Pi}^{w}_{\lambda}$ represents the derivative of the generalized precision of the process noise w with respect to the hyperparameters $\lambda$. The tilde operator is used to represent a quantity in its generalized coordinates, whereas the bar operator is used to represent the time integral of a quantity. All the probability distributions will be represented by the $p(\cdot)$ notation, whereas their expectations will be represented by the $\langle \cdot \rangle$ notation.

4. Free Energy Objectives

With the preliminaries in place, we can build up towards the complete DEM algorithm. Eventually, in Section 8 and Section 12, we will see that it is an optimization algorithm that finds the best estimates for states, inputs, parameters, and hyperparameters for given measurement data. This result is achieved by optimizing two objective functions, which are the core objectives under the entire Free Energy Principle: the free energy and the free action [1]. Here we derive and simplify these objectives.

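The derivation starts from the fundamentals of Variational Inference (VI) [39]. In VI, the posterior distribution of the parameters $\vartheta$, given the measurement y, is expressed as:

$$p(\vartheta \mid y) = \frac{p(\vartheta, y)}{p(y)} = \frac{p(\vartheta, y)}{\int p(\vartheta, y)\, d\vartheta}. \tag{10}$$

However, the marginalization over $\vartheta$ to calculate $p(y)$ is often intractable because the search space of $\vartheta$ is large. A widely used technique is to introduce a normalized variational distribution $q(\vartheta)$, known as the recognition density, which acts as an approximate representation of the posterior distribution $p(\vartheta \mid y)$. A common method used among variational Bayes algorithms is to minimize the Kullback–Leibler (KL) divergence between both the distributions, defined as:

$$KL\big(q(\vartheta)\,\|\,p(\vartheta \mid y)\big) = \langle \ln q(\vartheta) \rangle_{q(\vartheta)} - \langle \ln p(\vartheta \mid y) \rangle_{q(\vartheta)}, \tag{11}$$

where $\langle \cdot \rangle_{q(\vartheta)}$ represents the expectation over $q(\vartheta)$. Substituting Equation (10) in Equation (11) and using $\langle \ln p(y) \rangle_{q(\vartheta)} = \ln p(y)$ yields:

$$KL\big(q(\vartheta)\,\|\,p(\vartheta \mid y)\big) = \langle \ln q(\vartheta) \rangle_{q(\vartheta)} - \langle \ln p(\vartheta, y) \rangle_{q(\vartheta)} + \ln p(y). \tag{12}$$

The rearrangement of terms yields:

$$\ln p(y) = \underbrace{\langle U(\vartheta, y) \rangle_{q(\vartheta)} + H}_{F} + KL\big(q(\vartheta)\,\|\,p(\vartheta \mid y)\big), \tag{13}$$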

where $\ln p(y)$ is the log-evidence, $U(\vartheta, y) = \ln p(\vartheta, y)$ is the internal energy and $H = -\langle \ln q(\vartheta) \rangle_{q(\vartheta)}$ is the entropy of the recognition density. The free energy term $F$ in Equation (13) is defined as the sum of an energy term and its entropy. It acts as the lower bound on the log-evidence because the KL divergence term is always non-negative. The maximization of free energy minimizes the divergence term in Equation (13) because the log-evidence is independent of $q(\vartheta)$, thereby rendering the variational density a close approximation of $p(\vartheta \mid y)$. Therefore, the difficult evaluation of an intractable integral term in Equation (10) is converted into a much simpler optimization problem of maximizing the free energy. This reduces the problem of inference to a direct optimization of its free energy objectives and is the fundamental idea behind variational inference. The free energy term in Equation (13) is equivalent to the Evidence Lower Bound (ELBO) [39]. It can be simplified as:

However, when the parameter set to be estimated includes both time-variant and time-invariant parameters, the free action is used as the objective function to be maximized. The free action is defined as the time integral of the free energy and is given by:

$$\bar{F} = \int F \, dt = \int \big( \langle U \rangle_{q(\vartheta)} + H \big)\, dt, \tag{15}$$

where $F$ is called the variational free energy (VFE).
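The decomposition of the log-evidence into free energy plus KL divergence can be checked numerically on a toy problem with a discrete parameter. This is only a sanity check of the identity, not part of DEM itself; the function name `free_energy_terms` and the example numbers are illustrative.

```python
import numpy as np

def free_energy_terms(joint, q):
    """joint[i] = p(theta_i, y) for one fixed y; q[i] = recognition density over theta_i."""
    log_evidence = np.log(joint.sum())                 # ln p(y) by explicit marginalization
    posterior = joint / joint.sum()                    # exact posterior p(theta | y)
    internal_energy = np.sum(q * np.log(joint))        # expected internal energy under q
    entropy = -np.sum(q * np.log(q))                   # entropy of q
    F = internal_energy + entropy                      # free energy (ELBO)
    kl = np.sum(q * (np.log(q) - np.log(posterior)))   # KL(q || posterior)
    return log_evidence, F, kl

joint = np.array([0.10, 0.25, 0.05])
q = np.array([0.2, 0.5, 0.3])
log_evidence, F, kl = free_energy_terms(joint, q)
# log_evidence equals F + kl, and F is a lower bound because kl >= 0.
```

Setting `q` equal to the exact posterior drives the KL term to zero and makes the free energy equal to the log-evidence, which is the sense in which maximizing free energy performs inference.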

The next sections will deal with two main assumptions used in DEM to simplify the free energy objectives, namely the Laplace approximation and the mean-field assumption. The aim is to derive the simplified free energy objectives for an LTI system under these assumptions.

5. Laplace Approximation

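The first common approach to simplify the free energy objective is to assume the variational density to be Gaussian with variational parameters $\mu$ and $\Sigma$ as its mode and covariance, respectively [38]. Here, the inverse of $\Sigma$ (denoted by $\Pi$ and known as the conditional precision) represents the confidence in estimation. The recognition density takes the following form:

$$q(\vartheta) = \mathcal{N}(\vartheta;\, \mu, \Sigma) = \frac{1}{\sqrt{(2\pi)^{n}\,|\Sigma|}} \exp\!\Big(-\tfrac{1}{2}(\vartheta - \mu)^T \Sigma^{-1} (\vartheta - \mu)\Big), \tag{16}$$

where $n$ is the dimension of $\vartheta$.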

There are two main advantages with this approximation:

- It simplifies the internal energy expression $U(\vartheta, y)$,

- It facilitates an easy computation of the conditional precision $\Pi$ (derived in Section 7.2) as the negative curvature of the internal energy at its mode $\mu$.

Therefore, the main aim of this section is to simplify the expression for internal energy using the Laplace approximation, for an LTI system with its states, inputs, and outputs expressed in generalized coordinates.

The internal energy in Equation (13) can be expressed as the sum of log-likelihood and prior terms as:

$$U(\vartheta, y) = \ln p(\vartheta, y) = \ln p(y \mid \vartheta) + \ln p(\vartheta). \tag{17}$$

The parameter set includes two types of parameters:

- the states and inputs, which are time-varying and therefore expressed in generalized coordinates,

- the parameters and hyperparameters, which are time-invariant and not expressed in generalized coordinates.

Equation (17) can be simplified by assuming the conditional independence of and with and . This factorization separates the deterministic quantities from the stochastic ones, thereby providing a separation of temporal scales. This is one of the core ideas behind DEM, which will be detailed in Section 6. With the redefinition of , Equation (17) simplifies to:

DEM combines the new sensory information y coming from the environment with the robot brain’s priors (refer to Figure 1) in a Bayesian fashion, through the internal energy expression given in Equation (18). The next sections will deal with simplifying and its action by first modeling the probability distributions for the generative model and the priors.

5.1. Generative Model

The probability distribution , given in Equation (18), represents the generative model that predicts the output from the current parameter estimates. This probability can be assumed to be Gaussian-distributed, centered around the model’s output prediction (from Equation (3)), with the same uncertainty as that of the measurement noise . The distribution becomes:

Since the robot cannot directly measure in Equation (3), we track the motion of the generalized states through the approximation . The prediction for motion is with an uncertainty of . The Gaussian distribution becomes:

5.2. Prior Distributions

The remaining distributions and are the priors of the robot brain that can be transferred from prior (learned) experiences to the inference process. Similar to the previous section, a Gaussian prior is placed over the inputs as well, with mean and prior covariance , as:

The prior distribution of parameters is assumed to be a Gaussian-centred around the prior parameter value , with the prior covariance :

Similarly, a Gaussian prior is placed over the hyperparameters :

A higher value of and represents the robot’s higher confidence in its prior estimates , , and , respectively.
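How a Gaussian prior contributes to the internal energy can be sketched as below. The sketch assumes the standard log-Gaussian form with the constant $-\tfrac{n}{2}\ln 2\pi$ dropped, i.e. half the log-determinant of the prior precision minus half the precision-weighted squared error; the names `log_gaussian_prior`, `eta` and `P` are illustrative.

```python
import numpy as np

def log_gaussian_prior(value, eta, P):
    """Log of a Gaussian density with mean eta and precision P, dropping the 2*pi constant."""
    eps = value - eta                              # prediction error with respect to the prior
    _, logdet = np.linalg.slogdet(P)               # numerically stable log-determinant
    return 0.5 * logdet - 0.5 * eps @ P @ eps

eta = np.array([0.0, 0.0])
theta = np.array([0.5, -0.5])

# The same deviation from the prior mean is penalized more under a confident (high-precision) prior.
penalty_weak = -0.5 * (theta - eta) @ (1.0 * np.eye(2)) @ (theta - eta)
penalty_strong = -0.5 * (theta - eta) @ (100.0 * np.eye(2)) @ (theta - eta)
```

This is the mechanism by which a high prior precision keeps the estimate close to the prior mean, while a low prior precision lets the data dominate.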

5.3. Simplification of the Internal Energy Action

This section aims at using the distributions from the previous sections to simplify . The logarithm of a Gaussian prior after dropping constants takes the general form:

Therefore, substituting Equations (19)–(23) in Equation (18) and simplifying it using the prediction error terms and , after dropping constants, yields:

where , and . Here is the block diagonal operator. Grouping the internal energy terms of the temporal and nontemporal parameters yields:

where

Summing up the internal energy of all the temporal parameters over time yields the action of internal energy as follows:

It can be observed from Equation (28) that the robot’s priors enter the free energy objective through the internal energy term. Intuitively, this can be seen as the direct influence of the robot’s prior beliefs on the inference process through the mismatches in the robot’s predictions. These weighted prediction errors drive the robot’s desire to maintain an equilibrium between its internal model and the generative process in the environment. A large mismatch between the robot’s predictions and the data results in a large prediction error, which gets precision-weighted and enters the free energy objective through its internal energy.

6. Mean-Field Approximation

The second widely used assumption for the simplification of free energy objectives is the factorization of parameters into independent subdensities for the recognition density [18], given by:

This approximation assumes the conditional independence between subdensities (states and parameters, for example). The subdensities are assumed to interact with each other only through the mean-field quantities. The strong biological plausibility of this approximation in terms of biological brain’s inference process is delineated in [27]. The main advantage of this approximation is the simplification of in Equation (15). However, the mathematical proof for this simplification is missing from the DEM literature [18]. Therefore, this section aims to fill this gap by deriving these proofs by delineating all the intermediate assumptions.

6.1. Simplification of the Entropy Action

The entropy of the density in Equation (14), given by , can be simplified for all parameters as:

Substituting the mean-field assumption given in Equation (29) in Equation (30) and using the property of normalized recognition densities yields:

where , and . We place the Laplace approximation over the marginals of the recognition densities of all parameters as:

The recognition densities given in Equation (32) might look similar to the prior distributions given in Equations (21)–(23), mainly because both are Gaussian. However, the prior distributions are centered around the prior means with the prior covariances, whereas the recognition density is centered around the conditional mean with the conditional covariance of the i-th parameter set.

The action of entropy can be calculated by substituting Equation (32) in Equation (31) and summing up the entropy terms of time dependent parameters with respect to time. Upon dropping the constant terms, it simplifies to:

Equation (33) shows how the uncertainty in the robot’s inference directly enters the objective , through .

6.2. Mean-Field Terms

Given the simplified expressions for and , the next step towards finding in Equation (15) is to evaluate given by:

can be simplified using the second-degree Taylor series expansion near the mean as:

where we use the shorthand and . This approximation of the internal energy has nontrivial implications in terms of the biological brain’s decision-making process [21]. The second order approximation is justified because the Laplace and mean-field approximations entail an internal energy that is quadratic in , , and , as given in Equation (25), thereby reducing all its higher-order derivatives to zero. Moreover, the higher derivatives of with respect to , reduce to zero because of the assumptions made in Section 9. By differentiating Equation (25) at the mean , can be found to be all zeroes, which upon substitution in Equation (35) simplifies to:

Substituting it in Equation (34), upon simplification yields:

where

Since the parameters are assumed to be independent of each other, the covariance between them drops to zero, resulting in:

where

is defined as the mean-field term. Equation (38) shows that is the sum of internal energy action and the mean field terms. The simplification of is one of the main advantages of using mean-field approximation. However, this approximation can be relaxed to build Generalized Filtering [37,42], which is mainly relevant to nonlinear identification. It involves the modeling of parameters and hyperparameters in generalized coordinates (together with states) for online system identification. However, in this work, we take a simpler approach.

7. Simplified Free Energy Objectives

This section aims at simplifying the free energy objectives using the results from Section 5 and Section 6.

7.1. Simplification of Free Action

is simplified by substituting Equations (28), (33) and (38) into Equation (15), yielding:

We highlight three important terms in Equation (40): the combined prediction error of (generalized) outputs, inputs, and states , the log determinant of noise precision , and the mean field term . These terms will be used rigorously in the rest of the document.

7.2. Simplification of the Parameter Precisions

One of the main advantages of the Laplace approximation is the simple evaluation of the covariance associated with the parameter estimation. This is achieved by setting the gradient of the free action with respect to the individual parameter covariances to zero. The free action gradients with respect to the covariances of the parameters are given by:

The optimal parameter covariance is the covariance that maximizes the free action with zero gradients. Assuming that the parameter covariances are time-invariant, and equating the gradients to zero yields , resulting in:

From Equation (42), it is clear that the precision of parameters can be estimated just by evaluating the negative curvature of the internal energy at the conditional mode. These precision values denote the confidence of the estimator in the parameter estimate. Ideally, the parameter precision increases as the estimation process proceeds. Intuitively, the robot is more confident about its estimates (higher precision) when its predictions on the sensory data show the least variance.
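The statement that the conditional precision is the negative curvature of the internal energy can be illustrated on a toy static linear-Gaussian model. Everything in this sketch is an assumption made for illustration: the model $y = H\theta + z$, the names `internal_energy` and `negative_curvature`, and the finite-difference Hessian stand in for the paper's time-integrated LTI expressions.

```python
import numpy as np

def internal_energy(theta, y, H, Pi_z, eta, P_theta):
    """Toy internal energy: precision-weighted output and prior prediction errors."""
    e_y = y - H @ theta
    e_t = theta - eta
    return -0.5 * e_y @ Pi_z @ e_y - 0.5 * e_t @ P_theta @ e_t

def negative_curvature(f, theta, h=1e-3):
    """Negative Hessian of f at theta by central finite differences."""
    n = theta.size
    hess = np.zeros((n, n))
    for i in range(n):
        for j in range(n):
            ei = np.zeros(n); ei[i] = h
            ej = np.zeros(n); ej[j] = h
            hess[i, j] = (f(theta + ei + ej) - f(theta + ei - ej)
                          - f(theta - ei + ej) + f(theta - ei - ej)) / (4 * h * h)
    return -hess

rng = np.random.default_rng(0)
H = rng.normal(size=(5, 2))
y = rng.normal(size=5)
Pi_z, eta, P_theta = 2.0 * np.eye(5), np.zeros(2), np.diag([1.0, 3.0])

U = lambda th: internal_energy(th, y, H, Pi_z, eta, P_theta)
Pi_theta = negative_curvature(U, np.array([0.3, -0.7]))
# For this quadratic energy the result matches H^T Pi_z H + P_theta at any theta.
```

The closed form shows the two sources of confidence: informative data (large `H.T @ Pi_z @ H`) and a confident prior (large `P_theta`) both increase the conditional precision.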

7.3. Free Action at Optimal Precision

Substituting Equation (42) into Equation (39) at optimal precisions results in constant mean field terms. Therefore, the mean field terms in the free action given in Equation (40) reduce to a constant under the optimal precision given by Equation (42). Consequently, the free action at optimal precision for an LTI system reduces to:

Note that the free action is not a function of the latent variables () but the sufficient statistics () of the approximate posterior. For example, the weighted output prediction error term of is , where denotes the evaluation at . We regroup the time-dependent components into one variable , and use capital letters for the mean estimates of time-independent components (). Note that , and are part of the generative model and are the mean estimate of the components of plant dynamics.

7.4. Equivalence with the EM Algorithm

One of the most popular formulations of the Expectation Maximization (EM) algorithm for state space models uses Maximum Likelihood Estimation (MLE), where the objective function is the log-likelihood of the data, given by [43]:

Comparing the objective functions of EM and DEM given by Equation (44) and Equation (43), respectively, DEM is equivalent to EM when:

- the mean field terms are neglected,

- the generalized motion is not considered, and

- the robot’s priors on are not considered.

Therefore, DEM can be considered as a generalized version of the EM algorithm with additional capabilities.

The free action at optimal precision (Equation (43)) is the sum of the prediction errors (PE) and the entropy of generalized states, parameters, noise, and hyperparameters, and is independent of the mean-field terms. Although the mean-field terms turn into a constant at optimal precision, their gradients do not. This property is leveraged in Section 8 to account for the uncertainties in the parameter estimation during state estimation and vice versa, through the gradient of the mean-field terms.

8. Update Rules for Estimation

The Free Energy objectives (Section 4), together with the two simplifying approximations (Section 5 and Section 6), will be combined with an optimization procedure to form the ultimate DEM algorithm. The optimization procedure itself consists of the following two sets of update rules:

- a gradient ascent over its free action $\bar{F}$ for the time-invariant parameters $\theta$ and $\lambda$,

- a gradient ascent over its free energy F for the time-varying parameters X,

where F and $\bar{F}$ are related through $\bar{F} = \int F\,dt$. The core idea is that the time-varying parameters $\tilde{x}$ and $\tilde{v}$ can be estimated online from the robot’s instantaneous free energy, whereas the time-invariant parameters $\theta$ and $\lambda$ can be estimated from its free action after observing a sequence of data. Accordingly, the update rules for both the gradient ascents are given by

for the parameter update of the time invariant parameters and

for the time-varying parameters, where is the learning rate. The presence of term in Equation (46) differentiates the update rule from the general gradient ascent equation used in machine learning. This is to accommodate the boundary condition that when F is maximized, and . In other words, when the free energy is maximized, the motion of the generalized states becomes their generalized motion [44]. However, the update equations for the time-invariant parameters, and , do not require the term. Therefore, the update equations at time, parameter update step, and hyperparameter update step, after regrouping the (generalized) states and inputs as , are given by:

where . Note that the gradient update rules are written not on the latent variables ( and ), but on their mean estimates ( and ). Since the update rules should be implemented in the discrete domain for robotics applications, Equation (47) is discretized under local linearization with the corresponding Jacobians as , and . The (generalized) state and input update at time t, parameter update at step a and hyperparameter update at step b are given by:

Equation (48) shows that the update rules are dependent only on the gradients and curvatures of the free energy objectives.
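To make the role of gradients and curvatures concrete, the following minimal Python sketch applies a local-linearization step to a scalar objective. It assumes the step takes the standard form used in the DEM literature, namely an increment of (exp(J Δt) − 1) J⁻¹ f with flow f equal to the learning rate times the gradient and Jacobian J equal to the learning rate times the curvature. The quadratic objective, the values of `p`, `m`, `k`, and `dt`, and the function name are illustrative assumptions and are not the paper's free action.

```python
import math

def local_linearization_step(grad, curv, k=1.0, dt=1.0):
    """Scalar increment (exp(J*dt) - 1) / J * f with flow f = k*grad and Jacobian J = k*curv."""
    f = k * grad
    J = k * curv
    if abs(J) < 1e-12:
        return f * dt  # limit J -> 0 reduces to a plain Euler step
    return math.expm1(J * dt) / J * f

# Hypothetical concave objective F(theta) = -0.5 * p * (theta - m)**2
p, m = 4.0, 1.5
grad = lambda th: -p * (th - m)   # first derivative of F
curv = lambda th: -p              # second derivative of F (constant, negative)

theta = -3.0
for _ in range(5):
    theta += local_linearization_step(grad(theta), curv(theta), k=1.0, dt=2.0)
```

For a quadratic objective the step contracts the distance to the maximizer by exp(J Δt) at every iteration, so a large Δt behaves like a Newton step while a small Δt behaves like ordinary gradient ascent.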

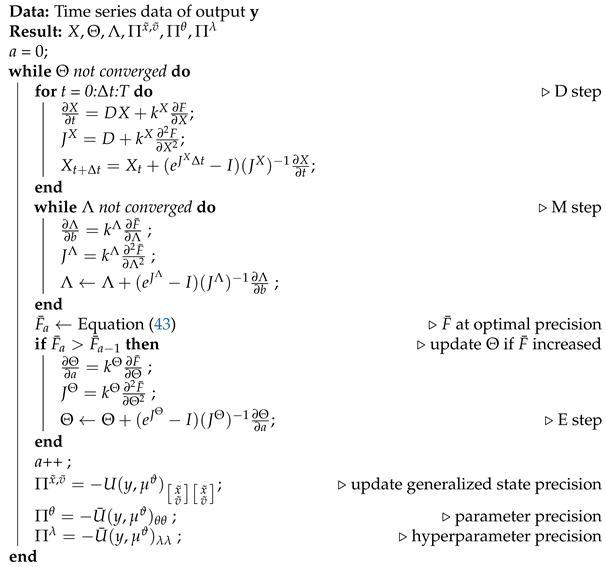

8.1. The DEM Algorithm

The DEM algorithm is an iterative model inversion algorithm that uses Equations (42) and (48) to perform estimation on causal dynamic systems. It can be expressed using three steps:

- D step: (generalized) state and input estimation,

- E step: parameter estimation,

- M step: noise hyperparameter estimation,

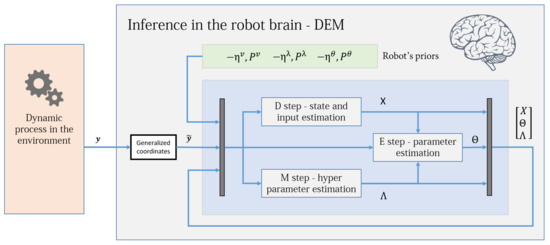

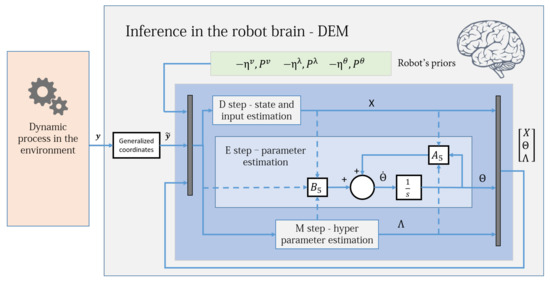

a nomenclature that is similar to the EM algorithm. Figure 2 shows an intuitive block diagram that demonstrates the inference process of DEM as a coupled dynamics between D, E, and M steps. The data from the environment and the robot brain’s prior distributions are used to infer the generative process. The pseudocode given in Algorithm 1 demonstrates how DEM performs estimation using only the gradient and curvatures of the free energy objectives. The next sections will focus on deriving the algebraic expressions for these quantities.

Figure 2.

The DEM algorithm is represented using three coupled steps: D, E, and M steps. The algorithm combines the data from the environment with the robot’s prior beliefs to infer the states, inputs, parameters and hyperparameters of the system. For each parameter update in the E step, the D step updates the (generalized) states and inputs for all time instances, and the M step iterates until hyperparameter convergence, as demonstrated in Algorithm 1. The dynamic process is the generative model in Section 3.1, the priors are the distributions given in Section 5.2 and the generalized coordinates block is defined in Section 3.4. Section 13 will elaborate on the D, E, and M blocks.
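The nesting of the three steps can be sketched as a small Python schedule. This is only a structural illustration: the callables `d_step`, `e_step`, and `m_step`, the scalar stand-ins for the parameters and hyperparameters, and the ordering of the steps inside one outer iteration are assumptions, since the caption fixes only that each E-step update is accompanied by a D-step sweep over all time instances and an M step that runs until convergence.

```python
def dem_loop(d_step, e_step, m_step, n_time, n_e_iters, m_tol=1e-6, max_m_iters=100):
    """Nested D/E/M schedule; the three callables are hypothetical placeholders."""
    theta, lam = 0.0, 0.0  # scalar stand-ins for parameters and hyperparameters
    for _ in range(n_e_iters):
        # D step: sweep the (generalized) state and input estimates over all time instances
        states = [d_step(t, theta, lam) for t in range(n_time)]
        # M step: repeat the hyperparameter update until it stops changing
        for _ in range(max_m_iters):
            new_lam = m_step(states, theta, lam)
            converged = abs(new_lam - lam) < m_tol
            lam = new_lam
            if converged:
                break
        # E step: one parameter update using the current states and hyperparameters
        theta = e_step(states, theta, lam)
    return theta, lam

# Toy placeholders so that the schedule can be executed end to end
theta_hat, lam_hat = dem_loop(
    d_step=lambda t, theta, lam: t * theta,
    e_step=lambda states, theta, lam: theta + 0.5 * (2.0 - theta),
    m_step=lambda states, theta, lam: lam + 0.5 * (1.0 - lam),
    n_time=5, n_e_iters=30)
```

In an actual implementation each callable would evaluate the gradients and curvatures of the free energy objectives derived in the following sections.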

8.2. Updated Equations for Estimation

The free energy gradients in Equation (47) can be evaluated by differentiating Equation (40) with . Substituting the resulting expression in Equation (47), upon simplification yields:

where is the gradient of the prediction error with respect to , is the gradient of the mean field term of with respect to , and is the gradient of the log-determinant of the precision matrix with respect to . From Equation (49), the Jacobians that are required for the updates given in Equation (48) can be evaluated as:

The Jacobians are employed in different loops corresponding to the D, E, and M steps in Algorithm 1.

| Algorithm 1: Dynamic Expectation Maximization |

8.3. Update Equation for Precision of Estimates

The uncertainty in estimation is represented by the inverse of precision matrices . Differentiating Equations (25) and (28) twice and substituting the results into Equation (42) yields, upon simplification:

The only unknowns in the DEM update equations given by Equations (49)–(51) are the gradients and curvatures of E, W, and G. Section 9, Section 10 and Section 11 will deal with evaluating the simplified algebraic expressions for these gradients and curvatures. Using these simplifications, Section 12 will proceed towards expanding Algorithm 1.

9. Gradients of (Log Determinant of) Precision

This section aims at evaluating the gradients of the log determinant of noise precision () that are required for the hyperparameter update rules of the DEM algorithm. The precision matrix for hyperparameter estimation is modeled as:

where S is the noise smoothness matrix given by Equation (7) and are the constant matrices given in Equation (6). Here the precision matrix is parametrized using , which is the only unknown in Equation (52). Therefore, the log determinant of precision and its gradients can be written as:

where is the element in . The gradients of precision in Equation (53) can be evaluated by differentiating Equation (52) as:

Substituting Equations (52) and (54) in Equation (53) yields:

where is the identity matrix of size , which is the size of the matrix. and are modeled to have an exponential relation with , so that any updates on would result in positive semi-definite precision matrices. However, this relation entails an infinitely differentiable precision matrix with respect to , increasing the computational complexity of the algorithm. Therefore, an approximation is made by forcefully setting , while maintaining the exponential relation between and , thereby ensuring that the optimization process proceeds along the correct gradients . Together with Equation (54), this approximation results in . This assumption has two direct consequences:

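- It simplifies the precision update rule for hyperparameters given in Equation (51).

- It reduces all the gradients and curvatures of the mean field terms of the hyperparameters to zero, as shown in Section 11.1.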
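The constant gradient of the log determinant can be checked numerically in a simplified setting. The Python sketch below assumes a single scalar hyperparameter that scales a fixed 2 × 2 positive definite matrix exponentially, which is a reduced stand-in for the block structure of Equation (52); the matrix `omega` and the function names are hypothetical. In this setting the log determinant is linear in the hyperparameter, so its gradient equals the matrix size and its curvature is zero.

```python
import math

def log_det_scaled(lam, omega):
    """ln|exp(lam) * omega| for a fixed 2x2 positive definite matrix omega."""
    s = math.exp(lam)
    a, b, c, d = (s * omega[0][0], s * omega[0][1], s * omega[1][0], s * omega[1][1])
    return math.log(a * d - b * c)

def finite_differences(lam, omega, h=1e-4):
    """Central-difference gradient and curvature of the log determinant in lam."""
    f_plus, f_0, f_minus = (log_det_scaled(lam + h, omega),
                            log_det_scaled(lam, omega),
                            log_det_scaled(lam - h, omega))
    return (f_plus - f_minus) / (2 * h), (f_plus - 2 * f_0 + f_minus) / h ** 2

omega = [[2.0, 0.5], [0.5, 1.0]]  # hypothetical constant matrix
checks = [finite_differences(lam, omega) for lam in (-1.0, 0.0, 3.0)]
```

Each entry of `checks` holds a gradient close to 2 (the matrix size) and a curvature close to 0, independent of the value of the hyperparameter.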

10. Gradients of Prediction Error

As opposed to the result above, the gradients of the prediction errors are not constant, as is shown in this section.

10.1. Gradients of Prediction Error along (Generalized) States

The error in prediction of (generalized) outputs, inputs and states is represented together by , which makes up the precision weighted prediction error defined by , where . The error and its gradient are:

The gradient of prediction error with respect to X can be simplified as:

where and . Since is linear in X, differentiating Equation (59) with respect to X yields a simple expression for curvature as:

10.2. Gradients of Prediction Error along Parameters

To evaluate the gradients of prediction error along the parameters , the reformulated definition of is used:

where M and N are given in Equation (5). This is to ensure that the variable can be separated out of the expression for such that it is linear in as follows:

Since is linear in , differentiating Equation (62) with respect to yields a simple expression for as:

10.3. Gradients of Prediction Error along Hyperparameters

The gradients of the prediction error along the hyperparameters are simpler and are given by , which upon using , gives:

In summary, Equations (59), (62) and (64) represent the gradients of the prediction error term, whereas Equations (60), (63) and (64) represent its curvatures. The next section will deal with evaluating the analytic expressions for all the gradients and curvatures of the mean field term.

11. Gradients of Mean Field Terms

This section aims to derive the analytic expressions for the mean field terms and their gradients: and .

11.1. Gradients of Mean Field Terms along Hyperparameters

In this section, we prove that all the gradients and curvatures of (namely and ) are zero. The mean field term for hyperparameters can be expressed as:

To compute the gradients of , we need the curvature of internal energy with respect to . This can be evaluated by first differentiating Equation (25) with respect to and evaluating it at , which yields:

where is given by Equation (55). Upon further differentiation, we get:

The assumption of applied to Equation (67) yields:

which contains only constants. Therefore, the assumption of reduces all the gradients and curvatures of mean field terms of to zeros:

Since the internal energy given in Equation (25) is quadratic in , and since , all the gradients and curvatures of the mean field term of with respect to itself are zeros:

11.2. Gradients of Mean Field Terms along Generalized States

The mean-field term of the combined generalized states X can be expressed as:

The curvature of internal energy with respect to X can be calculated by differentiating Equation (25) with respect to X twice, resulting in . Substituting it in Equation (71) upon differentiation with yields:

where the gradient of the mean field with respect to is given by . Similarly, the elements of the curvature matrix of the mean field term with respect to are given by:

The gradient and curvature of the mean field term of X with respect to can be evaluated as:

where is a component of . Here the curvature vanishes due to the assumption that .

11.3. Gradients of Mean Field Terms along Parameters

The mean-field term of the parameters can be expressed as

Differentiating Equation (75) with X and substituting Equation (61) in it yields the gradient as:

and the elements of the curvature matrix as:

Differentiating Equation (75) with respect to yields the gradient and curvature as:

Here, vanishes due to the assumption that .

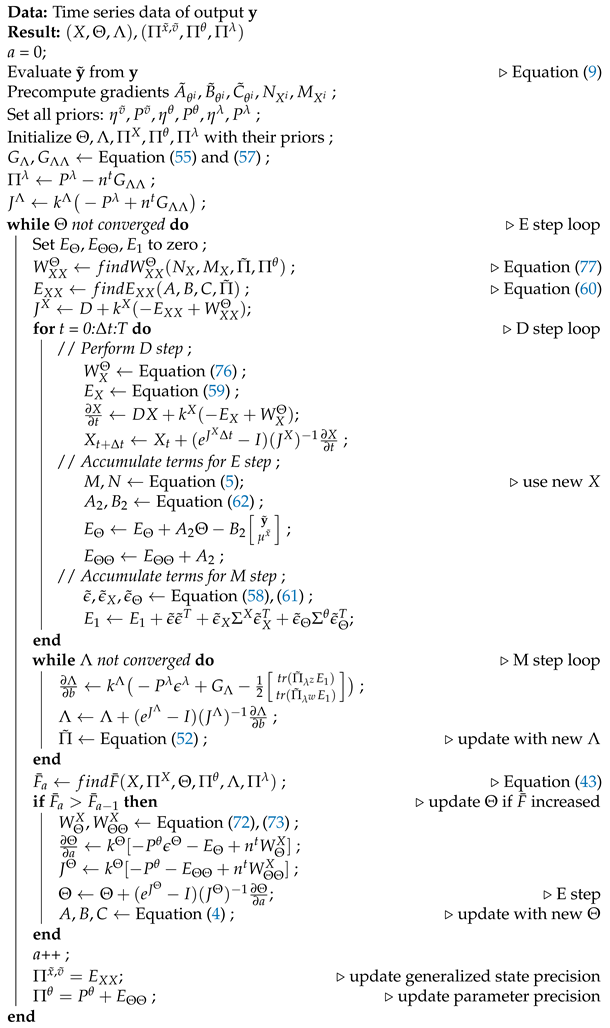

12. The Complete DEM Algorithm

By combining the gradients found in Section 9, Section 10, and Section 11 with Algorithm 1, we can finalize the full DEM algorithm so that it can iteratively compute the estimates and the associated precisions from data.

12.1. DEM Estimates

The main equations that are required to perform the update rules of DEM given in Equation (48) can be summarized as:

where are given by Equations (55), (57), (59), (60), (62)–(64), (72)–(74), (76)–(78), respectively. The hyperparameter update rule can be further simplified to reduce the computational complexity as:

where

Substituting Equation (57) into the expression for in Equation (79) yields:

which is independent of . This reduces the algorithm’s computational complexity, as can now be pre-computed.

12.2. Precision of Estimates

This section simplifies the precision for DEM’s estimates for an LTI system. The confidence in the estimate of (generalized) states and inputs can be simplified using Equations (51) and (60) as:

From Equation (82), the precisions for state and input estimation are and , respectively. The cross-correlation between the (generalized) states and inputs is given by . Since is independent of X, it can be updated outside the D step.

Combining the results of Equations (51) and (63) yields the precision of parameter estimates , which is independent of , as:

From Equations (51), (57) and (64), the precision of hyperparameter estimation is:

which is a constant and hence is never updated in the algorithm. In conclusion, the estimation using Equation (79), together with the precisions of these estimates given by Equations (82)–(84), completely defines the DEM algorithm for an LTI system with colored noise. The complete DEM algorithm is given in Algorithm 2.

| Algorithm 2: Dynamic Expectation Maximization |

13. Translation into Simplified Mathematical Form

Although the pseudocode derived in the previous sections is sufficient to replicate the DEM algorithm for an LTI system with colored noise, it is not sufficient to analyze DEM using the standard control systems tools for stability checks, convergence, etc. Therefore, in this section we translate the algorithm into a simplified mathematical form that control engineers can easily analyze. The following subsections aim at converting the DEM updates into a coupled linear system.

13.1. State and Input Estimation as a Linear Observer

This section deals with reformulating the D step of DEM for an LTI system as a (generalized) state and input observer. Substituting Equation (59) in Equation (79) with a learning rate of yields [2]:

We now aim to mathematically prove that the (generalized) state and input observer of DEM can be reduced into an augmented LTI system, for which an exact discretization can be performed. We proceed by simplifying the mean field terms in Equation (39) as:

where,

Since M and N can be obtained from linear transformation of X, and can be written as:

where and are matrices with elements 0 and 1. This leads to the mean field term being expressed as a linear transformation of X:

Substituting Equation (89) into Equation (85) simplifies the observer as:

The (generalized) state and input observer given by Equation (90) is of the form of an augmented LTI system. Therefore, an exact discretization can be used to solve it without using the second order gradient as given in Equation (48). This reduces the algorithm’s computational complexity because and for calculation are no longer necessary. Figure 3 shows the simplified control diagram of the observer. The stability condition of this observer (under known and ) and its similarity with the Kalman Filter is discussed in our prior work [2]. To evaluate , one could either use Equation (89) or Equation (76). Equation (90) is derived mainly for simplification and exact discretization.
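Exact discretization of a continuous-time LTI system under a piecewise-constant input can be sketched in a few lines of Python. The sketch uses the well-known identity that the exponential of the augmented matrix [[A, B], [0, 0]] Δt contains the discrete-time state matrix and input matrix in its top blocks. The matrices `A` and `B` below are hypothetical stand-ins, not the augmented observer matrices of Equation (90), and the small Taylor-series `expm` is written out only to keep the example dependency-free; a library routine such as `scipy.linalg.expm` would normally be used instead.

```python
import math

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

def expm(a, terms=20, squarings=10):
    """Matrix exponential via a scaled Taylor series followed by repeated squaring."""
    n = len(a)
    scaled = [[v / 2 ** squarings for v in row] for row in a]
    result = [[float(i == j) for j in range(n)] for i in range(n)]
    term = [row[:] for row in result]
    for k in range(1, terms + 1):
        term = [[v / k for v in row] for row in matmul(term, scaled)]
        result = [[r + t for r, t in zip(rr, tr)] for rr, tr in zip(result, term)]
    for _ in range(squarings):
        result = matmul(result, result)
    return result

def discretize(A, B, dt):
    """Exact zero-order-hold discretization; returns (Ad, Bd)."""
    n, m = len(A), len(B[0])
    aug = [[A[i][j] * dt for j in range(n)] + [B[i][j] * dt for j in range(m)]
           for i in range(n)]
    aug += [[0.0] * (n + m) for _ in range(m)]
    e = expm(aug)
    return [row[:n] for row in e[:n]], [row[n:] for row in e[:n]]

A = [[0.0, 1.0], [-2.0, -3.0]]  # hypothetical stable system (eigenvalues -1 and -2)
B = [[0.0], [1.0]]
dt = 0.1
Ad, Bd = discretize(A, B, dt)

# Closed-form values for this particular A and B, used only as a check
e1, e2 = math.exp(-dt), math.exp(-2 * dt)
expected_Ad = [[2 * e1 - e2, e1 - e2], [-2 * e1 + 2 * e2, -e1 + 2 * e2]]
expected_Bd = [[(1 - e1) - (1 - e2) / 2], [-(1 - e1) + (1 - e2)]]
```

Because the discretization is exact for a constant input over each sampling interval, the step size does not have to be small for accuracy, which is the practical benefit over a gradient-and-curvature update.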

Figure 3.

The DEM algorithm for an LTI system, with the D step simplified as an augmented LTI system given by Equation (90). The D-step block corresponds to the D-step loop in Algorithm 2 and operates at a different frequency from the E and M blocks.

13.2. Parameter Estimation—System Identification

This section aims to mathematically prove that the E step can be reduced to an augmented LTI system, for which an exact discretization can be performed. We proceed by first simplifying the parameter update equation given in Equation (79):

Grouping all using Equation (72) yields:

where

Here and are constant matrices with elements 0 and 1. Substituting from Equation (93) in Equation (92) yields:

Substituting Equations (62) and (94) in Equation (91), simplifies the parameter update equation to

where and are given in Equation (62). Equation (95) is a linear differential equation in for which an exact discretization can be computed. For each update in Algorithm 2, and are also updated, consequently updating and . Therefore, Equation (95) is equivalent to a linear time-varying system. Figure 4 shows the simplified parameter estimation step of the robot brain. To evaluate , one could use either Equation (72) or Equation (94). Equation (94) was derived mainly for the exact discretization and for the convergence proof in Section 14.

Figure 4.

The DEM algorithm for an LTI system, with the E step simplified as an augmented LTI system given by Equation (95). The E-step block corresponds to the E-step outer loop in Algorithm 2 and operates at a different frequency when compared to the D and M blocks. The dotted lines illustrate the flow of variables from other blocks and demonstrate the coupled nature of D, E, and M steps. This diagram is illustrative and should not be confused with a control diagram.

13.3. Hyperparameter Update

The update equation in Equation (80) can be simplified as:

where , and are constants that are independent of . Since Equation (96) is nonlinear in , an approximate discretization like the conventional Gauss–Newton update scheme given in Equation (48) should be used for the M step. In summary, the D and E steps follow an exact discretization, whereas the M step follows an approximate discretization.

14. Convergence Proof for Parameter and Hyperparameter Estimation

In robotics, it is important that learning algorithms provide a stable solution, especially when robot safety during operation is a concern. A proof of convergence is therefore important for the widespread use of DEM as a learning algorithm in robotics. However, the DEM literature lacks such a mathematical proof for the estimator. This section provides one for the parameter and hyperparameter estimation steps on LTI systems.

Since the update equation given by Equation (95) is a linear differential equation, proving that is sufficient to prove that converges to a stable solution. Substituting the expression for from Equation (62) to the in Equation (95), yields:

Since the prior precision matrix can be chosen to be positive definite, . It is straightforward to note from the expression for in Equation (62) that , because , , and . Therefore, the proof of convergence is complete if we prove that . Simplifying the expressions for and from Equations (92) and (93), after some nontrivial linear algebra [41], yields:

where

It is straightforward from Equation (98) that because , and . Combining all the results from this section, , and . This completes the proof that the parameter estimation step of DEM converges for an LTI system. Similarly, from Equation (81), proves the convergence of hyperparameter estimation step. For a detailed account of the linear algebra behind the proof of convergence, readers may refer to [41].
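The argument rests on a standard fact about linear differential equations, written out here for a generic system with a fixed matrix. The symbols $\mathcal{A}$, $b$, and $\theta^{*}$ are placeholders chosen for this illustration and are not the paper's notation; in the algorithm itself the matrices change between E-step iterations, so this shows only the fixed-matrix case.

```latex
\dot{\theta} = \mathcal{A}\,\theta + b, \qquad \mathcal{A} \prec 0
\;\;\Longrightarrow\;\;
\theta(t) = \theta^{*} + e^{\mathcal{A} t}\,\bigl(\theta(0) - \theta^{*}\bigr),
\qquad \theta^{*} = -\mathcal{A}^{-1} b,
\qquad \lim_{t \to \infty} \theta(t) = \theta^{*}.
```

Negative definiteness guarantees both that $\mathcal{A}$ is invertible and that $e^{\mathcal{A} t}$ decays to zero, so the estimate converges to $\theta^{*}$ from any initial value.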

15. A Demonstrative Example

This section aims to provide the proof of concept for DEM through simulation for the estimation of an LTI system with colored noise. Since the algorithm can find an infinite number of solutions for a black box estimation of , , and from , a black box estimation is not ideal as a demonstrative example. Therefore, we restrict this section to the joint estimation of x, A, B, , and from known and C.

15.1. Generative Model

A stable LTI system of the form Equation (3) was selected, with randomly generated parameters having

A Gaussian bump input signal of , centered around and sampled at until , was used. The colored noise was generated with a smoothness value of for the Gaussian kernel. The noise precisions were and , making . The embedding orders of the generalized motion of states and inputs were and , respectively.
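Colored noise of this kind can be generated by convolving white Gaussian noise with a Gaussian kernel. The Python sketch below is a minimal illustration under stated assumptions: the kernel shape exp(−t²/(2s²)), its unit-energy normalization, the truncation at four kernel widths, and the values of `s`, `dt`, and `sigma` are all hypothetical choices, not the settings used in the paper.

```python
import math
import random

def gaussian_kernel(s, dt, half_width=4.0):
    """Samples of exp(-t^2 / (2 s^2)) on a grid of spacing dt, normalized to unit energy."""
    n = int(half_width * s / dt)
    k = [math.exp(-((i * dt) ** 2) / (2 * s ** 2)) for i in range(-n, n + 1)]
    norm = math.sqrt(sum(v * v for v in k))
    return [v / norm for v in k]

def colored_noise(n_samples, s, dt, sigma=1.0, seed=0):
    """White Gaussian noise smoothed by a Gaussian kernel of width s."""
    rng = random.Random(seed)
    k = gaussian_kernel(s, dt)
    white = [rng.gauss(0.0, sigma) for _ in range(n_samples + len(k) - 1)]
    # Each output sample is a kernel-weighted sum of neighbouring white samples
    return [sum(k[j] * white[i + j] for j in range(len(k))) for i in range(n_samples)]

def lag1_autocorr(x):
    """Sample autocorrelation between consecutive samples."""
    mean = sum(x) / len(x)
    num = sum((a - mean) * (b - mean) for a, b in zip(x, x[1:]))
    den = sum((a - mean) ** 2 for a in x)
    return num / den

colored = colored_noise(2000, s=0.5, dt=0.1)
nearly_white = colored_noise(2000, s=0.01, dt=0.1)  # kernel collapses to a single tap
```

Comparing `lag1_autocorr(colored)` with `lag1_autocorr(nearly_white)` shows the effect of the smoothness value: a wider kernel makes consecutive samples strongly correlated, which is the property that the generalized coordinates are designed to exploit.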

15.2. Priors for Estimation

As discussed in Section 5.2, three prior distributions are necessary for the algorithm. Since the inputs are known, the input prior is initialized with the known input v, and a tight prior precision of is used to restrict any changes in v. Similarly, since the parameter C is known, the corresponding prior parameters in are initialized with C, with tight priors of . The prior parameters for the unknown A and B matrices are randomly sampled from the range of [−2,2], and a low prior precision of is used to encourage exploratory behavior. In summary, and . Since the hyperparameters are unknown, their priors were set to zero , with a prior precision of to encourage exploration.

15.3. Results of Estimation

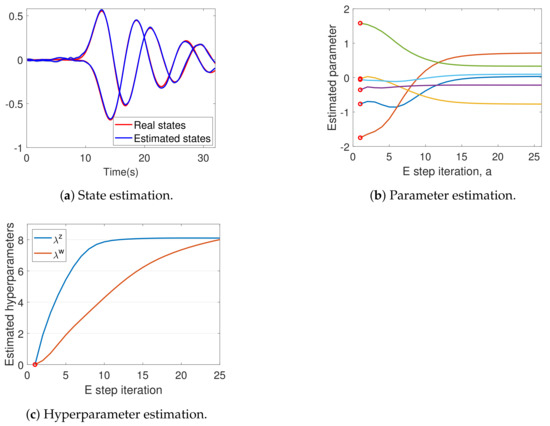

The data generated from the system in Section 15.1 was used to run the DEM algorithm given in Algorithm 2. Figure 5a demonstrates the successful state estimation of the algorithm. The results of parameter estimation (A and B) are shown in Figure 5b. The updates begin from randomly selected priors , marked by red circles, and finally converge. Table 1 shows that DEM’s estimates of A and B are close to the real values.

Figure 5.

The results of DEM’s estimation process. (a) The estimated states in blue closely resemble the real states in red. (b) The parameter estimation starts from randomly selected , marked by red circles, and converges with each E step iteration a. (c) Both the hyperparameters start from , and converge close to the correct value of 8.

Table 1.

DEM’s estimates of A and B converge to the real values.

This confirms that the parameter estimation can converge close to the real parameters, even when is randomly selected from the range that is double the size of the real parameter range . Figure 5c shows the successful hyperparameter convergence close to .

DEM’s confidence in its estimates increases with the E step iterations, as can be seen from Figure 6a, which demonstrates an increase in parameter precision . A similar trend can be observed for . However, the hyperparameter precision remains constant during the entire algorithm, as proved in Section 12.2. The key idea behind DEM’s inference is the maximization of free energy objectives. Read together, Figure 5 and Figure 6 demonstrate that DEM successfully estimates , and , with increasing confidence in its estimates as the estimation proceeds by maximizing from Equation (40). In summary, DEM can be used for the joint estimation of states, parameters and hyperparameters of an LTI system subjected to colored noise.

Figure 6.

Maximization of improves the confidence in estimates. (a) Parameter precision . (b) Free action .

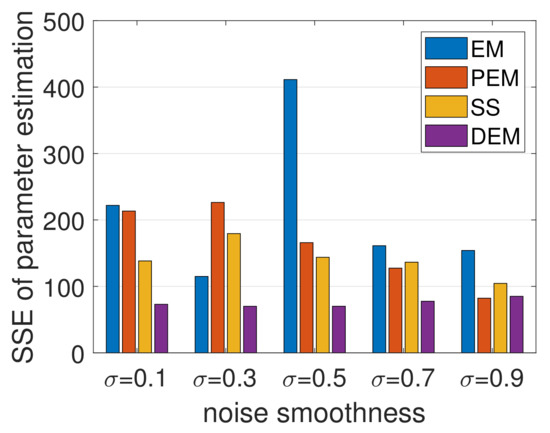

16. Benchmarking

This section deals with benchmarking DEM against the state-of-the-art parameter estimation methods such as Expectation Maximization (EM), Subspace method (SS), and Prediction Error Minimization (PEM), for black-box estimation (fully unknown x, and ).

16.1. Evaluation Metric for Parameter Estimation

For black-box identification, with completely unknown x, , and , there are infinitely many solutions with an accurate input–output mapping. However, for LTI systems, equivalent minimal realizations of the same system are related by a unique similarity transformation. We therefore check the validity of parameter estimation by transforming both the real and the estimated parameters into their companion canonical form and using the squared Euclidean distance between them as the sum of squared errors (SSE) in parameter estimation. This evaluation metric is used for parameter estimation in the next section.
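The metric can be sketched in Python for the simplest non-trivial case. The sketch assumes a 2-state single-input single-output system, for which the free entries of a companion canonical form are exactly the transfer-function coefficients, so those coefficients are computed directly from (A, B, C) instead of constructing the transformation. The system size, the function names, and the example matrices are assumptions made for illustration; the paper's exact canonical-form convention may differ.

```python
def invariants_2x2(A, B, C):
    """Transfer-function coefficients of a 2-state SISO system.

    G(s) = (b1*s + b0) / (s^2 + a1*s + a0); these four numbers are the
    free entries of a companion canonical form.
    """
    trace = A[0][0] + A[1][1]
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
    a1, a0 = -trace, det
    AB = [A[0][0] * B[0] + A[0][1] * B[1], A[1][0] * B[0] + A[1][1] * B[1]]
    h1 = C[0] * B[0] + C[1] * B[1]      # first Markov parameter C B
    h2 = C[0] * AB[0] + C[1] * AB[1]    # second Markov parameter C A B
    b1, b0 = h1, h2 + a1 * h1
    return [a1, a0, b1, b0]

def sse(true_sys, est_sys):
    """Squared Euclidean distance between the canonical-form entries of two systems."""
    return sum((p - q) ** 2
               for p, q in zip(invariants_2x2(*true_sys), invariants_2x2(*est_sys)))

true_sys = ([[0.0, 1.0], [-2.0, -3.0]], [0.0, 1.0], [1.0, 0.0])
# Same system after the similarity transformation T = [[1, 1], [0, 1]]
same_sys = ([[-2.0, 0.0], [-2.0, -1.0]], [1.0, 1.0], [1.0, -1.0])
# A genuinely different system: one entry of A is perturbed
other_sys = ([[0.0, 1.0], [-2.0, -2.5]], [0.0, 1.0], [1.0, 0.0])
```

Here `sse(true_sys, same_sys)` vanishes even though the matrices look different, whereas `sse(true_sys, other_sys)` is positive, which is why a canonical form is needed before the parameter error is measured.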

16.2. Simulation Setup

A total of 500 (5 × 100) different randomly generated stable systems were used with five different noise smoothness values for parameter estimation. All systems were selected with the same number of parameters ( and ), with each , while ensuring that the A matrix is stable. All the noises were generated with the precision of , with the embedding orders of states and inputs as and . A Gaussian bump of was used as the input signal with and . The prior parameter was randomly initialized such that all with a tight prior precision of . Both the hyperparameter priors were set to zero, with a prior precision of .
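One simple way to obtain random stable systems is rejection sampling, sketched below in Python. The 2 × 2 size, the sampling range of [−1, 1], the seed, and the function name are assumptions for illustration; the text does not state how stability was enforced.

```python
import random

def random_stable_A(rng, low=-1.0, high=1.0, max_tries=10000):
    """Rejection-sample a 2x2 matrix whose eigenvalues have negative real parts."""
    for _ in range(max_tries):
        A = [[rng.uniform(low, high) for _ in range(2)] for _ in range(2)]
        trace = A[0][0] + A[1][1]
        det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
        # For a 2x2 matrix, trace < 0 and det > 0 hold exactly when both
        # eigenvalues lie in the open left half-plane
        if trace < 0 and det > 0:
            return A
    raise RuntimeError("no stable matrix found")

rng = random.Random(0)
systems = [random_stable_A(rng) for _ in range(100)]
```

For larger systems the trace and determinant test would be replaced by an eigenvalue computation, but the accept-or-resample structure stays the same.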

The System Identification toolbox from MATLAB was used for the SS () and PEM methods. The solution of SS was used to initialize PEM. An implementation of the EM algorithm for state space models was written in MATLAB based on [45]. is inherently designed to handle colored noise, whereas the implemented EM algorithm is not. The code for the DEM algorithm will be openly available at: https://github.com/ajitham123/DEM_LTI.

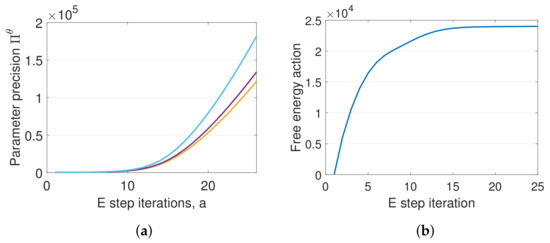

16.3. Results

The results shown in Figure 7 demonstrate the superior performance of DEM in comparison with EM, PEM, and SS, with minimum SSE during parameter estimation across different noise smoothness. Additionally, EM and PEM exploded occasionally ( times), resulting in outliers in SSE, which were removed for better visualization. DEM demonstrated a consistent performance without generating any such outliers or exploding solutions, which could be explained by DEM’s convergence guarantees for parameter estimation under colored noise [41], as proved in Section 14. In summary, DEM is a competitive parameter estimator for LTI systems with colored noise.

Figure 7.

The sum of all SSE of for 100 random systems each, for 5 different noise smoothnesses. DEM outperforms EM, PEM, and SS with minimum SSE for parameter estimation under colored noise.

17. Discussion

The quest for a brain-inspired learning algorithm for robots has culminated in the free energy principle, which postulates the biological brain’s perception as an optimization over its free energy objectives. FEP is of prime importance to robotics because its use of generalized coordinates enables it to gracefully handle colored noise. Colored noise appears in real robotic systems through the unmodeled dynamics and the non-linearity errors in the model, thereby providing an advantage for DEM during estimation when compared to other estimators. Examples include the unmodeled wind disturbances acting on an unmanned aerial vehicle in flight, or the non-linearity errors in the dynamic model of a robotic manipulator arm involved in a pick-and-place operation. The scope of this work spans the blind system identification of such linear dynamic systems with colored noise.

The fundamental difference between this work and the prior work is in the reformulation of DEM for an LTI system. While DEM from computational neuroscience focuses on emulating the biological brain’s perception through the hierarchical abstraction of a number of non-linear dynamic systems that interact with each other, our work focuses on reducing this method into an algorithm for the system identification of an LTI system with colored noise, which is a well-known problem in robotics. This reformulation enables the standard analysis for convergence, stability and unbiased estimation, which is an essential analysis in practical robotics. It also enables DEM to be compared with other existing estimation algorithms in a control systems domain. The widespread use of DEM in robotics necessitates these mathematical analyses, especially when concerning the stable and safe operation of robots in industry and during human–robot interaction.

An algorithm with proven convergence for estimation is preferred for safe robotic applications. Therefore, one of the main contributions of this work was the reduction of the estimation algorithm into a coupled augmented system to prove the convergence of the parameter and hyperparameter estimation steps. This work also demonstrated the successful applicability of DEM for the estimation of a randomly selected LTI system. Furthermore, we showed through rigorous simulations on a wide range of randomly generated LTI systems that DEM is a competitive algorithm for system identification under colored noise, thereby widening the scope of DEM to a large number of LTI systems in robotics.

One of the main drawbacks of the algorithm is its higher computational complexity when compared to the estimation algorithms that do not keep track of the trajectory of states. Therefore, future work can focus on the online estimation using DEM with reduced computational load. Future work can also focus on extending this algorithm for linear time varying systems to deal with robots with changing system parameters while in operation—a delivery drone dropping deliveries in mid-flight, for example. From a practical robotics point of view, DEM’s parameter estimation module can be directly applied to a wide range of robots such as quadrotors, robotic arms, wheeled robots, etc. for black-box system identification, the input estimation module can be employed for fault-detection systems, and the hyperparameter estimation module can be used for online noise estimation for robust control. DEM can also be extended with a control loop for active inference to perform simultaneous perception and action on robots. This would result in the development of cognitive robots that can learn the generative model in the environment by interacting with it and actively seeking new information (active learning) for uncertainty resolution. This would influence multiple domains in robotics such as human–robot interaction for task learning, swarm robotics for collective learning and distributed control, informative path planning of aerial robots for environment monitoring, etc. The development of such brain-inspired autonomous agents sits at the core of cognitive robotics research. In summary, DEM has a huge potential to be the bioinspired learning algorithm for future robots.

18. Conclusions

The free energy principle from neuroscience has great potential to be one of the most prominent frameworks for learning and control for autonomous systems in the future. Therefore, this paper converted the FEP-based inference scheme called DEM into a joint state, input, parameter, and hyperparameter estimation algorithm for LTI systems with colored noise. We derived the mathematical framework of DEM for LTI systems to prove that the resulting estimator is a combination of linear estimators that are coupled. We provided the proof of convergence for the estimation steps. Through rigorous simulations on randomly generated linear systems with colored noise at varying smoothness levels, we demonstrated that the DEM algorithm outperforms EM, PEM, and SS methods for parameter estimation with minimal estimation error. In light of the potential for DEM to solve the parameter estimation problem, future research will aim at applying DEM to a quadcopter flying in wind.

Author Contributions

A.A.M.: Conceptualization, methodology, software, validation, formal analysis, writing—original draft preparation, visualization. M.W.: Conceptualization, writing—review and editing, supervision. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Data Availability Statement

The MATLAB code for the DEM algorithm will be openly available at: https://github.com/ajitham123/DEM_LTI.

Acknowledgments

The authors would like to thank Karl Friston for the thought-provoking discussions on the use of generalized coordinates within the FEP framework.

Conflicts of Interest

The authors declare no conflict of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
| --- | --- |
| LTI system | Linear time invariant system |
| DEM | Dynamic Expectation Maximization |
| FEP | Free energy principle |
| KF | Kalman Filter |
| KL divergence | Kullback–Leibler divergence |

References

- Friston, K. The free-energy principle: A unified brain theory? Nat. Rev. Neurosci. 2010, 11, 127–138. [Google Scholar] [CrossRef] [PubMed]

- Anil Meera, A.; Wisse, M. Free Energy Principle Based State and Input Observer Design for Linear Systems with Colored Noise. In Proceedings of the 2020 American Control Conference (ACC), Denver, CO, USA, 1–3 July 2020; pp. 5052–5058. [Google Scholar]

- Bos, F.; Anil Meera, A.; Benders, D.; Wisse, M. Free Energy Principle for State and Input Estimation of a Quadcopter Flying in Wind. arXiv 2021, arXiv:2109.12052. [Google Scholar]

- Pezzato, C.; Ferrari, R.; Corbato, C.H. A novel adaptive controller for robot manipulators based on active inference. IEEE Robot. Autom. Lett. 2020, 5, 2973–2980. [Google Scholar] [CrossRef]

- Pezzato, C.; Hernandez, C.; Wisse, M. Active Inference and Behavior Trees for Reactive Action Planning and Execution in Robotics. arXiv 2020, arXiv:2011.09756. [Google Scholar]

- Baioumy, M.; Duckworth, P.; Lacerda, B.; Hawes, N. Active inference for integrated state-estimation, control, and learning. arXiv 2020, arXiv:2005.05894. [Google Scholar]

- Oliver, G.; Lanillos, P.; Cheng, G. Active inference body perception and action for humanoid robots. arXiv 2019, arXiv:1906.03022. [Google Scholar]

- Çatal, O.; Verbelen, T.; Van de Maele, T.; Dhoedt, B.; Safron, A. Robot navigation as hierarchical active inference. Neural Netw. 2021, 142, 192–204. [Google Scholar] [CrossRef]

- Lennart, L. System Identification: Theory for the User; PTR Prentice Hall: Upper Saddle River, NJ, USA, 1999; Volume 28. [Google Scholar]

- Zhang, L.Q.; Cichocki, A.; Amari, S. Kalman filter and state-space approach to blind deconvolution. In Neural Networks for Signal Processing X, Proceedings of the 2000 IEEE Signal Processing Society Workshop, Sydney, Australia, 11–13 December 2000; IEEE: Piscataway, NJ, USA, 2000; pp. 425–434. [Google Scholar]

- Han, R.; Bohn, C.; Bauer, G. Blind identification of state-space models in physical coordinates. arXiv 2021, arXiv:2108.08498. [Google Scholar]

- Abed-Meraim, K.; Qiu, W.; Hua, Y. Blind system identification. Proc. IEEE 1997, 85, 1310–1322. [Google Scholar] [CrossRef]

- Zhang, Y. Unbiased identification of a class of multi-input single-output systems with correlated disturbances using bias compensation methods. Math. Comput. Model. 2011, 53, 1810–1819. [Google Scholar] [CrossRef]

- Liu, X.; Lu, J. Least squares based iterative identification for a class of multirate systems. Automatica 2010, 46, 549–554. [Google Scholar] [CrossRef]

- Zheng, W.X. On a least-squares-based algorithm for identification of stochastic linear systems. IEEE Trans. Signal Process. 1998, 46, 1631–1638. [Google Scholar] [CrossRef]

- Zhang, Y.; Cui, G. Bias compensation methods for stochastic systems with colored noise. Appl. Math. Model. 2011, 35, 1709–1716. [Google Scholar] [CrossRef][Green Version]

- Cui, T.; Chen, F.; Ding, F.; Sheng, J. Combined estimation of the parameters and states for a multivariable state-space system in presence of colored noise. Int. J. Adapt. Control. Signal Process. 2020, 34, 590–613. [Google Scholar] [CrossRef]

- Friston, K.J.; Trujillo-Barreto, N.; Daunizeau, J. DEM: A variational treatment of dynamic systems. Neuroimage 2008, 41, 849–885.

- Van de Laar, T.; Özçelikkale, A.; Wymeersch, H. Application of the free energy principle to estimation and control. arXiv 2019, arXiv:1910.09823.

- Anil Meera, A.; Wisse, M. A Brain Inspired Learning Algorithm for the Perception of a Quadrotor in Wind. arXiv 2021, arXiv:2109.11971.

- Buckley, C.L.; Kim, C.S.; McGregor, S.; Seth, A.K. The free energy principle for action and perception: A mathematical review. J. Math. Psychol. 2017, 81, 55–79.

- Hohwy, J. The Predictive Mind; Oxford University Press: Oxford, UK, 2013.

- Friston, K. The free-energy principle: A rough guide to the brain? Trends Cogn. Sci. 2009, 13, 293–301.

- Friston, K.J.; Daunizeau, J.; Kilner, J.; Kiebel, S.J. Action and behavior: A free-energy formulation. Biol. Cybern. 2010, 102, 227–260.

- Carhart-Harris, R.L.; Friston, K.J. The default-mode, ego-functions and free-energy: A neurobiological account of Freudian ideas. Brain 2010, 133, 1265–1283.

- Friston, K.J.; Daunizeau, J.; Kiebel, S.J. Reinforcement learning or active inference? PLoS ONE 2009, 4, e6421.

- Friston, K. Hierarchical models in the brain. PLoS Comput. Biol. 2008, 4, e1000211.

- Baltieri, M.; Buckley, C.L. PID control as a process of active inference with linear generative models. Entropy 2019, 21, 257.

- Veissière, S.P.; Constant, A.; Ramstead, M.J.; Friston, K.J.; Kirmayer, L.J. Thinking through other minds: A variational approach to cognition and culture. Behav. Brain Sci. 2020, 43, e90.

- Kaufmann, R.; Gupta, P.; Taylor, J. An active inference model of collective intelligence. arXiv 2021, arXiv:2104.01066.

- Miu, E.; Gulley, N.; Laland, K.N.; Rendell, L. Innovation and cumulative culture through tweaks and leaps in online programming contests. Nat. Commun. 2018, 9, 1–8.

- Friston, K.; Kiebel, S. Predictive coding under the free-energy principle. Philos. Trans. R. Soc. Biol. Sci. 2009, 364, 1211–1221.

- Parr, T.; Markovic, D.; Kiebel, S.J.; Friston, K.J. Neuronal message passing using Mean-field, Bethe, and Marginal approximations. Sci. Rep. 2019, 9, 1–18.

- Van de Laar, T.W.; de Vries, B. Simulating active inference processes by message passing. Front. Robot. AI 2019, 6, 20.

- Friston, K.; FitzGerald, T.; Rigoli, F.; Schwartenbeck, P.; Pezzulo, G. Active inference and learning. Neurosci. Biobehav. Rev. 2016, 68, 862–879.

- Friston, K.J. Variational filtering. NeuroImage 2008, 41, 747–766.

- Friston, K.; Stephan, K.; Li, B.; Daunizeau, J. Generalised filtering. Math. Probl. Eng. 2010, 2010.

- Friston, K.; Mattout, J.; Trujillo-Barreto, N.; Ashburner, J.; Penny, W. Variational free energy and the Laplace approximation. Neuroimage 2007, 34, 220–234.

- Blei, D.M.; Kucukelbir, A.; McAuliffe, J.D. Variational inference: A review for statisticians. J. Am. Stat. Assoc. 2017, 112, 859–877.

- Balaji, B.; Friston, K. Bayesian state estimation using generalized coordinates. In Proceedings of the Signal Processing, Sensor Fusion, and Target Recognition XX, Orlando, FL, USA, 5 May 2011; Volume 8050, p. 80501Y.

- Anil Meera, A.; Wisse, M. On the convergence of DEM’s linear parameter estimator. In International Workshop on Active Inference; Springer: Berlin/Heidelberg, Germany, 2021; accepted.

- Li, B.; Daunizeau, J.; Stephan, K.E.; Penny, W.; Hu, D.; Friston, K. Generalised filtering and stochastic DCM for fMRI. Neuroimage 2011, 58, 442–457.

- Mader, W.; Linke, Y.; Mader, M.; Sommerlade, L.; Timmer, J.; Schelter, B. A numerically efficient implementation of the expectation maximization algorithm for state space models. Appl. Math. Comput. 2014, 241, 222–232.

- Friston, K. A free energy principle for a particular physics. arXiv 2019, arXiv:1906.10184.

- Cara, F.J.; Juan, J.; Alarcón, E. Using the EM algorithm to estimate the state space model for OMAX. Practice 2014, 1000, 3.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).