Teleoperation of Dual-Arm Manipulators via VR Interfaces: A Framework Integrating Simulation and Real-World Control

Abstract

1. Introduction

- A unified VR teleoperation architecture that connects both the Webots simulator and a physical dual-arm robot to the same immersive Unreal Engine 5.6.1 interface.

- A modular communication design based on a middleware abstraction (static library) that enables replacing the robotics middleware (e.g., RoboComp/Ice, ROS2) without modifying the Unreal Engine application.

- A real-time digital-twin workflow for dual-arm end-effector control using VR motion controllers, including continuous bidirectional synchronization and a safety-oriented dead-man switch mechanism.

- A scalable 3D perception visualization pipeline that fuses multi-sensor point clouds and renders more than 1 M colored points in VR using Unreal Engine's GPU-based Niagara system while maintaining interactive frame rates.

- An experimental validation comprising (i) technical benchmarking of point-cloud rendering performance and (ii) a user study on six bimanual manipulation tasks, reporting success rates and collision counts.

2. Related Works

3. System Architecture and Implementation

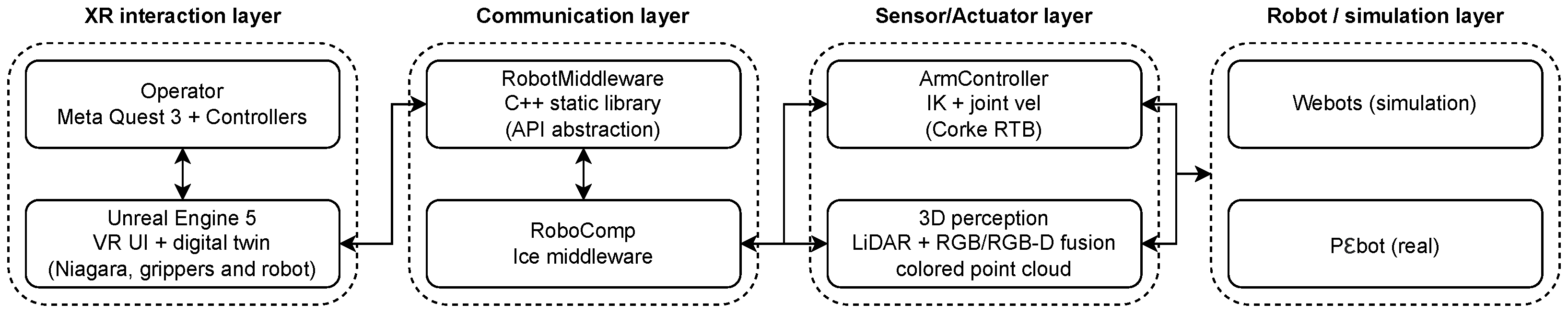

3.1. System Overview

- XR Interaction Layer: The operator issues bimanual end-effector commands using a Meta Quest 3 headset and its tracked controllers, while Unreal Engine renders the immersive interface. This interface includes the robot’s digital twin, the operator’s phantom grippers, and a dense colored point cloud rendered in real time via Niagara, giving the user an intuitive way to command the robot.

- Communication Layer: Unreal Engine exchanges target end-effector poses and feedback (e.g., robot state information) with RobotMiddleware, a dedicated C++ abstraction layer. This middleware bridges the XR front end and the robotics back end (e.g., RoboComp/Ice), so the user interface does not depend on the specific middleware used to reach the robot.

- Actuator/Sensor Layer: This layer comprises two main components: the ArmController, which computes joint velocity commands via inverse kinematics (IK) and gates end-effector motion with a dead-man switch safety mechanism, and the multi-sensor perception system, which fuses sensor data into a dense colored point cloud.

- Robot/Simulator Layer: The final layer consists of either the physical robot (P3bot) or the Webots simulator, which receives the commands from the Actuator/Sensor Layer and executes them to perform the desired manipulation tasks.
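The backend-swapping idea behind RobotMiddleware can be illustrated with a minimal Python sketch. The real component is a C++ static library; every class and method name below (`Pose`, `RobotState`, `send_target_poses`, `get_robot_state`, `LoopbackMiddleware`, `TeleopFrontEnd`) is hypothetical and is not that library's API. The point is only that the front end depends on an abstract interface, so a RoboComp/Ice or ROS 2 adapter could replace the loopback backend without touching front-end code.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Pose:
    """Hypothetical end-effector pose: position (m) and quaternion (w, x, y, z)."""
    position: tuple
    orientation: tuple = (1.0, 0.0, 0.0, 0.0)


@dataclass
class RobotState:
    """Hypothetical feedback message returned to the XR front end."""
    joint_positions: dict = field(default_factory=dict)
    connected: bool = False


class RobotMiddleware(ABC):
    """Backend-agnostic interface that the front end talks to (illustrative only)."""

    @abstractmethod
    def send_target_poses(self, left: Pose, right: Pose) -> None: ...

    @abstractmethod
    def get_robot_state(self) -> RobotState: ...


class LoopbackMiddleware(RobotMiddleware):
    """Test backend that only records commands. A RoboComp/Ice or ROS 2 adapter
    would implement the same two methods using that middleware's own API."""

    def __init__(self) -> None:
        self.sent = []

    def send_target_poses(self, left: Pose, right: Pose) -> None:
        self.sent.append((left, right))

    def get_robot_state(self) -> RobotState:
        return RobotState(connected=True)


class TeleopFrontEnd:
    """Stand-in for the Unreal Engine application: it sees only the interface."""

    def __init__(self, middleware: RobotMiddleware) -> None:
        self.middleware = middleware

    def tick(self, left: Pose, right: Pose) -> RobotState:
        self.middleware.send_target_poses(left, right)
        return self.middleware.get_robot_state()


# Usage: swapping backends means passing a different RobotMiddleware subclass here.
frontend = TeleopFrontEnd(LoopbackMiddleware())
state = frontend.tick(Pose((0.4, 0.2, 1.0)), Pose((0.4, -0.2, 1.0)))
```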

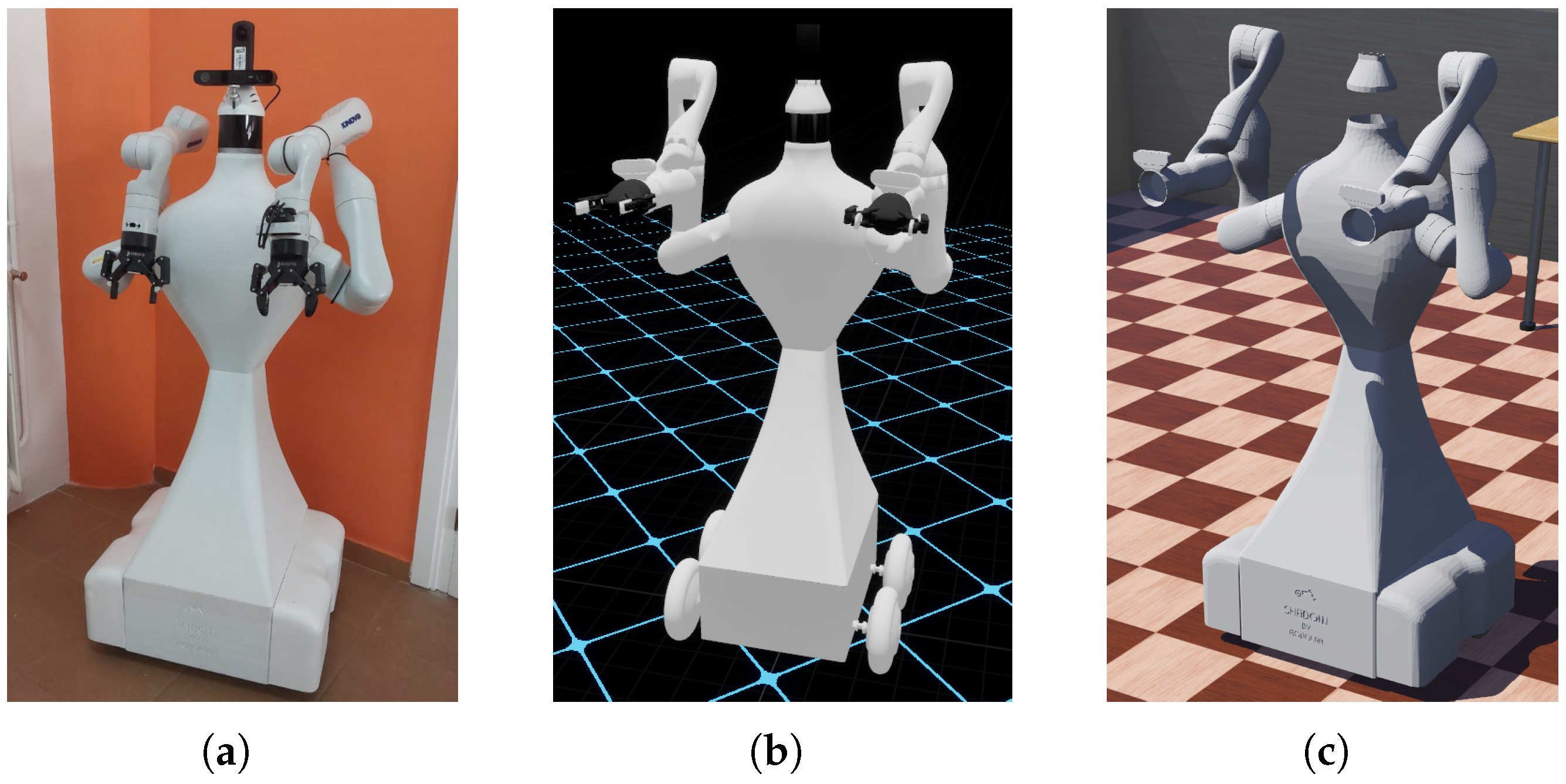

3.2. Robotic Platform and Digital Representations

3.3. XR Runtime and Interaction in Unreal Engine

3.4. Communication Architecture and Middleware Abstraction

3.5. Control Architecture, Kinematics, and Safety

3.5.1. Kinematic Solver and Constraint Handling

- Joint Limit Avoidance: The solver penalizes joint configurations approaching physical limits.

- Self-Collision Proximity: The framework monitors the distance between the two manipulators and between each arm and the robot’s chassis.

- Singularity Management: The damping factor in the Levenberg-Marquardt algorithm prevents high joint velocities when the arms are near singular configurations.
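The constraint handling above can be sketched as one damped Levenberg-Marquardt step in NumPy, applied to a hypothetical planar two-link arm so the example stays self-contained. The joint-limit penalty (a diagonal term that is zero far from a limit and grows inside a margin), the damping value, and the link lengths are illustrative assumptions, not the paper's parameters; only the 1.5 rad/s joint-velocity cap comes from the user-study section. Self-collision monitoring is not modelled.

```python
import numpy as np

MAX_JOINT_SPEED = 1.5  # rad/s; joint velocity cap reported in the user study


def planar_fk(q, l1=0.4, l2=0.3):
    """End-effector (x, y) of a hypothetical planar 2-link arm."""
    return np.array([l1 * np.cos(q[0]) + l2 * np.cos(q[0] + q[1]),
                     l1 * np.sin(q[0]) + l2 * np.sin(q[0] + q[1])])


def planar_jacobian(q, l1=0.4, l2=0.3):
    """Analytic 2x2 position Jacobian of the same arm."""
    s1, c1 = np.sin(q[0]), np.cos(q[0])
    s12, c12 = np.sin(q[0] + q[1]), np.cos(q[0] + q[1])
    return np.array([[-l1 * s1 - l2 * s12, -l2 * s12],
                     [l1 * c1 + l2 * c12, l2 * c12]])


def lm_joint_velocity(J, err, q, q_min, q_max, dt,
                      damping=1e-2, limit_gain=1.0, margin=0.1):
    """One damped least-squares (Levenberg-Marquardt) step, returned as joint velocities."""
    n = J.shape[1]
    # Normalized distance of each joint to its nearest limit (0 means at the limit).
    dist = np.minimum(q - q_min, q_max - q) / (q_max - q_min)
    # Penalty is zero outside the margin and grows linearly as a joint nears a limit.
    penalty = limit_gain * np.clip((margin - dist) / margin, 0.0, None)
    # The damping term keeps H well conditioned near singular configurations.
    H = J.T @ J + damping * np.eye(n) + np.diag(penalty)
    qdot = np.linalg.solve(H, J.T @ err) / dt
    # Scale the whole vector uniformly (same direction) so no joint exceeds the cap.
    peak = np.max(np.abs(qdot))
    if peak > MAX_JOINT_SPEED:
        qdot = qdot * (MAX_JOINT_SPEED / peak)
    return qdot


# Usage: drive the arm toward a reachable target at a 100 Hz control rate.
q = np.array([0.3, 0.5])
q_min, q_max = np.array([-2.5, -2.5]), np.array([2.5, 2.5])
target = planar_fk(np.array([0.8, 0.9]))
dt = 0.01
for _ in range(300):
    qdot = lm_joint_velocity(planar_jacobian(q), target - planar_fk(q), q, q_min, q_max, dt)
    q = q + qdot * dt
```

Scaling the whole velocity vector, rather than clipping each joint independently, keeps the commanded end-effector direction unchanged while respecting the cap.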

3.5.2. Haptic and Visual Feedback Loop

3.5.3. Safety State-Machine and Dead-Man Switch

- IDLE State: This is the default standby mode. The robot maintains zero joint velocity and active brakes. The system remains in this state until a dual-concurrence condition is met: a stable heartbeat signal from the RobotMiddleware and the dead-man switch engaged.

- ACTIVE State: Once the dead-man switch is pressed, the FSM transitions to this state, enabling the real-time stream of joint velocity commands derived from the IK solver. If the operator releases the dead-man switch, the system transitions directly back to IDLE for an immediate, nominal (non-emergency) halt.

- SAFE-STOP State: This state acts as a dedicated fault-handling mechanism. It is triggered automatically from the ACTIVE state if a communication timeout (>100 ms) or a connection loss is detected. In this mode, the system executes an emergency deceleration profile to neutralize inertia before transitioning back to IDLE once a stable connection is re-established or the system is reset.
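The three-state logic above can be sketched as a small Python state machine. The 100 ms heartbeat timeout comes from the text; the method names, the `decel_done` and `reset` flags, and the choice that a communication fault takes priority over a dead-man release are assumptions made for illustration. The emergency deceleration profile itself is not modelled: outside ACTIVE the sketch simply stops forwarding IK velocity commands.

```python
from enum import Enum, auto

HEARTBEAT_TIMEOUT_S = 0.100  # timeout from the text: >100 ms without heartbeat triggers SAFE-STOP


class State(Enum):
    IDLE = auto()
    ACTIVE = auto()
    SAFE_STOP = auto()


class SafetyFSM:
    def __init__(self):
        self.state = State.IDLE
        self.last_heartbeat = None  # time (s) of the last RobotMiddleware heartbeat

    def on_heartbeat(self, t):
        self.last_heartbeat = t

    def link_ok(self, t):
        """True if a heartbeat arrived within the timeout window."""
        return (self.last_heartbeat is not None
                and t - self.last_heartbeat <= HEARTBEAT_TIMEOUT_S)

    def update(self, t, deadman_pressed, decel_done=False, reset=False):
        if self.state is State.IDLE:
            # Dual-concurrence condition: stable heartbeat AND dead-man switch engaged.
            if deadman_pressed and self.link_ok(t):
                self.state = State.ACTIVE
        elif self.state is State.ACTIVE:
            if not self.link_ok(t):
                self.state = State.SAFE_STOP  # fault: timeout or connection loss
            elif not deadman_pressed:
                self.state = State.IDLE       # nominal halt on switch release
        elif self.state is State.SAFE_STOP:
            # Leave only after decelerating, once the link is back or the system is reset.
            if decel_done and (self.link_ok(t) or reset):
                self.state = State.IDLE
        return self.state

    def forward(self, ik_qdot):
        """Pass IK joint velocities through only while ACTIVE; otherwise output zeros
        (the SAFE-STOP deceleration ramp is not modelled in this sketch)."""
        if self.state is State.ACTIVE:
            return list(ik_qdot)
        return [0.0] * len(ik_qdot)
```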

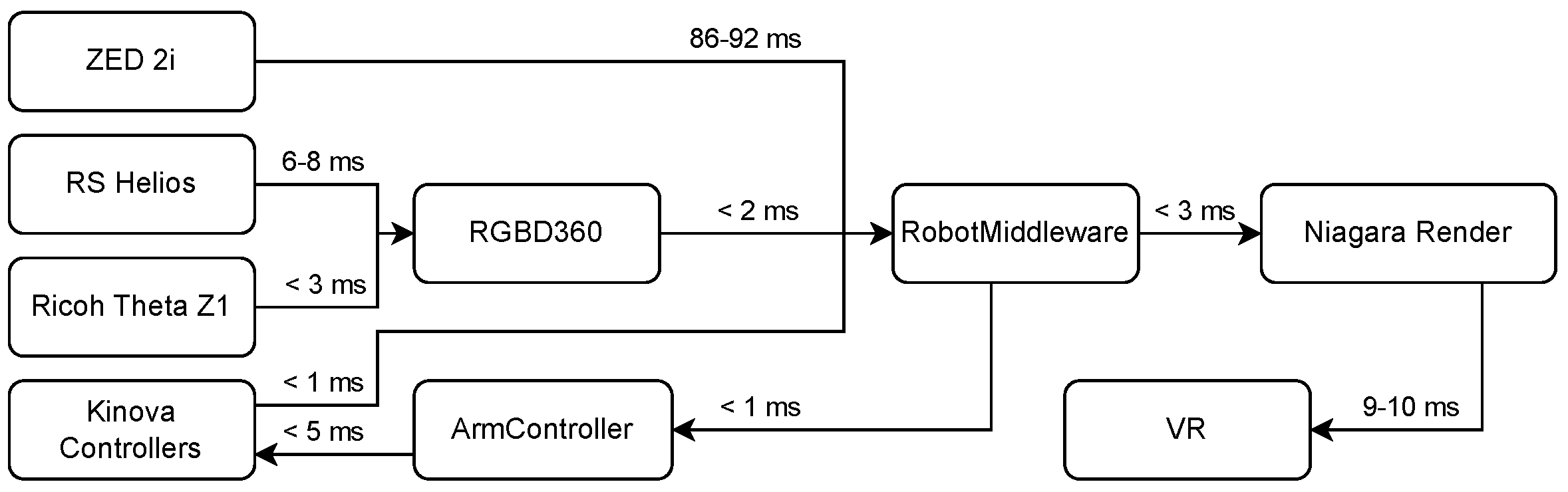

3.6. Perception Pipeline and Point-Cloud Rendering
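The fusion step named in the system overview (multi-sensor data fused into one dense colored point cloud) can be sketched in NumPy: each sensor's points are moved into a common robot frame with a 4x4 extrinsic transform, concatenated, and optionally thinned with a voxel grid to bound the point count. This is a minimal sketch under stated assumptions: calibrated extrinsics are available, every sensor's points already carry RGB values, and the function names and voxel size are hypothetical; the paper's actual pipeline may differ.

```python
import numpy as np


def make_transform(R, t):
    """Build a 4x4 homogeneous transform (sensor frame -> robot base frame)."""
    T = np.eye(4)
    T[:3, :3] = R
    T[:3, 3] = t
    return T


def transform_points(T, xyz):
    """Apply a 4x4 transform to an (N, 3) array of points."""
    return xyz @ T[:3, :3].T + T[:3, 3]


def fuse_clouds(clouds):
    """Fuse per-sensor clouds given as (T_base_sensor, xyz (N,3), rgb (N,3) uint8) tuples."""
    xyz = np.vstack([transform_points(T, pts) for T, pts, _ in clouds])
    rgb = np.vstack([colors for _, _, colors in clouds])
    return xyz, rgb


def voxel_downsample(xyz, rgb, voxel=0.01):
    """Keep the first point that falls in each cubic voxel of edge `voxel` metres."""
    keys = np.floor(xyz / voxel).astype(np.int64)
    _, first = np.unique(keys, axis=0, return_index=True)
    first.sort()  # preserve the original ordering of the kept points
    return xyz[first], rgb[first]
```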

3.7. Temporal Synchronization and Latency Management

3.8. Simulation-to-Real Operation

4. Experimental Results

4.1. Technical Experiment

4.1.1. CPU and GPU Usage

4.1.2. Memory Usage (RAM and VRAM)

4.1.3. Latency and FPS

4.1.4. System Latency

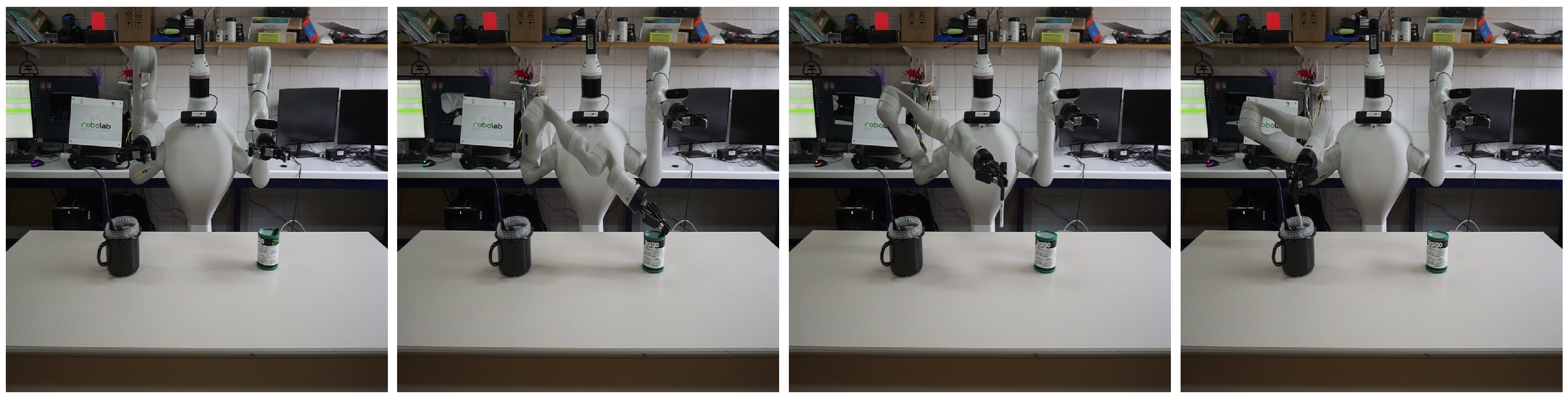

4.2. Real-World Tests: Teleoperation Evaluation

4.2.1. Pick and Place

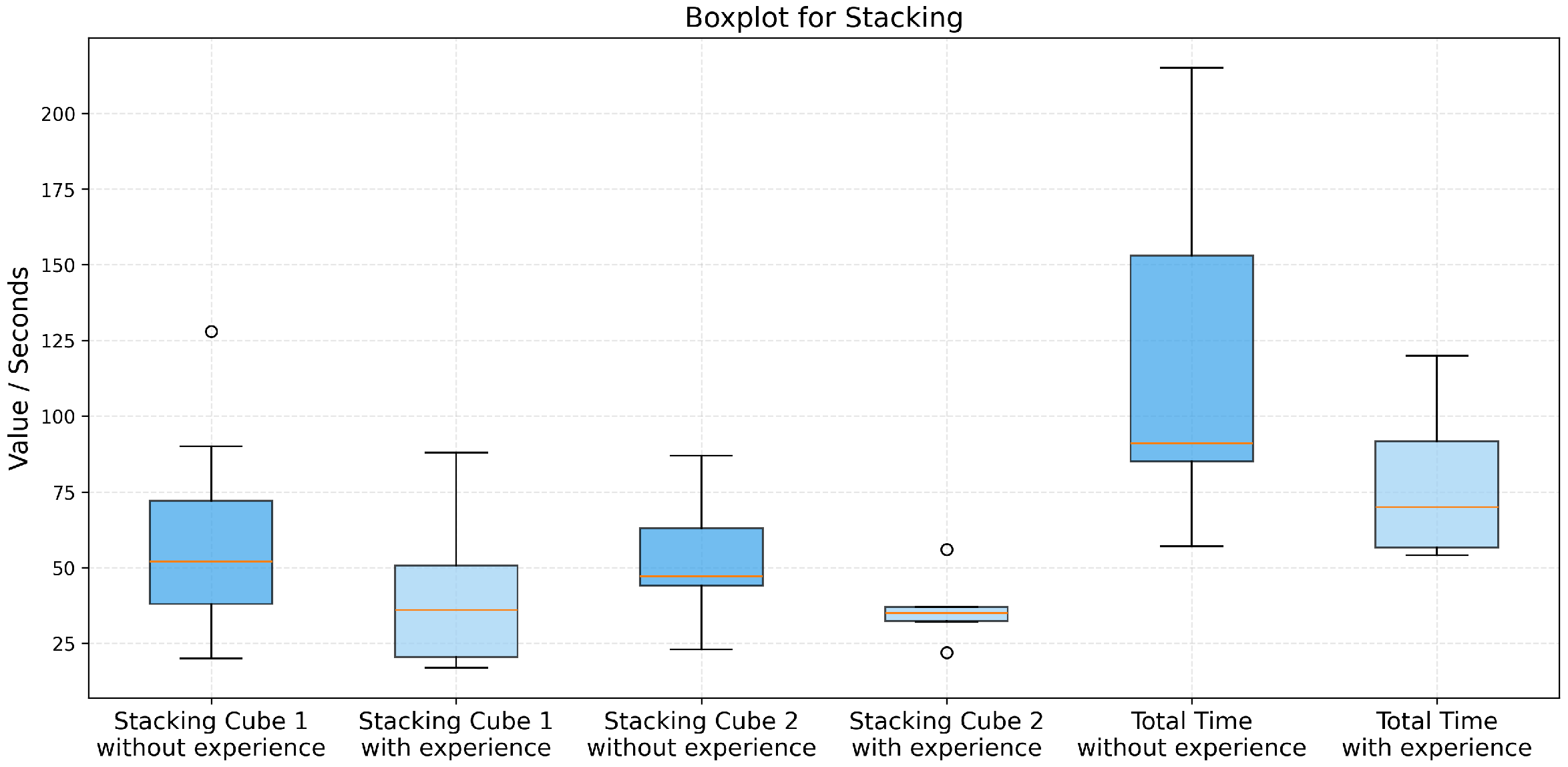

4.2.2. Stacking Cubes

4.2.3. Toy Handover

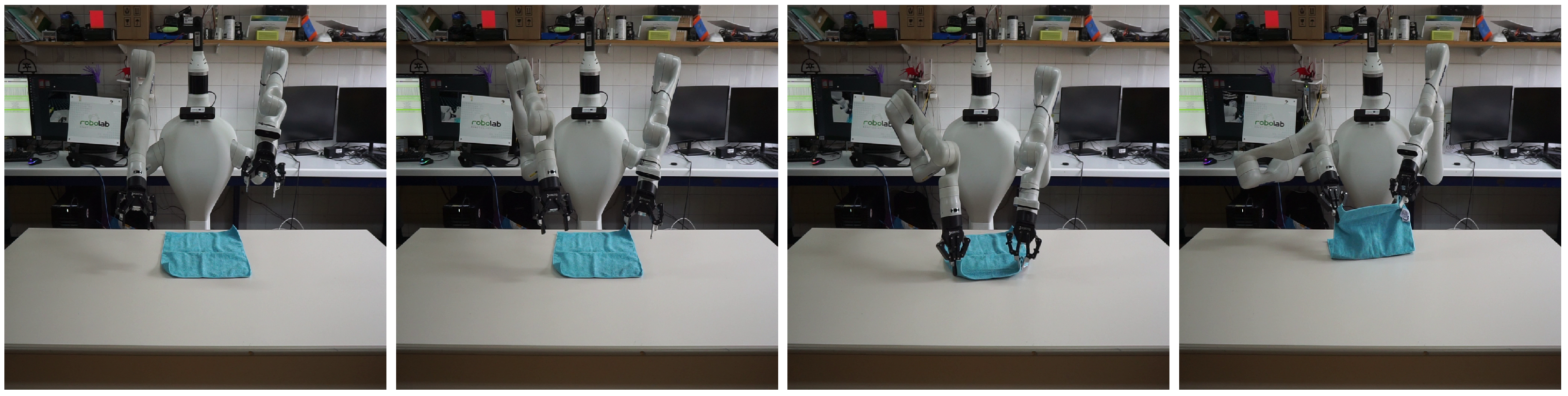

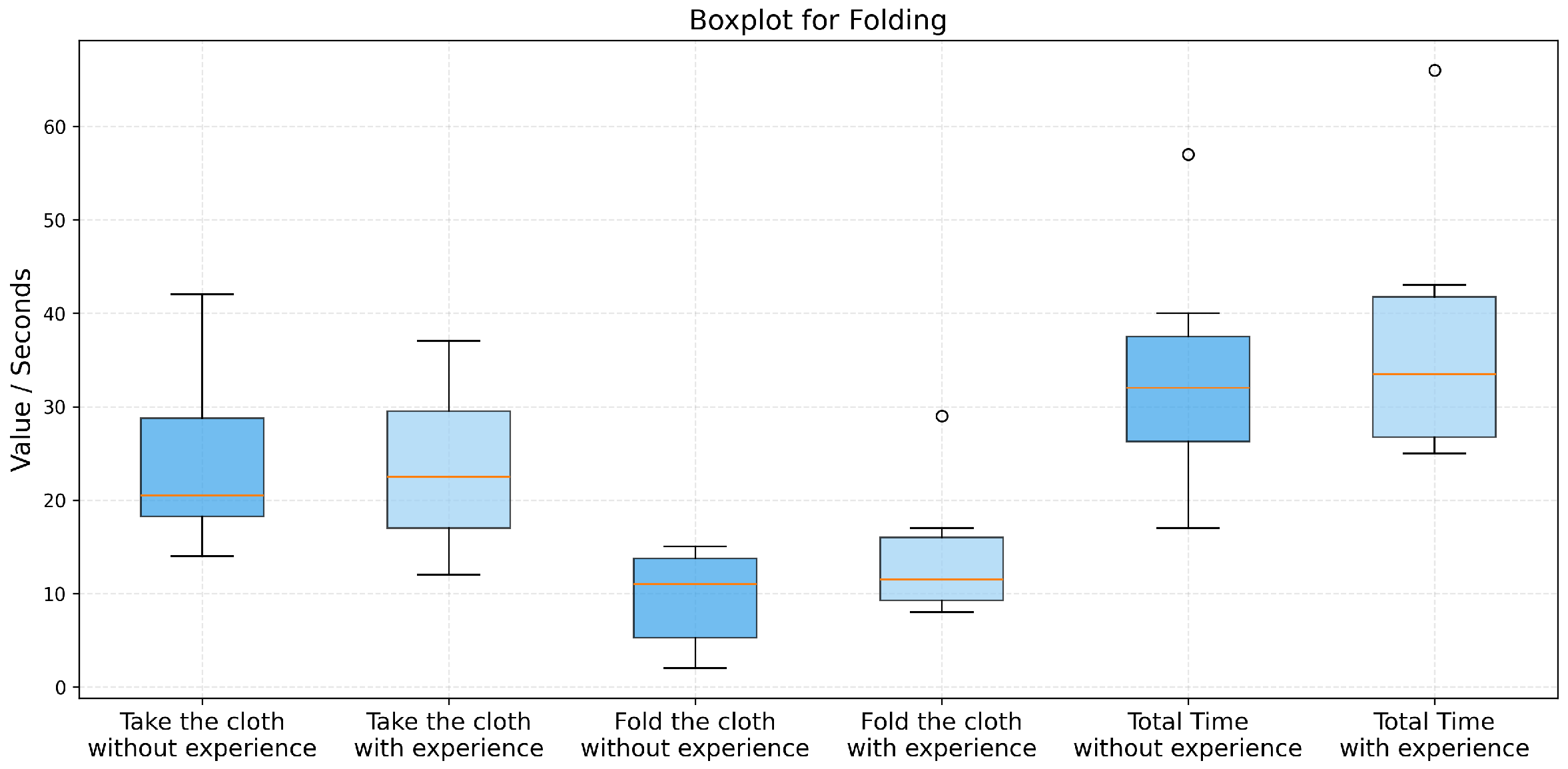

4.2.4. Folding Cloth

4.2.5. Writing “HI”

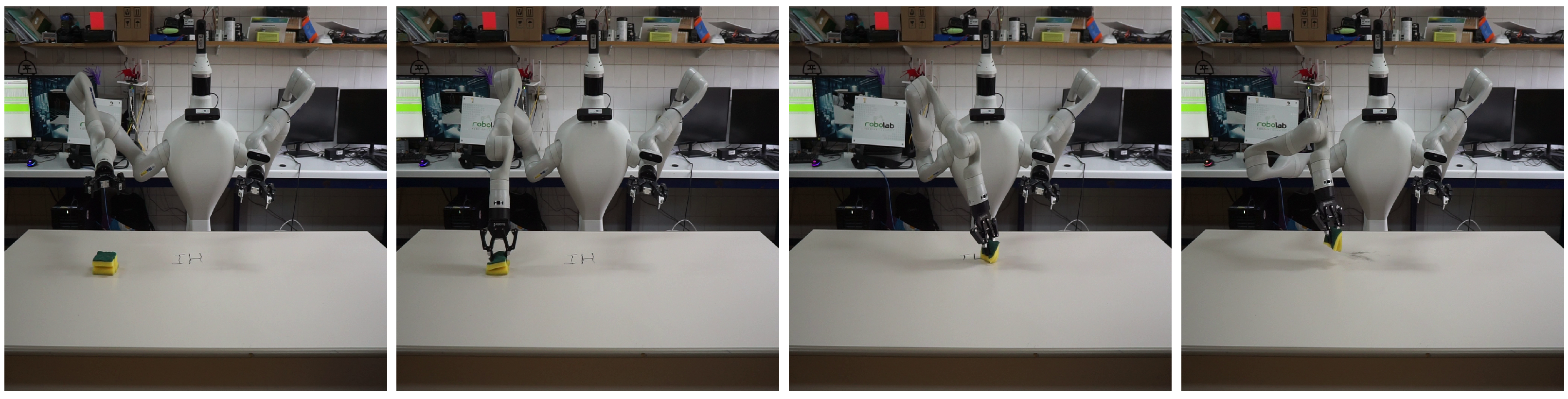

4.2.6. Erasing “HI”

4.2.7. Summary Success Rate

4.2.8. Post-Test User Feedback

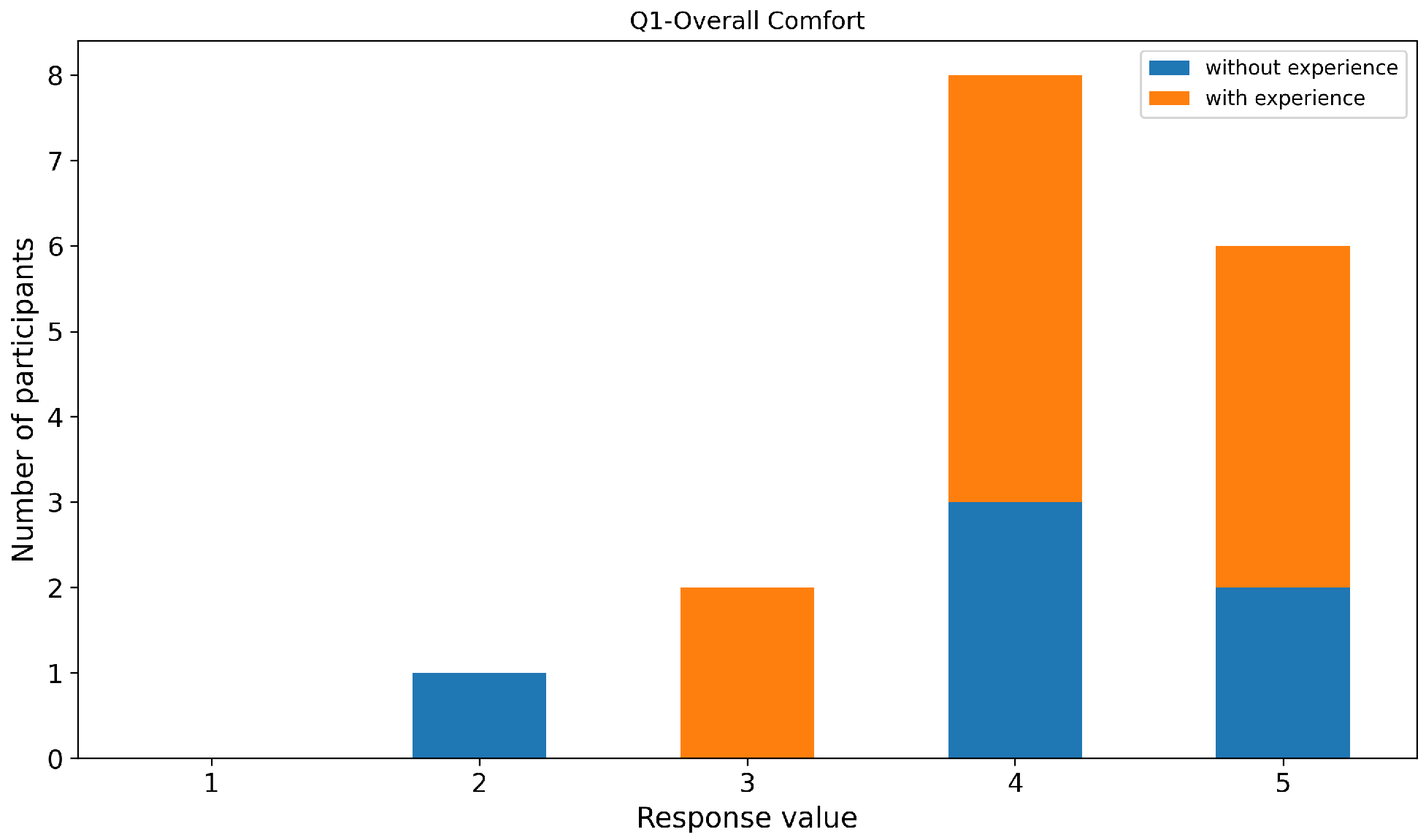

- “The overall experience was comfortable in terms of hardware fit, weight, cables, and controllers.” Overall comfort received consistently high ratings (Figure 24), with most participants scoring between 4 and 5. This indicates that the hardware setup (headset fit, weight, controllers, and cables) was well tolerated during the experiments.

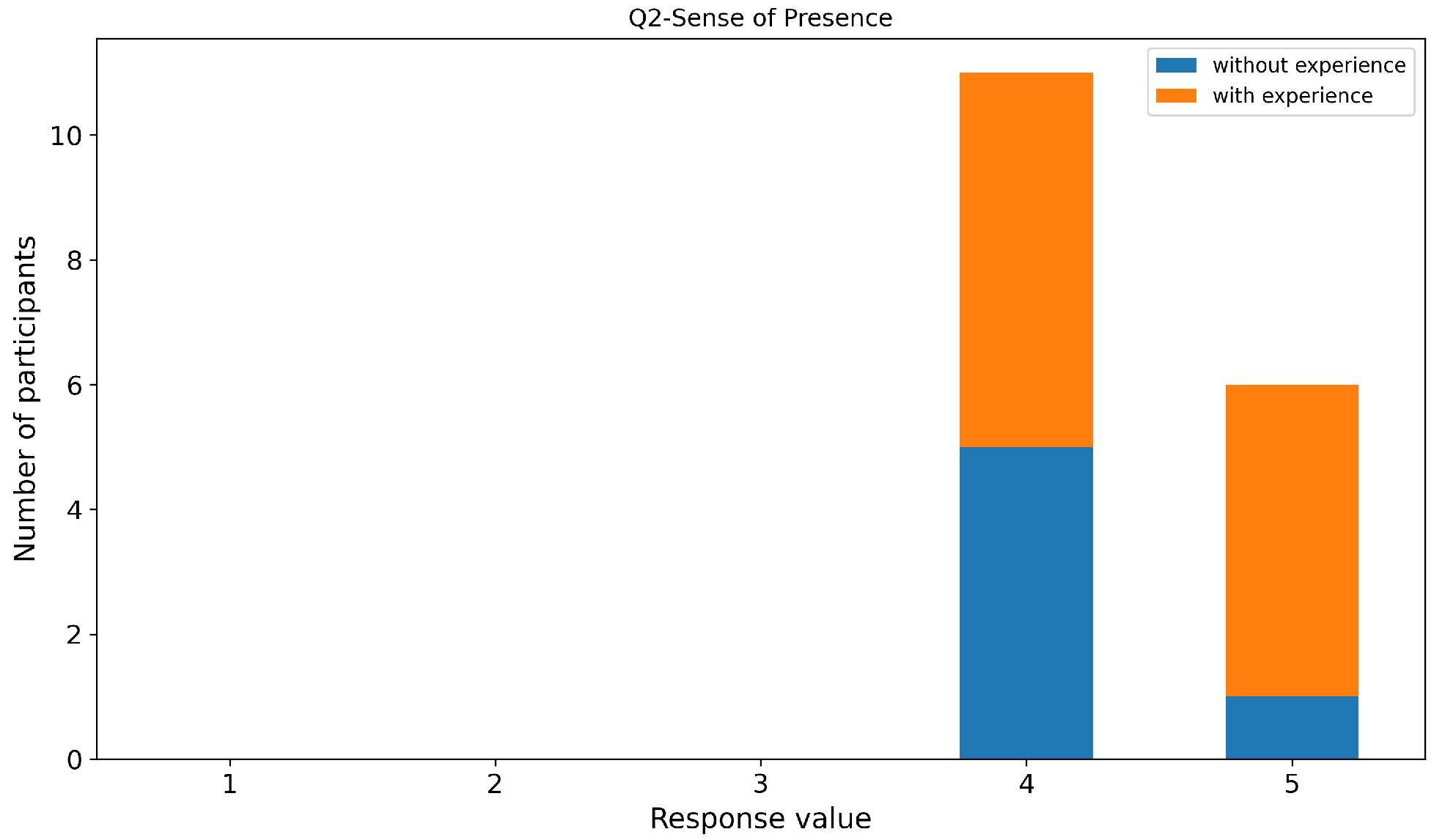

- “I felt a strong sense of presence, as if I were present in the robot’s environment or controlling the robot through its perspective.” As with the previous item, the sense of presence was rated positively (Figure 25), with the majority of participants reporting a strong feeling of being present in the robot’s environment or of controlling the robot from its perspective. These results suggest that the immersive visualization and motion mapping effectively support embodiment in the teleoperation task.

- “I did not experience symptoms of motion sickness (e.g., dizziness, nausea, or headache) during or after the experiment.” The system was very well tolerated with respect to motion sickness. As shown in Figure 26, the vast majority of participants reported no symptoms at all, selecting the maximum score on the scale. Only three participants reported mild discomfort, and none reported moderate or severe symptoms.

- “The mapping between my manual movements in VR and the movements of the robotic manipulator was intuitive.” The intuitiveness of the mapping between user hand movements and robotic manipulator motions was rated highly, with most participants scoring 4 or 5 (Figure 27). This suggests that the control scheme was easy to understand and required minimal cognitive effort.

- “I was able to perform precise movements (e.g., picking up small objects or inserting them) with the accuracy I desired.” Perceived manipulation precision received slightly more varied responses (Figure 28). While many users felt capable of performing precise actions, some participants, particularly those without prior VR experience, reported moderate difficulty. This indicates that fine manipulation tasks may require additional adaptation time or enhanced visual feedback.

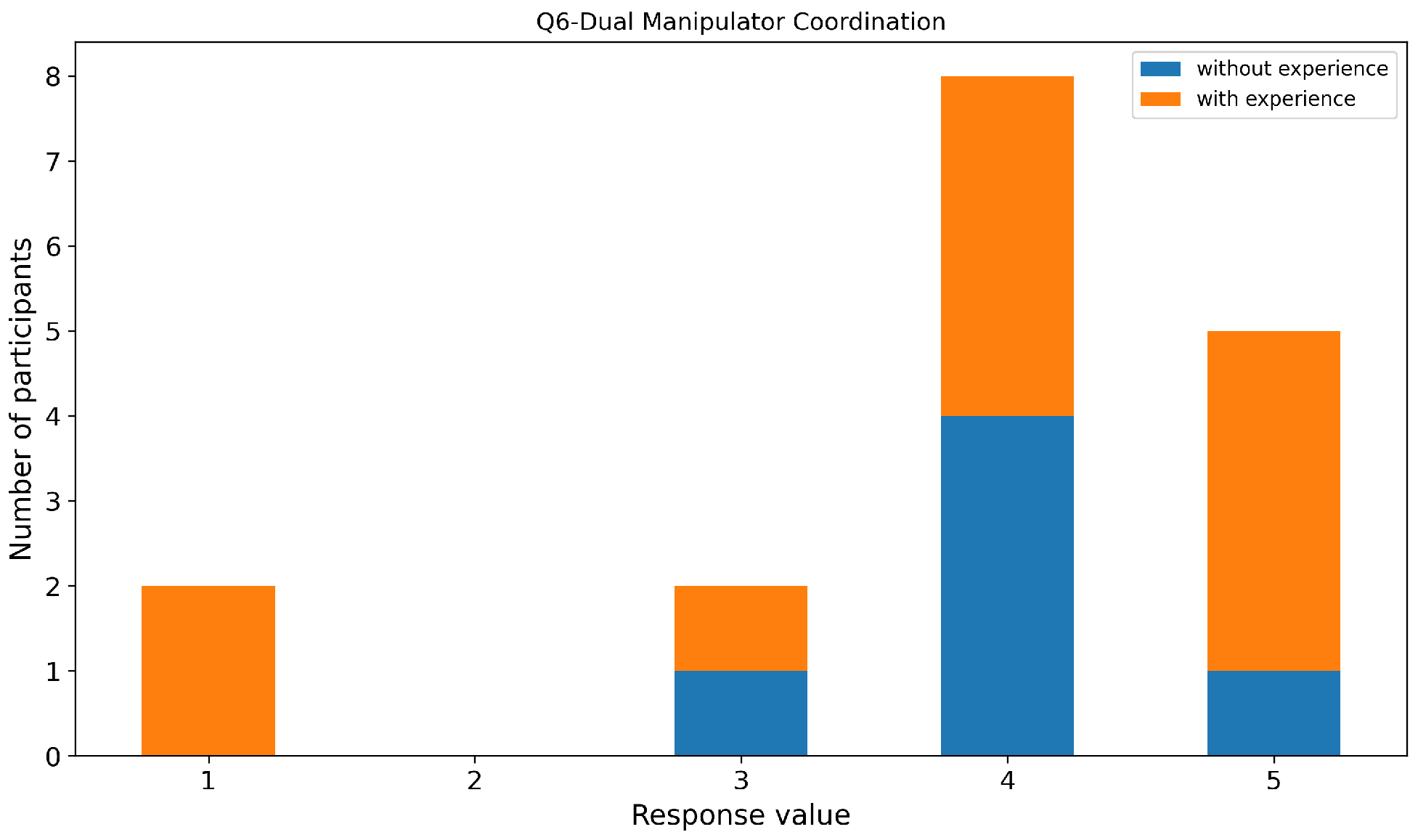

- “Coordinating the two manipulators simultaneously to perform the task was easy.” Simultaneous coordination of the two manipulators was identified as one of the more challenging aspects of the system. Although several participants rated this capability positively (Figure 29), a noticeable portion reported moderate difficulty.

- “I did not perceive noticeable latency or delay between my actions and the robot’s response or visual updates.” Perceived system latency was generally rated as low: most participants did not notice significant delays between their actions and the robot’s response or visual updates (Figure 30). This subjective perception is consistent with the low frame rendering times measured in the technical evaluation. However, a small number of participants reported perceiving latency that was attributable not to communication or rendering delays but to the intentionally limited motion speed of the robotic manipulators. As a critical safety measure, the arm joints were capped at a maximum velocity of 1.5 rad/s. Because this speed is lower than that of a user’s natural hand movements in VR, some participants interpreted the safety-driven motion limit as system latency, even though data transmission and command execution remained near real time.
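A rough lower bound shows why the velocity cap is perceptible. For a hypothetical 90° (π/2 rad) wrist rotation, which a person can typically perform much faster, a joint limited to 1.5 rad/s needs at least

```latex
t_{\min} = \frac{\Delta q}{\dot{q}_{\max}} = \frac{\pi/2\ \text{rad}}{1.5\ \text{rad/s}} \approx 1.05\ \text{s}
```

and this ignores acceleration limits, so the arm visibly trails the operator's hand even when network and rendering delays are negligible.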

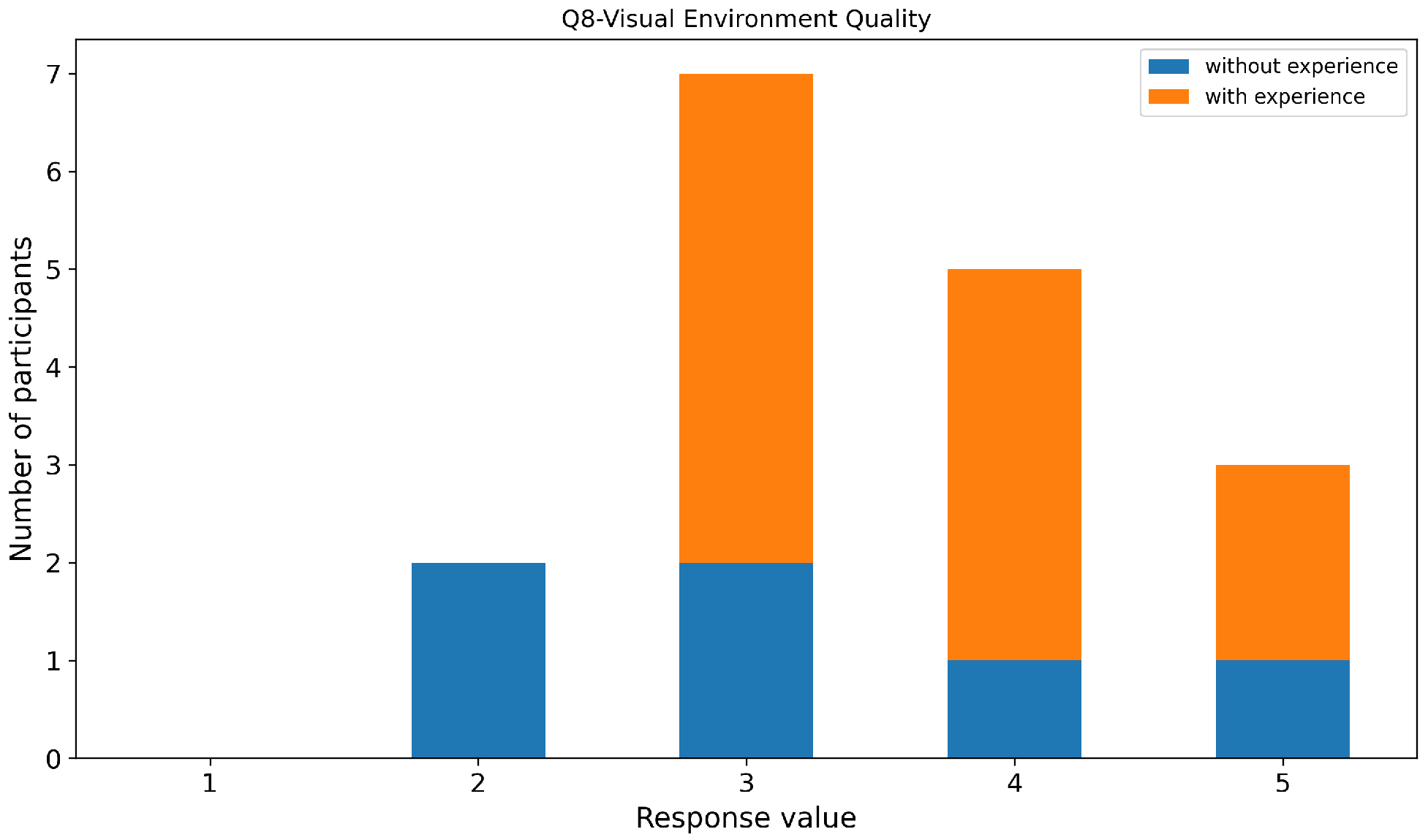

- “The virtual environment and point cloud visualization provided sufficient detail to judge distances, object sizes, and textures.” The quality of the virtual environment and point cloud visualization received mixed-to-positive ratings (Figure 31). While a majority of participants reported that the visualization provided sufficient detail to accurately judge distances and object sizes, several users identified limitations related to object occlusion and shadowing effects caused by the robotic arms.

- “I felt confident when performing complex manipulations, trusting the visual feedback and the robot’s response.” User confidence during complex manipulations followed a similar trend (Figure 32). Most participants reported feeling confident when performing tasks, though confidence was slightly lower in tasks requiring high precision or bimanual coordination.

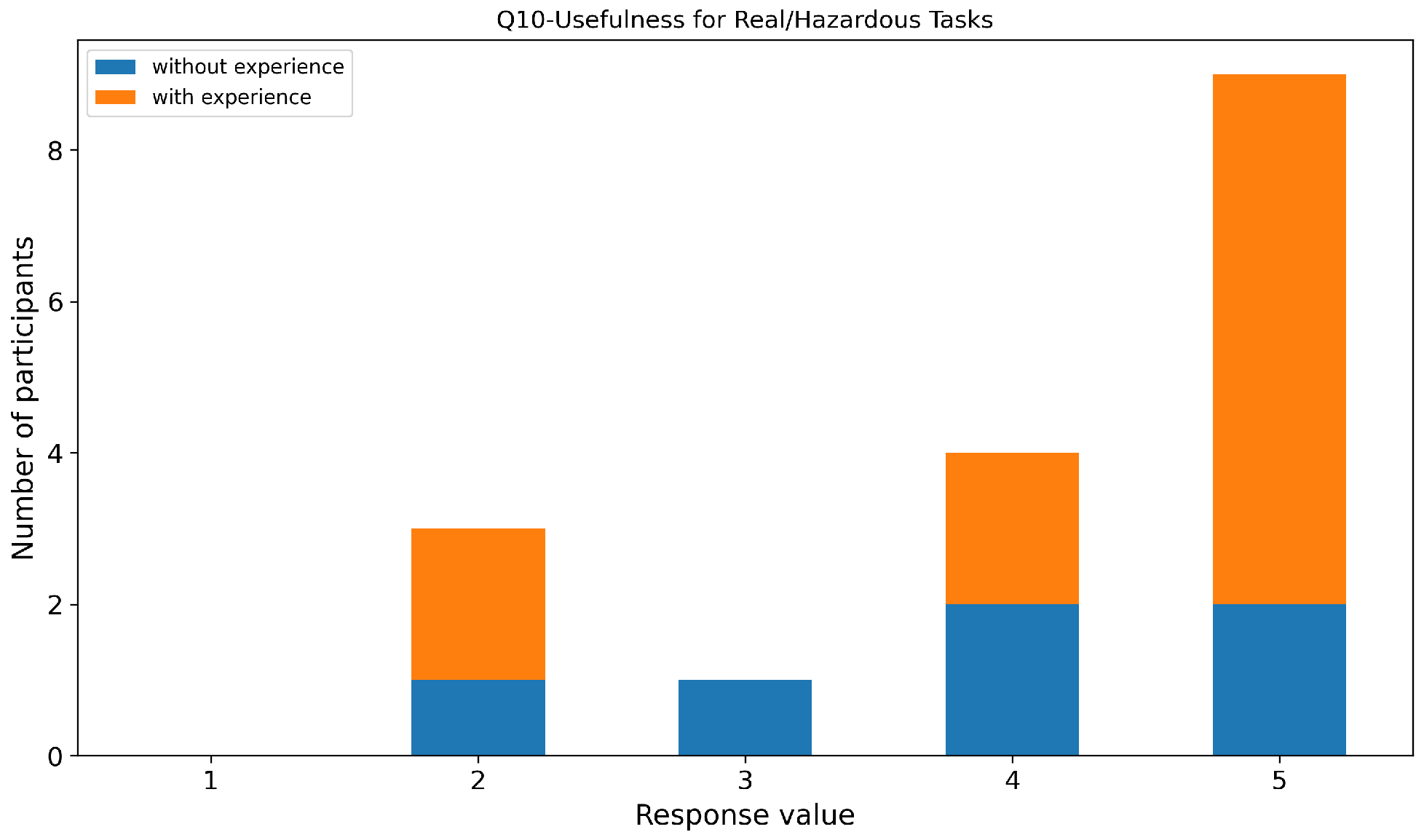

- “Considering ease of use and performance, this VR teleoperation system is useful for tasks in real or hazardous environments.” Finally, the system was rated as highly useful for teleoperation tasks in real or hazardous environments. The majority of participants selected values of 4 or 5 (Figure 33), indicating strong perceived applicability of the system beyond the experimental setting.

- Strengths

  - Low Latency and Precision: Several users highlighted the system’s low latency, which contributed to a smooth and responsive experience. The movements of the robotic arms, particularly the grippers, were praised for their precision and their ability to accurately follow the user’s hand orientation. This precision was especially notable in tasks requiring fine manipulation, such as Writing and Folding.

  - Immersion and Visual Feedback: Many participants reported a strong sense of immersion and presence in the virtual environment. The system’s ability to provide realistic visual feedback, including the synchronized movement of the robot’s arms with the user’s hands, was cited as a key strength. Users felt they could easily estimate depth and object positioning.

  - Intuitiveness and Ease of Use: A recurring theme in the feedback was the intuitiveness of the system. Participants, regardless of their VR experience, found the controls easy to learn and use. The simple and effective control scheme, which allows simultaneous manipulation of both arms, was appreciated for its straightforwardness, especially by those with no prior VR experience.

- Areas for Improvement

  - Shadows and Perception Issues: A significant number of participants pointed out that the shadows generated by the robot’s arms were problematic. These shadows often distorted their perception of the objects and the task environment, making it difficult to accurately position and manipulate objects, particularly in tasks requiring high precision. Many participants suggested that improving shadow handling or providing clearer visual cues for depth and object positioning would greatly enhance the experience.

4.3. Comparison with Related Work

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| IK | Inverse Kinematics |
| PTP | Precision Time Protocol |
| R-CNN | Region-based Convolutional Neural Network |
| RRMC | Resolved-Rate Motion Control |
| UE | Unreal Engine 5.6.1 |
| VR | Virtual Reality |
| XR | Extended Reality |

References

1. Tadokoro, S. (Ed.) Disaster Robotics, 1st ed.; Springer Tracts in Advanced Robotics; Springer: Cham, Switzerland, 2019; p. 534.

2. Yoshinada, H.; Kurashiki, K.; Kondo, D.; Nagatani, K.; Kiribayashi, S.; Fuchida, M.; Tanaka, M.; Yamashita, A.; Asama, H.; Shibata, T.; et al. Dual-Arm Construction Robot with Remote-Control Function. In Disaster Robotics: Results from the ImPACT Tough Robotics Challenge; Tadokoro, S., Ed.; Springer International Publishing: Cham, Switzerland, 2019; pp. 195–264.

3. Nagatani, K.; Kiribayashi, S.; Okada, Y.; Otake, K.; Yoshida, K.; Tadokoro, S.; Nishimura, T.; Yoshida, T.; Koyanagi, E.; Fukushima, M.; et al. Emergency response to the nuclear accident at the Fukushima Daiichi Nuclear Power Plants using mobile rescue robots. J. Field Robot. 2013, 30, 44–63.

4. Phillips, B.T.; Becker, K.P.; Kurumaya, S.; Galloway, K.C.; Whittredge, G.; Vogt, D.M.; Teeple, C.B.; Rosen, M.H.; Pieribone, V.A.; Gruber, D.F.; et al. A Dexterous, Glove-Based Teleoperable Low-Power Soft Robotic Arm for Delicate Deep-Sea Biological Exploration. Sci. Rep. 2018, 8, 14779.

5. Jakuba, M.V.; German, C.R.; Bowen, A.D.; Whitcomb, L.L.; Hand, K.; Branch, A.; Chien, S.; McFarland, C. Teleoperation and robotics under ice: Implications for planetary exploration. In Proceedings of the 2018 IEEE Aerospace Conference, Big Sky, MT, USA, 3–10 March 2018; pp. 1–14.

6. Das, R.; Baishya, N.J.; Bhattacharya, B. A review on tele-manipulators for remote diagnostic procedures and surgery. CSI Trans. ICT 2023, 11, 31–37.

7. Sam, Y.T.; Hedlund-Botti, E.; Natarajan, M.; Heard, J.; Gombolay, M. The Impact of Stress and Workload on Human Performance in Robot Teleoperation Tasks. IEEE Trans. Robot. 2024, 40, 4725–4744.

8. Wu, P.; Shentu, Y.; Yi, Z.; Lin, X.; Abbeel, P. GELLO: A General, Low-Cost, and Intuitive Teleoperation Framework for Robot Manipulators. In Proceedings of the 2024 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Abu Dhabi, United Arab Emirates, 14–18 October 2024; IEEE: Piscataway, NJ, USA, 2024.

9. Stanton, C.; Bogdanovych, A.; Ratanasena, E. Teleoperation of a humanoid robot using full-body motion capture, example movements, and machine learning. In Proceedings of the 2012 Australasian Conference on Robotics and Automation, Wellington, New Zealand, 3–5 December 2012.

10. Kanazawa, K.; Sato, N.; Morita, Y. Considerations on interaction with manipulator in virtual reality teleoperation interface for rescue robots. In Proceedings of the 2023 32nd IEEE International Conference on Robot and Human Interactive Communication (RO-MAN), Busan, Republic of Korea, 28–31 August 2023; IEEE: Piscataway, NJ, USA, 2023; pp. 386–391.

11. George, A.; Bartsch, A.; Barati Farimani, A. OpenVR: Teleoperation for manipulation. SoftwareX 2025, 29, 102054.

12. Bao, M.; Tao, Z.; Wang, X.; Liu, J.; Sun, Q. Comparative Performance Analysis of Rendering Optimization Methods in Unity Tuanjie Engine, Unity Global and Unreal Engine. In Proceedings of the 2024 IEEE Smart World Congress (SWC), Nadi, Fiji, 2–7 December 2024; pp. 1627–1632.

13. Soni, L.; Kaur, A. Merits and Demerits of Unreal and Unity: A Comprehensive Comparison. In Proceedings of the 2024 International Conference on Computational Intelligence for Green and Sustainable Technologies (ICCIGST), Vijayawada, India, 18–19 July 2024; pp. 1–5.

14. Wonsick, M.; Padir, T. A Systematic Review of Virtual Reality Interfaces for Controlling and Interacting with Robots. Appl. Sci. 2020, 10, 9051.

15. Hetrick, R.; Amerson, N.; Kim, B.; Rosen, E.; de Visser, E.J.; Phillips, E. Comparing Virtual Reality Interfaces for the Teleoperation of Robots. In Proceedings of the 2020 Systems and Information Engineering Design Symposium (SIEDS), Charlottesville, VA, USA, 24 April 2020; IEEE: Piscataway, NJ, USA, 2020.

16. De Pace, F.; Manuri, F.; Sanna, A. Leveraging Enhanced Virtual Reality Methods and Environments for Efficient, Intuitive, and Immersive Teleoperation of Robots. In Proceedings of the 2021 IEEE International Conference on Robotics and Automation (ICRA), Xi’an, China, 30 May–5 June 2021; IEEE: Piscataway, NJ, USA, 2021.

17. Rosen, E.; Jha, D.K. A Virtual Reality Teleoperation Interface for Industrial Robot Manipulators. arXiv 2023, arXiv:2305.10960.

18. Ponomareva, P.; Trinitatova, D.; Fedoseev, A.; Kalinov, I.; Tsetserukou, D. GraspLook: A VR-based Telemanipulation System with R-CNN-driven Augmentation of Virtual Environment. In Proceedings of the 2021 20th International Conference on Advanced Robotics (ICAR), Ljubljana, Slovenia, 6–10 December 2021; IEEE: Piscataway, NJ, USA, 2021; pp. 166–171.

19. Audonnet, F.P.; Ramirez-Alpizar, I.G.; Aragon-Camarasa, G. IMMERTWIN: A Mixed Reality Framework for Enhanced Robotic Arm Teleoperation. arXiv 2024, arXiv:2409.08964.

20. García, A.; Solanes, J.E.; Muñoz, A.; Gracia, L.; Tornero, J. Augmented Reality-Based Interface for Bimanual Robot Teleoperation. Appl. Sci. 2022, 12, 4379.

21. Gallipoli, M.; Buonocore, S.; Selvaggio, M.; Fontanelli, G.A.; Grazioso, S.; Di Gironimo, G. A virtual reality-based dual-mode robot teleoperation architecture. Robotica 2024, 42, 1935–1958.

22. Stedman, H.; Kocer, B.B.; Kovac, M.; Pawar, V.M. VRTAB-Map: A Configurable Immersive Teleoperation Framework with Online 3D Reconstruction. In Proceedings of the 2022 IEEE International Symposium on Mixed and Augmented Reality Adjunct (ISMAR-Adjunct), Singapore, 17–21 October 2022; IEEE: Piscataway, NJ, USA, 2022; pp. 104–110.

23. Tung, Y.S.; Luebbers, M.B.; Roncone, A.; Hayes, B. Stereoscopic Virtual Reality Teleoperation for Human Robot Collaborative Dataset Collection. In Proceedings of the HRI 2024 Workshop on Virtual, Augmented, and Mixed Reality for Human-Robot Interaction (VAM-HRI), Boulder, CO, USA, 11 March 2024.

24. Corke, P.; Haviland, J. Not your grandmother’s toolbox–the Robotics Toolbox reinvented for Python. In Proceedings of the 2021 IEEE International Conference on Robotics and Automation (ICRA), Xi’an, China, 30 May–5 June 2021; IEEE: Piscataway, NJ, USA, 2021; pp. 11357–11363.

25. Mazeas, D.; Namoano, B. Study of Visualization Modalities on Industrial Robot Teleoperation for Inspection in a Virtual Co-Existence Space. Virtual Worlds 2025, 4, 17.

26. Darvish, K.; Penco, L.; Ramos, J.; Cisneros, R.; Pratt, J.; Yoshida, E.; Ivaldi, S.; Pucci, D. Teleoperation of humanoid robots: A survey. IEEE Trans. Robot. 2023, 39, 1706–1727.

27. Turco, E.; Castellani, C.; Bo, V.; Pacchierotti, C.; Prattichizzo, D.; Baldi, T.L. Reducing Cognitive Load in Teleoperating Swarms of Robots through a Data-Driven Shared Control Approach. In Proceedings of the 2024 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Abu Dhabi, United Arab Emirates, 14–18 October 2024; pp. 4731–4738.

| Work | Year | XR | Real Robot | Simulation | 3D Scene Representation |
|---|---|---|---|---|---|
| Wonsick & Padir (Appl. Sci.) [14] | 2020 | VR | N/A | N/A | N/A |
| Hetrick et al. (SIEDS) [15] | 2020 | VR | Yes | No | Point cloud + cameras |
| De Pace et al. (ICRA) [16] | 2021 | VR | Yes | N/S | RGB-D capture |
| Ponomareva et al. (ICAR) [18] | 2021 | VR | N/S | N/S | Digital twins (R-CNN) |
| Stedman et al. (ISMAR Adjunct) [22] | 2022 | VR | Yes | N/S | Online 3D recon |
| García et al. (Appl. Sci.) [20] | 2022 | AR | Yes | No | Holographic overlays |
| Rosen & Jha (arXiv) [17] | 2023 | VR | Yes | No | N/S |
| Tung et al. (VAM-HRI) [23] | 2024 | VR | Yes | N/S | Stereo 2D video |
| Gallipoli et al. (Robotica) [21] | 2024 | VR | Yes | N/S | Digital twin (N/S) |
| Wu et al. (IROS) [8] | 2024 | – | Yes | No | No |
| George et al. (SoftwareX) [11] | 2025 | VR | Yes | No | N/S |
| Ours | 2025 | VR | Yes | Yes | Dense colored point cloud |

| Work | Key Aspects | Relation to Our Proposal |
|---|---|---|
| Wonsick & Padir [14] | Systematic review of design dimensions for VR robot operation. | Motivates the use of XR interfaces but lacks a unified sim-to-real implementation. |
| Hetrick et al. [15] | Comparison of VR control paradigms with live point-cloud reconstruction. | Focuses on specific tasks (Baxter) but not on engine-agnostic sim/real unification. |
| De Pace et al. [16] | RGB-D environment capture with VR-controller teleoperation. | Strong perceptual focus, but does not address dual-arm unified sim/real workflows. |
| Ponomareva et al. [18] | Augmented virtual environment with object detection and digital twin. | Specific to workload reduction, lacking a general dual-arm sim/real architecture. |
| Stedman et al. [22] | Configurable baseline using RTAB-Map for online 3D reconstruction. | Highlights data density challenges, primarily for mobile robot platforms. |
| García et al. [20] | AR (HoloLens) and gamepad for bimanual industrial teleoperation. | Addresses ergonomics but lacks immersive VR with high-density point-cloud integration. |
| Rosen & Jha [17] | Command filtering for industrial arms with black-box controllers. | Complementary approach, whereas we focus on the unified architecture and perception. |
| Tung et al. [23] | Stereoscopic egocentric feedback for dataset collection. | Lacks a dual-arm digital-twin framework with dense 3D real-time reconstruction. |
| Gallipoli et al. [21] | Dual-mode architecture for safety and workflow flexibility. | Related in safety intent but does not feature GPU-accelerated point-cloud rendering. |
| Wu et al. [8] | Low-cost kinematic replica for demonstration collection. | Efficient for contact-rich tasks but lacks immersive digital-twin perception. |
| George et al. [11] | Open-source VR teleoperation for Franka Panda via Oculus. | Aimed at demonstrations; does not provide a unified interface for simulation and hardware. |
| Ours | Unified UE5 interface for Webots and physical dual-arm control. | Provides a modular middleware abstraction with scalable GPU-based 3D reconstruction. |

| Number of Points | Communication Latency (ms) | Data Size (Mbit) |
|---|---|---|
| 50 K | 14–17 | 3.6 |
| 500 K | 76–80 | 36 |
| 1 M | 91–98 | 72 |
| 1.5 M | 140–151 | 108 |
| 2 M | 184–196 | 144 |
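Every row of the point-count table implies the same encoding cost: 3.6 Mbit / 50,000 points = 72 bits (9 bytes) per point, and the remaining rows scale linearly from it. The sketch below reproduces the data-size column (assuming 1 Mbit = 10^6 bits) and shows one hypothetical 9-byte layout, three int16 coordinates in millimetres plus 8-bit R, G, B, that would match that size. The paper does not state its actual encoding, so `POINT_FORMAT`, `pack_point`, and `unpack_point` are illustrative only.

```python
import struct

# 72 bits per point is implied by the table (3.6 Mbit / 50,000 points).
BITS_PER_POINT = 72
# Hypothetical 9-byte layout, NOT the paper's documented format:
# little-endian x, y, z as int16 millimetres (about +/-32.7 m range) + 8-bit R, G, B.
POINT_FORMAT = "<hhhBBB"


def data_size_mbit(num_points, bits_per_point=BITS_PER_POINT):
    """Payload size in megabits (10**6 bits) for one point-cloud frame."""
    return num_points * bits_per_point / 1e6


def pack_point(x_m, y_m, z_m, r, g, b):
    """Quantize metres to millimetres and pack one colored point into 9 bytes."""
    return struct.pack(POINT_FORMAT, round(x_m * 1000), round(y_m * 1000),
                       round(z_m * 1000), r, g, b)


def unpack_point(buf):
    """Inverse of pack_point (positions back in metres, 1 mm resolution)."""
    x, y, z, r, g, b = struct.unpack(POINT_FORMAT, buf)
    return x / 1000, y / 1000, z / 1000, r, g, b
```

Under such a layout, halving the per-point size (for example, by dropping color or quantizing more coarsely) would halve the payload, which is the lever that matters given how strongly latency grows with point count in the table.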

| Task | Success Rate (%) | Self-Collisions | Environment Collisions | Timeouts |
|---|---|---|---|---|
| Pick and Place (Pencil) | 76.47 | 1 | 2 | 1 |
| Cube Stacking | 76.47 | 1 | 0 | 3 |
| Toy Handover | 94.12 | 0 | 0 | 1 |
| Cloth Folding | 88.24 | 1 | 1 | 0 |
| Writing | 82.35 | 1 | 0 | 2 |
| Erasing | 100.0 | 0 | 0 | 0 |
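The percentages in the task table can be reproduced from its failure columns if each task had 17 trials and every failed trial is counted under exactly one cause (self-collision, environment collision, or timeout). Seventeen is an inference from the numbers, not a figure stated in this section. Under that assumption, the toy handover's 16/17 rounds to 94.12% at two decimals. The dictionary and function names below are illustrative.

```python
# Failure counts per task from the table: (self-collisions, environment collisions, timeouts).
FAILURES = {
    "Pick and Place (Pencil)": (1, 2, 1),
    "Cube Stacking": (1, 0, 3),
    "Toy Handover": (0, 0, 1),
    "Cloth Folding": (1, 1, 0),
    "Writing": (1, 0, 2),
    "Erasing": (0, 0, 0),
}
N_TRIALS = 17  # inferred from the percentages; not stated in this section


def success_rate(failures, n=N_TRIALS):
    """Percentage of trials without any failure, assuming one cause per failed trial."""
    return 100 * (n - sum(failures)) / n


for task, counts in FAILURES.items():
    print(f"{task:<25} {success_rate(counts):6.2f} %")
```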

| Metric | IMMERTWIN [19] | Proposed Framework |
|---|---|---|
| GPU Hardware | NVIDIA RTX 4090 | NVIDIA RTX 3070 |
| Sensors | 2× Stereolabs ZED 2i (fixed) | RoboSense Helios LiDAR + Stereolabs ZED 2i (mobile) |
| Point Cloud Size | 1.6 M points | 1.0 M points |
| Update Frequency | 10 Hz | 20 Hz |
| Deployment | Static setup | Mobile robot |
| FPS | 40 | 90 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Torrejón, A.; Eslava, S.; Calderón, J.; Núñez, P.; Bustos, P. Teleoperation of Dual-Arm Manipulators via VR Interfaces: A Framework Integrating Simulation and Real-World Control. Electronics 2026, 15, 572. https://doi.org/10.3390/electronics15030572
