1. Introduction

Insulators are critical components in transmission lines, primarily serving the functions of insulation and mechanical suspension. With its advantages of flexibility and maneuverability, wide coverage, high inspection efficiency, and convenient data acquisition, UAV remote sensing technology has become the mainstream technical means for monitoring the operational status of transmission line equipment [1,2,3,4,5]. Due to prolonged exposure to natural environmental conditions, insulators are vulnerable to various external factors such as temperature and humidity fluctuations, lightning strikes, strong electric fields, and contamination erosion, which can result in breakage, self-explosion, or surface degradation, posing serious threats to the safety and stability of transmission lines [6,7]. Because most high-voltage transmission lines are located in remote and inaccessible regions such as mountainous terrain and river valleys, traditional manual inspection is costly and inefficient and requires substantial human and financial resources. Therefore, developing efficient algorithms and deploying them on embedded AI modules for real-time, edge-side UAV-based insulator inspection has become a key solution to enhancing inspection efficiency [8].

Traditional object detection algorithms can be broadly classified into two categories: two-stage methods (e.g., R-CNN [9] and Faster R-CNN [10]), which first generate region proposals and then perform classification and localization, and one-stage approaches (e.g., YOLO [11] and SSD [12]), which directly predict bounding boxes and class probabilities. Among these, YOLO has emerged as a mainstream object detection algorithm due to its real-time inference capabilities, end-to-end trainability, low memory footprint, reduced background false-positive rates, and strong generalization ability, thereby attracting extensive research attention. For instance, PPYOLOE [13] and YOLOv6 [14] improved detection performance through structural re-parameterization and task-aligned learning (TAL) strategies, while YOLOv7 [15] further optimized cross-stage partial networks using extended efficient layer aggregation networks (E-ELAN) and bag-of-freebies techniques. YOLOv9 [16] addressed information bottlenecks through generalized efficient layer aggregation networks (GELAN) and programmable gradient information (PGI), whereas DAMO-YOLO [17] achieved superior backbone–neck feature fusion with the aid of a re-parameterized generalized FPN (RepGFPN). Gold-YOLO [18] incorporated local–global feature interaction via a gather-and-distribute (GD) mechanism, combining convolution and self-attention modules. AE-YOLOv5 [19] embedded a channel-spatial attention module (CSAttention) and a multi-scale attention module (MAttention) into YOLOv5, which significantly enhanced the model’s feature representation capability for insulator defects under complex backgrounds. ID-YOLO [20] combined a cross-stage residual attention network (CSP-ResNeSt) with a bidirectional weighted feature pyramid network (Bi-SimAM-FPN), leveraging grouped convolution and inter-class attention mechanisms to address the scale-sensitivity issues associated with multi-type insulator defects. LiteYOLO-ID [21] constructed a lightweight ECA-GhostNet-C2f module (EGC) and its derivative network architecture, achieving real-time and high-precision detection on embedded devices through parameter sharing strategies and attention-guided feature compression. In addition, some methods [22,23,24,25,26,27,28] integrate spatial and spectral information to achieve good detection performance. Meanwhile, traditional CNN-based detectors still depend heavily on threshold-based candidate screening and non-maximum suppression (NMS), which can reduce model robustness and impair detection speed to a certain extent. To overcome these limitations, the Facebook AI team introduced the detection transformer (DETR) [29], a model that builds a global feature representation with the Transformer architecture, replaces anchor-based proposal generation with object queries, and employs a bipartite graph matching algorithm combined with a novel loss function, thereby eliminating the need for NMS and simplifying the detection pipeline. However, despite its superior detection accuracy, DETR suffers from time-consuming training and complex query optimization procedures, which limit its practical deployment.

To address these limitations, the Baidu team proposed a real-time end-to-end detection transformer model (RT-DETR) [30]; together with its variants [31,32,33,34], it significantly enhances detection performance by eliminating post-processing steps such as confidence screening and NMS. Deformable DETR [32] attends to a small set of key sampling points to deal with the slow convergence and limited feature spatial resolution caused by the limitations of Transformer attention modules in processing image feature maps. MS-DETR [33] places one-to-many supervision on the object queries of the primary decoder that is used for inference. Moreover, YOLOv10 [35] aims to further advance the performance-efficiency boundary of YOLOs from both the post-processing and the model architecture perspectives. YOLOv11 [36] incorporates advanced feature extraction techniques, allowing for more nuanced detail capture. Mamba YOLO [37] introduces a state space model (SSM) with linear complexity to address the quadratic complexity of self-attention. Compared with mainstream models like the YOLO series [35,36,37,38,39], which capture multiscale information, RT-DETR offers advantages in both detection accuracy and inference speed. Nonetheless, its large computational load and parameter count hinder its deployment on mobile and resource-constrained edge devices. Additionally, in aerial transmission line scenarios with complex backgrounds, RT-DETR still exhibits shortcomings in small target detection, leading to missed detections and false alarms, which in turn affect the stability and accuracy of insulator defect detection.

To address these challenges, this paper proposes a lightweight insulator multi-defect detection algorithm. The proposed method effectively reduces the computational complexity and parameter count of the RT-DETR model while enhancing small-target detection accuracy, thereby meeting the demands of industrial practical deployment. Specifically, the improvements are made in the following three aspects:

(1) We design a lightweight multi-starblock feature extractor (LMS-FE), which enhances feature extraction capability while significantly reducing the model’s computational complexity. This design achieves model lightweighting and makes the model suitable for edge deployment on UAV platforms with limited computing resources.

(2) We propose a multi-scale feature pyramid named Small Object Enhancement Pyramid (SOEP). SOEP incorporates the SPD convolution module and the CSPO module. The CSPO module extends the receptive field and enhances global feature extraction capability by parallelizing full-kernel morphological convolutions. Moreover, SOEP integrates the shallow 160 × 160 feature map; this modification enhances the model’s sensitivity to small defective targets in UAV remote sensing imagery, addressing the issue of low spatial resolution of small defects in aerial photography.

(3) We propose a lightweight multi-branch gradient feedback paths feature fusion module (LMB-FF). Specifically, a small-scale receptive field sensing module is designed and embedded into LMB-FF, which effectively suppresses complex background noise in UAV imagery and improves the detection accuracy of small targets under aerial shooting conditions.

The remainder of this article is organized as follows. Section 2 covers the related work. Section 3 details the implementation of the proposed method. Section 4 presents the experimental results and analysis. Section 5 provides the conclusion and future work of this article.

2. Related Work

The RT-DETR model leverages the Transformer architecture and offers multiple variants, including R18, R34, R50, R101, L, and X configurations. In response to the challenges posed by complex backgrounds and stringent model size constraints inherent in UAV-based small object detection tasks, this paper selects RT-DETR-R18 as the baseline model due to its reduced computational overhead and superior detection accuracy. The architecture of RT-DETR-R18 is composed of three principal components: a Backbone, a Neck Hybrid Encoder, and a Transformer Decoder equipped with an auxiliary detection head.

In the backbone component, the model employs a Convolutional Neural Network (CNN) based on ResNet18 [40] for hierarchical feature extraction. The feature outputs from the last three stages, denoted as S3, S4, and S5, are utilized as inputs to the encoder. The neck hybrid encoder integrates two key modules: the Attention-based Intra-scale Feature Interaction (AIFI) module and the CNN-based Cross-scale Feature Fusion Module (CCFM). The AIFI module is designed to enhance and preserve fine-grained information by encoding features across multiple scales, thereby improving the model’s ability to capture small object characteristics. Meanwhile, the CCFM employs a bidirectional fusion mechanism, effectively aggregating multi-scale features from S3, S4, and S5 through a combination of upsampling and downsampling operations. This process incorporates both bottom-up and top-down pathways to strengthen feature representation and maintain semantic integrity. The fused features are subsequently aggregated via element-wise addition and reshaped into a sequence of image features through flattening.

In the decoder component, initial object queries are selected from the encoder output sequence using the IoU-aware Query Selection module. These queries are then refined through iterative optimization to produce final bounding box predictions and their corresponding confidence scores, enabling efficient target detection. The overall architectural design achieves a balance between computational efficiency and detection precision, meeting the practical requirements of small object detection in resource-constrained edge computing environments.
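The query selection step can be pictured with a minimal sketch in plain Python. It assumes that selection keeps the K highest-scoring encoder tokens; the names `select_queries`, `tokens`, and `scores` are hypothetical, and the IoU-aware aspect (which influences how the scores are learned during training) is not modelled here.

```python
def select_queries(encoder_tokens, scores, k):
    """Return indices and tokens of the k highest-scoring encoder outputs.

    Illustrative only: a real decoder would take feature vectors and
    learned confidence scores rather than the toy values used below.
    """
    # Sort token indices by descending score and keep the first k.
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    top = order[:k]
    return top, [encoder_tokens[i] for i in top]


# Toy encoder output sequence with one (hypothetical) score per token.
tokens = ["t0", "t1", "t2", "t3", "t4"]
scores = [0.10, 0.85, 0.40, 0.92, 0.05]

indices, queries = select_queries(tokens, scores, k=2)
print(indices, queries)
```

The selected tokens would then serve as the initial object queries that the decoder refines iteratively.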

3. Methods

The proposed insulator defect detection method, IDD-DETR, comprises three modules: a feature extraction module, a feature enhancement module, and a feature fusion module. First, IDD-DETR adopts the lightweight multi-starblock feature extractor (LMS-FE). It improves the computational efficiency of the model and enables lightweight deployment on UAV edge platforms, addressing the excessive resource consumption of traditional models on edge devices. Second, to address the insufficient low-level feature representation and the low detection rate of small defect targets, a small object enhancement pyramid (SOEP) is designed. By fusing the shallow features of the P2 layer, it strengthens the low-level feature representation capability and significantly improves the detection sensitivity for small defect targets. Finally, a lightweight multi-branch feature fusion module (LMB-FF) is proposed, which uses multi-branch gradient feedback paths to fuse spatial and semantic information efficiently. This design suppresses the interference of complex background noise and further enhances the robustness of the model, ensuring stable detection performance. The overall architecture is depicted in Figure 1.

3.1. Lightweight Multi-Starblock Feature Extractor

To address the high computational complexity caused by traditional backbone networks, IDD-DETR proposes a lightweight multi-starblock feature extractor (LMS-FE) based on StarNet [41] to meet the requirements of mobile deployment, thereby further optimizing the overall performance of the model.

The feature extraction architecture comprises four hierarchical stages, as shown in Figure 2. Each stage performs downsampling via a convolution layer and extracts features using the starblock. Specifically, each starblock operates as follows. First, the input feature map is processed with a 7 × 7 depth-wise convolution (DW-Conv); this operation efficiently captures local spatial features while significantly reducing the model’s parameters and computational complexity. Subsequently, a batch normalization layer is introduced to standardize the input distribution of each layer, thereby stabilizing the training process, accelerating model convergence, and enhancing robustness against input noise. Finally, the normalized feature map is divided into two parallel branches: one branch adopts a 1 × 1 pointwise convolution (PWConv) for cross-channel feature fusion and nonlinear transformation, while the other leverages the ReLU6 activation function to introduce nonlinear feature responses.

The starblock module leverages element-wise multiplication operations, which can be expressed by the following formula:

$$\left(W_1^{\mathrm{T}} X + B_1\right) \ast \left(W_2^{\mathrm{T}} X + B_2\right)$$

where $W_1$ and $W_2$ are distinct weight matrices used to perform linear transformations on the input feature $X$ (the output feature of the DW-Conv module), $B_1$ and $B_2$ correspond to the bias terms of the two linear transformations, and $\ast$ represents the element-wise multiplication operation, i.e., the core operator of the star operation.

This operation significantly strengthens the model’s capability to capture complex and fine-grained features.
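The star operation can be illustrated with a minimal sketch in plain Python. The function names `linear` and `star_op`, the toy weights, and the restriction to a single spatial position (one channel vector) are illustrative assumptions, not the authors' implementation; an actual starblock applies the same idea with convolutions over full feature maps.

```python
def linear(weights, bias, x):
    """Compute W^T x + b for one channel vector x.

    weights[i][j] maps input channel i to output channel j.
    """
    return [
        sum(weights[i][j] * x[i] for i in range(len(x))) + bias[j]
        for j in range(len(bias))
    ]


def star_op(x, w1, b1, w2, b2):
    """Element-wise product of two linear transformations of x."""
    branch_a = linear(w1, b1, x)
    branch_b = linear(w2, b2, x)
    return [a * b for a, b in zip(branch_a, branch_b)]


# Toy example: 2 input channels, 2 output channels (all values hypothetical).
x = [1.0, 2.0]
w1 = [[1.0, 0.0], [0.0, 1.0]]  # identity mapping
b1 = [0.0, 1.0]
w2 = [[2.0, 0.0], [0.0, 0.5]]
b2 = [1.0, 0.0]

y = star_op(x, w1, b1, w2, b2)
print(y)  # [3.0, 3.0]
```

Because each output channel is a product of two linear forms, it contains pairwise products of input channels, which is one way to see how the multiplication introduces higher-order feature interactions without extra layers.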

3.2. Small Object Enhancement Pyramid

To address the structural redundancy and high computational complexity issues of traditional schemes in small target detection tasks, we propose the small object enhancement pyramid (SOEP) method to efficiently enhance the shallow feature representation required for small target detection, as illustrated in Figure 3.

To improve the perception capability of defective small targets, we introduce the 160 × 160 shallow features from the P2 layer into the SOEP structure and integrate them into the neck network. Specifically, the P2 layer leverages SPD convolution (SPDConv) [42] to generate scale-aware features that are rich in small-object information. The detailed design of SPDConv is shown in Figure 3, which can be formulated as follows:

$$f_{x,y} = X[x:S:\mathrm{scale},\ y:S:\mathrm{scale}]$$

where $f_{x,y}$ is a region sampled from the input $X$ (of spatial size $S \times S$) with a stride of scale.
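The space-to-depth rearrangement at the core of SPDConv can be sketched in plain Python as follows. The function name `space_to_depth`, the channel ordering of the concatenation, and the single-channel toy input are assumptions made for illustration; the non-strided convolution that normally follows the rearrangement is omitted.

```python
def space_to_depth(feature, scale=2):
    """Rearrange an H x W x C map into (H/scale) x (W/scale) x (C*scale^2).

    feature is a nested list indexed as feature[row][col][channel].
    No value is discarded: spatial resolution is traded for channels.
    """
    height, width = len(feature), len(feature[0])
    if height % scale or width % scale:
        raise ValueError("height and width must be divisible by scale")
    out = []
    for i in range(0, height, scale):
        out_row = []
        for j in range(0, width, scale):
            pixel = []
            # Collect the scale x scale neighbours, one per sampling offset.
            for dx in range(scale):
                for dy in range(scale):
                    pixel.extend(feature[i + dx][j + dy])
            out_row.append(pixel)
        out.append(out_row)
    return out


# A 160 x 160 single-channel map whose values encode their position.
p2 = [[[r * 160 + c] for c in range(160)] for r in range(160)]
down = space_to_depth(p2, scale=2)
shape = (len(down), len(down[0]), len(down[0][0]))
print(shape)  # (80, 80, 4)
```

Unlike a strided convolution or pooling, this rearrangement keeps every input value, which is why it is attractive for small targets that occupy only a few pixels.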

Subsequently, the P5 layer is upsampled and then concatenated and fused with the P4 layer. The fused feature is upsampled again and concatenated with the output feature of the P3 layer and the SPDConv output of the P2 layer, yielding the fused feature.

To alleviate the problem of information redundancy in feature fusion, we feed this fused feature into the CSP Omni-Kernel (CSPO) module, as illustrated in Figure 4. It consists of a global branch, a large-kernel branch, and a local branch. Specifically, the global branch extracts global contextual information using a dual-domain channel attention module (DCAM) and a frequency-based spatial attention module (FSAM). The large-kernel branch captures large-scale structural features via depth-wise convolutions with an extremely large kernel size. The local branch supplements fine-grained local details through pointwise depth-wise convolutions.

In the large-kernel and local branches, DW-Conv denotes a depth-wise convolutional layer.

Finally, the outputs of these three branches are fused via a 1 × 1 convolutional layer to generate the final output.

Specifically, DCAM enhances the representation of global features by reinforcing the coarse-grained dual-domain characteristics of the channel dimension. It leverages the Fast Fourier Transform (FFT) and its inverse (IFFT) combined with Frequency Channel Attention (FCA) to refine global feature representations based on the spectral convolutional theorem. Subsequently, these enhanced features are further processed by the spatial channel attention (SCA) module to capture comprehensive global information in the frequency domain. Meanwhile, the FSAM refines the spectral feature representations from the spatial dimension, thereby strengthening the characterization of fine-grained details.

By integrating global, large, and local branches, the module achieves efficient feature extraction across multiple scales, from global structures to local details, while effectively suppressing interference from complex backgrounds. As a result, the proposed SOEP structure substantially improves the detection performance of small targets by efficiently fusing multi-scale features without incurring additional computational overhead.

3.3. Lightweight Multi-Branch Feature Fusion Module

To improve small-object detection accuracy under complex backgrounds, we propose LMB-FF, a lightweight, efficient, and parameterized aggregation module designed to enhance feature fusion through a multi-branch gradient feedback mechanism. This design aggregates local and contextual features of small targets, improving detection performance.

The LMB-FF module, as illustrated in Figure 5, implements a workflow of feature splitting, multi-branch processing, and concatenation-based fusion to enhance feature representation while balancing efficiency [43]. Specifically, the input feature map first undergoes dimensionality reduction via a 1 × 1 convolutional kernel and is then split into multiple sub-feature maps through a split operation. These sub-feature maps are processed in parallel across three distinct branches: a 3 × 3 convolutional kernel branch integrated with the NCSP structure, a standard 3 × 3 convolutional kernel branch, and a combined branch consisting of Conv3XC-NCSP and a 3 × 1 convolutional kernel. Notably, the Conv3XC-NCSP itself adopts a multi-branch parallel design, which includes a 3 × 3 convolutional kernel branch, a Conv3XC and 3 × 3 convolutional kernel branch, and another 3 × 3 convolutional kernel branch. The outputs of these internal branches are fused via element-wise addition. After processing, the outputs of all branches are concatenated, and the channel dimension is adjusted using a 1 × 1 convolutional kernel to generate the final output of the LMB-FF module.

To further optimize deployment efficiency without sacrificing performance, the Conv3XC component within the module leverages a reparameterization strategy [34] that decouples training and inference structures. During the training phase, it employs a multi-branch parallel architecture composed of a 1 × 1 convolutional layer, a 3 × 3 convolutional layer, and a 1 × 1 convolutional layer, with branch outputs fused via element-wise addition to ensure sufficient feature diversity. In the inference phase, this multi-branch structure is equivalently re-parameterized into a single 3 × 3 convolutional kernel through Fuse BN, which simplifies the model architecture and reduces computational overhead while retaining the learned feature representation capabilities.
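The equivalence between the parallel training-time branches and a single 3 × 3 kernel can be checked with a small sketch in plain Python. It follows the parallel-branch description above under simplifying assumptions: a single channel, zero padding, stride 1, no batch normalization, and unweighted summation. All function names and kernel values are hypothetical.

```python
def pad_1x1_to_3x3(k):
    """Embed a 1 x 1 kernel (a scalar here) at the centre of a 3 x 3 kernel."""
    return [[0.0, 0.0, 0.0], [0.0, k, 0.0], [0.0, 0.0, 0.0]]


def add_kernels(*kernels):
    """Sum several 3 x 3 kernels element-wise."""
    return [[sum(k[r][c] for k in kernels) for c in range(3)] for r in range(3)]


def conv1x1(image, k, bias=0.0):
    """Single-channel 1 x 1 convolution."""
    return [[k * v + bias for v in row] for row in image]


def conv3x3(image, kernel, bias=0.0):
    """Single-channel 3 x 3 cross-correlation, zero padding, stride 1."""
    h, w = len(image), len(image[0])
    out = [[bias] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    rr, cc = r + dr, c + dc
                    if 0 <= rr < h and 0 <= cc < w:
                        out[r][c] += kernel[dr + 1][dc + 1] * image[rr][cc]
    return out


image = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 9.0]]
k3 = [[0.0, 1.0, 0.0], [1.0, -4.0, 1.0], [0.0, 1.0, 0.0]]
k1_a, k1_b = 0.5, 2.0
bias_a, bias_b, bias_c = 0.1, 0.2, 0.3

# Training-time view: three parallel branches, outputs added element-wise.
multi_branch = [
    [a + b + c for a, b, c in zip(row_a, row_b, row_c)]
    for row_a, row_b, row_c in zip(
        conv1x1(image, k1_a, bias_a),
        conv3x3(image, k3, bias_b),
        conv1x1(image, k1_b, bias_c),
    )
]

# Inference-time view: one merged 3 x 3 kernel and one merged bias.
merged_kernel = add_kernels(pad_1x1_to_3x3(k1_a), k3, pad_1x1_to_3x3(k1_b))
merged_bias = bias_a + bias_b + bias_c
single_branch = conv3x3(image, merged_kernel, merged_bias)

max_diff = max(
    abs(m - s)
    for row_m, row_s in zip(multi_branch, single_branch)
    for m, s in zip(row_m, row_s)
)
print(max_diff < 1e-9)  # True
```

The merge works because convolution is linear in its kernel, so summing branch outputs is the same as convolving once with the summed (size-aligned) kernels.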

The convolutional layer is represented by Equation (9), while the BN layer is described in Equation (10). The fusion process is given in Equation (11), which combines the operations of convolution and BN to reduce computational overhead while maintaining the model’s performance.

$$\mathrm{Conv}(x) = w x + b \tag{9}$$

$$\mathrm{BN}(x) = \gamma \, \frac{x - \mathrm{avg}}{\sqrt{\sigma^2 + \epsilon}} + \beta \tag{10}$$

$$\mathrm{BN}(\mathrm{Conv}(x)) = \frac{\gamma w}{\sqrt{\sigma^2 + \epsilon}} \, x + \frac{\gamma \, (b - \mathrm{avg})}{\sqrt{\sigma^2 + \epsilon}} + \beta \tag{11}$$

where $x$ is the input value, $w$ is the convolution module weight, $b$ is the bias, $\mathrm{avg}$ is the input mean, $\sigma^2$ is the input variance, $\gamma$ is the BN weight, $\beta$ is the BN bias, and $\epsilon$ is a small constant added for numerical stability. Equation (11) gives the weights and biases of the newly fused convolutional layer: during the inference phase, the convolutional weights are fused with the batch normalization (BN) parameters, thereby enabling the reparameterization of model weights and biases. The weights are thus determined by parameter learning during the training phase and by reparameterization during the inference phase. The weights of the convolution module enhance the stability of small target feature representation, accelerate the convergence of the proposed IDD-DETR model, and improve small target detection accuracy, while the fusion reduces computational complexity and increases inference speed. During the model conversion process, the weights of each branch are uniformly transformed to a 3 × 3 convolutional kernel size; to achieve consistency in kernel size, appropriate padding operations are applied to the 1 × 1 convolutional layers. Ultimately, the weights and biases of each branch are weighted and summed to form the final weights and biases of the single-branch convolution, thereby completing the optimization step of weight integration.
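The fusion of a convolution with its BN layer can be verified numerically with a short sketch in plain Python. It treats a single channel with scalar weight and bias, uses the standard BN formula with a small stabilizing constant `eps`, and all function names and numeric values are illustrative assumptions rather than parameters from the proposed model.

```python
import math


def conv(x, w, b):
    """Scalar stand-in for a convolution: w * x + b."""
    return w * x + b


def batch_norm(y, mean, var, gamma, beta, eps=1e-5):
    """Inference-mode batch normalization with fixed statistics."""
    return gamma * (y - mean) / math.sqrt(var + eps) + beta


def fuse_conv_bn(w, b, mean, var, gamma, beta, eps=1e-5):
    """Fold BN statistics and affine parameters into conv weight and bias."""
    scale = gamma / math.sqrt(var + eps)
    return w * scale, (b - mean) * scale + beta


# Hypothetical parameters for one channel.
w, b = 0.8, 0.1
mean, var, gamma, beta = 0.5, 4.0, 1.5, -0.2

w_fused, b_fused = fuse_conv_bn(w, b, mean, var, gamma, beta)

x = 3.0
two_step = batch_norm(conv(x, w, b), mean, var, gamma, beta)  # conv, then BN
one_step = conv(x, w_fused, b_fused)                          # fused conv only
print(abs(two_step - one_step) < 1e-9)  # True
```

After fusion the BN layer no longer needs to be evaluated at inference time, which removes one normalization pass per convolution without changing the output.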

By optimizing both the feature transfer path and the aggregation mechanism, the LMB-FF module significantly enhances the fusion and representation of multi-scale features, while simultaneously reducing the computational resource overhead. This efficient feature aggregation mechanism plays a crucial role in preserving detailed information, particularly when handling complex tasks. As a result, it considerably improves the model’s performance in small target detection, enabling more accurate and effective detection under challenging conditions.

5. Discussion

Firstly, in terms of model performance, it can be observed in Table 3 that, compared with other popular object detection algorithms based on YOLO and DETR models, the proposed IDD-DETR algorithm achieves the best results in terms of mAP for small targets such as self-exploded, fractured, and flashover-contaminated insulators. While ensuring strong detection performance for small objects, IDD-DETR also has a relatively low number of parameters and relatively low FLOPs, indicating that the IDD-DETR algorithm has low complexity and high computational efficiency, making it well-suited for deployment on edge devices.

Table 7 also shows that the IDD-DETR algorithm exhibits excellent small-object detection performance on both public datasets, CPLID and IDID, further demonstrating its advantages. The main reason lies in the ability of IDD-DETR to capture local–global information, thereby extracting effective semantic features while suppressing background noise interference, which results in its superior small-target detection performance.

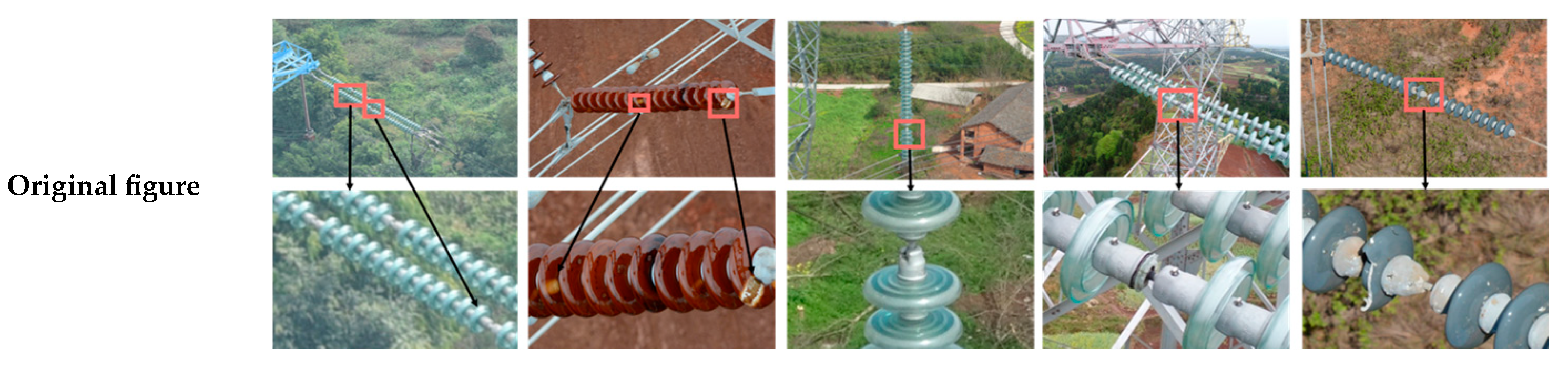

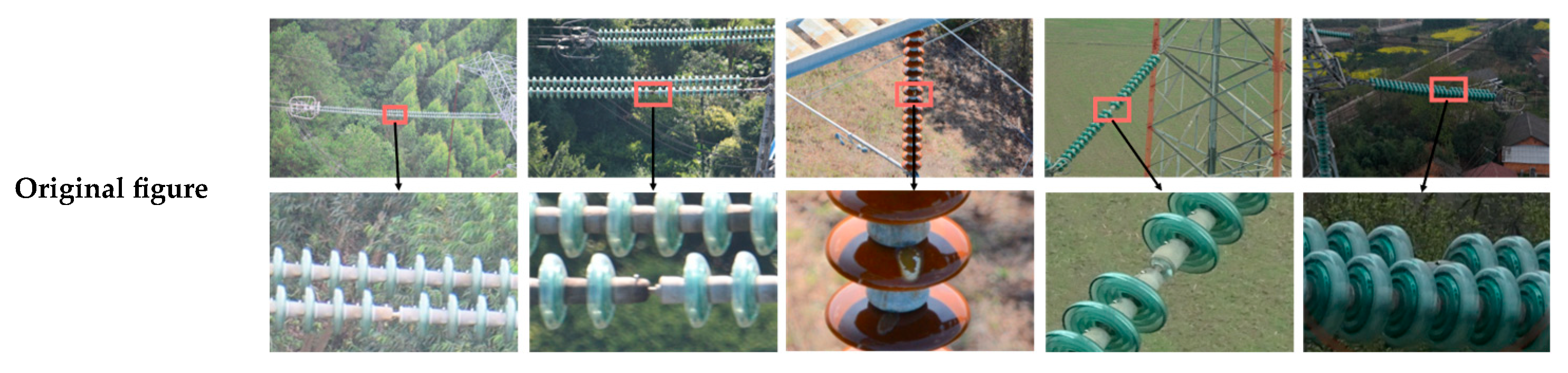

Secondly, in terms of visualization results, as shown in Figure 7, Figure 8 and Figure 9, the proposed IDD-DETR algorithm is capable of accurately detecting small insulator targets in various scenarios such as grassland, sky, snow, dry land, and construction scenes. Whether under sunny or cloudy weather conditions, it consistently maintains precise detection of insulators. Moreover, even in scenes with significant background noise (e.g., construction scenes), it achieves strong detection performance. These detection outcomes are attributed to the synergistic interaction of every module within the IDD-DETR algorithm. The ablation studies in Table 6 and the module selection experiments for lightweight design in Table 4 demonstrate that modules such as starblock, LMS-FE, SOEP, and LMB-FF work collaboratively to enable accurate detection of small targets across diverse scenes and varying weather conditions. Furthermore, the convergence curve of the algorithm in Figure 10 shows that the loss value continuously decreases throughout training. This indicates that the internal modules of IDD-DETR interact effectively to capture meaningful semantic information, thereby contributing to its superior performance in small object detection.

6. Conclusions

Specifically designed for UAV remote sensing imagery of transmission lines, the proposed IDD-DETR addresses the key problems of UAV edge deployment, such as limited computing resources, small defect targets, and complex background interference. It provides a practical and efficient solution for real-time insulator defect detection in UAV power inspection. Firstly, the LMS-FE module based on StarNet is incorporated, which significantly reduces both the model parameters and computational overhead while simultaneously enhancing detection accuracy, thereby achieving a lightweight model. Secondly, for small target detection, a multi-scale feature pyramid, named SOEP, is introduced. Instead of adding a P2 detection head, as is traditionally done, this approach fuses shallow feature layers into the P3 detection head. This modification not only reduces computational costs but also improves feature extraction from the underlying layers. Thirdly, this study introduces a lightweight, highly parameterized, and efficient feature fusion module, LMB-FF, which incorporates multi-branch gradient feedback paths. This module refines and merges low-level spatial information with high-level semantic features of defective targets. It enhances the expression capability for small-scale features and suppresses complex background noise, using a small-scale, heavily parameterized receptive field design. The incorporation of this design accelerates model convergence, further reduces parameter count, and minimizes computational demand. The experimental results demonstrate that the proposed algorithm achieves 96.1% mAP on the proprietary insulator defect dataset, reduces model parameters and computational volume by 44.9% and 47.1%, respectively, and attains a detection speed of 61.2 frames per second, meeting the real-time monitoring requirements for insulator defects on UAV platforms.
Future work will focus on integrating multi-sensor data to improve defect detection performance under extreme weather conditions and exploring UAV swarm collaborative detection based on the proposed lightweight model to enhance inspection coverage and efficiency.