Synergistic Use of Radar Sentinel-1 and Optical Sentinel-2 Imagery for Crop Mapping: A Case Study for Belgium

Abstract

1. Introduction

- What is the pixel-level classification accuracy as a function of the input source (optical, radar, or a combination of both)?

- How does classification accuracy evolve during the growing season, and how can this be framed in terms of crop phenology?

- What is the individual importance of each input predictor for the classification task?

- What is the classification confidence, and how can this information be used to assess the accuracy?

2. Materials and Methods

2.1. Data

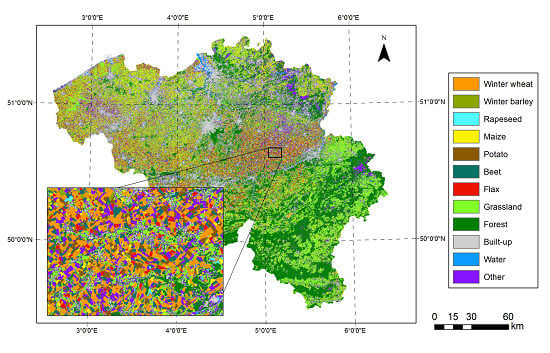

2.1.1. Field Data

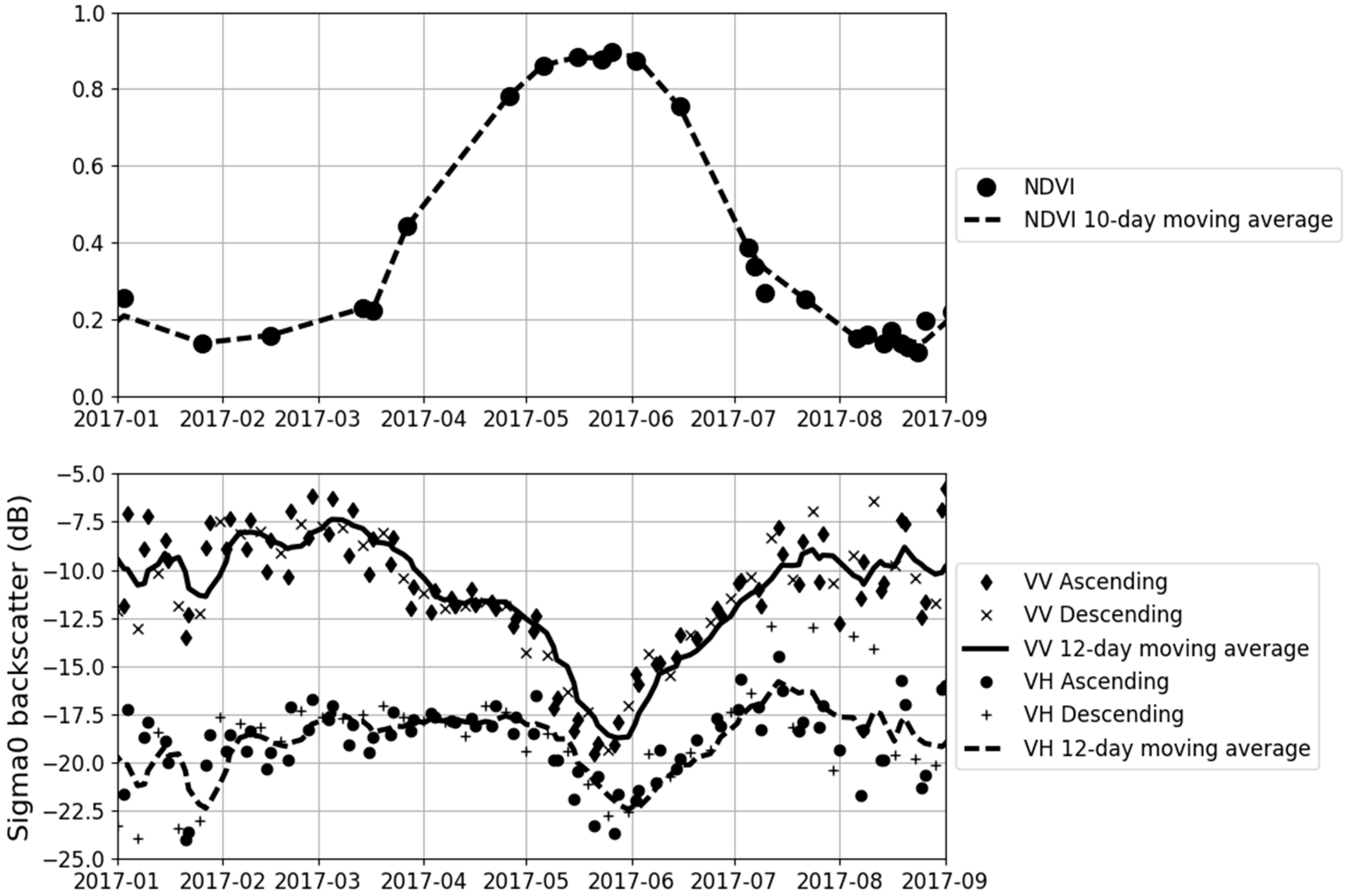

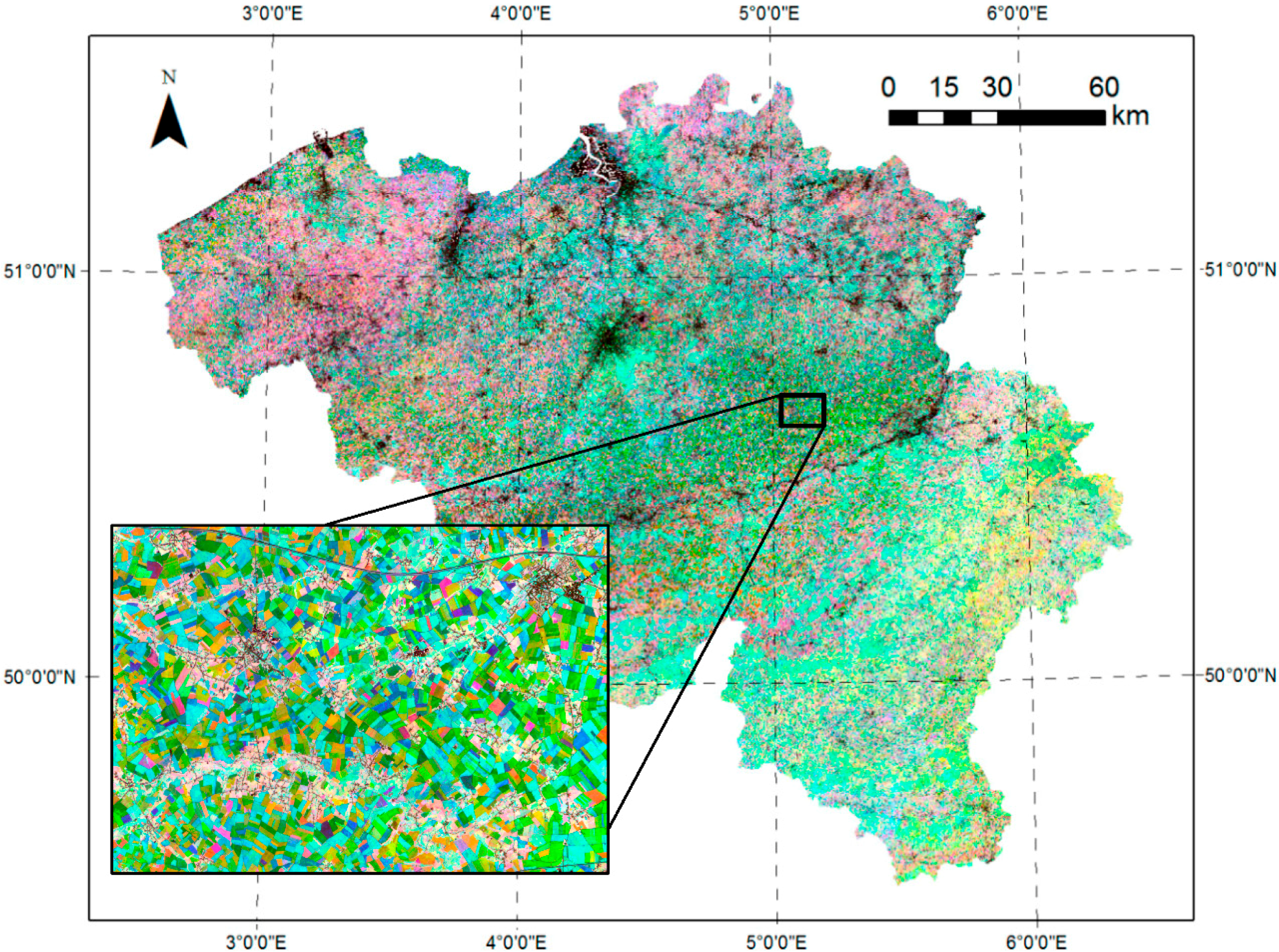

2.1.2. Sentinel-1 Data

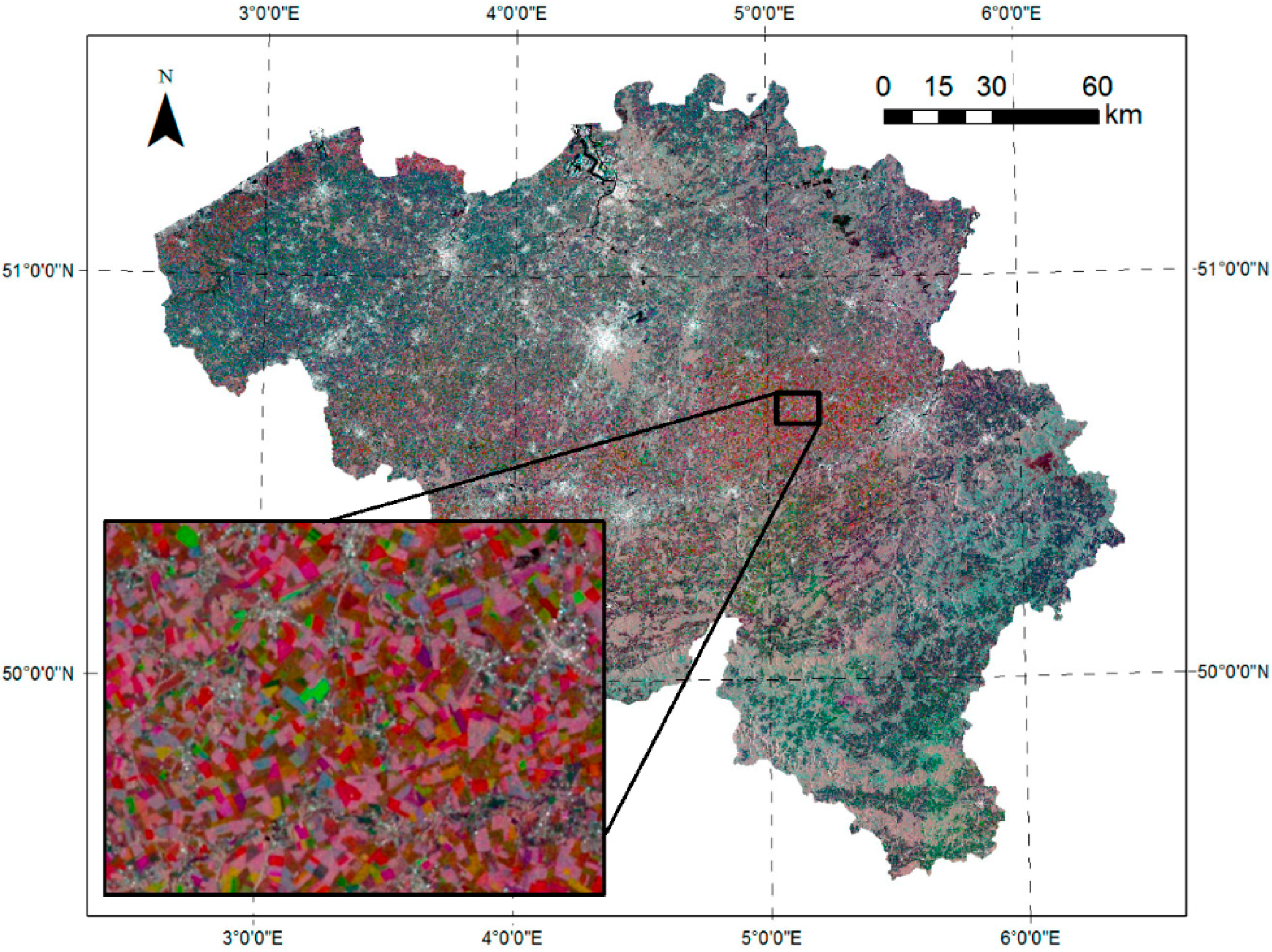

2.1.3. Sentinel-2 Data

2.2. Methodology

2.2.1. Hierarchical Random Forest Classification

2.2.2. Calibration and Validation Data

2.2.3. Classification Schemes

2.2.4. Classification Confidence

3. Results

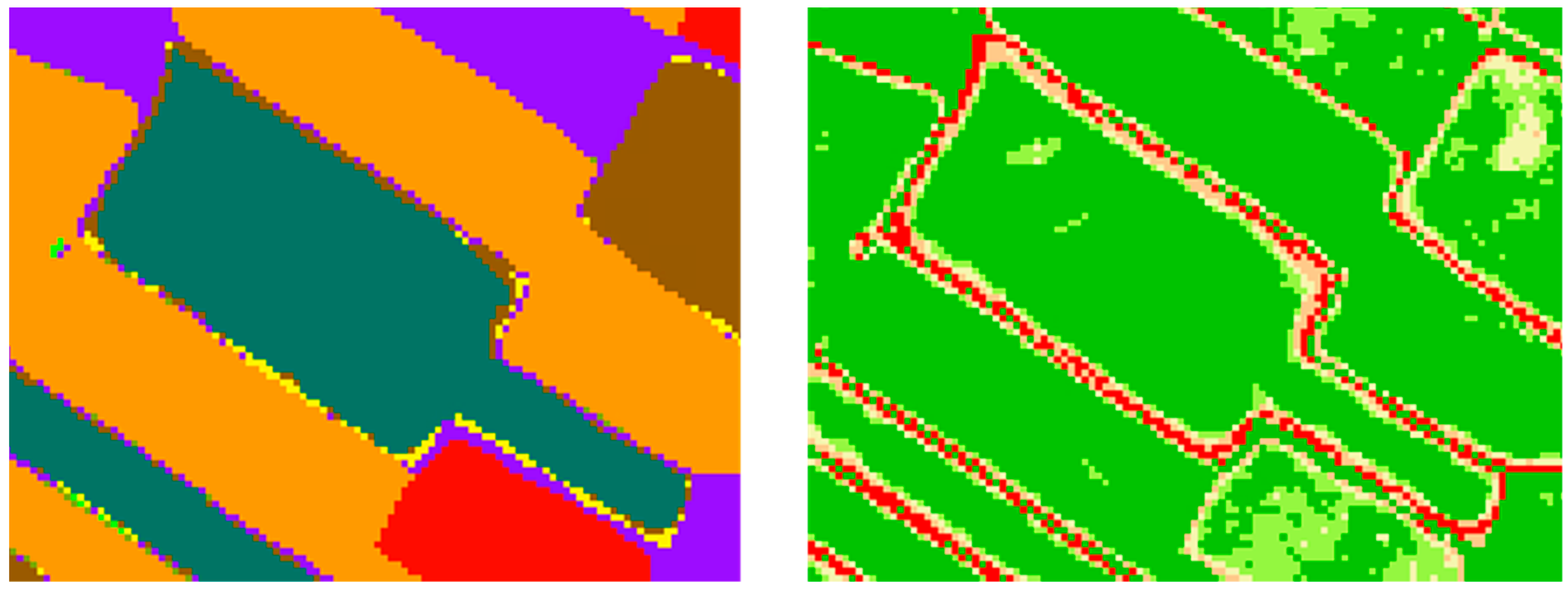

3.1. Classification Accuracy

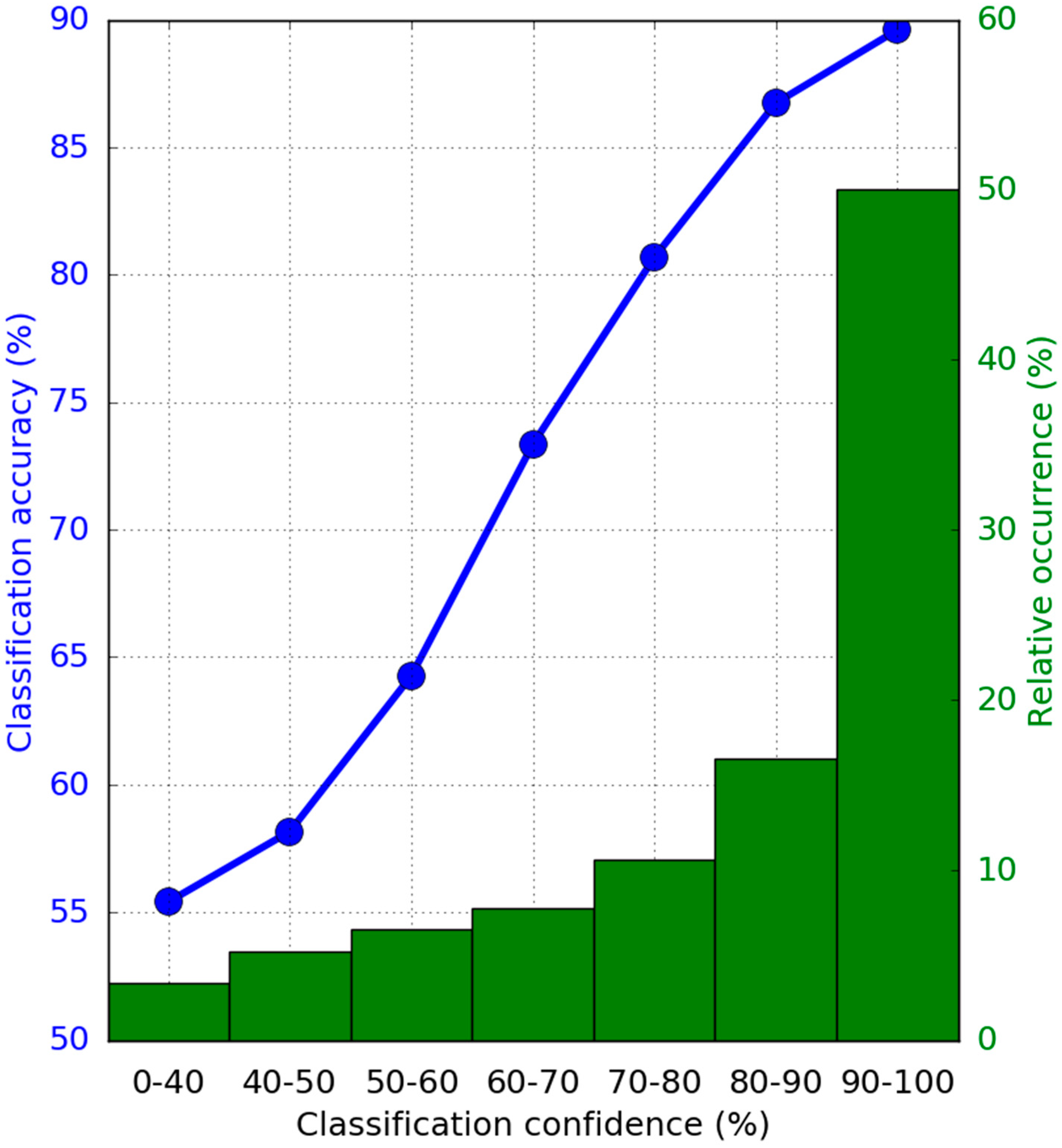

3.2. Classification Confidence

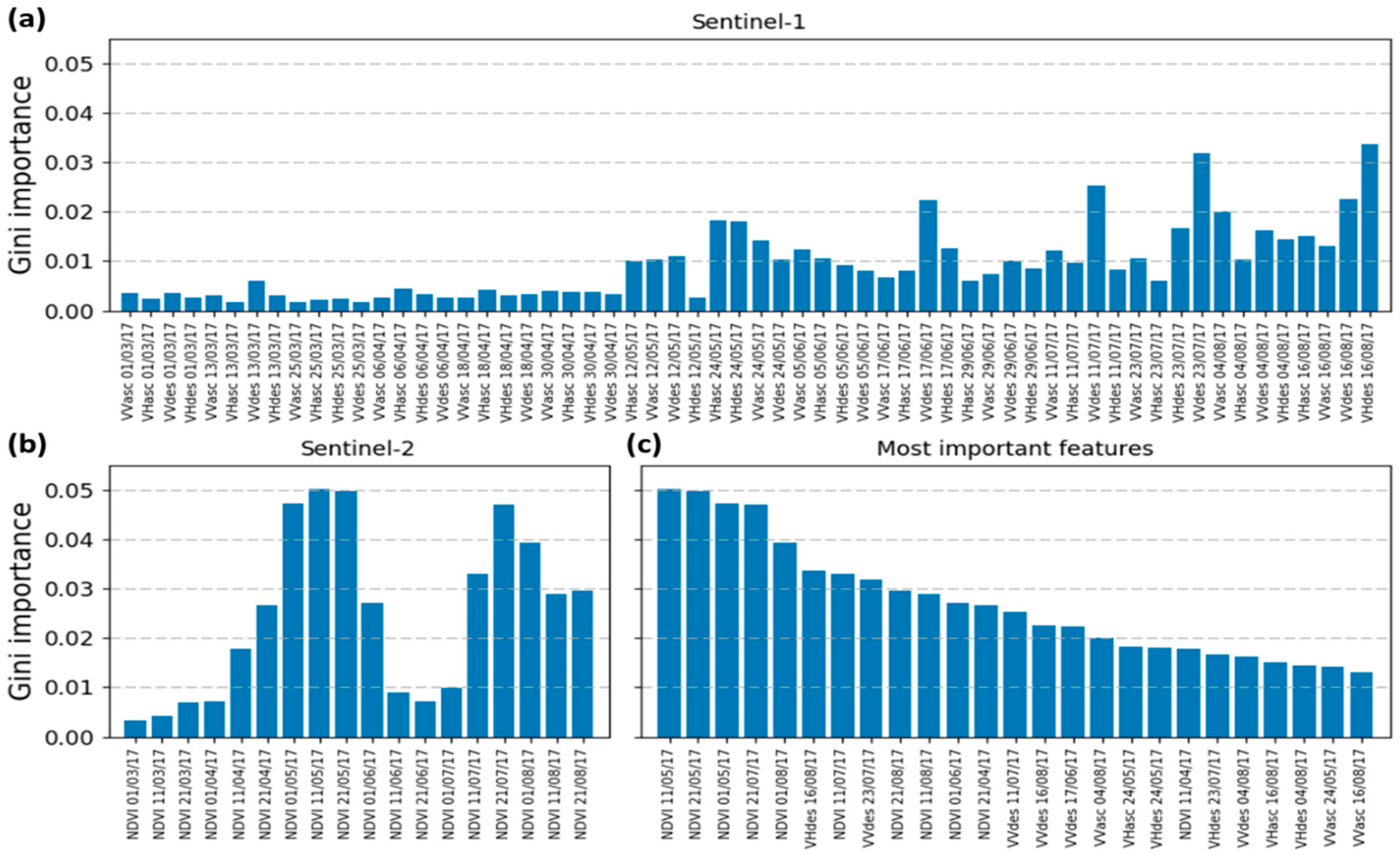

3.3. Feature Importance

4. Discussion

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Class | Calibration Parcels | Validation Parcels | Calibration Pixels | Validation Pixels |
|---|---:|---:|---:|---:|
| Wheat | 850 | 7,653 | 171,865 | 5,700,538 |
| Barley | 177 | 1,591 | 31,194 | 1,333,242 |
| Rapeseed | 9 | 78 | 1,519 | 50,163 |
| Maize | 1,896 | 17,065 | 293,878 | 15,614,299 |
| Potatoes | 614 | 5,523 | 121,882 | 3,867,266 |
| Beets | 354 | 3,182 | 73,060 | 1,913,307 |
| Flax | 65 | 580 | 15,118 | 366,584 |
| Grassland | 2,617 | 23,557 | 322,422 | 21,200,413 |
| Forest | 84 | 21 | 180,783 | 282,571 |
| Built-up | 39 | 10 | 20,895 | 28,180 |
| Water | 54 | 14 | 35,628 | 54,996 |
| Other | 825 | 8,236 | 158,273 | 6,103,789 |
| Total | 7,584 | 67,510 | 1,426,517 | 56,515,348 |

| # | Sentinel-2 March | Sentinel-2 April | Sentinel-2 May | Sentinel-2 June | Sentinel-2 July | Sentinel-2 August | Sentinel-1 March | Sentinel-1 April | Sentinel-1 May | Sentinel-1 June | Sentinel-1 July | Sentinel-1 August | OA | κ |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | X |  |  |  |  |  |  |  |  |  |  |  | 0.47 | 0.31 |
| 2 | X | X |  |  |  |  |  |  |  |  |  |  | 0.62 | 0.49 |
| 3 | X | X | X |  |  |  |  |  |  |  |  |  | 0.70 | 0.60 |
| 4 | X | X | X | X |  |  |  |  |  |  |  |  | 0.74 | 0.66 |
| 5 | X | X | X | X | X |  |  |  |  |  |  |  | 0.75 | 0.67 |
| 6 | X | X | X | X | X | X |  |  |  |  |  |  | 0.76 | 0.68 |
| 7 |  |  |  |  |  |  | X |  |  |  |  |  | 0.39 | 0.22 |
| 8 |  |  |  |  |  |  | X | X |  |  |  |  | 0.54 | 0.39 |
| 9 |  |  |  |  |  |  | X | X | X |  |  |  | 0.66 | 0.55 |
| 10 |  |  |  |  |  |  | X | X | X | X |  |  | 0.72 | 0.63 |
| 11 |  |  |  |  |  |  | X | X | X | X | X |  | 0.76 | 0.68 |
| 12 |  |  |  |  |  |  | X | X | X | X | X | X | 0.78 | 0.70 |
| 13 | X |  |  |  |  |  | X |  |  |  |  |  | 0.53 | 0.38 |
| 14 | X | X |  |  |  |  | X | X |  |  |  |  | 0.66 | 0.55 |
| 15 | X | X | X |  |  |  | X | X | X |  |  |  | 0.75 | 0.66 |
| 16 | X | X | X | X |  |  | X | X | X | X |  |  | 0.79 | 0.73 |
| 17 | X | X | X | X | X |  | X | X | X | X | X |  | 0.82 | 0.76 |
| 18 | X | X | X | X | X | X | X | X | X | X | X | X | 0.82 | 0.77 |

|  | Wheat | Barley | Rapeseed | Maize | Potato | Beet | Flax | Grassland | Forest | Built-Up | Water | Other Crop | Total True |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Wheat | 7.6 | 0.0 | 0.0 | 0.9 | 0.1 | 0.0 | 0.0 | 1.1 | 0.0 | 0.0 | 0.0 | 0.4 | 10.1 |
| Barley | 0.0 | 1.5 | 0.0 | 0.2 | 0.0 | 0.0 | 0.0 | 0.4 | 0.0 | 0.0 | 0.0 | 0.1 | 2.4 |
| Rapeseed | 0.0 | 0.0 | 0.1 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.1 |
| Maize | 0.1 | 0.0 | 0.0 | 22.2 | 0.3 | 0.0 | 0.0 | 2.4 | 0.3 | 0.1 | 0.0 | 2.1 | 27.6 |
| Potato | 0.0 | 0.0 | 0.0 | 1.2 | 4.9 | 0.2 | 0.0 | 0.1 | 0.0 | 0.0 | 0.0 | 0.4 | 6.8 |
| Beet | 0.0 | 0.0 | 0.0 | 0.5 | 0.6 | 2.0 | 0.0 | 0.1 | 0.0 | 0.0 | 0.0 | 0.2 | 3.4 |
| Flax | 0.0 | 0.0 | 0.0 | 0.1 | 0.0 | 0.0 | 0.4 | 0.1 | 0.0 | 0.0 | 0.0 | 0.1 | 0.6 |
| Grassland | 0.1 | 0.0 | 0.0 | 1.3 | 0.1 | 0.0 | 0.0 | 31.2 | 1.3 | 0.3 | 0.0 | 3.2 | 37.5 |
| Forest | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.5 | 0.0 | 0.0 | 0.0 | 0.5 |
| Built-up | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 |
| Water | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.1 | 0.0 | 0.1 |
| Other crop | 0.2 | 0.0 | 0.0 | 1.9 | 0.4 | 0.1 | 0.0 | 3.3 | 0.3 | 0.1 | 0.0 | 4.4 | 10.8 |
| Total est. | 8.1 | 1.6 | 0.1 | 28.2 | 6.4 | 2.4 | 0.4 | 38.7 | 2.4 | 0.6 | 0.1 | 11.0 | OA = 75% |

|  | Wheat | Barley | Rapeseed | Maize | Potato | Beet | Flax | Grassland | Forest | Built-Up | Water | Other Crop | Total True |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Wheat | 8.6 | 0.1 | 0.0 | 0.1 | 0.0 | 0.0 | 0.0 | 0.5 | 0.0 | 0.0 | 0.0 | 0.7 | 10.1 |
| Barley | 0.0 | 1.9 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.2 | 0.0 | 0.0 | 0.0 | 0.2 | 2.4 |
| Rapeseed | 0.0 | 0.0 | 0.1 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.1 |
| Maize | 0.0 | 0.0 | 0.0 | 24.6 | 0.4 | 0.0 | 0.0 | 0.9 | 0.3 | 0.1 | 0.0 | 1.3 | 27.6 |
| Potato | 0.0 | 0.0 | 0.0 | 1.1 | 5.2 | 0.1 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.4 | 6.8 |
| Beet | 0.0 | 0.0 | 0.0 | 0.3 | 0.5 | 2.4 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.1 | 3.4 |
| Flax | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.5 | 0.0 | 0.0 | 0.0 | 0.0 | 0.1 | 0.7 |
| Grassland | 0.1 | 0.0 | 0.0 | 0.8 | 0.0 | 0.0 | 0.0 | 32.2 | 0.8 | 0.3 | 0.0 | 3.2 | 37.5 |
| Forest | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.5 | 0.0 | 0.0 | 0.0 | 0.5 |
| Built-up | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.1 |
| Water | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.1 | 0.0 | 0.1 |
| Other crop | 0.2 | 0.0 | 0.0 | 0.9 | 0.2 | 0.0 | 0.0 | 2.6 | 0.2 | 0.1 | 0.0 | 6.4 | 10.8 |
| Total est. | 9.0 | 2.0 | 0.1 | 27.9 | 6.4 | 2.6 | 0.5 | 36.5 | 1.9 | 0.7 | 0.1 | 12.4 | OA = 82.5% |
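
As a cross-check on the confusion matrix above, overall accuracy (OA) is the diagonal sum divided by the total, and Cohen's κ corrects that observed agreement for the agreement expected by chance from the row and column totals. The following is a minimal Python sketch of that arithmetic. The function name `accuracy_and_kappa` and the list names are illustrative and not taken from the study, and the inputs are the rounded percentages transcribed from the table, so the results are only approximate.

```python
def accuracy_and_kappa(diagonal, true_totals, est_totals):
    """Return (overall accuracy, Cohen's kappa) from confusion-matrix summaries.

    diagonal    -- correctly classified share per class (matrix diagonal)
    true_totals -- row totals (reference share per class)
    est_totals  -- column totals (estimated share per class)
    """
    n_true = sum(true_totals)
    n_est = sum(est_totals)
    # Observed agreement: share of pixels on the diagonal.
    p_o = sum(diagonal) / n_true
    # Chance agreement: product of the marginal shares, summed over classes.
    p_e = sum(t * e for t, e in zip(true_totals, est_totals)) / (n_true * n_est)
    kappa = (p_o - p_e) / (1.0 - p_e)
    return p_o, kappa


# Rounded percentages transcribed from the matrix above (class order as in the table).
diagonal = [8.6, 1.9, 0.1, 24.6, 5.2, 2.4, 0.5, 32.2, 0.5, 0.0, 0.1, 6.4]
true_totals = [10.1, 2.4, 0.1, 27.6, 6.8, 3.4, 0.7, 37.5, 0.5, 0.1, 0.1, 10.8]
est_totals = [9.0, 2.0, 0.1, 27.9, 6.4, 2.6, 0.5, 36.5, 1.9, 0.7, 0.1, 12.4]

oa, kappa = accuracy_and_kappa(diagonal, true_totals, est_totals)
print(f"OA = {oa:.2f}, kappa = {kappa:.2f}")
```

With these rounded inputs the sketch should give an OA of roughly 0.82 and a κ of roughly 0.77, in line with the OA stated in the matrix and with the values listed for scheme 18. Small deviations are expected because the marginal totals sum to slightly more than 100% after rounding.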

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Van Tricht, K.; Gobin, A.; Gilliams, S.; Piccard, I. Synergistic Use of Radar Sentinel-1 and Optical Sentinel-2 Imagery for Crop Mapping: A Case Study for Belgium. Remote Sens. 2018, 10, 1642. https://doi.org/10.3390/rs10101642
