Using Artificial Intelligence to Classify IEDs’ Control Scope from SCL Files

Abstract

1. Introduction

2. Background

2.1. Artificial Intelligence

2.1.1. Machine Learning

- Regression;

- Classification;

- Clustering;

- Association.

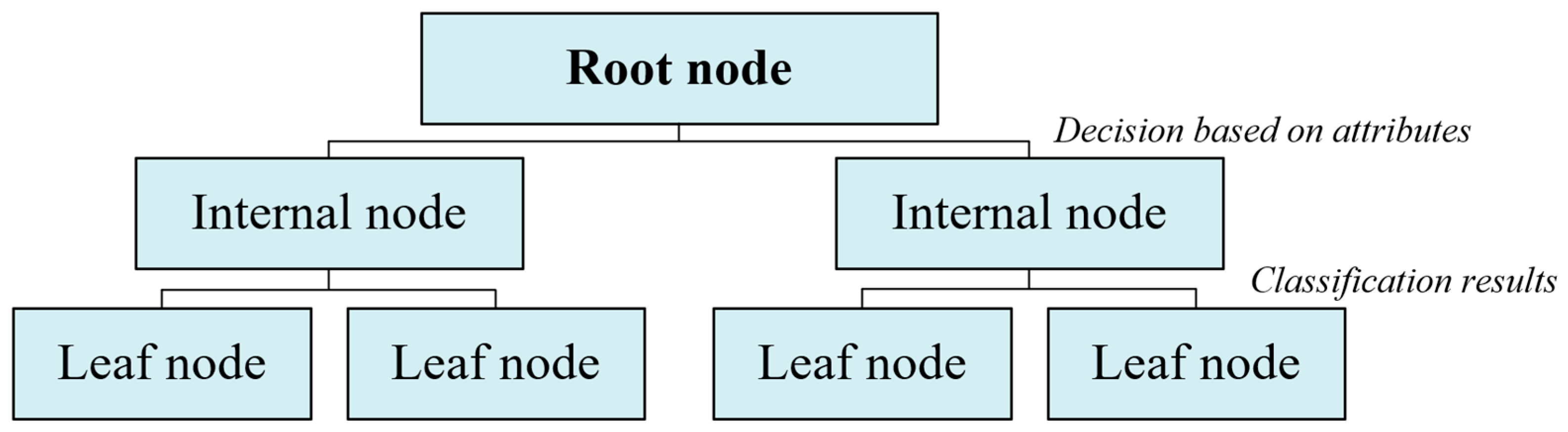

2.1.2. Decision Trees

2.2. IEC 61850

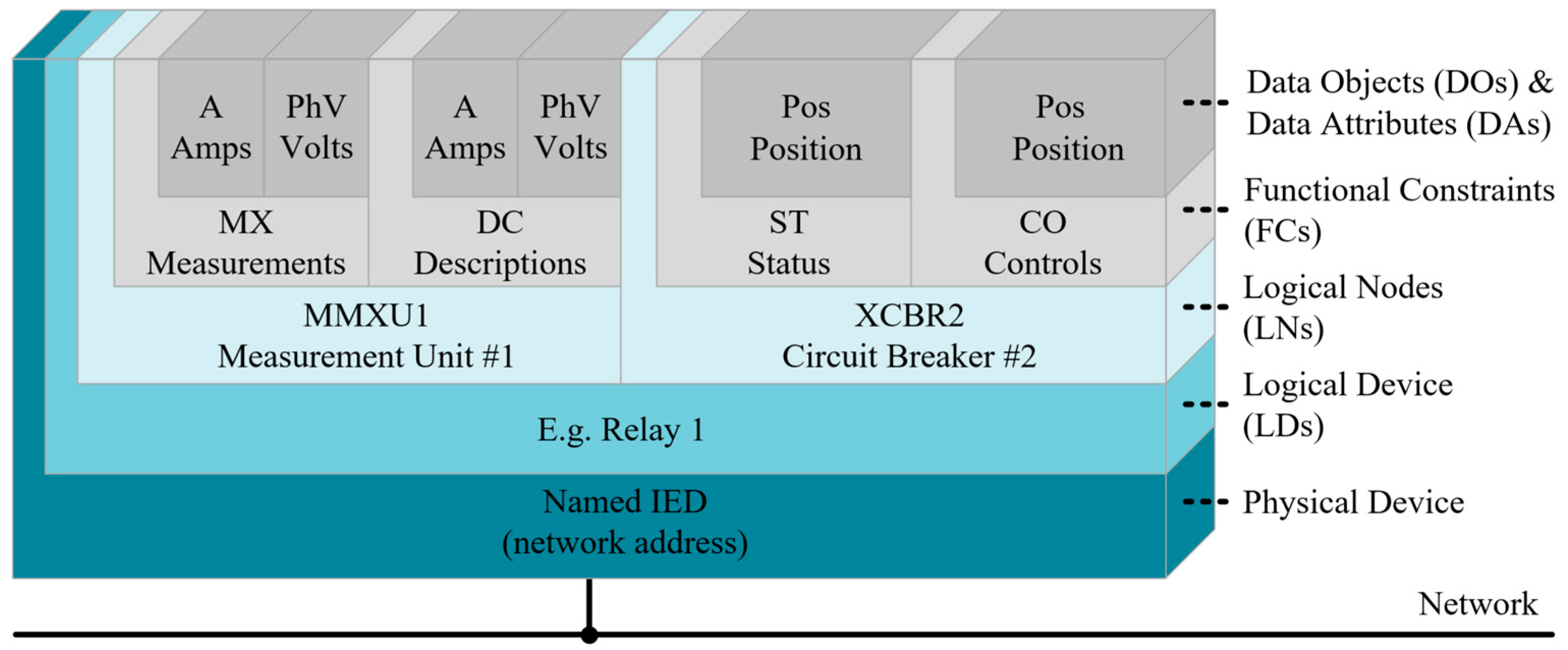

2.2.1. Data Model

2.2.2. Substation Configuration Language

- ICD (IED Capability Description): defines the functional and communication capabilities of an IED, serving as a basis for integration into the system;

- SSD (System Specification Description): describes the specification of the system, including the single-line diagram and the allocation of LNs to the parts and devices of the substation;

- SCD (System Configuration Description): consolidates the information of the ICD and SSD files, resulting in the complete configuration of the system, with associations between IEDs and their protection, control and supervision functions;

- IID (Instantiated IED Description): contains the detailed configuration of an IED already instantiated in the system, specifying operating parameters and relationships with other devices;

- CID (Configured IED Description): represents the final configuration of the IED, exported to the physical device during the commissioning phase;

- SED (System Exchange Description): enables the exchange of data between different IEC 61850 projects, allowing integration between independent engineering systems.
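The file types listed above can be told apart by their file extension. The following is a minimal sketch of such a lookup, not part of the described tool; the function name `scl_file_type` and the dictionary `SCL_FILE_TYPES` are hypothetical names introduced only for illustration.

```python
from pathlib import Path

# Extension -> SCL file type, taken from the list above.
# Names of this dictionary and of the function are illustrative assumptions.
SCL_FILE_TYPES = {
    "icd": "IED Capability Description",
    "ssd": "System Specification Description",
    "scd": "System Configuration Description",
    "iid": "Instantiated IED Description",
    "cid": "Configured IED Description",
    "sed": "System Exchange Description",
}


def scl_file_type(filename: str) -> str:
    """Return the SCL file type for a filename, based only on its extension."""
    ext = Path(filename).suffix.lower().lstrip(".")
    if ext not in SCL_FILE_TYPES:
        raise ValueError(f"not a known SCL file extension: {filename!r}")
    return SCL_FILE_TYPES[ext]


if __name__ == "__main__":
    print(scl_file_type("feeder_bay.SCD"))
```

The extension is only a hint: a robust tool would also inspect the XML content, since a file can carry the wrong extension.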

2.3. Related Work

3. Materials and Methods

3.1. Model Architecture

3.1.1. Software Environment

3.1.2. Parser Module

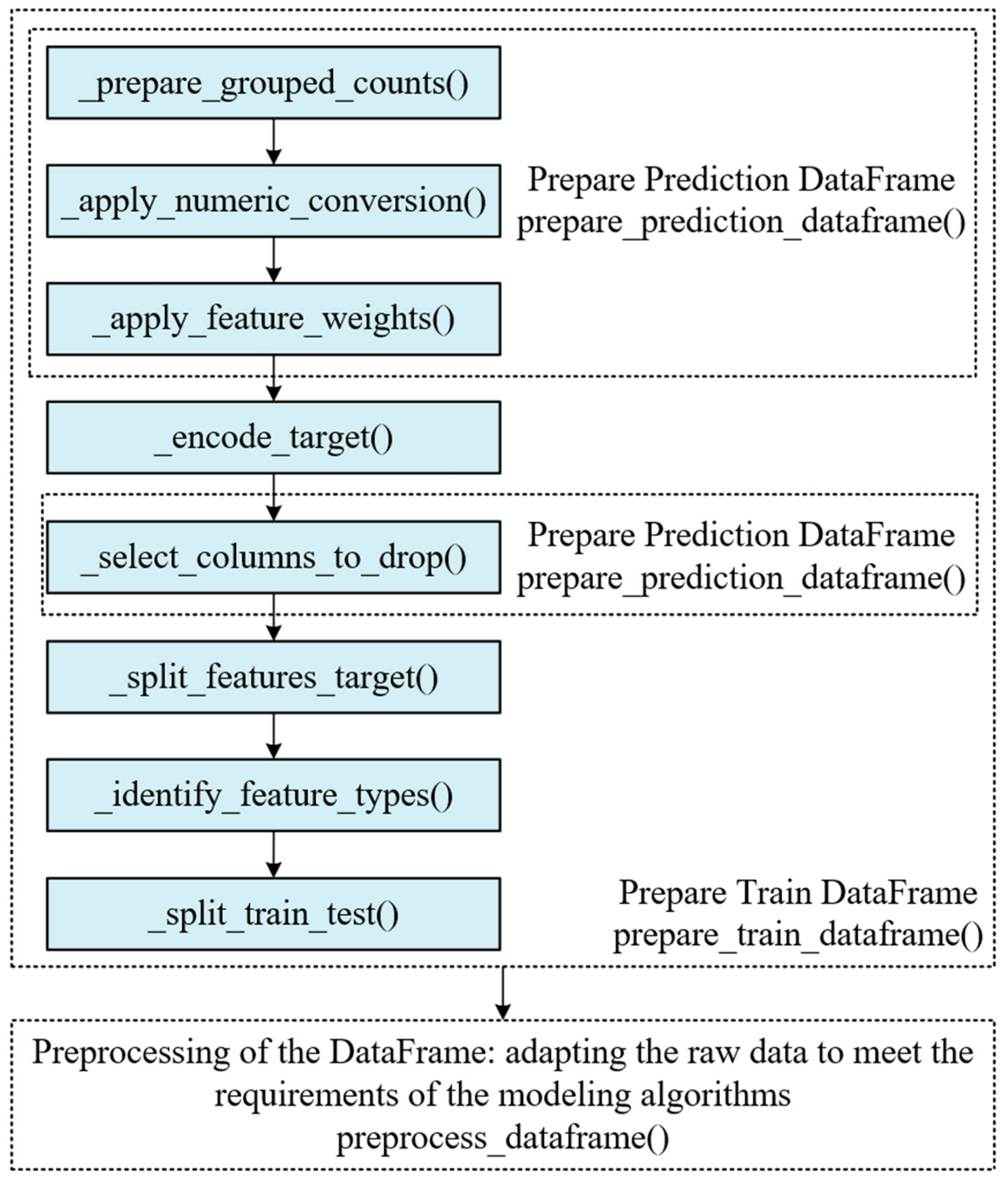
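As a rough illustration of what parsing an SCL file involves, the sketch below lists the logical nodes of each IED with `xml.etree.ElementTree`, the library cited in the references. It is not the authors' parser: the function name `logical_nodes_by_ied` and the shortened sample fragment are assumptions made for this example, and a real SCL file contains far more structure (data type templates, communication section, data sets).

```python
import xml.etree.ElementTree as ET

# Standard SCL namespace; the "scl" prefix is just a local alias.
SCL_NS = {"scl": "http://www.iec.ch/61850/2003/SCL"}

# Hypothetical, heavily shortened SCL fragment for illustration only.
SAMPLE_SCL = """<SCL xmlns="http://www.iec.ch/61850/2003/SCL">
  <IED name="IED1">
    <AccessPoint name="AP1">
      <Server>
        <LDevice inst="CB1">
          <LN0 lnClass="LLN0" inst="" lnType="T_LLN0"/>
          <LN lnClass="XCBR" inst="1" lnType="T_XCBR"/>
          <LN lnClass="CSWI" inst="1" lnType="T_CSWI"/>
        </LDevice>
        <LDevice inst="Application">
          <LN0 lnClass="LLN0" inst="" lnType="T_LLN0"/>
          <LN lnClass="LPHD" inst="1" lnType="T_LPHD"/>
        </LDevice>
      </Server>
    </AccessPoint>
  </IED>
</SCL>
"""


def logical_nodes_by_ied(scl_text):
    """Map each IED name to a list of (logical device, LN class) pairs."""
    root = ET.fromstring(scl_text)
    result = {}
    for ied in root.findall("scl:IED", SCL_NS):
        nodes = []
        for ldevice in ied.iterfind(".//scl:LDevice", SCL_NS):
            for child in ldevice:
                # Strip the "{namespace}" prefix from the tag name.
                tag = child.tag.rsplit("}", 1)[-1]
                if tag in ("LN0", "LN"):
                    nodes.append((ldevice.get("inst"), child.get("lnClass")))
        result[ied.get("name")] = nodes
    return result


if __name__ == "__main__":
    for ied_name, nodes in logical_nodes_by_ied(SAMPLE_SCL).items():
        print(ied_name, nodes)
```

A list of LN classes per IED, as produced here, is one plausible starting point for building classifier features; which features the described model actually uses is not specified in this outline.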

3.1.3. Classifier Module

3.1.4. Generator Module

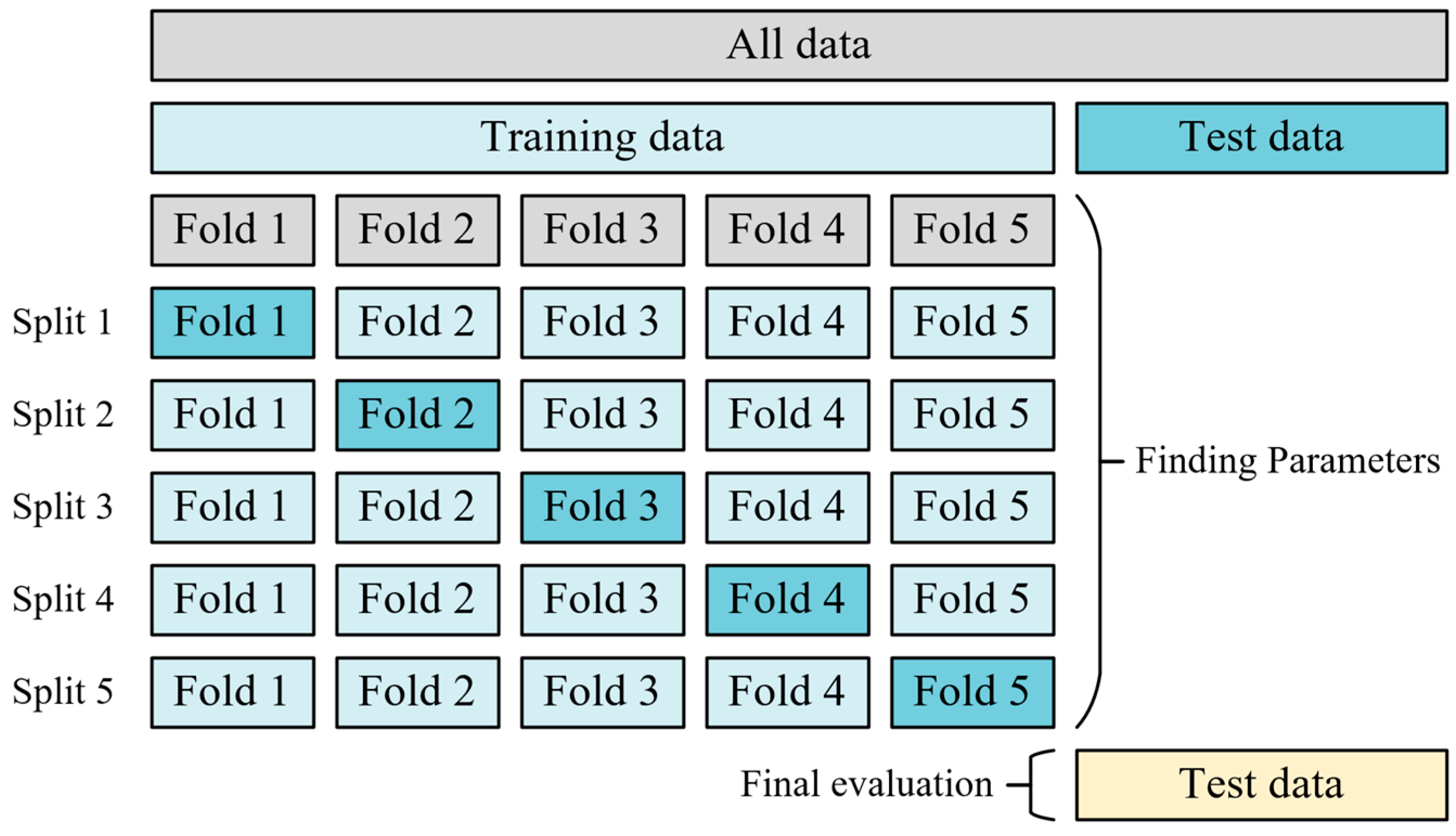

3.2. Training

4. Results

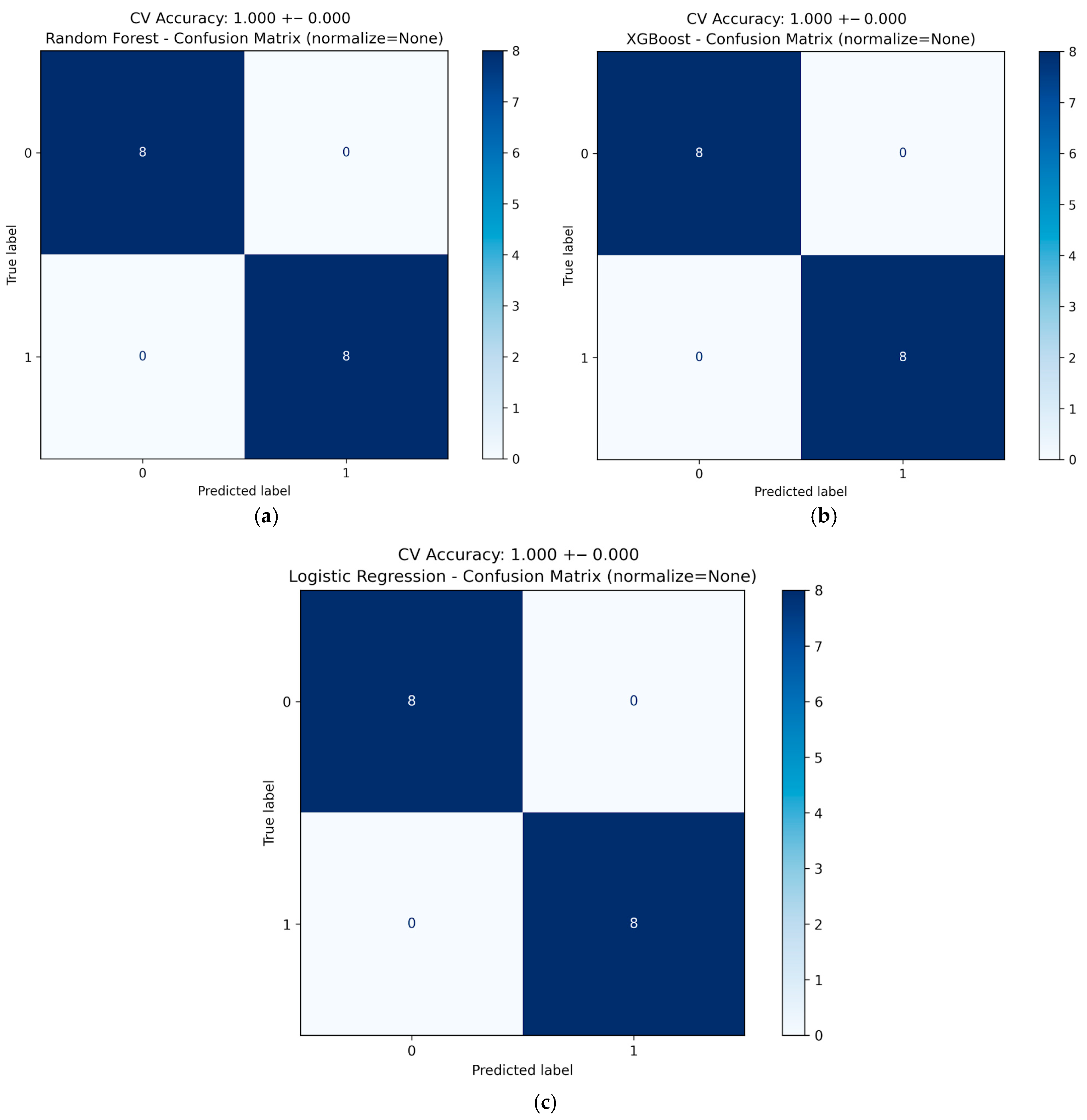

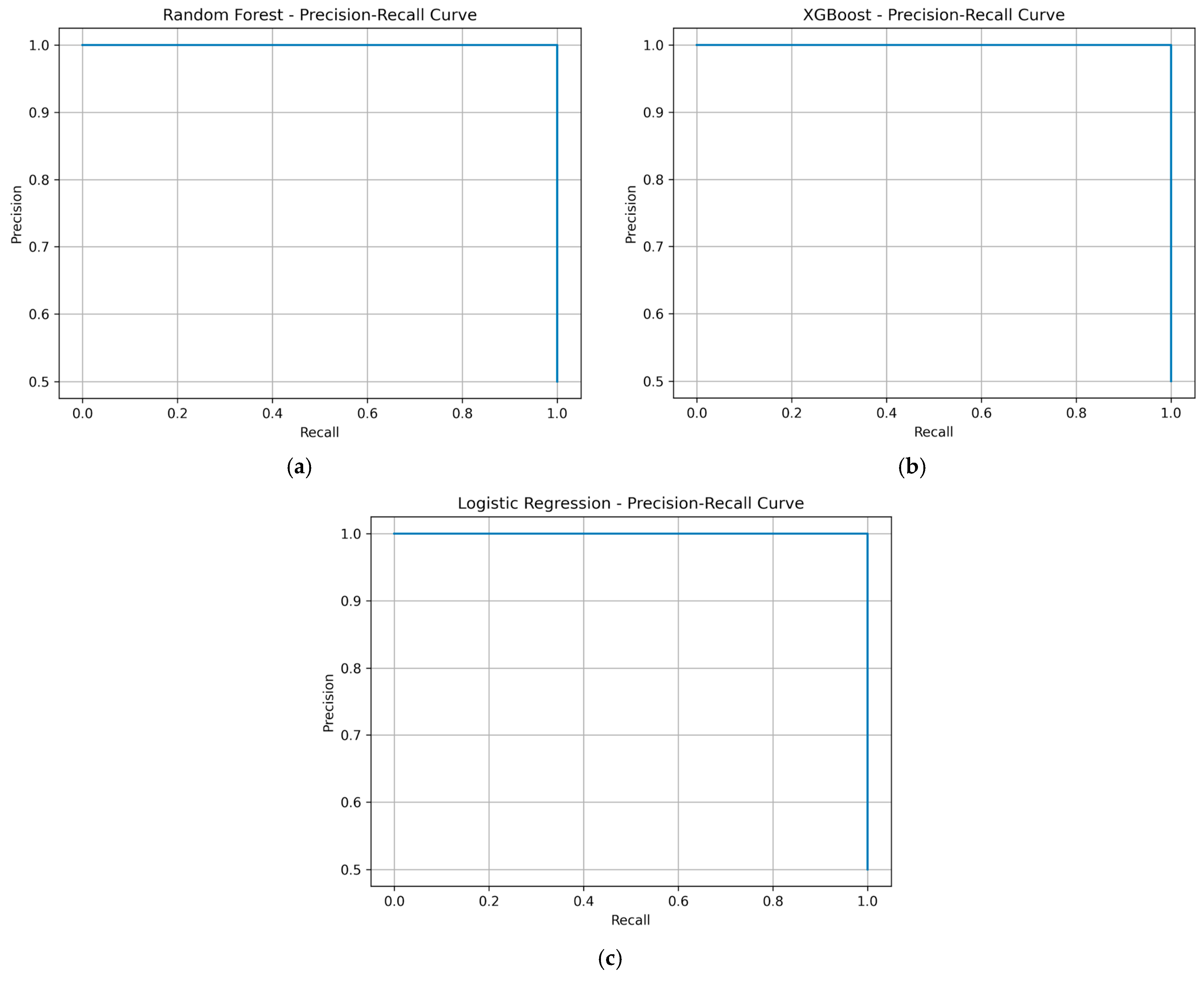

4.1. Model Metrics and Evaluation

4.2. Generated Output

5. Discussion and Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| AI | Artificial Intelligence |

| ANN | Artificial Neural Network |

| CARTs | Classification and Regression Trees |

| CB | Circuit Breaker |

| CID | Configured IED Description |

| CIM | Common Information Model |

| CNN | Convolutional Neural Network |

| CV | Cross-Validation |

| DA | Data Attributes |

| DCS | Distributed Control System |

| DL | Deep Learning |

| DNN | Deep Neural Network |

| DO | Data Objects |

| FA | Factory Automation |

| FC | Functional Constraints |

| FLISR | Fault Location, Isolation, and Service Restoration |

| GAN | Generative Adversarial Network |

| GOOSE | Generic Object Oriented Substation Event |

| GRU | Gated Recurrent Unit |

| HMI | Human–Machine Interface |

| HPC | High-Performance Computing |

| I/O | Input/Output |

| ICD | IED Capability Description |

| ID | Identifier |

| IEC | International Electrotechnical Commission |

| IED | Intelligent Electronic Device |

| IID | Instantiated IED Description |

| LD | Logical Devices |

| LN | Logical Nodes |

| LSTM | Long Short-Term Memory |

| ML | Machine Learning |

| MLP | Multilayer Perceptron |

| MMS | Manufacturing Message Specification |

| MU | Merging Unit |

| OS | Operator Station |

| P&C | Protection and Control |

| PA | Process Automation |

| PEP 8 | Python Enhancement Proposal 8 |

| RNN | Recurrent Neural Network |

| ROC | Receiver Operating Characteristic |

| RTU | Remote Terminal Unit |

| SCADA | Supervisory Control and Data Acquisition |

| SCD | System Configuration Description |

| SCL | Substation Configuration Language |

| SCN | Substation Communication Network |

| SED | System Exchange Description |

| sklearn | scikit-learn |

| SNTP | Simple Network Time Protocol |

| SSD | System Specification Description |

| SV | Sampled Values |

| SVM | Support Vector Machine |

| TC | Technical Committee |

| WAN | Wide Area Network |

| XML | Extensible Markup Language |

| YAML | YAML Ain’t Markup Language |

Appendix A. Generated FEEDER Object

| Object Name [37,38,39] | Meaning [37,38,39] | Automatically Mapped and Generated Addresses |

|---|---|---|

| Pos0_Val | Position of Q0 | Dc1/CSWI.ST.Pos.stVal |

| Pos1_Val | Position of Q1 | Dc2/CSWI.ST.Pos.stVal |

| Pos2_Val | Position of Q2 | Dc3/CSWI.ST.Pos.stVal |

| Pos8_Val | Position of Q8 | CB1/CSWI.ST.Pos.stVal |

| Pos9_Val | Position of Q9 | |

| Pos51_Val | Position of Q51 | |

| Pos52_Val | Position of Q52 | |

| Pos53_Val | Position of Q53 | |

| Q0_EnaCls_Val | IEC Interlock Enable Closing Switch Q0 | Dc1/CILO.ST.EnaCls.stVal |

| Q1_EnaCls_Val | IEC Interlock Enable Closing Switch Q1 | Dc2/CILO.ST.EnaCls.stVal |

| Q2_EnaCls_Val | IEC Interlock Enable Closing Switch Q2 | Dc3/CILO.ST.EnaCls.stVal |

| Q8_EnaCls_Val | IEC Interlock Enable Closing Switch Q8 | CB1/CILO.ST.EnaCls.stVal |

| Q9_EnaCls_Val | IEC Interlock Enable Closing Switch Q9 | |

| Q51_EnaCls_Val | IEC Interlock Enable Closing Switch Q51 | |

| Q52_EnaCls_Val | IEC Interlock Enable Closing Switch Q52 | |

| Q53_EnaCls_Val | IEC Interlock Enable Closing Switch Q53 | |

| Q0_EnaOpn_Val | IEC Interlock Enable Opening Switch Q0 | Dc1/CILO.ST.EnaOpn.stVal |

| Q1_EnaOpn_Val | IEC Interlock Enable Opening Switch Q1 | Dc2/CILO.ST.EnaOpn.stVal |

| Q2_EnaOpn_Val | IEC Interlock Enable Opening Switch Q2 | Dc3/CILO.ST.EnaOpn.stVal |

| Q8_EnaOpn_Val | IEC Interlock Enable Opening Switch Q8 | CB1/CILO.ST.EnaOpn.stVal |

| Q9_EnaOpn_Val | IEC Interlock Enable Opening Switch Q9 | |

| Q51_EnaOpn_Val | IEC Interlock Enable Opening Switch Q51 | |

| Q52_EnaOpn_Val | IEC Interlock Enable Opening Switch Q52 | |

| Q53_EnaOpn_Val | IEC Interlock Enable Opening Switch Q53 | |

| OpTmh_stVal | Operation Time | Application/LPHD.ST.OpTmh.units.stVal |

| OpTmh_Q | Operation Time Quality Code | Application/LPHD.ST.OpTmh.q |

| Loc_stVal | True: Local, False: Remote | Dc1/CSWI.ST.Loc.stVal |

| Loc_Q | True: Local, False: Remote Quality Code | Dc1/XSWI.ST.Loc.q |

| Q0_OpCnt_Val | Q0 Switch Count | Dc1/XSWI.ST.OpCnt.stVal |

| Q0_OpCnt_q | Q0 Switch Count quality code | Dc1/XSWI.ST.OpCnt.q |

| Q1_OpCnt_Val | Q1 Switch Count | Dc2/XSWI.ST.OpCnt.stVal |

| Q1_OpCnt_q | Q1 Switch Count quality code | Dc2/XSWI.ST.OpCnt.q |

| Q2_OpCnt_Val | Q2 Switch Count | Dc3/XSWI.ST.OpCnt.stVal |

| Q2_OpCnt_q | Q2 Switch Count quality code | Dc3/XSWI.ST.OpCnt.q |

| Q8_OpCnt_Val | Q8 Switch Count | CB1/XSWI.ST.OpCnt.stVal |

| Q8_OpCnt_q | Q8 Switch Count quality code | CB1/XSWI.ST.OpCnt.q |

| Q9_OpCnt_Val | Q9 Switch Count | |

| Q9_OpCnt_q | Q9 Switch Count quality code | |

| Q51_OpCnt_Val | Q51 Switch Count | |

| Q51_OpCnt_q | Q51 Switch Count quality code | |

| Q52_OpCnt_Val | Q52 Switch Count | |

| Q52_OpCnt_q | Q52 Switch Count quality code | |

| Q53_OpCnt_Val | Q53 Switch Count | |

| Q53_OpCnt_q | Q53 Switch Count quality code | |

| Beh_stVal | Device behavior: 1 = on; 2 = Locked; 3 = Test; 4 = Test/Locked; 5 = Off | Application/LLN0.ST.Beh.stVal |

| Beh_Q | Device Behavior Quality Code | Application/CALH.ST.Beh.q |

| Health_stVal | Device Health | Application/LLN0.ST.Health.stVal |

| Health_Q | Device Health Quality Code | Application/CALH.ST.Health.q |

| A_phsA_cVal_mag_f | Current Phase A | VI3p1_OperationalValues/MMXU.MX.A.phsA.f |

| A_phsA_q | Current Phase A Quality Code | VI3p1_OperationalValues/MMXU.MX.A.phsA.q |

| A_phsB_cVal_mag_f | Current Phase B | VI3p1_OperationalValues/MMXU.MX.A.instCVal.f |

| A_phsB_q | Current Phase B Quality Code | VI3p1_OperationalValues/MMXU.MX.A.phsA.q |

| A_phsC_cVal_mag_f | Current Phase C | VI3p1_OperationalValues/MMXU.MX.A.mag.f |

| A_phsC_q | Current Phase C Quality Code | VI3p1_OperationalValues/MMXU.MX.A.phsA.q |

| A_neut_mag_f | Current neut | VI3p1_OperationalValues/MMXU.MX.A.cVal.f |

| A_neut_q | Current neut Quality Code | VI3p1_OperationalValues/MMXU.MX.A.mag.q |

| Hz_mag_f | Frequency | VI3p1_OperationalValues/MMXU.MX.Hz.instMag.f |

| Hz_q | Frequency Quality Code | VI3p1_OperationalValues/MMXU.MX.Hz.mag.q |

| PPV_phsAB_cVal_mag_f | Phase Voltage A-B | VI3p1_OperationalValues/MMXU.MX.PPV.phsAB.f |

| PPV_phsAB_q | Phase Voltage A-B Quality Code | VI3p1_OperationalValues/MMXU.MX.PPV.phsAB.q |

| PPV_phsBC_cVal_mag_f | Phase Voltage B-C | VI3p1_OperationalValues/MMXU.MX.PPV.instCVal.f |

| PPV_phsBC_q | Phase Voltage B-C Quality Code | VI3p1_OperationalValues/MMXU.MX.PPV.phsAB.q |

| PPV_phsCA_cVal_mag_f | Phase Voltage C-A | VI3p1_OperationalValues/MMXU.MX.PPV.mag.f |

| PPV_phsCA_q | Phase Voltage C-A Quality Code | VI3p1_OperationalValues/MMXU.MX.PPV.phsAB.q |

| PhV_phsA_cVal_mag_f | Voltage phase A | VI3p1_OperationalValues/MMXU.MX.PhV.phsA.f |

| PhV_phsA_q | Voltage phase A quality code | VI3p1_OperationalValues/MMXU.MX.PhV.phsA.q |

| PhV_phsB_cVal_mag_f | Voltage phase B | VI3p1_OperationalValues/MMXU.MX.PhV.instCVal.f |

| PhV_phsB_q | Voltage phase B quality code | VI3p1_OperationalValues/MMXU.MX.PhV.phsA.q |

| PhV_phsC_cVal_mag_f | Voltage phase C | VI3p1_OperationalValues/MMXU.MX.PhV.mag.f |

| PhV_phsC_q | Voltage phase C quality code | VI3p1_OperationalValues/MMXU.MX.PhV.phsA.q |

| TotPF_mag_f | Total Power Factor | VI3p1_OperationalValues/MMXU.MX.TotPF.instMag.f |

| TotPF_q | Total Power Factor Quality Code | VI3p1_OperationalValues/MMXU.MX.TotPF.mag.q |

| TotP_mag_f | Total Active Power | VI3p1_OperationalValues/MMXU.MX.TotW.instMag.f |

| TotP_q | Total Active Power Quality Code | VI3p1_OperationalValues/MMXU.MX.TotW.mag.q |

| TotQ_mag_f | Total Reactive Power | VI3p1_OperationalValues/MMXU.MX.TotVAr.instMag.f |

| TotQ_q | Total Reactive Power Quality Code | VI3p1_OperationalValues/MMXU.MX.TotVAr.mag.q |

| TotS_mag_f | Total Apparent Power | VI3p1_OperationalValues/MMXU.MX.TotVA.instMag.f |

| TotS_q | Total Apparent Power Quality Code | VI3p1_OperationalValues/MMXU.MX.TotVA.mag.q |

| SupWh_actVal | Accumulated active energy towards busbar | |

| SupWh_q | Accumulated active energy towards busbar Quality Code | |

| SupVArh_actVal | Accumulated reactive energy towards busbar | |

| SupVArh_q | Accumulated reactive energy towards busbar Quality Code | |

| DmdWh_actVal | Accumulated active energy from busbar | |

| DmdWh_q | Accumulated active energy from busbar Quality Code | |

| Pos0_ctl | Command object for Q0 | Dc2/CSWI..Pos. |

| Pos1_ctl | Command object for Q1 | Dc2/CSWI..Pos. |

| Pos2_ctl | Command object for Q2 | Dc2/XSWI..Pos.pulseConfig. |

| Pos8_ctl | Command object for Q8 | Dc2/XSWI..Pos.pulseConfig. |

| Pos9_ctl | Command object for Q9 | Dc2/XSWI..Pos.pulseConfig. |

| Pos51_ctl | Command object for Q51 | Dc2/XSWI..Pos.pulseConfig. |

| Pos52_ctl | Command object for Q52 | Dc3/CSWI..Pos. |

| Pos53_ctl | Command object for Q53 | Dc3/CSWI..Pos. |

| PTOC1_Op | 51 Overcurrent Trip | VI3p1/PTRC.ST.Op.general |

| PTOC1_Str | 51 Overcurrent picked up | VI3p1/PTRC.ST.Str.general |

| PTOC2_Op | 51N Overcurrent Trip | VI3p1/GAPC.ST.Op.general |

| PTOC2_Str | 51N Overcurrent picked up | VI3p1/PHAR.ST.Str.general |

| PTOC3_Op | 67-TOC Overcurrent Trip | VI3p1_5051OC3phase1/PTRC.ST.Op.general |

| PTOC3_Str | 67-TOC Overcurrent picked up | VI3p1/GAPC.ST.Str.general |

| PTOC4_Op | 67N-TOC Overcurrent Trip | VI3p1_5051OC3phase1/PTOC.ST.Op.general |

| PTOC4_Str | 67N-TOC Overcurrent picked up | VI3p1_5051OC3phase1/PTRC.ST.Str.general |

| PTOC5_Op | 46-TOC Overcurrent Trip | VI3p1_5051OC3phase1/PTOC.ST.Op.general |

| PTOC5_Str | 46-TOC Overcurrent picked up | VI3p1_5051OC3phase1/PTOC.ST.Str.general |

| PTOC6_Op | 50-1 Overcurrent I > Trip | VI3p1_5051OC3phase1/PTOC.ST.Op.general |

| PTOC6_Str | 50-1 Overcurrent I > picked up | VI3p1_5051OC3phase1/PTOC.ST.Str.general |

| PTOC7_Op | 50-2 Overcurrent I >> Trip | VI3p1_5051NOCgndB1/PTRC.ST.Op.general |

| PTOC7_Str | 50-2 Overcurrent I >> picked up | VI3p1_5051OC3phase1/PTOC.ST.Str.general |

| PTOC8_Op | 50N-1 Overcurrent IE > Trip | VI3p1_5051NOCgndB1/PTOC.ST.Op.general |

| PTOC8_Str | 50N-1 Overcurrent IE > picked up | VI3p1_5051NOCgndB1/PTRC.ST.Str.general |

| PTOC9_Op | 50N-2 Overcurrent IE >> Trip | VI3p1_5051NOCgndB1/PTOC.ST.Op.general |

| PTOC9_Str | 50N-2 Overcurrent IE >> picked up | VI3p1_5051NOCgndB1/PTOC.ST.Str.general |

| PTOC10_Op | 67-1 Directional Overcurrent I > Trip | VI3p1_5051NOCgndB1/PTOC.ST.Op.general |

| PTOC10_Str | 67-1 Directional Overcurrent I > picked up | VI3p1_5051NOCgndB1/PTOC.ST.Str.general |

| PTOC11_Op | 67-2 Directional Overcurrent I >> Trip | VI3p1_SwitchOntoFault/PTRC.ST.Op.general |

| PTOC11_Str | 67-2 Directional Overcurrent I >> picked up | VI3p1_5051NOCgndB1/PTOC.ST.Str.general |

| PTOC12_Op | 67N-1 Directional Overcurrent IE > Trip | VI3p1_SwitchOntoFault/RSOF.ST.Op.general |

| PTOC12_Str | 67N-1 Directional Overcurrent IE > picked up | VI3p1_SwitchOntoFault/PTRC.ST.Str.general |

| PTOC13_Op | 67N-2 Directional Overcurrent IE >> Trip | CB1/PTRC.ST.Op.general |

| PTOC13_Str | 67N-2 Directional Overcurrent IE >> picked up | VI3p1_SwitchOntoFault/RSOF.ST.Str.general |

| PTRC1_Tr | Trip signal for CB | CB1/PTRC.ST.Tr.general |

| PTRC1_Str | Trigger signal for CB | CB1/PTRC.ST.Str.general |

| PTRC2_Op | Trip signal for CB | |

| PTRC2_Str | Trigger signal for CB | |

| PTRC3_Op | Trip signal for CB | |

| PTRC3_Str | Trigger signal for CB | |

| RBRF1_OpEx | Breaker Failure—External Trip | |

| RBRF1_OpIn | Breaker Failure—Bay Internal Trip | |

| RBRF1_Str | Breaker Failure detected | |

| PDIF1_Op | Differential protection IDIFF > Trip | |

| PDIF1_Str | Differential protection IDIFF > picked up | |

| PDIF2_Op | Differential protection IDIFF >> Trip | |

| PDIF2_Str | Differential protection IDIFF >> picked up | |

| PDIS1_Op | Impedance protection Z1 Trip | |

| PDIS1_Str | Impedance protection Z1 picked up | |

| PDIS2_Op | Impedance protection Z2 Trip | |

| PDIS2_Str | Impedance protection Z2 picked up | |

| PDIS3_Op | Impedance protection Z1B Trip | |

| PDIS3_Str | Impedance protection Z1B picked up | |

| PDIS4_Op | Impedance protection general Trip | |

| PDIS4_Str | Impedance protection general picked up | |

| PDUP1_Op | Underexcitation protection Characteristic 1 trip | |

| PDUP2_Op | Underexcitation protection Characteristic 2 trip | |

| PDUP3_Op | Underexcitation protection Characteristic 3 trip | |

| PTOV1_Op | Overvoltage U > trip | |

| PTOV1_Str | Overvoltage U > picked up | |

| PTOV2_Op | Overvoltage U >> trip | |

| PTOV2_Str | Overvoltage U >> picked up | |

| PTUF1_Op | Frequency 1 under range trip | |

| PTUF1_Str | Frequency 1 under range picked up | |

| PTUF2_Op | Frequency 2 under range trip | |

| PTUF2_Str | Frequency 2 under range picked up | |

| PTUV1_Op | Under voltage protection U < trip | |

| PTUV1_Str | Under voltage protection U < picked up | |

| PTUV2_Op | Under voltage protection U << trip | |

| PTUV2_Str | Under voltage protection U << picked up | |

| PVOC2_Op | Voltage controlled overcurrent protection | |

| PDOP1_Op | Directional over power protection | |

| GAPC1_Op | External trip | |

| GAPC1_Str | External trip picked up | |
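The generated addresses in the table above appear to follow the pattern `LD/LN.FC.DO.DA` (logical device, logical node, functional constraint, data object, data attribute path). This reading is inferred from the table entries and the abbreviation list, not stated by the source. Under that assumption, a minimal sketch for splitting an address and flagging incomplete ones could look as follows; `SignalAddress`, `parse_address`, and `is_complete` are hypothetical names.

```python
from typing import NamedTuple, Optional


class SignalAddress(NamedTuple):
    logical_device: str
    logical_node: str
    functional_constraint: str
    data_object: str
    attribute_path: str


def parse_address(address: str) -> Optional[SignalAddress]:
    """Split 'LD/LN.FC.DO.DA' into its parts; return None for an empty cell."""
    address = address.strip()
    if not address:
        return None  # the table leaves unmapped objects empty
    logical_device, sep, rest = address.partition("/")
    if not sep:
        raise ValueError(f"missing '/' in address: {address!r}")
    # Split at most three times so nested attributes (e.g. 'phsA.f') stay together.
    parts = rest.split(".", 3)
    if len(parts) < 3:
        raise ValueError(f"too few components in address: {address!r}")
    parts += [""] * (4 - len(parts))
    return SignalAddress(logical_device, *parts)


def is_complete(parsed: SignalAddress) -> bool:
    """True when every component of the address is non-empty."""
    return all(parsed)


if __name__ == "__main__":
    for raw in ("Dc1/CSWI.ST.Pos.stVal", "Dc2/CSWI..Pos.", ""):
        parsed = parse_address(raw)
        print(repr(raw), parsed, parsed is not None and is_complete(parsed))
```

With this check, command entries such as `Dc2/CSWI..Pos.` are reported as incomplete because the functional constraint and the attribute are empty, which makes them easy to single out for manual review.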

Appendix B. Generated TRAFO Object

| Object Name [37,38,39] | Meaning [37,38,39] | Automatically Mapped and Generated Addresses |

|---|---|---|

| Loc | Operation Mode (1: Local, 0: Remote) | CB1/XCBR.ST.Loc.stVal |

| Error | alarm active | |

| Warning | warning active | Application/CALH.ST.GrWrn.stVal |

| PTOC1_Op | Overcurrent I > Trip | PTS1/PTRC.ST.Op.general |

| PTOC1_Str | Overcurrent I > picked up | PTS1/PTRC.ST.Str.general |

| PTOC2_Op | Overcurrent I >> Trip | PTS1/PTTR.ST.Op.general |

| PTOC2_Str | Overcurrent I >> picked up | PTS1/PTTR.ST.Str.general |

| PTOC3_Op | Overcurrent Ip Trip | PTS1/PDIF.ST.Op.general |

| PTOC3_Str | Overcurrent Ip picked up | PTS1/PDIF.ST.Str.general |

| PTOC7_Op | Overcurrent 3I0 > Trip | PTS2/PTRC.ST.Op.general |

| PTOC7_Str | Overcurrent 3I0 > picked up | PTS1/PHAR.ST.Str.general |

| PTOC8_Op | Overcurrent 3I0 >> Trip | PTS2_5051OC3phA1/PTRC.ST.Op.general |

| PTOC8_Str | Overcurrent 3I0 >> picked up | PTS2/PTRC.ST.Str.general |

| PTOC9_Op | Overcurrent 3I0p Trip | PTS2_5051OC3phA1/PTOC.ST.Op.general |

| PTOC9_Str | Overcurrent 3I0p picked up | PTS2/PHAR.ST.Str.general |

| PTOC12_Op | Unbalanced Load I > Trip | PTS2_5051OC3phA1/PTOC.ST.Op.general |

| PTOC12_Str | Unbalanced Load I > picked up | PTS2_5051OC3phA1/PTRC.ST.Str.general |

| PTOC13_Op | Unbalanced Load I >> Trip | PTS2_5051OC3phA1/PTOC.ST.Op.general |

| PTOC13_Str | Unbalanced Load I >> picked up | PTS2_5051OC3phA1/PTOC.ST.Str.general |

| PTOC15_Op | Unbalanced Load I2th Trip | PTD1/PTRC.ST.Op.general |

| PTOC15_Str | Unbalanced Load I2th picked up | PTS2_5051OC3phA1/PTOC.ST.Str.general |

| PTTR1_Op | Overload Theta Trip | PTD1_87TrafoDiffProt1/PDIF.ST.Op.general |

| PTTR1_Str | Overload Theta picked up | PTS2_5051OC3phA1/PTOC.ST.Str.general |

| PDIF1_Op_R24 | Differential protection IDIFF > Trip | PTD1_87TrafoDiffProt1/PDIF.ST.Op.general |

| PDIF1_Str_R25 | Differential protection IDIFF > picked up | PTD1/PTRC.ST.Str.general |

| PDIF2_Op_R26 | Differential protection IDIFF >> Trip | PTD1_87TrafoDiffProt1/PTRC.ST.Op.general |

| PDIF2_Str_R27 | Differential protection IDIFF >> picked up | PTD1_87TrafoDiffProt1/PDIF.ST.Str.general |

| PDIF3_Op_R28 | Differential protection I REF Trip | PTE1/PTRC.ST.Op.general |

| PDIF3_Str_R29 | Differential protection I REF picked up | PTD1_87TrafoDiffProt1/PDIF.ST.Str.general |

| GAPC1_Op_R30 | External trip | PTE1_50N51NOC1phA1/PTRC.ST.Op.general |

| GAPC1_Str_R31 | External trigger | PTD1_87TrafoDiffProt1/PTRC.ST.Str.general |

| RBRF1_OpEx_R32 | Breaker Failure—External Trip | CB1/RBRF.ST.OpEx.general |

| RBRF1_OpIn_R33 | Breaker Failure—Bay Internal Trip | CB1/RBRF.ST.OpIn.general |

| RBRF1_Str_R34 | Breaker Failure detected | PTE1/PTRC.ST.Str.general |

| PTRC1_Op_R35 | Trip signal for CB | PTE1_50N51NOC1phA1/PTOC.ST.Op.general |

| PTRC1_Str_R36 | Trigger signal for CB | PTE1_50N51NOC1phA1/PTRC.ST.Str.general |

| Beh_R1 | Device Behaviour | Application/LLN0.ST.Beh.stVal |

| Health_R2 | Device Health | Application/LLN0.ST.Health.stVal |

| OpCnt_R1 | Switch Count | CB1/XCBR.ST.OpCnt.stVal |

| OpTmh_R2 | Operation Time | Application/LPHD.ST.OpTmh.units.stVal |

| IP_A_P1 | Primary Phase Current A | PTS1_OperationalValues/MMXU.MX.A.phsA.f |

| IP_B_P2 | Primary Phase Current B | PTS1_OperationalValues/MMXU.MX.A.phsA.f |

| IP_C_P3 | Primary Phase Current C | PTS1_OperationalValues/MMXU.MX.A.units.f |

| IS_A_P4 | Secondary Phase Current A | PTS1_OperationalValues/MMXU.MX.A.phsB.f |

| IS_B_P5 | Secondary Phase Current B | PTS1_OperationalValues/MMXU.MX.A.phsB.f |

| IS_C_P6 | Secondary Phase Current C | PTS1_OperationalValues/MMXU.MX.A.units.f |

| IS2_A_P7 | Secondary 2 Phase Current A | |

| IS2_B_P8 | Secondary 2 Phase Current B | |

| IS2_C_P9 | Secondary 2 Phase Current C | |

| f | Frequency | PTS1_OperationalValues/MMXU.MX.Hz.units.f |

| U_AB_P11 | Phase Voltage A-B | PTS1_OperationalValues/MMXU.MX.PPV.phsAB.f |

| U_BC_P12 | Phase Voltage B-C | PTS1_OperationalValues/MMXU.MX.PPV.phsAB.f |

| U_CA_P13 | Phase Voltage C-A | PTS1_OperationalValues/MMXU.MX.PPV.units.f |

| U_n | Voltage neut | |

| TotPF_P15 | Total Power Factor | PTS1_OperationalValues/MMXU.MX.TotPF.f |

| TotP_P16 | Total Active Power | |

| TotQ_P17 | Total Reactive Power | PTS1_OperationalValues/MMXU.MX.TotVAr.units.f |

| TotS | Total Apparent Power | PTS1_OperationalValues/MMXU.MX.TotVA.units.f |

| Tmp1_P19 | Temperature 1 | |

| Tmp2_P20 | Temperature 2 | |

| Tmp3_P21 | Temperature 3 | |

| TmpMax_P22 | Temperature Max | |

| L_RES_Str_P23 | Load Reserve Warning | PTE1_50N51NOC1phA1/PTOC.ST.Str. |

| L_RES_Op_P24 | Load Reserve Alarm | PTE1_50N51NOC1phA1/PTOC.ST.Op. |

| ALT_RATE_P25 | Altering Rate | |

| SupWh_P1 | Accumulated active energy towards busbar | |

| SupVArh_P2 | Accumulated reactive energy towards busbar | |

| DmdWh_P3 | Accumulated active energy from busbar | |

| DmdVArh_P4 | Accumulated reactive energy from busbar | |

Appendix C. Test Results

References

- Ali, N.H.; Borhanuddin, M.A.; Othman, L.M.; Abdel-Latif, K.M. Performance of communication networks for Integrity protection systems based on travelling wave with IEC 61850. Electr. Power Energy Syst. 2018, 95, 664–675.

- Lozano, J.C.; Koneru, K.; Ortiz, N.; Cardenas, A.A. Digital Substations and IEC 61850: A Primer. IEEE Commun. Mag. 2023, 61, 28–34.

- Habib, H.F.; Fawzy, N.; Brahma, S. Performance Testing and Assessment of Protection Scheme Using Real-Time Hardware-in-the-Loop and IEC 61850 Standard. IEEE Trans. Ind. Appl. 2021, 57, 4569–4578.

- Elbez, G.; Keller, H.B.; Hagenmeyer, V. A Cost-efficient Software Testbed for Cyber-Physical Security in IEC 61850-based Substations. In Proceedings of the 2018 IEEE International Conference on Communications, Control, and Computing Technologies for Smart Grids (SmartGridComm), Aalborg, Denmark, 29–31 October 2018; pp. 1–6.

- Da Cruz, A.K.; Lechner, D.; Siemers, C.; Kellermann, A.C.H.; Däubler, L. Novel approaches for the integration of the electrical substation IEC 61850 bay level in modern Industry 4.0 SCADA and Edge Systems based on OPC UA, TSN and MTP Standards. Cad. Pedagógico 2025, 22, 15221.

- Breiman, L. Random Forests. Mach. Learn. 2001, 45, 5–32.

- Chen, T.; Guestrin, C. XGBoost: A Scalable Tree Boosting System. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining (KDD ’16), San Francisco, CA, USA, 13 August 2016; pp. 785–794.

- Reiche, L.; Fay, A. Concept for extending the Module Type Package with energy management functionalities. In Proceedings of the 2022 IEEE 27th International Conference on Emerging Technologies and Factory Automation (ETFA), Stuttgart, Germany, 6–9 September 2022; pp. 1–8. Available online: https://ieeexplore.ieee.org/document/9921612 (accessed on 15 November 2025).

- Process INDUSTRIE 4.0: The Age of Modular Production on the Doorstep to Market Launch. 2019. Available online: https://share.google/fzMcdmcAXUZir0k0s (accessed on 21 November 2025).

- Khan, A. Simulating Intelligence. In Artificial Intelligence: A Guide for Everyone; Springer Nature: Cham, Switzerland, 2024; pp. 105–114.

- Jaboob, A.; Durrah, O.; Chakir, A. Artificial Intelligence: An Overview. In Engineering Applications of Artificial Intelligence; Springer: Berlin/Heidelberg, Germany, 2024; pp. 3–22.

- Stryker, C.; Kavlakoglu, E. What is Artificial Intelligence (AI)? 2024. Available online: https://www.ibm.com/think/topics/artificial-intelligence (accessed on 18 November 2025).

- Khan, A. AI Subfields. In Artificial Intelligence: A Guide for Everyone; Springer Nature: Cham, Switzerland, 2024; pp. 179–195.

- Sheikh, H.; Prins, C.; Schrijvers, E. Artificial Intelligence: Definition and Background. In Mission AI; Springer International Publishing: Cham, Switzerland, 2023; pp. 15–41.

- Witten, I.H.; Frank, E.; Hall, M.A.; Pal, C.J. Data Mining: Practical Machine Learning Tools and Techniques, 4th ed.; Morgan Kaufmann Publishers Inc.: San Francisco, CA, USA, 2016; ISBN 978-0-12-804291-5.

- Kavlakoglu, E. What Is a Decision Tree? 2024. Available online: https://www.ibm.com/de-de/think/topics/decision-trees (accessed on 15 December 2025).

- Brunner, C. IEC 61850 for power system communication. In Proceedings of the IEEE/PES Transmission and Distribution Conference and Exposition, Chicago, IL, USA, 21–24 April 2008; pp. 1–6. Available online: https://ieeexplore.ieee.org/document/4517287 (accessed on 15 November 2025).

- Aftab, M.A.; Hussain, S.S.; Ali, I.; Ustun, T.S. IEC 61850 based substation automation system: A survey. Int. J. Electr. Power Energy Syst. 2020, 120, 106008.

- Cavalieri, S. Semantic Interoperability between IEC 61850 and oneM2M for IoT-Enabled Smart Grids. Sensors 2021, 21, 2571.

- Huang, Z.; Gao, L.; Yang, Y. IEC 61850 Standards and Configuration Technology. In IEC 61850-Based Smart Substations; Academic Press: Oxford, UK, 2019; pp. 25–62.

- IEC 61850-6; Communication Networks and Systems for Power Utility Automation—Part 6: Configuration Description Language for Communication in Power Utility Automation Systems Related to IEDs. IEC: Geneva, Switzerland, 2018; ISBN 978-2-8322-5802-6.

- Hou, S.; Liu, W.; Kong, F. IEC 61850 Gateway SCL Configuration Document Research. In Proceedings of the 2013 Third International Conference on Intelligent System Design and Engineering Applications, Hong Kong, China, 16–18 January 2013; pp. 824–831. Available online: https://ieeexplore.ieee.org/abstract/document/6455739 (accessed on 15 November 2025).

- Della Giustina, D.; Sotomayor, A.A.; Dedè, A.; Ramos, F. A Model-Based Design of Distributed Automation Systems for the Smart Grid: Implementation and Validation. Energies 2020, 13, 3560.

- Stock, S.; Babazadeh, D.; Becker, C. Applications of Artificial Intelligence in Distribution Power System Operation. IEEE Access 2021, 9, 150098–150119. Available online: https://ieeexplore.ieee.org/document/9599712 (accessed on 15 November 2025).

- Alam, M.M.; Hossain, M.J.; Habib, M.A.; Arafat, M.Y.; Hannan, M.A. Artificial intelligence integrated grid systems: Technologies, potential frameworks, challenges, and research directions. Renew. Sustain. Energy Rev. 2025, 211, 115251.

- Ahmadi, M.; Aly, H.; Gu, J. A comprehensive review of AI-driven approaches for smart grid stability and reliability. In Renewable and Sustainable Energy Reviews; Elsevier: Amsterdam, The Netherlands, 2026; p. 116424.

- Saravanan, R.; Sujatha, P. A State of Art Techniques on Machine Learning Algorithms: A Perspective of Supervised Learning Approaches in Data Classification. In Proceedings of the 2018 Second International Conference on Intelligent Computing and Control Systems (ICICCS), Madurai, India, 14–15 June 2018; IEEE: New York, NY, USA, 2018; pp. 945–949. Available online: https://ieeexplore.ieee.org/document/8663155 (accessed on 15 November 2025).

- N Rincy, T.; Gupta, R. A Survey on Machine Learning Approaches and Its Techniques. In Proceedings of the 2020 IEEE International Students’ Conference on Electrical, Electronics and Computer Science (SCEECS), Bhopal, India, 22–23 February 2020; pp. 1–6. Available online: https://ieeexplore.ieee.org/document/9087123/authors (accessed on 15 November 2025).

- Pedregosa, F.; Varoquaux, G.; Gramfort, A.; Michel, V.; Thirion, B.; Grisel, O.; Blondel, M.; Prettenhofer, P.; Weiss, R.; Dubourg, V.; et al. Scikit-learn: Machine Learning in Python. J. Mach. Learn. Res. 2011, 12, 2825–2830. Available online: https://dl.acm.org/doi/10.5555/1953048.2078195 (accessed on 15 November 2025).

- McKinney, W. Data Structures for Statistical Computing in Python. In Proceedings of the 9th Python in Science Conference (SciPy), Austin, TX, USA, 28 June–3 July 2010; pp. 56–61.

- xml.etree.ElementTree—The ElementTree XML API. Available online: https://docs.python.org/3/library/xml.etree.elementtree.html (accessed on 26 November 2025).

- Harris, C.R.; Millman, K.J.; van der Walt, S.J.; Gommers, R.; Virtanen, P.; Cournapeau, D.; Wieser, E.; Taylor, J.; Berg, S.; Smith, N.J.; et al. Array programming with NumPy. Nature 2020, 585, 357–362.

- Hunter, J.D. Matplotlib: A 2D Graphics Environment. Comput. Sci. Eng. 2007, 9, 90–95.

- Waskom, M.L. Seaborn: Statistical Data Visualization. J. Open Source Softw. 2021, 6, 3021.

- PEP 8—Style Guide for Python Code. Available online: https://peps.python.org/pep-0008 (accessed on 26 November 2025).

- IEC 61850-7-4; Communication Networks and Systems for Power Utility Automation—Part 7-4: Basic Communication Structure—Compatible Logical Node Classes and Data Object Classes. IEC: Geneva, Switzerland, 2020; ISBN 978-2-8322-7890-1.

- Process Control System PCS 7 PowerControl Library Objects. Available online: https://cache.industry.siemens.com/dl/files/788/109799788/att_1079692/v1/PCS7_PowerControlObjects_en.pdf (accessed on 26 November 2025).

- IEC 61850 Station Gateway for TIA Portal. Available online: https://support.industry.siemens.com/cs/ww/de/view/109820678 (accessed on 26 November 2025).

- Advanced Power Control Library for SIMATIC WinCC OA. Available online: https://support.industry.siemens.com/cs/ww/en/view/109995244 (accessed on 26 November 2025).

| Metrics | Random Forest | XGBoost | Logistic Regression |

|---|---|---|---|

| Confusion Matrix | + | + | + |

| Confidence Histogram | + | − | 0 |

| Calibration Curve | + | 0 | 0 |

| Precision–Recall Curve | + | + | + |

| ROC Curve | + | + | + |
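To make the first metric in the table concrete, the sketch below computes confusion-matrix counts and the precision and recall derived from them in plain Python. It is illustrative only: the label values and predictions are invented for the example, the function names are hypothetical, and the reported results would in practice come from a library such as scikit-learn.

```python
from collections import Counter


def confusion_counts(y_true, y_pred):
    """Count (true label, predicted label) pairs."""
    return Counter(zip(y_true, y_pred))


def precision_recall(y_true, y_pred, positive):
    """Precision and recall for one class treated as the positive class."""
    counts = confusion_counts(y_true, y_pred)
    tp = counts[(positive, positive)]
    fp = sum(n for (t, p), n in counts.items() if p == positive and t != positive)
    fn = sum(n for (t, p), n in counts.items() if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


if __name__ == "__main__":
    # Invented labels and predictions, for illustration only.
    y_true = ["FEEDER", "FEEDER", "TRAFO", "TRAFO", "FEEDER", "TRAFO"]
    y_pred = ["FEEDER", "TRAFO", "TRAFO", "TRAFO", "FEEDER", "FEEDER"]
    print(dict(confusion_counts(y_true, y_pred)))
    print(precision_recall(y_true, y_pred, positive="FEEDER"))
```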


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Kniphoff da Cruz, A.; Hackenhaar Kellermann, A.C.; Meinhardt Swarowsky, J.V.; da Silva, I.C.; Jochims Kniphoff da Cruz, M.E.; Däubler, L. Using Artificial Intelligence to Classify IEDs’ Control Scope from SCL Files. Processes 2026, 14, 206. https://doi.org/10.3390/pr14020206
