An Adaptive Early Warning Method for Wind Power Prediction Error

Abstract

1. Introduction

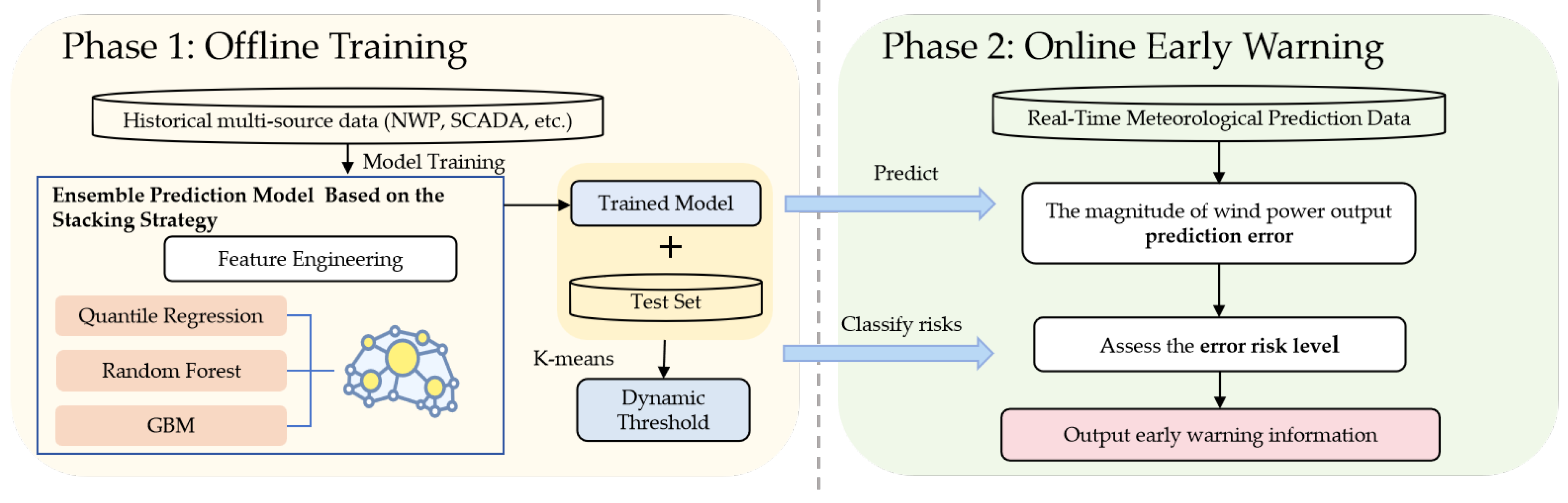

- Develop an end-to-end framework that directly maps NWP data to risk warnings, filling a technical gap in operational error management.

- Design a multi-model ensemble using Stacking integration, combining Quantile Regression, Random Forest, and Gradient Boosting to handle the complexity of error prediction.

- Introduce data-driven dynamic thresholds through K-means clustering, overcoming the limitations of fixed thresholds under varying conditions.

2. From Forecasting to Warning: A Paradigm Shift in Error Management

2.1. The Fundamental Challenge

2.2. Architecting the Solution: A Dual-Stage Framework

3. Core Technologies of the Early Warning Method

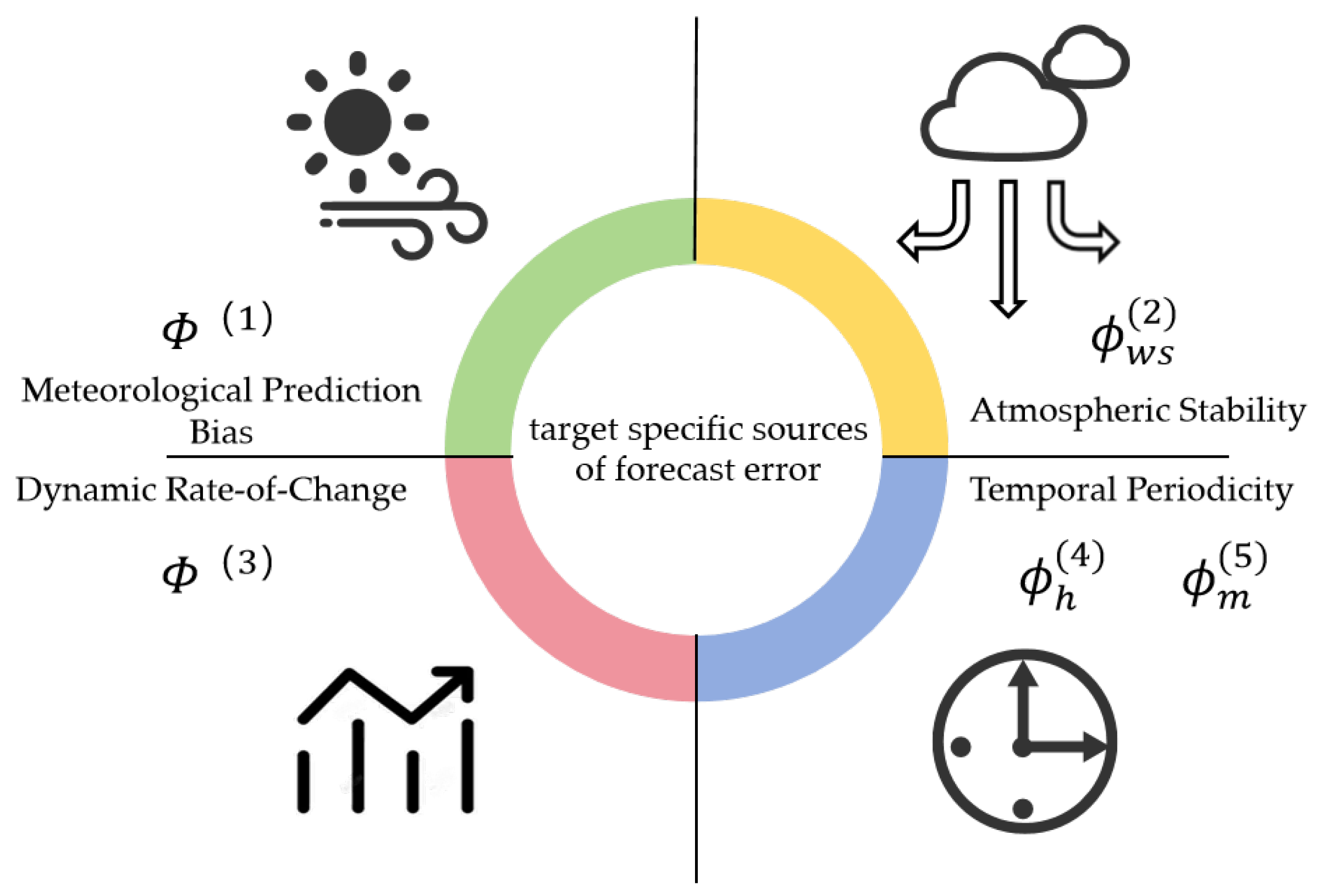

3.1. Feature Engineering: Capturing Error Generation Mechanisms

3.1.1. Problem Formulation

3.1.2. Meteorological Prediction Bias Features

3.1.3. Atmospheric Stability Features

3.1.4. Dynamic Rate-of-Change Features

3.1.5. Temporal Periodicity Features
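The exact feature definitions for Sections 3.1.1 to 3.1.5 are not reproduced in this text. The sketch below is a hypothetical illustration of how each named feature family could be computed from 15-min records. Several choices are our assumptions rather than the authors' definitions: the target (absolute error in MW), the bias feature (NWP minus measured wind speed), the power-law wind shear exponent used as the stability feature, and the sine/cosine encoding of time of day.

```python
import math

STEPS_PER_DAY = 96  # 15-min resolution -> 96 samples per day


def error_magnitude(actual_mw, predicted_mw):
    """Assumed target: absolute prediction error in MW."""
    return [abs(a - p) for a, p in zip(actual_mw, predicted_mw)]


def nwp_bias(nwp_speed, measured_speed):
    """Assumed bias feature: NWP wind speed minus measured wind speed (m/s)."""
    return [f - m for f, m in zip(nwp_speed, measured_speed)]


def shear_exponent(v_low, v_high, h_low, h_high):
    """Power-law wind shear exponent between two heights, a common stability proxy."""
    return math.log(v_high / v_low) / math.log(h_high / h_low)


def rate_of_change(series):
    """First difference per 15-min step; the first sample has no predecessor, so 0.0."""
    return [0.0] + [b - a for a, b in zip(series, series[1:])]


def time_of_day_encoding(step_index):
    """Map a 15-min step index to (sin, cos) so that 23:45 and 00:00 end up adjacent."""
    angle = 2 * math.pi * (step_index % STEPS_PER_DAY) / STEPS_PER_DAY
    return math.sin(angle), math.cos(angle)
```

The cyclic encoding avoids the artificial jump that a raw hour index shows at midnight. The same idea would extend to day-of-year seasonality if that were also needed.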

3.2. Multi-Model Ensemble for Error Magnitude Prediction

3.2.1. Base Learner Construction

1. Quantile Regression (QR): Uncertainty Quantification

2. Random Forest (RF): Robustness in High-Dimensional Noise

3. Gradient Boosting Machine (GBM): Deep Non-Linear Pattern Mining
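Quantile Regression estimates a conditional quantile by minimizing the pinball (check) loss instead of squared error. This is what lets it express uncertainty in the error magnitude. The pure-Python sketch below shows that loss and a brute-force sanity check: for a constant predictor, the loss is minimized at an empirical τ-quantile. The helper names are hypothetical. The target quantiles used in the paper are not recoverable from this text.

```python
def pinball_loss(y_true, y_pred, tau):
    """Mean pinball (check) loss for quantile level tau in (0, 1)."""
    if not 0.0 < tau < 1.0:
        raise ValueError("tau must lie strictly between 0 and 1")
    total = 0.0
    for y, q in zip(y_true, y_pred):
        residual = y - q
        # Under-prediction (residual > 0) costs tau per unit;
        # over-prediction (residual < 0) costs (1 - tau) per unit.
        total += max(tau * residual, (tau - 1.0) * residual)
    return total / len(y_true)


def best_constant_quantile(y_true, tau, candidates):
    """Return the candidate constant with the lowest pinball loss (brute force)."""
    return min(candidates, key=lambda c: pinball_loss(y_true, [c] * len(y_true), tau))


errors_mw = [1.0, 2.0, 3.0, 4.0, 10.0]
median_like = best_constant_quantile(errors_mw, 0.5, errors_mw)
upper_like = best_constant_quantile(errors_mw, 0.9, errors_mw)
```

With τ = 0.5 the best constant is the median (3.0). With τ = 0.9 the asymmetric penalty pushes it up to the largest value (10.0), which is why high quantiles are useful for flagging large errors.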

3.2.2. Stacking Integration

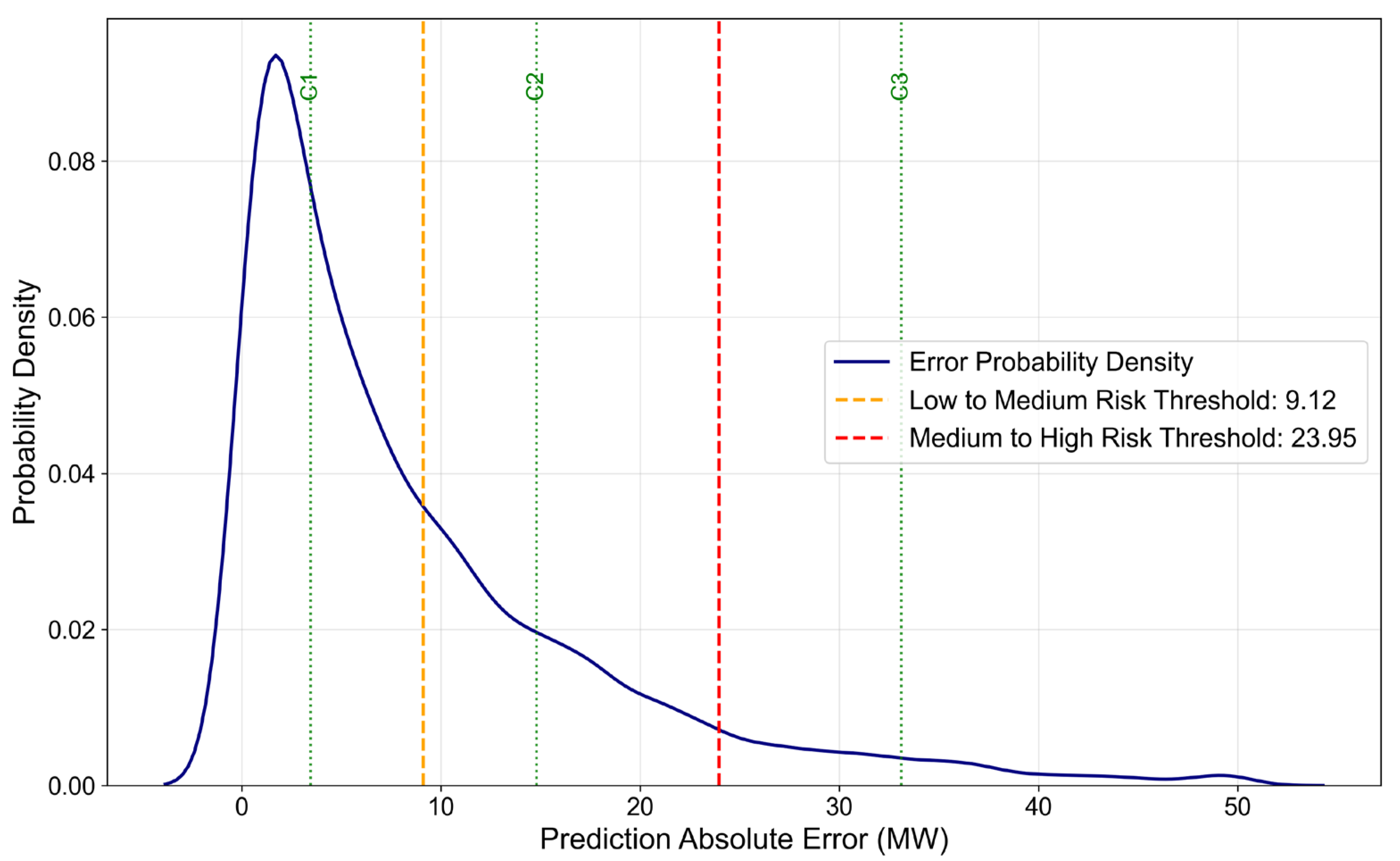
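In Stacking, the second-layer model is fitted on base-learner predictions for samples those learners did not see during training, so it learns how much to trust each learner. Section 4.1.2 states only the first layer (QR, RF, GBM). Because Linear Regression (LR) appears in the abbreviation list, the sketch below assumes a linear meta-learner with an intercept, fitted by ordinary least squares in pure Python. The function names are hypothetical.

```python
def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented copy, inputs untouched
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("singular system: base predictions are collinear")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_linear_meta(base_preds, y):
    """Least-squares weights [intercept, w_qr, w_rf, w_gbm] via the normal equations.

    base_preds: rows of out-of-sample predictions [p_qr, p_rf, p_gbm].
    """
    rows = [[1.0] + list(p) for p in base_preds]
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * t for r, t in zip(rows, y)) for i in range(k)]
    return solve_linear_system(xtx, xty)


def predict_meta(weights, base_pred):
    """Combine one row of base-learner predictions with the fitted weights."""
    return weights[0] + sum(w * p for w, p in zip(weights[1:], base_pred))
```

Training the meta-learner on in-sample base predictions would reward whichever learner overfits most. This is why out-of-fold or hold-out predictions are the usual input.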

3.3. Adaptive Dynamic Risk Thresholds

3.3.1. From Prediction to Risk Classification

3.3.2. Adaptive Threshold via K-Means Clustering

3.3.3. Threshold Calculation from Clusters
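Sections 3.3.2 and 3.3.3 derive risk thresholds from clusters, but the threshold rule itself is not given in this text. The sketch below assumes one plausible choice. It runs one-dimensional K-means (k = 3 with k-means++ seeding, matching Section 4.1.2) on validation-set error magnitudes and places each threshold at the midpoint between adjacent sorted centroids. The function names, the midpoint rule and the `tol` default are hypothetical; the paper's convergence threshold is not recoverable here.

```python
import random


def kmeans_1d(values, k=3, tol=1e-6, max_iter=300, seed=0):
    """Lloyd's K-means on scalar values with k-means++ seeding; returns sorted centroids."""
    if len(set(values)) < k:
        raise ValueError("need at least k distinct values")
    rng = random.Random(seed)
    centroids = [rng.choice(values)]
    while len(centroids) < k:
        # k-means++: draw the next centroid with probability proportional to D(x)^2,
        # the squared distance to the nearest centroid chosen so far.
        d2 = [min((v - c) ** 2 for c in centroids) for v in values]
        centroids.append(rng.choices(values, weights=d2, k=1)[0])
    for _ in range(max_iter):
        clusters = [[] for _ in range(k)]
        for v in values:
            nearest = min(range(k), key=lambda i: abs(v - centroids[i]))
            clusters[nearest].append(v)
        # Keep the previous centroid if a cluster ends up empty.
        new = [sum(c) / len(c) if c else centroids[i] for i, c in enumerate(clusters)]
        shift = max(abs(a - b) for a, b in zip(new, centroids))
        centroids = new
        if shift < tol:
            break
    return sorted(centroids)


def thresholds_from_centroids(centroids):
    """Assumed rule: boundaries at midpoints between adjacent sorted centroids."""
    return [(a + b) / 2.0 for a, b in zip(centroids, centroids[1:])]


def classify_risk(error_mw, thresholds, labels=("low", "medium", "high")):
    """Map a predicted error magnitude (MW) to a risk level."""
    for threshold, label in zip(thresholds, labels):
        if error_mw < threshold:
            return label
    return labels[len(thresholds)]
```

Re-running the clustering whenever the validation window is refreshed would move the thresholds with the observed error distribution. A fixed threshold cannot adapt in this way.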

3.4. Performance Metrics

3.4.1. Regression Metrics for Error Magnitude
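The formulas for Section 3.4.1 are not reproduced in this text. The sketch below uses the standard definitions of MAE and RMSE (both listed among the abbreviations), applied to predicted versus actual error magnitudes in MW.

```python
import math


def mae(y_true, y_pred):
    """Mean absolute error, in the units of the inputs (MW here)."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root mean square error; squaring makes large misses weigh more than in MAE."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))
```

RMSE is never smaller than MAE on the same data. A wide gap between the two signals that a few large misses dominate the error.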

3.4.2. Classification Metrics for Risk Warning

1. High-Risk Recall

2. High-Risk Precision

3. High-Risk F1 Score
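The metric formulas are missing from this text. Assuming the standard one-vs-rest definitions with "high" as the positive class, Recall = TP / (TP + FN), Precision = TP / (TP + FP), and F1 is their harmonic mean. The sketch below also computes overall accuracy, which is reported alongside high-risk recall in the results. The label strings are illustrative.

```python
def high_risk_scores(y_true, y_pred, positive="high"):
    """Precision, recall and F1 with the high-risk class treated as positive."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def overall_accuracy(y_true, y_pred):
    """Fraction of samples whose risk level is predicted exactly."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


truth = ["high", "low", "high", "medium", "high"]
warned = ["high", "high", "low", "medium", "high"]
precision, recall, f1 = high_risk_scores(truth, warned)
accuracy = overall_accuracy(truth, warned)
```

For an early warning system, recall counts missed high-risk periods (false negatives), while precision counts false alarms. This is why the two are reported separately.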

4. Results and Analysis

4.1. Dataset and Experimental Setup

4.1.1. Dataset Description

- Historical actual power output data from the wind farm (SCADA): The 15-min average net output power recorded by the wind farm’s monitoring system.

- Historical predicted power output data from the wind farm: Day-ahead predicted power generated by the commercial forecasting system used by the wind farm operator.

- Historical measured meteorological data from the wind farm: Including wind speed and direction at multiple altitudes, as well as temperature, atmospheric pressure, and humidity.

- NWP historical weather forecast data: Containing weather type, wind speed and direction at multiple altitudes, as well as temperature and atmospheric pressure.

4.1.2. Experimental Configuration

1. Quantile Regression

   - Target Quantiles: ;

   - Regularization Type: L2 Regularization;

   - Regularization Parameter: 0.01.

2. Random Forest

   - Number of Decision Trees: 200;

   - Maximum Tree Depth: 15;

   - Feature Sampling Ratio: 0.7.

3. Gradient Boosting Machine

   The Gradient Boosting Machine used the following hyperparameters:

   - Learning Rate: 0.05;

   - Number of Estimators: 150.

4. Stacking Ensemble

   The Stacking Ensemble model used a two-layer structure. The first layer consists of the QR, RF, and GBM models described above.

5. K-Means Clustering

   - Number of Clusters: 3;

   - Initial Centroid Selection: K-means++ algorithm;

   - Convergence Threshold: .

4.1.3. Validation Strategy

1. Time-Series Stratified Data Splitting

   Unlike random data splitting, the dataset was divided chronologically to mimic practical forecasting cycles:

   - Training Set (January 2023–February 2024, 14 months): Used for initial model fitting and feature importance analysis.

   - Validation Set (March 2024–July 2024, 5 months): Used exclusively for hyperparameter tuning and calibration of the K-means cluster centers. It does not overlap with the training set, which prevents overfitting to specific time periods.

   - Test Set (August 2024–December 2024, 5 months): A fully independent dataset used for final performance evaluation, so that the results reflect the method’s ability to generalize to unseen future data.

2. Time-Series 5-Fold Cross-Validation
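This text does not describe how the five folds are formed. A common scheme, assumed here, is an expanding window: each fold trains on all samples up to a cut-off and validates on the block that follows, so no fold ever trains on future data. The sketch mirrors the default behavior of scikit-learn's `TimeSeriesSplit`, but it is written in plain Python. The function name is hypothetical.

```python
def expanding_window_folds(n_samples, n_folds=5):
    """Yield (train_indices, test_indices) with all training data preceding test data."""
    test_size = n_samples // (n_folds + 1)
    if test_size == 0:
        raise ValueError("not enough samples for the requested number of folds")
    first_test_start = n_samples - n_folds * test_size
    for i in range(n_folds):
        start = first_test_start + i * test_size
        # Train on everything before the test block; test on the next block.
        yield list(range(start)), list(range(start, start + test_size))


folds = list(expanding_window_folds(12, n_folds=5))
```

With 12 samples and 5 folds, the first fold trains on indices 0 and 1 and tests on 2 and 3. The last fold trains on indices 0 to 9 and tests on 10 and 11.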

4.2. Experimental Results

4.2.1. Performance of the Error Prediction Ensemble Model

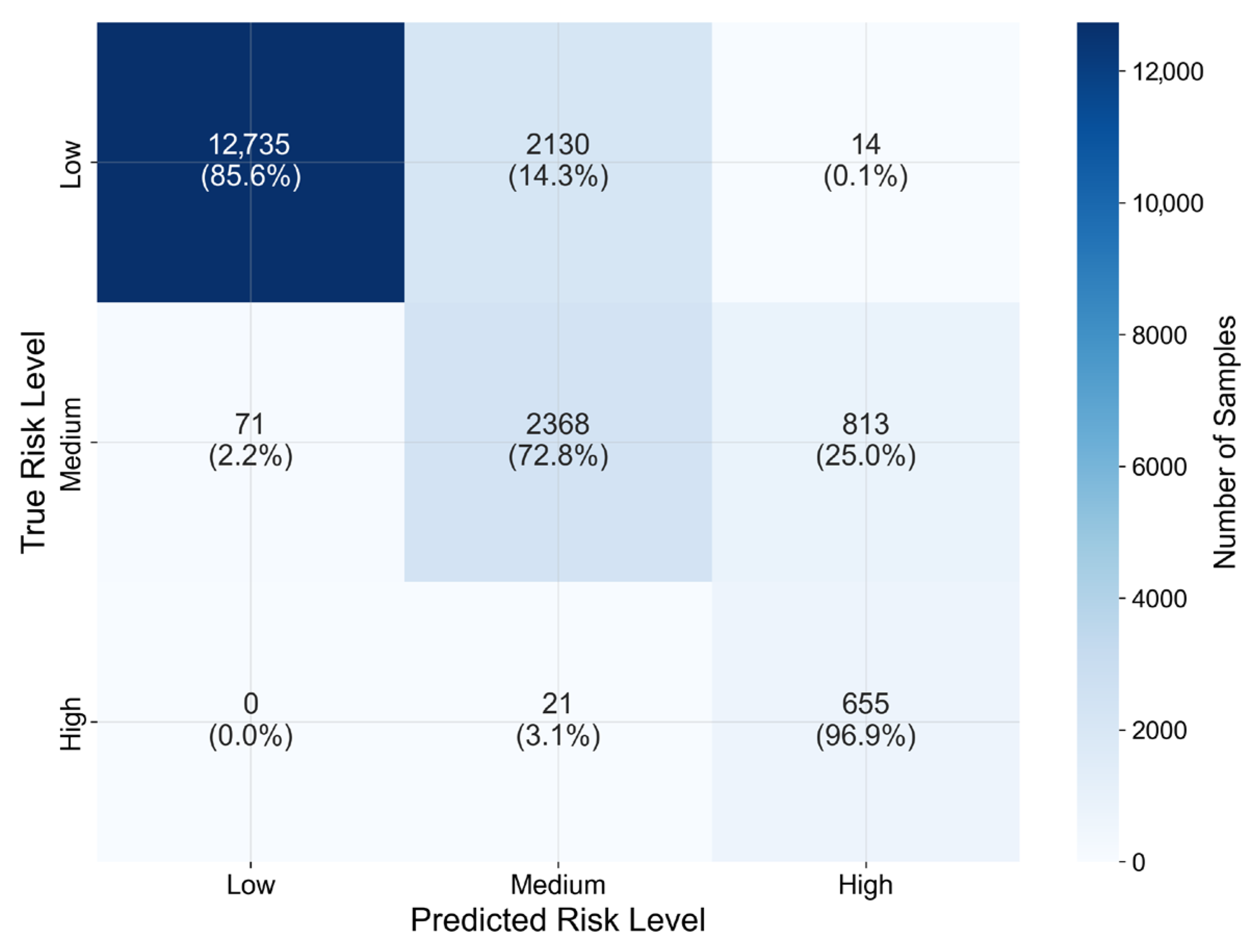

4.2.2. Effectiveness Validation of the Dynamic Threshold Mechanism

4.2.3. Performance Analysis Under a Typical Extreme Weather Event

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| NWP | Numerical Weather Prediction |
| QR | Quantile Regression |
| RF | Random Forest |
| GBM | Gradient Boosting Machine |
| LR | Linear Regression |
| MAE | Mean Absolute Error |
| RMSE | Root Mean Square Error |
| SCADA | Supervisory Control and Data Acquisition |


| Model | MAE (MW) | RMSE (MW) |
|---|---|---|
| Quantile Regression | 3.066 | 5.101 |
| Random Forest | 2.852 | 4.597 |
| Gradient Boosting Machine | 2.864 | 4.593 |
| Ensemble model | 2.801 | 4.477 |

| Threshold Method | Overall Accuracy | High-Risk Recall |
|---|---|---|
| Dynamic Threshold | 0.838 | 0.969 |
| Fixed Threshold | 0.802 | 0.897 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Zhang, L.; He, F.; Chen, M.; He, C.; Huang, Z.; Wang, C.; Yan, L. An Adaptive Early Warning Method for Wind Power Prediction Error. Processes 2025, 13, 3941. https://doi.org/10.3390/pr13123941
