1. Introduction

Long-range dependence has been a topic of interest in econometrics since Granger’s study on the shape of the spectrum of economic variables (

Granger 1966). The author found that

long-term fluctuations in economic variables, if decomposed into frequency components, are such that the amplitudes of the components decrease smoothly with decreasing period. As shown by

Adenstedt (

1974), this type of behavior implies long-lasting autocorrelations, that is they exhibit long-range dependence. In finance, long-range dependence has been estimated in volatility measures, inflation, and energy prices; see, for instance,

Baillie et al. (

2019),

Vera-Valdés (

2021b),

Hassler and Meller (

2014), and

Ergemen et al. (

2016).

In the time series literature, the fractional difference operator has become one of the most popular methods to model long-range dependence. Notwithstanding its popularity, Granger argued that processes generated by the fractional difference operator fall into the area of “empty boxes”,

about theory—either economic or econometric—on topics that do not arise in the actual economy (

Granger 1999). Moreover,

Veitch et al. (

2013) showed that fractionally differenced processes are brittle in the sense that small deviations such as adding small independent noise change the asymptotic variance structure qualitatively.

This paper developed an econometric-based model for long-range dependence to alleviate these concerns. One of the most cited theoretical explanations behind the presence of long-range dependence in real data is cross-sectional aggregation (

Granger 1980). We used cross-sectional aggregation as the inspiration for a nonfractional long-range-dependent model that arises in the actual economy.

The proposed model is simple to implement in real applications. In particular, we present two algorithms to generate long-range dependence by cross-sectional aggregation with similar computational requirements as the fractional difference operator. One is based on the linear convolution form of the process, while the second uses the discrete Fourier transform. The proposed algorithms are exact in the sense that no approximation to the number of aggregating units is needed. We showed that the algorithms can be used to reduce computational times for all sample sizes.

Moreover, we proved that cross-sectionally aggregated processes do not possess the antipersistent properties. We argue that these are restrictions imposed by the fractional difference operator that may not hold in real data. In this regard, the proposed model relaxes these restrictions, and it is thus less brittle than fractional differencing.

We showed that relaxing the antipersistent restrictions has implications for semiparametric estimators of long-range dependence in the frequency domain. In particular, we proved that estimators based on the log-periodogram regression are misspecified for long-range-dependent processes generated by cross-sectional aggregation. To solve the misspecification issue, we developed the maximum likelihood estimator for cross-sectionally aggregated processes. We used the recursive nature of the Beta function to speed up the computations. The estimator inherits the statistical properties of the maximum likelihood.

Finally, as an application, this paper illustrated how we can approximate a fractionally differenced process with a theoretically-based cross-sectionally aggregated one. Thus, we demonstrated that we can model similar behavior as the one induced by the fractional difference operator while providing theoretical support. Moreover, we used temperature data to show that the model provides a better, theoretically supported, fit to real data than the fractional difference operator when the source of long-range dependence is cross-sectional aggregation.

This paper proceeds as follows. In

Section 2, we present two distinct ways to generate long-range-dependent processes.

Section 3 discusses three different ways to generate cross-sectionally aggregated processes, two of them with similar computational requirements as for the fractional difference operator.

Section 4 discusses the antipersistence properties.

Section 5 develops the maximum likelihood estimator for cross-sectionally aggregated processes.

Section 6 shows a way to generate cross-sectionally aggregated processes that closely mimic the ones generated using the fractional difference operator and shows that the model provides a better fit to real data when the source of long-range dependence is cross-sectional aggregation.

Section 7 concludes.

3. Nonfractional Long-Range Dependence Generation

We denote by a series generated by cross-sectional aggregation with autoregressive parameters sampled from the Beta distribution, . The notation makes explicit the origin of the long-range dependence by cross-sectional aggregation and its dependence on the two parameters of the Beta distribution.

One practical difficulty of generating long-range dependence by cross-sectional aggregation is its high computational demands. For each cross-sectionally aggregated process, we need to simulate a vast number of

processes; see (

6).

Haldrup and Vera-Valdés (

2017) suggested that the cross-sectional dimension should increase with the sample size to obtain a good approximation to the limiting process. The computational demands are thus particularly large for long-range dependence generation by cross-sectional aggregation. We argue that the large computational demand may be one of the reasons behind the current reliance on using the fractional difference operator to model long-range dependence. In what follows, we present two algorithms to generate long-range-dependent processes by cross-sectional aggregation with similar computational requirements as fractional differencing.

Haldrup and Vera-Valdés (

2017) obtained the infinite moving average representation of the limiting process in (

6) for the long memory case, that is

or

. Proposition 2 extends their results to the long-range-dependent case with a negative parameter.

Proposition 2. Let for and be defined as in (6). Then, as , can be computed as: where and , for .

The moving average representation for cross-sectionally aggregated processes obtained in Proposition 2 compares to the moving average representation of the fractional difference operator (

2), that is Proposition 2 shows that cross-sectional aggregation can be computed as a linear convolution of the sequences

and

. A practitioner could use this formulation to generate long-range-dependent processes with similar computational requirements as the one for the fractional difference operator.

Furthermore, Theorem 1 presents a way to use the discrete Fourier transform to speed up computations for large sample sizes.

Theorem 1. Let be a sample of size of a process with and , that is let be defined as in (8), then can be computed as the first T elements of the vector:where F is the discrete Fourier transform, is the inverse transform, ⊙ denotes multiplication element-by-element, and , , where is a vector of zeros of size . Furthermore, , , where and , . Proof. Let

be the sample of size

T of a

process with

and

, that is let

be the linear convolution of the series

and

. Define

and

, where

is a vector of zeros of size

, and consider them as periodic sequences of period

, that is we consider the circular convolution of

and

. First, note that by construction:

where the second equality arises given that

for

and

for

. The last equality is true due to the periodicity of

.

Now, let

and

be the discrete Fourier transform of

and

, respectively, that is:

where

with

. Then, for

, we obtain:

where the last equality follows from:

Hence, (

9) proves that we can compute the coefficients of the discrete Fourier transform of

via element-by-element multiplication of the coefficients of the discrete Fourier transforms of

and

. We obtain the desired result by applying the inverse Fourier transform. □

Theorem 1 is an application of the periodic convolution theorem; see

Cooley et al. (

1969), and

Oppenheim and Schafer (

2010). In this sense, it is in line with the discrete Fourier transform algorithm of

Jensen and Nielsen (

2014) for the fractional difference operator, and thus, it achieves similar computational efficiency. Moreover, the algorithm is exact in the sense that no approximation regarding the number of cross-sectional units is required.

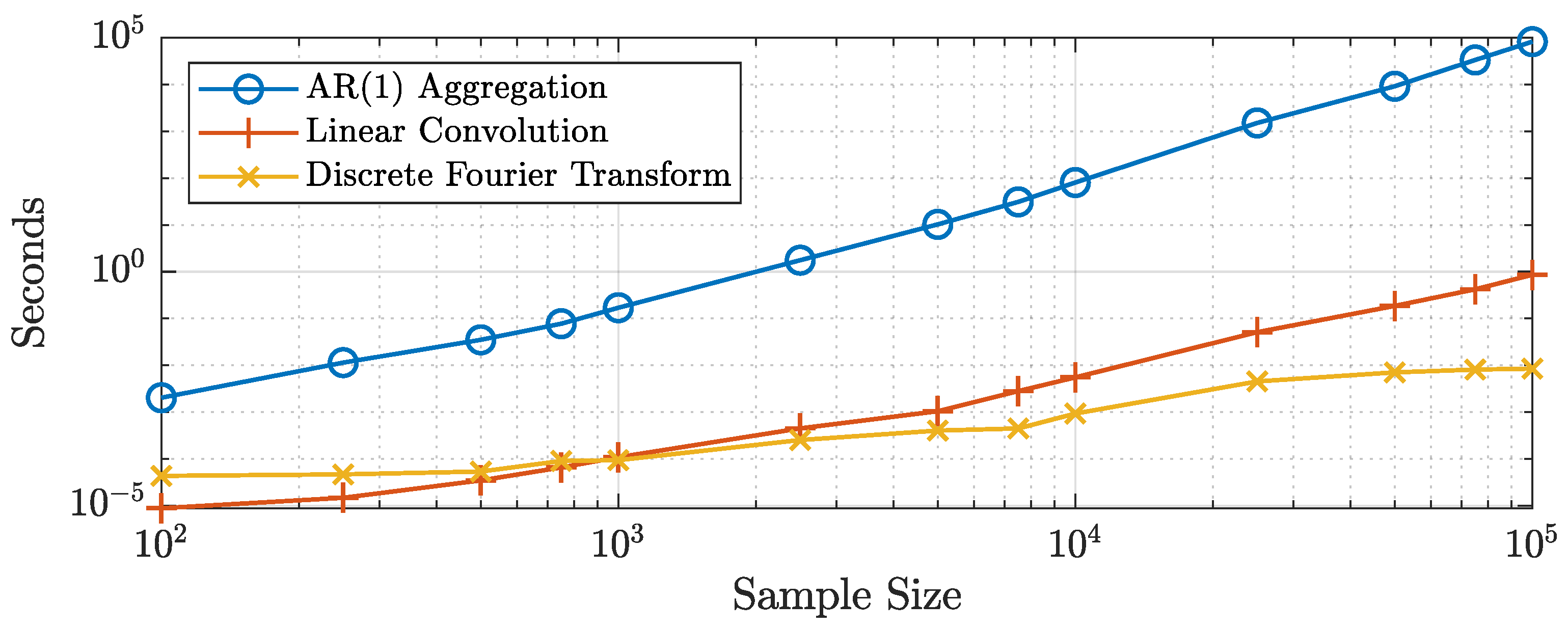

Figure 1 shows the computational times for a MATLAB implementation of the algorithms presented in this paper. The algorithms were run on a computer with an Intel Core i7-7820HQ at 2.90GHz running Windows 10 Enterprise and using the MATLAB 2019b release. Following the results of

Haldrup and Vera-Valdés (

2017), we generated the same number of

processes as the sample size for the standard aggregation algorithm (

6). To make fair comparisons, we used MATLAB’s built-in

filter function to generate the individual

processes and in the linear convolution algorithm. We generated the coefficients in the moving average representation using the recursive form instead of relying on the built-in Beta function, that is:

where

.

The figure shows that the linear convolution and discrete Fourier transform algorithms are several times faster than aggregating independent

processes for all sample sizes. In particular, the figure shows that generating long-range-dependent processes by aggregating

series becomes computationally infeasible as the sample size increases. Regarding the two proposed methods, the figure shows that the discrete Fourier transform algorithm is faster than the linear convolution algorithm for sample sizes greater than 750 observations. Moreover, the relative performance of the discrete Fourier transform algorithm increases with the sample size.

Table 1 presents a subset of the computational times for all algorithms considered.

It took approximately

s to generate one long-range-dependent series of size

by aggregating independent

processes, while more than 80 s to generate a sample of size

. These computational times make it impractical to use this algorithm for Monte Carlo experiments or bootstrap procedures. The computational times for the discrete Fourier transform and the linear convolution algorithms were approximately the same for sample sizes of around

observations. Nonetheless, the former was

-times faster than the latter for

observations. These results suggested using the discrete Fourier transform to generate large samples of long-range-dependent processes by cross-sectional aggregation. Moreover, note that these results are much in line with the ones obtained by

Jensen and Nielsen (

2014) for the fractional difference operator. In this regard, the proposed algorithms to generate long-range dependence have similar computational requirements as those of the fractional difference operator. Codes implementing the discrete Fourier transform algorithm for long-range dependence generation by cross-sectional aggregation in R (

Listing A1) and MATLAB (

Listing A2) are available in

Appendix B.

4. Nonfractional Long-Range Dependence and the Antipersistent Property

It is well known in the long memory literature that the fractional difference operator implies that the autocorrelation function is negative for negative degrees of the parameter

d. The sign of the autocorrelation function for a fractionally differenced process,

, depends on

in the denominator, which is negative for

; see (

3). Furthermore, let

, and let

be its spectral density, then:

where

are given as in (

2); see

Beran et al. (

2013). Thus,

as

for

, that is the fractional difference operator for negative values of the parameter implies a spectral density collapsing to zero at the origin. Moreover, note that the behavior of the spectral density at the origin implies that the coefficients of the moving average representation of a fractionally differenced process with negative degree of long-range dependence sum to zero.

These properties have been named antipersistence in the literature. It is thus necessary to distinguish between long memory and antipersistence for fractionally differenced processes depending on the sign of the long-range dependence parameter. We argue that the antipersistent properties are a restriction imposed by the use of the fractional difference operator. In this regard, the restriction on the sum of the coefficients in the moving average representation may be too strict for real data. It takes but a small deviation on any of the infinite coefficients to violate this restriction, providing further evidence of the brittleness of fractionally differenced processes; see

Veitch et al. (

2013). We showed that

processes do not share these restrictions and are thus less brittle.

First, (

7) demonstrates that the autocorrelation function for

processes only depends on the Beta function, which is always positive.

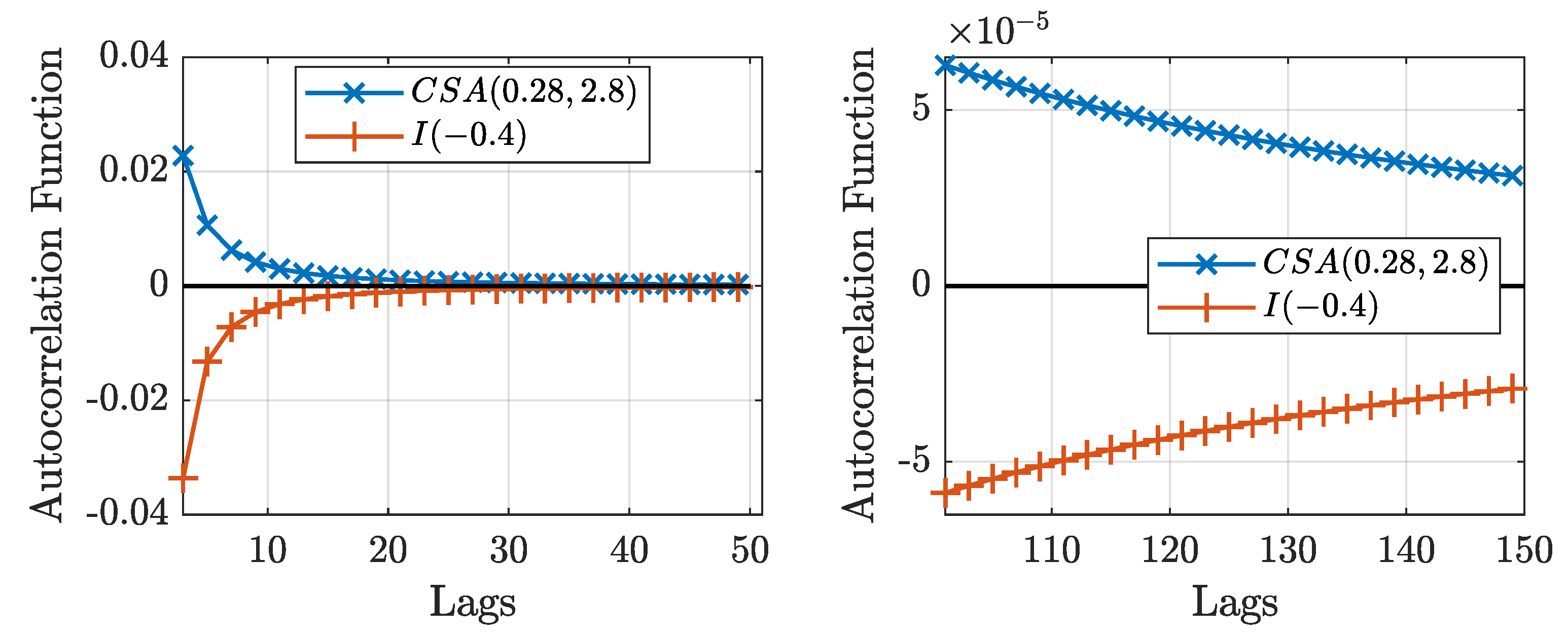

Figure 2 shows the autocorrelation function for an

process and a

process. The figure shows that both processes show the same rate of decay in their autocorrelation functions, but opposite signs.

Then, Theorem 2 proves that the spectral density for processes with converges to a positive constant as the frequency goes to zero.

Theorem 2. Let be defined as in (8) with , and let be its spectral density, then: where depends on the parameters of the Beta distribution.

Proof. Let

be defined as in (

8) with

and

; the spectral density of

at the origin is given by:

where

. Thus,

can be written as:

where in the previous to last equality, we used the large

k asymptotic formula for the ratios of Gamma functions:

(see

Phillips (

2009)) and the convergence of the series is guaranteed from the Euler–Riemann Zeta function. Moreover, note that all terms in the expression are positive. □

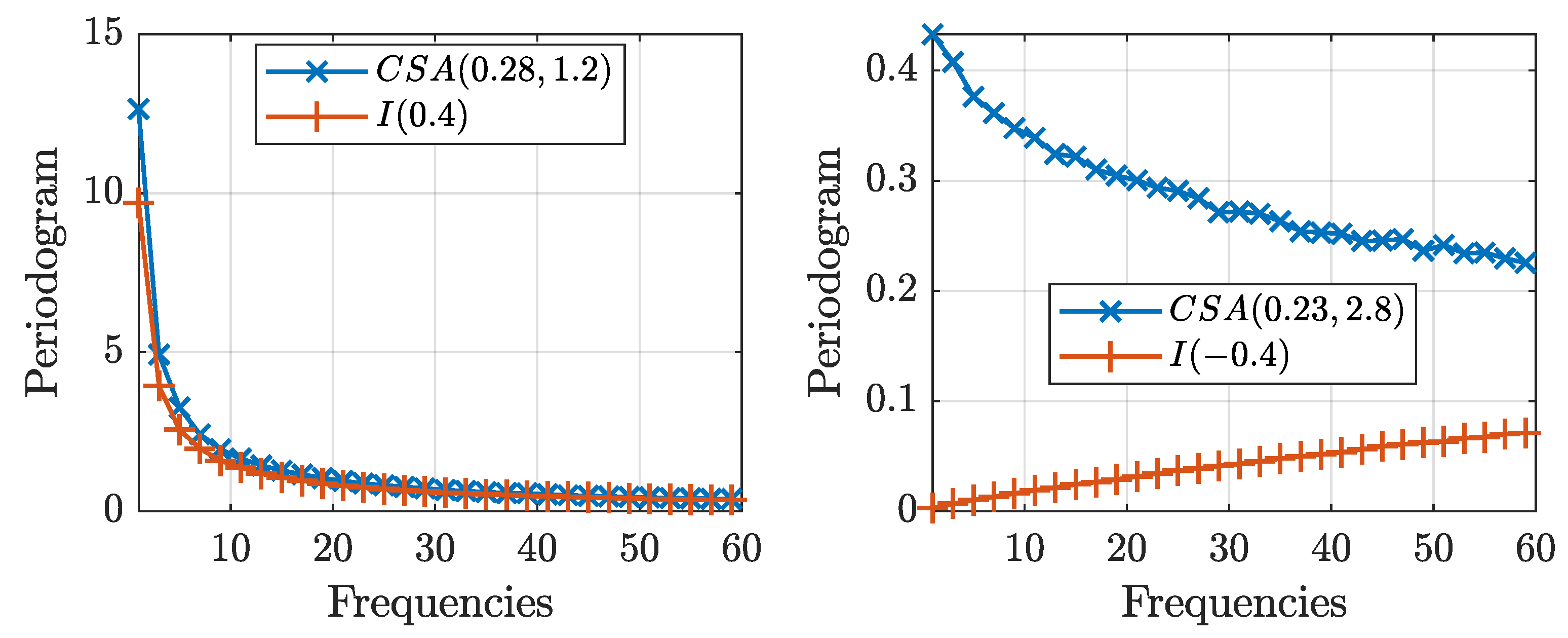

Figure 3 shows the periodogram, an estimate of the spectral density, for the

and

processes of size

averaged for

replications. The figure shows that the periodograms for both processes exhibit similar behavior for positive values of the long-range dependence parameter,

, diverging to infinity at the same rate. Nonetheless, for negative values of the long-range dependence parameter,

, the periodogram collapses to zero as the frequency goes to zero for

processes, while it converges to a constant for

processes. Following the discussion on the definitions for long memory in

Haldrup and Vera-Valdés (

2017), note that Theorem 2 implies that

processes with

are not long memory processes in the spectral sense. Nonetheless,

processes remain long-range-dependent in the covariance sense.

The behavior of the spectral density near zero has implications for estimation and inference. Pointedly, tests for long-range dependence in the frequency domain are affected. These types of tests are based on the behavior of the periodogram as the frequency goes to zero. Tests for long-range dependence in the frequency domain include the log-periodogram regression (see

Geweke and Porter-Hudak (

1983) and

Robinson (

1995b)) and the local Whittle approach (see

Künsch (

1987) and

Robinson (

1995a)).

On the one hand, the log-periodogram regression is given by:

where

is the periodogram,

are the Fourier frequencies,

c is a constant,

is the error term, and

m is a bandwidth parameter that grows with the sample size. On the other hand, the local Whittle estimator minimizes the function:

where

is the Hurst parameter,

is the periodogram, and

m is the bandwidth. From (

10), note that the log-periodogram regression provides an estimate of the long-range dependence parameter for

processes regardless of its sign. As Theorem 2 and

Figure 3 show, these tests will be misspecified for

processes with

.

To illustrate the misspecification problem,

Table 2 reports the long-range dependence parameter estimated by the method of

Geweke and Porter-Hudak 1983,

, the bias-reduced version of

Andrews and Guggenberger 2003,

, and the local Whittle approach by

Künsch (

1987),

, for several values of the long-range dependence parameter for both fractionally differenced and cross-sectionally aggregated processes.

Table 2 shows that the estimator is relatively close to the true parameter for both processes when

, if slightly overshooting it for the cross-sectionally aggregated process, as reported by

Haldrup and Vera-Valdés (

2017). This contrasts the

case. The table shows that the estimator remains precise for the

series, while it incorrectly estimates a value

for the

processes. This is of course not surprising in light of Theorem 2.

In sum, the lack of the antipersistent property in processes shows that care must be taken when estimating the long-range dependence parameters if the fractional difference operator does not generate the long-range dependence. We proved that wrong conclusions can be obtained when estimating the long-range dependence parameter using tests based on the frequency domain if the true nature of the long-range dependence is not the fractional difference operator. This result is particularly relevant in light of Granger’s argument of the fractional difference operator being in the “empty box” of econometric models that do not arise in the actual economy. To correctly estimate the long-range dependence parameter, the next section presents the maximum likelihood estimator, , for the processes.

5. Nonfractional Long-Range Dependence Estimation

Let

be a sample of size

T of a

process, and let

. Under the assumption that the error terms follow a normal distribution,

X follows a normal distribution with the probability density given by:

where

is given by:

with

the autocorrelation function in (

7).

Consider the log-likelihood function given by:

and estimate the parameters by:

The standard asymptotic theory for maximum likelihood estimation, , applies. We have the following theorem.

Theorem 3. Let be a sample of size T of a process with normally distributed error terms, and let . Furthermore, let be given by (11). Then:where stands for the limit in probability. Proof. Notice that

for

,

, or

, which shows that the log-likelihood function is identified. Moreover, the log-likelihood function is continuous and twice differentiable. Thus,

satisfies the standard regularity conditions, and it is thus a consistent estimator; see

Davidson and MacKinnon (

2004). □

Theorem 3 shows that

is a consistent estimator of the true parameters. Nonetheless, the finite sample properties may differ from the asymptotic ones, especially for smaller sample sizes (

Table 3). For implementation purposes, concentrating for

in the log-likelihood reduces the computational burden by reducing the number of parameters to estimate. Let

, and differentiate the log-likelihood with respect to

to obtain:

Thus, the concentrated log-likelihood is given by:

where we discarded the constant and divided by

T to reduce the effect of the sample size on the convergence criteria. Hence, we estimate the parameters by:

and the variance of the error term by:

where we obtain

by substituting the values of

.

Moreover, we used the recursive nature of the Beta function to reduce the computational burden.

Table 3 presents a Monte Carlo experiment for the

. We used

replications with sample sizes

,

, and

. As the table shows, the

estimates become closer to the true values as the sample size increases, in line with Theorem 3.

6. Application

As an application, we show that we can use the extra flexibility of the cross-sectionally aggregated process to approximate a process generated using the fractional difference operator.

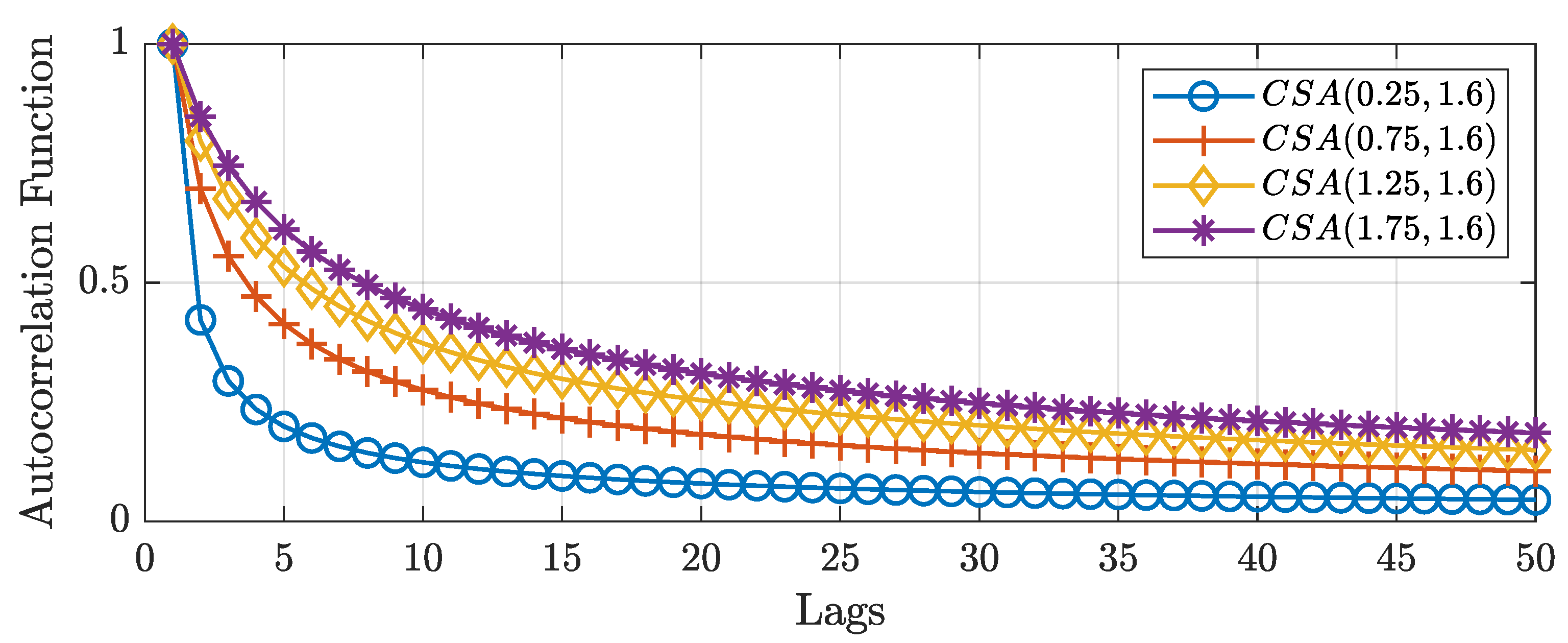

Figure 4 shows the autocorrelation function of cross-sectional aggregated processes for different values of the first parameter of the Beta distribution. The figure shows that as the first parameter increases, so does the autocorrelation function for the initial lags, while maintaining the same long-term behavior. Hence, the first argument models the short-term dynamics. In this regard, cross-sectionally aggregated processes are capable of capturing both short- and long-term dynamics in a single theoretically based framework.

Consider the function given by:

which measures the squared difference between autocorrelations at the first

k lags for

and

processes with the same long-range dynamics. Minimizing (12) with respect to the parameter

a, we find the

process that best approximates a long memory

process up to lag

k, while having the same long-range dependence. Given the different forms of the autocorrelation functions, there is in general no value of the parameter

a that minimizes (12) for all values of

k. For instance,

, while

.

Nonetheless, selecting a medium-sized

k, say

, the approximation turns out to be quite satisfactory in general. In

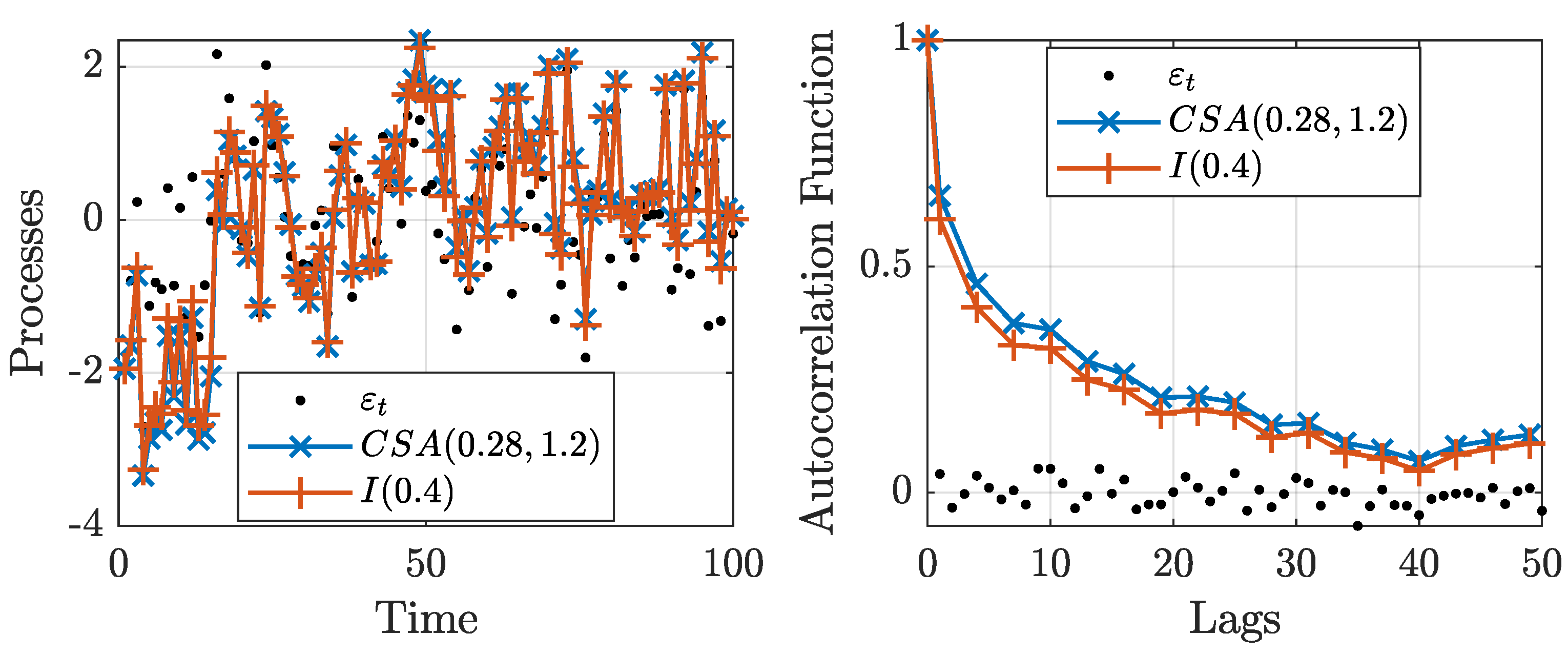

Figure 5, we present a white noise process,

, and long-range-dependent processes. These processes are obtained by using the fractional difference operator with parameter

and using the cross-sectional aggregated algorithm with parameters

and

.

The figure shows that the filtered series are almost identical. Moreover, the autocorrelation functions exhibit similar dynamics. In this context, the fractional difference operator can be viewed as another example of models that generate processes with similar properties to their theoretical explanations, but are not equivalent; see

Portnoy (

2019) for an example with the

model. Thus, the figure shows that it is possible to generate cross-sectionally aggregated processes that closely mimic the ones due to fractional differencing while providing theoretical support for the presence of long-range dependence.

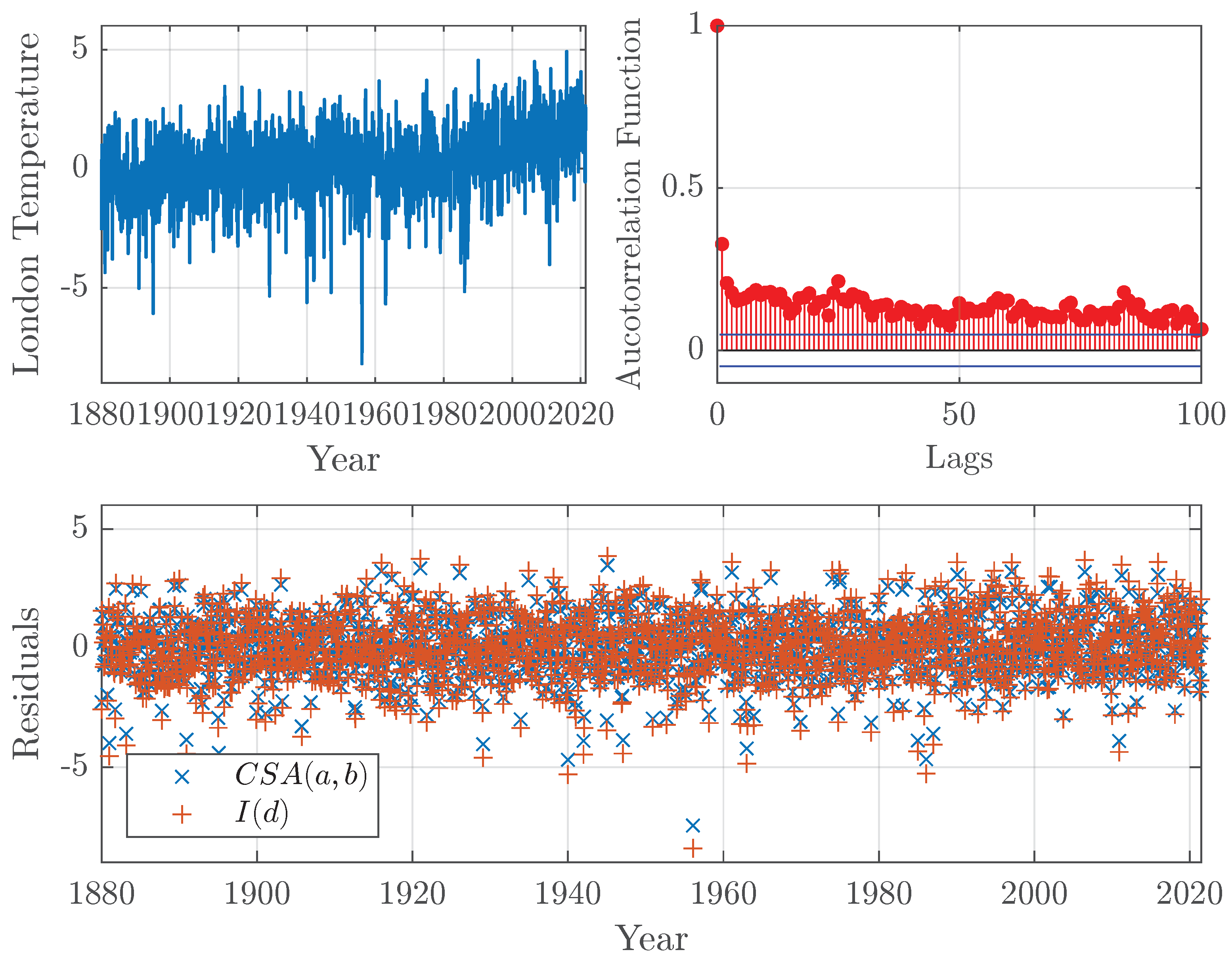

Figure 6 shows an example using temperature data. The data came from GISTEMP, an estimate of global surface temperature change constructed by the NASA Goddard Institute for Space Studies. GISTEMP specifies the temperature anomaly at a given location as the weighted average of the anomalies for all stations located in close proximity. The data are updated monthly and combine data from land and ocean surface temperatures; see

GISTEMP (

2020);

Lenssen et al. (

2019).

The figure shows temperature anomalies for the grid near London, the United Kingdom. The data possess long-range dependence, as seen in their autocorrelation function. To model the long-range dependence, we fit the

and

models to the data. On the one hand, the estimated long-range dependence parameter for the fractional difference model is

. On the other hand, the estimated parameters for the

model using the

developed in

Section 5 are

and

, which correspond to a long-range dependence parameter of

. The residuals from the estimated models are also shown. Note that the residuals from the

model are, on average, smaller than the residuals from the

model. The residual sum squares for the

and

models are 2405 and 3076, respectively. The example shows that the

model extracts more of the dynamics in the data than the fractional difference operator. Hence, given how the data were generated, as the aggregation of temperature series at different stations, the

model provides a better, theoretically supported fit to the data.

7. Conclusions

Granger argued that fractionally integrated processes fall into the “empty box” category of theoretical developments that do not arise in the real economy. Moreover,

Veitch et al. (

2013) argued that “

time series whose long-range dependence scaling derives directly from fractional differencing [...] are far from typical when it comes to their long-range dependence character”. Thus, this paper developed a long-range dependence framework based on cross-sectional aggregation, the most predominant theoretical explanation for the presence of long-range dependence in data. In this regard, this paper developed a framework to model long-range dependence that arises in the real economy, and it is thus not in the “empty box”.

This paper built on the long-range dependence literature by presenting two novel algorithms to generate long-range dependence by cross-sectional aggregation. The algorithms have a similar computational burden as the one for the fractional difference operator. They are exact in the sense that no approximation regarding the number of aggregating units is needed.

Moreover, we studied the antipersistent properties and proved that the autocorrelation function for processes is positive, and the spectral density does not collapse to zero as the frequency goes to zero. We argued that the antipersistent properties are a restriction imposed by the use of the fractional difference operator. We showed that processes do not share these restrictions, and are thus less brittle. The paper showed that the lack of antipersistence has implications for long-range dependence estimators in the frequency domain, which will be misspecified.

To solve the misspecification issue, we developed the maximum likelihood estimator for long-range dependence by cross-sectional aggregation to obtain a consistent estimator. Furthermore, we proposed to reduce the computational burden of the by taking advantage of the recursive nature of the autocorrelation function of cross-sectionally aggregated processes. As an application, we showed that cross-sectionally aggregated processes can approximate a fractionally differenced process.

Our results have implications for applied work with long-range-dependent processes where the source of the long-range dependence is cross-sectional aggregation. We showed on an example using temperature data that the model provides a better fit to the data than the fractional difference operator. We argued that cross-sectionally aggregation is a clear theoretical justification for the presence of long range-dependence. In this regard, this paper backs the case of

Portnoy (

2019)

to employ models only when the underlying model assumptions have clear and convincing scientific justification.