A Systematic Review of Large Language Models in Mental Health: Opportunities, Challenges, and Future Directions

Abstract

1. Introduction

- Psychiatry supports biological and medical needs.

- Psychology explains mental and emotional processes.

- Psychotherapy guides healing, growth, and long-term well-being.

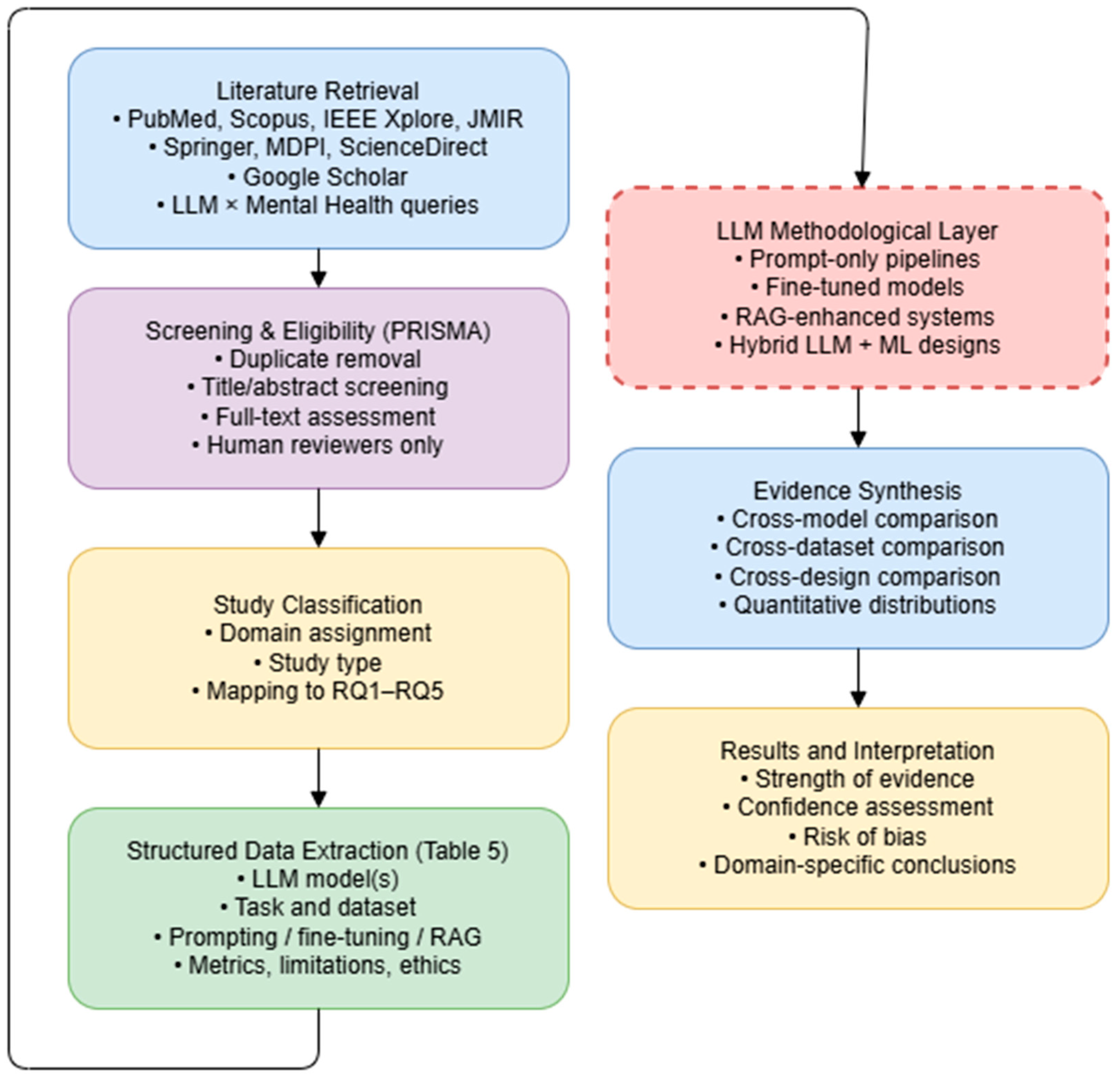

2. Materials and Methods

2.1. Eligibility Criteria

2.2. Study Search Strategy and Selection Process

2.3. Data Collection Process

2.4. Risk of Bias Assessment

2.5. Reporting Bias Assessment

2.6. Strength and Consistency of the Evidence

2.7. Evidence Synthesis and Confidence Assessment

3. Evidence Across Mental Health Domains

3.1. LLMs in Psychiatry

3.1.1. Diagnostic Application

3.1.2. Evidence-Aligned Benchmarking and Retrieval-Augmented Generation (RAG)

3.1.3. Ecological Assessment, Longitudinal Monitoring, and Symptom Inference

3.1.4. Simulation-Based Training, Clinical Education, and Psychoeducational Support

3.1.5. Automated Clinical Documentation and Workflow Optimization

3.1.6. AI-Augmented Therapeutic Interventions and Immersive Treatment Modalities

3.1.7. Personalized Mental Health Support and Emotion-Aware Interaction Systems

3.1.8. Clinical Decision Support and Predictive Analytics

3.1.9. Comparative Clinical Performance and Diagnostic Concordance with Human Clinicians

3.1.10. Bias and Ethical Issues in Clinical Psychiatry LLM Studies

3.1.11. Overview of LLM Applications in Psychiatry

3.1.12. Critical Appraisal of Psychiatric Evidence

3.2. LLMs in Psychology

3.2.1. Language-Based Psychological Signal Detection and Psychopathology Modeling

3.2.2. Stress, Sleep, and Behavior Prediction Using LLM-Enhanced Models

3.2.3. Evaluating and Enhancing LLM Psychological Reasoning Through Prompting

3.2.4. LLMs as Companions and Social Agents: Perceptions of Empathy and Consciousness

3.2.5. Psychometrics, Psychological Assessment, and Personality Modeling with LLMs

3.2.6. LLLM-Augmented Digital Mental Health Interventions and Mental Health Outcomes

3.2.7. Psychological Frameworks Applied to LLM Reasoning, Values, and Biases

3.2.8. Ethical and Safety Risks in Psychological and Therapeutic LLM Applications

3.2.9. Overview of LLM Research in Psychology

3.2.10. Critical Appraisal of LLM-Based Psychological Research

3.3. LLMs in Psychotherapy

3.3.1. AI Psychotherapists and Therapeutic Text Analysis

3.3.2. Therapeutic Chatbots and Adaptive Support Systems

3.3.3. Integration into Clinical Practice and Research Workflows

3.3.4. Model Adaptation, Fine-Tuning, and Therapeutic Quality

3.3.5. Clinical Trials and Therapy Session Summarization

3.3.6. LLM-Supported Psychotherapeutic Approaches

3.3.7. Multimodal, VR, and Robot-Assisted Therapeutic Systems

3.3.8. Ethical and Safety Issues in Psychotherapy-Oriented LLM Use

3.3.9. Overview of LLM Research in Psychotherapy

3.3.10. Critical Appraisal of LLM-Based Psychotherapy Evidence

3.4. Risks, Limitations, and Implementation Barriers in Mental Health LLM Systems

3.5. The Role of the Mental Health Workforce

3.5.1. Overview of LLM Applications for the Clinical Mental Health Workforce

3.5.2. Overall Critical Appraisal and Confidence in Evidence

3.6. Cross-Model, Cross-Dataset, and Cross-Design Comparisons

4. Discussion

Recommendations

5. Conclusions

Future Directions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Olawade, D.B.; Wada, O.Z.; Odetayo, A.; David-Olawade, A.C.; Asaolu, F.; Eberhardt, J. Enhancing mental health with Artificial Intelligence: Current trends and future prospects. J. Med. Surg. Public Health 2024, 3, 100099. [Google Scholar] [CrossRef]

- Gautam, S.; Jain, A.; Chaudhary, J.; Gautam, M.; Gaur, M.; Grover, S. Concept of mental health and mental well-being, it’s determinants and coping strategies. Indian J. Psychiatry 2024, 66, S231–S244. [Google Scholar] [CrossRef] [PubMed]

- Ford, K.; Freund, R.; Young Lives, University of Oxford. Young Lives Under Pressure: Protecting and Promoting Young People’s Mental Health at a Time of Global Crises. 2022. Available online: https://www.younglives.org.uk/sites/default/files/2022-11/YL-PolicyBrief-55-Sep22 Final.pdf (accessed on 4 April 2025).

- Funk, M.; World Health Organization. Guidance on Mental Health Policy and Strategic Action Plans. Module 1. Introduction, Purpose and Use of the Guidance; World Health Organization: Geneva, Switzerland, 2025; Available online: https://iris.who.int/bitstream/handle/10665/380465/9789240106796-eng.pdf?sequence=1 (accessed on 14 May 2025).

- Kestel, D.; Neira, M.; Azzi, M.; World Health Organization. Mental Health Atlas 2020. 2020. Available online: https://iris.who.int/bitstream/handle/10665/345946/9789240036703-eng.pdf?sequence=1&isAllowed=y (accessed on 18 April 2025).

- WHO (b). World Health Organization (WHO). New WHO Guidance Calls for Urgent Transformation of Mental Health Policies. 2025. Available online: https://www.who.int/news/item/25-03-2025-new-who-guidance-calls-for-urgent-transformation-of-mental-health-policies (accessed on 13 May 2025).

- McGrath, J.J.; Al-Hamzawi, A.; Alonso, J.; Altwaijri, Y.; Andrade, L.H.; Bromet, E.J.; Bruffaerts, R.; de Almeida, J.M.C.; Chardoul, S.; Chiu, W.T.; et al. Age of onset and cumulative risk of mental disorders: A cross-national analysis of population surveys from 29 countries. Lancet Psychiatry 2023, 10, 668–681. [Google Scholar] [CrossRef] [PubMed]

- WHO (a). World Health Organization (WHO). σελ. 1 Mental Disorders. 2022. Available online: https://www.who.int/news-room/fact-sheets/detail/mental-disorders/ (accessed on 22 April 2025).

- Centers for Disease Control and Prevention (CDC). Mental Health. 2024. Available online: https://www.cdc.gov/cdi/indicator-definitions/mental-health.html (accessed on 1 May 2025).

- Duckworth, K.; National Alliance on Mental Illness (NAMI). Mental Health By the Numbers. 2023. Available online: https://www.nami.org/about-mental-illness/mental-health-by-the-numbers/ (accessed on 10 May 2025).

- Sim, K.Y.H.; Choo, K.T.W. Envisioning an AI-Enhanced Mental Health Ecosystem. arXiv 2025, arXiv:2503.14883. [Google Scholar] [CrossRef]

- Ballout, S. Trauma Mental Health Workforce Shortages Health Equity: ACrisis in Public Health. Int. J. Environ. Res. Public Health 2025, 22, 620. [Google Scholar] [CrossRef]

- Wainberg, M.L.; Scorza, P.; Shultz, J.M.; Helpman, L.; Mootz, J.J.; Johnson, K.A.; Neria, Y.; Bradford, J.M.; Oquendo, M.; Arbucle, M. Challenges and Opportunities in Global Mental Health: A Research-to-Practice Perspective. Curr. Psychiatry Rep. 2017, 19, 28. [Google Scholar] [CrossRef] [PubMed]

- Satiani, A.; Niedermier, J.; Satiani, B.; Svendsen, D.P. Projected Workforce of Psychiatrists in the United States: A Population Analysis. Psychiatr. Serv. 2018, 69, 710–713. [Google Scholar] [CrossRef]

- Kazdin, A.E.; Blase, S.L. Rebooting Psychotherapy Research and Practice to Reduce the Burden of Mental Illness. Perspect. Psychol. Sci. 2011, 6, 21–37. [Google Scholar] [CrossRef]

- Lawrence, H.R.; Schneider, R.A.; Rubin, S.B.; Matarić, M.J.; McDuff, D.J.; Jones Bell, M. The Opportunities and Risks of Large Language Models in Mental Health. JMIR Ment. Health 2024, 11, e59479. [Google Scholar] [CrossRef]

- Hsu, S.L.; Shah, R.S.; Senthil, P.; Ashktorab, Z.; Dugan, C.; Geyer, W.; Yang, D. Helping the Helper: Supporting Peer Counselors via AI-Empowered Practice and Feedback. arXiv 2025, arXiv:2305.08982. [Google Scholar] [CrossRef]

- Thieme, A.; Hanratty, M.; Lyons, M.; Palacios, J.; Marques, R.F.; Morrison, C.; Doherty, G. Designing Human-centered AI for Mental Health: Developing Clinically Relevant Applications for Online CBT Treatment. ACM Trans. Comput.-Hum. Interact. 2023, 30, 1–50. [Google Scholar] [CrossRef]

- Tutun, S.; Johnson, M.E.; Ahmed, A.; Albizri, A.; Irgil, S.; Yesilkaya, I.; Ucar, E.N.; Sengun, T.; Harfouche, A. An AI-based Decision Support System for Predicting Mental Health Disorders. Inf. Syst. Front. 2023, 25, 1261–1276. [Google Scholar] [CrossRef]

- Afonso-Jaco, A.; Katz, B.F.G. Spatial Knowledge via Auditory Information for Blind Individuals: Spatial Cognition Studies and the Use of Audio-VR. Sensors 2022, 22, 4794. [Google Scholar] [CrossRef]

- Fulmer, R.; Joerin, A.; Gentile, B.; Lakerink, L.; Rauws, M. Using Psychological Artificial Intelligence (Tess) to Relieve Symptoms of Depression and Anxiety: Randomized Controlled Trial. JMIR Ment. Health 2018, 5, e64. [Google Scholar] [CrossRef]

- Fitzpatrick, K.K.; Darcy, A.; Vierhile, M. Delivering Cognitive Behavior Therapy to Young Adults With Symptoms of Depression and Anxiety Using a Fully Automated Conversational Agent (Woebot): A Randomized Controlled Trial. JMIR Ment. Health 2017, 4, e19. [Google Scholar] [CrossRef] [PubMed]

- Lee, S.S.; Li, N.; Kim, J. Conceptual model for Mexican teachers’ adoption of learning analytics systems: The integration of the information system success model and the technology acceptance model. Educ. Inf. Technol. 2024, 29, 13387–13412. [Google Scholar] [CrossRef]

- Zhou, W. Chat GPT Integrated with Voice Assistant as Learning Oral Chat-based Constructive Communication to Improve Communicative Competence for EFL earners. arXiv 2023, arXiv:2311.00718. [Google Scholar] [CrossRef]

- Bassett, C. The computational therapeutic: Exploring Weizenbaum’s ELIZA as a history of the present. AI Soc. 2019, 34, 803–812. [Google Scholar] [CrossRef]

- Anyoha, R.; Harvard University’s Science in the News (SITN). The History of Artificial Intelligence. 2017. Available online: https://sites.harvard.edu/sitn/2017/08/28/history-artificial-intelligence (accessed on 21 May 2025).

- Hua, Y.; Na, H.; Li, Z.; Liu, F.; Fang, X.; Clifton, D.; Torous, J. A scoping review of large language models for generative tasks in mental health care. Npj Digit. Med. 2025, 8, 230. [Google Scholar] [CrossRef]

- Katz, U.; Cohen, E.; Shachar, E.; Somer, J.; Fink, A.; Morse, E.; Shreiber, B.; Wolf, I. GPT versus Resident Physicians—A Benchmark Based on Official Board Scores. NEJM AI 2024, 1, 5. [Google Scholar] [CrossRef]

- American Psychiatric Association. Diagnostic and Statistical Manual of Mental Disorders, 5th ed.; American Psychiatric Publishing: Washington, DC, USA, 2013. [Google Scholar] [CrossRef]

- Henriques, G. A New Unified Theory of Psychology; Springer: New York, NY, USA, 2011. [Google Scholar] [CrossRef]

- Locher, C.; Meier, S.; Gaab, J. Psychotherapy: A World of Meanings. Front. Psychol. 2019, 10, 460. [Google Scholar] [CrossRef]

- Guo, Z.; Lai, A.; Thygesen, J.H.; Farrington, J.; Keen, T.; Li, K. Large Language Models for Mental Health Applications: Systematic Review. JMIR Ment. Health 2024, 11, e57400. [Google Scholar] [CrossRef]

- Hua, Y.; Liu, F.; Yang, K.; Li, Z.; Na, H.; Sheu, Y.; Zhou, P.; Moran, L.V.; Ananiadou, S.; Clifton, D.A.; et al. Large Language Models in Mental Health Care: A Scoping Review. arXiv 2025, arXiv:2401.02984. [Google Scholar] [CrossRef]

- Omar, M.; Soffer, S.; Charney, A.W.; Landi, I.; Nadkarni, G.N.; Klang, E. Applications of large language models in psychiatry: A systematic review. Front. Psychiatry 2024, 15, 1422807. [Google Scholar] [CrossRef]

- Brickman, D.; Gupta, M.; Oltmanns, J.R. Large Language Models for Psychological Assessment: A Comprehensive Overview. Adv. Methods Pract. Psychol. Sci. 2025, 8, 1–26. [Google Scholar] [CrossRef]

- Gautam, A.; Kellmeyer, P. Exploring the Credibility of Large Language Models for Mental Health Support: Protocol for a Scoping Review. JMIR Res. Protoc. 2025, 14, e62865. [Google Scholar] [CrossRef]

- Page, M.J.; McKenzie, J.E.; Bossuyt, P.M.; Boutron, I.; Hoffmann, T.C.; Mulrow, C.D.; Shamseer, L.; Tetzlaff, J.M.; Akl, E.A.; Brennan, S.E.; et al. The PRISMA 2020 statement: An updated guideline for reporting systematic reviews. BMJ 2021, 372, n71. [Google Scholar] [CrossRef]

- Hong, Q.N.; Fàbregues, S.; Bartlett, G.; Boardman, F.; Cargo, M.; Dagenais, P.; Gagnon, M.P.; Griffiths, F.; Nicolau, B.; O’Cathain, A.; et al. The Mixed Methods Appraisal Tool (MMAT) version 2018 for information professionals and researchers. Educ. Inf. 2018, 34, 87–98. [Google Scholar] [CrossRef]

- Amugongo, L.M.; Mascheroni, P.; Brooks, S.; Doering, S.; Seidel, J. Retrieval augmented generation for large language models in healthcare: A systematic review. PLoS Digit. Health 2025, 4, e0000877. [Google Scholar] [CrossRef]

- He, K.; Mao, R.; Lin, Q.; Ruan, Y.; Lan, X.; Feng, M.; Cambria, E. A survey of large language models for healthcare: From data, technology, and applications to accountability and ethics. Inf. Fusion 2025, 118, 102963. [Google Scholar] [CrossRef]

- Zhang, K.; Meng, X.; Yan, X.; Ji, J.; Liu, J.; Xu, H.; Zhang, H.; Liu, D.; Wang, J.; Wang, X.; et al. Revolutionizing Health Care: The Transformative Impact of Large Language Models in Medicine. J. Med. Internet Res. 2025, 27, e59069. [Google Scholar] [CrossRef]

- Dergaa, I.; Fekih-Romdhane, F.; Hallit, S.; Loch, A.A.; Glenn, J.M.; Fessi, M.S.; Aissa, M.B.; Souissi, N.; Guelmami, N.; Swed, S.; et al. ChatGPT is not ready yet for use in providing mental health assessment and interventions. Front. Psychiatry 2024, 14, 1277756. [Google Scholar] [CrossRef]

- Han, Q.; Zhao, C. Unleashing the potential of chatbots in mental health: Bibliometric analysis. Front. Psychiatry 2025, 16, 1494355. [Google Scholar] [CrossRef]

- Vinod, K.D. The emergence of AI in mental health: A transformative journey. World J. Adv. Res. Rev. 2024, 22, 1867–1871. [Google Scholar] [CrossRef]

- Holmes, G.; Tang, B.; Gupta, S.; Venkatesh, S.; Christensen, H.; Whitton, A. Applications of Large Language Models in the Field of Suicide Prevention: Scoping Review. J. Med. Internet Res. 2025, 27, e63126. [Google Scholar] [CrossRef]

- Chang, Y.; Su, C.Y.; Liu, Y.C. Assessing the Performance of Chatbots on the Taiwan Psychiatry Licensing Examination Using the Rasch Model. Healthcare 2024, 12, 2305. [Google Scholar] [CrossRef]

- Wang, B.; Sun, Y.; Zi, Y.; Zhao, Y.; Qin, B. Scale-CoT: Integrating LLM with Psychiatric Scales for Analyzing Mental Health Issues on Social Media. In Proceedings of the 2024 IEEE International Conference on Bioinformatics and Biomedicine (BIBM), Lisbon, Portugal, 3–6 December 2024; IEEE: London, UK, 2024; pp. 2651–2658. [Google Scholar] [CrossRef]

- Bauer, B.; Norel, R.; Leow, A.; Rached, Z.A.; Wen, B.; Cecchi, G. Using Large Language Models to Understand Suicidality in a Social Media–Based Taxonomy of Mental Health Disorders: Linguistic Analysis of Reddit Posts. JMIR Ment. Health 2024, 11, e57234. [Google Scholar] [CrossRef]

- Li, R. Integrative diagnosis of psychiatric conditions using ChatGPT and fMRI data. BMC Psychiatry 2025, 25, 145. [Google Scholar] [CrossRef]

- Lan, K.; Jin, B.; Zhu, Z.; Chen, S.; Zhang, S.; Zhu, K.Q.; Wu, M. Depression Diagnosis Dialogue Simulation: Self-improving Psychiatrist with Tertiary Memory. arXiv 2024, arXiv:2409.15084. [Google Scholar]

- Ohse, J.; Hadžić, B.; Mohammed, P.; Peperkorn, N.; Danner, M.; Yorita, A.; Kubota, N.; Rätsch, M.; Shiban, Y. Zero-Shot Strike: Testing the generalisation capabilities of out-of-the-box LLM models for depression detection. Comput. Speech Lang. 2024, 88, 101663. [Google Scholar] [CrossRef]

- Wiest, I.C.; Verhees, F.G.; Ferber, D.; Zhu, J.; Bauer, M.; Lewitzka, U.; Pfennig, A.; Mikolas, P.; Kather, J.N. Detection of Suicidality Through Privacy-Preserving Large Language Models. Br, J. Psychiatry 2024, 225, 532–537. [Google Scholar] [CrossRef]

- Englhardt, Z.; Ma, C.; Morris, M.E.; Chang, C.C.; Xu, X.O.; Qin, L.; McDuff, D.; Liu, X.; Patel, S.; Iyer, V. From Classification to Clinical Insights. Proc. ACM Interact. Mob. Wearable Ubiquitous Technol. 2024, 8, 1–25. [Google Scholar] [CrossRef]

- Xu, X.; Yao, B.; Dong, Y.; Gabriel, S.; Yu, H.; Hendler, J.; Ghassemi, M.; Dey, A.K.; Wang, D. Mental-LLM: Leveraging Large Language Models for Mental Health Prediction via Online Text Data. Proc. ACM Interact. Mob. Wearable Ubiquitous Technol. 2024, 8, 1–32. [Google Scholar] [CrossRef]

- Scherbakov, D.A.; Hubig, N.C.; Lenert, L.A.; Alekseyenko, A.V.; Obeid, J.S. Natural Language Processing and Social Determinants of Health in Mental Health Research: AI-Assisted Scoping Review. JMIR Ment. Health 2025, 12, e67192. [Google Scholar] [CrossRef]

- Edgcomb, J.B.; Saha, A.; Lee, J.J.; Ponce, C.G.; Tascione, E.M.; Montero, A.E.; Ryan, N.D. 1.53 Large Language Models to Extract Information on Suicide From Children’s Medical Records. J. Am. Acad. Child. Adolesc. Psychiatry 2024, 63, S175. [Google Scholar] [CrossRef]

- Jin, Y.; Liu, J.; Li, P.; Wang, B.; Yan, Y.; Zhang, H.; Ni, C.; Wang, J.; Li, Y.; Bu, Y.; et al. The Applications of Large Language Models in Mental Health: Scoping Review. J. Med. Internet Res. 2025, 27, e69284. [Google Scholar] [CrossRef]

- Pavlopoulos, A.; Rachiotis, T.; Maglogiannis, I. An Overview of Tools and Technologies for Anxiety and Depression Management Using AI. Appl. Sci. 2024, 14, 9068. [Google Scholar] [CrossRef]

- Wang, X.; Zhou, Y.; Zhou, G. The Application and Ethical Implication of Generative AI in Mental Health: Systematic Review. JMIR Ment. Health 2025, 12, e70610. [Google Scholar] [CrossRef]

- Priyadarshana, Y.H.P.P.; Senanayake, A.; Liang, Z.; Piumarta, I. Prompt engineering for digital mental health: A short review. Front Digit Health 2024, 6, 1410947. [Google Scholar] [CrossRef]

- Kambeitz, J.; Chakraborty, M.; Wenzel, J.; Schwed, L.; Menne, F.; König, A.; Ruef, A.; Bonivento, C.; Dwyer, D.; Brambilla, P. Automated Analysis of Linguistic Measures Using Verbal Fluency Test in Psychotic and Affective Disorders: Findings From the PRONIA Study. Biol. Psychiatry 2025, 97, S50. [Google Scholar] [CrossRef]

- Esmaeilzadeh, P. Decoding the cry for help: AI’s emerging role in suicide risk assessment. AI Ethics 2025, 5, 4645–4679. [Google Scholar] [CrossRef]

- Chen, C.C.; Chen, J.A.; Liang, C.S.; Lin, Y.H. Large language models may struggle to detect culturally embedded filicide-suicide risks. Asian J. Psychiatry 2025, 105, 104395. [Google Scholar] [CrossRef]

- Rosenman, G.; Wolf, L.; Hendler, T. LLM Questionnaire Completion for Automatic Psychiatric Assessment. arXiv 2024, arXiv:2406.06636. [Google Scholar] [CrossRef]

- Ludlow, C. Investigating the Capability of Large Language Models to Identify Causal Relations in Psychiatric Case Studies: A Methodological Proof of Concept for the Analysis of Psychological Case Formulations. 2025. Available online: https://osf.io/preprints/psyarxiv/wfmv8_v1 (accessed on 21 April 2025).

- Gargari, O.K.; Fatehi, F.; Mohammadi, I.; Firouzabadi, S.R.; Shafiee, A.; Habibi, G. Diagnostic accuracy of large language models in psychiatry. Asian J. Psychiatry 2024, 100, 104168. [Google Scholar] [CrossRef]

- Arbanas, G. ChatGPT and other Chatbots in Psychiatry. Arch. Psychiatry Res. 2024, 60, 137–142. [Google Scholar] [CrossRef]

- Alhuwaydi, A. Exploring the Role of Artificial Intelligence in Mental Healthcare: Current Trends and Future Directions—A Narrative Review for a Comprehensive Insight. Risk Manag. Health Policy 2024, 17, 1339–1348. [Google Scholar] [CrossRef]

- Ghorbanian Zolbin, M.; Kujala, S.; Huvila, I. Experiences and Expectations of Immigrant and Nonimmigrant Older Adults Regarding eHealth Services: Qualitative Interview Study. J. Med. Internet Res. 2025, 27, e64249. [Google Scholar] [CrossRef]

- Rodríguez Gatta, D.; Rotarou, E.S.; Banks, L.M.; Kuper, H. Healthcare access among people with and without disabilities: A cross-sectional analysis of the National Socioeconomic Survey of Chile. Public Health 2025, 241, 144–150. [Google Scholar] [CrossRef]

- Rodado, J.; Crespo, F. Relational dimension versus Artificial Intellingence. Am. J. Psychoanal. 2024, 84, 268–284. [Google Scholar] [CrossRef]

- Bidargaddi, N.; Almirall, D.; Murphy, S.; Nahum-Shani, I.; Kovalcik, M.; Pituch, T.; Maaieh, H.; Strecher, V. To Prompt or Not to Prompt? A Microrandomized Trial of Time-Varying Push Notifications to Increase Proximal Engagement With a Mobile Health App. JMIR MHealth UHealth 2018, 6, e10123. [Google Scholar] [CrossRef]

- Banerjee, S.; Dunn, P.; Conard, S.; Ali, A. Mental Health Applications of Generative AI and Large Language Modeling in the United States. Int. J. Environ. Res. Public Health 2024, 21, 910. [Google Scholar] [CrossRef]

- Bzdok, D.; Thieme, A.; Levkovskyy, O.; Wren, P.; Ray, T.; Reddy, S. Data science opportunities of large language models for neuroscience and biomedicine. Neuron 2024, 112, 698–717. [Google Scholar] [CrossRef] [PubMed]

- Dart, M.; Ahmed, M. Evaluating Staff Attitudes, Intentions, and Behaviors Related to Cyber Security in Large Australian Health Care Environments: Mixed Methods Study. JMIR Hum. Factors 2023, 10, e48220. [Google Scholar] [CrossRef]

- Obradovich, N.; Khalsa, S.S.; Khan, W.U.; Suh, J.; Perlis, R.H.; Ajilore, O.; Paulus, M.P. Opportunities and risks of large language models in psychiatry. NPP—Digit. Psychiatry Neurosci. 2024, 2, 8. [Google Scholar] [CrossRef]

- Ni, Y.; Jia, F. A Scoping Review of AI-Driven Digital Interventions in Mental Health Care: Mapping Applications Across Screening, Support, Monitoring, Prevention, and Clinical Education. Healthcare 2025, 13, 1205. [Google Scholar] [CrossRef]

- Liu, Z.; Bao, Y.; Zeng, S.; Qian, R.; Deng, M.; Gu, A.; Li, J.; Wang, W.; Cai, W.; Li, W.; et al. Large Language Models in Psychiatry: Current Applications, Limitations, and Future Scope. Big Data Min. Anal. 2024, 7, 1148–1168. [Google Scholar] [CrossRef]

- Farhat, F. ChatGPT as a Complementary Mental Health Resource: A Boon or a Bane. Ann. Biomed. Eng. 2024, 52, 1111–1114. [Google Scholar] [CrossRef] [PubMed]

- Medina, J.C.; Andrade, R.R. Advancements in Artificial Intelligence for Health: A Rapid Review of AI-Based Mental Health Technologies Used in the Age of Large Language Models. In Bioinformatics and Biomedical Engineering; Springer: Berlin/Heidelberg, Germany, 2024; pp. 318–343. [Google Scholar] [CrossRef]

- Malgaroli, M.; Schultebraucks, K.; Myrick, K.J.; Andrade Loch, A.; Ospina-Pinillos, L.; Choudhury, T.; Kotov, R.; De Choudhury, M.; Torous, M. Large language models for the mental health community: Framework for translating code to care. Lancet Digit. Health 2025, 7, e282–e285. [Google Scholar] [CrossRef]

- Sree, Y.B.; Sathvik, A.; Hema Akshit, D.S.; Kumar, O.; Pranav Rao, B.S. Retrieval-Augmented Generation Based Large Language Model Chatbot for Improving Diagnosis for Physical and Mental Health. In Proceedings of the 2024 6th International Conference on Electrical, Control and Instrumentation Engineering (ICECIE), Pattaya, Thailand, 23–23 November 2024; IEEE: London, UK, 2024; pp. 1–8. [Google Scholar] [CrossRef]

- Singh, A.; Ehtesham, A.; Mahmud, S.; Kim, J.H. Revolutionizing Mental Health Care through LangChain: A Journey with a Large Language Model. In Proceedings of the 2024 IEEE 14th Annual Computing and Communication Workshop and Conference (CCWC), Las Vegas, NV, USA, 8–10 January 2024; IEEE: London, UK, 2024; pp. 73–78. [Google Scholar] [CrossRef]

- Ferizaj, D.; Lalk, C.; Lahmann, N.; Strube-Lahmann, S.; Rubel, J. Identifying Yalom’s group therapeutic factors in anonymous mental health discussions on Reddit: A mixed-methods analysis using large language models, topic modeling and human supervision. Front. Psychiatry 2025, 16, 1503427. [Google Scholar] [CrossRef]

- Levkovich, I.; Shinan-Altman, S.; Elyoseph, Z. Can large language models be sensitive to culture suicide risk assessment? J. Cult. Cogn. Sci. 2024, 8, 275–287. [Google Scholar] [CrossRef]

- Matsui, K.; Utsumi, T.; Aoki, Y.; Maruki, T.; Takeshima, M.; Takaesu, Y. Human-Comparable Sensitivity of Large Language Models in Identifying Eligible Studies Through Title and Abstract Screening: 3-Layer Strategy Using GPT-3.5 and GPT-4 for Systematic Reviews. J. Med. Internet Res. 2024, 26, e52758. [Google Scholar] [CrossRef] [PubMed]

- Abdullah, M.; Negied, N. Detection and Prediction of Future Mental Disorder From Social Media Data Using Machine Learning, Ensemble Learning, and Large Language Models. IEEE Access 2024, 12, 120553–120569. [Google Scholar] [CrossRef]

- Teferra, B.G.; Perivolaris, A.; Hsiang, W.N.; Sidharta, C.K.; Rueda, A.; Parkington, K.; Wu, Y.; Soni, A.; Samavi, R.; Jetly, R.; et al. Leveraging large language models for automated depression screening. PLoS Digit. Health 2025, 4, e0000943. [Google Scholar] [CrossRef]

- Bokolo, B.G.; Liu, Q. Deep Learning-Based Depression Detection from Social Media: Comparative Evaluation of ML and Transformer Techniques. Electronics 2023, 12, 4396. [Google Scholar] [CrossRef]

- Chowdhury, A.K.; Sujon, S.R.; Shafi, M.d.S.S.; Ahmmad, T.; Ahmed, S.; Hasib, K.M.; Shah, F.M. Harnessing large language models over transformer models for detecting Bengali depressive social media text: A comprehensive study. Nat. Lang. Process J. 2024, 7, 100075. [Google Scholar] [CrossRef]

- Qorich, M.; El Ouazzani, R. Advanced deep learning and large language models for suicide ideation detection on social media. Prog. Artif. Intell. 2024, 13, 135–147. [Google Scholar] [CrossRef]

- Nanda, M.; Inkpen, D.; Dargel, A. Detecting Multiple Mental Health Disorders with Large Language Models. In Proceedings of the 2024 28th International Conference Information Visualisation (IV), Coimbra, Portugal, 22–26 July 2024; IEEE: London, UK, 2024; pp. 252–257. [Google Scholar] [CrossRef]

- Liu, Y.; Ding, X.; Peng, S.; Zhang, C. Leveraging ChatGPT to optimize depression intervention through explainable deep learning. Front. Psychiatry 2024, 15, 1383648. [Google Scholar] [CrossRef]

- Hanss, K.; Sarma, K.V.; Glowinski, A.L.; Krystal, A.; Saunders, R.; Halls, A.; Gorrell, S.; Reilly, E. Assessing the Accuracy Reliability of Large Language Models in Psychiatry Using Standardized Multiple-Choice Questions: Cross-Sectional Study. J. Med. Internet Res. 2025, 27, e69910. [Google Scholar] [CrossRef]

- Kharitonova, K.; Pérez-Fernández, D.; Gutiérrez-Hernando, J.; Gutiérrez-Fandiño, A.; Callejas, Z.; Griol, D. Incorporating evidence into mental health Q&A: A novel method to use generative language models for validated clinical content extraction. Behav. Inf. Technol. 2025, 44, 2333–2350. [Google Scholar] [CrossRef]

- Wang, X.; Liu, K.; Wang, C. Knowledge-enhanced Pre-training large language model for depression diagnosis and treatment. In Proceedings of the 2023 IEEE 9th International Conference on Cloud Computing and Intelligent Systems (CCIS), Dali, China, 12–13 August 2023; IEEE: London, UK, 2023; pp. 532–536. [Google Scholar] [CrossRef]

- Xin, A.W.; Nielson, D.M.; Krause, K.R.; Fiorini, G.; Midgley, N.; Pereira, F.; Lossio-Ventura, J.A. Using large language models to detect outcomes in qualitative studies of adolescent depression. J. Am. Med. Inform. Assoc. 2024, 33, 79–89. [Google Scholar] [CrossRef]

- Adhikary, P.K.; Srivastava, A.; Kumar, S.; Singh, S.M.; Manuja, P.; Gopinath, J.K.; Krishnan, V.; Gupta, S.K.; Deb, K.S.; Chakraborty, T. Exploring the Efficacy of Large Language Models in Summarizing Mental Health Counseling Sessions: Benchmark Study. JMIR Ment. Health 2024, 11, e57306. [Google Scholar] [CrossRef] [PubMed]

- Gargari, O.K.; Habibi, G.; Nilchian, N.; Shafiee, A. Comparative analysis of large language models in psychiatry and mental health: A focus on GPT, AYA, and Nemotron-3–8B. Asian J. Psychiatry 2024, 99, 104148. [Google Scholar] [CrossRef] [PubMed]

- Shin, D.; Kim, H.; Lee, S.; Cho, Y.; Jung, W. Using Large Language Models to Detect Depression From User-Generated Diary Text Data as a Novel Approach in Digital Mental Health Screening: Instrument Validation Study. J. Med. Internet Res. 2024, 26, e54617. [Google Scholar] [CrossRef]

- Andibanbang, F.; Odunuga, K.V.; Osuji, C.I.; Adeniran, K.O.; Akinyemi, K.; Tyem, N.F.; Akinlade, H.O.; Asimiyu, A.A.; Oke, O.; Ariyo, T.S.; et al. AI-Powered Conversations: The Diagnostic Potential of Chatbots in Mental Health Care. Ann. Comput. 2025, 1, 19–25. [Google Scholar] [CrossRef]

- Pawar, D.; Phansalkar, S. A Binary Question Answering System for Diagnosing Mental Health Syndromes powered by Large Language Model with Custom-Built Dataset. In Proceedings of the 2024 IEEE 4th International Conference on ICT in Business Industry & Government (ICTBIG), Indore, India, 13–14 December 2024; IEEE: London, UK, 2024; pp. 1–8. [Google Scholar] [CrossRef]

- Colombatto, C.; Fleming, S.M. Folk psychological attributions of consciousness to large language models. Neurosci. Conscious. 2024, 2024, niae013. [Google Scholar] [CrossRef] [PubMed]

- Casu, M.; Triscari, S.; Battiato, S.; Guarnera, L.; Caponnetto, P. AI Chatbots for Mental Health: A Scoping Review of Effectiveness, Feasibility, and Applications. Appl. Sci. 2024, 14, 5889. [Google Scholar] [CrossRef]

- Monosov, I.E.; Zimmermann, J.; Frank, M.J.; Mathis, M.W.; Baker, J.T. Ethological computational psychiatry: Challenges and opportunities. Curr. Opin. Neurobiol. 2024, 86, 102881. [Google Scholar] [CrossRef]

- Pang, H.Y.M.; Meshkat, S.; Teferra, B.G.; Rueda, A.; Samavi, R.; Krishnan, S.; Doyle, T.; Rambhatla, S.; DeJong, S.; Sockalingam, S.; et al. Opportunities and Barriers of Generative Artificial Intelligence in the Training of Psychiatrists: A Competencies-Based Perspective. Acad. Psychiatry 2025, 49, 25–30. [Google Scholar] [CrossRef]

- Lee, Q.Y.; Chen, M.; Ong, C.W.; Ho, C.S.H. The role of generative artificial intelligence in psychiatric education—A scoping review. BMC Med. Educ. 2025, 25, 438. [Google Scholar] [CrossRef]

- Chen, S.; Wu, M.; Zhu, K.Q.; Lan, K.; Zhang, Z.; Cui, L. LLM-empowered Chatbots for Psychiatrist and Patient Simulation: Application and Evaluation. arXiv 2023, arXiv:2305.13614. [Google Scholar] [CrossRef]

- Asman, O.; Torous, J.; Tal, A. Responsible Design, Integration, and Use of Generative AI in Mental Health. JMIR Ment. Health 2025, 12, e70439. [Google Scholar] [CrossRef]

- Wang, L.; Bhanushali, T.; Huang, Z.; Yang, J.; Badami, S.; Hightow-Weidman, L. Evaluating Generative AI in Mental Health: Systematic Review of Capabilities and Limitations. JMIR Ment. Health 2025, 12, e70014. [Google Scholar] [CrossRef] [PubMed]

- Zhang, X.; Cui, W.; Wang, J.; Li, Y. Chat, Summary and Diagnosis: A LLM-Enhanced Conversational Agent for Interactive Depression Detection. In Proceedings of the 2024 4th International Conference on Industrial Automation, Robotics and Control Engineering (IARCE), Chengdu, China, 15–17 November 2024; IEEE: London, UK, 2024; pp. 343–348. [Google Scholar] [CrossRef]

- Patra, B.G.; Lepow, L.A.; Kumar, P.K.R.J.; Vekaria, V.; Sharma, M.M.; Adekkanattu, P.; Fennessy, B.; Hynes, G.; Land, I.; Sanchez-Ruiz, J.A.; et al. Extracting Social Support and Social Isolation Information from Clinical Psychiatry Notes: Comparing a Rule-based NLP System and a Large Language Model. Am. Med Inform. Assoc. 2024, 32, 218–226. [Google Scholar] [CrossRef] [PubMed]

- Kim, T.; Bae, S.; Kim, H.A.; Lee, S.W.; Hong, H.; Yang, C.; Kim, Y.H. MindfulDiary: Harnessing Large Language Model to Support Psychiatric Patients’ Journaling. In Proceedings of the CHI Conference on Human Factors in Computing Systems, New York, NY, USA, 11–16 May 2024; ACM: New York, NY, USA, 2024; pp. 1–20. [Google Scholar] [CrossRef]

- Fisher, C.E. The real ethical issues with AI for clinical psychiatry. Int. Rev. Psychiatry 2025, 37, 14–20. [Google Scholar] [CrossRef]

- Cardamone, N.C.; Olfson, M.; Schmutte, T.; Ungar, L.; Liu, T.; Cullen, S.W.; Williams, N.J.; Marcus, S.C. Classifying Unstructured Text in Electronic Health Records for Mental Health Prediction Models: Large Language Model Evaluation Study. JMIR Med. Inform. 2025, 13, e65454. [Google Scholar] [CrossRef]

- Bouguettaya, A.; Team, V.; Stuart, E.M.; Aboujaoude, E. AI-driven report-generation tools in mental healthcare: A review of commercial tools. Gen. Hosp. Psychiatry 2025, 94, 150–158. [Google Scholar] [CrossRef]

- Wu, Y.; Chen, J.; Mao, K.; Zhang, Y. Automatic Post-Traumatic Stress Disorder Diagnosis via Clinical Transcripts: A Novel Text Augmentation with Large Language Models. In Proceedings of the 2023 IEEE Biomedical Circuits and Systems Conference (BioCAS), Toronto, ON, Canada, 19–21 October 2023; IEEE: London, UK, 2023; pp. 1–5. [Google Scholar] [CrossRef]

- Volkmer, S.; Meyer-Lindenberg, A.; Schwarz, E. Large language models in psychiatry: Opportunities and challenges. Psychiatry Res. 2024, 339, 116026. [Google Scholar] [CrossRef]

- Lopes, M.C. The future of the sleep field using large language models in mental health care. J. Clin. Sleep. Med. 2025, 21, 1151–1152. [Google Scholar] [CrossRef]

- Shah, H.A.; Islam, A.; Tariq, Z.U.A.; Belhaouari, S.B.; Househ, M. Retrieval Augmented Generation System for Mental Health Information. Stud. Health Technol. Inform. 2025, 329, 693–697. [Google Scholar] [CrossRef] [PubMed]

- Kumar, A.; Sharma, S.; Gupta, S.; Kumar, D. Mental Healthcare Chatbot Based on Custom Diagnosis Documents Using a Quantized Large Language Model. In Proceedings of the 2024 11th International Conference on Reliability, Infocom Technologies and Optimization (Trends and Future Directions) (ICRITO), Toronto, ON, Canada, 19–21 October 2023; IEEE: London, UK, 2024; pp. 1–6. [Google Scholar] [CrossRef]

- Amirian, S.; Kekre, A.; Loganathan, B.J.; Chavan, V.; Kandula, P.; Littlefield, N.; Franco, J.R.; Tafti, A.P.; Ebuenyi, I.K. Advancing psychosocial disability and psychosocial rehabilitation research through large language models and computational text mining. Camb. Prism. Glob. Ment. Health 2024, 11, e123. [Google Scholar] [CrossRef]

- James, L.J.; Genga, L.; Montagne, B.; Hagenaars, M.; Van Gorp, P. Caregiver’s Evaluation of LLM-Generated Treatment Goals for Patients with Severe Mental Illnesses. In Proceedings of the 17th International Conference on PErvasive Technologies Related to Assistive Environments, New York, NY, USA, 26–28 June 2024; ACM: New York, NY, USA, 2024; pp. 187–190. [Google Scholar] [CrossRef]

- Alhuzali, H.; Alasmari, A. Pre- Trained Language Models for Mental Health: An Empirical Study on Arabic Q&A Classification. Healthcare 2025, 13, 985. [Google Scholar] [CrossRef]

- Li, L.; Kong, S.; Zhao, H.; Li, C.; Teng, Y.; Wang, Y. Chain of Risks Evaluation CORE: A framework for safer large language models in public mental health. Psychiatry Clin. Neurosci. 2025, 79, 299–305. [Google Scholar] [CrossRef]

- Cross, S.; Mangelsdorf, S.; Valentine, L.; O’Sullivan, S.; McEnery, C.; Scott, I.; Gilbertson, T.; Louis, S.; Myer, J.; Liu, P.; et al. Insights from fifteen years of real-world development, testing and implementation of youth digital mental health interventions. Internet Interv. 2025, 41, 100849. [Google Scholar] [CrossRef] [PubMed]

- Izumi, K.; Tanaka, H.; Shidara, K.; Adachi, H.; Kanayama, D.; Kudo, T.; Nakamura, S. Response Generation for Cognitive Behavioral Therapy with Large Language Models: Comparative Study with Socratic Questioning. arXiv 2024, arXiv:2401.15966. [Google Scholar] [CrossRef]

- Shankar, R.; Bundele, A.; Yap, A.; Mukhopadhyay, A. Development and feasibility testing of an AI-powered chatbot for early detection of caregiver burden: Protocol for a mixed methods feasibility study. Front. Psychiatry 2025, 16. [Google Scholar] [CrossRef]

- Khorev, V.; Kiselev, A.; Badarin, A.; Antipov, V.; Drapkina, O.; Kurkin, S.; Hramov, A. Review on the use of AI-based methods and tools for treating mental conditions and mental rehabilitation. Eur. Phys. J. Spec. Top. 2024, 234, 4139–4158. [Google Scholar] [CrossRef]

- Thapa, S.; Adhikari, S. GPT-4o and multimodal large language models as companions for mental wellbeing. Asian J. Psychiatry 2024, 99, 104157. [Google Scholar] [CrossRef]

- Elyoseph, Z.; Levkovich, I.; Shinan-Altman, S. Assessing prognosis in depression: Comparing perspectives of AI models, mental health professionals and the general public. Fam. Med. Community Health 2024, 12, e002583. [Google Scholar] [CrossRef]

- Wang, G.; Badal, A.; Jia, X.; Maltz, J.S.; Mueller, K.; Myers, K.J.; Niu, C.; Vannier, M.; Yan, P.; Yu, Z.; et al. Development of metaverse for intelligent healthcare. Nat. Mach. Intell. 2022, 4, 922–929. [Google Scholar] [CrossRef]

- Firth, J.; Torous, J.; López-Gil, J.F.; Linardon, J.; Milton, A.; Lambert, J.; Smith, L.; Jarić, I.; Fabian, H.; Vancampfort, D.; et al. From “online brains” to “online lives”: Understanding the individualized impacts of Internet use across psychological, cognitive and social dimensions. World Psychiatry 2024, 23, 176–190. [Google Scholar] [CrossRef]

- Ma, Y.; Zeng, Y.; Liu, T.; Sun, R.; Xiao, M.; Wang, J. Integrating large language models in mental health practice: A qualitative descriptive study based on expert interviews. Front Public Health 2024, 12, 1475867. [Google Scholar] [CrossRef]

- Patias, I.; Miteva, D.; Peltekova, E.; Wright, M.; Gasteiger-Klicpera, B. Leveraging Large Language Models to Enhance Mental Health Literacy and Diversity Awareness in Adolescents: The me_HeLi-D Project. In Proceedings of the 2024 8th International Symposium on Innovative Approaches in Smart Technologies (ISAS), İstanbul, Turkiye, 6–7 December 2024; IEEE: London, UK, 2024; pp. 1–5. [Google Scholar] [CrossRef]

- Floridi, L.; Cowls, J.; Beltrametti, M.; Chatila, R.; Chazerand, P.; Dignum, V.; Luetge, C.; Madelin, R.; Pagallo, U.; Rossi, F.; et al. AI4People—An Ethical Framework for a Good AI Society: Opportunities, Risks, Principles, and Recommendations. Minds Mach. 2018, 28, 689–707. [Google Scholar] [CrossRef]

- Friedman, S.F.; Ballentine, G. Trajectories of sentiment in 11,816 psychoactive narratives. Hum. Psychopharmacol. Clin. Exp. 2023, 39, e2889. [Google Scholar] [CrossRef]

- Park, C.; Lee, H.; Lee, S.; Jeong, O. Synergistic Joint Model of Knowledge Graph and LLM for Enhancing XAI-Based Clinical Decision Support Systems. Mathematics 2025, 13, 949. [Google Scholar] [CrossRef]

- Li, Y.; Zeng, C.; Zhong, J.; Zhang, R.; Zhang, M.; Zou, L. Leveraging Large Language Model as Simulated Patients for Clinical Education. arXiv 2024, arXiv:2404.13066. [Google Scholar] [CrossRef]

- Dalal, S.; Tilwani, D.; Gaur, M.; Jain, S.; Shalin, V.L.; Sheth, A.P. A Cross Attention Approach to Diagnostic Explainability Using Clinical Practice Guidelines for Depression. IEEE J. Biomed. Health Inform. 2025, 29, 1333–1342. [Google Scholar] [CrossRef]

- Garg, S.; Sharma, S. Impact of Artificial Intelligence in Special Need Education to Promote Inclusive Pedagogy. Int. J. Inf. Educ. Technol. 2020, 10, 523–527. [Google Scholar] [CrossRef]

- Tortora, L. Beyond Discrimination: Generative AI Applications and Ethical Challenges in Forensic Psychiatry. Front. Psychiatry 2024, 15, 1346059. [Google Scholar] [CrossRef] [PubMed]

- Ogunwale, A.; Smith, A.; Fakorede, O.; Ogunlesi, A.O. Artificial intelligence and forensic mental health in Africa: A narrative review. Int. Rev. Psychiatry 2025, 37, 3–13. [Google Scholar] [CrossRef]

- Baydili, İ.; Tasci, B.; Tasci, G. Artificial Intelligence in Psychiatry: A Review of Biological and Behavioral Data Analyses. Diagnostics 2025, 15, 434. [Google Scholar] [CrossRef] [PubMed]

- Sun, J.; Lu, T.; Shao, X.; Han, Y.; Xia, Y.; Zheng, Y.; Wang, J.; Li, X.; Ravindran, A.; Fan, L.; et al. Practical AI application in psychiatry: Historical review and future directions. Mol. Psychiatry 2025, 30, 4399–4408. [Google Scholar] [CrossRef] [PubMed]

- Sezgin, E.; Chekeni, F.; Lee, J.; Keim, S. Clinical Accuracy of Large Language Models and Google Search Responses to Postpartum Depression Questions: Cross-Sectional Study. J. Med. Internet Res. 2023, 25, e49240. [Google Scholar] [CrossRef]

- Desage, C.; Bunge, B.; Bunge, E.L. A Revised Framework for Evaluating the Quality of Mental Health Artificial Intelligence-Based Chatbots. Procedia Comput. Sci. 2024, 248, 3–7. [Google Scholar] [CrossRef]

- Elyoseph, Z.; Levkovich, I. Comparing the Perspectives of Generative AI, Mental Health Experts, and the General Public on Schizophrenia Recovery: Case Vignette Study. JMIR Ment. Health 2024, 11, e53043. [Google Scholar] [CrossRef]

- Perlis, R.H.; Goldberg, J.F.; Ostacher, M.J.; Schneck, C.D. Clinical decision support for bipolar depression using large language models. Neuropsychopharmacology 2024, 49, 1412–1416. [Google Scholar] [CrossRef]

- Hanss, K.; Sarma, K.V.; Halls, A.; Gorrell, S.; Reilly, E. 4.29 Can Artificial Intelligence Make the Diagnosis? Evaluating the Accuracy of Large Language Models in Diagnosing Child and Adolescent Psychiatry Clinical Cases. J. Am. Acad. Child. Adolesc. Psychiatry 2024, 63, S239–S240. [Google Scholar] [CrossRef]

- Lee, C.; Mohebbi, M.; O’Callaghan, E.; Winsberg, M. Large Language Models Versus Expert Clinicians in Crisis Prediction Among Telemental Health Patients: Comparative Study. JMIR Ment Health 2024, 11, e58129. [Google Scholar] [CrossRef]

- McBain, R.K.; Cantor, J.H.; Zhang, L.A.; Baker, O.; Zhang, F.; Halbisen, A.; Kofner, A.; Breslau, J.; Stein, B.; Mehrotra, A.; et al. Competency of Large Language Models in Evaluating Appropriate Responses to Suicidal Ideation: Comparative Study. J. Med. Internet Res. 2025, 27, e67891. [Google Scholar] [CrossRef]

- Lauderdale, S.A.; Schmitt, R.; Wuckovich, B.; Dalal, N.; Desai, H.; Tomlinson, S. Effectiveness of generative AI-large language models’ recognition of veteran suicide risk: A comparison with human mental health providers using a risk stratification model. Front. Psychiatry 2025, 16, 9. [Google Scholar] [CrossRef] [PubMed]

- Levkovich, I. Evaluating Diagnostic Accuracy and Treatment Efficacy in Mental Health: A Comparative Analysis of Large Language Model Tools and Mental Health Professionals. Eur. J. Investig. Health Psychol. Educ. 2025, 15, 9. [Google Scholar] [CrossRef] [PubMed]

- Zhou, S.; Xu, Z.; Zhang, M.; Xu, C.; Guo, Y.; Zhan, Z.; Fang, Y.; Ding, S.; Wang, J.; Xu, K.; et al. Large Language Models for Disease Diagnosis: A Scoping Review. arXiv 2025, arXiv:2409.00097. [Google Scholar] [CrossRef]

- Kim, J.; Leonte, K.G.; Chen, M.L.; Torous, J.B.; Linos, E.; Pinto, A.; Rodriguez, C.I. Large language models outperform mental and medical health care professionals in identifying obsessive-compulsive disorder. Npj Digit. Med. 2024, 7, 193. [Google Scholar] [CrossRef]

- Hadar-Shoval, D.; Asraf, K.; Mizrachi, Y.; Haber, Y.; Elyoseph, Z. Assessing the Alignment of Large Language Models With Human Values for Mental Health Integration: Cross-Sectional Study Using Schwartz’s Theory of Basic Values. JMIR Ment. Health 2024, 11, e55988. [Google Scholar] [CrossRef] [PubMed]

- Saha, K.; Jain, Y.; De Choudhury, M. Linguistic Comparison of AI- and Human-Written Responses to Mental Health Queries. arXiv 2025, arXiv:2504.09271. [Google Scholar]

- George, S.B.; Binu Rajan, M.R.; Ebin, P. Mello: A Large Language Model for Mental Health Counselling Conversations. In Proceedings of the 2024 3rd International Conference for Advancement in Technology (ICONAT), Goa, India, 6–8 September 2024; IEEE: London, UK, 2024; pp. 1–6. [Google Scholar] [CrossRef]

- Bouguettaya, A.; Stuart, E.M.; Aboujaoude, E. Racial bias in AI-mediated psychiatric diagnosis and treatment: A qualitative comparison of four large language models. Npj Digit. Med. 2025, 8, 332. [Google Scholar] [CrossRef] [PubMed]

- Guan, M.Y.; Joglekar, M.; Wallace, E.; Jain, S.; Barak, B.; Helyar, A.; Dias, R.; Vallone, A.; Ren, H.; Wei, J.; et al. Deliberative Alignment: Reasoning Enables Safer Language Models. arXiv 2025, arXiv:2412.16339. [Google Scholar] [CrossRef]

- Grabb, D.; Lamparth, M.; Vasan, N. Risks from Language Models for Automated Mental Healthcare: Ethics and Structure for Implementation. arXiv 2024, arXiv:2406.11852. [Google Scholar]

- Xu, Z.; Xu, J.; Luo, Y.; Zhang, K.; Zhang, J.; Zou, Y.; Liu, L. Utilizing Large Language Models for Psychological Assessment: Enhancing Suicide Risk Detection Through Social Media Analysis. In Proceedings of the 2024 6th International Conference on Frontier Technologies of Information and Computer (ICFTIC), Qingdao, China, 13–15 December; IEEE: London, UK, 2024; pp. 1418–1421. [Google Scholar] [CrossRef]

- Ghanadian, H.; Nejadgholi, I.; Osman, H.A. Socially Aware Synthetic Data Generation for Suicidal Ideation Detection Using Large Language Models. IEEE Access 2024, 12, 14350–14363. [Google Scholar] [CrossRef]

- Hur, J.K.; Heffner, J.; Feng, G.W.; Joormann, J.; Rutledge, R.B. Language sentiment predicts changes in depressive symptoms. Proc. Natl. Acad. Sci. USA 2024, 121, e2321321121. [Google Scholar] [CrossRef]

- Rathje, S.; Mirea, D.M.; Sucholutsky, I.; Marjieh, R.; Robertson, C.E.; Van Bavel, J.J. GPT is an effective tool for multilingual psychological text analysis. Proc. Natl. Acad. Sci. USA 2024, 121, e2308950121. [Google Scholar] [CrossRef]

- Hasan, M.J.; Sultana, J.; Ahmed, S.; Momen, S. Early detection of occupational stress: Enhancing workplace safety with machine learning and large language models. PLoS ONE 2025, 20, e0323265. [Google Scholar] [CrossRef]

- Wahab, O.; Adda, M.; Zrira, N. SereniSens: A Multimodal AI Framework with LLMs for Stress Prediction through Sleep Biometrics. Procedia Comput. Sci. 2024, 251, 342–349. [Google Scholar] [CrossRef]

- Corda, E.; Massa, S.M.; Riboni, D. Context-Aware Behavioral Tips to Improve Sleep Quality via Machine Learning and Large Language Models. Future Internet 2024, 16, 46. [Google Scholar] [CrossRef]

- Aich, A.; Quynh, A.; Osseyi, P.; Pinkham, A.; Harvey, P.; Curtis, B.; Depp, C.; Parde, N. Using LLMs to Aid Annotation and Collection of Clinically-Enriched Data in Bipolar Disorder and Schizophrenia. In Proceedings of the 10th Workshop Comput Linguist Clin Psychol CLPsych, Albuquerque, NM, USA, 3 May 2025; pp. 181–192. Available online: https://aclanthology.org/2025.clpsych-1.15/ (accessed on 21 April 2025).

- Pavez, J.; Allende, H. A Hybrid System Based on Bayesian Networks and Deep Learning for Explainable Mental Health Diagnosis. Appl. Sci. 2024, 14, 8283. [Google Scholar] [CrossRef]

- Yang, K.; Ji, S.; Zhang, T.; Xie, Q.; Kuang, Z.; Ananiadou, S. Towards Interpretable Mental Health Analysis with Large Language Models. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, Singapore, 6–10 December 2023. [Google Scholar] [CrossRef]

- Huang, J.; Gu, S.; Hou, L.; Wu, Y.; Wang, X.; Yu, H.; Han, J. Large Language Models Can Self-Improve. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, Singapore, 6–10 December 2023; Association for Computational Linguistics: Stroudsburg, PA, USA, 2023; pp. 1051–1068. [Google Scholar] [CrossRef]

- Zhang, C.; Li, R.; Tan, M.; Yang, M.; Zhu, J.; Yang, D.; Zhao, J.; Ye, G.; Li, C.; Hu, X. CPsyCoun: A Report-based Multi-turn Dialogue Reconstruction and Evaluation Framework for Chinese Psychological Counseling. arXiv 2024, arXiv:2405.16433. [Google Scholar]

- De Duro, E.S.; Improta, R.; Stella, M. Introducing CounseLLMe: A dataset of simulated mental health dialogues for comparing LLMs like Haiku, LLaMAntino and ChatGPT against humans. Emerg. Trends Drugs Addict. Health 2025, 5, 100170. [Google Scholar] [CrossRef]

- Zeng, Q.; Li, X.; Wang, S.; Liu, K. Adversarial Evaluation Algorithm for Detecting Extreme Behaviors of LLMs in Psychological Counseling Scenarios. In Proceedings of the 2025 2nd International Conference on Algorithms, Software Engineering and Network Security (ASENS), Guangzhou, China, 21–23 March 2025; IEEE: London, UK, 2025; pp. 412–415. [Google Scholar] [CrossRef]

- Zhang, Z.; Wang, J. Can AI replace psychotherapists? Exploring the future of mental health care. Front. Psychiatry 2024, 15, 1444382. [Google Scholar] [CrossRef] [PubMed]

- Wang, W.J. vFerryman: An Artificial Intelligence-Driven Personalized Companion Providing Calming Visuals and Social Interaction for Emotional Well-Being. Eng. Proc. 2025, 92, 22. [Google Scholar] [CrossRef]

- Yang, D.; Ziems, C.; Held, W.; Shaikh, O.; Bernstein, M.S.; Mitchell, J. Social Skill Training with Large Language Models. arXiv 2024, arXiv:2404.04204. [Google Scholar] [CrossRef]

- Li, Y.; Huang, Y.; Wang, H.; Zhang, X.; Zou, J.; Sun, L. Quantifying AI Psychology: A Psychometrics Benchmark for Large Language Models. arXiv 2024, arXiv:2406.17675. [Google Scholar] [CrossRef]

- Kjell, O.N.E.; Kjell, K.; Schwartz, H.A. Beyond rating scales: With targeted evaluation, large language models are poised for psychological assessment. Psychiatry Res. 2024, 333, 115667. [Google Scholar] [CrossRef]

- Liu, Z.; Kang, Y.; Li, X. Research on Psychological Test based on Large Language Model. In Proceedings of the 2024 3rd International Conference on Robotics, Artificial Intelligence and Intelligent Control (RAIIC), Mianyang, China, 5–7 July 2024; IEEE: London, UK, 2024; pp. 503–510. [Google Scholar] [CrossRef]

- Cong, Y.; LaCroix, A.N.; Lee, J. Clinical efficacy of pre-trained large language models through the lens of aphasia. Sci. Rep. 2024, 14, 15573. [Google Scholar] [CrossRef]

- Liu, I.; Liu, F.; Xiao, Y.; Huang, Y.; Wu, S.; Ni, S. Investigating the Key Success Factors of Chatbot-Based Positive Psychology Intervention with Retrieval- and Generative Pre-Trained Transformer (GPT)-Based Chatbots. Int. J. Hum.–Comput. Interact. 2025, 41, 341–352. [Google Scholar] [CrossRef]

- Huang, S.; Wang, Y.; Li, G.; Hall, B.J.; Nyman, T.J. Digital Mental Health Interventions for Alleviating Depression and Anxiety During Psychotherapy Waiting Lists: Systematic Review. JMIR Ment. Health 2024, 11, e56650. [Google Scholar] [CrossRef] [PubMed]

- Bellini-Leite, S.C. Dual Process Theory for Large Language Models: An overview of using Psychology to address hallucination and reliability issues. Adapt. Behav. 2024, 32, 329–343. [Google Scholar] [CrossRef]

- Pellert, M.; Lechner, C.M.; Wagner, C.; Rammstedt, B.; Strohmaier, M. AI Psychometrics: Assessing the Psychological Profiles of Large Language Models Through Psychometric Inventories. Perspect. Psychol. Sci. 2024, 19, 808–826. [Google Scholar] [CrossRef]

- Tavory, T. Regulating AI in Mental Health: Ethics of Care Perspective. JMIR Ment. Health 2024, 11, e58493. [Google Scholar] [CrossRef]

- Liu, J. ChatGPT: Perspectives from human–computer interaction and psychology. Front. Artif. Intell. 2024, 7, 1418869. [Google Scholar] [CrossRef]

- Ben-Zion, Z.; Witte, K.; Jagadish, A.K.; Duek, O.; Harpaz-Rotem, I.; Khorsandian, M.C.; Burrer, A.; Seifritz, E.; Homan, P.; Schulz, E.; et al. Assessing and alleviating state anxiety in large language models. Npj Digit. Med. 2025, 8, 132. [Google Scholar] [CrossRef] [PubMed]

- Jurblum, M.; Selzer, R. Potential promises and perils of artificial intelligence in psychotherapy—The AI Psychotherapist (APT). Australas. Psychiatry 2025, 33, 103–105. [Google Scholar] [CrossRef]

- Imel, Z.E.; Tanana, M.J.; Soma, C.S.; Hull, T.D.; Pace, B.T.; Stanco, S.C.; Creed, T.A.; Moyers, T.R.; Atkins, D.C. Outcomes in Mental Health Counseling From Conversational Content With Transformer-Based Machine Learning. JAMA Netw. Open 2024, 7, e2352590. [Google Scholar] [CrossRef]

- Rasool, A.; Shahzad, M.I.; Aslam, H.; Chan, V.; Arshad, M.A. Emotion-Aware Embedding Fusion in Large Language Models (Flan-T5, Llama 2, DeepSeek-R1, and ChatGPT 4) for Intelligent Response Generation. AI 2025, 6, 56. [Google Scholar] [CrossRef]

- Lalk, C.; Steinbrenner, T.; Kania, W.; Popko, A.; Wester, R.; Schaffrath, J.; Eberhardt, S.; Schwartz, B.; Lutz, W.; Rubel, J. Measuring Alliance and Symptom Severity in Psychotherapy Transcripts Using Bert Topic Modeling. Adm. Policy Ment. Health Ment. Health Serv. Res. 2024, 51, 509–524. [Google Scholar] [CrossRef]

- Sezgin, E.; McKay, I. Behavioral health and generative AI: A perspective on future of therapies and patient care. Npj Ment. Health Res. 2024, 3, 25. [Google Scholar] [CrossRef] [PubMed]

- Sufyan, N.S.; Fadhel, F.H.; Alkhathami, S.S.; Mukhadi, J.Y.A. Artificial intelligence and social intelligence: Preliminary comparison study between AI models and psychologists. Front. Psychol. 2024, 15, 1353022. [Google Scholar] [CrossRef] [PubMed]

- Raile, P. The usefulness of ChatGPT for psychotherapists and patients. Humanit. Soc. Sci. Commun. 2024, 11, 47. [Google Scholar] [CrossRef]

- Yang, F.; Wei, J.; Zhao, X.; An, R. Artificial Intelligence–Based Mobile Phone Apps for Child Mental Health: Comprehensive Review and Content Analysis. JMIR MHealth UHealth 2025, 13, e58597. [Google Scholar] [CrossRef] [PubMed]

- Nepal, S.; Pillai, A.; Campbell, W.; Massachi, T.; Choi, E.S.; Xu, X.; Kuc, J.; Huckins, J.; Holden, J.; Depp, C.; et al. Contextual AI Journaling: Integrating LLM and Time Series Behavioral Sensing Technology to Promote Self-Reflection and Well-being using the MindScape App. In Proceedings of the Extended Abstracts of the CHI Conference on Human Factors in Computing Systems, New York, NY, USA, 11–16 May 2024; ACM: New York, NY, USA, 2024; pp. 1–8. [Google Scholar] [CrossRef]

- Sargolzaei, P.; Rastogi, M.; Zaman, L. Advancing Mixed Reality Game Development: An Evaluation of a Visual Game Analytics Tool in Action-Adventure and FPS Genres. Proc. ACM Hum.-Comput. Interact. 2024, 8, 1–32. [Google Scholar] [CrossRef]

- Soman, G.; Judy, M.V.; Abou, A.M. Human guided empathetic AI agent for mental health support leveraging reinforcement learning-enhanced retrieval-augmented generation. Cogn. Syst. Res. 2025, 90, 101337. [Google Scholar] [CrossRef]

- Song, I.; Pendse, S.R.; Kumar, N.; De Choudhury, M. The Typing Cure: Experiences with Large Language Model Chatbots for Mental Health Support. arXiv 2025, arXiv:2401.14362. [Google Scholar] [CrossRef]

- Kuhail, M.A.; Alturki, N.; Thomas, J.; Alkhalifa, A.K.; Alshardan, A. Human-Human vs Human-AI Therapy: An Empirical Study. Int. J. Hum.–Comput. Interact. 2025, 41, 6841–6852. [Google Scholar] [CrossRef]

- Gabriel, S.; Puri, I.; Xu, X.; Malgaroli, M.; Ghassemi, M. Can AI Relate: Testing Large Language Model Response for Mental Health Support. arXiv 2024, arXiv:2405.12021. [Google Scholar] [CrossRef]

- Chiu, Y.Y.; Sharma, A.; Lin, I.W.; Althoff, T. A Computational Framework for Behavioral Assessment of LLM Therapists. arXiv 2024, arXiv:2401.00820. [Google Scholar] [CrossRef]

- Stade, E.C.; Stirman, S.W.; Ungar, L.H.; Boland, C.L.; Schwartz, H.A.; Yaden, D.B.; Sedoc, J.; DeRubeis, R.J.; Willer, R.; Eichstaedt, J.C. Large language models could change the future of behavioral healthcare: A proposal for responsible development and evaluation. Npj Ment. Health Res. 2024, 3, 12. [Google Scholar] [CrossRef]

- Yuan, A.; Garcia Colato, E.; Pescosolido, B.; Song, H.; Samtani, S. Improving Workplace Well-being in Modern Organizations: A Review of Large Language Model-based Mental Health Chatbots. ACM Trans. Manag. Inf. Syst. 2025, 16, 1–26. [Google Scholar] [CrossRef]

- Rollwage, M.; Habicht, J.; Juechems, K.; Carrington, B.; Viswanathan, S.; Stylianou, M.; Hauser, T.U.; Harper, R. Correction: Using Conversational AIto Facilitate Mental Health Assessments Improve Clinical Efficiency Within Psychotherapy Services: Real-World Observational Study. JMIR AI 2024, 3, e57869. [Google Scholar] [CrossRef] [PubMed]

- Montag, C.; Ali, R.; Al-Thani, D.; Hall, B.J. On artificial intelligence and global mental health. Asian J. Psychiatry 2024, 91, 103855. [Google Scholar] [CrossRef] [PubMed]

- Rickman, S. Evaluating gender bias in large language models in long-term care. BMC Med. Inf. Decis. Mak. 2025, 25, 274. [Google Scholar] [CrossRef]

- Lopes, E.; Jain, G.; Carlbring, P.; Pareek, S. Talking Mental Health: A Battle of Wits Between Humans and AI. J. Technol. Behav. Sci. 2023, 9, 628–638. [Google Scholar] [CrossRef]

- Salman, S.; Richards, D. Using Large Language Models to Embed Relational Cues in the Dialogue of Collaborating Digital Twins. Systems 2025, 13, 353. [Google Scholar] [CrossRef]

- Isaranuwatchai, W.; Wang, Y.; Soboon, B.; Tungsanga, K.; Nakamura, R.; Wee, H.-L.; Botwright, S.; Theantawee, W.; Laoharuangchaiyot, J.; Mongkolchaipak, T.; et al. An empirical study looking at the potential impact of increasing cost-effectiveness threshold on reimbursement decisions in Thailand. Health Policy Technol. 2024, 13, 100927. [Google Scholar] [CrossRef]

- Jiang, M.; Yu, Y.J.; Zhao, Q.; Li, J.; Song, C.; Qi, H.; Zhai, W.; Luo, D.; Wang, X.; Fu, G.; et al. AI-Enhanced Cognitive Behavioral Therapy: Deep Learning and Large Language Models for Extracting Cognitive Pathways from Social Media Texts. arXiv 2024, arXiv:2404.11449. [Google Scholar] [CrossRef]

- Na, H. CBT-LLM: A Chinese Large Language Model for Cognitive Behavioral Therapy-based Mental Health Question Answering. arXiv 2024, arXiv:2403.16008. [Google Scholar]

- Shen, H.; Li, Z.; Yang, M.; Ni, M.; Tao, Y.; Yu, Z.; Zheng, W.; Xu, C.; Hu, B. Are Large Language Models Possible to Conduct Cognitive Behavioral Therapy? In Proceedings of the 2024 IEEE International Conference on Bioinformatics and Biomedicine (BIBM), Lisbon, Portugal, 3–6 December 2024; IEEE: London, UK, 2024; pp. 3695–3700. [Google Scholar] [CrossRef]

- Hodson, N.; Williamson, S. Can Large Language Models Replace Therapists? Evaluating Performance at Simple Cognitive Behavioral Therapy Tasks. JMIR AI 2024, 3, e52500. [Google Scholar] [CrossRef]

- Kian, M.J.; Zong, M.; Fischer, K.; Singh, A.; Velentza, A.M.; Sang, P.; Upadhyay, S.; Gupta, A.; Faruki, M.A.; Browning, W.; et al. Can an LLM-Powered Socially Assistive Robot Effectively and Safely Deliver Cognitive Behavioral Therapy? A Study With University Students. arXiv 2024, arXiv:2402.17937. [Google Scholar] [CrossRef]

- Held, P.; Pridgen, S.A.; Chen, Y.; Akhtar, Z.; Amin, D.; Pohorence, S. A Novel Cognitive Behavioral Therapy–Based Generative AI Tool (Socrates 2.0) to Facilitate Socratic Dialogue: Protocol for a Mixed Methods Feasibility Study. JMIR Res. Protoc. 2024, 13, e58195. [Google Scholar] [CrossRef] [PubMed]

- Jiang, M.; Zhao, Q.; Li, J.; Wang, F.; He, T.; Cheng, X.; Yang, B.X.; Ho, G.W.K.; Fu, G. A Generic Review of Integrating Artificial Intelligence in Cognitive Behavioral Therapy. arXiv 2024, arXiv:2407.19422. [Google Scholar] [CrossRef]

- Hwang, G.; Lee, D.Y.; Seol, S.; Jung, J.; Choi, Y.; Her, E.S.; An, M.H.; Park, R.W. Assessing the potential of ChatGPT for psychodynamic formulations in psychiatry: An exploratory study. Psychiatry Res. 2024, 331, 115655. [Google Scholar] [CrossRef] [PubMed]

- Han, G.; Liu, W.; Huang, X.; Borsari, B. Chain-of-Interaction: Enhancing Large Language Models for Psychiatric Behavior Understanding by Dyadic Contexts. In Proceedings of the 2024 IEEE 12th International Conference on Healthcare Informatics (ICHI), Orlando, FL, USA, 3–6 June 2024; IEEE: London, UK, 2024; pp. 392–401. [Google Scholar] [CrossRef]

- Lundahl, B.; Howey, W.; Dilanchian, A.; Garcia, M.J.; Patin, K.; Moleni, K.; Burke, B. Addressing Suicide Risk: A Systematic Review of Motivational Interviewing Infused Interventions. Res. Soc. Work Pract. 2024, 34, 158–168. [Google Scholar] [CrossRef]

- Claire, V.; PositivePsychology.com. What Is Motivational Interviewing? A Theory of Change. 2020. Available online: https://positivepsychology.com/motivational-interviewing-theory/ (accessed on 2 June 2025).

- Yosef, S.; Zisquit, M.; Cohen, B.; Brunstein, A.K.; Bar, K.; Friedman, D.; Association for Computational Linguistics. Assessing Motivational Interviewing Sessions with AI-Generated Patient Simulations. 2024. Available online: https://aclanthology.org/2024.clpsych-1.1/ (accessed on 2 June 2025).

- Sharma, A.; Rushton, K.; Lin, I.W.; Nguyen, T.; Althoff, T. Facilitating Self-Guided Mental Health Interventions Through Human-Language Model Interaction: A Case Study of Cognitive Restructuring. In Proceedings of the CHI Conference on Human Factors in Computing Systems, Honolulu, HI, USA, 11–16 May 2024; ACM: New York, NY, USA, 2024; pp. 1–29. [Google Scholar] [CrossRef]

- Filienko, D.; Wang, Y.; Jazmi, C.E.l.; Xie, S.; Cohen, T.; De Cock, M.; Yuwen, W. Toward Large Language Models as a Therapeutic Tool: Comparing Prompting Techniques to Improve GPT-Delivered Problem-Solving Therapy. arXiv 2024, arXiv:2409.00112. [Google Scholar]

- Yang, M.; Tao, Y.; Cai, H.; Hu, B. Behavioral Information Feedback With Large Language Models for Mental Disorders: Perspectives and Insights. IEEE Trans. Comput. Soc. Syst. 2024, 11, 3026–3044. [Google Scholar] [CrossRef]

- Spiegel, B.M.R.; Liran, O.; Clark, A.; Samaan, J.S.; Khalil, C.; Chernoff, R.; Reddy, K.; Mehra, M. Feasibility of combining spatial computing and AI for mental health support in anxiety and depression. npj Digit. Med. 2024, 7, 22. [Google Scholar] [CrossRef]

- Scholich, T.; Barr, M.; Wiltsey Stirman, S.; Raj, S. A Comparison of Responses from Human Therapists and Large Language Model–Based Chatbots to Assess Therapeutic Communication: Mixed Methods Study. JMIR Ment. Health 2025, 12, e69709. [Google Scholar] [CrossRef] [PubMed]

- He, Q.; Wang, J.; He, D. The Influence of Task and Group Disparities Over Users’ Attitudes Toward Using Large Language Models for Psychotherapy. Proc. Hum. Factors Ergon. Soc. Annu. Meet. 2024, 68, 1147–1152. [Google Scholar] [CrossRef]

- Uptegraft, C.; Black, K.C.; Gale, J.; Marshall, A.; He, S. The Elastic Electronic Health Record: A Five-Tiered Framework for Applying Artificial Intelligence to Electronic Health Record Maintenance, Configuration, and Use. JMIR AI 2025, 4, e66741. [Google Scholar] [CrossRef]

- Siddals, S.; Torous, J.; Coxon, A. “It happened to be the perfect thing”: Experiences of generative AI chatbots for mental health. Npj Ment. Health Res. 2024, 3, 48. [Google Scholar] [CrossRef] [PubMed]

- Park, C.; Lee, H.; Jeong, O.R. Leveraging Medical Knowledge Graphs and Large Language Models for Enhanced Mental Disorder Information Extraction. Future Internet 2024, 16, 260. [Google Scholar] [CrossRef]

- Bolpagni, M.; Gabrielli, S. Development of a Comprehensive Evaluation Scale for LLM-Powered Counseling Chatbots (CES-LCC) Using the eDelphi Method. Informatics 2025, 12, 33. [Google Scholar] [CrossRef]

- Shen, J.; DiPaola, D.; Ali, S.; Sap, M.; Park, H.W.; Breazeal, C. Empathy Toward Artificial Intelligence Versus Human Experiences and the Role of Transparency in Mental Health and Social Support Chatbot Design: Comparative Study. JMIR Ment. Health 2024, 11, e62679. [Google Scholar] [CrossRef]

- Coşkun, Ö.; Kıyak, Y.S.; Budakoğlu, I.İ. ChatGPT to generate clinical vignettes for teaching and multiple-choice questions for assessment: A randomized controlled experiment. Med. Teach. 2025, 47, 268–274. [Google Scholar] [CrossRef]

- Rządeczka, M.; Sterna, A.; Stolińska, J.; Kaczyńska, P.; Moskalewicz, M. The Efficacy of Conversational AI in Rectifying the Theory-of-Mind and Autonomy Biases: Comparative Analysis. JMIR Ment. Health 2025, 12, e64396. [Google Scholar] [CrossRef]

- Alasmari, A. A Scoping Review of Arabic Natural Language Processing for Mental Health. Healthcare 2025, 13, 963. [Google Scholar] [CrossRef] [PubMed]

- Connors, E.H.; Janse, P.; de Jong, K.; Bickman, L. The Use of Feedback in Mental Health Services: Expanding Horizons on Reach and Implementation. Adm. Policy Ment. Health Ment. Health Serv. Res. 2025, 52, 1–10. [Google Scholar] [CrossRef]

- Villarreal-Zegarra, D.; Reategui-Rivera, C.M.; García-Serna, J.; Quispe-Callo, G.; Lázaro-Cruz, G.; Centeno-Terrazas, G.; Galvez-Arevalo, R.; Escobar-Agreda, S.; Dominguez-Rodriguez, A.; Finkelstein, J. Self-Administered Interventions Based on Natural Language Processing Models for Reducing Depressive and Anxiety Symptoms: Systematic Review and Meta-Analysis. JMIR Ment. Health 2024, 11, e59560. [Google Scholar] [CrossRef]

- Waaler, P.N.; Hussain, M.; Molchanov, I.; Bongo, L.A.; Elvevåg, B. Prompt Engineering an Informational Chatbot for Education on Mental Health Using a Multiagent Approach for Enhanced Compliance With Prompt Instructions: Algorithm Development and Validation. JMIR AI 2025, 4, e69820. [Google Scholar] [CrossRef]

- Stade, E.C.; Eichstaedt, J.C.; Kim, J.P.; Wiltsey Stirman, S. Readiness evaluation for artificial intelligence-mental health deployment and implementation (READI): A review and proposed framework. Technol. Mind Behav. 2025, 6, 111–122. [Google Scholar] [CrossRef]

- Shewcraft, R.A.; Schwarz, J.; Micsinai Balan, M. Algorithmic Classification of Psychiatric Disorder–Related Spontaneous Communication Using Large Language Model Embeddings: Algorithm Development and Validation. JMIR AI 2025, 4, e67369. [Google Scholar] [CrossRef]

- Rasool, A.; Aslam, S.; Hussain, N.; Imtiaz, S.; Riaz, W. nBERT: Harnessing NLP for Emotion Recognition in Psychotherapy to Transform Mental Health Care. Information 2025, 16, 301. [Google Scholar] [CrossRef]

- Lewis, C.C.; Boyd, M.; Puspitasari, A.; Navarro, E.; Howard, J.; Kassab, H.; Hoffman, M.; Scott, K.; Lyon, K.; Lyon, S.; et al. Implementing Measurement-Based Care in Behavioral Health. JAMA Psychiatry 2019, 76, 324. [Google Scholar] [CrossRef]

- O’Connor, K.; Muller Neff, D.; Pitman, S. Burnout in mental health professionals: A systematic review and meta-analysis of prevalence and determinants. Eur. Psychiatry 2018, 53, 74–99. [Google Scholar] [CrossRef] [PubMed]

- Barish, G.; Marlotte, L.; Drayton, M.; Mogil, C.; Lester, P. Automatically Enriching Content for a Behavioral Health Learning Management System: A First Look. In Proceedings of the 9th World Congress on Electrical Engineering and Computer Systems and Science, London, UK, 3–5 August 2023. [Google Scholar] [CrossRef]

- Sharma, A.; Lin, I.W.; Miner, A.S.; Atkins, D.C.; Althoff, T. Human-AI Collaboration Enables More Empathic Conversations in Text-based Peer-to-Peer Mental Health Support. arXiv 2022, arXiv:2203.15144. [Google Scholar] [CrossRef]

- Lam, K. ChatGPT for low- and middle-income countries: A Greek gift? Lancet Reg. Health-West. Pac. 2023, 41, 100906. [Google Scholar] [CrossRef]

- Dwivedi, Y.K.; Kshetri, N.; Hughes, L.; Slade, E.L.; Jeyaraj, A.; Kar, A.K.; Baabdullah, A.M.; Koohang, A.; Raghavan, R.; Ahuja, m.; et al. Opinion Paper: “So what if ChatGPTwrote it?” Multidisciplinary perspectives on opportunities challenges implications of generative conversational AIfor research practice policy. Int. J. Inf. Manag. 2023, 71, 102642. [Google Scholar] [CrossRef]

- Shahsavar, Y.; Choudhury, A. User Intentions to Use ChatGPT for Self-Diagnosis and Health-Related Purposes: Cross-sectional Survey Study. JMIR Hum. Factors 2023, 10, e47564. [Google Scholar] [CrossRef]

- Rothman, B.; Slomkowski, M.; Speier, A.; Rush, A.J.; Trivedi, M.H.; Lawson, E.; Fahmy, M.; Carpenter, D.; Chen, D.; Forbes, A. Evaluating the Efficacy of a Digital Therapeutic (CT-152) as an Adjunct to Antidepressant Treatment in Adults With Major Depressive Disorder: Protocol for the MIRAI Remote Study. JMIR Res. Protoc. 2024, 13, e56960. [Google Scholar] [CrossRef]

- Mogk, J.; Idu, A.E.; Bobb, J.F.; Key, D.; Wong, E.S.; Palazzo, L.; Stefanik-Guizlo, K.; King, D.; Beatty, T.; Dorsey, C.N.; et al. Prescription Digital Therapeutics for Substance Use Disorder in Primary Care: Mixed Methods Evaluation of a Pilot Implementation Study. JMIR Form. Res. 2024, 8, e59088. [Google Scholar] [CrossRef]

- Oh, S.; Choi, J.; Han, D.H.; Kim, E. Effects of game-based digital therapeutics on attention deficit hyperactivity disorder in children and adolescents as assessed by parents or teachers: A systematic review and meta-analysis. Eur. Child Adolesc. Psychiatry 2024, 33, 481–493. [Google Scholar] [CrossRef]

- Habicht, J.; Viswanathan, S.; Carrington, B.; Hauser, T.U.; Harper, R.; Rollwage, M. Closing the accessibility gap to mental health treatment with a personalized self-referral chatbot. Nat. Med. 2024, 30, 595–602. [Google Scholar] [CrossRef] [PubMed]

- Davenport, N.D.; Werner, J.K. A randomized sham-controlled clinical trial of a novel wearable intervention for trauma-related nightmares in military veterans. J. Clin. Sleep Med. 2023, 19, 361–369. [Google Scholar] [CrossRef] [PubMed]

- Kong, H.; Moon, S. When LLM Therapists Become Salespeople: Evaluating Large Language Models for Ethical Motivational Interviewing. arXiv 2025, arXiv:2503.23566. [Google Scholar] [CrossRef]

- Huang, Y.; Wang, W.; Zhou, J.; Zhang, L.; Lin, J.; Liu, H.; Hu, X.; Zhou, Z.; Dong, W. Integrative modeling enables ChatGPT to achieve average level of human counselors performance in mental health, Q&A. Inf. Process. Manag. 2025, 62, 104152. [Google Scholar] [CrossRef]

- Hosseini, S.M.B.; Momeni Nezhad, M.J.; Hosseini, M.; Zolnoori, M. Optimizing Entity Recognition in Psychiatric Treatment Data with Large Language Models. Stud. Health Technol. Inform. 2025, 329, 784–788. [Google Scholar] [CrossRef]

- Leow, J.J.D.; Chua, H.N.; Jasser, M.B.; Issa, B.; Wong, R.T.K. Comparison of Depression Detection Between LLMs and Zero-Shot Learning Using DAD Dataset. In Proceedings of the 2025 21st IEEE International Colloquium on Signal Processing and Its Applications (CSPA), Pulau Pinang, Malaysia, 7–8 February 2025; IEEE: London, UK, 2025; pp. 295–300. [Google Scholar] [CrossRef]

- Gao, Y.; Li, R.; Croxford, E.; Caskey, J.; Patterson, B.W.; Churpek, M.; Miller, T.; Dligach, D.; Afshar, M. Leveraging Medical Knowledge Graphs Into Large Language Models for Diagnosis Prediction: Design Application Study. JMIR AI 2025, 4, e58670. [Google Scholar] [CrossRef] [PubMed]

- Ferrario, A.; Sedlakova, J.; Trachsel, M. The Role of Humanization and Robustness of Large Language Models in Conversational Artificial Intelligence for Individuals With Depression: A Critical Analysis. JMIR Ment. Health 2024, 11, e56569. [Google Scholar] [CrossRef]

- Li, M.D.; Wang, Y. Editorial: Advances; opportunities and challenges of using modern AGI and AIGC technologies in depression and related disorders. Front. Psychiatry 2025, 16, 1625579. [Google Scholar] [CrossRef]

- Linardon, J.; Messer, M.; Anderson, C.; Liu, C.; McClure, Z.; Jarman, H.K.; Goldberg, S.B.; Torous, J. Role of large language models in mental health research: An international survey of researchers’ practices and perspectives. BMJ Ment. Health 2025, 28, e301787. [Google Scholar] [CrossRef] [PubMed]

- Qu, Y.; Du, P.; Che, W.; Wei, C.; Zhang, C.; Ouyang, W.; Bian, Y.; Xu, F.; Hu, B.; Du, K.; et al. Promoting interactions between cognitive science and large language models. Innovation 2024, 5, 100579. [Google Scholar] [CrossRef] [PubMed]

- Thazhath, M.B.; Michalak, J.; Hoang, T. Harpocrates: Privacy-Preserving and Immutable Audit Log for Sensitive Data Operations. arXiv 2022, arXiv:2211.04741. [Google Scholar] [CrossRef]

- Regueiro, C.; Seco, I.; Gutiérrez-Agüero, I.; Urquizu, B.; Mansell, J. A Blockchain-Based Audit Trail Mechanism: Design and Implementation. Algorithms 2021, 14, 341. [Google Scholar] [CrossRef]

- Diro, A.; Kaisar, S.; Saini, A.; Fatima, S.; Hiep, P.C.; Erba, F. Workplace security and privacy implications in the GenAI age: A survey. J. Inf. Secur. Appl. 2025, 89, 103960. [Google Scholar] [CrossRef]

- Doshi-Velez, F.; Kim, B. Towards A Rigorous Science of Interpretable Machine Learning. arXiv 2017, arXiv:1702.08608. [Google Scholar] [CrossRef]

- Gonzales, A.; Guruswamy, G.; Smith, S.R. Synthetic data in health care: A narrative review. PLoS Digit. Health 2023, 2, e0000082. [Google Scholar] [CrossRef]

- Liu, X.; Cruz Rivera, S.; Moher, D.; Calvert, M.J.; Denniston, A.K.; The SPIRIT-AI and CONSORT-AI Working Group; SPIRIT-AI and CONSORT-AI Steering Group; Chan, A.W.; Darzi, A.; Holmes, C.; et al. Reporting guidelines for clinical trial reports for interventions involving artificial intelligence: The CONSORT-AI extension. Nat. Med. 2020, 26, 1364–1374. [Google Scholar] [CrossRef]

- AI-READI Consortium; Writing Committee; Baxter, S.L.; De Sa, V.R.; Ferryman, K.; Jain, P.; Lee, C.S.; Li-Pook-Tha, J.; Liu, T.Y.A.; Owen, J.P.; et al. AI-READI: Rethinking AI data collection, preparation and sharing in diabetes research and beyond. Nat. Metab. 2024, 6, 2210–2212. [Google Scholar] [CrossRef] [PubMed]

- Taylor, N.; Kormilitzin, A.; Lorge, I.; Nevado-Holgado, A.; Cipriani, A.; Joyce, D.W. Model development for bespoke large language models for digital triage assistance in mental health care. Artif. Intell. Med. 2024, 157, 102988. [Google Scholar] [CrossRef]

- Babu, A.; Joseph, A.P. Digital wellness or digital dependency? a critical examination of mental health apps and their implications. Front. Psychiatry 2025, 16, 1581779. [Google Scholar] [CrossRef]

- Brega, J.R.F.; Rodello, I.A.; Dias, D.R.C.; Martins, V.F.; de Paiva Guimaraes, M. A virtual reality environment to support chat rooms for hearing impaired and to teach Brazilian Sign Language (LIBRAS). In Proceedings of the 2014 IEEE/ACS 11th International Conference on Computer Systems and Applications (AICCSA), Doha, Qatar, 10–13 November 2014; IEEE: London, UK, 2014; pp. 433–440. [Google Scholar] [CrossRef]

- Levkovich, I.; Omar, M. Evaluating of BERT-based and Large Language Mod for Suicide Detection, Prevention, and Risk Assessment: A Systematic Review. J. Med. Syst. 2024, 48, 113. [Google Scholar] [CrossRef] [PubMed]

- Wang, Y.; Wang, Y.; Xiao, Y.; Escamilla, L.; Augustine, B.; Crace, K.; Zhou, G.; Zhang, Y. Evaluating an LLM-Powered Chatbot for Cognitive Restructuring: Insights from Mental Health Professionals. arXiv 2025. [Google Scholar] [CrossRef]

- Nie, J.; Shao, H.; Fan, Y.; Shao, Q.; You, H.; Preindl, M.; Jian, X. LLM-based Conversational AI Therapist for Daily Functioning Screening and Psychotherapeutic Intervention via Everyday Smart Devices. arXiv 2024, arXiv:2403.10779. [Google Scholar] [CrossRef]

- Han, X. NeuroPal: A Clinically-Informed Multimodal LLM Assistant for Mental Health Combining Sleep Chronotherapy, Cognitive Behavioral Reframing, and Adaptive Phytochemical Intervention. arXiv 2025, arXiv:2505.06640. [Google Scholar]

- Abbasi, M.A.; Mirnezami, F.S.; Neshati, A.; Naderi, H. HamRaz: A Culture-Based Persian Conversation Dataset for Person-Centered Therapy Using LLM Agents. arXiv 2025, arXiv:2502.05982. [Google Scholar]

- Alhuzali, H.; Alasmari, A.; Alsaleh, H. MentalQA: An Annotated Arabic Corpus for Questions and Answers of Mental Healthcare. IEEE Access 2024, 12, 101155–101165. [Google Scholar] [CrossRef]

- Podichetty, J.T.; Silvola, R.M.; Rodriguez-Romero, V.; Bergstrom, R.F.; Vakilynejad, M.; Bies, R.R.; Stratford, R.E., Jr. Application of machine learning to predict reduction in total PANSS score and enrich enrollment in schizophrenia clinical trials. Clin. Transl. Sci. 2021, 14, 1864–1874. [Google Scholar] [CrossRef]

- Kroenke, K.; Spitzer, R.L.; Williams, J.B.W. The PHQ-9: Validity of a brief depression severity measure. J. Gen. Intern. Med. 2001, 16, 606–613. [Google Scholar] [CrossRef]

- Carrozzino, D.; Patierno, C.; Fava, G.A.; Guidi, J. The Hamilton Rating Scales for Depression: A Critical Review of Clinimetric Properties of Different Versions. Psychother. Psychosom. 2020, 89, 133–150. [Google Scholar] [CrossRef] [PubMed]

- Aiello-Puchol, A.; García-Alandete, J. A systematic review on the effects of logotherapy and meaning-centered therapy on psychological and existential symptoms in women with breast and gynecological cancer. Support. Care Cancer 2025, 33, 465. [Google Scholar] [CrossRef] [PubMed]

- Kho, J.J.; Song, S.; Tan, S.M.X.; Fitriyah, N.H.; Lokadjaja, M.C.; Yee, J.Y.; Yang, Z.; Chen, E.Y.H.; Lee, J.; Goh, W.W.B.; et al. Leveraging computational linguistics and machine learning for detection of ultra-high risk of mental health disorders in youths. Schizophrenia 2025, 11, 98. [Google Scholar] [CrossRef]

- Lekadir, K.; Frangi, A.F.; Porras, A.R.; Glocker, B.; Cintas, C.; Langlotz, C.P.; Weicken, E.; Asselbergs, F.; Prior, F.; Collins, G.S.; et al. FUTURE-AI: International consensus guideline for trustworthy deployable artificial intelligence in healthcare. BMJ 2025, 388, e081554. [Google Scholar] [CrossRef] [PubMed]

- Liu, J.; Yu, B. FLLMM: A Federated Large-small Language Model Collaboration Based Music Therapy for Mental Disease. In Proceedings of the 2024 3rd International Conference on Artificial Intelligence and Education, Xiamen, China, 22–24 November 2024; ACM: New York, NY, USA, 2024; pp. 724–729. [Google Scholar] [CrossRef]

- Cruz Rivera, S.; Liu, X.; Chan, A.W.; Denniston, A.K.; Calvert, M.J.; The SPIRIT-AI and CONSORT-AI Working Group; Darzi, A.; Holmes, C.; Yau, C.; Moher, D.; et al. Guidelines for clinical trial protocols for interventions involving artificial intelligence: The SPIRIT-AI extension. Nat. Med. 2020, 26, 1351–1363. [Google Scholar] [CrossRef]

- Vasey, B.; Nagendran, M.; Campbell, B.; Clifton, D.A.; Collins, G.S.; Denaxas, S.; Denniston, A.; Faes, L.; Geerts, B.; Ibrahim, M.; et al. Reporting guideline for the early-stage clinical evaluation of decision support systems driven by artificial intelligence: DECIDE-AI. Nat. Med. 2022, 28, 924–933. [Google Scholar] [CrossRef]

- Semenza, J.C.; Paz, S. Climate change and infectious disease in Europe: Impact, projection and adaptation. Lancet Reg. Health-Eur. 2021, 9, 100230. [Google Scholar] [CrossRef]

- Borger, T.; Mosteiro, P.; Kaya, H.; Rijcken, E.; Salah, A.A.; Scheepers, F.; Spruit, M. Federated learning for violence incident prediction in a simulated cross-institutional psychiatric setting. Expert Syst. Appl. 2022, 199, 116720. [Google Scholar] [CrossRef]

- Ringeval, F.; Schuller, B.; Valstar, M.; Gratch, J.; Cowie, R.; Scherer, S.; Mozgai, S.; Cummins, N.; Schmitt, M.; Pantic, M. AVEC 2017: Real-life Depression, and Affect Recognition Workshop and Challenge. In Proceedings of the 7th Annual Workshop on Audio/Visual Emotion Challenge, Mountain View, CA, USA, 23 October 2017; ACM: New York, NY, USA, 2017; pp. 3–9. [Google Scholar] [CrossRef]

- Kessler, R.C.; Berglund, P.; Demler, O.; Jin, R.; Merikangas, K.R.; Walters, E.E. Lifetime Prevalence and Age-of-Onset Distributions of DSM-IV Disorders in the National Comorbidity Survey Replication. Arch. Gen. Psychiatry 2005, 62, 593. [Google Scholar] [CrossRef] [PubMed]

- Wiegand, T.; Krishnamurthy, R.; Kuglitsch, M.; Lee, N.; Pujari, S.; Salathé, M.; Wenzel, M.; Xu, S. WHO and ITU establish benchmarking process for artificial intelligence in health. Lancet 2019, 394, 9–11. [Google Scholar] [CrossRef] [PubMed]