1. Introduction

Accurate short-term load forecasting (STLF) plays a crucial role in the operation of modern power systems, particularly with the increasing integration of renewable energy sources and the ongoing transition toward smart grids [1,2]. Reliable demand prediction enables efficient energy management, optimal generator scheduling, and improved grid stability [3]. In practical settings, even small forecasting errors can lead to significant economic losses and may compromise system reliability, underscoring the importance of robust and accurate forecasting methodologies [4,5]. As illustrated in Figure 1, STLF serves as a key component in modern smart grid infrastructure by facilitating the seamless interaction between demand, generation, and intelligent energy systems.

Over time, forecasting techniques have evolved from traditional statistical models, such as autoregressive integrated moving average (ARIMA) and exponential smoothing, to more advanced machine learning approaches, including gradient boosting methods such as XGBoost and LightGBM. These methods have improved the modeling of nonlinear relationships in load data [6,7,8,9]. More recently, deep learning models, particularly recurrent neural networks (RNNs) such as long short-term memory (LSTM) and gated recurrent units (GRUs), have demonstrated strong capability in capturing temporal dependencies [1,10,11,12]. In addition, hybrid architectures that combine convolutional neural networks (CNNs) with recurrent layers, along with attention mechanisms, have been proposed to further enhance feature extraction and sequence modeling performance [3].

Despite these advancements, several key challenges remain unresolved. First, most existing studies rely on a “one-model-fits-all” assumption, neglecting the fact that model performance is highly dependent on dataset characteristics such as variability, feature richness, and temporal structure [13,14]. Second, many works evaluate models on a single dataset, limiting the generalizability of their findings. Third, although modern architectures such as transformers offer increased modeling capacity, their added complexity does not always result in consistent performance gains and may introduce additional computational overhead. Furthermore, limited attention has been given to task-aware evaluation, particularly in distinguishing between single-step and multi-horizon forecasting scenarios [15,16].

These limitations highlight a critical research gap: the absence of a systematic and explainable framework for adaptive model selection in STLF. Rather than focusing solely on developing increasingly complex models, there is a growing need for intelligent approaches that can dynamically select the most appropriate model based on data characteristics and forecasting objectives. In addition, the black-box nature of many advanced models limits their applicability in real-world operational environments, where interpretability and transparency are essential [7,17,18].

To address these challenges, this study proposes an explainable meta-learning framework for adaptive model selection in short-term load forecasting. The proposed approach integrates cross-dataset evaluation across multiple power systems (Panama, PJM, and Spanish datasets) and considers both single-step and multi-horizon forecasting tasks [19,20,21]. A meta-learning model is developed to learn the relationship between dataset characteristics and model performance, enabling the selection of the most suitable forecasting model for each scenario [13,22]. Furthermore, SHapley Additive exPlanations (SHAP) are incorporated to provide interpretable insights into the model selection process. The increasing integration of electric vehicles (EVs) and renewable energy sources further highlights the need for adaptive and reliable forecasting models, as grid conditions become more dynamic and uncertain.

To clarify the positioning of this work, the proposed framework differs from conventional model selection strategies by adopting a cross-dataset, task-aware meta-learning formulation. While selecting models based on dataset characteristics has been explored in prior studies, existing approaches typically operate on a single dataset or rely on static selection rules. In contrast, the proposed method explicitly models the relationship between dataset properties, forecasting horizon, and model performance, enabling adaptive and context-aware decision-making across multiple datasets and forecasting scenarios.

The contribution lies in the integration of three complementary components within a unified framework: (i) cross-dataset evaluation, (ii) structured meta-feature representation capturing both data and task characteristics, and (iii) explainable meta-learning using SHAP to interpret model selection decisions. While these components have been studied independently, their combined application for adaptive model selection in short-term load forecasting provides a structured and practical advancement.

Unlike AutoML approaches, which primarily focus on optimizing performance within a single dataset through hyperparameter tuning or ensembling, the proposed framework addresses the problem of selecting the most suitable model across datasets and tasks. In addition, the inclusion of task-related factors, such as forecasting horizon and prediction type, allows the framework to capture variations across different forecasting scenarios.

It is important to note that the contribution is primarily framework-level and application-driven, rather than algorithmic. The use of Random Forest is motivated by its robustness, interpretability, and suitability for small-sample settings. Overall, the proposed approach provides a structured and explainable methodology for adaptive model selection in smart grid environments, with potential for practical deployment.

The main contributions of this work are summarized as follows:

Adaptive Model Selection Framework: We propose a novel meta-learning framework that dynamically selects the most appropriate forecasting model based on dataset characteristics and forecasting tasks, overcoming the limitations of fixed-model approaches.

Cross-Dataset and Multi-Horizon Evaluation: The framework is evaluated across multiple benchmark datasets and forecasting horizons, providing a comprehensive analysis of model performance under diverse conditions.

Explainable Meta-Learning: We integrate SHAP-based explainability to provide transparent insights into the factors influencing model selection, enhancing interpretability and trust in the decision-making process.

Practical and Scalable Solution: The proposed approach offers a robust and scalable methodology that can be integrated into real-world smart grid systems to improve forecasting accuracy and operational efficiency.

2. Related Work

The field of short-term load forecasting (STLF) has undergone significant advancements in recent years, driven by the transition toward smart grids and the increasing integration of renewable energy sources (RESs) [4,16,23]. The growing penetration of solar and wind energy has introduced higher levels of variability and non-stationarity into load profiles, making accurate forecasting more challenging [24,25]. Consequently, recent research has focused on developing models capable of capturing complex spatiotemporal dependencies [6,26,27]. However, achieving an effective balance between model complexity and generalization remains an open challenge [9,16,28].

Early STLF approaches relied primarily on statistical models such as ARIMA, SARIMA, and exponential smoothing, which are valued for their interpretability and computational efficiency [4,6,7,29]. While these models perform adequately under stable and linear conditions, they are often insufficient for capturing nonlinear dynamics and abrupt variations observed in modern power systems [6,7,8,9]. To overcome these limitations, machine learning (ML) techniques, including XGBoost, Random Forest (RF), and Support Vector Machines (SVMs), have been widely adopted due to their ability to model nonlinear relationships [1,12,30]. Nevertheless, these approaches typically depend on manual feature engineering and have limited capability in modeling long-term temporal dependencies [8,31,32].

More recently, deep learning (DL) methods have gained prominence for their ability to learn hierarchical representations directly from data. Recurrent architectures such as long short-term memory (LSTM) and gated recurrent units (GRUs) have demonstrated strong performance in modeling sequential patterns [3,4,6,33]. Hybrid models that combine convolutional neural networks (CNNs) with recurrent layers have further improved performance by capturing both spatial and temporal features [6,24,31,34]. In addition, attention mechanisms and transformer-based models have been explored to capture long-range dependencies more effectively. However, their performance in STLF remains inconsistent, particularly when considering computational cost and data availability constraints [3,6,9].

Despite these advancements, several limitations persist. Many studies continue to assume that a single model can consistently outperform others across different datasets, which is rarely valid in practice [6,13,35]. Furthermore, most experimental evaluations are conducted on a single dataset, limiting the generalizability of the results [24,32]. Another critical limitation is the insufficient focus on multi-horizon forecasting, where models optimized for single-step prediction often exhibit performance degradation as the prediction horizon increases [6,16,36]. Additionally, increasing model complexity does not always translate into improved accuracy, raising concerns about scalability and practical deployment [2,6].

Table 1 provides a comparative analysis of major time-series forecasting model categories, outlining their strengths and limitations based on previous studies.

These observations highlight a clear gap in the literature, particularly the lack of systematic approaches for cross-dataset evaluation and adaptive, task-aware model selection. Existing methods generally do not provide mechanisms to align model choice with dataset characteristics and forecasting objectives in a structured manner [12,13,32]. To address these challenges, this study proposes an explainable meta-learning framework that enables adaptive model selection across multiple datasets (Panama, PJM, and Spanish) and forecasting scenarios (single-step and multi-horizon). The proposed approach aims to improve generalization, enhance interpretability, and provide a practical and scalable solution for real-world energy forecasting applications.

Recent studies have explored adaptive and ensemble-based approaches for time-series forecasting, including AutoML-based model selection, stacking ensembles, and hybrid frameworks that dynamically combine multiple models. While these methods aim to improve predictive performance, they often focus on combining model outputs rather than explicitly learning the relationship between dataset characteristics and model suitability. In contrast, the proposed framework adopts a meta-learning perspective, where model selection is guided by dataset-specific features and forecasting conditions. Furthermore, unlike many ensemble approaches, the proposed method incorporates explainability through SHAP analysis, enabling transparent and interpretable decision-making.

Recent advancements in smart grid systems have increasingly focused on the integration of electric vehicles (EVs) and renewable energy sources (RESs), particularly photovoltaic (PV) and wind energy. The rapid growth of EV adoption introduces significant challenges related to load variability and grid stability, necessitating intelligent charging and discharging strategies. To address these challenges, recent studies have proposed multi-objective optimization frameworks that balance economic cost, user requirements, and grid constraints while maximizing renewable energy utilization. These approaches often incorporate vehicle-to-grid (V2G) mechanisms and energy storage systems to enhance flexibility and resilience in power distribution networks.

In addition, stochastic and real-time scheduling methods have been developed to handle uncertainties in renewable generation and EV demand. Techniques such as model predictive control, reinforcement learning, and hybrid optimization have been used to dynamically coordinate EV charging with renewable energy availability. While these methods demonstrate the importance of advanced control strategies, they also highlight the critical role of accurate forecasting of load and generation patterns in smart grid operation.

Despite these advancements, most existing studies focus on optimizing energy management and charging schedules, with limited attention to the variability of forecasting model performance across datasets and operational scenarios. In contrast, this work addresses the complementary problem of adaptive forecasting model selection, enabling more robust and data-driven decision-making in smart grid environments.

Furthermore, existing model selection approaches, including AutoML and dataset-driven strategies, typically focus on optimizing performance within a single dataset or rely on ensemble techniques. The proposed framework differs by adopting a cross-dataset, task-aware meta-learning approach, which explicitly models the relationship between dataset characteristics, forecasting tasks, and model performance. In addition, the integration of explainability provides transparent insights into the model selection process. Although the individual components are well-established, their integration into a unified and explainable framework represents a practical advancement for adaptive model selection in short-term load forecasting.

3. Methodology

This section introduces the proposed explainable meta-learning framework for adaptive model selection in short-term load forecasting (STLF). The main objective of this framework is to address the variation in model performance across different datasets and forecasting tasks by learning how dataset characteristics influence model effectiveness.

3.1. Problem Formulation

Let a time series dataset be represented as

$$X = \{x_1, x_2, \ldots, x_T\},$$

where $x_t$ denotes the electricity load at time $t$ and $T$ is the number of observations. The goal of STLF is to predict future load values over a given forecasting horizon $h$, such that:

$$\hat{x}_{t+h} = f(x_{t-L+1}, \ldots, x_t),$$

where $f$ is the forecasting model and $L$ represents the input sequence length.

In this study, two forecasting settings are considered: single-step forecasting, where h = 1, and multi-horizon forecasting, where h ∈ {1, 6, 12, 24}. Rather than searching for a single model that performs best in all cases, the problem is reformulated as a model selection task. The objective is to identify the most suitable model $M^{*}$ for a given dataset and forecasting horizon:

$$M^{*} = \arg\min_{M \in \mathcal{M}} E(M; X, h),$$

where $\mathcal{M}$ represents the set of candidate forecasting models and $E(M; X, h)$ is the forecasting error of model $M$ on dataset $X$ at horizon $h$.

3.2. Overall Framework

The proposed framework is structured into four main stages: dataset preparation, model training and evaluation, meta-feature construction, and meta-learning for adaptive model selection.

The process begins with preparing multiple benchmark datasets, namely Panama, PJM, and Spanish. Each dataset is split chronologically into training and testing sets using a 70/30 ratio, and Min-Max normalization is applied based only on the training data to prevent data leakage.
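The leakage-free preparation step can be illustrated with a minimal sketch. It assumes a univariate series held in a plain Python list; the helper names (`chronological_split`, `fit_min_max`, `apply_min_max`) and the toy values are hypothetical and are not taken from the study's code.

```python
from typing import List, Tuple


def chronological_split(series: List[float], train_ratio: float = 0.7) -> Tuple[List[float], List[float]]:
    """Split in time order: the earliest share of the series becomes the training set."""
    cut = int(len(series) * train_ratio)
    return series[:cut], series[cut:]


def fit_min_max(train: List[float]) -> Tuple[float, float]:
    """Compute scaling statistics from the training data only."""
    return min(train), max(train)


def apply_min_max(values: List[float], lo: float, hi: float) -> List[float]:
    """Scale with training statistics; test values may fall outside [0, 1]."""
    span = hi - lo
    if span == 0:
        raise ValueError("training data is constant; min-max scaling is undefined")
    return [(v - lo) / span for v in values]


# Toy hourly load values (illustrative numbers, not from Panama, PJM, or Spanish data).
load = [100.0, 120.0, 110.0, 150.0, 140.0, 130.0, 160.0, 170.0, 155.0, 180.0]
train, test = chronological_split(load)
lo, hi = fit_min_max(train)
train_scaled = apply_min_max(train, lo, hi)
test_scaled = apply_min_max(test, lo, hi)
```

Because the minimum and maximum come from the training portion alone, scaled test values can exceed 1 when later demand is higher than anything seen during training. This is expected and is the price of avoiding leakage.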

Next, a range of forecasting models is trained on each dataset. Their performance is evaluated using standard metrics such as RMSE, MAE, and MAPE across both single-step and multi-horizon forecasting tasks. These results are then used to construct meta-features that summarize the characteristics of each dataset and task.

Finally, a meta-learning model is trained to learn the relationship between these meta-features and the most suitable forecasting model. This enables the framework to make adaptive and data-driven model selection decisions. Unlike conventional approaches, the proposed framework does not aim to develop a new forecasting model, but rather to learn when each model is most effective across different datasets and forecasting conditions.

To provide a clear and structured view of the proposed approach, Figure 2 presents the overall architecture of the explainable meta-learning framework. The framework integrates multiple stages, including dataset preparation, base model training, meta-feature extraction, and adaptive model selection, within a unified pipeline. This design enables the framework to systematically learn the relationship between dataset characteristics and model performance across different forecasting tasks and datasets.

3.3. Base Forecasting Models

The proposed framework incorporates a diverse set of forecasting models spanning multiple methodological paradigms, enabling comprehensive evaluation under varying data characteristics and forecasting conditions. Specifically, ARIMA is included as a representative statistical model due to its interpretability and effectiveness in modeling linear temporal patterns. In parallel, XGBoost is employed as a machine learning baseline, given its strong capability to capture nonlinear relationships and handle structured tabular data.

To model complex temporal dependencies, deep learning architectures are also considered. Recurrent neural networks, including long short-term memory (LSTM) and gated recurrent units (GRUs), are utilized for their effectiveness in learning sequential patterns. In addition, the Transformer model is incorporated to capture long-range dependencies through self-attention mechanisms. Furthermore, hybrid architectures such as CNN-BiGRU and CNN-BiGRU-Attention are included to jointly exploit spatial feature extraction and temporal modeling, thereby enhancing predictive performance.

All models are trained using an input sequence length of 168 h, corresponding to one week of historical observations. For multi-horizon forecasting, a direct multi-output strategy is adopted to simultaneously predict multiple future time steps. In the case of the Transformer model, positional encoding is applied to preserve temporal order within the input sequences [35]. This diverse model set ensures a robust evaluation and supports the subsequent meta-learning process for adaptive model selection.
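As an illustration of how a 168 h input window pairs with a direct multi-output target, the following sketch builds supervised samples from a univariate series. The function name `make_windows` and the synthetic series are assumptions made for this example; the windowing code used in the study is not described in the text.

```python
from typing import List, Tuple


def make_windows(series: List[float], input_len: int, horizon: int) -> Tuple[List[List[float]], List[List[float]]]:
    """Pair each input window with the `horizon` values that immediately follow it."""
    inputs: List[List[float]] = []
    targets: List[List[float]] = []
    last_start = len(series) - input_len - horizon
    for start in range(last_start + 1):
        end = start + input_len
        inputs.append(series[start:end])
        # Direct multi-output: the whole future block is one target vector.
        targets.append(series[end:end + horizon])
    return inputs, targets


# Synthetic series of 200 hourly points (placeholder values, not real load data).
hourly = [float(i) for i in range(200)]
inputs, targets = make_windows(hourly, input_len=168, horizon=24)
```

With `horizon=1` the same function yields the single-step setting, so one routine can serve both task types.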

3.4. Meta-Feature Design

Meta-features are used to characterize both the intrinsic properties of each dataset and the associated forecasting task. Rather than relying solely on raw input data, these features provide a structured representation that captures statistical, temporal, and contextual factors influencing model performance. The selection of meta-features is grounded in established time-series analysis principles and empirical evidence from short-term load forecasting (STLF) studies.

Statistical descriptors such as the coefficient of variation (cv) are included to quantify data variability, which directly impacts model stability and generalization. Datasets with high variability tend to benefit from deep learning models due to their ability to capture complex nonlinear dynamics, whereas lower-variability datasets are often better suited to machine learning approaches such as XGBoost.

Temporal dependency is characterized using the autocorrelation coefficient at lag 168 (acf_lag168), corresponding to weekly seasonality commonly observed in electricity load data. This feature is particularly relevant for recurrent architectures such as LSTM and GRU, which are designed to model sequential dependencies.
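The two descriptors named so far, cv and acf_lag168, can be computed as in the sketch below. It assumes the population standard deviation for cv and the standard biased sample estimator for the autocorrelation; the text does not state which estimators were used, and the synthetic weekly sinusoid stands in for real load data.

```python
import math
from typing import Sequence


def coefficient_of_variation(x: Sequence[float]) -> float:
    """Population standard deviation divided by the mean (the mean must be non-zero)."""
    mean = sum(x) / len(x)
    variance = sum((v - mean) ** 2 for v in x) / len(x)
    return math.sqrt(variance) / mean


def autocorrelation(x: Sequence[float], lag: int) -> float:
    """Biased sample autocorrelation at the given lag."""
    mean = sum(x) / len(x)
    denom = sum((v - mean) ** 2 for v in x)
    numer = sum((x[t] - mean) * (x[t + lag] - mean) for t in range(len(x) - lag))
    return numer / denom


# Synthetic four-week hourly series with a pure weekly cycle (not real load data).
hours = 4 * 168
load = [100.0 + 10.0 * math.sin(2 * math.pi * t / 168) for t in range(hours)]
cv = coefficient_of_variation(load)
acf_lag168 = autocorrelation(load, lag=168)
```

For this synthetic series the weekly pattern repeats exactly, so acf_lag168 is high (0.75 with the biased estimator, which shrinks the value by the share of overlapping samples). Real load data would typically give a lower value.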

To represent input complexity, feature richness indicators are incorporated, including the number of input variables (n_features) and the presence of exogenous variables such as weather conditions, electricity prices, and renewable energy signals. These factors influence the suitability of feature-driven models versus representation-learning approaches, as tree-based models rely on informative engineered features while deep learning models can learn latent representations directly from data.

Task-specific meta-features, including forecasting horizon and task type (single-step versus multi-horizon), are also included to reflect the increasing difficulty associated with long-term prediction. Forecasting error typically increases with horizon length, making these features essential for adaptive model selection.

The selected meta-features provide a compact and interpretable representation of the forecasting problem, which is particularly important given the limited size of the meta-dataset. Nevertheless, more advanced descriptors such as seasonality strength, trend components, entropy measures, and frequency-domain features could further enrich the representation and are identified as promising directions for future work.

In this study, the selected meta-features include fundamental dataset attributes such as the number of samples and the number of input variables, as well as contextual indicators such as the presence of weather data, electricity price signals, renewable energy information, and calendar-related features. Additionally, the availability of lag-based features is considered to reflect temporal dependency patterns. Task-specific attributes, including the forecasting horizon and the type of forecasting task (single-step or multi-horizon), are also incorporated.

These meta-features are carefully designed to capture key factors such as data variability, feature richness, and temporal structure, all of which play a critical role in determining model effectiveness. By providing a compact yet expressive representation of the forecasting problem, they enable the meta-learner to identify patterns and relationships that guide the selection of the most appropriate model for each scenario. A summary of the selected meta-features is presented in Table 2.

3.5. Meta-Learner for Model Selection

The meta-learning problem is formulated as a supervised multi-class classification task, where each class corresponds to a candidate forecasting model. Given a meta-feature vector z describing a specific dataset–task configuration, the objective is to learn a mapping function that predicts the most suitable forecasting model in terms of minimizing prediction error.

A Random Forest classifier is selected as the meta-learner due to several key advantages. First, it is well-suited for small-scale datasets, making it appropriate for the small-sample meta-learning setting considered in this study. Second, Random Forest effectively captures nonlinear relationships between heterogeneous meta-features without requiring extensive hyperparameter tuning. Third, its ensemble structure provides robustness to noise and reduces the risk of overfitting. In addition, Random Forest offers intrinsic feature importance measures, which align with the explainability objective of the proposed framework.

Alternative meta-learning approaches, including support vector machines, gradient boosting methods, and neural networks, were also considered. However, support vector machines are sensitive to kernel selection and feature scaling, gradient boosting methods may overfit in small-data scenarios, and neural networks generally require larger training samples for stable generalization. Therefore, Random Forest provides a balanced trade-off between predictive performance, robustness, and interpretability in this context.

The meta-dataset is constructed by aggregating performance results across multiple datasets and forecasting horizons. Each instance is represented by a meta-feature vector and labeled with the model achieving the lowest Mean Absolute Percentage Error (MAPE). This formulation enables the meta-learner to capture the relationship between dataset characteristics and model suitability in a structured manner.
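A minimal sketch of this labeling step is given below. The MAPE values, the meta-feature names (`horizon`, `is_multi_horizon`), and the helper names are all made up for illustration; they are not results or identifiers from the study.

```python
from typing import Dict, List, Tuple

MetaFeatures = Dict[str, float]


def best_model(mape_by_model: Dict[str, float]) -> str:
    """Return the name of the model with the lowest MAPE."""
    return min(mape_by_model, key=mape_by_model.get)


def build_meta_dataset(
    results: List[Tuple[MetaFeatures, Dict[str, float]]]
) -> List[Tuple[MetaFeatures, str]]:
    """Turn per-configuration evaluation results into (meta-features, label) pairs."""
    return [(features, best_model(mapes)) for features, mapes in results]


# Hypothetical evaluation results for two dataset-task-horizon configurations.
results = [
    ({"horizon": 1, "is_multi_horizon": 0}, {"XGBoost": 1.9, "LSTM": 2.3, "CNN-BiGRU": 2.1}),
    ({"horizon": 24, "is_multi_horizon": 1}, {"XGBoost": 5.4, "LSTM": 4.8, "CNN-BiGRU": 4.1}),
]
meta_dataset = build_meta_dataset(results)
```

Each configuration contributes exactly one row, which is why the number of meta-instances grows only with the number of dataset, task, and horizon combinations.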

Because the number of meta-instances is limited, the meta-learner operates under a small-sample setting, which necessitates careful evaluation strategies such as leave-one-out cross-validation.

The meta-learning problem is formulated as a multi-class classification task, where each class corresponds to a candidate forecasting model. A Random Forest-based meta-learner is adopted due to its robustness, resistance to overfitting, and ability to effectively handle heterogeneous meta-features.

Let $z$ denote the meta-feature vector describing a given dataset and forecasting task. The meta-learner aims to predict the optimal forecasting model $\hat{M}$ as:

$$\hat{M} = g(z),$$

where $g(\cdot)$ represents the learned mapping between meta-features and model selection.

The constructed meta-dataset consists of 15 instances, each representing a unique combination of dataset, forecasting task, and prediction horizon. Each instance is labeled with the best-performing model, determined based on the minimum Mean Absolute Percentage Error (MAPE).

In this context, each instance captures a distinct configuration of data characteristics and forecasting conditions. To ensure reliable evaluation under these constraints, leave-one-out (LOO) cross-validation is employed. This strategy maximizes data utilization while mitigating the risk of overfitting, making it particularly suitable for small-scale meta-datasets.
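The leave-one-out procedure itself can be sketched independently of the classifier. To stay dependency-free, the example below uses a 1-nearest-neighbour rule as a stand-in for the Random Forest meta-learner; this substitution is an assumption made purely for illustration, and the toy meta-features and labels are invented.

```python
from typing import Callable, List

Classifier = Callable[[List[List[float]], List[str], List[float]], str]


def nearest_neighbour(train_x: List[List[float]], train_y: List[str], query: List[float]) -> str:
    """Stand-in classifier (NOT the Random Forest used in the study)."""
    def sq_dist(a: List[float], b: List[float]) -> float:
        return sum((p - q) ** 2 for p, q in zip(a, b))

    best = min(range(len(train_x)), key=lambda i: sq_dist(train_x[i], query))
    return train_y[best]


def leave_one_out_accuracy(xs: List[List[float]], ys: List[str], classify: Classifier) -> float:
    """Hold out each instance once, fit on the rest, and score the held-out prediction."""
    hits = 0
    for i in range(len(xs)):
        train_x = xs[:i] + xs[i + 1:]
        train_y = ys[:i] + ys[i + 1:]
        if classify(train_x, train_y, xs[i]) == ys[i]:
            hits += 1
    return hits / len(xs)


# Invented meta-instances: [horizon, has_weather_flag] with invented best-model labels.
xs = [[1.0, 0.0], [1.0, 1.0], [6.0, 0.0], [6.0, 1.0], [24.0, 1.0], [24.0, 0.0]]
ys = ["XGBoost", "XGBoost", "LSTM", "LSTM", "CNN-BiGRU", "CNN-BiGRU"]
accuracy = leave_one_out_accuracy(xs, ys, nearest_neighbour)
```

Any classifier exposing the same fit-then-predict behaviour could be passed as `classify`. With 15 meta-instances the loop runs 15 times, each time training on the other 14.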

To further investigate the adaptive behavior of the proposed framework, Figure 3 illustrates the optimal model selection across different datasets and forecasting horizons. The figure summarizes the meta-learner predictions for each dataset–task–horizon combination. The results clearly indicate that model performance is highly dependent on both dataset characteristics and forecasting horizon. In particular, hybrid models tend to dominate multi-horizon forecasting scenarios, while simpler models such as LSTM and XGBoost perform better in specific single-step settings. Model performance varies across datasets due to differences in data characteristics, such as variability, temporal dependency, and feature composition.

Due to the limited availability of diverse and publicly accessible STLF datasets, the resulting meta-dataset is relatively small. Therefore, the problem is formulated as a small-sample meta-learning setting, where each instance represents a unique dataset–task–horizon configuration.

3.6. Explainability via SHAP

To enhance interpretability, SHapley Additive exPlanations (SHAP) are employed to quantify the contribution of each meta-feature to the model selection process. SHAP provides a unified framework grounded in cooperative game theory, enabling the estimation of each feature’s marginal contribution to the meta-learner’s predictions.
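The game-theoretic idea behind SHAP can be made concrete with an exact Shapley computation on a toy value function. This is a sketch of the underlying definition only: it enumerates all feature orderings, which is feasible for a handful of features, and it is not the algorithm or API of the SHAP library. The value function and its coefficients are invented.

```python
from itertools import permutations
from typing import Callable, Dict, FrozenSet, List


def shapley_values(players: List[str], value: Callable[[FrozenSet[str]], float]) -> Dict[str, float]:
    """Average each player's marginal contribution over all orderings."""
    totals = {p: 0.0 for p in players}
    orderings = list(permutations(players))
    for order in orderings:
        coalition: FrozenSet[str] = frozenset()
        for p in order:
            with_p = coalition | {p}
            totals[p] += value(with_p) - value(coalition)
            coalition = with_p
    return {p: t / len(orderings) for p, t in totals.items()}


def toy_value(coalition: FrozenSet[str]) -> float:
    """Invented payoff: horizon and cv matter alone and jointly; n_features does not."""
    h = 1.0 if "horizon" in coalition else 0.0
    c = 1.0 if "cv" in coalition else 0.0
    return 0.2 * h + 0.1 * c + 0.3 * h * c


features = ["horizon", "cv", "n_features"]
phi = shapley_values(features, toy_value)
```

The interaction term is split equally between the two interacting features, and the contributions sum to the difference between the full-coalition payoff and the empty-coalition payoff.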

In this study, SHAP values are used to analyze the decision-making behavior of the Random Forest-based meta-learner by estimating the relative importance of each meta-feature. Larger absolute SHAP values indicate a stronger influence on the model selection outcome. This analysis enables a deeper understanding of how dataset characteristics, such as size, variability, feature richness, and forecasting horizon, affect the choice of the optimal forecasting model.

Furthermore, SHAP enhances the transparency of the proposed framework by providing interpretable insights into the relationship between data properties and model performance. This is particularly important in smart grid applications, where explainability is essential for building trust and supporting informed operational decisions.

3.7. Implementation Details

All experiments are conducted in Python 3.10 using widely adopted machine learning and deep learning libraries. The input sequence length is fixed at 168 h, corresponding to one week of historical observations. For multi-horizon forecasting, a direct multi-output strategy is employed to predict multiple future time steps simultaneously.

Model performance is evaluated using standard error metrics, including Root Mean Squared Error (RMSE), Mean Absolute Error (MAE), Normalized Root Mean Squared Error (NRMSE), and Mean Absolute Percentage Error (MAPE). In addition, training time is recorded to assess the trade-off between predictive accuracy and computational efficiency.

The proposed framework shifts the focus from identifying a single globally optimal model to understanding the conditions under which different models perform best. By integrating cross-dataset evaluation, meta-learning, and explainability, the approach provides a robust, adaptive, and interpretable solution for short-term load forecasting in modern power systems.

To ensure reproducibility, detailed implementation settings are provided for all models. For machine learning models, XGBoost is configured with a learning rate of 0.1, maximum depth of 6, and 100 estimators, while the Random Forest meta-learner uses 100 trees with default splitting criteria.

For deep learning models, LSTM and GRU architectures consist of two layers with 64 and 32 units, respectively, followed by dropout layers with a rate of 0.2 to mitigate overfitting. These models are trained using the Adam optimizer with a learning rate of 0.001 and a batch size of 32 for up to 50 epochs, with early stopping applied. The Transformer model uses a model dimension of 64 with 4 attention heads, while hybrid models such as CNN-BiGRU combine a convolutional layer with bidirectional recurrent layers.

Consistent preprocessing, normalization, and train–test splits are applied across all models to ensure fair comparison. The selected hyperparameters follow commonly used configurations in the literature and are chosen to provide stable and comparable performance rather than fully optimized results for individual models.

From a computational perspective, the framework consists of an offline training phase and an online inference phase. The offline phase, which involves training multiple forecasting models across datasets and forecasting horizons, represents the primary computational cost and scales with the number of models and datasets. However, this process can be efficiently parallelized. In contrast, the meta-learning component operates on a low-dimensional set of meta-features and introduces minimal computational overhead. During deployment, model selection requires only meta-feature extraction and a single prediction from the meta-learner, making the framework suitable for real-time or near real-time applications.

4. Results and Discussion

This section presents the experimental results obtained from evaluating the proposed framework across multiple datasets and forecasting tasks. The analysis focuses on comparing model performance, validating the research hypothesis, and assessing the effectiveness of the meta-learning approach in adaptive model selection.

4.1. Experimental Setup

The experimental evaluation is conducted on three benchmark datasets (Panama, PJM, and Spanish), representing diverse electricity consumption patterns and varying levels of complexity. Although these datasets differ in scale, variability, and feature composition, they all belong to the short-term load forecasting domain and therefore do not constitute cross-domain validation. Consequently, the results should be interpreted as indicative rather than conclusive with respect to generalization across broader forecasting scenarios.

Each dataset is split chronologically into 70% for training and 30% for testing, preserving temporal dependencies and preventing information leakage. All models use an input sequence length of 168 h (one week), enabling the capture of weekly seasonality patterns commonly observed in load data.

To ensure fair comparison, all models are evaluated under consistent experimental conditions, including identical preprocessing, feature scaling, and training protocols. Model performance is assessed using multiple evaluation metrics, including RMSE, MAE, NRMSE, and MAPE, providing a comprehensive evaluation of predictive accuracy.

In addition to accuracy, training time is recorded for each model to assess computational efficiency and practical applicability. This enables analysis of the trade-off between predictive performance and computational cost, which is critical for real-world deployment in smart grid environments.

4.2. Evaluation Metrics

To quantitatively evaluate forecasting performance, several widely used evaluation metrics are employed, including the Pearson correlation coefficient (R), coefficient of determination (R²), root mean squared error (RMSE), normalized RMSE (NRMSE), mean absolute error (MAE), and mean absolute percentage error (MAPE). These metrics collectively provide a comprehensive assessment of prediction accuracy and reliability.

Mean Absolute Error (MAE) measures the average magnitude of the prediction errors in megawatts (MW), providing a direct interpretation of the deviation between predicted and actual values in physical units. It is defined as:

$$\mathrm{MAE} = \frac{1}{n} \sum_{i=1}^{n} \left| y_i - \hat{y}_i \right|$$

where $y_i$ represents the actual load value, $\hat{y}_i$ represents the predicted load value, and $n$ denotes the total number of observations.

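Root Mean Squared Error (RMSE) gives greater weight to larger errors due to the squaring operation, making it particularly useful for identifying models that produce large deviations. In smart grid operations, large forecasting errors, especially during peak demand periods, may affect grid stability; therefore, RMSE serves as an important metric for operational reliability. It is calculated as:

$$\mathrm{RMSE} = \sqrt{\frac{1}{n} \sum_{i=1}^{n} \left( y_i - \hat{y}_i \right)^2}$$

with $y_i$, $\hat{y}_i$, and $n$ denoting the actual load value, the predicted load value, and the total number of observations, respectively.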

Mean Absolute Percentage Error (MAPE) expresses the prediction error as a percentage, enabling scale-independent comparisons between forecasting models. MAPE is selected as the primary metric for model comparison and labeling due to its interpretability and widespread use in short-term load forecasting studies. As a scale-independent metric, it allows consistent comparison across datasets with different magnitudes of electricity demand. This metric is particularly useful in energy markets where forecasting accuracy directly influences operational and economic decisions.
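For reference, the conventional definition of MAPE is given below, with $y_i$ the actual load value, $\hat{y}_i$ the predicted load value, and $n$ the number of observations. It is assumed here that the experiments follow this standard form; the metric is undefined whenever an actual value $y_i$ equals zero, which is rarely a concern for aggregate load series.

$$\mathrm{MAPE} = \frac{100\%}{n} \sum_{i=1}^{n} \left| \frac{y_i - \hat{y}_i}{y_i} \right|$$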

Coefficient of Determination ($R^2$) evaluates how well the predicted values reproduce the variance of the actual load series. Values closer to 1 indicate better agreement between predicted and observed values and reflect stronger model performance.

$$R^2 = 1 - \frac{\sum_{i=1}^{n} \left( y_i - \hat{y}_i \right)^2}{\sum_{i=1}^{n} \left( y_i - \bar{y} \right)^2}$$

where $\bar{y}$ represents the mean of the observed load values.

In addition, the Pearson correlation coefficient (R) is used to measure the linear relationship between predicted and actual values, providing insight into the strength of their association.

Finally, the Normalized Root Mean Squared Error (NRMSE) is employed to account for scale differences across datasets, allowing fair comparison between models:

Together, these metrics provide a balanced evaluation of both absolute and relative forecasting performance, ensuring a comprehensive assessment of model accuracy and reliability.
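The metrics in this subsection can be computed together, as in the following minimal sketch. The function name `forecast_metrics` and the example values are illustrative. The NRMSE normalization shown (division by the observed range) is an assumption, since other conventions such as division by the mean are also in common use.

```python
import math


def forecast_metrics(actual, predicted):
    """Return MAE, RMSE, MAPE (%), R^2, Pearson R and NRMSE for two equal-length lists."""
    n = len(actual)
    errors = [a - p for a, p in zip(actual, predicted)]

    mae = sum(abs(e) for e in errors) / n
    rmse = math.sqrt(sum(e * e for e in errors) / n)
    # MAPE is undefined if any actual value is zero; load is assumed positive.
    mape = 100.0 / n * sum(abs(e / a) for e, a in zip(errors, actual))

    mean_a = sum(actual) / n
    mean_p = sum(predicted) / n
    ss_res = sum(e * e for e in errors)
    ss_tot = sum((a - mean_a) ** 2 for a in actual)
    r2 = 1.0 - ss_res / ss_tot

    cov = sum((a - mean_a) * (p - mean_p) for a, p in zip(actual, predicted))
    ss_pred = sum((p - mean_p) ** 2 for p in predicted)
    pearson_r = cov / math.sqrt(ss_tot * ss_pred)

    # ASSUMPTION: RMSE is normalized by the range of the observed values.
    nrmse = rmse / (max(actual) - min(actual))

    return {"MAE": mae, "RMSE": rmse, "MAPE": mape,
            "R2": r2, "R": pearson_r, "NRMSE": nrmse}


# Illustrative values in MW, not taken from any of the benchmark datasets.
metrics = forecast_metrics([100.0, 200.0, 300.0, 400.0],
                           [110.0, 190.0, 310.0, 390.0])
```

In this toy example every forecast is off by 10 MW, so MAE and RMSE coincide, while MAPE weights the same absolute error more heavily at low load levels.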

4.3. Single-Step Forecasting Results

The performance of all models for single-step forecasting (t + 1) is presented in Table 3.

Table 3 illustrates the variation in model performance across datasets, highlighting the strong influence of data characteristics such as variability, temporal dependency, and feature composition. The results show that model effectiveness depends on the alignment between dataset properties and model inductive biases. In particular, deep learning models perform better in datasets with complex temporal dynamics, whereas machine learning models such as XGBoost achieve strong performance in more structured scenarios. This observation supports the need for adaptive model selection strategies.

For the Panama dataset, LSTM achieves the best performance, with the lowest MAPE (2.88%) and the highest correlation coefficient (R = 0.971), demonstrating its effectiveness in capturing temporal dependencies. XGBoost and GRU also perform competitively, with slightly higher error values, while ARIMA and Transformer exhibit comparatively lower accuracy.

A similar trend is observed for the PJM dataset, where LSTM again provides the best performance (MAPE = 7.71%), closely followed by GRU (7.75%). These results suggest that recurrent architectures are particularly well-suited for datasets with strong temporal dynamics. In contrast, ARIMA shows poor performance (MAPE = 13.98%), confirming its limitations in modeling complex and nonlinear patterns in large-scale power systems.

For the Spanish dataset, the results differ notably. XGBoost achieves the best performance with a remarkably low MAPE of 1.07% and the highest correlation coefficient (R = 0.996), significantly outperforming all deep learning models. This indicates that, for datasets with well-structured features and lower variability, machine learning models can be more effective than deep learning approaches. Although LSTM and GRU still achieve strong results, their performance remains inferior to XGBoost in this case.

Overall, these findings clearly demonstrate that model effectiveness is highly dependent on dataset characteristics. While deep learning models, particularly LSTM, excel in capturing temporal dependencies in certain datasets, machine learning approaches such as XGBoost can outperform them when the underlying data structure is more suitable.

4.4. Multi-Horizon Forecasting Results

Figure 4, Figure 5 and Figure 6 provide a visual comparison of model performance across different forecasting horizons. A consistent trend can be observed, where prediction accuracy decreases as the forecasting horizon increases. This reflects the growing uncertainty associated with forecasting further ahead. Additionally, the results highlight the robustness of hybrid models, particularly CNN-BiGRU-Attention, which maintains relatively stable performance across extended horizons compared to other models.

Across all datasets, forecasting accuracy decreases as the prediction horizon increases, reflecting the inherent difficulty of predicting further ahead. Nevertheless, hybrid architectures remain comparatively robust, maintaining relatively stable performance across extended horizons. In particular, CNN-BiGRU-Attention achieves lower MAPE values than CNN-BiGRU in most cases, suggesting that the attention mechanism enhances the model’s ability to focus on relevant temporal patterns.

Furthermore, the results highlight dataset-dependent behavior. While both models perform competitively on the Panama dataset, performance degradation is more pronounced in the PJM dataset due to its higher variability and complexity. In contrast, the Spanish dataset shows relatively stable performance across horizons, suggesting a more structured and predictable load pattern.

Overall, these findings confirm that hybrid deep learning models are particularly well-suited for multi-horizon forecasting tasks, as they effectively capture both local and long-range temporal dependencies. Compared to ensemble-based forecasting approaches, which combine predictions from multiple models, the proposed meta-learning framework focuses on selecting the most suitable model for each scenario. This reduces computational overhead and avoids the complexity associated with maintaining multiple models simultaneously. In addition, adaptive ensemble methods typically require extensive tuning and may lack interpretability, whereas the proposed approach provides a transparent and data-driven selection mechanism supported by explainability analysis.

4.5. Statistical Validation

To validate the observed differences in model performance, a Friedman test is conducted across all datasets and forecasting horizons. The resulting p-value (0.0477) is below the significance threshold of 0.05, indicating statistically significant differences among the models compared.
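To make the test concrete, the Friedman statistic can be computed from a table of per-configuration errors as sketched below. This is an illustrative implementation without tie correction; the function names and the error table are hypothetical, and in practice a library routine such as `scipy.stats.friedmanchisquare` would typically be used, which also returns the p-value.

```python
def average_ranks(values):
    """Rank values from 1 (smallest) upward, giving tied values their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        # Extend the group while the next sorted value is tied with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        mean_rank = (pos + end) / 2.0 + 1.0
        for idx in order[pos:end + 1]:
            ranks[idx] = mean_rank
        pos = end + 1
    return ranks


def friedman_statistic(error_table):
    """Friedman chi-square statistic (no tie correction).

    error_table[i][j] is the error of model j on configuration i, where a
    configuration is one dataset/horizon combination.
    """
    n = len(error_table)       # number of configurations (blocks)
    k = len(error_table[0])    # number of models (treatments)
    rank_sums = [0.0] * k
    for row in error_table:
        for j, rank in enumerate(average_ranks(row)):
            rank_sums[j] += rank
    return 12.0 / (n * k * (k + 1)) * sum(r * r for r in rank_sums) - 3.0 * n * (k + 1)


# Hypothetical MAPE values for three models on three configurations.
example_table = [[1.0, 2.0, 3.0],
                 [3.0, 2.0, 1.0],
                 [2.0, 3.0, 1.0]]
statistic = friedman_statistic(example_table)
```

Under the null hypothesis the statistic approximately follows a chi-square distribution with k − 1 degrees of freedom, an approximation that becomes unreliable when the number of configurations is small.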

In addition, pairwise Wilcoxon signed-rank tests are performed to further assess the significance of performance differences between model pairs. The results (p < 0.05) suggest that performance differences exist between model pairs, although they should be interpreted cautiously due to the limited sample size.

These findings provide statistical support for rejecting the null hypothesis and indicate that no single forecasting model consistently outperforms the others across all datasets and forecasting scenarios.

Due to the limited availability of diverse and publicly accessible short-term load forecasting (STLF) datasets, the resulting meta-dataset is relatively small. This setting can be characterized as a few-shot meta-learning problem, where each instance represents a distinct dataset–task–horizon configuration. Such scenarios are common in meta-learning applications and require robust learning strategies capable of generalizing from limited samples. Because the reliability of statistical significance tests is limited in small-sample settings, the results of the Friedman and Wilcoxon tests should be interpreted with caution.

4.6. Meta-Learner Performance

The performance of the proposed meta-learning model is evaluated using leave-one-out (LOO) cross-validation. The meta-learner achieves an accuracy of 0.60, which is substantially higher than the random baseline of 0.17 (uniform selection among six candidate models), indicating that it captures meaningful relationships between meta-features and model performance. The training accuracy of 0.80 further suggests that the model effectively learns patterns within the meta-dataset.
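The evaluation protocol can be illustrated with a short sketch of leave-one-out accuracy and the uniform random baseline. The meta-dataset below is hypothetical, and the nearest-neighbour rule is only a dependency-free stand-in for the Random Forest meta-learner, which would be passed in through the same `fit_predict` interface (an interface assumed here for illustration).

```python
def leave_one_out_accuracy(features, labels, fit_predict):
    """Hold out each instance once, train on the rest, and score the prediction."""
    hits = 0
    for i in range(len(features)):
        train_X = features[:i] + features[i + 1:]
        train_y = labels[:i] + labels[i + 1:]
        if fit_predict(train_X, train_y, features[i]) == labels[i]:
            hits += 1
    return hits / len(features)


def nearest_neighbour(train_X, train_y, query):
    """Stand-in classifier (NOT a Random Forest): copy the label of the closest row."""
    def sq_dist(row):
        return sum((a - b) ** 2 for a, b in zip(row, query))
    best = min(range(len(train_X)), key=lambda j: sq_dist(train_X[j]))
    return train_y[best]


# Hypothetical meta-dataset: [forecast horizon, coefficient of variation] -> best model.
meta_X = [[1, 0.10], [1, 0.12], [24, 0.30], [24, 0.32], [1, 0.11], [24, 0.31]]
meta_y = ["model_a", "model_a", "model_b", "model_b", "model_a", "model_b"]

loo_accuracy = leave_one_out_accuracy(meta_X, meta_y, nearest_neighbour)

# Uniform selection among six candidate models gives the random baseline.
random_baseline = 1 / 6
```

Because every instance is held out exactly once, the leave-one-out estimate uses all available meta-instances for testing, which is why it is a common choice when the meta-dataset is small.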

Given the limited size of the meta-dataset, the achieved accuracy should be interpreted with caution. However, the consistent improvement over the random baseline demonstrates that the meta-learner extracts non-trivial and informative patterns despite the small number of instances.

MAPE is used to label the best-performing model in the meta-learning stage, while additional metrics (RMSE, MAE, and NRMSE) are used to provide a comprehensive evaluation of forecasting performance.

To further contextualize these results, simple baseline strategies are considered, including selecting a fixed model or choosing the globally best-performing model across datasets. Unlike these approaches, the proposed framework adapts model selection based on dataset characteristics and forecasting conditions, enabling more flexible and context-aware decision-making.

Overall, the results demonstrate the feasibility of applying meta-learning in small-sample settings and support the use of Random Forest as a robust and interpretable meta-learner. In addition, the lightweight nature of the meta-learner ensures efficient model selection, supporting practical deployment.

4.7. Explainability Analysis

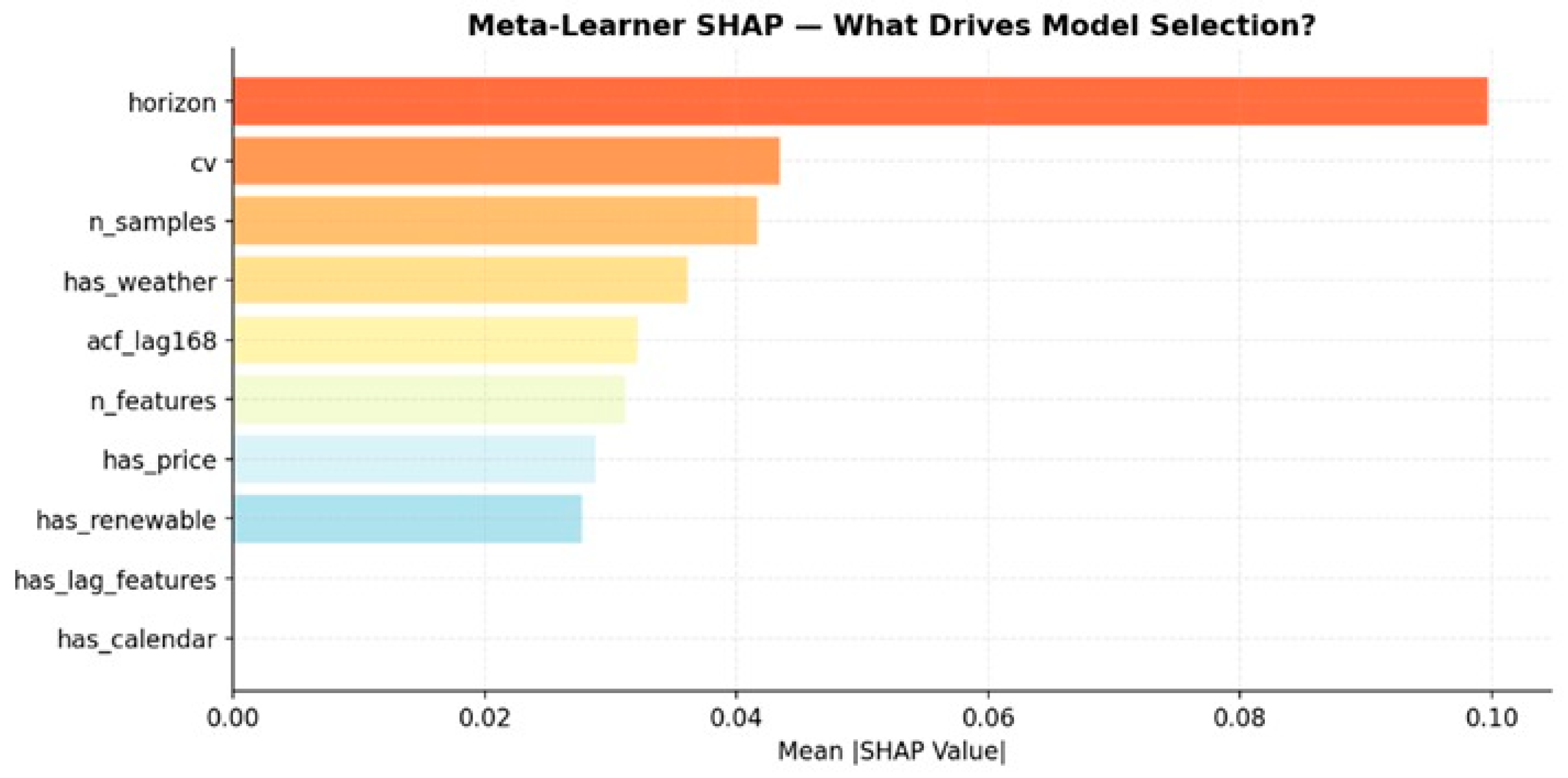

Figure 7 provides valuable insights into the relative importance of meta-features in the model selection process. The dominance of forecasting horizon confirms its critical role in determining model suitability, while the influence of dataset variability and size highlights the importance of data characteristics in guiding model selection. These findings support the effectiveness of the proposed meta-learning framework in capturing meaningful relationships between data properties and model performance.

The results indicate that the forecasting horizon is the most influential feature (SHAP = 0.100), highlighting its critical role in determining model suitability. This is followed by dataset variability, measured by the coefficient of variation (cv, SHAP = 0.043), and dataset size (n_samples, SHAP = 0.042), both of which significantly impact model performance. The presence of weather-related features (has_weather, SHAP = 0.036) also contributes notably, reflecting the importance of contextual information in load forecasting.

Additional features, such as weekly autocorrelation (acf_lag168) and the number of input variables (n_features), exhibit moderate influence. In contrast, calendar-related features (has_calendar) and lag feature indicators show minimal impact, suggesting that these factors alone are insufficient to drive model selection decisions.
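A sketch of how several of these meta-features might be extracted from a load series is given below. The feature names follow the text, but the exact definitions used here (population standard deviation for `cv`, the standard biased estimator for `acf_lag168`) and the function names are assumptions, as is the synthetic series.

```python
import math


def coefficient_of_variation(series):
    """Population standard deviation divided by the mean."""
    n = len(series)
    mean = sum(series) / n
    std = math.sqrt(sum((x - mean) ** 2 for x in series) / n)
    return std / mean


def autocorrelation(series, lag):
    """Sample autocorrelation at the given lag (biased estimator)."""
    n = len(series)
    mean = sum(series) / n
    denom = sum((x - mean) ** 2 for x in series)
    numer = sum((series[t] - mean) * (series[t - lag] - mean) for t in range(lag, n))
    return numer / denom


def extract_meta_features(series, horizon, n_features, has_weather):
    """Assemble a meta-feature record for one dataset/horizon configuration."""
    return {
        "horizon": horizon,
        "cv": coefficient_of_variation(series),
        "n_samples": len(series),
        "acf_lag168": autocorrelation(series, 168),
        "n_features": n_features,
        "has_weather": int(has_weather),
    }


# Synthetic hourly load with a purely weekly cycle (four weeks), for illustration only.
weekly_load = [1000.0 + 100.0 * math.sin(2 * math.pi * t / 168) for t in range(672)]
features = extract_meta_features(weekly_load, horizon=1, n_features=1, has_weather=False)
```

With only four weeks of data the biased estimator caps the lag-168 autocorrelation of a perfectly weekly signal at 0.75, so values on short series should be compared with care.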

Overall, these findings confirm that forecasting horizon and intrinsic dataset characteristics, particularly variability and size, are the primary determinants of model effectiveness. This provides actionable insights for practitioners, enabling more informed and data-driven selection of forecasting models in smart grid environments.

While the importance of forecasting horizon may appear intuitive, the SHAP analysis provides a quantitative assessment of its relative influence compared to other meta-features. More importantly, it reveals how forecasting horizon interacts with dataset characteristics, such as variability and feature richness, to influence model selection decisions.

For instance, higher variability combined with longer forecasting horizons tends to favor hybrid and deep learning models, whereas lower variability and shorter horizons are more suitable for machine learning models such as XGBoost. These findings highlight the importance of feature interactions in guiding adaptive and context-aware model selection.

4.8. Discussion

The experimental results demonstrate that model performance varies significantly across datasets and forecasting tasks, highlighting the strong influence of data characteristics such as variability, temporal dependency, feature richness, and prediction horizon. This confirms that a single-model strategy is insufficient for real-world short-term load forecasting (STLF) applications and motivates the need for adaptive model selection.

Model performance can be explained by the interaction between dataset characteristics and model inductive biases. Recurrent models such as LSTM and GRU perform well in scenarios with strong temporal dependencies, while machine learning models such as XGBoost are more effective for structured datasets with informative features. Hybrid architectures, including CNN-BiGRU-Attention, demonstrate superior performance in more complex scenarios, particularly for longer forecasting horizons, due to their ability to capture both local and long-term patterns.

The results also show that increasing model complexity does not necessarily lead to better performance. Advanced models such as Transformers may underperform in moderate-sized datasets due to their sensitivity to hyperparameters and lack of strong inductive bias for sequential data. This highlights the importance of selecting models based on data characteristics rather than architectural complexity alone.

Forecasting horizon plays a critical role in model performance. As the prediction horizon increases, uncertainty accumulates, making the task more challenging and favoring models with higher representational capacity. This observation further supports the need for context-aware model selection strategies.

The proposed meta-learning framework addresses this challenge by learning the relationship between dataset characteristics and model performance, enabling adaptive and data-driven model selection. Compared to static or heuristic strategies, this approach improves robustness across datasets and reduces the risk of suboptimal model choice.

The integration of SHAP-based explainability provides additional insight into the decision-making process, identifying forecasting horizon, data variability, and dataset size as key factors influencing model selection. This interpretability is particularly important in smart grid applications, where transparency and trust are essential.

From a computational perspective, the modular design of the framework makes it scalable. While the offline training phase incurs higher computational cost as the number of datasets and models increases, the inference phase remains efficient, requiring only meta-feature extraction and a lightweight prediction. This makes the framework suitable for real-time or near real-time applications.

Despite these advantages, several limitations should be acknowledged. The meta-dataset is relatively small, which may restrict generalization capability, and the evaluation is limited to datasets within the same domain. In addition, the framework focuses on deterministic forecasting and does not account for uncertainty. Furthermore, statistical tests are constrained by the small sample size and should be interpreted cautiously.

Future work will focus on expanding the meta-dataset with more diverse datasets, incorporating probabilistic forecasting, exploring advanced meta-features (e.g., spectral and entropy-based features), and applying more robust evaluation and optimization strategies.

Overall, the findings demonstrate that adaptive and explainable model selection represents a promising direction for improving forecasting performance in STLF. By focusing on understanding when and why different models perform best, the proposed framework provides both methodological insight and practical value.

5. Conclusions and Future Work

This study introduced an explainable meta-learning framework for adaptive model selection in short-term load forecasting (STLF). Unlike conventional approaches that aim to identify a single optimal model, the proposed framework learns to select the most suitable model based on dataset characteristics and forecasting conditions.

Experimental evaluation across three benchmark datasets demonstrated that model performance varies significantly with both the dataset and forecasting horizon. LSTM achieved the best single-step performance on the Panama (MAPE = 2.88%) and PJM (MAPE = 7.71%) datasets, while XGBoost outperformed other models on the Spanish dataset (MAPE = 1.07%). The statistical analysis suggests meaningful performance differences, supporting the need for adaptive and data-driven model selection strategies.

The proposed framework effectively captures the relationship between dataset properties and model performance, enabling more robust and informed model selection. In addition, the integration of SHAP-based explainability provides transparent insights into the factors influencing model choice, enhancing interpretability and trust in decision-making.

Despite these promising results, several limitations should be acknowledged. The meta-dataset is relatively small, which may restrict generalization capability, and the evaluation is limited to datasets within the same domain. Furthermore, the framework focuses on deterministic forecasting and does not explicitly account for uncertainty.

Future work will focus on expanding the framework through the inclusion of more diverse and cross-domain datasets, the integration of probabilistic forecasting techniques, and the incorporation of advanced meta-features such as spectral and entropy-based descriptors. In addition, exploring automated hyperparameter optimization and scalable implementations, including distributed and parallel training, will further enhance the robustness and applicability of the approach.

From a practical perspective, the framework is designed to support real-world deployment by separating computationally intensive training from efficient inference. Further validation on additional power systems, particularly in Middle Eastern and Gulf smart grids, will help assess its generalizability and practical relevance.

Overall, this work demonstrates that adaptive and explainable model selection is a promising direction for improving forecasting performance in smart grid applications.