1. Introduction

Anomaly segmentation aims to identify and outline anomalous regions (e.g., defects in objects) within an image and is a crucial task in computer vision. Traditional supervised approaches have not been practical, as they required a huge amount of normal or abnormal image samples for each object to train a model. Therefore, zero-shot anomaly segmentation (ZSAS) has emerged as a promising paradigm in real-world scenarios such as industrial visual inspection, where models must detect and localize defects in previously unseen objects without class-specific supervision. Thanks to the introduction of vision–language models such as CLIP [

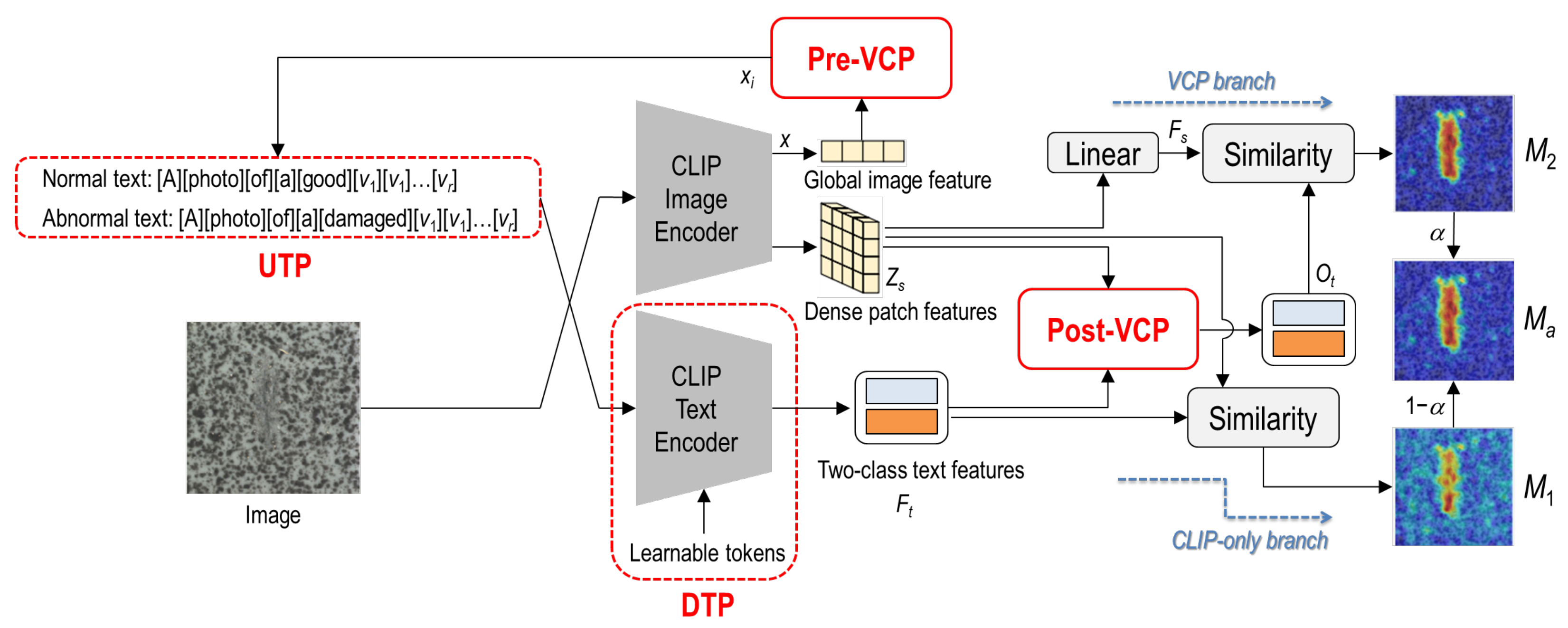

1], recent ZSAS approaches have demonstrated remarkable progress. In particular, VCP-CLIP [

2] achieved extremely high accuracy in anomaly localization across diverse industrial benchmarks by leveraging visual context prompting (VCP) to align image features with textual priors.

Despite its impressive performance, our analysis reveals that the VCP-CLIP framework still has several under-explored limitations that constrain its pixel-level localization quality and robustness. Specifically, its reliance on two learnable temperature scaling parameters leads to unstable training, and its simple aggregation of separately supervised multi-branch anomaly cues remains suboptimal for complex and inconsistent defect patterns or shapes. Furthermore, its prompting mechanism does not directly and fully transfer global visual context to the text prompt, because the image representations are only added as auxiliary context to the learnable category tokens.

These observations motivated us to revisit VCP-CLIP and introduce a set of impactful modifications that further improve the accuracy and reliability of pixel-level segmentation and image-level detection. We apply four modifications to VCP-CLIP. First, we propose fixing the temperature parameters used to scale similarity scores, which we find to be more reliable and stable than learning them during optimization. Second, we propose adaptively integrating the anomaly cues obtained from two complementary branches with a learnable weighting parameter, enabling a better-balanced fusion. Third, we propose dynamically balancing the segmentation objectives with a learnable weighting parameter to better guide optimization. Finally, we propose an image-conditioned direct prompting (IDP) module that replaces the category tokens in the text prompt with image-conditioned visual tokens. Although each modification is simple, their combination yields significant improvements in both pixel-level and image-level metrics. Importantly, the proposed modifications introduce negligible computational overhead and preserve full compatibility with CLIP. The upgraded model, named VCP-CLIP+, consistently outperforms recent CLIP-based ZSAS methods, including VCP-CLIP, on multiple benchmark datasets [3, 4, 5, 6], demonstrating its superiority in anomaly localization quality, robustness, and image-level anomaly detection. The main contributions of this study are summarized as follows:

We identify several limitations in the VCP-CLIP framework that make its training unstable and under-optimized.

We provide simple yet effective solutions to these problems.

We demonstrate that the solutions can significantly improve the performance of VCP-CLIP, consistently achieving the best performance among recent CLIP-based ZSAS methods on multiple industrial benchmark datasets.

The remainder of this paper is organized as follows. In Section 2, we review previous studies related to ZSAS. In Section 3, we describe VCP-CLIP, the baseline method of this study, in more detail. In Section 4, we elaborate on the methods proposed to improve VCP-CLIP’s performance. The experimental results are given in Section 5, and the weaknesses and limitations of the proposed methodology are discussed in Section 6. Finally, conclusions are drawn in Section 7.

2. Related Work

2.1. Zero-Shot Anomaly Segmentation

ZSAS aims to detect and localize anomalous regions within an image without relying on category-specific training data. Early unsupervised methods mainly modeled normal appearance [7, 8, 9, 10, 11] or synthesized artificial defects [12, 13, 14, 15, 16], and thus lacked the ability to generalize to unseen categories.

With the emergence of large vision–language models such as CLIP, recent approaches have explored CLIP-based ZSAS frameworks [2, 17, 18, 19, 20, 21, 22, 23] that leverage the semantic alignment between image and text descriptors. WinCLIP [17] and AnoVL [18] proposed training-free pipelines that craft dual text prompts (e.g., “a photo of a good object” vs. “a photo of a damaged object”) and compute the cosine similarity between patch-level image features and text embeddings. However, their performance depended heavily on prompt design and required extensive prompt engineering.

VCP-CLIP [2] alleviated prompt sensitivity by introducing visual context prompting, which injects global and local visual cues into the text space through the Pre-VCP and Post-VCP modules. This design significantly improved localization quality while avoiding category-specific textual templates. Nevertheless, VCP-CLIP still relied on a fixed, manually designed rule for combining the anomaly maps from its two generation branches, along with learnable temperature scaling parameters whose optimization behavior has not been fully examined. These factors leave room for improving both training stability and spatial localization precision.

More recent studies have further explored learnable prompting within CLIP-based frameworks. AnomalyCLIP [19] introduced object-agnostic prompt learning and fine-grained region-level similarity computation, enabling unified image-level and pixel-level anomaly detection without additional normal-data training. AdaCLIP [20] combined static and dynamic prompts to adapt representations to the context of each image, improving robustness across datasets while maintaining zero-shot capabilities. AA-CLIP [21] strengthened image-level anomaly detection through anomaly-aware prompt learning. PA-CLIP [22] introduced patch-aware prompting, enhancing fine-grained spatial sensitivity by aligning CLIP’s patch tokens with anomaly-relevant semantics. AF-CLIP [23] further amplified anomaly separability by shifting visual feature distributions toward anomaly-aware directions in the joint image–text space. These studies highlight a growing trend toward anomaly-aware prompt optimization within CLIP frameworks.

Our study revisits the VCP-CLIP framework from a complementary perspective: rather than introducing complex, heavyweight architectural components, we focus on improving optimization stability and performance through simple modifications such as revising the training strategy or redesigning the loss function [24].

2.2. Prompt Learning in Vision–Language Models

Prompt learning has become a key strategy for adapting large-scale vision–language models like CLIP to downstream tasks. CoOp [25] and CoCoOp [26] introduced learnable text prompts for few-shot image classification. DenseCLIP [27] extended prompt tuning to dense prediction tasks such as semantic segmentation using a transformer-based context-aware prompting module. ZegCLIP [28] applied deep prompt tuning by injecting learnable prompts into multiple transformer layers of the CLIP image encoder. Visual prompt tuning [29] presented a new direction by inserting learnable prompts into the input tokens of a Vision Transformer (ViT) [30], offering a parameter-efficient alternative to full-model fine-tuning. These approaches demonstrate different ways of adapting vision–language models to global and dense visual understanding through prompt learning.

Inspired by the preceding prompt learning approaches, we adopt an efficient image-conditioned text prompting strategy that fuses the image encoder outputs with learnable tokens and uses the image-conditioned learnable tokens to compose the normal or abnormal text prompts. This design maintains the structural integrity of the ViT backbone and provides a stable mechanism for instance-adaptive visual conditioning, particularly beneficial for anomaly segmentation, where fine-grained spatial coherence is essential.

2.3. Reproducibility in Vision–Language Models

Reproducibility challenges in large pretrained models have been widely documented, and previous studies [31, 32] reported significant performance variance arising from small changes in initialization, hardware, or optimization conditions. Motivated by these findings, we analyze the sensitivity of VCP-CLIP’s similarity scaling mechanism and provide a more stable alternative within our improved framework.

2.4. Evaluation Standardization

Recent efforts such as Anomalib [33] and universal anomaly detection benchmarks [34] have contributed to establishing standardized evaluation protocols. These frameworks highlight the importance of consistent metrics and reproducible testing environments. In this study, we likewise build a standardized evaluation environment to analyze the performance of ZSAS methods fairly.

3. VCP-CLIP

VCP-CLIP [2] is a ZSAS framework built on CLIP (see Figure 1). It replaces hand-crafted text prompts with learnable prompts and injects visual information into the text space through VCP. It produces two anomaly maps from two Siamese branches: one ($M_{\text{clip}}$) from a CLIP-only branch that uses Unified Text Prompting (UTP) and Deep Text Prompting (DTP), and another ($M_{\text{vcp}}$) from an additional VCP branch in which the text embeddings are refined by VCP modules. These two maps are fused into a single anomaly prediction at inference time.

3.1. Unified and Deep Text Prompting

UTP is adopted to avoid manual prompt engineering and category-specific templates. Instead of writing a separate sentence for each category, UTP uses a shared prompt template and a small number of learnable vectors that encode category semantics, so that normal and abnormal states can be encoded in a category-agnostic manner. Let $[v_1][v_2]\cdots[v_r]$ be learnable vectors in the word-embedding space, and let $[\text{state}]$ be a token that indicates whether the prompt refers to a normal or abnormal condition. After several state-word pairs and two prompt templates were tested, the best-performing state pair and prompt template were chosen. Here, $[\text{state}]$ is instantiated as “good” for the normal prompt and “damaged” for the abnormal prompt. The learnable vectors are shared across all categories, allowing the model to capture category semantics without relying on hand-crafted descriptions.

DTP further adapts the CLIP text encoder to the anomaly segmentation task. At each transformer layer, a small number of learnable tokens are inserted at the beginning of the UTP-based text embedding. This deep prompt tuning enables the textual space to better align with the visual feature distribution learned by CLIP.

3.2. Visual Context Prompting Modules

Even if UTP and DTP are applied, the prompts are still constructed without explicitly using visual information, and the interaction between visual and textual modalities remains limited. To address this problem, VCP-CLIP introduces VCP, which consists of Pre-VCP and Post-VCP modules.

Pre-VCP. The image encoder (ViT-L/14-336) produces an image embedding at the [CLS] token position that captures the global semantics of the input image. Pre-VCP passes this global image feature $x$ through a lightweight “Mini-Net” that maps it into the same word-embedding space as the learnable category vectors. The resulting global visual vectors are added to the UTP vectors, yielding image-conditioned category tokens. These enriched tokens are then used to compose the normal and abnormal prompts. In this way, the text encoder receives prompts that already encode the overall visual context of the current image rather than purely category-level semantics.

Post-VCP. While Pre-VCP injects global visual context into the textual space, Post-VCP refines the text embeddings using fine-grained patch features. For each selected image-encoder layer $l$, the flattened patch features and the current text embeddings are projected into a shared latent space to form queries, keys, and values. A multi-head attention module then updates the text embeddings by attending to spatial image features, yielding refined normal and abnormal text embeddings $\hat{T}^l$. The attention maps of the Post-VCP module show that abnormal prompts tend to focus more on defective regions than normal prompts do, indicating that Post-VCP effectively injects fine-grained visual information into the textual space.
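The cross-attention update can be illustrated with a minimal single-head sketch in plain Python. It assumes identity query/key/value projections and a residual update of the text embeddings; the actual module is multi-head, uses learned projections into a shared latent space, and may combine the attended context differently. The function name `cross_attend` is hypothetical.

```python
import math


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attend(text_emb, patch_feats):
    """Single-head cross-attention: text embeddings (queries) attend over patches.

    Assumptions: identity Q/K/V projections and a residual update
    (refined = original + attended context).
    Returns the refined text embeddings and the attention weights.
    """
    d = len(text_emb[0])
    refined, attn = [], []
    for q in text_emb:
        # Scaled dot-product scores of this text query against every patch key
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in patch_feats]
        weights = softmax(scores)
        # Attention-weighted sum of the patch values
        context = [sum(w * v[j] for w, v in zip(weights, patch_feats)) for j in range(d)]
        refined.append([t + c for t, c in zip(q, context)])
        attn.append(weights)
    return refined, attn


text = [[1.0, 0.0], [0.0, 1.0]]                   # [normal, abnormal] text embeddings
patches = [[1.0, 0.0], [1.0, 0.1], [0.0, 1.0]]    # last patch resembles the abnormal text
refined, attn = cross_attend(text, patches)
```

In this toy input, the abnormal text vector places more attention on the last patch than the normal text vector does, which mirrors (but does not reproduce) the attention behavior described above.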

3.3. Anomaly Map Generation

Given an input image, the CLIP image encoder extracts patch-level features from several transformer layers $l \in \{6, 12, 18, 24\}$. For each layer, the patch features are projected into the joint image–text space and L2-normalized to obtain $F^l$. On the text side, UTP and DTP generate two text embeddings corresponding to the normal and abnormal states of the image; after projection and normalization, their concatenation is denoted by $T$.

The CLIP-only branch computes a two-class similarity matrix between the normalized patch embeddings and the normalized text embeddings and converts it into a layer-wise anomaly map. Formally, for each layer $l$,

$$M^l_{\text{clip}} = \mathrm{Up}\big(\mathrm{softmax}(F^l T^\top / \tau_1)\big),$$

where $F^l$ and $T$ are the normalized patch and text embeddings, $\mathrm{Up}(\cdot)$ denotes bilinear upsampling to the input resolution, and $\tau_1$ is a learnable temperature parameter. The softmax is applied along the class (normal/abnormal) dimension, and the abnormal channel of $M^l_{\text{clip}}$ is taken as the anomaly score at layer $l$. Aggregating the maps over the selected layers yields the CLIP anomaly map $M_{\text{clip}}$.

In the VCP branch, visual context prompting refines the text representations using image features. Pre-VCP injects a global image feature into the prompts, and Post-VCP further updates the text embeddings through cross-attention with patch-wise features. For each layer $l$, this process produces updated text embeddings $\hat{T}^l$. The corresponding layer-wise anomaly map is then computed as

$$M^l_{\text{vcp}} = \mathrm{Up}\big(\mathrm{softmax}(F^l (\hat{T}^l)^\top / \tau_2)\big),$$

where $F^l$ denotes the L2-normalized patch features, $\mathrm{Up}(\cdot)$ denotes bilinear upsampling to the input resolution, and $\tau_2$ is a learnable temperature parameter. Again, the abnormal channel of $M^l_{\text{vcp}}$ is used as the anomaly score, and averaging over layers produces the VCP anomaly map $M_{\text{vcp}}$.
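Both branches share the same map computation and differ only in which text embeddings they use. The following plain-Python sketch computes, for each patch, the abnormal-class probability of a two-class softmax over temperature-scaled cosine similarities, and then averages over layers. It is a sketch under stated assumptions: upsampling is omitted (scores stay on the patch grid), the temperature divides the similarities, layer aggregation is a plain mean, and all function names are hypothetical.

```python
import math


def l2_normalize(v):
    """Scale a vector to unit L2 norm (zero vectors are left unchanged)."""
    norm = math.sqrt(sum(x * x for x in v)) or 1.0
    return [x / norm for x in v]


def layer_anomaly_scores(patch_feats, text_normal, text_abnormal, tau):
    """Abnormal-channel probability per patch for one layer (no upsampling)."""
    t_n = l2_normalize(text_normal)
    t_a = l2_normalize(text_abnormal)
    scores = []
    for f in patch_feats:
        f = l2_normalize(f)
        # Cosine similarities divided by the temperature
        s_n = sum(x * y for x, y in zip(f, t_n)) / tau
        s_a = sum(x * y for x, y in zip(f, t_a)) / tau
        # Two-class softmax; keep the abnormal channel
        m = max(s_n, s_a)
        e_n, e_a = math.exp(s_n - m), math.exp(s_a - m)
        scores.append(e_a / (e_n + e_a))
    return scores


def branch_anomaly_map(per_layer_feats, per_layer_text, tau):
    """Average the layer-wise patch scores of one branch.

    per_layer_text holds one (normal, abnormal) pair per layer: identical pairs
    mimic the CLIP-only branch, layer-specific pairs mimic the VCP branch.
    """
    layer_maps = [
        layer_anomaly_scores(feats, t_n, t_a, tau)
        for feats, (t_n, t_a) in zip(per_layer_feats, per_layer_text)
    ]
    n_layers = len(layer_maps)
    return [sum(col) / n_layers for col in zip(*layer_maps)]


feats = [[1.0, 0.0], [0.0, 1.0], [0.7, 0.7]]   # three patches, 2-D features
text = ([1.0, 0.0], [0.0, 1.0])                 # (normal, abnormal) embeddings
m_clip = branch_anomaly_map([feats, feats], [text, text], tau=0.07)
```

A patch aligned with the normal embedding scores near 0, one aligned with the abnormal embedding scores near 1, and a patch equidistant from both scores exactly 0.5.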

During training, $M_{\text{clip}}$ and $M_{\text{vcp}}$ are separately supervised by the ground-truth segmentation mask using a combination of pixel-wise Focal loss and Dice loss, and the total loss is the sum of the losses from the two branches: $\mathcal{L} = \mathcal{L}_{\text{clip}} + \mathcal{L}_{\text{vcp}}$. At inference time, the two anomaly maps generated by the CLIP-only and VCP branches are fused into a final map $M$ by a weighted sum with a fixed weight $\alpha = 0.75$.
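The training objective of the baseline can be sketched as follows: each branch map is compared with the same binary mask using a pixel-wise Focal loss plus a Dice loss, and the two branch losses are summed. The focusing parameter (gamma = 2), the clamping constant, and the Dice smoothing term are common defaults assumed here rather than values taken from VCP-CLIP, and the function names are hypothetical.

```python
import math


def focal_loss(probs, targets, gamma=2.0, eps=1e-6):
    """Mean pixel-wise binary focal loss on abnormal-class probabilities."""
    total = 0.0
    for p, g in zip(probs, targets):
        p_t = p if g == 1 else 1.0 - p          # probability of the true class
        p_t = min(max(p_t, eps), 1.0 - eps)     # avoid log(0)
        total += -((1.0 - p_t) ** gamma) * math.log(p_t)
    return total / len(probs)


def dice_loss(probs, targets, smooth=1.0):
    """Soft Dice loss: 1 - (2|P*G| + s) / (|P| + |G| + s)."""
    inter = sum(p * g for p, g in zip(probs, targets))
    return 1.0 - (2.0 * inter + smooth) / (sum(probs) + sum(targets) + smooth)


def branch_loss(probs, targets):
    """Unweighted sum of Focal and Dice losses for one branch."""
    return focal_loss(probs, targets) + dice_loss(probs, targets)


def vcp_clip_total_loss(m_clip, m_vcp, mask):
    """Both branch maps are supervised separately and their losses are summed."""
    return branch_loss(m_clip, mask) + branch_loss(m_vcp, mask)


mask = [0, 0, 1, 1]
good = [0.05, 0.1, 0.9, 0.95]
bad = [0.9, 0.8, 0.2, 0.1]
```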

4. VCP-CLIP+

VCP-CLIP+ introduces a set of simple yet impactful modifications to the VCP-CLIP framework; each modification is described in the following subsections.

4.1. Fixing Temperature Parameters for Stable Training

As described in Section 3.3, the VCP-CLIP framework introduces two temperature parameters, $\tau_1$ and $\tau_2$, which scale the cosine similarity matrices used to generate anomaly maps in the CLIP-only and VCP branches. These temperatures control the sharpness of the softmax distribution and directly influence how strongly the model distinguishes normal and abnormal regions.

In VCP-CLIP, both $\tau_1$ and $\tau_2$ are treated as learnable scalar parameters. However, our experiments in Section 5 reveal that these learned temperatures tend to be under-optimized and unstable, degrading the reproducibility of VCP-CLIP. Specifically, we often observed that both parameters decreased continuously during training, converging to very small values. Small temperature values make the softmax distribution excessively sharp, amplifying minor feature differences and causing unstable or incorrect anomaly responses. Therefore, in this study, we experimentally determine a temperature value that ensures stable training and reliable anomaly map generation and keep it fixed during optimization. In addition, since the two parameters play the same role, we fix them to an identical value ($\tau_1 = \tau_2 = \tau$). In Section 5, we show that fixing these parameters to an optimal value, rather than learning them, leads to more stable training and more accurate anomaly map generation.
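Because a two-class softmax over similarities divided by a temperature equals a sigmoid of the similarity gap divided by that temperature, the effect of the temperature can be examined in isolation. This sketch assumes the division form of temperature scaling; the function name `abnormal_prob` and the similarity values are illustrative.

```python
import math


def abnormal_prob(sim_normal, sim_abnormal, tau):
    """Abnormal-class probability of a two-class softmax over sim / tau.

    softmax([s_n, s_a] / tau)[1] == sigmoid((s_a - s_n) / tau)
    """
    return 1.0 / (1.0 + math.exp(-(sim_abnormal - sim_normal) / tau))


# A patch whose abnormal similarity exceeds the normal one by only 0.02
probs = {tau: abnormal_prob(0.30, 0.32, tau) for tau in (0.01, 0.07, 0.13)}
for tau, p in probs.items():
    print(f"tau={tau}: p_abnormal={p:.3f}")
```

The smaller the temperature, the closer the same small similarity gap is pushed toward a hard 0/1 decision. Keeping the temperature fixed is also simple to implement: in a PyTorch module, it can be stored as a plain constant (or registered with `register_buffer`) instead of an `nn.Parameter`, so the optimizer never updates it.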

4.2. Optimizing a Unified Anomaly Map

As explained in Section 3.3, the VCP-CLIP framework produces two anomaly maps from different branches: the CLIP anomaly map $M_{\text{clip}}$ and the VCP anomaly map $M_{\text{vcp}}$. During training, these two maps are supervised separately using pixel-level segmentation losses. At inference time, however, VCP-CLIP fuses them with a fixed weight ($\alpha = 0.75$). This design introduces a fundamental training–inference inconsistency:

The model is never optimized to improve the final fused map that is actually used at inference.

The fixed fusion weight is manually chosen and may be suboptimal for different datasets or training steps.

The mismatch between supervision (of $M_{\text{clip}}$ and $M_{\text{vcp}}$) and inference (of the fused map $M$) can hinder generalization.

To address these issues, we propose a unified anomaly map optimization strategy. Instead of supervising $M_{\text{clip}}$ and $M_{\text{vcp}}$ separately, we directly optimize a fused anomaly map during training. Specifically, we compute the final anomaly map $M$ as a convex combination of $M_{\text{clip}}$ and $M_{\text{vcp}}$ with mixing weight $\sigma(\alpha)$, where $\alpha$ is a learnable scalar parameter initialized similarly to the fixed value used in VCP-CLIP, and $\sigma(\cdot)$ is the sigmoid function, which constrains the weight to [0, 1]. This allows the model to learn the balance between the two anomaly maps in a data-driven, adaptive manner. This design also ensures consistent optimization between training and inference, eliminates reliance on manually tuned fusion weights, and improves overall segmentation performance without modifying the inference pipeline of VCP-CLIP. Our experiments in Section 5 confirm that this approach provides more robust and accurate anomaly localization.
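A minimal sketch of the learnable fusion, assuming that $\sigma(\alpha)$ weights the CLIP map and $1 - \sigma(\alpha)$ weights the VCP map (which map receives which weight is an assumption of this sketch). The analytic gradient shows why supervising only the fused map is enough to train $\alpha$: the gradient is nonzero wherever the two maps disagree. Function names are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fuse_maps(m_clip, m_vcp, alpha):
    """Fused map: sigmoid(alpha) * m_clip + (1 - sigmoid(alpha)) * m_vcp (assumed assignment)."""
    w = sigmoid(alpha)
    return [w * a + (1.0 - w) * b for a, b in zip(m_clip, m_vcp)]


def fuse_grad_alpha(m_clip, m_vcp, alpha):
    """Per-pixel d(fused)/d(alpha) = w (1 - w) (m_clip - m_vcp), with w = sigmoid(alpha)."""
    w = sigmoid(alpha)
    return [w * (1.0 - w) * (a - b) for a, b in zip(m_clip, m_vcp)]


m_clip = [0.2, 0.9, 0.4]
m_vcp = [0.6, 0.5, 0.4]
fused = fuse_maps(m_clip, m_vcp, alpha=0.0)   # alpha = 0 gives equal weighting
```

If one wanted $\sigma(\alpha)$ to start exactly at VCP-CLIP's fixed weight of 0.75, $\alpha$ would be initialized to $\ln 3 \approx 1.0986$, since $\sigma(\ln 3) = 1 / (1 + 1/3) = 0.75$.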

4.3. Rebalancing Losses with Learnable Weights

In the VCP-CLIP framework, the segmentation loss is computed independently for the CLIP and VCP branches, and each branch loss is simply the sum of the Dice and Focal losses. This implicitly assumes that the Dice and Focal losses contribute equally across all training stages and data distributions. In practice, however, the two losses behave very differently: Dice loss measures region-level spatial overlap, while Focal loss focuses on hard samples and class imbalance. Treating them equally may lead to unstable gradients or suboptimal supervision. To address this issue, we introduce a learnable weighting mechanism that balances the contributions of the Dice and Focal losses during training. Specifically, the total loss is a weighted combination of the Focal and Dice losses controlled by a learnable scalar parameter $\lambda$, which is shared between the branches. This formulation offers two advantages:

It allows the model to adaptively adjust the relative importance of Focal and Dice losses depending on the current optimization state and data distribution.

It encourages smoother training, especially in the early epochs when Dice loss is dominant due to class imbalance.

In Section 5, we show that this learnable reweighting consistently improves segmentation performance.
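One hypothetical way to realize a learnable Dice/Focal balance is a sigmoid-weighted convex combination, sketched below with one plain gradient-descent step on the weight. The exact form used in VCP-CLIP+ is not reproduced here, so this should not be read as the paper's formulation. Note that with a purely convex weighting, each descent step shifts weight toward whichever loss is currently smaller (Focal in the toy values below). Function names are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def rebalanced_loss(focal, dice, lam):
    """Hypothetical form: sigmoid(lam) * focal + (1 - sigmoid(lam)) * dice."""
    w = sigmoid(lam)
    return w * focal + (1.0 - w) * dice


def rebalanced_grad_lam(focal, dice, lam):
    """d(loss)/d(lam) = w (1 - w) (focal - dice), with w = sigmoid(lam)."""
    w = sigmoid(lam)
    return w * (1.0 - w) * (focal - dice)


# One plain gradient-descent step on lam with toy loss values
focal, dice, lam, lr = 0.3, 0.6, 0.0, 0.5
lam_next = lam - lr * rebalanced_grad_lam(focal, dice, lam)
```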

4.4. Direct Text Prompting for Visual Adaptation

In the VCP-CLIP framework, category semantics are encoded by learnable vectors in UTP and refined by Pre-VCP, where global image features are transformed by a Mini-Net and added as auxiliary context to the category tokens. We argue that this design provides only indirect and incomplete visual conditioning for text prompts. Therefore, we reformulate the prompt conditioning mechanism by removing the category tokens in UTP and the Pre-VCP module, and propose an image-conditioned direct prompting (IDP) module as a simpler and more direct alternative. Instead of introducing category-specific prompt vectors and indirectly injecting image features into them, IDP directly derives visual prompt tokens from the global image representation and uses them in place of UTP’s category tokens. By simplifying the visual conditioning pipeline, IDP avoids unnecessary intermediate transformation and alignment steps and reduces optimization complexity. Because the visual context enters the text encoder more directly, it is attended to more explicitly within the text encoder. We therefore expect IDP to work better than the prompting mechanism of VCP-CLIP. Importantly, IDP keeps the pretrained CLIP backbone entirely frozen and introduces only a small number of learnable visual tokens.

Figure 2 shows the process flow of the IDP module. Let $x \in \mathbb{R}^{C}$ denote the global image feature output by the CLIP image encoder, where $C$ is the feature dimension. To enrich this representation, we introduce a set of learnable prompt tokens $P \in \mathbb{R}^{N \times C}$, where $N$ is the number of prompt tokens; the tokens are shared across all samples in a batch. The prompt tokens are summarized into a single prompt embedding and fused with the global image feature, resulting in an image-conditioned prompt token. In detail, the following steps are performed:

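Prompt summarization: The prompt tokens $P$ are projected along the token dimension through a linear layer $\phi_1: \mathbb{R}^{N} \to \mathbb{R}$, applied independently to each of the $C$ channels, yielding a summary vector:

$$s = \phi_1(P^\top) \in \mathbb{R}^{C}.$$

Normalization: Layer normalization is applied to the summarized prompt vector $s$ to stabilize training and improve generalization:

$$\hat{s} = \mathrm{LN}(s).$$

Feature fusion: The normalized prompt summary vector $\hat{s}$ is concatenated with the global image feature $x$ and projected back to $\mathbb{R}^{C}$ using a linear layer $\phi_2: \mathbb{R}^{2C} \to \mathbb{R}^{C}$:

$$y = \phi_2([\hat{s}; x]) \in \mathbb{R}^{C}.$$

The resulting embedding $y$ is treated as a single token and serves as an image-conditioned, category-agnostic token in the UTP template, in place of the learnable category vectors. The token count $N$ can be tuned according to training complexity and performance. In this study, $N$ is set to 3 by default, which offers a good trade-off between performance and efficiency.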
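The three IDP steps can be traced with a small plain-Python sketch. It assumes a LayerNorm without learned scale and shift and the concatenation order $[\hat{s}; x]$; the function name `idp_token` and the toy weights are hypothetical, and the fusion weights below are chosen only to make the intermediate results easy to check, not as trained values.

```python
import math


def idp_token(x, prompts, w_sum, b_sum, w_fuse, b_fuse, eps=1e-5):
    """Image-conditioned prompt token y (length C).

    x       -- global image feature, length C
    prompts -- N learnable prompt tokens, each of length C
    w_sum   -- N weights of the token-dimension linear layer (phi_1)
    b_sum   -- scalar bias of phi_1
    w_fuse  -- C rows of length 2C (weight of phi_2)
    b_fuse  -- length-C bias of phi_2
    """
    C = len(x)
    # Step 1: summarize the N tokens into one length-C vector (linear over tokens)
    s = [sum(w * tok[c] for w, tok in zip(w_sum, prompts)) + b_sum for c in range(C)]
    # Step 2: layer normalization over the C channels (affine scale/shift omitted)
    mean = sum(s) / C
    var = sum((v - mean) ** 2 for v in s) / C
    s_hat = [(v - mean) / math.sqrt(var + eps) for v in s]
    # Step 3: concatenate [s_hat; x] (length 2C) and project back to length C
    z = s_hat + x
    return [sum(w * v for w, v in zip(row, z)) + b for row, b in zip(w_fuse, b_fuse)]


# Toy usage: C = 4, N = 3
C = 4
x = [0.5, -1.0, 2.0, 0.0]
prompts = [[1.0, 2.0, 3.0, 4.0], [0.0, 1.0, 0.0, 1.0], [2.0, 0.0, 1.0, 3.0]]
w_sum, b_sum = [1 / 3] * 3, 0.0
# phi_2 that copies the normalized prompt summary (illustration only)
copy_prompt = [[1.0 if j == i else 0.0 for j in range(2 * C)] for i in range(C)]
# phi_2 that copies the image feature (illustration only)
copy_image = [[1.0 if j == C + i else 0.0 for j in range(2 * C)] for i in range(C)]
y_prompt = idp_token(x, prompts, w_sum, b_sum, copy_prompt, [0.0] * C)
y_image = idp_token(x, prompts, w_sum, b_sum, copy_image, [0.0] * C)
```

In a trained module, $\phi_2$ mixes both halves of the concatenated vector, so $y$ carries information from the shared prompt tokens and from the current image at the same time.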

5. Experiments

5.1. Experimental Setup

Following previous studies, we evaluated our method in a cross-dataset ZSAS setting, where the model was trained on the VisA dataset [5] and tested on the MVTec-AD dataset [4]. VisA contains 12 real-world product categories with diverse anomaly types such as contamination, deformation, and missing components, and was used exclusively for training. MVTec-AD consists of 15 industrial object categories with pixel-level annotations and serves as the evaluation set, enabling assessment of generalization to completely unseen product types. Images in the “bottle”, “hazelnut”, “wood”, “zipper”, and “leather” categories of MVTec-AD were used as a validation set to monitor the model’s zero-shot performance during training. We report both pixel-level and image-level evaluation metrics, including AUROC (Area Under the Receiver Operating Characteristic curve), AUPRO (Area Under the Per-Region Overlap curve), AP (Average Precision), F1 score, and IoU (Intersection over Union). These metrics collectively measure segmentation quality, anomaly discrimination ability, and threshold-sensitive detection performance.
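The threshold-sensitive metrics can be illustrated on a flattened anomaly map. The section does not state whether F1 and IoU are computed at a fixed threshold or at the threshold that maximizes F1 (the F1-max convention that some ZSAS papers report), so the sketch below shows both; all function names are hypothetical, and ties at the threshold are counted as positive predictions.

```python
def confusion_counts(scores, mask, threshold):
    """True positives, false positives, and false negatives after thresholding."""
    tp = fp = fn = 0
    for s, g in zip(scores, mask):
        pred = 1 if s >= threshold else 0
        if pred == 1 and g == 1:
            tp += 1
        elif pred == 1 and g == 0:
            fp += 1
        elif pred == 0 and g == 1:
            fn += 1
    return tp, fp, fn


def f1_and_iou(scores, mask, threshold):
    """F1 = 2TP / (2TP + FP + FN); IoU = TP / (TP + FP + FN)."""
    tp, fp, fn = confusion_counts(scores, mask, threshold)
    if tp + fp + fn == 0:
        return 1.0, 1.0   # empty prediction and empty ground truth agree perfectly
    return 2 * tp / (2 * tp + fp + fn), tp / (tp + fp + fn)


def max_f1(scores, mask):
    """F1-max variant: best F1 over thresholds taken from the observed scores."""
    return max(f1_and_iou(scores, mask, t)[0] for t in set(scores))


scores = [0.9, 0.8, 0.2, 0.1]
mask = [1, 0, 1, 0]
f1, iou = f1_and_iou(scores, mask, threshold=0.5)
best_f1 = max_f1(scores, mask)
```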

To establish a fair and consistent evaluation protocol across methods, we standardized the image-level anomaly score computation using the Top-k aggregation strategy originally adopted by VCP-CLIP. Specifically, we used k = 2000 as the primary evaluation setting and also report results with k = 1, since single-point aggregation closely corresponds to the max-based scoring used in several recent ZSAS methods. This dual evaluation enables a more comprehensive comparison and avoids bias toward a particular aggregation scheme.
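A minimal sketch of Top-k image-level scoring, assuming the image score is the mean of the k largest pixel-level anomaly scores (the text names the strategy but not its exact formula). Under this assumption, k = 1 reduces exactly to the maximum pixel score, and, if the map is evaluated at the 518 × 518 input resolution, k = 2000 covers roughly 0.75% of the 268,324 pixels. The function name is hypothetical.

```python
import heapq


def topk_image_score(pixel_scores, k):
    """Mean of the k largest pixel anomaly scores (k clipped to [1, len])."""
    k = max(1, min(k, len(pixel_scores)))
    return sum(heapq.nlargest(k, pixel_scores)) / k


pixel_scores = [0.1, 0.9, 0.5, 0.3]   # a flattened toy anomaly map
score_max = topk_image_score(pixel_scores, 1)     # equals max(pixel_scores)
score_top2 = topk_image_score(pixel_scores, 2)
score_all = topk_image_score(pixel_scores, 10)    # k clipped: mean of all pixels
```

A larger k averages over a wider anomalous region and is less sensitive to isolated high-scoring pixels, whereas k = 1 reacts to a single pixel.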

As our baseline, we reproduced VCP-CLIP using the official code and hyperparameters [35]. We then incrementally incorporated the four modifications described in Section 4 to analyze their individual and combined contributions. In addition to the reproduced baseline, we compared our final model, VCP-CLIP+, with representative CLIP-based ZSAS approaches, including WinCLIP [17], AnoVL [18], AnomalyCLIP [19], AdaCLIP [20], and AA-CLIP [21], under identical training and inference conditions.

For VCP-CLIP and VCP-CLIP+, CLIP ViT-L/14 (336 × 336) initialized with the official pretrained weights was adopted as the vision backbone. The input resolution was set to 518 × 518. The two learnable weighting parameters of VCP-CLIP+ (Sections 4.2 and 4.3) were initialized to 0.5. Training was conducted for 10 epochs using Adam with a learning rate of 4 × …, β1 = 0.5, β2 = 0.999, and a batch size of 16. For the other ZSAS methods, we used the backbones and hyperparameters specified in the authors’ official implementations. The software was implemented in Python 3.9.21 and PyTorch 2.5.1 on Ubuntu 20.04.6 LTS. All experiments were performed on a PC equipped with an Intel i7-13700F CPU, 64 GB RAM, and a single NVIDIA RTX 4060 Ti GPU.

5.2. Ablation Study

To better understand the behavior of VCP-CLIP+, we performed three types of ablation studies: (1) ablation on the proposed modifications, (2) ablation on the number of prompt tokens, and (3) ablation on the temperature parameters. These analyses allow us to isolate the contribution of each design choice and assess its influence on anomaly detection performance.

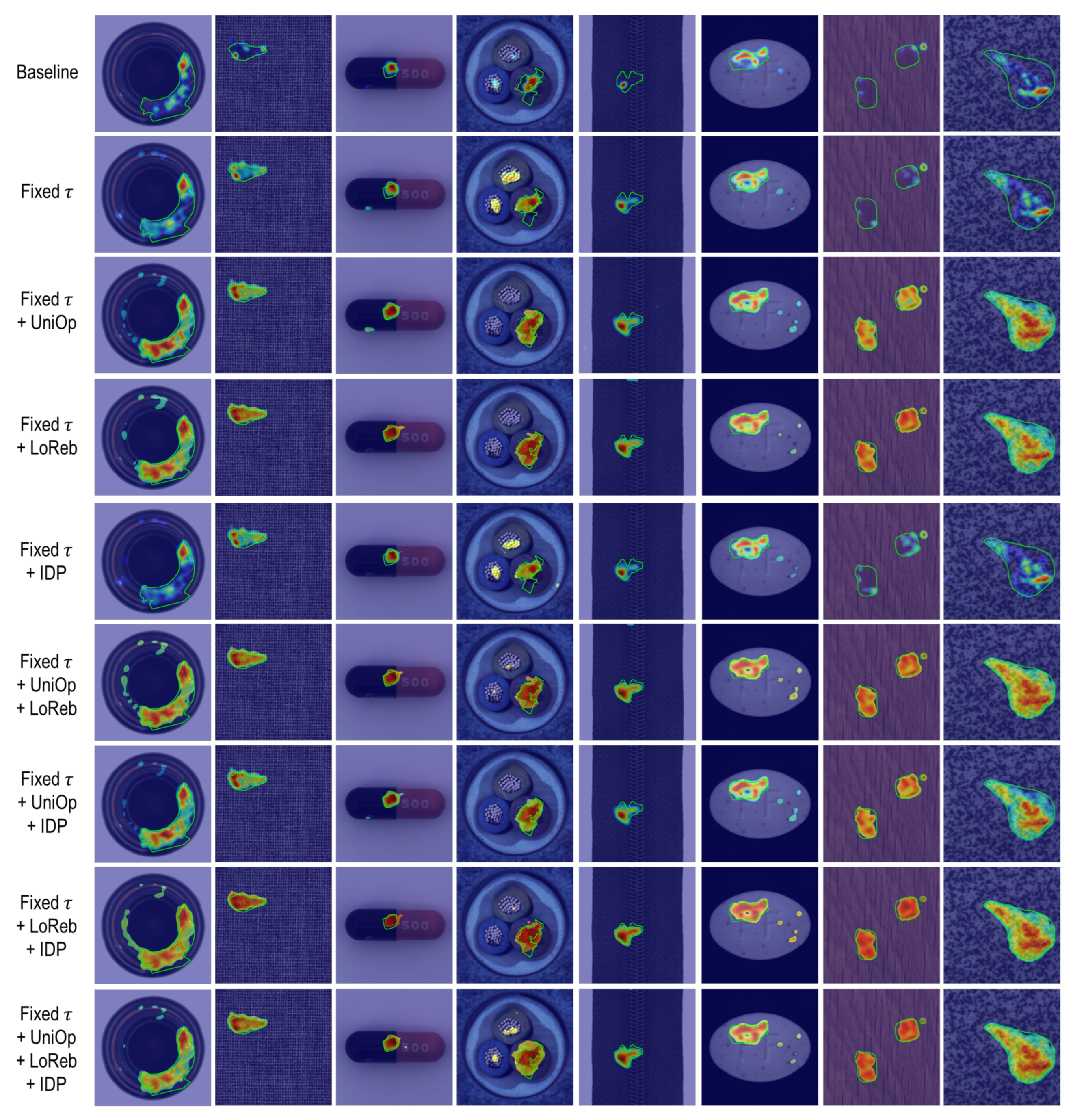

5.2.1. Ablation on Proposed Modifications

To evaluate the contribution of each proposed modification, we first applied a fixed temperature ($\tau$ = 0.07) to the baseline (VCP-CLIP), which serves as a stable starting point for all subsequent variants. Building on this configuration, we progressively enabled unified anomaly map optimization (UniOp), loss rebalancing (LoReb), and image-conditioned direct prompting (IDP), either individually or in combination.

Quantitative Results. Table 1 compares the performance improvements obtained with each proposed modification. Fixing $\tau$ alone yielded a dramatic improvement, increasing the pixel-level AUPRO from 49.5 to 83.4 and highlighting the importance of stabilizing the optimization process. We also tried to tune the baseline’s hyperparameters to obtain better results but did not succeed: the learnable $\tau$ left the baseline under-optimized and degraded its reproducibility. On top of the fixed temperature, each additional modification (UniOp, LoReb, and IDP) provided further complementary gains, improving localization precision and consistency. In particular, UniOp and LoReb were highly effective in improving the pixel-level metrics, while IDP was highly effective in improving the image-level metrics. The full model incorporating all the modifications (Fixed $\tau$ + UniOp + LoReb + IDP) achieved the highest overall performance, balancing pixel-level and image-level performance. This indicates that the modifications are synergistic and collectively improve the accuracy and reliability of the VCP-CLIP framework.

Qualitative Results. Figure 3 illustrates the qualitative effects of each proposed modification. We observed several consistent trends across categories:

Baseline: The baseline frequently under-localized defects and produced weak, noisy activation maps, particularly for small or low-contrast anomalies.

Fixed $\tau$: Fixing the temperature parameter yielded noticeably stronger and more coherent anomaly responses. Compared with the baseline, the predicted regions overlapped more with the defective areas, indicating that temperature instability was a major factor affecting localization quality.

Fixed $\tau$ + UniOp: The unified anomaly map optimization substantially improved anomaly coverage by aggregating complementary cues from both branches. This led to more complete and spatially coherent predicted regions, filling in missed areas and enhancing structural consistency.

Fixed $\tau$ + LoReb: The loss rebalancing mechanism produced a similar degree of improvement. The anomaly regions became noticeably more complete, and the anomaly scores were more uniformly distributed. In addition, adaptively balancing the losses resulted in stable behavior across object and anomaly types without manual tuning.

Fixed $\tau$ + IDP: The visual changes introduced by IDP were subtle, but IDP consistently achieved larger overlap regions while reducing false predictions. This indicates that the visual prompt tokens provided better category-agnostic semantic cues, contributing to more stable and reliable anomaly scoring.

Other partial combinations: When multiple modifications were combined, the anomaly maps became progressively cleaner and more structurally aligned with the ground truth. In particular, around fine-grained anomaly regions, fewer of the surrounding normal pixels were mispredicted as abnormal.

Full Model (Fixed $\tau$ + UniOp + LoReb + IDP): The full model generally provided the most coherent and spatially complete anomaly maps, capturing both global defect structures and fine-grained boundaries more reliably. Although slight over-segmentation can appear in a few texture-heavy categories (e.g., Cable in the fourth column of Figure 3), the overall localization quality and consistency across diverse object types are noticeably improved compared with the partial variants.

The qualitative comparisons demonstrate that each proposed modification contributes complementary improvements. Their combination yielded sharper, more stable, and more complete anomaly localization, consistent with the quantitative gains reported in Table 1.

Model Complexity. Table 2 shows the model complexity induced by each proposed modification. Only IDP produced a slight change in model complexity. This is because the proposed modifications require minimal architectural changes to VCP-CLIP, indicating that the quantitative and qualitative improvements come at negligible computational cost.

5.2.2. Ablation on the Number of Prompt Tokens in IDP

We investigated the impact of the number of prompt tokens on performance. As shown in Table 3, varying the number of tokens from 2 to 10 resulted in only minor differences across all evaluation metrics. However, using three tokens yielded the best balance, achieving the highest pixel-level AUROC (91.6), F1 (48.2), and IoU (33.2), as well as the highest image-level F1 (92.6). Although increasing the number of prompt tokens beyond three did not cause significant performance degradation, it also did not yield substantial improvements. This suggests that excessively increasing the prompt length introduces redundancy without clear benefit. These findings indicate that our prompting scheme is lightweight and robust and requires only a small number of tokens. Therefore, we adopted three tokens as the default configuration for all subsequent experiments, as they provided the best balance between performance and computational efficiency.

5.2.3. Ablation on Temperature Parameters

This section presents an ablation study evaluating how different choices of the temperature parameters ($\tau_1$, $\tau_2$) influence anomaly map sharpness and segmentation performance. Specifically, we analyzed (1) the behavior of the learnable temperature parameters during training and (2) the effect of fixing the parameters to different values.

Results with Learnable Parameters. In the VCP-CLIP framework, both temperature parameters $\tau_1$ and $\tau_2$ were initialized to 0.07 and optimized jointly with the model. However, when learning was enabled for $\tau_1$ and $\tau_2$, we observed a consistent, monotonic decrease during training. As shown in Figure 4, the parameters often converged to extremely small values, far below their initial value of 0.07. This suggests that unconstrained optimization tends to drive the model toward overly sharp softmax distributions, which destabilize anomaly responses and degrade pixel-level segmentation quality.

Effect of Fixing to Different Values. To ensure stable training and reliable anomaly map generation, we fixed the two temperature parameters to a shared value ($\tau_1 = \tau_2 = \tau$). To better understand the relationship between temperature scaling and anomaly segmentation, we performed an ablation experiment using different values of $\tau$. As shown in Table 4, the choice of $\tau$ had a noticeable influence on segmentation quality: both very small (e.g., 0.01) and large (e.g., 0.13) values degraded it. $\tau$ = 0.07 achieved the highest performance across all pixel-level metrics. At the image level, $\tau$ = 0.07 also achieved the best AUROC and F1, and its AP (95.0) was close to the highest value (95.1). Given that $\tau$ = 0.07 led on nearly all evaluation metrics, it was the most robust and consistent choice among the temperature values tested.

In addition to the quantitative results in Table 4, we analyzed how different temperature values affect the structure and sharpness of the anomaly maps. Figure 5 presents qualitative comparisons of anomaly maps generated with temperatures of 0.01, 0.13, and 0.07. These visual results reveal a clear and interpretable trend consistent with the numerical metrics:

- A temperature of 0.01 generally produced weak anomaly responses, often failing to detect small or fine-grained defective regions (i.e., under-segmentation).
- A temperature of 0.13 generally produced overly strong responses, causing normal regions to be incorrectly activated as anomalies (i.e., over-segmentation).
- A temperature of 0.07 achieved the most balanced behavior, yielding anomaly boundaries that aligned most closely with the ground-truth masks.

In short, small temperatures tended to produce weak responses and under-segment anomalous regions, whereas large temperatures tended to over-segment them. However, this trend was not uniform across categories: as shown in Figure 6, some categories exhibited mild under-segmentation even at a temperature of 0.13. The temperature value therefore needs to be chosen with care. Nevertheless, our experimental results strongly supported 0.07 as the most stable and reliable temperature value, and the consistency between the qualitative and quantitative results further reinforced 0.07 as the best fixed choice among the tested values.

5.3. Comparison with Other Methods

In this section, we present a comprehensive comparison between VCP-CLIP+ and other state-of-the-art CLIP-based ZSAS methods, namely WinCLIP, AnoVL, AnomalyCLIP, AdaCLIP, and AA-CLIP, evaluating both localization quality and image-level classification reliability.

5.3.1. Quantitative Comparison

Pixel-Level Metric Results. Table 5 shows the pixel-level anomaly localization results. VCP-CLIP+ achieved the highest scores on all pixel-level metrics. The margins over the runner-up were largest for AP, F1, and IoU (+2.7, +2.9, and +2.6 points over AA-CLIP, respectively), indicating better boundary precision and more robust anomaly localization, and smaller for AUROC (+0.3 over AnomalyCLIP) and AUPRO (+0.7 over AA-CLIP). The gains in AP and F1 are particularly relevant because these metrics, unlike AUROC, are not dominated by the large number of normal pixels and therefore better reflect performance under severe class imbalance.
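F1 and IoU contain no true-negative term, which is why they respond to errors on the rare anomalous pixels much more strongly than AUROC does. The minimal sketch below computes both for a single image at a fixed threshold; the function name and the convention of returning 0.0 when both masks are empty are assumptions, and the threshold selection and averaging protocol behind Table 5 are not modeled.

```python
def pixel_f1_iou(scores, gt, threshold):
    """Binarize anomaly scores at `threshold` and compare with a binary ground-truth mask.

    scores, gt: flat lists of equal length (one entry per pixel); gt entries are 0 or 1.
    Returns (F1, IoU); both are 0.0 when neither mask contains a positive pixel.
    """
    tp = fp = fn = 0
    for s, g in zip(scores, gt):
        pred = s >= threshold
        if pred and g:
            tp += 1
        elif pred and not g:
            fp += 1
        elif not pred and g:
            fn += 1
    # True negatives never enter either formula.
    denom_f1 = 2 * tp + fp + fn
    denom_iou = tp + fp + fn
    f1 = 2 * tp / denom_f1 if denom_f1 else 0.0
    iou = tp / denom_iou if denom_iou else 0.0
    return f1, iou

print(pixel_f1_iou([0.9, 0.8, 0.2, 0.1], [1, 0, 1, 0], threshold=0.5))
```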

Image-Level Metric Results. We also compared the image-level anomaly detection performance of the ZSAS methods using multi-point and single-point aggregation schemes. Following VCP-CLIP, we adopted Top-k pooling with k = 2000 as the multi-point setting, and additionally used k = 1, which reduces to the maximum pixel score, as the single-point setting. Evaluating both configurations enables a fair and comprehensive comparison that does not favor a particular aggregation design.
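A minimal sketch of the two aggregation settings, assuming that Top-k pooling averages the k highest pixel scores of an anomaly map (the exact reduction used in VCP-CLIP is an assumption here). The toy maps show why the two settings can rank methods differently.

```python
import heapq

def topk_image_score(pixel_scores, k):
    """Image-level score as the mean of the k largest pixel anomaly scores.

    With k = 1 this reduces to the maximum pixel score (single-point aggregation).
    """
    if k < 1:
        raise ValueError("k must be at least 1")
    top = heapq.nlargest(k, pixel_scores)
    return sum(top) / len(top)

# Toy 10,000-pixel maps: one isolated peak versus a broad moderate activation.
peaky = [0.99] + [0.1] * 9999
diffuse = [0.6] * 3000 + [0.1] * 7000
for k in (1, 2000):
    print(k, round(topk_image_score(peaky, k), 4), round(topk_image_score(diffuse, k), 4))
```

Under k = 1 the isolated peak scores higher (0.99 vs. 0.6), whereas under k = 2000 the broad activation dominates (0.6 vs. about 0.10).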

In the Top-2000 aggregation results in Table 6, AA-CLIP achieved the highest AUROC and AP scores, demonstrating the potential of its anomaly-aware prompt learning for image-level discrimination. VCP-CLIP+ ranked closely behind AA-CLIP in AUROC and AP but achieved the highest F1 score, indicating a more balanced trade-off between precision and recall under threshold-based evaluation.

Table 7 shows the Top-1 aggregation results, which differed notably from the Top-2000 results. Nevertheless, AA-CLIP still scored the highest on all metrics, demonstrating its strength in image-level anomaly detection, and VCP-CLIP+ remained competitive with the second-highest scores. Interestingly, AdaCLIP improved substantially under Top-1 aggregation, whereas the other methods exhibited a slight drop. This suggests that AdaCLIP relies on a few sparse but strong anomaly responses, which may limit its generalization ability and reliability. These findings show that evaluating both Top-k settings enables a fairer and more precise comparison, and that AA-CLIP and VCP-CLIP+ are relatively insensitive to the evaluation protocol, which supports their generalization ability and reliability: they benefit from richer and broader anomaly responses rather than a few peak responses.

Image-Level TP/TN/FP/FN Analysis. The Top-2000 aggregation may disproportionately benefit models that produce diffuse or high-activation anomaly maps, because the aggregated score is less sensitive to spatial accuracy and more sensitive to the density of the score distribution. To further assess the accuracy and reliability of image-level anomaly detection, we analyzed the true-positive rate (TPR), true-negative rate (TNR), false-positive rate (FPR), and false-negative rate (FNR) under the Top-2000 setting (see Table 8). This evaluation directly measures the correctness of normal/abnormal classification and complements the image-level metrics in Table 6.
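The four rates follow directly from counts of image-level decisions. Under the standard definitions, TPR + FNR = 1 and TNR + FPR = 1, so the sensitivity and specificity values discussed below determine the other two rates. The sketch below is illustrative; the function name is hypothetical, and how image scores are thresholded into decisions is not modeled.

```python
def detection_rates(labels, preds):
    """Image-level TPR, FNR, TNR, and FPR from binary labels and decisions (1 = anomalous)."""
    pairs = list(zip(labels, preds))
    tp = sum(1 for y, p in pairs if y == 1 and p == 1)
    fn = sum(1 for y, p in pairs if y == 1 and p == 0)
    tn = sum(1 for y, p in pairs if y == 0 and p == 0)
    fp = sum(1 for y, p in pairs if y == 0 and p == 1)
    pos, neg = tp + fn, tn + fp
    if pos == 0 or neg == 0:
        raise ValueError("need at least one anomalous and one normal image")
    return {"TPR": tp / pos, "FNR": fn / pos, "TNR": tn / neg, "FPR": fp / neg}

# Toy example: 4 anomalous and 4 normal images.
rates = detection_rates([1, 1, 1, 1, 0, 0, 0, 0], [1, 1, 1, 0, 1, 1, 0, 0])
print(rates)
```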

Previous ZSAS methods exhibited typical failure cases that correlate closely with their low TNRs; these failures are also visible in Figure 7. WinCLIP showed extremely high sensitivity (TPR = 98.1%) but often failed to activate the full extent of the defective regions and produced residual activations throughout the background, even in normal images. These noisy, spurious responses frequently entered the Top-k pool, producing erroneous anomaly decisions and extremely low specificity (TNR = 29.8%). AnoVL and AnomalyCLIP substantially improved specificity (TNR = 50.5% and 48.2%, respectively) but still produced activation maps with strong responses in normal regions, resulting in many false alarms. AdaCLIP behaved similarly to WinCLIP but was relatively conservative in its anomaly decisions, resulting in slightly lower sensitivity and higher specificity. Moreover, AnoVL, AnomalyCLIP, and AdaCLIP gained TNR only at the cost of a substantially higher FNR. AA-CLIP achieved the highest specificity (TNR = 72.2%), likely owing to its anomaly-aware prompting, which effectively suppressed background activations. However, this came with the lowest sensitivity (TPR = 94.1%), indicating that AA-CLIP is overly conservative in classifying images as abnormal and may miss subtle or weak anomalies. In contrast, VCP-CLIP+ provided the most balanced results, combining high sensitivity (TPR = 97.1%) with the second-highest specificity (TNR = 62.5%). VCP-CLIP+ produced compact and coherent activation maps that reduced both missed defects and spurious background responses, yielding more stable and reliable image-level predictions than previous CLIP-based ZSAS methods.

5.3.2. Qualitative Comparison

Figure 7 presents a qualitative comparison of the anomaly localization performance of CLIP-based ZSAS methods. The visualization results align well with the quantitative metrics in Table 5, illustrating how each method behaves and clearly revealing the failure cases of previous ZSAS methods.

WinCLIP tended to detect only the most prominent anomaly regions, often missing large parts of the defective area. This limited coverage is consistent with its low pixel-level AUPRO and IoU. Its localization accuracy was also relatively low, often producing false activations far from the true defective area, which likely explains its low AP. AnoVL produced strong, broad activations that covered the true defect regions well, achieving a competitive AUROC in Table 5. However, its activation maps frequently extended beyond the true anomaly boundaries (i.e., over-segmentation), especially on textured or fine-structured surfaces, which is consistent with its low TNR in Table 8. AnomalyCLIP tended to produce more dispersed activation maps, often accompanied by scattered noisy responses. Although this helped it detect certain distributed anomalies, it also introduced spurious activations in background regions, reducing its localization precision. AdaCLIP exhibited visually sharp but overly conservative activations, capturing only the core of the defect area while missing peripheral regions. At the same time, small background artifacts could trigger false-positive classifications under strict thresholding, which may explain its low TNR in Table 8. AA-CLIP, despite its high image-level detection performance, exhibited noticeable over-activation and over-segmentation at the pixel level. Although it successfully activated most anomalous regions, it produced broad or image-wide false activations in several categories, particularly Bottle (column 1) and Zipper (column 5), where large background areas were incorrectly activated. These behaviors align with its pixel-level scores: high AUROC and AUPRO but relatively limited IoU. Finally, VCP-CLIP+ produced dense, well-localized activation regions that best aligned with the ground-truth boundaries across diverse categories, with minimal spillover and background activation. This led to superior pixel-level segmentation performance (the highest scores on all metrics) and more reliable image-level predictions, as reflected in its balanced sensitivity (97.1%) and specificity (62.5%).

5.4. Comparison on Other Datasets

We compared the ZSAS methods on two further benchmark datasets, BTAD [3] and MPDD [6], to evaluate their performance and generalization ability in more detail. Both datasets differ significantly from MVTec-AD in object appearance, defect characteristics, and background complexity: BTAD mainly contains texture-dominated industrial defects, whereas MPDD contains fine-grained surface defects with strong structural regularities.

As shown in Table 9, VCP-CLIP+ achieved the best performance on BTAD on almost all metrics, outperforming the other CLIP-based ZSAS methods by a substantial margin and providing the most accurate and consistent anomaly localization. Except for AA-CLIP and VCP-CLIP+, the ZSAS methods performed substantially worse on BTAD than on MVTec-AD, indicating limited generalization ability.

In particular, WinCLIP exhibited very limited localization capability, with extremely low pixel-level scores due to fragmented and weak anomaly responses (see Figure 8). AnoVL, while covering larger anomaly regions, tended to over-segment defects, leading to reduced precision and IoU despite a relatively high AUROC. AA-CLIP still showed strong image-level discrimination but remained prone to pixel-level over-activation. In contrast, VCP-CLIP+ achieved high AUPRO, F1, and IoU scores, indicating that it not only detects anomalous regions reliably but also produces spatially coherent activation maps with well-aligned boundaries (clearly observable in Figure 8). VCP-CLIP+ also maintained the best balance between anomaly coverage and background suppression, as reflected in its highest image-level AP and F1 scores. Overall, VCP-CLIP+ was the only method that delivered robust and reliable anomaly segmentation and detection on BTAD, indicating high generalization ability.

The results on the MPDD dataset, summarized in Table 10, further highlight the robustness and generalization ability of VCP-CLIP+, which outperformed all competing methods on both pixel-level and image-level metrics.

In detail, VCP-CLIP+ again achieved the best performance on all pixel-level metrics, indicating high accuracy in anomaly localization and boundary consistency (see Figure 8). Although AA-CLIP and AnomalyCLIP achieved competitive AUROC scores, their localization quality was limited by over-activation or incomplete defect coverage. More importantly, VCP-CLIP+ outperformed AA-CLIP at the image level: AA-CLIP, which achieved strong image-level scores on MVTec-AD through anomaly-aware prompting, did not generalize as effectively to MPDD. These findings suggest that VCP-CLIP+ benefits from accurate and robust anomaly localization, which enables more reliable image-level decisions on datasets with different defect distributions and visual characteristics. Overall, the results confirm that VCP-CLIP+ generalizes more consistently across datasets than other CLIP-based ZSAS methods.

5.5. Results of Exchanging Datasets for Training and Evaluation

Finally, unlike in the preceding experiments, we trained the ZSAS methods on the MVTec-AD dataset and evaluated them on the VisA dataset to demonstrate the generalization ability of VCP-CLIP+ more comprehensively. Images from the “chewinggum”, “cashew”, “pipe_fryum”, “capsules”, and “candle” categories of VisA were used as the validation set during training. All hyperparameters were kept unchanged.

Table 11 shows the quantitative comparison results. All metrics except AUROC and AUPRO were lower than those in Table 5 and Table 6, and the performance of AA-CLIP in particular decreased significantly. Although VCP-CLIP performed relatively well, VCP-CLIP+ showed the smallest change in performance relative to the setting in which it was trained on VisA. VCP-CLIP+ substantially improved on VCP-CLIP and outperformed the other ZSAS methods on both pixel-level and image-level metrics. These results show that the hyperparameters of VCP-CLIP+ tuned in the VisA-training setting also work well when training on MVTec-AD, without further tuning.

6. Weaknesses and Limitations of the Proposed Methodology

The proposed modifications were derived empirically rather than from first principles and are therefore closer to engineering improvements. This weakness means that their performance and generalization ability must be analyzed in depth and verified carefully, which is why we evaluated them under as many conditions and datasets as possible. In particular, fixing the temperature parameters may increase the risk of overfitting. However, as our experiments showed, learning them without constraints, as in VCP-CLIP, tends to be unstable and to underperform; a method for learning these parameters stably is clearly needed. In addition, our findings may offer limited theoretical insight. Nevertheless, our focus was on developing a practical ZSAS method, and we believe that the proposed methodology is effective and academically valuable, albeit limited.

7. Conclusions

In this study, we revisited the VCP-CLIP framework and proposed an upgraded version, VCP-CLIP+, built on simple yet effective modifications for ZSAS. Rather than introducing heavy architectural changes, we focused on identifying and improving the components that limit the training stability and anomaly localization fidelity of VCP-CLIP, leading to more stable optimization, sharper spatial localization, and more reliable image-level anomaly decisions.

We introduced four modifications. First, to address the problem that unconstrained learning of temperature parameters for similarity scaling often leads to degenerate temperature regimes that harm anomaly localization, we proposed fixing both parameters to a shared, empirically selected value. Second, to address the training–inference mismatch in anomaly map computation, we proposed optimizing a unified anomaly map with a learnable weighting parameter. Third, we proposed a learnable weighting mechanism that balances Dice and Focal losses during training, resulting in smoother optimization and more reliable pixel-level supervision. Fourth, we reformulated the text prompting mechanism and proposed an image-conditioned direct prompting module that injects image-conditioned learnable tokens directly into the text encoder, simplifying the prompting pipeline and enabling tighter instance-level coupling between image and text representations without relying on category-specific prompt parameters.
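The second and third modifications both rely on learnable scalar weights. The sketch below shows one hypothetical way to realize them, not the paper's exact formulation: it assumes that the unified anomaly map is a convex combination of the two branch maps with weight `sigmoid(alpha)` and that the objective is a convex combination of soft Dice and binary focal losses with weight `sigmoid(beta)`. The names `alpha`, `beta`, and all functions are illustrative, and plain lists stand in for tensors, so no gradients are computed.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def fuse_maps(map_a, map_b, alpha):
    """Hypothetical UniOp-style fusion: w * A + (1 - w) * B, with w = sigmoid(alpha) in (0, 1)."""
    w = sigmoid(alpha)
    return [w * a + (1.0 - w) * b for a, b in zip(map_a, map_b)]

def dice_loss(pred, gt, eps=1e-6):
    """Soft Dice loss between predicted probabilities and a binary mask."""
    inter = sum(p * g for p, g in zip(pred, gt))
    return 1.0 - (2.0 * inter + eps) / (sum(pred) + sum(gt) + eps)

def focal_loss(pred, gt, gamma=2.0, eps=1e-7):
    """Mean binary focal loss (without a class-balancing alpha term)."""
    total = 0.0
    for p, g in zip(pred, gt):
        p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
        pt = p if g == 1 else 1.0 - p    # probability assigned to the true class
        total += -((1.0 - pt) ** gamma) * math.log(pt)
    return total / len(pred)

def rebalanced_loss(pred, gt, beta):
    """Hypothetical LoReb-style objective: lam * Dice + (1 - lam) * Focal, lam = sigmoid(beta)."""
    lam = sigmoid(beta)
    return lam * dice_loss(pred, gt) + (1.0 - lam) * focal_loss(pred, gt)

# Usage: fuse two toy branch maps, then score the fused map against a mask.
fused = fuse_maps([0.9, 0.1, 0.8], [0.7, 0.3, 0.6], alpha=0.0)
print(rebalanced_loss(fused, [1, 0, 1], beta=0.0))
```

If `beta` were optimized by plain gradient descent on this objective alone, the weight would drift toward whichever loss is currently smaller, so practical learnable-weighting schemes usually add a regularizing term; the sketch does not model this.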

Extensive experiments on the VisA, MVTec-AD, BTAD, and MPDD datasets demonstrated that VCP-CLIP+ achieved state-of-the-art pixel-level anomaly segmentation and strong image-level anomaly detection with high generalization ability. Notably, while prior methods such as AA-CLIP showed competitive image-level performance, VCP-CLIP+ consistently delivered a more balanced sensitivity–specificity trade-off and superior spatial localization, and it outperformed the other ZSAS methods on both pixel-level and image-level metrics on more challenging datasets such as MPDD. Ablation studies confirmed that the proposed modifications provide complementary improvements, resulting in compact, boundary-aligned anomaly maps with suppressed background activations, at negligible computational overhead.
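The size of this overhead can be read off Table 2 by comparing the full model (VCP-CLIP+) with the baseline (VCP-CLIP):

```latex
\frac{438.217 - 437.035}{437.035} = \frac{1.182}{437.035} \approx 0.27\%\ \text{(parameters)}, \qquad
\frac{602.364 - 602.362}{602.362} \approx 0.0003\%\ \text{(FLOPs)}, \qquad
\frac{170.804 - 170.538}{170.538} \approx 0.16\%\ \text{(inference time)}
```

The 0.27 ms timing difference is of the same order as the variation between other rows of Table 2 (e.g., the Fixed variant is 0.32 ms faster than the baseline), so it is best read as within measurement variation.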

We believe that VCP-CLIP+ provides a robust and practical solution to advance CLIP-based ZSAS, and we hope that our findings facilitate further research on reliable and deployable ZSAS approaches for industrial inspection and real-world anomaly detection.

However, a closer analysis of the performance and generalization ability of VCP-CLIP+ remains important future work; in the near future, we plan to train and test VCP-CLIP+ on a wider range of datasets. Since the performance of VCP-CLIP+ relies heavily on the ability of the CLIP encoders to capture visual and textual details, we also plan to improve it further using enhanced CLIP models [36]. In addition, the unified anomaly map optimization might weaken the independent learning ability of each branch in Figure 1. Finding effective ways to strengthen the independent learning of each branch would therefore be an interesting topic for future study.

Author Contributions

Conceptualization, J.I. and H.P.; methodology, J.I. and H.P.; software, J.I.; validation, J.I. and H.P.; writing—original draft preparation, J.I.; writing—review and editing, H.P.; supervision, H.P. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Data Availability Statement

The data that support the findings of this study are publicly available in the online repositories.

Conflicts of Interest

The authors declare no conflicts of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
|---|---|
| ZSAS | zero-shot anomaly segmentation |
| VCP | visual context prompting |
| IDP | image-conditioned direct prompting |
| ViT | vision Transformer |
| UTP | unified text prompting |
| DTP | deep text prompting |
| AUROC | area under the receiver operating characteristic curve |
| AUPRO | area under the per-region overlap curve |
| AP | average precision |
| IoU | intersection over union |
| UniOp | unified anomaly map optimization |
| LoReb | loss rebalancing |
| TPR | true-positive rate |
| TNR | true-negative rate |
| FPR | false-positive rate |
| FNR | false-negative rate |

References

1. Radford, A.; Kim, J.W.; Hallacy, C.; Ramesh, A.; Goh, G.; Agarwal, S.; Sastry, G.; Askell, A.; Mishkin, P.; Clark, J.; et al. Learning transferable visual models from natural language supervision. arXiv 2021, arXiv:2103.00020.
2. Qu, Z.; Tao, X.; Prasad, M.; Shen, F.; Zhang, Z.; Gong, X.; Ding, G. VCP-CLIP: A visual context prompting model for zero-shot anomaly segmentation. arXiv 2024, arXiv:2407.12276.
3. Mishra, P.; Verk, R.; Fornasier, D.; Piciarelli, C.; Foresti, G. VT-ADL: A vision transformer network for image anomaly detection and localization. In Proceedings of the 30th IEEE/IES International Symposium on Industrial Electronics, Kyoto, Japan, 20–23 June 2021.
4. Bergmann, P.; Fauser, M.; Sattlegger, D.; Steger, C. MVTec AD – A comprehensive real-world dataset for unsupervised anomaly detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: New York City, NY, USA, 2019; pp. 9592–9600.
5. Zou, Y.; Jeong, J.; Pemula, L.; Zhang, D.; Dabeer, O. Spot-the-difference self-supervised pre-training for anomaly detection and segmentation. In Proceedings of the European Conference on Computer Vision, Tel Aviv, Israel, 23–27 October 2022; pp. 392–408.
6. Jezek, S.; Jonak, M.; Burget, R.; Dvorak, P.; Skotak, M. Deep learning-based defect detection of metal parts: Evaluating current methods in complex conditions. In Proceedings of the 13th International Congress on Ultra Modern Telecommunications and Control Systems and Workshops; IEEE: New York City, NY, USA, 2021; pp. 66–71.
7. Roth, K.; Pemula, L.; Zepeda, J.; Schölkopf, B.; Brox, T.; Gehler, P. Towards total recall in industrial anomaly detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: New York City, NY, USA, 2022; pp. 14318–14328.
8. Defard, T.; Setkov, A.; Loesch, A.; Audigier, R. PaDiM: A patch distribution modeling framework for anomaly detection and localization. In Proceedings of the International Conference on Pattern Recognition, Virtual, 10–15 January 2021; pp. 475–489.
9. Yu, J.; Zheng, Y.; Wang, X.; Li, W.; Wu, Y.; Zhao, R.; Wu, L. FastFlow: Unsupervised anomaly detection and localization via 2D normalizing flows. arXiv 2021, arXiv:2111.07677.
10. Gudovskiy, D.; Ishizaka, S.; Kozuka, K. CFLOW-AD: Real-time unsupervised anomaly detection with localization via conditional normalizing flows. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, Waikoloa, HI, USA, 3–8 January 2022; pp. 98–107.
11. Liu, Z.; Zhou, Y.; Xu, Y.; Wang, Z. SimpleNet: A simple network for image anomaly detection and localization. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: New York City, NY, USA, 2023; pp. 20402–20411.
12. Golan, I.; El-Yaniv, R. Deep anomaly detection using geometric transformations. Adv. Neural Inf. Process. Syst. 2018, 31, 9781–9791.
13. Li, C.L.; Sohn, K.; Yoon, J.; Pfister, T. CutPaste: Self-supervised learning for anomaly detection and localization. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: New York City, NY, USA, 2021; pp. 9664–9674.
14. Zavrtanik, V.; Kristan, M.; Skočaj, D. DRAEM – A discriminatively trained reconstruction embedding for surface anomaly detection. In Proceedings of the IEEE/CVF International Conference on Computer Vision; IEEE: New York City, NY, USA, 2021; pp. 8330–8339.
15. Schlüter, H.M.; Tan, J.; Hou, B.; Kainz, B. Natural synthetic anomalies for self-supervised anomaly detection and localization. In Proceedings of the European Conference on Computer Vision, Tel Aviv, Israel, 23–27 October 2022; pp. 474–489.
16. Tsai, M.C.; Wang, S.D. Self-supervised image anomaly detection and localization with synthetic anomalies. In Proceedings of 2023 10th International Conference on Internet of Things: Systems, Management and Security; IEEE: New York City, NY, USA, 2023; pp. 90–95.
17. Jeong, J.; Zou, Y.; Kim, T.; Zhang, D.; Ravichandran, A.; Dabeer, O. WinCLIP: Zero-/few-shot anomaly classification and segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: New York City, NY, USA, 2023; pp. 19606–19616.
18. Deng, H.; Zhang, Z.; Bao, J.; Li, X. Bootstrap fine-grained vision-language alignment for unified zero-shot anomaly localization. arXiv 2023, arXiv:2308.15939.
19. Zhou, Q.; Pang, G.; Tian, Y.; He, S.; Chen, J. AnomalyCLIP: Object-agnostic prompt learning for zero-shot anomaly detection. In Proceedings of International Conference on Learning Representations; Springer: Berlin/Heidelberg, Germany, 2024; pp. 49705–49737.
20. Cao, Y.; Zhang, J.; Frittoli, L.; Cheng, Y.; Shen, W.; Boracchi, G. AdaCLIP: Adapting CLIP with hybrid learnable prompts for zero-shot anomaly detection. In Proceedings of the European Conference on Computer Vision, Milan, Italy, 29 September–4 October 2024; pp. 55–72.
21. Ma, W.; Zhang, X.; Yao, Q.; Tang, F.; Wu, C.; Li, Y.; Yan, R.; Jiang, Z.; Zhou, S.K. AA-CLIP: Enhancing zero-shot anomaly detection via anomaly-aware CLIP. In Proceedings of the Computer Vision and Pattern Recognition Conference; IEEE: New York City, NY, USA, 2025; pp. 4744–4754.
22. Pan, Y.; Wang, L.; Chen, Y.; Zhu, W.; Peng, B.; Chi, M. PA-CLIP: Enhancing zero-shot anomaly detection through pseudo-anomaly awareness. arXiv 2025, arXiv:2503.01292.
23. Fang, Q.; Lv, W.; Su, Q. AF-CLIP: Zero-shot anomaly detection via anomaly-focused CLIP adaptation. In Proceedings of the 33rd ACM International Conference on Multimedia; IEEE: New York City, NY, USA, 2025; pp. 4846–4855.
24. Chen, X.; Tao, H.; Zhou, H.; Zhou, P.; Deng, Y. Hierarchical and progressive learning with key point sensitive loss for sonar image classification. Multimed. Syst. 2024, 30, 380.
25. Zhou, K.; Yang, J.; Loy, C.C.; Liu, Z. Learning to prompt for vision-language models. Int. J. Comput. Vis. 2022, 130, 2337–2348.
26. Zhou, K.; Yang, J.; Loy, C.C.; Liu, Z. Conditional prompt learning for vision-language models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: New York City, NY, USA, 2022; pp. 16816–16825.
27. Rao, Y.; Zhao, W.; Chen, G.; Tang, Y.; Zhu, Z.; Huang, G.; Zhou, J.; Lu, J. DenseCLIP: Language-guided dense prediction with context-aware prompting. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: New York City, NY, USA, 2022; pp. 18082–18091.
28. Zhou, Z.; Lei, Y.; Zhang, B.; Liu, L.; Liu, Y. ZegCLIP: Towards adapting CLIP for zero-shot semantic segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: New York City, NY, USA, 2023; pp. 11175–11185.
29. Jia, M.; Tang, L.; Chen, B.C.; Cardie, C.; Belongie, S.; Hariharan, B.; Lim, S.N. Visual prompt tuning. In Proceedings of European Conference on Computer Vision; Springer: Berlin/Heidelberg, Germany, 2022; pp. 709–727.
30. Dosovitskiy, A. An image is worth 16 × 16 words: Transformers for image recognition at scale. arXiv 2020, arXiv:2010.11929.
31. Dodge, J.; Ilharco, G.; Schwartz, R.; Farhadi, A.; Hajishirzi, H.; Smith, N. Fine-tuning pretrained language models: Weight initializations, data orders, and early stopping. arXiv 2020, arXiv:2002.06305.
32. Crane, M. Questionable answers in question answering research: Reproducibility and variability of published results. Trans. Assoc. Comput. Linguist. 2018, 6, 241–252.
33. Akcay, S.; Ameln, D.; Vaidya, A.; Lakshmanan, B.; Ahuja, N.; Genc, U. Anomalib: A deep learning library for anomaly detection. In Proceedings of 2022 IEEE International Conference on Image Processing; IEEE: New York City, NY, USA, 2022; pp. 1706–1710.
34. Luo, W.; Cao, Y.; Yao, H.; Zhang, X.; Lou, J.; Cheng, Y.; Shen, W.; Yu, W. Exploring intrinsic normal prototypes within a single image for universal anomaly detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; IEEE: New York City, NY, USA, 2025; pp. 9974–9983.
35. VCP-CLIP Official Code. Available online: https://github.com/xiaozhen228/VCP-CLIP (accessed on 3 December 2025).
36. Li, Y.; Zhao, J.; Chang, H.; Hou, R.; Shan, S.; Chen, X. un2CLIP: Improving CLIP’s visual detail capturing ability via inverting unCLIP. arXiv 2025, arXiv:2505.24517.

Figure 1.

Process flow of VCP-CLIP. VCP-CLIP generates two anomaly maps in separate branches and fuses them.

Figure 2.

Overview of the proposed IDP module. A small set of learnable prompt tokens is summarized and fused with CLIP’s global image feature. The fused embedding is directly used as an image-conditioned prompt token in UTP.

Figure 3.

Qualitative comparison of anomaly maps obtained by applying the proposed modifications.

Figure 4.

Behavior of the two learnable temperature parameters during training.

Figure 5.

Effect of different temperature values in anomaly localization.

Figure 6.

Exceptional cases where the effect of the temperature is inconsistent: even a large temperature resulted in under-segmentation for some categories.

Figure 7.

Qualitative comparison of anomaly localization results produced by different ZSAS methods.

Figure 8.

Qualitative comparison of anomaly localization results produced by different ZSAS methods on the BTAD and MPDD datasets.

Table 1.

Changes in quantitative metrics when applying proposed modifications. The best results are in bold.

| Method | Pixel AUROC | Pixel AUPRO | Pixel AP | Pixel F1 | Pixel IoU | Image AUROC | Image AP | Image F1 |
|---|---|---|---|---|---|---|---|---|
| Baseline (VCP-CLIP) | 86.5 | 49.5 | 37.2 | 40.2 | 25.8 | 89.9 | 95.2 | 91.3 |
| Fixed | 87.0 | 83.4 | 39.6 | 42.7 | 28.2 | 89.1 | 94.9 | 90.9 |
| Fixed + UniOp | 90.4 | 86.9 | 45.9 | 47.4 | 32.3 | 90.6 | 95.9 | 92.2 |
| Fixed + LoReb | 91.4 | 86.4 | 46.4 | 47.8 | 32.8 | 88.3 | 94.6 | 91.7 |
| Fixed + IDP | 87.7 | 84.3 | 41.6 | 44.0 | 29.3 | 90.9 | 96.1 | 92.0 |
| Fixed + UniOp + LoReb | 91.1 | 86.9 | 46.5 | 48.1 | 33.1 | 89.2 | 94.7 | 91.5 |
| Fixed + UniOp + IDP | 90.0 | 86.3 | 45.2 | 47.3 | 32.3 | 89.5 | 95.2 | 92.1 |
| Fixed + LoReb + IDP | 90.7 | 85.8 | 46.2 | 47.4 | 32.6 | 88.0 | 94.1 | 91.7 |
| Full model (VCP-CLIP+) | 91.6 | 86.5 | 46.6 | 48.2 | 33.2 | 89.6 | 95.0 | 92.6 |

Table 2.

Changes in model complexity when applying proposed modifications.

| Method | # Params | FLOPs | Inference Time |
|---|---|---|---|
| Baseline (VCP-CLIP) | 437.035 M | 602.362 G | 170.538 ms |
| Fixed | 437.035 M | 602.362 G | 170.219 ms |
| Fixed + UniOp | 437.035 M | 602.362 G | 171.045 ms |
| Fixed + LoReb | 437.035 M | 602.362 G | 170.897 ms |
| Fixed + IDP | 438.217 M | 602.364 G | 170.826 ms |
| Fixed + UniOp + LoReb | 437.035 M | 602.362 G | 170.985 ms |
| Fixed + UniOp + IDP | 438.217 M | 602.364 G | 171.038 ms |
| Fixed + LoReb + IDP | 438.217 M | 602.364 G | 170.865 ms |
| Full model (VCP-CLIP+) | 438.217 M | 602.364 G | 170.804 ms |

Table 3.

Effect of the number of prompt tokens on anomaly segmentation performance (evaluation dataset = MVTec-AD). The best results are in bold.

| Number of Tokens (N) | Pixel AUROC | Pixel AUPRO | Pixel AP | Pixel F1 | Pixel IoU | Image AUROC | Image AP | Image F1 |
|---|---|---|---|---|---|---|---|---|
| 2 | 91.0 | 85.3 | 45.4 | 47.6 | 32.8 | 87.1 | 94.0 | 91.0 |
| 3 | 91.6 | 86.5 | 46.6 | 48.2 | 33.2 | 89.6 | 95.0 | 92.6 |
| 4 | 91.3 | 87.0 | 46.5 | 47.9 | 33.0 | 89.7 | 95.3 | 92.1 |
| 5 | 91.3 | 86.3 | 46.8 | 48.0 | 33.1 | 88.6 | 94.4 | 91.8 |
| 6 | 90.9 | 85.3 | 46.5 | 47.9 | 33.1 | 86.4 | 93.4 | 90.9 |
| 7 | 91.1 | 85.9 | 45.0 | 47.1 | 32.3 | 88.3 | 94.1 | 91.7 |
| 8 | 91.1 | 86.3 | 46.0 | 47.6 | 32.6 | 88.1 | 94.1 | 91.4 |
| 9 | 90.9 | 87.3 | 46.5 | 47.8 | 32.9 | 90.3 | 95.4 | 92.4 |
| 10 | 91.2 | 86.2 | 46.3 | 48.0 | 33.1 | 89.4 | 95.2 | 91.9 |

Table 4.

Segmentation performance under different fixed temperature values (evaluation dataset = MVTec-AD). The best results are in bold.

| Fixed Temperature | Pixel-Level AUROC | Pixel-Level AUPRO | Pixel-Level AP | Pixel-Level F1 | Pixel-Level IoU | Image-Level AUROC | Image-Level AP | Image-Level F1 |
|---|---|---|---|---|---|---|---|---|
| 0.01 | 88.2 | 80.5 | 38.2 | 41.9 | 27.8 | 84.5 | 92.9 | 90.4 |
| 0.04 | 89.5 | 85.0 | 42.2 | 44.8 | 30.0 | 88.7 | **95.1** | 91.0 |
| 0.07 | **91.6** | **86.5** | **46.6** | **48.2** | **33.2** | **89.6** | 95.0 | **92.6** |
| 0.1 | 88.6 | 83.3 | 40.0 | 43.9 | 29.1 | 84.6 | 93.3 | 90.2 |
| 0.13 | 89.4 | 84.0 | 40.4 | 43.9 | 29.1 | 85.9 | 94.2 | 90.1 |
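
Table 4 varies the fixed temperature used to scale similarity scores. As an illustration only, the sketch below assumes the CLIP-style convention in which cosine similarities between patch and text embeddings are divided by the temperature and passed through a two-way (normal vs. abnormal) softmax. The helper name `anomaly_probability` and the array shapes are hypothetical and are not taken from the VCP-CLIP+ code. Under this assumption, a smaller temperature pushes per-patch probabilities toward 0 or 1, and a larger one flattens them toward 0.5. The best setting in Table 4, 0.07, is also the value CLIP uses to initialize its learnable temperature.

```python
import numpy as np


def anomaly_probability(patch_feats, text_feats, tau=0.07):
    """Hypothetical helper: per-patch anomaly probability with a fixed temperature.

    patch_feats: (num_patches, dim) visual features
    text_feats:  (2, dim) text embeddings; row 0 = "normal", row 1 = "abnormal"
    tau:         fixed (non-learnable) temperature; logits = cosine similarity / tau
    """
    # L2-normalise both sides so the dot product is a cosine similarity
    p = patch_feats / np.linalg.norm(patch_feats, axis=1, keepdims=True)
    t = text_feats / np.linalg.norm(text_feats, axis=1, keepdims=True)
    logits = (p @ t.T) / tau                      # (num_patches, 2)
    logits -= logits.max(axis=1, keepdims=True)   # subtract row max for numerical stability
    probs = np.exp(logits)
    probs /= probs.sum(axis=1, keepdims=True)     # two-way softmax per patch
    return probs[:, 1]                            # probability of the "abnormal" class


# Usage: one patch aligned with the "abnormal" text embedding, three temperatures
patch = np.array([[0.0, 1.0]])
text = np.array([[1.0, 0.0], [0.0, 1.0]])
for tau in (0.01, 0.07, 0.13):
    print(tau, anomaly_probability(patch, text, tau))
```

Because the temperature is a constant here, it never receives gradients. This mirrors the "Fixed" setting in the ablation rather than learning the scale during optimization.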

Table 5.

Quantitative comparison of pixel-level anomaly localization results produced by different ZSAS methods. The best results are in bold.

| Methods | AUROC | AUPRO | AP | F1 | IoU |
|---|---|---|---|---|---|
| WinCLIP | 81.3 | 60.8 | 17.8 | 24.8 | 14.8 |
| AnoVL | 88.5 | 78.3 | 34.3 | 38.5 | 25.3 |
| AnomalyCLIP | 91.3 | 81.5 | 34.4 | 39.1 | 25.1 |
| AdaCLIP | 89.3 | 68.3 | 31.3 | 36.3 | 22.4 |
| AA-CLIP | 90.9 | 85.8 | 43.9 | 45.3 | 30.6 |
| VCP-CLIP+ (ours) | **91.6** | **86.5** | **46.6** | **48.2** | **33.2** |

Table 6.

Quantitative comparison of image-level anomaly detection performance using Top-2000 aggregation. The best results are in bold.

| Methods | AUROC | AP | F1 |
|---|---|---|---|
| WinCLIP | 71.9 | 84.9 | 86.8 |
| AnoVL | 84.2 | 92.6 | 89.2 |
| AnomalyCLIP | 76.8 | 88.9 | 89.2 |
| AdaCLIP | 70.4 | 87.8 | 86.1 |
| AA-CLIP | **90.6** | **96.0** | 91.8 |
| VCP-CLIP+ (ours) | 89.6 | 95.0 | **92.6** |

Table 7.

Quantitative comparison of image-level anomaly detection performance using Top-1 aggregation. The best results are in bold.

| Methods | AUROC | AP | F1 |
|---|---|---|---|
| WinCLIP | 71.7 | 84.8 | 86.8 |
| AnoVL | 83.9 | 92.2 | 88.8 |
| AnomalyCLIP | 78.3 | 85.5 | 88.6 |
| AdaCLIP | 84.7 | 90.5 | 90.4 |
| AA-CLIP | **89.8** | **95.7** | **91.6** |
| VCP-CLIP+ (ours) | 88.7 | 93.5 | 91.1 |
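
Tables 6 and 7 differ only in how the pixel-level anomaly map is reduced to a single image-level score. This section does not define the aggregation precisely. The sketch below assumes a common reading: Top-k averages the k highest pixel scores, so Top-1 is simply the maximum pixel score. The function name `topk_image_score` is hypothetical.

```python
import numpy as np


def topk_image_score(anomaly_map, k):
    """Hypothetical helper: image-level score from a pixel-level anomaly map.

    Assumes 'Top-k aggregation' means averaging the k largest pixel scores;
    with k = 1 this reduces to the maximum pixel score.
    """
    flat = np.asarray(anomaly_map, dtype=float).ravel()
    k = max(1, min(k, flat.size))        # clamp k to the number of pixels
    top = np.partition(flat, -k)[-k:]    # the k largest values (unordered)
    return float(top.mean())


# Usage: a toy 4x4 map with two high-scoring pixels
amap = np.zeros((4, 4))
amap[0, 0], amap[1, 1] = 1.0, 0.5
print(topk_image_score(amap, 1), topk_image_score(amap, 2))
```

Under this reading, Top-2000 averages out isolated high-scoring pixels, while Top-1 depends on a single peak. This is one plausible reason the two tables rank some methods differently.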

Table 8.

Image-level TP/TN/FP/FN analysis using Top-2000 aggregation.

| Methods | TPR (Sensitivity) | TNR (Specificity) | FPR (Fall-Out) | FNR (Miss Rate) |
|---|---|---|---|---|
| WinCLIP | 98.1% | 29.8% | 70.2% | 1.9% |
| AnoVL | 96.3% | 50.5% | 49.5% | 3.7% |
| AnomalyCLIP | 96.3% | 48.2% | 51.8% | 3.7% |
| AdaCLIP | 96.0% | 35.6% | 64.4% | 4.0% |
| AA-CLIP | 94.1% | 72.2% | 27.8% | 5.9% |
| VCP-CLIP+ (ours) | 97.1% | 62.5% | 37.5% | 2.9% |
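
The four rates in Table 8 come from the image-level confusion matrix at some decision threshold, which this section does not state. The sketch below uses a hypothetical helper, `confusion_rates`, and made-up counts to show the definitions. It also shows why every row of Table 8 satisfies FPR = 100% − TNR and FNR = 100% − TPR. For VCP-CLIP+, 62.5% + 37.5% and 97.1% + 2.9% both give 100%.

```python
def confusion_rates(tp, tn, fp, fn):
    """Hypothetical helper: image-level rates (in percent) from confusion counts.

    tp/fn: anomalous images predicted anomalous / normal
    tn/fp: normal images predicted normal / anomalous
    """
    tpr = 100.0 * tp / (tp + fn)   # sensitivity: share of anomalous images caught
    tnr = 100.0 * tn / (tn + fp)   # specificity: share of normal images passed
    return {
        "TPR": tpr,
        "TNR": tnr,
        "FPR": 100.0 - tnr,        # fall-out, equal to fp / (tn + fp)
        "FNR": 100.0 - tpr,        # miss rate, equal to fn / (tp + fn)
    }


# Usage with made-up counts (not from the paper)
print(confusion_rates(tp=97, tn=60, fp=40, fn=3))
```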

Table 9.

Quantitative comparison of different ZSAS methods on the BTAD dataset. The best results are in bold.

| Methods | Pixel-Level AUROC | Pixel-Level AUPRO | Pixel-Level AP | Pixel-Level F1 | Pixel-Level IoU | Image-Level AUROC | Image-Level AP | Image-Level F1 |
|---|---|---|---|---|---|---|---|---|
| WinCLIP | 66.7 | 22.7 | 7.3 | 12.3 | 6.8 | 55.2 | 63.0 | 68.4 |
| AnoVL | 86.5 | 51.1 | 26.3 | 31.6 | 21.1 | 86.1 | 74.2 | 76.7 |
| AnomalyCLIP | 79.9 | 55.2 | 14.9 | 21.5 | 12.7 | 42.9 | 52.2 | 64.3 |
| AdaCLIP | 74.4 | 49.2 | 9.8 | 14.4 | 8.3 | 42.6 | 56.7 | 64.3 |
| AA-CLIP | 91.9 | 73.4 | 42.1 | 45.1 | 31.2 | **92.5** | 95.3 | 91.5 |
| VCP-CLIP+ (ours) | **93.4** | **76.6** | **43.9** | **48.6** | **32.7** | 91.6 | **97.6** | **95.2** |

Table 10.

Quantitative comparison of different ZSAS methods on the MPDD dataset. The best results are in bold.

| Methods | Pixel-Level AUROC | Pixel-Level AUPRO | Pixel-Level AP | Pixel-Level F1 | Pixel-Level IoU | Image-Level AUROC | Image-Level AP | Image-Level F1 |
|---|---|---|---|---|---|---|---|---|
| WinCLIP | 64.7 | 39.0 | 11.6 | 12.4 | 8.8 | 44.9 | 58.9 | 74.5 |
| AnoVL | 86.5 | 63.5 | 14.1 | 17.7 | 13.9 | 58.1 | 68.0 | 75.0 |
| AnomalyCLIP | 94.1 | 78.5 | 19.6 | 24.2 | 16.2 | 55.5 | 62.7 | 75.9 |
| AdaCLIP | 94.5 | 59.1 | 14.9 | 22.3 | 14.3 | 43.4 | 59.4 | 72.8 |
| AA-CLIP | 95.4 | 85.8 | 24.7 | 28.3 | 19.2 | 63.8 | 70.9 | 76.5 |
| VCP-CLIP+ (ours) | **97.1** | **89.4** | **27.0** | **31.7** | **21.6** | **67.6** | **73.5** | **77.5** |

Table 11.

Quantitative comparison of different ZSAS methods on the VisA dataset. The best results are in bold.

| Methods | Pixel-Level AUROC | Pixel-Level AUPRO | Pixel-Level AP | Pixel-Level F1 | Pixel-Level IoU | Image-Level AUROC | Image-Level AP | Image-Level F1 |
|---|---|---|---|---|---|---|---|---|
| VCP-CLIP | 95.1 | 86.7 | 27.8 | 33.8 | 22.2 | 81.8 | 86.1 | 80.0 |
| AnomalyCLIP | 93.1 | 86.0 | 14.6 | 22.3 | 13.4 | 56.4 | 68.3 | 75.5 |
| AdaCLIP | 95.8 | 86.2 | 22.6 | 29.2 | 16.3 | 77.1 | 83.0 | 78.4 |
| AA-CLIP | 95.5 | 84.7 | 27.4 | 33.3 | 21.7 | 77.4 | 83.0 | 77.2 |
| VCP-CLIP+ (ours) | **96.1** | **89.1** | **29.2** | **35.8** | **24.8** | **88.5** | **90.7** | **85.2** |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.