1. Introduction

Successive advances in information and communication technology (ICT) have allowed unprecedented progress in the domain of crowdsourcing platforms. The basic idea behind crowdsourcing platforms is that complex tasks can be decomposed into a great number of smaller pieces and delegated to a great number of individuals, thus enabling a collective, but not necessarily conscious or optimally coordinated, effort of complex problem solving. Critical in the debate on crowdsourcing platforms is the notion of task delegation, i.e., human intelligence tasks (HITs). HITs are a form of micro-work [1]. Indeed, individuals performing such tasks are usually paid or remunerated for their input in alternative ways. Importantly, the great number of individuals dealing with the small tasks assigned to them use their cognitive skills to solve problems. The case of crowdsourcing platforms suggests, however, that micro-work is a necessary structural component of contemporary artificial intelligence production processes [1].

Research on natural language processing (NLP), artificial intelligence (AI), and related applications and their uses continues to proliferate [2,3]. However, if the development of NLP applications is to be maintained, language differences and culturally determined language specificity need to be brought into the analysis and application development process. This means that custom-made approaches and solutions to NLP are required to support different languages, such as Arabic, Urdu, and Persian [4]. NLP crowdsourcing platforms attest to that. The literature suggests that considerable progress has been attained in this regard, especially in relation to English and Chinese [5,6]. As a result, relatively efficient and accurate crowdsourcing platforms have been created that, at this stage, support diverse forms of research requiring data collection [7,8,9,10]. This includes academic research, market and consumer satisfaction surveys, etc. Thanks to crowdsourcing platforms, the tasks that these activities require can be performed quickly and cost-effectively, while also allowing the collection of good quality data.

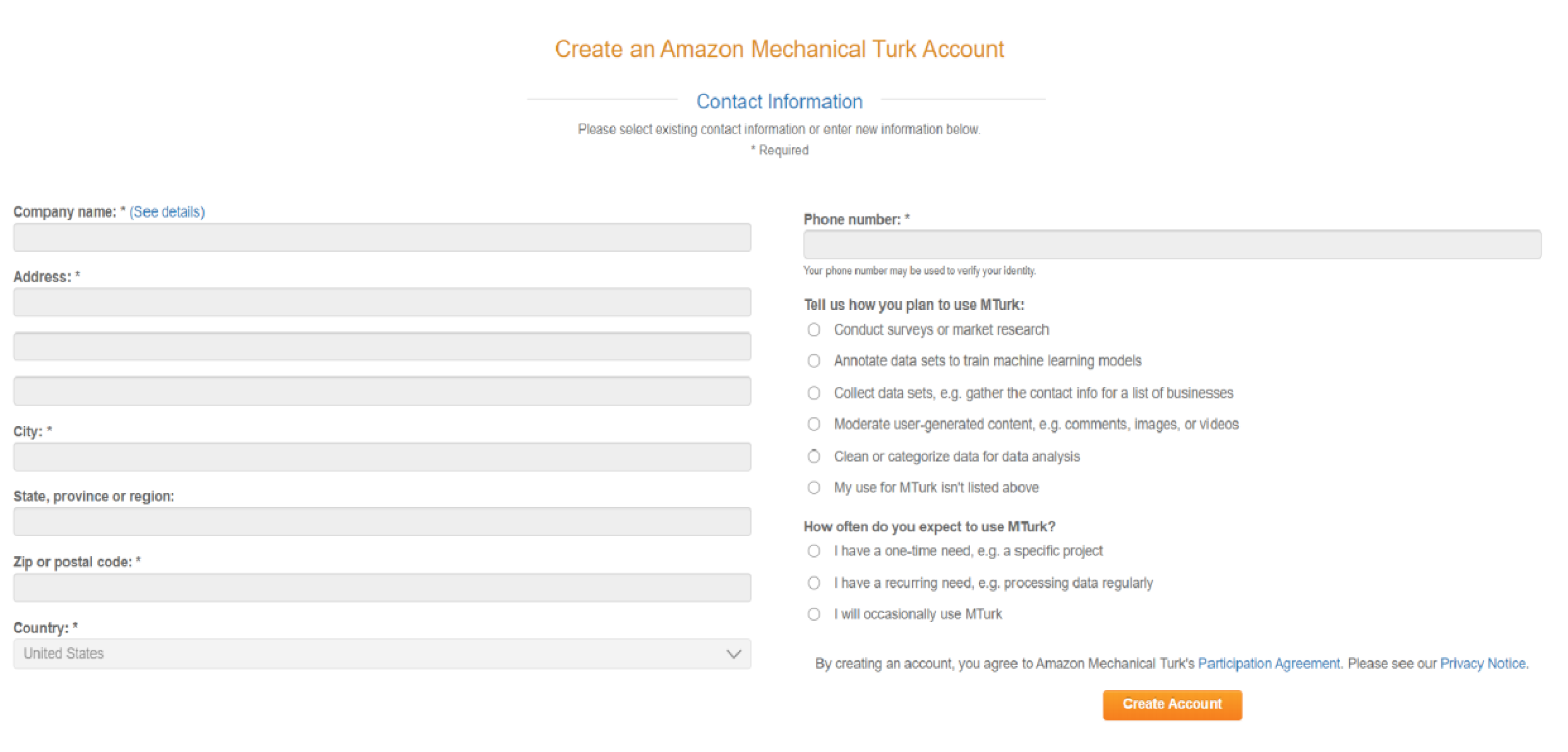

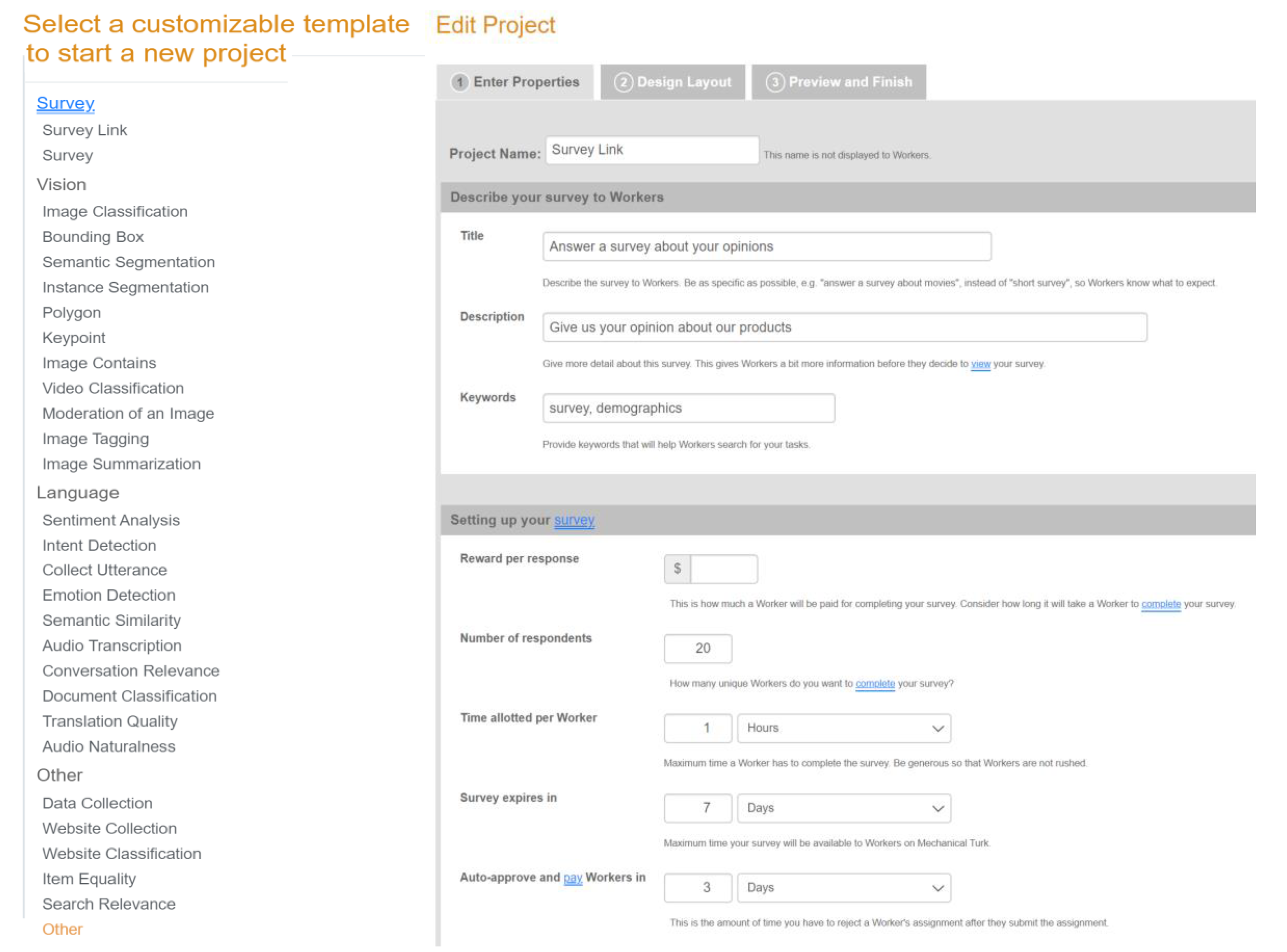

With regard to NLP, crowdsourcing platforms prove useful in a variety of research projects that require text summarization, machine translation, and speech recognition. To this end, the following crowdsourcing platforms have been commonly used: Amazon’s Mechanical Turk [7], CrowdFlower [8], Lionbridge [9], Prolific Academic, and others [1,10]. Although crowdsourcing platforms have great potential for research, each of these platforms has certain limitations. For instance, Amazon Mechanical Turk is difficult to employ outside of the United States. Sometimes, the complexity of specific languages limits the pool of participants involved in a crowdsourcing activity. This would be the case of the German ClickWorker crowdsourcing platform [11], or the Chinese Zhubajie/Witmart crowdsourcing platform used as a benchmark for research in China [12].

Some progress has been attained with regard to NLP research oriented toward the Arabic language [13,14,15]. Nevertheless, even though a few researchers sought to develop their own crowdsourcing platforms, the tendency was to employ the existing platforms, such as Amazon Mechanical Turk and CrowdFlower. The objective of this paper is to support Arabic NLP research. To this end, a new crowdsourcing platform, named Tashkeel, is proposed and elaborated. The name of the platform is inspired by the eight main diacritics that transform a word written in Arabic letters into a vast range of forms and meanings. The power of those eight marks, namely Fathah, Kasrah, Dhammah, Sukun, Shaddah, Tanwin (Fath), Tanwin (Kasr), and Tanwin (Dham), in enriching the Arabic language inspired us to give the platform this name, in the hope that it will have a comparable impact on Arabic NLP research. The argument is structured as follows. First, a review of the crowdsourcing platforms employed for studies related to the Arabic language is presented. In the next step, Tashkeel’s details are elaborated and an evaluation of Tashkeel is provided. It is argued that Tashkeel should be seen as a step toward the creation of comprehensive, language-sensitive crowdsourcing platforms that are useful and usable not only for the purposes of research, but also, in the future, as open platforms for innovation generation, opinion aggregation, etc., in the context of smart cities, smart communities, and e-governments.

This paper is dedicated to Arabic-based NLP projects and research. Accordingly, in this paper, we develop a general-purpose Arabic crowdsourcing platform that provides access to the expertise of all types of Arabic speakers who are proficient users of different dialects and possess the necessary skills. To the best of our knowledge, this is the first general Arabic crowdsourcing platform for NLP research. As such, the added value of this paper consists of four items: (i) investigation of the literature on Arabic NLP research that uses crowdsourcing platforms; (ii) identification of the need to use popular platforms for Arabic NLP research; (iii) conceptualization of the design for a general Arabic language platform to support Arabic NLP research; and (iv) development and evaluation of an Arabic language-sensitive crowdsourcing platform, named Tashkeel. This platform includes the interface and the requirements to empower Arabic research by indicating specific related skills, rewards, and ratings. The rest of the paper is structured as follows:

Section 2 illustrates the challenges facing Arabic research and the limitations of the available frameworks;

Section 3 provides an overview of the related work and the importance of Arabic crowdsourcing platforms;

Section 4 introduces the Tashkeel platform, followed by a feature comparison with other well-known platforms in Section 5;

Section 6 presents an evaluation of the platform via a case study; and

Section 7 presents the conclusion and future work.

3. Related Work

Considerable work has been done in the context of crowdsourcing and Arabic language in recent years. Some of these studies have utilized existing crowdsourcing platforms, such as Amazon Mechanical Turk and CrowdFlower, while others have used self-created platforms. The following paragraphs elaborate on these.

With reference to annotating Arabic dialect in a sentence, Zaidan and Callison-Burch [19] relied on Amazon Mechanical Turk crowdsourcing to label a randomly selected set of about 110,000 Arabic sentences. Those sentences were chosen from over three million sentences of the Arabic On-line Commentary dataset (AOC). AOC draws on three newspapers: Al-Ghad from Jordan, Al-Riyadh from Saudi Arabia, and Al-Youm Al-Sabe’ from Egypt. The annotators were given short and simple instructions to label the sentences, wherein each label had to include details about the level and type of dialect in each sentence. The level indicated the extent of Arabic dialect in the sentence in accordance with the available options. These included no dialect for a pure Modern Standard Arabic (MSA) sentence, a small amount of dialect, a mix of dialect and MSA, an extensive amount of dialect, and a non-Arabic sentence. The type referred to which Arabic dialect was manifested in the sentence, whether Gulf, Egyptian, Levantine, Iraqi, or Maghrebi. The authors randomly grouped the sentences into sets of ten sentences. Each group was presented to an annotator on a single screen. Two more MSA sentences were randomly selected and added to each screen as control sentences to test the annotators’ accuracy. These two sentences had to be labeled as MSA. Any annotator who mislabeled them was considered a spammer and their work was rejected. Three different annotators were allocated to label the sentences on each screen.
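The control-sentence check described above can be sketched in a few lines. This is only an illustration under stated assumptions, not the implementation used in [19]: the `MSA` label string, the `ScreenSubmission` structure, and the helper names are hypothetical.

```python
from dataclasses import dataclass

MSA = "msa"  # hypothetical label string for a pure MSA ("no dialect") sentence


@dataclass
class ScreenSubmission:
    """One annotator's labels for one screen (hypothetical structure)."""
    annotator_id: str
    labels: dict[str, str]         # sentence id -> label chosen by the annotator
    control_ids: tuple[str, ...]   # ids of the MSA control sentences on the screen


def passes_controls(sub: ScreenSubmission) -> bool:
    """Return True only if every control sentence was labeled as MSA."""
    return all(sub.labels.get(cid) == MSA for cid in sub.control_ids)


def split_submissions(subs):
    """Separate submissions into accepted and rejected (suspected spam)."""
    accepted = [s for s in subs if passes_controls(s)]
    rejected = [s for s in subs if not passes_controls(s)]
    return accepted, rejected
```

A submission in which either control sentence carries a dialect label ends up in the rejected list, mirroring the rule that mislabeling a control sentence invalidates the work.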

Alsarsour et al. [15] created a large annotated multi-dialect dataset of about 25 thousand Arabic tweets called Dialectal Arabic Tweets (DART). To create DART, they tapped into a list of Arabic words collected by [23] and a list of Arabic phrases from the Mo3jam website, using the Twitter streaming Application Programming Interface (API). They used the CrowdFlower crowdsourcing platform to annotate each tweet, wherein the annotation label had to indicate the type of Arabic dialect (Egyptian, Maghrebi, Levantine, Gulf, or Iraqi) used. To test the quality, the authors asked five native speakers, one for each dialect group, to label about 300 to 400 tweets. They used this set as a test source from which 10 tweets were randomly selected to test the annotators before they commenced the job. The annotators needed to score a minimum of 90% to pass the test. Annotators who scored below 90% were excluded.
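A qualification test of this kind can be sketched as follows. This is a minimal illustration, assuming the expert-labeled test source is available as a mapping from tweet id to dialect label; the function names and data layout are hypothetical and are not taken from [15] or from CrowdFlower.

```python
import random


def sample_quiz(gold, k=10, rng=None):
    """Draw k random items from the expert-labeled test source.

    gold: dict mapping tweet id -> expert label (assumed layout).
    """
    rng = rng or random.Random()
    chosen = rng.sample(sorted(gold), k)
    return {tweet_id: gold[tweet_id] for tweet_id in chosen}


def quiz_accuracy(answers, quiz):
    """Fraction of quiz items the annotator labeled like the experts."""
    correct = sum(1 for tid, label in quiz.items() if answers.get(tid) == label)
    return correct / len(quiz)


def is_qualified(answers, quiz, threshold=0.90):
    """Apply the pass mark; 0.90 corresponds to the 90% minimum above."""
    return quiz_accuracy(answers, quiz) >= threshold
```

The same pattern applies to other pass marks by changing `threshold`.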

Another team of authors [24] created a corpus concentrating on the Levantine dialect used in Levantine countries. They used Twitter to create a Levantine dataset of 4000 tweets. They looked for the following information: (i) the overall sentiment of the tweet on a 5-point scale; (ii) the target toward which the sentiment was expressed; (iii) how the sentiment was expressed; and (iv) the topic of the tweet. The researchers used the CrowdFlower platform. They provided the annotators with guided instructions to determine the overall sentiment and to select the target of this sentiment within the tweet. In addition, the annotators had to identify whether the sentiment was expressed explicitly or implicitly. The annotators also had to select the topic of the tweet from a set of predefined topics.

The four predefined topics were politics, religion, sports, and personal. If the topic of a tweet was not amongst these four, the annotators had to specify the topic themselves. Before the platform was used for the entire task, the clarity of the instructions was tested in a pilot task. Between five and nine different annotators were allocated to each tweet. The annotators’ accuracy on the platform was tested by comparing their answers against 181 tweets annotated by the authors as the gold standard. Only annotators with an accuracy higher than 75% were accepted for the large-scale task, whereas the others were rejected.

For annotating targets of opinion in Arabic, Fara et al. [25] utilized the Amazon Mechanical Turk platform to annotate Arabic comments about Aljazeera newspaper articles chosen from the Qatar Arabic Language Bank (QALB). They chose a randomly selected sample of 1177 comments on articles about politics, sports, and culture topics for annotation. The process was divided into two different stages. Each had a series of tasks. The task instructions were written in Arabic. In the first stage, a comment was given to three annotators. They were asked to identify whether the entities in a comment represented nouns referring to people, places, things, or ideas. Their answers, if overlapping, were then used to refer to the entity in the comment. In the second stage, a comment with a single entity was given to five annotators. They were asked to identify the opinion about the entity in the comment in terms of whether it was positive, negative, or neutral.

Furthermore, by employing social media, the authors of [18] created a corpus of 76,619 Arabic tweets mentioning forms of violence and abuse. They prepared a list of 237 words in Arabic denoting violence and tracked them using the Twitter streaming API to collect the corpus. They used the CrowdFlower platform to assign annotations to each tweet in the corpus. Each tweet had to be annotated by at least five annotators. The annotation options referred to one of seven types of violence, namely human rights abuse (HRA), political opinion, accidents, crime, conflict, crises, and violence, in addition to an option for other content. They randomly selected a set of 206 tweets from the corpus and had these annotated manually by experts. This set was used as a test source to test the annotators’ performance before they started the job. The annotators needed to score at least 70% to continue working on the task. From the corpus, 20,151 tweets were annotated.

For annotating media content, Al-Muzaini and Al-Yahya [26] created an Arabic version of part of the Flickr and MS COCO caption datasets as the first public Arabic image caption corpus. The corpus consists of 3427 images from both Flickr and MS COCO. The MS COCO dataset consists of 330,000 images with 2.5 M captions. The authors selected 1166 images with 5358 captions from this dataset and utilized the CrowdFlower crowdsourcing platform as a human translator to provide Arabic captions for them. Flickr8K consists of 8000 images, of which the authors selected 2261. For 150 of the Flickr images, a total of 750 Arabic captions were translated by professional English-Arabic translators. The captions of the remaining images were translated using Google Translate, and the translations were then checked by native Arabic speakers.

On the other hand, a game-based study [22] developed the Kalemah system to digitize scanned Arabic documents. Words extracted from scanned Arabic documents that required transformation into a digital format were presented to volunteers in a challenging game that encouraged them to type the words swiftly and correctly. Moreover, volunteers could play with friends from social media who were invited to join the game. The authors explained how micro-tasks could be achieved easily through crowdsourcing, especially if the platform introduced the task in a game context.

In another study [21], the authors explored altruistic crowdsourcing, which is a volunteer-based crowdsourcing platform, to validate their approach named Kalam’DZ. They chose this type of crowdsourcing to harness community interest in a topic, rather than to draw people in to take up the task in pursuit of payment. They built a CrowdCrafting project that included two main tasks, i.e., Task Presenter and Task Creator. Workers who were craftsmen and employers were recruited. They created a form with target speech audio and buttons for responses about Algerian dialects, along with an Algerian map to help the craftsmen. Their study targeted only Algerian users and the written Arabic form. They showed how altruistic workers could achieve good results given that 81% of workers accurately matched the gold standard annotated by the experts.

This section has reviewed several Arabic language research studies conducted with the help of crowdsourcing platforms. Our detailed investigation of the recent works in this domain motivated the research team to propose a specialized crowdsourcing platform for Arabic NLP research, based on the following conclusions.

There is a growing interest in Arabic NLP research; however, the existing platforms do not target Arabic audiences, which can lead to limited crowd participation. There is no platform that attracts Arabic-speaking audiences using their own language, and a single channel needs to be developed, which will then empower Arabic language research and unite efforts.

Although we have elaborated on a considerable body of Arabic research using the available platforms, we have also pointed out the limitations of these platforms. At this point, we suggest that, with the available platforms, it is difficult to expand research objectives that highlight the unique features and complexity of the Arabic language.

A qualified Arabic audience with limited English language skills may have difficulties accessing the available platforms and understanding the micro-task instructions.

Translating the interfaces of the available platforms is not sufficient; localization that addresses the complexity and diversity of the language is also required.

4. Conceptualizing the Tashkeel Platform

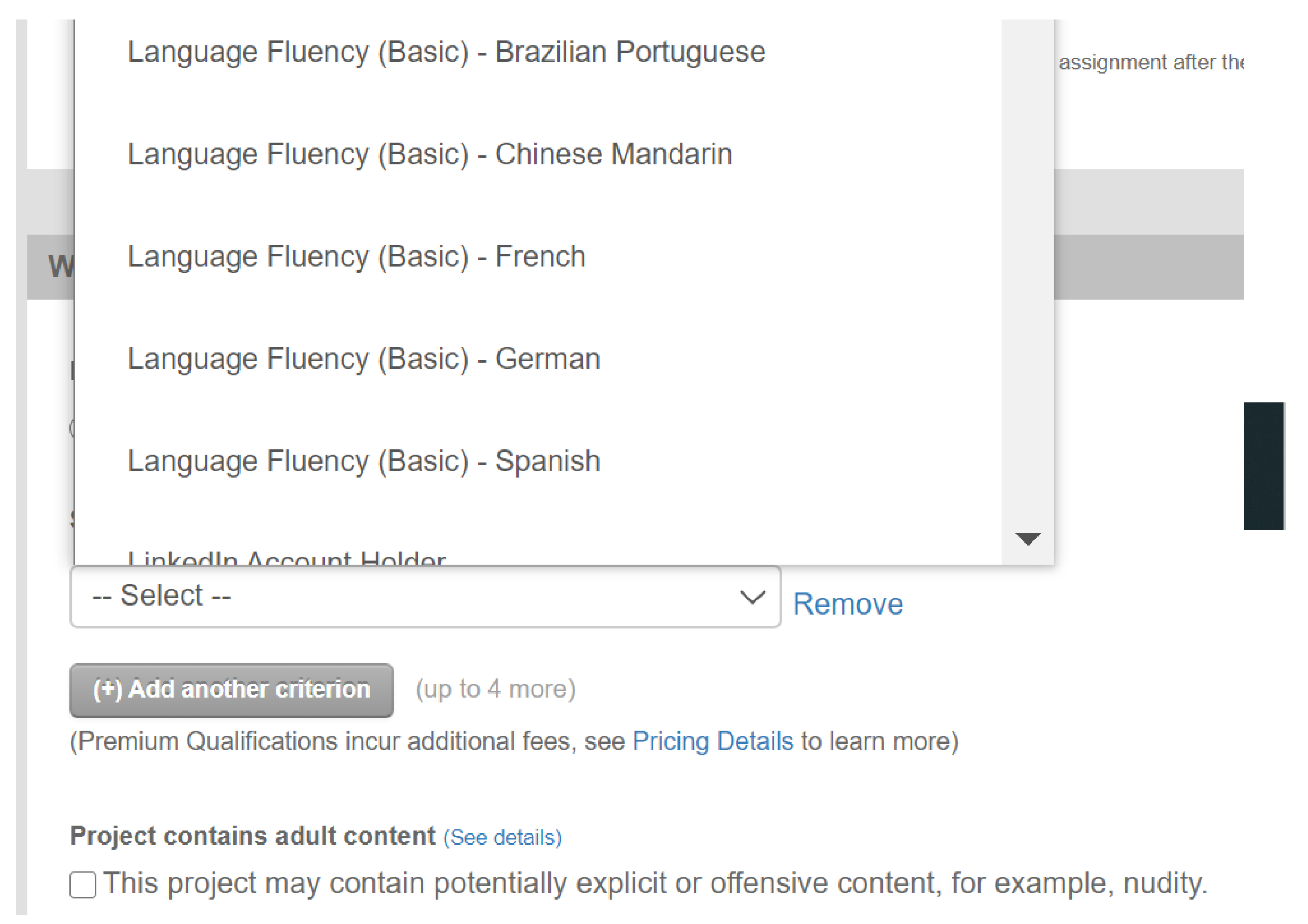

This paper proposes the Tashkeel platform [27] as a crowdsourcing platform to support Arabic language NLP research. The platform addresses the unique features and different dialects of the Arabic language, which may require specific qualifications. In addition to the features found in popular crowdsourcing platforms, Tashkeel offers an Arabic user interface, as well as new options that address the complexity of this rich language. For example, the project owner can specify the skillset needed for a project by choosing a specific dialect or qualification. This section illustrates the main aspects of the development of the Tashkeel platform.

To attain the specific research objectives thus defined, the paper employs a mixed method approach, bringing together qualitative research [28] and applied research. To establish the context and build the conceptual frame, in which the case of Tashkeel is examined, content analysis and a case study method are employed [29]. The critical evaluation of the findings thus attained is supported by the nested analysis method [30].

4.1. Tashkeel Platform Design

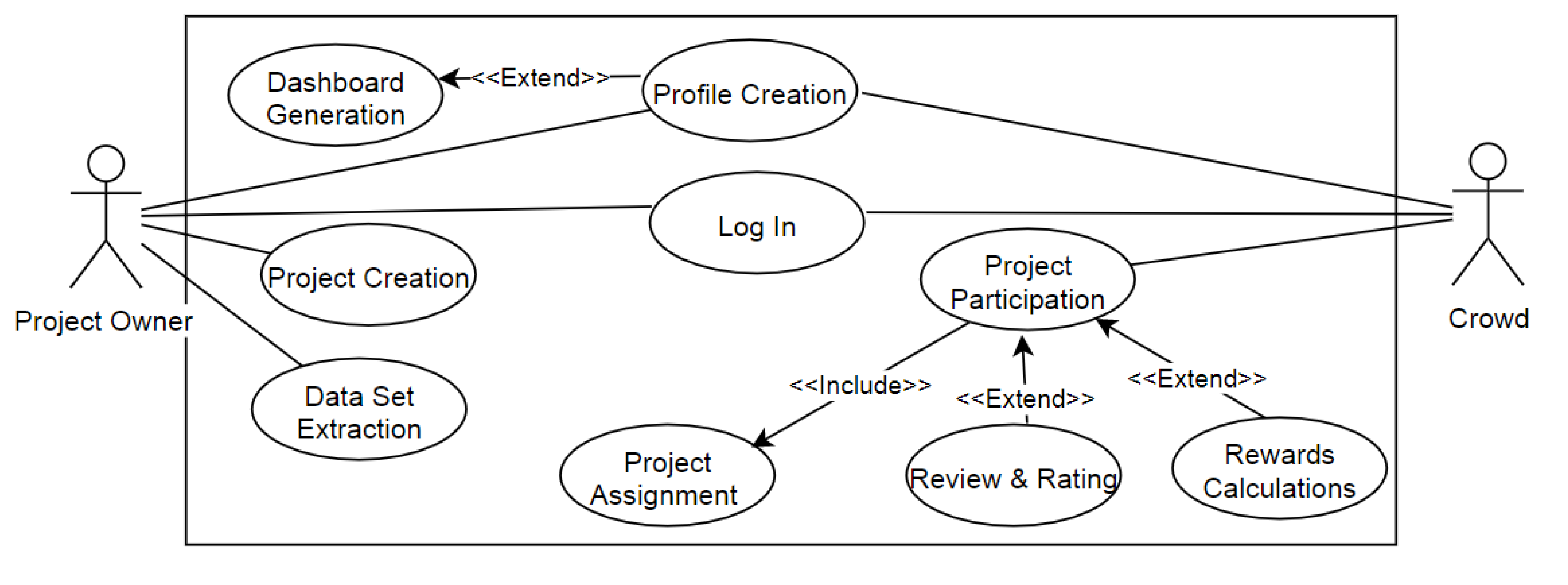

A use case diagram is employed to demonstrate system behavior in terms of actions that the system performs in collaboration with one or more users. The actions are illustrated in the use case, which provides observable and valuable results to system users.

Figure 1 depicts Tashkeel’s core function and main users in a use case diagram. The system has two main actors, namely, the project owner and the crowd. The project owner is a researcher who wants the crowd to help in preparing or creating the dataset for a specific study. The project owner is responsible for creating the project, identifying the skills required, and most importantly creating the micro-tasks for the crowd participants. After the micro-task submission, the project owner assesses the quality of the submitted micro-tasks themself and may accept or reject the participation, as well as rate the submitted work. The second actor is the crowd member who undertakes the micro-tasks. A crowd member is required to set up an account and specify their Arabic skill level. The system places an emphasis on three main Arabic skills, as follows:

The level of Arabic language, with at least one of the following characteristics or qualifications: Arabic speaker, mother language, bachelor’s degree in Arabic, or graduate studies in Arabic;

The dialect languages include Arabic dialects from all Arabic countries;

The adapted skills in Arabic include skills such as Arabic calligraphy, grammar, listening, public speaking, story-writing, and article-writing.
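The three skill dimensions listed above lend themselves to a simple eligibility check between a crowd member's profile and a project's requirements. The sketch below is illustrative only and is not Tashkeel's actual data model: the class names, field names, and matching rules (any one of the accepted levels, any one of the requested dialects, all of the requested skills) are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class CrowdProfile:
    """Hypothetical crowd member profile with the three skill dimensions."""
    name: str
    arabic_level: str                      # e.g. "Arabic speaker", "bachelor's degree in Arabic"
    dialects: set = field(default_factory=set)
    skills: set = field(default_factory=set)


@dataclass
class ProjectRequirements:
    """Hypothetical project requirements; an empty set means 'no restriction'."""
    arabic_levels: set = field(default_factory=set)
    dialects: set = field(default_factory=set)
    skills: set = field(default_factory=set)


def is_eligible(profile: CrowdProfile, req: ProjectRequirements) -> bool:
    # Assumed rule: the member's level must be one of the accepted levels.
    level_ok = not req.arabic_levels or profile.arabic_level in req.arabic_levels
    # Assumed rule: the member must speak at least one requested dialect.
    dialect_ok = not req.dialects or bool(profile.dialects & req.dialects)
    # Assumed rule: the member must have every requested skill.
    skills_ok = req.skills <= profile.skills
    return level_ok and dialect_ok and skills_ok
```

Under these assumptions, a project that requests the Egyptian dialect and the grammar skill would accept a member who lists both, and would reject a member who lists only one of them.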

In addition to the actors, the use case diagram above illustrates the key functions of the Tashkeel platform, such as project creation; project participation; and rewards calculation, review, and rating.

The system workflow is useful for clarifying the series of necessary activities, and the sequence amongst them, for completing a process. A Business Process Model and Notation (BPMN) diagram is used to model a high-level workflow of Tashkeel’s main business process. The diagram illustrates the two main actors, namely, the project owner and the crowd, interacting with the Tashkeel platform. The process starts when a project owner creates a project. This activity allows the project owner to configure micro-task requirements and a submission workflow. It includes setting micro-tasks, skills required, rewards, project time windows, participation permissions, and task submission. Once the project is open, the Tashkeel platform displays the list of projects and micro-tasks to the crowd. To ensure that all of the micro-tasks have the same hiring opportunity, a round assignment logic is implemented. Once a crowd member submits a participation, the data is stored in the project dataset, the reward/payment is calculated, and the invoice is issued.

Figure 2 demonstrates Tashkeel’s main workflow using BPMN.
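The round assignment logic is not specified in detail here. One plausible reading is a round-robin rotation, in which incoming contributors are directed to the micro-tasks in turn so that no micro-task is favored. The sketch below illustrates that reading only; the function name and the rotation rule are assumptions, not the platform's actual code.

```python
from itertools import cycle


def assign_round_robin(contributors, micro_tasks):
    """Pair each contributor with a micro-task, rotating through the tasks.

    Assumed interpretation of 'round assignment': the i-th contributor gets
    the (i mod n)-th of the n micro-tasks, so the assignment counts of any
    two micro-tasks differ by at most one.
    """
    if not micro_tasks:
        raise ValueError("at least one micro-task is required")
    task_cycle = cycle(micro_tasks)
    return [(contributor, next(task_cycle)) for contributor in contributors]
```

With five contributors and two micro-tasks, the first micro-task receives three contributors and the second receives two.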

4.2. Applying Tashkeel

Tashkeel is a web-based system implemented using ASP.NET, HTML, CSS, C#, JavaScript, and MSSQL. The Arabic language is employed for the user interface of Tashkeel’s web pages. An agile development approach governs the development process in order to shorten the development time and obtain faster feedback. In alignment with agile principles, each function developed is tested and reviewed by target users. This implies unit testing, integration testing, and user acceptance testing for each function developed. The remaining part of this section presents key screenshots illustrating the functioning of the Tashkeel platform.

The project owner is the main actor of the system, wherein project creation is the main activity in the Tashkeel platform. The project owner needs to specify the type of NLP projects using seven available types:

Image classification/تصنيف الصور: Classifies images based on specific questions;

Conversion from audio to text/تحويل من صوت إلى نص: Converts speech in an audio clip into written text;

Conversion from text image to editable text/تحويل من صورة إلى نص: Converts text in an image into written text;

Translation from dialect Arabic to Modern Standard Arabic/ترجمة من عربي الى عربي فصحى: Translates text written in dialect Arabic into Modern Standard Arabic;

Translation from any language to Arabic/ترجمة من اي لغة الى عربي: Translates text written in any language into Arabic;

Text classification/تصنيف النصوص: Classifies text based on specific questions;

Sound classification/تصنيف الأصوات: Classifies sound based on specific questions.

Figure 3, Figure 4, Figure 5 and Figure 6 illustrate screenshots for project type specification. Please note that the screenshots contain Arabic text, precisely because we have developed an Arabic language crowdsourcing platform. We are aware of the challenge that readers who do not read Arabic will encounter at this stage. To address this challenge, under each of these figures, we provide a brief description of the content and purpose of the respective figure.

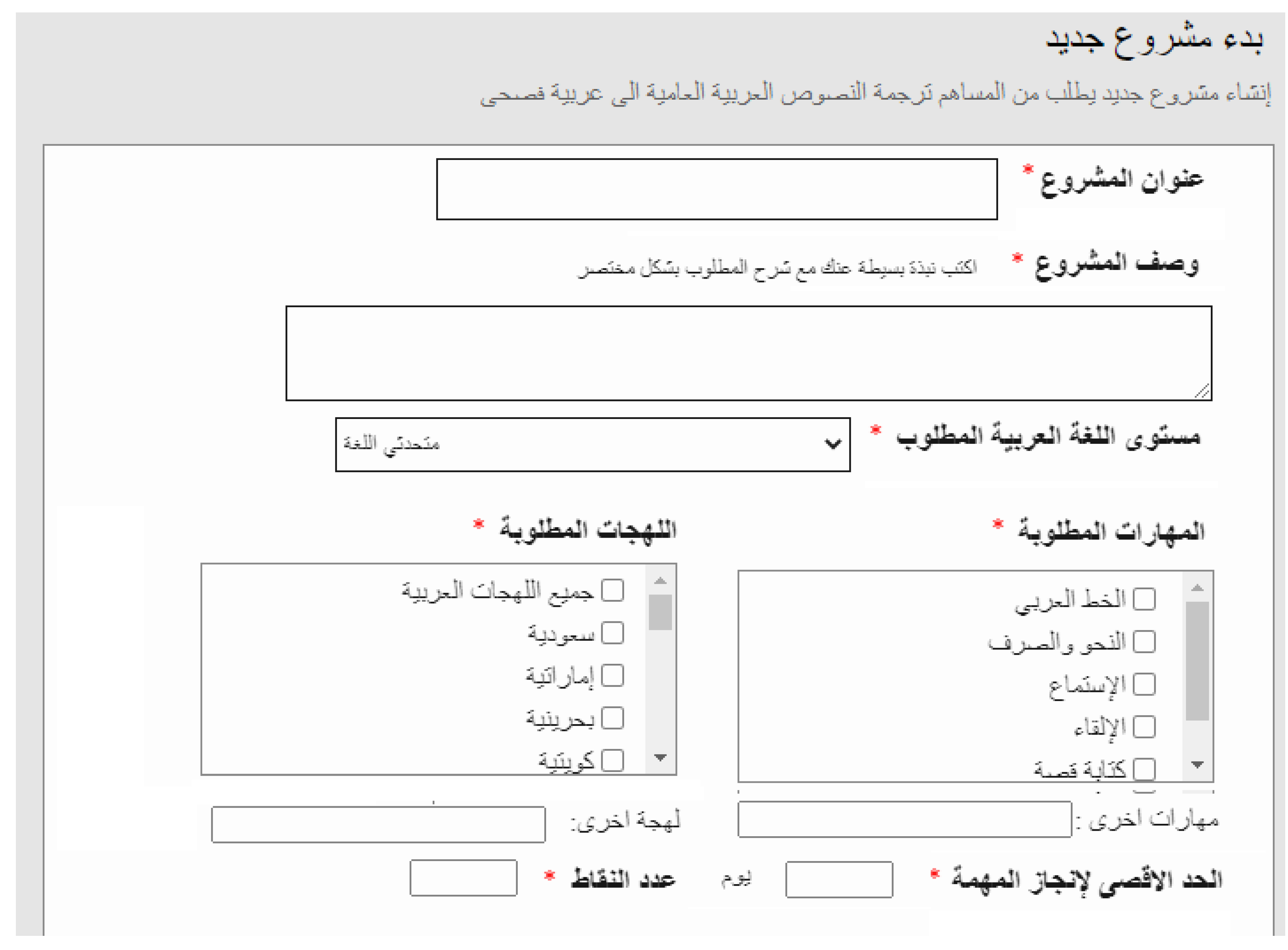

The project owner provides a description and instructions to clarify the tasks, skills required, and rewards, as shown in Figure 4. The project owner can click to view the participation details, review and rate the participation, and perform other actions related to the work conducted. In addition, the project owner can search and filter the available crowd profiles using different fields, including the Arabic level, Arabic dialect, and project type, as shown in Figure 5. Finally, the project owner dashboard, where the owner can navigate the open and closed projects, task worker requests, submissions, and work pending approval, is shown in Figure 6.

Figure 4 depicts the settings and criteria required in a new project. The project owner will fill in fields such as the project title (عنوان المشروع), a description of the project (وصف المشروع), and the required level of qualification in Arabic language (مستوى اللغة العربية المطلوبه). These may include at least one of the following characteristics or qualifications: Arabic speaker (متحدثي اللغه), mother language (الغه الأم), bachelor’s degree in Arabic (بكالوريوس لغه عربيه), or graduate studies in Arabic (شهادة دراسات عليا في اللعربية). Next, the owner will choose the specific Arabic dialects (الهجة المطلوبه) from all Arabic countries. The project owner can also include skills, such as Arabic calligraphy (الخط العربي), grammar (النحو), listening (الاستماع), public speaking (الالفاء), and story-writing (كتابه القصص). In the project settings, the maximum micro-task duration is specified in (الحد الاقصى لانجاز المهمه).

Figure 5 shows all contributors listed and active in the platform. The owner can navigate through their profiles (الملف الشخصي). In this way, the project owner can learn the contributors’ names (اسم المساهم), country (الدولة), ratings (التقييم), date of joining the platform (تاريخ الانضمام), Arabic language qualifications (مستوى اللغه), skills (المهارات اللغويه), and spoken dialect (اللهجة المتعلقه).

Figure 6 depicts the screen featuring the dashboard that the project owner will use to follow the projects they are working on at a given time (المشاؤيع الحالية). Accordingly, it serves as an overview of the project ID (رقم المشروع), the title of the project (عنوان المشروع), the date (تاريخ الانشاء), and the number of contributors (عدد المساهمين). The owner can also navigate the requests, the project details (تفاصيل المشروع), and the project results (عرض النتائج). The owner can update the project (تعديل المشروع) or stop the requests (ايقاف الطلبات).

The project owner can receive a generated dataset exported in an Excel file as a result of each of the seven NLP project types offered. The project owner can obtain a classified image, Arabic text of converted audio, Arabic text of converted images, text of standard Arabic translated from Arabic dialect, Arabic text translated from any language, classified Arabic text, or a classified sound dataset based on the project.

Figure 7 presents a sample of a generated dataset of Arabic text translated from the English language.

Figure 7 depicts examples of the results of a project, listed by text number (رقم النص). It illustrates the case of translation from English into Arabic. Here specifically, we see the term ‘antivirus’ in the text-to-translate column (النص المراد ترجمتة) and the five translation attempts into Arabic in the translated-text column (النض المترجم) (فيروس, برامج للحمايه ضد الفيروسات, مكافحة الفيروسات, مضاد الفيروسات و برنامج حمايه).

The other important actor, the crowd (contributors/مساهم), can view the posted projects, related micro-tasks, and their rewards. After a successful login, the contributor can view a dashboard of previous work performed, which also takes them to the participation details. In order to participate, the contributor is shown a list of open projects with the desired skills, as in Figure 8, and more detailed information can be accessed by clicking the project details. The projects can be filtered by the skills required, project types, and other properties. The contributors from the crowd can work on the chosen micro-task if access permission is given.

Figure 9 shows an example of a micro-task screen for English to Arabic translation.

Figure 8 shows a screenshot of the platform where the (potential) contributors can find the available projects, e.g., the translation of English technical terms into Arabic (ترجمة مصطلحات متعلقة بالحاسب و التقنية من الغه الانجليزية الى اللغة العربيه). On this page, the participation in projects can also be filtered by different criteria, including project types (نوع المشروع), dialects (اللهجات), and language qualifications (مستوى اللغه العربيه).

Figure 9 is a screenshot of the page on which a contributor performs the chosen micro-task, e.g., the translation into Arabic mentioned in Figure 8. Specifically, the English term appears in the right section, accompanied by the instruction (الرجاء تحويل النص الانجليزي الى نص عربي في المكان المخصص على اليسار و حفظ الرد عند الانتهاء منه), and the contributor enters the translation in the blank left section. Then, the contributor saves the work (حفظ المهمة).

4.3. Tashkeel: Testing and Evaluation

As the Tashkeel platform is built iteratively, it is tested throughout the development. Each function developed is unit-tested by the developer and then reviewed on the server by the Tashkeel owners. This review checks that each function developed is integrated with the rest of the system, which is referred to as integration testing. For example, if the project owner logs in successfully, the website transfers them to their personal dashboard with all the links and notifications relevant to the signed-in member. Another example of integration testing is that if a contributor from the crowd undertakes one of the micro-tasks, the reward is calculated, and the job can be viewed and approved by the respective project owner.

The second testing stage is usability testing, a form of non-functional testing that evaluates how easy the product is to use for the end users. The usability of the Tashkeel platform has been studied through the System Usability Scale (SUS). This is an industry standard for usability assessment based on a ten-item questionnaire. SUS was developed as a quick and valid usability testing questionnaire [31,32]. This technique is reliable and technology independent, and it can detect differences even with small sample sizes. SUS has been proven to effectively distinguish between usable and unusable systems by normalizing the score between 0 and 100, with an average score of 68 [33], as well as to depict the scale of learnability and acceptability [34].
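The text above does not spell out how SUS responses are converted into a score. As an illustration, the sketch below follows the standard SUS scoring procedure: each of the ten items is answered on a scale from 1 to 5, odd-numbered items contribute the response minus 1, even-numbered items contribute 5 minus the response, and the sum is multiplied by 2.5 to yield a value between 0 and 100. The function names are hypothetical, and averaging the per-participant scores is the usual way of reporting a study-level result.

```python
def sus_score(responses):
    """Compute the SUS score (0 to 100) for one participant.

    responses: ten integers in 1..5, in questionnaire order (item 1 first).
    """
    if len(responses) != 10:
        raise ValueError("SUS requires exactly ten responses")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("each response must be an integer from 1 to 5")
    total = 0
    for item_number, r in enumerate(responses, start=1):
        # Odd items are positively worded, even items negatively worded.
        total += (r - 1) if item_number % 2 == 1 else (5 - r)
    return total * 2.5


def mean_sus(all_responses):
    """Average the individual SUS scores over all participants."""
    scores = [sus_score(r) for r in all_responses]
    return sum(scores) / len(scores)
```

For instance, a participant who answers 3 to every item scores 50, while answering 5 to every odd item and 1 to every even item gives the maximum of 100.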

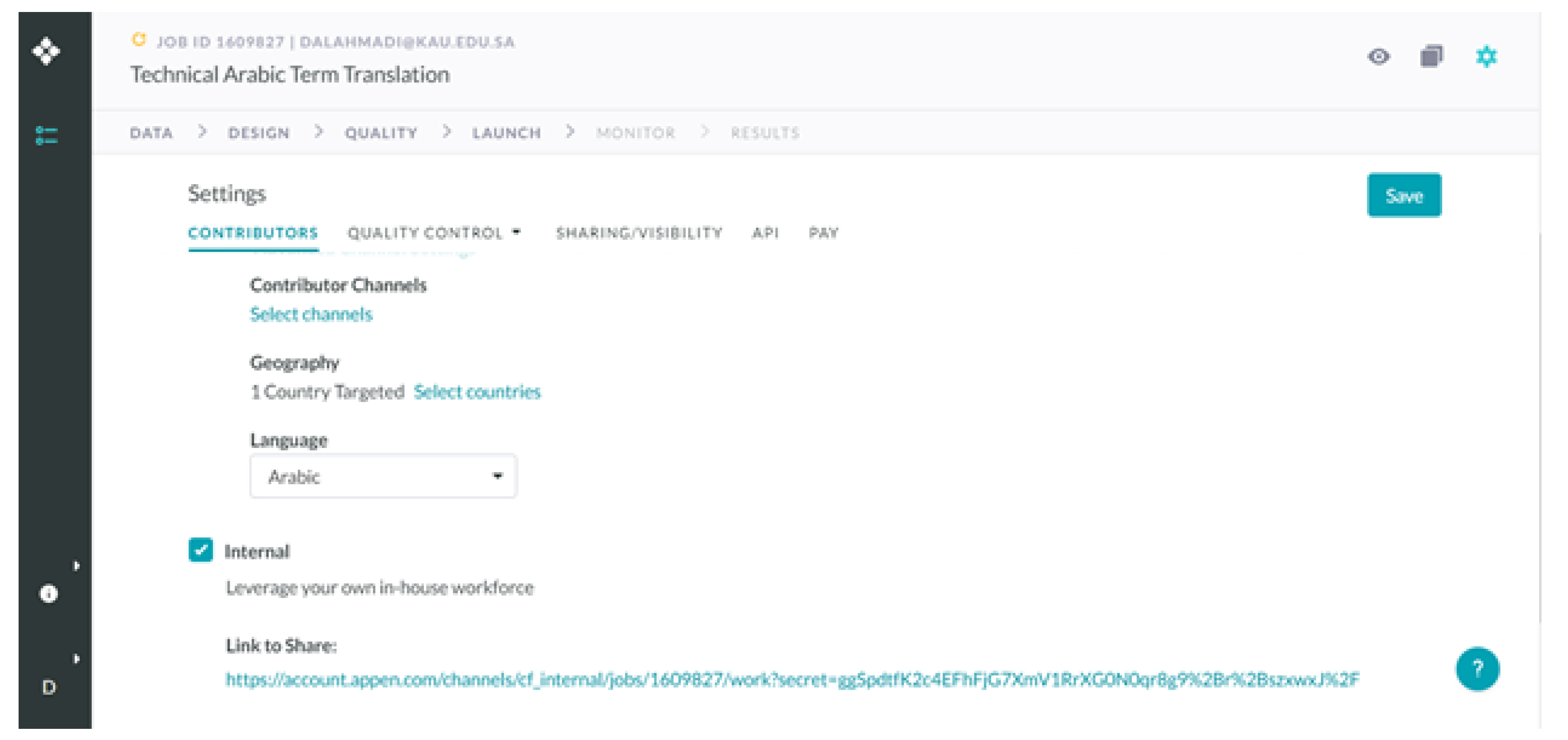

6. Tashkeel Evaluation: Technical Term Translation Case

To test Tashkeel, i.e., the Arabic language NLP crowdsourcing platform conceptualized, designed, developed, and elaborated in this paper, a technical term translation exercise was conducted with real-world users at King Abdulaziz University (KAU). For this exercise, a beta version of the platform was launched on a remote server. The Tashkeel platform supports different types of problems that require human intelligence tasks to be handled by crowdsourcing. For the purpose of evaluation, a real case study titled Arabic Technical Terms Dictionary (المعجم العربي للمصطلحات التقنية) was conducted in a guided setting, in order to observe user interaction with the system, and was followed by a survey. Two workflows were written in detailed steps: one for the project owner and one for the crowd participant. The project owner was asked to create an account and then to create a project of the type translation from any language to Arabic (ترجمة من اي لغة الى عربي), followed by uploading an Excel sheet containing 100 technical words randomly selected from the Techopedia website [37]. In this case, the project owner was asked to define 20 words as the minimum number of words to be translated in every micro-task. Therefore, the selected 100 words were divided into five micro-tasks, each containing 20 words. Then, the project owner was asked to publish the project for a one-week period.
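The division of the uploaded word list into micro-tasks can be illustrated with a short sketch. It assumes simple sequential chunking, which is not confirmed as the platform's actual behavior; the function name is hypothetical, and the placeholder terms stand in for the real Techopedia words.

```python
def split_into_micro_tasks(words, words_per_task):
    """Split a word list into consecutive micro-tasks of a fixed size.

    If the list length is not a multiple of words_per_task, the last
    micro-task is shorter (an assumption; the case study avoids this
    situation because 100 is divisible by 20).
    """
    if words_per_task <= 0:
        raise ValueError("words_per_task must be positive")
    return [
        words[start:start + words_per_task]
        for start in range(0, len(words), words_per_task)
    ]


# Placeholder terms standing in for the 100 technical words.
terms = [f"term_{i}" for i in range(1, 101)]
micro_tasks = split_into_micro_tasks(terms, 20)
```

With 100 terms and 20 words per micro-task, this yields the five micro-tasks described in the case study.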

On the other end, the crowd contributors were asked to create accounts and access the project listed for this case study. Twenty participants from the Faculty of Computing and Information Technology (FCIT) at KAU served as contributors. Table 1 summarizes the participants’ demographics.

They followed the instructions given to navigate the platform and undertake the micro-tasks. After conducting the micro-tasks, a survey containing the ten questions found on the SUS questionnaire [31,32] was administered, as shown in Table 2.

Based on the responses collected from the 20 participants, the SUS score obtained was 72.5. This is above the average SUS score of 68, which suggests that the Tashkeel platform offers above-average usability. Conducting the SUS in this case study was also useful for confirming the importance of the Tashkeel platform in fulfilling the requirements of Arabic NLP research. This is supported by feedback from researchers who used the beta version of the platform to create a dataset for Arabic sign language translation. Furthermore, the case study highlighted some areas of improvement for enhancing the usability of the platform.

7. Conclusions and Future Work

The objective of this paper was to address the lack of a comprehensive Arabic language-sensitive NLP crowdsourcing platform. The review of existing approaches, including both the internationally popular crowdsourcing platforms and individually designed platforms, highlighted the need to build such a platform. Accordingly, the platform, named Tashkeel, was built and evaluated via SUS. The score was 72.5, i.e., above the accepted usability average of 68. Against this backdrop, a case was made that, if further developed and popularized, this crowdsourcing platform might encourage research that relies on such a tool by connecting researchers and users in the linguistic domain of the Arabic language. Indeed, the crowdsourcing platform developed in this paper allows Arabic speakers with different levels of proficiency and qualifications to be identified and invited to participate in a research project from its inception. Any Arabic dialect can be chosen for a project. In this regard, this crowdsourcing platform provides NLP researchers with new spaces to interact with a highly diversified, and thus ‘representative’, ‘crowd’ and to perform specific HITs. Hence, research utilizing this NLP crowdsourcing platform gains in validity, relevance, and usability. This feature defines the platform’s added value in the field of data-driven decision-making and policy processes in general. Certainly, the road from this NLP crowdsourcing platform to the decision-making process is a long one; however, without the platform, masses of important pieces of information might be ignored.
We are aware that further work needs to be done to address the issue of quality control as regards the specific HITs, e.g., by means of an algorithm that measures the quality by testing the accuracy of the participants’ answers. Nevertheless, we also want to stress that there is an urgent need to pursue, in parallel, research on the value-added of crowdsourcing platforms for the decision-making process. These two issues shall be addressed in our future research.