Mapping Spectral Composition of Nighttime Lighting in Urban Green Spaces Using SDGSAT-1 NTL Data and Google Earth Imagery

Highlights

- A Swin Transformer-based encoder–decoder framework, UGS-STUNet, was developed to classify five distinct urban green space (UGS) typologies from Google Earth imagery. UGS-STUNet outperformed FCN, U-Net, and DeepLabV3+ on precision, recall, and F1 score, achieving a precision of 85.72% and an F1 score of 83.73%.

- Blue-to-green (B/G) and green-to-red (G/R) ratios were proposed to map the spectral composition of lighting across UGS typologies using SDGSAT-1 NTL data. We identified stark spectral heterogeneity across UGS typologies in Shanghai: street trees show the highest red exposure, while forest patches, forest belts, and other green spaces exhibit blue-rich lighting environments.

- This research provides a scalable method for monitoring the spectral quality of urban nightscapes, offering critical evidence to inform sustainable urban planning and the design of light-mitigation strategies to support global biodiversity and public health.

- The urban ecological health of Shanghai’s UGS is differentially impacted by nighttime lighting, and the high-red-intensity exposure identified in street trees suggests a high risk of shifting the phytochrome photoequilibrium.

Abstract

1. Introduction

2. Materials and Methods

2.1. Study Area

2.2. Data Sources and Preprocessing

2.3. UGS Extraction

2.3.1. UGS Sample Labels Generation

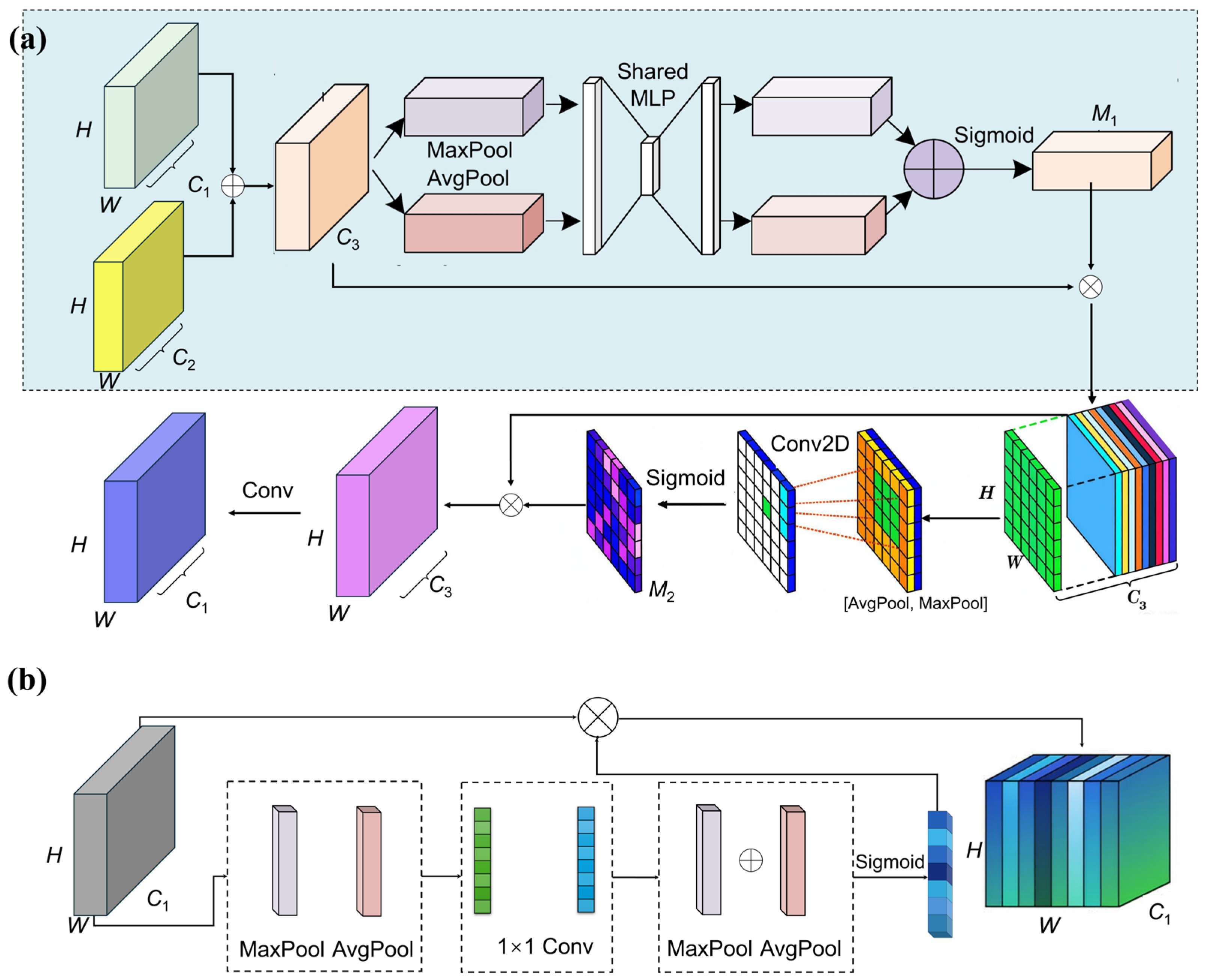

2.3.2. UGS-STUNet Framework

2.4. Spectral Indices Construction

2.5. Evaluation Metrics

3. Results

3.1. Accuracy Evaluation of UGS Extraction

3.2. Distinct Spectral Composition of Nighttime Lighting in UGS

4. Discussion

4.1. Comparison Between the Proposed Method and Existing Methods

4.2. Ablation Study and Module Contribution Analysis

4.3. Implications for Urban Ecosystems and Urban Planning

4.4. Limitations

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Band | Gain | Offset | Bandwidth (μm) |
|---|---|---|---|
| R | 0.0000102744 | 0.0000099253 | 0.294 |
| G | 0.0000041779 | 0.0000060840 | 0.106 |
| B | 0.0000070119 | 0.0000136754 | 0.102 |
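The gain and offset coefficients tabulated above can be applied to raw digital numbers (DN) before forming the B/G and G/R ratios named in the Highlights. The following is a minimal sketch, not the authors' implementation. It assumes the usual linear calibration form (radiance = DN × gain + offset) and assumes the ratios are taken on calibrated radiance. Whether the study also weights each band by its bandwidth is not stated here, so that step is left as an optional flag. The names `dn_to_radiance` and `band_ratio` are hypothetical.

```python
import numpy as np

# (gain, offset, bandwidth in μm) copied from the calibration table.
COEFFS = {
    "R": (0.0000102744, 0.0000099253, 0.294),
    "G": (0.0000041779, 0.0000060840, 0.106),
    "B": (0.0000070119, 0.0000136754, 0.102),
}


def dn_to_radiance(dn, band, integrate=False):
    """Convert DN to radiance, assuming the linear form DN * gain + offset.

    If integrate is True, the result is multiplied by the bandwidth.
    This weighting is an assumption and may not match the study.
    """
    gain, offset, width = COEFFS[band]
    radiance = np.asarray(dn, dtype=np.float64) * gain + offset
    return radiance * width if integrate else radiance


def band_ratio(numerator, denominator, eps=1e-12):
    """Element-wise ratio; pixels with a near-zero denominator become NaN."""
    num = np.asarray(numerator, dtype=np.float64)
    den = np.asarray(denominator, dtype=np.float64)
    out = np.full(num.shape, np.nan)
    np.divide(num, den, out=out, where=den > eps)
    return out


# Toy 2 x 2 DN arrays standing in for co-registered R, G, B bands.
dn_r = np.array([[1200, 300], [50, 0]])
dn_g = np.array([[900, 400], [60, 0]])
dn_b = np.array([[400, 800], [70, 0]])

rad_r = dn_to_radiance(dn_r, "R")
rad_g = dn_to_radiance(dn_g, "G")
rad_b = dn_to_radiance(dn_b, "B")

bg_ratio = band_ratio(rad_b, rad_g)  # higher values suggest bluer lighting
gr_ratio = band_ratio(rad_g, rad_r)  # lower values suggest redder lighting
```

In practice, dark pixels would likely be masked before the ratios are computed, since the offset term alone yields a nonzero radiance at DN = 0 and the ratio there carries no information about the light source.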

| Method | Precision (%) | Recall (%) | F1 Score (%) |
|---|---|---|---|
| FCN | 75.69 | 67.87 | 71.57 |
| U-Net | 78.03 | 76.69 | 77.35 |
| DeepLabV3+ | 80.12 | 78.24 | 79.17 |
| UGS-STUNet | 85.72 | 81.83 | 83.73 |
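As a consistency check on the comparison table, the F1 score can be recomputed from each row's precision and recall using the standard harmonic-mean definition, F1 = 2PR / (P + R). The sketch below assumes the reported F1 follows that definition. The function name `f1_score` and the dictionary `REPORTED` are illustrative only.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (same units as the inputs)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision %, recall %, reported F1 %) copied from the comparison table.
REPORTED = {
    "FCN": (75.69, 67.87, 71.57),
    "U-Net": (78.03, 76.69, 77.35),
    "DeepLabV3+": (80.12, 78.24, 79.17),
    "UGS-STUNet": (85.72, 81.83, 83.73),
}

for method, (p, r, reported_f1) in REPORTED.items():
    recomputed = f1_score(p, r)
    print(f"{method}: recomputed {recomputed:.2f}, reported {reported_f1:.2f}")
```

Small differences in the last digit can arise when the reported F1 was computed from unrounded precision and recall values.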

| Method | Precision (%) | Recall (%) | F1 Score (%) |
|---|---|---|---|
| Baseline | 83.15 | 79.33 | 81.19 |
| Baseline + Residual block | 84.37 | 81.24 | 82.77 |
| Baseline + Residual block + FFM | 85.47 | 81.42 | 83.39 |
| Baseline + Residual block + FEM | 85.49 | 81.46 | 83.42 |
| Baseline + Residual block + FFM + FEM | 85.72 | 81.83 | 83.73 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Yuan, Y.; Lu, Z.; Liu, H.; Wang, B.; Xu, Y.; Zhang, Z.; Li, J.; Wu, B. Mapping Spectral Composition of Nighttime Lighting in Urban Green Spaces Using SDGSAT-1 NTL Data and Google Earth Imagery. Remote Sens. 2026, 18, 732. https://doi.org/10.3390/rs18050732
