A Usability Study of Classical Mechanics Education Based on Hybrid Modeling: Implications for Sustainability in Learning

Abstract

1. Introduction

1.1. Physics Teaching Challenges

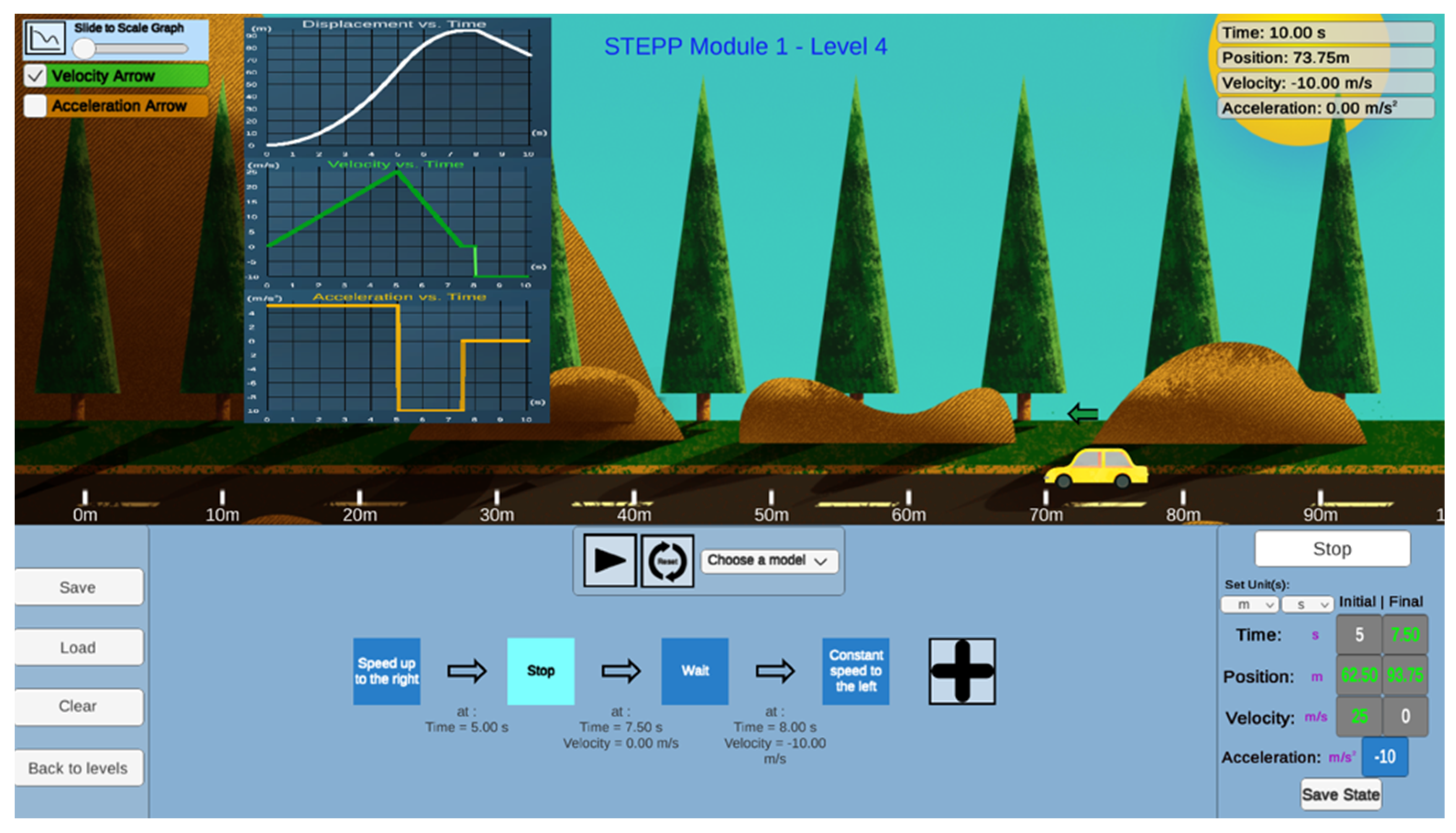

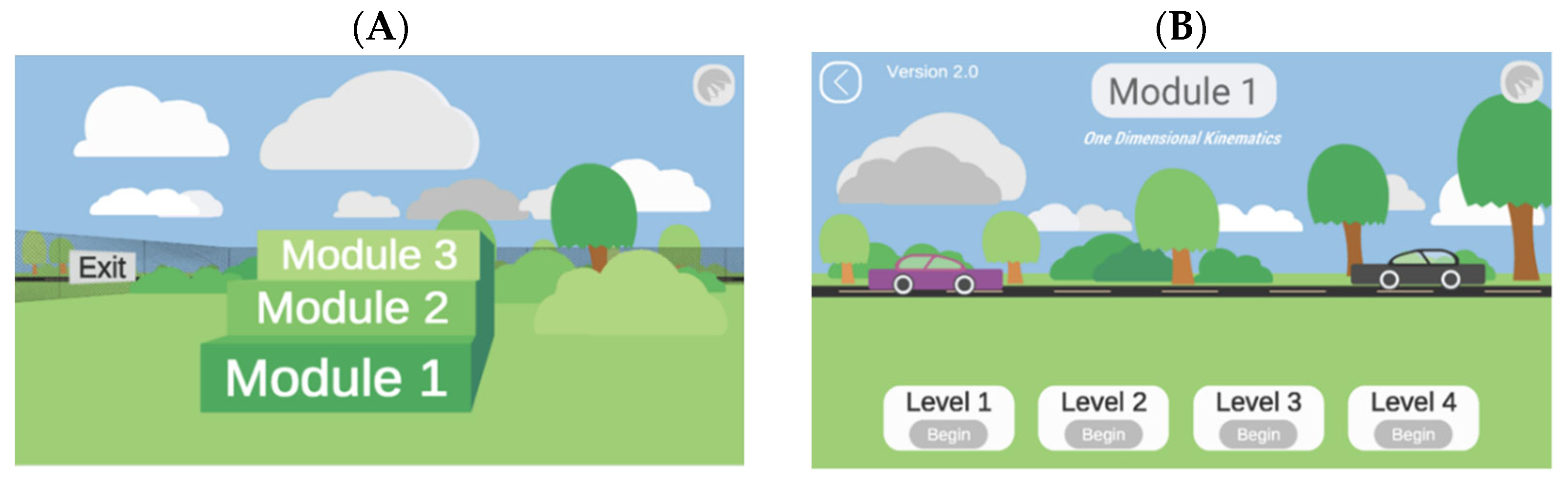

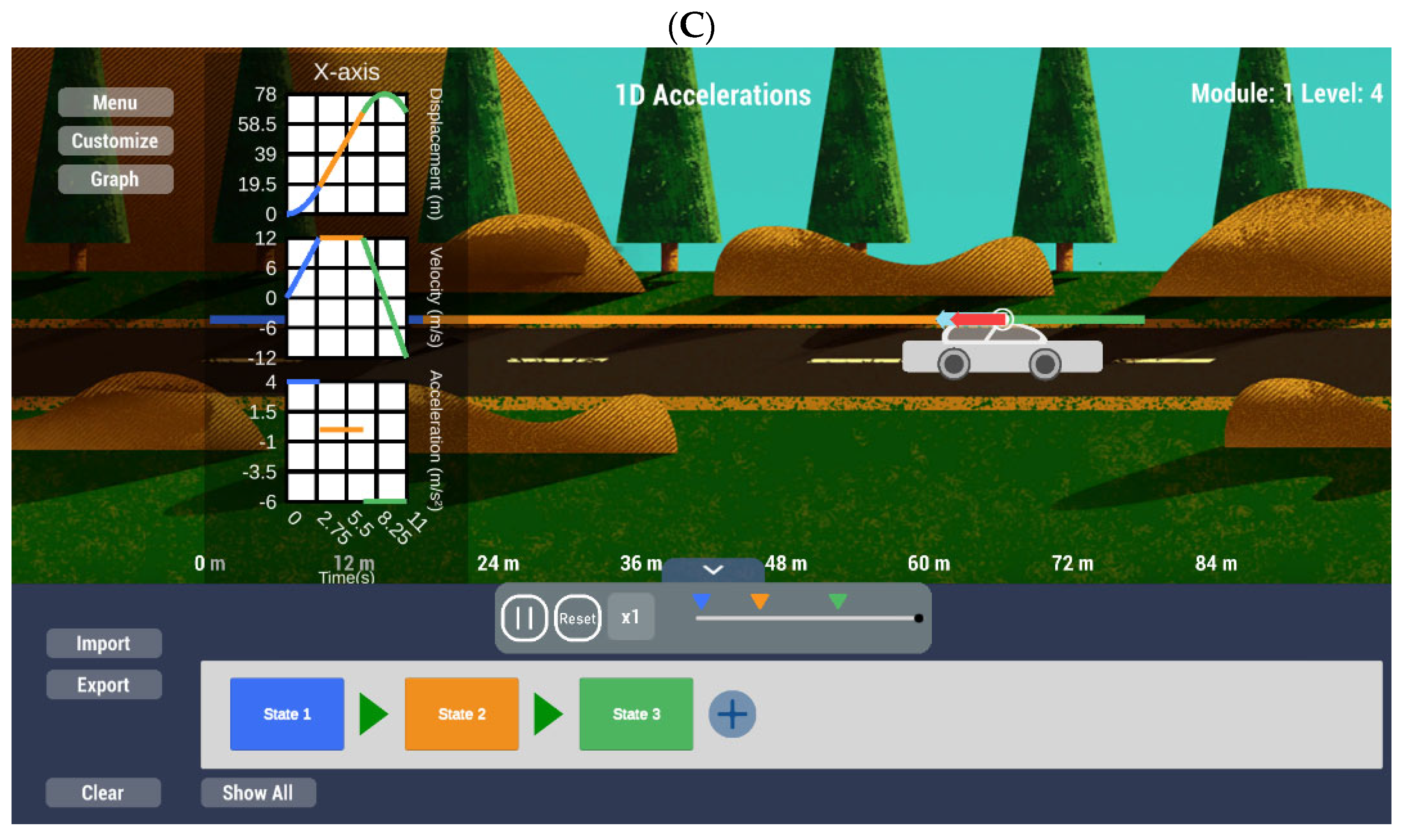

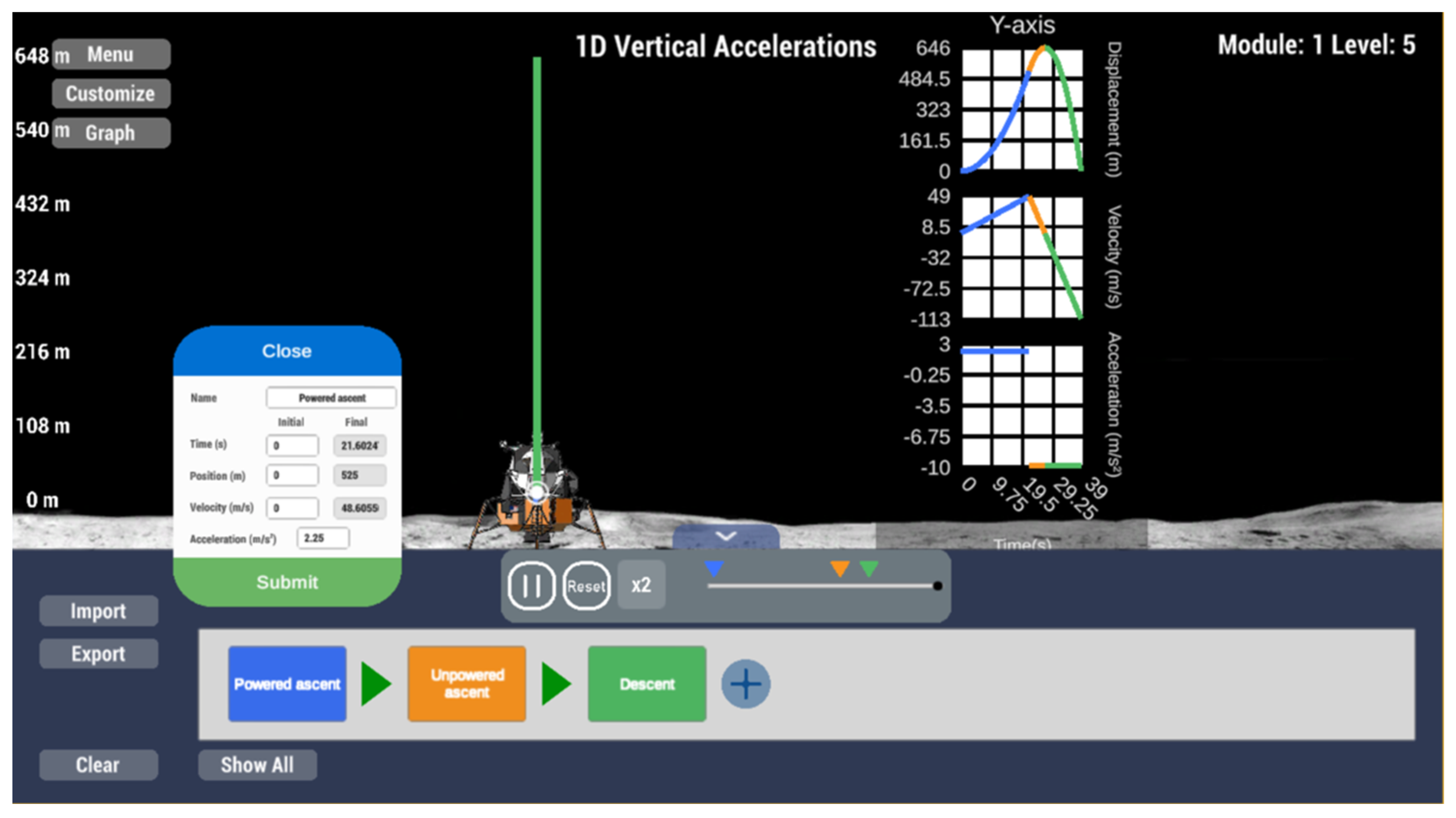

1.2. Overview of STEPP

1.3. Sustainability in Learning through Modeling and Simulation

1.4. Usability Testing and Educational Software

1.5. Overview of the Present Study

2. Materials and Methods

2.1. Participants and Design

2.2. Procedure

2.3. Software

- (a) What is the maximum height this rocket will reach above the launch pad?

- (b) How much time will elapse after engine failure before the rocket comes crashing down to the launch pad, and how fast will it be moving just before it crashes?

- (c) Sketch a_y-t, v_y-t, and y-t graphs of the rocket’s motion from the instant of blast-off to the instant just before it strikes the launch pad.
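Parts (a) and (b) can be worked with constant-acceleration kinematics. The sketch below is illustrative only: the problem's given quantities do not appear in this section, so it assumes the rocket starts from rest, climbs with a constant upward acceleration `a_thrust` until the engines fail at height `h_fail`, and then moves under gravity alone with no air resistance. The function name, parameter names, and the numbers in the example call are placeholders, not values from the study.

```python
import math

G = 9.80  # m/s^2, the value commonly used in introductory physics texts


def rocket_after_engine_failure(a_thrust, h_fail, g=G):
    """Return (max_height, time_to_impact, impact_speed).

    Assumptions (not stated in this section): the rocket starts from rest,
    accelerates upward at a_thrust (m/s^2) until the engines fail at
    height h_fail (m), then is in free fall without air resistance.
    """
    # Speed at the moment of engine failure: v^2 = 2 * a * h
    v_fail = math.sqrt(2.0 * a_thrust * h_fail)

    # (a) The rocket coasts upward until its speed reaches zero.
    max_height = h_fail + v_fail**2 / (2.0 * g)

    # (b) Speed just before impact, from v^2 = v_fail^2 + 2 * g * h_fail,
    # and the time after failure from v_fail - g * t = -impact_speed.
    impact_speed = math.sqrt(v_fail**2 + 2.0 * g * h_fail)
    time_to_impact = (v_fail + impact_speed) / g

    return max_height, time_to_impact, impact_speed


# Placeholder inputs chosen only to exercise the function.
h_max, t_fall, v_hit = rocket_after_engine_failure(a_thrust=2.0, h_fail=500.0)
print(f"max height: {h_max:.1f} m, time to impact: {t_fall:.2f} s, "
      f"impact speed: {v_hit:.1f} m/s")
```

The two phases of the motion (powered ascent, then free fall) are the natural states for the graphs in part (c): the acceleration is piecewise constant, the velocity is piecewise linear, and the height is piecewise parabolic.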

2.4. Measures

3. Results

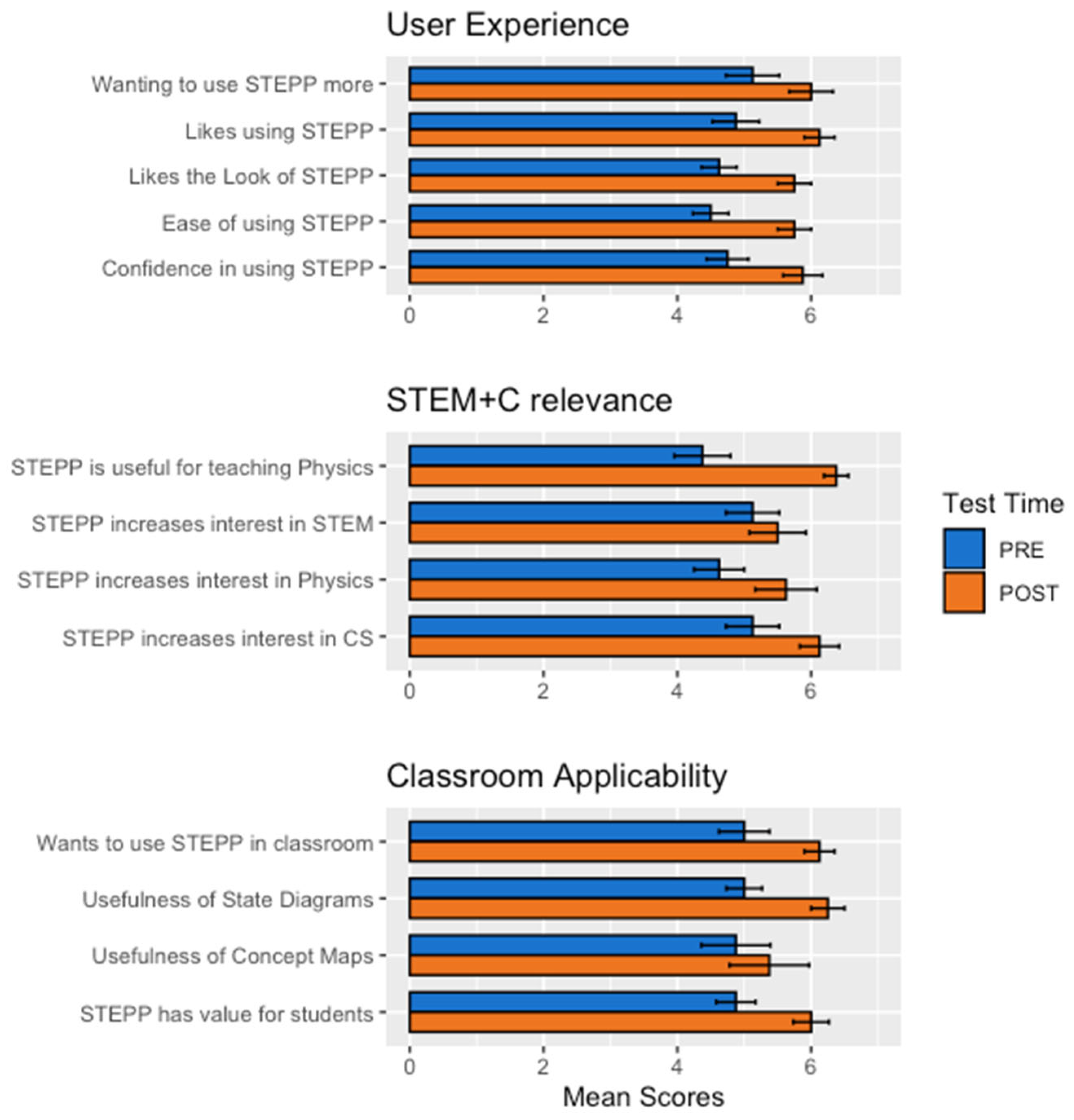

3.1. User Experience (Time 1 Cronbach’s α = 0.88; Time 2 Cronbach’s α = 0.57)

3.2. STEM-C Relevance (Time 1 Cronbach’s α = 0.79; Time 2 Cronbach’s α = 0.77)

3.3. Classroom Applicability (Time 1 Cronbach’s α = 0.83; Time 2 Cronbach’s α = 0.75)
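The Cronbach’s α values quoted in the headings of Sections 3.1 to 3.3 are internal-consistency estimates for each group of survey items. As a minimal sketch of how such a coefficient is commonly computed, the function below uses the standard formula α = k/(k − 1) · (1 − Σ item variances / variance of total scores). The function name and the response matrix are hypothetical; the study's raw data and analysis software are not given in this section.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents' item ratings.

    `responses` is a list of rows, one per respondent, each row holding
    that respondent's ratings on the k items of a single scale.
    Sample variances (n - 1 denominator) are used throughout.
    """
    k = len(responses[0])
    if k < 2 or len(responses) < 2:
        raise ValueError("need at least two items and two respondents")

    # Variance of each item across respondents (columns of the matrix).
    item_variances = [variance(column) for column in zip(*responses)]

    # Variance of each respondent's summed score across the scale.
    total_variance = variance([sum(row) for row in responses])

    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


# Hypothetical ratings on a three-item scale, for illustration only.
ratings = [
    [4, 5, 4],
    [3, 3, 4],
    [5, 5, 5],
    [2, 3, 2],
]
print(round(cronbach_alpha(ratings), 2))
```

With few respondents or few items per scale the estimate can shift noticeably between administrations, which is worth keeping in mind when comparing Time 1 and Time 2 values.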

3.4. Qualitative Feedback

4. Discussion

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Item | Conceptual Category | Time 1 vs. Time 2 Correlation |
|---|---|---|
| 1. STEPP [will be] was easy to use. | User Experience | 0.54 |
| 2. I [will like] liked the look of STEPP. | User Experience | −0.20 |
| 3. I [will like] liked using STEPP. | User Experience | 0.28 |
| 4. [I expect that] Using STEPP increased my interest in physics. | STEM+C | 0.61 |
| 5. [I expect that] Using STEPP increased my interest in computer science. | STEM+C | 0.44 |
| 6. [I expect that] Using STEPP increased my interest in STEM (Science Technology Engineering Mathematics). | STEM+C | 0.37 |
| 7. [I expect that after using it,] I want to use STEPP more. | User Experience | 0.41 |
| 8. [I expect that] Using STEPP will help me teach physics. | STEM+C | 0.90 ** |
| 9. [I expect that] Using STEPP made me feel more confident that I use it in my classroom to teach physics. | User Experience | 0.72 * |
| 10. I would like to use STEPP in my classroom to help my students learn physics. | Classroom Applicability | 0.83 * |
| 11. [I expect that] STEPP is valuable for students learning physics. | Classroom Applicability | 0.91 ** |
| 12. [I expect that] The state diagram is useful for breaking down physics problems I would use with my class into discrete steps. | Classroom Applicability | 1.0 *** |
| 13. [I expect that] Concept maps are useful for breaking down physics problems I would use with my class into discrete steps. | Classroom Applicability | 0.66 |
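The last column of the table reports, for each item, how strongly participants' Time 1 ratings track their Time 2 ratings. The type of correlation coefficient is not stated in this section; the sketch below assumes a Pearson correlation and uses made-up ratings, so both the function name and the data are hypothetical.

```python
import math


def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of ratings."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two equal-length samples with n >= 2")

    mean_x = sum(x) / n
    mean_y = sum(y) / n

    # Sums of cross-products and squared deviations from the means.
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)

    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined when a sample is constant")
    return sxy / math.sqrt(sxx * syy)


# Hypothetical Time 1 and Time 2 ratings for one item, for illustration only.
time1 = [4, 5, 3, 4, 2]
time2 = [4, 4, 3, 5, 2]
print(round(pearson_r(time1, time2), 2))
```

A coefficient of exactly 1.0, as listed for item 12, arises whenever every participant's Time 2 rating is the same increasing linear function of their Time 1 rating, which is more likely to occur in small samples.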

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Guadagno, R.E.; Gonzenbach, V.; Puddy, H.; Fishwick, P.; Kitagawa, M.; Urquhart, M.; Kesden, M.; Suura, K.; Hale, B.; Koknar, C.; Tran, N.; Jin, R.; Raj, A. A Usability Study of Classical Mechanics Education Based on Hybrid Modeling: Implications for Sustainability in Learning. Sustainability 2021, 13, 11225. https://doi.org/10.3390/su132011225