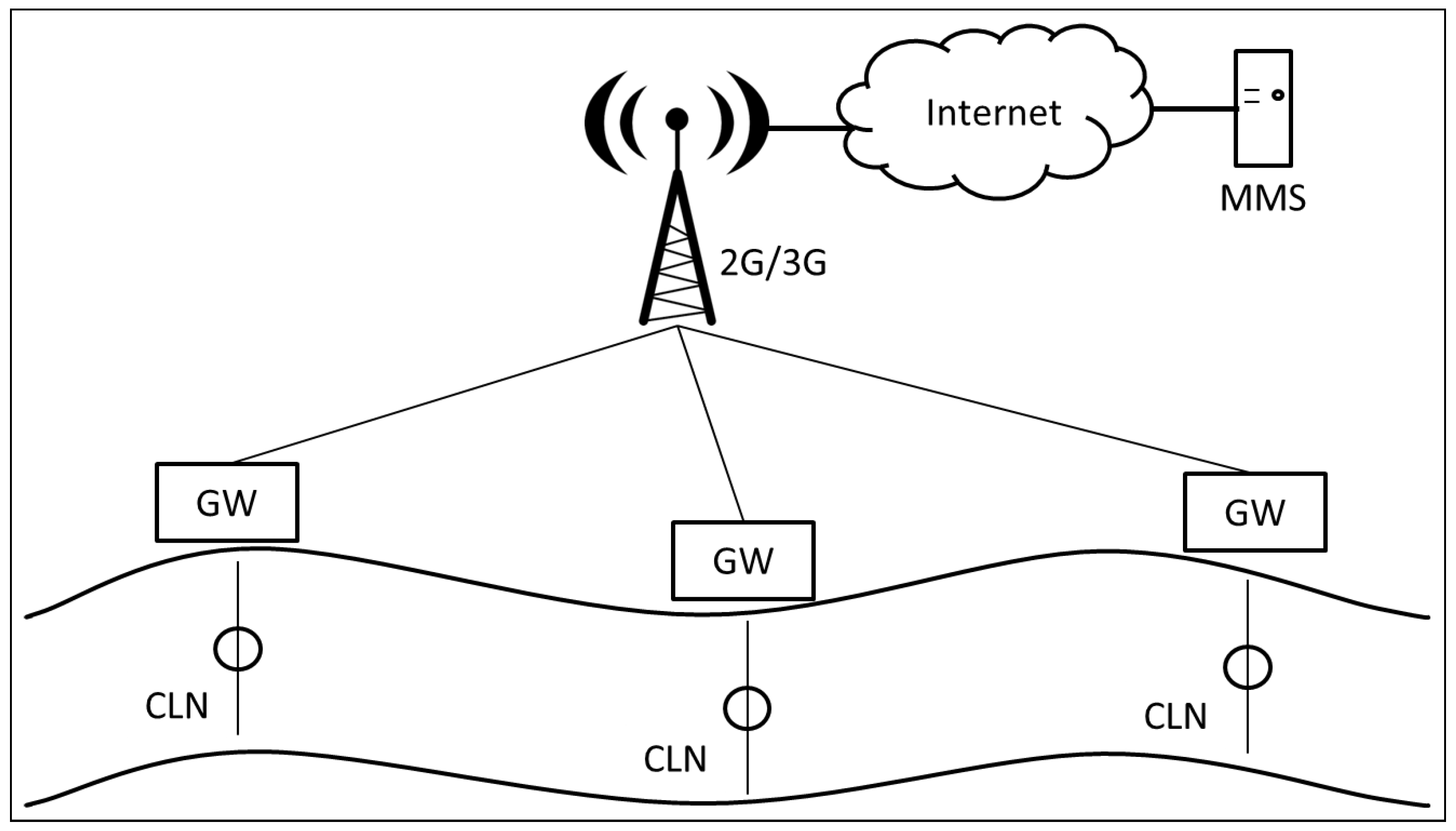

Figure 1.

GOLDFISH project description. Sensor Nodes (CLN), Management and Monitoring Station (MMS), and Gateways (GW) are depicted.

Figure 2.

GOLDFISH project running in Colombia, 2015.

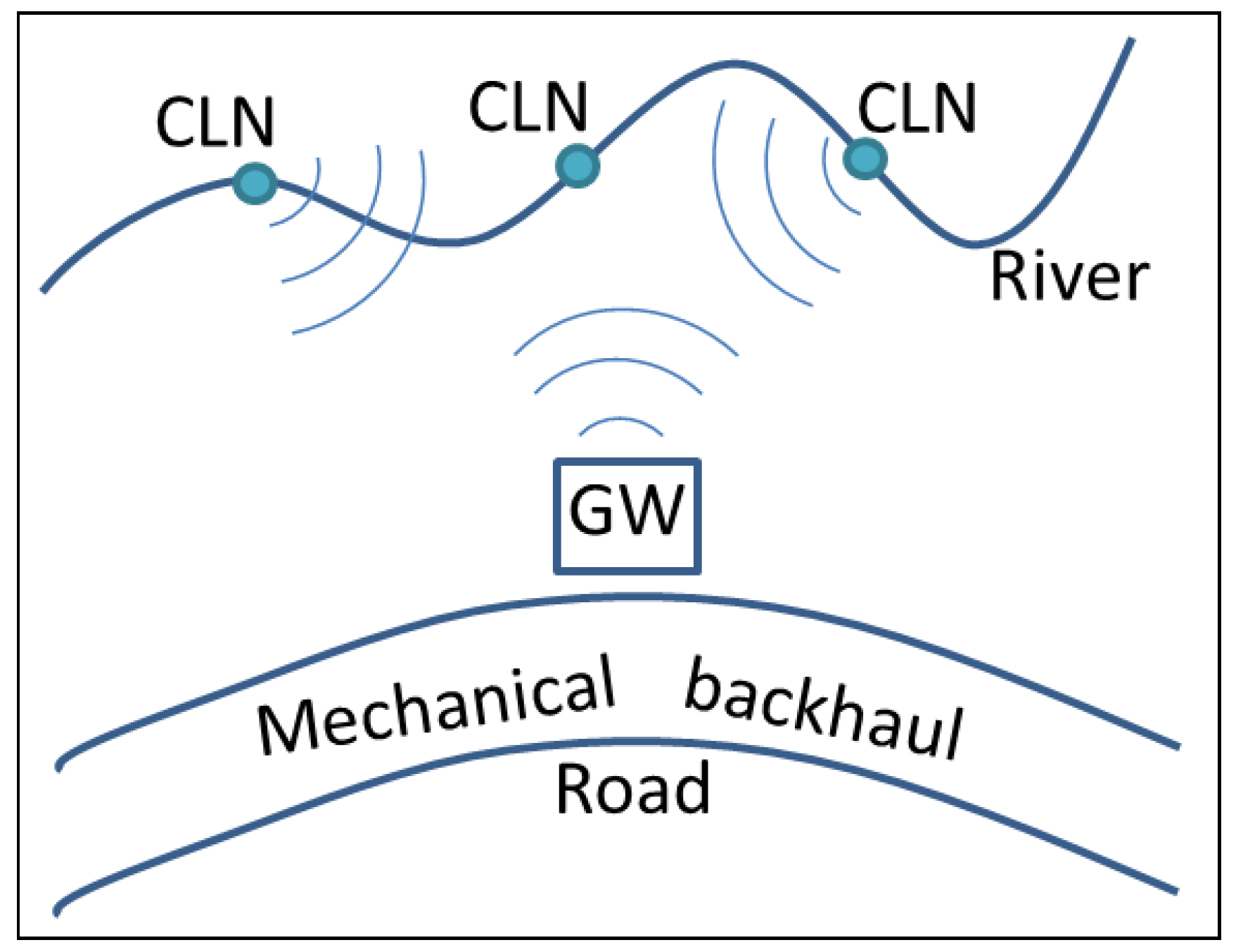

Figure 3.

Modified GOLDFISH project. Cluster Nodes (CLN) and Gateway (GW) are depicted.

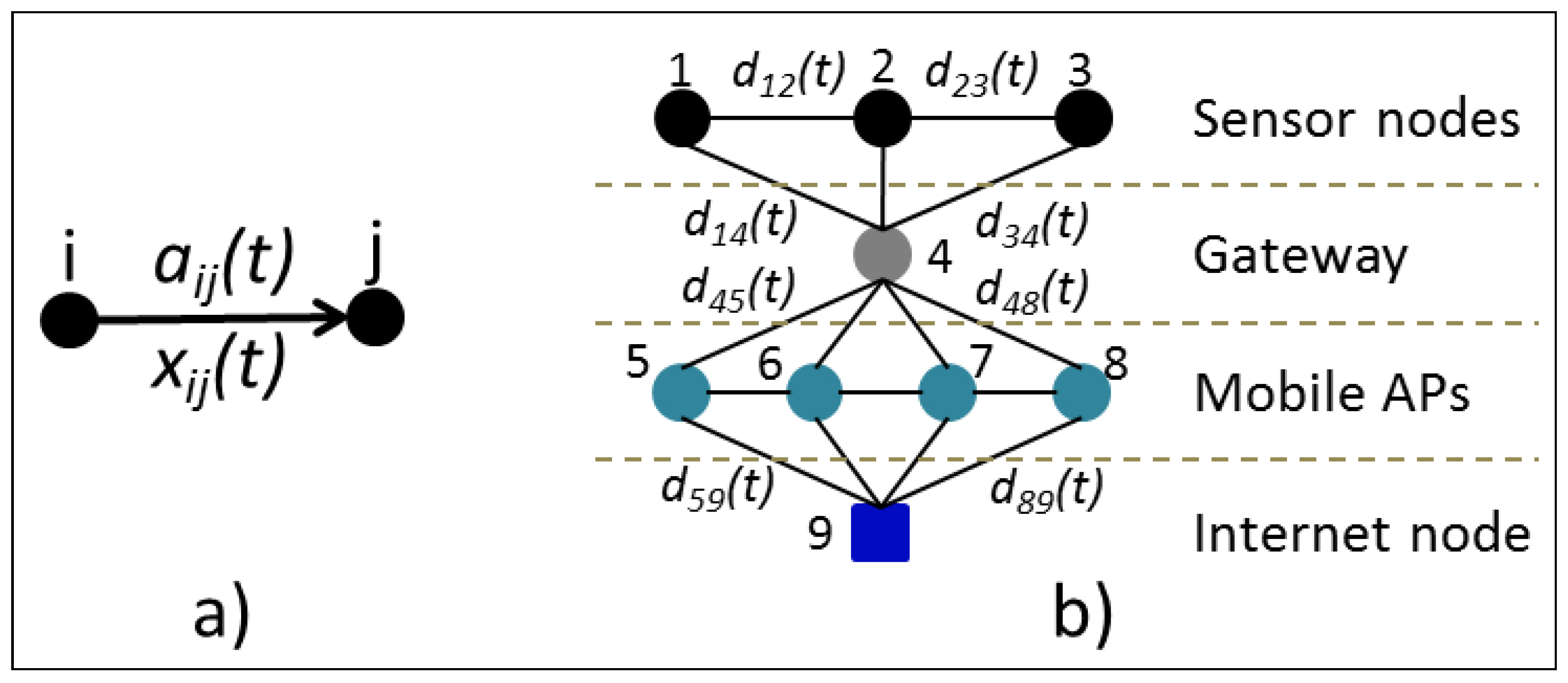

Figure 4.

Problem graph. (a) Availability probability and data flow from node i to node j; (b) Network graph with delivery probabilities varying through time. AP: Access Point.

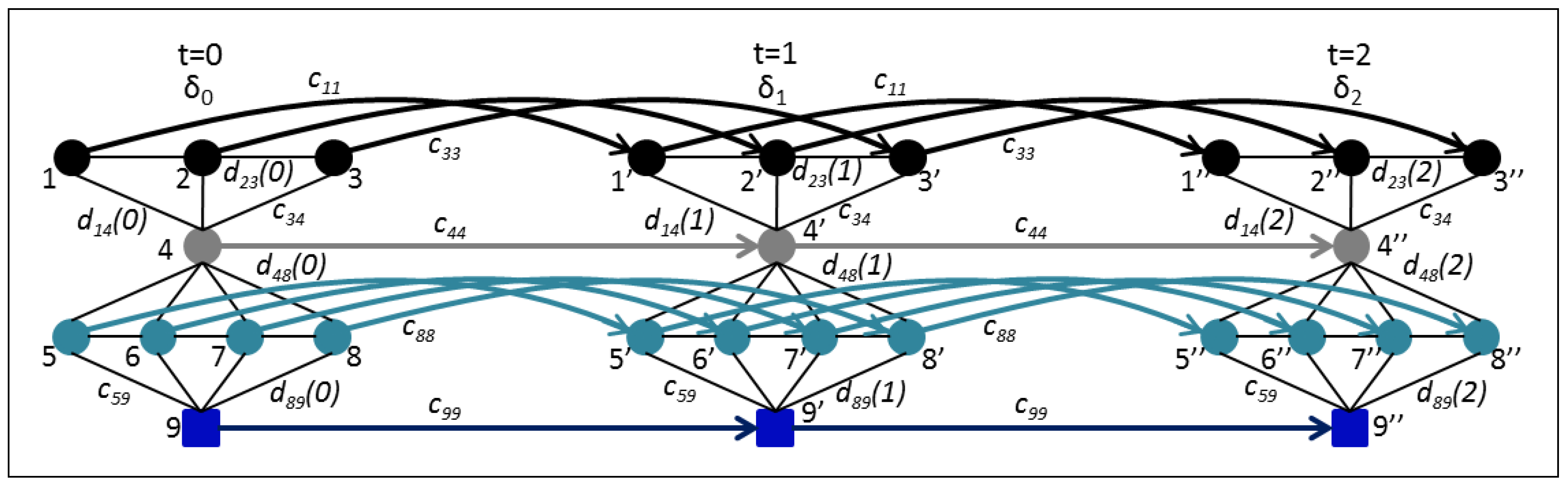

Figure 5.

Problem graph through time. Temporal links go from left to right and represent nodes’ buffers.

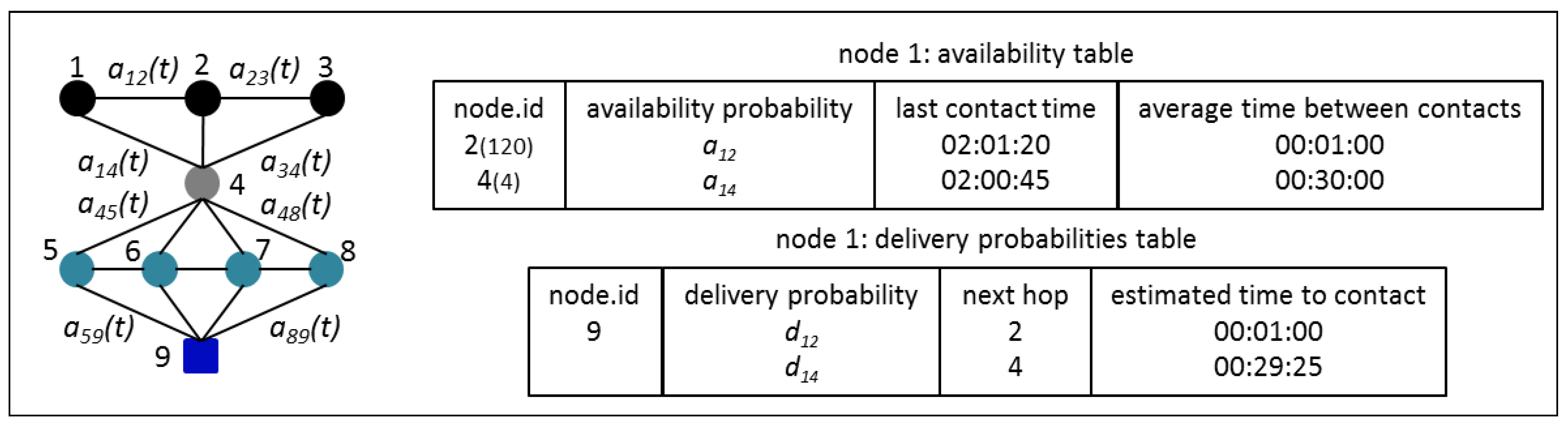

Figure 6.

Availability table and delivery probabilities table for each node.

Figure 7.

GOLDFISH project map from Google Earth in OpenJump.

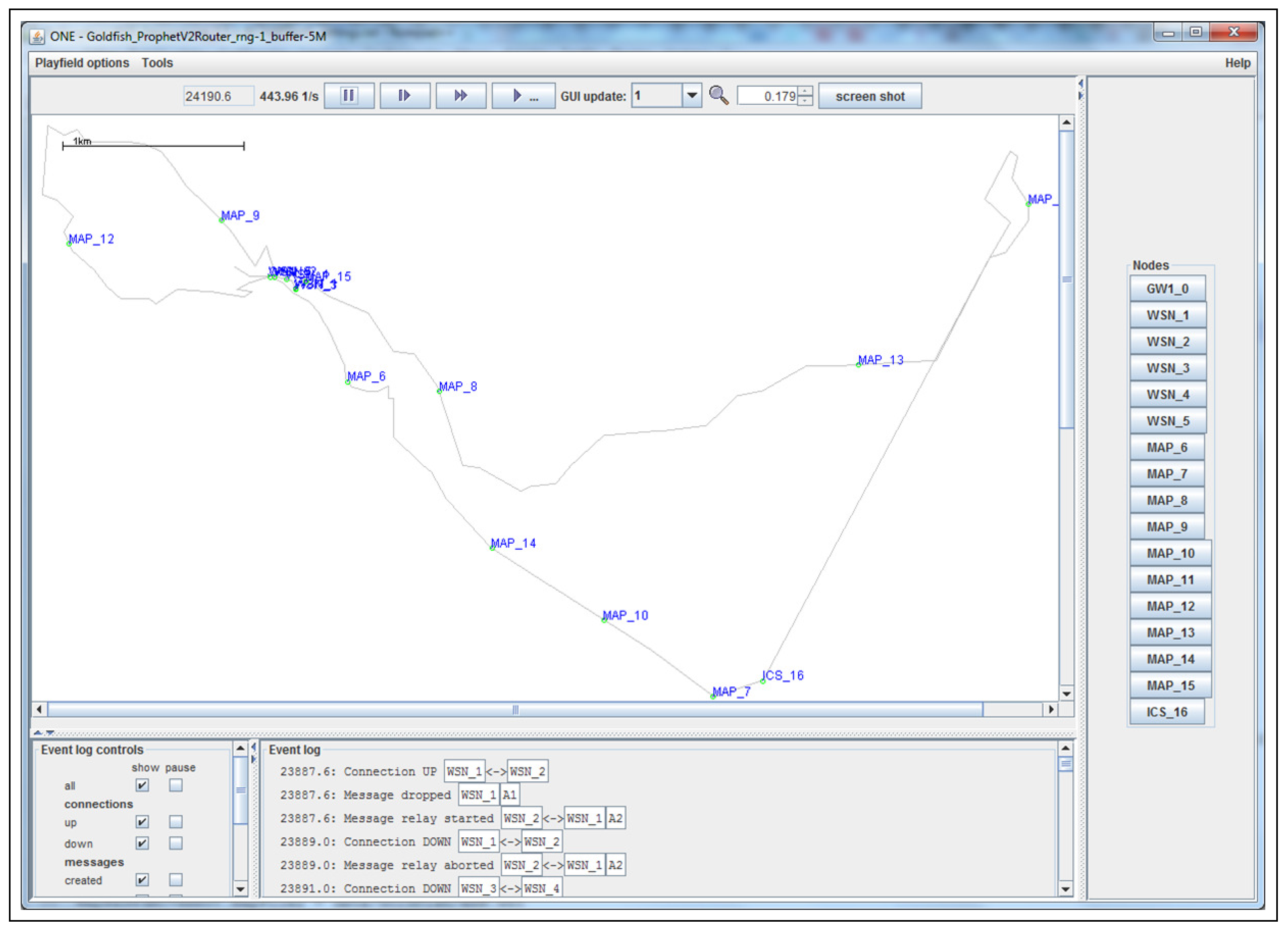

Figure 8.

GOLDFISH project map scaled in the ONE Simulator.

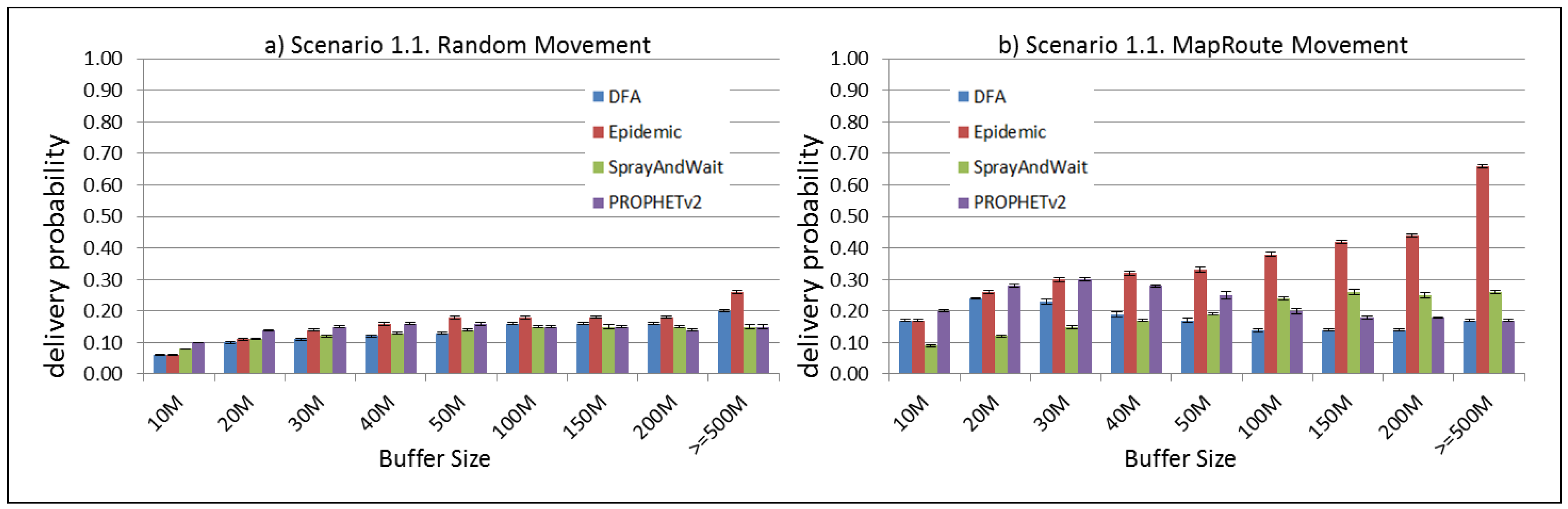

Figure 9.

Results for scenario 1.1. Delivery probabilities with different Buffer Sizes, generating 2 MB messages every 1 to 2 min, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

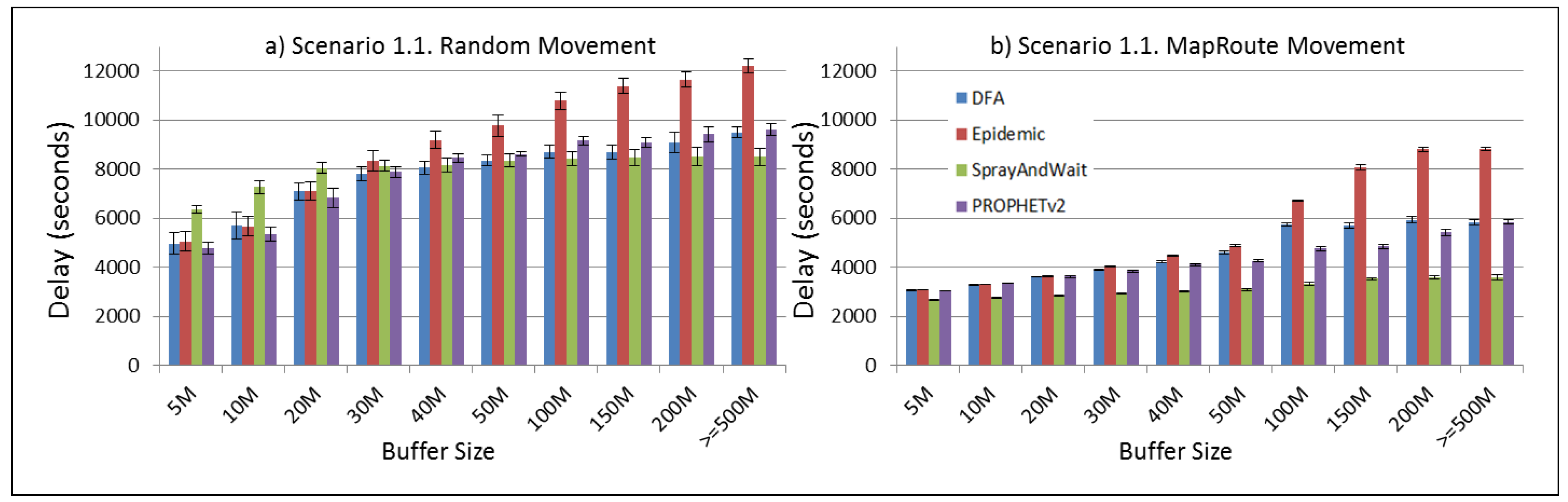

Figure 10.

Results for scenario 1.1. Average delay in seconds with different Buffer Sizes, generating 2 MB messages every 1 to 2 min, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

Figure 11.

Results for scenario 1.1. Average hop count with different Buffer Sizes, generating 2 MB messages every 1 to 2 min, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

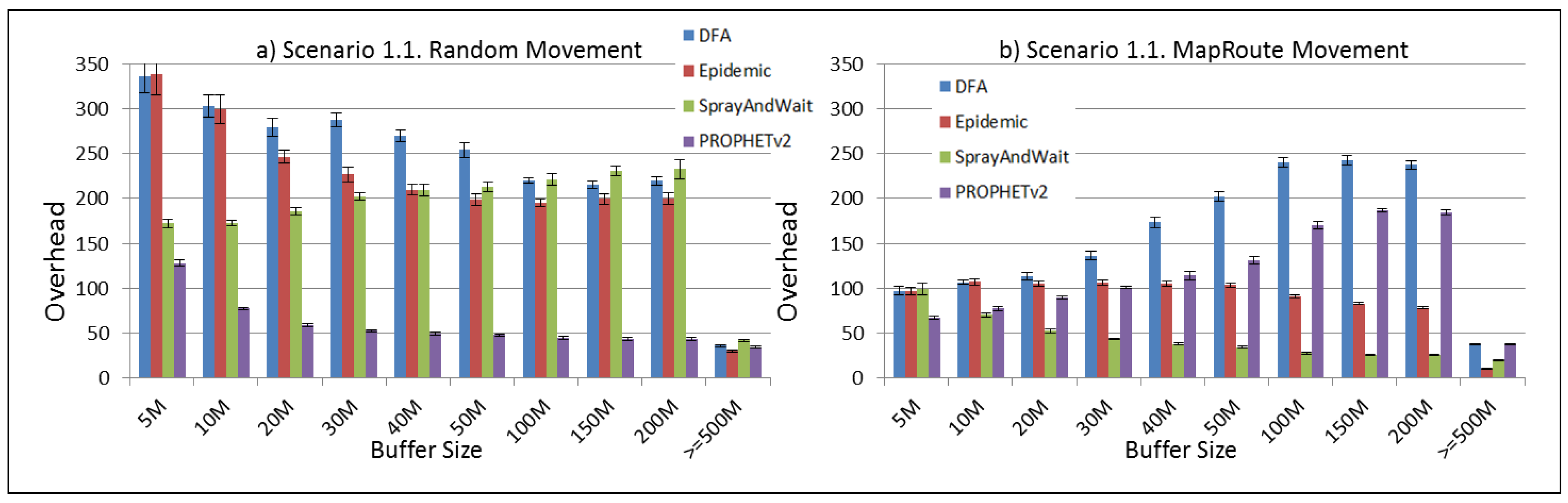

Figure 12.

Results for scenario 1.1. Average overhead with different Buffer Sizes, generating 2 MB messages every 1 to 2 min, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

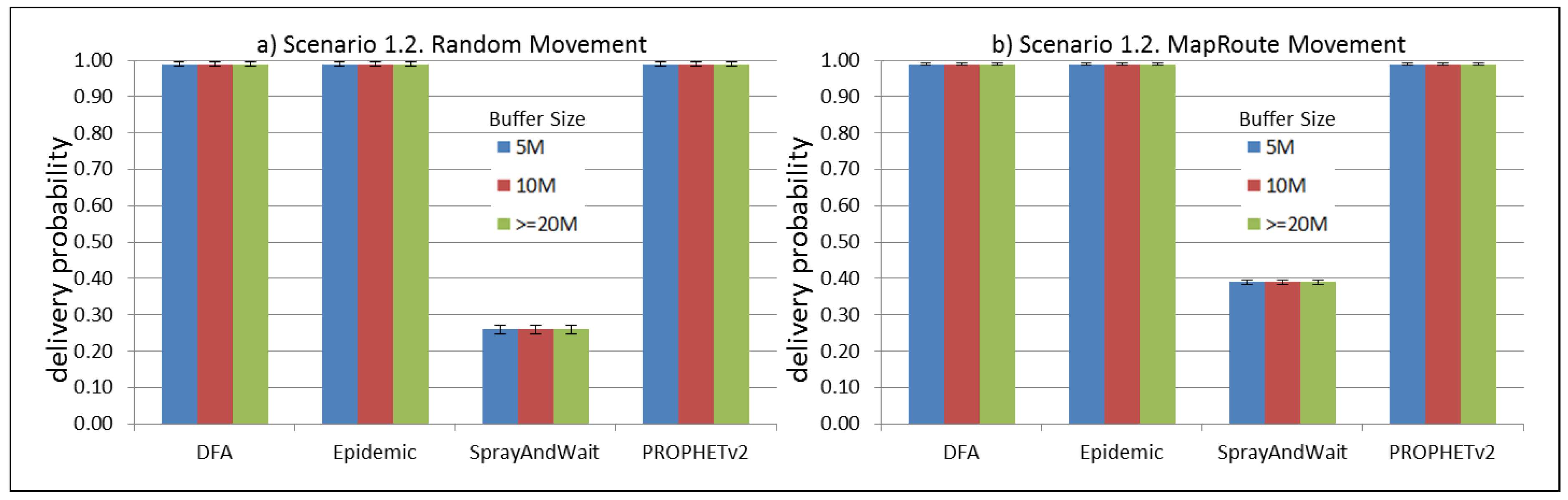

Figure 13.

Results for scenario 1.2. Delivery probabilities with different Buffer Sizes, generating 2 kB messages every 1 to 2 min, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

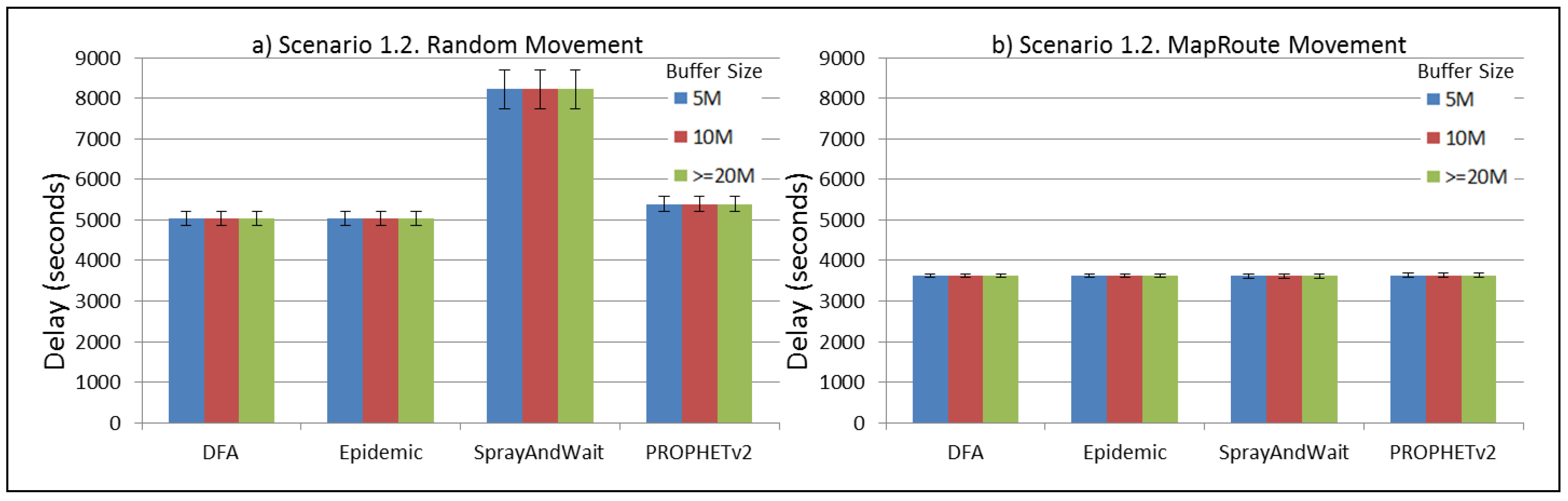

Figure 14.

Results for scenario 1.2. Average delay in seconds with different Buffer Sizes, generating 2 kB messages every 1 to 2 min, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

Figure 15.

Results for scenario 1.2. Average hop count with different Buffer Sizes, generating 2 kB messages every 1 to 2 min, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

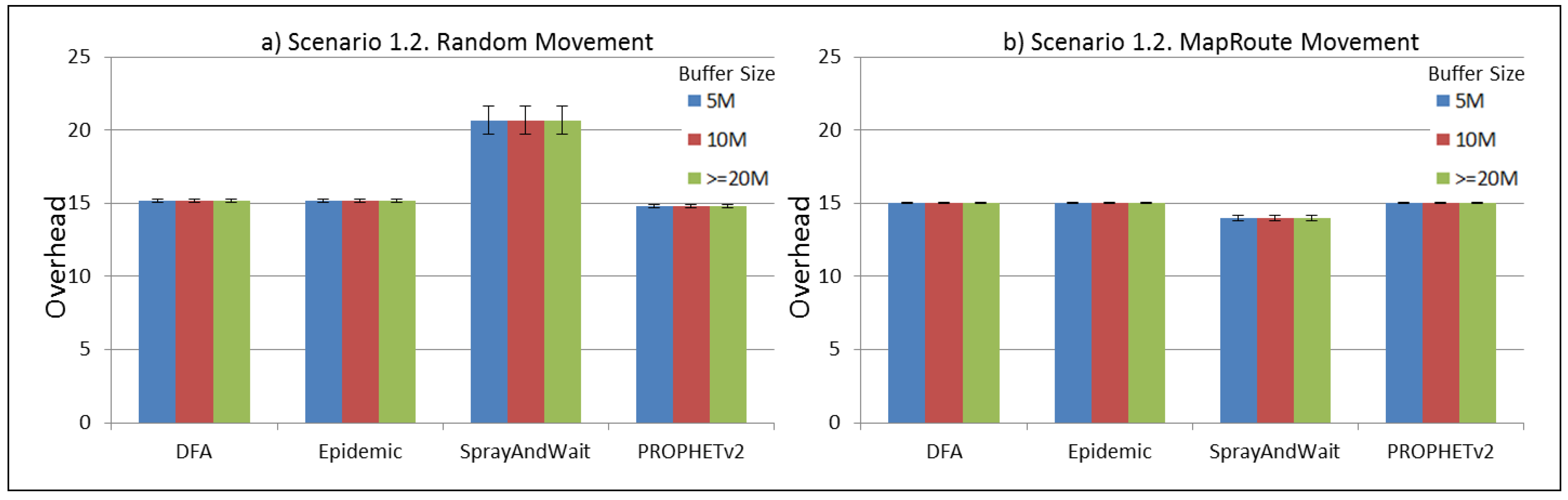

Figure 16.

Results for scenario 1.2. Average overhead with different Buffer Sizes, generating 2 kB messages every 1 to 2 min, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

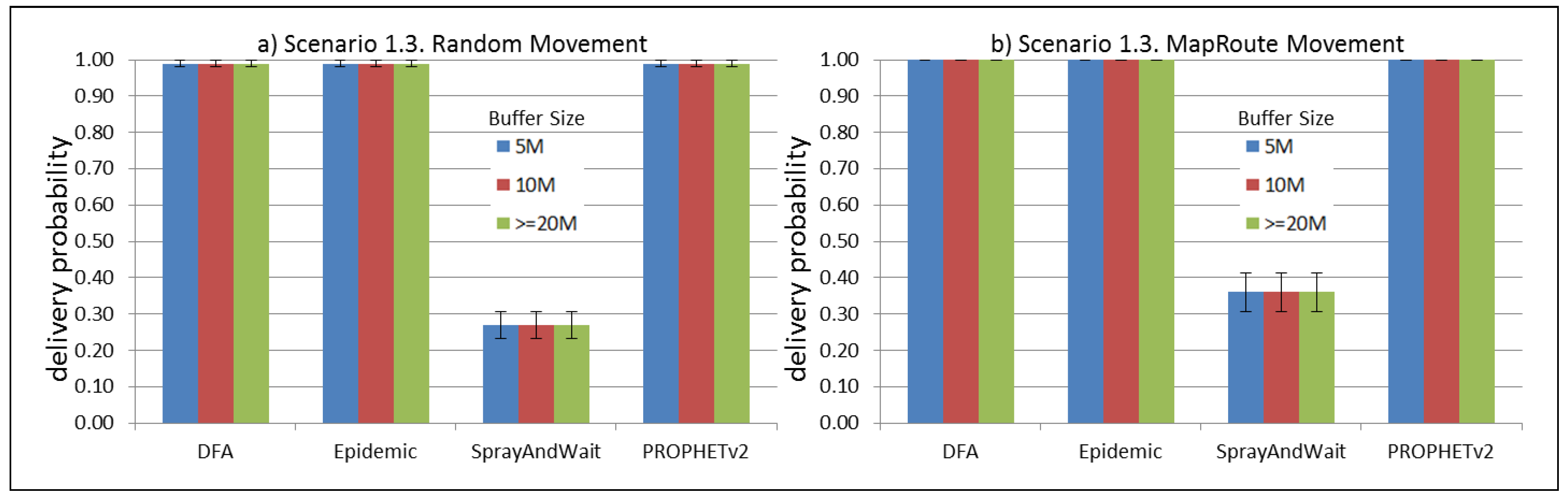

Figure 17.

Results for scenario 1.3. Delivery probabilities with different Buffer Sizes, generating 2 kB messages every 1 to 2 h, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

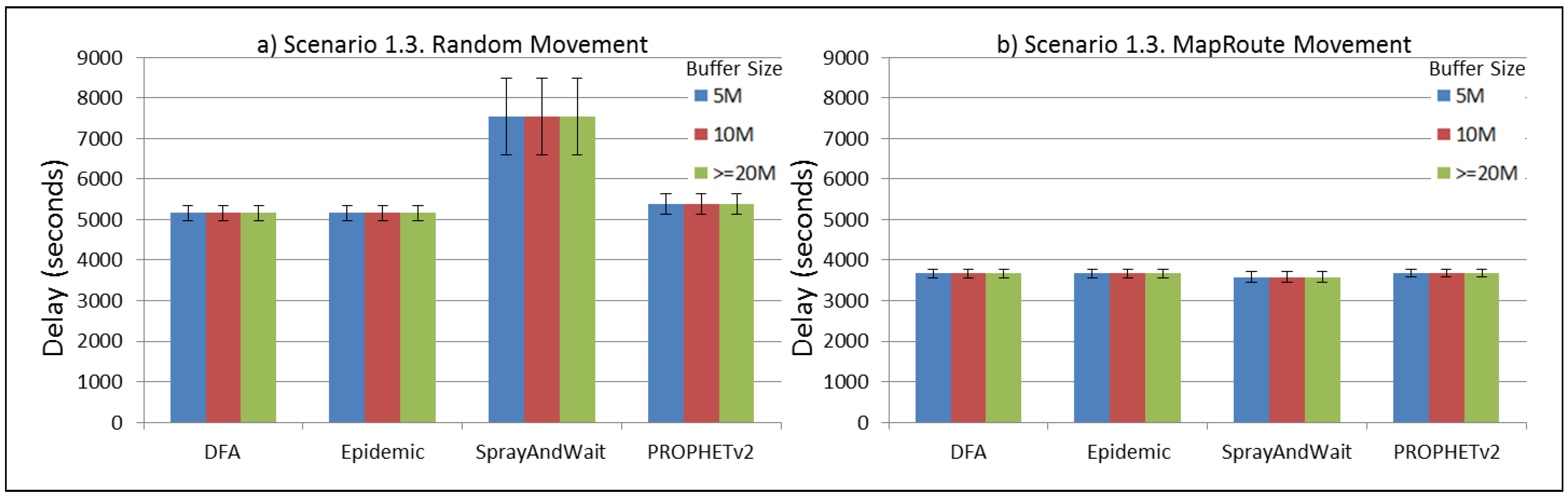

Figure 18.

Results for scenario 1.3. Average delay in seconds with different Buffer Sizes, generating 2 kB messages every 1 to 2 h, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

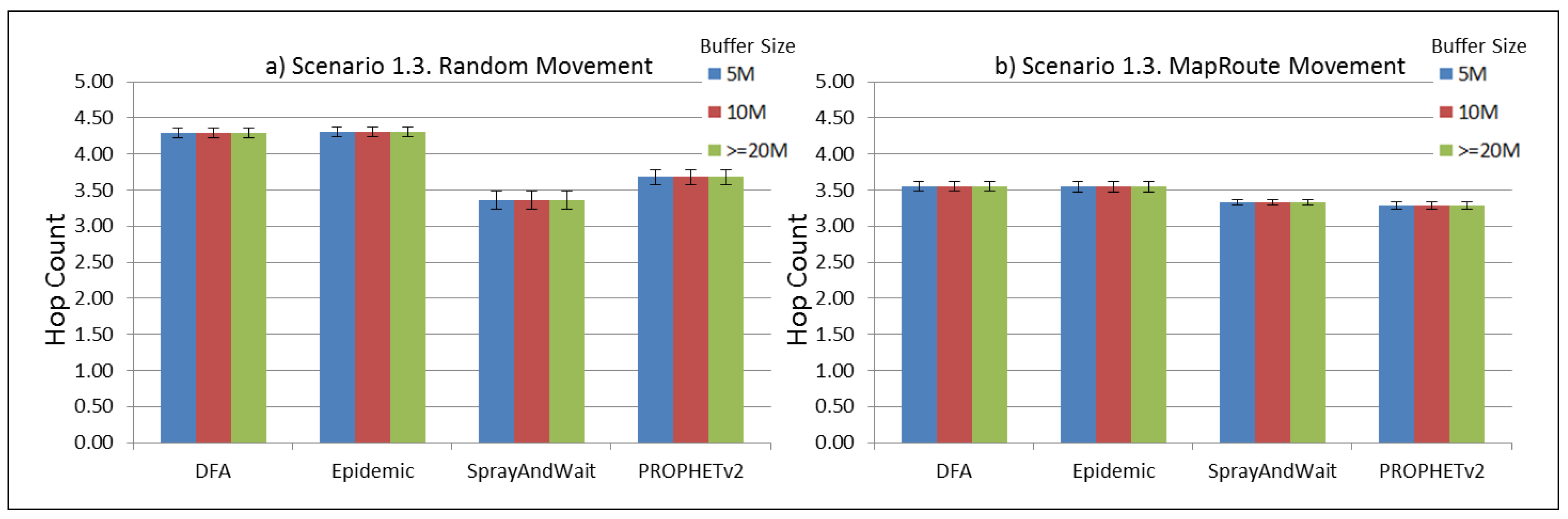

Figure 19.

Results for scenario 1.3. Average hop count with different Buffer Sizes, generating 2 kB messages every 1 to 2 h, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

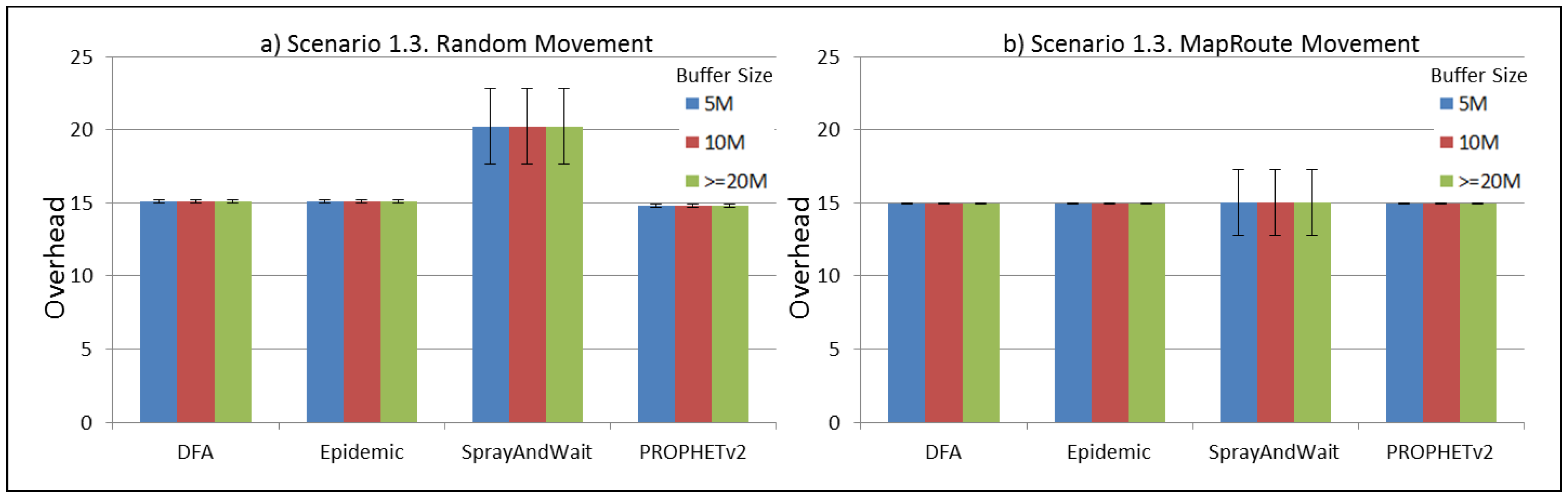

Figure 20.

Results for scenario 1.3. Average overhead with different Buffer Sizes, generating 2 kB messages every 1 to 2 h, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

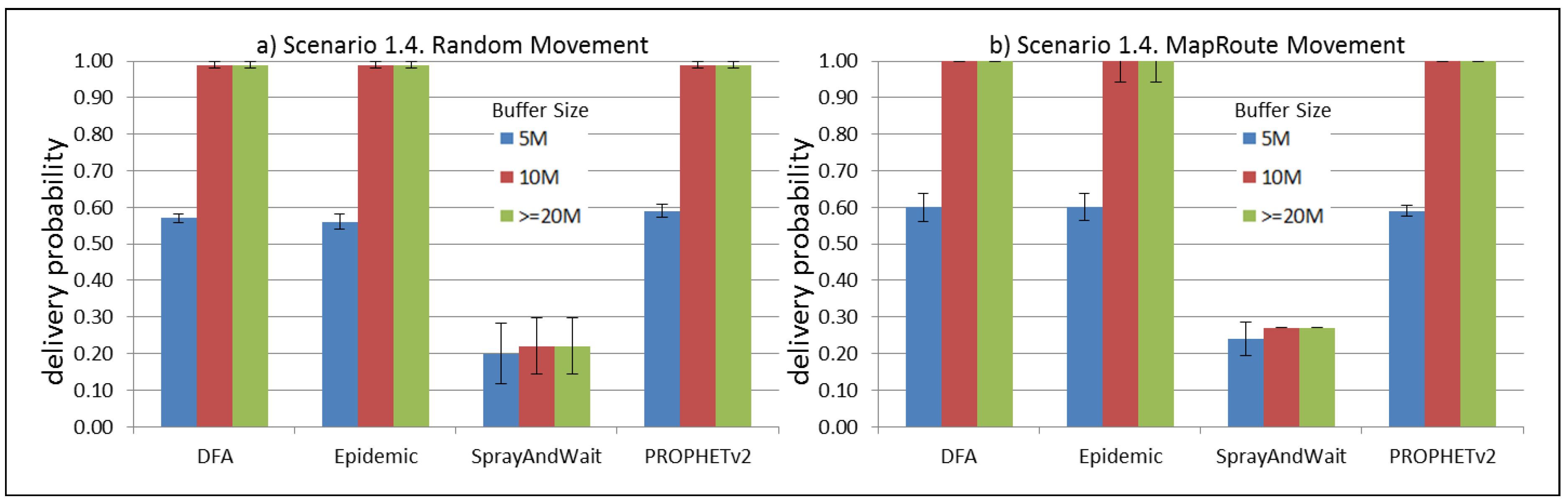

Figure 21.

Results for scenario 1.4. Delivery probabilities with different Buffer Sizes, generating 2 MB messages every 1 to 2 h, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

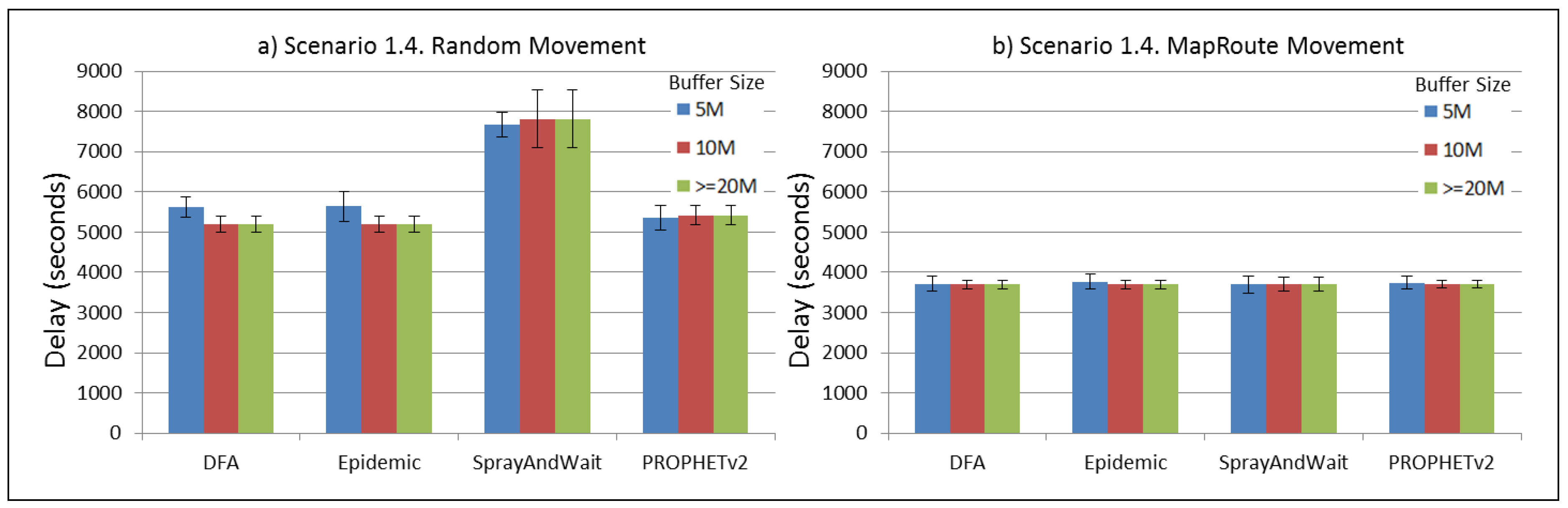

Figure 22.

Results for scenario 1.4. Average delay in seconds with different Buffer Sizes, generating 2 MB messages every 1 to 2 h, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

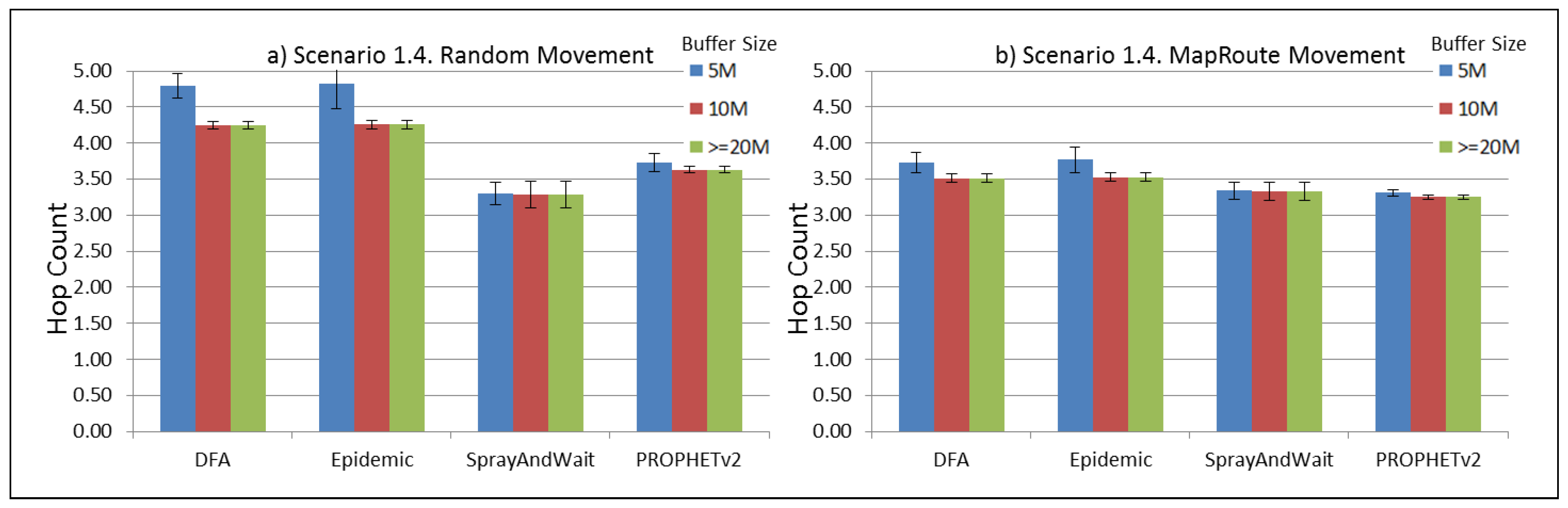

Figure 23.

Results for scenario 1.4. Average hop count with different Buffer Sizes, generating 2 MB messages every 1 to 2 h, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

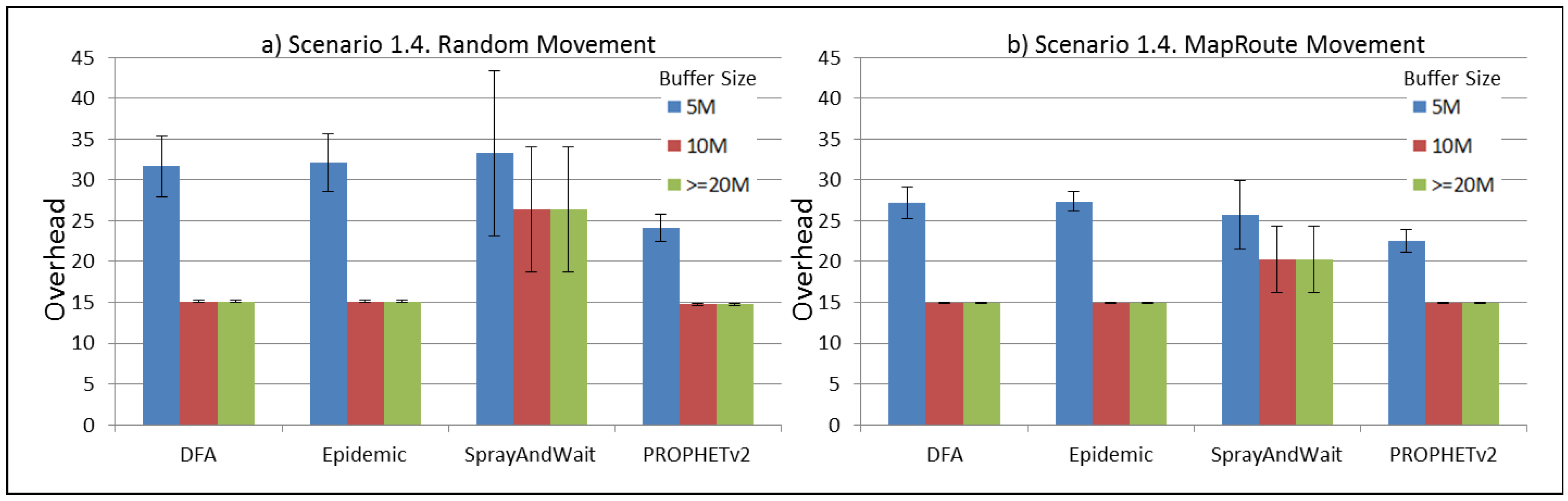

Figure 24.

Results for scenario 1.4. Average overhead with different Buffer Sizes, generating 2 MB messages every 1 to 2 h, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

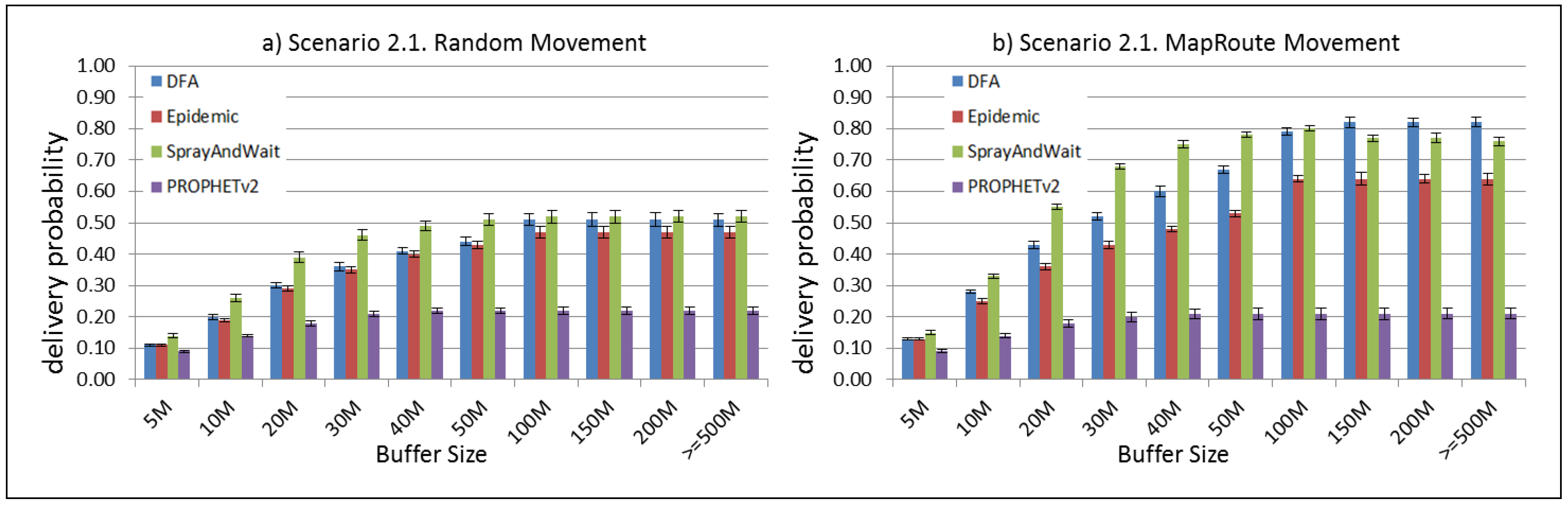

Figure 25.

Results for scenario 2.1. Delivery probabilities with different Buffer Sizes, generating 2 MB messages every 1 to 2 min, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

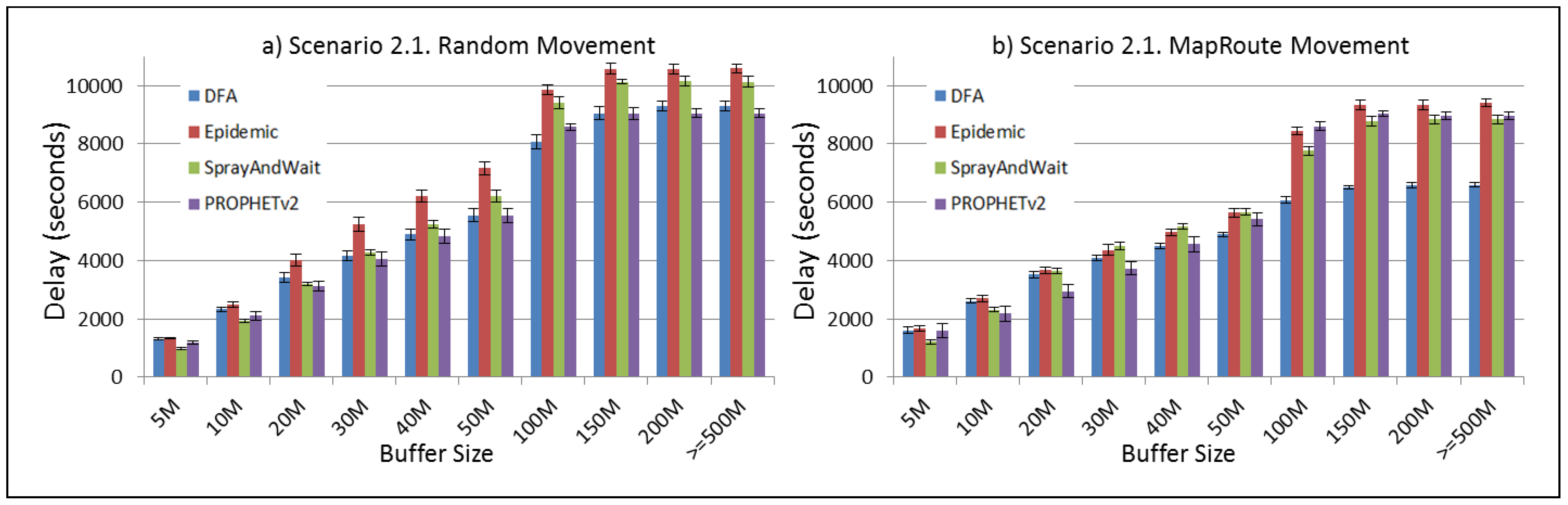

Figure 26.

Results for scenario 2.1. Average delay in seconds with different Buffer Sizes, generating 2 MB messages every 1 to 2 min, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

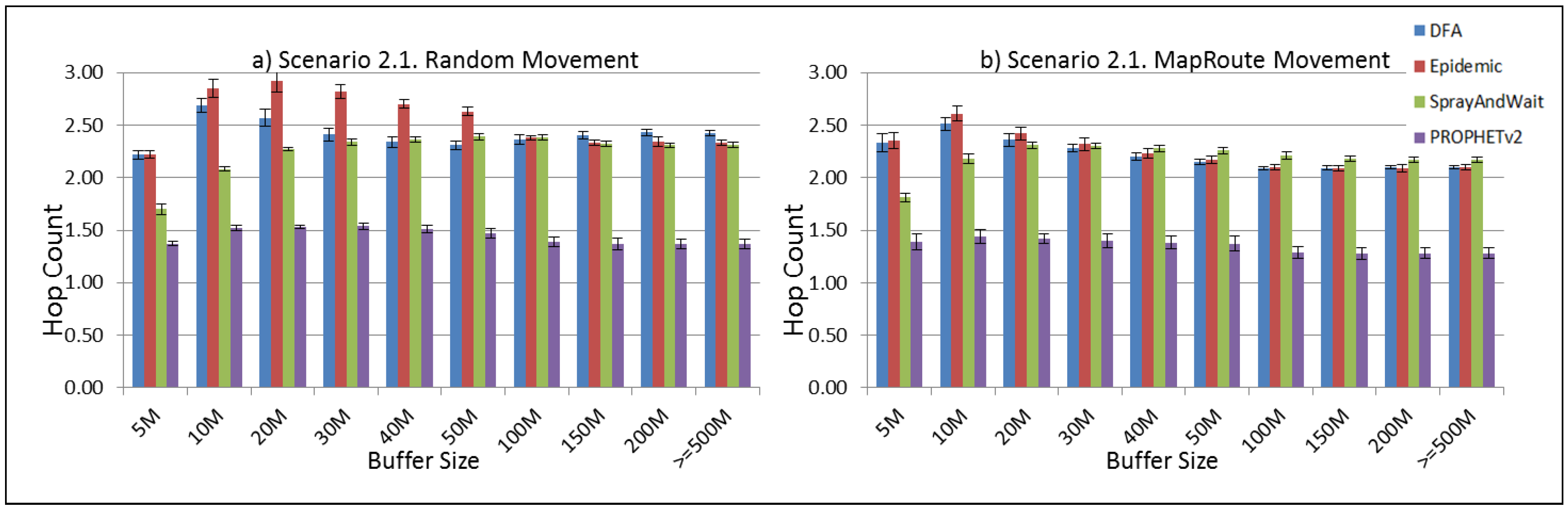

Figure 27.

Results for scenario 2.1. Average hop count with different Buffer Sizes, generating 2 MB messages every 1 to 2 min, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

Figure 28.

Results for scenario 2.1. Average overhead with different Buffer Sizes, generating 2 MB messages every 1 to 2 min, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

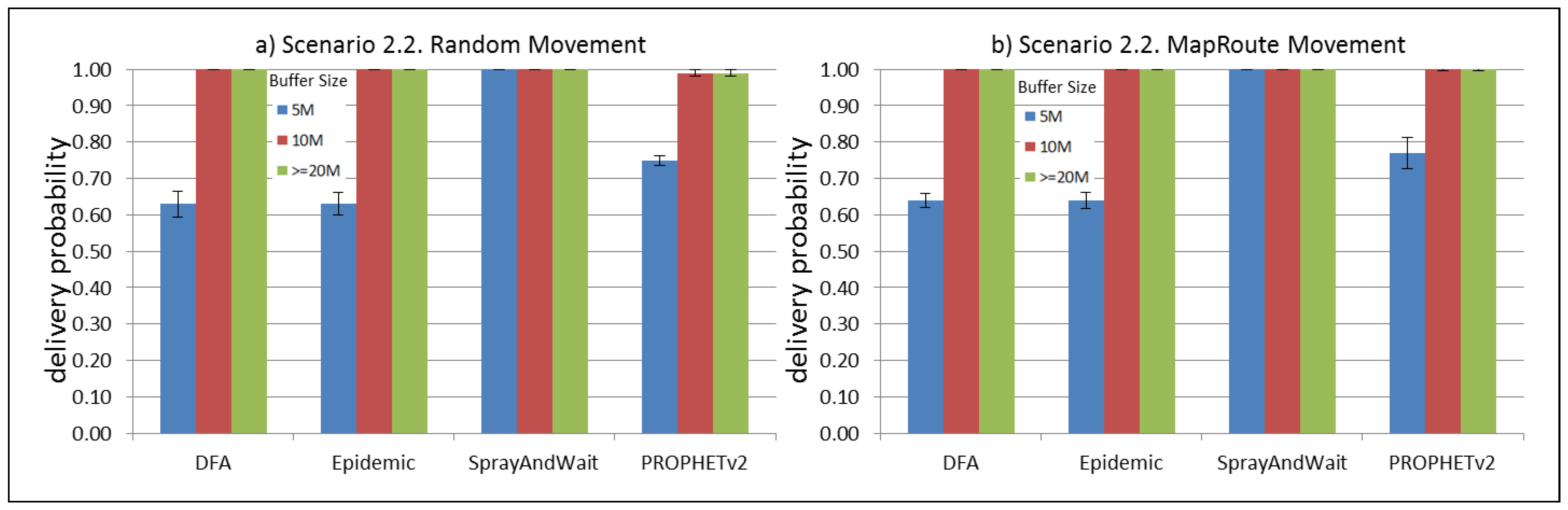

Figure 29.

Results for scenario 2.2. Delivery probabilities with different Buffer Sizes, generating 2 kB messages every 1 to 2 min, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

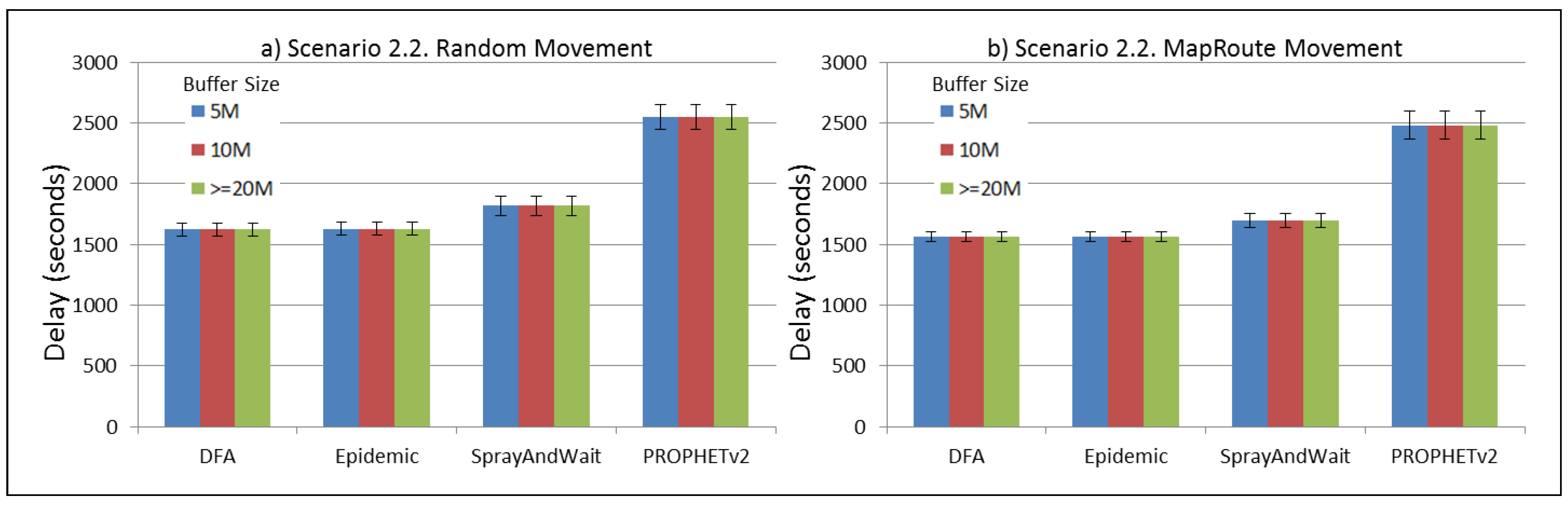

Figure 30.

Results for scenario 2.2. Average delay in seconds with different Buffer Sizes, generating 2 kB messages every 1 to 2 min, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

Figure 31.

Results for scenario 2.2. Average hop count with different Buffer Sizes, generating 2 kB messages every 1 to 2 min, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

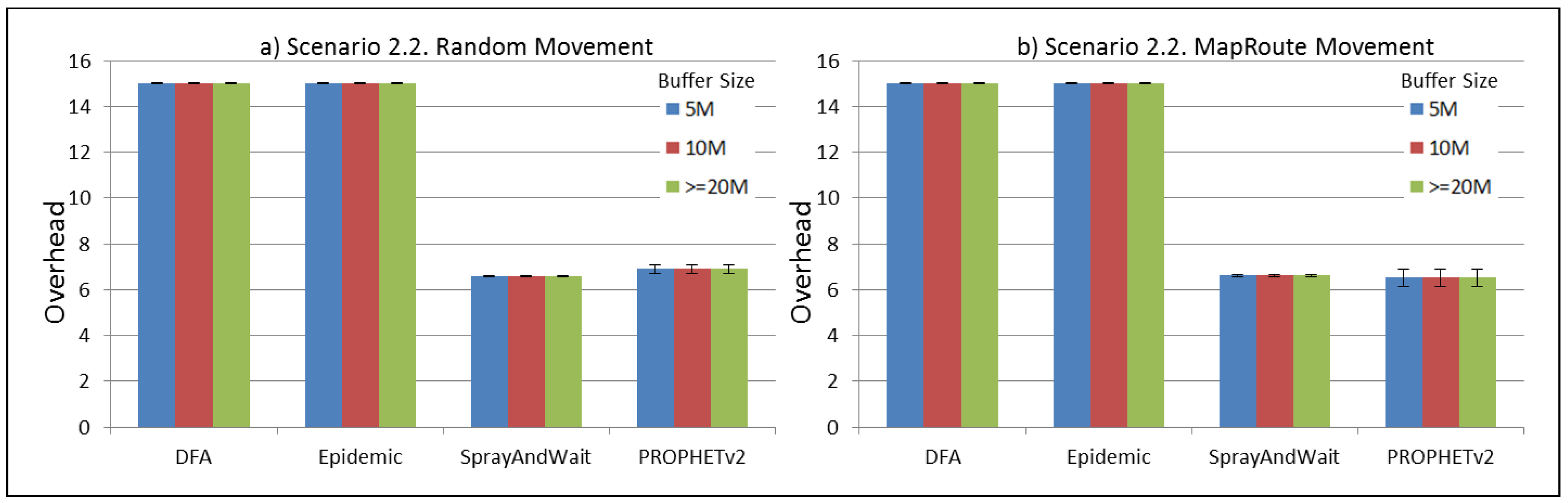

Figure 32.

Results for scenario 2.2. Average overhead with different Buffer Sizes, generating 2 kB messages every 1 to 2 min, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

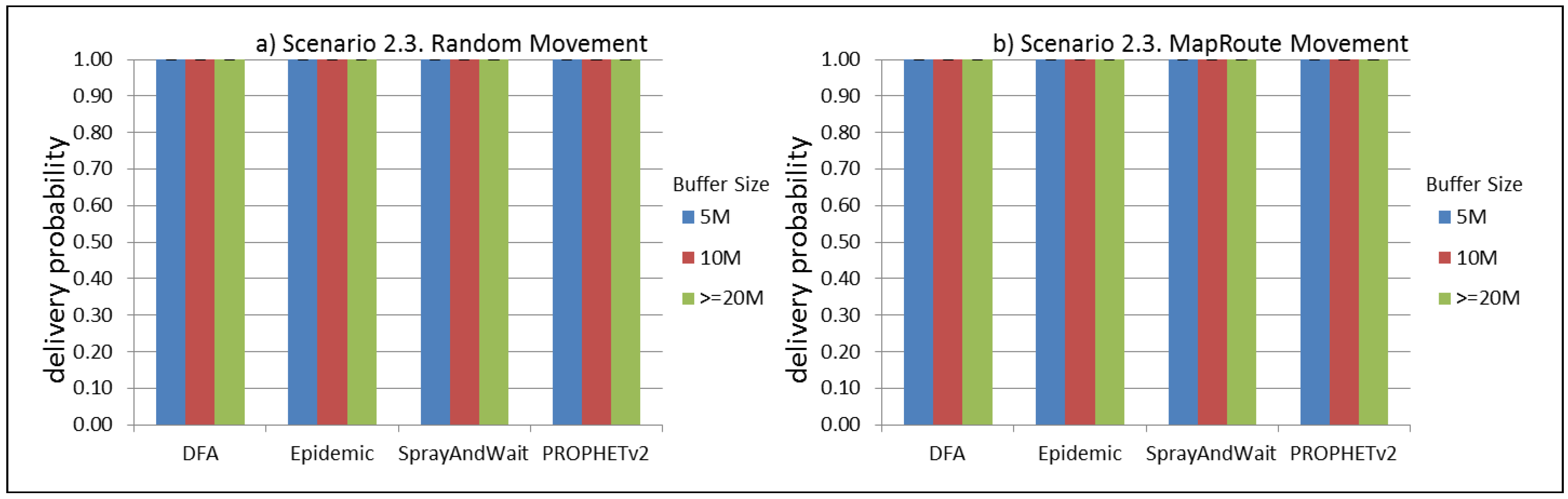

Figure 33.

Results for scenario 2.3. Delivery probabilities with different Buffer Sizes, generating 2 kB messages every 1 to 2 h, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

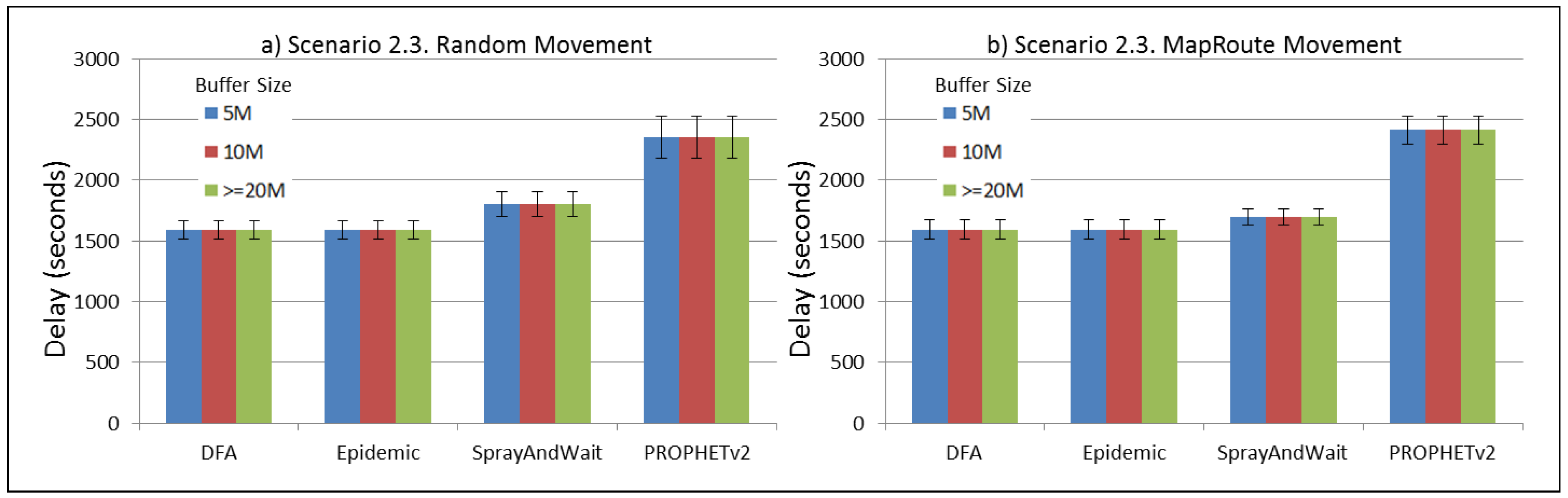

Figure 34.

Results for scenario 2.3. Average delay in seconds with different Buffer Sizes, generating 2 kB messages every 1 to 2 h, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

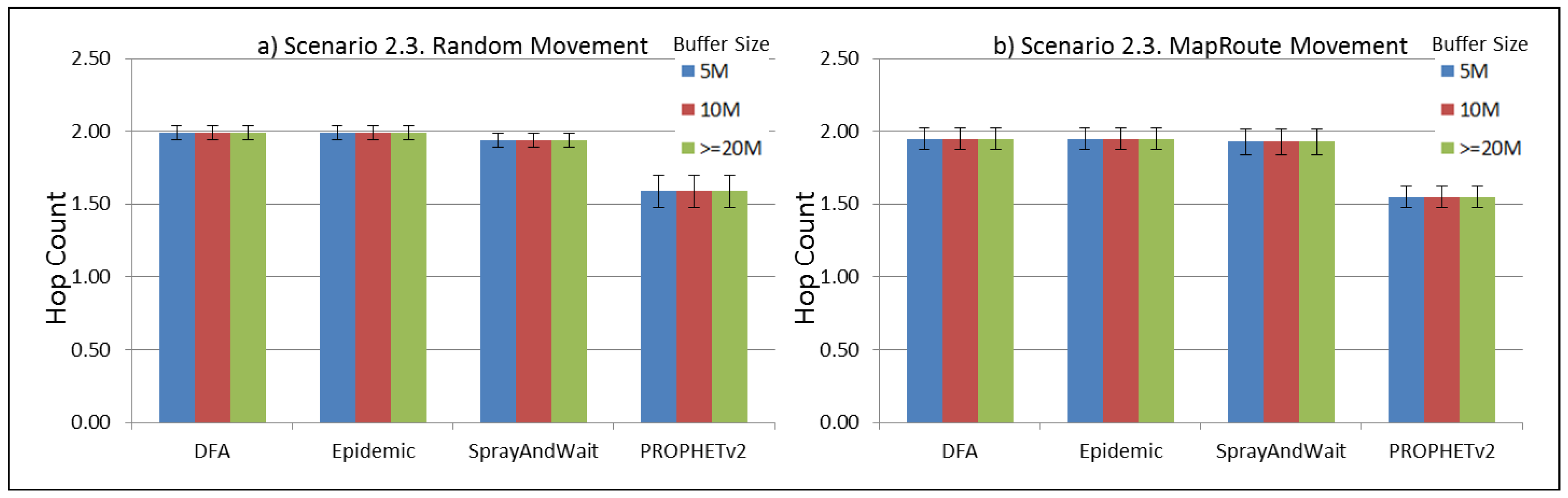

Figure 35.

Results for scenario 2.3. Average hop count with different Buffer Sizes, generating 2 kB messages every 1 to 2 h, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

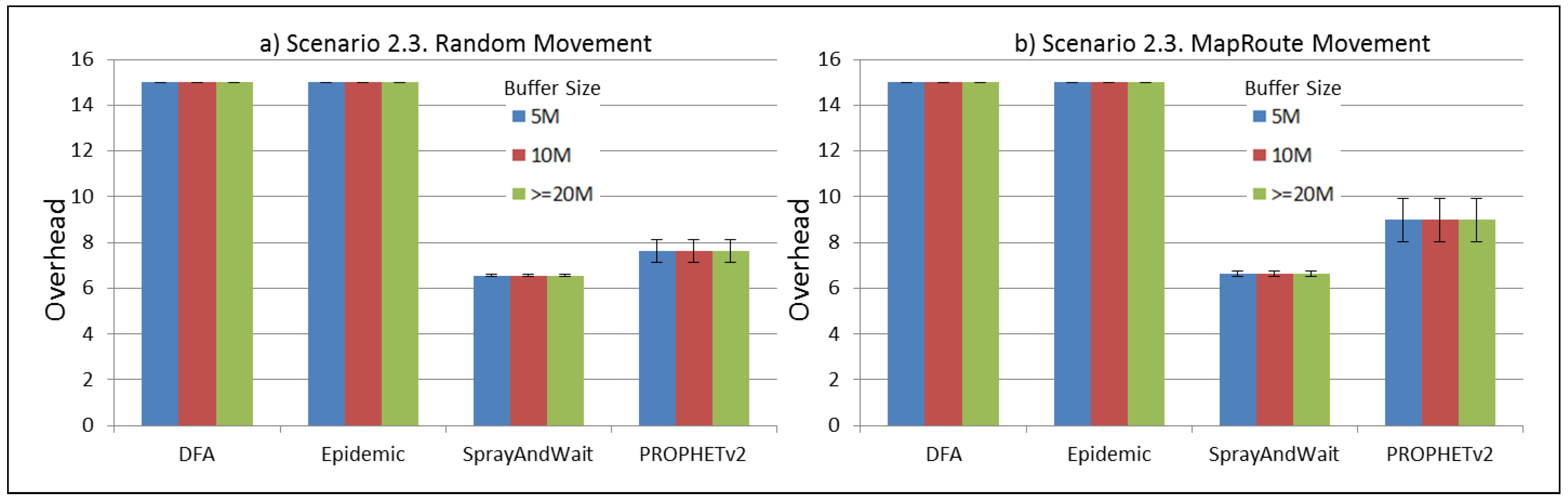

Figure 36.

Results for scenario 2.3. Average overhead with different Buffer Sizes, generating 2 kB messages every 1 to 2 h, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

Figure 37.

Results for scenario 2.4. Delivery probabilities with different Buffer Sizes, generating 2 MB messages every 1 to 2 h, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

Figure 38.

Results for scenario 2.4. Average delay in seconds with different Buffer Sizes, generating 2 MB messages every 1 to 2 h, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

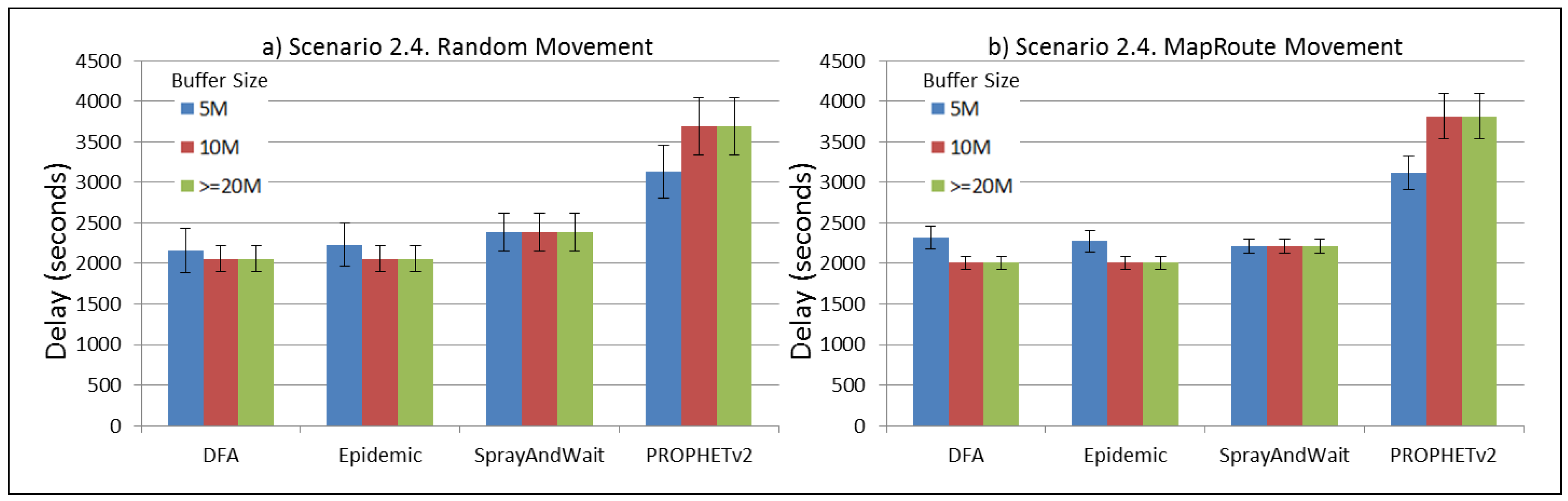

Figure 39.

Results for scenario 2.4. Average hop count with different Buffer Sizes, generating 2 MB messages every 1 to 2 h, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

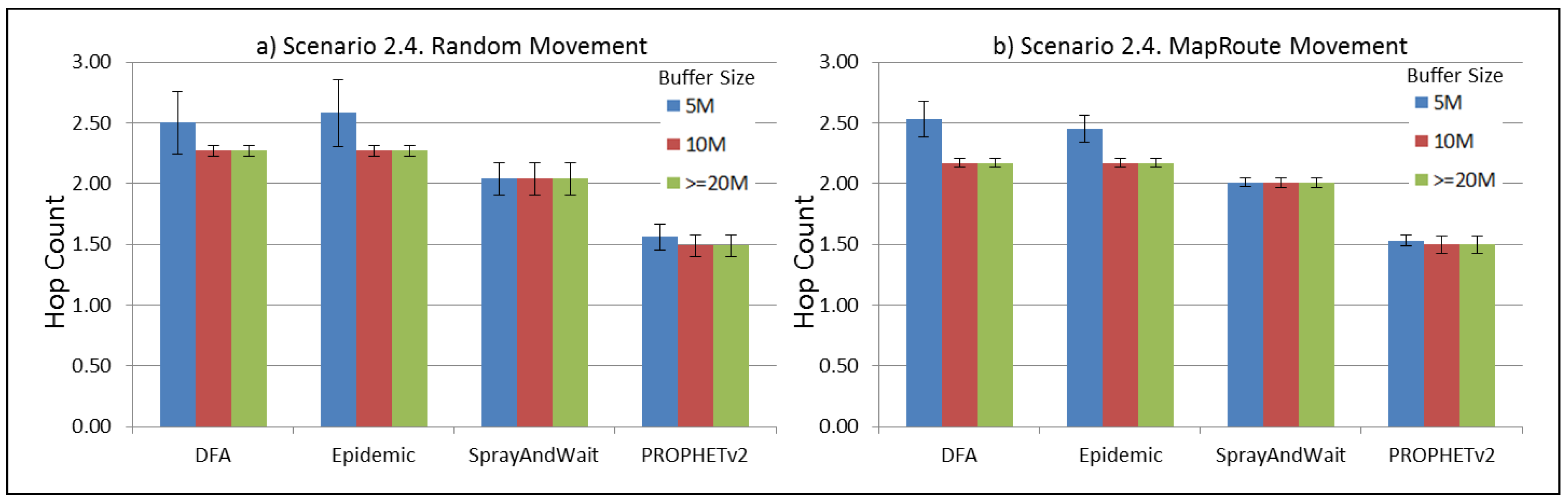

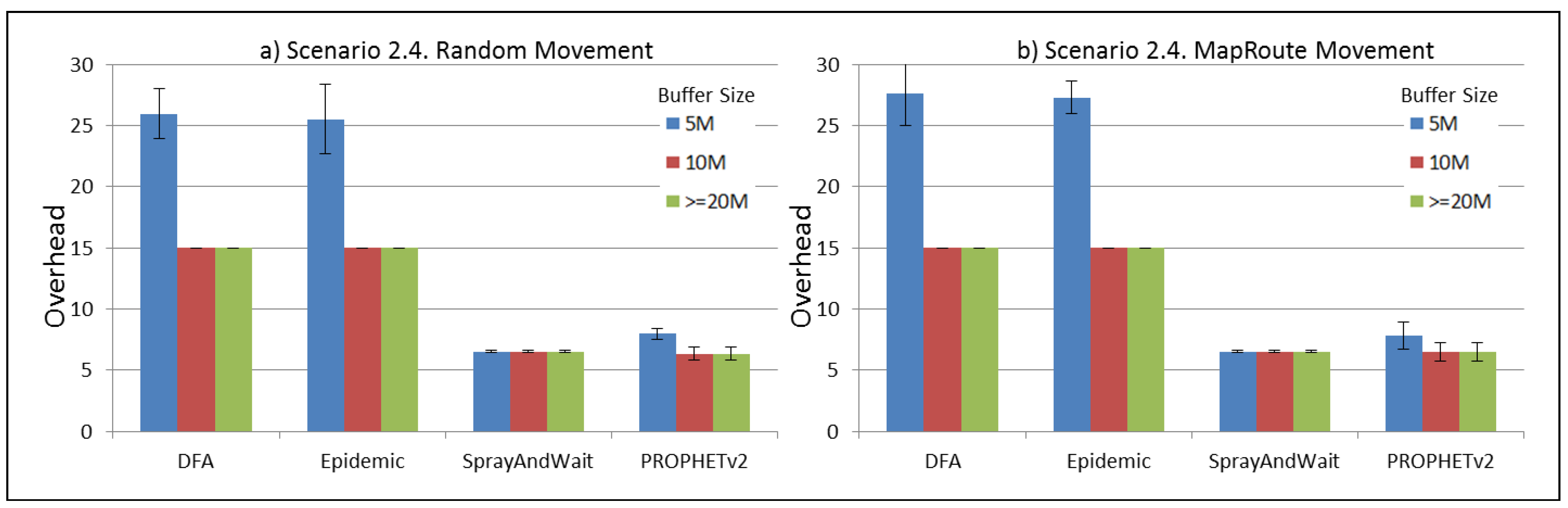

Figure 40.

Results for scenario 2.4. Average overhead with different Buffer Sizes, generating 2 MB messages every 1 to 2 h, (a) MAPs moving randomly on the path; (b) MAPs following the path in only one direction. DFA: Distributed Forwarding Algorithm.

Table 1.

Related protocols.

| Characteristics | Protocols |
|---|---|
| Flooding-based | Epidemic [11], Spray and Wait [12] |
| Social-based | SimBet [15], BUBBLE [13], and PeopleRank [16] |
| Hybrid protocols | PROPHET [9], MPAD [14], RAPID [17], LSF [19], and SMART [18] |

Table 2.

Mathematical model parameters.

| Parameter | Definition |
|---|---|
| N | Set of nodes |
| E | Set of edges |
| P | Set of candidate paths |
| T | Set of discrete time values |
| | Capacity of edge |
| | Storage capacity of node i |
| | Availability probability of edge at time t |
| | Delivery probability of node i through node j at time t |
| | Set of offers or demands from node i at time t |
| t | Discrete time value, where |
| | Time interval duration |
| v | Number of time values since last update |
| | Decision variable: how much data flows through edge at time t |
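
The graph through time described in Figure 5 can be illustrated with a minimal Python sketch built from the parameters of Table 2. It is only an illustration under stated assumptions, not the authors' implementation: the function name `build_time_expanded_graph`, the dictionary layout, and the choice to keep spatial links inside a single time step are all hypothetical, as are the example capacities.

```python
def build_time_expanded_graph(nodes, edges, edge_capacity, storage_capacity, num_steps):
    """Return a dict mapping (tail, head) -> capacity.

    Each endpoint is a (node, t) pair. All names and the layout are
    illustrative assumptions, not taken from the paper.
    """
    arcs = {}
    for t in range(num_steps):
        # Spatial links: data flows from node i to node j during time step t.
        for (i, j) in edges:
            arcs[((i, t), (j, t))] = edge_capacity[(i, j)]
        # Temporal links: data waits in node i's buffer from t to t + 1,
        # so the arc capacity is the storage capacity of node i.
        if t + 1 < num_steps:
            for i in nodes:
                arcs[((i, t), (i, t + 1))] = storage_capacity[i]
    return arcs


# Hypothetical three-node chain: cluster node -> gateway -> access point.
nodes = ["CLN", "GW", "AP"]
edges = [("CLN", "GW"), ("GW", "AP")]
edge_capacity = {("CLN", "GW"): 2, ("GW", "AP"): 4}   # made-up values
storage_capacity = {"CLN": 5, "GW": 50, "AP": 1000}   # made-up values

arcs = build_time_expanded_graph(nodes, edges, edge_capacity, storage_capacity, num_steps=3)
```

With three time steps the sketch yields six spatial arcs and six temporal arcs. The decision variable of Table 2 would then be a flow value attached to each arc, bounded by the capacity stored here.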

Table 3.

Simulation parameters. ICS: Internet Connected Server. MAP: Mobile Access Point.

| Parameter | Definition |
|---|---|
| Gateway | 1 node |
| Sensor Nodes | 5 nodes |
| ICS | 1 node (destination node) |
| Mobile APs | 10 nodes moving along the defined path |
| RandomMvmt | MAPs moving randomly on the path |
| MapRoute | MAPs following the path in only one direction |
| Buffer Size | How much data a node can hold; varied from 5 MB to 1000 MB |
| Message Size | Size of each message; varied from 2 kB to 2 MB |
| Message Interval | How often messages are generated |
| SnWCopies | Number of Spray and Wait copies, set to 8 |
| DFA | Distributed Forwarding Algorithm (our proposal) |

Table 4.

Simulation scenarios. X = 1: Wireless Sensor Network (WSN) nodes in the river; X = 2: WSN nodes all around the map.

| Parameter | Subscenario X.1 | Subscenario X.2 | Subscenario X.3 | Subscenario X.4 |
|---|---|---|---|---|
| Message Size | 2 MB | 2 kB | 2 kB | 2 MB |
| Message Interval | 1–2 min | 1–2 min | 1–2 h | 1–2 h |
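
The scenario numbering used in the figure captions (1.1 through 2.4) follows from combining the two node placements with the four subscenarios of Table 4. The short Python sketch below enumerates that grid. The names `PLACEMENTS`, `SUBSCENARIOS`, and `enumerate_scenarios` are hypothetical and chosen only for illustration; the values are copied from Table 4.

```python
from itertools import product

# X in "X.Y": where the WSN nodes are placed (from the Table 4 caption).
PLACEMENTS = {
    1: "WSN nodes in the river",
    2: "WSN nodes all around the map",
}

# Y in "X.Y": message size and generation interval (from Table 4).
SUBSCENARIOS = {
    1: {"message_size": "2 MB", "message_interval": "1-2 min"},
    2: {"message_size": "2 kB", "message_interval": "1-2 min"},
    3: {"message_size": "2 kB", "message_interval": "1-2 h"},
    4: {"message_size": "2 MB", "message_interval": "1-2 h"},
}


def enumerate_scenarios():
    """Build a dict keyed by scenario label such as '1.3'."""
    scenarios = {}
    for x, y in product(PLACEMENTS, SUBSCENARIOS):
        scenarios[f"{x}.{y}"] = {"placement": PLACEMENTS[x], **SUBSCENARIOS[y]}
    return scenarios


scenarios = enumerate_scenarios()
for label, settings in scenarios.items():
    print(label, settings)
```

Each of the eight scenarios is then run for both movement models in Table 3 (RandomMvmt and MapRoute), which correspond to panels (a) and (b) of the result figures.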