Self-Learning Control for Multi-Agent Consensus

Abstract

1. Introduction

- (1) I propose a self-learning consensus control law for MAS that combines a learning term and an updating term, implemented via a single-step algebraic update with low computational cost.

- (2) Using a Lyapunov function on the stacked consensus error, I establish uniform ultimate boundedness (UUB) under bounded disturbances and provide transparent gain conditions linking the learning intensity, learning interval, and consensus gain.

- (3) Simulations show faster convergence and higher accuracy than non-learning baselines, an order-of-magnitude improvement over traditional algorithms. I also present practical tuning guidelines to help readers implement the scheme.
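
To make the "single-step algebraic update" in contribution (1) concrete, here is a minimal sketch. It assumes the law takes the form "learning term plus updating term", where the learning term scales the input stored one learning interval earlier and the updating term is standard Laplacian consensus feedback. The function name `self_learning_step`, the symbols `alpha` (learning intensity) and `k` (consensus gain), and the 3-agent path graph are illustrative assumptions, not taken from the paper's equations.

```python
import numpy as np

def self_learning_step(u_prev, x, laplacian, alpha, k):
    """One algebraic update of the assumed law u = alpha * u_prev - k * L x.

    u_prev    : input stored one learning interval ago (learning term source)
    x         : current agent states
    laplacian : graph Laplacian of the communication graph
    alpha     : learning intensity (assumed to lie in [0, 1))
    k         : consensus gain
    """
    learning_term = alpha * u_prev          # reuse of the previous input
    updating_term = -k * (laplacian @ x)    # relative-state consensus feedback
    return learning_term + updating_term

# Hypothetical 3-agent undirected path graph 1 - 2 - 3.
L = np.array([[ 1., -1.,  0.],
              [-1.,  2., -1.],
              [ 0., -1.,  1.]])
x = np.array([1.0, 0.0, -1.0])

u_first = self_learning_step(np.zeros(3), x, L, alpha=0.5, k=2.0)
u_second = self_learning_step(u_first, x, L, alpha=0.5, k=2.0)
print(u_first, u_second)
```

Each step costs one matrix-vector product and one scaled addition, with no optimization or model identification, which is the sense in which such an update is low in computational cost.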

2. Preliminary and Problem Formulation

2.1. Agent Dynamics with Disturbance

2.2. Graph and Consensus Error

2.3. Control Objective

3. Self-Learning Control for Multiagent Systems

3.1. Self-Learning Consensus Control Law

3.2. Closed-Loop Disagreement Dynamics

3.3. Theorem and Stability Analysis

3.4. Tuning Guidelines and Practical Notes

- Sequential Tuning Workflow: fix the learning interval according to hardware limits; select the consensus gain for baseline stability; adjust the learning intensity to accelerate convergence.

- Learning intensity : A larger increases prior information usage and accelerates early transients but also increases and sensitivity to . Values in are effective; near 1 may risk peaking if actuators saturate.

- Consensus gain : Boosts damping in the disagreement subspace. Pick to satisfy with a margin; a larger yields a faster decay but may excite saturation.

- Learning interval : choose as one or a few sampling periods so that the learning difference is small; this directly reduces and the ultimate bound (31).

- Saturation: As in our study, learning can cause initial peaks. Reducing at large (variable learning intensity) can weaken saturation; a static conservative choice of already achieves improved speed with low complexity.
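
The tuning workflow above can be tried on a toy problem. The sketch below is not the paper's simulation setup: it assumes single-integrator agents on a 4-agent ring, a bounded sinusoidal disturbance, forward-Euler integration, and the assumed law u(t) = alpha * u(t - tau) - k * L x(t). The names `simulate`, `tail_error`, `alpha`, `k`, and `delay_steps` (the learning interval in sampling periods) are hypothetical. The learning interval and consensus gain are held fixed, and only the learning intensity is swept, with `alpha = 0` serving as a non-learning baseline.

```python
import numpy as np

def simulate(alpha, k=1.0, dt=0.01, delay_steps=1, t_end=20.0):
    """Return the disagreement norm over time under the assumed law."""
    # Laplacian of an undirected 4-agent ring (assumed topology).
    L = np.array([[ 2., -1.,  0., -1.],
                  [-1.,  2., -1.,  0.],
                  [ 0., -1.,  2., -1.],
                  [-1.,  0., -1.,  2.]])
    n_steps = int(round(t_end / dt))
    x = np.array([2.0, -1.0, 0.5, -1.5])   # arbitrary initial states
    u_hist = np.zeros((n_steps, 4))         # stored inputs for the learning term
    disagreement = np.zeros(n_steps)
    for n in range(n_steps):
        t = n * dt
        # Input from one learning interval ago (zero before any history exists).
        u_old = u_hist[n - delay_steps] if n >= delay_steps else np.zeros(4)
        u = alpha * u_old - k * (L @ x)
        u_hist[n] = u
        d = 0.2 * np.sin(t + np.arange(4))  # bounded disturbance (assumed)
        disagreement[n] = np.linalg.norm(x - x.mean())
        x = x + dt * (u + d)                # forward-Euler step
    return disagreement

def tail_error(disagreement):
    """Mean disagreement over the second half of the run."""
    return float(disagreement[len(disagreement) // 2:].mean())

results = {alpha: tail_error(simulate(alpha)) for alpha in (0.0, 0.3, 0.6)}
for alpha, err in results.items():
    print(f"alpha={alpha:.1f}  mean tail disagreement={err:.4f}")
```

In this toy setting the residual disagreement shrinks as `alpha` grows, because for a short learning interval the learning term acts roughly like scaling the consensus gain by 1 / (1 - alpha). The same scaling enlarges the early control effort, which is consistent with the saturation caveat in the list above.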

4. Simulation

4.1. Communication Graph

4.2. Controller and Implementation Details

4.2.1. Self-Learning Control Law

4.2.2. Baselines and Metrics

4.3. Results

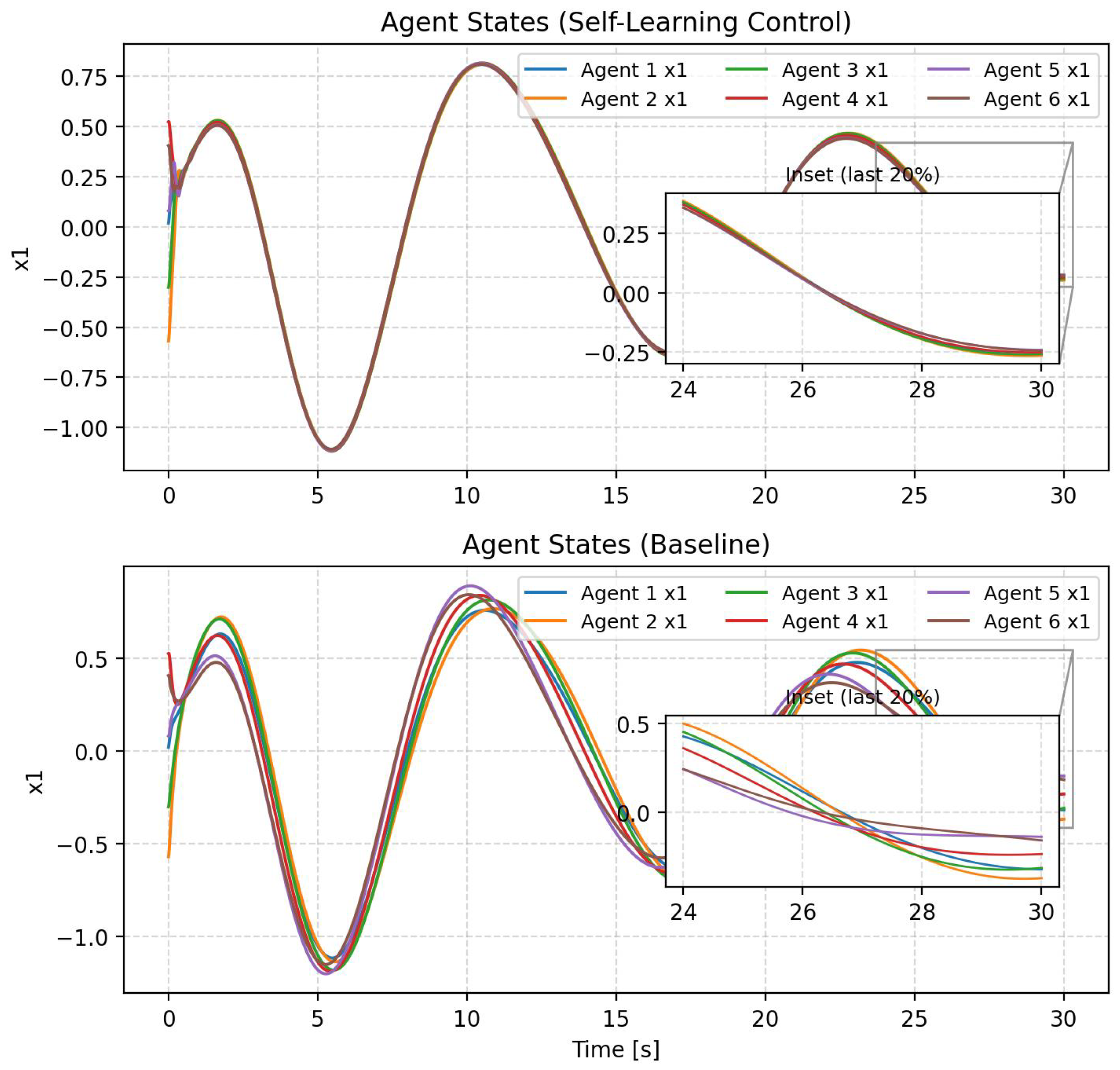

4.3.1. State Trajectories and Cohesion

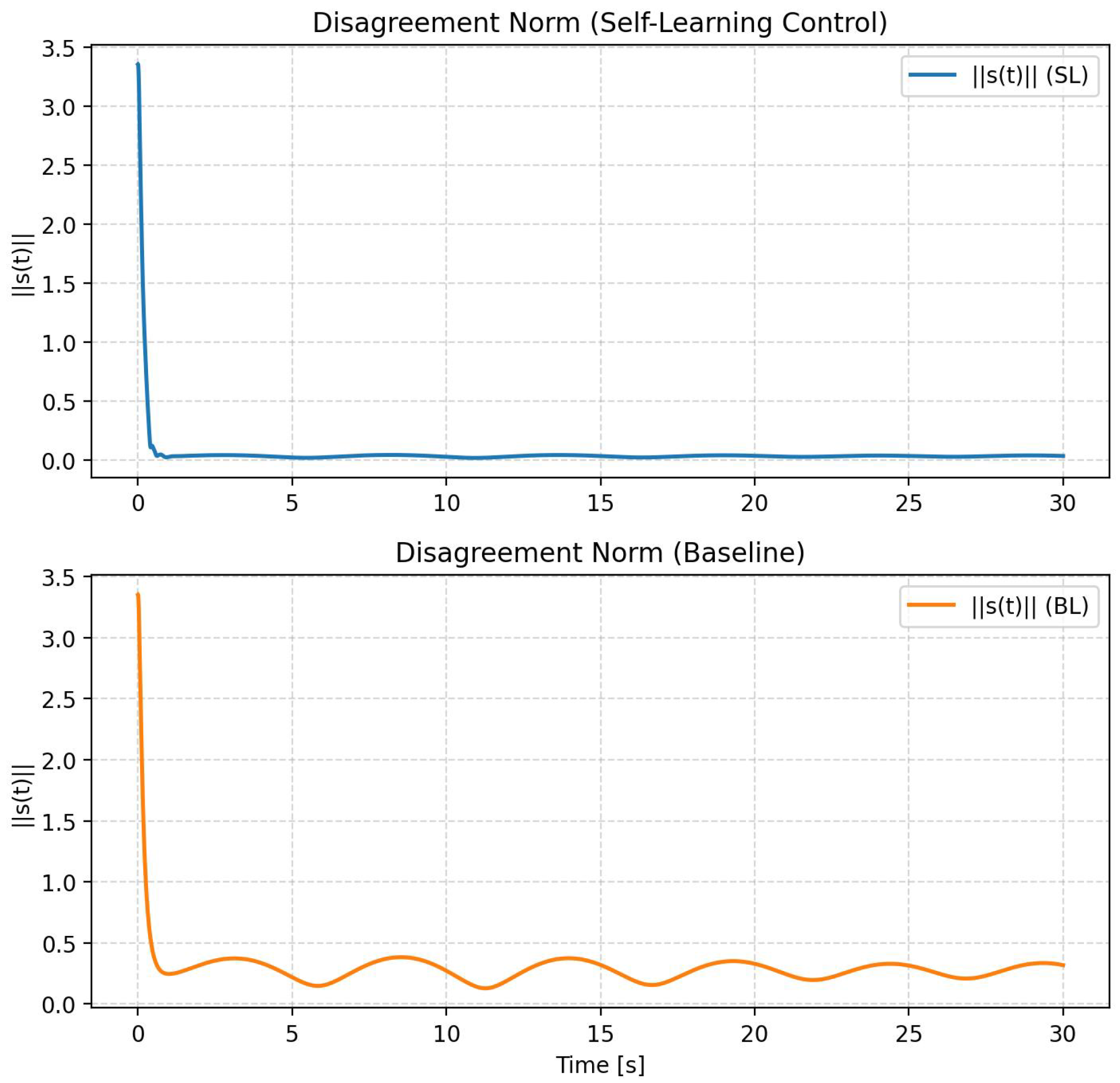

4.3.2. Disagreement Norm

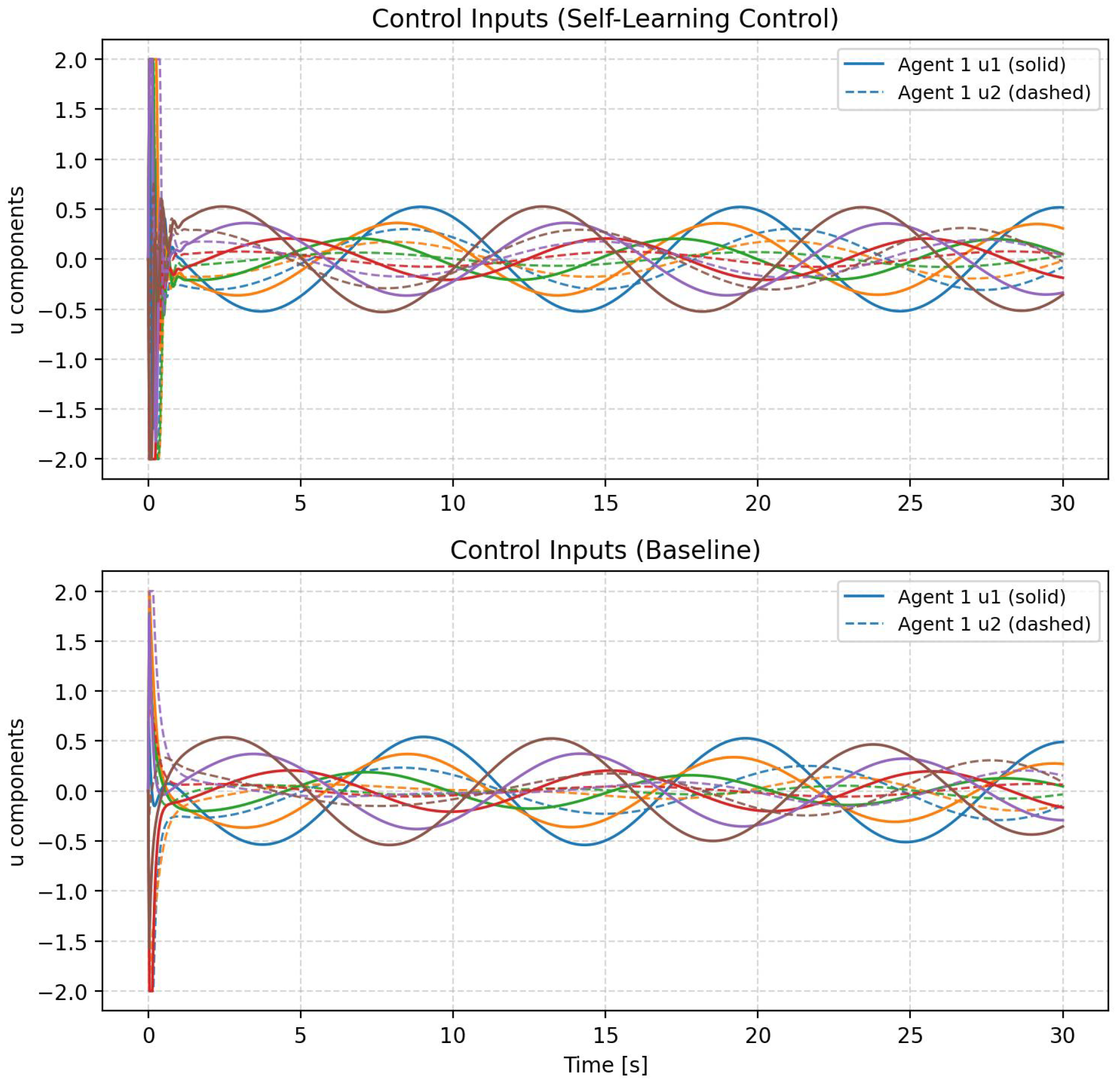

4.3.3. Control Inputs (Raw)

4.3.4. Summary

5. Conclusions

Supplementary Materials

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

| Method | | | | |
|---|---|---|---|---|
| self-learning control | 24.31 | 0.0316 | 0.0297 | 0.0403 |
| BL | 21.79 | 0.318 | 0.2703 | 0.3831 |
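
As a quick arithmetic check on the table, the snippet below divides each BL value by the corresponding self-learning value. The column headers did not survive, so the columns are treated as unlabeled and no meaning is assigned to them; the variable names are illustrative.

```python
# Values copied from the table rows, in column order.
self_learning = [24.31, 0.0316, 0.0297, 0.0403]
baseline = [21.79, 0.318, 0.2703, 0.3831]

# Ratio > 1 means the BL value is larger than the self-learning value.
ratios = [b / s for b, s in zip(baseline, self_learning)]
for col, r in enumerate(ratios, start=1):
    print(f"column {col}: BL / self-learning = {r:.2f}")
```

The last three columns differ by a factor of roughly 9 to 10, while the first column is slightly larger for the self-learning controller.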

© 2026 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Zhang, C. Self-Learning Control for Multi-Agent Consensus. AppliedMath 2026, 6, 37. https://doi.org/10.3390/appliedmath6030037