WB Score: A Novel Methodology for Visual Classifier Selection in Increasingly Noisy Datasets

Abstract

1. Introduction

2. Related Works

3. Data and Methods

3.1. Datasets Used

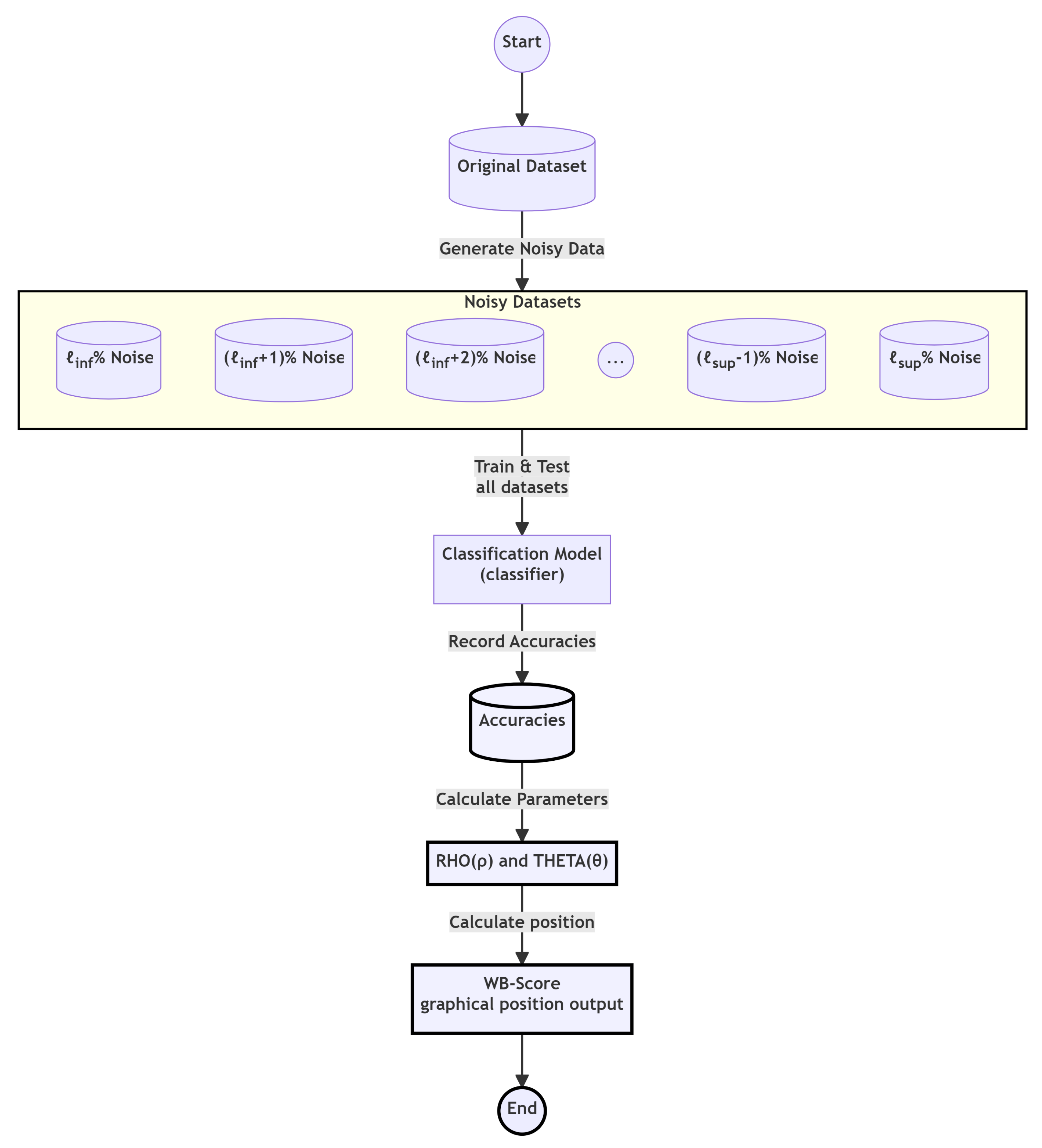

3.2. WB Score Explained

- (i) Vectors with an angle below 45° (Ac > A0) generally represent algorithms that are robust on noisy datasets;

- (ii) Vectors with an angle above 45° (A0 > Ac) generally represent algorithms with a strong response on noiseless datasets;

- (iii) Vectors with an angle close to 45° (A0 ≈ Ac) represent algorithms with a balanced response between noisy and noiseless datasets.
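
The three cases above can be made concrete with a short sketch. It assumes each classifier is summarized by the point (Ac, A0), where A0 is the accuracy on the original data (the quantity behind the Strong Noiseless Response ranking) and Ac is a summary accuracy under noise whose exact definition is not given in this section. The angle is measured from the Ac axis, θ = atan(A0/Ac), which agrees with the angle column of the results tables to within rounding of the listed accuracies. The √2 normalization of the vector length, the 2° tolerance for "balanced", and the function names `wb_vector`, `response_type` and `rankings` are hypothetical choices for illustration, not the authors' definitions.

```python
import math
from typing import Dict, List, Tuple


def wb_vector(a0: float, ac: float) -> Tuple[float, float]:
    """Return (normalized length, angle in degrees) of the vector (Ac, A0).

    Assumptions (illustrative only):
    - the angle is measured from the Ac axis, so theta = atan(A0 / Ac);
    - the length is divided by sqrt(2) so that A0 = Ac = 1 scores 1.0.
    """
    length = math.hypot(ac, a0) / math.sqrt(2)
    angle = math.degrees(math.atan2(a0, ac))
    return length, angle


def response_type(a0: float, ac: float, tol_deg: float = 2.0) -> str:
    """Label a classifier 'balanced', 'noiseless' or 'noisy' (tol_deg is hypothetical)."""
    _, angle = wb_vector(a0, ac)
    if abs(angle - 45.0) <= tol_deg:
        return "balanced"
    # Above 45 degrees A0 dominates (noiseless strength); below it Ac dominates.
    return "noiseless" if angle > 45.0 else "noisy"


def rankings(results: Dict[str, Tuple[float, float]],
             tol_deg: float = 2.0) -> Dict[str, List[str]]:
    """Rank classifiers given {name: (A0, Ac)} in the three ways used by the tables."""
    names = list(results)
    vec = {n: wb_vector(*results[n]) for n in names}
    # Overall performance: longest vector first.
    overall = sorted(names, key=lambda n: vec[n][0], reverse=True)
    # Strong noiseless response: highest A0 first (stable sort keeps input order on ties).
    noiseless = sorted(names, key=lambda n: results[n][0], reverse=True)
    # Balanced response: classifiers within tol_deg of 45 degrees, closest first.
    balanced = sorted(
        (n for n in names if abs(vec[n][1] - 45.0) <= tol_deg),
        key=lambda n: abs(vec[n][1] - 45.0),
    )
    return {"overall": overall, "noiseless": noiseless, "balanced": balanced}
```

With the (A0, Ac) pairs from the first A0/Ac results table, `rankings` returns the same Overall Performance and Strong Noiseless Response orderings as the first ranking table, and KNN and NB as the balanced classifiers.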

3.3. Selected Classifiers for Testing

3.4. The WEKA Software

3.5. Fine Tuning with Grid Search

3.6. Introduced Noises

- Multiplicative: random variations proportional to the original value, with the noise centered around zero;

- Additive: random variations added to the original value, centered around the mean value;

- Both multiplicative and additive: the two noises are summed and divided by two, so the result remains within the desired noise level.
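
The three noise types are described only in words, so the following is a minimal sketch under stated assumptions: perturbations are drawn uniformly from [-level, level], the multiplicative case scales each value by (1 + e), the additive case shifts it by e times the column mean, and the combined case averages the two noisy values. The function name `add_noise`, the uniform distribution, the use of the column mean as the additive scale, and the 5 % to 100 % sweep of 20 levels are all hypothetical choices, not the authors' implementation.

```python
import random
from statistics import mean
from typing import List


def add_noise(column: List[float], level: float, kind: str,
              rng: random.Random) -> List[float]:
    """Return a noisy copy of one numeric attribute column.

    `level` is the noise fraction (0.1 means 10 %). Hypothetical formulas:
      multiplicative: x * (1 + e1), e1 ~ U(-level, level), zero-centred relative change
      additive:       x + e2 * mu,  e2 ~ U(-level, level), mu = column mean
      both:           (multiplicative + additive) / 2
    """
    if kind not in ("multiplicative", "additive", "both"):
        raise ValueError(f"unknown noise kind: {kind}")
    mu = mean(column)
    noisy = []
    for x in column:
        e1 = rng.uniform(-level, level)
        e2 = rng.uniform(-level, level)
        mult = x * (1 + e1)
        add = x + e2 * mu
        if kind == "multiplicative":
            noisy.append(mult)
        elif kind == "additive":
            noisy.append(add)
        else:
            # Averaging keeps |result - x| within the mean of the two individual bounds.
            noisy.append((mult + add) / 2)
    return noisy


# Hypothetical sweep producing 20 noisy copies (5 %, 10 %, ..., 100 %).
levels = [round(0.05 * k, 2) for k in range(1, 21)]
```

Averaging rather than summing the two perturbations is what keeps the combined deviation bounded by level · (|x| + |mean|) / 2, which is one reading of "remains within the desired noise level".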

3.7. Accuracy Calculation

- (i) The classifier was trained on the original dataset;

- (ii) The classifier was tested on the original dataset;

- (iii) The classifier was tested on each of the 20 datasets with different noise levels, and the accuracies were recorded;

- (iv) The classifier's accuracy was plotted as a function of the noise level.
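
Steps (i) to (iv) can be sketched as a small evaluation loop. The paper runs its classifiers in WEKA; here a toy nearest-centroid classifier stands in for them, and any object with `fit` and `predict` methods would do. `NearestCentroid`, `multiplicative_noise` and `noise_curve` are hypothetical names, and the zero-centred multiplicative noise is an assumed noise model. The loop returns the raw accuracy curve for plotting; how A0 and Ac are derived from that curve is not stated in this section, so it is not computed here.

```python
import random
from typing import Dict, List, Sequence, Tuple

Row = List[float]


class NearestCentroid:
    """Toy stand-in for a WEKA classifier (hypothetical; any fit/predict object works)."""

    def fit(self, X: Sequence[Row], y: Sequence[int]) -> "NearestCentroid":
        sums: Dict[int, Row] = {}
        counts: Dict[int, int] = {}
        for row, label in zip(X, y):
            acc = sums.setdefault(label, [0.0] * len(row))
            for j, v in enumerate(row):
                acc[j] += v
            counts[label] = counts.get(label, 0) + 1
        self.centroids = {c: [s / counts[c] for s in acc] for c, acc in sums.items()}
        return self

    def predict(self, X: Sequence[Row]) -> List[int]:
        def dist2(a: Row, b: Row) -> float:
            return sum((p - q) ** 2 for p, q in zip(a, b))
        return [min(self.centroids, key=lambda c: dist2(row, self.centroids[c])) for row in X]


def accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def multiplicative_noise(X: Sequence[Row], level: float, rng: random.Random) -> List[Row]:
    """Perturb every attribute by a zero-centred relative amount (assumed noise model)."""
    return [[v * (1 + rng.uniform(-level, level)) for v in row] for row in X]


def noise_curve(clf, X: Sequence[Row], y: Sequence[int], levels: Sequence[float],
                seed: int = 0) -> Tuple[float, List[Tuple[float, float]]]:
    rng = random.Random(seed)
    clf.fit(X, y)                        # (i) train on the original dataset
    a0 = accuracy(y, clf.predict(X))     # (ii) test on the original dataset
    curve = []
    for level in levels:                 # (iii) test on one noisy copy per level
        noisy_X = multiplicative_noise(X, level, rng)
        curve.append((level, accuracy(y, clf.predict(noisy_X))))
    return a0, curve                     # (iv) plot `curve` with any plotting tool
```

Testing on the training data in steps (i) and (ii) mirrors the procedure as written; the noisy copies are the only unseen inputs.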

4. Results and Discussion

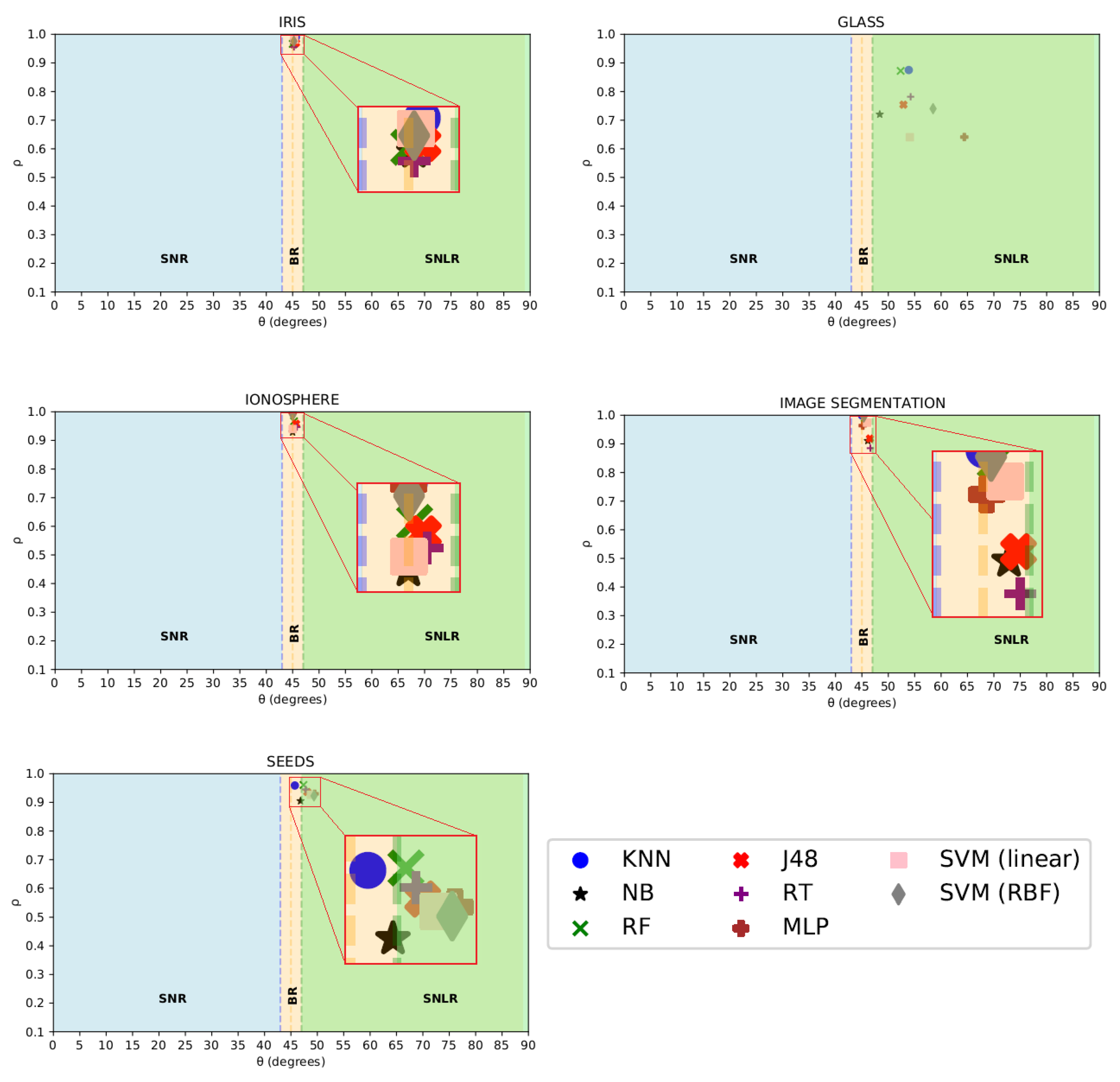

4.1. Classic Datasets

4.2. Customized Flooding Dataset

4.3. Comparison of Classifier Selection Methods

5. Conclusions

6. Future Works

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Dataset | Description | Instances | Attributes |
|---|---|---|---|
| IRIS | Classification of iris flowers into three species: setosa, versicolor, and virginica. | 150 | 4 |
| GLASS | Classification of glass into six types: building windows (float-processed), building windows (non-float-processed), vehicle windows (float-processed), containers, tableware, and headlamps. | 214 | 9 |
| IONOSPHERE | Classification of radar returns from the ionosphere into two classes: good and bad. | 351 | 34 |
| IMAGE SEGMENTATION | Instances drawn randomly from a database of 7 outdoor images and hand-segmented to assign a class to every pixel. | 210 | 19 |
| SEEDS | Classification of wheat kernels into three varieties: Kama, Rosa, and Canadian. | 210 | 7 |
| FLOODINGS SP | Classification of flooding events in São Paulo between 2015 and 2016. | 825 | 6 |

| Classifier Name | Acronym | Classification Type |
|---|---|---|
| Naive Bayes | NB | Probabilistic (Bayes' theorem) |
| Support Vector Machine (linear kernel) | SVM (linear) | Linear |
| C4.5 (Java implementation) | J48 | Nonlinear |
| K-Nearest Neighbors | KNN | Nonlinear |
| Multilayer Perceptron | MLP | Nonlinear |
| Random Forest | RF | Nonlinear |
| Random Tree | RT | Nonlinear |
| Support Vector Machine (radial basis function kernel) | SVM (RBF) | Nonlinear |

| Classifier | Fine-Tuned Parameters | Search Space | Best Configuration (IRIS, GLASS, IONOSPHERE, SEGMENTATION, SEEDS, FLOODINGS SP) |
|---|---|---|---|
| KNN | KNN; distanceWeighting | {3, 5, 7, 9, 11}; {None, 1/distance, 1-distance} | 11, 3, 7, 3, 3, 3; 1/distance (all) |
| NB | useKernelEstimator; useSupervisedDiscretization | {0, 1}; {0, 1} | 1, 0, 1, 0, 0, 0; 0, 1, 0, 1, 1, 1 |
| RF | numIterations; maxDepth | {10, 20, …, 190, 200}; {1, 2, 3, …, 8, 9, 10} | 200, 30, 60, 200, 80, 200; 3, 7, 6, 10, 10, 7 |
| J48 | minNumObj; unpruned | {1, 3, 5, 7, 9, 11}; {0, 1} | 3, 5, 5, 5, 1, 1; 1 (all) |
| RT | breakTiesRandomly; maxDepth | {0, 1}; {1, 2, 3, …, 8, 9, 10} | 1, 1, 0, 1, 0, 0; 2, 6, 6, 5, 5, 9 |
| MLP | learningRate; momentum | {0.1, 0.2, …, 0.5}; {0.1, 0.2, …, 0.5} | 0.5, 0.5, 0.4, 0.5, 0.5, 0.5; 0.5, 0.2, 0.1, 0.1, 0.1, 0.4 |
| SVM (linear) | cost; coef0 | {1, 10, 100, 1000, 10000}; {0, 1} | 10000, 10, 100, 1, 100, 100; 1 (all) |
| SVM (RBF) | cost; gamma | {1, 10, 100, 1000, 10000}; {0.01, 0.1, 1} | 10, 10, 10, 10000, 10000, 1; 0.01, 1, 0.1, 10000, 0.01, 0.1 |
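
The fine-tuning in Section 3.5 is an exhaustive grid search over the search spaces in the table above. The paper performs it in WEKA; the sketch below only illustrates the technique: it expands each search space into its Cartesian product and keeps the best-scoring configuration. The `score` callback is a hypothetical stand-in for whatever validation accuracy is used, and `grid`, `grid_search` and the `*_SPACE` dictionaries are illustrative names, with parameter names copied from the table.

```python
from itertools import product
from typing import Any, Callable, Dict, List, Optional, Sequence, Tuple

Config = Dict[str, Any]


def grid(space: Dict[str, Sequence[Any]]) -> List[Config]:
    """Expand {parameter: candidate values} into every combination (Cartesian product)."""
    names = list(space)
    return [dict(zip(names, values)) for values in product(*(space[n] for n in names))]


def grid_search(space: Dict[str, Sequence[Any]],
                score: Callable[[Config], float]) -> Tuple[Optional[Config], float]:
    """Evaluate every configuration and keep the highest-scoring one.

    `score` is a hypothetical callback, e.g. the validation accuracy of the
    classifier trained with that configuration; ties keep the first one found.
    """
    best_cfg: Optional[Config] = None
    best_score = float("-inf")
    for cfg in grid(space):
        s = score(cfg)
        if s > best_score:
            best_cfg, best_score = cfg, s
    return best_cfg, best_score


# Search spaces copied from the table (parameter names as listed there).
KNN_SPACE = {"KNN": [3, 5, 7, 9, 11],
             "distanceWeighting": ["None", "1/distance", "1-distance"]}
RF_SPACE = {"numIterations": list(range(10, 201, 10)), "maxDepth": list(range(1, 11))}
SVM_RBF_SPACE = {"cost": [1, 10, 100, 1000, 10000], "gamma": [0.01, 0.1, 1]}
```

These spaces contain 15, 200 and 15 configurations respectively; every extra parameter multiplies the count, which is why grid search becomes computationally expensive for large search spaces.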

| Algorithm | A0 | Ac | Overall Performance | Angle (°) | Accuracy Drop % |
|---|---|---|---|---|---|
| KNN | 0.971 | 0.946 | 0.958 | 45.74 | 6.78 |
| NB | 0.933 | 0.875 | 0.904 | 46.82 | 9.40 |
| RF | 1 | 0.920 | 0.960 | 47.38 | 15.05 |
| J48 | 0.985 | 0.884 | 0.936 | 48.10 | 20.49 |
| RT | 0.990 | 0.897 | 0.945 | 47.80 | 19.36 |
| MLP | 1 | 0.854 | 0.929 | 49.49 | 28.72 |
| SVM (linear) | 0.985 | 0.863 | 0.926 | 48.78 | 24.85 |
| SVM (RBF) | 0.990 | 0.849 | 0.922 | 49.37 | 27.70 |

| Rank # | Overall Performance | Strong Noiseless Response | Balanced Response |
|---|---|---|---|
| 1 | RF | RF | KNN |
| 2 | KNN | MLP | NB |
| 3 | RT | RT | |
| 4 | J48 | SVM (RBF) | |
| 5 | MLP | J48 | |
| 6 | SVM (linear) | SVM (linear) | |
| 7 | SVM (RBF) | KNN | |
| 8 | NB | NB | |

| Algorithm | A0 | Ac | Overall Performance | Angle (°) | Accuracy Drop % |
|---|---|---|---|---|---|
| KNN | 0.975 | 0.958 | 0.967 | 45.49 | 3.74 |
| NB | 0.886 | 0.839 | 0.862 | 46.56 | 6.37 |
| RF | 0.961 | 0.923 | 0.942 | 46.15 | 6.41 |
| J48 | 0.941 | 0.867 | 0.905 | 47.36 | 10.33 |
| RT | 0.927 | 0.864 | 0.896 | 47.00 | 9.49 |
| MLP | 0.827 | 0.822 | 0.825 | 45.17 | 1.55 |
| SVM (linear) | 0.717 | 0.717 | 0.717 | 45.00 | 0 |
| SVM (RBF) | 0.884 | 0.885 | 0.885 | 44.98 | 0 |

| Rank # | Overall Performance | Strong Noiseless Response | Balanced Response |
|---|---|---|---|
| 1 | KNN | KNN | SVM (RBF) |
| 2 | RF | RF | SVM (linear) |
| 3 | J48 | J48 | MLP |
| 4 | RT | RT | KNN |
| 5 | SVM (RBF) | NB | RF |
| 6 | NB | SVM (RBF) | NB |
| 7 | MLP | MLP | RT |
| 8 | SVM (linear) | SVM (linear) | J48 |

| Method | Pros | Cons |
|---|---|---|
| WB Score Methodology | Explicitly addresses noise-related challenges; emphasizes robustness to noise and efficient noise handling; uses a visually intuitive graph to represent performance. | The specifics of its noise robustness and noise handling are not detailed. |
| Ensemble Learning | Robustness through the combination of multiple classifiers; effective noise reduction. | Increased computational complexity; limited insight into individual classifier performance. |
| Grid Search with Cross-Validation | Systematic exploration of the hyperparameter space; can find optimal configurations. | Computationally expensive for large search spaces. |
| Performance-Based Selection | Prioritizes the best-performing classifiers; evaluates classifiers on a validation set. | Assumes consistent noise levels between training and validation data. |
| Algorithm Selection Heuristics | Guided by, and tailored to, dataset characteristics. | May not capture subtle noise patterns. |
| Algorithm Ranking Based on Statistical Tests | Ranks classifiers by performance; uses statistical tests for significance. | May not fully capture noise-related challenges. |
| Portfolio Selection | Optimizes resource allocation based on historical performance; addresses classifier performance over time. | Requires consideration of noise and performance history. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Billa, W.S.; Negri, R.G.; Santos, L.B.L. WB Score: A Novel Methodology for Visual Classifier Selection in Increasingly Noisy Datasets. Eng 2023, 4, 2497-2513. https://doi.org/10.3390/eng4040142
