1. Introduction

Accurate localization is critical for efficient operations and precise control in aerial robotics, particularly in multi-UAV systems where collaborative tasks, formation flying, and mission execution depend on it. However, achieving reliable localization remains challenging in GNSS-denied environments [1]. To overcome this, researchers have investigated multimodal sensor fusion approaches. Among these, visual-inertial odometry (VIO) has emerged as a widely studied method that integrates camera and IMU data for relative motion estimation and localization [2,3].

VIO operates by capturing environmental images through cameras to extract and track visual features, while IMUs measure acceleration and angular velocity to estimate pose changes. Despite its advantages, VIO suffers from two primary limitations [4]. First, its performance heavily depends on visual conditions: feature extraction becomes unreliable in texture-sparse areas, and sudden environmental changes introduce tracking errors, degrading localization accuracy. Second, the computational complexity of real-time image processing and sensor fusion strains the limited processing capabilities of UAVs, further restricting accuracy and practicality [5,6].

To address these challenges in complex environments, multi-UAV collaborative localization methods have been developed. These approaches are broadly classified into centralized and distributed systems based on data processing strategies. Centralized methods rely on transmitting all UAV-collected data to a ground station or central node for unified computation. However, they require stable network connectivity and high-performance hardware at the central node, resulting in scalability and flexibility limitations. In contrast, distributed systems eliminate central nodes by enabling direct communication between neighboring UAVs, offering enhanced robustness and adaptability for dynamic environments. This advantage has made distributed methods increasingly prevalent in multi-UAV applications [7,8].

Current VIO-based collaborative localization methods often improve accuracy by sharing environmental characteristics observed across UAV clusters. While effective, such strategies impose high communication bandwidth requirements, which become problematic as UAV numbers or environmental complexity increases. Alternative approaches using pre-deployed UWB anchors enhance localization through anchor-based distance measurements. Nevertheless, these methods lack flexibility due to their dependence on carefully calibrated anchor infrastructure, making them impractical for unknown or dynamic environments where anchor deployment is costly or infeasible [9,10].

To address these challenges and meet the demands for low-cost, lightweight design, and real-time performance, this paper proposes a novel distributed anchor-free visual-inertial-UWB-magnetic cooperative localization system (DTVIRM-Swarm) for multi-UAVs based on the sliding window extended Kalman filter framework. Unlike existing distributed SLAM systems such as COVINS-G [11] and D2SLAM, which require significant communication bandwidth for map sharing, our approach leverages direct UWB ranging between UAVs without relying on pre-deployed anchors, achieving higher communication efficiency. Compared to COO-VIR [12], which still requires static UWB anchors, our system offers greater flexibility in unknown environments.

In this system, UAVs measure mutual distances using UWB technology without relying on UWB anchors. To fully utilize UWB measurements, multiple UWB keyframes are incorporated into the sliding window, and an adaptive adjustment method for UWB filtering errors based on chi-squared detection is employed to ensure robustness and real-time performance. Additionally, to further enhance accuracy, a visual observation model based on pose-only (PO) theory is adopted, improving the system’s adaptability to challenging visual environments [13,14].

The contributions of this paper are as follows:

Distributed Anchor-Free Architecture with Efficient Initialization: Proposes a distributed anchor-free cooperative localization framework for multi-UAV swarms, eliminating the reliance on central nodes or pre-deployed anchors. Integrates ranging, geomagnetic, and MIMU data to develop an MDS-MAP initialization method, which addresses the non-convex optimization problem in ranging-aided SLAM and enables fast and accurate absolute pose initialization of the swarm, outperforming traditional GTSAM-based optimization methods by 40.6% in positioning accuracy and reducing computation time by over 80%.

Optimized Sensor Measurement Processing: Targets the noise and discontinuity of UWB dynamic measurements, presents an adaptive adjustment method based on chi-squared detection, and fuses multiple UWB keyframes to enhance robustness. Adopts pose-only (PO) theory for visual measurement modeling, decouples 3D feature reconstruction, and achieves a balance between positioning accuracy and computational efficiency, reducing the computational burden compared to traditional VIO systems.

Covariance Intersection (CI) for Consistent Measurement Fusion: Incorporates the CI method into UWB measurement fusion to address the challenge of unknown correlations between state estimates from different UAVs. This ensures consistent and robust state estimation even in dynamic environments with frequent communication delays and packet losses, enhancing the overall reliability of the cooperative localization system.

Tightly-Coupled Multi-Sensor Fusion Framework: Constructs a sliding window EKF to fuse inertial, visual, UWB ranging, and geomagnetic data while explicitly modeling the position uncertainty of neighboring nodes. The framework maintains stable operation even in vision-deprived scenarios, breaking through the limitation of traditional methods that rely on continuous visual input and improving adaptability to complex environments. Compared to SOTA methods like VINS-Mono, OpenVINS, and SuperVINS, our system achieves an average positioning error reduction of 38.3%, 40.8%, and 29.7% respectively.

The structure of the paper is as follows. Section 2 presents a literature review. Section 3 introduces the proposed algorithm and the detailed mathematical model of collaborative localization. Section 4 presents simulation and real-world experiments and analyzes the results. Finally, Section 5 concludes the paper.

2. Related Work

In UAV collaborative localization, a key issue is the observation of relative positions between UAVs, and many methods have been proposed to address it. Marker-based visual mutual observation methods mount markers on the drones, for example ultraviolet LEDs observed by ultraviolet-sensitive cameras, to perform relative positioning [15]. Markerless visual mutual observation methods often rely on convolutional neural networks (CNNs) [16,17,18], which use learning-based techniques to extract relative distances but are easily affected by changes in target appearance and in the environment, and therefore cannot provide accurate relative estimates.

Multi-robot SLAM methods can use map merging and inter-robot loop closure to obtain relative poses. This approach requires communicating a large amount of data and is not suitable for drone platforms with limited computational performance [19]. 3D LiDAR and UWB can also measure relative distances directly. In [20], 3D LiDAR, fisheye camera, and UWB data are fused to track drones flying above LiDAR-equipped unmanned ground vehicles (UGVs). In [21], a distributed LiDAR-inertial group range measurement method was proposed, which uses the reflectivity values in LiDAR data to detect cooperating drones directly; however, using LiDAR as a relative position observation sensor is costly. In [22], a relative positioning method for micro drones based on VIO and LiDAR localization was proposed. Slave drones use LiDAR to observe the relative position and distance of the master drone and integrate these observations with VIO to improve their positioning accuracy. However, this method requires a high-precision master drone node; if the master node is damaged, the entire drone cluster cannot operate normally.

To address substantial positioning errors in GNSS-challenged environments, particularly when visual features are sparse, researchers have proposed several methods. For single-drone positioning, ref. [23] fuses data from static UWB anchors, LiDAR odometry, IMU, and VIO to improve positioning accuracy in GNSS-challenged environments; in emergency situations, however, placing static UWB anchors in the area is not feasible. In [24], a multi-sensor framework is proposed that uses ultra-wideband (UWB) technology and visual-inertial odometry (VIO) to provide robust, low-drift positioning. In [25], a learning-based drone positioning method is proposed that fuses vision, IMU, and UWB sensors, combining VIO and UWB branches to predict the global pose. Both of these methods still require multiple ground UWB anchors to be placed in advance.

For multi-drone positioning, ref. [26] proposed a method that integrates UWB and VIO for collaborative positioning of two drones, and ref. [27] proposed a method for distributed formation estimation in large drone clusters. In [8], a distributed collaborative SLAM system was proposed that manages near-field estimation and integrates multiple sensors and map data to achieve accurate near-field relative state estimation and globally consistent far-field trajectory estimation. In [28], the authors fused CNN detection results with UWB data and VIO for relative positioning in drone clusters. Ref. [12] fuses data from static UWB anchors, mutual ranging between drones, IMU, and VIO to improve positioning accuracy in GNSS-challenged environments. However, this active vision-based approach cannot cope with urgent tasks.

The authors of [29] focused on collaborative localization of UGV and UAV teams; their method relied mainly on UWB and VIO data and used 3D LiDAR detection during initialization. The authors of [30] used a heterogeneous team of LiDAR-carrying UGVs and camera-equipped drones, detecting the UGVs from the drones' onboard cameras and using them as landmarks to improve drone positioning. Ref. [31] proposed a cooperative localization framework based on optimized belief propagation (BP) for GNSS-denied areas, and ref. [32] proposed a technique that improves the accuracy of visual-inertial odometry (VIO) by combining it with ultra-wideband (UWB) positioning. However, these methods all require the assistance of multiple UWB anchors, which hinders practical use. Ref. [33] proposes a multi-drone relative positioning scheme based on distributed graph optimization (DGO) that combines onboard UWB modules, cameras, and inertial sensors, but the cameras need to observe other drones for position estimation, making it unsuitable for large-scale scenarios. Ref. [34] fuses the data from these two types of sensors via a Kalman filter to address positioning drift in GPS-denied environments. Ref. [35] adopts the Information Consistency Filter (ICF) to address the state estimation association problem among multiple devices. Ref. [36] adopts second-order Kalman filter preprocessing combined with EKF gating fusion to handle intermittent and delayed sensor observations.

Therefore, considering the requirements of low cost, light weight, and real-time performance, we propose a distributed anchor-free visual-inertial-UWB-magnetic multi-drone collaborative positioning system. The UAVs measure inter-drone distances through UWB without relying on UWB anchors, and an EKF-based filtering framework fuses the multiple observations, which keeps the algorithm lightweight and real-time capable. To further improve accuracy, we incorporate multiple UWB key frames into the sliding window and adopt an adaptive adjustment method for filtering errors based on chi-squared detection. In addition, we adopt a visual observation model based on PO constraints, which improves the system’s adaptability to challenging visual environments.

3. Methods

The proposed method is a distributed, anchor-free visual-inertial-UWB-magnetic cooperative localization system for UAVs. It consists of three main components: a sliding window extended Kalman filter framework that tightly fuses inertial, visual, magnetic, and UWB ranging data; an adaptive adjustment method for UWB measurements based on chi-squared detection; and a visual observation model using pose-only (PO) theory.

3.1. Overview of the Architecture

The block diagram of the proposed system architecture is shown in Figure 1. Each UAV is equipped with an IMU, a magnetometer, a camera, and a UWB sensor, and the UAVs share position and relative distance information through communication links and UWB.

In the block diagram shown in Figure 1, feature extraction and tracking provide environmental feature information, IMU data are used for state propagation and augmentation to predict the motion state of the UAV, and base views and sensor data are used to build a visual observation model based on pose-only (PO) theory to improve the accuracy of the algorithm. After calibration, the magnetometer outputs heading angle information for subsequent fusion positioning. The information predicted by the filter is then used to perform chi-squared detection on the UWB data and filter out outliers. Finally, the state estimate of the UAV is updated by fusing the information from multiple sensors in a tightly coupled manner.

3.2. Multi-Sensor Time Synchronization and Delay Handling

In a multi-sensor fusion system for cooperative localization, precise time synchronization is fundamental to estimation accuracy. The sensors in this system, including IMU, camera, magnetometer (MAG), UWB, and inter-UAV position information, operate at different sampling frequencies and exhibit varying latencies. In distributed scenarios, wireless communication delays introduce additional temporal uncertainties. We propose a time synchronization and delay handling scheme built upon the sliding window EKF framework, leveraging IMU’s high-frequency propagation capability for temporal alignment.

3.2.1. Time Synchronization for Onboard Sensors

All sensor measurements carry precise timestamps. When a measurement arrives at time

, the system state at this instant is obtained through IMU-driven interpolation. The detailed steps of the algorithm are illustrated in Algorithm 1. Given filter states

at

and

at

where

:

The synchronized residual is then computed as:

where

is the measurement model.

Algorithm 1 Multi-Sensor Time Synchronization and Delay Handling.

Require: Sliding window states , timestamps, measurement with timestamp
Ensure: Aligned state or updated state
1: Onboard Sensor Synchronization
2: Find states at and at ()
3:
4:
5: Inter-UAV Delay Handling
6:
7: if then
8:     Discard measurement
9: else
10:     ,
11:
12:
13:
14:
15: end if
16: return Updated state

3.2.2. Delay Handling for Inter-UAV Information

In distributed cooperative localization, inter-UAV information suffers from communication delays. Let be the generation timestamp and the reception timestamp, with delay . The delay handling mechanism consists of three steps.

State Storage: A sliding window buffer maintains historical states with timestamps .

Backward Correction: Retrieve the historical state

at timestamp

from the buffer and compute the innovation:

where

is the Kalman gain at

. The corrected state is

.

Forward Propagation: Propagate

from

to the current time

using the state transition matrix:

where

with

being the Jacobian of the system dynamics.

For UWB ranging from neighboring UAVs with delay, the measurement model is:

where

is the stored position at

, with uncertainty explicitly modeled in the measurement noise.

This scheme achieves comprehensive temporal alignment: onboard sensors are synchronized through IMU interpolation, while inter-UAV delays are handled through backward correction and forward propagation, ensuring accurate cooperative localization under communication delays.
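The state storage and lookup steps described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the names `StateBuffer` and `handle_delayed`, the `max_delay` value of 0.5 s, and the use of plain linear interpolation of position (instead of IMU-driven interpolation of the full state) are hypothetical choices made for the example. The backward Kalman correction and the forward propagation with the state transition matrix are omitted.

```python
from bisect import bisect_right


class StateBuffer:
    """Sliding-window buffer of (timestamp, position) pairs, oldest first."""

    def __init__(self, max_len=50):
        self.max_len = max_len  # hypothetical window length
        self.stamps = []
        self.positions = []

    def push(self, t, position):
        # Timestamps are assumed to arrive in strictly increasing order.
        self.stamps.append(t)
        self.positions.append(tuple(position))
        if len(self.stamps) > self.max_len:
            self.stamps.pop(0)
            self.positions.pop(0)

    def interpolate(self, t):
        """Linearly interpolate the stored position at time t.

        Returns None when t lies outside the buffered window.
        """
        if not self.stamps or t < self.stamps[0] or t > self.stamps[-1]:
            return None
        k = bisect_right(self.stamps, t)
        if k == len(self.stamps):  # t equals the newest timestamp
            return self.positions[-1]
        t0, t1 = self.stamps[k - 1], self.stamps[k]
        alpha = (t - t0) / (t1 - t0)
        p0, p1 = self.positions[k - 1], self.positions[k]
        return tuple(a + alpha * (b - a) for a, b in zip(p0, p1))


def handle_delayed(buffer, t_generated, t_received, max_delay=0.5):
    """Return the historical position to correct, or None to discard.

    max_delay is an assumed threshold; the discard condition in Algorithm 1
    is not reproduced here.
    """
    if t_received - t_generated > max_delay:
        return None
    return buffer.interpolate(t_generated)
```

A neighbor message generated at 0.25 s and received at 0.40 s would be matched against the interpolated state at 0.25 s, while a message older than `max_delay` would be dropped before any correction is attempted.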

3.3. Swarm Absolute Pose and Attitude Initialization

In multi-UAV swarm systems, accurate initialization of absolute positions and orientations is crucial for subsequent collaborative localization tasks. Traditional methods often rely on GNSS or pre-deployed infrastructure, which are not available in GNSS-denied environments. To address this challenge, we propose a novel swarm absolute pose initialization method based on ranging and geomagnetic information.

Our approach utilizes IMU (Inertial Measurement Unit) to estimate roll and pitch angles, magnetometer (compass) to estimate yaw angle, and inter-node ranging information to estimate relative positions. These measurements are fused to estimate the absolute positions and orientations of the UAV swarm in the ENU (East-North-Up) coordinate system.

The initialization process consists of two main steps:

First, individual UAV attitude estimation: Each UAV independently estimates its attitude angles (roll, pitch, and yaw) in a static state. Roll and pitch angles are estimated using accelerometer measurements:

where

is the average gravity vector measured by the accelerometer in the body frame.

Yaw angle is estimated using calibrated magnetometer measurements. The raw magnetometer readings are first calibrated to correct for environmental disturbances:

where

are scale factors and cross-axis sensitivity coefficients, and

are bias parameters. The calibrated magnetometer measurements are then transformed to the navigation frame:

and the yaw angle is computed as:

where

D is the magnetic declination obtained from geomagnetic maps.
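The static attitude estimation in this first step can be sketched as follows. The conventions are assumptions made for the example and may differ from the paper's: the body frame is x-forward, y-right, z-down; the accelerometer reports specific force, so a level and stationary UAV reads approximately (0, 0, -g); heading is measured clockwise from north; and the magnetometer input is assumed to be already calibrated. The function names are hypothetical.

```python
import math


def roll_pitch_from_accel(f):
    """Roll and pitch (rad) from the averaged static specific force f = (fx, fy, fz)."""
    fx, fy, fz = f
    roll = math.atan2(-fy, -fz)
    pitch = math.atan2(fx, math.hypot(fy, fz))
    return roll, pitch


def yaw_from_mag(m, roll, pitch, declination=0.0):
    """Tilt-compensated heading (rad) from a calibrated magnetometer reading m.

    declination is the local magnetic declination (east positive), assumed to
    come from a geomagnetic map.
    """
    mx, my, mz = m
    # Rotate the body-frame field into the local horizontal plane.
    xh = (mx * math.cos(pitch)
          + my * math.sin(roll) * math.sin(pitch)
          + mz * math.cos(roll) * math.sin(pitch))
    yh = my * math.cos(roll) - mz * math.sin(roll)
    yaw = math.atan2(-yh, xh) + declination
    # Wrap the result to (-pi, pi].
    return math.atan2(math.sin(yaw), math.cos(yaw))
```

With these conventions, a level UAV whose x-axis points to magnetic north returns a heading equal to the declination, and a level UAV facing east returns a heading of 90 degrees plus the declination.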

Second, initialization of absolute position: The first UAV in the swarm (i = 1) is selected as the origin of the ENU coordinate system. This UAV is then moved a short distance, and its VIO output is combined with the geomagnetic heading angle to obtain its displacement vector in the ENU coordinate system. The MDS-MAP method proposed in this paper is then used to obtain the absolute position coordinates of the entire UAV swarm in the ENU coordinate system. The pseudocode is given in Algorithm 2.

This algorithm takes the number of UAV nodes, pre- and post-movement distance matrices of Node 1, and its absolute displacement as inputs. It solves relative coordinates via MDS, aligns coordinate systems using non-moving nodes, corrects orientation, and applies global translation to output all nodes’ absolute positions, providing fast, robust, and accurate initialization for swarm collaborative localization. There is also a commonly used cluster position initialization method, which is to directly use ranging information to build residuals, build a joint optimization problem and solve it. Its ranging residual construction method is defined as

. This approach introduces substantial non-convexity into the optimization problem, which may cause the solver to converge to a local optimum and reduce the final accuracy. In contrast, the method proposed in this paper avoids non-convex optimization by using an algebraic solution, which also simplifies the calculation and improves computational efficiency. Experimental verification and analysis are presented in the subsequent experimental evaluation.

Algorithm 2 MDS-MAP Absolute Localization Algorithm.

1: function MDS_MAP_Localization(N, , , )    N: Number of UAVs,
2:     : Distance matrix before Node 1 moves,
3:     : Distance matrix after Node 1 moves,
4:     : Node 1’s absolute displacement    : Absolute positions of all UAVs
5: 1. Compute Relative Coordinates via MDS
6:     ▹ Centralization matrix
7:     ,
8:
9:
10:     ,
11:
12:
13: 2. Align Coordinate Systems
14:     ▹ Non-moving nodes
15:     ,
16:     ,
17:     , ,
18:
19: 3. Estimate Absolute Heading and Correct
20:
21:     ,
22:
23:
24:
25: 4. Compute Absolute Positions
26:     ▹ Global translation
27: return
28: end function
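Step 1 of Algorithm 2 (relative coordinates via MDS) can be illustrated with classical multidimensional scaling. The sketch below is an assumption-laden example rather than the paper's algorithm: it uses only the Python standard library, extracts the leading eigenvectors by power iteration with deflation (a numerical library would normally be used instead), and the name `classical_mds` and its parameters are hypothetical. The alignment, heading correction, and global translation steps are not included.

```python
import math


def classical_mds(dist, dim=2, iters=500):
    """Recover relative coordinates (up to rotation/reflection) from a distance matrix."""
    n = len(dist)
    sq = [[dist[i][j] ** 2 for j in range(n)] for i in range(n)]
    row_mean = [sum(r) / n for r in sq]
    total_mean = sum(row_mean) / n
    # Double centering: B = -1/2 * J * D^2 * J with J = I - (1/n) * ones.
    B = [[-0.5 * (sq[i][j] - row_mean[i] - row_mean[j] + total_mean)
          for j in range(n)] for i in range(n)]
    coords = [[0.0] * dim for _ in range(n)]
    for k in range(dim):
        # Deterministic, normalized start vector for the power iteration.
        v = [math.sin(i + 1.0 + k) for i in range(n)]
        norm = math.sqrt(sum(x * x for x in v))
        v = [x / norm for x in v]
        for _ in range(iters):
            w = [sum(B[i][j] * v[j] for j in range(n)) for i in range(n)]
            norm = math.sqrt(sum(x * x for x in w))
            if norm < 1e-12:  # remaining spectrum is numerically zero
                break
            v = [x / norm for x in w]
        Bv = [sum(B[i][j] * v[j] for j in range(n)) for i in range(n)]
        lam = sum(v[i] * Bv[i] for i in range(n))  # Rayleigh quotient
        scale = math.sqrt(max(lam, 0.0))
        for i in range(n):
            coords[i][k] = scale * v[i]
        # Deflate so the next pass finds the next eigenpair.
        for i in range(n):
            for j in range(n):
                B[i][j] -= lam * v[i] * v[j]
    return coords
```

Because the result is only defined up to a rigid transformation, the recovered coordinates reproduce the input distances but not the original orientation, which is why the algorithm needs the subsequent alignment and heading steps.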

3.4. Filter State and Propagation

In the proposed multi-UAV collaborative navigation system, we employ the sliding window EKF for state estimation, which is developed from OpenVINS [

37]. The state of any drone in the drone cluster is defined as follows: The error state vector

consists of the current INS error parameter

, vision measurement key frame

, UWB measurement key frame

, magnetic measurement key frame

, and single time offset

between the IMU and the camera clock. They can be defined as:

where:

Here, the current inertial localization error state

includes the attitude error

, position error

, velocity error

, gyroscope bias

and the accelerometer bias

of the drone. The vision measurement key frame

and the UWB measurement key frame

are defined as follows:

where

and

are the IMU attitude and position errors at the

n-th vision key frame time.

and

are the IMU attitude and position errors at the

m-th UWB key frame time.

and

are the IMU attitude and position errors at the

l-th MAG key frame time. The true state

can be obtained from the estimated state

and the error state

:

For the attitude error, the operator ⊞ is given by

For other states, the operator ⊞ is equivalent to Euclidean addition. When an IMU measurement is available, INS mechanization is performed to output the high-frequency prior pose. Meanwhile, the forward propagation of the full error state and its covariance follows OpenVINS [37] and is not repeated here. When a new key frame is added to the sliding window, the state vector is augmented with the new key-frame state, and the corresponding covariance

is augmented as:

where

is the Jacobian matrix, which relates the newly added state to the original state. When the sliding window exceeds its maximum length, the oldest key frame is marginalized by directly deleting its state and covariance [37].
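The covariance augmentation described above can be sketched as follows, assuming the block form commonly used in sliding-window filters, in which the augmented covariance is [[P, P Jᵀ], [J P, J P Jᵀ]] for a new error state that is a linear function J of the existing one. The helper names are hypothetical and plain nested lists stand in for a matrix library.

```python
def matmul(A, B):
    """Multiply two matrices given as nested lists."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def transpose(A):
    return [list(col) for col in zip(*A)]


def augment_covariance(P, J):
    """Return the augmented covariance [[P, P J^T], [J P, J P J^T]].

    P is the current (symmetric) covariance and J maps the existing error
    state to the newly cloned key-frame error state.
    """
    JP = matmul(J, P)
    PJt = transpose(JP)  # equals P J^T because P is symmetric
    JPJt = matmul(JP, transpose(J))
    top = [p_row + c_row for p_row, c_row in zip(P, PJt)]
    bottom = [jp_row + n_row for jp_row, n_row in zip(JP, JPJt)]
    return top + bottom
```

For example, cloning the first component of a two-dimensional state duplicates its variance and its cross-covariances in the new row and column, so the clone starts out perfectly correlated with the state it was copied from.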

3.5. Visual Measurement Based on PO Theory

To reduce the complexity and computation of the 3D reconstruction process in traditional VIO systems, and to avoid the accuracy limitations imposed by direct 3D reconstruction, we follow PO theory [38] and reconstruct the measurement model so that it is represented only by pixel coordinates and relative poses. In PO theory, multi-view geometry can be described using only camera poses. Instead of directly estimating the 3D coordinates of the feature points in the scene, their positional relationships are inferred from the relative poses between the cameras. Assuming that the projection of feature point

in image

i can be represented as

, where

is the camera intrinsic matrix,

and

are the rotation and translation from camera

i to camera

j, respectively, the reprojection error

can be represented by the camera poses in PO theory without directly using the 3D coordinates of the feature points.

Therefore, the geometric description of multiple views based on PO theory can be expressed as follows.

where

is the constraint between images

i and

j,

is the normalized coordinates of the feature points in images

i, and

and

are the rotation and translation from image

i to image

j, respectively. Thus the reprojection error can be redefined as follows [38,39].

where

is the reprojection error of the

l-th feature point in the

i-th image,

is the projection obtained by using only the camera pose and 2D features, and

is a transformation vector for converting 3D vectors to 2D. Thus the measurement model based on PO theory can be represented as follows [13].

As a result, the new measurement model is represented only in pixel coordinates and system attitude and is fully decoupled from the 3D features, avoiding the effects of inaccurate 3D reconstruction processes. The PO-based visual measurement model significantly reduces the computational complexity and avoids the error accumulation caused by 3D point reconstruction while maintaining high positioning accuracy.

3.6. Collaborative Localization with Anchor-Free UWB Measurement and Magnetic Assistance

This section presents our collaborative localization framework, which tightly integrates anchor-free UWB measurements and magnetic heading constraints. The framework shown in Figure 2 addresses the challenge of unknown correlations between UAV state estimates and robustly handles measurement noise and uncertainties.

3.6.1. Magnetic Heading Assisted Localization

Magnetic sensors provide valuable absolute heading information that helps constrain the unobservable yaw dimension identified in our observability analysis. Here we detail the magnetic heading measurement model and robustness enhancements.

Magnetic Heading Measurement Model

The geomagnetic heading provides an absolute measurement of the yaw angle in the IMU state, with the following measurement model:

where

is Gaussian white noise and

is the observed heading at time

t. The residual between the estimated heading

and geomagnetic heading in the filter is:

The Jacobian matrix for the heading measurement is straightforward:

Robust Magnetic Heading Estimation

To enhance the reliability of magnetic heading estimation in challenging environments, we implement five key strategies with corresponding mathematical formulations:

We perform automatic pre-mission calibration to compensate for hard and soft iron distortions. The calibration process estimates the following parameters:

- Hard iron bias:

- Soft iron matrix:

The calibrated magnetic field vector

is computed as:

2. Adaptive Magnetic Weighting

The weight

for magnetic measurements is dynamically adjusted based on consistency with IMU and visual data:

where

is the reference heading from IMU/visual fusion and

is the consistency threshold.

3. Magnetic Anomaly Detection

We detect magnetic anomalies by comparing current measurements with geomagnetic map predictions:

Measurements are rejected if , where is the anomaly threshold.

4. Heading Complementary Filter

A complementary filter fuses magnetic heading

with gyroscope-derived heading

:

where

is the gyroscope angular velocity, and

are filter gains (

,

).

5. Multi-Sensor Heading Fusion

When magnetic measurements are unreliable, we fuse heading estimates from multiple sensors using weighted least squares:

where

K is the number of sensors,

are individual heading estimates,

are their covariance matrices, and

are adaptive weights.
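The heading complementary filter (strategy 4 above) can be sketched as follows. This is a generic complementary filter under stated assumptions, not necessarily the exact form used in the paper: the two gains are assumed to sum to one, `k_gyro = 0.98` is an arbitrary illustrative value, and the blend is computed on the wrapped angle difference so that headings near ±π are handled correctly. The function names are hypothetical.

```python
import math


def wrap_angle(a):
    """Wrap an angle in radians to (-pi, pi]."""
    return math.atan2(math.sin(a), math.cos(a))


def complementary_heading(psi_prev, gyro_z, dt, psi_mag, k_gyro=0.98):
    """One update of a heading complementary filter.

    psi_prev: previous fused heading (rad)
    gyro_z:   yaw rate from the gyroscope (rad/s)
    psi_mag:  magnetic heading (rad)
    k_gyro:   assumed weight of the gyro-propagated heading; the magnetic
              weight is taken as 1 - k_gyro.
    """
    k_mag = 1.0 - k_gyro
    psi_gyro = psi_prev + gyro_z * dt  # short-term, low-noise prediction
    # Pull the prediction toward the magnetic heading along the shortest arc.
    return wrap_angle(psi_gyro + k_mag * wrap_angle(psi_mag - psi_gyro))
```

Away from the wrap-around this reduces to the familiar weighted sum of the gyro-propagated and magnetic headings, so the gyroscope dominates over short intervals while the magnetometer removes long-term drift.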

3.6.2. Anchor-Free UWB Measurement Model

UWB sensors provide direct peer-to-peer distance measurements between neighboring UAVs, which are crucial for maintaining relative position consistency in the swarm.

Basic UWB Measurement Model

For UAV

i and its neighbor

j, the UWB ranging measurement is modeled as:

where

and

are the positions of UAV

i and

j in the global frame, and

is Gaussian measurement noise.
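The noise-free part of this range model and its derivative with respect to the position of UAV i can be sketched as below. The function names are hypothetical, and the Jacobian shown covers only the position block of the state; its placement inside the full sliding-window state vector is not reproduced.

```python
import math


def uwb_range(p_i, p_j):
    """Predicted range between UAV i and UAV j (Euclidean distance)."""
    return math.dist(p_i, p_j)


def range_jacobian(p_i, p_j):
    """Derivative of the range with respect to p_i: the unit vector from j to i.

    The derivative with respect to p_j is the negative of this vector.
    """
    d = math.dist(p_i, p_j)
    if d < 1e-9:
        raise ValueError("range Jacobian is undefined for coincident positions")
    return [(a - b) / d for a, b in zip(p_i, p_j)]
```

The Jacobian is a unit vector along the line of sight, which makes explicit that a single range measurement constrains the state only along the direction between the two UAVs.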

Cumulative UWB Measurement with Neighbor Uncertainty

In distributed systems, neighboring UAVs’ position estimates contain uncertainties. We model these uncertainties by constructing a cumulative UWB measurement for UAV

i with

M neighbors:

where

is the set of neighboring UAVs,

are dynamically adjusted weights (satisfying

) and

represents the estimated position.

The Jacobian matrix for this cumulative measurement is:

Uncertainty Propagation from Neighbors

We explicitly model the uncertainty introduced by neighboring UAVs’ position errors. The observation noise matrix for the cumulative UWB measurement is:

where

is the UWB ranging noise variance, and

is the position covariance matrix of UAV

j.
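One common way to account for a neighbor's position uncertainty in a single range measurement is a first-order projection of its covariance onto the line of sight. The sketch below assumes that form; the weighting of multiple neighbors in the cumulative measurement is not reproduced, and the function name is hypothetical.

```python
import math


def range_noise_with_neighbor(sigma_uwb, p_i, p_j_hat, P_j):
    """Effective range variance: sigma_uwb^2 + u^T P_j u.

    u is the unit line-of-sight vector between the two UAVs and P_j is the
    3x3 position covariance reported by neighbor j (nested lists).
    """
    d = math.dist(p_i, p_j_hat)
    if d < 1e-9:
        raise ValueError("line of sight is undefined for coincident positions")
    u = [(a - b) / d for a, b in zip(p_i, p_j_hat)]
    # The range depends on p_j through -u, so P_j contributes u^T P_j u.
    neighbor_var = sum(u[r] * P_j[r][c] * u[c] for r in range(3) for c in range(3))
    return sigma_uwb ** 2 + neighbor_var
```

Only the component of the neighbor's uncertainty along the line of sight inflates the range noise; uncertainty perpendicular to it has no first-order effect.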

3.6.3. Covariance Intersection (CI) for Consistent UWB Fusion

To address unknown correlations between UAV state estimates, we incorporate Covariance Intersection (CI) into our UWB measurement fusion process.

CI Fusion Principle

When fusing state estimate

with covariance

from UAV

j with local estimate

and

, CI computes the fused state

and covariance

as:

The optimal mixing parameter

minimizes the trace of

while maintaining consistency:

CI-Enhanced Measurement Noise

The CI-derived covariance is incorporated into the UWB measurement noise matrix:

The CI method ensures that the fused state estimate is consistent even when the correlations between UAV estimates are unknown or time-varying, enhancing the robustness and consistency of our cooperative localization system.
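The CI fusion principle can be illustrated for two 2D estimates with the standard CI equations: the fused information matrix is the ω-weighted sum of the two information matrices, and ω is chosen to minimize the trace of the fused covariance. The grid search over ω, the restriction to 2x2 matrices, and the function names are simplifications assumed for this example.

```python
def inv2(M):
    """Inverse of a 2x2 matrix given as nested lists."""
    (a, b), (c, d) = M
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]


def covariance_intersection(x_a, P_a, x_b, P_b, steps=100):
    """Fuse two 2D estimates with unknown cross-correlation.

    Returns (x_fused, P_fused, omega), where omega in [0, 1] minimizes
    trace(P_fused) over a uniform grid with `steps` intervals.
    """
    I_a, I_b = inv2(P_a), inv2(P_b)
    best = None
    for s in range(steps + 1):
        w = s / steps
        info = [[w * I_a[r][c] + (1.0 - w) * I_b[r][c] for c in range(2)]
                for r in range(2)]
        P = inv2(info)
        trace = P[0][0] + P[1][1]
        if best is None or trace < best[0]:
            best = (trace, w, P)
    _, w, P = best
    # Information-weighted combination of the two state estimates.
    y = [w * (I_a[r][0] * x_a[0] + I_a[r][1] * x_a[1])
         + (1.0 - w) * (I_b[r][0] * x_b[0] + I_b[r][1] * x_b[1])
         for r in range(2)]
    x = [P[r][0] * y[0] + P[r][1] * y[1] for r in range(2)]
    return x, P, w
```

When one estimate is accurate in x and the other in y, the search settles on an intermediate ω and the fused estimate leans toward whichever source is more certain in each direction, without assuming the two sources are independent.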

3.6.4. Integrated UWB Range Update Algorithm

Algorithm 3 integrates CI fusion, chi-squared validation, and EKF update into a comprehensive UWB range update process:

This integrated algorithm ensures robust and consistent fusion of UWB measurements in our anchor-free collaborative localization system, effectively constraining the divergence of unobservable dimensions identified in our observability analysis.

The ranging-aided SLAM (RA-SLAM) problem introduces additional non-convexity due to distance measurement models, making it challenging to obtain globally optimal solutions. The maximum a posteriori (MAP) estimation of RA-SLAM is a non-convex optimization problem that heavily relies on good initial values. Traditional methods for solving this problem often face challenges such as local optima and high computational complexity.

Algorithm 3 UWB Range Update with Chi-squared Test and Covariance Intersection Fusion.

1: function (, , , )
       : UWB measurement, : Local state estimate,
       : Local covariance,
       : Neighbor states and covariances,
       : Updated state estimate, : Updated covariance
2: 1. Covariance Intersection Fusion
3: for all do
4:     ▹ Optimal fusion weight
5:     ▹ Fused covariance
6:     ▹ Fused state
7:     ▹ CI-enhanced noise
8: end for
9:     ▹ Total noise matrix
10: 2. Chi-squared Measurement Validation
11:     ▹ Innovation
12:     ▹ Innovation covariance
13:     ▹ Chi-squared statistic
14: 3. Conditional EKF Update
15: if then
16:
17: else
18:     ▹ Reject unreliable measurement
19: end if
    return
20: end function

3.7. UWB Measurements Adaptive Adjustment Based on Chi-Squared Detection

In practical applications, UWB ranging measurements are often affected by non-line-of-sight (NLOS) propagation, multipath effects, and other environmental factors, resulting in outliers that can severely degrade localization performance. To address this issue, we propose an adaptive adjustment method for UWB measurements based on chi-squared detection, with alternative strategies when the chi-squared test fails.

For each UWB measurement, the innovation (residual) is computed as:

where

is the measurement vector,

is the observation matrix, and

is the predicted state vector. The innovation covariance matrix and chi-squared statistic are then computed as:

where

is the a priori error covariance matrix of the Kalman filter, and

is the observation noise covariance matrix. In this article, the confidence level of the chi-squared test is set to 95% and the residual vector has dimension 6, so the corresponding threshold is 12.59.

When the chi-squared test fails (i.e., the chi-squared statistic exceeds the threshold of 12.59), our system implements a weighted measurement update strategy instead of completely rejecting the measurement. The weight assigned to the measurement is inversely proportional to the chi-squared statistic:

where

is the UWB measurement noise parameter. This approach allows for slightly inconsistent measurements to still contribute to the state update with reduced influence, while strongly inconsistent measurements are effectively downweighted.

Finally, each UAV uses the standard EKF update formula with the computed weight to update UWB measurements and obtain state estimates after collaborative positioning.
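The gating and weighted update described in this subsection can be sketched as follows. The innovation, innovation covariance, and chi-squared statistic follow the standard EKF definitions. The weight rule `threshold / chi2` and the way the weight enters the update (inflating the measurement noise by `1 / weight`) are assumptions chosen only to satisfy "inversely proportional to the chi-squared statistic"; the function name `gated_ekf_update` and the example values are likewise hypothetical.

```python
import numpy as np

CHI2_THRESHOLD = 12.59  # 95% quantile, 6 degrees of freedom (value from the text)


def gated_ekf_update(x_pred, P_pred, z, H, R, threshold=CHI2_THRESHOLD):
    """EKF update with a chi-squared gate and a down-weighted fallback."""
    r = z - H @ x_pred                        # innovation
    S = H @ P_pred @ H.T + R                  # innovation covariance
    chi2 = float(r @ np.linalg.solve(S, r))   # chi-squared statistic

    # ASSUMPTION: full weight when the test passes, threshold / chi2 otherwise.
    weight = 1.0 if chi2 <= threshold else threshold / chi2

    # ASSUMPTION: the weight acts by inflating the measurement noise.
    R_eff = R / weight
    S_eff = H @ P_pred @ H.T + R_eff
    K = P_pred @ H.T @ np.linalg.inv(S_eff)   # Kalman gain with inflated noise
    x_upd = x_pred + K @ r
    P_upd = (np.eye(len(x_pred)) - K @ H) @ P_pred
    return x_upd, P_upd, chi2, weight


# Hypothetical 6-dimensional example with an identity observation matrix.
x_pred = np.zeros(6)
P_pred = np.eye(6)
H = np.eye(6)
R = 0.09 * np.eye(6)

z_good = x_pred + 0.1                         # consistent measurement
z_bad = x_pred + np.array([5.0, 0, 0, 0, 0, 0])  # outlier in one component

_, _, chi2_good, w_good = gated_ekf_update(x_pred, P_pred, z_good, H, R)
x_bad, _, chi2_bad, w_bad = gated_ekf_update(x_pred, P_pred, z_bad, H, R)
```

In this sketch the outlier still moves the state, but by less than an unweighted update would, which matches the intent of reducing rather than removing its influence.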

3.8. Observability Analysis for Ranging + Odometry Swarm Systems

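We analyze the observability of anchor-free UAV swarms that rely only on inter-UAV ranging and on-board odometry. For a swarm with $N$ UAVs, the state vector includes each UAV’s position $\mathbf{p}_i$, velocity $\mathbf{v}_i$, attitude $\mathbf{R}_i$, and IMU biases $\mathbf{b}_i$. Measurements include the on-board odometry of each UAV and the inter-UAV ranges $d_{ij} = \|\mathbf{p}_i - \mathbf{p}_j\|$.

Consider a global transformation $\mathcal{T} = (\mathbf{R}_z(\psi), \mathbf{t})$ representing 3D translation $\mathbf{t} \in \mathbb{R}^3$ and yaw rotation $\mathbf{R}_z(\psi)$. Applying this to the swarm state:

$$\mathbf{p}_i' = \mathbf{R}_z(\psi)\,\mathbf{p}_i + \mathbf{t}, \qquad \mathbf{v}_i' = \mathbf{R}_z(\psi)\,\mathbf{v}_i, \qquad \mathbf{R}_i' = \mathbf{R}_z(\psi)\,\mathbf{R}_i, \qquad \mathbf{b}_i' = \mathbf{b}_i, \qquad i = 1, \dots, N$$

This transformation leaves all measurements invariant:

- Odometry measurements depend only on relative motion, so the transformed state produces identical measurements to the original state.

- Ranging measurements are preserved: $\|\mathbf{p}_i' - \mathbf{p}_j'\| = \|\mathbf{R}_z(\psi)(\mathbf{p}_i - \mathbf{p}_j)\| = \|\mathbf{p}_i - \mathbf{p}_j\|$ (rotation matrices preserve Euclidean distance).

The observability matrix $\mathcal{O}$ for the linearized system is constructed as:

$$\mathcal{O} = \begin{bmatrix} \mathbf{H} \\ \mathbf{H}\boldsymbol{\Phi} \\ \vdots \\ \mathbf{H}\boldsymbol{\Phi}^{\,n-1} \end{bmatrix}$$

where $\mathbf{H}$ is the combined measurement matrix, $\boldsymbol{\Phi}$ is the block-diagonal state transition matrix, and $n$ is the total state dimension.

Since $\mathcal{T}$ leaves all measurements invariant, the four directions it generates (three translations and one yaw rotation) lie in the null space of $\mathcal{O}$. This yields:

Theorem 1.

For an anchor-free UAV swarm with only inter-UAV ranging and on-board odometry, and without initial absolute pose information, the absolute heading (yaw) and absolute position (3D translation) are unobservable, resulting in 4 unobservable dimensions.

The rank of $\mathcal{O}$ is thus at most $n - 4$, confirming the unobservable subspace.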

While the 4 unobservable dimensions are fundamental, the divergence rate of these directions can be constrained. This is the core motivation for our absolute accuracy improvement mechanism: through strategic sensor fusion and drift mitigation, we can significantly slow the divergence of the unobservable directions, thereby improving absolute positioning accuracy without changing the fundamental observability properties.
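The invariance behind Theorem 1 can be checked numerically. The sketch below applies one arbitrary yaw rotation and translation to a hypothetical six-UAV swarm and compares the ranges and a body-frame odometry increment before and after; the helper names, the random positions, and the modelling of odometry as a body-frame displacement are assumptions made for illustration.

```python
import numpy as np


def yaw_rotation(psi):
    """Rotation about the vertical (z) axis by angle psi in radians."""
    c, s = np.cos(psi), np.sin(psi)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])


def pairwise_ranges(positions):
    """Matrix of Euclidean distances between all pairs of rows."""
    diff = positions[:, None, :] - positions[None, :, :]
    return np.linalg.norm(diff, axis=-1)


rng = np.random.default_rng(0)
positions = rng.uniform(-50.0, 50.0, size=(6, 3))  # hypothetical swarm

R = yaw_rotation(0.7)              # arbitrary global yaw
t = np.array([12.0, -3.0, 5.0])    # arbitrary global translation
transformed = positions @ R.T + t  # p' = R p + t for every UAV

# Ranging: distances must be unchanged by the global transformation.
max_range_change = np.max(
    np.abs(pairwise_ranges(transformed) - pairwise_ranges(positions))
)

# Odometry, modelled here as the displacement between two consecutive
# positions of one UAV expressed in its own body frame.
att_k = yaw_rotation(0.2)                 # attitude at time k (hypothetical)
p_k, p_k1 = positions[0], positions[1]    # two points reused as consecutive poses
odom_before = att_k.T @ (p_k1 - p_k)
odom_after = (R @ att_k).T @ ((R @ p_k1 + t) - (R @ p_k + t))
```

Both quantities agree to numerical precision, so no combination of these measurements can reveal the applied yaw or translation.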

3.9. Analysis of Absolute Accuracy Improvement Mechanism in Anchor-Free Collaborative Localization

Building upon the observability analysis, we now analyze how our proposed system improves the absolute positioning accuracy of anchor-free UAV swarms, even in the presence of 4 unobservable dimensions. While the fundamental unobservability cannot be eliminated without external absolute references, our research demonstrates that the absolute positioning accuracy can be significantly enhanced and network drift can be mitigated through three key mechanisms: (1) accurate absolute pose initialization using MDS-based methods fused with ranging, IMU, and geomagnetic information; (2) continuous accuracy improvement through multi-constraint fusion in a sliding window EKF framework; and (3) explicit network drift mitigation strategies. These mechanisms effectively constrain the divergence rate of the unobservable directions, leading to improved absolute positioning accuracy.

3.9.1. Swarm Initialization with Absolute Pose Estimation

As detailed in Section 3.2, our MDS-MAP initialization approach utilizes ranging measurements, IMU data, and geomagnetic information to establish accurate absolute positions for all UAVs at the beginning of the mission. This initialization process is crucial as it provides each UAV with a globally consistent reference frame, which serves as the foundation for subsequent collaborative localization. The accurate initialization of the swarm’s absolute pose significantly enhances the overall absolute positioning accuracy of the UAV cluster. By establishing a precise initial reference frame, the accumulated errors in subsequent navigation can be minimized, leading to more reliable and accurate positioning throughout the mission.

3.9.2. Continuous Accuracy Enhancement Through Multi-Constraint Fusion

During system operation, the sliding window EKF framework continuously fuses multiple sensor constraints to improve estimation accuracy and maintain long-term stability. The augmented state vector includes keyframes from visual and UWB measurements:

The observation model combines visual, ranging, and geomagnetic measurements:

This multi-constraint fusion significantly increases the rank of the information matrix, thereby improving the observability and accuracy of the system state estimation. The increased redundancy in measurements also enhances robustness against individual sensor failures or noise. Specifically:

Visual constraints provide high-frequency relative motion information and help correct drift in the inertial navigation system.

UWB ranging constraints offer direct peer-to-peer distance measurements, which are particularly valuable for maintaining inter-UAV positional consistency in the swarm.

Geomagnetic constraints supply absolute heading information, which is essential for maintaining global orientation consistency.

3.9.3. Network Drift Mitigation Strategies

Building upon our observability analysis, we now focus on how our system constrains the divergence rate of the 4 unobservable dimensions. While the fundamental unobservability cannot be eliminated, our proposed strategies effectively mitigate the drift in these unobservable directions, thereby improving the absolute positioning accuracy. We implement three key strategies that directly address the divergence of the unobservable dimensions:

Absolute Heading Constraint: By continuously incorporating geomagnetic measurements into the fusion framework, we provide a stable absolute reference for the swarm’s heading. While this does not change the fundamental unobservability of the yaw angle, it effectively constrains the divergence rate of the absolute heading. The geomagnetic measurements act as a virtual anchor for the yaw direction, preventing the entire swarm from rotating uncontrollably and maintaining consistent heading estimates across all UAVs.

Relative Distance Consistency: The UWB ranging measurements between neighboring UAVs provide strong constraints on the relative positions of the swarm members. These constraints effectively limit the divergence of the unobservable translational dimensions. While the entire swarm can still translate rigidly, the relative distance constraints ensure that this translation occurs in a coordinated manner, preventing the swarm from dispersing and maintaining the relative formation. This coordinated behavior significantly reduces the effective drift rate of the absolute position estimates.

Sliding Window Marginalization: The sliding window mechanism maintains a history of past states and measurements, allowing the system to detect and correct drift over time through loop closure-like effects. By marginalizing older states while retaining their information in the covariance matrix, the system creates implicit constraints that limit the divergence of all states, including the unobservable dimensions. The sliding window effectively extends the temporal horizon of the system, providing long-term consistency constraints that slow down the drift of the unobservable directions.

Collectively, these strategies effectively constrain the divergence rate of the 4 unobservable dimensions, even though they cannot eliminate the fundamental unobservability. By limiting the drift rate, our system significantly improves the absolute positioning accuracy of the swarm, making it suitable for practical applications in GNSS-denied environments.

3.10. Scalability Analysis

Several design choices give the system favorable scalability for large-scale unmanned aerial vehicle (UAV) swarms. On the computation side, the Pose-Only (PO) theory improves efficiency by eliminating the need for 3D feature reconstruction, the sliding-window EKF limits the number of estimated states, and localized processing ensures that the computational load scales linearly. The system also mitigates communication latency and packet loss via time alignment, synchronization, and delay compensation within the sliding window. In terms of communication overhead, each UAV communicates only with neighboring UAVs within its communication range, so the communication burden also scales linearly, and a dynamic neighbor selection strategy further restricts communication to the nearest K neighbors. Regarding UWB signal management, the system implements the Time Division Multiple Access (TDMA) protocol and Frequency Hopping Spread Spectrum (FHSS) technology to avoid signal collisions in dense swarms. Our analysis indicates that the system can support swarms of 100 UAVs with appropriate parameter tuning, and it is expected to accommodate even larger swarms through additional optimizations such as hierarchical communication and UWB modulation enhancement.
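The dynamic neighbor selection mentioned above can be sketched in a few lines. The function name `select_neighbors`, the use of estimated positions as the ranking criterion, and the example values are assumptions made for illustration; the text only states that communication is limited to in-range UAVs and to the nearest K of them.

```python
import math


def select_neighbors(own_id, positions, comm_range, k):
    """Return up to k nearest UAV ids that lie within the communication range."""
    own = positions[own_id]
    candidates = []
    for uav_id, pos in positions.items():
        if uav_id == own_id:
            continue
        dist = math.dist(own, pos)
        if dist <= comm_range:          # drop UAVs that are out of range
            candidates.append((dist, uav_id))
    candidates.sort()                   # nearest first
    return [uav_id for _, uav_id in candidates[:k]]


# Hypothetical swarm positions in metres.
positions = {
    "uav0": (0.0, 0.0, 10.0),
    "uav1": (5.0, 0.0, 10.0),
    "uav2": (0.0, 12.0, 10.0),
    "uav3": (30.0, 0.0, 10.0),
    "uav4": (200.0, 0.0, 10.0),
}
neighbors = select_neighbors("uav0", positions, comm_range=100.0, k=2)
```

With a fixed K, each UAV exchanges data with at most K peers regardless of swarm size, which is what keeps the total communication load linear in the number of UAVs.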

4. Experimental Evaluation

This section provides a comprehensive performance evaluation of our proposed collaborative navigation framework and benchmarks it against state-of-the-art (SOTA) VIO and collaborative positioning methods that are commonly used for drone positioning in GNSS-denied situations. Experimental validation was conducted using datasets collected from both the AirSim simulation platform and real-world environments. All evaluations were performed on a laptop (13th Gen Intel® Core™ i9-13900HX 2.20 GHz processor) to ensure practical applicability. This paper employs EVO (Python package for the evaluation of odometry and SLAM), version 1.12.0, for data assessment. Visualizations were created with Python 3.9 and the Matplotlib plotting library (version 3.6.3).

4.1. Swarm Position Initialization Simulation Experiment

To validate the effectiveness of the proposed MDS-MAP-based swarm absolute position initialization method, we conducted comparative experiments against a traditional GTSAM-based optimization approach built on ranging residuals. We simulated 12 nodes with a ranging error of 0.5 m and ran 500 Monte Carlo simulations, averaging the results to evaluate both methods in terms of average positioning accuracy and computational time.

The traditional approach formulates the initialization problem as a non-linear optimization task by constructing ranging residuals defined as $e_{ij} = d_{ij} - \|\mathbf{p}_i - \mathbf{p}_j\|$, where $d_{ij}$ represents the measured distance between UAVs i and j, and $\mathbf{p}_i$, $\mathbf{p}_j$ are their respective absolute positions. This optimization problem is solved using the GTSAM library, which iteratively minimizes the residuals to estimate the absolute positions.

In contrast, our proposed MDS-MAP method avoids the non-convexity issues inherent in the optimization approach by employing algebraic methods based on Multidimensional Scaling (MDS) and coordinate alignment techniques. This approach directly computes the relative positions using MDS, aligns the coordinate systems using non-moving nodes, estimates the absolute heading, and finally solves for the absolute positions through global translation. The experimental results are shown in Table 1.
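The first step of the algebraic pipeline, recovering relative positions from pairwise ranges, can be illustrated with classical MDS. This is a minimal sketch of the textbook method on noise-free distances for 12 hypothetical nodes; it does not include the coordinate alignment, heading estimation, or translation steps, and the function name `classical_mds` is an assumption.

```python
import numpy as np


def classical_mds(distances, dim=3):
    """Recover coordinates (up to rotation, reflection, translation) from distances."""
    n = distances.shape[0]
    J = np.eye(n) - np.ones((n, n)) / n          # centering matrix
    B = -0.5 * J @ (distances ** 2) @ J          # double-centered Gram matrix
    eigvals, eigvecs = np.linalg.eigh(B)         # eigenvalues in ascending order
    order = np.argsort(eigvals)[::-1][:dim]      # keep the dim largest
    scale = np.sqrt(np.clip(eigvals[order], 0.0, None))
    return eigvecs[:, order] * scale


# Hypothetical ground-truth positions for 12 nodes (metres).
rng = np.random.default_rng(1)
true_positions = rng.uniform(-20.0, 20.0, size=(12, 3))
diff = true_positions[:, None, :] - true_positions[None, :, :]
distances = np.linalg.norm(diff, axis=-1)

relative = classical_mds(distances)

# The recovered layout should reproduce every pairwise distance.
rel_diff = relative[:, None, :] - relative[None, :, :]
reconstruction_error = np.max(np.abs(np.linalg.norm(rel_diff, axis=-1) - distances))
```

The result is obtained from one eigendecomposition with no initial guess, which is why this kind of approach sidesteps the local minima of iterative residual minimization. The recovered coordinates still differ from the true ones by a rigid transformation, which the later alignment steps are meant to resolve.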

The results in Table 1 show that our proposed MDS-MAP method achieves a 44.1% reduction in positioning error and a reduction of more than 80% in computation time compared to the GTSAM-based optimization approach. To provide more reliable statistical results, we have included error variance and 95% confidence intervals based on the 500 Monte Carlo simulations. These improvements are attributed to the elimination of non-convex optimization challenges and the adoption of efficient algebraic computations instead of iterative optimization procedures.

4.2. Swarm Cooperative Localization Simulation Experiment

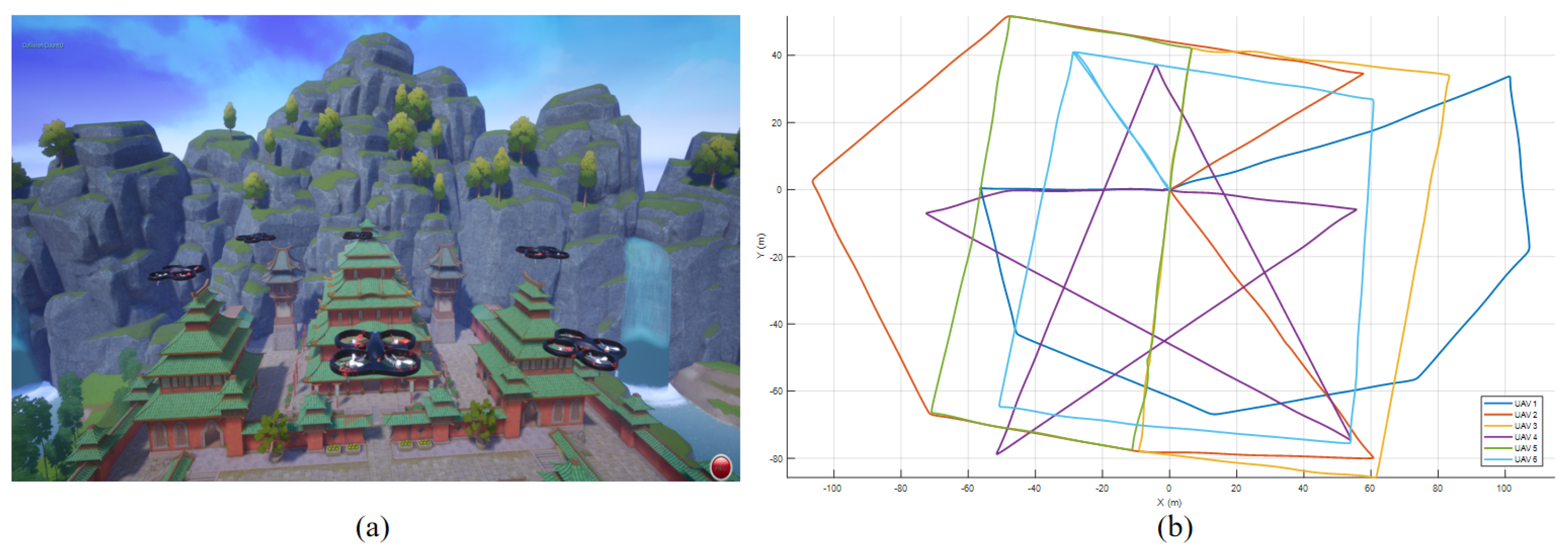

To verify the effectiveness of the new architecture, we conducted simulation experiments on the AirSim platform. Because AirSim does not simulate UWB data, we generated UWB ranges by adding Gaussian white noise with a mean of 0.3 m to the true distances. The parameter settings of the simulation experiment are shown in Table 2, and the simulation scenarios and ground truth trajectories are shown in Figure 3.

In the simulation experiment, “VINS-Mono” and “OpenVINS” represent the two SOTA VIO methods in [37,40], “SuperVINS” represents the deep-learning-based SOTA VIO method proposed in [41], “COO_VIR” represents the collaborative localization method proposed in [12], “COVINS-G” represents the collaborative localization method proposed in [11], and “COO_OUR” represents the method proposed in this paper. The drone positioning root-mean-square error (RMSE) statistics are shown in Table 3. Because this paper targets drones that operate in large-scale outdoor environments and require real-time positioning output, loop-closure relocalization is not considered when comparing with the OpenVINS and VINS-Mono algorithms.

As shown in Table 3, in the simulation experiment with 6 UAVs, our method outperforms all other methods. This indicates that our method not only achieves excellent positioning accuracy for each individual UAV but also maintains stable performance in the multi-UAV system, verifying its robustness and effectiveness in extended simulation scenarios.

4.3. Vision Loss Experiment

To verify the efficacy of the proposed algorithm in scenarios with visual loss, we introduced partial visual loss into the simulation framework established in the previous section, as illustrated in Figure 4. The differently colored curves in the figure represent the trajectories of the six drones, consistent with Figure 3. The bold part of each curve indicates visual disconnection, and the duration of visual disconnection is 10 s. Unless otherwise specified, the experimental settings (parameters, configurations, and environmental conditions) were consistent with those of the preceding simulation experiment.

In the simulated vision loss experiment, the UAVs’ positioning results are shown in Table 4. COVINS-G failed completely during the vision loss period because it relies entirely on continuous visual input. The COO_VIR method showed significant performance degradation, with an average RMSE of 19.47 m, roughly 2.8 times the error of our method. This degradation occurs because these methods depend heavily on the continuous operation of the VIO system: when vision is lost, the VIO system fails to function properly, causing significant deviation in the overall positioning results. In contrast, our proposed method can effectively handle vision deprivation, maintaining an average accuracy of 6.91 m, which is comparable to the results achieved in normal conditions as shown in Table 3. This demonstrates that our system maintains robust performance even under adverse visual conditions by leveraging the coupled fusion of UWB ranging and IMU data.

4.4. UWB Abnormal Experiment

To further evaluate the robustness of the proposed method under abnormal UWB conditions, we conducted additional simulation experiments that emulate two typical UWB failures in GNSS-denied outdoor environments: (1) UWB signal loss (no ranging output) and (2) UWB non-line-of-sight (NLOS) ranging bias. Unless otherwise specified, the simulation settings (sensor rates, trajectories, and evaluation protocol) are identical to those in the swarm cooperative localization simulation experiment (Table 2).

- (1) UWB signal loss

In this experiment, the UWB ranging packets are assumed to be completely lost during several time intervals, i.e., no distance measurements are available for the EKF update. In practice, this is implemented by dropping the UWB measurements so that the filter performs propagation and updates only with the remaining sensors (IMU, vision, and magnetometer). The UWB loss intervals are highlighted by the bold red segments in Figure 5.

- (2) UWB NLOS with positive bias (3–5 m)

Relevant studies have demonstrated that in NLOS scenarios, UWB ranging measurements tend to exceed the true distances owing to the multipath effect. To model NLOS-induced ranging errors, we add a positive bias to the true inter-UAV distance during NLOS intervals. Specifically, for each inter-UAV link i–j, the corrupted ranging measurement is generated as

$$\tilde{d}_{ij} = d_{ij}^{\mathrm{gt}} + b_{ij} + n_{ij}$$

where $d_{ij}^{\mathrm{gt}}$ is the ground-truth distance, $b_{ij}$ is a random positive bias uniformly sampled from 3 to 5 m, and $n_{ij}$ is zero-mean Gaussian noise with a standard deviation consistent with the nominal UWB setting. The NLOS intervals are highlighted by the bold black segments in Figure 5.
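The NLOS corruption model above is simple to reproduce. The sketch below uses only the Python standard library; the function name `simulate_uwb_range` and the noise standard deviation passed in the example are hypothetical, since the nominal value is given in the parameter table rather than here.

```python
import random


def simulate_uwb_range(true_distance, sigma, nlos=False, rng=random):
    """Return a simulated UWB range: truth + optional NLOS bias + Gaussian noise."""
    noise = rng.gauss(0.0, sigma)                    # zero-mean ranging noise
    bias = rng.uniform(3.0, 5.0) if nlos else 0.0    # positive NLOS bias, 3 to 5 m
    return true_distance + bias + noise


rng = random.Random(42)   # fixed seed so the example is repeatable
SIGMA = 0.1               # hypothetical noise level, not taken from the paper

los_range = simulate_uwb_range(20.0, SIGMA, nlos=False, rng=rng)
nlos_range = simulate_uwb_range(20.0, SIGMA, nlos=True, rng=rng)

# With the noise switched off, only the bias remains.
nlos_bias_only = simulate_uwb_range(20.0, 0.0, nlos=True, rng=rng) - 20.0
```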

In the simulated UWB abnormality experiment, the UAV’s positioning results are shown in Table 5. It can be seen that COO_VIR achieved an average RMSE of 11.39 m. This degradation occurs because these methods heavily depend on UWB ranging data for collaborative positioning, and when UWB data is lost or contains large errors, the system’s positioning accuracy is significantly affected.

Our proposed method with UWB adaptive adjustment (UWB_AA) strategy can effectively handle UWB abnormalities, maintaining an average accuracy of 6.47 m. To demonstrate the effectiveness of our chi-squared detection based UWB_AA strategy, we conducted an ablation experiment by removing this strategy, resulting in COO_OUR (without_UWB_AA) with an average RMSE of 8.39 m. This shows that the chi-squared detection based UWB_AA strategy contributes to a significant accuracy improvement of 1.92 m in UWB abnormality scenarios.

The superior performance is attributed to two key factors: (1) our system adopts a multi-modal fusion approach that integrates visual, inertial, and magnetic sensor data, which can compensate for the loss or errors of UWB data; (2) our chi-squared detection method effectively filters out UWB outliers, further improving the system’s robustness to UWB abnormalities.

To further demonstrate the effectiveness of our chi-squared detection method in identifying abnormal UWB measurements, we conducted a statistical analysis of the UWB data processing results. The abnormality criterion is defined as follows: if the error between the UWB measurement and the ground truth is greater than 1 m, the measurement is considered abnormal. The statistical results for the simulation experiment are shown in Table 6.

Table 6 shows that in the simulation experiment, our chi-squared detection method achieves a high anomaly detection rate of 93%. Specifically, 20.0% of the raw UWB data are abnormal, and our method correctly flags measurements amounting to 18.6% of all data (18.6/20.0 = 93%). Since the same proportion of UWB measurement anomalies was set for each UAV in the simulation experiment, a single set of statistics is sufficient to represent the overall performance. This result demonstrates the effectiveness of our chi-squared detection method in filtering out abnormal UWB measurements, which is crucial for improving the robustness and accuracy of the cooperative localization system in simulation scenarios.
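The detection-rate statistic can be computed as sketched below, using the 1 m abnormality criterion stated above. The function name `detection_rate` and the sample errors and flags are made up for illustration and are not data from the experiments.

```python
def detection_rate(errors_m, flagged, abnormal_threshold_m=1.0):
    """Fraction of abnormal measurements (|error| > threshold) that were flagged."""
    abnormal = [abs(e) > abnormal_threshold_m for e in errors_m]
    n_abnormal = sum(abnormal)
    if n_abnormal == 0:
        return None  # rate is undefined when nothing is abnormal
    n_detected = sum(1 for a, f in zip(abnormal, flagged) if a and f)
    return n_detected / n_abnormal


# Hypothetical range errors (metres) and the detector's decision per measurement.
errors = [0.2, 3.5, 0.1, 4.2, 0.4, 0.3, 4.8, 0.2, 0.1, 3.1]
flags = [False, True, False, True, False, False, True, False, False, False]

rate = detection_rate(errors, flags)
```

In this toy data four measurements are abnormal and three of them are flagged, so the rate is 0.75; the same ratio applied to the reported shares gives 18.6/20.0 = 0.93.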

4.5. Physical System Construction

In order to verify the practical application capabilities of the algorithm, this research builds a distributed multi-drone collaborative localization hardware platform. The collaborative localization platform integrates multi-modal sensors and carries out clock synchronization, and has a built-in embedded computing module that can process sensor data in real time. The platform can be mounted on a small quad-rotor drone, and realizes status information sharing and UWB ranging data interaction through a wireless communication module.

The hardware architecture is shown in Figure 6 and mainly includes the following modules: (1) Vision module: a FLIR Blackfly S USB3 global shutter camera (FLIR Systems, Inc., Wilsonville, OR, USA), with microsecond-level time synchronization between the IMU and camera achieved through hardware trigger signals. (2) Inertial module: a MEMS-IMU that outputs 200 Hz raw gyroscope, accelerometer, and magnetometer data (Ruiyan Xinchuang Technology Co., Ltd., Chengdu, Sichuan, China). (3) UWB module: the ranging frequency is 10 Hz, and an accuracy of 0.3 m in dynamic environments is achieved through TDoA technology (Air Recycling Technology Co., Ltd., Shenzhen, Guangdong, China). (4) RTK positioning module: an integrated RTK-GNSS module (positioning accuracy of 2 cm) that serves as the ground-truth reference and is enabled only during the algorithm evaluation stage (Ruiyan Xinchuang Technology Co., Ltd., Chengdu, Sichuan, China). (5) Main processor: an RK3588 (Rockchip Electronics Co., Ltd., Fuzhou, Fujian, China) embedded computing module, selected to meet the real-time computing requirements of the sliding window EKF framework. The parameters of each module are shown in Table 7. Each UAV is equipped with a compact communication station, which provides a communication bandwidth of no less than 50 Mbps and a communication range exceeding 10 km. Since the proposed cooperative method only requires sharing positional data and ranging information, without transmitting image data, this communication setup adequately fulfills the operational requirements.

4.6. Real-World Experiment

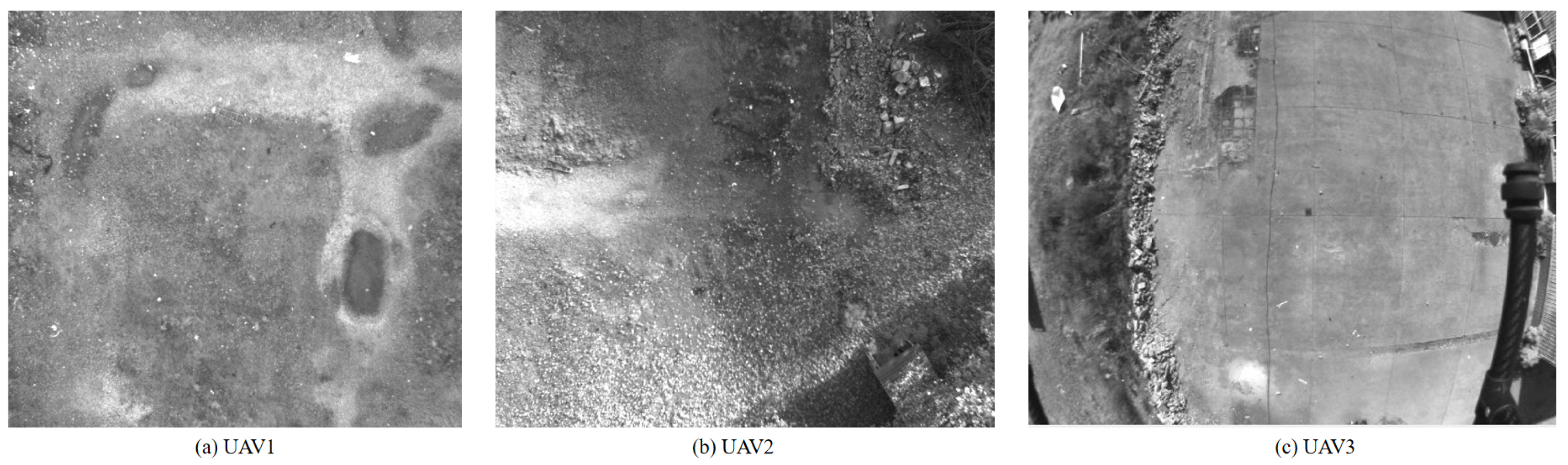

To demonstrate the practicality of the proposed system in real-world scenarios, we conducted a real-world collaborative localization experiment. A total of three drones were flown during the experiment. The flight trajectories of the drones are shown in Figure 7b. The parameters of the sensors carried by the drones are shown in Table 7. The experimental environment is an outdoor scene, as shown in Figure 7a. The drones are equipped with high-precision RTK positioning equipment as the ground truth reference. Images captured by the drone camera in the real-world experiment are shown in Figure 8. Compared with indoor UAVs, outdoor UAVs operate at higher altitudes, where only downward-facing cameras can capture continuous scenes and feature points are typically tens of meters away. This makes VIO accuracy highly susceptible to environmental factors and reduces it overall, thereby necessitating multi-UAV collaboration to improve accuracy.

To verify the main contributions of the proposed method, especially the novel processing of UWB and visual measurements, ablation experiments with different settings were also carried out. Table 8 shows a detailed evaluation of positioning errors for the real-world experiments, where “COO (ours)” represents the algorithm proposed in this paper, “COO (ours_without_PO)” represents the proposed algorithm without PO constraints, “COO (ours_without_UWB)” represents the proposed algorithm without UWB measurements, “COO (ours_without_MAG)” represents the proposed algorithm without magnetic assistance, and “COO (ours_without_UWB_AA)” represents the proposed algorithm without the chi-squared test based UWB adaptive adjustment (UWB_AA) strategy.

Figure 9 compares the running times of the different algorithms to evaluate operating efficiency. Our algorithm maintains high operating efficiency thanks to its lightweight architecture and filter-based design. Moreover, the RK3588 board (16 GB RAM) on our device runs the algorithm with a memory usage of ≤10% and a CPU usage of ≤30%, meeting real-time demands. The system is powered by an independent 12 V/6000 mAh lithium battery, supporting continuous operation for ≥1 h. In summary, compared with the open-source SOTA VIO positioning methods and multi-UAV collaborative positioning methods, the algorithm proposed in this paper shows excellent positioning performance and computational efficiency.

Furthermore, in real-world experiments, the test scenario for unmanned aerial vehicles (UAVs) is a relatively flat urban square. In contrast, the scenario in simulation experiments is a complex terrain environment that includes undulating buildings, grasslands, rivers and other elements, with a larger motion scale. Consequently, the accuracy of the results obtained from real-world experiments is higher than that of the results from simulation experiments.

Additionally, we conducted a statistical analysis of the UWB data processing results in the real-world experiment. Using the same abnormality criterion (error > 1 m), the statistical results are shown in Table 9.

Table 9 shows the UWB anomaly detection performance for each UAV and the average performance in the real-world experiment. On average, our chi-squared detection method achieves a high anomaly detection rate of 89.9% across all UAVs. This result further confirms the effectiveness of our chi-squared detection method in real-world scenarios, demonstrating its robustness and practical applicability even when different UAVs experience varying UWB measurement conditions.

5. Conclusions

This paper addresses the challenge of degraded navigation and positioning accuracy in GNSS-challenged environments for unmanned platforms, where visual environmental features are sparse and GNSS communication is constrained. We propose a distributed anchor-free visual-inertial-UWB-magnetic multi-UAV cooperative localization system. In this system, UWB measurements between UAVs are utilized to estimate inter-drone distances without relying on pre-deployed UWB anchors. A sliding window EKF framework is adopted to fuse multi-modal observations, keeping the algorithm lightweight and real-time capable. To further improve accuracy, a PO visual observation model is introduced, which strengthens the system’s adaptability to challenging visual environments with limited features. Additionally, a novel MDS (Multidimensional Scaling)-MAP initialization method fuses ranging, IMU, and geomagnetic data to solve the non-convex optimization problem in ranging-aided SLAM, ensuring fast and accurate swarm absolute pose initialization. We also analyze the mechanism by which absolute positioning accuracy improves in anchor-free cooperative positioning, and we integrate multiple keyframes into the sliding window to ensure robustness and precision. Unlike current vision-inertial-UWB cooperative localization systems built on a VIO system, we adopt a tightly-coupled cooperative localization system based on the IMU, which can effectively deal with vision loss.

Extensive simulation and real-world experiments demonstrate that the proposed method significantly outperforms state-of-the-art localization approaches in GNSS-denied scenarios. In particular, our system achieved an average positioning accuracy of 5.74 m in an outdoor 50-m flight simulation experiment and 1.86 m in the actual experiment, which is a significant improvement over existing methods. Importantly, our method can effectively deal with situations of vision loss, maintaining an accuracy of 6.91 m even when other approaches fail completely. Notably, our chi-squared test-based UWB adaptive adjustment (UWB_AA) strategy effectively filters abnormal UWB measurements, achieving over 88% anomaly detection rate in both simulation and real-world experiments. Ablation experiments confirm it improves accuracy in UWB abnormality scenarios, enhancing the system’s robustness and accuracy.

The successful deployment of our system on small-scale UAV platforms validates its practicality and applicability in real-world lightweight drone systems.

In future research work, we will further explore the application of our system in challenging environments such as large-scale deployments and day-night transitions to obtain a more comprehensive and powerful drone cluster positioning system.