Improved Football Team Training Algorithm Based on Modal Decomposition and BiLSTM Method for Short-Term Wind Power Forecasting

Abstract

1. Introduction

2. Prediction Model Algorithms

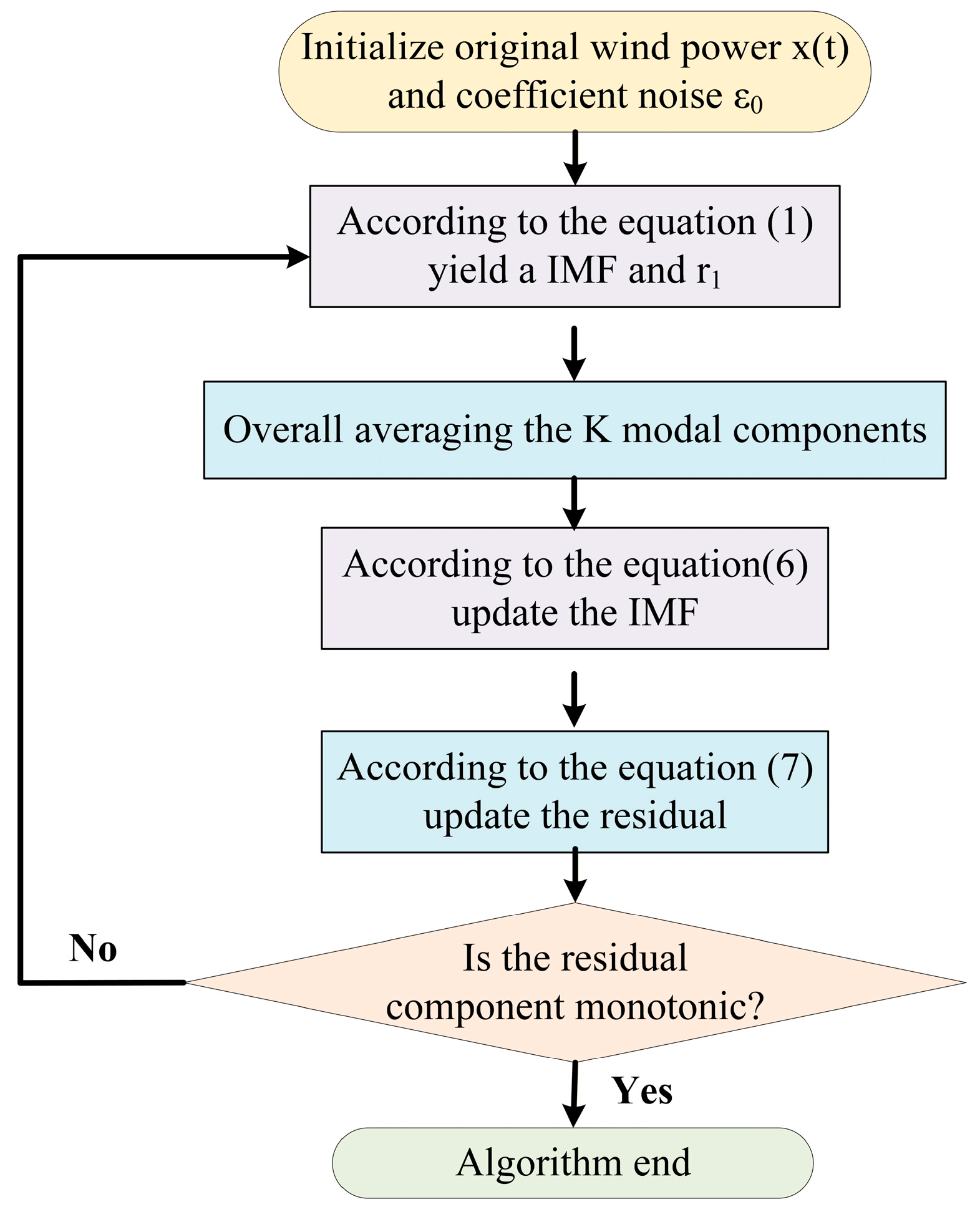

2.1. Complete Ensemble Empirical Mode Decomposition with Adaptive Noise (CEEMDAN)

2.2. Bidirectional Long Short-Term Memory Network Model (BiLSTM)

3. Improved Football Team Training Optimization Algorithm (IFTTA)

3.1. Dynamic Probability Allocation Strategy

3.2. Multi-Modal Training Strategy

- Training Mode 1: Directed learning mode

- Training Mode 2: Information-guided training

- Training Mode 3: Balance training mode

- Training Mode 4: Disturbance training mode

3.3. Uncertainty Analysis of the IFTTA

3.4. Iterative Analysis

4. CEEMDAN–IFTTA–BiLSTM

5. Example Analysis

5.1. Data Description

5.2. Experimental Environment

5.3. Evaluation Indicators
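
The indicators reported in the model comparison (MAE, RMSE, MAPE, and R²) can be sketched with their standard textbook definitions. This is a minimal illustration, not the authors' evaluation code: the function names are hypothetical, and the MAPE version below assumes that no measured value is zero, which may differ from how the paper handles low-output periods.

```python
import math


def mae(y_true, y_pred):
    """Mean absolute error, in the same unit as the data (MW here)."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root mean square error, in the same unit as the data."""
    squared = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    return math.sqrt(squared / len(y_true))


def mape(y_true, y_pred):
    """Mean absolute percentage error in percent.

    Assumption: every value in y_true is non-zero.
    """
    ratios = sum(abs((t - p) / t) for t, p in zip(y_true, y_pred))
    return 100.0 * ratios / len(y_true)


def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Toy values for illustration only; they are not taken from the datasets.
actual = [10.0, 20.0, 30.0, 40.0]
predicted = [12.0, 18.0, 33.0, 39.0]
print(mae(actual, predicted), rmse(actual, predicted))
print(mape(actual, predicted), r2(actual, predicted))
```

Lower MAE, RMSE, and MAPE indicate a better fit, while R² closer to 1 indicates that the prediction explains more of the variance in the measured power.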

5.4. CEEMDAN Decomposition Results

5.5. Model Training

5.6. Model Comparison Analysis

6. Conclusions

- (1) CEEMDAN adaptively decomposes the original wind power sequence, which reduces noise interference, strengthens the extraction of temporal features, and gives the prediction model more regular input data.

- (2) By improving the FTTA with a multi-mode search strategy and dynamic probability allocation, the IFTTA avoids the subjectivity of traditional empirical tuning. Compared with the FTTA, the IFTTA strengthens both the global search and the local optimization abilities of hyperparameter tuning, which leads to a substantial improvement in the accuracy of wind power forecasting.

- (3) Compared with the other six models, the proposed method reduced MAE, RMSE, and MAPE by at least 25.29%, 29.62%, and 21.13%, respectively, in Dataset 1, while increasing R² by at least 0.0069. In Dataset 2, MAE, RMSE, and MAPE decreased by at least 54.03%, 55.78%, and 10.23%, respectively, while R² improved by at least 0.0093. In Dataset 3, MAE, RMSE, and MAPE decreased by at least 31.94%, 33.26%, and 20.66%, respectively, while R² improved by at least 0.0089. The proposed forecasting method significantly enhances the prediction accuracy of wind power generation, providing robust support for optimizing the scheduling and economic operation of wind power systems.
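
The "at least" figures in conclusion (3) can be reproduced from the model comparison table by taking, for each metric, the smallest relative reduction over the six competing models. The sketch below does this for Dataset 1 only. The numbers are copied from the table; the function and variable names are illustrative and not part of the paper.

```python
# Dataset 1 rows copied from the comparison table: (MAE, RMSE, MAPE, R2).
BASELINES = {
    "LSTM": (4.8249, 7.9631, 13.74, 0.8672),
    "ARIMA": (6.0702, 9.5338, 12.92, 0.8097),
    "SVR": (2.9785, 4.2854, 11.83, 0.9615),
    "CEEMDAN-BiLSTM": (3.4370, 5.6831, 10.58, 0.9324),
    "CEEMDAN-FDA-BiLSTM": (2.5269, 4.1505, 11.06, 0.9639),
    "CEEMDAN-FTTA-BiLSTM": (1.7278, 2.5491, 9.94, 0.9864),
}
PROPOSED = (1.2909, 1.7941, 7.84, 0.9933)  # CEEMDAN-IFTTA-BiLSTM
ERROR_METRICS = ("MAE", "RMSE", "MAPE")


def relative_reduction(baseline, proposed):
    """Percentage by which `proposed` is lower than `baseline`."""
    return 100.0 * (baseline - proposed) / baseline


def minimum_reductions():
    """Smallest percentage reduction over all baselines, per error metric."""
    return {
        name: min(
            relative_reduction(row[i], PROPOSED[i])
            for row in BASELINES.values()
        )
        for i, name in enumerate(ERROR_METRICS)
    }


def minimum_r2_gain():
    """Smallest absolute R2 improvement over all baselines."""
    return min(PROPOSED[3] - row[3] for row in BASELINES.values())


for metric, value in minimum_reductions().items():
    print(f"{metric}: at least {value:.2f}% lower")
print(f"R2: at least {minimum_r2_gain():.4f} higher")
```

For Dataset 1 the closest competitor on every metric is CEEMDAN–FTTA–BiLSTM, so the minimum is set by that row. In Dataset 3 the closest competitor differs by metric, which is why the minimum is taken per metric rather than against a single model.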

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| Research Category | Typical Methods | Key Advantages | Limitations |
|---|---|---|---|
| Physical Prediction Methods | Numerical Weather Prediction (NWP) | Based on meteorological physical mechanisms; requires minimal historical data; suitable for new wind farms | Complex models, high computational cost, and prediction accuracy that depends heavily on the precision of the meteorological model |
| Traditional Statistical Methods | Time Series Analysis (AR, ARMA, ARIMA) | Simple model structure, fast computation, high interpretability | Poor fitting capability for nonlinear, non-stationary wind power sequences; strongly affected by anomalous or missing data |
| Single Deep Learning Model | LSTM | Captures long-term temporal dependencies; adapts to nonlinear fluctuations | Unidirectional structure fails to fully use forward and backward temporal context |
| Composite Deep Prediction Model | BiLSTM | Bidirectional time series modeling extracts historical and future correlation features simultaneously | Hyperparameter sensitivity leads to inefficient manual tuning and limited accuracy |
| Decomposition-Prediction Hybrid Model | EEMD/CEEMDAN + Deep Learning Model | Decomposes non-stationary sequences, reduces noise and mode mixing, enhances prediction stability | Residual interference persists after a single decomposition when no optimization algorithm provides further enhancement |
| Intelligent Optimization Algorithms | PSO, GA, WOA | Automatically optimize model hyperparameters to enhance generalization capability | Prone to local optima; difficult to balance convergence speed and accuracy |
| Novel Intelligent Optimization Algorithms | FTTA (Football Team Training Algorithm) | Superior swarm collaboration mechanism with strong convergence performance | Parameter sensitivity and an imbalance between exploration and exploitation limit its effectiveness |
| The Model Proposed in This Paper | CEEMDAN–IFTTA–BiLSTM | 1. CEEMDAN performs adaptive denoising and decomposition; 2. IFTTA optimizes model hyperparameters, balancing exploration and exploitation; 3. BiLSTM captures bidirectional temporal features | High predictive accuracy, but errors still occur at the extreme points of the prediction curve |

| Parameter | Value |
|---|---|
| Sliding window | 1 |
| Data window | 24 |
| Number of convolutional layers | 0 |
| BiLSTM hidden units | 64 |
| BiLSTM layers | 1 |
| Optimizer | Adam |
| Initial learning rate | 0.002 |
| L2 regularization | 0.000 |
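
The two window settings in the table above can be illustrated with a small sample-building routine. This is a sketch under stated assumptions, since the source does not define the terms here: "Data window 24" is read as the number of past points fed to the network, "Sliding window 1" is read as the step between consecutive samples, and the target is assumed to be the single next value. The helper name `make_windows` is hypothetical.

```python
def make_windows(series, window=24, step=1):
    """Split a 1-D sequence into (inputs, targets) for one-step-ahead training.

    Assumptions: each input holds `window` consecutive values, the target is
    the value that immediately follows, and the window advances by `step`.
    """
    inputs, targets = [], []
    for start in range(0, len(series) - window, step):
        inputs.append(series[start:start + window])
        targets.append(series[start + window])
    return inputs, targets


# Toy sequence of 30 points; real use would pass one decomposed component.
toy_series = list(range(30))
X, y = make_windows(toy_series)
print(len(X), X[0][:3], y[0])
```

With 30 points and a 24-point window this yields six samples; the first input covers points 0 to 23 and its target is point 24.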

| Dataset | Parameter | Optimization Range | Optimization Result |
|---|---|---|---|
| Dataset 1 | Learning rate | (1 × 10⁻⁵, 0.001) | 9.0 × 10⁻⁴ |
| | BiLSTM hidden nodes | (100, 200) | 144 |
| | L2 regularization parameter | (1 × 10⁻⁶, 0.01) | 4.0 × 10⁻⁵ |
| Dataset 2 | Learning rate | (1 × 10⁻⁵, 0.001) | 8.0 × 10⁻⁴ |
| | BiLSTM hidden nodes | (100, 200) | 160 |
| | L2 regularization parameter | (1 × 10⁻⁶, 0.01) | 3.0 × 10⁻⁵ |
| Dataset 3 | Learning rate | (1 × 10⁻⁵, 0.001) | 9.5 × 10⁻⁴ |
| | BiLSTM hidden nodes | (100, 200) | 152 |
| | L2 regularization parameter | (1 × 10⁻⁶, 0.01) | 4.5 × 10⁻⁵ |
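
A population-based optimizer such as the IFTTA typically works on bounded numeric vectors, so each candidate has to be mapped onto the three hyperparameters before a BiLSTM can be trained with it. The sketch below shows one plausible mapping using the ranges from the table above. It is an assumption for illustration, not the authors' encoding: the linear scaling from the unit interval, the clipping, and the names `BOUNDS` and `decode` are all hypothetical.

```python
# Search ranges copied from the optimization table.
BOUNDS = {
    "learning_rate": (1e-5, 1e-3),
    "hidden_nodes": (100, 200),
    "l2": (1e-6, 1e-2),
}


def clip(value, low, high):
    """Keep `value` inside [low, high]."""
    return max(low, min(high, value))


def decode(position):
    """Map a candidate in [0, 1]^3 to a hyperparameter dictionary.

    Assumption: linear scaling within each range; out-of-range coordinates
    are clipped, and the hidden-node count is rounded to an integer.
    """
    params = {}
    for coordinate, (name, (low, high)) in zip(position, BOUNDS.items()):
        value = low + clip(coordinate, 0.0, 1.0) * (high - low)
        params[name] = round(value) if name == "hidden_nodes" else value
    return params


print(decode([0.0, 0.44, 1.5]))
```

Because the learning-rate and L2 ranges span several orders of magnitude, a logarithmic scaling would be a reasonable alternative; the source does not state which one was used.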

| Dataset | Prediction Model | MAE (MW) | RMSE (MW) | MAPE (%) | R² | CT (s) |
|---|---|---|---|---|---|---|
| Dataset 1 | LSTM | 4.8249 | 7.9631 | 13.74 | 0.8672 | 92 |
| | ARIMA | 6.0702 | 9.5338 | 12.92 | 0.8097 | 38 |
| | SVR | 2.9785 | 4.2854 | 11.83 | 0.9615 | 44 |
| | CEEMDAN–BiLSTM | 3.4370 | 5.6831 | 10.58 | 0.9324 | 168 |
| | CEEMDAN–FDA–BiLSTM | 2.5269 | 4.1505 | 11.06 | 0.9639 | 213 |
| | CEEMDAN–FTTA–BiLSTM | 1.7278 | 2.5491 | 9.94 | 0.9864 | 222 |
| | CEEMDAN–IFTTA–BiLSTM | 1.2909 | 1.7941 | 7.84 | 0.9933 | 268 |
| Dataset 2 | LSTM | 15.4028 | 24.3498 | 14.28 | 0.9009 | 98 |
| | ARIMA | 21.3856 | 30.5883 | 11.87 | 0.8437 | 41 |
| | SVR | 10.0610 | 14.1976 | 10.62 | 0.9663 | 46 |
| | CEEMDAN–BiLSTM | 9.5777 | 15.1751 | 8.76 | 0.9615 | 176 |
| | CEEMDAN–FDA–BiLSTM | 7.8531 | 11.7953 | 7.63 | 0.9768 | 228 |
| | CEEMDAN–FTTA–BiLSTM | 7.1189 | 10.6162 | 6.45 | 0.9812 | 235 |
| | CEEMDAN–IFTTA–BiLSTM | 3.2727 | 4.6948 | 5.79 | 0.9905 | 287 |
| Dataset 3 | LSTM | 5.3516 | 7.9937 | 13.88 | 0.9347 | 87 |
| | ARIMA | 6.3030 | 9.1096 | 12.53 | 0.9152 | 36 |
| | SVR | 3.6055 | 5.1104 | 11.37 | 0.9733 | 42 |
| | CEEMDAN–BiLSTM | 3.5502 | 5.6980 | 8.26 | 0.9668 | 161 |
| | CEEMDAN–FDA–BiLSTM | 2.6624 | 3.9612 | 7.84 | 0.9840 | 202 |
| | CEEMDAN–FTTA–BiLSTM | 2.8238 | 4.8120 | 6.63 | 0.9763 | 214 |
| | CEEMDAN–IFTTA–BiLSTM | 1.8119 | 2.6439 | 5.26 | 0.9929 | 259 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Xie, L.; Luo, Y.; Li, C.; Li, L.; Liu, F. Improved Football Team Training Algorithm Based on Modal Decomposition and BiLSTM Method for Short-Term Wind Power Forecasting. Processes 2026, 14, 951. https://doi.org/10.3390/pr14060951
