Short-Term Load Forecasting in Price-Volatile Markets: A Pattern-Clustering and Adaptive Modeling Approach

Abstract

1. Introduction

- (i) Statistical load forecasting methods extract patterns of load variation from historical data, on the relatively simplistic assumption that past patterns will recur in the future. Examples include the ARIMA model [11] and exponential smoothing methods [12]. Wu et al. [13] proposed a model based on a fractional autoregressive integrated moving average with long-range dependence, using a dynamically adjusted cuckoo search algorithm to optimize the parameters of the forecasting model. This method decomposes the load into three components (autoregressive, differencing, and moving average) to uncover the underlying patterns in historical load variations. Rendon-Sanchez and de Menezes [14] proposed a forecasting approach based on a seasonal exponential smoothing model, utilizing combined forecast results for short-term load prediction. The proposed model can capture seasonality and time-varying volatility, demonstrating favorable forecasting performance. Exponential smoothing methods assign different weights to historical data, with more recent data receiving higher weights. The weights decrease exponentially with the age of an observation, which smooths out random fluctuations and captures primary trends and seasonal patterns. However, these methods primarily capture linear relationships and have limited capability in handling sharp nonlinear fluctuations caused by factors such as abrupt electricity price changes or extreme weather conditions.

- (ii) Classical machine learning methods introduce multi-factor correlation: they recognize that future load depends not only on past load but also on factors such as weather, date, and electricity prices, and they model the complex relationships between load and these influencing factors [15,16]. Zhao et al. [17] proposed a load forecasting method combining a grey model with a Least Squares Support Vector Machine (LSSVM). This method employs a feature matching pattern for prediction based on each decomposed component and effectively enhances long-term load forecasting accuracy through the extraction of load characteristics. A Support Vector Machine (SVM) operates in a high-dimensional space constituted by numerous features, identifying optimal parameters to fit historical data and ensuring model robustness. It performs well with small sample sizes but trains slowly on large datasets. Fan et al. [18] introduced a hybrid model integrating Random Forest (RF) with the Mean Generating Function (MGF). This model first obtains predicted values from the time variable, Random Forest, and the Mean Generating Function separately, and then uses them as inputs for short-term load forecasting via a multivariate response surface methodology. The model demonstrates stronger robustness and higher forecasting accuracy. Random Forest [19] is an ensemble learning method that constructs multiple decision trees, each trained on randomly sampled data and features, and outputs results through collective decision-making. This approach not only mitigates the overfitting inherent in single trees, making the model more robust, but also quantifies the importance of each feature in the prediction.

- (iii) Deep learning-based load forecasting methods use computational models with multiple processing layers to learn and extract complex, nonlinear temporal characteristics and patterns from massive historical load and related data, thereby achieving high-precision prediction of future short-term load [20,21]. Li and Shi [22] proposed a hybrid model named CEEMDAN-CNN-LSTM-SA-AE to enhance household electricity load forecasting accuracy. The model first decomposes the original load data using CEEMDAN, then extracts local features via CNN, and captures long- and short-term dependencies using an LSTM-AE model integrated with a self-attention mechanism to complete the forecasting. Experiments on two real-world datasets showed that this model significantly outperforms existing baselines, with marked improvements across various performance metrics. Tian et al. [23] proposed a method for short-term electric vehicle charging load forecasting that combines Temporal Convolutional Network (TCN) and Long Short-Term Memory (LSTM) networks. This method employs comprehensive similar-day identification technology and analyzes meteorological factors and historical load data for validation. Experimental results indicate that the model effectively improves forecasting accuracy, with a further 2% reduction in error possible after introducing similar-day analysis. Ahmad et al. [24] proposed a novel Transformer architecture, TFTformer, to enhance power load forecasting accuracy. The model effectively integrates multi-source data such as weather and time through multi-modal feature embedding, linear transformation layers, and temporal convolutional networks, while also enhancing its ability to capture long-range dependencies. In recent years, to overcome the limitations of single models, hybrid models combining machine learning and deep learning have emerged as a new trend in research and application. Tan et al. [25] proposed a forecasting method that combines the SVMD algorithm with an improved Informer model. This method decomposes load data using SVMD and incorporates relative position encoding, causal convolutions, and skip connections into the Informer model to enhance sequence dependency capture and local feature extraction. This model significantly outperforms several mainstream models and provides a reliable technical reference for optimizing intelligent heating systems. Incremona and De Nicolao [26] proposed a Gaussian-process-based load forecasting method with a tailored kernel to address the challenge of predicting electricity demand during the moving holiday of Easter Week. Their results on Italian data show that the proposed approach significantly outperforms GP models with canonical kernels as well as the official forecasts of the TSO Terna.
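The exponentially decreasing weighting scheme described in item (i) can be illustrated with simple exponential smoothing. The following is a minimal Python sketch, not the method of [12] or [14]; the function names `exp_smooth` and `implied_weights`, the smoothing factor of 0.5, and the load values are hypothetical and chosen only for illustration.

```python
def exp_smooth(series, alpha):
    """Return the simple exponential smoothing levels of `series`.

    Each level is alpha * observation + (1 - alpha) * previous level, so an
    observation k steps in the past carries weight alpha * (1 - alpha) ** k.
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    if not series:
        raise ValueError("series must be non-empty")
    levels = [float(series[0])]  # initialize the level with the first observation
    for y in series[1:]:
        levels.append(alpha * y + (1.0 - alpha) * levels[-1])
    return levels


def implied_weights(alpha, n):
    """Weights placed on the n most recent observations, newest first."""
    return [alpha * (1.0 - alpha) ** k for k in range(n)]


# Hypothetical hourly loads in MW (illustrative values only).
loads = [100.0, 110.0, 105.0, 120.0]
levels = exp_smooth(loads, alpha=0.5)
one_step_forecast = levels[-1]  # the last level serves as the next-step forecast
weights = implied_weights(0.5, 3)  # [0.5, 0.25, 0.125]
```

Because the forecast is a fixed linear combination of past observations, a sudden price-driven jump in load is only absorbed gradually, which is the limitation noted in item (i).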

- (i) Most traditional and data-driven models fail to explicitly characterize the evolving coupling between electricity price and load, which makes it difficult to identify typical market regimes or capture their temporal transitions.

- (ii) Existing forecasting approaches seldom consider the inertia of historical price fluctuations, leading to weak responsiveness and reduced stability when market dynamics change rapidly.

- (iii) Although many deep learning methods can learn temporal patterns, they often lack the ability to model hierarchical dependencies and state transitions across different load regimes, resulting in limited generalization under volatile market scenarios.

- (i)

- A market state identification method based on load–price joint clustering is developed to structurally model the temporal interactions between price and load. It allows the automatic extraction of typical market patterns and helps uncover how price fluctuations drive load variations.

- (ii)

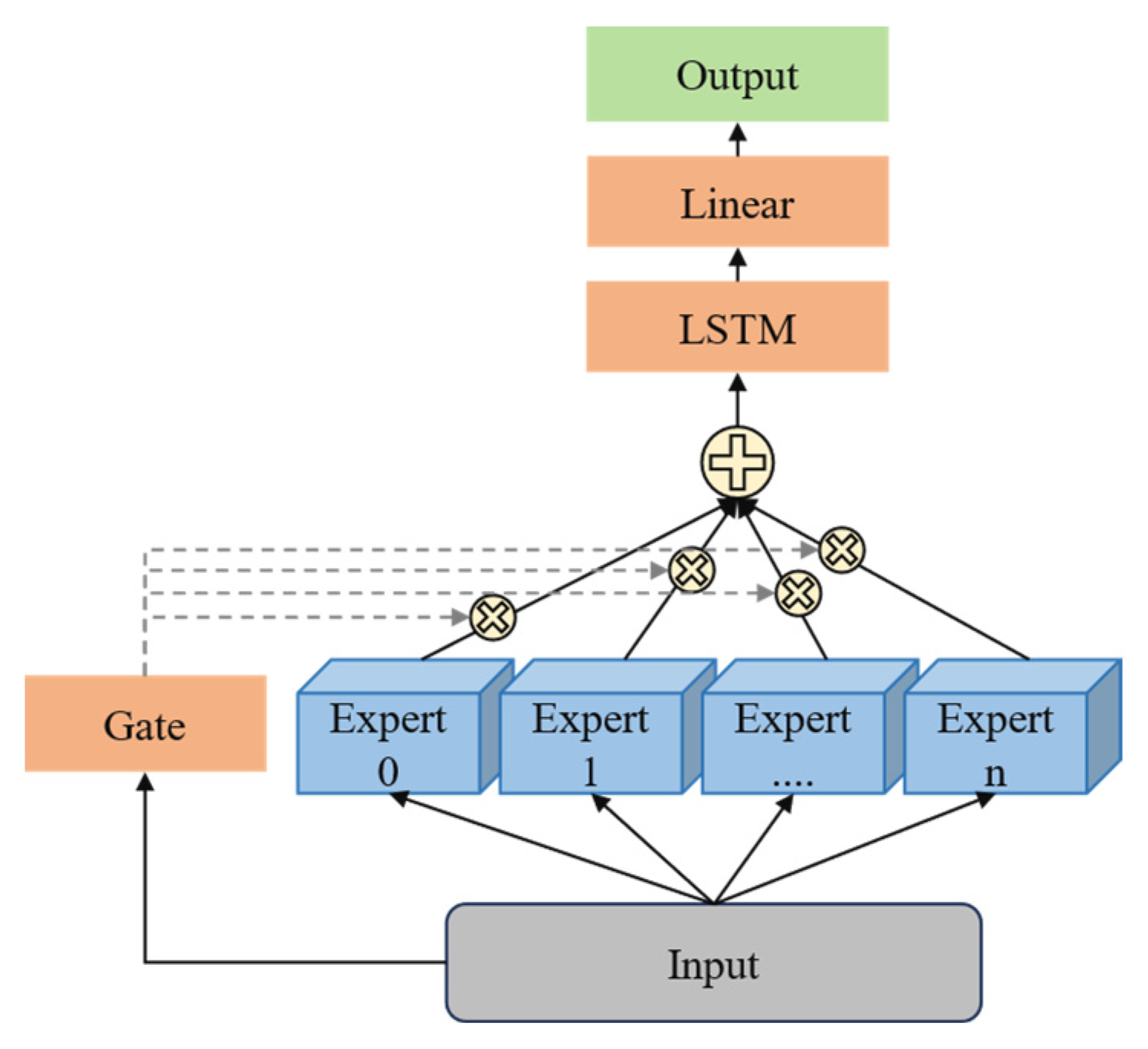

- A gated mixture forecasting network is proposed to dynamically adapt to the inertia of historical price fluctuations. By integrating parallel branches with an adaptive weighting mechanism, the model dynamically captures historical price features and achieves both rapid response and steady correction under market volatility.

- (iii)

- A Transformer-based expert model with multi-scale dependency learning is introduced to capture sequential dependencies and state transitions across different load regimes through self-attention, thereby enhancing model generalization and stability.
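The adaptive weighting of parallel branches in item (ii) can be sketched as a softmax-gated mixture of expert forecasts. This is a generic illustration under stated assumptions, not the authors' network: the function names, the gate scores, and the forecast values are hypothetical, and in practice the gate scores would come from a trained gating network.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of gate scores."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def soft_gated_forecast(expert_forecasts, gate_logits):
    """Blend expert forecasts with softmax gate weights.

    expert_forecasts: list of per-expert forecast lists (same horizon each).
    gate_logits: one score per expert (assumed to come from a gating network).
    """
    if len(expert_forecasts) != len(gate_logits):
        raise ValueError("need one gate score per expert")
    weights = softmax(gate_logits)
    horizon = len(expert_forecasts[0])
    return [
        sum(w * f[t] for w, f in zip(weights, expert_forecasts))
        for t in range(horizon)
    ]


def hard_gated_forecast(expert_forecasts, gate_logits):
    """Hard assignment: use only the expert with the highest gate score."""
    best = max(range(len(gate_logits)), key=lambda i: gate_logits[i])
    return list(expert_forecasts[best])


# Hypothetical forecasts (MW) from two experts over a 3-step horizon.
experts = [[100.0, 102.0, 104.0], [110.0, 108.0, 106.0]]
blend = soft_gated_forecast(experts, gate_logits=[0.0, 0.0])  # equal weights
pick = hard_gated_forecast(experts, gate_logits=[0.2, 1.5])   # second expert only
```

Soft gating lets the output move smoothly between experts as the gate scores change, whereas hard assignment switches abruptly from one expert to another.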

2. Overall Description of the Proposed Method

3. Short-Term Load Adaptive Forecasting Method for Non-Stationary Electricity Price Fluctuations

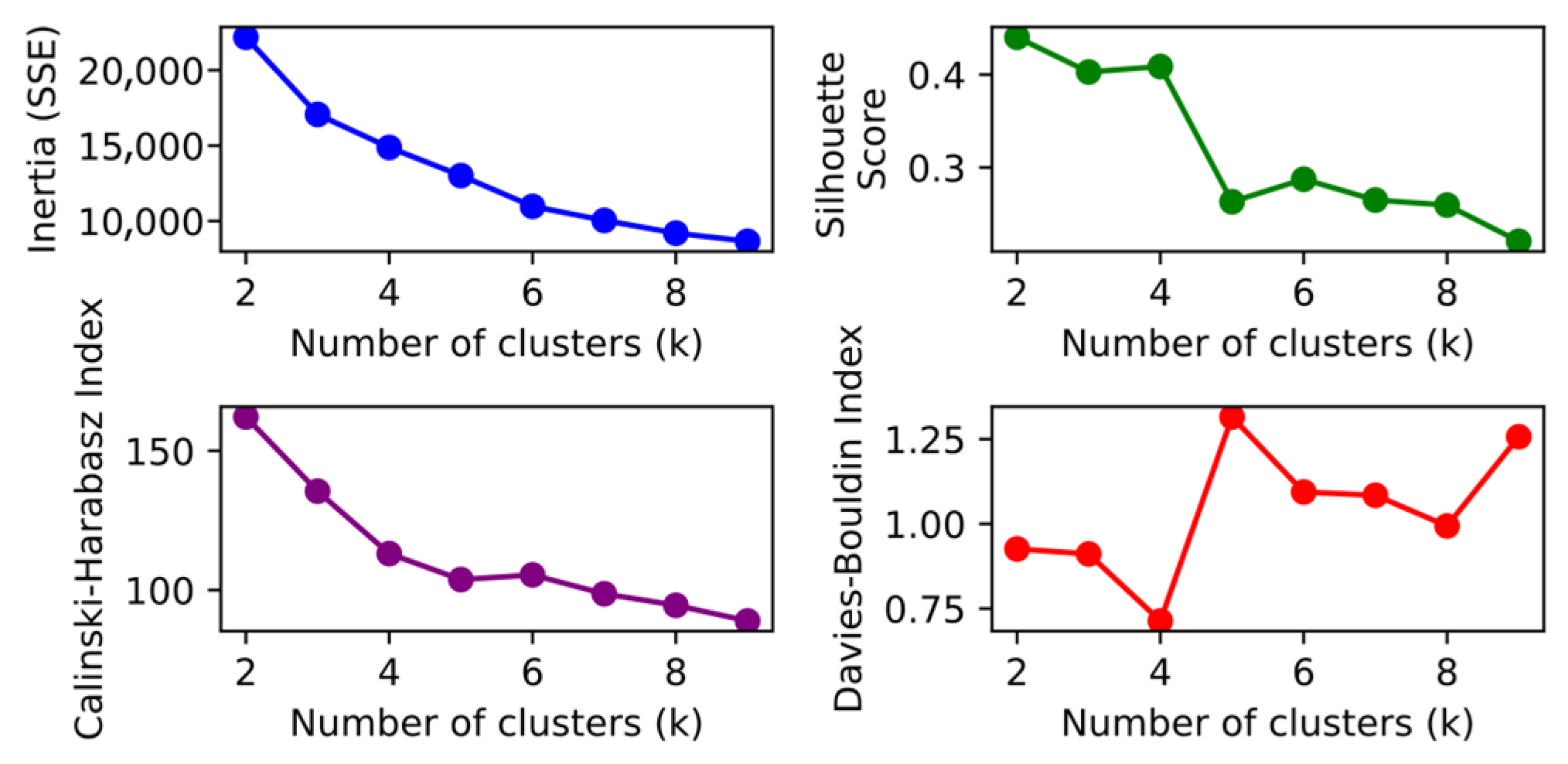

3.1. Typical Daily Pattern Recognition Method Based on Deep Embedding Clustering

3.2. Adaptive Gate Control Network Based on Typical Daily Patterns and Historical Performance Perception

3.3. Expert Model for Load Forecasting Based on Transformer

4. Results

4.1. Evaluation Indicators

4.2. Data and Experimental Setup

4.3. Clustering Results

4.4. Comparison with Conventional Methods

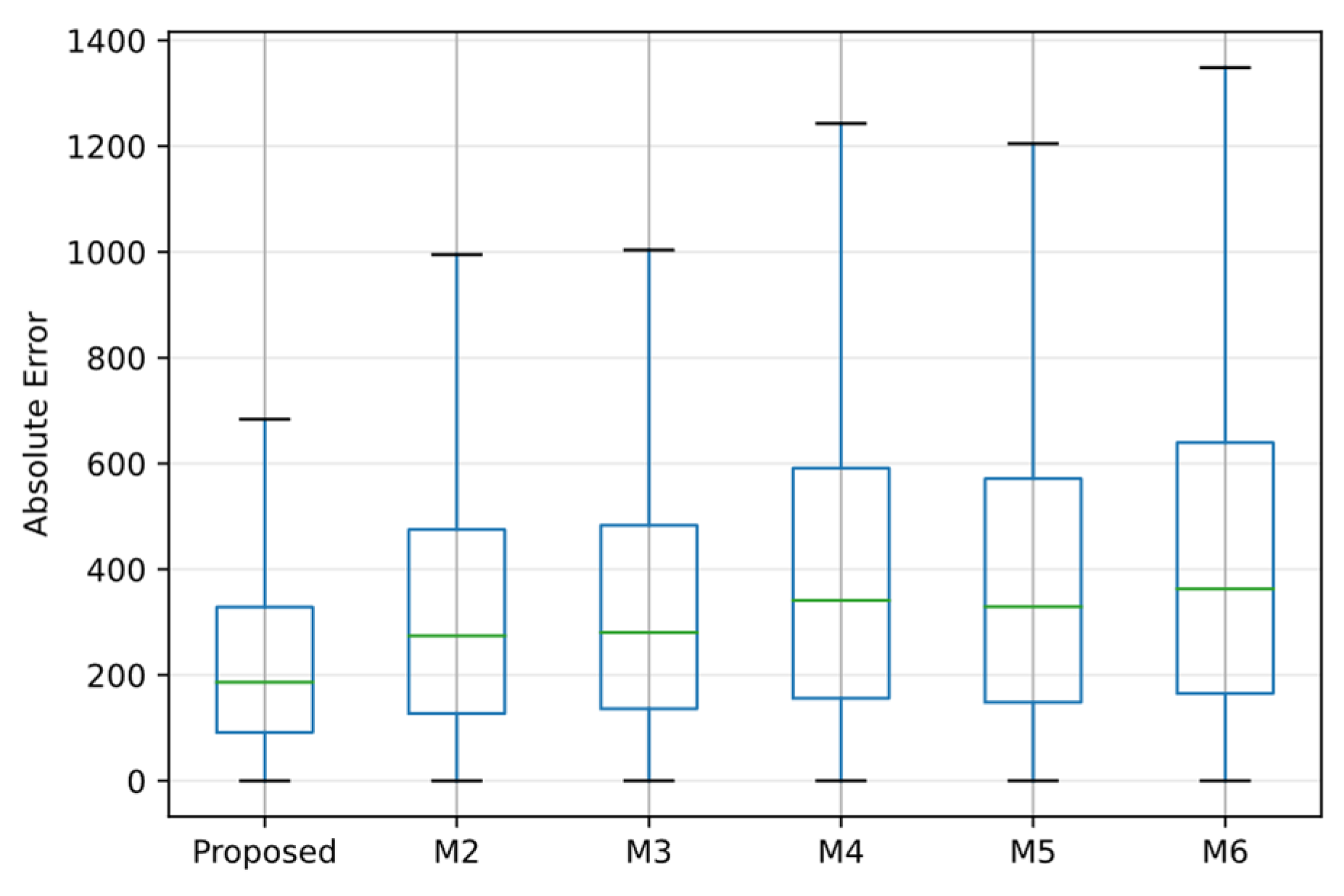

4.5. Ablation Studies

5. Conclusions

- (i) The proposed clustering-based partition supplies effective prior structure for modeling. Removing this component raises MAPE from 4.08% to 6.34–7.10%, indicating a clear loss of accuracy.

- (ii) The proposed soft gating network fuses multiple experts more effectively than static concatenation or hard assignment. Relative to direct concatenation, MAPE is reduced by an average of 1.08 percentage points.

- (iii) The proposed Transformer-expert scheme achieves higher accuracy: replacing GRU experts with Transformer experts reduces MAPE by 1.49 percentage points. Against mainstream baselines, the proposed method lowers MAPE by 1.08–2.62 percentage points and exhibits smaller maximum errors.
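Several of the percentage-point figures quoted above can be re-derived from the MAPE values reported in this paper's comparison and ablation tables. The short Python check below copies those MAPE values; the variable names are hypothetical.

```python
# MAPE (%) copied from the comparison table (baselines) and the ablation table.
baseline_mape = {
    "TimesNet": 5.16, "iTransformer": 5.38, "Transformer": 5.45,
    "Autoformer": 5.59, "GRU": 6.70, "GP": 5.42,
}
ablation_mape = {"M1": 4.08, "M2": 5.47, "M3": 5.57,
                 "M4": 6.63, "M5": 6.34, "M6": 7.10}

proposed = ablation_mape["M1"]  # M1 is the proposed model

# Gap between each baseline and the proposed model, in percentage points.
baseline_gaps = {name: round(v - proposed, 2) for name, v in baseline_mape.items()}
gap_range = (min(baseline_gaps.values()), max(baseline_gaps.values()))

# M3 differs from M1 only in the expert backbone (GRU instead of Transformer).
transformer_vs_gru = round(ablation_mape["M3"] - proposed, 2)

# M5 and M6 are the settings without experts trained by typical-day classes.
without_clustering = (ablation_mape["M5"], ablation_mape["M6"])
```

Here `gap_range` evaluates to `(1.08, 2.62)`, `transformer_vs_gru` to `1.49`, and `without_clustering` to `(6.34, 7.1)`, matching the ranges stated in items (i) and (iii).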

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Srivastava, M.; Tiwari, P.K. A profit driven optimal scheduling of virtual power plants for peak load demand in competitive electricity markets with machine learning based forecasted generations. Energy 2024, 310, 133077.

- Laitsos, V.; Vontzos, G.; Paraschoudis, P.; Tsampasis, E.; Bargiotas, D.; Tsoukalas, L.H. The State of the Art Electricity Load and Price Forecasting for the Modern Wholesale Electricity Market. Energies 2024, 17, 5797.

- Tong, Q. A Short-Term Electricity Load Forecasting Method Based on Multi-Factor Impact Analysis and BP-GRU Model. Processes 2025, 13, 2336.

- Yao, H.; Qu, P.; Qin, H.; Lou, Z.; Wei, X.; Song, H. Multidimensional electric power parameter time series forecasting and anomaly fluctuation analysis based on the AFFC-GLDA-RL method. Energy 2024, 313, 134180.

- Eren, Y.; Küçükdemiral, I. A comprehensive review on deep learning approaches for short-term load forecasting. Renew. Sustain. Energy Rev. 2024, 189, 114031.

- Hasan, M.; Mifta, Z.; Papiya, S.J.; Roy, P.; Dey, P.; Salsabil, N.A.; Chowdhury, N.-U.; Farrok, O. A state-of-the-art comparative review of load forecasting methods: Characteristics, perspectives, and applications. Energy Convers. Manag. X 2025, 26, 100922.

- Rondón-Cordero, V.H.; Montuori, L.; Alcázar-Ortega, M.; Siano, P. Advancements in hybrid and ensemble ML models for energy consumption forecasting: Results and challenges of their applications. Renew. Sustain. Energy Rev. 2025, 224, 116095.

- El-Keib, A.; Ma, X.; Ma, H. Advancement of statistical based modeling techniques for short-term load forecasting. Electr. Power Syst. Res. 1995, 35, 51–58.

- Phyo, P.P.; Jeenanunta, C. Daily Load Forecasting Based on a Combination of Classification and Regression Tree and Deep Belief Network. IEEE Access 2021, 9, 152226–152242.

- van de Sande, S.N.P.; Alsahag, A.M.M.; Ziabari, S.S.M. Enhancing the Predictability of Wintertime Energy Demand in The Netherlands Using Ensemble Model Prophet-LSTM. Processes 2024, 12, 2519.

- Karamolegkos, S.; Koulouriotis, D.E. Advancing short-term load forecasting with decomposed Fourier ARIMA: A case study on the Greek energy market. Energy 2025, 325, 135854.

- Taylor, J.W. Short-Term Load Forecasting with Exponentially Weighted Methods. IEEE Trans. Power Syst. 2012, 27, 458–464.

- Wu, F.; Cattani, C.; Song, W.; Zio, E. Fractional ARIMA with an improved cuckoo search optimization for the efficient Short-term power load forecasting. Alex. Eng. J. 2020, 59, 3111–3118.

- Rendon-Sanchez, J.F.; de Menezes, L.M. Structural combination of seasonal exponential smoothing forecasts applied to load forecasting. Eur. J. Oper. Res. 2019, 275, 916–924.

- Khayat, A.; Kissaoui, M.; Bahatti, L.; Raihani, A.; Errakkas, K.; Atifi, Y. Hybrid model for microgrid short term load forecasting based on machine learning. IFAC-PapersOnLine 2024, 58, 527–532.

- Forootani, A.; Rastegar, M.; Sami, A. Short-term individual residential load forecasting using an enhanced machine learning-based approach based on a feature engineering framework: A comparative study with deep learning methods. Electr. Power Syst. Res. 2022, 210, 108119.

- Zhao, Z.; Zhang, Y.; Yang, Y.; Yuan, S. Load forecasting via Grey Model-Least Squares Support Vector Machine model and spatial-temporal distribution of electric consumption intensity. Energy 2022, 255, 124468.

- Fan, G.-F.; Zhang, L.-Z.; Yu, M.; Hong, W.-C.; Dong, S.-Q. Applications of random forest in multivariable response surface for short-term load forecasting. Int. J. Electr. Power Energy Syst. 2022, 139, 108073.

- Fan, G.-F.; Yu, M.; Dong, S.-Q.; Yeh, Y.-H.; Hong, W.-C. Forecasting short-term electricity load using hybrid support vector regression with grey catastrophe and random forest modeling. Util. Policy 2021, 73, 101294.

- Irankhah, A.; Yaghmaee, M.H.; Ershadi-Nasab, S. Optimized short-term load forecasting in residential buildings based on deep learning methods for different time horizons. J. Build. Eng. 2024, 84, 108505.

- Shen, Q.; Mo, L.; Liu, G.; Zhou, J.; Zhang, Y.; Ren, P. Short-Term Load Forecasting Based on Multi-Scale Ensemble Deep Learning Neural Network. IEEE Access 2023, 11, 111963–111975.

- Li, C.; Shi, J. A novel CNN-LSTM-based forecasting model for household electricity load by merging mode decomposition, self-attention and autoencoder. Energy 2025, 330, 136883.

- Tian, J.; Liu, H.; Gan, W.; Zhou, Y.; Wang, N.; Ma, S. Short-term electric vehicle charging load forecasting based on TCN-LSTM network with comprehensive similar day identification. Appl. Energy 2025, 381, 125174.

- Ahmad, A.; Xiao, X.; Mo, H.; Dong, D. TFTformer: A novel transformer based model for short-term load forecasting. Int. J. Electr. Power Energy Syst. 2025, 166, 110549.

- Tan, Q.; Cao, C.; Xue, G.; Xie, W. Short-term heating load forecasting model based on SVMD and improved informer. Energy 2024, 312, 133535.

- Incremona, A.; De Nicolao, G. Short-term forecasting of the Italian load demand during the Easter Week. Neural Comput. Appl. 2022, 34, 6257–6271.

- Zheng, Y.; Jia, C.; Yu, J.; Li, X. Deep embedded clustering with distribution consistency preservation for attributed networks. Pattern Recognit. 2023, 139, 109469.

- Prasanthi, L.; Malyala, L.P.; Krishnan, S.B.; Prasad, K.; Chakrabarti, P. A deep embedded clustering approach for detecting trend class using time-series sensor data. Knowl.-Based Syst. 2025, 320, 113609.

- Liu, Z.-F.; Chen, X.-R.; Huang, Y.-H.; Luo, X.-F.; Zhang, S.-R.; You, G.-D.; Qiang, X.-Y.; Kang, Q. A novel bimodal feature fusion network-based deep learning model with intelligent fusion gate mechanism for short-term photovoltaic power point-interval forecasting. Energy 2024, 303, 131947.

- Begga, A.; Lozano, M.Á.; Escolano, F. AG-GNN: Adaptive gating mechanism for robust node classification in graph neural networks. Inf. Sci. 2025, 726, 122750.

- Wang, Z.; Chen, L.; Wang, C. Parallel ResBiGRU-transformer fusion network for multi-energy load forecasting based on hierarchical temporal features. Energy Convers. Manag. 2025, 345, 120360.

- Zhan, X.; Kou, L.; Xue, M.; Zhang, J.; Zhou, L. Reliable Long-Term Energy Load Trend Prediction Model for Smart Grid Using Hierarchical Decomposition Self-Attention Network. IEEE Trans. Reliab. 2023, 72, 609–621.

- Zhao, H.; Huang, X.; Xiao, Z.; Shi, H.; Li, C.; Tai, Y. Week-ahead hourly solar irradiation forecasting method based on ICEEMDAN and TimesNet networks. Renew. Energy 2024, 220, 119706.

- Fang, B.; Xu, L.; Luo, Y.; Luo, Z.; Li, W. A method for short-term electric load forecasting based on the FMLP-iTransformer model. Energy Rep. 2024, 12, 3405–3411.

- Hertel, M.; Beichter, M.; Heidrich, B.; Neumann, O.; Schäfer, B.; Mikut, R.; Hagenmeyer, V. Transformer training strategies for forecasting multiple load time series. Energy Inform. 2023, 6, 20.

- Jiang, Y.; Gao, T.; Dai, Y.; Si, R.; Hao, J.; Zhang, J.; Gao, D.W. Very short-term residential load forecasting based on deep-autoformer. Appl. Energy 2022, 328, 120120.

- Abumohsen, M.; Owda, A.Y.; Owda, M. Electrical Load Forecasting Using LSTM, GRU, and RNN Algorithms. Energies 2023, 16, 2283.

| Module | Sub-module | Parameter | Value/Type |
|---|---|---|---|
| | | Optimizer | Adam |
| | | Learning rate | 5 × 10⁻⁴ (dynamic decay) |
| DEC | | Learning rate | 4 × 10⁻⁴ (dynamic decay) |
| | | Network | Linear + 1DCNN |
| | | Dimensionality of embeddings | 128 |
| | | Training epochs | 350 |
| | | Batch size | 64 |
| | | Input sequence length | 96 (24 h) |
| | | Forecasting horizon | 96 (24 h) |
| | | Position encoding type | Absolute positional encoding |
| | | Experts training method | Pre-training followed by joint training |
| | | Gated mixture architecture | 5 parallel branches; adaptive weighting |
| Expert | Encoder | Number of modules | 2 |
| | | Feed-forward | d_model = 128; activation function = ReLU |
| | | Self-attention | n_layers = 3; d_model = 128; n_heads = 8 |
| | Decoder | Number of modules | 2 |
| | | Self-attention | n_layers = 3; d_model = 128; n_heads = 8 |
| | | Encoder–decoder attention | n_layers = 3; d_model = 128; n_heads = 8 |
| | | Feed-forward | d_model = 128; activation function = ReLU |
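The expert hyperparameters listed in the table above can be collected in a small configuration object, which also makes two derived quantities explicit: the per-head attention width and the sampling interval implied by 96 steps per 24 h. This is a minimal Python sketch; the class name `ExpertConfig` and its field names are hypothetical, and how "number of modules" and "n_layers" relate in the authors' implementation is not specified here, so both are kept as separate fields.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExpertConfig:
    """Hypothetical container for the expert hyperparameters in the table."""
    d_model: int = 128        # model width
    n_heads: int = 8          # attention heads
    n_layers: int = 3         # attention layers as listed in the table
    n_modules: int = 2        # encoder/decoder modules as listed in the table
    activation: str = "relu"  # feed-forward activation
    input_steps: int = 96     # input sequence length
    horizon_steps: int = 96   # forecasting horizon
    window_hours: int = 24    # both windows cover 24 h

    def __post_init__(self):
        # Multi-head attention splits d_model evenly across heads.
        if self.d_model % self.n_heads != 0:
            raise ValueError("d_model must be divisible by n_heads")

    @property
    def head_dim(self) -> int:
        """Width of each attention head."""
        return self.d_model // self.n_heads

    @property
    def step_minutes(self) -> int:
        """Sampling interval implied by input_steps over window_hours."""
        return self.window_hours * 60 // self.input_steps


cfg = ExpertConfig()
```

With the tabulated values, each head has width 128 / 8 = 16, and 96 steps over 24 h correspond to a 15-minute sampling interval.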

| Type | C1 | C2 | C3 | C4 | C5 |
|---|---|---|---|---|---|
| Share | 39.1% | 15.0% | 19.8% | 13.5% | 12.6% |
| Avg. price (¥/MWh) | 30.58 | 16.17 | 25.12 | 24.63 | 26.08 |
| Peak–valley spread | 30.26 | 37.36 | 29.76 | 28.2 | 32.99 |
| Price SD (σ) | 7.2 | 7.89 | 6.75 | 5.98 | 7.43 |
| Mean load (MW) | 6966.20 | 5645.22 | 7112.61 | 5803.93 | 8053.93 |
| Price–load corr | 0.57 | 0.24 | 0.47 | 0.3 | 0.61 |
| Holiday share (%) | 0.0 | 100.0 | 14.6 | 92.9 | 0.0 |
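Deep embedded clustering, which the paper uses to obtain the typical-day clusters C1 to C5 summarized above, commonly assigns each embedded sample to clusters with a Student's t kernel. The sketch below shows that standard soft-assignment step only; it is an assumption that the authors' variant uses the same kernel, and the function name `soft_assign`, the 2-D embeddings, and the centroids are hypothetical.

```python
def soft_assign(z, centroids, alpha=1.0):
    """Student's t soft assignment of one embedding to each centroid.

    q_j is proportional to (1 + ||z - mu_j||^2 / alpha) ** (-(alpha + 1) / 2),
    normalized so that the assignments sum to 1.
    """
    scores = []
    for mu in centroids:
        sq_dist = sum((a - b) ** 2 for a, b in zip(z, mu))
        scores.append((1.0 + sq_dist / alpha) ** (-(alpha + 1.0) / 2.0))
    total = sum(scores)
    return [s / total for s in scores]


# Hypothetical 2-D embeddings of daily load-price profiles (illustrative only).
centroids = [[0.0, 0.0], [3.0, 4.0]]
q = soft_assign([0.0, 0.0], centroids)         # sample sitting on the first centroid
label = max(range(len(q)), key=lambda j: q[j])  # index of the most likely cluster
```

A sample that coincides with the first centroid and lies at squared distance 25 from the second receives assignments 26/27 and 1/27, so it is labeled with the first cluster.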

| Model | MAPE/% | NRMSE/% | NMAE/% |
|---|---|---|---|
| Proposed | 4.08 | 8.02 | 5.39 |
| TimesNet | 5.16 | 9.47 | 6.88 |
| iTransformer | 5.38 | 9.87 | 7.20 |
| Transformer | 5.45 | 10.01 | 7.28 |
| Autoformer | 5.59 | 10.29 | 7.46 |
| GRU | 6.70 | 11.75 | 8.98 |
| GP | 5.42 | 9.98 | 7.19 |
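The three indicators reported in the table above can be computed as in the following Python sketch. The normalization constant used for NRMSE and NMAE is not stated in this text, so it is passed explicitly as `norm`; using the mean of the actual load in the example is an assumption, and the function names and sample values are hypothetical.

```python
import math


def mape(actual, predicted):
    """Mean absolute percentage error, in percent."""
    n = len(actual)
    return 100.0 * sum(abs(a - p) / abs(a) for a, p in zip(actual, predicted)) / n


def nmae(actual, predicted, norm):
    """Mean absolute error divided by `norm`, in percent."""
    n = len(actual)
    mae = sum(abs(a - p) for a, p in zip(actual, predicted)) / n
    return 100.0 * mae / norm


def nrmse(actual, predicted, norm):
    """Root mean squared error divided by `norm`, in percent."""
    n = len(actual)
    mse = sum((a - p) ** 2 for a, p in zip(actual, predicted)) / n
    return 100.0 * math.sqrt(mse) / norm


# Hypothetical loads in MW (illustrative values only).
actual = [100.0, 200.0]
predicted = [110.0, 180.0]
norm = sum(actual) / len(actual)  # assumption: normalize by the mean actual load

mape_value = mape(actual, predicted)
nmae_value = nmae(actual, predicted, norm)
nrmse_value = nrmse(actual, predicted, norm)
```

Because NRMSE squares the errors before averaging, it penalizes large individual deviations more strongly than MAPE or NMAE, which is why it is the largest of the three values for every model in the table.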

| Setting | Option | M1 (Proposed) | M2 | M3 | M4 | M5 | M6 |
|---|---|---|---|---|---|---|---|
| Experts trained by typical-day classes | | √ | √ | √ | √ | × | × |
| Expert fusion | Soft expert selection | √ | × | √ | × | - | - |
| | Direct concatenation | × | √ | × | √ | - | - |
| Expert backbone | Transformer | √ | √ | × | × | √ | × |
| | GRU | × | × | √ | √ | × | √ |

| Model | MAPE/% | NRMSE/% | NMAE/% |
|---|---|---|---|
| Proposed | 4.08 | 8.02 | 5.39 |
| M2 | 5.47 | 9.86 | 7.26 |
| M3 | 5.57 | 10.16 | 7.45 |
| M5 | 6.34 | 11.25 | 8.50 |
| M4 | 6.63 | 11.79 | 8.88 |
| M6 | 7.10 | 12.78 | 9.51 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Dong, X.; Yu, Y.; Jin, H.; Hu, Z.; Bao, J. Short-Term Load Forecasting in Price-Volatile Markets: A Pattern-Clustering and Adaptive Modeling Approach. Processes 2026, 14, 5. https://doi.org/10.3390/pr14010005
