Open vs. Commercial 5G SA Deployments: Performance Assessment

Abstract

1. Introduction

- A literature review and comparative analysis of open and commercial-based 5G SA configurations in terms of throughput and latency results.

- A replicable setup of two 5G SA prototypes (a fully open system and a fully commercial-based system), deployed under similar laboratory conditions.

- An assessment of the cost, portability, and scalability trade-offs between prototypes based on Software-Defined Radio (SDR) and commercial deployments.

2. Related Works

2.1. SDR-Based (Open) Implementations

2.2. Commercial-Based Implementations

2.3. Comparative Analysis and Discussion

- RAN HW: the RAN hardware or radio unit (RU).

- RAN SW: the software framework used for the RAN.

- CN: the software framework used for the core network (CN).

- Source: the origin (open or commercial) of the 5G architectural components used in each testbed.

- Test: the type of experimental setup. Most studies relied on point-to-point (P2P) links, while a few extended the evaluation to user-driven applications such as 4K streaming or industrial monitoring.

- Configuration: the key radio parameters, namely the operating band, the bandwidth (BW) or, where the BW was not stated explicitly, the number of resource blocks (RBs), the subcarrier spacing (SCS), and the MIMO configuration.

- Measurement Results: the reported mean latency (RTT) and DL/UL throughput values.
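The latency columns report a mean RTT, in some cases with the minimum in parentheses (e.g., "9.9 (min 7)"). The sketch below shows one way such a summary could be produced from ping measurements. It assumes the RTTs were collected with the Linux iputils `ping` tool, whose final line has the form `rtt min/avg/max/mdev = ... ms`; the cited works do not necessarily measure RTT this way, and the function names are illustrative.

```python
import re
from statistics import mean

# Matches the summary line printed by Linux iputils `ping`, e.g.
# "rtt min/avg/max/mdev = 10.5/20.25/40.0/3.1 ms" (illustrative numbers).
RTT_SUMMARY = re.compile(
    r"rtt min/avg/max/mdev = "
    r"(?P<min>[\d.]+)/(?P<avg>[\d.]+)/(?P<max>[\d.]+)/(?P<mdev>[\d.]+) ms"
)


def parse_ping_summary(output: str) -> dict[str, float]:
    """Return min/avg/max/mdev RTT in ms from iputils ping output."""
    match = RTT_SUMMARY.search(output)
    if match is None:
        raise ValueError("no iputils RTT summary line found")
    return {name: float(value) for name, value in match.groupdict().items()}


def summarize_rtts(samples_ms: list[float]) -> str:
    """Format raw RTT samples (ms) in the tables' 'mean (min X)' style."""
    if not samples_ms:
        raise ValueError("at least one RTT sample is required")
    return f"{mean(samples_ms):.1f} (min {min(samples_ms):g})"
```

For example, `summarize_rtts([30.0, 40.0, 50.0])` returns `"40.0 (min 30)"`.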

3. Deployment Setup and Configuration

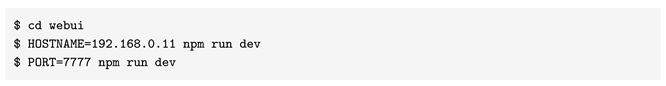

3.1. Open-Source SDR-Based 5G SA Testbed

- Configure MongoDB [25], an open-source database management system that uses JavaScript Object Notation (JSON)-based data models;

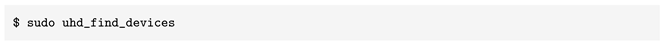

- Configure the USRP Hardware Driver (UHD) [26], an open-source driver that provides a programming interface for USRP hardware;

- Create a virtual TUN (network tunnel) interface named ogstun, which the UPF uses to handle, route, and encapsulate user-plane packets, providing data-plane connectivity in the testbed;

- Enable Internet Protocol (IP) forwarding;

- Set Network Address Translation (NAT) rules in iptables and ip6tables to ensure connectivity between the UPF and the Internet;

- Disable the system firewall.
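The host-side steps listed above (TUN interface, IP forwarding, NAT, firewall) can be collected into a dry-run helper. This is a minimal sketch, not the authors' script: the `ogstun` addresses and UE subnets (`10.45.0.1/16`, `2001:db8:cafe::1/48`) are the defaults from the Open5GS documentation and are assumed here, and disabling the firewall with `ufw` assumes an Ubuntu host. The helper only prints the commands, so they can be reviewed before being run with `sudo`.

```python
import shlex


def host_setup_commands(tun: str = "ogstun",
                        gw4: str = "10.45.0.1/16",          # assumed Open5GS default
                        subnet4: str = "10.45.0.0/16",
                        gw6: str = "2001:db8:cafe::1/48",   # assumed Open5GS default
                        subnet6: str = "2001:db8:cafe::/48") -> list[list[str]]:
    """Return (without running) the commands for the TUN, forwarding, NAT and firewall steps."""
    return [
        # Virtual TUN interface used by the UPF for user-plane traffic
        ["ip", "tuntap", "add", "name", tun, "mode", "tun"],
        ["ip", "addr", "add", gw4, "dev", tun],
        ["ip", "addr", "add", gw6, "dev", tun],
        ["ip", "link", "set", tun, "up"],
        # Enable IP forwarding for IPv4 and IPv6
        ["sysctl", "-w", "net.ipv4.ip_forward=1"],
        ["sysctl", "-w", "net.ipv6.conf.all.forwarding=1"],
        # Masquerade UE traffic that leaves through any interface other than the TUN
        ["iptables", "-t", "nat", "-A", "POSTROUTING", "-s", subnet4, "!", "-o", tun, "-j", "MASQUERADE"],
        ["ip6tables", "-t", "nat", "-A", "POSTROUTING", "-s", subnet6, "!", "-o", tun, "-j", "MASQUERADE"],
        # Disable the host firewall (assumes ufw on Ubuntu)
        ["ufw", "disable"],
    ]


if __name__ == "__main__":
    # Dry run: print each command, shell-quoted, for manual review
    for cmd in host_setup_commands():
        print("sudo " + shlex.join(cmd))
```

Keeping the commands as argument lists rather than shell strings makes it straightforward to pass them later to `subprocess.run` without shell interpolation.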

3.2. Commercial-Based 5G SA Testbed

4. Results

4.1. Network Conditions

4.2. Performance Evaluation

5. Discussion

5.1. Performance Assessment

5.2. Benchmarking with Existing Work

5.3. Cost, Flexibility, and Reproducibility Considerations

6. Conclusions and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |

|---|---|

| 3GPP | 3rd Generation Partnership Project |

| 4G | Fourth-generation |

| 5G | Fifth-generation |

| 5GC | 5G Core |

| AMF | Access and Mobility Management Function |

| APN | Access Point Name |

| ARFCN | Absolute Radio Frequency Channel Number |

| AUSF | Authentication Server Function |

| BBU | Baseband Unit |

| BS | Base Station |

| BSF | Binding Support Function |

| BW | Bandwidth |

| CAMPUS | Center for Advanced Research on New Materials, Products and Innovative Processes |

| COTS | Commercial Off-the-Shelf |

| CPE | Customer Premises Equipment |

| CPU | Central Processing Unit |

| CU | Centralized Unit |

| CN | Core Network |

| CQI | Channel Quality Indicator |

| DL | Downlink |

| DU | Distributed Unit |

| eMBB | enhanced Mobile Broadband |

| FC | Fraunhofer Core |

| FR1 | Frequency Range 1 |

| GPS | Global Positioning System |

| HW | Hardware |

| IMSI | International Mobile Subscriber Identity |

| IP | Internet Protocol |

| IPv4 | IP version 4 |

| IPv6 | IP version 6 |

| JSON | JavaScript Object Notation |

| LTE | Long-Term Evolution |

| MCC | Mobile Country Code |

| MCS | Modulation and Coding Scheme |

| MIMO | Multiple Input Multiple Output |

| MNC | Mobile Network Code |

| NAT | Network Address Translation |

| NDAC | Nokia Digital Automation Cloud |

| NR | New Radio |

| NRF | Network Repository Function |

| NSA | Non-Standalone |

| NSSF | Network Slice Selection Function |

| NUSTPB | National University of Science and Technology Politehnica Bucharest |

| OAI | OpenAirInterface |

| O-FH | Open Fronthaul |

| OOB | Out-of-band |

| O-RAN | Open Radio Access Network |

| P2P | Point-to-point |

| PCF | Policy Control Function |

| PLMN | Public Land Mobile Network |

| QAM | Quadrature Amplitude Modulation |

| RAN | Radio Access Network |

| RB | Resource Block |

| RF | Radio Frequency |

| RIC | RAN Intelligent Controller |

| RRH | Remote Radio Head |

| RSRP | Reference Signal Received Power |

| RSRQ | Reference Signal Received Quality |

| RTT | Round-Trip Time |

| RU | Radio Unit |

| SA | Standalone |

| SCP | Service Communication Proxy |

| SCS | Subcarrier Spacing |

| SDR | Software-Defined Radio |

| SEPP | Security Edge Protection Proxy |

| SIM | Subscriber Identity Module |

| SINR | Signal-to-Interference-plus-Noise Ratio |

| SISO | Single Input Single Output |

| SoC | System-on-Chip |

| SMF | Session Management Function |

| srsRAN | Software Radio Systems RAN Project |

| SUCI | Subscription Concealed Identifier |

| SW | Software |

| TCXO | Temperature-Compensated Crystal Oscillator |

| TDD | Time Division Duplexing |

| TIP | Telecom Infra Project |

| UE | User Equipment |

| UHD | USRP Hardware Driver |

| UDM | Unified Data Management |

| UDR | Unified Data Repository |

| UiA | University of Agder |

| UL | Uplink |

| UPF | User Plane Function |

| URLLC | Ultra-Reliable Low-Latency Communications |

| USIM | Universal Subscriber Identity Module |

| USRP | Universal Software Radio Peripheral |

| vBBU | virtualized Baseband Unit |

| VoLTE | Voice over LTE |

| VoNR | Voice over NR |

References

- O-RAN Alliance. 2025. Available online: https://www.o-ran.org (accessed on 10 September 2025).

- Telecom Infra Project (TIP). 2025. Available online: https://telecominfraproject.com/ (accessed on 10 September 2025).

- 3rd Generation Partnership Project (3GPP). 2025. Available online: https://www.3gpp.org/ (accessed on 10 September 2025).

- Bahl, P.; Balkwill, M.; Foukas, X.; Kalia, A.; Kim, D.; Kotaru, M.; Lai, Z.; Mehrotra, S.; Radunovic, B.; Saroiu, S.; et al. Accelerating Open RAN Research Through an Enterprise-scale 5G Testbed. In Proceedings of the 29th Annual International Conference on Mobile Computing and Networking, Madrid, Spain, 2–6 October 2023; p. 138.

- Gao, Y.; Zhang, X.; Yuan, H. Integration and Connection Test for OpenAirInterface 5G Standalone System. In Proceedings of the 2021 IEEE 3rd International Conference on Civil Aviation Safety and Information Technology (ICCASIT), Changsha, China, 20–22 October 2021; pp. 1010–1014.

- Mehran, F.; Turyagyenda, C.; Kaleshi, D. Experimental Evaluation of Multi-Vendor 5G Open RANs: Promises, Challenges, and Lessons Learned. IEEE Access 2024, 12, 152241–152261.

- Mihai, R.; Craciunescu, R.; Martian, A.; Li, F.Y.; Patachia, C.; Vochin, M.C. Open-Source Enabled Beyond 5G Private Mobile Networks: From Concept to Prototype. In Proceedings of the 2022 25th International Symposium on Wireless Personal Multimedia Communications (WPMC), Herning, Denmark, 30 October–2 November 2022; pp. 181–186.

- Tufeanu, L.M.; Martian, A.; Vochin, M.C.; Paraschiv, C.L.; Li, F.Y. Building an Open Source Containerized 5G SA Network through Docker and Kubernetes. In Proceedings of the 2022 25th International Symposium on Wireless Personal Multimedia Communications (WPMC), Herning, Denmark, 30 October–2 November 2022; pp. 381–386.

- Ansari, J.; Andersson, C.; de Bruin, P.; Farkas, J.; Grosjean, L.; Sachs, J.; Torsner, J.; Varga, B.; Harutyunyan, D.; König, N.; et al. Performance of 5G Trials for Industrial Automation. Electronics 2022, 11, 412.

- Mallikarjun, S.B.; Schellenberger, C.; Hobelsberger, C.; Schotten, H.D. Performance Analysis of a Private 5G SA Campus Network. In Proceedings of the Mobile Communication—Technologies and Applications; 26th ITG-Symposium, Osnabrueck, Germany, 18–19 May 2022; pp. 1–5.

- srsRAN Project. 2025. Available online: https://www.srslte.com/ (accessed on 9 September 2025).

- OpenAirInterface. 2025. Available online: https://openairinterface.org/ (accessed on 9 September 2025).

- BubbleRAN. 2025. Available online: https://bubbleran.com/ (accessed on 11 September 2025).

- Open5GS. 2025. Available online: https://open5gs.org/ (accessed on 25 July 2025).

- Accelleran. 2025. Available online: https://accelleran.com/ (accessed on 25 July 2025).

- Vilakazi, M.; Burger, C.R.; Mboweni, L.; Mamushiane, L.; Lysko, A.A. Evaluating an Evolving OAI Testbed: Overview of Options, Building Tips, and Current Performance. In Proceedings of the 2021 7th International Conference on Advanced Computing and Communication Systems (ICACCS), Coimbatore, India, 19–20 March 2021; pp. 818–827.

- Dludla, G.; Vilakazi, M.; Burger, C.R.; Lysko, A.A.; Ngcama, L.; Mboweni, L.; Masonta, M.; Kobo, H.; Mamushiane, L. Testing Performance for Several Use Cases with an Indoor OpenAirInterface USRP-Based Base Station. In Proceedings of the 2022 5th International Conference on Multimedia, Signal Processing and Communication Technologies (IMPACT), Aligarh, India, 26–27 November 2022; pp. 1–5.

- Amini, M.; El-Ashmawy, A.; Rosenberg, C. Implementing an Open 5G Standalone Testbed: Challenges and Lessons Learnt. In Proceedings of the IEEE INFOCOM 2023—IEEE Conference on Computer Communications Workshops (INFOCOM WKSHPS), Hoboken, NJ, USA, 20 May 2023; pp. 1–2.

- Sahbafard, A.; Schmidt, R.; Kaltenberger, F.; Springer, A.; Bernhard, H.P. On the Performance of an Indoor Open-Source 5G Standalone Deployment. In Proceedings of the 2023 IEEE Wireless Communications and Networking Conference (WCNC), Glasgow, UK, 26–29 March 2023; pp. 1–6.

- Håkegård, J.E.; Lundkvist, H.; Rauniyar, A.; Morris, P. Performance Evaluation of an Open Source Implementation of a 5G Standalone Platform. IEEE Access 2024, 12, 25809–25819.

- Dória, M.; de Sousa Jr, V.A.; Campos, A.; Oliveira, N.; Eduardo, P.; Filho, P.; Lima, C.; Guilherme, J.; Luna, D.; Diógenes, I.; et al. Virtualized 5G Testbed using OpenAirInterface: Tutorial and Benchmarking Tests. J. Internet Serv. Appl. 2024, 15, 523–535.

- Marțian, A.; Trifan, R.F.; Stoian, T.C.; Vochin, M.C.; Li, F.Y. Towards Open RAN in beyond 5G networks: Evolution, architectures, deployments, spectrum, prototypes, and performance assessment. Comput. Netw. 2025, 259, 111087.

- Orange Future Networks 6G Lab Within the Campus Research Center. 2025. Available online: https://futurenetworks.ro/ (accessed on 15 June 2025).

- USRP B200. 2025. Available online: https://www.ettus.com/all-products/ub200-kit/ (accessed on 29 November 2025).

- MongoDB. 2025. Available online: https://www.mongodb.com/ (accessed on 9 September 2025).

- UHD Software API, Ettus Research. 2025. Available online: https://www.ettus.com/sdr-software/uhd-usrp-hardware-driver/ (accessed on 9 September 2025).

- Building Open5GS from Sources. 2025. Available online: https://open5gs.org/open5gs/docs/guide/02-building-open5gs-from-sources/ (accessed on 20 October 2025).

- srsRAN gNB with COTS UEs. 2025. Available online: https://docs.srsran.com/projects/project/en/latest/tutorials/source/cotsUE/source/index.html (accessed on 20 October 2025).

- Pysim. 2025. Available online: https://pypi.org/project/pysim/ (accessed on 9 September 2025).

- Guide: Enabling 5G SUCI. 2025. Available online: https://downloads.osmocom.org/docs/pysim/master/html/suci-tutorial.html (accessed on 13 August 2025).

- pySim-prog. 2025. Available online: https://osmocom.org/projects/pysim/wiki/PySim-prog (accessed on 20 October 2025).

- Identifying USRP Devices. 2025. Available online: https://files.ettus.com/manual/page_identification.html (accessed on 20 October 2025).

- Benetel RAN550, Indoor 5G Radio Unit. 2025. Available online: https://benetel.com/ran550/ (accessed on 25 July 2025).

- Orange Speedtest. 2025. Available online: http://www.speedtest.ro/ (accessed on 25 July 2025).

- Mobile Signal Strength Recommendations. 2025. Available online: https://wiki.teltonika-networks.com/view/Mobile_Signal_Strength_Recommendations (accessed on 24 July 2025).

- 3GPP. 5G; NR; Physical Layer Procedures for Data (Version 15.14.0 Release 15). Technical Specification ETSI TS 138 214, 3rd Generation Partnership Project (3GPP), 2021. Available online: https://www.etsi.org/deliver/etsi_ts/138200_138299/138214/15.14.00_60/ts_138214v151400p.pdf (accessed on 2 March 2026).

| Ref. | User Equipment (UE) | RAN Hardware | RAN Software | CN |

|---|---|---|---|---|

| Vilakazi, 2021 [16] | Samsung Galaxy J5 | USRP-2944R | OAI (eNB) | Fraunhofer EPC (4G) |

| Dludla, 2022 [17] | Samsung Galaxy J5 | USRP-2944R | OAI (eNB) | Fraunhofer EPC (4G) |

| Mihai, 2022 [7] | Huawei P40 Pro | USRP X310; USRP B200mini | OAI/srsRAN (NSA, 4G/5G) | OAI-EPC (4G) |

| Tufeanu, 2022 [8] | gnbsim (emulated) | gnbsim (emulated) | gnbsim (emulated) | OAI-5GC |

| Mallikarjun, 2022 [10] | Huawei P40 Pro; Quectel RM500Q-GL; Telit FN980 | Nokia RRH (4 × 4 MIMO) | Nokia BBU (NDAC RAN) | Nokia 5G Core |

| Ansari, 2022 [9] | Qualcomm X55 | Ericsson New Radio (NR) RU (n78); LTE eNB (B40, 4G) | Ericsson gNodeB (5G); LTE eNB (4G) | Ericsson 5GC (SA); 4G EPC (NSA) |

| Amini, 2023 [18] | Quectel RM502Q-AE | USRP B210 | OAI-RAN/srsRAN | OAI-5GC |

| Sahbafard, 2023 [19] | Quectel RM500Q | USRP B210/N310 | OAI-RAN | OAI-5GC |

| Håkegård, 2024 [20] | OnePlus Nord CE 2 Lite; Simcom SIM8262E; srsUE (ZeroMQ) | USRP B210 | srsRAN | Open5GS |

| Doria, 2024 [21] | Motorola G50 | USRP N310 | OAI-RAN | OAI-5GC |

| Marțian, 2025 [22] | OnePlus Nord CE 5G; Samsung A52 5G | USRP B210 | srsRAN | Open5GS/Magma |

| Ref. | Source | Mode | RAN SW | CN | Test | Band | BW/RBs | SCS | MIMO | Latency (RTT), ms | Throughput [DL, UL], Mbps |

|---|---|---|---|---|---|---|---|---|---|---|---|

| Vilakazi, 2021 [16] | Open | 4G | OAI | Fraunhofer Core | P2P | n/a | n/a | n/a | SISO | 14.0 | [30, 28] |

| Dludla, 2022 [17] | Open | 4G | OAI | Fraunhofer Core | 4K streaming | n/a | n/a | 15 kHz | SISO | 23–28 | [13–15, 2–3] (mean) |

| Mihai, 2022 [7] | Open | 4G | OAI | OAI | P2P | n/a | 10 MHz (50 RBs) | 15 kHz | SISO | 36 | [37, 16] |

| Mihai, 2022 [7] | Open | 5G NSA | srsRAN | – | P2P | n/a | 10 MHz (52 RBs) | 15 kHz | SISO | 36 | [34, 11] |

| Tufeanu, 2022 [8] | Open | 5G SA | gnbsim | OAI | P2P | n/a | n/a | n/a | n/a | – | [248, 252] (Minimalist); [190, 192] (Basic) (mean) |

| Mallikarjun, 2022 [10] | Comm. | 5G SA | Nokia AirScale RAN | Nokia 5GC | P2P | n78 | 100 MHz | 30 kHz | 4 × 4 | 11.4 (Quectel) 6.4 (Huawei P40) 9.0 (Telit) | [749, 97] (Quectel); [733, 233] (Huawei); [376, 221] (Telit) (peak) |

| Ansari, 2022 [9] | Comm. | 5G NSA/SA | Ericsson gNodeB/LTE eNB | Ericsson 5GC/EPC | workpiece monitoring | n78; B40 | 100 MHz | 30 kHz | n/a | 4–6 | n/a |

| Amini, 2023 [18] | Open | 5G SA | OAI | OAI | P2P | n78 | 20 MHz | 30 kHz | SISO | 9.9 (min 7) | [56, 9] |

| Amini, 2023 [18] | Open | 5G SA | OAI | OAI | P2P | n78 | 40 MHz | 30 kHz | SISO | 9.9 (min 7) | [114, 20] |

| Sahbafard, 2023 [19] | Open | 5G SA | OAI | OAI | P2P | n77 | 60 MHz | 30 kHz | SISO | 19 (min 6) | [390, 28] (peak) |

| Håkegård, 2024 [20] | Open | 5G SA | srsRAN | Open5GS | P2P | n77 | 40 MHz | 30 kHz | SISO | 17.3 (min 5.3) | [123, 39] (QAM64); [94, 27] (QAM256) (mean) |

| Doria, 2024 [21] | Open | 5G SA | OAI | OAI | P2P | n78 | 40 MHz | 30 kHz | SISO | 12.0 | [148, n/a] |

| Doria, 2024 [21] | Open | 5G SA | OAI | OAI | P2P | n78 | 60 MHz | 30 kHz | SISO | 12.4 | [215, n/a] (peak) |

| Marțian, 2025 [22] | Open | 5G SA | srsRAN | Open5GS/Magma | P2P | n78 | 40 MHz | 15 kHz | 2 × 2 | n/a | [146, n/a] (peak) |

| Marțian, 2025 [22] | Open | 5G SA | srsRAN | Open5GS/Magma | P2P | n77 | 80 MHz | 15 kHz | 2 × 2 | n/a | [187, 48] (peak) |

| RSRP (dBm) | RSRQ (dB) | SINR (dB) |

|---|---|---|

| −75 | −10 | 24 |
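To put the measured radio conditions in context, each metric can be mapped to a qualitative band. The thresholds below are an assumption, approximating the kind of ranges given in vendor guidance such as the Teltonika signal-strength recommendations listed in the references; they should be checked against that source before reuse, and the band labels are this sketch's own.

```python
from bisect import bisect_right

LABELS = ("poor", "fair", "good", "excellent")

# ASSUMED lower bounds of the fair/good/excellent bands for each metric;
# verify against the cited vendor guidance before relying on them.
THRESHOLDS = {
    "rsrp_dbm": (-100.0, -90.0, -80.0),
    "rsrq_db": (-20.0, -15.0, -10.0),
    "sinr_db": (0.0, 13.0, 20.0),
}


def classify(metric: str, value: float) -> str:
    """Map a measurement to a band; a value equal to a threshold gets the better band."""
    return LABELS[bisect_right(THRESHOLDS[metric], value)]


if __name__ == "__main__":
    measured = {"rsrp_dbm": -75.0, "rsrq_db": -10.0, "sinr_db": 24.0}
    for metric, value in measured.items():
        print(metric, value, classify(metric, value))
```

Under these assumed thresholds, the measured values (RSRP −75 dBm, RSRQ −10 dB, SINR 24 dB) all fall in the top band.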

| Implementation | DL Throughput (Mbps) | UL Throughput (Mbps) | Latency (ms) |

|---|---|---|---|

| Open-source (srsRAN) | 28 | 14.4 | 47.4 |

| Commercial (Accelleran) | 296.4 | 32.6 | 43.4 |
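The gap between the two implementations can be quantified directly from the table above. The sketch computes the throughput ratios, the RTT difference, and a crude bandwidth-normalized DL throughput using the channel bandwidths of the two setups (20 MHz and 100 MHz). The per-MHz figure is an illustrative metric introduced here, not one reported by the authors; it ignores the TDD pattern, MIMO layers, MCS, and UE differences.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Result:
    dl_mbps: float
    ul_mbps: float
    rtt_ms: float
    bw_mhz: float

    def dl_per_mhz(self) -> float:
        # Crude normalization by channel bandwidth only
        return self.dl_mbps / self.bw_mhz


# Mean values from the results table; bandwidths from the configuration table
OPEN = Result(dl_mbps=28.0, ul_mbps=14.4, rtt_ms=47.4, bw_mhz=20.0)
COMMERCIAL = Result(dl_mbps=296.4, ul_mbps=32.6, rtt_ms=43.4, bw_mhz=100.0)


def compare(base: Result, other: Result) -> dict[str, float]:
    """Ratios of `other` over `base`, plus the RTT reduction of `other` in ms."""
    return {
        "dl_ratio": other.dl_mbps / base.dl_mbps,
        "ul_ratio": other.ul_mbps / base.ul_mbps,
        "rtt_delta_ms": base.rtt_ms - other.rtt_ms,
        "dl_per_mhz_ratio": other.dl_per_mhz() / base.dl_per_mhz(),
    }


if __name__ == "__main__":
    for name, value in compare(OPEN, COMMERCIAL).items():
        print(f"{name}: {value:.2f}")
```

Running it prints a DL ratio of about 10.59, a UL ratio of about 2.26, an RTT difference of 4.00 ms, and a per-MHz DL ratio of about 2.12, which suggests that much of the DL gap tracks the fivefold difference in configured bandwidth.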

| Parameter | Open-Source (Research) | Commercial |

|---|---|---|

| Equipment | Motorola Edge 50 Pro; CPE Nokia FastMile 5G Gateway 3.2 (5G13-12W-A) | Motorola Edge 50 Pro |

| Duplex/Band | TDD, 5G, n78 | TDD, 5G, n78 |

| ARFCN (Frequency) | 650000 (3750 MHz) | 650000 (3750 MHz) |

| Bandwidth (MHz) | 20 (51 RBs) | 100 (273 RBs) |

| SCS (numerology μ) | 30 kHz (μ = 1) | 30 kHz (μ = 1) |

| Latency (ms) | 47 (min 28) | 44 (min 27) |

| Average Throughput (Mbps) | DL: 28 (max 41) UL: 14 (max 18) | DL: 296 (max 490) UL: 33 (max 49) |
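The ARFCN, SCS, and RB entries in the table above are related by standard NR formulas. The sketch converts NR-ARFCN 650000 to its frequency using the global frequency raster of 3GPP TS 38.104 (clause 5.4.2.1; only the 3 GHz to 24.25 GHz range is implemented), derives the SCS from the numerology μ, and computes the bandwidth actually occupied by 51 and 273 RBs of 12 subcarriers each. The function names are illustrative.

```python
def nr_arfcn_to_mhz(n_ref: int) -> float:
    """F_REF = F_REF-Offs + dF_Global * (N_REF - N_REF-Offs), for 3-24.25 GHz only."""
    if not 600000 <= n_ref <= 2016666:
        raise ValueError("only the 3 GHz to 24.25 GHz raster range is handled")
    f_ref_offs_mhz = 3000.0     # F_REF-Offs for this range
    delta_f_global_khz = 15     # raster granularity for this range
    n_ref_offs = 600000         # N_REF-Offs for this range
    return f_ref_offs_mhz + delta_f_global_khz * (n_ref - n_ref_offs) / 1000.0


def scs_khz(mu: int) -> int:
    """NR numerology: SCS = 15 kHz * 2^mu."""
    return 15 * 2 ** mu


def occupied_bw_mhz(n_rb: int, mu: int) -> float:
    """Bandwidth spanned by n_rb resource blocks of 12 subcarriers each."""
    return n_rb * 12 * scs_khz(mu) / 1000.0


if __name__ == "__main__":
    print(nr_arfcn_to_mhz(650000), scs_khz(1))
    print(occupied_bw_mhz(51, 1), occupied_bw_mhz(273, 1))
```

`nr_arfcn_to_mhz(650000)` returns 3750.0 and `scs_khz(1)` returns 30; the occupied bandwidths come out at about 18.36 MHz and 98.28 MHz, the remainder of the 20 MHz and 100 MHz channels being taken up by guard bands.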

| Ref. | Latency (RTT), ms | Throughput [DL, UL] Mbps |

|---|---|---|

| SDR-based testbed (this work) | 47 (min 28) | [28, 14] (mean); [41, 18] (peak) |

| Amini, 2023 [18] | 9.9 (min 7) | [56, 9] |

| Håkegård, 2024 [20] | 17.3 (min 5.3) | [123, 39] (QAM256); [94, 27] (QAM64) (mean) |

| Marțian, 2025 [22] | n/a | [146, n/a] (40 MHz); [187, 48] (80 MHz) (peak) |

| Commercial-based testbed (this work) | 44 (min 27) | [296, 33] (mean); [490, 49] (peak) |

| Mallikarjun, 2022 [10] | 11.4 (Quectel) 6.4 (Huawei P40) 9.0 (Telit) | [749, 97] (Quectel); [733, 233] (Huawei); [376, 221] (Telit) (peak) |

| Ansari, 2022 [9] | 4–6 | n/a |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Stoian, T.-C.; Mihai, R.-M.; Svertoka, E.; Martian, A.; Patachia-Sultanoiu, C. Open vs. Commercial 5G SA Deployments: Performance Assessment. Technologies 2026, 14, 177. https://doi.org/10.3390/technologies14030177
