1. Introduction

Today, the sustainability of cities around the world faces severe challenges posed by constant population growth. According to [1], 68.4% of the world’s population will dwell in urban areas by the year 2050. Such rapid urbanization has strained the already limited resources and assets cities can offer. To reconcile supply and demand so that cities can continue to grow healthily, both the research community and industry have settled on the smart city as a solution, whose technological aspect involves effectively managing urban resources and assets through information and communication technologies (ICT) [2]. Thanks to ICT-enabled infrastructures and services, citizens of modern metropolises can access a plethora of real-time information about their surroundings for making effective decisions. However, as ubiquitous information access becomes more prevalent, it is increasingly hampered by the conventional human-computer interface and associated interaction techniques due to an interaction seam pointed out by [3]. The seam requires users to constantly switch their attention between the physical world they live in and the cyberspace they have grown tightly attached to. The swift popularization of ubiquitous information access promoted by smart cities calls for a more natural user interface (UI) that can seamlessly bridge these two worlds, and the answer is augmented reality (AR). AR is an emerging computer UI technology that superimposes virtual information directly on user senses. Unlike its origin, virtual reality, which replaces user senses completely with a virtual world, AR seeks to enhance our senses with virtual information. In other words, real and virtual objects appear to coexist in the same space [4]. Theoretically, all human senses such as vision, hearing, smell, etc. can be augmented, but most research works (including this one) are concerned with vision augmentation. After all, most of the information we receive comes through our visual sense.

Energy fuels all sorts of human activities. Concentration of a large population resulting from urbanization thus requires an immense supply of energy while excessive energy consumption exacerbates the depletion of natural resources and inflicts negative impacts on the environment and the climate. Therefore, smart energy management has always been an integral part of smart city solutions [5]. Within the urban context, buildings account for 40% of primary energy consumption while contributing more than 30% of CO₂ emissions globally [6]. According to [7], over half of the energy consumption is attributed to heating, ventilation and air conditioning (HVAC). Hence, ensuring HVAC systems are functioning efficiently plays a crucial role in meeting the stringent requirements of smart energy. Since the thermal behaviors of HVAC systems often reflect their working conditions, the most common practice nowadays for inspecting and maintaining HVAC systems is through the aid of infrared (IR) thermography technology [8,9] due to its non-destructive and non-contact nature.

Facility maintenance field workers employing IR thermography, however, have to cross the aforementioned interaction seam many times. As pointed out by Iwai and Sato [10], thermal inspectors need to frequently switch their focus from objects in the real world to the displayed images on a screen because they need to comprehend the heat distribution on the surfaces of the physical objects. By superimposing IR information directly over physical objects of interest, AR addresses this mental mapping challenge.

In this paper, we introduce AR into the traditional façade thermographic inspection process and investigate how user performance of designating positions of IR targets is affected by taking this unconventional approach. Given the popularity of powerful hand-held smart devices nowadays, such as smartphones and tablet computers, we are interested in building our AR system on these devices so that our experiment results can have more practical implications. However, the built-in cameras in these hand-held devices usually have a rather limited field of view [

11,

12]. This creates a dilemma for AR-based target designation applications regarding large physical objects, such as façades in this study: on one hand, a user needs to stay within an arm’s reach of the façade in order to designate (by, for example, marking up) the target positions; on the other hand, he has to back off from the façade for more visual context as well as more features for the tracking system. To overcome this dilemma, we opted for the uncommon third person perspective (TPP) AR [

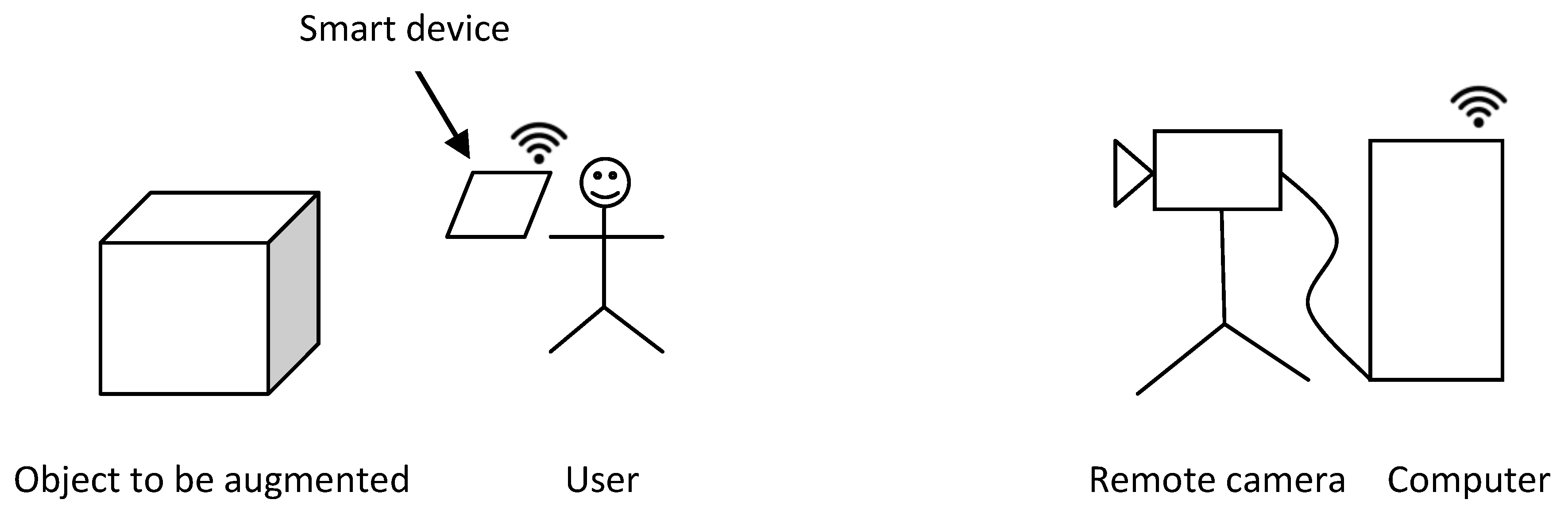

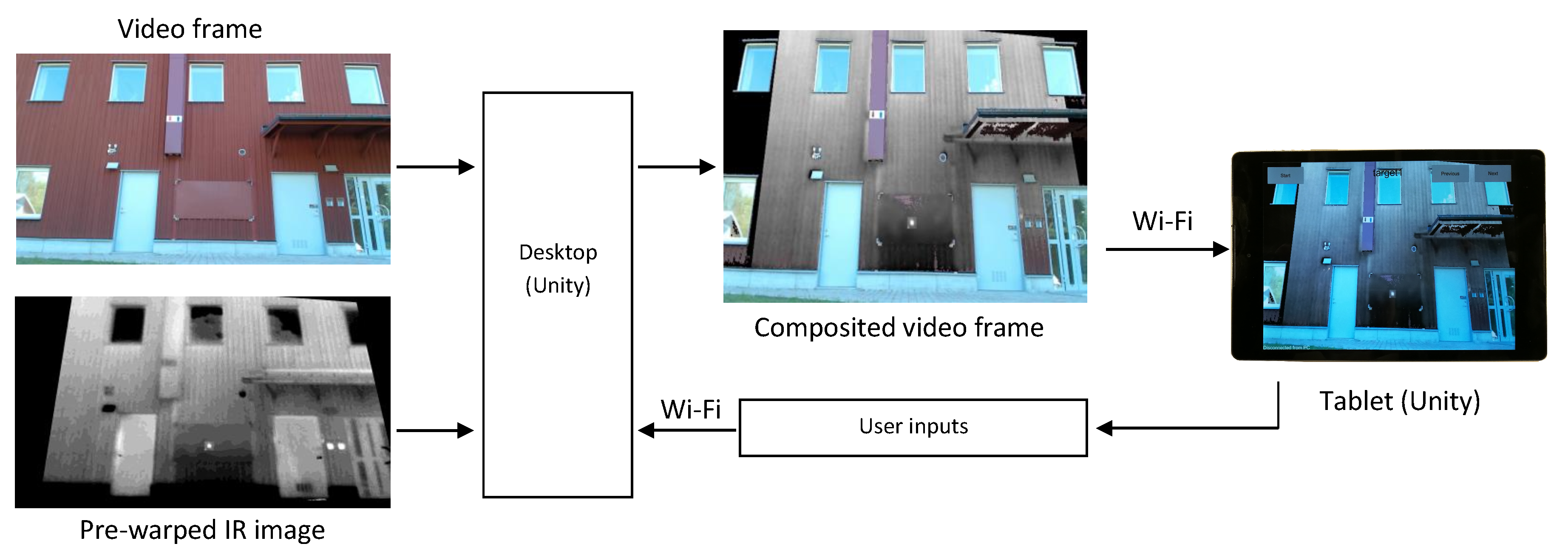

13]. In this type of setting, a remotely placed camera captures both the façade and the user himself. The video is then augmented with desired virtual information (in this case, IR targets) and sent to a smart device held by the user. The TPP AR provides the user with a broader view of the scene while it allows him to stay in front of the physical object to interact with it using the TPP video as a guide. The concept of TPP AR employed to build this system is visualized in

Figure 1.

The IR targets represent imaginary thermal anomalies on a façade whose positions need to be identified for subsequent maintenance operations. Accurately locating maintenance targets can increase work efficiency by reducing operation time and costs and, in extreme cases, can even avoid endangering workers’ lives [14,15]. However, when the task of locating target positions is aided by AR, the accuracy is not only influenced by the adopted AR tools but also by user-related factors. Therefore, this work focuses on evaluating user performance in relation to the new thermal inspection paradigm. To that end, we devised and conducted extensive user experiments, within which target designation accuracy, precision and task completion time were measured and analyzed. To further ground the study in reality, the experiments were carried out on an actual façade in an outdoor setting. The research objective is to provide a detailed error study of AR application in building IR thermography inspection in order to ascertain the viability of this new computer-assisted approach to building diagnostics. Through analyzing the experiment results, we are able to identify and quantify various error sources from the system aspect as well as the aspects of human perception and cognition.

The remainder of this paper is organized as follows: we start by surveying and discussing related work in Section 2. Section 3 introduces our AR system and describes the simulation process of the thermal anomalies used in this study as IR targets. Section 4 is concerned with user experiments and their results, while supplementary benchmark tests with the purpose of identifying various errors are described in Section 5 together with the test results. Finally, we discuss and conclude the study in Section 6.

4. User Experiments

4.1. Subjects

We recruited 23 volunteers in total for this study, 10 females and 13 males. The youngest subject was 17 years old while the oldest was 65, with a mean age of 39.7 years. Three of them were from the building industry (two facility maintenance workers and one contractor). Ten of them were university students or teaching staff in various fields related to the built environment, such as urban planning, building engineering, and indoor climate. The remainder comprised four lecturers in computer science, five high school students in natural science and an administrative worker. As for previous experience with AR, seven of them had no experience at all, 12 had seen AR on various media and four had personally used AR applications.

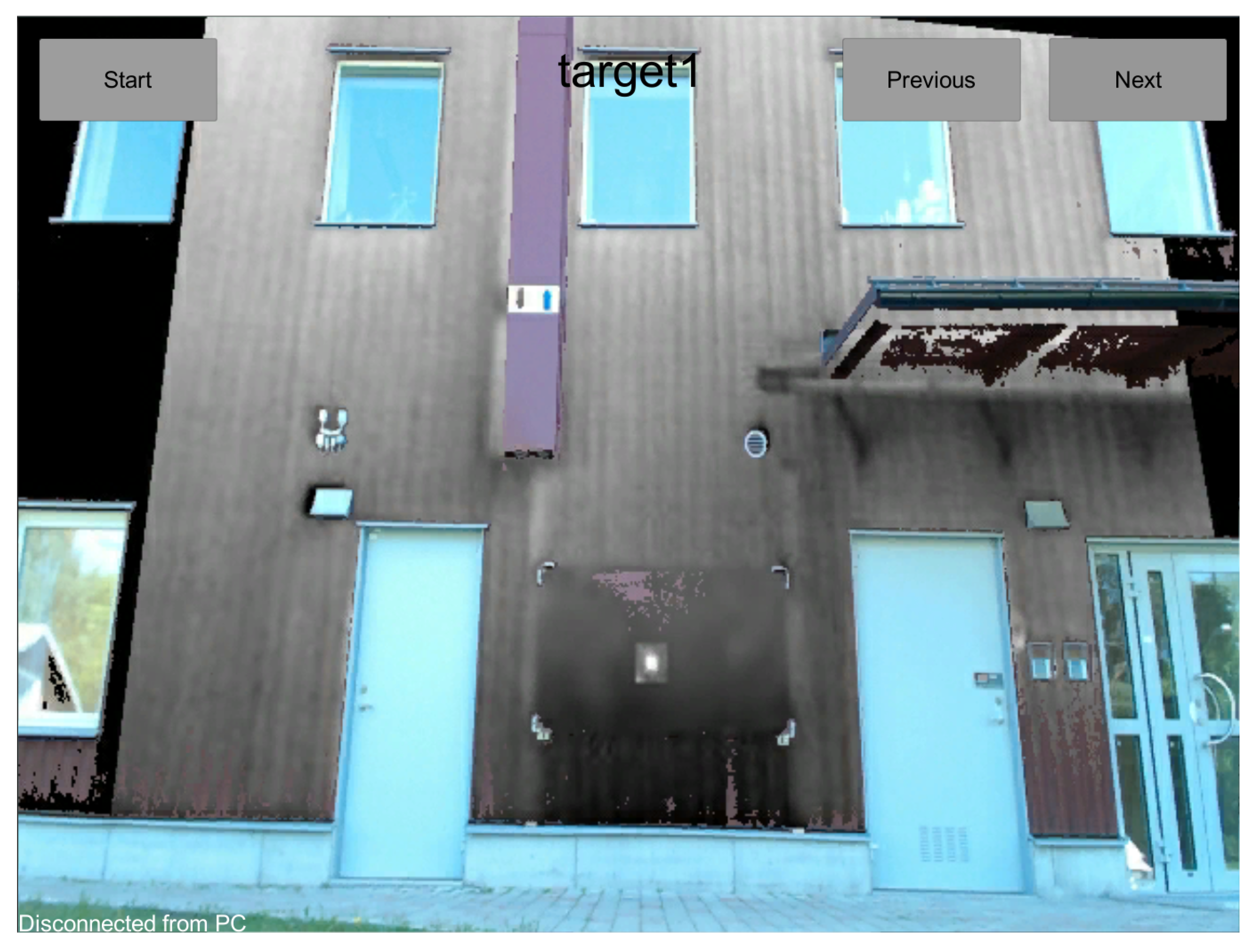

4.2. User Task

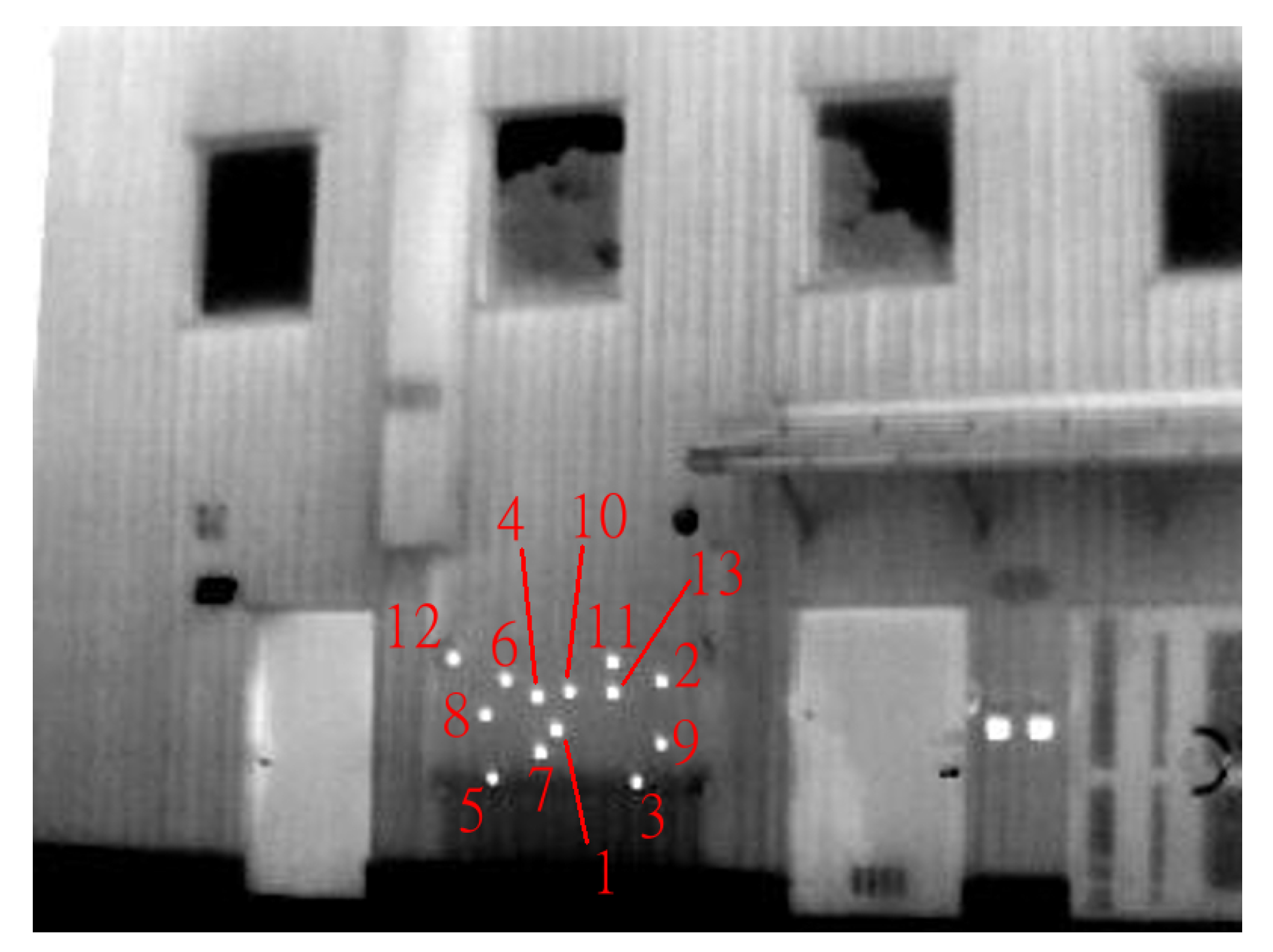

The task for subjects in our study was to designate 13 IR targets, which are displayed on the façade through the TPP AR system. A subject started the experiment by standing in front of the working area while holding the tablet computer. The task comprised 13 steps with only one target shown at each step and to advance to the next step, subjects needed to push the “Next,” button on the screen. The 13 targets were presented sequentially in the order of their identification numbers denoted in

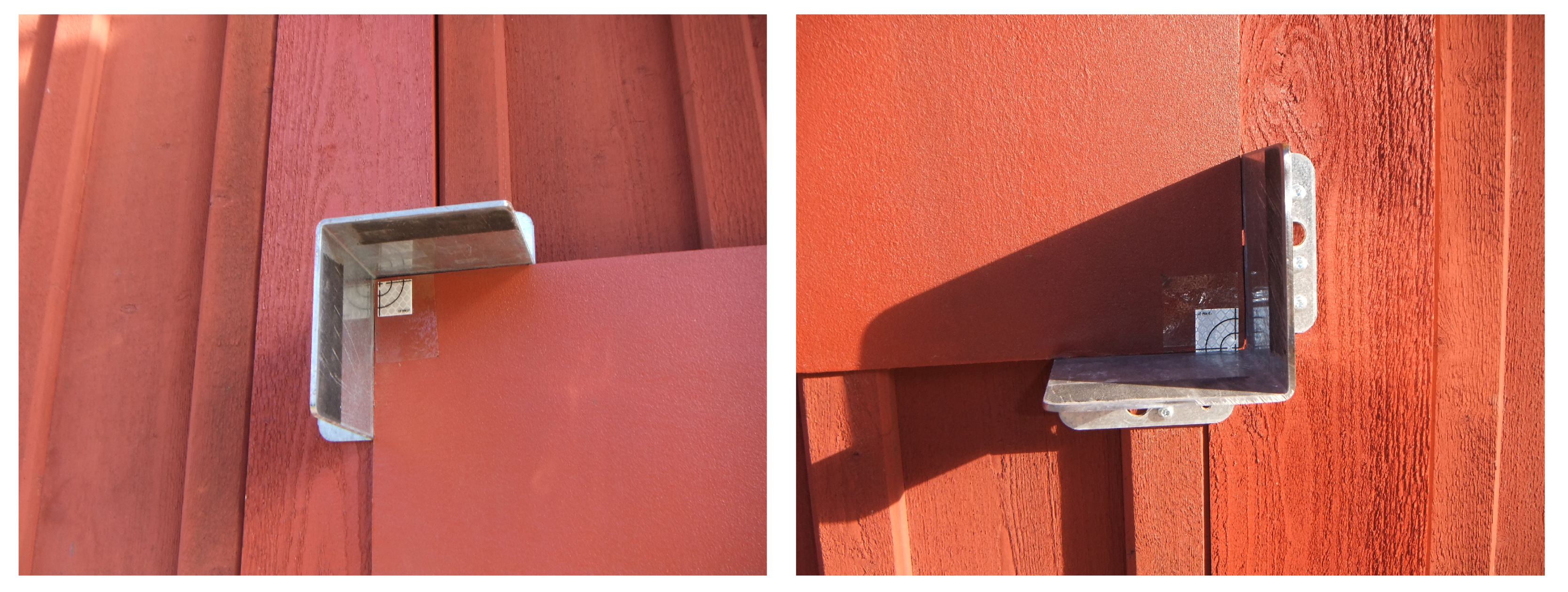

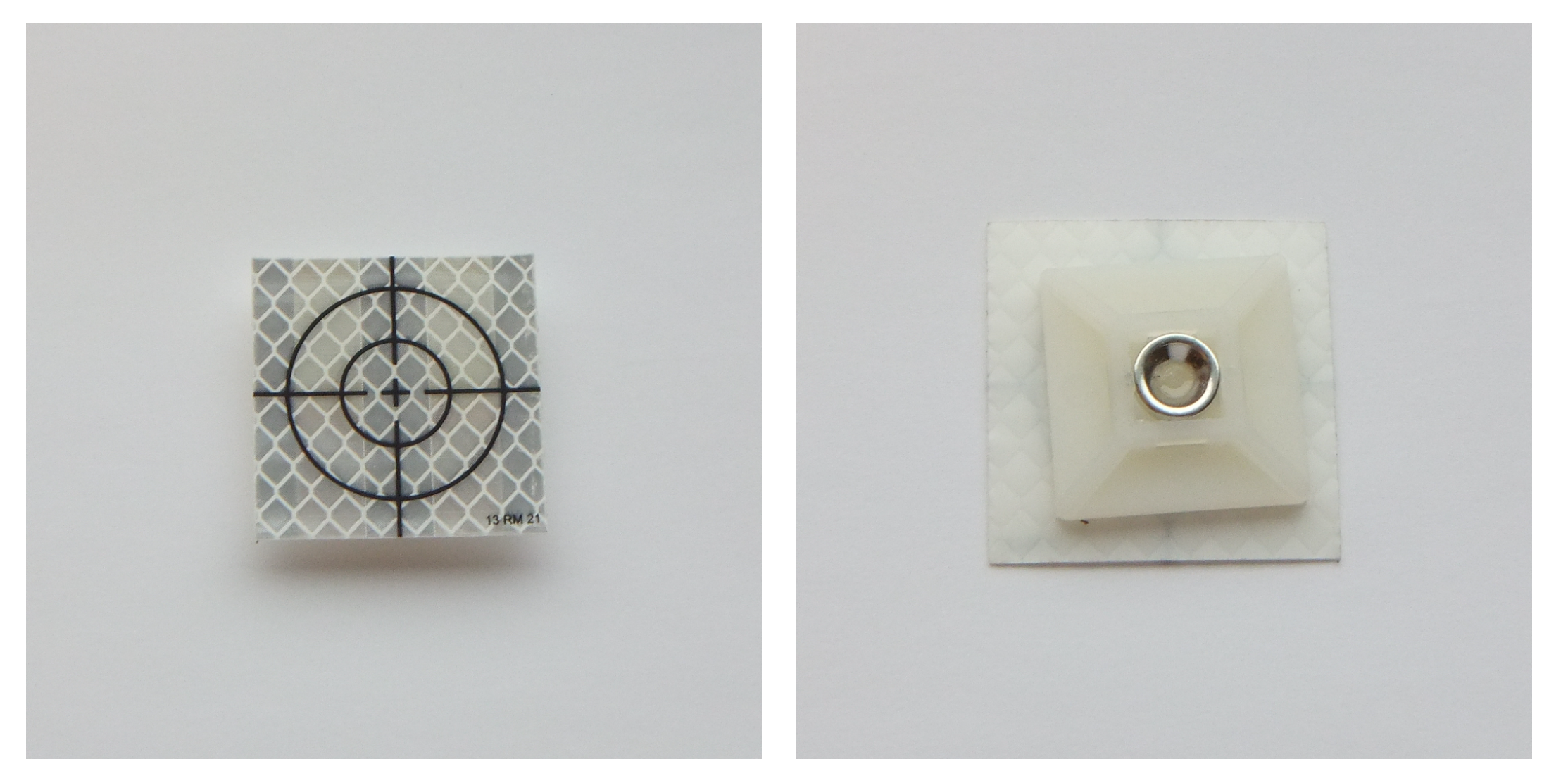

Figure 8. The order was designed in such a way that no subsequent targets were spatially close to each other. At each step, the subject picked up a marker and tried to align it with the target center as seen via the augmented video. The markers were made from a laser reflector adhered to a magnet (see

Figure 10) so they would stick to the working area which was covered with metallic paint, as mentioned in

Section 3.4. Once subjects were satisfied with the marker placement, they proceeded to the next step until all 13 targets were designated. No time limits were imposed on subjects during this experiment.

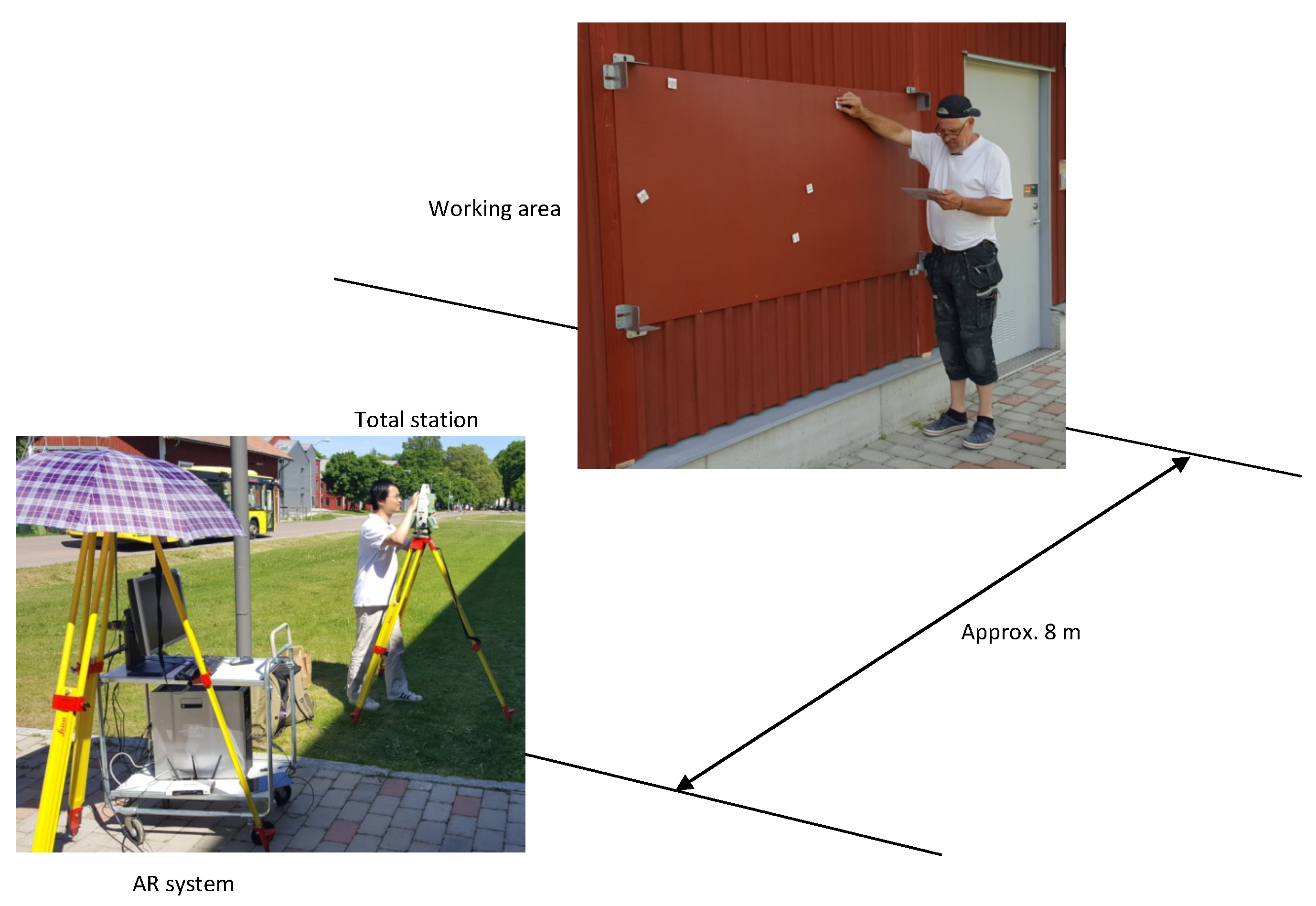

Figure 11 illustrates a typical arrangement of the AR system in relation to the working area during the experiments. The figure also shows a subject performing the target designation task.

4.3. Experimental Procedure

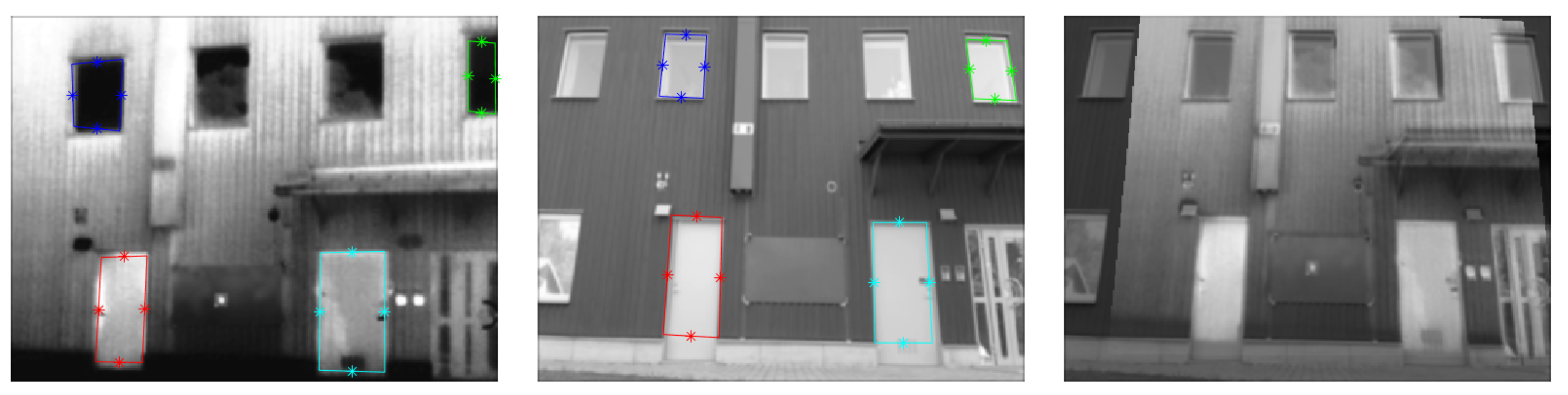

Prior to the arrival of the first subject for each session, we performed two procedures to prepare for the experiments. The first one was the setup of the AR system, which started with moving the trolley to a pre-determined location in front of the façade. This ensured that the façade view captured by the webcam did not vary much between experiment sessions and the registration results were nearly the same for all the subjects, thus avoiding unnecessary system inherent errors. Next, we used the webcam to take a picture of the façade and registered it with all the IR targets by running the method implemented in [40]. Here, we also included five extra arbitrary targets for a practice session. We made sure these five practice targets did not coincide with any of the 13 targets used in the test. All 18 pre-warped IR images were then imported into our Unity application running on the desktop computer. The second procedure involved establishing a mechanism for retrieving the designated positions in the working area as marked up by subjects. We opted for a total station to measure the positions due to its high accuracy. Positions reported by a total station are expressed in terms of its own coordinate system. Hence, to transform coordinates from the coordinate system of the total station to the coordinate system of the working area (wherein the ground truth of target positions was measured), we needed to calibrate the total station. To this end, we first set up the total station and then measured the coordinates of the bottom left corner, the bottom right corner and the top left corner of the working area in the coordinate system of the total station. These corners were visually identified by laser reflectors (the white markers inside the metal stands shown in Figure 9), which could be sensed by the total station. With these three reference coordinates established, coordinates can subsequently be transformed between the two coordinate systems.
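The calibration from three corner measurements can be illustrated with a minimal sketch. It is not the authors' implementation: the function name `make_station_to_area` is hypothetical, and the sketch assumes that the working area is planar, that its origin lies at the bottom left corner, and that X points towards the bottom right corner and Y towards the top left corner.

```python
import numpy as np

def make_station_to_area(bottom_left, bottom_right, top_left):
    """Build a mapping from 3D total-station points to 2D working-area coordinates.

    Assumptions (not stated in the paper): planar working area, origin at the
    bottom left corner, X along the bottom edge, Y along the left edge.
    """
    origin = np.asarray(bottom_left, dtype=float)
    x_axis = np.asarray(bottom_right, dtype=float) - origin
    x_axis = x_axis / np.linalg.norm(x_axis)
    # Remove any component of the left edge along X so that both axes are orthogonal.
    up = np.asarray(top_left, dtype=float) - origin
    y_axis = up - np.dot(up, x_axis) * x_axis
    y_axis = y_axis / np.linalg.norm(y_axis)

    def to_area(point):
        # Project the offset from the origin onto the two in-plane axes.
        d = np.asarray(point, dtype=float) - origin
        return np.array([np.dot(d, x_axis), np.dot(d, y_axis)])

    return to_area

# Illustrative corner coordinates in metres (made-up values, not measured data).
to_area = make_station_to_area((1, 2, 0), (3, 2, 0), (1, 2, 1.5))
marker_xy = to_area((2, 2, 0.5))
```

Because the axes are re-derived from the three corners at every setup, the total station can be placed anywhere as long as the three reflectors are visible.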

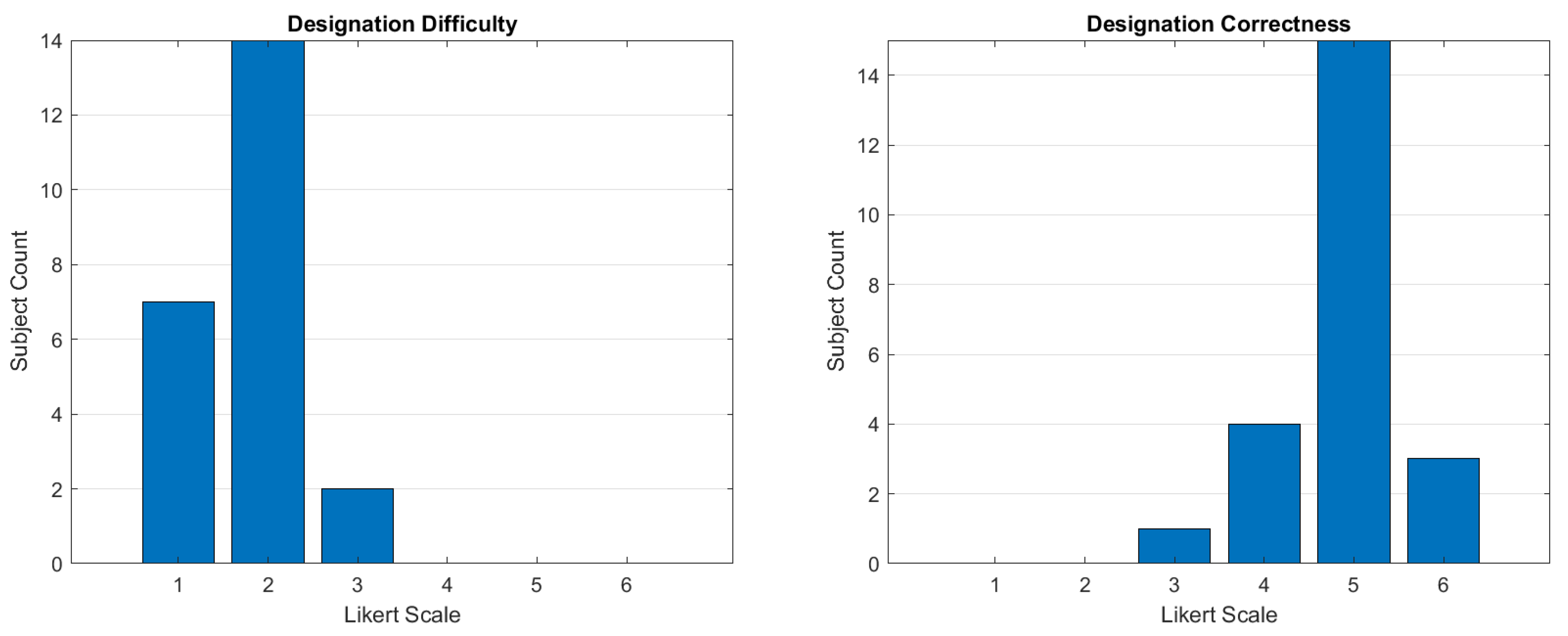

A subject’s experiment began with a short interview, wherein we asked for basic information such as age, occupation, previous experience with AR and eyesight condition. Afterwards, we introduced the subject to the task and the tablet application. The subject was informed that the time of designating each target was recorded, but we also explicitly instructed him to mark the target as precisely as he could. Apart from that, no other user-testing protocols were adopted. These instructions were followed by the practice session where the five practice targets were displayed in turn and the subject took his time to familiarize himself with the task. Once he felt ready, he could proceed to the actual task with the 13 real targets. During this phase, we did not have any further interaction with the subject and he completed the task independently. There was another short interview upon the completion of the task, wherein we asked the subject to rate the difficulty of designating the targets and his impression of the correctness of the designation (both on a Likert scale of 1 to 6) and to answer two open questions: “What do you think could be improved with this application?” and “What was most difficult in performing the task?”. At the end of the subject’s experiment, we measured the coordinates of the 13 markers in the working area using the total station. During the measurement, we aimed the reticle of the telescope at the center cross of each marker.

4.4. Experimental Results

Observations of the user experiment comprise measured positions and completion times recorded for 13 targets from 23 participants in our study, altogether 299 observations. The raw 3D positions acquired by the total station were transformed into the local 2D coordinate system

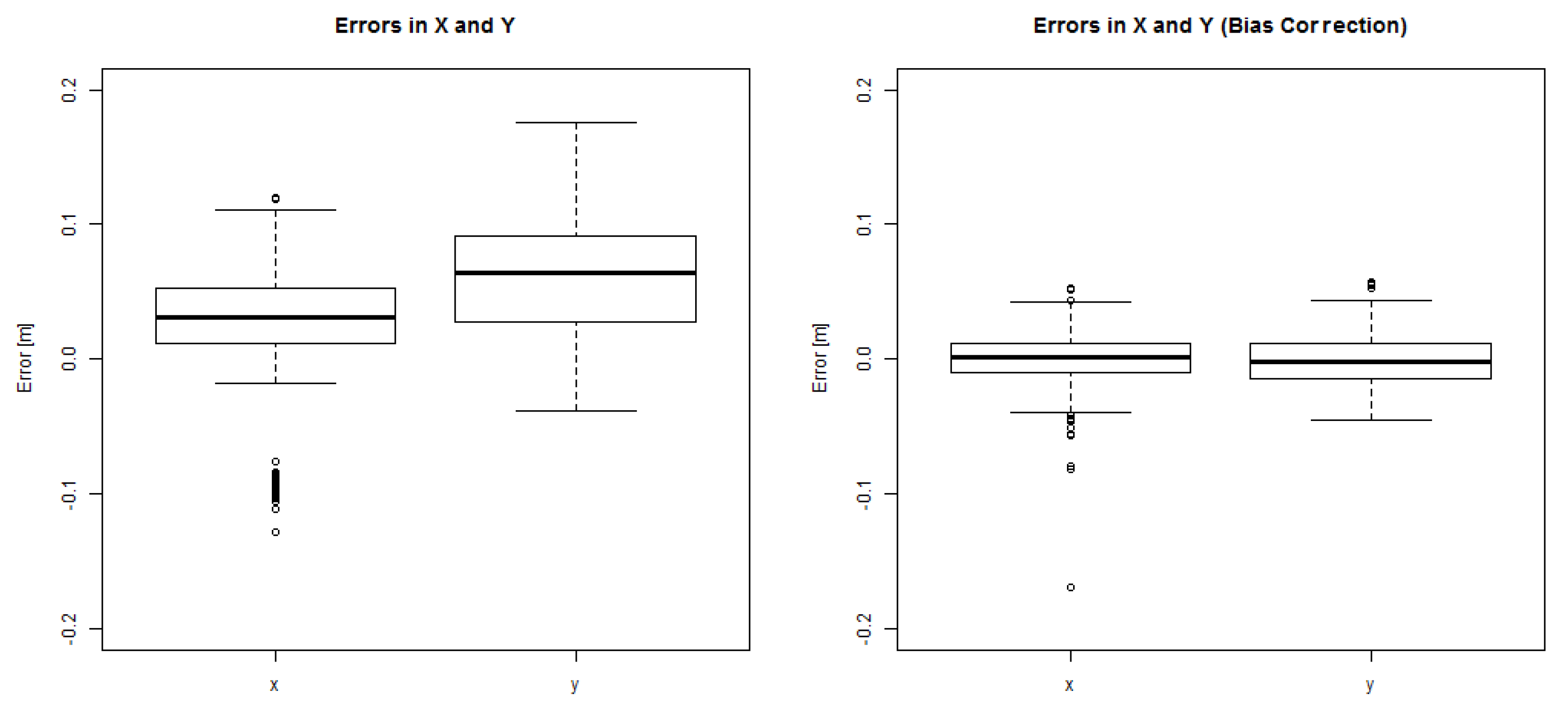

of the working area based on acquisition of aforementioned three reference points after every setup of the total station. For evaluation of precision and accuracy, the deviations in X (horizontal) and Y (vertical) of the measured positions from the known target positions were determined. The boxplot in

Figure 12 (left) shows deviations in X and Y for all 299 observations. Median deviation in X is 3.1 cm and the interquartile range (IQR) is 4.0 cm. For the deviation in Y, the median is 6.4 cm and the IQR is 6.4 cm. In terms of Euclidean distance, the deviations correspond to a median of 7.6 cm with an interquartile IQR of 7.5 cm. Under normal conditions positioning errors are expected to occur similarly to either side of the true target position, but a bias exists here apparently. Measured target positions are positively shifted both in the X and Y directions. In addition, the dispersion is notably larger in the vertical direction (Y).
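The summary statistics used here (median and IQR of the deviations in X, in Y and in Euclidean distance) can be computed as in the following minimal sketch. The function names are hypothetical, the input arrays are placeholders rather than the study data, and the quartiles rely on NumPy's default linear interpolation, which may differ slightly from the method used for the published figures.

```python
import numpy as np

def summarize(values):
    """Return (median, IQR) of a 1D sequence of numbers."""
    q1, median, q3 = np.percentile(values, [25, 50, 75])
    return median, q3 - q1

def deviation_summary(measured_xy, truth_xy):
    """Summarize deviations of measured 2D positions from ground truth positions."""
    deviations = np.asarray(measured_xy, dtype=float) - np.asarray(truth_xy, dtype=float)
    distances = np.linalg.norm(deviations, axis=1)  # Euclidean distance per observation
    return {
        "x": summarize(deviations[:, 0]),
        "y": summarize(deviations[:, 1]),
        "distance": summarize(distances),
    }
```

A median far from zero in X or Y indicates a bias (accuracy problem), whereas a large IQR indicates dispersion (precision problem).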

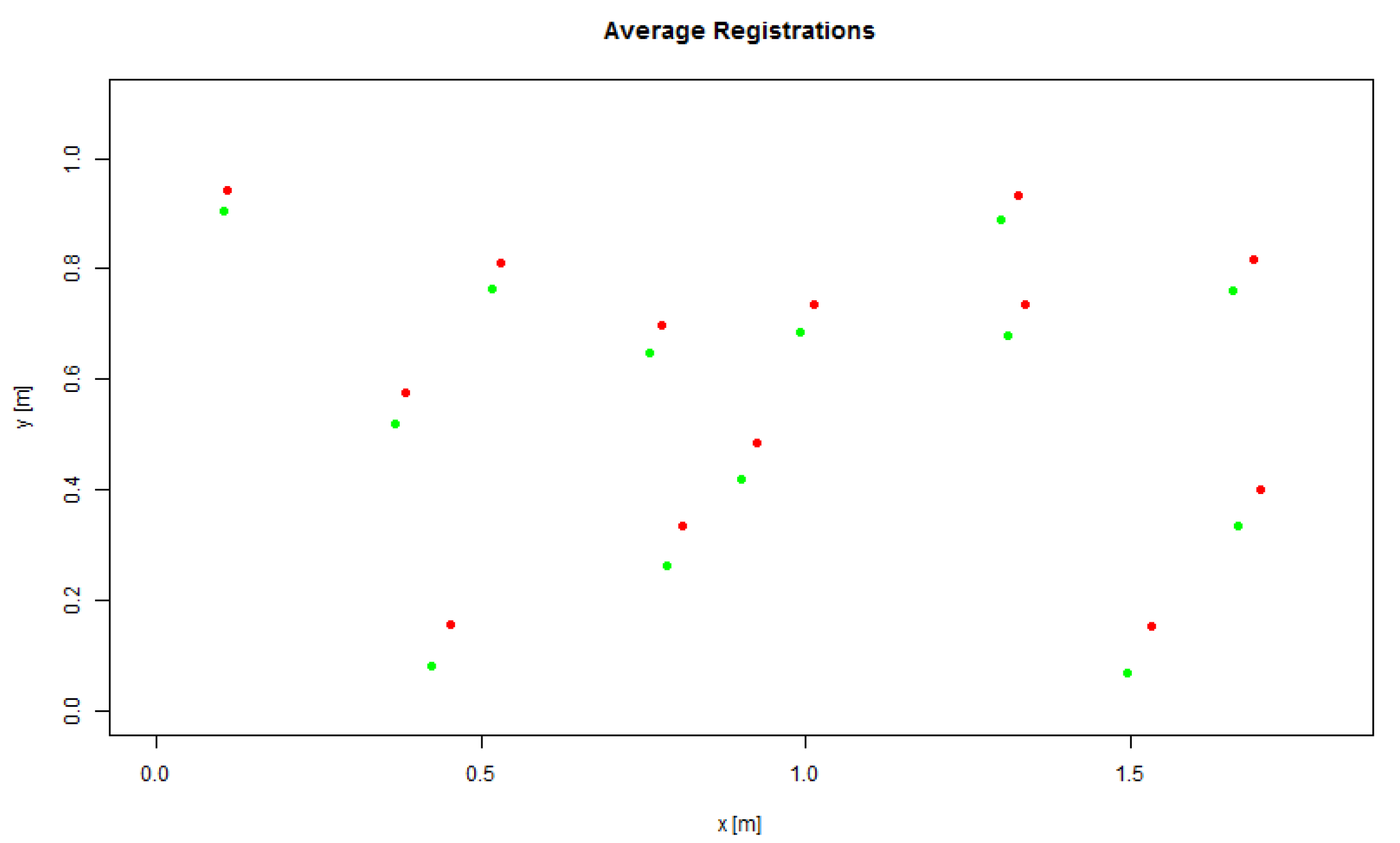

To identify the source of this potentially systematic bias, we further plot in Figure 13 the average measured positions for each target together with the ground truth positions. Visual inspection of this plot reveals that the suspected shift is consistent and nearly constant in magnitude and direction for all targets. Namely, it does not depend on target positions. A systematic bias of the kind seen here can be explained either by systematic errors of the measuring procedure using the total station or by errors in the AR software calibration, which is the spatial co-registration of the visible and IR images. Measuring errors from the total station are dismissed here because the distances measured between the three reference points within the working area were verified to be close to their true values in all experiment sessions. To further analyze those systematic errors, we group the data by experiment session.

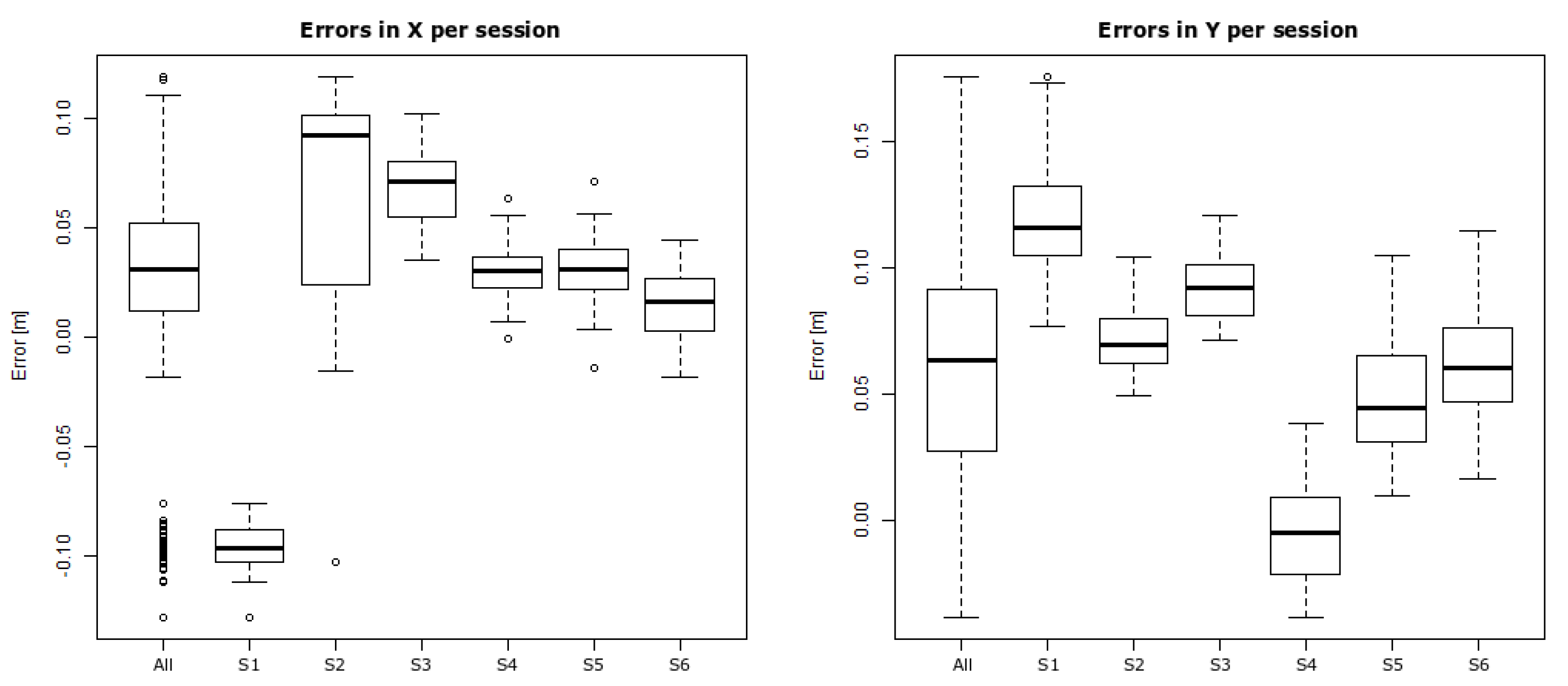

Figure 14 shows boxplots of deviations in X and Y per session. The plots reveal that the bias varied considerably between sessions and that the dispersion within each session is much smaller than in the entire dataset.

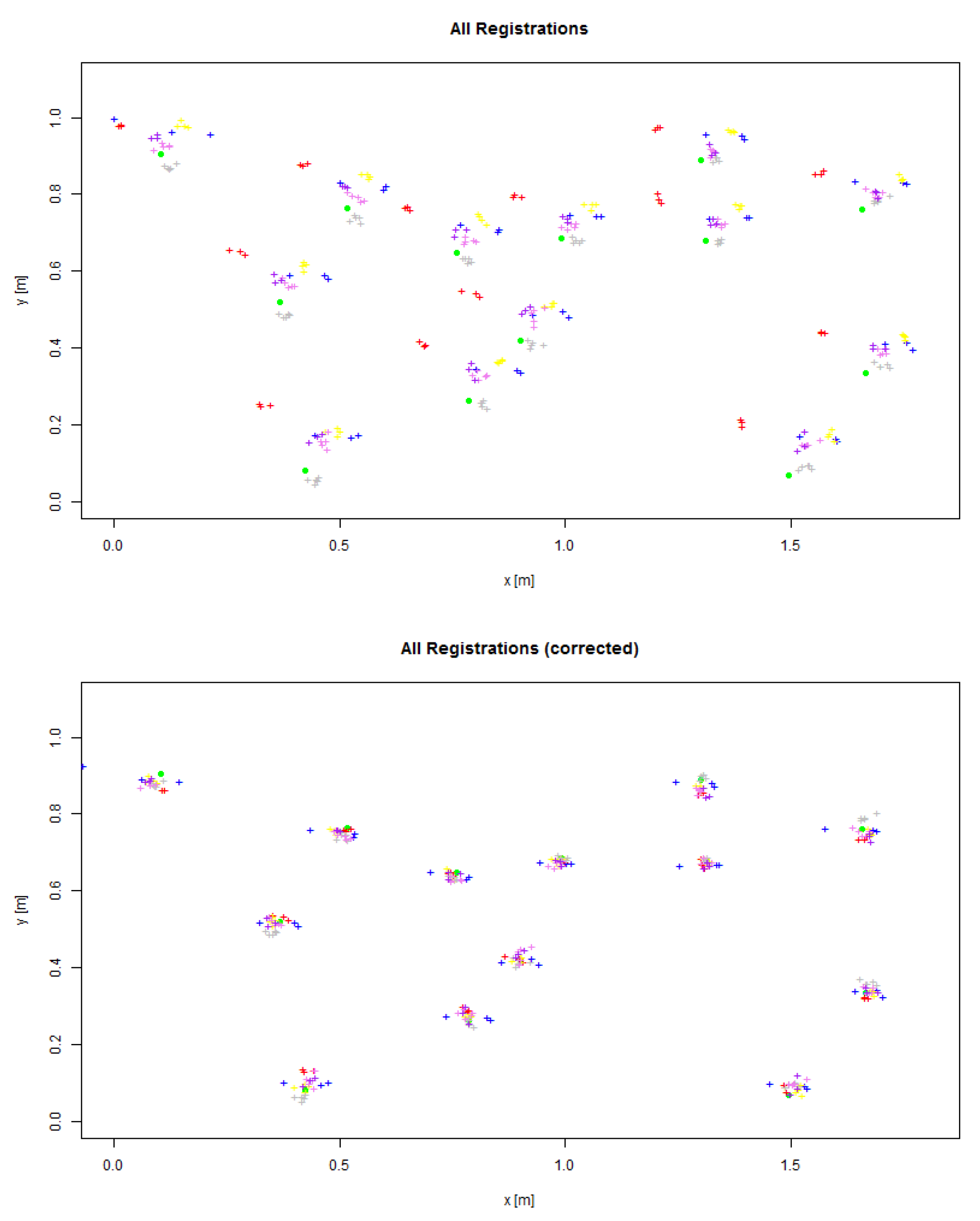

To further verify that the bias is consistent for all targets within one experiment session, a more detailed plot is provided in Figure 15 (top), where all observations are plotted (as color-coded crosses) against the ground truth positions (filled green circles), with colors indicating experiment sessions. As this plot clearly shows, different biases were introduced per experiment session and affected the positioning of all targets within that session almost equally. Hence, we attribute those varying biases to the registration of the infrared images with the real images, a process that was carried out before every experiment session given the position of the third person camera. To quantify this registration error further and to separate it from users’ imprecision in acquiring target positions through the AR interface, we perform a bias correction of measured target positions per experiment session. The correction vectors are hereby assumed to consist of the mean deviations (in X and Y) from the ground truth in each session, as shown in the boxplots in Figure 14. Table 1 lists exact coordinates of those correction vectors. After session-wise bias correction, measured target positions are on average more closely centered on the true positions (see Figure 15, bottom). Boxplots of the bias-corrected data, i.e., the residual errors, are shown in Figure 12 (right). The per-session bias adjustment not only centers the observations close to zero, it also reduces the dispersion of the residual errors. The IQR for X is now 2.1 cm and for Y it is 2.6 cm. Medians are now 0.1 cm in X and −0.2 cm in Y. In terms of residual distance errors (expressed by Euclidean distance), which are more relevant to describe users’ precision when they interact with the AR system, the median is 2.2 cm and the IQR is 1.8 cm.
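The session-wise bias correction amounts to subtracting each session's mean deviation from every observation in that session. The sketch below illustrates this under the stated assumption that the correction vector is the per-session mean; the function name `correct_session_bias` and the data layout are hypothetical.

```python
import numpy as np

def correct_session_bias(deviations, sessions):
    """Subtract the per-session mean deviation (the correction vector) from each observation.

    deviations: (N, 2) array of X/Y deviations from the ground truth.
    sessions:   length-N sequence of session labels, one per observation.
    Returns the residual errors and a dict of correction vectors per session.
    """
    deviations = np.asarray(deviations, dtype=float)
    sessions = np.asarray(sessions)
    residuals = np.empty_like(deviations)
    corrections = {}
    for label in np.unique(sessions):
        mask = sessions == label
        bias = deviations[mask].mean(axis=0)  # mean deviation in X and Y for this session
        corrections[label.item()] = bias
        residuals[mask] = deviations[mask] - bias
    return residuals, corrections
```

By construction the residuals of each session have zero mean, so what remains reflects dispersion within the session rather than the session's registration offset.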

With regard to the observed times for target designation using the AR tool, our data analysis did not indicate any statistically relevant association between designation times and errors, nor between times and targets or experiment sessions. To summarize the time observations, subjects took on average 17.92 s per target overall, but there was large variation between individual subjects, with the fastest subject spending on average 7.57 s per target and the slowest subject 30.46 s per target. Again, no correlation could be found between subjects’ speed and errors. Lastly, responses to the two open questions at the end of each experiment, namely, “What do you think could be improved with this application?” and “What was most difficult in performing the task?” can be summarized as follows: video resolution was low and it was difficult to determine whether the markers had been placed accurately. Nevertheless, the majority of subjects still believed that the system was easy to use and that they had designated the targets accurately, as indicated by the two Likert scale-based ratings collected alongside those two open questions (see Figure 16).

5. Registration Experiments

Overall target designation errors as observed in the user experiment are, as shown in the previous analysis, subject to several sources of errors. To ascertain the sources and magnitudes of contributing factors, we executed a number of experiments to establish benchmarks for various potential sources.

5.1. Benchmark Tests

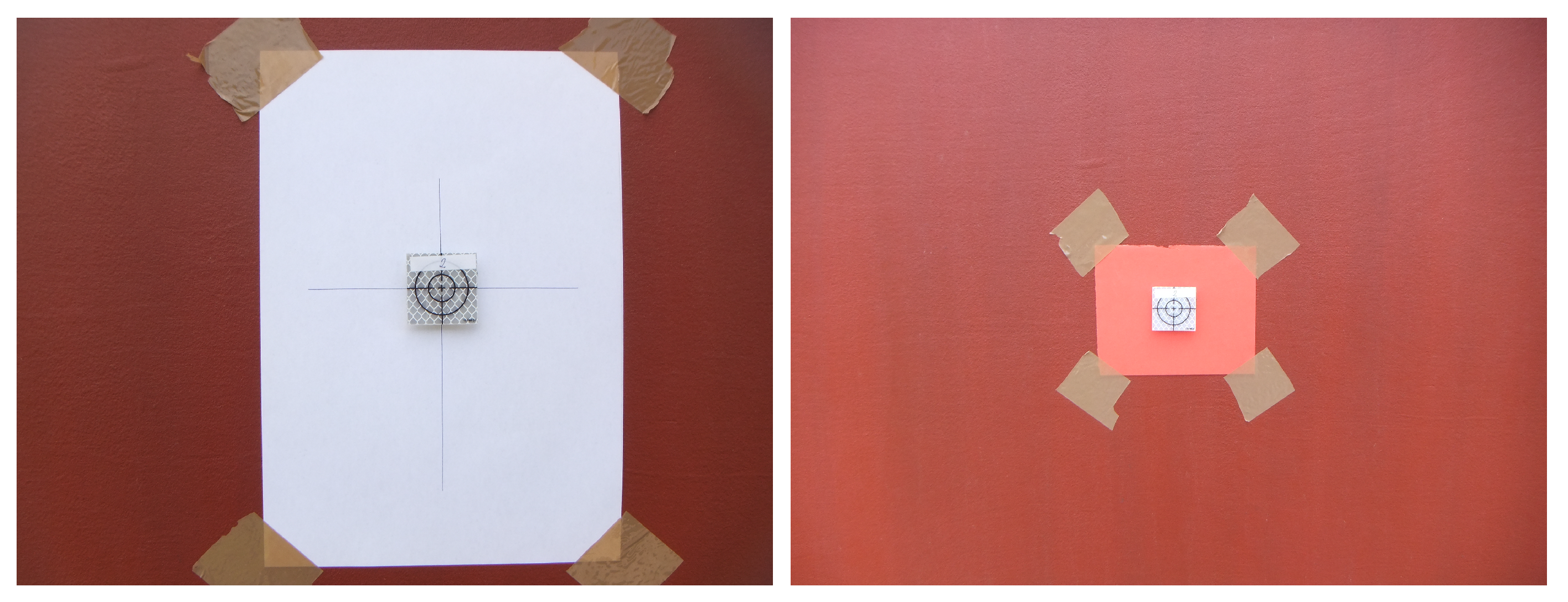

In the first benchmark test (B1), we were interested in finding a human user’s precision in repeatedly repositioning the reflective marker on a given fixed target within the working area under the best circumstances. It characterizes the human’s visual-motoric skills in marking a visual target under ideal conditions. To that end, we printed a high-contrast crosshair on paper, attached it to the working area and asked a user to place the magnetic marker so that it was centered on the crosshair as accurately as possible (see Figure 17, left). The position of the magnetic marker was then measured using the total station. Thereafter, the marker was removed and this procedure was repeated one hundred times.

While the target center marked by a crosshair in B1 is ideal to distinguish, the true center of a heat spot as mediated through the AR interface in the real experiment is of course much less clearly defined. Instead, due to the limited pixel resolution of the AR display and the distance of the third person camera from the working area, a heat spot to be designated by the user has a significant footprint in the real world (on the façade). More precisely, given the resolution of the IR images (320 × 240 pixels) and the horizontal span of the façade being captured by the camera (approximately 8 m), the footprint size of an image pixel on the façade corresponds to roughly 2.5 cm. With the typical size of a heat spot in the IR images between 4 and 5 pixels, we determined the footprint to be about 12 cm × 10 cm. In a subsequent benchmark test, B2, we therefore used a clearly distinguishable piece of paper of this size as the target to be repeatedly marked by the same user (see Figure 17, right). The intention was to find out how well a user would visually determine (estimate) and reproduce the center of a target with such a large defined area. As in the previous benchmark, the test person performed one hundred such repeated target designations, whereby the designated position was measured using the total station between successive attempts.
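The footprint estimate follows from simple arithmetic, reproduced in the short sketch below. The function name is hypothetical, and the 8 m span is the approximate value given in the text, so the results are rough estimates.

```python
def pixel_footprint_cm(horizontal_span_m, image_width_px):
    """Approximate width on the façade covered by one image pixel, in centimeters."""
    return horizontal_span_m * 100.0 / image_width_px

# About 8 m of façade across 320 pixels gives 2.5 cm per pixel.
cm_per_pixel = pixel_footprint_cm(8.0, 320)

# A heat spot of 5 x 4 pixels then covers about 12.5 cm x 10 cm,
# in line with the roughly 12 cm x 10 cm target used in B2.
spot_width_cm = 5 * cm_per_pixel
spot_height_cm = 4 * cm_per_pixel
```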

Any potential imprecision in the benchmarks described so far is affected both by human perceptual and motoric limits and by imprecision inherent to the (human controlled) measuring procedure using the total station. In order to quantify the latter, we let the test leader (who conducted all measurements using the total station) perform one hundred repeated measurements of a fixed reflective target in another benchmark test (B3), whereby the reticle of the total station was pointed to a random position after each measurement.

Although the imprecision of target designation and registration described so far is in many regards affected by human factors, there also exist sources of errors that are purely AR system related. The co-registration of visual images with IR images based on an automatic natural feature detection and matching method has already been pointed out as one source of systematic error. To validate this hypothesis, we finally performed a benchmark test (B4) to examine the accuracy of digital image registration. In this procedure, we gathered visible images of the same façade inspected during the experiment from five camera positions which were different from the positions in the experiment. In addition to those visible images, we captured seven different IR images of the same façade under different viewing angles, hence producing notably different perspectives. In those images, we manually marked up 10 pairs of corresponding points (CPs) that served as references for validation. We then let our automatic image registration method, which is agnostic to the manually established CPs, perform image registration based on natural feature extraction and matching. After registration, we used the CPs to determine registration errors in terms of Euclidean distance between the visible images and their corresponding IR images.
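The validation step can be sketched as follows. The sketch assumes that the registration result can be expressed as a 3 × 3 homography `H` that maps IR image coordinates to visible image coordinates; the text does not state which transformation model the registration method uses, so this is an illustrative assumption, and the function name is hypothetical.

```python
import numpy as np

def registration_errors(H, source_points, target_points):
    """Euclidean distance (in pixels) between mapped source CPs and their target CPs.

    H is assumed to be a 3x3 homography from the source image to the target image.
    """
    src = np.asarray(source_points, dtype=float)
    dst = np.asarray(target_points, dtype=float)
    # Convert to homogeneous coordinates, apply H, and normalize by the last component.
    homogeneous = np.hstack([src, np.ones((len(src), 1))])
    mapped = homogeneous @ np.asarray(H, dtype=float).T
    mapped = mapped[:, :2] / mapped[:, 2:3]
    return np.linalg.norm(mapped - dst, axis=1)
```

Since the CPs are marked manually and are not used by the registration itself, they provide an independent reference for the error.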

5.2. Results

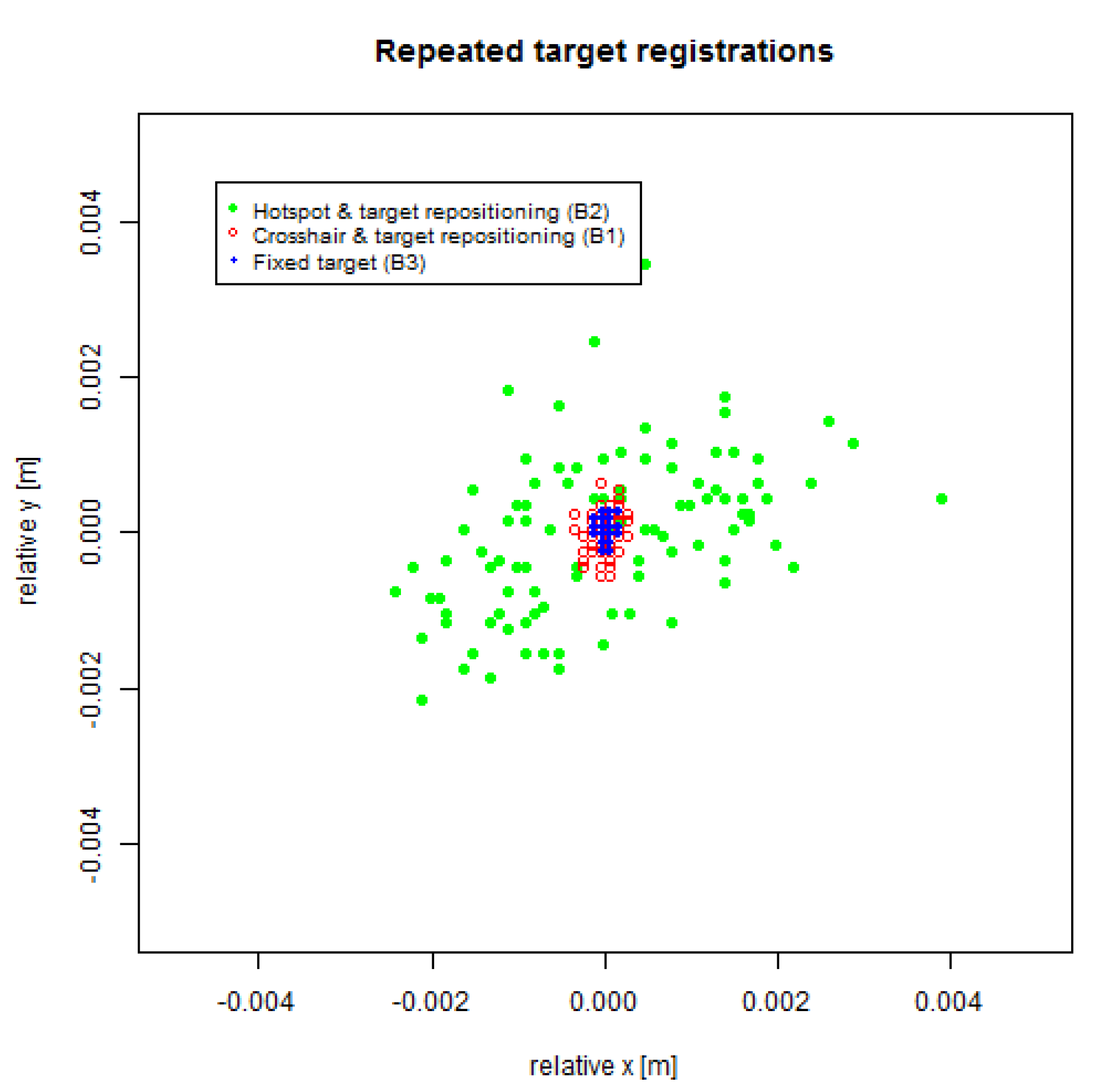

A graphical representation of the repeated manual target registrations is shown in Figure 18. Table 2 summarizes relevant quantitative measures in millimeters. As expected, the lowest dispersion and highest precision are observed for repeated measurements of one fixed marker using the total station. Although these measurements require human intervention in terms of sighting through the telescope of the total station, the maximum deviation of any measurement from the center is less than a third of a millimeter. The dispersion (in terms of span) is below 0.2 mm horizontally and below 0.3 mm vertically. By comparison, in test B1 with repeated designation of a crosshair target, observed deviations and spans are roughly twice as large, meaning that the manual placement of a marker in our experiment under ideal circumstances (when the target is most discernible) contributes only another fraction of a millimeter to the error. For a more diffusely defined target such as the one in test B2, where the actual target center for registration must be visually extrapolated from the overall shape of a larger target footprint, deviations were an order of magnitude larger than with the crosshair target. Nevertheless and quite remarkably, those deviations from the center were always below 4 mm despite the fairly large footprint size (12 cm × 10 cm) of the target.

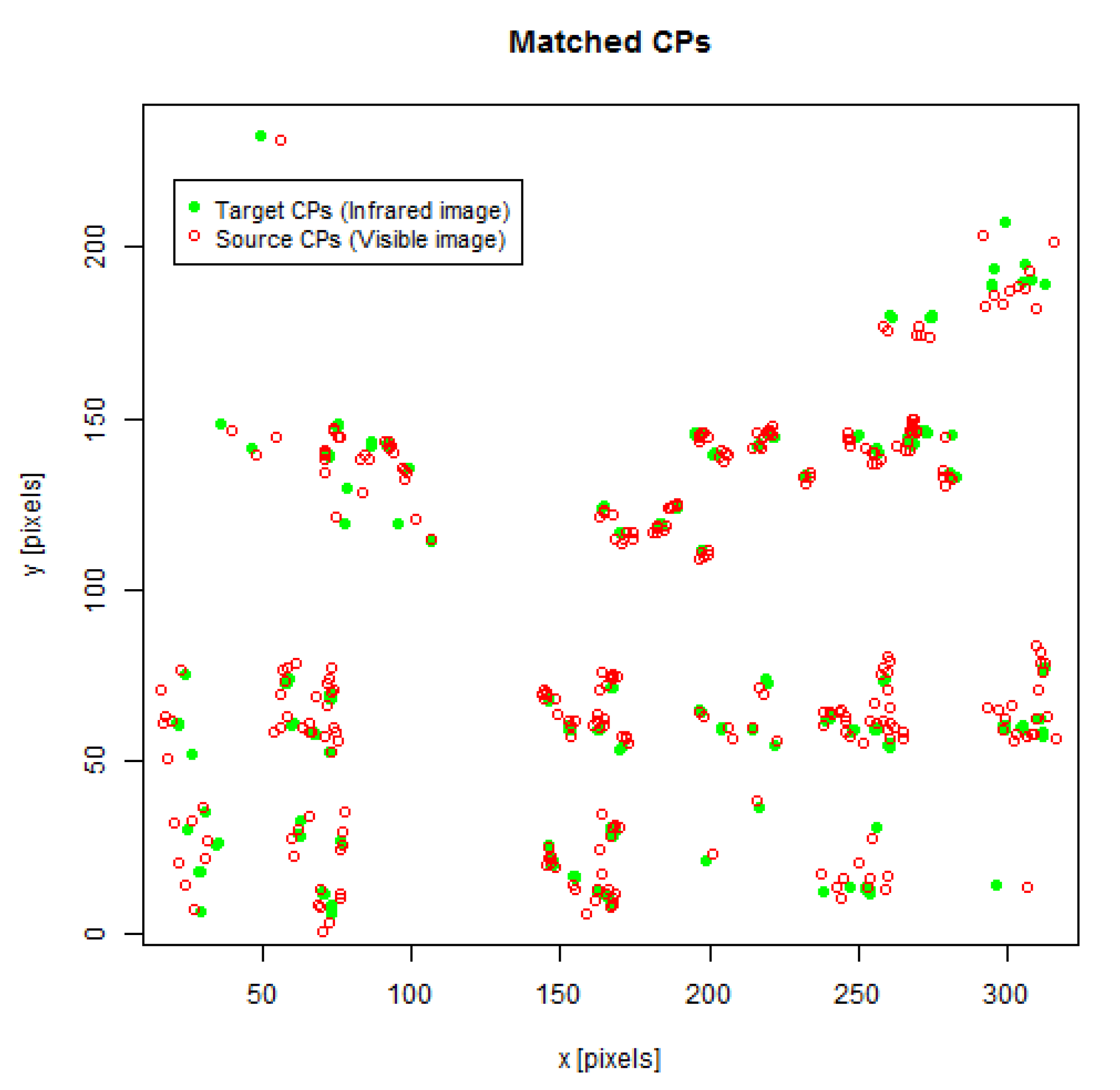

The results from test B4 (image registration errors) comprise matched sets of CPs for 31 out of the 35 possible combinations of visible and IR images because the matching algorithm did not succeed in four cases.

Figure 19 depicts graphically all 310 manually selected CPs in target image space (green points) as well as their corresponding co-registered CPs from the source image (red). At first glance, it seems like the matching process based on natural feature extraction yields in the central parts of the images best results with smallest errors in terms of the Euclidean distance between two CPs. A plot of errors against the CPs’ distances from the image center can be seen in

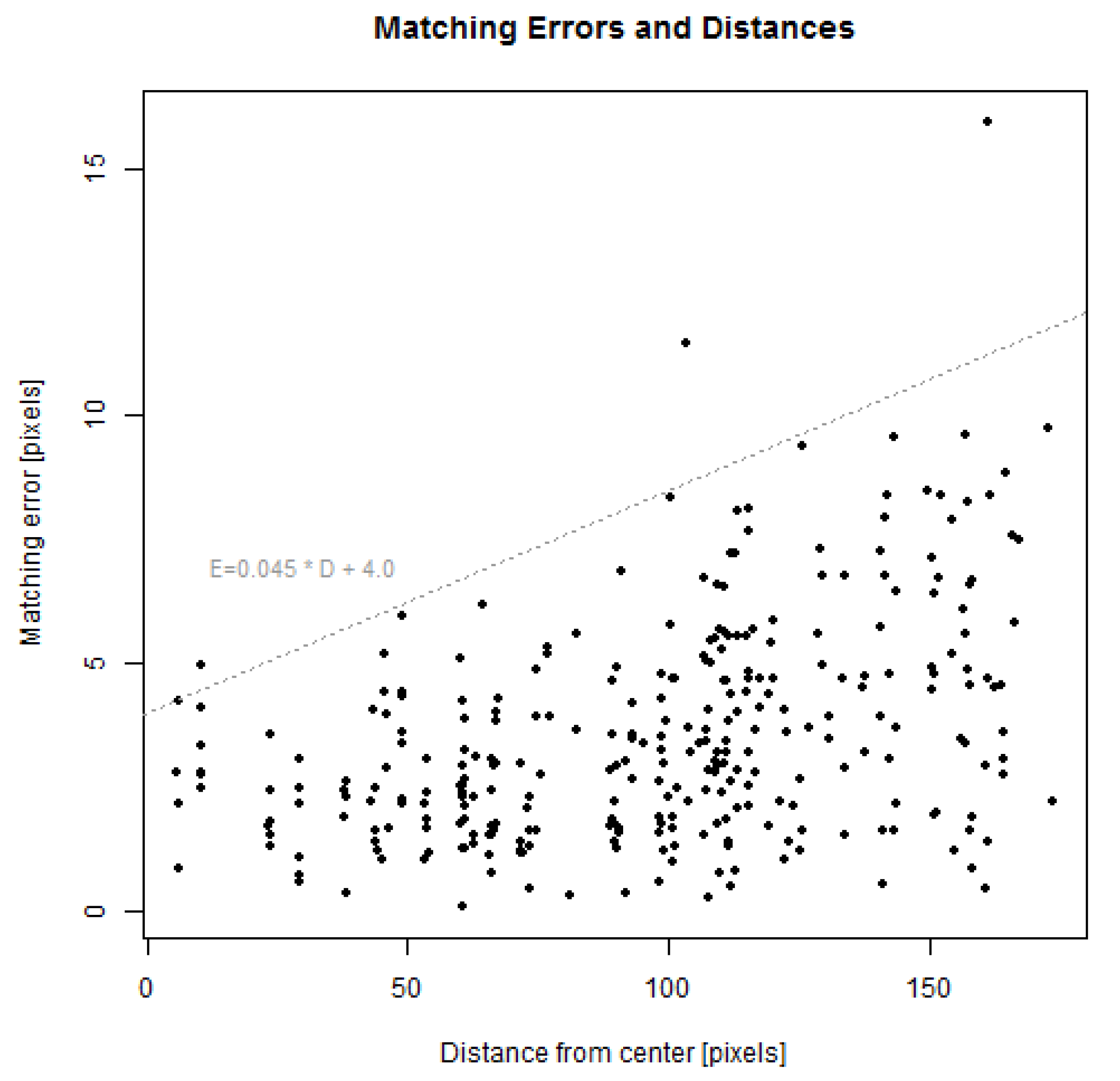

Figure 20. Although there exists no strict linear correlation between distances and errors, the error gradually spreads increasingly with growing distance. For this particular test, matching errors are (except for three outliers) below a line with the slope of 0.045 pixels matching error per one pixel distance from the image center with an intercept at four pixels. The median error for all CPs was 3.1 pixels with an IQR of 2.9 pixels. Considering only CPs in the central parts of the image (with a distance of fewer than 80 pixels from the image center), the median error was 2.3 pixels with an IQR of 1.7 pixels.
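The linear envelope described above (error ≤ 0.045 × distance + 4, in pixels) can be checked against a set of CPs with a minimal sketch like the one below. The function name and the sample values in the usage note are hypothetical and are not the study data.

```python
import numpy as np

def exceeds_envelope(distances_px, errors_px, slope=0.045, intercept_px=4.0):
    """Flag CPs whose matching error lies above the line slope * distance + intercept."""
    distances = np.asarray(distances_px, dtype=float)
    errors = np.asarray(errors_px, dtype=float)
    return errors > slope * distances + intercept_px
```

For example, a CP at 100 pixels from the image center has an envelope value of 8.5 pixels, so an error of 9 pixels at that distance would be flagged as an outlier.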

6. Discussion and Conclusions

This study presented and evaluated an AR system for designation of thermographic targets in a façade inspection task. The evaluation comprised a comprehensive analysis of various errors through a case study conducted in an actual outdoor setting. Experiments and tests in the study have established that overall errors in terms of deviations from true positions (i.e., accuracy) are around 7.6 cm (median), with a designation dispersion (i.e., precision) of 7.5 cm described by the IQR. These errors are within the size of most façade defects that can be detected by IR thermography, such as thermal bridging, thermal insulation components, air/water leakage, etc. [9]. Thanks to natural feature-based image registration for augmenting live video, our system overcomes the typical difficulty in tracking when AR is adopted outdoors. Furthermore, the unconventional TPP approach towards AR enables us to resolve the trade-off between close field interaction with façades and the need for contextual, large field image capture from a greater distance. Both elements are the central pieces of our solution to AR for building inspection and the study results have demonstrated its viability for the designated application scenario.

A deeper analysis of the results identified that the largest influencing factor in this process was image registration. Errors observed in the registration of IR and visible images in benchmark test B4 vary within the image plane and increase from the center of the image towards the borders. For the entire image, registration errors correspond on average to 3.1 pixels, which is well in accordance with the overall registration error of 3.23 pixels found in our previous study [40]. However, in the central part of the image, which comprises the working area of this experiment, the error is some 2.3 pixels on average. Based on the previously established pixel-to-length conversion rate (roughly 1 pixel to 2.5 cm), we estimate the overall positioning errors entailed by the image registration procedure on average to be around 5–6 cm.

Apart from the system-related error sources discussed above, which are obviously application specific, this study also reveals what we believe to be of general relevance to hand-held AR applications that adopt the TPP approach, namely, the residual errors separated from the systematic errors of image registration. These errors can largely be attributed to the actual visual-motoric and cognitive limits of humans when using AR systems such as the one in this study. More specifically, isolated distance errors (accuracy) are found to be around 2.2 cm on average, and precision (again in terms of IQR) around 2.1 cm in the horizontal direction and 2.6 cm in the vertical direction. Upon further dissection, a part of this imprecision results from motoric and visual imprecision when users were placing the markers. For well delineated and distinguishable targets (in B1), this imprecision was found to be less than a millimeter and thus it is practically irrelevant. On the other hand, for larger targets without a clearly defined center (such as in the case of IR heat spots in this study and B2), the visual estimation of the target center brings about an error of less than 5 mm. In view of the established sources and magnitudes of errors, we can conclude that the imprecision in target designation as a consequence of users’ cognitive capacity required to mentally transform target positions between the exocentric coordinate space of the TPP imagery and the local coordinate system of the physical working area is indeed fairly small, and we estimate it to be around 2 cm. This performance should be appreciated in light of the size of the simulated heat spots on the façade. Based on the design of the heating devices (Section 3.4) and due to heat diffusion in the outer layer of the façade material, the size of these artificial defects can be assumed to be substantially larger than 4 cm. Human induced target designation errors are thus clearly below the size of the artifacts to be marked up. Finally, it is worth pointing out that the reasoning above has neglected the imprecision in measuring marker positions with the total station. Although the measurement involved human interaction, those errors were only fractions of a millimeter on average (B3).

For future work, we plan to draw on state-of-the-art computer vision and machine learning techniques in order to improve the performance of our façade image registration process, both in terms of execution time and registration accuracy. We hope the upgraded version, together with more advanced hardware, could achieve real-time performance on a single hand-held device, thus fulfilling our original vision of a TPP AR tool for façade inspection, which consists of only a wireless camera and a hand-held device. The next step is then to involve more professional users from the field of facility management (FM) to identify other practical problems that can be solved by our system while we refine the usability aspect of the system and tackle new challenges that emerge along the way. In view of the more general nature of the TPP approach to AR, we are also interested in finding out whether it can be applied in industrial sectors other than FM.

In conclusion, given the unexpectedly small human errors, it is our belief that TPP AR is a viable approach to outdoor AR when the trade-off between close range interaction and the need for large field image capture at a greater distance for richer context must be tackled. For the specific application in thermographic façade inspection, our study has shown that the system inherent errors from, among others, image registration are also at an acceptable level, thus bringing hand-held AR a step closer to smart facility operation and maintenance.