1. Introduction

Tourism is a popular activity. According to the World Tourism Organization (UNWTO), there were 1.4 billion tourists in 2018, an increase of 5% over 2017 [1]. Although sightseeing can be inspiring and relaxing, it requires both money and time. Furthermore, visitors to sightseeing spots may face challenges, such as the visit not progressing the way they had anticipated. Hence, the amount of virtual reality (VR) tourism content is increasing. For example, many 360-degree videos taken at various tourist spots are uploaded to YouTube, where viewers can easily enjoy them from their homes using a VR head-mounted display (VR-HMD). The effects of VR tourism on users have been investigated in many studies [2,3,4,5,6]. Tussyadiah et al. investigated the user experience and discussed the ways in which VR tourism could change a user’s attitude toward a destination [7]. Kim et al. examined how potential tourists were encouraged to visit the tourist destinations presented in a VR environment from the perspectives of the authenticity of the experience afforded by VR activities and the attachment to VR tourism [8]. VR tourism is expected to evolve because it can be experienced easily.

People often research sightseeing spots before visiting them. In doing so, they prepare to tour the sites efficiently and enjoy imagining what they would do there. Before VR technology, there were several ways to obtain information on tourist spots, such as pamphlets and websites with pictures and videos. However, VR technology can provide users with a higher sense of presence than these conventional methods. Furthermore, a provisional experience of the visit can be gained through VR tourism. A VR tourism system that provides the pleasure of imagination or that enables users to prepare for a visit to a tourist spot must maximize the effect of the experience by satisfying the following requirements:

If Req1 is satisfied, users can enjoy the VR tourism experience and effectively visualize what a visit to the spot would be like. Furthermore, the VR tourism experience can help users decide on a place to visit. If users cannot visit, for some reason, they can simply enjoy the experience. For Req2 to be fulfilled, the VR experience must have conveyed an accurate tourism experience. The knowledge acquired from such an experience will enable users to plan their subsequent visit effectively. In this study, we aimed to reveal the factors that facilitate both requirements. We hypothesized that providing a self-motion perception to the viewers of a VR tourism video is one of the factors.

To improve the sense of immersion in the VR environment, self-motion perception must be induced, not only through the audiovisual senses but also through the stimulation of the multimodal senses. Many studies have attempted to improve the sense of immersion through the multimodal senses. HapPull [9] enhances the sensation of bodily motion by pulling users’ clothes. Cardin et al. used eight fan actuators to provide the sensation of the wind blowing [10].

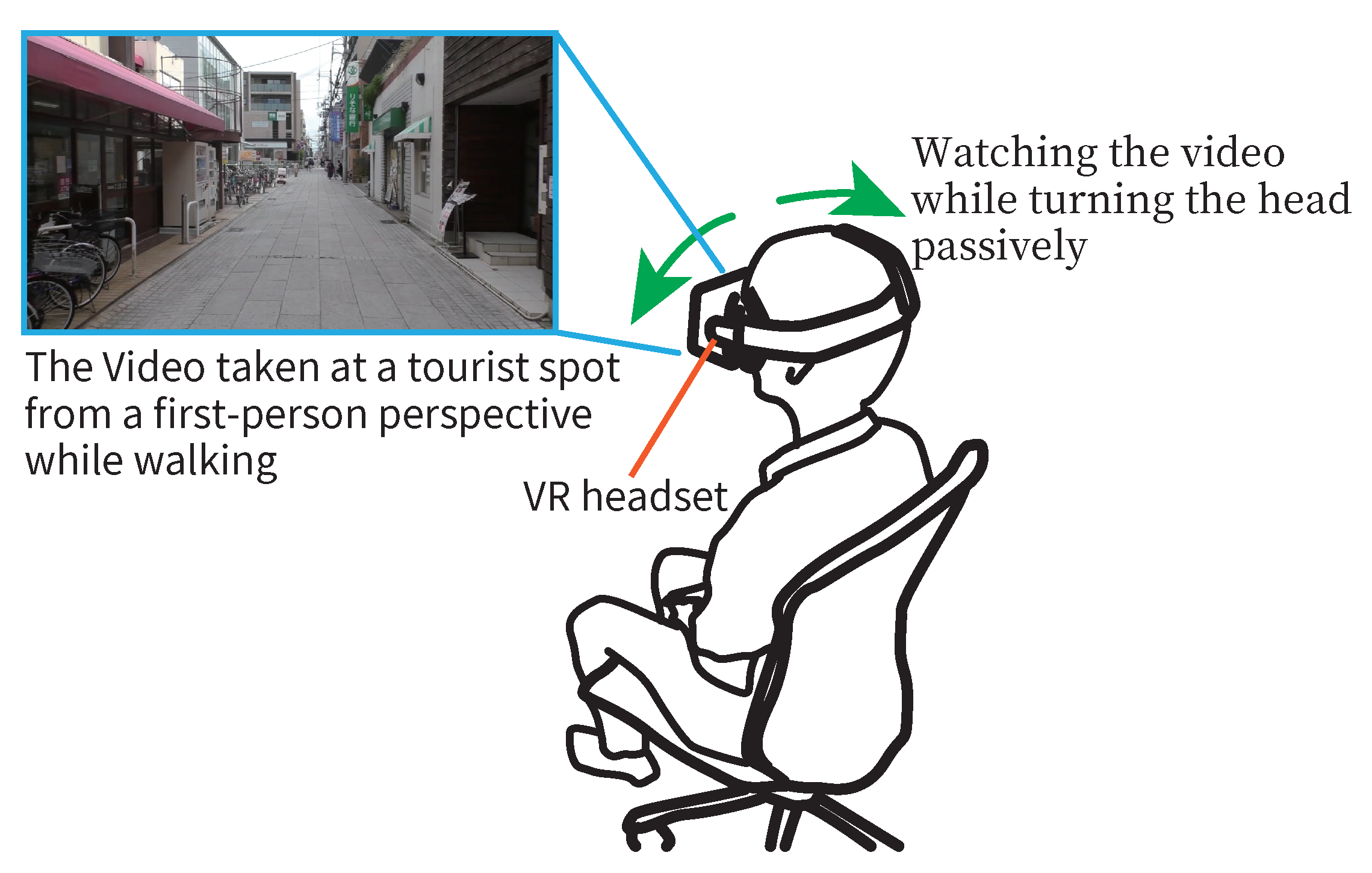

In this study, we showed users a video of a tourist spot taken from a first-person perspective via a VR-HMD. By showing the video in a VR environment, we aimed to convey a sense of presence while watching, as well as the feeling of having been there before if the users later visit the site. We focused on the action of tourists turning their heads to the left and right when walking at a tourist spot. We proposed a method in which the perception of self-motion is induced by forcibly turning the user’s head according to the video scene to heighten the sense of presence. Experiencing head motions as a form of multimodal perception while watching the video is expected to stimulate a higher sense of immersion. However, if users can turn their heads freely while actively watching the video, they may turn their heads too frequently when using the VR-HMD because the scenes of the video change automatically. Overly frequent head movements are not natural, and users might also overlook key points in the video. Therefore, we assumed that our proposed method brings the VR experience closer to the real tourism experience by having the users watch the video taken at the tourist spot while their heads are turned at a natural frequency by an external force (as shown in Figure 1). We conjectured that such passive factors are also necessary in a VR experience. Based on this assumption, we investigated the effect of passive body movements on the viewing experience.

In this study, we investigated the possibility of the participants experiencing the sense of being physically present at the scene shown in the video. Next, we investigated whether they felt like they had been there before when visiting the place where the video was taken. In addition, we examined whether there were some gaps between these feelings. The videos of the tourist spots were taken from a first-person perspective. The use of a VR-HMD made the viewing experience immersive. We aimed to accentuate the above feelings through a multimodal sensory stimulation. We assumed that existent feelings were accentuated by inducing the participants to perform actions they would naturally perform at the tourist spots as an additional sense.

The remainder of this paper is organized as follows. In Section 2, we introduce the related work. In Section 3, we describe the proposed method. The experiments are described in Section 4 and Section 5, and our considerations are presented in Section 6. Finally, we present the conclusions and future work in Section 7.

2. Related Works

2.1. VR Tourism

Extensive studies have been conducted on VR tourism. Jung et al. conducted qualitative research on tourists virtually exploring the Lake District [11]. They found that tourists welcomed the opportunity to experience destinations virtually to obtain a better understanding of what the destinations offer. Gao et al. investigated the divergent preferences for different urban green spaces [12]. They compared three approaches (on-site survey, photo-elicitation, and VR technology) and established that VR technology significantly differed from the other two approaches. Pantano et al. tested users’ acceptance of VR tourism as a tool supporting their decision to visit a tourist destination [13]. Their results suggested that the enjoyment, perceived usefulness, and attitude derived when using the VR system had a direct positive effect on the users’ behavioral intention and destination choices.

Some studies investigated the effect of watching VR content before or after visiting a site. Tussyadiah et al. investigated the possible effects of the VR experience, specifically the sense of spatial presence, on travel decisions [7,14]. They confirmed the effectiveness of the VR experience for marketing and concluded that a higher sense of spatial presence led to stronger interest in the destination. Bogicevic et al. investigated how a VR system could be used by tourists to obtain integrated tourist experiences prior to staying at a hotel [15]. They compared three hotel previews (images, 360-degree tours, and VR) and found that the VR preview provided better mental imagery than static images and 360-degree tours. The findings revealed that brand experiences could be obtained from VR stimuli. Wei et al. focused on how VR technology might enhance the experiences and behaviors of theme park visitors [16]. They found evidence, in the improved satisfaction of the visitors, that theme parks benefit from adopting VR technology. In addition, they confirmed that the visitors were more inclined to revisit the park and recommend it to others.

As shown by the results of these studies, VR tourism affects users’ behavior and thoughts. It is important to reveal every characteristic of VR tourism to use it effectively.

2.2. Stimulating Multimodal Perception

Several attempts have been made to improve the sense of immersion through multimodal stimulation. For instance, Tactile Jacket [17] and Synesthesia Suit [18] provide vibration or tactile sensations through vibrators installed on clothes to stimulate the cutaneous senses. Although both can stimulate a wide range of body parts, they are not designed to provide sensations that induce body movements. Some researchers simulated the blowing of the wind using blowers and air-spraying stimuli [10,19,20]. Although these cannot provide a sensation of acceleration, they can improve the sensation of immersion and present vection. Ito et al. manipulated the perceived direction of the wind through the cross-modal effect using only two wind sources [21]. LevioPole [22] is a device with a shape similar to a canoe paddle; it has fans installed on both ends. It simulates canoe paddling or weightlifting by controlling the wind.

In the future, we plan to make users turn their heads by pulling them with strings. Building on previous studies that conveyed a sense of force, we expect to be able to construct a mechanism that induces head turning. SPIDAR [23,24] presents a sense of force in several directions by pulling the body parts of a user using multiple strings. HapPull [9] stimulates the cutaneous senses by pulling the clothes of the user using a relatively small mechanism.

Besides the use of strings, there are other methods. HangerOVER [25] utilizes the hanger reflex, a phenomenon in which the head rotates unintentionally when an appropriate pressure distribution is applied to it. To make users turn their heads, HangerOVER uses four air-driven balloons. This enhances the sense of immersion when the user watches a video using a VR-HMD. GyroVR [26] is a head-worn flywheel designed to render inertia. These flywheels leverage the gyroscopic effect, which impedes the user’s head movement.

Zhang et al. proposed a method that simulates the feeling of flying by providing the user with both seat support and lower body freedom [27]. They compared their proposed method with existing sitting and standing stances. Their results showed that the proposed method increased the participants’ feelings of flying, compared to similar methods.

As mentioned above, many researchers have heightened the sense of immersion by providing multimodal sensations. In this study, we aimed to improve the feeling of presence and facilitate advanced VR tourism. Our proposed method compelled users to turn their heads to create the illusion of self-motion.

2.3. Experience Transfer with VR

We aimed to provide a sightseeing experience using VR technology. The VR experience has wide applications. Hiyama et al. proposed a learning system that uses the technology to facilitate the transfer of Japanese traditional papermaking techniques by measuring the expert’s motion and presenting the extracted tacit skills [28]. They showed that the system enabled learners to acquire, within a short period, techniques that improve the quality of the paper. The VR sports training system developed by Strivr Labs, Inc. is utilized by the NFL (the American professional football league) to train players and referees. The company developed another system that is utilized for training supermarket employees. Both have shown good results.

Jack-In Head [29] is an immersive experience transmission architecture with a wearable camera. A user wears a headgear with a camera and transmits the video to others. Other people can virtually explore the immersive visual experience through their own head motion using a VR-HMD. Users can share their experiences in real time.

As mentioned above, the VR system affects the user’s mind, thought, and behavior. In this study, we anticipated that watching a video via VR-HMD will improve the user’s impression of a tourist spot.

3. Method

In this study, we aimed to reveal the factors that provide the feeling of presence and having been to a tourist spot in VR tourism. We hypothesized that one of the factors is to induce the self-motion perception in the viewer watching a tourist spot video. For our proposed VR tourism system, we have assumed that users have not visited the tourist spots before.

3.1. Method of Head Turning

We focused on the action of turning the head, an action visitors naturally perform at tourist spots, and reproduced it to induce the self-motion perception in the participants. In our proposed method, the users watched a video using a VR-HMD. While they watched it, their heads were turned forcibly according to the video scene. The video was taken at tourist spots from a first-person perspective. The VR-HMD provided the sensation of immersion. We aimed to heighten this sensation by compelling the users to turn their heads to create a multimodal sensation. We assumed that we could improve immersiveness by making the users forcibly perform an action that occurs naturally at tourist spots.

In our proposed method, the users are compelled to turn their heads by an external force. If users can move freely when watching a 360-degree video via a VR-HMD, they can look in any direction of their choice. However, if their head movements are unrestricted while they actively watch the video, they are likely to turn their heads too much because the scene of the video changes automatically. Because we aim to provide users with a self-motion perception through natural movement, letting them turn their heads at will is not suitable. In addition, when users watch a video with ever-changing scenery, they may overlook points that are out of sight. By guiding their head movement, we can make them view the points we want them to see, such as a landmark. Moreover, watching a video whose scenes rotate to the left and right could induce motion sickness, which could be prevented by coordinating the head movements with the scene. SwiVRChair [30] is a rotatable chair system that changes the user’s face direction according to the scene of the VR video. Users can watch the video without experiencing motion sickness or overlooking significant points. However, the whole-body movement afforded by the chair is not ideal for tourism, for which the proposed system was designed. Hence, we attempt to forcibly turn only the user’s head.

3.2. Rotating the Head

We made a prototype with elastic bands and conducted a preliminary experiment, in which the resulting head rotation differed for each participant. Therefore, in this study, the experimenter held the participant’s head, exerted pressure on it, and manually rotated it, adjusting the timing according to the scene. The same experimenter performed this task in all the experiments. In this paper, we do not consider rotation in the vertical direction; we focus only on the left and right directions. In the future, we will develop a mechanism for controlling the angle of the user’s head naturally and precisely.

We instructed the users on how to move their heads before they watched the video. Thus, when we tried the proposed method, the users began to turn their heads as soon as the experimenter applied slight pressure to their heads. When the rotation needed to be stopped, the experimenter applied a slight force, and the users stopped turning their heads. We assumed that the rotation was not excessively fast and that the proposed method could be used without placing a burden on the users. The experiments described subsequently were conducted with the prior consent of the users.

3.3. Experimental Questions

In this paper, we evaluated the effect of the proposed method on the users’ sightseeing experience. The participants answered the following questions:

Q1 was answered in a laboratory after watching the video, and Q2 was answered at the place where the video was taken after walking through it. We considered each result and investigated whether there were some gaps between the results of Q1 and Q2. If there were some gaps, it would be impossible to use the results of Q1 to evaluate systems designed to induce users with a feeling of having been to a site. To evaluate such systems, it is necessary to investigate the feelings of the participants after visiting the site. To examine the possibility of using Q1 as an alternative question, we compared the results of Q1 and Q2.

4. Experiment in the Shopping District

4.1. Experimental Method

Participants watched a video taken by the experimenter; the video was taken while the experimenter was walking through a shopping district. Then, the participants answered whether they experienced the feeling of presence. At a later date, they walked through the shopping district and answered whether they experienced the feeling of having been there before. Using the above questions, we investigated the effect of the proposed method on the participants.

The participants watched the videos in three styles:

Turning their heads according to the scene with a VR-HMD (w/ move)

Keeping their heads immobile (not turning) with the VR-HMD (w/o move)

Having their heads immobile facing a PC display (PC)

We investigated the effect of the proposed method by comparing the results.

Figure 2 shows the appearance of a participant watching the video. The picture at the upper left of each panel shows the scene the participant was watching. At the time of each scene, the video camera was turned to the left of the walking direction. When the participants watched in the w/ move mode (shown on the left in Figure 2), the experimenter applied pressure to their heads as a cue to turn them when the video camera changed direction to the left or right. The experimenter could grasp the timing by observing the indicator on a nearby laptop. When they watched in the w/o move mode (shown in the middle of Figure 2), they kept their heads straight. In the PC mode (shown on the right of Figure 2), they watched the video on the PC display. We used an Oculus Rift (resolution: 2160 × 1200; resolution per eye: 1080 × 1200) as the VR-HMD and a 27-inch PC display (resolution: 1920 × 1080).

We used a video that the first author captured using a video camera (Panasonic Corporation: HC-W850M, Osaka, Japan). He took the video while walking on a straight road, fixing the camera height position at his eye level (height: 172 cm), and turning the camera multiple times to the left and right. The road had three legs (, , and ). We extracted three videos, one for each leg, from the captured video. Each participant watched each video under any of the three watching modes in a random order (for example, Participant X watched the video of with w/ move first, followed by one of with w/o move, and one of with PC last. Participant Y watched the video of with w/o move at first, one of with PC next, and one of with w/ move finally.). After three days from watching the videos, the participants walked on the road in the following order: , , and .

The distances of the three legs were 98.5 m, 91.3 m, and 105.8 m, respectively. The durations of the videos were 1 min 36 s, 1 min 34 s, and 1 min 45 s, respectively; the number of times the video camera turned to the left and right was seven, eight, and nine, respectively. The legs were separated by intervals of 5 m and 10 m. When shown via the VR-HMD, the video was displayed at a small size so that it did not extend into the participant’s peripheral visual field; this was intended to prevent motion sickness. On the PC, the video was displayed at a size of 960 × 540. We used the sound recorded by the video camera. With the VR-HMD, the sound was output through the VR-HMD’s headphones; with the PC display, it was output from a speaker connected to the PC. Bindman et al. studied the feeling of presence by comparing a VR-HMD and a smartphone for watching a 360-degree video and concluded that greater feelings of presence were experienced when the participants watched the video while thinking that they were attending to it [31]. Hence, we instructed the participants to imagine they were actually walking on the street as they watched the video.

When watching the video at a laboratory, the participants answered questions at the end of every video. Based on a seven-point Likert scale, they responded to the following questions: “Did you feel that you were actually walking through the shopping district?” and “Did you experience motion sickness?” Furthermore, they were instructed to provide some descriptive answers to questions such as “Mention the shop names that you remember” and “Describe the video content that you remember freely.”

The participants walked in the shopping district, and, after completing all the legs, they answered the following question on a seven-point Likert scale: “Did you feel like you had been to the shopping district before?” They also answered the following questions framed to elicit descriptive answers: “Where did you feel that you had been? (You can describe multiple places freely)” and “Where were you impressed? (You can describe multiple places freely).”

When walking, the participants wore a wearable camera (Panasonic Corporation: AG-WN5K1) and eyewear (JINS MEME) with an accelerometer and a gyroscope. The wearable camera recorded how each participant walked and the duration for which they walked. The eyewear recorded the head motion data of the participants while walking.

There were 12 participants (11 men and 1 woman), aged 21 to 25 years. None of them had ever walked through the shopping district. The video of each leg was watched four times in each of the three watching modes. We could not obtain the eyewear data of two participants.
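The balance described here (each leg watched four times in each of the three watching modes by 12 participants) can be obtained, for example, with a cyclic Latin square. The following is a minimal sketch under stated assumptions: the leg labels `leg1` to `leg3`, the function names, and the cyclic scheme itself are illustrative and are not taken from the study, which states only that the modes were assigned in a random order.

```python
from collections import Counter
import random

LEGS = ["leg1", "leg2", "leg3"]        # hypothetical labels for the three legs
MODES = ["w/ move", "w/o move", "PC"]  # watching modes named in the text


def assign_modes(n_participants=12, seed=0):
    """Return one {leg: mode} dict per participant, balanced via a cyclic Latin square."""
    if n_participants % len(MODES) != 0:
        raise ValueError("participant count must be a multiple of the number of modes")
    assignments = []
    for p in range(n_participants):
        shift = p % len(MODES)  # rotate the mode list by 0, 1, or 2 positions
        assignments.append(
            {leg: MODES[(i + shift) % len(MODES)] for i, leg in enumerate(LEGS)}
        )
    # Shuffle which participant receives which row; the balance is unaffected.
    random.Random(seed).shuffle(assignments)
    return assignments


def count_pairs(assignments):
    """Count how often each (leg, mode) pair occurs across participants."""
    return Counter((leg, mode) for a in assignments for leg, mode in a.items())


if __name__ == "__main__":
    for pair, n in sorted(count_pairs(assign_modes()).items()):
        print(pair, n)
```

With 12 participants, every (leg, mode) pair occurs exactly four times, matching the balance stated in the text. The sketch does not randomize the order in which each participant views the three videos; that would need a separate step.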

4.2. Experimental Results

4.2.1. Questionnaire Results

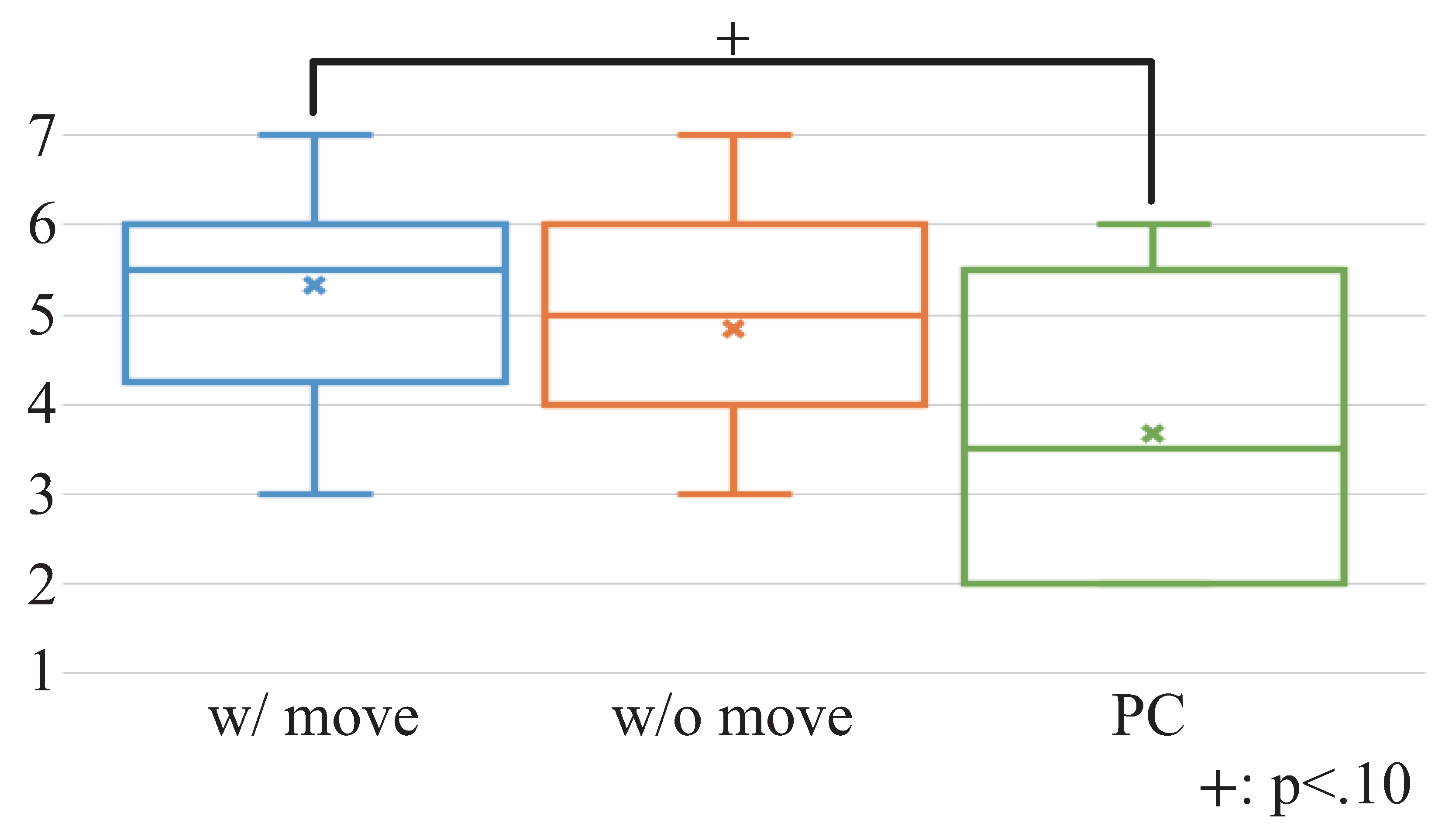

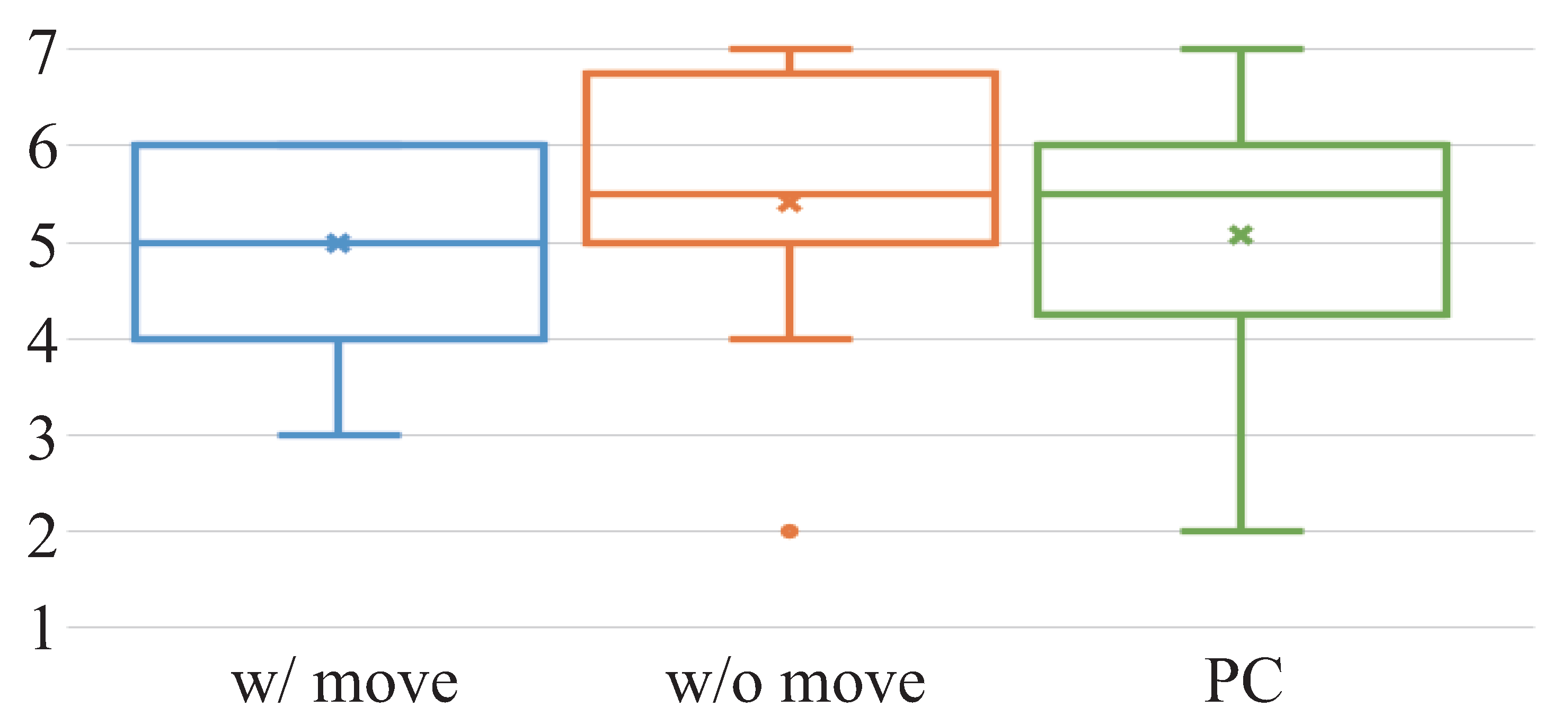

Figure 3 shows the results of Q1, which was asked after watching the videos: “Did you feel that you actually walked through the shopping district?”

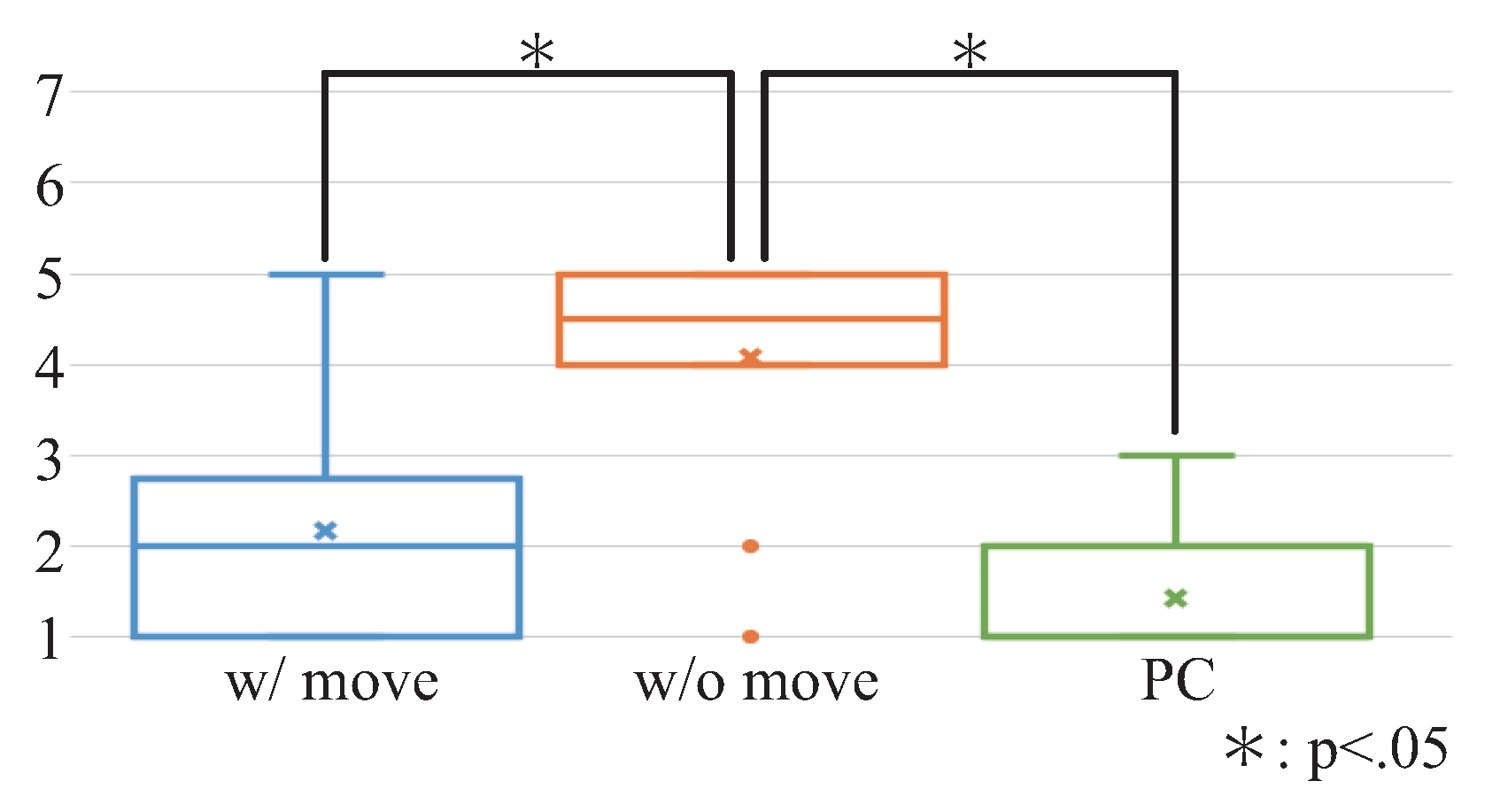

Figure 4 shows the results of Q2, which was asked after walking through the shopping district: “Did you feel that you had been to the shopping district before?” We conducted the Friedman test on the results, which showed a significant difference for Q1 (chi-squared = 9.48, df = 2). We then conducted the Wilcoxon rank sum test with Bonferroni correction, which showed that the score for w/ move was greater than that for PC. There was no significant difference for Q2 (chi-squared = 0.043, df = 2).

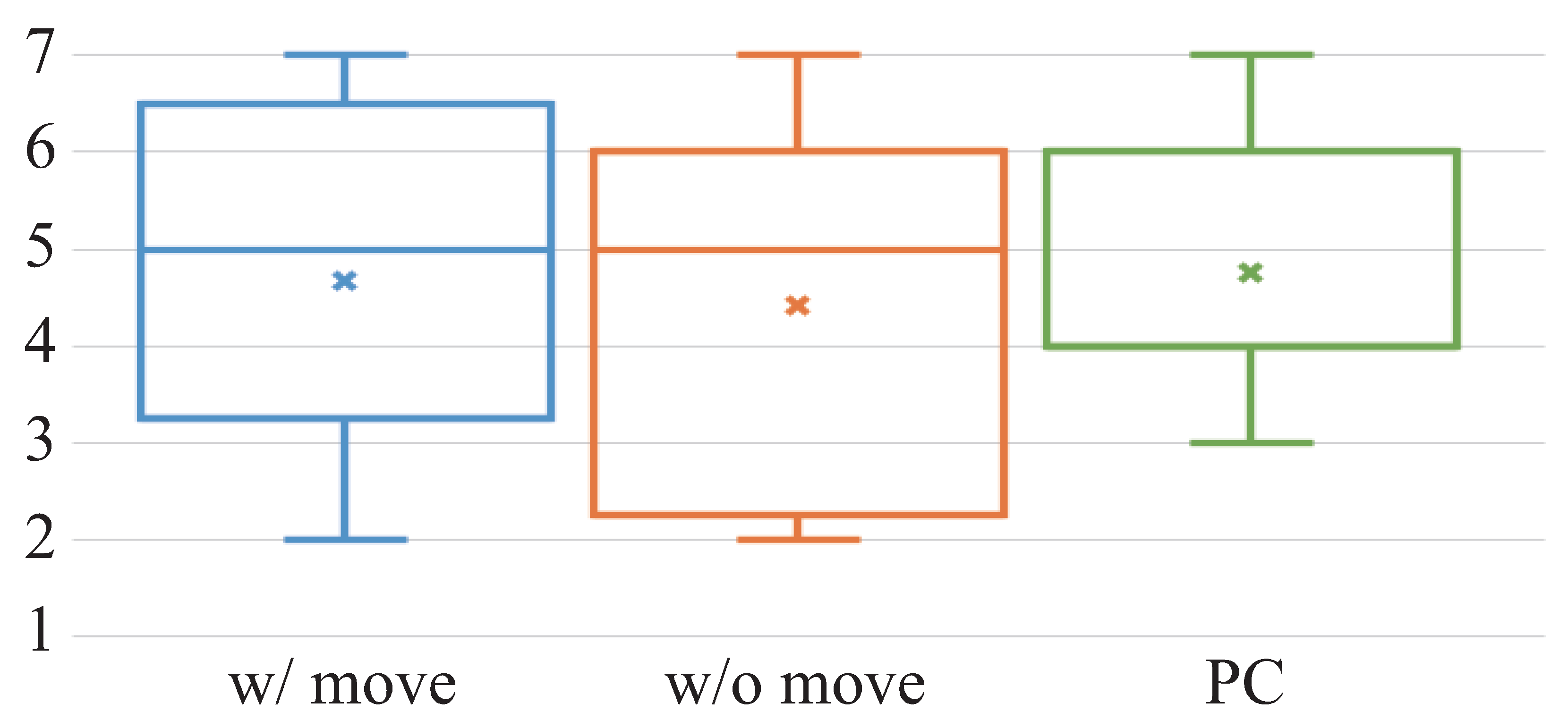

Figure 5 shows the results for the question (Q3) asked after watching the videos: “Did you experience motion sickness?” The Friedman test showed a significant difference (chi-squared = 7.37, df = 2). However, the Wilcoxon rank sum test with Bonferroni correction showed no significant difference between any pair of modes. Although we aimed to prevent motion sickness by turning the heads of the participants, the result for w/ move was similar to that of w/o move and greater than that of PC.
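As a reading aid for the Friedman statistics reported in this subsection, the sketch below converts a chi-squared statistic with df = 2 into an approximate p-value. It relies on the fact that, for two degrees of freedom, the upper-tail probability of the chi-squared distribution is exp(-x/2). The function names are illustrative, and the sketch assumes the usual chi-squared approximation; the p-values obtained in the study may differ slightly if an exact method was used. The count of three pairwise comparisons follows from the three watching modes.

```python
import math


def chi2_sf_df2(x):
    """Upper-tail probability of a chi-squared variable with 2 degrees of freedom."""
    if x < 0:
        raise ValueError("chi-squared statistic must be non-negative")
    return math.exp(-x / 2.0)


def bonferroni_alpha(alpha=0.05, n_comparisons=3):
    """Per-comparison significance level after Bonferroni correction."""
    return alpha / n_comparisons


if __name__ == "__main__":
    # Statistics as reported in the text for the shopping district experiment.
    reported = {"Q1": 9.48, "Q2": 0.043, "Q3": 7.37}
    for name, stat in reported.items():
        print(f"{name}: chi-squared = {stat}, df = 2, p ~ {chi2_sf_df2(stat):.4f}")
    print(f"Bonferroni threshold for 3 pairwise tests: {bonferroni_alpha():.4f}")
```

Under this approximation, the Q1 and Q3 statistics fall below the 0.05 level and the Q2 statistic does not, which agrees with the significance statements in the text.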

Many of the answers included the names of chain restaurants that featured prominently in the videos, shop names, and mentions of the places they were impressed with. When we divided the results of Q2 by each leg, had the greatest score, and had the smallest. On , there were many chain restaurants. On , there were few chain restaurants. It appears that it is important to include some familiar views in the video when attempting to elicit the feeling of having been there before. Based on their responses, it was assumed that some participants felt a sense of discomfort. Furthermore, because there were few participants, we could not determine the relationship between the sense of discomfort and feeling of having been there.

4.2.2. Sensor Data Results

Using the eyewear data, we investigated whether the participants turned their heads frequently while walking the legs they had watched on video. We used the yaw-axis data from the gyroscope because these data fluctuate when the participants turn their heads. We calculated the average of the moving variance; the window width was one second, and the slide width was one sample. We obtained the eyewear data using MEMELogger (https://itunes.apple.com/us/app/memelogger/id1073074817), an iOS application that yields yaw-axis data within a range of 0 to 360 degrees.
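The moving-variance measure described above can be sketched as follows. This is an illustration under assumptions rather than the analysis code used in the study: the sampling rate is passed in as a parameter because it is not stated, the population variance is used within each window, and the yaw signal is unwrapped across the 0/360-degree boundary before the variance is taken (the text does not say whether this was done).

```python
from statistics import fmean, pvariance


def unwrap_degrees(yaw):
    """Remove 360-degree jumps so that a turn across 0/360 stays continuous."""
    if not yaw:
        return []
    out = [float(yaw[0])]
    for prev, cur in zip(yaw, yaw[1:]):
        delta = (cur - prev + 180.0) % 360.0 - 180.0  # shortest signed step
        out.append(out[-1] + delta)
    return out


def mean_moving_variance(yaw, sample_rate_hz, window_s=1.0):
    """Average the variance over windows of `window_s` seconds, slid by one sample."""
    window = max(2, int(round(sample_rate_hz * window_s)))
    series = unwrap_degrees(yaw)
    if len(series) < window:
        raise ValueError("signal is shorter than one window")
    variances = [
        pvariance(series[i:i + window]) for i in range(len(series) - window + 1)
    ]
    return fmean(variances)
```

A participant who keeps the head still yields a value near zero, whereas frequent turning raises the average, so the measure serves as a rough proxy for head-turning frequency.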

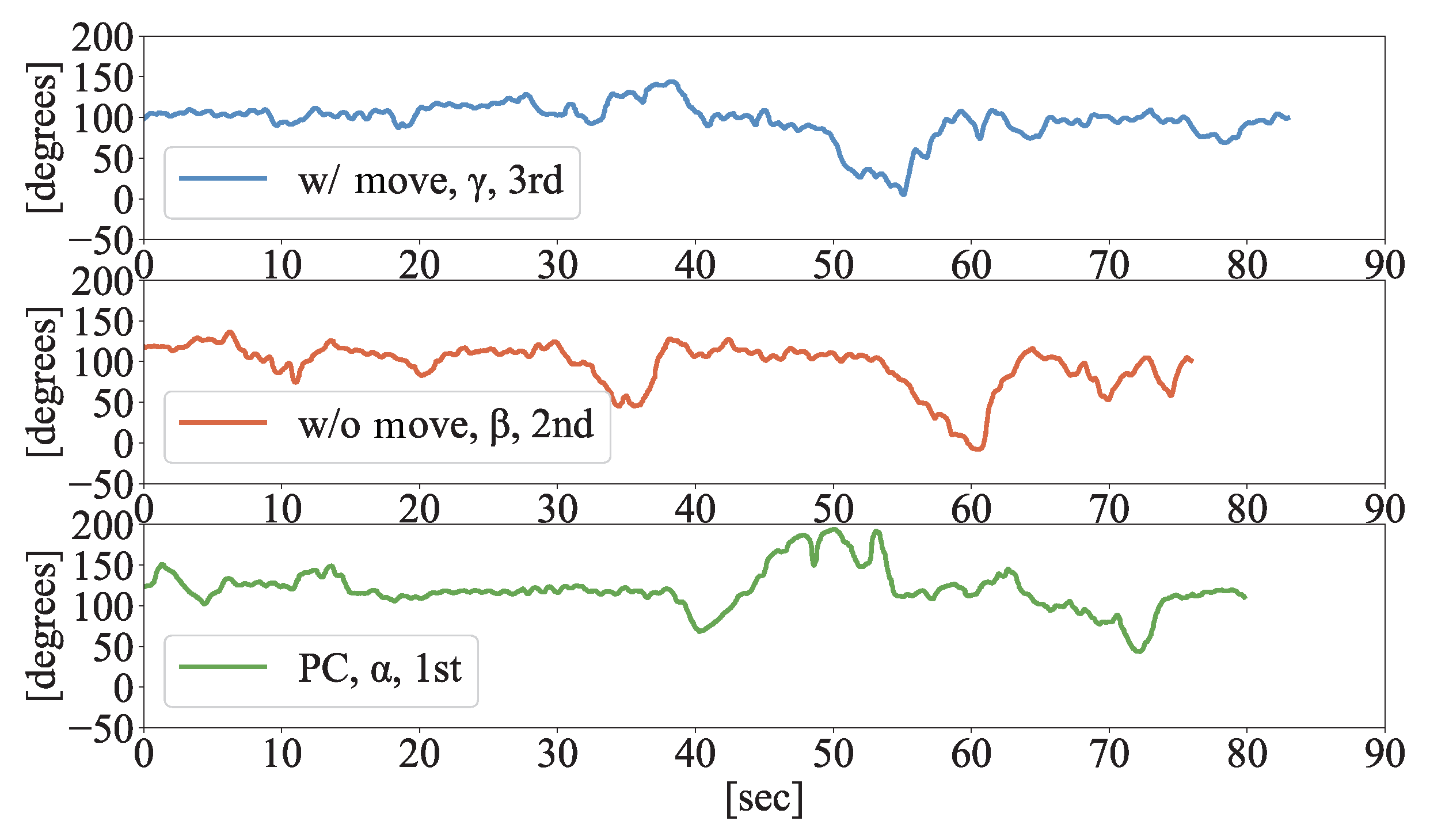

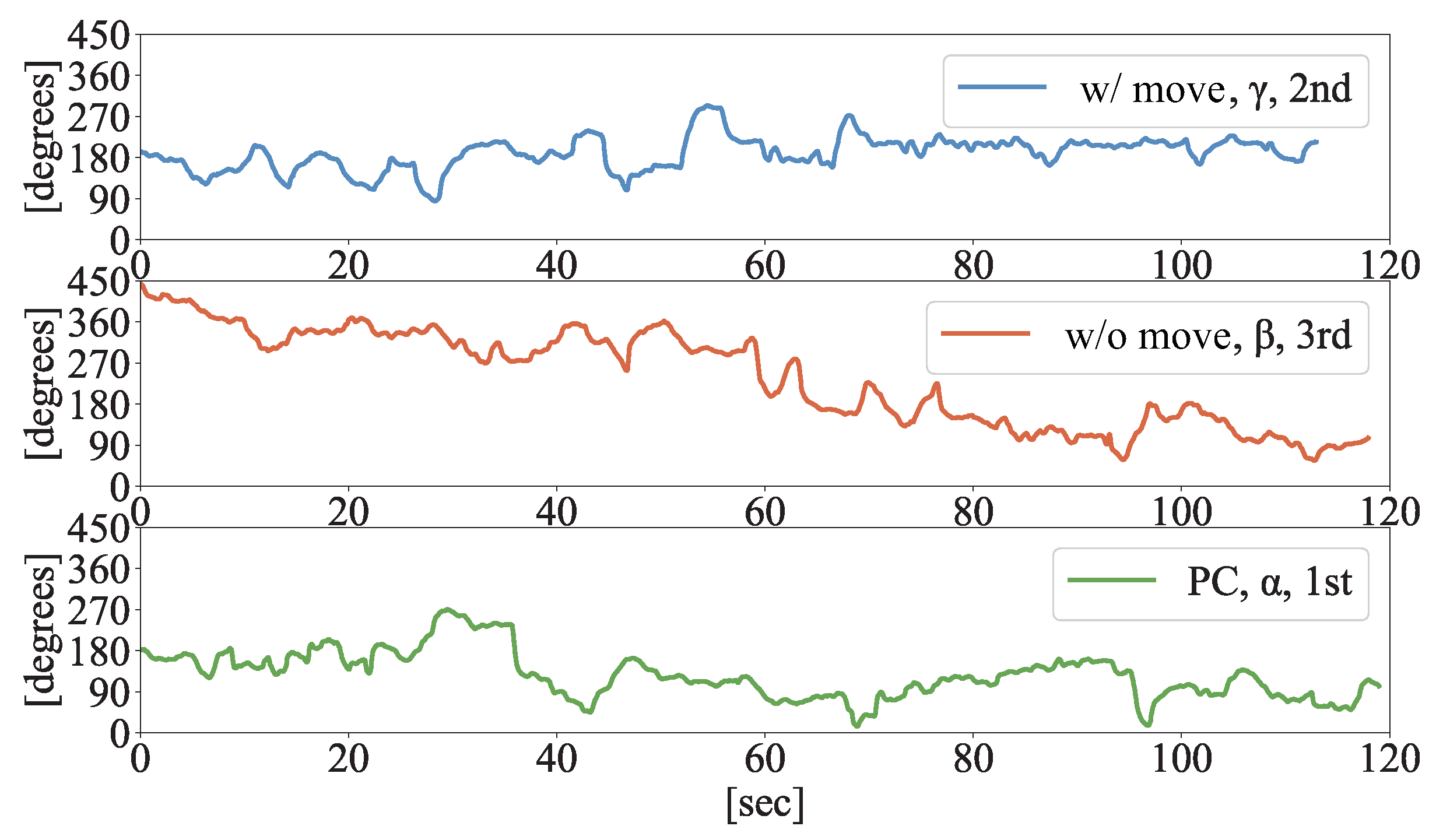

Figure 6 and Figure 7 show the raw data from two participants (P-A and P-B) walking each leg; 1st, 2nd, and 3rd denote the order in which they walked the legs. Figure 8 shows the average of the moving variance for ten participants.

As shown in Figure 6 and Figure 7, P-A did not turn his head frequently, whereas P-B turned his head very frequently. The error bars (standard deviation) in Figure 8 suggest that the head-turning frequency varied between individuals. Although we investigated whether the participants turned their heads at the same places and times as when they were watching the video, we could not confirm this.

We conducted an analysis of variance (ANOVA) on the moving variance and found a significant main effect. According to multiple comparisons based on the least significant difference, the results of w/ move and w/o move were smaller than those of PC. That is, when walking the leg they had watched in the w/ move mode, the participants turned their heads less frequently than on the leg watched on the PC, and with a frequency similar to that on the leg watched in the w/o move mode. There appear to be some differences between the legs watched via the VR-HMD and those watched on the PC. We need to examine these differences in the future.

5. Experiment in Zoo

5.1. Experimental Method

The video of the shopping district experiment was taken using an ordinary video camera; however, 360-degree videos are more immersive. In this experiment, we investigated the differences in effects when using a 360-degree video.

The same methods, equipment, and flow as in the shopping district experiment were used in a zoo.

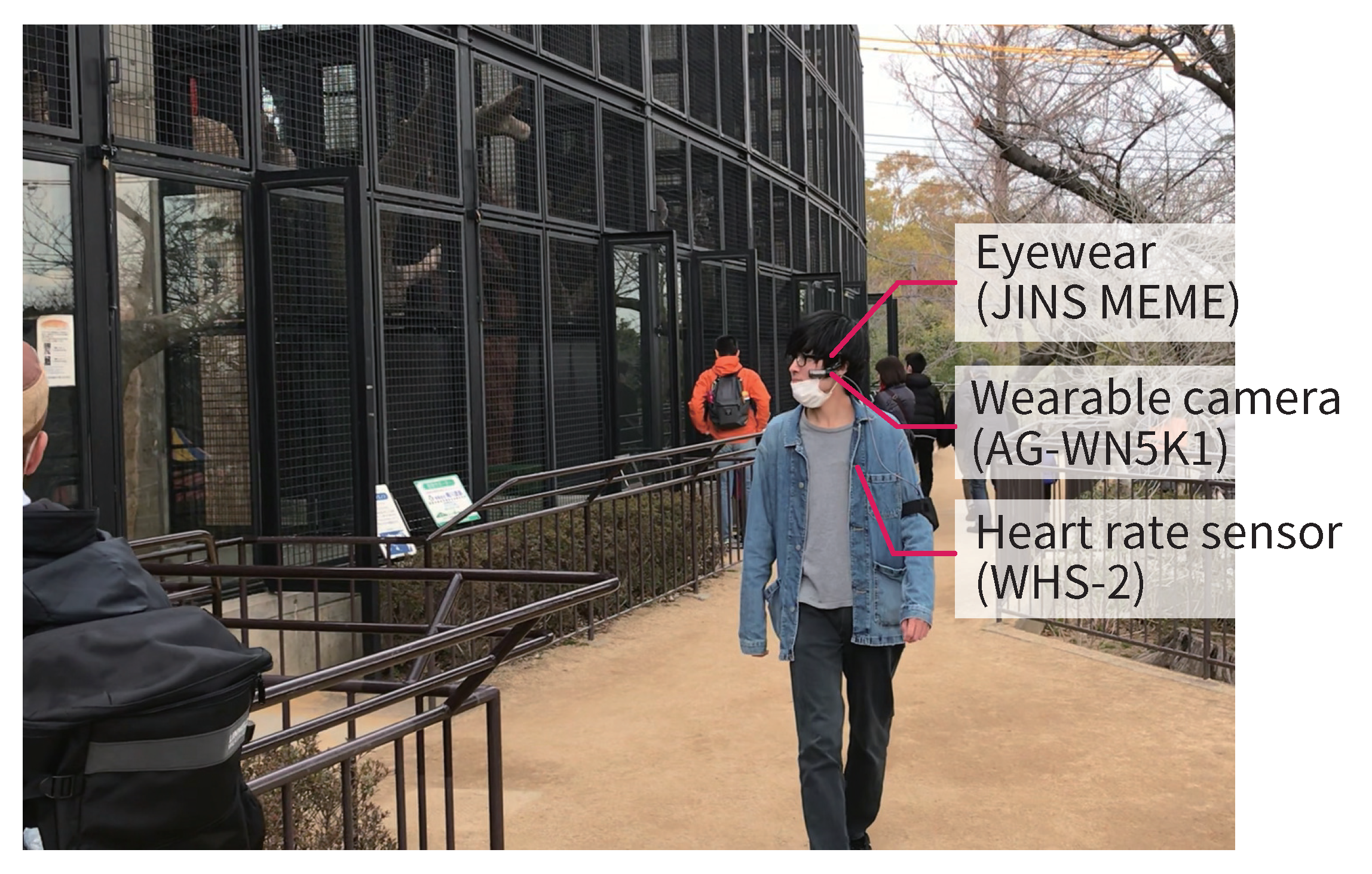

Figure 9 shows the appearance of a participant watching the video. The differences in methods, equipment, and flow are described below.

Instead of the ordinary video camera, we used an Insta360 ONE X, which can record 360-degree videos. The experimenter took the video while walking through a zoo, fixing the camera position at his eye level (height: 172 cm). He took three videos, one on each of three legs, whose distances were 134.3 m, 124.2 m, and 117.7 m, respectively. The duration of each video was 2 min. Because each leg had some slopes and curves, the distances differed even though the walking time was the same. When the videos were shown in the w/o move and PC modes, the 360-degree videos automatically turned to the left and right at specified timings. In the w/ move mode, the experimenter turned the participant’s head at the same timings. There were eight turns for each leg. Each participant watched each video under one of the three viewing modes in a random order. When walking in the zoo, the participants walked the legs in a random order, different from the order of the videos they had watched. When walking between legs, the participants did not pass through the other specified legs.

When watching the videos, the participants answered a questionnaire after each video. They answered the following questions on a seven-point Likert scale: “Did you feel that you actually walked at the zoo?” and “Did you experience motion sickness?” Furthermore, they answered some descriptive questions, such as “Describe freely (e.g., things that you remember and things that you think).”

Similarly, after finishing each leg when walking through the zoo, the participants answered the following question on a seven-point Likert scale: “Did you feel that you had been to the zoo before?” In addition, they answered three descriptive questions: “Where did you feel that you had been? (You can describe multiple places freely),” “Where were you impressed? (You can describe multiple places freely),” and “Describe freely.”

When walking, the participants wore a wearable camera, eyewear, and a heart rate sensor (UNION TOOL CO.: WHS-2). We obtained data indicating the stress level of the participants while walking.

The participants were 12 men aged 21–24 years. Among them, five had participated in the experiment in the shopping district. None of them had ever walked through the zoo. The video of each leg was watched four times under each of the three modes. We could not obtain the eyewear data of two participants and the heart rate sensor data of one participant.

Figure 10 shows the appearance of the participant walking in the zoo.

5.2. Experimental Results

When walking through the zoo, the experimenter led the participants to the start point of each leg, and the participants walked the specified leg alone. The 12 participants walked 36 legs in total and took the wrong route 11 times. When they took the wrong route, the experimenter, who walked behind them, told them the right route. We excluded from the analysis the sensor data from the zones where the participants took the wrong route. Five participants walked all the legs correctly. Four participants took the wrong route on one leg, two on two legs, and one on all three legs. The wrong routes occurred five, four, and two times on the legs the participants had watched in the w/ move, w/o move, and PC modes, respectively.
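The exclusion of sensor data from wrong-route zones could be implemented along the following lines. This is a hedged sketch: the study does not describe how the zones were marked, so representing them as time intervals (for instance, read off the wearable camera footage) is an assumption, as are the function name and the sample format.

```python
def drop_wrong_route_samples(samples, wrong_route_intervals):
    """Keep only (time_s, value) samples recorded outside every wrong-route interval."""
    def in_wrong_zone(t):
        return any(start <= t <= end for start, end in wrong_route_intervals)

    return [(t, v) for t, v in samples if not in_wrong_zone(t)]


if __name__ == "__main__":
    # Hypothetical yaw samples (time in seconds, yaw in degrees).
    samples = [(0.0, 10.0), (1.0, 12.0), (2.0, 40.0), (3.0, 11.0)]
    # Hypothetical zone in which the participant left the specified route.
    print(drop_wrong_route_samples(samples, [(1.0, 2.0)]))
```

Because removing samples leaves gaps in the time series, any windowed statistic would need to be computed separately on each remaining contiguous segment.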

5.2.1. Questionnaire Results

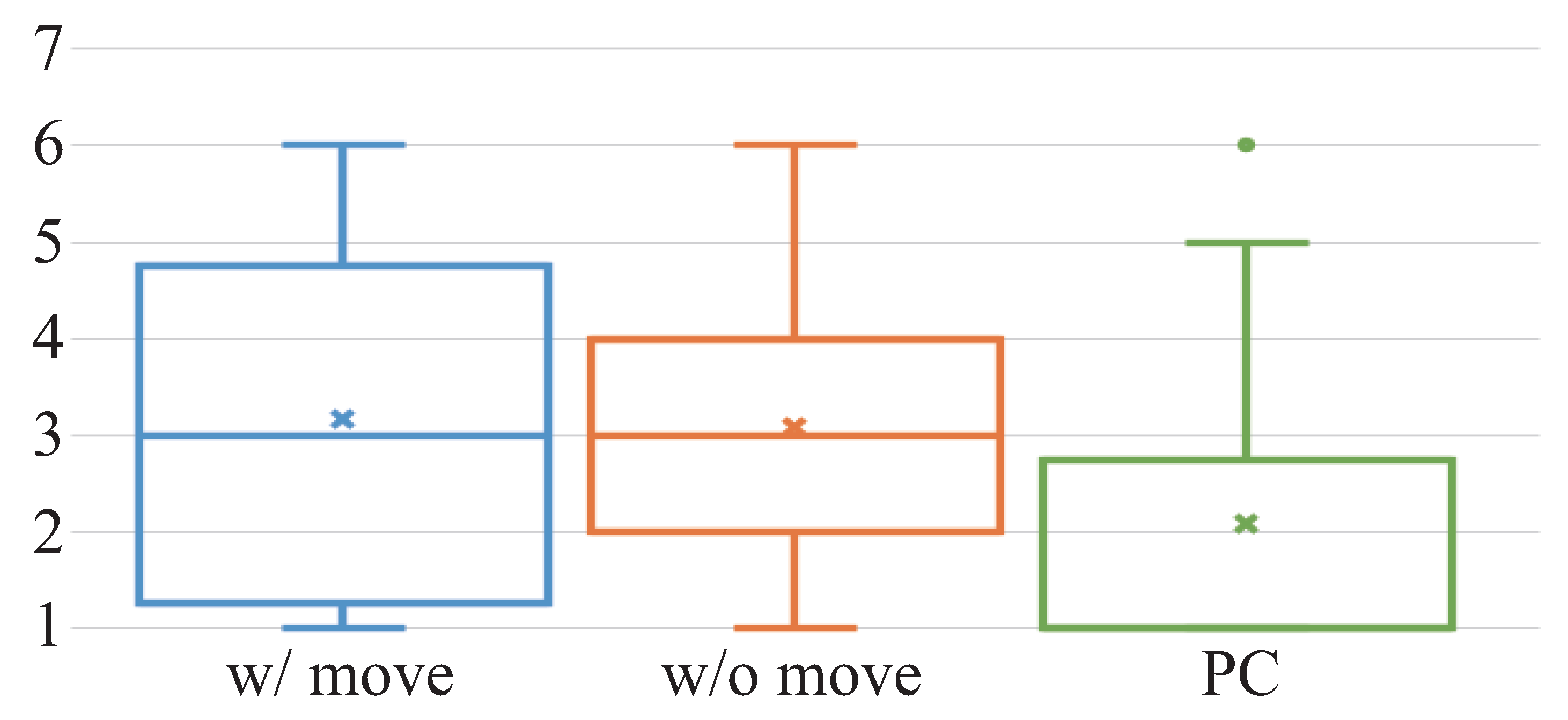

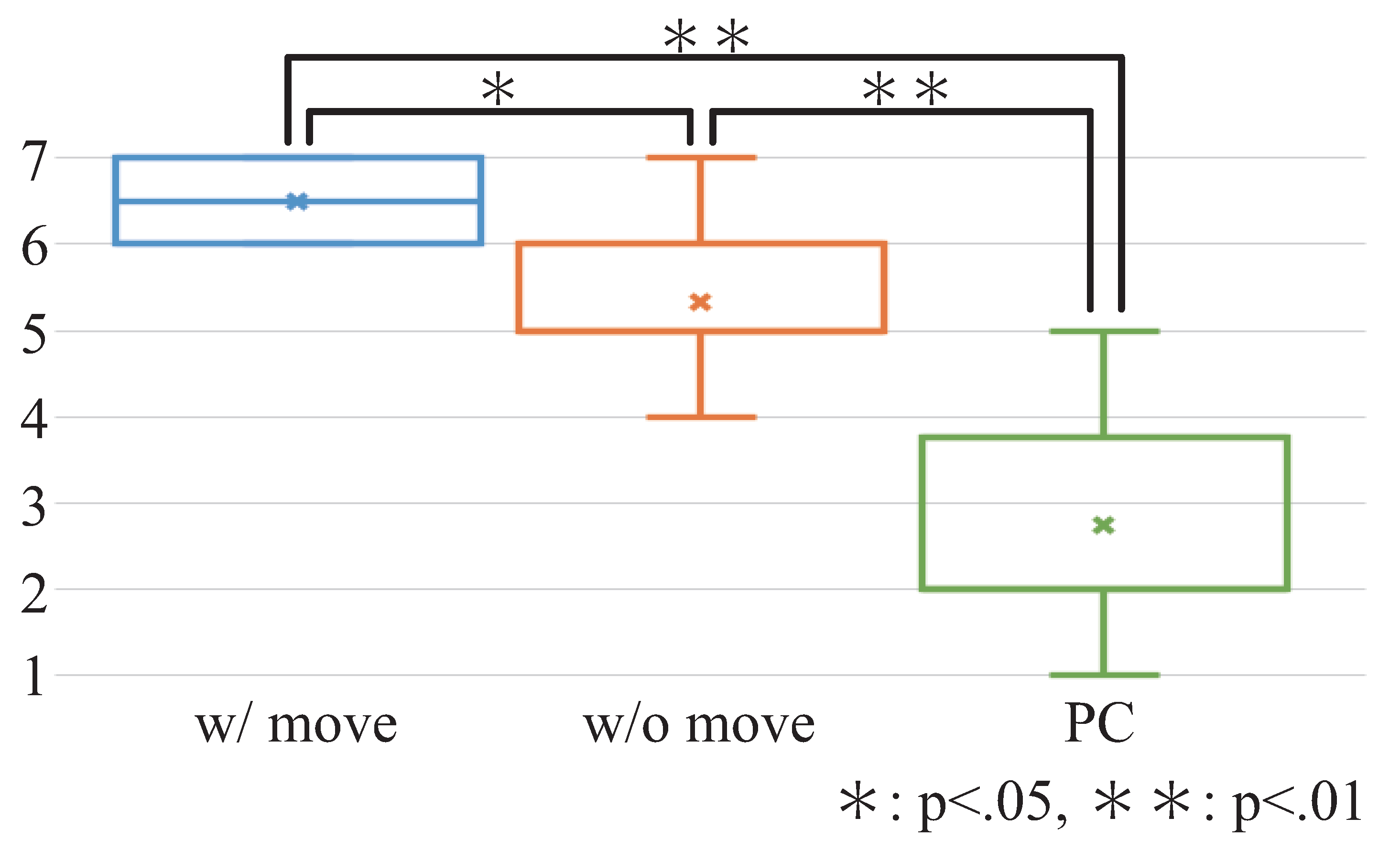

Figure 11 shows the results of the question (Q1) posed to the participants after watching the video: “Did you feel that you actually walked through the zoo?” Figure 12 shows the results for the question (Q2) asked after walking in the zoo: “Did you feel that you had been to the zoo before?” The Friedman test showed a significant difference for Q1 (chi-squared = 22.8, df = 2). Furthermore, the Wilcoxon rank sum test with Bonferroni correction showed that the score of w/ move was greater than those of PC and w/o move, and that the score of w/o move was greater than that of PC. There was no significant difference for Q2 (chi-squared = 0.57, df = 2).

Figure 13 shows the results for the question (Q3) asked after watching the video: “Did you experience motion sickness?” The Friedman test showed a significant difference (chi-squared = 17.9, df = 2). The Wilcoxon rank sum test with Bonferroni correction showed that the score of w/o move was greater than those of w/ move and PC. We aimed to prevent motion sickness by turning the head. Unlike in the shopping district experiment, the score of w/ move was smaller than that of w/o move when the 360-degree video was used.

Regarding turning their heads while watching the videos, the participants gave the following descriptions: “I felt that I actually went there,” and “The feeling of presence that I was there improved.” There were also some negative descriptions: “I felt strange being made to turn my head,” and “It was difficult to see distant things.” Three participants who had the same height as the experimenter said they felt that the eye level was higher than their own. These opinions did not lead to lower Q2 results. After walking, some of the participants stated, “I felt that I had been there before.” The participants expressed such opinions for all the modes.

5.2.2. Sensor Data Results

We did not conduct ANOVA because some values were missing as a result of some participants taking the wrong routes.

We calculated the average of the moving variance of the yaw-axis data, as in the shopping district experiment.
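The average of the moving variance can be sketched as follows. This is only an illustration of the general technique: the window length, the use of the population variance, and the sample yaw traces are all assumptions, because the text does not give the sampling rate or the window used. The sketch also assumes that the yaw angles are already unwrapped, so that no jump occurs at ±180 degrees.

```python
from statistics import fmean, pvariance


def moving_variance(samples, window):
    """Population variance of every consecutive slice of `window` samples."""
    if window < 2 or window > len(samples):
        raise ValueError("window must be between 2 and len(samples)")
    return [
        pvariance(samples[i:i + window])
        for i in range(len(samples) - window + 1)
    ]


def mean_moving_variance(samples, window):
    """Single score per trace: the mean of its moving variance."""
    return fmean(moving_variance(samples, window))


# Hypothetical yaw traces in degrees (not data from the experiment).
steady = [0.0, 1.0, 0.0, -1.0, 0.0, 1.0, 0.0, -1.0]
turning = [0.0, 30.0, -30.0, 40.0, -40.0, 30.0, -30.0, 0.0]

WINDOW = 4  # assumed window length in samples

steady_score = mean_moving_variance(steady, WINDOW)
turning_score = mean_moving_variance(turning, WINDOW)

print(f"steady head:  {steady_score:.2f}")
print(f"turning head: {turning_score:.2f}")
```

A trace with frequent head turning yields a larger score than a nearly stationary one, which is why the score can serve as a rough index of how often a participant looked around.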

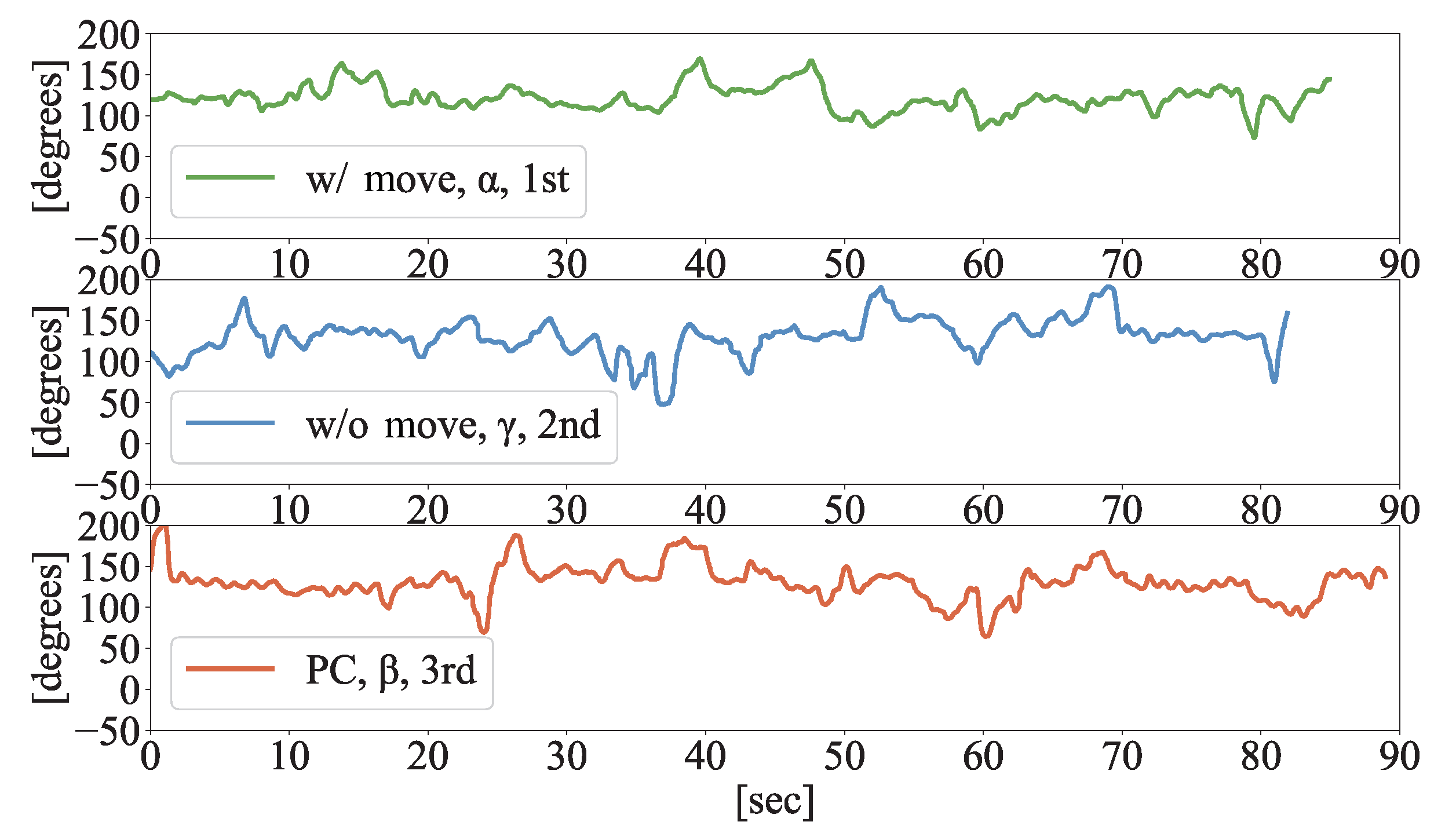

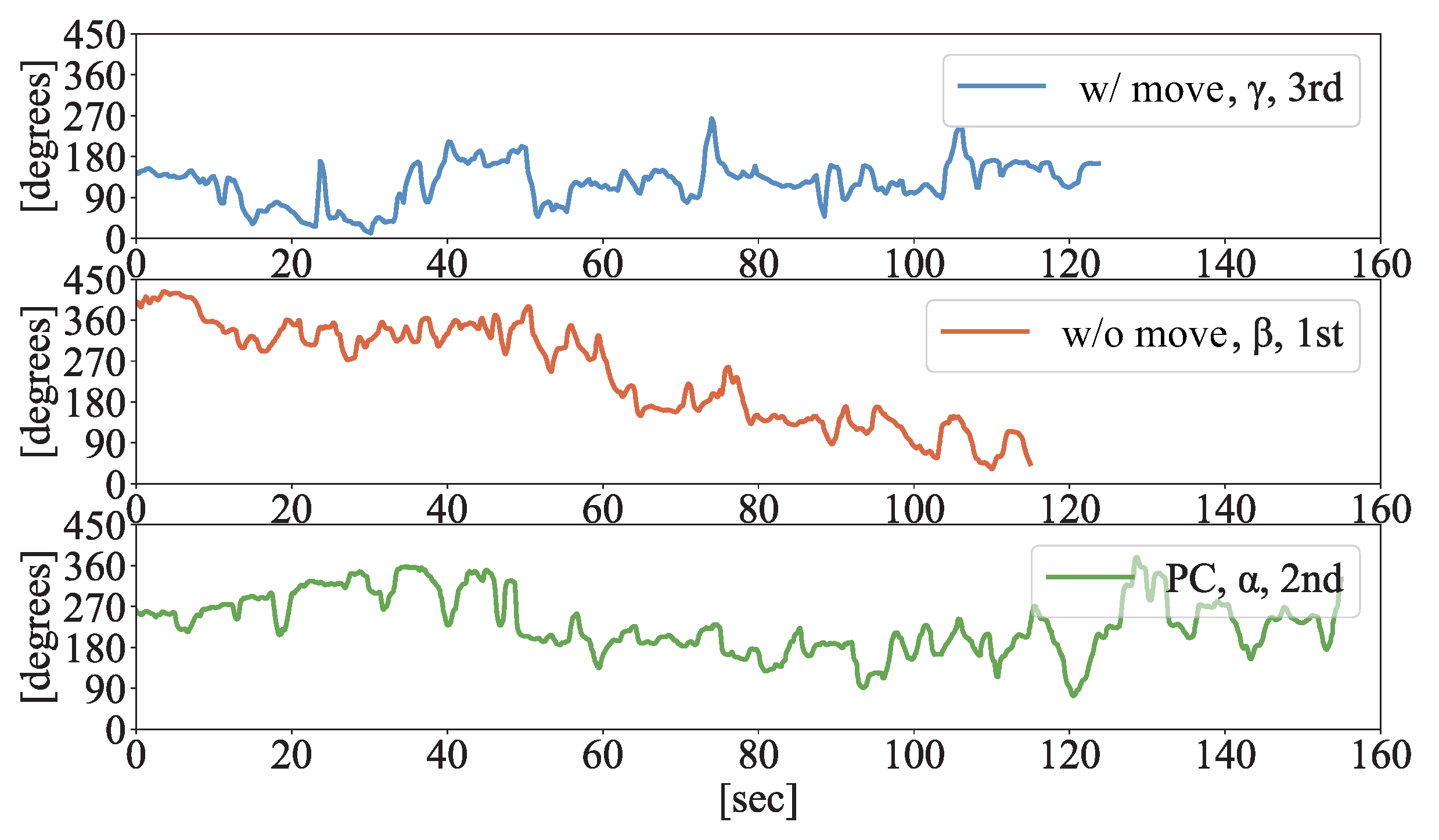

Figure 14 and Figure 15 show the raw data from two participants (P-C and P-D) when they walked each leg.

Figure 16 shows the average of the moving variance for ten participants. Unlike the results of the shopping district case, the result of w/ move was higher than that of PC.

As shown in Figure 14 and Figure 15, P-C did not turn his head frequently, whereas P-D turned his head very frequently. There were few other visitors in the zoo when P-C walked there, but there were many visitors when P-D walked. It may be conjectured that P-D turned his head very frequently to verify the safety of the surroundings. As shown in Figure 16, the averages for all the watching modes have large standard deviations. Because the surrounding situations were not constant for all the participants, the action of turning their heads in the zoo was not controlled. Therefore, we could not obtain sufficiently controlled results in this experiment.

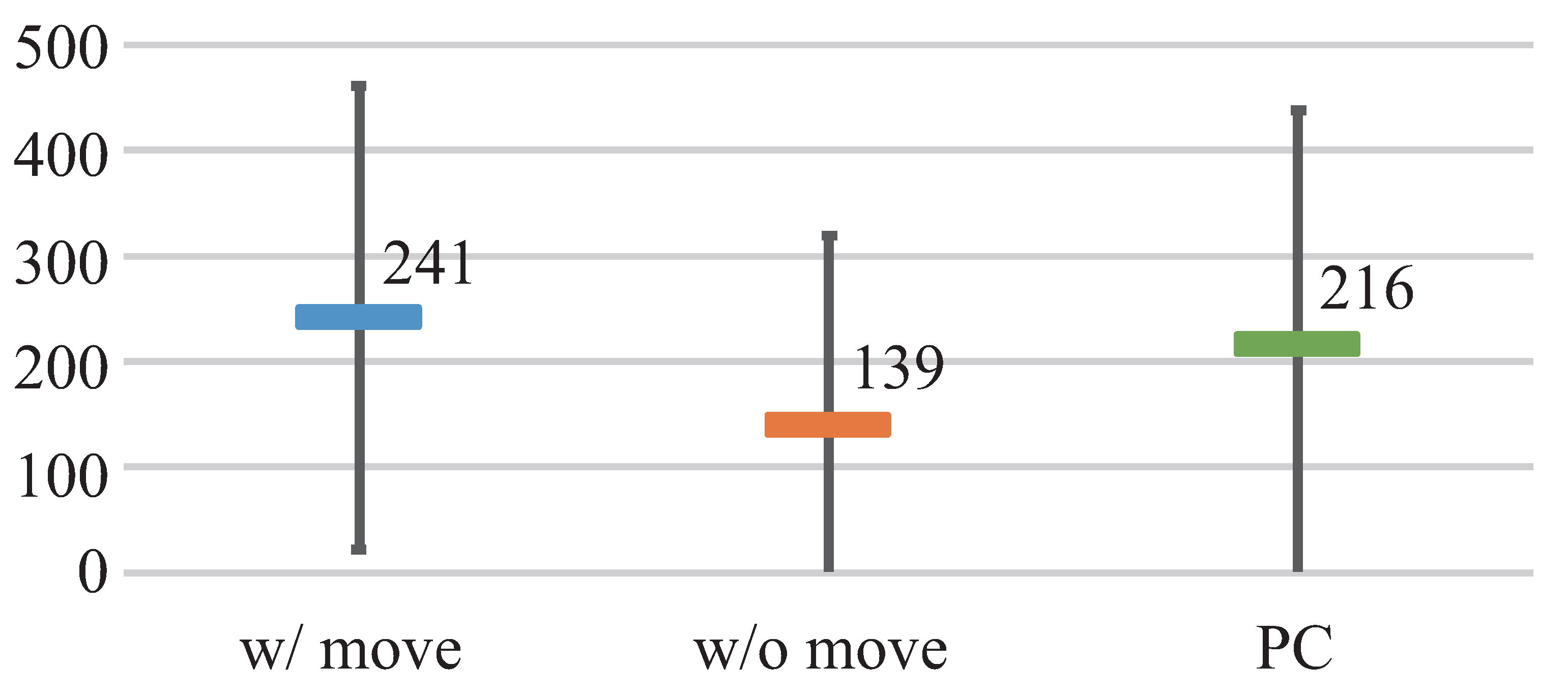

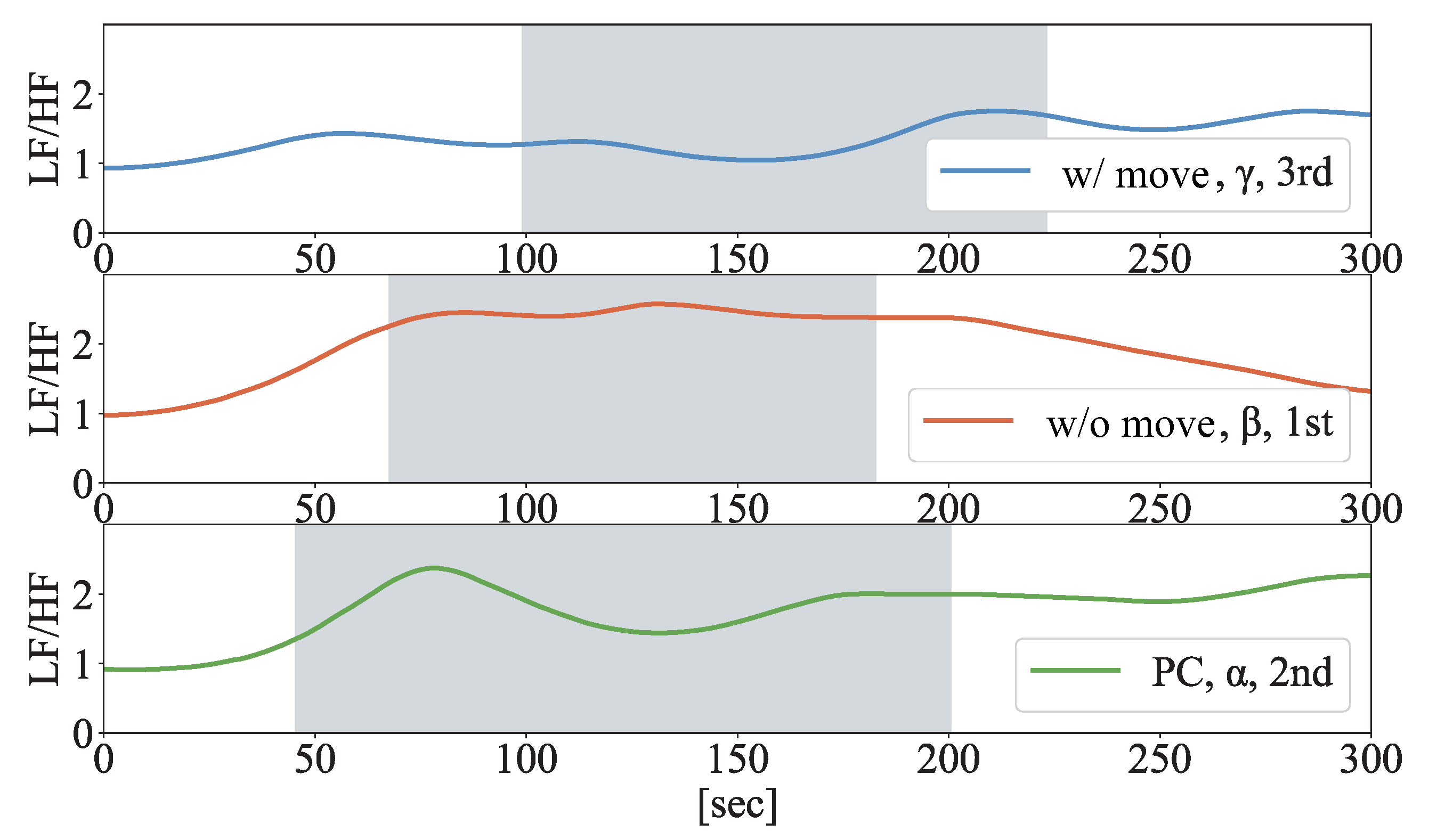

We calculated the LF/HF power from the heart rate sensor data. We investigated whether there was a difference in the stress level and the excitement in the part of the video that the participants watched while turning their heads. The value of the LF/HF power indicates the balance between the sympathetic and parasympathetic nerves; a high value indicates that the person is stressed or excited. First, we calculated the LF/HF values for each part. To examine the changes in the values, we selected the maximum value obtained during each part and the value obtained at the start point. We calculated the difference between the maximum and the start-point values and used the average of these differences in the analysis.
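The summary step described above (maximum value minus start-point value for each part, then the average) can be sketched as follows. The LF/HF series are assumed to be already computed; the function names and all the numbers are hypothetical and serve only to illustrate the arithmetic.

```python
from statistics import fmean


def lf_hf_rise(series):
    """Maximum LF/HF value within a part minus the value at its start point."""
    if not series:
        raise ValueError("empty LF/HF series")
    return max(series) - series[0]


def mean_rise(parts):
    """Average of the max-minus-start differences over several parts."""
    return fmean(lf_hf_rise(part) for part in parts)


# Hypothetical LF/HF series, one list per part, grouped by watching mode.
# These values are invented for illustration and are not experimental data.
parts_by_mode = {
    "w/ move": [[1.0, 2.5, 1.8], [1.2, 1.9, 3.2]],
    "w/o move": [[1.1, 1.6, 1.4], [0.9, 1.5, 1.2]],
    "PC": [[1.0, 1.2, 1.1], [1.3, 1.3, 1.0]],
}

summary = {mode: mean_rise(parts) for mode, parts in parts_by_mode.items()}

for mode, value in summary.items():
    print(f"{mode}: mean rise in LF/HF = {value:.2f}")
```

Because the maximum is taken over a series that includes the start point, each difference is never negative; a part in which LF/HF only falls contributes zero.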

Figure 17 and Figure 18 show the LF/HF data for two participants when they walked each leg. The parts shaded in gray indicate the zones where the participant walked the specified legs.

Figure 19 shows the average for 11 participants. In this experiment, w/ move yielded the greatest value. However, the variances were large, and there were some differences depending on the participants. We could not obtain sufficient data for a detailed analysis because the experimental environment was not suitable for obtaining the heart rate data in a resting state. In addition, we did not prepare any questionnaire items to determine the stress or excitement level. In future experiments, we need to ask the participants about their feelings, including stress, excitement, and embarrassment.

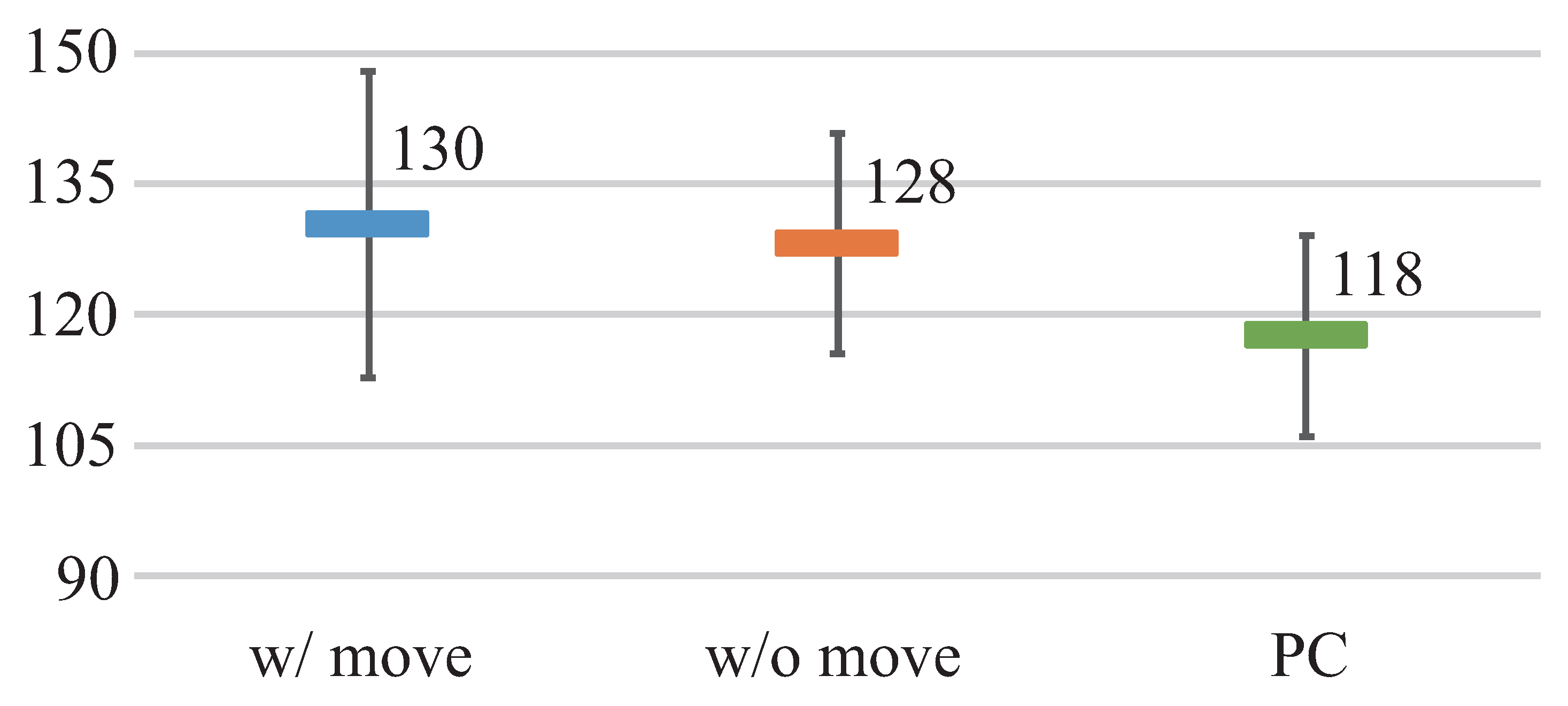

Figure 20 shows the average walking time for 11 participants. Because one participant walked relatively slowly (he spent more than 200 s walking each leg), we excluded his data. There appeared to be no difference among the results, although the participants walked at the slowest pace in the case of w/ move. In this experiment, each leg was a short course, and the experimenter could finish walking it in 120 s.

6. Discussion

As a VR tourism system, we proposed a method that elicited the feeling of presence when watching a video and the feeling of having been to a tourist site before visiting the site. The users watched the video taken at the tourist spots via the VR-HMD. For the evaluation, we conducted two experiments. In the first experiment, the participants walked through a shopping district after watching the videos taken using a video camera. In the second experiment, they walked through a zoo after watching 360-degree videos.

In the shopping district experiment, the feeling of presence was stronger when the participants watched the video while turning their heads than when they watched it on the PC display. However, regarding the feeling that they had been there before, there was no significant difference across the three watching modes. In the zoo experiment, the feeling of presence was stronger when the participants watched the video while turning their heads than when they watched it with their heads stationary or on the PC. However, as in the shopping district experiment, there was no significant difference across the three watching modes regarding the feeling that they had been there before. To strengthen the feeling of having been there, we need to examine other methods. In the shopping district case, there was no difference between the results of w/ move and w/o move: the difference in the median value of presence was a mere 0.5, and the median values of w/ move and w/o move were the same for the feeling of having been there and for motion sickness. In the zoo experiment, the feeling of presence was low under the w/o move condition. The reason for this is that it is unnatural to experience the rotation of the image in a 360-degree video without moving the face. The results for w/ move were very high. More opinions indicated that the 360-degree video elicited a greater feeling of presence than the video captured using the ordinary video camera. Hence, we will continue to consider the method using a 360-degree video.

We confirmed that there were some gaps between the feeling of presence and of having been there. The proposed method elicited the feelings of having been there (median values were five in both experiments). However, there was no difference in the results in the case of the PC display; that is, there was no difference between the proposed method and the conventional method in which the users watched the video of the tourist spots on a PC. A method that aims to give the experience of being in a tourist spot via VR tourism cannot be judged based on whether the users experienced the feeling of presence. We need to evaluate the method on the site.

By being compelled to turn their heads, the participants experienced the sense of being there while watching the videos. We can expect that the proposed method can improve the sense of presence when watching videos via the VR-HMD. We can also suppose that the user can experience more content via the 360-degree video. Even if the users cannot visit the tourist spot because of distance and physical challenges, guided head turning can enable them to experience the sense of presence while watching the video.

One participant commented that watching the zoo video while turning the head made him/her feel like going there. It is therefore possible that the proposed method can motivate users by raising their interest and willingness to act. We will examine whether the proposed method has this motivating effect.

We had assumed that the feeling of having been there would prevent the participants from going in the wrong direction. However, many participants took wrong turns. In the experimental videos, we changed the camera views randomly. To ensure that users do not lose their way, we need to investigate factors that influence how the content within the camera view is perceived, such as changing the playback speed of the video and adding effects, for instance, changing the prominence of a landmark. In the shopping district experiment, the participants felt like they had been there because of their familiarity with the chain restaurants that they had previously seen in the videos. We need to investigate the influence of providing such familiar views.

If the users experience the feeling of having been there, it could be supposed that they could estimate how long they would need to walk through the site. However, because we used short legs (approximately 120 m) in the experiments, we did not test this assumption experimentally. We will investigate it in the future.

If a tourist takes a video while recording head motion data at a tourist spot, he/she can share how he/she has enjoyed and seen things in the multimodal condition afforded by the proposed method. Many tourists like to share their tourism experiences on social media, and it is known that sharing has positive effects for tourists [32,33]. It is possible that the proposed method can heighten the recipients’ enjoyment of the shared content. Additionally, watching videos taken by a tourist who is good at sightseeing while turning the head in sync with the tourist’s motion can be expected to enable the viewers to grasp tips on appropriate sightseeing behavior.

7. Conclusions

In this paper, we proposed a method wherein the viewers experienced guided head turning when watching videos taken at tourist spots via a VR-HMD. We aimed to convey the feelings of presence and of having been there. In the experiments, the participants watched the videos taken at a shopping district and a zoo in three modes: via the VR-HMD with and without head turning and via the PC display without head turning. We evaluated the method based on a questionnaire administered to the participants after watching the videos and after walking through the shopping district and the zoo. The proposed method conveyed the greatest sense of presence. However, there was no difference among the three modes in the results obtained after walking the sites.

In the future, we will consider other methods to convey the feeling of having been there, for instance, the use of sounds recorded binaurally. One participant who used the proposed method commented that he/she felt like going there. We will investigate whether the proposed method can motivate users to take an interest in the site.

Author Contributions

Conceptualization, N.I. and T.T.; methodology, N.I. and T.T.; software, N.I.; validation, N.I. and T.T.; formal analysis, N.I., T.T. and M.T.; investigation, N.I.; resources, N.I.; data curation, N.I.; writing—original draft preparation, N.I.; writing—review and editing, T.T.; visualization, N.I.; supervision, T.T. and M.T.; project administration, T.T. and M.T.; funding acquisition, N.I., T.T., and M.T. All authors have read and agreed to the published version of the manuscript.

Funding

This research was supported in part by JSPS KAKENHI Grant No. JP19K20323 and CREST from the Japan Science and Technology Agency (Grant No. JPMJCR16E1, JPMJCR18A3).

Conflicts of Interest

The authors declare no conflict of interest.

References

- UNWTO. International Tourism Highlights, 2019 Edition. In UNWTO Annual Report 2019; UNWTO: Madrid, Spain, 2019.

- Guttentag, D.A. Virtual Reality: Applications and Implications for Tourism. Tour. Manag. 2010, 31, 637–651.

- Huang, Y.C.; Backman, K.F.; Backman, S.J.; Chang, L.L. Exploring the Implications of Virtual Reality Technology in Tourism Marketing: An Integrated Research Framework. Int. J. Tour. Res. 2016, 18, 116–128.

- Han, D.I.D.; Weber, J.; Bastiaansen, M.; Mitas, O.; Lub, X. Virtual and augmented reality technologies to enhance the visitor experience in cultural tourism. In Augmented Reality and Virtual Reality; Springer: Berlin/Heidelberg, Germany, 2019; pp. 113–128.

- Castro, J.C.; Quisimalin, M.; Córdova, V.H.; Quevedo, W.X.; Gallardo, C.; Santana, J.; Andaluz, V.H. Virtual Reality on e-Tourism. In IT Convergence and Security 2017; Springer: Berlin/Heidelberg, Germany, 2018; pp. 86–97.

- Felnhofer, A.; Kothgassner, O.D.; Schmidt, M.; Heinzle, A.K.; Beutl, L.; Hlavacs, H.; Kryspin-Exner, I. Is Virtual Reality Emotionally Arousing? Investigating Five Emotion Inducing Virtual Park Scenarios. Int. J. Hum. Comput. Stud. 2015, 82, 48–56.

- Tussyadiah, I.P.; Wang, D.; Jung, T.H.; tom Dieck, M.C. Virtual Reality, Presence, and Attitude Change: Empirical Evidence from Tourism. Tour. Manag. 2018, 66, 140–154.

- Kim, M.J.; Lee, C.K.; Jung, T. Exploring Consumer Behavior in Virtual Reality Tourism Using an Extended Stimulus-Organism-Response Model. J. Travel Res. 2018, 59, 69–89.

- Oishi, E.; Koge, M.; Nakamura, T.; Kajimoto, H. HapPull: Enhancement of Self-motion by Pulling Clothes. In Proceedings of the International Conference on Advances in Computer Entertainment, London, UK, 14–16 December 2017; Springer: Berlin/Heidelberg, Germany, 2017; pp. 261–271.

- Cardin, S.; Thalmann, D.; Vexo, F. Head Mounted Wind. In Proceedings of the 20th Annual Conference on Computer Animation and Social Agents (CASA2007), San Diego, CA, USA, 5–9 August 2007; pp. 101–108.

- Jung, T.; tom Dieck, M.C.; Moorhouse, N.; Tom Dieck, D. Tourists’ Experience of Virtual Reality Applications. In Proceedings of the 2017 IEEE International Conference on Consumer Electronics (ICCE), Las Vegas, NV, USA, 8–10 January 2017; pp. 208–210.

- Gao, T.; Liang, H.; Chen, Y.; Qiu, L. Comparisons of Landscape Preferences through Three Different Perceptual Approaches. Int. J. Environ. Res. Public Health 2019, 16, 4574.

- Pantano, E.; Corvello, V. Tourists’ Acceptance of Advanced Technology-based Innovations for Promoting Arts and Culture. Int. J. Technol. Manag. 2014, 64, 3–16.

- Tussyadiah, I.P.; Wang, D.; Jia, C.H. Virtual Reality and Attitudes Toward Tourism Destinations. In Information and Communication Technologies in Tourism 2017; Springer: Berlin/Heidelberg, Germany, 2017; pp. 229–239.

- Bogicevic, V.; Seo, S.; Kandampully, J.A.; Liu, S.Q.; Rudd, N.A. Virtual reality presence as a preamble of tourism experience: The role of mental imagery. Tour. Manag. 2019, 74, 55–64.

- Wei, W.; Qi, R.; Zhang, L. Effects of virtual reality on theme park visitors’ experience and behaviors: A presence perspective. Tour. Manag. 2019, 71, 282–293.

- Lemmens, P.; Crompvoets, F.; Brokken, D.; Van Den Eerenbeemd, J.; de Vries, G.J. A Body-Conforming Tactile Jacket to Enrich Movie Viewing. In Proceedings of the World Haptics 2009-Third Joint EuroHaptics Conference and Symposium on Haptic Interfaces for Virtual Environment and Teleoperator Systems, Salt Lake City, UT, USA, 18–20 March 2009; pp. 7–12.

- Konishi, Y.; Hanamitsu, N.; Outram, B.; Minamizawa, K.; Mizuguchi, T.; Sato, A. Synesthesia Suit: The Full Body Immersive Experience. In Proceedings of the ACM SIGGRAPH 2016 VR Village, SIGGRAPH ’16; ACM: New York, NY, USA, 1 July 2016.

- Kulkarni, S.; Fisher, C.; Pardyjak, E.; Minor, M.; Hollerbach, J. Wind Display Device for Locomotion Interface in a Virtual Environment. In Proceedings of the World Haptics 2009-Third Joint EuroHaptics Conference and Symposium on Haptic Interfaces for Virtual Environment and Teleoperator Systems, Salt Lake City, UT, USA, 18–20 March 2009; pp. 184–189.

- Seno, T.; Ogawa, M.; Ito, H.; Sunaga, S. Consistent Air Flow to the Face Facilitates Vection. Perception 2011, 40, 1237–1240.

- Ito, K.; Ban, Y.; Warisawa, S. AlteredWind: Manipulating Perceived Direction of the Wind by Cross-Modal Presentation of Visual, Audio and Wind Stimuli. In Proceedings of the SIGGRAPH Asia 2019 Emerging Technologies, Brisbane, QLD, Australia, 17–20 November 2019; pp. 3–4.

- Sasaki, T.; Liu, K.H.; Hasegawa, T.; Hiyama, A.; Inami, M. Virtual Super-Leaping: Immersive Extreme Jumping in VR. In Proceedings of the 10th Augmented Human International Conference, AH2019, Reims, France, 11–12 March 2019; ACM: New York, NY, USA, 2019; pp. 18:1–18:8.

- Sato, M. Development of String-based Force Display: SPIDAR. In Proceedings of the 8th International Conference on Virtual Systems and Multimedia. Available online: http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.5.2159&rep=rep1&type=pdf (accessed on 9 September 2020).

- Sato, M. Spidar and Virtual Reality. In Proceedings of the 5th Biannual World Automation Congress, Orlando, FL, USA, 9–13 June 2002; Volume 13, pp. 17–23.

- Kon, Y.; Nakamura, T.; Sakuragi, R.; Shionoiri, H.; Yem, V.; Kajimoto, H. HangerOVER: Development of HMD-Embedded Haptic Display Using the Hanger Reflex and VR Application. In Proceedings of the 2018 IEEE Conference on Virtual Reality and 3D User Interfaces (VR), Reutlingen, Germany, 18–22 March 2018; pp. 765–766.

- Gugenheimer, J.; Wolf, D.; Eiriksson, E.R.; Maes, P.; Rukzio, E. GyroVR: Simulating inertia in virtual reality using head worn flywheels. In Proceedings of the 29th Annual Symposium on User Interface Software and Technology, Tokyo, Japan, 16–19 October 2016; pp. 227–232.

- Zhang, Y.; Riecke, B.E.; Schiphorst, T.; Neustaedter, C. Perch to Fly: Embodied Virtual Reality Flying Locomotion with a Flexible Perching Stance. In Proceedings of the 2019 on Designing Interactive Systems Conference, DIS ’19, San Diego, CA, USA, 23–28 June 2019; ACM: New York, NY, USA, 2019; pp. 253–264.

- Hiyama, A.; Onimaru, H.; Miyashita, M.; Ebuchi, E.; Seki, M.; Hirose, M. Augmented Reality System for Measuring and Learning Tacit Artisan Skills. In Proceedings of the International Conference on Human Interface and the Management of Information, Las Vegas, NV, USA, 21–26 July 2013; Springer: Berlin/Heidelberg, Germany, 2013; pp. 85–91.

- Kasahara, S.; Nagai, S.; Rekimoto, J. JackIn Head: Immersive Visual Telepresence System with Omnidirectional Wearable Camera. IEEE Trans. Vis. Comput. Graph. 2016, 23, 1222–1234.

- Gugenheimer, J.; Wolf, D.; Haas, G.; Krebs, S.; Rukzio, E. SwiVRChair: A Motorized Swivel Chair to Nudge Users’ Orientation for 360-degree Storytelling in Virtual Reality. In Proceedings of the 2016 CHI Conference on Human Factors in Computing Systems, CHI ’16, San Jose, CA, USA, 7–12 May 2016; ACM: New York, NY, USA, 2016; pp. 1996–2000.

- Bindman, S.W.; Castaneda, L.M.; Scanlon, M.; Cechony, A. Am I a Bunny? The Impact of High and Low Immersion Platforms and Viewers’ Perceptions of Role on Presence, Narrative Engagement, and Empathy During an Animated 360 Video. In Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems, CHI ’18, Montreal, QC, Canada, 21–26 April 2018; ACM: New York, NY, USA, 2018; pp. 457:1–457:11.

- Wong, J.W.C.; Lai, I.K.W.; Tao, Z. Sharing Memorable Tourism Experiences on Mobile Social Media and How it Influences Further Travel Decisions. Curr. Issues Tour. 2019, 23, 1773–1787.

- Kim, J.; Fesenmaier, D.R. Sharing Tourism Experiences: The Posttrip Experience. J. Travel Res. 2017, 56, 28–40.

Figure 1. Image of a user watching a video while experiencing head movements corresponding to scene.

Figure 2. Appearance of participant watching video taken in the shopping district.

Figure 3. Results (“Did you feel that you actually walked through the shopping district?”).

Figure 4. Results (“Did you feel that you had actually been to the shopping district before?”).

Figure 5. Results (“Did you experience motion sickness?”).

Figure 6. Raw yaw-axis data (P-A).

Figure 7. Raw yaw-axis data (P-B).

Figure 8. Average of the moving variance of yaw-axis data (shopping district).

Figure 9. Appearance of the participant watching a video taken at the zoo.

Figure 10. Appearance of a participant walking in the zoo.

Figure 11. Results (“Did you feel that you actually walked at the zoo?”).

Figure 12. Results (“Did you feel that you had been to the zoo before?”).

Figure 13. Results (“Did you feel motion sickness?”).

Figure 14. Raw yaw-axis data (P-C).

Figure 15. Raw yaw-axis data (P-D).

Figure 16. Average of the moving variance of yaw-axis data (zoo).

Figure 17. LF/HF data (P-C).

Figure 18. LF/HF data (P-D).

Figure 19. Average of the difference between the maximum value and the start point data of LF/HF.

Figure 20. Average of the walking time (zoo).

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).