1. Introduction

Artificial intelligence (AI) has been widely used to optimize data-driven approaches in fields such as computer vision, speech recognition, robotics and medical applications [1, 2]. Deep neural networks (DNNs), also referred to as deep learning, are a part of the broad field of AI, and deliver state-of-the-art accuracy on many AI tasks [3, 4]. However, to complete tasks with higher accuracy, DNN models have become deeper, i.e., the number of layers ranges from five to more than a thousand. Training large-scale DNNs usually requires adjusting a large number of parameters, which is computationally complex. Moreover, the learning algorithms of DNNs require complex optimization techniques, such as stochastic gradient descent (SGD) and adaptive moment estimation (ADAM) [5]. These learning algorithms consume huge computing resources and can take several days depending on the size of the dataset and the number of layers in the network. In addition, the structure of the network, i.e., the number of neurons and number of layers, should be set before learning [6], and the optimum structure depends on applications and data characteristics. In other words, if a network is optimized for a specific application, it needs to be structurally changed and re-learned to use it in other applications. Therefore, DNNs cannot support real-time learning and are infeasible for embedded systems with various sensor applications because of their complexity and inflexibility.

In contrast, the restricted Coulomb energy neural network (RCE-NN) can actively modify the network structure because it generates a new neuron only when necessary. Therefore, it can support various sensor applications and has recently been implemented for various embedded systems [7, 8, 9, 10, 11]. The RCE-NN efficiently classifies feature distributions by constructing hyperspherical neurons with radii and hypersphere centers. Since the learning scheme of the RCE-NN is based on the distance between the input feature and the stored hypersphere center, it is relatively simple compared with the learning algorithms of DNNs, and real-time learning is possible. After the learning process is completed, a neuron is activated if the calculated distance is less than its radius, and the label of the neuron with the minimum distance among the activated neurons becomes the recognition result.

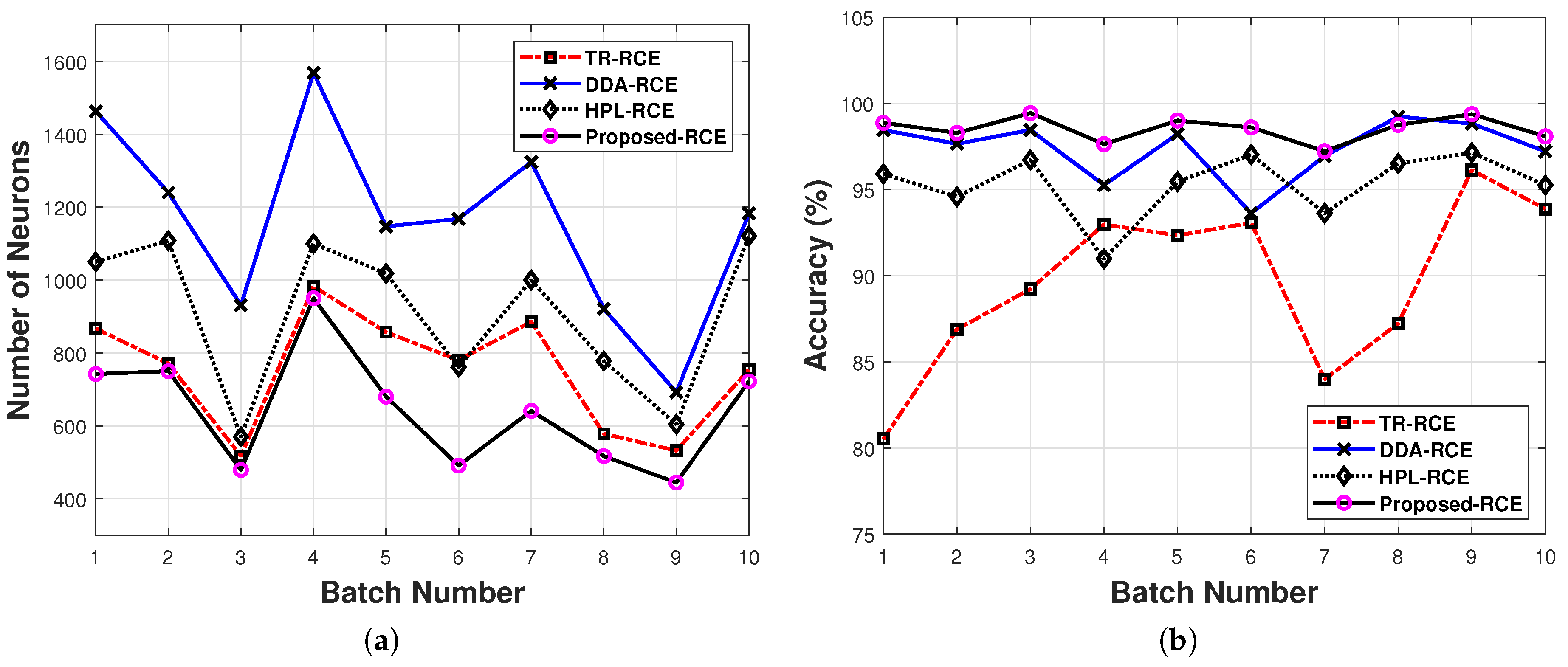

In the initial RCE-NN proposed in [12, 13], called traditional RCE (TR-RCE) in this paper, all neurons are learned with the same radius. This method causes confusion among some areas of the feature space, which degrades recognition accuracy. In [14], an RCE-NN with a dynamic decay adjustment (DDA) algorithm was proposed, which adjusts the radius of each neuron depending on the uncertainty of activated neurons in the learning process. This technique increases the recognition accuracy in areas of conflict. However, since the radius may be excessively reduced in the learning process, unnecessary neurons are generated, which increases the network complexity.

The RCE-NN proposed in [15] estimates the reliability of neurons by counting the number of activations for each neuron. In recognition, this activation count is applied as a weight to the output of each neuron to reflect the reliability of each neuron. Although this method improves recognition accuracy, an optimal hyperspherical classifier cannot be generated in the feature space, because all neurons are learned with the same radius, as in the TR-RCE learning method. To solve this problem, an RCE-NN with a hierarchical prototype learning (HPL) algorithm was proposed in [16, 17], which reduces the learning radius at each iteration of learning. The HPL also eliminates unnecessary learned neurons based on estimated reliability in the learning process. However, since the HPL-based RCE-NN estimates reliability without considering the region of the activated neurons, some unnecessary neurons remain. In addition, hyperspherical classifiers as varied as those of DDA-RCE cannot be generated, because neurons are learned with the same radius within a specific iteration period.

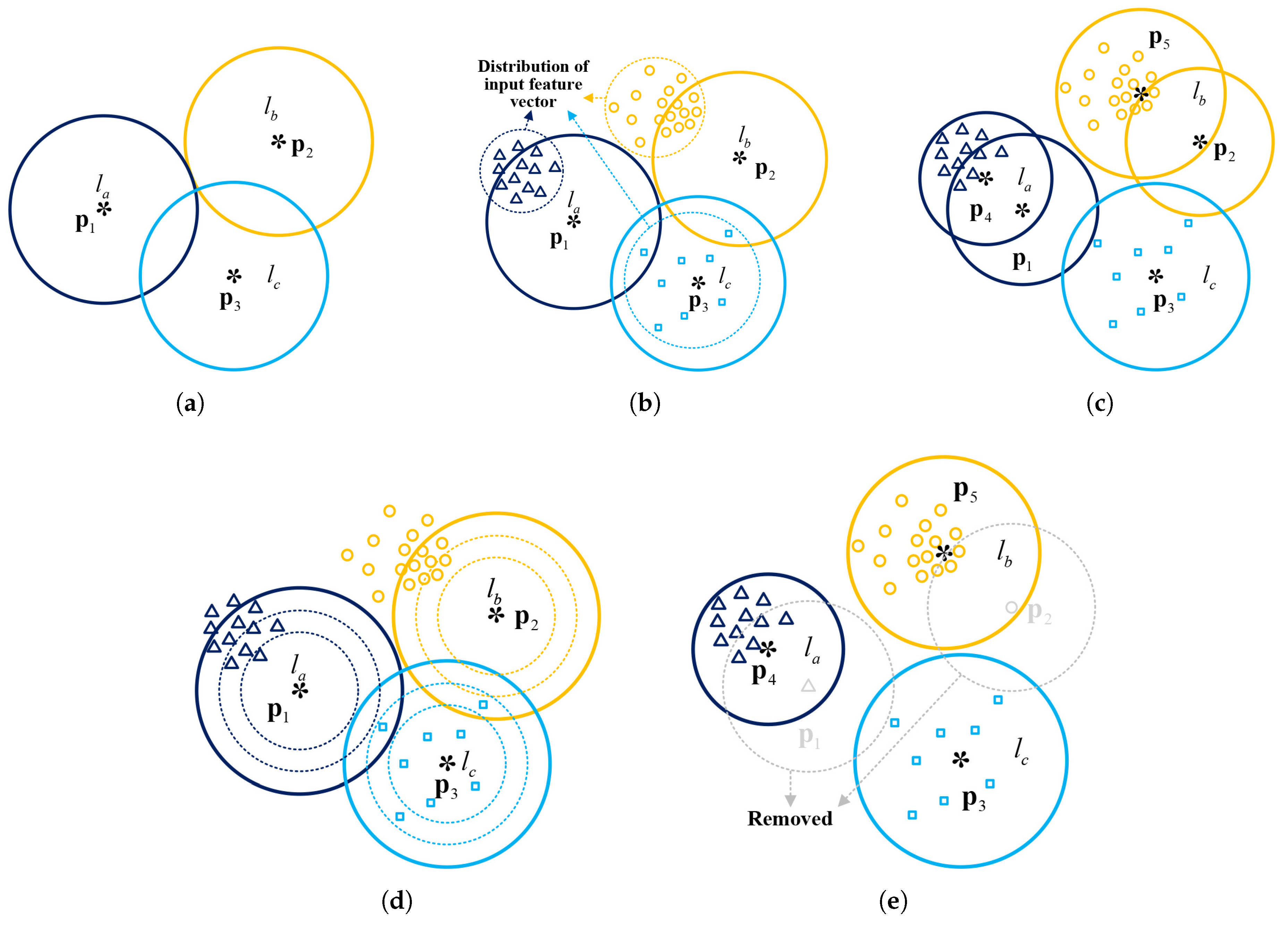

In this paper, an efficient learning algorithm for RCE-NN is proposed: (1) The reliability of each neuron is estimated by considering the activation region with different factors; (2) The radius is gradually reduced at a pre-defined reduction rate to prevent the generation of unnecessary neurons. The design and implementation results of the RCE-NN processor for real-time processing are also presented. The remainder of this paper is organized as follows: Section 2 briefly reviews the RCE-NN. Section 3 describes the proposed learning algorithm and its performance evaluation results. Section 4 describes the hardware architecture of the proposed RCE-NN processor. Section 5 discusses its implementation results. Finally, Section 6 concludes the paper.

2. Restricted Coulomb Energy Neural Network

The RCE-NN consists of an input layer, a prototype layer (hidden layer) and an output layer. The input layer comprises feature vectors and all feature vectors are connected to each neuron of the prototype layer. The prototype layer is the most essential part of the RCE-NN. Neurons in this layer save hypersphere centers and radii, which construct hyperspherical classifiers in the feature space. The output layer uses the neuron’s response to output the label value of the neuron that best matches the input feature vector.

Each neuron $p_j$ in the prototype layer contains the information as follows:

$$p_j = \{\mathbf{c}_j, r_j, l_j\}, \quad j = 1, 2, \ldots, m \tag{1}$$

where $j$ is the neuron index and the total number $m$ varies according to the learning results. That is, if $m$ neurons are learned after learning is completed, they are defined as a set of neurons $P = \{p_1, p_2, \ldots, p_m\}$. Each neuron $p_j$ contains a hypersphere center $\mathbf{c}_j = [c_{j1}, c_{j2}, \ldots, c_{jn}]$, radius $r_j$, and learned label $l_j$, where $n$ is the number of features in the input feature vector used in the learning.

If the number of input feature vectors during the learning process is

, the input feature vector set can be represented by

, where the feature vector

consists of

features and a label

. The feature vector

is entered to each neuron and the distance between the feature vector

and the hypersphere center

is computed as follows:

Then, neuron

is activated only if

. If no neurons are activated for the feature vector

, a new neuron

with a hypersphere center

, label

, and radius

R is generated, where

and

are the feature values and label of the feature vector

, respectively, and

R is the pre-defined global radius. In addition, the total number of neurons

is increased by one. Since the TR-RCE learns with one global radius

R for all data, confusion occurs in some areas of the feature space. Consider two neurons

and

learned by the two feature vectors

and

with labels

and

in the 2D feature space, as shown in

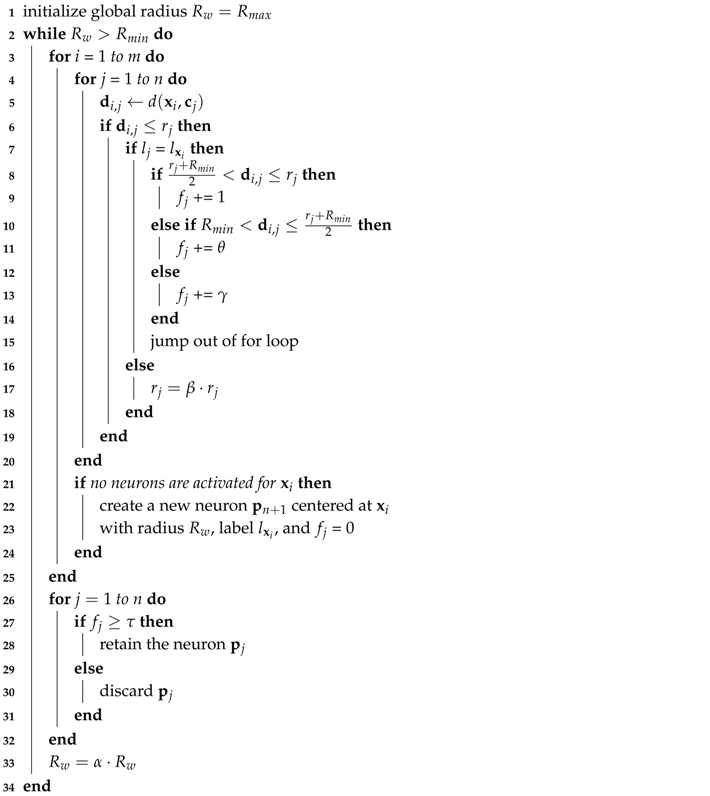

Figure 1a. In other words, neurons

and

can be represented by

and

, where

,

,

,

and

. Then, in the recognition process, a new feature vector

with label

enters the confusion area, as in

Figure 1b, which activates both neurons. In this case, the RCE-NN recognizes the feature vector

as label

because

is smaller than

. The learning method of TR-RCE yields many such areas of confusion, thereby degrading the recognition accuracy.
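The TR-RCE learning and recognition rules described above can be sketched in a few lines of Python. This is only an illustrative model, not the authors' implementation: the `Neuron` class, the function names, the sample coordinates, and the choice of the Manhattan (L1) distance are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple


@dataclass
class Neuron:
    center: List[float]  # hypersphere center
    radius: float        # hypersphere radius
    label: int           # learned label


def distance(features: Sequence[float], center: Sequence[float]) -> float:
    # Assumption: Manhattan (L1) distance; another metric can be swapped in.
    return sum(abs(a - b) for a, b in zip(features, center))


def tr_rce_learn(samples: Sequence[Tuple[Sequence[float], int]],
                 global_radius: float) -> List[Neuron]:
    """Single pass: create a neuron only when no existing neuron is activated."""
    neurons: List[Neuron] = []
    for features, label in samples:
        activated = any(distance(features, p.center) < p.radius for p in neurons)
        if not activated:
            # New neuron: center = input features, radius = global radius.
            neurons.append(Neuron(list(features), global_radius, label))
    return neurons


def recognize(neurons: Sequence[Neuron],
              features: Sequence[float]) -> Optional[int]:
    """Label of the activated neuron with the minimum distance, else None."""
    hits = [(distance(features, p.center), p.label) for p in neurons
            if distance(features, p.center) < p.radius]
    return min(hits, key=lambda h: h[0])[1] if hits else None


# Two neurons learned with the same global radius overlap each other.
neurons = tr_rce_learn([((0.0, 0.0), 0), ((3.0, 0.0), 1)], global_radius=2.5)
# A query lying in the overlap, slightly closer to the label-0 center.
confused = recognize(neurons, (1.4, 0.0))
```

With these made-up values, the query at `(1.4, 0.0)` activates both neurons and is assigned label 0 even if its true label were 1, which is the kind of confusion area that a single global radius produces.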

The DDA-RCE was developed to solve the inherent problems associated with this method. When a neuron $p_j$ with a different label than the input feature vector $\mathbf{x}_i$ is activated in the learning process as shown in Figure 1c, the radius $r_j$ of the neuron is reduced as follows:

$$r_j = d_u \tag{3}$$

where $u$ is the index of the neuron with the smallest distance value among those activated by the current input feature vector. Then, in the recognition process, the RCE-NN correctly recognizes the feature vector $\mathbf{x}_3$ as label $y_3$, as shown in Figure 1d. This technique increases the recognition accuracy in areas of confusion. However, the radius of a specific neuron becomes excessively small when the radius is adjusted based on the minimum distance in the learning process, and further unnecessary neurons are learned, thereby increasing the system complexity.
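A minimal sketch of a DDA-style learning step follows, under stated assumptions. The radius of every activated neuron whose label differs from the input is set to the smallest distance among the activated neurons, and a new neuron is added when no activated neuron carries the input's label. Both rules, the L1 distance, and all names are assumptions for illustration rather than the exact published procedure.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence


@dataclass
class Neuron:
    center: List[float]
    radius: float
    label: int


def distance(features: Sequence[float], center: Sequence[float]) -> float:
    # Assumption: Manhattan (L1) distance.
    return sum(abs(a - b) for a, b in zip(features, center))


def dda_learn_step(neurons: List[Neuron], features: Sequence[float],
                   label: int, global_radius: float) -> None:
    """Update `neurons` in place for one labelled input."""
    dists = [distance(features, p.center) for p in neurons]
    active = [j for j, p in enumerate(neurons) if dists[j] < p.radius]
    if active:
        d_min = min(dists[j] for j in active)
        for j in active:
            if neurons[j].label != label:
                # Shrink the conflicting neuron to the minimum distance.
                neurons[j].radius = d_min
    # Assumption: add a neuron when no same-label neuron covers the input.
    if not any(neurons[j].label == label for j in active):
        neurons.append(Neuron(list(features), global_radius, label))


def recognize(neurons: Sequence[Neuron],
              features: Sequence[float]) -> Optional[int]:
    hits = [(distance(features, p.center), p.label) for p in neurons
            if distance(features, p.center) < p.radius]
    return min(hits, key=lambda h: h[0])[1] if hits else None


neurons: List[Neuron] = []
for feats, lab in [((0.0, 0.0), 0), ((3.0, 0.0), 1), ((1.4, 0.0), 1)]:
    dda_learn_step(neurons, feats, lab, global_radius=2.5)
result = recognize(neurons, (1.4, 0.0))
```

In this toy run the third sample shrinks the label-0 neuron's radius to 1.4, so the same point is afterwards recognized as label 1. The sketch also shows the drawback noted above: each conflict can only shrink a radius, so regions once covered may later need additional neurons.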

In [

15], the technique of measuring

is introduced, where

is the activation count of each neuron in the learning process. That is, when the specific neuron

is activated for the input feature vector

, the

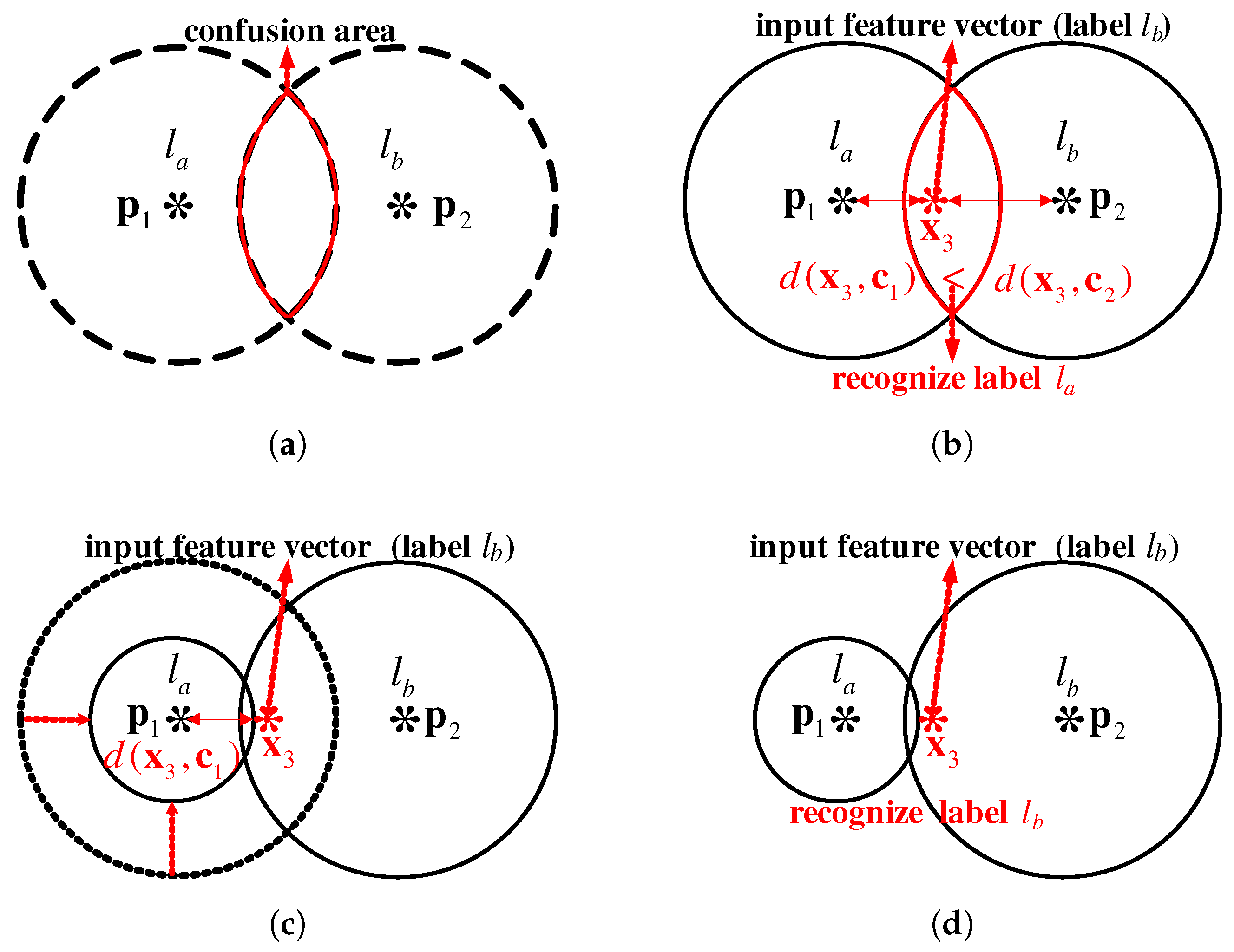

value is incremented by one to estimate the reliability of the neuron. By applying the activation count to the output of each neuron, a recognition result reflecting the reliability of each neuron is obtained. However, since all neurons are learned with a single global radius, as in TR-RCE, a confusion area is created in the feature space, thus reducing recognition accuracy. Therefore, the HPL algorithm was proposed in [

16,

17], which reduced the global radius from the maximum (

) to the minimum (

) according to the iteration interval. The global radius is decreased as follows:

where

is a reduction rate for global radius and

w is the index for iteration. In addition, to determine whether each neuron

is a suitable neuron in the learning process, the prototype density value

is calculated as follows:

where

is the volume of the hyperspherical classifier of each neuron

. If the prototype density value

is less than the pre-defined threshold value

, it is determined to be an inappropriate neuron and that neuron is removed from

. However, the

is updated to the same value regardless of whether the input feature vector is activated near or far from the hypersphere center. Therefore, the estimated reliability of each learned neuron is inaccurate and unnecessary neurons are not removed. In addition, HPL-RCE does not adjust the radius as in DDA-RCE when a neuron with a different label than the input feature vector is activated.
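The two HPL ingredients described above, a shrinking global radius and density-based pruning, can be illustrated with a short sketch. It assumes a multiplicative radius schedule, a density defined as activation count divided by hypersphere volume, and the Euclidean n-ball volume formula; the function names, the dictionary layout, and the numbers are hypothetical.

```python
import math
from typing import Dict, List


def radius_schedule(r_max: float, r_min: float, alpha: float) -> List[float]:
    """Global radii from r_max down to r_min (assumed multiplicative decay)."""
    if not 0.0 < alpha < 1.0:
        raise ValueError("alpha must lie in (0, 1)")
    radii = []
    r = r_max
    while r >= r_min:
        radii.append(r)
        r *= alpha
    return radii


def ball_volume(radius: float, n: int) -> float:
    # Volume of an n-dimensional Euclidean ball (an assumption about the
    # shape of the classifier region).
    return math.pi ** (n / 2) / math.gamma(n / 2 + 1) * radius ** n


def prune_by_density(neurons: List[Dict[str, float]], n: int,
                     threshold: float) -> List[Dict[str, float]]:
    """Keep neurons whose activation count per unit volume reaches the threshold."""
    return [p for p in neurons
            if p["count"] / ball_volume(p["radius"], n) >= threshold]


schedule = radius_schedule(r_max=8.0, r_min=1.0, alpha=0.5)
candidates = [{"radius": 1.0, "count": 10}, {"radius": 2.0, "count": 1}]
kept = prune_by_density(candidates, n=2, threshold=1.0)
```

Because the count is incremented by the same amount wherever the input falls inside the hypersphere, a large neuron that is activated only near its boundary gets the same count as one activated near its center, which is the limitation of this reliability estimate.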

4. Hardware Architecture Design

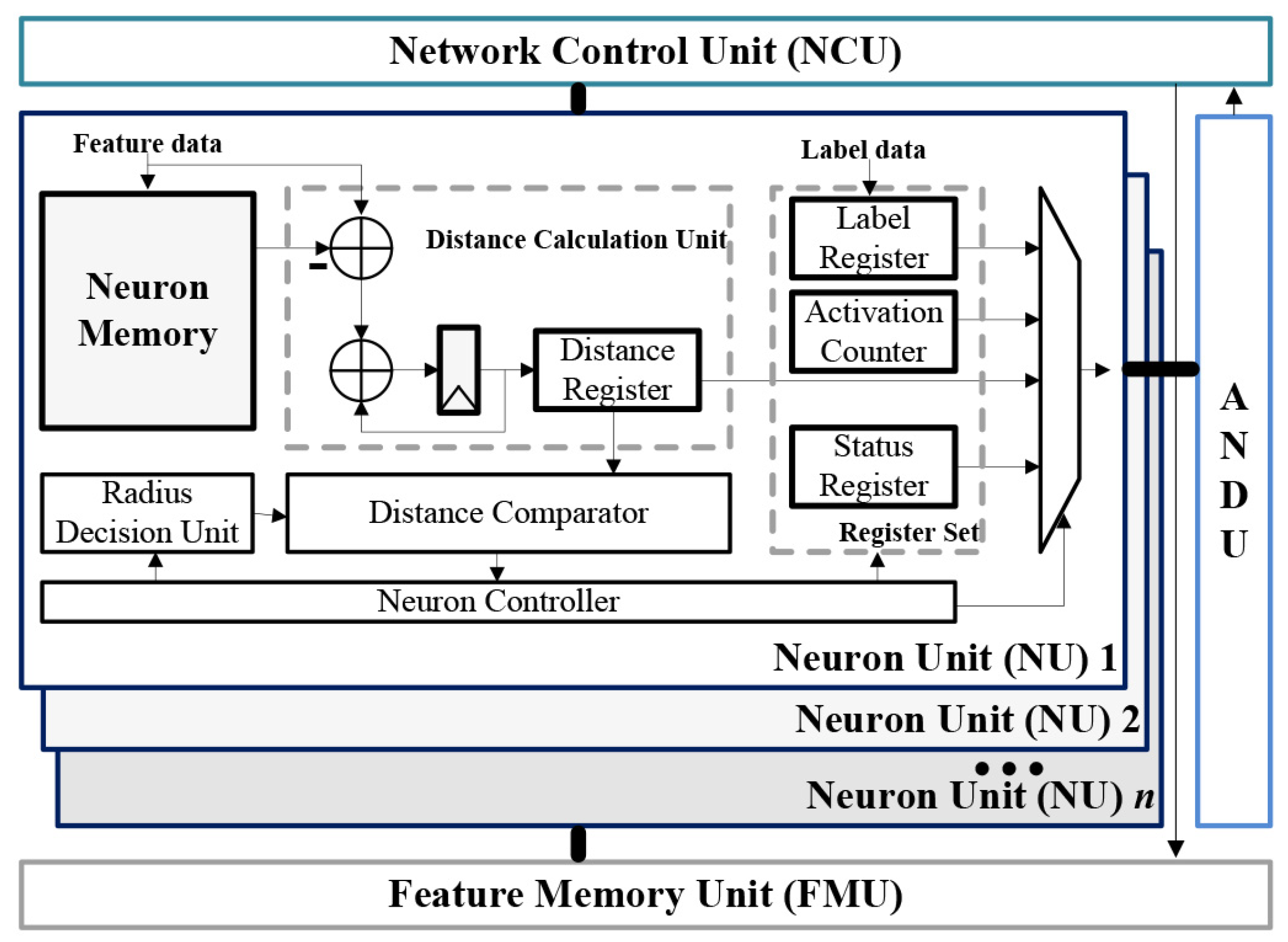

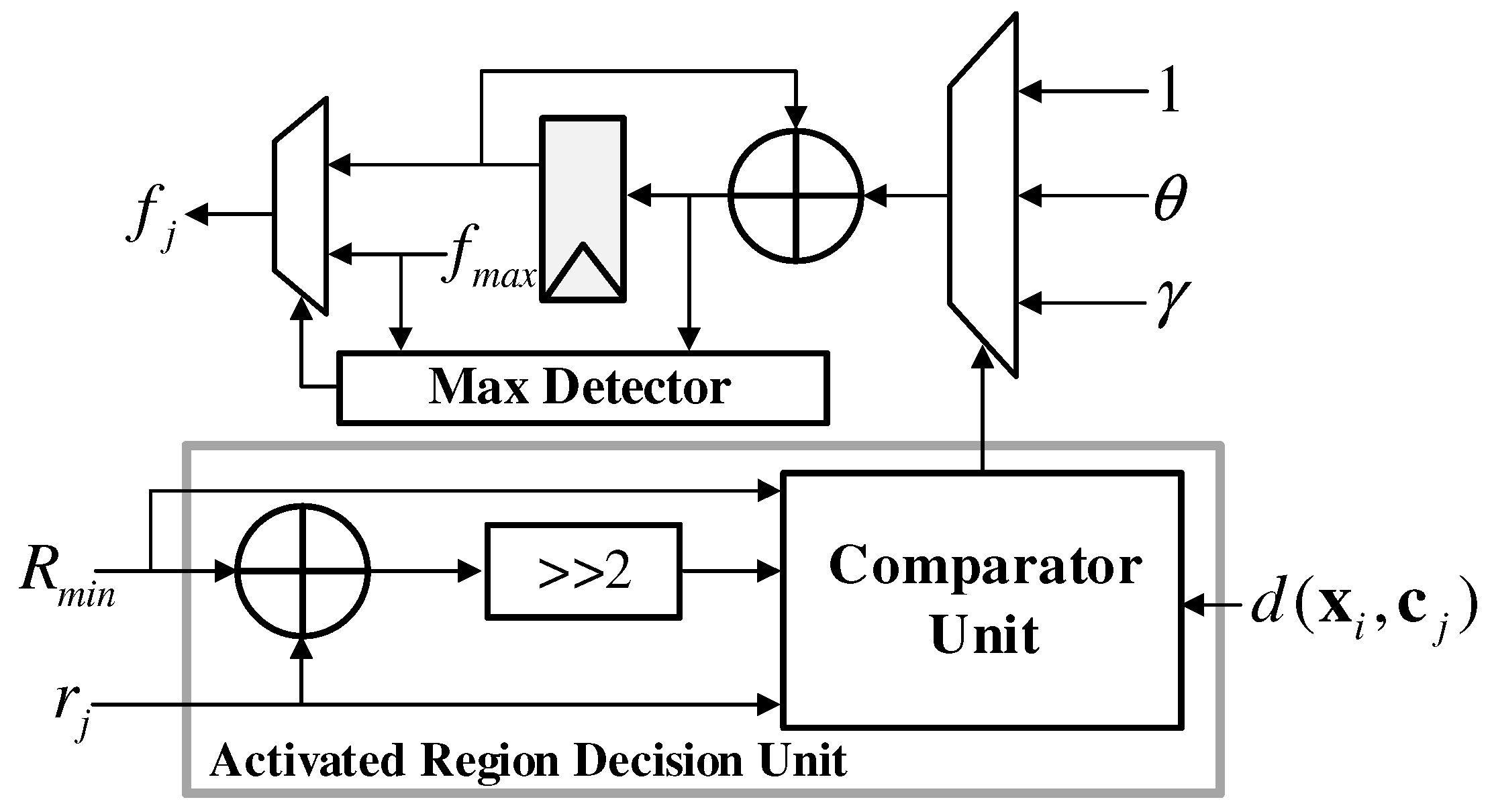

Figure 6 shows the block diagram of the proposed RCE-NN processor, including a feature memory unit (FMU), neuron unit (NU), activated neuron detection unit (ANDU), and network control unit (NCU). In the learning process, the unlearned NUs store the input feature vector from the FMU in the neuron memory, and the learned NUs calculate the distance between the input feature vector and the stored vector, which represents the neuron center. Then, the learned NUs compare the distance to the stored radius, and the activated NUs send the distance and label to the ANDU. The ANDU analyzes the output of the activated NUs and transfers the information of the minimum distance and label to the NCU.

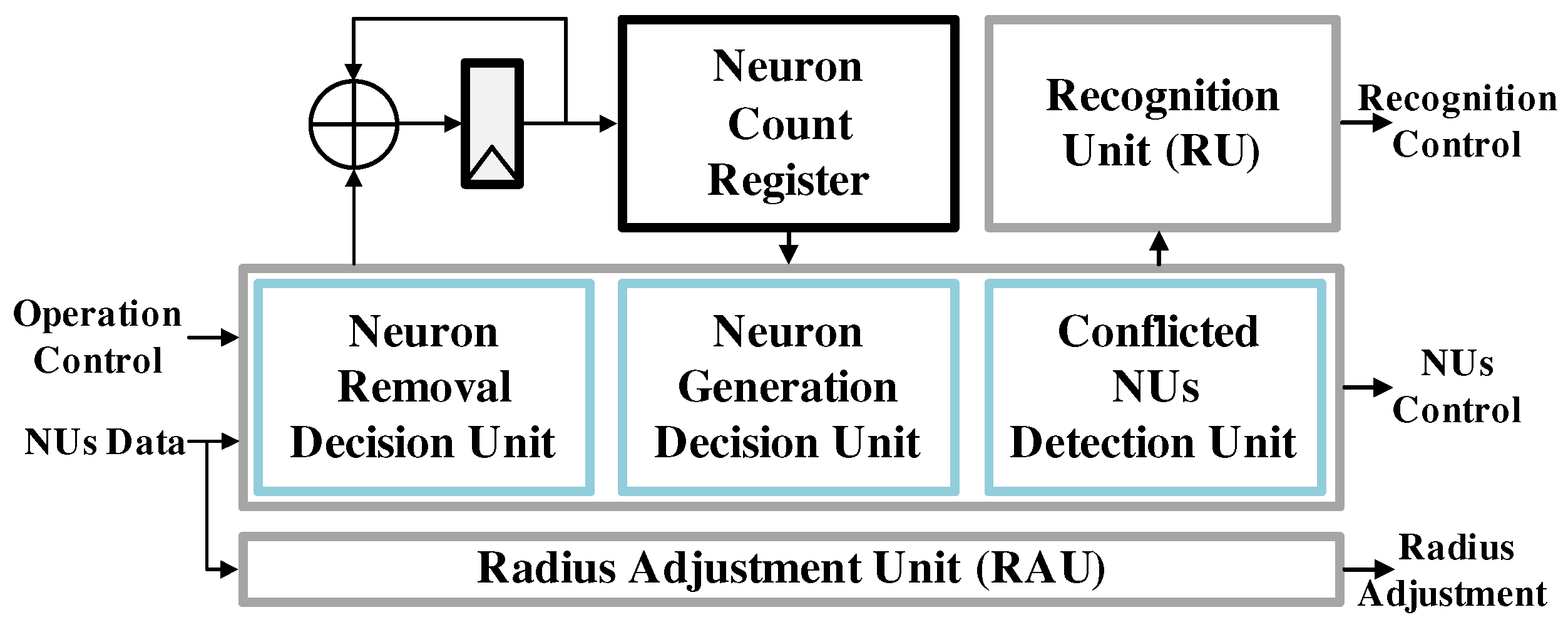

Figure 7 depicts the architecture of the NCU, which determines whether to generate a new NU or remove an existing NU. When a new NU is generated, the value of the neuron count register is increased by 1 to monitor the number of learned NUs. In addition, if the labels of the currently activated NUs are different from each other, the NCU adjusts the radii of the conflicted NUs through the radius adjustment unit (RAU). The RAU transmits the current feature vector’s label and the radius adjustment signal in parallel to each NU, and each activated NU compares the received label with its stored label. If the two labels are the same, the activation counter value of the NU is increased according to (6) through the activation counter shown in Figure 8, subject to an upper limit that prevents it from increasing unnecessarily. Conversely, the radius of an activated NU with a different label than the input feature vector is decreased according to (7) through the radius decision unit in the NU.

In the recognition process, all learned NUs compute the distance between the feature vector from the FMU and the vector stored in the neuron memory, as in the learning process. Then, the activated NUs transmit their distances to the ANDU, and the ANDU sends the best-matched NU’s distance to the NCU. The NCU transmits the best-matched NU’s distance and the recognition control signal to each NU through the recognition unit (RU). Each NU compares the stored distance with the best-matched NU’s distance and outputs the label if it matches. Finally, the ANDU receives the best-matched NU’s label and outputs recognition results.
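The recognition dataflow above can be mirrored by a small behavioral model, given here as a software sketch rather than the RTL. The dictionary fields, the L1 distance on integer features, and the first-match tie-break are assumptions; only the order of the steps follows the text.

```python
from typing import Dict, List, Optional, Sequence


def recognize_dataflow(neuron_units: List[Dict],
                       feature: Sequence[int]) -> Optional[int]:
    """Behavioral model of the NU -> ANDU -> NCU -> NU -> ANDU recognition path."""
    # Step 1: every learned NU computes and stores its own distance.
    for nu in neuron_units:
        nu["dist"] = sum(abs(a - b) for a, b in zip(feature, nu["center"]))
        nu["active"] = nu["dist"] < nu["radius"]

    # Step 2: activated NUs send distances to the ANDU, which finds the minimum.
    active_dists = [nu["dist"] for nu in neuron_units if nu["active"]]
    if not active_dists:
        return None  # no NU was activated
    best_dist = min(active_dists)

    # Step 3: the NCU broadcasts the best distance; each NU compares it with
    # its stored distance and outputs its label on a match.
    for nu in neuron_units:
        if nu["active"] and nu["dist"] == best_dist:
            return nu["label"]  # assumption: the first matching NU wins a tie
    return None


units = [
    {"center": [10, 10], "radius": 8, "label": 1},
    {"center": [20, 20], "radius": 8, "label": 2},
]
near_first = recognize_dataflow(units, [12, 11])
near_second = recognize_dataflow(units, [17, 17])
uncovered = recognize_dataflow(units, [16, 16])
```

Broadcasting the winning distance back to the NUs means the labels never have to travel with the distances during the minimum search; each NU decides locally whether it is the best match.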

5. Implementation Results

The proposed RCE-NN processor was designed in Verilog hardware description language (HDL) and synthesized using the Synopsys Design Compiler to gate-level circuits with a 55 nm CMOS standard cell library. The key features of the proposed RCE-NN processor are summarized in Table 2. The proposed architecture required 197.8 K logic gates and 163.8 KB of memory, with a total die size of 0.535 mm². The learning time for one feature vector of 128 bytes was 0.93 μs and the recognition time was 0.96 μs at the operating frequency of 150 MHz. The total power consumption measured with the test platform was 6.64 mW.
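As a rough sanity check on these figures, the reported times can be converted into clock cycles and per-vector energy. The sketch below assumes the times are in microseconds and that the measured power applies at the 150 MHz operating point; both are assumptions, and the variable names are illustrative.

```python
CLOCK_HZ = 150e6          # operating frequency from the text
LEARN_TIME_S = 0.93e-6    # assumption: learning time in microseconds
RECOG_TIME_S = 0.96e-6    # assumption: recognition time in microseconds
POWER_W = 6.64e-3         # measured total power; assumed valid at 150 MHz


def to_cycles(seconds: float, clock_hz: float = CLOCK_HZ) -> float:
    """Number of clock cycles that fit in the given time."""
    return seconds * clock_hz


def energy_nj(seconds: float, power_w: float = POWER_W) -> float:
    """Energy in nanojoules spent over the given time at constant power."""
    return seconds * power_w * 1e9


learn_cycles = to_cycles(LEARN_TIME_S)        # roughly 139.5 cycles
recog_cycles = to_cycles(RECOG_TIME_S)        # roughly 144 cycles
learn_rate_per_s = 1.0 / LEARN_TIME_S         # feature vectors learned per second
learn_energy_nj = energy_nj(LEARN_TIME_S)     # roughly 6.18 nJ per vector
recog_energy_nj = energy_nj(RECOG_TIME_S)     # roughly 6.37 nJ per vector
```

Under these assumptions, one 128-byte feature vector is processed in about 140 to 144 cycles, which corresponds to slightly more than one cycle per byte.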

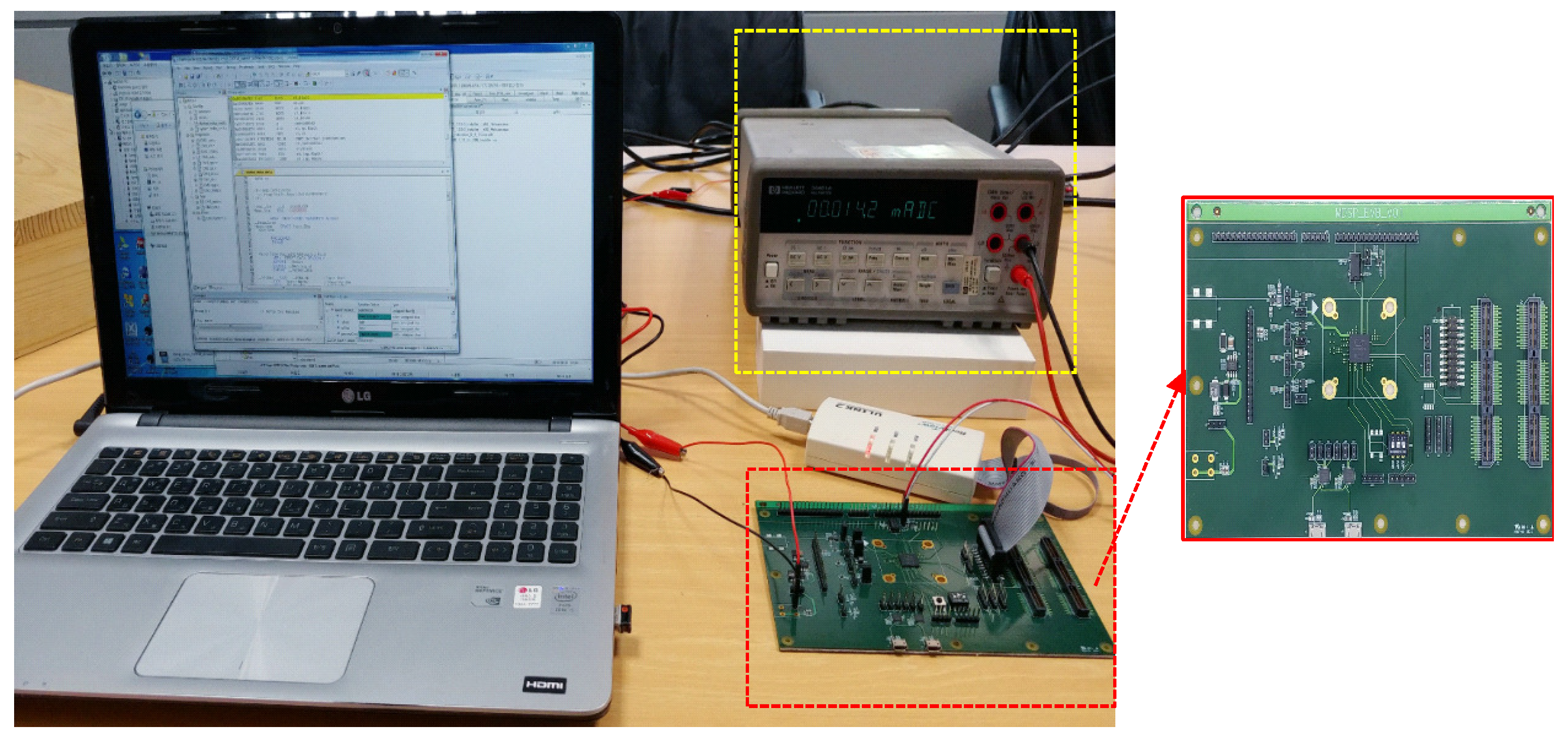

The RCE-NN processor was integrated in a system-on-chip (SoC) designed for sensor signal processing, as shown in Figure 9, and verified in real time with the test platform depicted in Figure 10. The sensor signal processing SoC consisted of an ARM Cortex-M3 micro controller unit (MCU), a sensor interface unit (SIU), a feature extraction unit (FEU), and the proposed RCE-NN processor, with a total die size of 2.97 mm × 2.96 mm. The data extracted from each sensor were pre-processed in the SIU and then converted into feature data in the FEU. The feature data were transferred to the RCE-NN processor, which performs the learning and recognition.

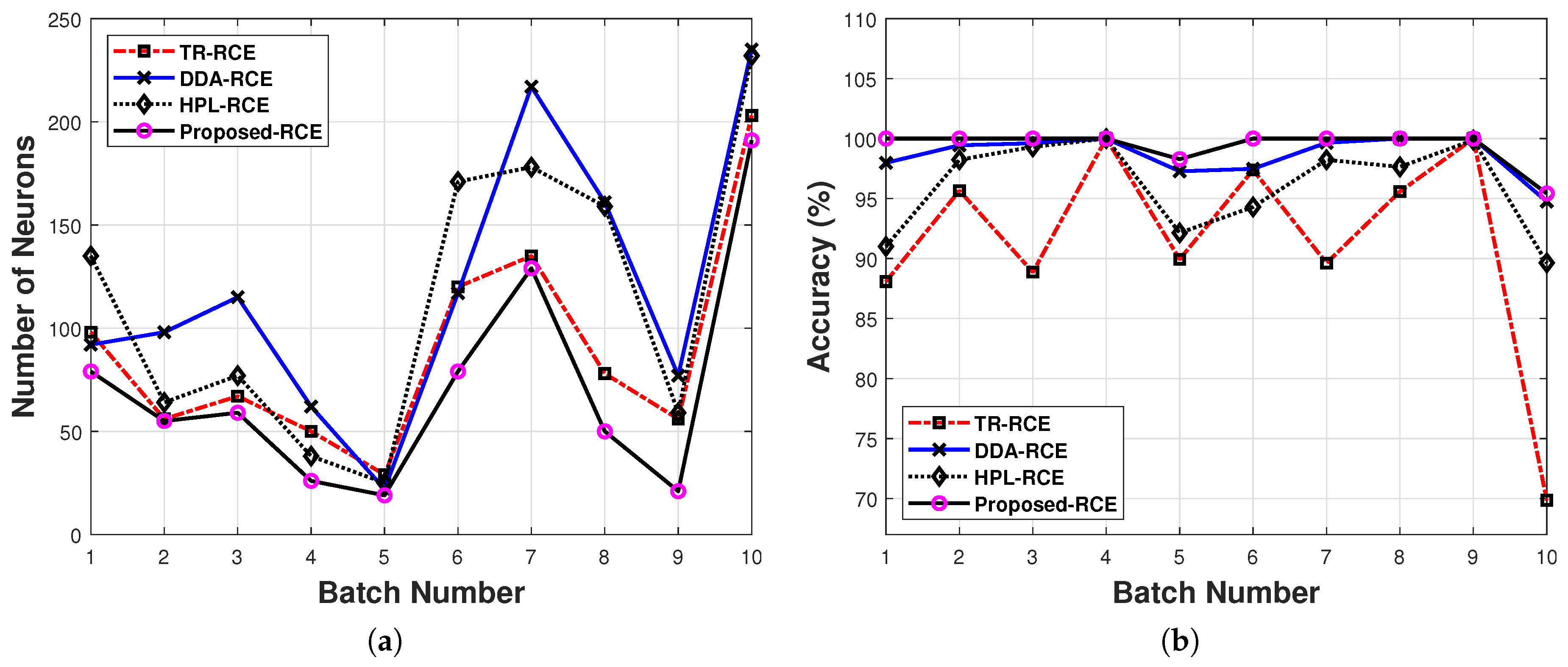

In order to compare the complexity of the proposed RCE-NN processor and the existing RCE-NNs, we implemented each algorithm presented in Table 1 for the gas dataset. Table 3 summarizes the complexity metrics, such as gate counts and memory requirements, for each implementation. The NU of the proposed RCE-NN processor was the largest because of the additional resources for the activation counter shown in Figure 8. However, since it shows better recognition accuracy with fewer neurons than TR-RCE and HPL-RCE, its total gate count and internal memory requirement were the smallest.