1. Introduction

The evolution of Wi-Fi standards has culminated in IEEE 802.11ax (Wi-Fi 6) [1], which introduces mechanisms such as Target Wake Time (TWT), Orthogonal Frequency-Division Multiple Access (OFDMA), and Basic Service Set (BSS) coloring to improve efficiency in dense deployments [2,3]. While these mechanisms are well defined at the standard level, real-world application performance, particularly for latency-sensitive video streaming, remains strongly influenced by deployment-specific factors such as received signal strength indicator (RSSI), network topology, and application bitrate. Existing studies have largely examined video performance in isolation or under fixed conditions, with limited attention to the combined impact of RSSI, topology, and bitrate in practical Wi-Fi 6 deployments. This study addresses this gap by systematically evaluating video playback delay and Quality of Service (QoS) behavior under realistic operating conditions, guided by the following three research questions:

What impact do RSSI, codec bitrate, and network topology have on video playback delay in a typical 802.11ax network operating in the 2.4 GHz and 5 GHz frequency bands?

What impact do changes in channel conditions, as indicated by RSSI, have on video QoS parameters (playback delay, throughput)?

What impact does scaling the number of video clients have on QoS parameters in high-density Wi-Fi network scenarios?

To address these research questions, we conducted testbed experiments and simulation-based modelling. The 802.11ax network performance is evaluated in indoor settings and in both the 2.4 GHz and 5 GHz frequency bands. Two network topologies (i.e., ad hoc and infrastructure) are configured for an extensive testbed campaign. Further, OMNeT++ simulations are employed to model network scalability and evaluate QoS for varying client densities.

Although IEEE 802.11be (Wi-Fi 7) [4] has recently been standardized, 802.11ax (Wi-Fi 6) remains the dominant high-efficiency Wi-Fi technology currently deployed across enterprises, campuses, and residential environments. Many of the architectural enhancements introduced in Wi-Fi 7, such as multi-link operation, enhanced scheduling, and improved spectral efficiency, build upon the core mechanisms first realized in 802.11ax, including OFDMA and multi-user transmissions. Consequently, a detailed understanding of 802.11ax performance under realistic operating conditions, such as varying RSSI, application bitrate, network topology, and client density, provides critical insight into the practical challenges and optimization strategies that are likely to persist in next-generation Wi-Fi deployments. In this context, 802.11ac [5] is used as a baseline reference to quantify the relative performance improvements achieved by 802.11ax and to highlight the extent to which it addresses limitations observed in earlier standards.

This paper offers the following key contributions:

We examine the combined effect of RSSI, bitrate, and network topology on video playback delays over a typical 802.11ax client–server network. To achieve this, we develop two practical scenarios (ad hoc and infrastructure networks) to conduct extensive testbed field experiments and validate the system performance.

We explore the effect of 802.11ax dual-band operation (2.4 GHz and 5 GHz) on system performance. To this end, we measure video playback delay and throughput in both bands for comparative analysis.

We develop an OMNeT++-based simulation model to evaluate the effect of increasing the number of video clients on system performance. The simulation model captures key QoS metrics, including end-to-end delay, throughput, and packet loss, across multiple network configurations and scenarios.

We conduct a baseline performance comparison of 802.11ac and 802.11ax to quantify the improvements and limitations of Wi-Fi 6 under varying RSSI levels, bitrates, and client loads.

The rest of this paper is organized as follows: Section 2 reviews related work on 802.11-based performance studies. Section 3 details the testbed setup, simulation model, and methodology used in the evaluation. Section 4 presents results for both testbed and simulation studies. The results are discussed and contextualized in Section 5, and the practical system implications are presented in Section 6. Finally, Section 7 concludes the paper with directions for future research.

2. Related Work

Recent studies on 802.11ax and its predecessor technologies have primarily emphasized theoretical modelling and simulation-based assessments. Kuang and Williamson [3] explored the quality of streaming over legacy 802.11 networks, noting acceptable performance under ideal channel conditions but significant degradation with interference and weak signals. Mena and Heidemann [6] conducted an empirical analysis of real audio traffic and reported challenges with TCP compatibility, which is critical for video streaming applications.

Shimakawa et al. [7] examined video traffic performance over IEEE 802.11g using the Distributed Coordination Function (DCF) and Enhanced Distributed Channel Access (EDCA), highlighting the protocol’s limitations in supporting multimedia traffic. Sarkar et al. [8,9] modeled and tested 802.11g and 802.11ac networks, offering insights into the effects of access point (AP) configuration, signal strength, and spatial layout on network throughput and latency. While IEEE 802.11ax introduces advanced features such as OFDMA, MU-MIMO, and improved spectral efficiency, its real-world evaluation remains sparse. Another contribution by Linton-Price et al. [10] extended this work by analyzing the role of IPv4/IPv6 and codec configurations under varying signal environments. Further IEEE publications [11,12,13] have explored specific capabilities of 802.11ax, such as uplink MU transmission [11], QoS-aware scheduling in dense deployments [12], and comparative analyses of OFDMA performance [13]. Park et al. [14] provided a comprehensive technical overview of 802.11ax’s enhancements over earlier standards. Other works have employed simulation frameworks to assess performance metrics like latency, throughput, and reliability under controlled conditions but have not complemented these with testbed validation.

More recently, research attention has begun shifting toward 802.11be (Wi-Fi 7), which aims to further enhance throughput, latency, and reliability through features such as multi-link operation, wider channel bandwidths, and improved scheduling mechanisms [15]. However, much of the existing Wi-Fi 7 literature remains focused on standardization aspects and analytical or simulation-based evaluations, with limited empirical validation under realistic deployment conditions. As many of the efficiency mechanisms introduced in 802.11ax form the foundation for subsequent enhancements in 802.11be, empirical performance studies of Wi-Fi 6 continue to provide valuable insight into deployment challenges and optimization strategies relevant to next-generation Wi-Fi.

Recent studies have also explored RSSI-aware optimization using artificial intelligence techniques, particularly in the context of beam selection and resource allocation in wireless networks. Deep learning-based approaches have demonstrated promising results in adapting transmission parameters under dynamic channel conditions. While such techniques were not within the scope of the present study, they represent a complementary research direction for enhancing adaptive performance in future Wi-Fi deployments [16,17]. Beyond optimization-centric approaches, empirical and QoE-oriented studies have examined how network dynamics, resource contention, and topology variations impact service-level performance in wireless and mobile environments. These works reinforce the importance of measurement-driven analysis when evaluating real-time applications over shared wireless media, which aligns with the testbed-driven methodology adopted in this study.

A summary of the most relevant related works is presented in Table 1. The table outlines focus areas, evaluation methods, key findings, and limitations. As the table shows, while prior studies have contributed to understanding specific aspects of wireless performance, few have combined field measurements with simulation modelling to assess 802.11ax across frequency bands, bitrate sensitivity, and the impact of RSSI. This gap motivates the present study: a comprehensive performance evaluation of 802.11ax that combines real hardware with simulation.

3. Methodology

This section discusses the testbed setup and configurations, simulation environment, and performance metrics used in this study to evaluate 802.11ax in a range of realistic and controlled conditions. The combination of empirical and simulation-based methods enables a holistic understanding of network behavior under varying environmental and traffic loads.

3.1. Study Design and Scenarios

This study adopts a combined experimental testbed and simulation-based methodology to investigate video streaming performance in wireless LAN environments. The primary evaluation of IEEE 802.11ax is conducted using a physical testbed, enabling controlled analysis of the effects of received signal strength (RSSI), application bitrate, frequency band, and network topology on video playback performance.

To complement the testbed and to explore scalability and client-density effects that are impractical to reproduce experimentally, simulation-based analysis is employed. As native IEEE 802.11ax support is not available in the selected simulation framework, the simulation component is based on an IEEE 802.11ac model and is used exclusively to examine relative scalability trends and congestion behavior, rather than to validate IEEE 802.11ax-specific MAC or PHY mechanisms.

To enable a fair and reproducible baseline comparison, the same experimental testbed setup, traffic profiles, and environmental conditions were used for IEEE 802.11ac measurements, differing only in the wireless standard configuration. This allows relative performance trends between IEEE 802.11ac and IEEE 802.11ax to be contextualized within the experimentally evaluated RSSI range.

Three representative scenarios were considered in this study, encompassing ad hoc and infrastructure-based testbed deployments, as well as a simulated wireless LAN environment. These scenarios are summarized in Table 2, together with the corresponding network topology, operating spectrum, and application parameters.

Across all testbed scenarios, the received signal strength indicator (RSSI) was used as the primary indicator of the channel condition. Other link-quality metrics such as signal-to-noise ratio (SNR) were not directly measured and are therefore not used for quantitative analysis.

3.2. Experimental Testbed Setup

The experimental evaluation was conducted at the Auckland University of Technology (AUT) in both controlled indoor and semi-open environments to reflect practical wireless deployment conditions. Indoor experiments were performed along a 40 m corridor within the engineering building, incorporating typical obstructions such as walls, doors, and office equipment. Semi-open experiments were conducted in an outdoor garden area extending to 80 m, characterized by minimal physical obstructions and reduced external interference.

Two network topologies were implemented in the testbed to reflect commonly encountered wireless configurations: ad hoc and infrastructure-based deployments. In the ad hoc scenario, two Dell Latitude 5420 laptops, each equipped with an Intel AX201 Wi-Fi adapter, were configured in IEEE 802.11 ad hoc (IBSS) mode, with one device acting as a RealPlayer-based media server and the other as a media client. Communication was established over both the 2.4 GHz and 5 GHz frequency bands, and the received signal strength was varied by repositioning the client device at different distances from the server.

For the infrastructure scenario, a TP-Link Archer AX10 (AC1200-class) IEEE 802.11ax access point, manufactured by TP-Link Technologies Co., Ltd., Shenzhen, China, was used to emulate a typical enterprise or home wireless LAN environment. The access point was connected to the media server laptop via a wired Gigabit Ethernet link, while the wireless client accessed video streamed over Wi-Fi. The device operated using the manufacturer-provided firmware available at the time of experimentation, with default configuration settings unless otherwise stated. Firmware version identifiers and negotiated PHY-layer parameters (e.g., MCS, number of spatial streams, and instantaneous link rate) were not explicitly logged during experiments; the devices operated using manufacturer-default rate adaptation mechanisms. Consequently, the analysis focuses on application- and network-level performance metrics rather than PHY-layer optimization behavior.

Both client and server devices ran the Linux operating system and operated using default IEEE 802.11ax/802.11ac driver configurations. Channel bandwidths of 20 MHz in the 2.4 GHz band and 40 MHz in the 5 GHz band were used, with automatic rate adaptation and standard guard interval settings enabled. Security mechanisms were disabled to eliminate encryption overhead, and transmit power was held constant across all testbed experiments to ensure consistency. IPv4 and IPv6 protocol stacks were enabled to examine potential protocol-level impacts on performance.

To enable controlled and repeatable evaluation, the testbed was intentionally limited to a small number of clients. This design choice allows for the isolation of the effects of RSSI variation, bitrate selection, frequency band, and network topology on video playback performance without confounding effects from large-scale contention.

Figure 1 illustrates the IEEE 802.11ax infrastructure-based client–server testbed configuration used in this study. RSSI values were obtained directly from the wireless network interface during active streaming. Noise floor and SNR values were not recorded by the testbed devices and were therefore not available for direct measurement.
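As a hedged illustration of how driver-reported RSSI might be sampled on a Linux client during streaming, the sketch below parses the `signal:` line printed by `iw dev <interface> link` when the client is associated with an access point. The paper does not state which tool was used to read RSSI; the interface name `wlan0`, the helper names, and the sample output are assumptions introduced only for this example.

```python
import re
import subprocess
from typing import Optional

# Matches a line such as "signal: -63 dBm" in `iw dev <iface> link` output.
_SIGNAL_RE = re.compile(r"signal:\s*(-?\d+)\s*dBm")


def parse_rssi_dbm(link_output: str) -> Optional[int]:
    """Return the RSSI in dBm found in the given text, or None if absent."""
    match = _SIGNAL_RE.search(link_output)
    return int(match.group(1)) if match else None


def read_rssi_dbm(interface: str = "wlan0") -> Optional[int]:
    """Query the link once; `wlan0` is a placeholder interface name."""
    result = subprocess.run(
        ["iw", "dev", interface, "link"],
        capture_output=True, text=True, check=False,
    )
    return parse_rssi_dbm(result.stdout)


# Hypothetical sample output; not a log from the study.
SAMPLE = (
    "Connected to 00:11:22:33:44:55 (on wlan0)\n"
    "\tSSID: testbed\n"
    "\tsignal: -63 dBm\n"
)
print(parse_rssi_dbm(SAMPLE))            # -63
print(parse_rssi_dbm("Not connected."))  # None
```

Keeping the parser separate from the subprocess call lets the parsing logic be checked without wireless hardware.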

3.3. Simulation Environment

To complement the physical 802.11ax testbed and explore high-density scenarios impractical to replicate manually, a simulation study was conducted using OMNeT++ version 5.6 with the INET framework. As OMNeT++ does not yet provide full native support for 802.11ax, the simulation employs an 802.11ac-based model configured to reflect representative system-level operating conditions (e.g., traffic load, client density, and channel utilization). Accordingly, the simulation results are used to analyze relative scalability trends and congestion effects under increasing client load and are not used to validate 802.11ax-specific MAC or PHY mechanisms such as OFDMA or MU-MIMO.

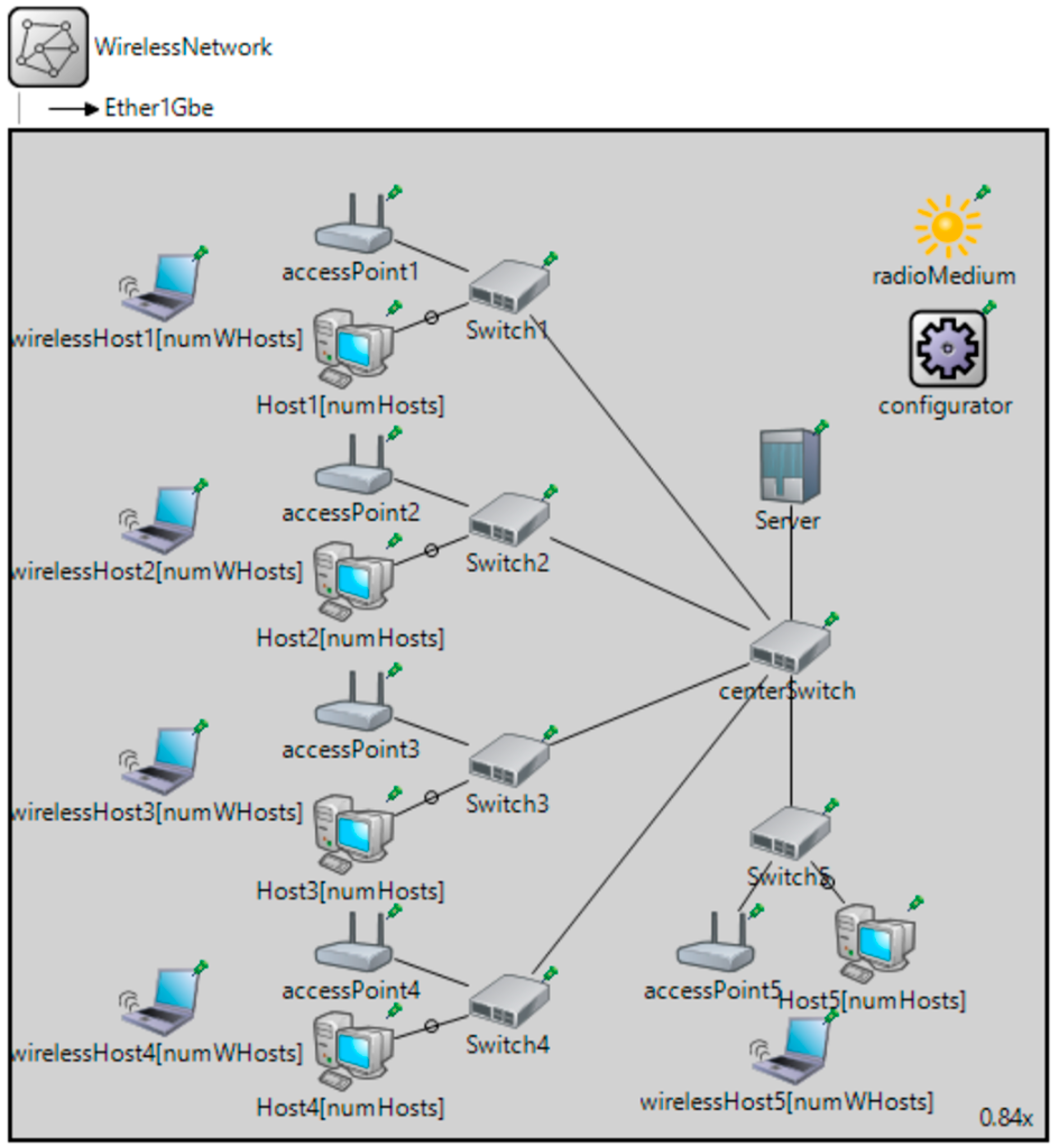

Figure 2 shows the OMNeT++ illustration of the simulated architecture featuring a video server, five access points (APs), and video clients. The five wireless access points are connected through a 1 Gbps backbone to a video streaming server. Up to 50 clients were distributed evenly across the network, and their behavior was modeled using ‘UdpVideoStreamClient’, which mimics real-time video conferencing applications.
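The even distribution of clients across the five access points can be pictured with a short sketch. The round-robin rule and the function name `assign_clients` are assumptions for illustration; the paper does not specify how clients were mapped to access points in the simulation.

```python
from collections import Counter
from typing import List


def assign_clients(num_clients: int, num_aps: int = 5) -> List[int]:
    """Return the 0-based AP index for each client, assigned round-robin."""
    return [client % num_aps for client in range(num_clients)]


# Client counts chosen for illustration, up to the 50-client maximum.
for n in (5, 25, 50):
    per_ap = Counter(assign_clients(n))
    print(n, [per_ap[ap] for ap in range(5)])
```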

The simulation parameters are defined to reflect the conditions of the testbed as closely as possible, as shown in Table 3. The simulation was executed for a duration of 3600 s, and the collected metrics were aggregated as average values to capture steady-state behavior under each client-load condition. In the simulation environment, RSSI thresholds were configured as model parameters to reflect representative channel conditions and to enable comparative analysis across load scenarios. These thresholds are not derived from measured SNR values. Definitions of the evaluated performance metrics and aggregation approach are provided in Section 3.4.

3.4. Measurement Tools, Metrics, and Data Aggregation

This study evaluates wireless video streaming performance using a set of quantitative and application-level quality-of-service (QoS) metrics commonly adopted in wireless network performance analysis. The methodology combines experimental testbed measurements with simulation outputs; therefore, the measurement tools used in the testbed and the metric definitions used throughout the study are summarized below.

Client Device: Dell Latitude 5420 with Intel AX201 Wi-Fi 6 adapter.

Media Streaming: Video files were encoded at bitrates of 1.1 Mbps, 1.7 Mbps, and 5.1 Mbps using MP4 format and streamed using RealPlayer over real-time streaming protocol (RTSP).

RSSI Monitoring: RSSI levels were adjusted by relocating the client device and recorded using system-level wireless interface statistics (e.g., RSSI values reported by the network driver). SNR values were not explicitly measured and are therefore not used for quantitative analysis.

Throughput Measurement: iPerf3 was used to generate controlled UDP traffic and compute effective throughput.

Throughput (bps): Defined as the average number of bits successfully delivered from the server to the client per second. Throughput is used to assess the effective data delivery capability of the wireless link under varying signal conditions, bitrates, and client load.

End-to-End Delay (milliseconds): Defined as the time taken for a packet to travel from source to destination, accounting for transmission, propagation, and queuing delays. This metric is obtained directly from the simulation environment and is used to characterize network-level latency behavior under increasing client load and congestion.

Packet Loss (%): Defined as the percentage of transmitted packets that fail to reach the intended recipient. Packet loss is used as an indicator of congestion, buffer overflow, or link degradation under increasing traffic load or weak channel conditions.

Playback Delay (milliseconds): In this study, playback delay is defined as an application-level proxy for network-induced latency during active video streaming. The metric represents the delay between media data transmission and its effective availability at the receiver for playback. Playback delay does not correspond to true one-way packet transmission latency, nor does it directly measure user-perceived startup delay or rebuffering duration; rather, it provides a consistent indicator of network responsiveness under varying channel and load conditions.
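The throughput and packet-loss definitions above reduce to simple ratios, sketched here in a minimal form. The function names and the input numbers are illustrative assumptions and are not measurements from the study.

```python
def throughput_bps(bytes_delivered: int, duration_s: float) -> float:
    """Average bits per second successfully delivered to the client."""
    if duration_s <= 0:
        raise ValueError("duration_s must be positive")
    return bytes_delivered * 8 / duration_s


def packet_loss_percent(packets_sent: int, packets_received: int) -> float:
    """Percentage of transmitted packets that did not reach the receiver."""
    if packets_sent <= 0:
        raise ValueError("packets_sent must be positive")
    return 100.0 * (packets_sent - packets_received) / packets_sent


# Illustrative inputs only.
print(throughput_bps(bytes_delivered=1_250_000, duration_s=10.0))    # 1000000.0
print(packet_loss_percent(packets_sent=1000, packets_received=750))  # 25.0
```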

Playback delay is instantiated differently in the experimental testbed and in the simulation environment while adhering to the same underlying construct. In the experimental testbed, playback delay is derived by temporally aligning ICMP round-trip time (RTT) measurements with application-level playback event timestamps recorded by the media player during active streaming intervals. In the simulation environment, playback delay is derived from simulation-provided application-level timing information in conjunction with packet-level delay statistics produced by the video streaming model. Although the simulation does not explicitly model application-layer playback buffering behavior, the resulting delay values serve as a consistent application-level proxy aligned with the playback-delay construct defined above.

In the experimental testbed, ICMP RTT probes were generated periodically from the client device during active video playback. Playback start and buffer refill events were logged locally by the media player on the same device, eliminating the need for inter-device clock synchronization. Playback delay was computed by temporally correlating RTT samples with the nearest corresponding playback event timestamps during steady-state playback. The initial startup buffering phase was excluded from analysis, and transient RTT spikes were filtered using simple outlier rejection to avoid distortion from non-representative network events.
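One possible reading of this procedure is sketched below: each steady-state playback event is matched to the RTT sample nearest in time, outliers are rejected, and the remaining values are averaged. The paper does not give the exact combination rule or the outlier criterion, so the use of the matched RTT as the delay value, the median-absolute-deviation filter, the threshold `k`, and all names and sample data are assumptions.

```python
from bisect import bisect_left
from statistics import mean, median
from typing import List, Sequence, Tuple

Sample = Tuple[float, float]  # (timestamp in seconds, RTT in milliseconds)


def nearest_rtt(samples: Sequence[Sample], event_ts: float) -> float:
    """RTT of the sample closest in time to event_ts (samples sorted by time)."""
    times = [t for t, _ in samples]
    i = bisect_left(times, event_ts)
    candidates = samples[max(i - 1, 0): i + 1]  # neighbours on either side
    return min(candidates, key=lambda s: abs(s[0] - event_ts))[1]


def reject_outliers(values: Sequence[float], k: float = 3.0) -> List[float]:
    """Keep values within k median absolute deviations of the median."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return list(values)
    return [v for v in values if abs(v - med) <= k * mad]


def playback_delay_ms(samples: Sequence[Sample], events: Sequence[float],
                      startup_end_s: float) -> float:
    """Mean matched RTT over steady-state events, after outlier rejection."""
    steady = [ts for ts in events if ts >= startup_end_s]  # skip startup phase
    matched = [nearest_rtt(samples, ts) for ts in steady]
    return mean(reject_outliers(matched))


# Hypothetical data: one RTT probe per second and six playback events.
rtts = [(0.0, 40.0), (1.0, 6.0), (2.0, 7.0), (3.0, 5.0), (4.0, 90.0), (5.0, 6.0)]
events = [0.2, 1.1, 2.4, 2.9, 4.1, 5.0]
print(playback_delay_ms(rtts, events, startup_end_s=1.0))  # 6.0
```

In this example the event at 0.2 s is excluded as startup buffering, and the 90 ms spike is dropped by the filter, leaving matched RTTs of 6, 7, 5, and 6 ms.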

In the simulation environment, all timing measurements share a common simulation clock, and therefore no clock synchronization assumptions are required. Playback delay is obtained directly from application-level timing outputs and packet delay statistics produced by the video streaming model. As buffering behavior is not explicitly modeled, playback delay reflects a deterministic application-level proxy derived from simulated transmission and reception timing. Given identical model parameters and random seeds, the simulation-derived playback delay values are reproducible.

Each experimental testbed condition (topology × frequency band × bitrate × RSSI level) was evaluated through repeated runs to capture short-term channel variability and measurement repeatability. Depending on link stability and successful playback completion, each configuration was repeated three to five times (n = 3–5).

The reported playback-delay and throughput values represent mean values across the successful runs for a given configuration. As playback delay is derived as an application-level proxy metric, and per-packet timing or buffer-level logs were not recorded, run-to-run variability is discussed qualitatively rather than tabulated numerically, to avoid overstating statistical precision.
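The aggregation described above can be sketched as follows. The RSSI levels listed are only those named explicitly in the text and the full measured set may differ. Representing a failed run as `None` and reporting WCL when no run succeeds are assumptions made for this example.

```python
from itertools import product
from statistics import mean
from typing import List, Optional

TOPOLOGIES = ("ad hoc", "infrastructure")
BANDS_GHZ = (2.4, 5.0)
BITRATES_MBPS = (1.1, 1.7, 5.1)
RSSI_DBM = (-48, -63, -70)  # assumed subset; levels named in the text


def summarize(runs: List[Optional[float]]) -> Optional[float]:
    """Mean playback delay (ms) over successful runs; None marks a failed run."""
    ok = [r for r in runs if r is not None]
    return mean(ok) if ok else None  # None here would be reported as WCL


# Every topology x band x bitrate x RSSI combination.
conditions = list(product(TOPOLOGIES, BANDS_GHZ, BITRATES_MBPS, RSSI_DBM))
print(len(conditions))                       # 36

# Hypothetical run results for two conditions.
print(summarize([8.0, 9.0, None, 10.0]))     # 9.0
print(summarize([None, None, None]))         # None
```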

4. Results and Analysis

This section presents the testbed and simulation results, organized by the network topology, frequency band, and evaluation metric. Unless otherwise stated, all reported performance metrics in this section represent averaged values obtained from repeated experimental runs or aggregated simulation intervals. In this study, video bitrate refers to the fixed application-layer encoding rate of the streamed content, while throughput denotes the measured network-layer data delivery rate observed at the receiver. The section includes a comparative analysis of 802.11ax performance in ad hoc and infrastructure setups across the 2.4 GHz and 5 GHz bands, as well as the impact of increasing client density in the simulated environment.

4.1. Impact of RSSI on Playback Delay and Throughput

The results reveal a strong correlation between received signal strength (RSSI) and video playback delays. As RSSI degraded from −48 dBm to −70 dBm, playback delays increased significantly, particularly on the 2.4 GHz band. At −48 dBm, 5 GHz links exhibited consistently lower playback delay (single-digit milliseconds) across all bitrates in both ad hoc and infrastructure topologies, whereas 2.4 GHz ad hoc links experienced substantially higher delays even under strong signal conditions. As RSSI decreased further toward −70 dBm, playback delays often exceeded 100 milliseconds and wireless connection loss (WCL) was observed in several cases. This trend is consistent with the qualitative wireless channel characterization shown in Table 4. Some non-monotonic variations across RSSI levels (e.g., isolated decreases in average delay at weaker RSSI) are attributable to short-term channel variability, adaptive rate selection, and buffering effects and do not indicate systematic performance improvement. Run-to-run variability is not tabulated numerically due to the exploratory nature of the study and the limited number of repetitions per configuration; instead, the analysis focuses on comparative performance trends observed consistently across scenarios.

The channel quality gradation shown in Table 4 was reflected in application-level video performance. Table 5 links these conditions with observed video quality metrics.

This characterization supports the testbed findings that fair-to-bad RSSI values severely degrade playback experience and often result in wireless disconnections.

4.2. Baseline Performance Comparison: 802.11ac vs. 802.11ax

To contextualize the observed performance of 802.11ax, a baseline comparison was conducted against 802.11ac under varying received signal strength (RSSI) and application bitrate conditions. The baseline measurements were obtained using a comparable experimental setup and identical video workloads, allowing for a consistent evaluation of playback delay behavior as signal conditions deteriorate.

The 802.11ac baseline results show that video playback delay remains negligible under strong signal conditions (e.g., RSSI ≥ −67 dBm) across all tested bitrates. However, as RSSI degrades, playback delay increases sharply, particularly for higher bitrates. At RSSI values around −85 dBm, playback delays escalate dramatically, ranging from approximately 116 s to over 550 s depending on the video bitrate, which indicates severe buffering and instability. Beyond −87 dBm, wireless connection loss was frequently observed, rendering sustained video playback infeasible.

The 802.11ax testbed measurements in this study span an RSSI range of −48 dBm to −70 dBm. Within this measured range, 802.11ax infrastructure deployments exhibit improved delay stability and link robustness compared to 802.11ac under comparable signal conditions. While performance degradation is still evident at weak RSSI, the results indicate that the scheduling efficiency and enhanced medium access mechanisms of 802.11ax mitigate, but do not fully eliminate, the impact of bad channel conditions.

The baseline comparison highlights relative performance differences between 802.11ac and 802.11ax while avoiding extrapolation beyond the measured 802.11ax operating range. The key differences in playback delay behavior, link robustness, and sensitivity to RSSI are summarized in Table 6. The 802.11ac results are included as a contextual baseline reference rather than as a direct quantitative comparison at RSSI levels not measured for 802.11ax; therefore, any references to −85 dBm in Table 6 reflect baseline-only measurements and qualitative comparative interpretation rather than direct 802.11ax testbed data.

4.3. Comparison of Ad Hoc and Infrastructure Network Topologies

The infrastructure network consistently outperformed the ad hoc configuration. This behavior is consistent with the operational characteristics of infrastructure deployments, including centralized access point coordination and improved handling of contention and interference. In contrast, the ad hoc setup experienced frequent connection drops at RSSI levels of −63 dBm and weaker and demonstrated higher playback delay and jitter.

Playback-delay values reported in Table 7 represent mean values obtained from repeated experimental runs. Each configuration was evaluated multiple times (n = 3–5), depending on link stability and successful playback completion. WCL denotes wireless connection loss, where sustained video playback could not be maintained.

The playback delay values are reported in milliseconds. Wireless connection loss (WCL) indicates a failure to sustain video streaming under the given conditions. The high playback delays observed for ad hoc 2.4 GHz configurations at strong RSSI reflect the absence of centralized coordination and increased contention inherent to peer-to-peer operation, rather than poor signal quality.

The effect of network topology and codec bitrate at various RSSI levels is shown in Table 7. In ad hoc mode, wireless connection loss (WCL) occurred at −63 dBm and −70 dBm across all evaluated bitrates. In contrast, the infrastructure mode maintained video playback under degraded RSSI conditions, albeit with increased delay. The results further reinforce the benefits of infrastructure topology, particularly on the 5 GHz band, where playback continuity was preserved across all tested RSSI levels.

4.4. Simulation Results: Scalability and Client Density

The OMNeT++ simulations provide indicative insight into network behavior under increasing client load. With a small number of video clients (five clients), the average end-to-end delay was approximately 50 milliseconds, while packet loss was already non-negligible (around 25%), reflecting the conservative buffer and traffic model used in the simulation. As the number of video clients increased to 50, the average delay rose to approximately 140 milliseconds and packet loss exceeded 80%, indicating severe congestion and saturation of available network resources. These results highlight the rapid degradation in delay and reliability as client density increases in the simulated environment.

For clarity, all result figures are interpreted comparatively by examining performance trends across both infrastructure and ad hoc modes under identical RSSI, bitrate, and frequency band conditions, enabling the direct visual and analytical comparison of topology-dependent behavior.

Figure 3 illustrates the significant decline in throughput as client density increases. Starting at over 830,000 bps for 5 clients, throughput degrades steadily after 10 clients and falls below 210,000 bps at 50 clients, a drop of more than 75%. This downward trend indicates limited capacity for concurrent transmissions in the simulated wireless network and highlights the challenge of maintaining high data rates in dense environments.

Figure 4 displays the corresponding increase in end-to-end delay, which rises from approximately 55 milliseconds at 5 clients to nearly 137 milliseconds at 50 clients. The growth is non-uniform, with noticeable jumps around 15 and 30 clients, reflecting queue buildup and increased medium access delay under saturated conditions. Latency becomes a critical concern beyond 20 clients, where the observed delay suggests potential degradation in real-time application performance, especially for video traffic.

Packet loss, shown in Figure 5, begins at 25% with 5 clients, surges steeply after 25 clients, and exceeds 80% by the time 50 clients are active. This indicates that the network buffers and medium access control mechanisms are overwhelmed under high traffic conditions and that the wireless medium becomes increasingly unreliable as more users contend for limited airtime. The rising packet drop rate indicates excessive retransmissions and congestion, rendering the network unsuitable for real-time services at higher loads without QoS or traffic shaping mechanisms.

These graphs demonstrate that, under the evaluated traffic model, the simulated wireless network struggles to sustain performance beyond moderate client loads without enhanced traffic management techniques.
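The relative changes behind these trends can be checked with a short calculation. The inputs are the rounded endpoint values quoted in the text for 5 and 50 clients (throughput is stated as "over" 830,000 bps and "below" 210,000 bps, so the true drop is at least as large as computed here); the function name is an assumption for illustration.

```python
def relative_change_percent(start: float, end: float) -> float:
    """Signed percentage change from start to end."""
    return 100.0 * (end - start) / start


# Throughput in bps, 5 clients versus 50 clients (quoted bounds).
print(round(relative_change_percent(830_000, 210_000), 1))  # -74.7

# End-to-end delay in milliseconds, 5 clients versus 50 clients.
print(round(relative_change_percent(55, 137), 1))           # 149.1

# Packet loss is already a percentage, so the change is in percentage points.
print(80 - 25)                                              # 55
```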

4.5. Frequency Band Analysis: 2.4 GHz vs. 5 GHz

The 5 GHz band demonstrated better performance at close range due to its wider channel width and lower interference. However, it was more sensitive to distance and obstacles, leading to connection losses in the ad hoc setup. Conversely, the 2.4 GHz band maintained connectivity over longer distances but with lower throughput. Overall, the findings support the importance of selecting the appropriate band and topology based on the coverage requirements, expected client density, and application type (e.g., real-time video). The validation of the results is discussed next.

5. Results Validation and Discussion

This section provides a detailed discussion of the results, revisits the research questions outlined in Section 1, and maps them to the results obtained:

1. What impact do various received signal strength indicator (RSSI) values, codec bitrates, and network topology have on video playback delays of a typical 802.11ax network for 2.4 GHz and 5 GHz frequency bands?

The findings from the evaluation clearly show that weak RSSI values significantly increase playback delays and reduce throughput. Playback became jerky or failed (WCL) at −63 dBm and −70 dBm in ad hoc setups. Conversely, in infrastructure mode, especially on the 5 GHz band, networks maintained playable performance across all RSSI conditions, though with increased delay at lower strengths.

The baseline comparison between 802.11ac and 802.11ax (Table 6) provides contextual insight into how video playback performance evolves across Wi-Fi generations as channel conditions degrade. Within the RSSI range explicitly measured for 802.11ax, the results indicate improved delay stability and link robustness compared to 802.11ac, particularly in infrastructure deployments. At the same time, the baseline findings highlight that degraded signal strength remains a dominant limiting factor for video-centric applications, as evidenced by the severe performance degradation observed for 802.11ac at very weak RSSI levels. Overall, these observations suggest that system-level enhancements in 802.11ax mitigate, but do not eliminate, the impact of adverse channel conditions, underscoring the continued importance of RSSI-aware deployment and client management strategies.

To corroborate the network-level playback delay metric, application-level startup delay was also examined using timestamps extracted from the media player logs. Consistent trends were observed, with lower startup delay corresponding to scenarios exhibiting reduced playback delay, particularly in infrastructure mode and under stronger RSSI conditions. While detailed QoE metrics such as rebuffering frequency were not the focus of this study, this consistency supports the use of playback delay as a comparative indicator of network responsiveness across the evaluated scenarios.

- 2.

What impact do video QoS parameters (e.g., playback delays) have on the changes in the channel conditions?

Infrastructure networks consistently outperformed ad hoc setups in terms of stability, throughput, and delay. In ad hoc networks, wireless disconnections occurred frequently beyond −63 dBm, while infrastructure setups maintained playable video streams with only moderate delay increases. This highlights the robustness of centralized management for dynamic environments.

- 3.

What is the impact of scaling the number of video clients on QoS parameters in high-density Gigabit Wi-Fi network scenarios?

Simulation results obtained from OMNET++ reveal that as the number of clients increases, network performance deteriorates substantially. Throughput drops by over 75%, end-to-end delay nearly triples, and packet loss exceeds 80% with 50 video clients. These observations indicate that without traffic prioritization and congestion control mechanisms, the simulated WLAN performance is significantly impaired under high user loads.
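The percentages quoted above are relative changes between the lightest and heaviest simulated loads. The following is a minimal sketch of that arithmetic, assuming per-scenario averages have already been exported from the simulator. The function names and the sample numbers are hypothetical placeholders chosen for illustration, not measurements from this study.

```python
def relative_change(baseline: float, loaded: float) -> float:
    """Percentage change from baseline to loaded (negative means a decrease)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (loaded - baseline) / baseline * 100.0


def growth_factor(baseline: float, loaded: float) -> float:
    """Multiplicative growth from baseline to loaded (3.0 means 'tripled')."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return loaded / baseline


# Placeholder inputs only (NOT results from the paper): a throughput that
# falls from 40 to 10 units and a delay that grows from 2 to 6 units.
throughput_change = relative_change(40.0, 10.0)
delay_growth = growth_factor(2.0, 6.0)
print(f"throughput change: {throughput_change:.1f}%")
print(f"delay growth: {delay_growth:.1f}x")
```

Reporting throughput as a relative change and delay as a growth factor keeps the two statements ("drops by over 75%" and "nearly triples") directly comparable across client counts.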

These results align with prior studies that emphasize the reported spectral efficiency of 802.11ax under ideal laboratory conditions. However, this study reveals key real-world limitations that emerge under dynamic wireless conditions. The most pronounced issues arise under degraded RSSI and higher client densities, where packet loss, throughput degradation, and end-to-end delay severely compromise network performance.

The infrastructure network consistently demonstrated better stability and reliability than the ad hoc network. This highlights the importance of centralized access point management, especially for real-time and video-centric applications. The infrastructure mode was able to withstand lower signal strength environments, particularly on the 5 GHz band, reflecting improved stability under centralized access point coordination. The 5 GHz band proved to be more effective for short-range, high-throughput scenarios, while the 2.4 GHz band was more resilient over longer distances. However, the results also indicate that selecting a band alone is insufficient, because performance is equally dictated by channel conditions (RSSI/SNR), bitrate demands, and device capabilities. The simulation results show that network performance under dense client loads is susceptible to bottlenecks. Beyond 20 clients, throughput degradation and increases in latency and packet loss become pronounced. The simulation emulated network congestion and buffer overflow scenarios, highlighting the need for intelligent traffic shaping and Quality of Service (QoS) provisioning in future deployments.

The observed robustness of the 802.11ax testbed under dynamically varying RSSI conditions reflects improved system-level performance relative to legacy configurations. As MAC- and PHY-layer signaling was not directly instrumented, the results are interpreted at the level of end-to-end and application-level behavior rather than attributed to specific 802.11ax mechanisms.

The qualitative analysis of channel conditions (Table 4 and Table 5) correlated well with quantitative playback delays (Table 7). Excellent and good channel states maintained uninterrupted video delivery, while fair and bad channels led to visual degradation and even connection loss. This reinforces that signal strength and qualitative channel condition indicators should be integral parameters in Wi-Fi 6 deployment planning. In addition, subtle differences observed between IPv4 and IPv6 performance suggest the influence of protocol stack overhead, packet handling mechanisms, and device support. These protocol-related effects merit further study to optimize network-layer performance for emerging applications.

Both IPv4 and IPv6 were supported in the experimental setup. For video streaming scenarios considered, no statistically significant performance differences attributable solely to IP version were observed. The relative impact of IPv4 versus IPv6 header overhead was negligible compared to dominant factors such as RSSI variation, channel conditions, and MAC-layer scheduling.

Overall, our study demonstrates that while 802.11ax introduces several technical advancements, practical implementation success hinges on holistic system design incorporating network topology, environmental factors, device density, frequency planning, and adaptive bitrate control. These insights are particularly valuable for campus, enterprise, and high-occupancy residential deployments where robust and reliable wireless connectivity is essential.

Testbed Results Validation: The reliability of the testbed measurements was enhanced by addressing several factors. First, field experiments were consistently conducted during the same weekly time windows to maintain stable environmental conditions, such as occupancy levels in the test area. Second, efforts were made to minimize co-channel interference from surrounding networks or devices by selecting the wireless channel with the least detected traffic. Lastly, each test scenario was executed multiple times to ensure the repeatability and consistency of the collected data.
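The repeatability claim above can be checked numerically by summarizing the repeated runs of each scenario. The sketch below is one way to do so, assuming the playback delays of one scenario are available as a list of numbers; the function name and the coefficient-of-variation metric are illustrative assumptions and are not taken from the study's own procedure.

```python
import statistics
from typing import Dict, Sequence


def summarize_runs(delays: Sequence[float]) -> Dict[str, float]:
    """Mean, sample standard deviation, and coefficient of variation (%)
    of repeated measurements taken for one test scenario."""
    if len(delays) < 2:
        raise ValueError("at least two runs are needed to estimate spread")
    mean = statistics.mean(delays)
    if mean == 0:
        raise ValueError("mean is zero; coefficient of variation is undefined")
    stdev = statistics.stdev(delays)  # sample (n - 1) standard deviation
    return {"mean": mean, "stdev": stdev, "cv_percent": stdev / mean * 100.0}


# Hypothetical usage with placeholder values (not measured data):
summary = summarize_runs([2.0, 2.0, 2.0])
print(summary)
```

A low coefficient of variation across runs of the same scenario indicates that the measurements are repeatable; the acceptable threshold is a judgment call for the experimenter.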

Simulation Model Validation: OMNET++ is considered a dependable open-source simulation platform; however, improper parameter configuration can compromise result accuracy [18, 19]. Initially, the simulation log files were reviewed to confirm error-free execution and smooth operation of the models. To obtain a representative dataset, the simulation was conducted over a duration of one hour. Following this, the setup was examined for network compatibility to ensure the absence of any technical discrepancies. Finally, OMNET++ simulation models were qualitatively compared against field experiment data gathered from two wireless-enabled laptops and an 802.11ax access point. The simulation results exhibit qualitative agreement with the testbed observations, demonstrating consistent trends in throughput degradation and delay increase under comparable load and channel conditions. This supports the use of the simulation model for indicative scalability analysis rather than exact quantitative replication.
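Qualitative agreement of this kind can be expressed as a simple check that two series move in the same direction at every step, without comparing absolute values. The following is a minimal sketch under that assumption; the function names are hypothetical, and the study does not state that this exact test was used.

```python
from typing import List, Sequence


def trend_signs(values: Sequence[float]) -> List[int]:
    """Direction of each step between consecutive points: +1, 0, or -1."""
    return [(b > a) - (b < a) for a, b in zip(values, values[1:])]


def trends_agree(simulated: Sequence[float], measured: Sequence[float]) -> bool:
    """True if both series rise and fall at the same steps.

    Only directions are compared, so the two series may differ in scale.
    """
    if len(simulated) != len(measured):
        raise ValueError("series must cover the same scenarios")
    if len(simulated) < 2:
        raise ValueError("at least two points are needed to define a trend")
    return trend_signs(simulated) == trend_signs(measured)


# Placeholder series (not data from the paper): both decrease at every step.
print(trends_agree([10.0, 8.0, 5.0], [9.5, 7.0, 6.5]))
```

Because only step directions are compared, the check supports the indicative use of the model described above and says nothing about quantitative accuracy.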

7. Concluding Remarks

In this paper, the combined effect of RSSI, bitrate, and network topology on video playback delays in IEEE 802.11ax (Wi-Fi 6) networks was investigated using a combination of real-world testbed experiments and simulation-based analysis. The testbed results demonstrate that, under practical deployment conditions, 802.11ax can exhibit improved robustness to fluctuating RSSI, consistent with its system-level performance enhancements. Complementarily, the simulation study highlights general scalability challenges under increasing client density, showing that performance remains strongly influenced by factors such as RSSI, frequency band, network topology, and offered load. The testbed results show that the infrastructure network outperformed the ad hoc network, maintaining lower playback delays even under weak signal conditions. At strong signal levels (−48 dBm), infrastructure-mode deployments on the 5 GHz band consistently exhibited low playback delay across all evaluated bitrates. In contrast, ad hoc operation on the 2.4 GHz band experienced markedly higher delays, while ad hoc 5 GHz links remained stable only under strong RSSI conditions. Simulation results revealed that network throughput drops by approximately 75% and packet loss exceeds 80% as the number of video clients increases from 5 to 50. The comparison between the 2.4 GHz and 5 GHz bands highlighted performance trade-offs: 5 GHz offers better speed at close range, while 2.4 GHz provides broader but slower coverage. This research provides the following deployment guidelines: (i) use an infrastructure network for video-rich environments and maintain an RSSI above −63 dBm; (ii) apply adaptive bitrate control; (iii) manage active video client density per access point to avoid excessive congestion; and (iv) consider QoS mechanisms, where supported, to prioritize video traffic. These insights support Wi-Fi 6 planning across educational, enterprise, and residential settings.
The inclusion of an 802.11ac baseline further contextualizes these findings, indicating that within the evaluated conditions, IEEE 802.11ax offers improved resilience to degraded RSSI and increased traffic load, while careful network planning remains essential to achieving reliable video performance. Future work will address the impact of mobility on system performance. Cross-vendor hardware testing to verify the consistency of 802.11ax performance, and an evaluation of the readiness of 802.11be (Wi-Fi 7) for high-definition streaming and dynamic environments, are also suggested as future research.
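Deployment guidelines (i) to (iv) can be turned into a simple planning check. The sketch below is illustrative only: the −63 dBm threshold comes from guideline (i), while the limit of 20 video clients per access point is an assumption drawn from the simulation observation that degradation becomes pronounced beyond 20 clients. The class, field, and function names are hypothetical.

```python
from dataclasses import dataclass
from typing import List

MIN_RSSI_DBM = -63.0     # guideline (i): keep client RSSI above this value
MAX_VIDEO_CLIENTS = 20   # ASSUMPTION: simulated degradation grows beyond 20


@dataclass
class DeploymentPlan:
    topology: str               # "infrastructure" or "ad hoc"
    min_client_rssi_dbm: float  # weakest expected client RSSI
    video_clients_per_ap: int   # concurrent video clients on one AP
    adaptive_bitrate: bool      # guideline (ii)
    qos_enabled: bool           # guideline (iv)


def check_plan(plan: DeploymentPlan) -> List[str]:
    """Return one warning per guideline the plan does not meet."""
    warnings = []
    if plan.topology.lower() != "infrastructure":
        warnings.append("use an infrastructure network for video traffic")
    if plan.min_client_rssi_dbm <= MIN_RSSI_DBM:
        warnings.append(f"keep RSSI above {MIN_RSSI_DBM:.0f} dBm")
    if not plan.adaptive_bitrate:
        warnings.append("apply adaptive bitrate control")
    if plan.video_clients_per_ap > MAX_VIDEO_CLIENTS:
        warnings.append("reduce video client density per access point")
    if not plan.qos_enabled:
        warnings.append("enable QoS prioritization where supported")
    return warnings


# Hypothetical plan that violates every guideline:
for message in check_plan(DeploymentPlan("ad hoc", -70.0, 50, False, False)):
    print("WARNING:", message)
```

The client limit should be tuned per deployment, since the appropriate density depends on bitrate, channel width, and hardware.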