1. Introduction

The expansion of renewables in modern power systems and the ongoing digital transformation of industry are pushing power utilities to move from energy-based settlement toward data- and model-driven operation for upstream and downstream industries [1]. Here, upstream industries refer to raw-material and basic-material sectors at the front of the value chain (e.g., mining, steel, chemicals, and cement), whereas downstream industries refer to mid- to end-manufacturing and application-oriented sectors (e.g., equipment manufacturing, building materials, consumer electronics, and renewables-related manufacturing). Industrial power demand data now form high-frequency, multi-year records across regions, sectors, and user levels and have become a key resource for describing the operating rhythm of industrial value chains and the structure of energy use [2]. Survey studies show that exploiting structural features and cross-scale temporal dependencies in user-side load data is essential for refined load forecasting and planning-oriented decision support [3,4]. However, the field still lacks a unified medium- and long-term modeling and forecasting framework for electricity demand across upstream and downstream industries that also balances forecasting accuracy, computational efficiency, and structural interpretability [5].

To further motivate the selection of representative sectors in this study, Table 1 summarizes the annual final energy consumption of major manufacturing sub-sectors in China in 2019. It shows that the ferrous metals (steel) and chemical sub-sectors are among the largest energy consumers; they are therefore adopted as representative high-intensity upstream sectors in our case studies. In contrast, lower-energy sub-sectors (e.g., textiles) are included to reflect downstream diversity and to stress-test the proposed framework under heterogeneous industrial rhythms.

In terms of load characteristics, electricity consumption in upstream and downstream industries simultaneously involves short-term intraday fluctuations at 15 min or even finer resolutions, as well as multi-scale periodic structures at weekly, monthly, quarterly, and seasonal horizons. At the same time, differences in production processes, operating conditions, and equipment configurations across industries lead to pronounced diversity and hierarchical structure in the resulting load series [6]. On the one hand, upstream sectors such as steel, chemicals, and cement typically exhibit high load levels, long operating cycles, and relatively stable shift patterns; their intraday fluctuations are relatively smooth, while weekly, monthly, and seasonal periodicity is more prominent [7]. On the other hand, downstream sectors such as equipment manufacturing, building materials, and consumer electronics are more strongly influenced by market demand, order fluctuations, and environmental factors, and they thus display more pronounced seasonality and uncertainty [8]. This multi-sector, multi-scale, multi-pattern nature imposes higher requirements on a unified modeling framework, which must be capable of capturing complex periodic structures while maintaining scalability and interpretability in multi-sector and multi-user scenarios.

Traditional time series methods, such as ARIMA and its seasonal extensions, have demonstrated advantages for nearly linear, weakly nonstationary load series [9,10]. By parameterizing trend and seasonal components, such models can achieve reasonably stable performance in short- and medium-term forecasting. However, they often struggle when confronted with strong nonlinearities, superposition of multiple periods, and structural breaks, and they have difficulty capturing complex patterns that span multiple time scales simultaneously. In recent years, deep learning methods based on LSTM, GRU, attention mechanisms, and Transformer architectures have achieved notable progress in short- and medium-term load forecasting [11]. These models can alleviate nonlinear and multi-scale issues by automatically extracting high-dimensional features from raw sequences through deep network structures. Nevertheless, they typically involve a large number of parameters, incur high training costs, and are sensitive to sample size and hyperparameter tuning. Moreover, their internal structures are difficult to interpret directly, as is the mapping from prediction results to physically and operationally meaningful concepts. This lack of transparency poses challenges in power system planning and learning algorithm analysis, where interpretability and communicability are particularly important [12,13,14].

To address these challenges, a promising line of research returns to the perspective of system identification and frequency-domain analysis, treating load time series as dynamic signals formed by the superposition of several dominant periodic components [15]. The need to move from purely data-driven black-box models to interpretable, mechanistic representations of dynamic systems is driving a significant evolution in time series analysis [16]. This shift is particularly important in energy forecasting, where models must not only predict but also offer mathematical insight into the underlying mechanisms and operational rhythms of complex industrial loads. Modern system identification (SI) addresses this need by offering a principled approach to constructing parsimonious models that are consistent with real-world behavior rather than merely stacking parameters. The foundation of modern SI was laid by seminal works that formalized the construction of mathematical models from observed data, particularly the maximum likelihood method [17] and the prediction error method (PEM) [18,19]. These methods established rigorous statistical frameworks for estimating model parameters, moving beyond simple curve fitting.

Contemporary system identification methods, such as Sparse Bayesian Learning (SBL) and Sparse Identification of Nonlinear Dynamics (SINDy), offer an alternative paradigm by focusing on discovering the governing equations or sparse representations that describe the system’s evolution. Unlike purely data-driven black-box models, these techniques aim to distill complex dynamics into a compact, interpretable mathematical form, often involving ordinary or partial differential equations or, in the time series context, a minimal set of basis functions. The core concept of using kernel methods and achieving sparsity through generalized priors, as seen in the Relevance Vector Machine (RVM) [20] and subsequent SBL extensions [21], provides a statistically robust way to select only the most relevant features. SBL, for instance, can be used for sparse feature selection in a regression context, identifying the past observations or periodic components (e.g., Fourier basis functions) that contribute most to the current load. Meanwhile, the development of SINDy [22,23] has revitalized the application of identification to complex, nonlinear time series, including those relevant to industrial processes and energy systems. These data-driven approaches use $\ell_1$ or other sparsity-inducing norms to find the most parsimonious representation of the system dynamics. Their success relies heavily on the maturity of earlier identification work, such as Ljung’s system identification framework [24] and the fundamental principles of optimal control and estimation [25].

Similarly, the spirit of SINDy, which discovers parsimonious models by simultaneously identifying the relevant terms from a library of candidate functions and the coefficients of those terms, can be adapted to time series decomposition. By projecting the load data onto a carefully constructed dictionary (which may include trigonometric functions, wavelets, or other domain-specific patterns), these identification techniques can automatically select a minimal set of multi-scale periodic components together with their amplitudes and phases. This approach inherently balances model complexity and interpretability: the resulting model is a sum of physically meaningful, identifiable periodic oscillations, offering a clear link between the forecast and the observed operational rhythms (e.g., daily shifts, weekly cycles, seasonal variations) of the upstream and downstream industries. This system-identification-based decomposition provides a structural understanding that is difficult to obtain from opaque deep learning models while explicitly handling the multi-scale periodicity that challenges traditional ARIMA models [26]. Furthermore, subspace identification methods [27] provide computationally efficient ways to model high-dimensional, multi-output systems, which is highly relevant for multi-sector load forecasting. The ongoing convergence of robust identification methods, sparse optimization, and control theory [28] points toward interpretable and scalable solutions for new-type power systems and indicates that system identification holds considerable potential for building reliable predictive models in time series forecasting across many critical domains.

Building on these insights, this paper adopts a system identification perspective and proposes a new load modeling approach for upstream and downstream industries that emphasizes both interpretability and scalability. The approach is centered on daily freeze electricity time series at the user and sector levels and draws on state-of-the-art learning methods [29,30,31]. Specifically, we propose the SIABF model and follow a technical route of daily aggregation of high-frequency data, frequency-domain analysis, sparse identification, and long-term extrapolation. First, 15 min enterprise-side load measurements are aggregated to the daily scale to construct daily freeze series that reflect the electricity intensity of enterprises or sectors. This reduces modeling dimensionality while preserving long-term trends and periodic information, and it aligns with existing practice in day-level medium- and long-term load forecasting [32]. Second, discrete Fourier transforms are applied to the daily freeze series to extract dominant frequency components, from which a library of sinusoidal basis functions with clear frequency-domain interpretation is constructed, and a spectral complexity index is introduced to quantify the periodic complexity of different series. Third, a sparse regression framework with $\ell_1$ regularization is employed to automatically select a small set of key basis functions and their coefficients from the candidate library, resulting in a compact and interpretable representation of the load series.

Structurally, the SIABF model is explicitly represented as a linear combination of dominant periodic basis functions, which facilitates interpretation of industrial electricity consumption behaviors from the perspectives of daily, weekly, and seasonal cycles. From the identification standpoint, sparsity constraints enable automatic basis selection, thereby enhancing generalization and robustness in line with existing sparse system identification and compressive sensing methods [33,34]. In terms of computational complexity, both frequency-domain analysis and sparse regression scale well with data size, and the identified pairs of adaptive basis functions and coefficients can be used to extrapolate daily freeze series of upstream and downstream industries to medium- and long-term horizons, forming a stable day-level long-term forecasting mechanism suitable for large-scale deployment across multiple sectors and numerous users.

The remainder of this paper is organized as follows. Section 2 describes the structure of upstream–downstream industrial electricity data, the preprocessing workflow, and the forecasting task. Section 3 develops the adaptive basis sparse system identification framework, and Section 4 presents model-input design and extensions across upstream and downstream industries. Section 5 reports the experimental setup and results on real industrial datasets. Section 6 discusses empirical findings, limitations, and implications for deployment. Finally, Section 7 concludes this paper and outlines directions for large-scale validation and application-oriented extensions.

2. Electricity Data of Upstream and Downstream Industries and Problem Formulation

In the new-type power systems and coordinated development along industrial value chains, the large-scale user-side electricity data collected by power utilities not only reflect the internal production rhythms and equipment operation patterns of individual enterprises but also embody the supply–demand coupling among upstream and downstream industries. To construct a long-term forecasting model with good interpretability on this basis, it is necessary to systematically sort out the origin, structure, and quality of the electricity data for upstream and downstream industries and to clarify the modeling objectives and constraints within a unified mathematical framework. This section focuses on these issues, elaborating on the organization of upstream and downstream industry electricity data, the preprocessing pipeline, the construction of daily freeze values, and the formalization of the forecasting problem.

2.1. Industrial Chain Structure and User Hierarchy

Consider an industrial chain consisting of multiple sectors,

$$\mathcal{S} = \{1, 2, \dots, S\},$$

where some sectors are located in the upstream part of the chain, such as steel, chemicals, and basic raw materials, while others are in the midstream or downstream part, such as equipment manufacturing, building materials, and end-product manufacturing. For each sector $s \in \mathcal{S}$, the utility typically maintains electricity data for a number of users (enterprises); the corresponding user set is denoted by $\mathcal{U}_s$, and the global user set is then

$$\mathcal{U} = \bigcup_{s \in \mathcal{S}} \mathcal{U}_s.$$

At a finer granularity, each enterprise can be further decomposed into production lines, workshops, or critical equipment. For example, an upstream steel enterprise may be divided into processes such as ironmaking, steelmaking, and rolling, whereas a downstream building materials enterprise may be divided into production lines, packaging lines, and warehousing and logistics subsystems. Correspondingly, electricity load data can be modeled at the whole-enterprise level or at the workshop/equipment level, forming a multi-level data structure spanning “sector–enterprise–equipment”.

From a modeling perspective, such a hierarchical structure has two important implications. First, load patterns differ significantly across sectors and enterprises. Upstream sectors are often characterized by high load levels, long cycles, and pronounced shift structures, while downstream sectors are more prone to demand volatility and seasonality. Second, even within the same sector, different enterprises may exhibit heterogeneity in baseline load levels, periodic structures, and process flows. This calls for a unified modeling framework with enterprise-specific parameterization. Therefore, subsequent modeling in this paper will mainly be described at the “sector–enterprise” two-level structure, and the coupling between upstream and downstream sectors will be understood as mutual influence and transmission among different sectors.

2.2. Measurement Data and Preprocessing Pipeline

For any user $i \in \mathcal{U}$, the utility typically samples active power at fixed intervals (e.g., every 15 min), yielding 96 observations $\{P_{i,d,k}\}_{k=1}^{96}$ for day $d$, where $P_{i,d,k}$ denotes the average active power during the $k$-th 15 min interval. These records, together with time stamps, date labels, user identifiers, and other metadata, form the basis for aggregation at the daily, weekly, or sector level. To better characterize production and economic rhythms, auxiliary features can be derived from calendar information (weekday/weekend, public holidays, month/quarter, and heating or high-temperature seasons), environmental conditions (daily maximum, minimum, and mean temperature, precipitation, humidity), and, where available, production-related information such as major maintenance windows, process changes, and order peaks. The sparse identification with adaptive basis functions proposed in this work focuses on extracting periodic structures from the load series themselves, but the framework is conceptually compatible with such exogenous variables and can be extended to include them as model inputs at the implementation stage.

In practice, meter data suffer from missing values, outliers, duplicated records and time misalignment, so a systematic preprocessing pipeline is required. Duplicates at the same user and time stamp are filtered using data-quality flags and acquisition priorities. Sporadic missing points are imputed by local time-neighborhood K-nearest-neighbor (KNN) averaging, while longer gaps are filled using typical-day load curves constructed from neighboring dates (e.g., separate weekday/weekend profiles); enterprises with pervasive data loss or long-term shutdowns can be removed or flagged according to business rules. Outliers—such as short spikes, prolonged abnormal loads, or patterns violating production logic—are detected using sliding-window statistics (e.g., z-scores based on mean and standard deviation) and robust measures (interquartile range or median absolute deviation), possibly in combination with comparisons to historical typical curves of the same day type. Erroneous points are then replaced by interpolated values or typical profiles, whereas genuine production events are retained and annotated. Finally, all multi-source series are aligned to a common time axis to correct hour shifts, time-zone differences, and resolution inconsistencies; for users with hourly data, 15 min series can be reconstructed by uniform distribution or refinement via typical curves. After missing-value handling, outlier correction, and time alignment, a high-quality, temporally consistent 15 min load series is obtained, which underpins the construction of daily freeze values and subsequent time series modeling.
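The outlier step described above can be illustrated with a small sketch. It assumes one particular combination of the options mentioned in the text: a centered sliding window, a robust z-score based on the median absolute deviation (MAD), and linear interpolation as the replacement rule. The function names, the window length, and the threshold of 3.5 are hypothetical choices for illustration, not values taken from the paper.

```python
from statistics import median

def flag_outliers_mad(values, window=9, threshold=3.5):
    """Flag points whose robust z-score in a centered sliding window exceeds `threshold`."""
    half = window // 2
    flags = []
    for idx, v in enumerate(values):
        neighborhood = values[max(0, idx - half): idx + half + 1]
        med = median(neighborhood)
        mad = median([abs(x - med) for x in neighborhood])
        if mad == 0.0:
            # Degenerate window (at least half the points equal the median): flag any deviation.
            flags.append(v != med)
        else:
            # 0.6745 rescales the MAD so the score is comparable to a Gaussian z-score.
            flags.append(abs(0.6745 * (v - med) / mad) > threshold)
    return flags

def interpolate_flagged(values, flags):
    """Replace flagged points by linear interpolation between the nearest unflagged neighbors."""
    cleaned = list(values)
    good = [i for i, f in enumerate(flags) if not f]
    for i, flagged in enumerate(flags):
        if not flagged:
            continue
        left = max((g for g in good if g < i), default=None)
        right = min((g for g in good if g > i), default=None)
        if left is None and right is None:
            continue  # nothing reliable to interpolate from
        if left is None:
            cleaned[i] = values[right]
        elif right is None:
            cleaned[i] = values[left]
        else:
            w = (i - left) / (right - left)
            cleaned[i] = (1 - w) * values[left] + w * values[right]
    return cleaned

# Toy 15 min readings (kW) with one short spike at position 4.
load = [10.0, 10.5, 9.8, 10.2, 55.0, 10.1, 9.9, 10.3, 10.0]
flags = flag_outliers_mad(load)
cleaned = interpolate_flagged(load, flags)
```

In a real pipeline, flagged points would additionally be checked against production logs so that genuine events are annotated rather than overwritten, as the text requires.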

2.3. Formalization of Forecasting Tasks and Modeling Objectives

Although 15 min data can finely depict an enterprise’s production status and equipment on/off behavior, long-term analysis of upstream and downstream industry electricity demand is more concerned with evolution at daily, weekly, monthly, and even longer time scales. It is therefore useful to aggregate high-frequency data to the daily scale and construct so-called “daily freeze values”.

For user $i$ on day $d$, the daily freeze electricity consumption is defined as

$$y_{i,d} = \sum_{k=1}^{96} P_{i,d,k}\,\Delta t, \tag{2}$$

where $P_{i,d,k}$ is the preprocessed 15 min average active power and $\Delta t = 0.25$ h is the sampling interval. Equation (2) compresses the intraday fine-grained fluctuations into a single scalar that preserves the total consumption, thereby providing a concise and intuitive representation for medium- and long-term trend and dominant-period identification.
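As a minimal sketch of this aggregation, the snippet below converts a flat list of preprocessed 15 min average power readings into daily freeze values. It assumes power in kW and an interval of 0.25 h, so the result is in kWh; the function name and argument names are hypothetical.

```python
def daily_freeze(power_kw, interval_hours=0.25, per_day=96):
    """Sum the 15 min average powers of each day and convert to energy (kWh)."""
    if len(power_kw) % per_day != 0:
        raise ValueError("expected a whole number of days of 15 min readings")
    return [
        sum(power_kw[start:start + per_day]) * interval_hours
        for start in range(0, len(power_kw), per_day)
    ]

# Two days of constant load: 100 kW on day 1, 40 kW on day 2.
readings = [100.0] * 96 + [40.0] * 96
freeze_values = daily_freeze(readings)
```

A constant 100 kW load over 24 h gives 2400 kWh, which is a quick sanity check that the aggregation preserves total consumption.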

On this basis, multi-scale representations can be constructed as needed. At the weekly scale, the daily freeze values of a user in the same week can be aggregated to form a weekly freeze value, defined as the sum of all daily freeze values within that week, thus characterizing the weekly load level. Similarly, monthly freeze values can be defined, or sliding-window smoothing can be applied to the daily series to highlight seasonality and long-term trends while attenuating short-term random disturbances. In addition, the daily freeze series can be decomposed into a long-term baseline component and a short-term fluctuation component, thereby distinguishing structural changes from transient variations and supporting forecasting and scenario analysis at different time scales.

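At the sector level, for sector $s \in \mathcal{S}$, one can select $N_s$ representative users from $\mathcal{U}_s$ and construct a sector-level load vector

$$\mathbf{y}_{s,d} = [\,y_{s,1,d},\; y_{s,2,d},\; \dots,\; y_{s,N_s,d}\,]^\top \in \mathbb{R}^{N_s},$$

where $y_{s,n,d}$ is the daily freeze value of the $n$-th representative user on day $d$, thereby obtaining a multivariate time series $\{\mathbf{y}_{s,d}\}_{d=1}^{D}$. In this way, the evolution of sector-level load and the heterogeneity among representative enterprises can be jointly captured.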

Given the above data construction, the load forecasting problem for upstream and downstream industries can be formalized as a standard time series forecasting task. For a single user $i$, the observed daily freeze series over the study period is

$$\{y_{i,d}\}_{d=1}^{D}.$$

During model training, the first $T$ daily freeze values are taken as historical observations to build the forecasting model. The forecasting task is then to estimate the electricity consumption for the next $H$ days given this historical window, that is,

$$\{\hat{y}_{i,T+h}\}_{h=1}^{H} = f\left(y_{i,1}, \dots, y_{i,T}\right),$$

where $f$ denotes the forecasting model, which yields the predicted trajectory of daily load for user $i$ over the forecast horizon.

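At the sector level, for the sector load vector series $\{\mathbf{y}_{s,d}\}$, the forecasting task is to estimate the future $H$ days of sector-level load vectors given a historical window of length $T$,

$$\{\hat{\mathbf{y}}_{s,T+h}\}_{h=1}^{H} = F\left(\mathbf{y}_{s,1}, \dots, \mathbf{y}_{s,T}\right),$$

where $F$ denotes the sector-level forecasting model and each $\hat{\mathbf{y}}_{s,T+h}$ is an $N_s$-dimensional vector representing the predicted electricity consumption of the representative enterprises in sector $s$.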

Taking into account the upstream–downstream structure of the industrial chain, joint multi-sector forecasting tasks can also be defined. Let $\mathcal{S}_{\mathrm{up}}$ and $\mathcal{S}_{\mathrm{down}}$ denote the sets of upstream and downstream sectors, respectively. A joint time series

$$\{\mathbf{y}_{s,d} : s \in \mathcal{S}_{\mathrm{up}} \cup \mathcal{S}_{\mathrm{down}}\}_{d=1}^{D}$$

can then be employed to build models that simultaneously capture the dynamics of multiple sectors and identify their shared periodic structures. On this basis, one can analyze how common dominant frequencies and long-term trends are reflected across different sectors and how these patterns can be exploited to improve forecasting performance in a joint modeling setting. Such modeling provides a quantitative basis for medium- and long-term planning and capacity allocation decisions of the power system.

Building upon this modeling foundation, the next section will develop a sparse system identification and forecasting framework with adaptive basis functions to extract frequency-domain structures and perform long-term extrapolation of industrial electricity load time series.

3. Sparse System Identification Framework with Adaptive Basis Functions

Based on the data construction and forecasting task definition in the previous section, this section develops a sparse system identification framework with adaptive basis functions for long-term electricity load modeling of upstream and downstream industries. The central idea is to first extract dominant periodic components from the daily freeze series through frequency-domain analysis, then construct a compact yet expressive basis function library, and finally perform sparse coefficient identification so that only a small number of basis components are retained. In this way, the model captures the essential periodic structure of industrial loads while remaining interpretable and computationally efficient.

3.1. Frequency-Domain Analysis and Adaptive Basis Construction

Consider a univariate daily freeze load series of length $D$ for a given user or sector,

$$\{y_d\}_{d=1}^{D}.$$

For convenience, this series can be regarded as a realization of a discrete-time signal defined on the index set $\{1, 2, \dots, D\}$. To reveal its periodic structure, the series is first transformed into the frequency domain by the discrete Fourier transform (DFT). The DFT at frequency index $k$ is given by

$$Y_k = \sum_{d=1}^{D} y_d\, e^{-\mathrm{i}\,\omega_k d}, \qquad k = 0, 1, \dots, D-1, \tag{6}$$

where $\omega_k = 2\pi k / D$ is the angular frequency and $\mathrm{i}$ is the imaginary unit. The amplitude spectrum is defined as

$$A_k = |Y_k|$$

and characterizes the contribution of each frequency component to the overall series.

In practice, only a finite number of frequencies carry substantial energy. To quantify the concentration of energy in the leading frequencies, a simple spectral complexity index

is constructed. Let $A_{(1)}, A_{(2)}, \dots, A_{(M)}$ denote the amplitudes sorted in descending order, that is, $A_{(1)} \ge A_{(2)} \ge \cdots \ge A_{(M)}$, where $M$ is the number of distinct frequencies considered. The index

is defined as

When the index is close to one, the spectrum is dominated by a few leading frequencies and the periodic structure is relatively simple. When it is significantly smaller, energy is more evenly spread across frequencies and the periodic structure is more complex. This index provides a guideline for selecting the number of basis frequencies used in the subsequent modeling.
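To make the idea of spectral concentration concrete, the sketch below computes an amplitude spectrum with a direct DFT and then evaluates one plausible concentration measure: the share of squared amplitude carried by the `top_m` largest components. This specific form, the exclusion of the zero-frequency term, and the names `amplitude_spectrum` and `spectral_concentration` are assumptions made for illustration; the paper's own index may be defined differently.

```python
import cmath
import math

def amplitude_spectrum(series):
    """Amplitudes |Y_k| for k = 1..D//2 (the constant term k = 0 is skipped)."""
    D = len(series)
    amps = []
    for k in range(1, D // 2 + 1):
        Y_k = sum(y * cmath.exp(-2j * math.pi * k * d / D) for d, y in enumerate(series))
        amps.append(abs(Y_k))
    return amps

def spectral_concentration(amps, top_m):
    """Assumed index: fraction of spectral energy in the top_m largest amplitudes."""
    energy = sorted((a * a for a in amps), reverse=True)
    total = sum(energy)
    return sum(energy[:top_m]) / total if total > 0 else 0.0

# Four weeks of a purely weekly pattern: one dominant frequency (k = 4 for D = 28).
weekly = [10.0 + 3.0 * math.sin(2 * math.pi * d / 7) for d in range(28)]
amps_weekly = amplitude_spectrum(weekly)
index_weekly = spectral_concentration(amps_weekly, top_m=1)

# Weekly plus fortnightly pattern of equal strength: energy split over two frequencies.
mixed = [math.sin(2 * math.pi * d / 7) + math.sin(2 * math.pi * d / 14) for d in range(28)]
index_mixed = spectral_concentration(amplitude_spectrum(mixed), top_m=1)
```

In this toy case the purely weekly series yields an index near one, while the two-tone series yields about one half, matching the qualitative reading given in the text.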

Based on the amplitude spectrum, a set of dominant frequencies is selected. Suppose that $K$ frequency indices are chosen, denoted by $k_1, k_2, \dots, k_K$, corresponding to angular frequencies $\omega_{k_1}, \omega_{k_2}, \dots, \omega_{k_K}$. The normalized frequency of the $j$-th component can be defined as

$$f_j = \frac{\omega_{k_j}}{2\pi} = \frac{k_j}{D}, \qquad j = 1, \dots, K.$$

For each normalized frequency $f_j$, two real-valued basis functions are constructed,

$$\phi_{2j-1}(d) = \sin(2\pi f_j d), \qquad \phi_{2j}(d) = \cos(2\pi f_j d). \tag{8}$$

This leads to a basis library containing $2K$ sinusoidal functions, each corresponding to a dominant oscillatory mode of the series. These basis functions are interpretable because each pair captures a periodic pattern characterized by a specific period and phase shift.

Collecting all basis functions into a matrix yields

$$\boldsymbol{\Phi} = \big[\phi_\ell(d)\big]_{d = 1, \dots, D;\ \ell = 1, \dots, 2K} \in \mathbb{R}^{D \times 2K}, \tag{9}$$

which serves as the design matrix for the subsequent sparse identification step. The number of basis functions $2K$ can be determined adaptively by starting from a moderate upper bound and adjusting it according to the spectral complexity index and cross-validation performance.
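The basis construction can be sketched as follows. The snippet assumes that amplitudes are given for frequency indices $k = 1, 2, \dots$ (zero frequency excluded), that the $K$ largest are kept, and that each kept frequency contributes a sine column followed by a cosine column. The function names and the column ordering are illustrative assumptions.

```python
import math

def dominant_indices(amplitudes, K):
    """Return the K frequency indices k (1-based, zero frequency excluded) with largest amplitude."""
    ranked = sorted(range(len(amplitudes)), key=lambda j: amplitudes[j], reverse=True)
    return sorted(j + 1 for j in ranked[:K])

def sinusoidal_basis(freq_indices, D, days):
    """Each row is [sin(2*pi*f_1*d), cos(2*pi*f_1*d), ..., sin(2*pi*f_K*d), cos(2*pi*f_K*d)], f_j = k_j / D."""
    rows = []
    for d in days:
        row = []
        for k in freq_indices:
            angle = 2.0 * math.pi * (k / D) * d
            row.extend((math.sin(angle), math.cos(angle)))
        rows.append(row)
    return rows

# Hypothetical amplitudes for k = 1..5; k = 4 and k = 2 dominate.
amps = [0.1, 5.0, 0.2, 9.0, 0.3]
selected = dominant_indices(amps, K=2)
Phi = sinusoidal_basis(selected, D=28, days=range(1, 29))    # design matrix, 28 x 4
phi_future = sinusoidal_basis(selected, D=28, days=[29])[0]  # same functions at a future day
```

Because the same function evaluates the basis at any day index, the design matrix for training and the basis vectors for future days are produced by one code path, which is what makes the later extrapolation step a simple substitution.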

3.2. Sparse Coefficient Identification and Long-Term Forecasting Mechanism

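Given the basis matrix $\boldsymbol{\Phi}$ in (9) and the daily freeze vector $\mathbf{y} = [y_1, y_2, \dots, y_D]^\top$, the load series can be approximated as a linear combination of basis functions,

$$y_d \approx \sum_{\ell=1}^{2K} \theta_\ell\, \phi_\ell(d), \qquad d = 1, \dots, D, \tag{10}$$

where $\theta_\ell$ is the coefficient associated with the $\ell$-th basis function. In matrix form, this model can be written as

$$\mathbf{y} = \boldsymbol{\Phi}\boldsymbol{\theta} + \boldsymbol{\varepsilon}, \tag{11}$$

where $\boldsymbol{\theta} = [\theta_1, \dots, \theta_{2K}]^\top$ is the coefficient vector and $\boldsymbol{\varepsilon}$ is the residual term.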

To avoid overfitting and enhance interpretability, the model encourages sparsity in $\boldsymbol{\theta}$ by introducing an $\ell_1$-type regularization. The coefficient identification problem is formulated as

$$\hat{\boldsymbol{\theta}} = \arg\min_{\boldsymbol{\theta}} \; \frac{1}{2}\,\|\mathbf{y} - \boldsymbol{\Phi}\boldsymbol{\theta}\|_2^2 + \lambda\,\|\boldsymbol{\theta}\|_1, \tag{12}$$

where $\|\cdot\|_2$ is the Euclidean norm, $\|\cdot\|_1$ is the $\ell_1$ norm, and $\lambda > 0$ is a regularization parameter controlling the degree of sparsity. This optimization problem is a standard LASSO formulation. In practice, $\lambda$ is selected by time series cross-validation on a validation segment (e.g., a blocked split or a rolling-origin split), and we choose the value that achieves low validation error while retaining a sparse set of dominant periodic bases (see Algorithm 1). We adopt the $\ell_1$ penalty in this work due to its convexity and computational efficiency, which are important for large-scale deployment. Other sparse penalties, such as the elastic net to handle correlated bases and structured sparsity to promote shared components across enterprises, can be incorporated in the same framework, while exploring nonconvex penalties for reduced shrinkage bias is left for future work.
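A compact way to see how the $\ell_1$ penalty prunes basis functions is a coordinate descent solver with soft-thresholding. The sketch below assumes the objective $\tfrac{1}{2}\|\mathbf{y} - \boldsymbol{\Phi}\boldsymbol{\theta}\|_2^2 + \lambda\|\boldsymbol{\theta}\|_1$ (the scaling of the data-fit term is an assumption) and uses plain Python lists; `lasso_cd` and `soft_threshold` are hypothetical names, and a production system would more likely rely on an established solver.

```python
import math

def soft_threshold(x, lam):
    """Proximal operator of lam * |x|."""
    if x > lam:
        return x - lam
    if x < -lam:
        return x + lam
    return 0.0

def lasso_cd(Phi, y, lam, n_iter=200):
    """Cyclic coordinate descent for 0.5 * ||y - Phi @ theta||^2 + lam * ||theta||_1."""
    n, p = len(Phi), len(Phi[0])
    theta = [0.0] * p
    residual = list(y)  # equals y - Phi @ theta for theta = 0
    col_sq = [sum(Phi[d][j] ** 2 for d in range(n)) for j in range(p)]
    for _ in range(n_iter):
        for j in range(p):
            if col_sq[j] == 0.0:
                continue
            # Correlation of column j with the residual that excludes its own contribution.
            rho = sum(Phi[d][j] * residual[d] for d in range(n)) + col_sq[j] * theta[j]
            new_value = soft_threshold(rho, lam) / col_sq[j]
            delta = new_value - theta[j]
            if delta != 0.0:
                for d in range(n):
                    residual[d] -= Phi[d][j] * delta
                theta[j] = new_value
    return theta

# Toy example: 56 days, candidate periods of 7 days (k = 8) and 56/3 days (k = 3).
D = 56
days = range(1, D + 1)
Phi = [[math.sin(2 * math.pi * 8 * d / D), math.cos(2 * math.pi * 8 * d / D),
        math.sin(2 * math.pi * 3 * d / D), math.cos(2 * math.pi * 3 * d / D)] for d in days]
y = [2.0 * row[0] for row in Phi]  # only the weekly sine is truly present
theta_hat = lasso_cd(Phi, y, lam=1.0)
```

In this example only the weekly sine coefficient survives, slightly shrunk toward zero, which illustrates both the selection effect and the shrinkage bias mentioned in the text.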

Algorithm 1 System Identification in Adaptive Bayesian Framework (SIABF)

Require: Daily freeze series $\{y_d\}_{d=1}^{D}$ for a user or sector; maximum number of dominant frequencies $K_{\max}$; forecast horizon $H$; candidate regularization set $\Lambda$.

Ensure: Sparse coefficient vector (or matrix) $\hat{\boldsymbol{\theta}}$ (or $\hat{\boldsymbol{\Theta}}_s$), adaptive basis functions $\{\phi_\ell\}$, and forecasts $\hat{y}_d$ (or $\hat{\mathbf{y}}_{s,d}$).

1: Daily series preparation: Construct the daily freeze series from preprocessed 15 min load data. Optionally split the series into a training segment and a validation segment.

2: Frequency-domain analysis: Compute the discrete Fourier transform of the training segment using (6), obtain the amplitude spectrum $A_k$, and sort it in descending order. Compute the spectral complexity index via (7) and determine a candidate range for the number of dominant frequencies $K \le K_{\max}$.

3: Adaptive basis construction: Select the $K$ dominant frequencies with the largest amplitudes and compute their normalized frequencies $f_j$. For each $f_j$, construct the sinusoidal basis functions according to (8) and assemble the design matrix $\boldsymbol{\Phi}$ as in (9).

4: for each candidate $\lambda \in \Lambda$ do

5: Sparse coefficient identification (univariate case): Solve the LASSO problem

$$\hat{\boldsymbol{\theta}}(\lambda) = \arg\min_{\boldsymbol{\theta}} \; \frac{1}{2}\,\|\mathbf{y} - \boldsymbol{\Phi}\boldsymbol{\theta}\|_2^2 + \lambda\,\|\boldsymbol{\theta}\|_1$$

to obtain a sparse coefficient vector $\hat{\boldsymbol{\theta}}(\lambda)$.

6: Sparse coefficient identification (multivariate sector case, optional): For a sector matrix $\mathbf{Y}_s$, solve

$$\hat{\boldsymbol{\Theta}}_s(\lambda) = \arg\min_{\boldsymbol{\Theta}} \; \frac{1}{2}\,\|\mathbf{Y}_s - \boldsymbol{\Phi}\boldsymbol{\Theta}\|_F^2 + \lambda\,\|\boldsymbol{\Theta}\|_{1,1}$$

to obtain the sparse coefficient matrix $\hat{\boldsymbol{\Theta}}_s(\lambda)$.

7: Validation (if a validation segment is available): Use the identified coefficients to generate forecasts on the validation segment, compute prediction errors (for example, mean absolute percentage error), and record the performance associated with $\lambda$.

8: end for

9: Hyperparameter selection: Select the $\lambda^\star \in \Lambda$ that best balances forecasting accuracy and sparsity based on validation performance. Fix the corresponding basis matrix $\boldsymbol{\Phi}$ and coefficients $\hat{\boldsymbol{\theta}}$.

10: Long-term forecasting: For each future day $d = D+1, \dots, D+H$, evaluate the basis vector

$$\boldsymbol{\phi}(d) = [\phi_1(d), \dots, \phi_{2K}(d)]^\top$$

and compute the forecast $\hat{y}_d = \boldsymbol{\phi}(d)^\top \hat{\boldsymbol{\theta}}$.

11: Long-term forecasting (sector case, optional): For each future day $d = D+1, \dots, D+H$, compute

$$\hat{\mathbf{y}}_{s,d} = \hat{\boldsymbol{\Theta}}_s^\top \boldsymbol{\phi}(d),$$

yielding forecasts for all representative enterprises in sector $s$.

12: Model evaluation and interpretation: Evaluate forecasting performance over the horizon $H$ using appropriate error metrics.

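This step also admits a Bayesian interpretation. Assume Gaussian observation noise $\boldsymbol{\varepsilon} \sim \mathcal{N}(\mathbf{0}, \sigma^2 \mathbf{I})$ in (11) and independent Laplace priors on coefficients, $p(\theta_\ell) \propto \exp(-|\theta_\ell| / b)$, where $\lambda$ is linked to $\sigma^2$ and $b$. Then solving (12) yields the maximum a posteriori estimate of $\boldsymbol{\theta}$. After sparsity identifies an active set $\mathcal{A} = \{\ell : \hat{\theta}_\ell \neq 0\}$, uncertainty can be quantified by refitting a Bayesian linear regression on the reduced design matrix $\boldsymbol{\Phi}_{\mathcal{A}}$. With a Gaussian prior $\boldsymbol{\theta}_{\mathcal{A}} \sim \mathcal{N}(\mathbf{0}, \tau^2 \mathbf{I})$, the posterior is closed-form with covariance $\boldsymbol{\Sigma}_{\mathcal{A}} = \left(\sigma^{-2} \boldsymbol{\Phi}_{\mathcal{A}}^\top \boldsymbol{\Phi}_{\mathcal{A}} + \tau^{-2} \mathbf{I}\right)^{-1}$ and mean $\boldsymbol{\mu}_{\mathcal{A}} = \sigma^{-2} \boldsymbol{\Sigma}_{\mathcal{A}} \boldsymbol{\Phi}_{\mathcal{A}}^\top \mathbf{y}$. For any future day $d > D$, the predictive distribution is Gaussian with mean $\boldsymbol{\phi}_{\mathcal{A}}(d)^\top \boldsymbol{\mu}_{\mathcal{A}}$ and variance $\boldsymbol{\phi}_{\mathcal{A}}(d)^\top \boldsymbol{\Sigma}_{\mathcal{A}} \boldsymbol{\phi}_{\mathcal{A}}(d) + \sigma^2$, which directly yields prediction intervals.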
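The correspondence between the penalty weight and the prior can be made explicit with a short derivation. The sketch below assumes that the objective in (12) has the common form $\tfrac{1}{2}\|\mathbf{y} - \boldsymbol{\Phi}\boldsymbol{\theta}\|_2^2 + \lambda\|\boldsymbol{\theta}\|_1$, that the noise variance is $\sigma^2$, and that the Laplace prior has scale $b$; these symbols and the normalization are assumptions for illustration, and a different scaling of the data-fit term would rescale the resulting relation accordingly.

```latex
\begin{aligned}
-\log p(\boldsymbol{\theta} \mid \mathbf{y})
  &= -\log p(\mathbf{y} \mid \boldsymbol{\theta}) - \log p(\boldsymbol{\theta}) + \text{const} \\
  &= \frac{1}{2\sigma^2}\,\|\mathbf{y} - \boldsymbol{\Phi}\boldsymbol{\theta}\|_2^2
     + \frac{1}{b}\sum_{\ell} |\theta_\ell| + \text{const}, \\[4pt]
\sigma^2 \cdot \bigl(-\log p(\boldsymbol{\theta} \mid \mathbf{y})\bigr)
  &= \frac{1}{2}\,\|\mathbf{y} - \boldsymbol{\Phi}\boldsymbol{\theta}\|_2^2
     + \frac{\sigma^2}{b}\,\|\boldsymbol{\theta}\|_1 + \text{const}
  \quad\Longrightarrow\quad \lambda = \frac{\sigma^2}{b}.
\end{aligned}
```

Under these assumptions, a tighter prior (smaller $b$) or noisier data (larger $\sigma^2$) both correspond to a larger $\lambda$ and hence a sparser estimate.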

From the viewpoint of system identification, the nonzero elements of the estimated coefficient vector reveal which periodic components are essential for reconstructing the observed load series. Their magnitudes determine the contribution of each component to the overall load profile, and the corresponding frequencies provide interpretable information about daily, weekly, or seasonal cycles.

The optimization problem in (12) can be solved using coordinate descent, proximal gradient methods, or other convex optimization algorithms. Since the dimension $2K$ is usually much smaller than the number of samples $D$, the computational cost is moderate, which is advantageous for large-scale applications involving many users or sectors.

Once the sparse coefficient vector $\hat{\boldsymbol{\theta}}$ is obtained by solving (12), the model can be used to produce long-term forecasts. For any future day index $d > D$, the basis functions in (8) can be evaluated at $d$ by simple substitution. The forecast at day $d$ is then given by

$$\hat{y}_d = \boldsymbol{\phi}(d)^\top \hat{\boldsymbol{\theta}},$$

where $\boldsymbol{\phi}(d) = [\phi_1(d), \dots, \phi_{2K}(d)]^\top$ is the vector of basis evaluations at $d$. Because the $\phi_\ell(d)$ are sinusoidal functions with fixed frequencies, the forecast naturally extrapolates the periodic patterns identified from historical data into the future.

This forecasting mechanism has several desirable properties. First, the forecast at any horizon depends only on the identified periodic structure and not on the accumulation of short-term prediction errors, which helps to maintain stability over long horizons. Second, the periodic basis functions make the medium- and long-term behavior of the model transparent: the presence of a weekly component corresponds to a specific frequency, a seasonal component corresponds to another, and so on. Third, the sparse coefficient vector highlights only a few dominant periodicities, making it easier to interpret forecasts in industrial applications such as planning and capacity allocation.
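The non-recursive nature of the forecast can be illustrated with a few lines of code. The sketch assumes that each retained frequency contributes a sine coefficient followed by a cosine coefficient; `forecast_days`, the frequency list, and the coefficient values are hypothetical.

```python
import math

def forecast_days(freqs, theta, days):
    """Evaluate sum_j theta[2j] * sin(2*pi*f_j*d) + theta[2j+1] * cos(2*pi*f_j*d) for each day d."""
    forecasts = []
    for d in days:
        value = 0.0
        for j, f in enumerate(freqs):
            angle = 2.0 * math.pi * f * d
            value += theta[2 * j] * math.sin(angle) + theta[2 * j + 1] * math.cos(angle)
        forecasts.append(value)
    return forecasts

# One weekly component identified from 365 days of history (illustrative coefficients).
freqs = [1.0 / 7.0]
theta = [3.0, 0.5]
next_day = forecast_days(freqs, theta, [366])[0]
one_year_later = forecast_days(freqs, theta, [366 + 7 * 52])[0]
```

Each forecast is computed directly from the day index, so the value 52 weeks ahead is obtained without passing through any intermediate predictions; this is the sense in which short-term errors do not accumulate.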

3.3. Bayesian State-Space Prediction with Implicit Physical Information

The sparse identification step provides an interpretable periodic representation. However, industrial load patterns may exhibit gradual drifts due to operational adjustments and seasonal transitions. To capture such implicit physical inertia and to quantify uncertainty, we formulate a Bayesian state-space model on the retained adaptive basis.

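Let $\mathcal{A}$ denote the active set obtained from the sparse regression in (12). Define the reduced basis vector $\boldsymbol{\phi}_{\mathcal{A}}(d) \in \mathbb{R}^{|\mathcal{A}|}$ by keeping only the active components, and let $\boldsymbol{\theta}_d \in \mathbb{R}^{|\mathcal{A}|}$ be the corresponding coefficient vector at day $d$.

We adopt the following linear Gaussian model:

$$\boldsymbol{\theta}_d = \boldsymbol{\theta}_{d-1} + \mathbf{w}_d, \quad \mathbf{w}_d \sim \mathcal{N}(\mathbf{0}, \mathbf{Q}), \qquad y_d = \boldsymbol{\phi}_{\mathcal{A}}(d)^\top \boldsymbol{\theta}_d + v_d, \quad v_d \sim \mathcal{N}(0, R),$$

with a Gaussian prior $\boldsymbol{\theta}_0 \sim \mathcal{N}(\mathbf{m}_0, \mathbf{P}_0)$. Here, $\mathbf{Q}$ controls the adaptivity of the coefficients (implicit physical inertia), and $R$ represents measurement noise and unmodeled disturbances. In implementation, $\mathbf{m}_0$ can be initialized by the sparse estimate restricted to $\mathcal{A}$, and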

with

.

Given posterior

at day

, the one-step prediction is

Let

. Define the innovation and its variance:

The Kalman gain and posterior update are

This yields adaptive parameter updating together with posterior uncertainty.

For any future day

, the predictive distribution is Gaussian:

where

Therefore, a

prediction interval is given by

where

is the standard normal quantile. We set

and tune

on the validation segment together with

to balance adaptivity and long-horizon stability. Since

in typical cases, the Bayesian update is computationally efficient and scalable for multi-user and multi-sector deployment.
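The recursion described above can be sketched in a few lines. The sketch assumes random-walk coefficients (the state equals the previous state plus Gaussian noise with covariance `Q`) and a scalar observation with noise variance `R`; the names `kalman_step` and `predict_interval`, the toy basis, and all numerical values are hypothetical choices made for illustration.

```python
import math
from statistics import NormalDist

def kalman_step(m, P, phi, y, Q, R):
    """One predict/update cycle for random-walk coefficients and a scalar observation."""
    p = len(m)
    # Predict: the mean is unchanged and the covariance grows by Q.
    P_pred = [[P[i][j] + Q[i][j] for j in range(p)] for i in range(p)]
    # Innovation and its variance.
    P_phi = [sum(P_pred[i][j] * phi[j] for j in range(p)) for i in range(p)]
    e = y - sum(phi[i] * m[i] for i in range(p))
    S = sum(phi[i] * P_phi[i] for i in range(p)) + R
    # Kalman gain and posterior update (uses the symmetry of P_pred).
    gain = [v / S for v in P_phi]
    m_new = [m[i] + gain[i] * e for i in range(p)]
    P_new = [[P_pred[i][j] - gain[i] * P_phi[j] for j in range(p)] for i in range(p)]
    return m_new, P_new

def predict_interval(m, P, phi, Q, R, steps_ahead, alpha=0.05):
    """Predictive mean and (1 - alpha) interval `steps_ahead` days after the last update."""
    p = len(m)
    # Coefficient uncertainty accumulates Q once per day without observations.
    P_h = [[P[i][j] + steps_ahead * Q[i][j] for j in range(p)] for i in range(p)]
    mean = sum(phi[i] * m[i] for i in range(p))
    var = sum(phi[i] * P_h[i][j] * phi[j] for i in range(p) for j in range(p)) + R
    half = NormalDist().inv_cdf(1.0 - alpha / 2.0) * math.sqrt(var)
    return mean, mean - half, mean + half

def phi_at(d):
    """Toy active basis: intercept plus one weekly sine term."""
    return [1.0, math.sin(2.0 * math.pi * d / 7.0)]

# Filter 60 days of a toy series, then forecast 7 days past the last observation.
m, P = [0.0, 0.0], [[100.0, 0.0], [0.0, 100.0]]
Q, R = [[1e-4, 0.0], [0.0, 1e-4]], 1.0
for d in range(1, 61):
    y_d = 50.0 + 5.0 * math.sin(2.0 * math.pi * d / 7.0)
    m, P = kalman_step(m, P, phi_at(d), y_d, Q, R)
mean, lower, upper = predict_interval(m, P, phi_at(67), Q, R, steps_ahead=7)
print(round(mean, 2), round(lower, 2), round(upper, 2))
```

In this form a larger `Q` lets the coefficients drift faster but widens long-horizon intervals, since the predictive variance grows linearly with `steps_ahead`.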

3.4. Multivariate and Multi-Sector Extension

The above development focuses on a single univariate series, such as the daily freeze load of one enterprise. For sector-level modeling, where multiple representative enterprises are considered simultaneously, the framework can be extended to a multivariate setting.

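Suppose sector $s$ has $N_s$ representative enterprises with daily freeze loads $y_{s,i}(d)$, where $i = 1, \dots, N_s$ and $d = 1, \dots, D$. For each day $d$, define the sector-level load vector

$$\mathbf{y}_s(d) = \bigl[y_{s,1}(d), \dots, y_{s,N_s}(d)\bigr]^{\top} \in \mathbb{R}^{N_s}.$$

Stacking these vectors over time yields the matrix

$$\mathbf{Y}_s = \bigl[\mathbf{y}_s(1), \dots, \mathbf{y}_s(D)\bigr]^{\top} \in \mathbb{R}^{D \times N_s}.$$

If the same basis matrix $\boldsymbol{\Phi}$ in (9) is used for all enterprises within the sector, the multivariate model can be written as

$$\mathbf{Y}_s = \boldsymbol{\Phi} \boldsymbol{\Theta}_s + \mathbf{E}_s,$$

where $\boldsymbol{\Theta}_s$ is a coefficient matrix whose columns correspond to different enterprises and $\mathbf{E}_s$ collects the residuals.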

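To promote sparsity across the entire sector and to reveal shared periodic structure, a group-wise or element-wise regularization can be introduced. A simple choice is to penalize the element-wise $\ell_1$ norm,

$$\hat{\boldsymbol{\Theta}}_s = \arg\min_{\boldsymbol{\Theta}} \; \tfrac{1}{2} \lVert \mathbf{Y}_s - \boldsymbol{\Phi} \boldsymbol{\Theta} \rVert_F^2 + \lambda \lVert \boldsymbol{\Theta} \rVert_1, \tag{28}$$

where $\lVert \cdot \rVert_F$ is the Frobenius norm and $\lVert \boldsymbol{\Theta} \rVert_1$ denotes the sum of absolute values of all entries in $\boldsymbol{\Theta}$. This formulation allows different enterprises to share a common set of basis functions, while their coefficients may differ in magnitude and sign. More structured regularization terms, such as row-wise group norms, can further encourage enterprises to share a subset of dominant periodic components.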
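One consequence of the element-wise penalty is worth spelling out. Assuming the sector-level objective takes the common form below (the scaling constant and the symbols $\mathbf{Y}$, $\boldsymbol{\Phi}$, $\boldsymbol{\Theta}$, and $N$ are notational assumptions made here), both terms are sums over columns, so the problem separates into one LASSO problem per enterprise:

```latex
\begin{aligned}
\tfrac{1}{2}\lVert \mathbf{Y} - \boldsymbol{\Phi}\boldsymbol{\Theta} \rVert_F^2
  + \lambda \lVert \boldsymbol{\Theta} \rVert_1
&= \sum_{i=1}^{N} \Bigl( \tfrac{1}{2}\lVert \mathbf{y}_i - \boldsymbol{\Phi}\boldsymbol{\theta}_i \rVert_2^2
  + \lambda \lVert \boldsymbol{\theta}_i \rVert_1 \Bigr), \\
\text{whereas}\qquad
\sum_{k} \lVert \boldsymbol{\Theta}_{k,:} \rVert_2
&\quad \text{couples the entries of row } k \text{ across all enterprises.}
\end{aligned}
```

Here $\mathbf{y}_i$ and $\boldsymbol{\theta}_i$ denote the $i$-th columns of $\mathbf{Y}$ and $\boldsymbol{\Theta}$. With the element-wise penalty, the enterprises therefore share the candidate basis but select their active components independently; only a row-wise group norm sets whole rows to zero and thereby makes all enterprises drop the same basis function.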

Forecasting at the sector level is obtained in an analogous way. For a future day $d > D$, the basis vector $\boldsymbol{\phi}(d)$ is evaluated and the sector-level load forecast is computed as

$$\hat{\mathbf{y}}_s(d) = \hat{\boldsymbol{\Theta}}_s^{\top} \boldsymbol{\phi}(d), \tag{29}$$

where $\hat{\boldsymbol{\Theta}}_s$ is the estimated coefficient matrix. This provides forecasts for all representative enterprises in sector $s$ and can be aggregated further if sector-level totals or averages are of interest.

3.5. Algorithmic Implementation

The SIABF procedure consists of daily aggregation, spectral analysis, adaptive basis construction, and sparse identification. To realize the adaptive Bayesian component, we further embed the retained basis expansion into a Bayesian inference step on the active coefficients. This step produces posterior uncertainty and predictive intervals in addition to point forecasts. The resulting workflow remains interpretable and scalable for multi-user and multi-sector deployment.

The complete sparse system identification procedure with adaptive basis functions can be summarized as follows. First, daily freeze series are obtained from preprocessed high-frequency data for the user or sector under consideration. Second, a DFT is computed and the amplitude spectrum is analyzed to identify dominant frequencies and to compute the spectral complexity index. Third, a set of $K$ dominant frequencies is selected and the corresponding sinusoidal basis functions are constructed, yielding the basis matrix. Fourth, the LASSO optimization problem in (12) or its multivariate counterpart in (28) is solved to obtain sparse coefficient estimates. Finally, the identified model is used to generate medium- and long-term forecasts by evaluating the basis functions at future time indices as in (13) and (29).
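The spectral steps of this procedure can be sketched as follows. The sketch computes a direct DFT of the mean-removed daily series and returns the periods of the $K$ largest amplitude bins. Taking the top-$K$ bins is a simplification assumed here (neighboring bins of one leaked peak may both be selected on real data), and the spectral complexity index is omitted; the function names are hypothetical.

```python
import cmath
import math

def amplitude_spectrum(y):
    """Amplitudes |Y_k| / n of the mean-removed series for k = 1 .. n // 2."""
    n = len(y)
    mean = sum(y) / n
    centred = [v - mean for v in y]
    amps = {}
    for k in range(1, n // 2 + 1):
        coef = sum(v * cmath.exp(-2j * math.pi * k * t / n) for t, v in enumerate(centred))
        amps[k] = abs(coef) / n
    return amps

def dominant_periods(y, K):
    """Periods (in days) of the K largest amplitude bins, strongest first."""
    amps = amplitude_spectrum(y)
    top = sorted(amps, key=amps.get, reverse=True)[:K]
    return [len(y) / k for k in top]

# Toy daily series with a strong weekly and a weaker four-week cycle.
series = [50.0 + 5.0 * math.cos(2.0 * math.pi * t / 7.0)
          + 2.0 * math.cos(2.0 * math.pi * t / 28.0) for t in range(84)]
print(dominant_periods(series, 2))
```

The returned periods would then define the cosine/sine pairs of the basis matrix before the sparse regression is solved.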

In practice, several implementation details deserve attention. The regularization parameter is selected by time series cross-validation: the historical series is split into training and validation segments, and the value that best balances validation forecasting accuracy and sparsity is chosen. The number of dominant frequencies $K$ may be determined by combining the information from the spectral complexity index, visual inspection of the spectrum, and validation performance. When the daily series is short or exhibits nonstationarities, it may be helpful to restrict the maximum $K$ or to update the basis functions in a rolling manner. These details do not change the core framework but are important for robust performance in real-world applications.
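A minimal sketch of the validation-based selection is given below. It holds out the last `val_len` points, fits on the earlier segment for each candidate value, and keeps the candidate with the lowest validation MAPE. The callables `fit` and `predict` are hypothetical placeholders for any model with these two steps, and the sketch scores accuracy only; a sparsity criterion would have to be added on top.

```python
def select_lambda(series, candidates, fit, predict, val_len):
    """Return the candidate with the lowest validation MAPE and all scores."""
    train, val = series[:-val_len], series[-val_len:]
    scores = {}
    for lam in candidates:
        model = fit(train, lam)                      # fit on the earlier segment only
        preds = predict(model, len(train), val_len)  # forecast the held-out segment
        scores[lam] = 100.0 * sum(abs((a - p) / a) for a, p in zip(val, preds)) / val_len
    best = min(candidates, key=lambda lam: scores[lam])
    return best, scores

# Toy stand-in model: a shrunken mean, so larger lam biases the forecast more.
def fit_mean(train, lam):
    return sum(train) / len(train) / (1.0 + lam)

def predict_mean(model, start, horizon):
    return [model] * horizon

best, scores = select_lambda([10.0] * 20, [0.0, 0.5, 1.0], fit_mean, predict_mean, val_len=5)
print(best, scores)
```

Because the validation segment always follows the training segment in time, no future observation leaks into the fit. On ties, `min` keeps the first candidate, so listing candidates from largest to smallest would favor the sparser model.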

The SIABF algorithm takes as input a daily freeze load series for a given user or sector, a maximum number of dominant frequencies, and a regularization parameter (or a search range for it). The primary outputs are the identified sparse coefficient vector (or matrix in the multivariate case), the set of dominant basis functions, and the corresponding long-term load forecasts over a prescribed horizon $H$. The detailed steps of SIABF are summarized in Algorithm 1.

The SIABF algorithm thus provides a complete and implementable procedure for sparse system identification and long-term load forecasting based on adaptive periodic basis functions. By explicitly separating the steps of spectral analysis, basis construction, and sparse identification, the algorithm offers both computational efficiency and structural interpretability and can be applied consistently at the enterprise level and at the sector level for upstream and downstream industrial electricity load modeling.

5. Experiments

5.1. Data Description and Preprocessing

To establish a power consumption forecasting model for electricity utility customers, power consumption information was collected from industrial customers of a regional power utility in China. The dataset comprises 15 min interval power consumption data for multiple customers within three distinct electricity-consuming sectors, namely, steel, photovoltaics (PV), and chemical. Customer IDs are anonymized for confidentiality. In particular, the steel-sector dataset contains multiple steel enterprises, and unless otherwise stated, the quantitative comparisons and the illustrative time series figures in Section 5.3 report results for a representative steel enterprise rather than an average across multiple steel companies. Data for each sector are stored in separate Excel files, structured as illustrated in Table 2.

Each row in the dataset records the power consumption of a customer from a specific industry across the 96 observation points for that day. Consumption records for most industry customers span a period of one year, typically from 1 July 2023 to 30 June 2024.

Prior to model construction, the missing values within the data must be addressed. Power consumption time series data for industrial users inevitably contain missing values due to sensor failures, transmission interruptions, human error, or external disturbances. If not handled properly, these missing values can compromise the stability and accuracy of the model. Simply deleting records containing missing values would not only result in the loss of valuable historical data but also damage the temporal continuity of the series, potentially leading to biased model predictions. Therefore, appropriate imputation of missing values is critical for enhancing both the predictive accuracy and the robustness of the model. Considering the characteristics of industrial data, this paper employs a hybrid imputation method. First, a KNN mean imputation approach, using eight neighboring points, is applied to fill the missing values. If a data point remains missing after KNN imputation, a forward-filling method is used for secondary imputation. If the element is the first in the series and is still missing after the previous steps, the global mean is finally applied for imputation. The daily freezing value, which represents the total daily power consumption, is calculated by summing the 96 observation points from 00:00 to 24:00. In this study, the pandas 3.0.0 library in Python 3.10 is used to sum the 96 observation columns of each row. The result is added as a new column named “Daily Freezing Value” to the dataset, thereby recording the total daily power consumption for each industrial customer.
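The three-stage imputation and the daily aggregation can be sketched in plain Python. The sketch reads "eight neighboring points" as the four positions on either side of a missing reading and averages whichever of them are observed; this reading, the function names, and the use of `NaN` as the missing marker are assumptions made for illustration, and the study itself uses pandas.

```python
import math

def impute(series, window=8):
    """Fill NaNs by neighbour mean, then forward fill, then global mean."""
    n = len(series)
    half = window // 2
    observed = [v for v in series if not math.isnan(v)]
    global_mean = sum(observed) / len(observed) if observed else float("nan")
    out = list(series)
    # Stage 1: mean of the observed values among the eight neighbouring positions.
    for i, v in enumerate(series):
        if math.isnan(v):
            nbrs = [series[j] for j in range(max(0, i - half), min(n, i + half + 1))
                    if j != i and not math.isnan(series[j])]
            if nbrs:
                out[i] = sum(nbrs) / len(nbrs)
    # Stages 2 and 3: forward fill; a gap at the very start gets the global mean.
    for i in range(n):
        if math.isnan(out[i]):
            out[i] = out[i - 1] if i > 0 else global_mean
    return out

def daily_freezing_value(day_readings):
    """Total daily consumption: the sum of the 96 fifteen-minute readings."""
    if len(day_readings) != 96:
        raise ValueError("expected 96 readings per day")
    return sum(day_readings)

nan = float("nan")
print(impute([1.0, nan, 3.0]))
print(daily_freezing_value([0.5] * 96))
```

Imputing before aggregation matters here: a single missing reading would otherwise understate that day's freezing value or force the whole day to be discarded.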

5.2. Model Input and Evaluation Metric

To prevent data leakage, the dataset is partitioned into training and testing sets. The training set is used to construct and train the prediction model, while the testing set is used to evaluate the model’s performance. This division ensures that the model’s performance on unseen data can be effectively validated, guaranteeing the model’s generalization ability and predictive accuracy.

The daily freezing value is used as the one-dimensional feature for our time series, establishing a univariate time series forecasting model. The daily freezing value data from customers with a continuous one-year record (1 July 2023 to 30 June 2024) is selected for the task. The daily freezing value data from the first 11 months are used as the training set input, and the data from the last month are used as the testing set for final model performance evaluation.

The Mean Absolute Percentage Error (MAPE) is adopted as the primary evaluation metric for this experiment. The formula for MAPE is defined as

$$\mathrm{MAPE} = \frac{100\%}{n} \sum_{i=1}^{n} \left| \frac{y_i - \hat{y}_i}{y_i} \right|,$$

where $y_i$ is the actual value in the testing period (all days in June 2024), $\hat{y}_i$ is the value forecasted for days in June 2024 by the method trained with data of 1 July 2023 to 31 May 2024, and $n$ is the number of observations. MAPE is better suited to power consumption forecasting in industrial and utility scenarios than scale-dependent metrics such as Mean Absolute Error (MAE). The primary industrial value of MAPE lies in its scale independence and interpretability. Power consumption across different industrial sectors (e.g., cement vs. photovoltaics) and individual customers can vary by orders of magnitude. MAE reports error in the original units (e.g., kWh), making it impossible to directly compare the forecasting performance between a large-scale steel mill and a smaller renewables facility. Conversely, MAPE expresses the error as a percentage, providing a normalized measure of accuracy that allows for equitable comparison of model performance across heterogeneous customer groups, which is crucial for benchmarking in the utility sector. Furthermore, electric utilities and system operators typically manage risk and assess performance based on relative error. The same absolute error might be negligible for a plant with very large daily consumption (a small percentage error) but highly significant for a customer consuming far less per day (a large percentage error). MAPE’s direct translation into a percentage provides immediate industrial context and helps decision-makers, such as grid planners and energy traders, understand the relative impact of forecasting errors, thus aligning better with industrial Key Performance Indicators (KPIs).
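The scale argument can be made concrete with a short sketch. The two customers and their values below are invented purely for illustration; the functions implement the standard definitions of MAPE and MAE.

```python
def mape(actual, forecast):
    """Mean absolute percentage error in percent; actual values must be non-zero."""
    if len(actual) != len(forecast) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    return 100.0 * sum(abs((a - f) / a) for a, f in zip(actual, forecast)) / len(actual)

def mae(actual, forecast):
    """Mean absolute error in the units of the data."""
    if len(actual) != len(forecast) or not actual:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(a - f) for a, f in zip(actual, forecast)) / len(actual)

# Hypothetical daily totals for a large and a small customer, same relative errors.
large_actual, large_forecast = [1000.0, 2000.0], [1100.0, 1800.0]
small_actual, small_forecast = [10.0, 20.0], [11.0, 18.0]

print(mae(large_actual, large_forecast), mae(small_actual, small_forecast))
print(mape(large_actual, large_forecast), mape(small_actual, small_forecast))
```

The two MAE values differ by a factor of 100 while the MAPE values coincide, which is why a percentage metric is used to compare customers of different size. MAPE is undefined when an actual value is zero, so days with zero consumption would need separate handling.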

In this work, the forecasting target is the daily freezing value, defined as the total daily electricity consumption obtained by summing the 96 measurements within each day. For each customer with a continuous one-year record (1 July 2023 to 30 June 2024), we adopt a one-month-ahead evaluation: the first eleven months (July 2023 to May 2024) are used for training, and the last month (June 2024) is used for testing. Note that the PV industry in our experiments refers to industrial electricity consumption of PV-related enterprises, e.g., manufacturing and supporting operations, rather than PV plant energy generation.

5.3. Forecasting Results and Comparative Analysis

This section presents the power consumption forecasting results obtained by the proposed SIABF and compares its performance against several established benchmark models. To comprehensively evaluate the robustness and predictive accuracy of the SIABF framework, the following models were selected as comparative baselines. These models represent the current state of the art and theoretically sound baseline methods widely adopted in the industrial power forecasting domain. The comparison set includes statistical models like ARIMA (Autoregressive Integrated Moving Average), which is a fundamental benchmark for linear time series analysis. It also incorporates machine learning and system identification models such as SBL (Sparse Bayesian Learning) and SINDy (Sparse Identification of Nonlinear Dynamics), which are valued for their potential in providing sparse and interpretable representations of the system dynamics. Finally, advanced deep learning models, specifically the LSTM (Long Short-Term Memory) network, the GRU (Gated Recurrent Unit) network, and the ResNet (Residual Network), are included to benchmark SIABF’s capability against methods known for capturing complex, long-term nonlinear temporal dependencies characteristic of modern energy consumption time series.

The SIABF model’s performance is systematically compared with the benchmark models across all three industrial sectors using the MAPE metric on the designated test set (the last month of data). The comparative results are summarized in Table 3, demonstrating the superior ability of the SIABF model to capture complex, potentially physics-informed, temporal dependencies in the energy consumption data.

5.3.1. Steel Industry

To provide deeper insight into the models’ behavior, we select representative forecasting results from specific customers that highlight the characteristic performance differences. These include high-load industrial customers (e.g., in the steel sector) to evaluate robustness under high variance and inertia, and volatile customers (e.g., in the PV or renewables sector) to assess the ability to handle irregular and rapidly changing consumption patterns. Visualizations depicting the curves of the daily freezing values are presented, comparing the forecasted daily freezing values against the actual values for the selected cases, in order to qualitatively demonstrate the superior accuracy and stability of the SIABF predictions compared to the leading benchmark models.

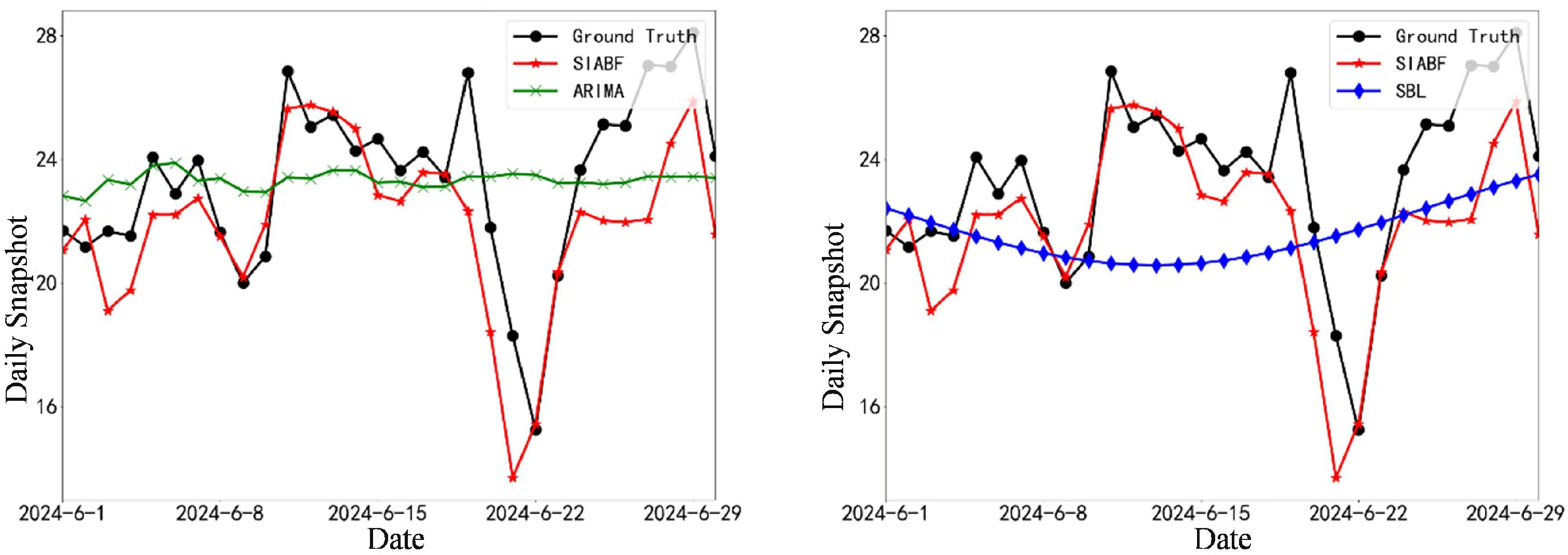

The initial comparison of SIABF with a sparse Bayesian baseline (SBL), shown in Figure 1, highlights the enhanced stability and closer fit of the SIABF predictions. While SBL exhibits pronounced deviations, particularly around consumption peaks, SIABF maintains a more reliable trajectory. Moreover, the ARIMA and SBL models fail to accurately learn the overall trend and struggle particularly with data volatility, exhibiting noticeable phase lag and amplitude errors.

As illustrated in Figure 2, the comparison between the system identification method SINDy and the representative deep learning model LSTM shows the challenges faced by both approaches. SINDy demonstrates high variance and substantial lag, failing to capture the time series structure effectively. In contrast, while LSTM shows better responsiveness, it still struggles with accurately predicting the sharp rises and falls in the industrial load profile.

The power consumption forecasting results for a representative steel industry customer are comprehensively illustrated in Figure 3. SIABF effectively tracks the genuine consumption curve (Ground Truth), even successfully predicting the sharp decline and rebound observed around 22 June. In contrast, traditional statistical and sparse learning methods struggle significantly. This result validates that by incorporating implicit structural information, the SIABF is highly effective at minimizing prediction errors and yields the most accurate and reliable forecast for the complex operational requirements of the steel industry.

5.3.2. Photovoltaic Power Generation Industry

The forecasting performance for a representative customer in the PV-related industry exhibits distinct characteristics due to high volatility and sensitivity to external factors, e.g., temperature and operational variability. We emphasize that this subsection studies electricity consumption (daily freezing value) of a PV-related enterprise rather than PV plant energy generation. The following figures report one-month-ahead forecasts for June 2024 using the previous eleven months as training.

In the first comparison in Figure 4, both the proposed SIABF model and the sparse baseline SBL struggle with the sharp, rapid fluctuations typical of the PV load profile. However, SIABF demonstrates a significant advantage, as its physically informed structure allows it to maintain a closer trajectory to the actual consumption, while SBL shows large, delayed deviations, failing to adapt quickly to sudden changes in consumption. ARIMA fails entirely, producing a smooth, almost static line that misses all temporal dependencies, resulting in a large MAPE of 22.947%. SINDy’s performance is even worse, with a MAPE of 33.908%, yielding erratic and severely misaligned predictions.

The performance contrast between SINDy and the deep learning model LSTM is clearly depicted in Figure 5. SINDy, struggling with the high-frequency noise inherent in PV data, yields highly inaccurate and erratic predictions. LSTM, by leveraging its recurrent structure, manages to capture the overall trend much better but still suffers from oversmoothing the extreme peaks and valleys, a common limitation when dealing with nonstationary renewable energy data.

The forecasting performance in the PV industry is crucial due to the sector’s high sensitivity to external factors, leading to volatile load profiles. Figure 6 confirms the superior capability of the SIABF model in this challenging environment. Visually, the SIABF model consistently achieves an excellent fit to the true consumption curve, i.e., Ground Truth, successfully capturing both the overall trend and the sharp daily fluctuations characteristic of PV operations. In stark contrast, traditional and sparse models perform exceptionally poorly.

The PV-related customer exhibits the lowest MAPE among the tested sectors. This outcome is consistent with the mechanism of SIABF, which constructs an adaptive sinusoidal basis from dominant frequency components and then performs sparse identification. When the daily freezing series contains a small number of stable periodicities, such as weekly production schedules and smooth seasonal variations, its spectrum is more concentrated and can be represented by a compact set of basis functions. In such cases, the sparse periodic model tends to extrapolate reliably over a one-month-ahead horizon, which can lead to a low MAPE. Importantly, the PV case in this paper corresponds to industrial electricity consumption of a PV-related enterprise rather than PV power generation. For PV generation forecasting, the dominant drivers are meteorological conditions and physical irradiance mechanisms, and high daily accuracy typically requires exogenous weather forecasts and physics-informed features. Extending SIABF by integrating meteorological covariates and validating over multi-year and broader populations is therefore a necessary direction before drawing conclusions for PV generation forecasting.

5.3.3. Chemical Industry

The power consumption profile of the chemical industry is typically characterized by high inertia and strong seasonality, reflecting continuous process operations. The following figures present the comparative forecasting results for a representative chemical customer.

As illustrated in Figure 7, we observe the performance of the SIABF model against the SBL sparse learning baseline. The chemical industry’s consumption curve is generally smoother than those of the steel or PV sectors, yet SBL still exhibits noticeable deviations from the actual data, especially during transitions. In contrast, the SIABF model leverages its underlying structure to provide predictions that follow the actual values much more closely, demonstrating superior tracking capability. In addition, the numerical results in Table 3 show that the ARIMA model yields the lowest MAPE at 8.420%, narrowly outperforming SIABF. However, the two key sharp consumption drops (abrupt changes) shown in the figure are entirely missed by the ARIMA prediction. This suggests that while ARIMA provides a slightly better average fit due to linear stability, it is highly inadequate for predicting critical, nonlinear operational events. Conversely, both SBL and SINDy models show a substantial divergence from the real data, confirming their limited applicability to the nonlinear dynamics of industrial processes.

Figure 8 compares SINDy with the representative deep learning model, LSTM. The SINDy framework faces substantial difficulties in modeling the complex, unobserved dependencies of the chemical process, resulting in highly amplified errors and poor temporal alignment. LSTM, benefiting from its architecture designed for sequential data, produces a much more reasonable forecast, effectively capturing the overall low-frequency trends in the consumption pattern.

The power consumption data for the chemical industry represents a relatively stable, high-inertia load with strong underlying periodicities. Analysis of Figure 9 reveals a nuanced performance landscape for this sector. Visually, the SIABF model closely follows the Ground Truth curve, accurately tracing the consumption trajectory throughout the testing period.

6. Discussion

The empirical results on representative enterprises from the steel, PV-related, and chemical sectors indicate that the proposed sparse system identification framework, instantiated as the SIABF model, achieves competitive forecasting performance for industrial electricity consumption, i.e., daily freezing values, compared with classical statistical models and deep learning baselines. For the PV-related industrial customer, the load series exhibits higher volatility and sensitivity to external factors, and SIABF is able to exploit recurring temporal structures, e.g., weekly schedules and seasonal components, under our one-month-ahead evaluation. We emphasize that these PV case studies focus on the electricity consumption of PV-related enterprises rather than photovoltaic power generation. Therefore, this manuscript does not claim that SIABF alone can provide accurate PV generation forecasting, which typically relies on meteorological forecasts and physics-informed modeling; extending SIABF with exogenous weather drivers is left for future work.

At the same time, several limitations of the present study suggest directions for further research. The empirical evaluation is based on one year of data and a limited set of customers, and it mainly relies on daily freeze values without fully exploiting rich exogenous information such as detailed weather variables, production schedules, or market indicators. The current implementation also implicitly assumes local stationarity of the load series within the chosen time window; when structural breaks or process reconfigurations occur, the frequency content may shift, calling for online or rolling implementations with adaptive basis updates and time-varying regularization. Moreover, although the Bayesian formulation improves robustness, uncertainty quantification is not yet explicitly translated into probabilistic prediction intervals, which would be valuable for risk-aware decision-making. Future work will therefore focus on extending the framework to incorporate structured exogenous covariates, develop adaptive and probabilistic variants suitable for long-term deployment, and implement large-scale joint modeling of correlated sectors in real utility environments.