Familiar Strategies Feel Fluent: The Role of Study Strategy Familiarity in the Misinterpreted-Effort Model of Self-Regulated Learning

Abstract

1. Introduction

1.1. Metacognitive Theory

1.2. Metacognitive Illusions and the Interleaving Effect

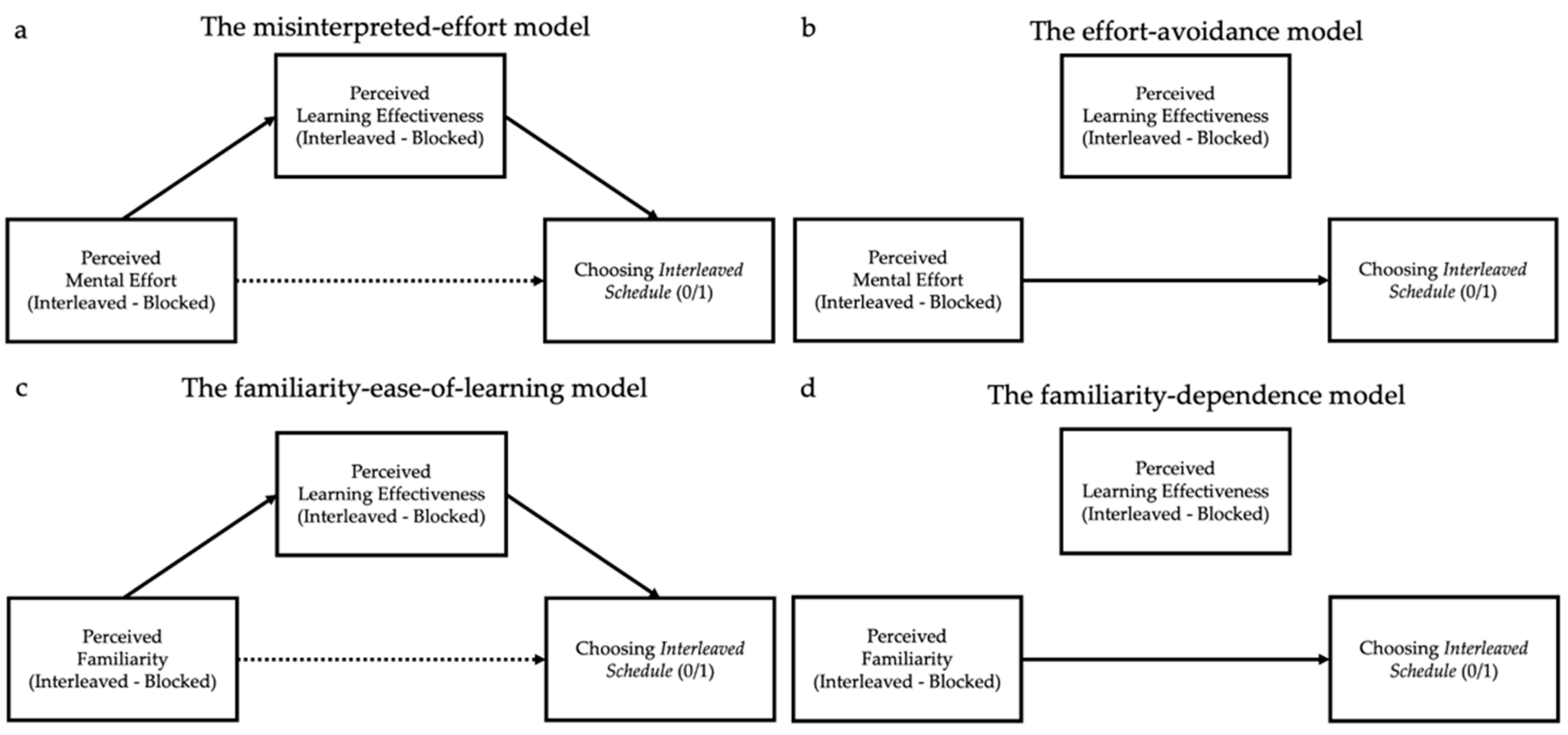

1.3. The Misinterpreted-Effort Hypothesis

1.4. Habits and Familiarity in Self-Regulated Learning

1.5. Present Work

2. Materials and Methods

2.1. Study 1

2.1.1. Participants

2.1.2. Materials

Learning Materials

Immediate-Perception Questionnaire

Retrospective Semantic-Differential Questionnaire

2.1.3. Procedure

2.2. Study 2

2.2.1. Participants

2.2.2. Materials

Learning Materials

Immediate-Perception Questionnaire

Retrospective Semantic-Differential Questionnaire

2.2.3. Procedure

3. Results

3.1. Study 1

3.1.1. Strategy Choice

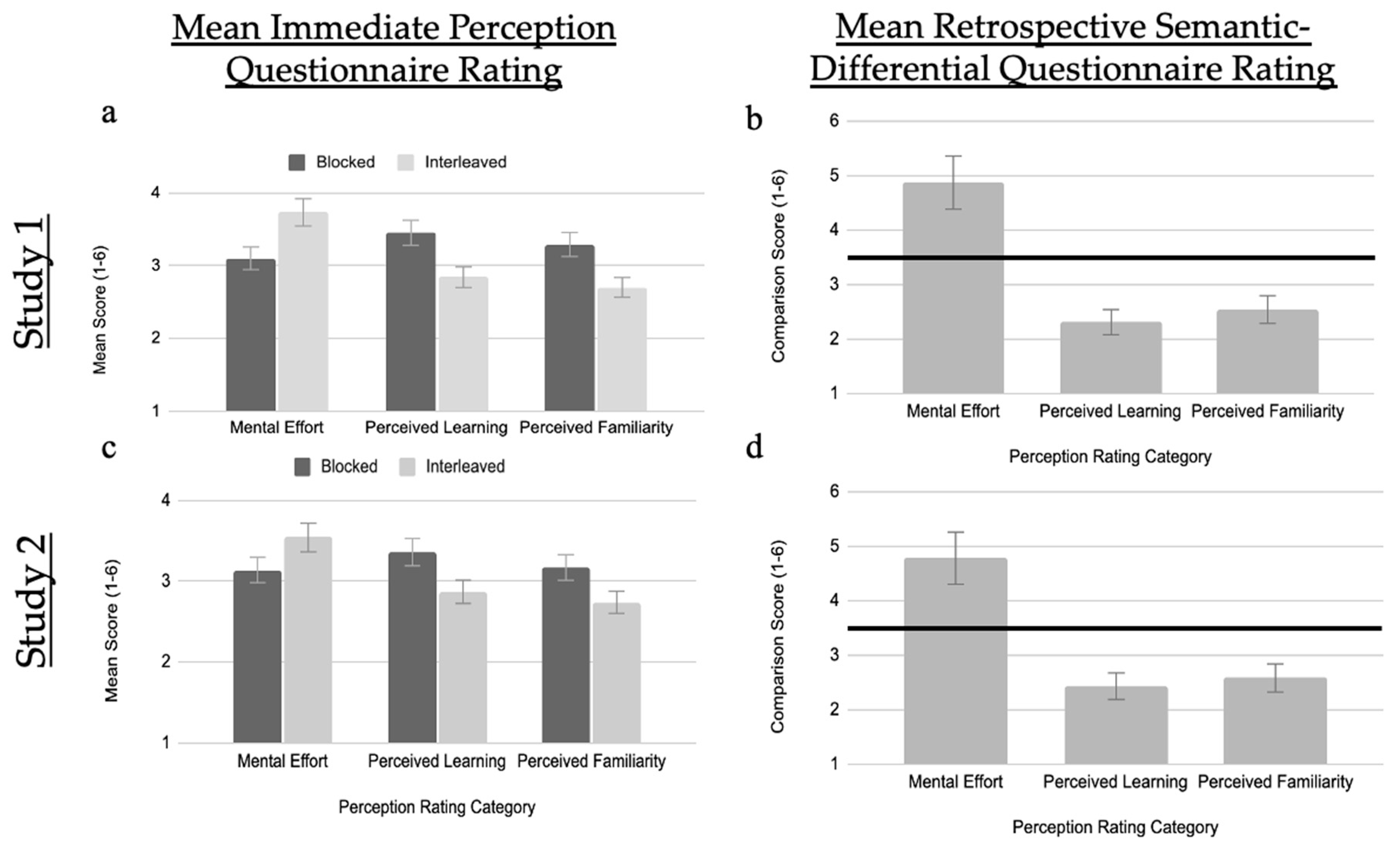

3.1.2. Immediate-Perception Questionnaire

3.1.3. Retrospective Semantic-Differential Questionnaire

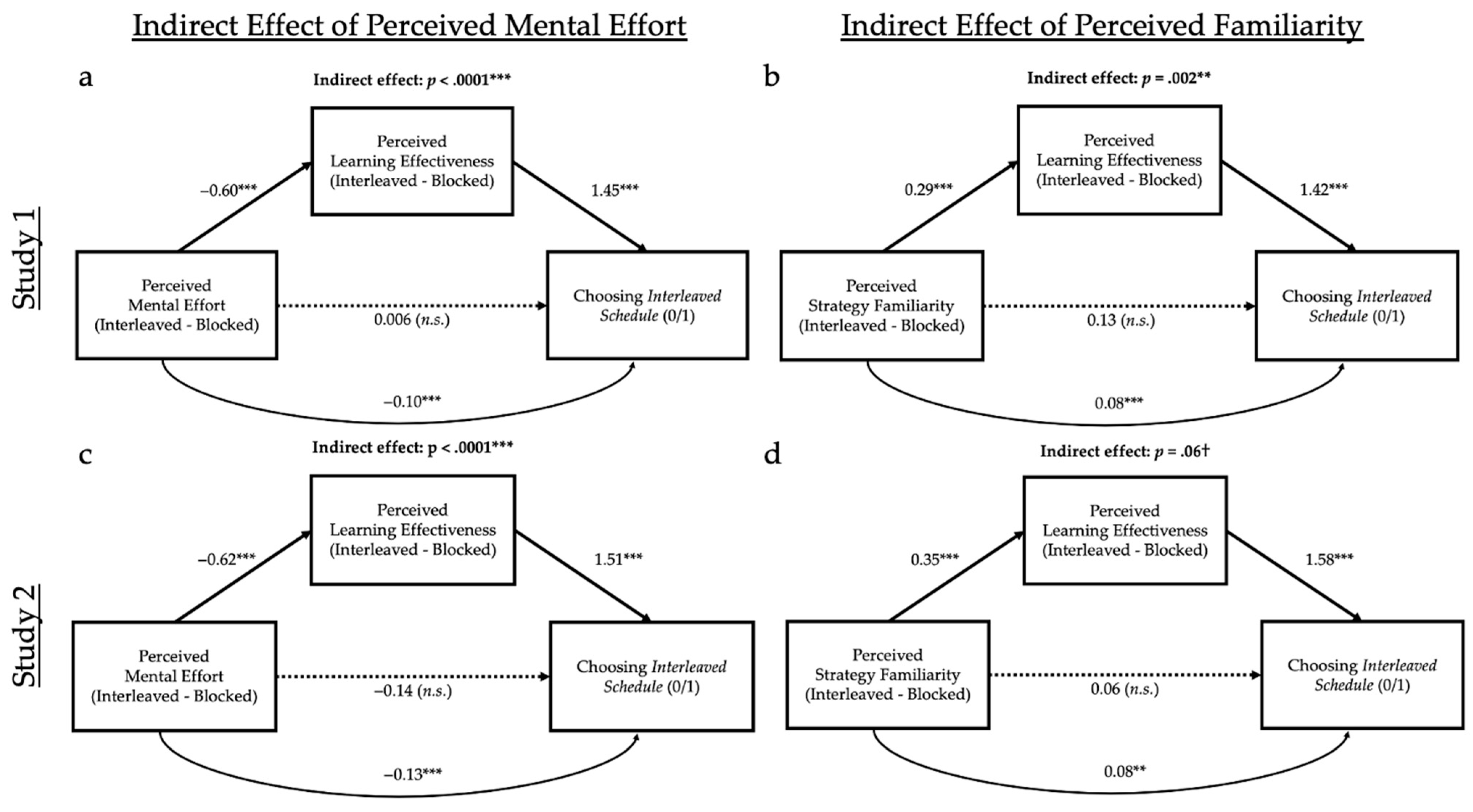

3.1.4. Relation of Immediate Perceptions to Strategy Choice

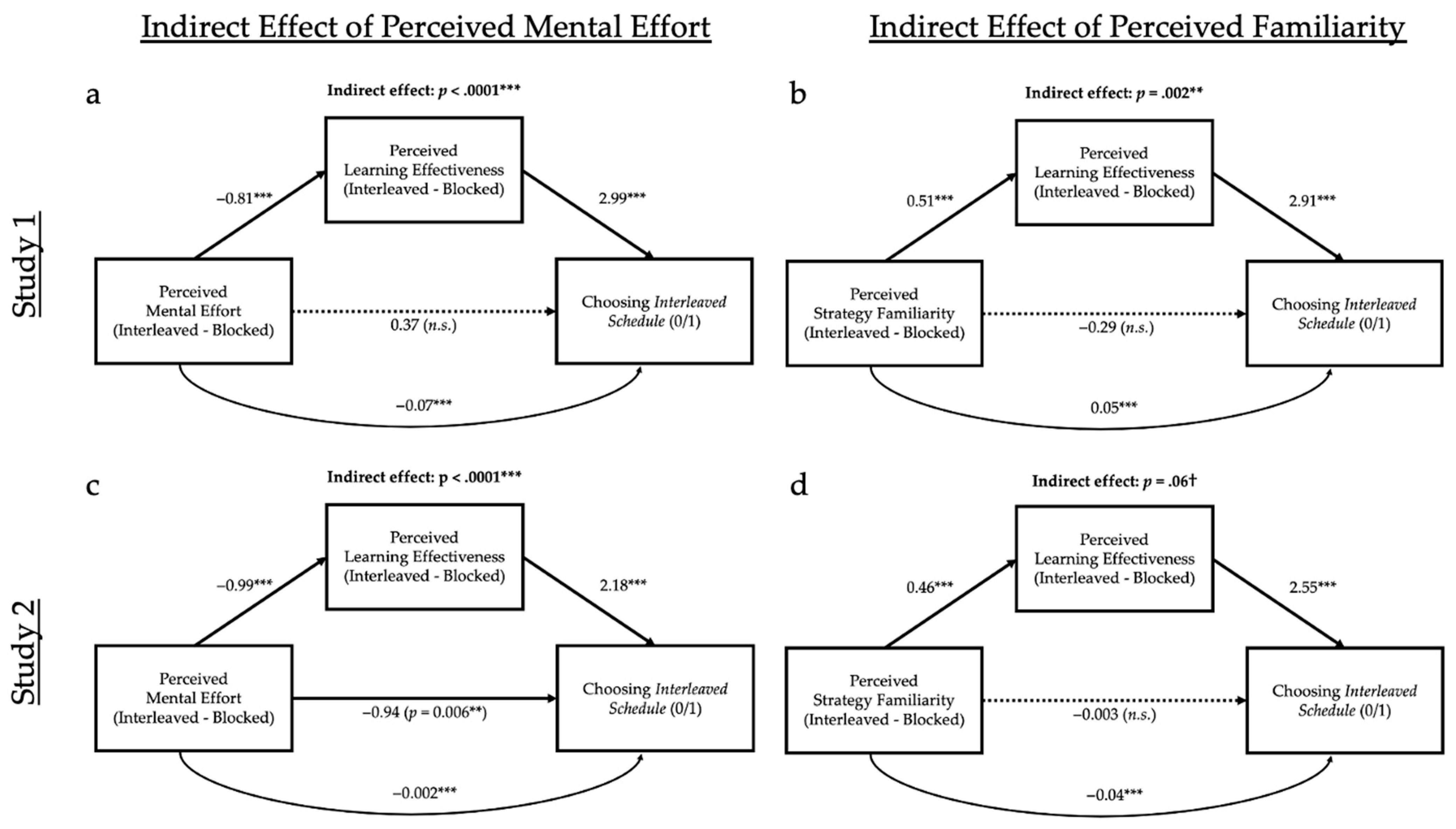

3.1.5. Relation between Retrospective Semantic-Differential Perceptions and Choice of Final Strategy

3.1.6. Free-Response about Changing Strategy Choice

3.1.7. Free-Response about Overall Experience

3.2. Study 2

3.2.1. Strategy Choice

3.2.2. Immediate-Perception Questionnaire

3.2.3. Retrospective Semantic-Differential Questionnaire

3.2.4. Relation of Immediate Perceptions to Strategy Choice

3.2.5. Relation of Retrospective Semantic-Differential Perceptions to Choosing Final Strategy

3.2.6. Objective Learning

3.2.7. Free-Response about Changing Strategy Choice

3.2.8. Free-Response about Overall Experience

4. Discussion

4.1. Review of Key Findings

4.2. Misinterpreted-Effort Hypothesis and the Influence of Familiarity

4.3. Objective Learning

4.4. Perceptions: Causal or Correlational?

4.5. Open-Ended Items

4.6. Limitations and Future Directions

4.7. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A

Appendix A.1. Immediate-Perception Assessments of Perceived Mental Effort, Perceived Learning, and Perceived Familiarity

Perceived Mental Effort

- (1) How tiring was the last exercise? (1 = Not at all, 6 = A lot)
- (2) How mentally exhausting was the last exercise? (1 = Not at all, 6 = A lot)
- (3) How difficult was the last exercise? (1 = Not at all, 6 = A lot)
- (4) How boring was the last exercise? (1 = Not at all, 6 = A lot)

Perceived Learning

- (1) How likely are you to be able to distinguish between the types of birds? (1 = Not very likely, 6 = Extremely likely)
- (2) How good do you think your memory for the different types of birds will be? (1 = Not very good, 6 = Extremely good)
- (3) How effective was this exercise in helping you to distinguish between the types of birds? (1 = Not very effective, 6 = Extremely effective)
- (4) How well did you learn to distinguish the types of birds? (1 = Not very well, 6 = Extremely well)

Perceived Familiarity

- (1) How much did the last exercise resemble the structure of your classes? (1 = Not well, 6 = Extremely well)
- (2) How familiar are you with the study strategy you used? (1 = Not familiar, 6 = Very familiar)
- (3) How new is this study strategy to you?* (1 = Not new, 6 = Very new)
- (4) How well did the last exercise match your study habits? (1 = Not very well, 6 = Very well)
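The appendix lists three blocks of four items (in the order of the appendix title: mental effort, learning, familiarity) but does not state how they are combined. The sketch below shows one common way to score such an instrument, under two assumptions that are not confirmed here: each construct is the mean of its four items, and the asterisked item is reverse-keyed, so on the 1 to 6 scale it is scored as 7 minus the rating. The item keys (`effort_1`, `familiarity_3`, and so on) are hypothetical names.

```python
from __future__ import annotations

# Hypothetical scoring sketch for the immediate-perception items (1-6 scale).
SCALE_MIN, SCALE_MAX = 1, 6

# Hypothetical item keys, grouped in the order the items appear above.
SUBSCALES = {
    "effort": ["effort_1", "effort_2", "effort_3", "effort_4"],
    "learning": ["learning_1", "learning_2", "learning_3", "learning_4"],
    "familiarity": ["familiarity_1", "familiarity_2", "familiarity_3", "familiarity_4"],
}

# Assumption: the asterisk marks a reverse-keyed item ("How new is this study strategy to you?*").
REVERSE_KEYED = {"familiarity_3"}


def reverse(score: int) -> int:
    """Flip a rating on the 1-6 scale (1 <-> 6, 2 <-> 5, 3 <-> 4)."""
    return SCALE_MIN + SCALE_MAX - score


def score_subscales(responses: dict[str, int]) -> dict[str, float]:
    """Average each subscale's items after reverse-keying flagged items."""
    scores = {}
    for name, items in SUBSCALES.items():
        values = []
        for item in items:
            raw = responses[item]
            if not SCALE_MIN <= raw <= SCALE_MAX:
                raise ValueError(f"{item} out of range: {raw}")
            values.append(reverse(raw) if item in REVERSE_KEYED else raw)
        scores[name] = sum(values) / len(values)
    return scores
```

Reverse-keying before averaging keeps every item within a construct pointing the same way, so a rating of 1 on "How new is this study strategy to you?" counts toward high familiarity rather than low familiarity.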

Appendix A.2. Retrospective Semantic-Differential Comparison Assessments between the Blocked Schedule and the Interleaved Schedule

- (1) Which exercise required more mental effort? (1 = Grouped Together, 6 = Not Grouped Together)
- (2) Which strategy would be harder for other people? (1 = Grouped Together, 6 = Not Grouped Together)
- (3) Which strategy was more enjoyable?* (1 = Grouped Together, 6 = Not Grouped Together)
- (4) Which strategy was harder for you? (1 = Grouped Together, 6 = Not Grouped Together)
- (5) Which birds do you think you’ll remember better? (1 = Birds Grouped Together, 6 = Birds Not Grouped Together)
- (6) Which do you think is a more effective learning strategy for you? (1 = Grouped Together, 6 = Not Grouped Together)
- (7) Which do you think is a more effective learning strategy for the average person? (1 = Grouped Together, 6 = Not Grouped Together)
- (8) Which strategy would you use to study in the future? (1 = Grouped Together, 6 = Not Grouped Together)
- (9) Which exercise better resembled the structure of your classes? (1 = Grouped Together, 6 = Not Grouped Together)
- (10) Which method of studying was more familiar? (1 = Grouped Together, 6 = Not Grouped Together)
- (11) Which exercise was newer to you?* (1 = Grouped Together, 6 = Not Grouped Together)
- (12) Which exercise better matched your study habits? (1 = Grouped Together, 6 = Not Grouped Together)
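Each comparison item runs from 1 (Grouped Together, the blocked schedule) to 6 (Not Grouped Together, the interleaved schedule), so there is no neutral response option; the scale midpoint of 3.5 separates the two schedules. A minimal sketch of turning these ratings into a signed lean, assuming that the asterisked items (3 and 11) are reverse-keyed and that items 9 to 12 form a familiarity composite because they mirror the familiarity items of Appendix A.1. Both assumptions and the function names are hypothetical, not the authors' stated scoring.

```python
from __future__ import annotations

# Hypothetical scoring sketch for the 1-6 semantic-differential comparisons.
MIDPOINT = 3.5  # halfway between 1 (Grouped Together) and 6 (Not Grouped Together)

# Assumption: asterisked items ("more enjoyable", "newer to you") are reverse-keyed.
REVERSE_KEYED = {3, 11}


def signed_lean(item: int, rating: int) -> float:
    """Centre a rating on the midpoint; positive leans toward 'Not Grouped Together'.

    Reverse-keyed items are sign-flipped so they point the same way as the other
    items of their construct (e.g. 'newer' is the opposite of 'more familiar').
    """
    if not 1 <= rating <= 6:
        raise ValueError(f"item {item}: rating {rating} outside 1-6")
    lean = rating - MIDPOINT
    return -lean if item in REVERSE_KEYED else lean


def composite_lean(ratings: dict[int, int], items: list[int]) -> float:
    """Mean signed lean across the chosen item numbers."""
    return sum(signed_lean(i, ratings[i]) for i in items) / len(items)
```

Under these conventions, `composite_lean(ratings, [9, 10, 11, 12])` is negative when a participant judged the blocked (Grouped Together) schedule to be the more familiar one, and positive when the interleaved schedule felt more familiar.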

1. When participants with perfect scores were removed, 63% of practice items were answered correctly. Our results revealed full mediation of the effect of perceived mental effort on strategy choice through perceived learning (direct effect: p = .93; indirect effect: p < .001; total effect: p < .001) and marginal support for mediation of the effect of perceived familiarity on strategy choice through perceived learning (direct effect: p = .38; indirect effect: p = .002; total effect: p = .002).

2. When participants with perfect scores were removed, 63% of practice items were answered correctly. Our results revealed full mediation of the effect of perceived mental effort on strategy choice through perceived learning (direct effect: p = .32; indirect effect: p < .001; total effect: p < .001) and marginal support for mediation of the effect of perceived familiarity on strategy choice through perceived learning (direct effect: p = .71; indirect effect: p = .07; total effect: p = .006).

3. It is not entirely clear why the indirect effect did not quite reach significance for the retrospective comparisons in Study 2. Because the two studies were identical up to the point of the ratings, the difference is unlikely to reflect methodological differences and is more likely attributable to sampling error.
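The notes above report direct, indirect, and total effects for a single-mediator model (perceived effort or familiarity, then perceived learning, then strategy choice). A minimal sketch of how those three quantities relate, assuming every path is estimated by ordinary least squares on the same sample, which treats the binary strategy choice as a linear-probability outcome. Under that assumption the total effect c equals the direct effect c' plus the indirect effect a × b exactly. This is an illustration of the decomposition only, not the article's analysis: it returns point estimates and omits the inferential step (for example, bootstrapping the indirect effect) needed to obtain p-values.

```python
from statistics import fmean


def _slope(x, y):
    """OLS slope of y regressed on a single predictor x (intercept included)."""
    mx, my = fmean(x), fmean(y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    return sxy / sxx


def _two_predictor_slopes(x1, x2, y):
    """OLS slopes of y on x1 and x2, solving the centred normal equations."""
    m1, m2, my = fmean(x1), fmean(x2), fmean(y)
    d1 = [v - m1 for v in x1]
    d2 = [v - m2 for v in x2]
    dy = [v - my for v in y]
    s11 = sum(a * a for a in d1)
    s22 = sum(b * b for b in d2)
    s12 = sum(a * b for a, b in zip(d1, d2))
    s1y = sum(a * c for a, c in zip(d1, dy))
    s2y = sum(b * c for b, c in zip(d2, dy))
    det = s11 * s22 - s12 ** 2  # zero only if x1 and x2 are perfectly collinear
    beta1 = (s22 * s1y - s12 * s2y) / det
    beta2 = (s11 * s2y - s12 * s1y) / det
    return beta1, beta2


def mediation_effects(x, m, y):
    """Return (direct, indirect, total) effects of x on y through mediator m."""
    a = _slope(x, m)                            # path a: X -> M
    direct, b = _two_predictor_slopes(x, m, y)  # c' (X -> Y given M) and b (M -> Y given X)
    total = _slope(x, y)                        # path c: X -> Y
    return direct, a * b, total
```

With one value per participant, a call such as `mediation_effects(effort, learning, choice)` (hypothetical variable names) returns the three point estimates. "Full mediation" in the notes corresponds to a reliable indirect effect alongside a direct effect that is no longer reliable once the mediator is in the model.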


| Coded Response | Frequency, Study 1 (%) | Frequency, Study 2 (%) | Example |
|---|---|---|---|
| Originally chose blocked | | | |
| Did not change strategy | 63.7 | 60.6 | “No this does not change my strategy that I would use, it is easier for me to group it together.” |
| Switch to interleaved | 20.8 | 24.8 | “Yes, actually. Now that I think about it, studying them not grouped together helped me notice patterns between the types of birds.” |
| Hesitant about switching to interleaved | 9.6 | 8.2 | “I think it does, I usually group together but if 90% of people really do learn better without it being grouped together, maybe I should give it a try.” |
| Alternative study strategy | 5.4 | 2.0 | “I would personally start out studying with examples grouped together, and then move to examples that are not grouped together.” |
| Originally chose interleaved | | | |
| Did not change strategy | 85.3 | 80.4 | “Having an unexpected pattern makes your brain remember things much better.” |
| Switch to blocked | 10.5 | 16.2 | “Yes, I think it will take less of a mental toll to differentiate between topics.” |
| Hesitant about switching to blocked | 0.0 | 0.0 | n/a |
| Alternative study strategy | 1.2 | 0.0 | “I [would] use a different strategy depending on the subject. For math, I think examples that are not grouped together is better, but for topics like biology and chemistry, I enjoy grouping up related topics.” |

| Coded Response | Frequency, Study 1 (%) | Frequency, Study 2 (%) | Example |
|---|---|---|---|
| Hard to remember | 4.3 | 24.5 | “At the beginning of the study, I felt much more confident … At the end though, I found myself second guessing all my answers and being really unsure about what kind of bird I was looking at.” |
| Productive | 21.3 | 22.6 | “I really liked participating and learned the two different study methods and how not grouping them together fit best for me and my study habits.” |
| Suggestion | 25.5 | 5.7 | “I understand that the study might’ve had to commit to a purely visual learning style, but I think the hardest part is not knowing what differences I’m supposed to be looking for.” |
| Positive | 40.4 | 28.3 | “I found the study really intriguing and would definitely do more research in the future on this study.” |
| Negative | 4.3 | 5.7 | “I could barely focus when looking at the photos.” |
| Other | 4.3 | 13.2 | “Birds are a cool animal to study.” |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Macaluso, J.A.; Beuford, R.R.; Fraundorf, S.H. Familiar Strategies Feel Fluent: The Role of Study Strategy Familiarity in the Misinterpreted-Effort Model of Self-Regulated Learning. J. Intell. 2022, 10, 83. https://doi.org/10.3390/jintelligence10040083
