1. Introduction

Large-scale knowledge graphs, such as DBpedia [1], Freebase [2], Wikidata [3], or YAGO [4], are usually built using heuristic extraction methods, by exploiting crowd-sourcing processes, or both [5]. These approaches can help create large-scale public cross-domain knowledge graphs, but are prone to both errors and incompleteness. Therefore, over the last years, various methods for refining those knowledge graphs have been developed [6].

Many of those knowledge graphs are built from semi-structured input. Most prominently, DBpedia and YAGO are built from structured parts of Wikipedia, e.g., infoboxes and categories. Recently, the DBpedia extraction approach has been transferred to Wikis in general as well [7]. For those approaches, each page in a Wiki usually corresponds to one entity in the knowledge graph; therefore, this Wiki page can be considered a useful source of additional information about that entity. For filling missing relations (e.g., the missing birthplace of a person), relation extraction methods are proposed, which can be used to fill the relation based on the Wiki page's text.

Most methods for relation extraction work on text and thus usually have at least one component that is explicitly specific to the language at hand (e.g., stemming, POS tagging, dependency parsing), e.g., [8,9,10], or that implicitly exploits some characteristics of that language [11]. Thus, adapting those methods to work with texts in different natural languages is usually not a straightforward process.

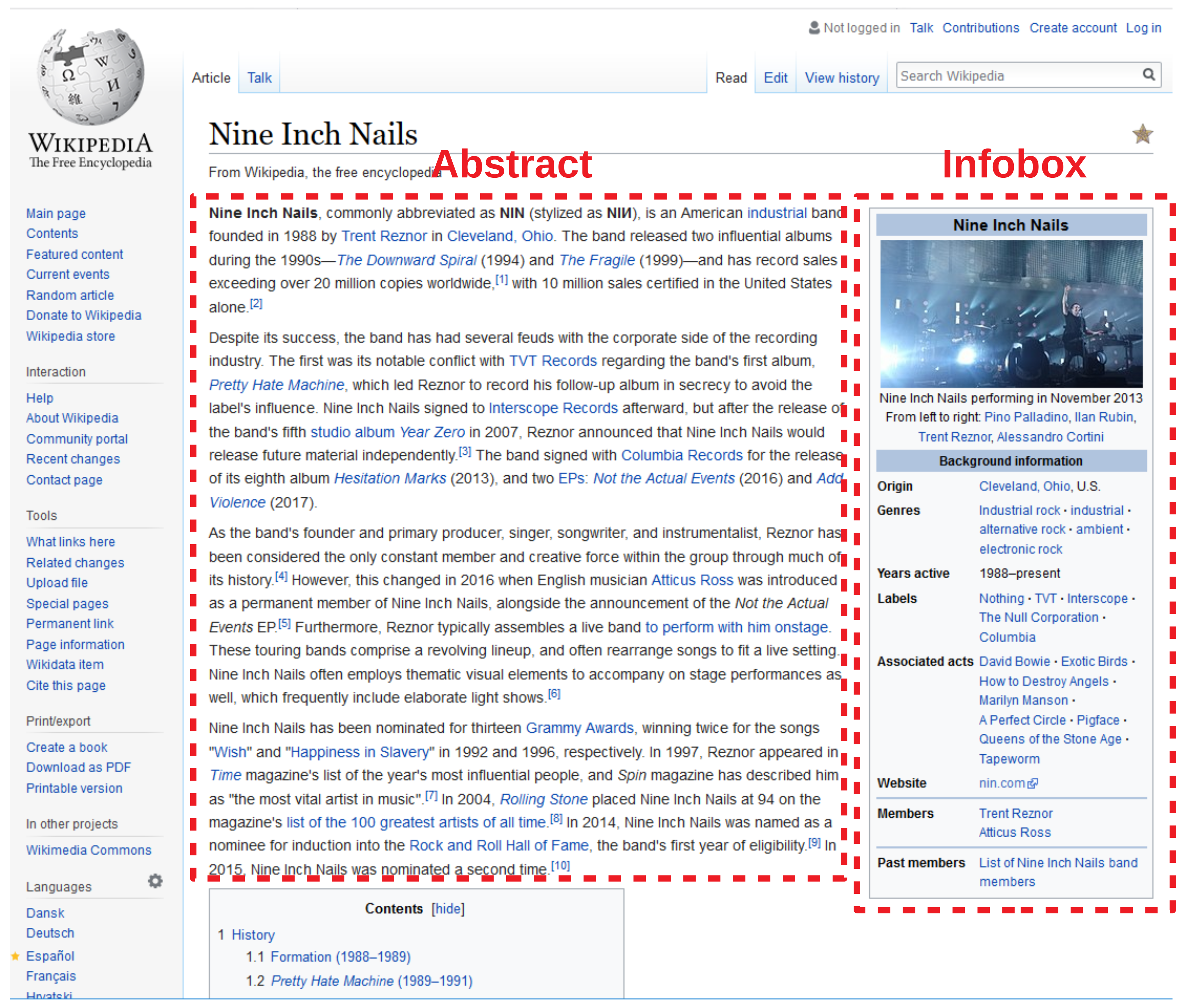

In this paper, we pursue an approach to extract knowledge from abstracts from Wikis. While a Wiki generally is a collaborative content editing platform [12], we consider everything a Wiki that has a scope (which can range from very broad, as in the case of Wikipedia, to very specific) and content pages that are interlinked, where each page has a specific topic. Practically, we limit ourselves to installations of the MediaWiki platform [13], which is the most widespread Wiki platform [14], although implementations for other platforms would be possible. As an abstract, we consider the contents of a Wiki page that appear before the first structuring element (e.g., a headline or a table of contents), as depicted in Figure 1.
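The notion of an abstract given above can be illustrated with a minimal sketch. It assumes that the page is available as MediaWiki wikitext and that heading lines (e.g., `== History ==`) and the `__TOC__` switch are the only structuring elements to look for; the function name `extract_abstract` and the sample page are hypothetical and not taken from the actual extraction framework.

```python
import re

# Assumption: a heading line such as "== History ==" or an explicit "__TOC__"
# switch marks the first structuring element. Real pages may need further
# markers (e.g., tables), which this sketch does not cover.
_FIRST_STRUCTURE = re.compile(r"^(?:={1,6}.+?={1,6}[ \t]*$|__TOC__)", re.MULTILINE)


def extract_abstract(wikitext: str) -> str:
    """Return the text that precedes the first structuring element."""
    match = _FIRST_STRUCTURE.search(wikitext)
    end = match.start() if match else len(wikitext)
    return wikitext[:end].strip()


# Hypothetical page content, for illustration only.
page = "'''Berlin''' is the capital of [[Germany]].\n\n== History ==\nFirst documented in ..."
print(extract_abstract(page))
```

If a page contains no structuring element at all, the sketch treats the whole page as the abstract.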

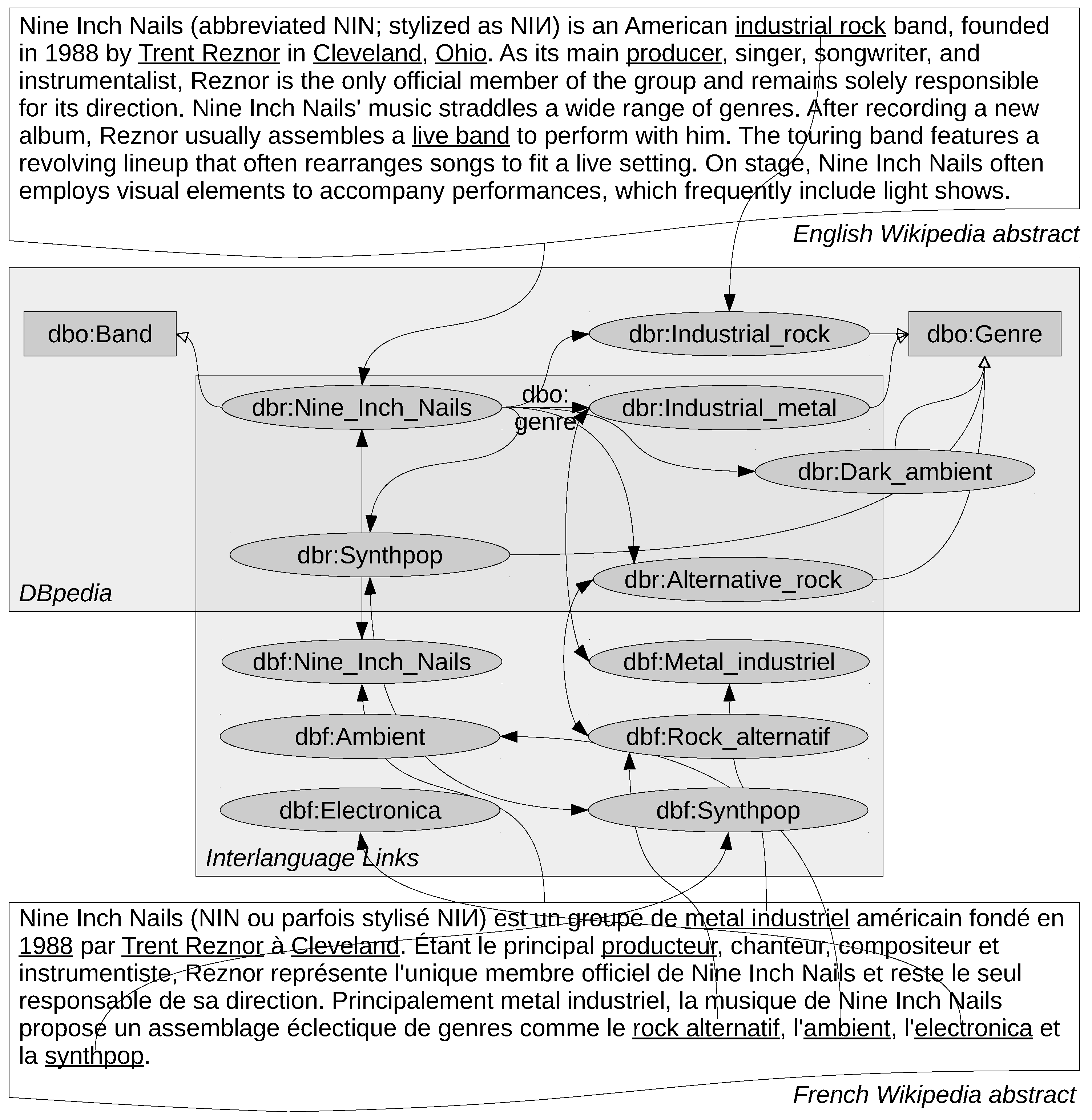

In this paper, we propose a language-agnostic approach. Instead of knowledge about the language, we take background knowledge from a knowledge graph into account. With that, we try to discover certain patterns in how Wiki abstracts are written. For example, in many cases, any genre mentioned in the abstract about a band is a genre of that band, the first city mentioned in an abstract about a person is that person's birthplace, and so on. Examples of such patterns are shown in Table 1, which shows patterns observed on 10 randomly sampled pages of cities, movies, and persons from the English Wikipedia.

In that case, the linguistic assumptions that we make about a language at hand are quite minimal. In fact, we only assume that for each Wiki, there are certain ways to structure an abstract of a given type of entity, in terms of what aspect is mentioned where (e.g., the birthplace is the first place mentioned when talking about a person). Thus, the approach can be considered language-independent (see [11] for an in-depth discussion).
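To make the idea of position-based patterns more concrete, the following sketch derives simple positional features from the links of an abstract. It is only an illustration under stated assumptions: the input format, the function name `positional_features`, and the feature names are hypothetical, and the feature set actually used by the approach is not reproduced here.

```python
from typing import Dict, List, Tuple

# Hypothetical input: the links of one abstract in reading order, each given as
# (linked entity, set of its types in the knowledge graph). No tokenization,
# POS tagging, or parsing of the text is involved.
Link = Tuple[str, frozenset]


def positional_features(links: List[Link], wanted_type: str) -> List[Dict[str, object]]:
    """For every linked entity of the wanted type, record where it occurs."""
    candidates = [(i, entity) for i, (entity, types) in enumerate(links)
                  if wanted_type in types]
    features = []
    for rank, (position, entity) in enumerate(candidates, start=1):
        features.append({
            "entity": entity,
            "position_in_abstract": position + 1,  # 1-based index among all links
            "rank_among_type": rank,               # 1 = first entity of that type
            "candidates_of_type": len(candidates),
        })
    return features


# Illustrative links of an abstract about a person.
links = [
    ("dbr:Physics", frozenset({"owl:Thing"})),
    ("dbr:Ulm", frozenset({"dbo:City", "dbo:Place"})),
    ("dbr:Princeton,_New_Jersey", frozenset({"dbo:City", "dbo:Place"})),
]
first_city = positional_features(links, "dbo:City")[0]
print(first_city["entity"], first_city["rank_among_type"])
```

A pattern such as "the first city mentioned is the birthplace" then corresponds to a rule on `rank_among_type`, which does not depend on the language of the abstract.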

The choice of Wiki abstracts as a corpus mitigates one of the common sources of errors in the relation extraction process, i.e., entity linking. When creating a knowledge graph from Wikis, an unambiguous 1:1 mapping between entities in the knowledge graph and Wiki pages is created. Moreover, the percentage of wrong page links is usually marginal (i.e., below 1%) [15], and in particular below the error rate of current entity linking tools [16,17]. Thus, the corpus at hand can be directly exploited for relation extraction without the need for an upfront, potentially noisy entity linking step.

By applying the exact same pipeline without any modifications to the twelve largest languages of Wikipedia, which encompass languages from different language families, we demonstrate that such patterns can be extracted from Wikipedia abstracts in arbitrary languages. We show that it is possible to extract valuable information by combining the information extracted from different languages, and we show that some patterns even exist across languages. Furthermore, we demonstrate the usage of the approach on a less structured knowledge graph created from several thousands of Wikis, i.e., DBkWik.

The rest of this paper is structured as follows. In Section 2, we review related work. We introduce our approach in Section 3, and discuss various experiments on DBpedia in Section 4 and on DBkWik in Section 5. We conclude with a summary and an outlook on future work.

A less extensive version of this article has already been published as a conference paper [18]. This article extends the original in various directions. Most prominently, it discusses the evidence of language-independent patterns in different Wikipedia languages, and it shows the applicability of the approach to a less well structured knowledge graph extracted from a very large number of Wikis, i.e., DBkWik [7].

2. Related Work

Various approaches have been proposed for relation extraction from text, in particular from Wikipedia. In this paper, we particularly deal with closed relation extraction, i.e., extracting new instantiations for relations that are defined a priori (by considering the schema of the knowledge graph at hand, or the set of relations contained therein).

Using the categorization introduced in [6], the approach proposed in this paper is an external one, as it uses Wikipedia as an external resource in addition to the knowledge graph itself. While internal approaches for relation prediction in knowledge graphs exist as well, using, e.g., association rule mining, tensor factorization, or graph embeddings, we restrict ourselves to comparing the proposed approach to other external approaches.

Most of the approaches in the literature make more or less heavy use of language-specific techniques. Distant supervision is proposed by [19] as a means of relation extraction for Freebase from Wikipedia texts. The approach uses a mixture of lexical and syntactic features, where the latter are highly language-specific. A similar approach is proposed for DBpedia in [20]. Like the Freebase-centric approach, it uses quite a few language-specific techniques, such as POS tagging and lemmatization. While those two approaches use Wikipedia as a corpus, [21] compare that corpus to a corpus of news texts, showing that the usage of Wikipedia leads to higher quality results.

Nguyen et al. [22] introduce an approach for mining relations from Wikipedia articles which exploits similarities of dependency trees for extracting new relation instances. In [23], the similarity of dependency trees is also exploited for clustering pairs of concepts with similar dependency trees. The construction of those dependency trees is highly language-specific, and consequently, both approaches are evaluated on the English Wikipedia only.

An approach closely related to the one discussed in this paper is iPopulator [24], which uses Conditional Random Fields to extract patterns for infobox values in Wikipedia abstracts. Similarly, Kylin [25] uses Conditional Random Fields to extract relations from Wikipedia articles and general Web pages. Similarly to the approach proposed in this paper, PORE [26] uses information on neighboring entities in a sentence to train a support vector machine classifier for the extraction of four different relations. The papers only report results for English language texts.

Truly language-agnostic approaches are scarce. In [27], a multi-lingual approach for open relation extraction is introduced, which uses Google Translate to produce English language translations of the corpus texts in a preprocessing step, and hence exploits externalized linguistic knowledge. In the recent past, some approaches based on deep learning have been proposed which are reported to or would in theory also work on multi-lingual text [28,29,30,31]. They have the advantages that (a) they can compensate for shortcomings in the entity linking step when using arbitrary text and (b) that explicit linguistic feature engineering is replaced by implicit feature construction in deep neural networks. In contrast to those works, we work with a specific set of texts, i.e., Wikipedia abstracts. Here, we can assume that the entity linking is mostly free from noise (albeit not complete), and directly exploit knowledge from the knowledge graph at hand, i.e., in our case, DBpedia.

In contrast to most of those works, the approach discussed in this paper works on Wikipedia abstracts in arbitrary languages, which we demonstrate in an evaluation using the twelve largest language editions of Wikipedia. While, to the best of our knowledge, most of the approaches discussed above are only evaluated on one or at most two languages, this is the first approach to be evaluated on a larger variety of languages. Furthermore, while many works in the area evaluate their results using only one underlying knowledge graph [6], we show results with two differently created knowledge graphs and two different corpora.

4. Experiments on DBpedia

To validate the approach on DBpedia, we conducted several experiments. First, we analyzed the performance of the relation extraction using a RandomForest classifier on the English DBpedia only. The classifier is used in its standard setup, i.e., training 20 trees. The code for reproducing the results is available online [41].

For the evaluation, we follow a two-fold approach: first, we use a cross-validated silver standard evaluation, where we evaluate how well existing relations can be predicted for instances already present in DBpedia. Since such a silver-standard evaluation can introduce certain biases [6], we additionally validate the findings on a subset of the extracted relations in a manual retrospective evaluation.

In a second set of experiments, we analyze the extraction of relations on the twelve largest language editions of Wikipedia, which at the same time are those with more than 1 M articles, i.e., English, German, Spanish, French, Italian, Dutch, Polish, Russian, Cebuano, Swedish, Vietnamese, and Waray [42]. The datasets of the extracted relations for all languages can be found online [41]. Note that this selection of languages contains not only Indo-European languages, but also two Austronesian languages (Cebuano and Waray) and one Austroasiatic language (Vietnamese).

In addition, we conduct further analyses. First, we investigate differences of the relations extracted for different languages with respect to topic and locality. For the latter, the hypothesis is that information extracted, e.g., for places from German abstracts is about places in German speaking countries. Finally, with RIPPER being a symbolic learner, we also inspect the models as such to find out whether there are universal patterns for relations that occur in different languages.

For 395 relations that can hold between entities, the ontology underlying the DBpedia knowledge graph [43] defines an explicit domain and range, i.e., the types of objects that are allowed in the subject and object position of this relation. Those were considered in the evaluation.
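How domain and range restrictions can narrow down candidate statements may be sketched as follows. The dictionaries `DOMAIN_RANGE` and `TYPES` are hypothetical, hand-written excerpts used for illustration only; in practice, the restrictions and instance types would be read from the ontology and the knowledge graph, and the function names are assumptions of this sketch.

```python
from typing import Dict, Iterable, List, Set, Tuple

# Hypothetical excerpts, for illustration only.
DOMAIN_RANGE: Dict[str, Tuple[str, str]] = {
    "dbo:birthPlace": ("dbo:Person", "dbo:Place"),
}
TYPES: Dict[str, Set[str]] = {
    "dbr:Albert_Einstein": {"dbo:Person"},
    "dbr:Ulm": {"dbo:Place"},
    "dbr:Physics": set(),
}


def admissible(subject: str, relation: str, obj: str) -> bool:
    """True if subject and object carry the relation's domain and range types."""
    domain, range_ = DOMAIN_RANGE[relation]
    return domain in TYPES.get(subject, set()) and range_ in TYPES.get(obj, set())


def candidates(subject: str, relation: str, linked: Iterable[str]) -> List[str]:
    """Keep only linked entities that may appear as object of the relation."""
    return [o for o in linked if admissible(subject, relation, o)]


print(candidates("dbr:Albert_Einstein", "dbo:birthPlace", ["dbr:Physics", "dbr:Ulm"]))
```

Entities linked in an abstract that do not match the range of a relation, or pages whose entity does not match its domain, are thereby never turned into candidates for that relation.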

4.2. Cross-Lingual Relation Extraction

In the next experiment, we used the RandomForest classifier to extract models for relations for the top 12 languages, as depicted in Table 7. One model is trained per relation and language. The feature extraction and classifier parameterization are the same as in the previous set of experiments.

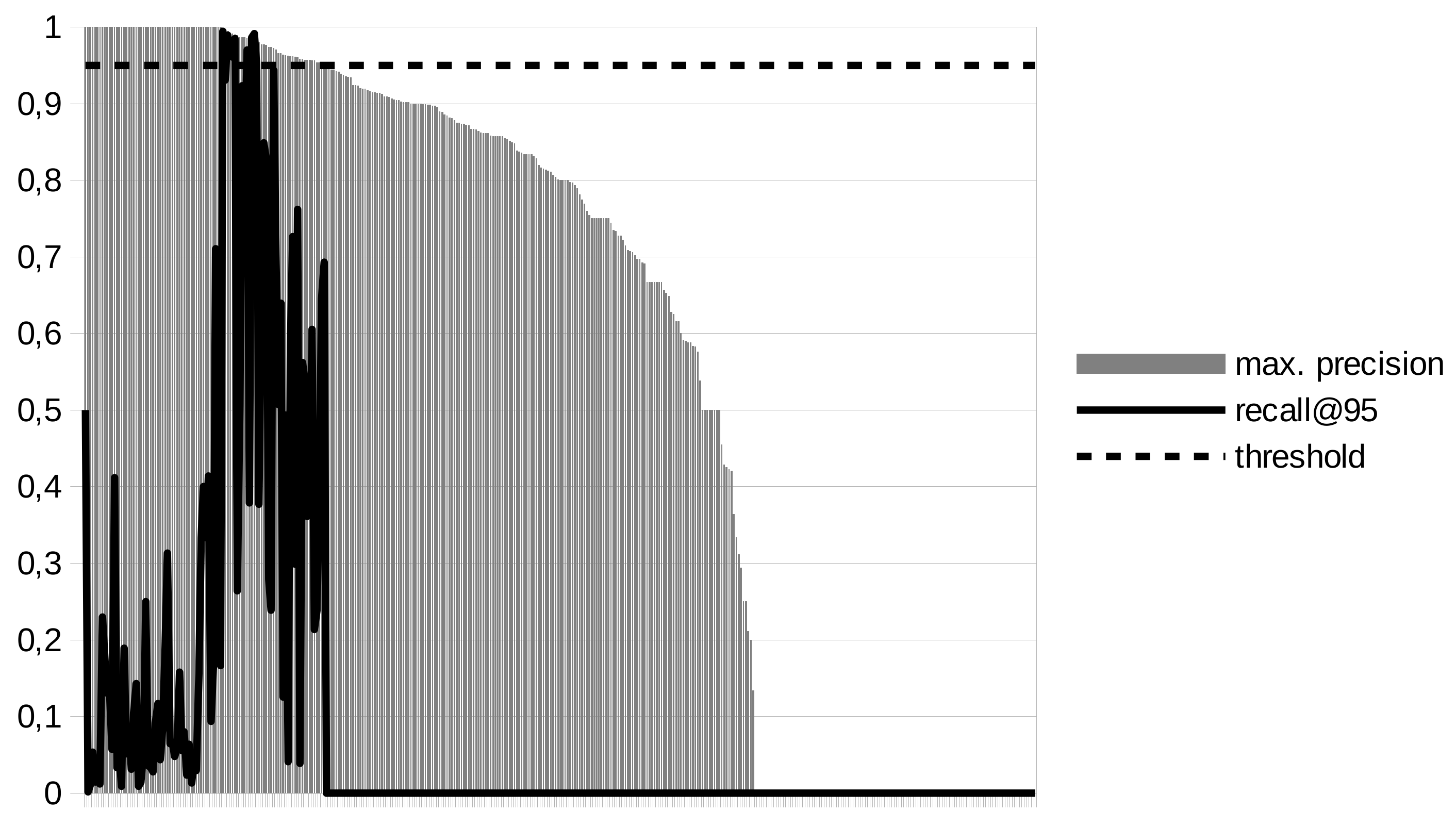

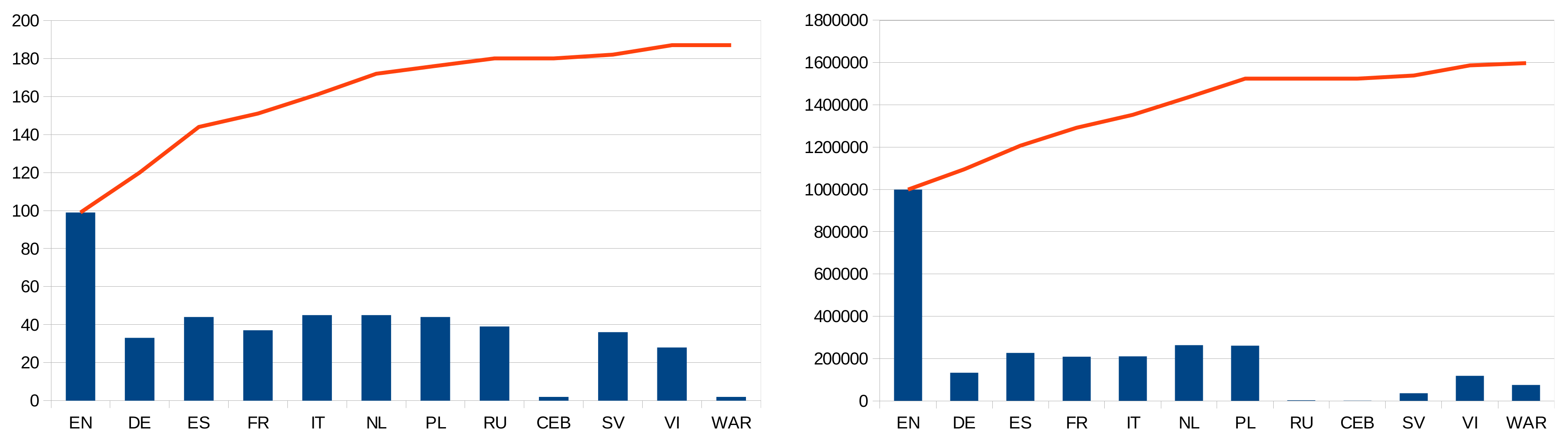

As a first result, we look at the number of relations for which models can be extracted at 95% precision. While it is possible to learn extraction models for 99 relations at that level of precision for English, that number almost doubles to 187 when using the top twelve languages, as depicted in Figure 5. These results show that it is possible to learn high precision models for relations in other languages for which this is not possible in English.
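The effect of adding languages on the number of covered relations can be illustrated with a small sketch. All precision values below are made up for illustration, and the sketch assumes that a model is accepted when its cross-validated precision is at least 0.95; the names `accepted_relations` and `relations_covered` are hypothetical.

```python
from typing import Dict, Iterable, Set

PRECISION_THRESHOLD = 0.95  # threshold stated in the text

# Made-up cross-validated precision per (language, relation) model.
model_precision: Dict[str, Dict[str, float]] = {
    "en": {"dbo:birthPlace": 0.97, "dbo:genre": 0.91},
    "de": {"dbo:birthPlace": 0.96, "dbo:genre": 0.95},
    "pl": {"dbo:country": 0.99},
}


def accepted_relations(precisions: Dict[str, float],
                       threshold: float = PRECISION_THRESHOLD) -> Set[str]:
    """Relations whose model reaches the precision threshold (inclusive)."""
    return {rel for rel, p in precisions.items() if p >= threshold}


def relations_covered(models: Dict[str, Dict[str, float]],
                      languages: Iterable[str]) -> Set[str]:
    """Union of accepted relations over the given languages."""
    covered: Set[str] = set()
    for lang in languages:
        covered |= accepted_relations(models.get(lang, {}))
    return covered


print(len(relations_covered(model_precision, ["en"])))
print(len(relations_covered(model_precision, ["en", "de", "pl"])))
```

In this toy setting, a relation that misses the threshold in English is still covered once another language provides a sufficiently precise model, which mirrors the observation above.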

When extracting new statements (i.e., instantiations of the relations) using those models, our goal is to extract those statements in the canonical DBpedia knowledge graph, as depicted in Figure 2. The number of extracted statements per language, as well as cumulated statements, is depicted in Figure 5.

At first glance, it is obvious that, although a decent number of models can be learned for most languages, the number of statements extracted is on average an order of magnitude smaller than the number of statements extracted for English. However, the additional number of extracted relations is considerable: while for English only, there are roughly 1 M relations, 1.6 M relations can be extracted from the top 12 languages, which is an increase of about 60% when stepping from an English-only to a multi-lingual extraction. The graphs in Figure 5 also show that the results stabilize after using the seven largest language editions, i.e., we do not expect any significant benefits from adding more languages with smaller Wikipedias to the setup. Furthermore, with smaller Wikipedias, the number of training examples also decreases, which also limits the precision of the models learned.

As can be observed in Figure 5, the number of extracted statements is particularly low for Russian and Cebuano. For the latter, the figure shows that only a small number of high quality models can be learned, mostly due to the low number of inter-language links to English, as depicted in Table 7. For the former, the number of high quality models that can be learned is larger, but the models are mostly unproductive, since they are learned for rather exotic relations. In particular, for the top 5 relations in Figure 4, no model is learned for Russian.

It is evident that the number of extracted statements is not proportional to the relative size of the respective Wikipedia, as depicted in Table 7. For example, although the Swedish Wikipedia is more than half the size of the English one, the number of statements extracted from Swedish is lower by a factor of 28 than the number extracted from English. At first glance, this may be counterintuitive.

The reason for the low number of statements extracted from languages other than English is that we only generate candidates if both the article at hand and the entity linked from that article's abstract have a counterpart in the canonical English DBpedia. However, as can be seen from

Table 7, those links to counterparts are rather scarce. For the example of Swedish, the probability of an entity being linked to the English Wikipedia is only

. Thus, the probability for a candidate that both the subject and object are linked to the English Wikipedia is

. This is pretty exactly the ratio of statements extracted from Swedish to statements extracted from English (

). In fact, the number of extracted statements per language and the squared number of links between the respective language edition and the English Wikipedia have a Pearson correlation coefficient of 0.95. This shows that the low number of statements is mainly an effect of missing inter-language links in Wikipedia, rather than a shortcoming of the approach as such.
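The argument above rests on two simple computations: squaring the link probability, and correlating statement counts with squared link counts. The following sketch shows both. All numbers in it are made up for illustration and are not the values from Table 7, and treating subject and object links as independent is a simplifying assumption implied by the squaring.

```python
from math import sqrt
from typing import Sequence


def both_linked_probability(link_probability: float) -> float:
    """Probability that subject and object are both linked, assuming independence."""
    return link_probability ** 2


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equally long sequences."""
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Made-up link counts and statement counts for four fictitious language editions.
links_to_english = [100.0, 200.0, 300.0, 400.0]
statements = [12.0, 39.0, 92.0, 158.0]
r = pearson([n ** 2 for n in links_to_english], statements)
print(round(both_linked_probability(0.2), 2), r > 0.9)
```

With a hypothetical link probability of 0.2, only about 4% of all candidates would have both ends linked, which illustrates why a moderately low interlinking degree leads to a much lower number of extracted statements.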

If we were interested in extending the coverage of DBpedia not only w.r.t. relations between existing entities, but also adding new entities (in particular: entities which only exist in language editions of Wikipedia other than English), then the number of statements would be larger. However, this was not in the focus of this work.

It is remarkable that the three Wikis in our evaluation with the lowest interlinkage degree (i.e., Swedish, Cebuano, and Waray) are known to be created by a bot called Lsjbot to a large degree [45]. Obviously, this bot creates a large number of pages, but at the same time fails to create the corresponding interlanguage links.

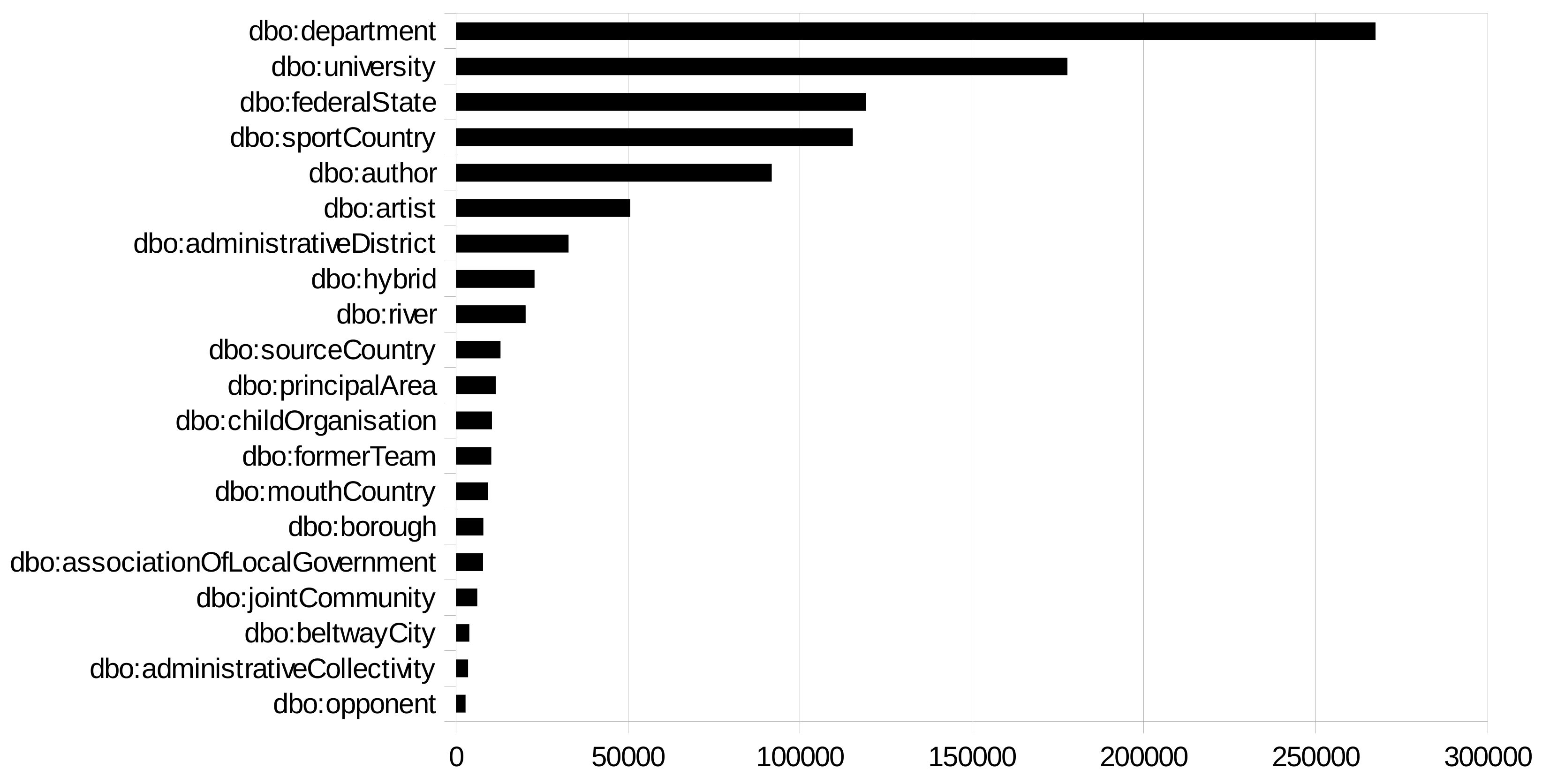

4.3. Topical and Geographical Analysis by Language

To further analyze the extracted statements, we look at the topical and geographical coverage for the additional statements (i.e., statements that are not yet contained in DBpedia) that are extracted for the twelve languages at hand. First, we depict the most frequent relations and subject classes for the statements. The results are depicted in Figure 6 and Figure 7. It can be observed that the majority of statements is related to geographical entities and their relations. The Russian set is an exception, since most extracted relations are about musical works, in contrast to geographic entities, as for the other languages. Furthermore, the English set has the largest fraction of person-related facts.

We assume that the coverage of Wikipedia in different languages is, to a certain extent, biased towards places, persons, etc. from countries in which the respective language is spoken [46]. Thus, we expect that, e.g., for relations extracted about places, we will observe that the distribution of countries to which entities are related differs for the various language editions.

To validate this hypothesis, we determine the country to which a statement is related as follows: given a statement s in the form

s p o .

we determine the set of pairs of relations and countries that fulfill

s r c .

c a dbo:Country .

and

o r c .

c a dbo:Country .

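For all statements S extracted from a language, we sum up the relative number of relations of a country to each statement, i.e., we determine the weight of a country C as the sum, over all statements in S, of the share of that statement's pairs of relations and countries whose country is C.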

The analysis was conducted using the RapidMiner Linked Open Data Extension [47].
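The country weighting described above can be sketched in a few lines. This is a reconstruction from the verbal description, not the implementation used in the analysis: it assumes that, per statement, the pairs of relations and countries found for the subject and for the object are merged into one set, and that each pair contributes equally. The function name `country_weights` and the `ex:` identifiers are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Set, Tuple

Triple = Tuple[str, str, str]


def country_weights(statements: Iterable[Triple], graph: Iterable[Triple],
                    countries: Set[str]) -> Dict[str, float]:
    """Sum, over all statements, the share of (relation, country) pairs per country."""
    # Index the (relation, country) pairs of the graph by the entity they start from.
    pairs_of: Dict[str, Set[Tuple[str, str]]] = defaultdict(set)
    for s, r, c in graph:
        if c in countries:
            pairs_of[s].add((r, c))

    weights: Dict[str, float] = defaultdict(float)
    for s, _p, o in statements:
        # Pairs reachable from the subject or from the object of the statement.
        pairs = pairs_of.get(s, set()) | pairs_of.get(o, set())
        for _r, c in pairs:
            weights[c] += 1.0 / len(pairs)
    return dict(weights)


# Fictitious example data.
graph = [
    ("ex:CityB", "ex:country", "ex:CountryX"),
    ("ex:PersonA", "ex:nationality", "ex:CountryX"),
    ("ex:PersonA", "ex:citizenship", "ex:CountryY"),
]
extracted = [("ex:PersonA", "ex:birthPlace", "ex:CityB")]
weights = country_weights(extracted, graph, {"ex:CountryX", "ex:CountryY"})
print(weights)
```

Under these assumptions, each statement with at least one related country distributes a total weight of 1 over the countries it is related to, and statements without any related country contribute nothing.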

Figure 8 depicts the distributions for the countries. We can observe that in most cases, facts about US-related entities form the majority; only for Polish are entities related to Poland the most frequent. For Swedish, German, French, Cebuano and Italian, the countries with the largest population speaking those languages (i.e., Sweden, Germany, France, Philippines, and Italy, respectively), are at the second position. For Spanish, Spain is at the second position, despite Mexico and Colombia (rank 11 and 6, respectively) having a larger population. For the other languages, a language-specific effect is not observable: for Dutch, the Netherlands are at rank 8, for Vietnamese, Vietnam is at rank 34, for Waray, the Philippines are at rank 7. For Russian, Russia is at rank 23, preceded by Soviet Union (sic!, rank 15) and Belarus (rank 22).

The results show that despite the dominance of US-related entities, there is a fairly large variety in the geographical coverage of the information extracted. This supports the finding that adding information extracted from multiple Wikipedia language editions helps broadening the coverage of entities.

6. Conclusions and Outlook

Adding new relations to existing knowledge graphs is an important task in adding value to those knowledge graphs. In this paper, we have introduced an approach that adds relations to knowledge graphs which are linked to Wikis by performing relation extraction on the corresponding Wiki pages' abstracts. Unlike other works in that area, the approach presented in this paper uses background knowledge from DBpedia, but does not rely on any language-specific techniques, such as POS tagging, stemming, or dependency parsing. Thus, it can be applied to Wikis in any language. Furthermore, we have shown that the approach can be applied not only to Wikipedia, but also to a larger and more diverse Wikifarm, i.e., Fandom powered by Wikia.

We have conducted two series of experiments, one with DBpedia and Wikipedia, the other with DBkWik. The experimental results for DBpedia show that the approach can add up to one million additional statements to the knowledge graph. By extending the set of abstracts from English to the most common languages, the coverage both of relations for which high quality models can be learned, as well as of instantiation of those relations, significantly increases. Furthermore, we can observe that for certain relations, the models learned are rather universal across languages.

In a second set of experiments, we have shown that the approach is not just applicable to Wikipedia, but also to other Wikis. Given that there are hundreds of thousands of Wikis on the Internet, and—as shown for DBkWik—they can be utilized for knowledge graph extraction, this shows that the approach is valuable not only for extracting information from Wikipedia. Here, we are able to extend DBkWik by more than 300,000 relation assertions.

There are quite a few interesting directions for future work. Following the observation in [29] that multi-lingual training can improve the performance for each single language, it might be interesting to apply models also on languages on which they had not been learned. Assuming that certain patterns exist in many languages (e.g., the first place being mentioned in an article about a person being the person's birthplace), this may increase the amount of data extracted.

The finding that for some relations, the models are rather universal across different languages gives way to interesting refinements of the approach. Since the learning algorithms sometimes fail to learn a high quality model for one language, it would be interesting to reuse a model which performed well on other languages in those cases, i.e., to decouple the learning and the application of the models. With such a decoupled approach, even more instantiations of relations could potentially be extracted, although the effect on precision would need to be examined with great care. Furthermore, ensemble solutions that collect evidence from different languages could be designed, which would allow for lowering the strict precision threshold of 95% applied in this paper (i.e., several lower precision models agreeing on the same extracted relations could be similarly or even more valuable evidence than one high-precision model).
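One conceivable way to combine evidence from several lower-precision models is a noisy-or combination, sketched below. This is not a method proposed or evaluated in this paper: it assumes that the errors of the models for different languages are independent, which would need to be verified, and the names `combined_confidence` and `ensemble_accept` as well as all values are hypothetical.

```python
from typing import Dict, Iterable, List, Tuple

Statement = Tuple[str, str, str]


def combined_confidence(precisions: Iterable[float]) -> float:
    """Noisy-or combination: one minus the product of the individual error rates."""
    error = 1.0
    for p in precisions:
        error *= (1.0 - p)
    return 1.0 - error


def ensemble_accept(votes: Dict[Statement, List[float]],
                    threshold: float = 0.95) -> List[Statement]:
    """Accept statements whose combined confidence reaches the threshold."""
    return [s for s, ps in votes.items() if combined_confidence(ps) >= threshold]


# Made-up precisions of the models (one per language) that extracted each statement.
votes = {
    ("ex:PersonA", "ex:birthPlace", "ex:CityB"): [0.8, 0.8],  # two languages agree
    ("ex:PersonA", "ex:birthPlace", "ex:CityC"): [0.9],       # a single language
}
print(ensemble_accept(votes))
```

In this toy example, two agreeing models with a precision of 0.8 each outweigh a single model with a precision of 0.9, which is the kind of effect such an ensemble would aim for.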

Likewise, for the DBkWik experiments, as discussed above, entities and relations are not matched. This means that each model is trained and applied individually for each Wiki. In the future, we plan to match the Wikis to each other. We expect many more statements as a result of that preprocessing step, since (a) each model can be trained with more training data (i.e., it has a higher likelihood of crossing the 0.95 precision threshold), and (b) each model is applied to more Wikis and hence will be more productive.

In our experiments, we have only concentrated on relations between entities so far. However, a significant fraction of statements in DBpedia, DBkWik, and other knowledge graphs also have literals as objects. That said, it should be possible to extend the framework to such statements as well. Although numbers, years, and dates are usually not linked to other entities, they are quite easy to detect and parse using, e.g., regular expressions or specific taggers and parsers such as HeidelTime [60] or the CRF-based parser introduced in [61]. With such a detection step in place, it would also be possible to learn rules for datatype properties, such as:

the first date in an abstract about a person is that person’s birthdate, etc.
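A detection step of this kind could, in its simplest form, look like the following sketch. It assumes that dates appear either in ISO form or as a bare four-digit year, which is a strong simplification; written-out dates would require further patterns or a dedicated tagger. The function name `first_date` and the sample texts are hypothetical.

```python
import re
from typing import Optional

# Assumption: dates appear either in ISO form (e.g., 1879-03-14) or as a bare
# four-digit year; other formats are not covered by this sketch.
_DATE = re.compile(r"\b(\d{4})(?:-(\d{2})-(\d{2}))?\b")


def first_date(abstract: str) -> Optional[str]:
    """Return the first date-like expression in the abstract, if any."""
    match = _DATE.search(abstract)
    return match.group(0) if match else None


print(first_date("Born 1879-03-14 in Ulm, died 1955."))
```

The detected literal could then be treated like a linked entity of type date, so that positional rules such as the one above can be learned for datatype properties.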

Furthermore, our focus so far has been on adding missing relations. A different, yet related problem is the detection of wrong relations [6,62,63]. Here, we could use our approach to gather evidence for relations in different language editions of Wikipedia. Relations for which there is little evidence could then be discarded (similar to DeFacto [64]). While for adding knowledge, we have tuned our models towards precision, such an approach would rather require a tuning towards recall. In addition, since there are also quite a few errors in numerical literals in DBpedia [65,66], an extension such as the one described above could also help detect such issues.

When it comes to the extension to multiple Wikis, there are also possible applications to knowledge fusion, which has already been examined for relations extracted from Wikipedia infoboxes in different languages [67]. Here, when utilizing thousands of Wikis, it is possible to gain multiple sources of support for an extracted statement, and hence develop more sophisticated fusion methodologies.

So far, we have worked on one genre of text, i.e., abstracts of encyclopedic articles. However, we are confident that this approach can also be applied to other genres of articles, as long as those follow typical structures. Examples include, but are not limited to: extracting relations from movie, music, and book reviews, from short biographies, or from product descriptions. All those are texts that are not strictly structured, but expose certain patterns. While for the Wikipedia abstracts covered in this paper, links to the DBpedia knowledge graph are implicitly given, other text corpora would require entity linking using tools such as DBpedia Spotlight [68].

In summary, we have shown that abstracts in Wikis are a valuable source of knowledge for extending knowledge graphs such as DBpedia and DBkWik. Those abstracts expose patterns which can be captured by language-independent features, thus allowing for the design of language-agnostic systems for relation extraction from such abstracts.