A Computational Approach for the Assessment of Executive Functions in Patients with Obsessive–Compulsive Disorder

Abstract

1. Introduction

2. Materials and Methods

2.1. Participants

2.2. Ethics Statement

2.3. Protocol

2.4. Neuropsychological Battery

2.5. VMET

2.6. VMET Scoring

2.7. Data Analysis

- Logistic regression classification algorithm with ridge regularization;

- Random forest classification using an ensemble of decision trees;

- Support vector machine (SVM), which maps inputs to a higher-dimensional feature space that best separates the classes.
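As an illustration of the first item in the list above, the following is a minimal sketch of logistic regression with a ridge (L2) penalty, fitted by plain gradient descent. It is not the software used in the study: the function names (`fit_ridge_logreg`, `predict`), the toy one-feature data and the hyperparameters (`l2`, `lr`, `epochs`) are all assumptions chosen for readability.

```python
import math


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def fit_ridge_logreg(X, y, l2=1.0, lr=0.1, epochs=2000):
    """Minimise mean log-loss + (l2 / (2n)) * ||w||^2 by batch gradient descent.

    Hypothetical helper for illustration; the intercept b is not penalised.
    """
    n, p = len(X), len(X[0])
    w, b = [0.0] * p, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * p, 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j in range(p):
                grad_w[j] += err * xi[j]
            grad_b += err
        # The l2 * wj / n term is the ridge penalty pulling weights toward zero.
        w = [wj - lr * (gj / n + l2 * wj / n) for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(X, w, b):
    # Assign class 1 when the predicted probability is at least 0.5.
    return [1 if sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) >= 0.5 else 0
            for xi in X]


# Toy, already-centred data (NOT from the study): one feature, two classes.
X = [[-2.0], [-1.0], [-1.5], [1.0], [1.5], [2.0]]
y = [0, 0, 0, 1, 1, 1]

w_weak, b_weak = fit_ridge_logreg(X, y, l2=0.1)
w_strong, b_strong = fit_ridge_logreg(X, y, l2=10.0)
print("weak penalty:", w_weak, "strong penalty:", w_strong)
```

A stronger penalty shrinks the fitted weight, which is the property that makes ridge regularization useful when the number of features is large relative to the sample.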

3. Results

4. Discussion

Author Contributions

Funding

Conflicts of Interest


| Variable | Group | N | Mean | SD | SE |
|---|---|---|---|---|---|
| Age | 1 | 29 | 33.07 | 9.906 | 1.840 |
| | 2 | 29 | 40.48 | 15.588 | 2.895 |
| y.o.e. | 1 | 29 | 12.03 | 3.201 | 0.594 |
| | 2 | 29 | 12.03 | 3.029 | 0.563 |
| MMSE | 1 | 29 | 26.56 | 2.675 | 0.497 |
| | 2 | 29 | 28.53 | 1.028 | 0.191 |

| Variable | W | p |
|---|---|---|
| Age | 312.5 | 0.094 |
| y.o.e. | 425.5 | 0.936 |
| MMSE | 194.0 | <0.001 |
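The W column above is a rank-based two-sample statistic. A minimal sketch of how such a statistic can be computed is given here, assuming the common convention in which W counts the pairs where a group-1 value exceeds a group-2 value, with ties counted as one half. The function name `mann_whitney_w` and the sample values are hypothetical; the study's raw data are not available in this text.

```python
def mann_whitney_w(group1, group2):
    """Count pairs (a, b) with a > b, scoring ties as 0.5 (hypothetical helper)."""
    w = 0.0
    for a in group1:
        for b in group2:
            if a > b:
                w += 1.0
            elif a == b:
                w += 0.5
    return w


# Made-up ages, for illustration only.
g1 = [30, 25, 41, 33]
g2 = [28, 45, 39, 50, 33]

w12 = mann_whitney_w(g1, g2)
w21 = mann_whitney_w(g2, g1)
print(w12, w21, len(g1) * len(g2))
```

The two directions always sum to the number of pairs. With 29 participants per group there are 29 × 29 = 841 pairs, so a value near 420.5 indicates no difference between groups; the reported W of 425.5 for y.o.e. is consistent with its p of 0.936.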

| Test | Mean | Standard Deviation | Normative Data |
|---|---|---|---|
| MMSE | 26.56 | 2.68 | >18 |
| Frontal Assessment Battery (FAB) | 14.97 | 1.4 | >13.5 |
| Trail Making Task A (TMTA) | 63.07 | 23.58 | <93 |
| Trail Making Task B (TMTB) | 191.93 | 112.04 | <282 |
| Trail Making Task B-A (TMTBA) | 129.93 | 100.27 | <186 |
| Phonemic Fluency (PF) | 27.38 | 9.42 | >16 |
| Semantic Fluency (SF) | 33.69 | 8.43 | >24 |
| Tower of London (TOL) | 22.72 a | 5.45 | Not available |
| Digit Span (Digit S) | 5.28 | 1.09 | >3.5 |
| Paired-Associate Learning Test (PALT) | 10.84 | 4.04 | >6 |
| Corsi Span (Corsi S) | 4.51 | 0.78 | >3.5 |
| Short Story | 12.62 | 5.24 | >7.5 |
| Corsi Block Task (Corsi BT) | 16.09 | 8.21 | >5.5 |

| Test | Group | N | Mean | SD | SE |
|---|---|---|---|---|---|
| MMSE | 1 | 29 | 26.565 | 2.675 | 0.497 |
| | 2 | 29 | 28.532 | 1.028 | 0.191 |
| FAB | 1 | 29 | 14.965 | 1.403 | 0.261 |
| | 2 | 20 | 16.274 | 0.849 | 0.190 |
| TMTA | 1 | 29 | 63.069 | 23.584 | 4.379 |
| | 2 | 29 | 37.632 | 15.624 | 2.901 |
| TMTB | 1 | 29 | 191.310 | 112.041 | 20.806 |
| | 2 | 29 | 95.448 | 46.144 | 8.569 |
| TMTBA | 1 | 29 | 129.931 | 100.269 | 18.619 |
| | 2 | 29 | 58.616 | 45.439 | 8.438 |
| PF | 1 | 29 | 27.379 | 9.420 | 1.749 |
| | 2 | 29 | 41.138 | 11.192 | 2.078 |
| SF | 1 | 29 | 33.690 | 8.431 | 1.566 |
| | 2 | 29 | 48.172 | 10.275 | 1.908 |
| TOL | 1 | 29 | 22.724 | 5.450 | 1.012 |
| | 2 | 29 | 28.448 | 3.582 | 0.665 |
| Digit S | 1 | 29 | 5.284 | 1.087 | 0.202 |
| | 2 | 29 | 6.010 | 0.847 | 0.157 |
| PALT | 1 | 29 | 10.845 | 4.036 | 0.749 |
| | 2 | 20 | 13.072 | 4.759 | 1.064 |
| Corsi S | 1 | 29 | 4.508 | 0.778 | 0.144 |
| | 2 | 29 | 6.345 | 2.660 | 0.494 |
| Short Story | 1 | 29 | 12.621 | 5.242 | 0.973 |
| | 2 | 29 | 14.491 | 4.454 | 0.827 |
| Corsi BT | 1 | 29 | 16.091 | 8.208 | 1.524 |
| | 2 | 29 | 21.239 | 5.843 | 1.085 |

| VMET | Group | N | Mean | SD | SE |
|---|---|---|---|---|---|
| Errors | 1 | 29 | 17.276 | 2.840 | 0.527 |
| | 2 | 29 | 13.897 | 1.633 | 0.303 |
| Break in time | 1 | 29 | 13.379 | 2.821 | 0.524 |
| | 2 | 29 | 11.655 | 2.844 | 0.528 |
| Break in choice | 1 | 29 | 9.655 | 1.951 | 0.362 |
| | 2 | 29 | 8.379 | 0.979 | 0.182 |
| Break in social rules | 1 | 29 | 10.517 | 2.181 | 0.405 |
| | 2 | 29 | 8.793 | 1.544 | 0.287 |
| Inefficiencies | 1 | 29 | 22.552 | 4.733 | 0.879 |
| | 2 | 29 | 24.379 | 6.439 | 1.196 |
| Rule break | 1 | 29 | 21.172 | 3.733 | 0.693 |
| | 2 | 29 | 22.897 | 5.453 | 1.013 |
| Strategies | 1 | 29 | 36.414 | 7.238 | 1.344 |
| | 2 | 29 | 31.793 | 6.298 | 1.170 |
| Interpretation failures | 1 | 29 | 5.207 | 0.940 | 0.175 |
| | 2 | 29 | 5.241 | 0.872 | 0.162 |
| Time | 1 | 29 | 649.448 | 320.076 | 59.437 |
| | 2 | 29 | 595.759 | 266.793 | 49.542 |
| Sustained attention | 1 | 29 | 8.345 | 1.610 | 0.299 |
| | 2 | 29 | 7.759 | 0.830 | 0.154 |
| Sequence | 1 | 29 | 8.241 | 1.640 | 0.305 |
| | 2 | 29 | 7.828 | 0.805 | 0.149 |
| Instructions | 1 | 29 | 8.276 | 1.623 | 0.301 |
| | 2 | 29 | 7.517 | 0.634 | 0.118 |
| Divided attention | 1 | 29 | 10.448 | 2.667 | 0.495 |
| | 2 | 29 | 8.276 | 1.556 | 0.289 |
| Organization | 1 | 29 | 10.483 | 3.158 | 0.586 |
| | 2 | 29 | 8.000 | 1.282 | 0.238 |
| Self-corrections | 1 | 29 | 9.241 | 1.902 | 0.353 |
| | 2 | 29 | 7.759 | 0.786 | 0.146 |
| Perseverations | 1 | 29 | 8.724 | 1.830 | 0.340 |
| | 2 | 29 | 7.414 | 0.682 | 0.127 |

| Test | t | df | p | Mean Difference | SE Difference | Cohen’s d |
|---|---|---|---|---|---|---|
| Executive Function Domain | | | | | | |
| FAB | −4.061 | 46.36 | <0.001 | −1.309 | 0.322 | −1.082 |
| TMTA | 4.842 | 48.61 | <0.001 | 25.437 | 5.253 | 1.272 |
| TMTB | 4.260 | 37.23 | <0.001 | 95.862 | 22.501 | 1.119 |
| TMTBA | 3.489 | 39.04 | 0.001 | 71.315 | 20.442 | 0.916 |
| PF | −5.065 | 54.42 | <0.001 | −13.759 | 2.717 | −1.330 |
| SF | −5.868 | 53.94 | <0.001 | −14.483 | 2.468 | −1.541 |
| TOL | −4.726 | 48.38 | <0.001 | −5.724 | 1.211 | −1.241 |
| Other Cognitive Domains | | | | | | |
| MMSE | −3.696 | 36.10 | <0.001 | −1.967 | 0.532 | −0.971 |
| Digit S | −2.836 | 52.84 | 0.006 | −0.726 | 0.256 | −0.745 |
| PALT | −1.712 | 36.44 | 0.095 | −2.228 | 1.301 | −0.513 |
| Corsi S | −3.568 | 32.75 | 0.001 | −1.837 | 0.515 | −0.937 |
| Short Story | −1.465 | 54.58 | 0.149 | −1.871 | 1.277 | −0.385 |
| Corsi BT | −2.752 | 50.58 | 0.008 | −5.149 | 1.871 | −0.723 |
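The fractional degrees of freedom in the table above are what an unequal-variance (Welch) t-test produces. The sketch below assumes that this is the test behind the table and recomputes t, df, the standard error of the difference and Cohen's d from the group means, SDs and Ns reported earlier. The function name `welch_from_summary` is hypothetical, and Cohen's d is taken here as the mean difference divided by the df-weighted pooled SD, which is also an assumption.

```python
import math


def welch_from_summary(m1, s1, n1, m2, s2, n2):
    """Welch t-test and Cohen's d from summary statistics (hypothetical helper)."""
    v1, v2 = s1 ** 2 / n1, s2 ** 2 / n2           # squared standard errors
    se_diff = math.sqrt(v1 + v2)
    t = (m1 - m2) / se_diff
    # Welch-Satterthwaite approximation for the degrees of freedom.
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    pooled_sd = math.sqrt(((n1 - 1) * s1 ** 2 + (n2 - 1) * s2 ** 2) / (n1 + n2 - 2))
    d = (m1 - m2) / pooled_sd
    return t, df, se_diff, d


# Group 1 vs. group 2 summary values copied from the descriptive table.
tmta = welch_from_summary(63.069, 23.584, 29, 37.632, 15.624, 29)
fab = welch_from_summary(14.965, 1.403, 29, 16.274, 0.849, 20)

for name, (t, df, se, d) in (("TMTA", tmta), ("FAB", fab)):
    print(f"{name}: t={t:.3f} df={df:.2f} SE={se:.3f} d={d:.3f}")
```

Under these assumptions the TMTA and FAB rows are recovered to within rounding of the reported summary values, including the FAB row where group 2 has N = 20.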

| VMET | t | df | p | Mean Difference | SE Difference | Cohen’s d |
|---|---|---|---|---|---|---|
| Errors | 5.555 | 56.00 | <0.001 | 3.379 | 0.608 | 1.459 |
| Break in time | 2.318 | 56.00 | 0.024 | 1.724 | 0.744 | 0.609 |
| Break in choice | 3.148 | 56.00 | 0.003 | 1.276 | 0.405 | 0.827 |
| Break in social rules | 3.474 | 56.00 | <0.001 | 1.724 | 0.496 | 0.912 |
| Inefficiencies | −1.232 | 56.00 | 0.223 | −1.828 | 1.484 | −0.323 |
| Rule break | −1.405 | 56.00 | 0.166 | −1.724 | 1.227 | −0.369 |
| Strategies | 2.593 | 56.00 | 0.012 | 4.621 | 1.782 | 0.681 |
| Interpretation failures | −0.145 | 56.00 | 0.885 | −0.034 | 0.238 | −0.038 |
| Time | −0.033 | 56.00 | 0.974 | −2.117 | 64.666 | −0.009 |
| Sustained attention | 1.743 | 56.00 | 0.087 | 0.586 | 0.336 | 0.458 |
| Sequence | 1.220 | 56.00 | 0.228 | 0.414 | 0.339 | 0.320 |
| Instructions | 2.344 | 56.00 | 0.023 | 0.759 | 0.324 | 0.616 |
| Divided attention | 3.789 | 56.00 | <0.001 | 2.172 | 0.573 | 0.995 |
| Organization | 3.923 | 56.00 | <0.001 | 2.483 | 0.633 | 1.030 |
| Self-corrections | 3.879 | 56.00 | <0.001 | 1.483 | 0.382 | 1.019 |
| Perseverations | 3.613 | 56.00 | <0.001 | 1.310 | 0.363 | 0.949 |
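Every row of the VMET comparison has df = 56.00, that is 29 + 29 − 2, which is what an equal-variance (Student) t-test gives. Assuming that is the test used, the following sketch recomputes the Errors row from the VMET group means and SDs. The helper name `student_from_summary` is hypothetical.

```python
import math


def student_from_summary(m1, s1, n1, m2, s2, n2):
    """Pooled-variance t-test from summary statistics (hypothetical helper)."""
    df = n1 + n2 - 2
    # Pool the two variances, weighting each by its degrees of freedom.
    pooled_sd = math.sqrt(((n1 - 1) * s1 ** 2 + (n2 - 1) * s2 ** 2) / df)
    se_diff = pooled_sd * math.sqrt(1.0 / n1 + 1.0 / n2)
    t = (m1 - m2) / se_diff
    d = (m1 - m2) / pooled_sd
    return t, df, se_diff, d


# VMET "Errors": group 1 vs. group 2, values copied from the descriptive table.
t_err, df_err, se_err, d_err = student_from_summary(17.276, 2.840, 29,
                                                    13.897, 1.633, 29)
print(f"t={t_err:.3f} df={df_err} SE={se_err:.3f} d={d_err:.3f}")
```

The only difference from the unequal-variance form is that the two variances are pooled before the standard error is computed, so the degrees of freedom are a fixed integer rather than an estimate.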

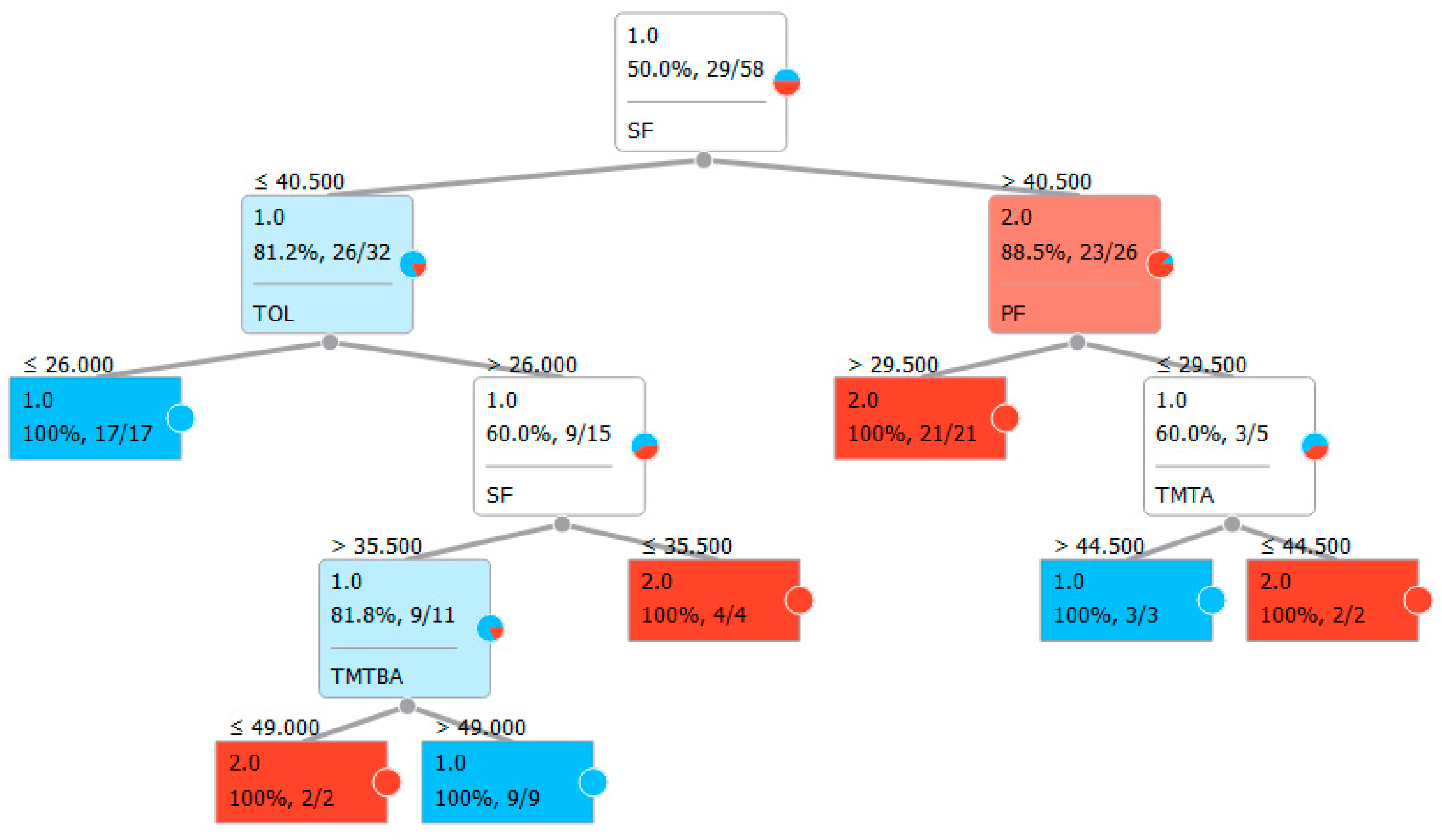

Panel A: Classification with classic neuropsychological tests for executive functions.

- Features: FAB, TMTA, TMTB, TMTBA, TOL, PF, SF
- Sampling type: Stratified 10-fold cross-validation
- Target class: Average over classes

| Method | AUC | CA | F1 | Precision | Recall |
|---|---|---|---|---|---|
| LogReg | 0.742 | 0.741 | 0.746 | 0.733 | 0.759 |
| Random forest | 0.817 | 0.810 | 0.800 | 0.846 | 0.759 |
| SVM | 0.783 | 0.776 | 0.787 | 0.750 | 0.828 |
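The CA, F1, Precision and Recall columns are all functions of a 2 × 2 confusion matrix. The sketch below shows the relationship. The article does not report confusion matrices, so the counts used here (22 true positives, 7 false negatives, 8 false positives, 21 true negatives) are inferred: they assume 29 cases per class and were chosen because they reproduce the LogReg row of Panel A after rounding. The function name `binary_metrics` is hypothetical.

```python
def binary_metrics(tp, fn, fp, tn):
    """Classification accuracy, precision, recall and F1 from confusion counts."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return {
        "CA": (tp + tn) / (tp + fn + fp + tn),
        "Precision": precision,
        "Recall": recall,
        # F1 is the harmonic mean of precision and recall.
        "F1": 2 * precision * recall / (precision + recall),
    }


# Inferred counts (an assumption, see the note above), 29 cases per class.
logreg_panel_a = binary_metrics(tp=22, fn=7, fp=8, tn=21)
rounded = {name: round(value, 3) for name, value in logreg_panel_a.items()}
print(rounded)
```

AUC cannot be recovered this way, because it depends on the ranking of predicted scores rather than on a single decision threshold.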

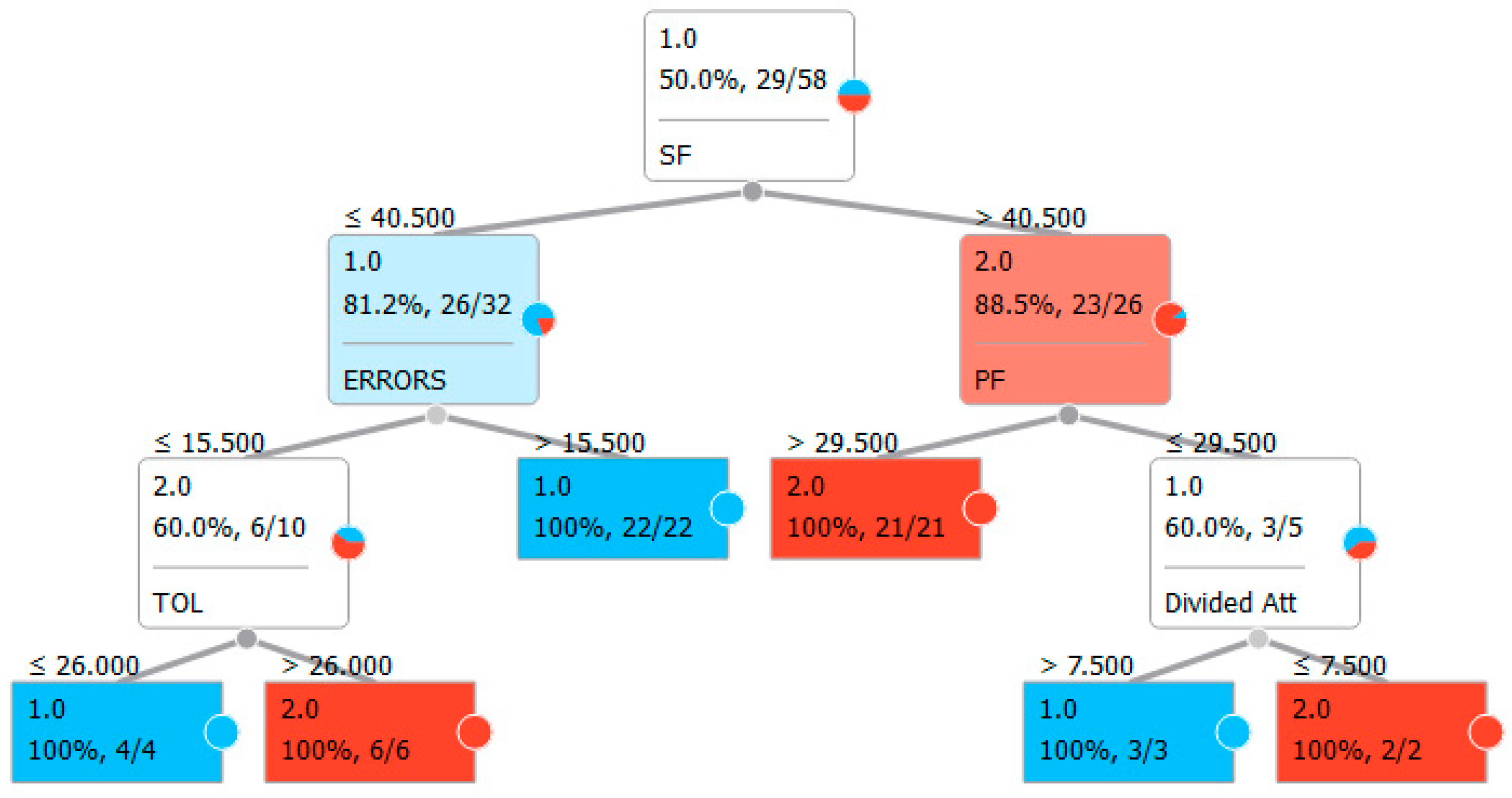

Panel B: Classification with classic neuropsychological tests for executive functions and the VMET.

- Features: FAB, TMTB, TMTA, TMTBA, TOL, PF, SF, errors, break in time, break in choice, break in social rules, inefficiencies, rule break, strategies, interpretation failures, sustained attention, sequence, instructions, divided attention, organization, self-corrections, perseverations
- Sampling type: Stratified 10-fold cross-validation
- Target class: Average over classes

| Method | AUC | CA | F1 | Precision | Recall |
|---|---|---|---|---|---|
| LogReg | 0.700 | 0.707 | 0.702 | 0.714 | 0.690 |
| Random forest | 0.850 | 0.845 | 0.852 | 0.812 | 0.897 |
| SVM | 0.775 | 0.776 | 0.772 | 0.786 | 0.759 |
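Both panels list stratified 10-fold cross-validation as the sampling type. As a minimal sketch of what that involves, the generator below splits sample indices into ten folds while keeping the two classes balanced in every test fold. It is not the implementation used in the study: the function name `stratified_kfold`, the seed and the round-robin dealing scheme are assumptions, and the labels simply encode 29 participants per group.

```python
import random
from collections import defaultdict


def stratified_kfold(labels, k=10, seed=0):
    """Yield (train_idx, test_idx) pairs whose test folds preserve class ratios."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for i, lab in enumerate(labels):
        by_class[lab].append(i)
    folds = [[] for _ in range(k)]
    for idxs in by_class.values():
        rng.shuffle(idxs)
        # Deal each class round-robin so folds differ by at most one per class.
        for pos, i in enumerate(idxs):
            folds[pos % k].append(i)
    everything = set(range(len(labels)))
    for test in folds:
        yield sorted(everything - set(test)), sorted(test)


labels = [1] * 29 + [2] * 29          # two groups of 29, as in the tables
splits = list(stratified_kfold(labels))
for train_idx, test_idx in splits:
    print(len(train_idx), len(test_idx))
```

Each participant appears in exactly one test fold, so the reported metrics can be computed over predictions for all 58 cases, none of which was seen by the model that predicted it.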

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Pedroli, E.; La Paglia, F.; Cipresso, P.; La Cascia, C.; Riva, G.; La Barbera, D. A Computational Approach for the Assessment of Executive Functions in Patients with Obsessive–Compulsive Disorder. J. Clin. Med. 2019, 8, 1975. https://doi.org/10.3390/jcm8111975
