A Comprehensive Framework to Reinforce Evidence Synthesis Features in Cloud-Based Systematic Review Tools

Abstract

1. Introduction

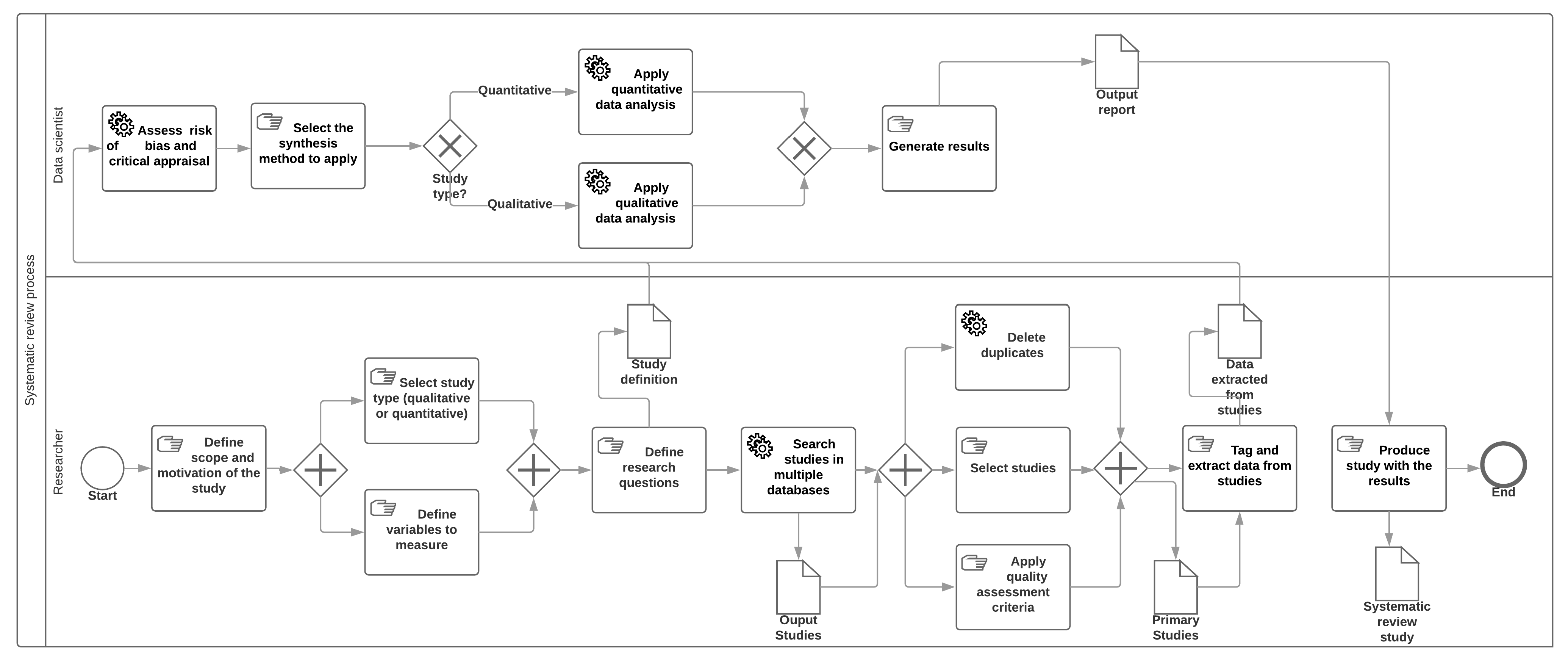

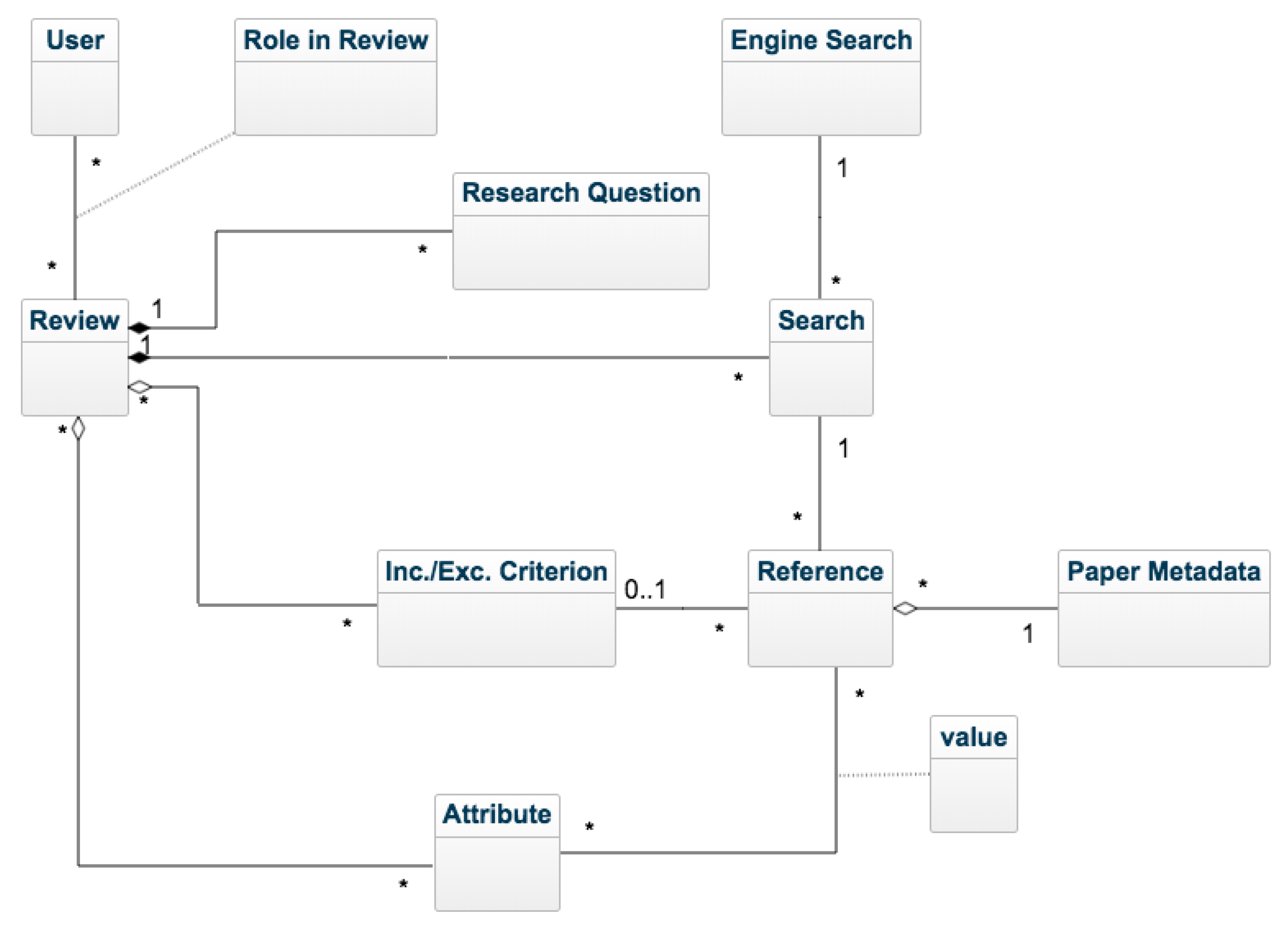

2. Evidence-Based Systematic Review Analysis Framework

- Non-functional: This category covers non-functional features of the SR tool, such as open source code availability, licensing mode (and cost), availability of user guides, and focus on specific disciplines.

- Overall functionality: This category covers features that are not specific to SR tools but make the review task easier. These include support for collaborative work, management of user roles, maintenance of data history, and traceability of the SR process steps.

- Information management: This category includes SR-specific features related to the inclusion, selection and management of studies. The steps involved are: definition of the research questions and scope of the study; definition and execution of queries; study selection; and application of quality assessment criteria to the studies.

- Evidence synthesis: This category includes features related to the synthesis of relevant data and information obtained from the selected studies. The required steps are the following [20]: choosing the type of synthesis (either qualitative or quantitative); selecting the synthesis method; defining the study variables to measure; tagging and extracting information from studies; critically appraising the studies and assessing their risk of bias; configuring how the individual values from the selected studies are combined to calculate the global values of the variables to measure; and generating reports with the synthesized data.

- Non-functional features: 80%.

- Overall functionality: 75%.

- Information management: 100%.

- Evidence synthesis: 14.28%.

- Average score: 67.32%.
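The percentages above match the CloudSERA row of the score table and are consistent with dividing the number of features covered in each category by the number of features the framework defines for that category (5, 4, 6 and 7, following F1 to F22 in the feature table), then averaging the four category scores. The following is a minimal sketch of that arithmetic. The covered-feature counts (4, 3, 6 and 1) are an assumption inferred from the reported percentages, and the function name is illustrative only.

```python
# Number of features the framework defines per category
# (F1-F5, F6-F9, F10-F15 and F16-F22 in the feature table).
FEATURES_PER_CATEGORY = {
    "Non-functional": 5,
    "Overall functionality": 4,
    "Information management": 6,
    "Evidence synthesis": 7,
}

# Assumed covered-feature counts, inferred from the reported percentages.
covered = {
    "Non-functional": 4,
    "Overall functionality": 3,
    "Information management": 6,
    "Evidence synthesis": 1,
}


def coverage_scores(covered_counts, totals):
    """Return the percentage of features covered in each category."""
    return {
        name: 100.0 * covered_counts[name] / totals[name]
        for name in totals
    }


scores = coverage_scores(covered, FEATURES_PER_CATEGORY)
average = sum(scores.values()) / len(scores)

for name, value in scores.items():
    print(f"{name}: {value:.2f}%")
print(f"Average score: {average:.2f}%")
```

Under these assumptions, one feature out of seven gives 14.2857...%, which the list above reports truncated as 14.28%; the average is 67.32% either way.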

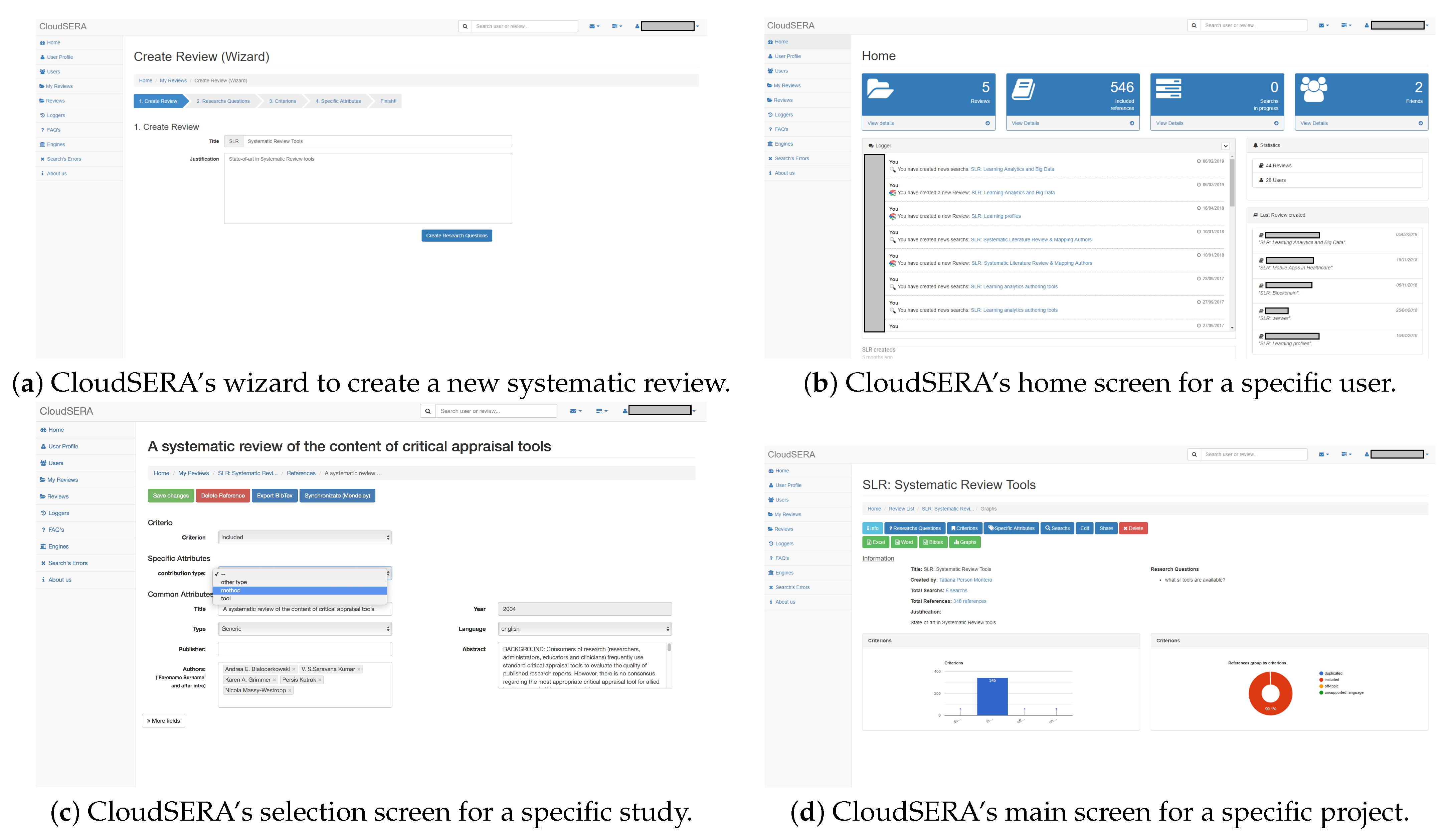

3. Cloudsera Features and Implementation

3.1. Cloudsera Features

3.2. Technical Quality and Utility Evaluation

4. Experts’ Survey on Evidence-Based Systematic Review

4.1. Expert Screening and Survey Questions

4.2. Data Collection and Analysis

- The complete sample: a total of 11 experts were involved in the survey.

- Academic discipline: Humanities (2), Applied sciences (2), Formal sciences (2), Social sciences (3) and Natural sciences (2).

- Expertise level: researchers who have supervised more than one SR (8) and those who have only supervised one (3).

4.2.1. Information Management Features

- First, this section of the survey asked the experts about the reference management systems they use. The experts listed the following: Mendeley, Zotero, JabRef, EndNote, and RefWorks.

- Second, they were asked about the search engines they use. Experts from the Humanities mentioned ACM Digital Library, JSTOR, Web of Science, Google Scholar, and ResearchGate. Experts from the Applied Sciences mentioned PubMed, Scopus, EBSCO CINAHL, and MathSciNet. Experts from the Social Sciences mentioned, in addition to the search engines used in the Humanities, Medline, Wolters Kluwer Ovid, and Sociological Abstracts. Researchers from the Natural Sciences mentioned AGRIS, Agricola, Academic Search Premier, CAB Direct, GreenFILE, Aquatic Sciences and Fisheries Abstracts, PsycINFO, DOAJ, EconLit, Sociological Abstracts, ProQuest Dissertations and Theses, DART eTheses Portal, and EThOS.

- All features included in this category were considered relevant for the experts.

- The duplicate deletion feature scored below the category average. This suggests that integrating the remaining features into the SR tool should be a requirement for covering the experts' needs. It may also indicate that the features needed for information management in the SR process are applied in the same way in all disciplines.

- The experts suggested including the following features: stakeholder engagement; inter-rater reliability in the screening process; detection of future research lines, new questions and challenges; and the possibility of setting the sample size.
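Duplicate deletion (F13 in the framework) is typically implemented by deriving a comparison key for each bibliographic record. The following is a minimal sketch of one possible approach, not the method of any tool discussed here: records are matched on a lower-cased DOI when one is present, and otherwise on a normalized title plus publication year. The record fields (`title`, `year`, `doi`) and the sample records are hypothetical.

```python
import re
import unicodedata


def normalize_title(title):
    """Lower-case, strip accents and punctuation, and collapse whitespace."""
    text = unicodedata.normalize("NFKD", title)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = re.sub(r"[^a-z0-9]+", " ", text.lower())
    return text.strip()


def record_key(record):
    """Prefer the DOI as identity; fall back to normalized title plus year."""
    doi = (record.get("doi") or "").strip().lower()
    if doi:
        return ("doi", doi)
    return ("title", normalize_title(record.get("title", "")), record.get("year"))


def deduplicate(records):
    """Keep the first record seen for each key, preserving input order."""
    seen = set()
    unique = []
    for record in records:
        key = record_key(record)
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


# Hypothetical records, as they might arrive from two search engines.
records = [
    {"title": "A study of review tools", "year": 2018, "doi": "10.1234/example.1"},
    {"title": "A Study of Review Tools.", "year": 2018, "doi": "10.1234/EXAMPLE.1"},
    {"title": "Mapping knowledge domains", "year": 2003, "doi": None},
    {"title": "Mapping  Knowledge Domains", "year": 2003, "doi": ""},
]

unique_records = deduplicate(records)
print(f"{len(records)} records in, {len(unique_records)} records out")
```

A known limitation of this sketch is that a record carrying a DOI will not be matched against a copy of the same study that lacks one; a real tool would likely combine several keys or use fuzzy title matching.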

4.2.2. Evidence Synthesis Features

- First, this section asked the experts about the type of study they most frequently conduct or supervise. Four researchers indicated quantitative studies, two indicated qualitative studies and five indicated mixed studies.

- Second, they were asked about the techniques used to synthesize collected evidence. The researchers mentioned the following techniques: grounded theory, content analysis, case survey, meta-study, meta-ethnography, thematic analysis, narrative summary, and Bayesian meta-analysis. Additionally, they added the qualitative comparative analysis method and meta-synthesis to this list.

- Third, the experts were asked about the method used to collect data from primary studies. In this case, the experts responded with: manually, using Mendeley, using online survey data, using face-to-face surveys or phone surveys. Other experts indicated that, in certain disciplines, it is complicated to gather data because “some studies do not provide data, for example, patient data are not commonly available”.

- Fourth, they were asked about the tools used to analyze data. The experts from the Humanities mentioned Atlas.ti or SPSS, experts from the Social Sciences mentioned R, meta-regression and forest plots, and experts from the Natural Sciences named Microsoft Excel. Other researchers indicated that they perform the analysis manually, without using any support tool.

- Fifth, the experts were asked about the techniques used to assess the risk of bias. Only the experts from the Applied Sciences and Social Sciences indicated that they used this type of technique. In the Applied Sciences, experts performed a review using a risk of bias table, whereas in the Social Sciences they used the GRADE and AHRQ guidelines.

- Sixth, they were asked about the methods used for representing results. In this case, seven of them indicated that they used visual representations for synthesizing results; five of them indicated that they used a flowchart to depict the selection process of each study; and three of them indicated that they used visual representations for indicating the included and excluded studies.

- Seventh, the experts were asked about their opinion on whether quantitative reports should be different to qualitative ones. In this case, seven of them responded affirmatively and two of them negatively.

- Eighth, they were asked about including extra information in the reports. In this case, one expert from the Social Sciences indicated that the addition of summary tables and additional files with the complete information is needed and one expert from the Applied Sciences indicated that it is relevant to include personal opinions.

- All features included in this category were considered relevant by experts.

- According to the survey scores, the experts from the Applied Sciences, Social Sciences and Natural Sciences gave a higher score to the ES features than the experts from the Humanities and Formal Sciences. This might indicate that the experts of the former disciplines invest a greater effort into the application of evidence synthesis techniques in their SR processes.
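Several of the quantitative techniques and tools mentioned by the experts (meta-analysis, forest plots) rest on combining per-study values into a global value, which is also the step that the framework's summarizing feature (F21) describes. The following is a minimal sketch of one common way to do this, fixed-effect inverse-variance pooling. It is offered as an illustration under stated assumptions, not as the method used by CloudSERA or by the surveyed experts, and the effect sizes and standard errors are hypothetical.

```python
import math


def pool_fixed_effect(effects, std_errors):
    """Inverse-variance weighted mean of study effects and its standard error."""
    if not effects or len(effects) != len(std_errors):
        raise ValueError("effects and std_errors must be non-empty and of equal length")
    # Each study is weighted by the inverse of its variance (1 / SE^2),
    # so more precise studies contribute more to the pooled estimate.
    weights = [1.0 / (se ** 2) for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    return pooled, pooled_se


def confidence_interval(estimate, std_error, z=1.96):
    """Normal-approximation interval; z=1.96 corresponds to roughly 95%."""
    return estimate - z * std_error, estimate + z * std_error


# Hypothetical effect sizes and standard errors from three primary studies.
effects = [0.30, 0.10, 0.25]
std_errors = [0.10, 0.20, 0.10]

pooled, pooled_se = pool_fixed_effect(effects, std_errors)
low, high = confidence_interval(pooled, pooled_se)
print(f"Pooled effect: {pooled:.3f} (SE {pooled_se:.3f}), 95% CI [{low:.3f}, {high:.3f}]")
```

A fixed-effect model assumes that all studies estimate the same underlying effect; when studies are heterogeneous, a random-effects model would usually be preferred.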

4.2.3. Overall Features

- First, the experts were asked about their interest level in the availability of tools to support the SR process. In this case, the average level of interest is 4.18, indicating that the inclusion of this kind of tool is very relevant.

- Second, they were asked whether they have used SR tools previously. In this case, only one of the participants had used this type of tool and mentioned EPPI-Reviewer, CADIMA, Rayyan, SysRev and Colandr.

5. Results

- Provide a more exhaustive integration with other reference management systems, such as Zotero, JabRef, EndNote, and RefWorks.

- Extend the built-in search engines to include others, such as: JSTOR, Web of Science, ResearchGate, PubMed, EBSCO CINAHL, MathSciNet, Medline, Ovid, Sociological Abstracts, AGRIS, Agricola, Academic Search Premier, CAB Direct, Aquatic Sciences and Fisheries Abstracts, GreenFILE, PsycINFO, DOAJ, EconLit, ProQuest Dissertations and Theses, DART eTheses Portal, and EThOS.

- Implement the integration with guides to measure the quality of the methodology used in the primary studies, such as: PRISMA, ENTREQ or Cochrane Collaboration.

- Include computational support to partially automate some of the evidence data synthesis techniques, such as: grounded theory, content analysis, case survey, meta-ethnography, meta-study, narrative summary, qualitative comparative analysis methods, meta-synthesis, and thematic analysis.

- Provide the following features from the information management category: inter-rater reliability in the screening process; detection of future research lines, new questions and challenges; and the ability to set the sample size.
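Inter-rater reliability in screening is often summarized with an agreement statistic computed over the include/exclude decisions of two reviewers. The following is a minimal sketch using Cohen's kappa, which corrects the observed agreement for the agreement expected by chance. The choice of kappa is an assumption made for illustration (the source does not name a statistic), and the reviewer decisions are hypothetical.

```python
from collections import Counter


def cohens_kappa(ratings_a, ratings_b):
    """Cohen's kappa for two raters labelling the same items."""
    if not ratings_a or len(ratings_a) != len(ratings_b):
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(ratings_a)

    # Proportion of items on which both raters made the same decision.
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n

    # Agreement expected by chance, from each rater's label frequencies.
    counts_a = Counter(ratings_a)
    counts_b = Counter(ratings_b)
    labels = set(counts_a) | set(counts_b)
    expected = sum(counts_a[label] * counts_b[label] for label in labels) / (n * n)

    if expected == 1.0:
        # Both raters used one identical label throughout; treat as full agreement.
        return 1.0
    return (observed - expected) / (1.0 - expected)


# Hypothetical screening decisions of two reviewers on eight studies.
reviewer_a = ["include", "include", "exclude", "exclude",
              "include", "exclude", "exclude", "exclude"]
reviewer_b = ["include", "exclude", "exclude", "exclude",
              "include", "exclude", "include", "exclude"]

kappa = cohens_kappa(reviewer_a, reviewer_b)
print(f"Cohen's kappa: {kappa:.3f}")
```

In this hypothetical example the reviewers agree on six of eight studies (0.75), while chance agreement is 34/64, so kappa is noticeably lower than the raw agreement rate.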

6. Conclusions and Future Work

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| ES | Evidence Synthesis |

| SR | Systematic Review |

| RQ | Research Question |

| SMS | Systematic Mapping Study |

| SLR | Systematic Literature Review |

| OLAP | On-Line Analytical Processing |

Appendix A. Survey Questions

Appendix A.1. User Profile

- E-mail.

- Full name.

- Highest academic degree.

- Kind of research: academic research, industrial research, government research, etc.

- Academic discipline.

Appendix A.2. Systematic Review Experience

- Number of SRs performed.

- Number of SRs supervised.

- Level of expertise in conducting SRs (on a scale from 1 to 5).

Appendix A.3. Information Management

- Most frequently utilized reference management systems: EndNote, Mendeley, RefWorks, Zotero, CiteULike, JabRef, etc.

- Most frequently used search engines: Web of Science, Springer Link, Google Scholar, etc.

- Followed methodological guides: Cochrane Collaboration, Kitchenham’s guidelines, etc.

- Personal rating of significance for each feature collected in the Information management category of the framework (on a scale from non-relevant to essential).

Appendix A.4. Evidence Synthesis

- Type of study most frequently carried out or supervised: quantitative, qualitative, or both quantitative and qualitative.

- Techniques used for data synthesis: thematic analysis, meta-study or Bayesian meta-analysis, among others [8].

- Methods used to collect data from studies.

- Tools used to analyze studies’ data.

- Techniques used to assess the risk of bias.

- Representation techniques used to convey the results of the review study: flowcharts with the selection of studies process, tables/charts with percentages of studies analyzed or discarded according to the inclusion/exclusion criteria, tables/charts summarizing the synthesized data of the primary studies, etc.

- Opinion about the differentiating factors between a qualitative report and a quantitative one.

- Additional information commonly included in the final reports.

- Personal rating of significance for each feature collected in the Evidence synthesis category of the framework (on a scale from non-relevant to essential).

Appendix A.5. Systematic Review Tools

- Interest level in the availability of tools to support the SR process.

- Tools used during the SR process.

- Personal rating of significance for each feature collected in the Non-functional and Overall functionality categories of the framework (on a scale from non-relevant to essential).

References

1. Moed, H.F.; Glänzel, W.; Schmoch, U. Handbook of Quantitative Science and Technology Research; Springer: Dordrecht, The Netherlands, 2015.

2. Börner, K.; Chen, C.; Boyack, K.W. Visualizing knowledge domains. Annu. Rev. Inf. Sci. Technol. 2003, 37, 179–255.

3. Cobo, M.J.; López-Herrera, A.G.; Herrera-Viedma, E.; Herrera, F. Science mapping software tools: Review, analysis, and cooperative study among tools. J. Am. Soc. Inf. Sci. Technol. 2011, 62, 1382–1402.

4. Fortunato, S.; Bergstrom, C.T.; Börner, K.; Evans, J.A.; Helbing, D.; Milojević, S.; Petersen, A.M.; Radicchi, F.; Sinatra, R.; Uzzi, B.; et al. Science of science. Science 2018, 359, eaao0185.

5. GRADEpro. GRADEpro Guideline Development Tool [Software]. McMaster University (Developed by Evidence Prime, Inc.). Available online: gradepro.org (accessed on 22 May 2021).

6. Noblit, G.W.; Hare, R.D. Meta-Ethnography: Synthesizing Qualitative Studies; Sage: Newbury Park, London, UK, 1988; Volume 11.

7. Cooper, H.; Hedges, L.V.; Valentine, J.C. The Handbook of Research Synthesis and Meta-Analysis; Russell Sage Foundation: New York, NY, USA, 2009.

8. Dixon-Woods, M.; Agarwal, S.; Jones, D.; Young, B.; Sutton, A. Synthesising qualitative and quantitative evidence: A review of possible methods. J. Health Serv. Res. Policy 2005, 10, 45–53.

9. Cruzes, D.S.; Dybå, T. Synthesizing evidence in software engineering research. In Proceedings of the 2010 ACM-IEEE International Symposium on Empirical Software Engineering and Measurement, ACM, Bolzano, Italy, 16–17 September 2010; p. 1.

10. Cochrane Collaboration. The Cochrane Reviewers’ Handbook Glossary; Cochrane Collaboration, 2001; Available online: https://books.google.es/books?id=xSUzHAAACAAJ (accessed on 22 May 2021).

11. Kitchenham, B.A.; Budgen, D.; Brereton, P. Evidence-Based Software Engineering and Systematic Reviews; CRC Press: Boca Raton, FL, USA, 2015; Volume 4.

12. Daniels, K.; Langlois, É.V. Applying Synthesis Methods in Health Policy and Systems Research. In Evidence Synthesis for Health Policy and Systems: A Methods Guide; World Health Organization: Geneva, Switzerland, 2018; Chapter 3; pp. 26–39.

13. Haddaway, N.R.; Bilotta, G.S. Systematic reviews: Separating fact from fiction. Environ. Int. 2016, 92–93, 578–584.

14. Kitchenham, B.; Charters, S. Guidelines for performing Systematic Literature Reviews in Software Engineering; Technical Report EBSE 2007-001, Keele University and Durham University Joint Report; 2007; Available online: https://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.117.471&rep=rep1&type=pdf (accessed on 22 May 2021).

15. Petersen, K.; Vakkalanka, S.; Kuzniarz, L. Guidelines for conducting systematic mapping studies in software engineering: An update. Inf. Softw. Technol. 2015, 64, 1–18.

16. Oates, B.J. Researching Information Systems and Computing; Sage: London, UK, 2005.

17. Ruiz-Rube, I.; Person, T.; Mota, J.M.; Dodero, J.M.; González-Toro, Á.R. Evidence-Based Systematic Literature Reviews in the Cloud. In Intelligent Data Engineering and Automated Learning—IDEAL 2018; Yin, H., Camacho, D., Novais, P., Tallón-Ballesteros, A.J., Eds.; Springer: Berlin/Heidelberg, Germany, 2018; pp. 105–112.

18. Kohl, C.; McIntosh, E.J.; Unger, S.; Haddaway, N.R.; Kecke, S.; Schiemann, J.; Wilhelm, R. Online tools supporting the conduct and reporting of systematic reviews and systematic maps: A case study on CADIMA and review of existing tools. Environ. Evid. 2018, 7, 8.

19. Hassler, E.; Carver, J.C.; Hale, D.; Al-Zubidy, A. Identification of SLR tool needs–results of a community workshop. Inf. Softw. Technol. 2016, 70, 122–129.

20. Manterola, C.; Astudillo, P.; Arias, E.; Claros, N.; Mincir, G. Revisiones sistemáticas de la literatura. Qué se debe saber acerca de ellas. Cirugía Española 2013, 91, 149–155.

21. Cheng, S.; Augustin, C.; Bethel, A.; Gill, D.; Anzaroot, S.; Brun, J.; DeWilde, B.; Minnich, R.; Garside, R.; Masuda, Y.; et al. Using machine learning to advance synthesis and use of conservation and environmental evidence. Conserv. Biol. 2018, 32, 762–764.

22. Tan, M.C. Colandr. J. Can. Health Libr. Assoc. De L’Association Des Bibliothèques De La Santé Du Can. 2018, 39, 85–88.

23. Evidence Partners. DistillerSR [Computer Program]; Evidence Partners: Ottawa, ON, Canada, 2011.

24. Thomas, J.; Brunton, J.; Graziosi, S. EPPI-Reviewer 4.0: Software for Research Synthesis. EPPI-Centre Software. London: Social Science Research Unit. Inst. Educ. Univ. Lond. 2010. Available online: https://eppi.ioe.ac.uk/cms/Default.aspx?tabid=1913 (accessed on 2 May 2021).

25. Lajeunesse, M.J. Facilitating systematic reviews, data extraction and meta-analysis with the metagear package for R. Methods Ecol. Evol. 2016, 7, 323–330.

26. Ouzzani, M.; Hammady, H.; Fedorowicz, Z.; Elmagarmid, A. Rayyan—A web and mobile app for systematic reviews. Syst. Rev. 2016, 5, 210.

27. ESEG. REviewER. 2013. Available online: https://sites.google.com/site/eseportal/tools/reviewer (accessed on 22 May 2021).

28. Molléri, J.S.; Benitti, F.B.V.; da Rocha Fernandes, A.M. Aplicação de Conceitos de Inteligência Artificial na Condução de Revisões Sistemáticas: Uma Resenha Crítica. An. Sulcomp 2013. Available online: http://periodicos.unesc.net/index.php/sulcomp/article/viewFile/1028/971 (accessed on 22 May 2021).

29. Fernández-Sáez, A.M.; Bocco, M.G.; Romero, F.P. SLR-Tool: A Tool for Performing Systematic Literature Reviews. ICSOFT 2010, 2, 157–166.

30. González Toro, N.R.; SPI&FM, T. CloudSERA’s Tool. 2019. Available online: http://slr.uca.es/ (accessed on 22 May 2021).

31. González Toro, N.R.; SPI&FM, T. CloudSERA’s GitHub Repository. 2019. Available online: https://github.com/spi-fm/CloudSERA (accessed on 22 May 2021).

32. Hevner, A.R.; March, S.T.; Park, J.; Ram, S. Design science in information systems research. MIS Q. 2004, 28, 75–105.

33. Nielsen, J. Ten Usability Heuristics, 2005. Available online: https://pdfs.semanticscholar.org/5f03/b251093aee730ab9772db2e1a8a7eb8522cb.pdf (accessed on 22 May 2021).

34. Person, T.; Ruiz-Rube, I.; Mota, J.M.; Cobo, M.J.; Tselykh, A.; Dodero, J.M. Expert Survey Form. 2019. Available online: http://bit.ly/clousera-experts-survey (accessed on 22 May 2021).

35. Person, T.; Ruiz-Rube, I.; Mota, J.M.; Cobo, M.J.; Tselykh, A.; Dodero, J.M. Consent Revocation Form for Data Collection and Analysis for Participants in the Expert Survey. 2019. Available online: http://bit.ly/clousera-revocate-form (accessed on 22 May 2021).

36. Person, T.; Ruiz-Rube, I.; Mota, J.M.; Cobo, M.J.; Tselykh, A.; Dodero, J.M. Spreadsheet with the Data Collected and Analyzed in the Survey. Available online: http://bit.ly/cloudsera-data (accessed on 22 May 2021).

37. Moher, D.; Liberati, A.; Tetzlaff, J.; Altman, D.G.; PRISMA Group. Preferred reporting items for systematic reviews and meta-analyses: The PRISMA statement. PLoS Med. 2009, 6, e1000097.

38. Tong, A.; Flemming, K.; McInnes, E.; Oliver, S.; Craig, J. Enhancing transparency in reporting the synthesis of qualitative research: ENTREQ. BMC Med. Res. Methodol. 2012, 12, 181.

39. Godlee, F. The Cochrane collaboration. Br. Med. J. 1994, 309, 969.

| Feature | Description |
|---|---|
| Non-functional | |
| F1. Cloud | Accessible online through a web browser |
| F2. Open source & Free | Availability of the source code. Free of charge |
| F3. Updated | Tool maintenance carried out less than a year ago |
| F4. Not focused | Not focused on any particular academic discipline |
| F5. User guides | User and installation guides, tutorials, and any other resources that help users work with the tool easily |
| Overall functionality | |
| F6. Collaboration | Collaboration with other users to perform the SR tasks |
| F7. User role management | Creation and management of user roles and permissions in an SR project |
| F8. Data maintenance | Data maintenance and preservation functions to access past research questions, protocols, studies, data, metadata, bibliographic data and reports |
| F9. Traceability | Forward and backward traceability to link goals, actions, change history and results for accountability, standardization, verification and validation |
| Information management | |
| F10. Research question | Ability to define research questions and related problems |
| F11. Scope | Ability to define the scope of the study |
| F12. Integrated search | Ability to search multiple databases without having to perform separate searches |
| F13. Duplicate deletion | Automatic deletion of duplicate studies |
| F14. Study selection | Selection of primary studies using inclusion/exclusion criteria |
| F15. Quality assessment | Evaluation of primary studies using quality assessment criteria |
| Evidence synthesis | |
| F16. Study type | Definition of the type of study based on the qualitative or quantitative analysis to apply |
| F17. Synthesis method | Selection of the synthesis method to apply (according to the study type selected) |
| F18. Study variables | Definition of the variables to measure and their types |
| F19. Tag and data extraction | Automatic extraction of data and tags from studies (for quantitative studies) |
| F20. Risk of bias and critical appraisal | Assessment of risk of bias and critical appraisal of individual studies |
| F21. Summarizing | Summarizing results from the studies' data |
| F22. Reporting | Reports adapted to the analysis type (the most suitable graph types and statistical results in each case) |

| Non-Functional | Overall Functionality | Information Management | Evidence Synthesis | |||||||||||||||||||

| F1 | F2 | F3 | F4 | F5 | F6 | F7 | F8 | F9 | F10 | F11 | F12 | F13 | F14 | F15 | F16 | F17 | F18 | F19 | F20 | F21 | F22 | |

| CADIMA | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ||||

| Colandr | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ||||||

| DistillerSR | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | |||||||||

| EPPI-Reviewer | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ||||||||||

| METAGEAR R | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | |||||||||||||

| Rayyan | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | |||||||||||||

| ReviewER | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ||||||||||||||||

| SESRA | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | |||||||

| SLR-Tool | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ||||||||||

| CloudSERA | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ||||||||

| Tool | Non-Functional (%) | Overall Functionality (%) | Information Management (%) | Evidence Synthesis (%) |
|---|---|---|---|---|
| CADIMA | 100 | 100 | 83.3 | 57.1 |
| Colandr | 100 | 100 | 50 | 57.1 |
| DistillerSR | 80 | 50 | 50 | 57.1 |
| EPPI-Reviewer 4 | 60 | 75 | 33.3 | 57.1 |
| METAGEAR R | 80 | 50 | 0 | 42.8 |
| Rayyan | 100 | 75 | 33.3 | 0 |
| ReviewER | 40 | 50 | 33.3 | 0 |
| SESRA | 100 | 100 | 50 | 42.8 |
| SLR-Tool | 40 | 50 | 66.6 | 57.1 |
| CloudSERA | 80 | 75 | 100 | 14.28 |
| Average | 78 | 72.5 | 49.98 | 38.54 |

| Dimension | Information Management | Evidence Synthesis | Overall Functionality |
|---|---|---|---|
| Academic discipline | | | |
| Humanities | 3.58 | 3.36 | 3.17 |
| Applied sciences | 4.75 | 4.75 | 4.58 |
| Formal sciences | 3.5 | 2.82 | 2.91 |
| Social sciences | 4.28 | 4.31 | 3.5 |
| Natural sciences | 4.58 | 4.32 | 4.17 |
| Expertise level | | | |
| Medium | 3.22 | 4 | 3.94 |
| High | 3.81 | 4.21 | 3.88 |
| Complete sample | 3.65 | 4.15 | 3.9 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Person, T.; Ruiz-Rube, I.; Mota, J.M.; Cobo, M.J.; Tselykh, A.; Dodero, J.M. A Comprehensive Framework to Reinforce Evidence Synthesis Features in Cloud-Based Systematic Review Tools. Appl. Sci. 2021, 11, 5527. https://doi.org/10.3390/app11125527
