Fingerprint Classification through Standard and Weighted Extreme Learning Machines

Abstract

1. Introduction

- (i)

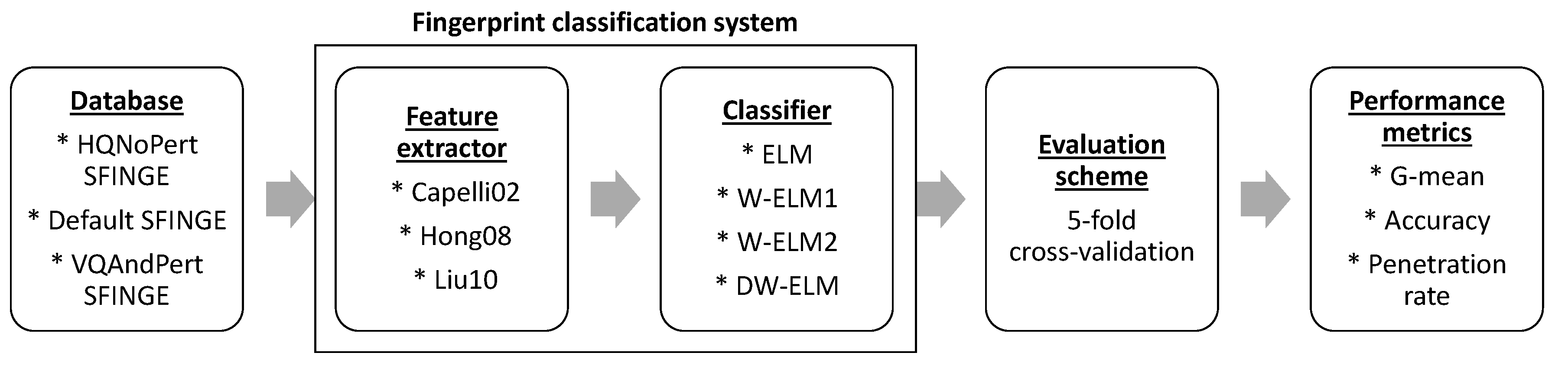

- As fingerprint classification system, we propose an ELM model based on feature descriptors with the highest performance for fingerprint identification. The introduction of the ELM algorithm is due to its training stage consumes short time, which allows to increase the identification in large fingerprint databases.

- (ii) In the weighted ELM, the original and decay weighting schemes are developed to improve the classification capability of the classifier by accounting for complex data distributions, such as those of the fingerprint classes.

- (iii) The hyper-parameters of the ELMs (regularization and decay parameters, and the number of hidden nodes) are numerically optimized in terms of the geometric mean, since this metric normalizes the classification accuracy of each class.

- (iv) The combination of the Hong08 feature extractor and the weighted ELM that uses the golden ratio in the weighting matrix is superior to the other combinations of feature extractors and ELMs, and almost matches the CNN-based methods in terms of accuracy and penetration rate. Moreover, our approach has the benefit of a fast learning speed on any commercial computer.
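The geometric mean mentioned in contribution (iii) can be illustrated with a short sketch. It assumes that the G-mean is the geometric mean of the per-class recalls, which is the usual definition for imbalanced problems; the function names and the toy labels are hypothetical and are not taken from the paper.

```python
import numpy as np

def per_class_recall(y_true, y_pred, n_classes):
    """Fraction of correctly classified samples for each class."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    recalls = np.zeros(n_classes)
    for k in range(n_classes):
        mask = y_true == k
        # Assumption: a class with no samples contributes a recall of 0.
        recalls[k] = np.mean(y_pred[mask] == k) if mask.any() else 0.0
    return recalls

def g_mean(y_true, y_pred, n_classes):
    """Geometric mean of the per-class recalls."""
    recalls = per_class_recall(y_true, y_pred, n_classes)
    return float(np.prod(recalls) ** (1.0 / n_classes))

# Toy example with three classes of unequal size (illustrative data only).
y_true = [0, 0, 0, 0, 1, 1, 2, 2]
y_pred = [0, 0, 0, 1, 1, 1, 2, 0]
recalls = per_class_recall(y_true, y_pred, 3)
score = g_mean(y_true, y_pred, 3)
accuracy = float(np.mean(np.asarray(y_true) == np.asarray(y_pred)))
print(recalls, score, accuracy)
```

Because every class enters the product with the same exponent, a poorly recognized minority class lowers the G-mean even when the overall accuracy stays high, which is the reason such a metric is a natural target for hyper-parameter tuning.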

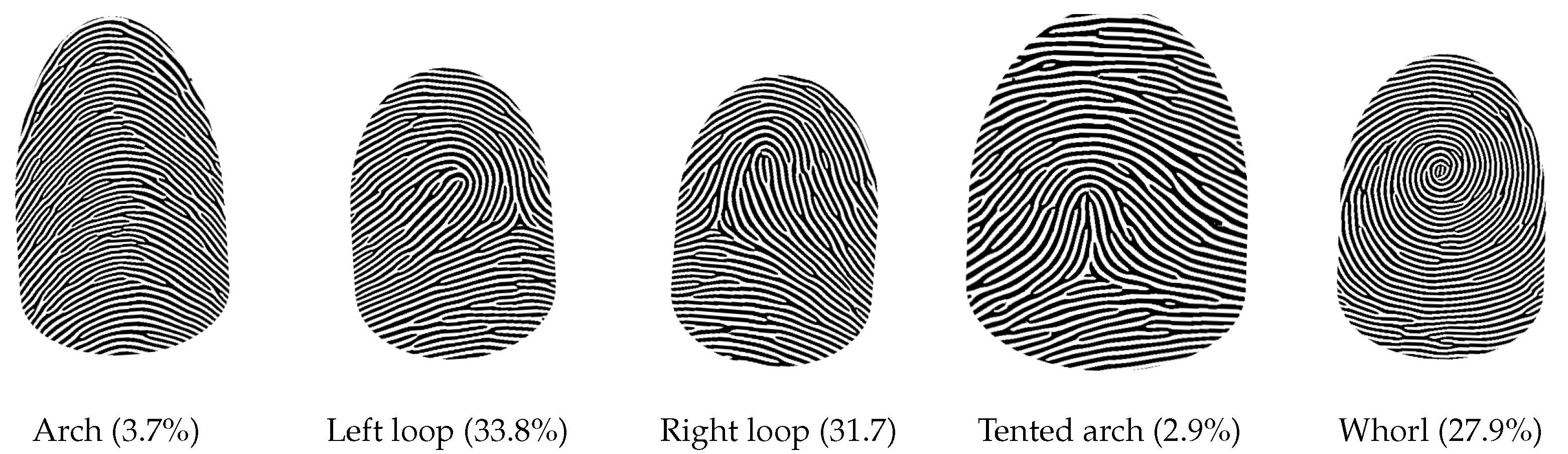

2. Related Works

- (i) Via feature extractors that capture the most important characteristics of the fingerprint image while considerably reducing its original size. In this context, the feature extractors with the best reported results in the literature [3,5] are Capelli02 [22], Hong08 [23], and Liu10 [24], which are based on global-level characteristics of the image: orientation maps, ridge structure, and singular points, respectively. Afterward, the classification problem is solved through a supervised learning technique, e.g., support vector machines or gradient-based artificial neural networks.

- (ii) By employing only a CNN directly on the input images, so that the feature extractors are discarded. In practice, CNNs are complex networks that combine different types of layers (convolutional, pooling, and fully connected) with diverse activation functions (e.g., rectified linear unit (RELU), softmax, RELU plus dropout). In addition, a CNN can be accompanied by a Bayesian framework. However, CNN-based approaches require a very time-consuming training process with millions of parameters to be optimized.

3. Background

3.1. Feature Extractors

- (i) Capelli02 [22] is based on the orientation map of the fingerprint. The approach registers the core point by using the Poincaré method [45]. Then, the fingerprint is represented by a vector of five positions, which is computed by applying a set of dynamic masks directly derived from each class. The feature vector also stores the orientations.

- (ii)

- (iii) Liu10 [24] represents the fingerprint by building a feature vector based on the relative measures among the singular points. Singular points are detected by computing complex filter responses at multiple scales [47]. Thus, the feature vector consists of the relative position, direction, and certainties of each singular point for each scale.
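The Poincaré method mentioned for Capelli02 in item (i) can be sketched as follows. This is a generic textbook formulation of the Poincaré index on an orientation map, not necessarily the exact procedure of [45] or [22]; the 8-neighbour path, the function name, and the synthetic orientation fields are assumptions made for illustration.

```python
import numpy as np

# 8-neighbourhood of a pixel, visited as a closed path (row, column offsets).
RING = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]

def poincare_index(theta, i, j):
    """Poincare index at pixel (i, j) of an orientation map theta (radians in [0, pi))."""
    angles = [theta[i + di, j + dj] for di, dj in RING]
    total = 0.0
    for k in range(len(RING)):
        d = angles[(k + 1) % len(RING)] - angles[k]
        # Ridge orientations are defined modulo pi, so wrap each step into (-pi/2, pi/2].
        if d > np.pi / 2:
            d -= np.pi
        elif d <= -np.pi / 2:
            d += np.pi
        total += d
    return total / (2 * np.pi)

# Synthetic 3 x 3 orientation fields centred on the pixel under test (illustrative only).
rows, cols = np.mgrid[-1:2, -1:2]
phi = np.arctan2(rows, cols)
core_like = np.mod(0.5 * phi, np.pi)    # orientation turns by +pi along the path
delta_like = np.mod(-0.5 * phi, np.pi)  # orientation turns by -pi along the path
flat = np.full((3, 3), 0.3)             # constant orientation, no singularity

print(poincare_index(core_like, 1, 1),
      poincare_index(delta_like, 1, 1),
      poincare_index(flat, 1, 1))
```

Under this convention, an index close to +1/2 is usually read as a core and an index close to -1/2 as a delta; the sign depends on the traversal direction and the image axes, so it should be checked against the convention of the implementation at hand.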

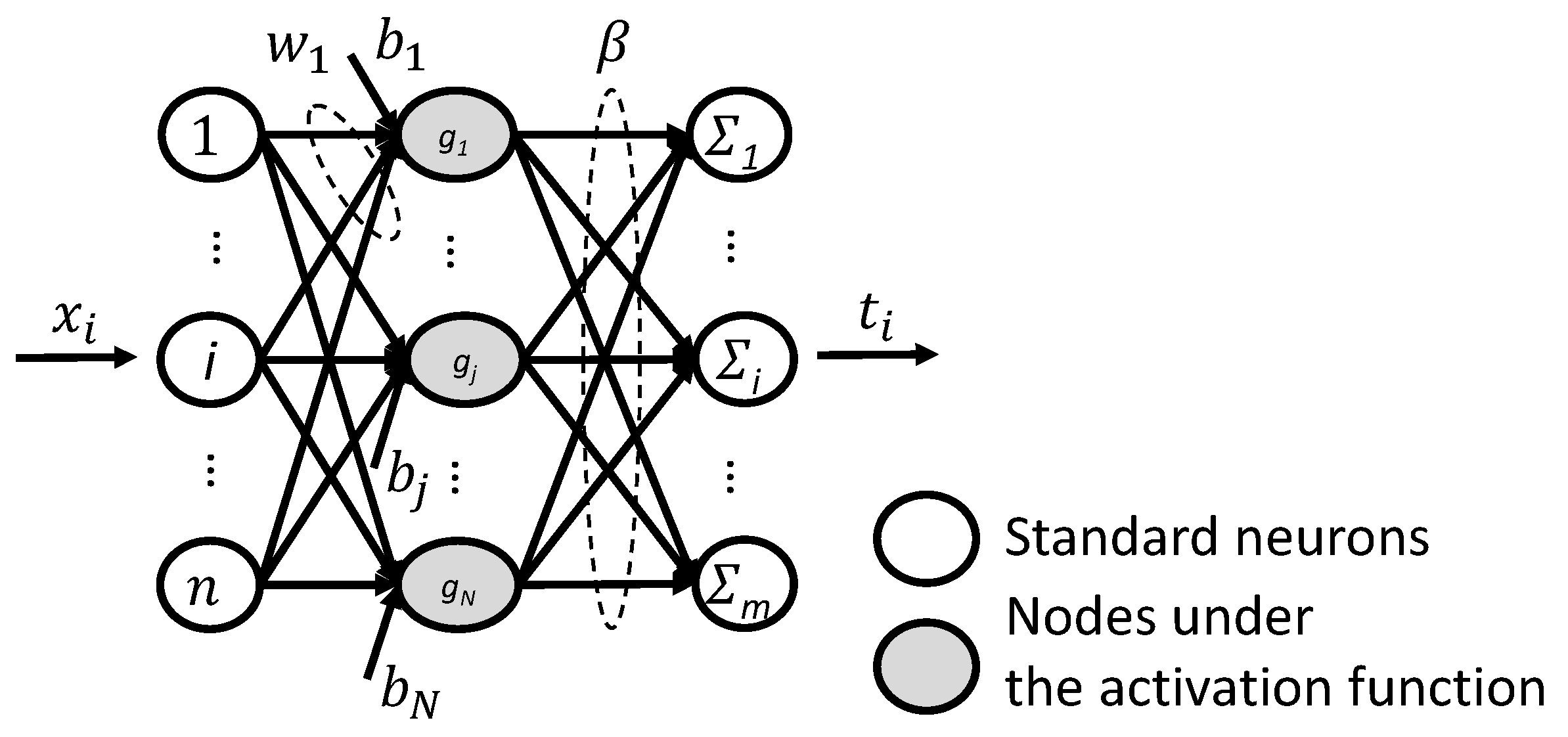

3.2. Extreme Learning Machines

Algorithm 1. ELM learning procedure.

Given the training set, the activation function, the regularization parameter C, and the number of hidden neurons N.
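Since only the inputs of Algorithm 1 are listed here, the following is a minimal sketch of a regularized ELM under stated assumptions, not the authors' implementation. It assumes the common closed-form solution of [17], the class weighting schemes W1 and W2 of the weighted ELM [10] (with the golden-ratio factor 0.618 applied to classes larger than the average), a sigmoid activation, and targets coded as -1/+1. All function names and the toy data are hypothetical, and the decay weighting of DW-ELM is not covered.

```python
import numpy as np

def class_weights(y, scheme="W1"):
    """Per-sample weights; assumes integer labels 0..K-1, all present in y."""
    y = np.asarray(y)
    counts = np.bincount(y)          # number of samples per class
    w = 1.0 / counts[y]              # W1: inverse of the class size
    if scheme == "W2":
        # W2: classes larger than the average size get the extra factor 0.618.
        w = np.where(counts[y] > counts.mean(), 0.618 * w, w)
    return w

def hidden_output(X, A, b):
    """Sigmoid hidden layer (the activation function is an assumption)."""
    return 1.0 / (1.0 + np.exp(-(X @ A + b)))

def train_elm(X, y, n_hidden, C, weights=None, seed=0):
    """Random hidden layer plus ridge-regularized least-squares output weights."""
    rng = np.random.default_rng(seed)
    y = np.asarray(y)
    n_samples, n_classes = len(y), int(y.max()) + 1
    T = -np.ones((n_samples, n_classes))
    T[np.arange(n_samples), y] = 1.0                 # one target column per class
    A = rng.uniform(-1.0, 1.0, (X.shape[1], n_hidden))  # random input weights
    b = rng.uniform(-1.0, 1.0, n_hidden)                # random biases
    H = hidden_output(X, A, b)
    w = np.ones(n_samples) if weights is None else np.asarray(weights)
    HtW = H.T * w                                    # H^T W for a diagonal W
    beta = np.linalg.solve(np.eye(n_hidden) / C + HtW @ H, HtW @ T)
    return A, b, beta

def predict_elm(X, model):
    A, b, beta = model
    return np.argmax(hidden_output(X, A, b) @ beta, axis=1)

# Toy imbalanced problem: 200 samples of class 0 and 20 of class 1.
rng = np.random.default_rng(1)
X = np.vstack([rng.normal(0.0, 1.0, (200, 2)), rng.normal(3.0, 1.0, (20, 2))])
y = np.array([0] * 200 + [1] * 20)
w2 = class_weights(y, "W2")
model = train_elm(X, y, n_hidden=50, C=2.0 ** 5, weights=w2)
pred = predict_elm(X, model)
print("training accuracy:", np.mean(pred == y))
```

Passing `weights=None` gives the standard regularized ELM, so the same routine covers both the unweighted and the weighted variants; only the hidden-layer size, C, and the weighting scheme change between them.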

4. Methodology

4.1. Fingerprint Database

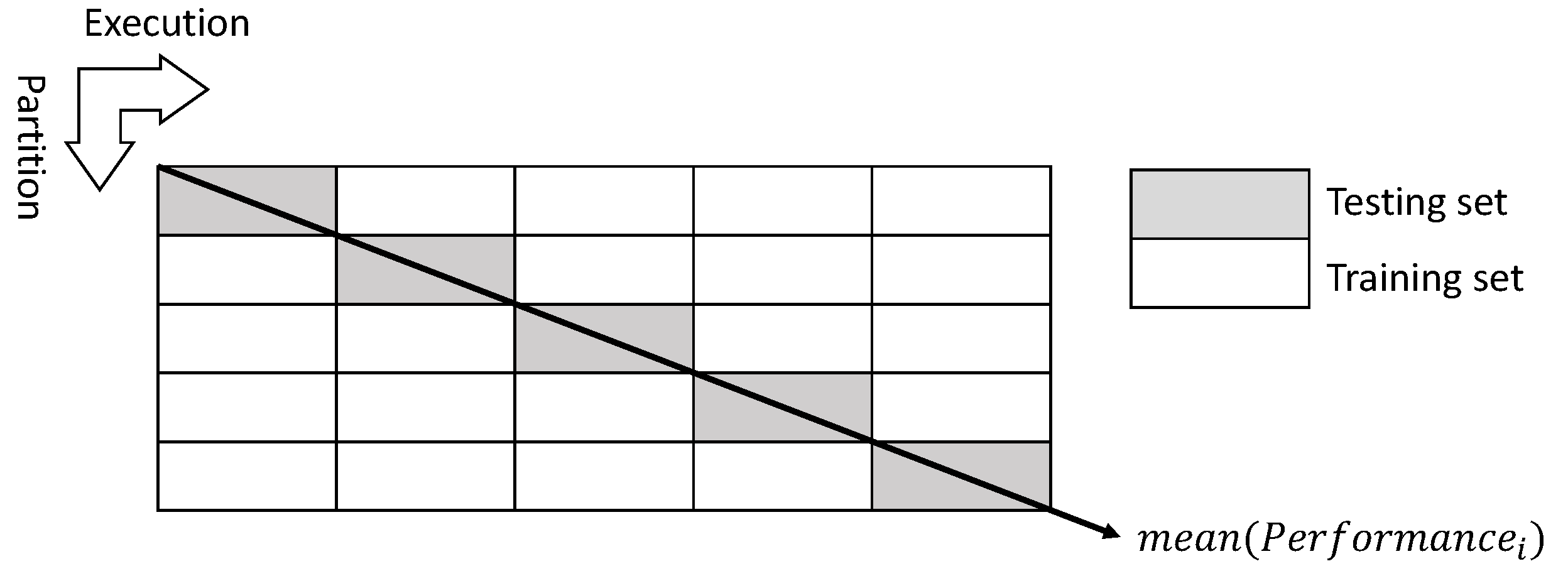

4.2. Results Evaluation by the Five-Fold Cross-Validation Scheme

4.3. Performance Metrics

5. Results and Discussion

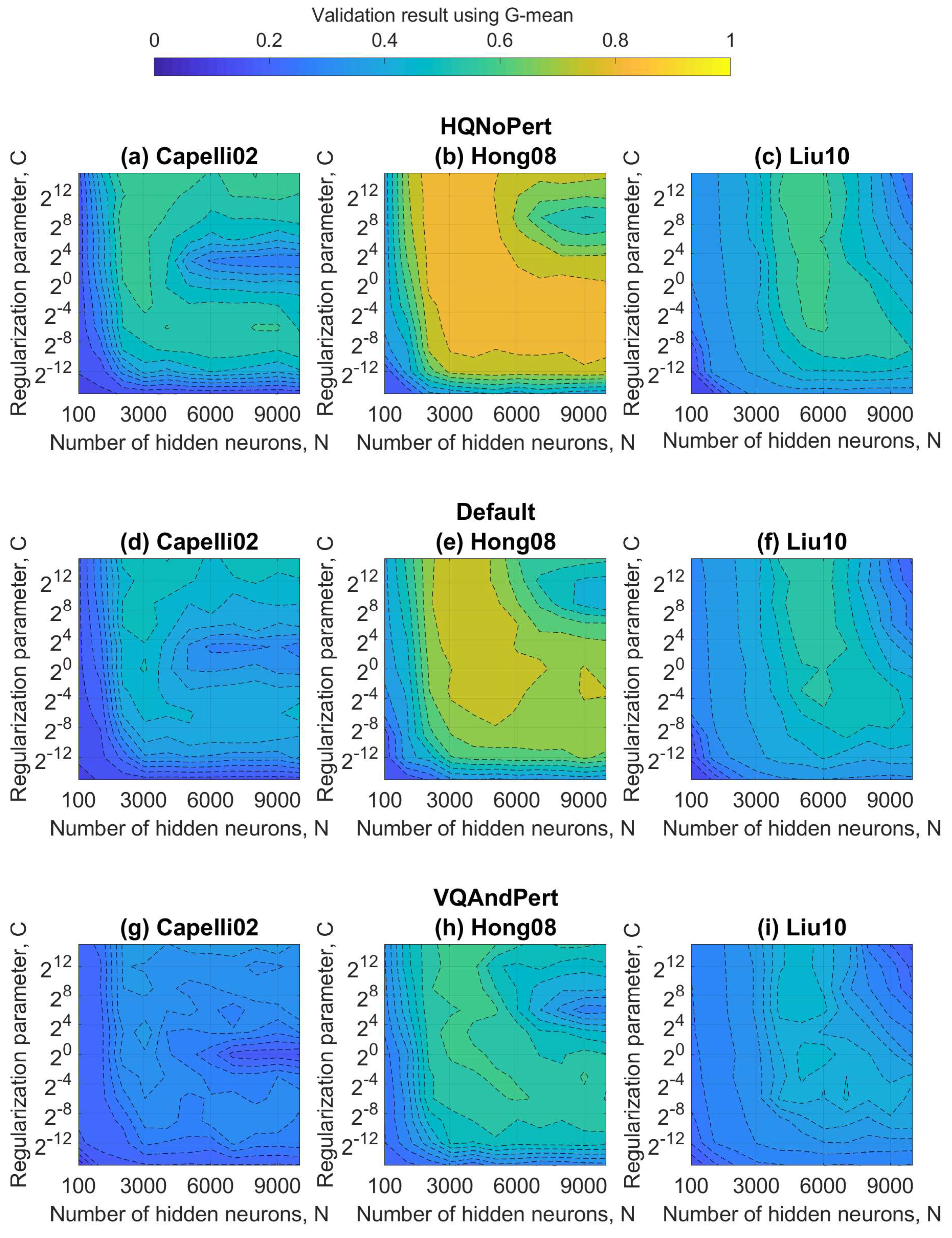

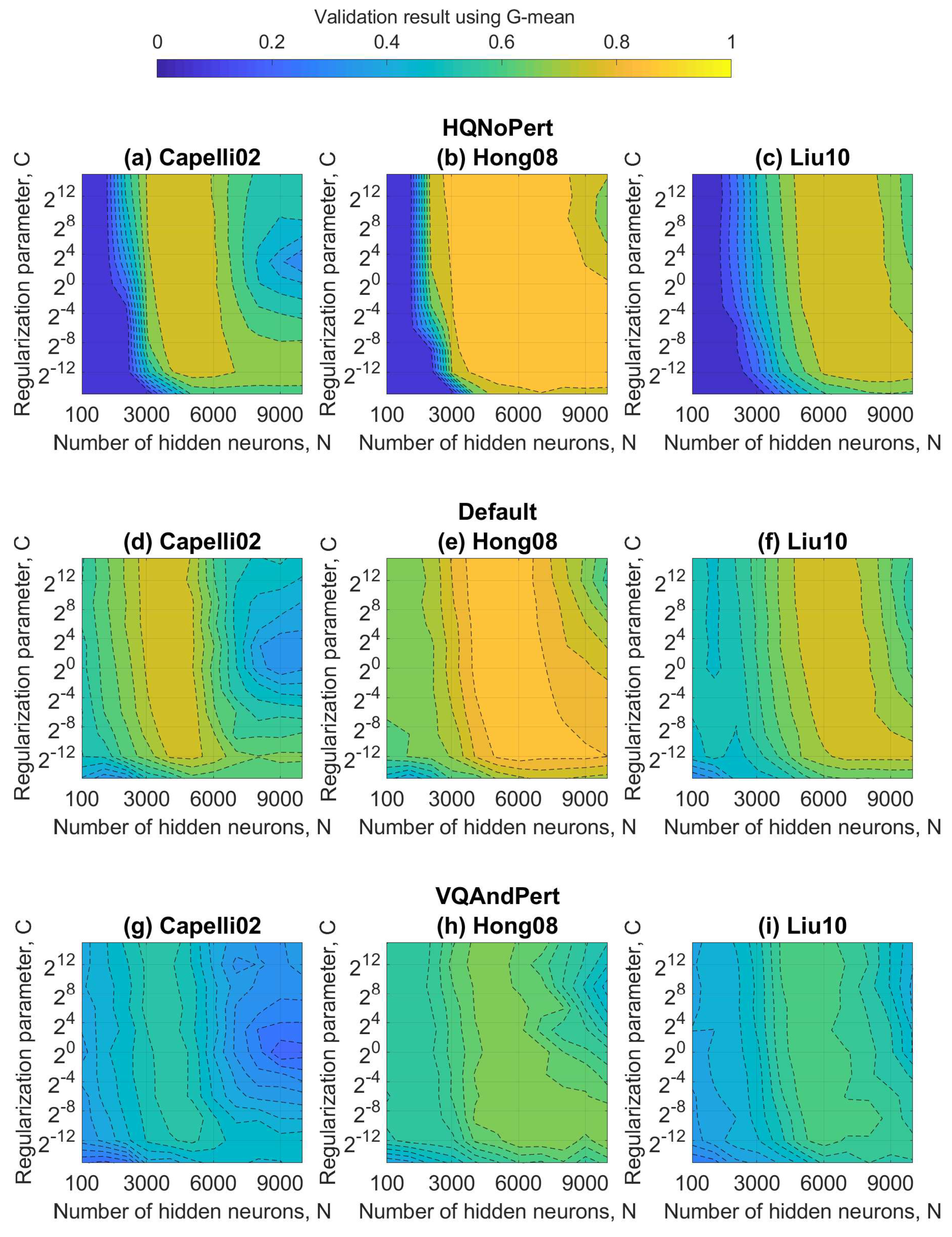

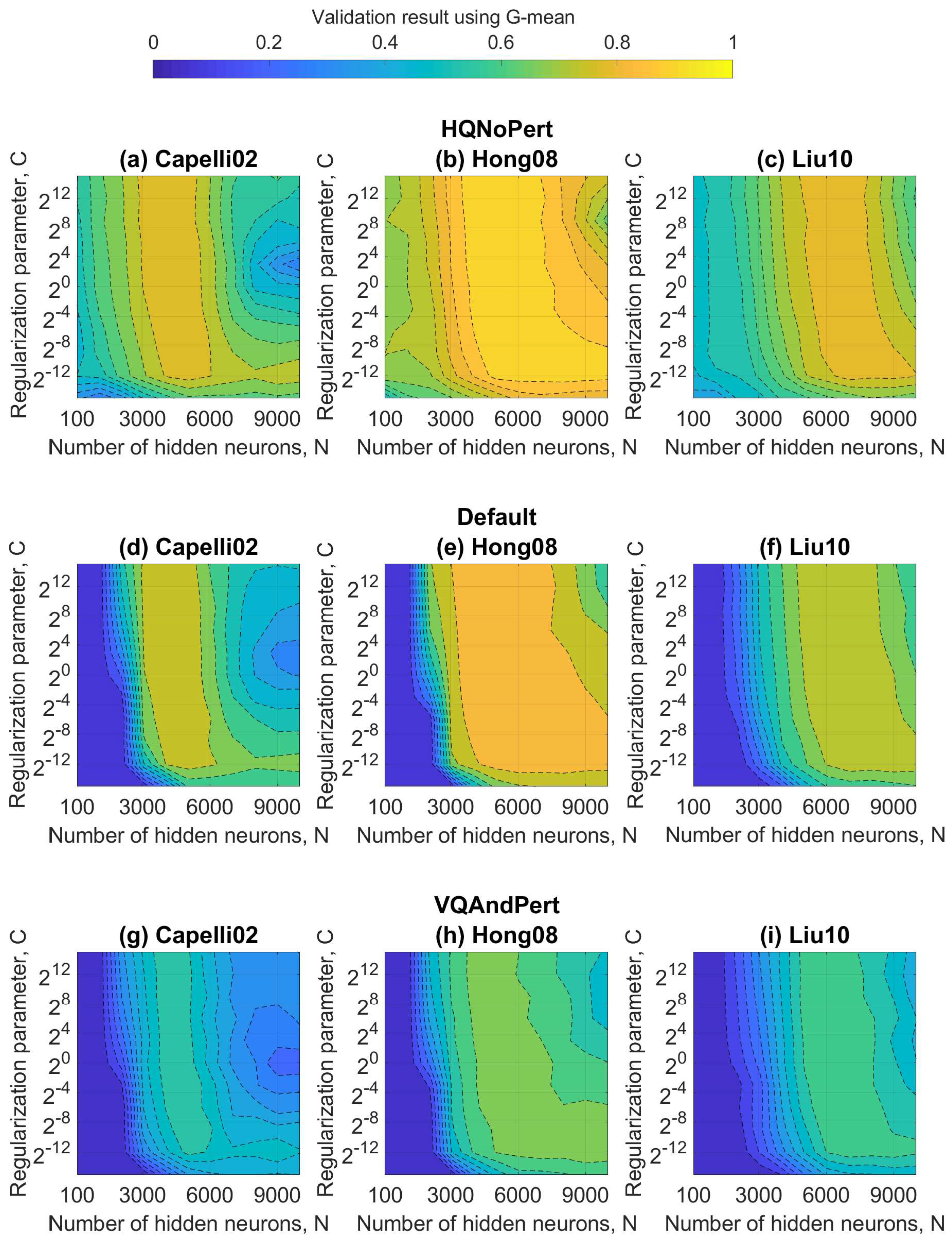

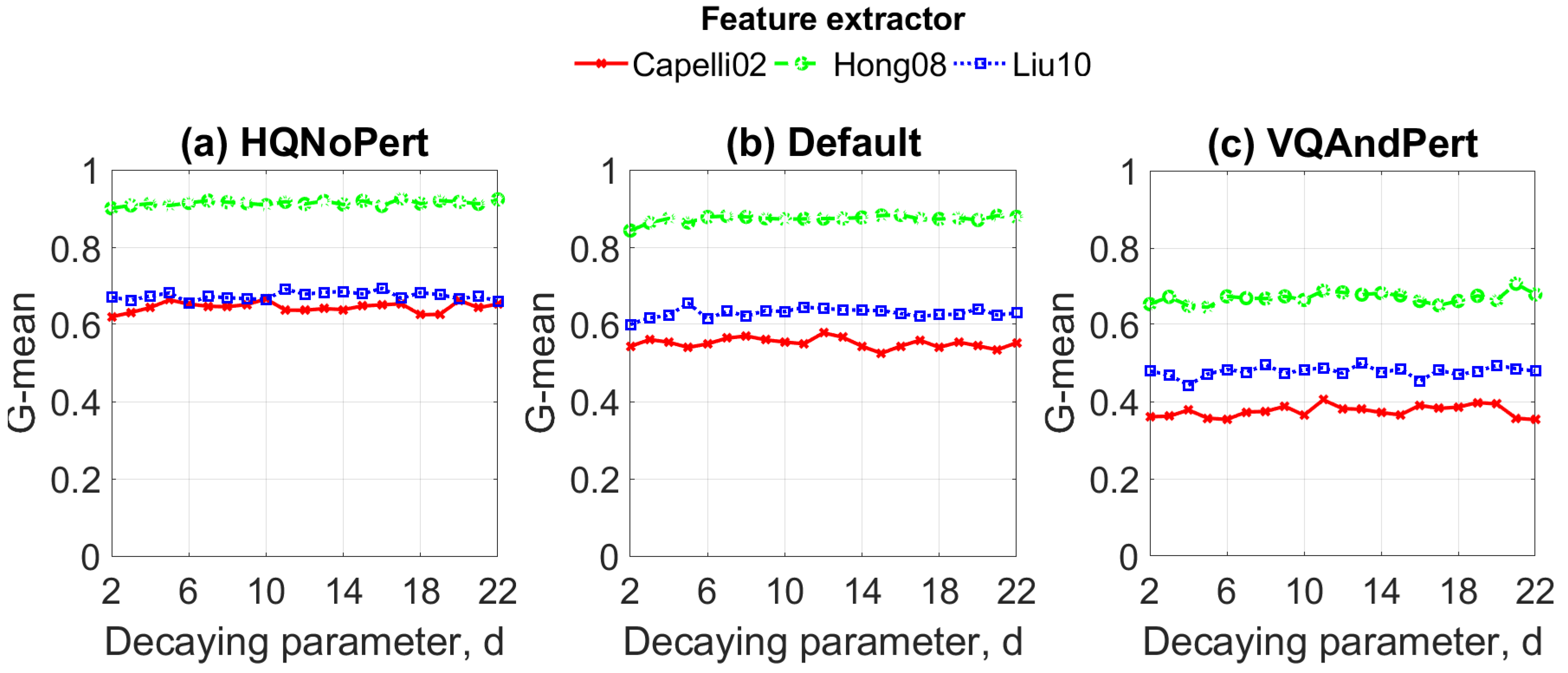

5.1. Estimation of Optimal Hyper-Parameters of the ELMs

5.2. Evaluation and Comparison by Using Classical Metrics: Accuracy and Penetration Rate

5.3. Complexity Analysis

6. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| CNN | Convolutional neural network |
| ELM | Extreme learning machine |
| SLFN | Single hidden layer feedforward neural network |
| NIST | National Institute of Standards and Technology |
| FVC | Fingerprint verification competition |
| OM | Orientation map |
| SFINGE | Synthetic fingerprint generator |
| RELU | Rectified linear unit |
| W-ELM1 | Weighted ELM1 |
| W-ELM2 | Weighted ELM2 |
| DW-ELM | Decay-weighted ELM |
| G-mean | Geometric mean |
| Acc | Accuracy |
| PR | Penetration rate |

References

- Tehseen, Z.; Mubeen, G.; Syed, A.T.; Imtiaz, A.T. Robust fingerprint classification with Bayesian convolutional networks. IET Image Process. 2019, 13, 1280–1288.

- Galar, M.; Derrac, J.; Peralta, D.; Triguero, I.; Paternain, D.; Lopez-Molina, C.; García, S.; Benítez, J.M.; Pagola, M.; Barrenechea, E.; et al. A survey of fingerprint classification Part I: Taxonomies on feature extraction methods and learning models. Knowl.-Based Syst. 2015, 81, 76–97.

- Peralta, D.; Triguero, I.; Garcia, S.; Saeys, Y.; Benitez, J.M.; Herrera, F. On the use of convolutional neural networks for robust classification of multiple fingerprint captures. Int. J. Intell. Syst. 2018, 33, 213–230.

- Shrein, J.M. Fingerprint classification using convolutional neural networks and ridge orientation images. In Proceedings of the IEEE Symposium Series on Computational Intelligence (SSCI), Honolulu, HI, USA, 27 November–1 December 2017; pp. 1–8.

- Galar, M.; Derrac, J.; Peralta, D.; Triguero, I.; Paternain, D.; Lopez-Molina, C.; Garcia, S.; Benitez, J.M.; Pagola, M.; Barrenechea, E.; et al. A survey of fingerprint classification Part II: Experimental analysis and ensemble proposal. Knowl.-Based Syst. 2015, 81, 98–116.

- Henry, E.R. Classification and Uses of Finger Prints; HM Stationery Office: London, UK, 1905.

- Ding, S.; Zhao, H.; Zhang, Y.; Xu, X.; Nie, R. Extreme learning machine: Algorithm, theory and applications. Artif. Intell. Rev. 2015, 44, 103–115.

- Koziarski, M. Radial-based undersampling for imbalanced data classification. Pattern Recognit. 2020, 102, 107262.

- Han, W.; Huang, Z.; Li, S.L.; Jia, Y. Distribution-sensitive unbalanced data oversampling method for medical diagnosis. J. Med. Syst. 2019, 43, 39.

- Zong, W.; Huang, G.B.; Chen, Y. Weighted extreme learning machine for imbalance learning. Neurocomputing 2013, 101, 229–242.

- Guo, J.M.; Liu, Y.F.; Chang, J.Y.; Lee, J.D. Fingerprint classification based on decision tree from singular points and orientation field. Expert Syst. Appl. 2014, 41, 752–764.

- Peralta, D.; Triguero, I.; García, S.; Saeys, Y.; Benitez, J.M.; Herrera, F. Distributed incremental fingerprint identification with reduced database penetration rate using a hierarchical classification based on feature fusion and selection. Knowl.-Based Syst. 2017, 126, 91–103.

- Michelsanti, D.; Ene, A.D.; Guichi, Y.; Stef, R.; Nasrollahi, K.; Moeslund, T.B. Fast fingerprint classification with deep neural networks. In Proceedings of the 12th International Joint Conference on Computer Vision, Imaging and Computer Graphics Theory and Applications (VISIGRAPP 2017), Porto, Portugal, 27 February–1 March 2017; pp. 202–209.

- Ge, S.; Bai, C.; Liu, Y.; Liu, Y.; Zhao, T. Deep and discriminative feature learning for fingerprint classification. In Proceedings of the 3rd IEEE International Conference on Computer and Communications (ICCC), Chengdu, China, 13–16 December 2017; pp. 1942–1946.

- Huang, G.; Huang, G.B.; Song, S.; You, K. Trends in extreme learning machines: A review. Neural Netw. 2015, 61, 32–48.

- Zabala-Blanco, D.; Mora, M.; Azurdia-Meza, C.A.; Dehghan Firoozabadi, A. Extreme learning machines to combat phase noise in RoF-OFDM schemes. Electronics 2019, 8, 921.

- Huang, G.B.; Zhu, Q.Y.; Siew, C.K. Extreme learning machine: Theory and applications. Neurocomputing 2006, 70, 489–501.

- Huang, G.; Song, S.; Gupta, J.N.D.; Wu, C. Semi-supervised and unsupervised extreme learning machines. IEEE Trans. Cybern. 2014, 44, 2405–2417.

- Zhang, K.; Luo, M. Outlier-robust extreme learning machine for regression problems. Neurocomputing 2015, 151, 1519–1527.

- Shen, Q.; Ban, X.; Liu, R.; Wang, Y. Decay-weighted extreme learning machine for balance and optimization learning. Mach. Vis. Appl. 2017, 28, 743–753.

- Saeed, F.; Hussain, M.; Aboalsamh, H.A. Classification of live scanned fingerprints using histogram of gradient descriptor. In Proceedings of the 21st Saudi Computer Society National Computer Conference (NCC), Riyadh, Saudi Arabia, 25–26 April 2018; pp. 1–5.

- Cappelli, R.; Maio, D.; Maltoni, D. A multi-classifier approach to fingerprint classification. Pattern Anal. Appl. 2002, 5, 136–144.

- Hong, J.H.; Min, J.K.; Cho, U.K.; Cho, S.B. Fingerprint classification using one-vs-all support vector machines dynamically ordered with naive Bayes classifiers. Pattern Recognit. 2008, 41, 662–671.

- Liu, M. Fingerprint classification based on Adaboost learning from singularity features. Pattern Recognit. 2010, 43, 1062–1070.

- Cappelli, R.; Maio, D.; Maltoni, D. Synthetic fingerprint-database generation. In Object Recognition Supported by User Interaction for Service Robots; IEEE: New York, NY, USA, 2002; Volume 3, pp. 744–747.

- Maltoni, D.; Maio, D.; Jain, A.K.; Prabhakar, S. Handbook of Fingerprint Recognition; Springer: London, UK, 2009.

- Fingerprint Database NIST-4. Available online: https://www.nist.gov/srd/nist-special-database-4 (accessed on 20 May 2020).

- Fingerprint Database FVC-2004. Available online: http://bias.csr.unibo.it/fvc2004/download.asp (accessed on 20 May 2020).

- El-Hamdi, D.; Elouedi, I.; Fathallah, A.; Nguyen, M.K.; Hamouda, A. Fingerprint classification using conic radon transform and convolutional neural networks. In Advanced Concepts for Intelligent Vision Systems; Blanc-Talon, J., Helbert, D., Philips, W., Popescu, D., Scheunders, P., Eds.; Springer International Publishing: New York, NY, USA, 2018; pp. 402–413.

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. ImageNet classification with deep convolutional neural networks. Commun. ACM 2017, 60, 84–90.

- Chatfield, K.; Simonyan, K.; Vedaldi, A.; Zisserman, A. Return of the devil in the details: Delving deep into convolutional nets. Br. Mach. Vis. Conf. 2014, arXiv:cs/1405.3531.

- Alias, N.A.; Radzi, N.H.M. Fingerprint classification using support vector machine. In Proceedings of the Fifth ICT International Student Project Conference (ICT-ISPC), Nakhon Pathom, Thailand, 27–28 May 2016; pp. 105–108.

- Wang, R.; Han, C.; Guo, T. A novel fingerprint classification method based on deep learning. In Proceedings of the 23rd International Conference on Pattern Recognition (ICPR), Cancun, Mexico, 4–8 December 2016; pp. 931–936.

- Gupta, P.; Gupta, P. A robust singular point detection algorithm. Appl. Soft Comput. 2015, 29, 411–423.

- Dorasamy, K.; Webb, L.; Tapamo, J.; Khanyile, N.P. Fingerprint classification using a simplified rule-set based on directional patterns and singularity features. In Proceedings of the International Conference on Biometrics (ICB), Phuket, Thailand, 19–22 May 2015; pp. 400–407.

- Jung, H.W.; Lee, J.H. Noisy and incomplete fingerprint classification using local ridge distribution models. Pattern Recognit. 2015, 48, 473–484.

- Vitello, G.; Sorbello, F.; Migliore, G.I.M.; Conti, V.; Vitabile, S. A novel technique for fingerprint classification based on fuzzy C-means and naive Bayes classifier. In Proceedings of the Eighth International Conference on Complex, Intelligent and Software Intensive Systems, Birmingham, UK, 2–4 July 2014; pp. 155–161.

- Galar, M.; Sanz, J.; Pagola, M.; Bustince, H.; Herrera, F. A preliminary study on fingerprint classification using fuzzy rule-based classification systems. In Proceedings of the IEEE International Conference on Fuzzy Systems (FUZZ-IEEE), Beijing, China, 6–11 July 2014; pp. 554–560.

- Luo, J.; Song, D.; Xiu, C.; Geng, S.; Dong, T. Fingerprint classification combining curvelet transform and gray-level cooccurrence matrix. Math. Probl. Eng. 2014, 2014, 1–15.

- Saini, M.K.; Saini, J.S.; Sharma, S. Moment based wavelet filter design for fingerprint classification. In Proceedings of the International Conference On Signal Processing And Communication (ICSC), Noida, India, 12–14 December 2013; pp. 267–270.

- Cao, K.; Pang, L.; Liang, J.; Tian, J. Fingerprint classification by a hierarchical classifier. Pattern Recognit. 2013, 46, 3186–3197.

- Rajanna, U.; Erol, A.; Bebis, G. A comparative study on feature extraction for fingerprint classification and performance improvements using rank-level fusion. Pattern Anal. Appl. 2010, 13, 263–272.

- Feng, J.; Jain, A.K. Fingerprint reconstruction: From minutiae to phase. IEEE Trans. Pattern Anal. Mach. Intell. 2010, 33, 209–223.

- Bazen, A.M.; Gerez, S.H. Systematic methods for the computation of the directional fields and singular points of fingerprints. IEEE Trans. Pattern Anal. Mach. Intell. 2002, 24, 905–919.

- Kawagoe, M.; Tojo, A. Fingerprint pattern classification. Pattern Recognit. 1984, 17, 295–303.

- Jain, A.K.; Prabhakar, S.; Hong, L. A multichannel approach to fingerprint classification. IEEE Trans. Pattern Anal. Mach. Intell. 1999, 21, 348–359.

- Nilsson, K.; Bigun, J. Localization of corresponding points in fingerprints by complex filtering. Pattern Recognit. Lett. 2003, 24, 2135–2144.

- Zabala-Blanco, D.; Mora, M.; Azurdia-Meza, C.A.; Dehghan Firoozabadi, A.; Palacios Jativa, P.; Soto, I. Relaxation of the radio-frequency linewidth for coherent-optical orthogonal frequency-division multiplexing schemes by employing the improved extreme learning machine. Symmetry 2020, 12, 632.

- Lu, C.; Ke, H.; Zhang, G.; Mei, Y.; Xu, H. An improved weighted extreme learning machine for imbalanced data classification. Memet. Comput. 2019, 11, 27–34.

- Akbulut, Y.; Şengür, A.; Guo, Y.; Smarandache, F. A novel neutrosophic weighted extreme learning machine for imbalanced data set. Symmetry 2017, 9, 142.

- Maimaitiyiming, M.; Sagan, V.; Sidike, P.; Kwasniewski, M.T. Dual activation function-based extreme learning machine (ELM) for estimating grapevine berry yield and quality. Remote Sens. 2019, 11, 740.

- Moreno-Torres, J.G.; Saez, J.A.; Herrera, F. Study on the impact of partition-induced dataset shift on k-fold cross-validation. IEEE Trans. Neural Netw. Learn. Syst. 2012, 23, 1304–1312.

- Deng, J.; Dong, W.; Socher, R.; Li, L.; Kai, L.; Fei-Fei, L. ImageNet: A large-scale hierarchical image database. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Miami, FL, USA, 20–25 June 2009; pp. 248–255.

- Huang, N.; Yuan, C.; Cai, G.; Xing, E. Hybrid short term wind speed forecasting using variational mode decomposition and a weighted regularized extreme learning machine. Energies 2016, 9, 989.

- Sokolova, M.; Lapalme, G. A systematic analysis of performance measures for classification tasks. Inf. Process. Manag. 2009, 45, 427–437.

- Ferri, C.; Hernández-Orallo, J.; Modroiu, R. An experimental comparison of performance measures for classification. Pattern Recognit. Lett. 2009, 30, 27–38.

- Khellal, A.; Ma, H.; Fei, Q. Convolutional neural network features comparison between back-propagation and extreme learning machine. In Proceedings of the 37th Chinese Control Conference (CCC), Wuhan, China, 25–27 July 2018; pp. 9629–9634.

- Pang, S.; Yang, X. Deep convolutional extreme learning machine and its application in handwritten digit classification. Comput. Intell. Neurosci. 2016, 2016, 1–10.

- Lekamalage, C.K.L.; Song, K.; Huang, G.; Cui, D.; Liang, K. Multi layer multi objective extreme learning machine. In Proceedings of the IEEE International Conference on Image Processing (ICIP), Beijing, China, 17–20 September 2017; pp. 1297–1301.

- Tang, J.; Deng, C.; Huang, G. Extreme learning machine for multilayer perceptron. IEEE Trans. Neural Netw. Learn. Syst. 2016, 27, 809–821.

| Authors | Year | Feature Extractor | Classifier | Database | Accuracy (%) | Evaluation Time (s) |
|---|---|---|---|---|---|---|
| Tehseen et al. [1] | 2019 | None | Bayesian deep CNNs | NIST-DB4 (3300 samples) and FVC2002 (1600 samples) | 96.1 and 95.5 | 4393 and 3801 |
| El-Hamdi et al. [29] | 2019 | Conic Radon transform (image functions are integrated over conic sections) | CNNs (4 convolutional layers with 3 max-pooling layers followed by a fully connected layer) | NIST-DB4 (3300 samples) | 95.0 | 0.06 |
| Saeed et al. [21] | 2018 | Orientation field with histograms of oriented gradients | Basic ELM with the radial activation function | FVC2004 (3520 samples) | 98.7 | Not reported |
| Peralta et al. [3] | 2018 | None | CNNs (a new network and a modification of the CaffeNet CNN [30]) with softmax probabilities for the last layer | NIST-DB4 (3300 samples) and SFINGE (120,000 samples) | 93.73 and 94.58 | 960 and 2306 |
| Shrein [4] | 2017 | Normalized orientation angles | CNNs with various convolutional, max-pooling, and fully connected layers | NIST-DB4 (3300 samples) | 95.4 | Not reported |
| Ge et al. [14] | 2017 | None | Deep CNNs with 6 diverse layers | NIST-DB4 (3300 samples) | 97.9 | Not reported |
| Michelsanti et al. [13] | 2017 | None | Pre-trained CNNs known as VGG-F and VGG-S [31] | NIST-DB4 (3300 samples) | 94.4 and 95.95 | 32,600 and 108,000 |
| Alias et al. [32] | 2016 | Minutiae extraction | Support vector machines | FVC2000 and FVC2002 (each has 880 samples) | 92.3 and 92.8 | Not reported |
| Wang et al. [33] | 2016 | Orientation field based on a support vector machine | Deep CNNs with 3 complex hidden layers | NIST-DB4 (3300 samples) | 98.4 | Not reported |
| Gupta et al. [34] | 2015 | A combination of the orientation field, directional filtering, and Poincaré index | Support vector machines | FVC2004 (1600 samples) | 97.9 | 2.6 for input features |
| Galar et al. [5] | 2015 | Singular points, ridge structure, and filter response | Support vector machines | NIST-DB4 (3300 samples) and SFINGE (30,000 samples) | 92.6 and 95.7 | Not reported |
| Dorasamy et al. [35] | 2015 | Directional patterns and singular points | Decision tree | FVC2002 and FVC2004 (each has 880 samples) | 91.54 and 93.2 | Not reported |
| Jung et al. [36] | 2015 | Ridges based on a block of 16 × 16 pixels | Regional local models using conditional probabilities | FVC 2000, 2002, and 2004 (each has 10,304 samples) | 97.4 | Not reported |
| Vitello et al. [37] | 2014 | Fuzzy C-means based on centroids | Naive Bayes | NIST-DB4 (3300 samples) and FVC2002 (3200 samples) | 91.74 and 80.1 | Not reported |
| Galar et al. [38] | 2014 | FingerCode and/or singular points (cores and deltas) | Fuzzy rule learning based on linguistic terms | SFINGE (30,000 samples) | 93.78 | Not reported |
| Guo et al. [11] | 2014 | Singular points and orientation field | Decision tree | FVC 2000, 2002, and 2004 (7920 samples in total) | 92.74 | Out of context |
| Luo et al. [39] | 2014 | Curvelet transform together with gray-level co-occurrence matrices | K-nearest neighbors | NIST-DB4 (3300 samples) | 94.6 | 1.47 |
| Saini et al. [40] | 2013 | Wavelet design based on Hu moments | Probabilistic neural network along with support vector machines | FVC 2004 (880 samples) | 98.24 | Not reported |
| Cao et al. [41] | 2013 | Orientation image, complex filter responses, and ridge line flows | Hierarchical network with five stages (heuristic rules, K-nearest neighbor, and support vector machines) | NIST-DB4 (3300 samples) | 95.9 | 4.31 |
| Liu [24] | 2010 | Multi-scale singularities via complex filters | AdaBoosted decision trees (combination of weak classifiers) | NIST-DB4 (3300 samples) | 94.1 | 1.6 |
| Rajanna et al. [42] | 2010 | Orientation map and orientation collinearity | Rank-level fusion with K-nearest neighbors | NIST-DB4 (3300 samples) | 91.8 | Out of context |

| (a) Standard ELM | Capelli02 N | Capelli02 C | Capelli02 G-Mean | Hong08 N | Hong08 C | Hong08 G-Mean | Liu10 N | Liu10 C | Liu10 G-Mean |
|---|---|---|---|---|---|---|---|---|---|
| HQNoPert | 3000 | | 0.64 | 3000 | | 0.86 | 5000 | | 0.65 |
| Default | | | 0.54 | | | 0.80 | | | 0.62 |
| VQAndPert | | | 0.31 | | | 0.58 | | | 0.40 |

| (b) W-ELM1 | Capelli02 N | Capelli02 C | Capelli02 G-Mean | Hong08 N | Hong08 C | Hong08 G-Mean | Liu10 N | Liu10 C | Liu10 G-Mean |
|---|---|---|---|---|---|---|---|---|---|
| HQNoPert | 4000 | | 0.64 | 5000 | | 0.92 | 5000 | | 0.67 |
| Default | | | 0.54 | | | 0.88 | | | 0.63 |
| VQAndPert | | | 0.37 | | | 0.65 | | | 0.49 |

| (c) W-ELM2 | Capelli02 N | Capelli02 C | Capelli02 G-Mean | Hong08 N | Hong08 C | Hong08 G-Mean | Liu10 N | Liu10 C | Liu10 G-Mean |
|---|---|---|---|---|---|---|---|---|---|
| HQNoPert | 4000 | | 0.66 | 5000 | | 0.93 | 5000 | | 0.69 |
| Default | | | 0.57 | | | 0.89 | | | 0.64 |
| VQAndPert | | | 0.40 | | | 0.67 | | | 0.51 |

| DW-ELM | Capelli02 d | Capelli02 G-Mean | Hong08 d | Hong08 G-Mean | Liu10 d | Liu10 G-Mean |
|---|---|---|---|---|---|---|
| HQNoPert | 9 | 0.67 | 16 | 0.93 | 15 | 0.69 |
| Default | 11 | 0.58 | 15 | 0.89 | 4 | 0.65 |
| VQAndPert | 10 | 0.40 | 20 | 0.71 | 12 | 0.50 |

| (a) Capelli02 | ELM Acc | ELM PR | W-ELM1 Acc | W-ELM1 PR | W-ELM2 Acc | W-ELM2 PR | DW-ELM Acc | DW-ELM PR |
|---|---|---|---|---|---|---|---|---|
| HQNoPert | 0.80 | 0.1788 | 0.79 | 0.1650 | 0.81 | 0.1500 | 0.79 | 0.1645 |
| Default | 0.79 | 0.2112 | 0.74 | 0.1969 | 0.76 | 0.1785 | 0.64 | 0.1942 |
| VQAndPert | 0.61 | 0.2913 | 0.60 | 0.2522 | 0.63 | 0.2349 | 0.61 | 0.2521 |

| (b) Hong08 | ELM Acc | ELM PR | W-ELM1 Acc | W-ELM1 PR | W-ELM2 Acc | W-ELM2 PR | DW-ELM Acc | DW-ELM PR |
|---|---|---|---|---|---|---|---|---|
| HQNoPert | 0.95 | 0.0485 | 0.94 | 0.0340 | 0.95 | 0.0332 | 0.95 | 0.0330 |
| Default | 0.94 | 0.0662 | 0.93 | 0.0412 | 0.94 | 0.0406 | 0.94 | 0.0412 |
| VQAndPert | 0.86 | 0.0954 | 0.88 | 0.0519 | 0.88 | 0.0512 | 0.88 | 0.0521 |

| (c) Liu10 | ELM Acc | ELM PR | W-ELM1 Acc | W-ELM1 PR | W-ELM2 Acc | W-ELM2 PR | DW-ELM Acc | DW-ELM PR |
|---|---|---|---|---|---|---|---|---|
| HQNoPert | 0.78 | 0.2060 | 0.79 | 0.1727 | 0.80 | 0.1651 | 0.79 | 0.1711 |
| Default | 0.79 | 0.2220 | 0.77 | 0.1866 | 0.77 | 0.1751 | 0.78 | 0.1787 |
| VQAndPert | 0.66 | 0.2696 | 0.67 | 0.2327 | 0.68 | 0.2166 | 0.68 | 0.2315 |

| Database | Hong08 and W-ELM2 Acc | Hong08 and W-ELM2 PR | Modified CaffeNet CNN Acc | Modified CaffeNet CNN PR | New CNN Acc | New CNN PR |
|---|---|---|---|---|---|---|
| HQNoPert | 0.94 | 0.0332 | 0.99 | 0.0051 | 0.99 | 0.0031 |
| Default | 0.93 | 0.0406 | 0.97 | 0.0211 | 0.98 | 0.0153 |
| VQAndPert | 0.88 | 0.0512 | 0.96 | 0.0329 | 0.96 | 0.0279 |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Zabala-Blanco, D.; Mora, M.; Barrientos, R.J.; Hernández-García, R.; Naranjo-Torres, J. Fingerprint Classification through Standard and Weighted Extreme Learning Machines. Appl. Sci. 2020, 10, 4125. https://doi.org/10.3390/app10124125
